A Comparative Analysis of Design of Experiments (DoE) Methods for Optimizing Biosensor Development

Abstract

This article provides a systematic comparison of Design of Experiments (DoE) methodologies for optimizing biosensor performance. Tailored for researchers, scientists, and drug development professionals, it explores foundational DoE principles and their application across various biosensor types, including electrochemical and optical platforms. The content delivers practical guidance on selecting appropriate experimental designs, from factorial to response surface methodologies, for screening and optimization. It further addresses critical troubleshooting strategies, validation protocols to ensure reliability, and a direct comparative analysis of different DoE approaches. The objective is to equip practitioners with the knowledge to efficiently develop highly sensitive, robust, and clinically viable biosensing devices.

Foundations of DoE: A Primer for Systematic Biosensor Development

The Fundamental Flaw of One-Variable-at-a-Time Approaches

In scientific research, particularly in complex fields like biosensor development and drug discovery, the traditional one-variable-at-a-time (OVAT) method has long been the default approach for experimentation. This method involves changing a single factor while keeping all others constant, which appears straightforward and intuitive. However, this approach contains a critical flaw: it cannot detect interactions between factors, which are often fundamental to understanding complex biological and chemical systems [1].

Consider a simple experiment optimizing temperature and pH for chemical yield. An OVAT approach might first optimize temperature while holding pH constant, then optimize pH using the previously determined "optimal" temperature. This sequential process can completely miss the true optimum if the factors interact. In a documented case, an OVAT approach identified a maximum yield of 86% at 30°C and pH 6, while a designed experiment revealed a superior optimum of 92% yield at 45°C and pH 7 - a combination the OVAT method never tested and could not identify [1]. The OVAT method not only missed the true optimal conditions but also failed to reveal the twisted response surface that indicated a significant temperature-pH interaction.
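The mechanism can be illustrated on a made-up response surface. The sketch below is not the cited case: the `response` function, its coefficients, the coded factor levels, and the starting point are all hypothetical, chosen only so that the two factors interact. An exhaustive grid search stands in for the global picture that a designed experiment aims to provide.

```python
# Hypothetical yield surface in coded units; the (d1 - d2) term creates
# a diagonal ridge, i.e. an interaction between the two factors.
def response(x1, x2):
    d1, d2 = x1 - 0.5, x2 - 0.5
    return 92.0 - 0.5 * (d1 + d2) ** 2 - 5.0 * (d1 - d2) ** 2

levels = [-1.0, -0.5, 0.0, 0.5, 1.0]  # assumed candidate settings

# OVAT: optimize x1 with x2 held at its starting value, then optimize x2
# with x1 frozen at the value found in the first pass.
x2_start = -1.0
x1_ovat = max(levels, key=lambda x1: response(x1, x2_start))
x2_ovat = max(levels, key=lambda x2: response(x1_ovat, x2))
ovat_best = response(x1_ovat, x2_ovat)

# Reference: evaluate every combination of the same levels.
grid_point = max(((a, b) for a in levels for b in levels),
                 key=lambda p: response(*p))
grid_best = response(*grid_point)

print("OVAT:", (x1_ovat, x2_ovat), ovat_best)
print("Grid:", grid_point, grid_best)
```

On this surface the sequential search stalls on the ridge, short of the best combination, because the best setting of one factor shifts with the level of the other.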

For biosensor development, where multiple interdependent parameters influence performance, this limitation becomes particularly problematic. Biosensor optimization typically encompasses formulation of the detection interface, immobilization strategy of biorecognition elements, and detection conditions - all of which may interact in complex ways [2]. When researchers optimize these parameters independently, the established conditions may not represent the true optimum, potentially hindering biosensor performance in critical point-of-care diagnostic settings [2].

Design of Experiments: A Systematic Framework for Optimization

Core Principles of DoE

Design of Experiments (DoE) represents a paradigm shift from traditional OVAT approaches. DoE is a systematic, statistical framework that enables researchers to study multiple factors simultaneously in a structured, efficient manner [1]. Rather than exploring the experimental space point-by-point, DoE examines the entire domain through a strategically selected set of experimental runs, allowing for the development of mathematical models that describe how factors influence responses, both individually and through their interactions [2].

The fundamental advantage of DoE lies in its ability to provide global knowledge of the experimental domain. Unlike OVAT approaches that generate localized knowledge based on sequential experiments, DoE establishes an experimental plan a priori, enabling prediction of responses at any point within the experimental domain, including untested locations [2]. This comprehensive understanding comes from studying factors at carefully selected combinations, typically represented as the corners of a geometric shape (square for 2 factors, cube for 3 factors, hypercube for more factors) [2].

Key DoE Methodologies and Designs

Several experimental designs form the backbone of DoE methodology, each suited to different experimental objectives:

Full Factorial Designs: These fundamental designs study all possible combinations of factors at their specified levels. A 2^k factorial design (where k is the number of factors) requires 2^k experiments, with each factor tested at two levels (coded as -1 and +1) [2]. For example, a 2^2 factorial design investigating two factors (X1 and X2) would consist of four experiments: (-1, -1), (+1, -1), (-1, +1), and (+1, +1) [2]. These designs efficiently fit first-order models and can estimate all main effects and interactions.
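As a minimal sketch, the coded run list of a two-level full factorial can be generated with the Python standard library. The function name `full_factorial` is illustrative and does not come from any DoE package.

```python
from itertools import product

def full_factorial(k):
    """Return all 2^k runs of a two-level design in coded units (-1, +1)."""
    # product() varies the last position fastest; reversing each tuple makes
    # X1 alternate fastest, which matches the run order listed in the text.
    return [tuple(reversed(run)) for run in product((-1, 1), repeat=k)]

design = full_factorial(2)
for run in design:
    print(run)
```

For k = 2 this lists the four runs given above; for larger k the run count doubles with each added factor.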

Central Composite Designs: When response curvature is suspected, central composite designs extend factorial designs by adding axial points, allowing estimation of quadratic terms and enabling the modeling of nonlinear responses [2]. These second-order designs are particularly valuable for optimization when the true optimum lies within the experimental region rather than at its boundaries.
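The structure of a central composite design can be sketched as three blocks of points in coded units. The rotatable axial distance alpha = (2^k)^(1/4) and the number of center points used here are assumptions chosen for illustration. Other choices, such as face-centered designs with alpha = 1, are equally legitimate.

```python
from itertools import product

def central_composite(k, n_center=1):
    """Return factorial, axial, and center points of a CCD in coded units."""
    alpha = (2 ** k) ** 0.25  # assumed rotatable axial distance
    factorial = [tuple(float(v) for v in run)
                 for run in product((-1, 1), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha  # move along one factor axis only
            axial.append(tuple(point))
    center = [tuple([0.0] * k)] * n_center
    return factorial + axial + center

ccd = central_composite(2, n_center=3)
print(len(ccd), "runs")
for point in ccd:
    print(point)
```

The axial and center points give each factor more than two levels, which is what makes the quadratic terms estimable.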

Mixture Designs: These specialized designs apply when the factors are components of a mixture that must sum to 100% [2]. In such cases, factors cannot be varied independently - changing one component necessarily changes the proportions of others. Mixture designs accommodate this constraint while enabling optimization of formulation composition.
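One common way to lay out mixture experiments is a simplex-lattice, in which every component proportion is a multiple of 1/m and the proportions sum to one. The sketch below assumes that design type. The source does not say which mixture design is intended, and the function name is illustrative.

```python
from fractions import Fraction
from itertools import product

def simplex_lattice(q, m):
    """{q, m} simplex-lattice: q components, proportions in steps of 1/m."""
    points = []
    for combo in product(range(m + 1), repeat=q):
        if sum(combo) == m:  # enforce the mixture constraint
            points.append(tuple(Fraction(c, m) for c in combo))
    return points

blends = simplex_lattice(3, 2)
for blend in blends:
    print([str(x) for x in blend])
```

Every generated blend satisfies the sum-to-one constraint by construction, so no component can be changed without adjusting at least one other.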

Table 1: Comparison of Common Experimental Design Types

| Design Type | Best Use Case | Key Advantages | Limitations |
|---|---|---|---|
| Full Factorial | Screening 2-5 factors; estimating all interactions | Measures all main effects and interactions; relatively simple to implement | Number of runs grows exponentially with factors (2^k) |
| Central Composite | Response surface modeling; optimization | Captures curvature; identifies stationary points | Requires more runs than factorial designs |
| Mixture Designs | Formulation optimization | Accounts for component interdependence | Specialized for mixture problems only |

DoE in Action: Biosensor Optimization Case Studies

Whole-Cell Biosensor Engineering

The power of DoE methodology is vividly demonstrated in whole-cell biosensor development. Researchers applied a Definitive Screening Design to optimize a protocatechuic acid (PCA)-responsive biosensor by systematically varying three genetic components: the promoter regulating the transcription factor (Preg), the output promoter (Pout), and the ribosome binding site controlling translation (RBSout) [3].

The DoE approach enabled the researchers to efficiently map the complex relationships between genetic components and biosensor performance metrics, including OFF-state expression (leakiness), ON-state expression, and dynamic range (ON/OFF ratio). Through structured experimentation and statistical modeling, they identified factor combinations that dramatically enhanced biosensor performance, achieving a 30-fold increase in maximum signal output, >500-fold improvement in dynamic range, and >1500-fold increase in sensitivity compared to initial designs [3].

Notably, the DoE methodology also enabled modulation of the biosensor's dose-response behavior to create both digital (switch-like) and analog (graded) response profiles suited to different applications [3]. This level of systematic optimization would be extremely challenging, if not impossible, to achieve through traditional OVAT approaches due to the complex interactions between genetic components.

Ultrasonic Spray Pyrolysis for Material Synthesis

In materials science relevant to biosensor fabrication, researchers employed a 2^3 full factorial design to optimize the deposition of SnO₂ thin films via ultrasonic spray pyrolysis [4]. The study investigated three critical factors: suspension concentration (0.001-0.002 g/mL), substrate temperature (60-80°C), and deposition height (10-15 cm), with the response variable being the net intensity of the principal X-ray diffraction peak, indicating film quality [4].

Statistical analysis through ANOVA revealed that suspension concentration was the most influential factor, followed by significant two-factor and three-factor interactions [4]. The developed model exhibited excellent predictive capability (R² = 0.9908) and identified the optimal process conditions as the highest suspension concentration (0.002 g/mL), lowest substrate temperature (60°C), and shortest deposition height (10 cm) [4]. This systematic optimization approach provided a robust framework for controlling deposition outcomes that would be difficult to achieve through sequential experimentation.

Table 2: DoE Applications Across Research Domains

| Research Domain | DoE Design Applied | Factors Studied | Performance Improvements |
|---|---|---|---|
| Whole-Cell Biosensors [3] | Definitive Screening Design | Promoters, RBS sequences | 30× max signal output; >500× dynamic range; >1500× sensitivity |
| Thin Film Deposition [4] | 2^3 Full Factorial | Concentration, temperature, height | High predictive model (R² = 0.9908); identified significant interactions |
| Naringenin Biosensors [5] | D-optimal Design | Promoters, RBS, media, supplements | Context-aware optimization; biology-guided machine learning |

Implementing DoE: A Step-by-Step Methodology

The DoE Workflow

Implementing a successful DoE follows a systematic workflow that ensures reliable, actionable results:

Define Objectives and Responses: Clearly articulate the research goals and identify measurable responses that indicate success or performance [6].

Select Factors and Ranges: Choose factors to investigate and establish appropriate experimental ranges based on prior knowledge or preliminary experiments [2].

Choose Experimental Design: Select an appropriate design (factorial, response surface, etc.) based on the objectives, number of factors, and need to detect interactions or curvature [2].

Randomize and Execute: Run experiments in randomized order to minimize confounding from lurking variables [1].

Analyze and Model: Use statistical analysis to identify significant effects and develop mathematical models relating factors to responses [6].

Validate and Refine: Confirm model predictions through confirmation experiments and refine the model or experimental domain as needed [6].
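The randomization step in the workflow above can be sketched with the standard library. The seed value and the three-factor design are arbitrary illustrative choices. Fixing a seed simply makes the run sheet reproducible.

```python
import random
from itertools import product

# A 2^3 design in coded units, listed in systematic order.
design = list(product((-1, 1), repeat=3))

rng = random.Random(42)   # arbitrary seed, used only for reproducibility
run_order = design[:]     # copy so the systematic listing is preserved
rng.shuffle(run_order)

for run_number, settings in enumerate(run_order, start=1):
    print(run_number, settings)
```

Shuffling changes only the execution order. The set of factor combinations is identical to the systematic listing.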

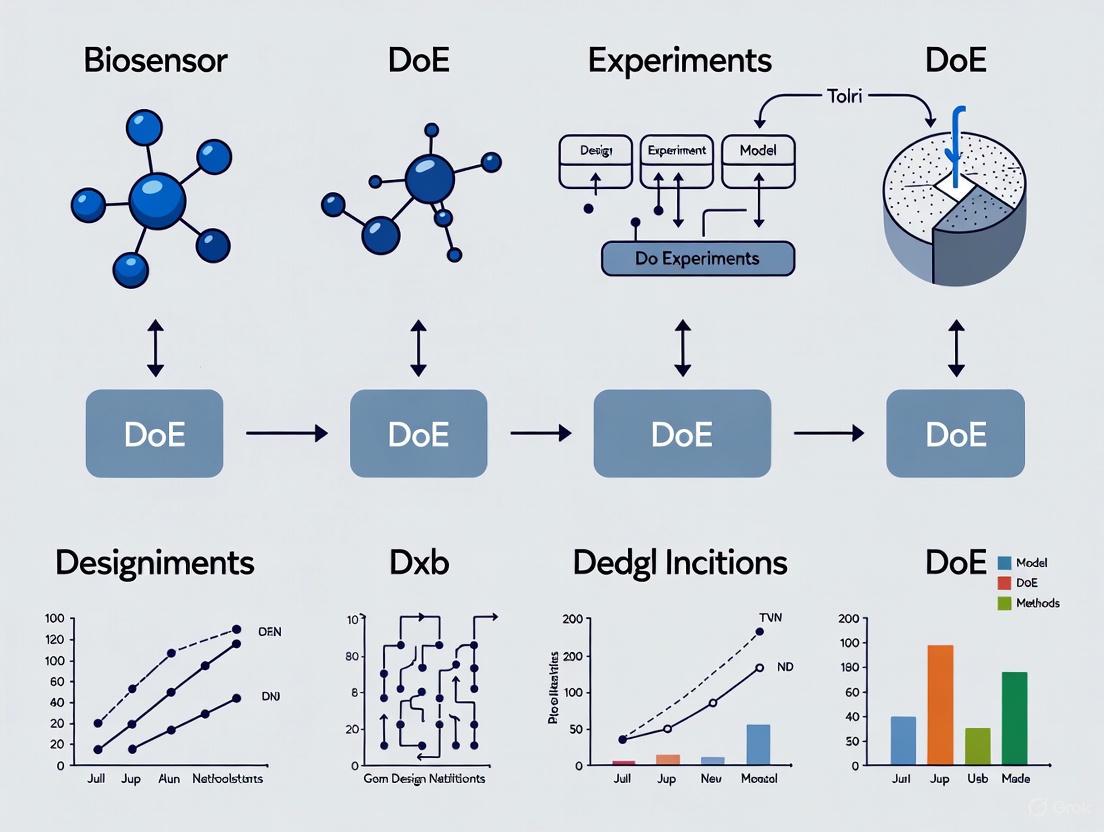

The following diagram illustrates the key decision points and workflow for a typical DoE process:

Statistical Analysis and Interpretation

Proper analysis of DoE data typically involves both graphical and numerical methods. Initial analysis should include examination of response distributions, time-order plots, and responses versus factor levels to understand data structure and identify potential outliers or time effects [6]. Subsequent statistical modeling typically employs analysis of variance (ANOVA) to determine the significance of factor effects and their interactions.

Model development often involves simplifying initial models by removing nonsignificant terms while preserving hierarchy. The resulting mathematical model, typically derived through linear regression, enables prediction of responses throughout the experimental domain according to the form:

For a two-factor model with interaction: Y = b₀ + b₁X₁ + b₂X₂ + b₁₂X₁X₂ [2]

Where Y is the predicted response, b₀ is the intercept, b₁ and b₂ are coefficients for the main effects of factors X₁ and X₂, and b₁₂ is the coefficient for their interaction.
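As a worked sketch, the four coefficients of this model can be estimated from a 2^2 design. The response values below are invented for illustration. Because the coded columns of a two-level full factorial are mutually orthogonal, each least-squares coefficient reduces to the sum of column-times-response divided by the number of runs.

```python
# Coded 2^2 design with hypothetical measured responses.
runs = [(-1, -1), (1, -1), (-1, 1), (1, 1)]
y = [10.0, 14.0, 12.0, 20.0]  # invented data for illustration

n = len(runs)
b0 = sum(y) / n
b1 = sum(x1 * yi for (x1, _), yi in zip(runs, y)) / n
b2 = sum(x2 * yi for (_, x2), yi in zip(runs, y)) / n
b12 = sum(x1 * x2 * yi for (x1, x2), yi in zip(runs, y)) / n

def predict(x1, x2):
    """Evaluate Y = b0 + b1*X1 + b2*X2 + b12*X1*X2 in coded units."""
    return b0 + b1 * x1 + b2 * x2 + b12 * x1 * x2

print(b0, b1, b2, b12)
print(predict(0, 0))  # prediction at the untested center of the domain
```

With four runs and four coefficients the model reproduces the data exactly, so replicates or center points are needed before the error or lack of fit can be assessed.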

Model adequacy is checked through residual analysis - examining differences between measured and predicted responses - and if assumptions are violated, investigators may need to add missing terms, transform responses, or refine the experimental domain [6].

Essential Research Reagents and Tools for DoE Implementation

Successfully implementing DoE requires both methodological expertise and appropriate experimental tools. The following table outlines key research reagent solutions essential for conducting DoE studies in biosensor research:

Table 3: Essential Research Reagents and Tools for Biosensor DoE Studies

| Reagent/Tool Category | Specific Examples | Function in DoE Implementation |
|---|---|---|
| Biosensor Platforms | Biacore T100, ProteOn XPR36, Octet RED384, IBIS MX96 [7] | Provide quantitative binding data (K_D, k_on, k_off) for response variables |
| Genetic Parts | Promoters, RBS sequences, reporter genes (GFP) [3] | Enable systematic variation of genetic factors in whole-cell biosensor optimization |
| Bio-Layer Interferometry Systems | ForteBio Octet platforms [8] | Generate kinetic data for biorecognition element characterization |
| Statistical Software | JMP, R, Python statsmodels | Facilitate experimental design generation and statistical analysis of results |
| Material Deposition Systems | Ultrasonic spray pyrolysis [4] | Enable controlled variation of processing parameters for sensor fabrication |

The transition from one-variable-at-a-time approaches to Design of Experiments represents a fundamental shift in how researchers approach complex optimization challenges in biosensor development and beyond. DoE provides a structured framework for efficiently exploring multifactor experimental spaces while capturing the interaction effects that frequently govern system behavior in biological and chemical systems.

The documented case studies in whole-cell biosensor engineering and material synthesis demonstrate that DoE methodologies can yield dramatic improvements in performance metrics while providing comprehensive system understanding. By embracing these systematic approaches, researchers and drug development professionals can accelerate innovation, enhance reproducibility, and develop more robust, high-performing biosensors for point-of-care diagnostics and therapeutic development.

As the field advances, the integration of DoE with emerging technologies like biology-guided machine learning [5] and artificial intelligence [9] promises to further enhance our ability to navigate complex experimental landscapes and optimize next-generation biosensing technologies.

Design of Experiments (DoE) represents a systematic, statistical approach to process optimization that has become indispensable in advanced biosensor development. Unlike the traditional "one variable at a time" (OVAT) approach, which varies individual factors while holding others constant, DoE simultaneously investigates multiple factors and their complex interactions through a structured experimental framework [10] [11]. This methodology is particularly valuable in biosensor research, where multiple parameters affecting sensor performance must be optimized efficiently amid constraints of time, resources, and material costs [12].

The fundamental principle of DoE lies in constructing a mathematical model that describes the relationship between input variables (factors) and output measurements (responses). This model enables researchers to not only identify critical parameters but also to understand how these parameters interact to influence key biosensor performance metrics [13]. For biosensor applications, where achieving optimal sensitivity, specificity, and reproducibility is paramount, DoE provides a rigorous framework for developing robust sensing platforms while significantly reducing experimental effort compared to conventional approaches [12].

Core DoE Terminology and Principles

Fundamental Terminology

The language of DoE provides precise definitions for concepts that form the foundation of experimental design. Understanding these terms is essential for proper implementation in biosensor development:

- Factors: Variables that are deliberately manipulated in an experiment because they may influence the response. In biosensor development, factors can be quantitative (e.g., temperature, pH, concentration) or qualitative (e.g., immobilization method, bioreceptor type) [14] [15].

- Responses: The measured outcomes that reflect the experimental objectives. In biosensor research, typical responses include limit of detection (LOD), sensitivity, selectivity, signal-to-noise ratio, and reproducibility [12] [16].

- Levels: The specific values or settings at which a factor is tested. For a two-level design, factors are typically tested at high (+) and low (-) values [14] [15].

- Interactions: Occur when the effect of one factor on the response depends on the level of another factor. For example, the optimal pH for a biosensor might vary depending on the temperature [14] [13].

- Aliasing/Confounding: A phenomenon where the estimate of an effect also includes the influence of one or more other effects, which occurs in fractional factorial designs where not all combinations are tested [14].

- Design Space: The multidimensional combination and interaction of input variables that have been demonstrated to provide assurance of quality [10].

- Randomization: The practice of running experimental trials in a random order to minimize the effects of uncontrolled variables [14].

- Replication: Performing the same treatment combination multiple times to obtain an estimate of experimental error [14].

- Center Points: Experimental runs where all factors are set at their midpoint values, used to detect curvature in the response surface [14].
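Aliasing is easiest to see in a small example. The sketch below builds a half-fraction of a three-factor design using the generator C = AB, a conventional but assumed choice, and checks which columns become indistinguishable.

```python
from itertools import product

# Half-fraction 2^(3-1): A and B form a full 2^2 design, C is set to A*B.
half_fraction = [(a, b, a * b) for a, b in product((-1, 1), repeat=2)]

col_a = [a for a, _, _ in half_fraction]
col_c = [c for _, _, c in half_fraction]
col_ab = [a * b for a, b, _ in half_fraction]
col_bc = [b * c for _, b, c in half_fraction]

print("C aliased with AB:", col_c == col_ab)
print("A aliased with BC:", col_a == col_bc)
```

Identical columns mean the corresponding effects cannot be separated from these four runs. This is the price paid for halving the run count.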

The Concept of Factors, Responses, and Interactions

In biosensor development, the relationship between factors and responses forms the core of the optimization process. Factors represent the controllable inputs during biosensor fabrication or operation. These typically include physical parameters (temperature, incubation time), chemical parameters (pH, ionic strength, reagent concentrations), and biological parameters (bioreceptor density, blocking agent concentration) [12].

Responses correspond to the critical performance metrics of the biosensor. The most crucial responses in biosensor research include:

- Sensitivity: The ability to detect low analyte concentrations, often quantified as the limit of detection (LOD) [16]

- Selectivity: The ability to distinguish the target analyte from interferents [16]

- Reproducibility: The precision of repeated measurements, often expressed as coefficient of variation [16]

- Linearity: The range of analyte concentrations over which the response changes linearly [16]

- Stability: The maintenance of performance characteristics over time [16]

Interactions between factors represent particularly important phenomena in biosensor systems. For instance, the interaction between immobilization pH and crosslinker concentration might significantly impact bioreceptor activity. When one factor's impact on the response is influenced by the level of another factor, this interaction can be captured through DoE but would be completely missed in OVAT approaches [13]. The ability to detect and quantify these interactions represents one of DoE's most significant advantages for optimizing complex biosensor systems.

Comparative Analysis of DoE Methods for Biosensor Optimization

Different experimental designs serve distinct purposes throughout the biosensor development process. The selection of an appropriate design depends on the number of factors to be investigated, the desired model complexity, and the available resources.

Table 1: Comparison of Common DoE Designs for Biosensor Development

| DoE Design Type | Key Characteristics | Typical Applications in Biosensor Research | Advantages | Limitations |
|---|---|---|---|---|
| Full Factorial | Tests all possible combinations of factor levels [14] [12] | Initial method development with limited factors (<5) [12] | Captures all main effects and interactions; Simple interpretation | Number of runs grows exponentially with factors (2^k for 2-level designs) |
| Fractional Factorial | Tests a carefully selected subset of full factorial combinations [13] | Screening multiple factors to identify critical parameters [13] [11] | Much more efficient than full factorial; Good for screening | Effects are aliased (confounded); Lower resolution |
| Response Surface Methods (e.g., Central Composite) | Includes factorial points, center points, and axial points to fit quadratic models [12] | Optimization after critical factors are identified [12] [11] | Can model curvature in responses; Identifies optimal conditions | Requires more runs than screening designs; More complex analysis |
| Mixture Designs | Components are proportions of a mixture that must sum to 100% [12] | Optimizing formulation composition (e.g., reagent mixtures, buffer compositions) [12] | Handles constraint that components sum to constant | Specialized for mixture problems only |

Quantitative Comparison of DoE Efficiency

The experimental efficiency of DoE compared to traditional OVAT approaches can be dramatic. In a case study optimizing copper-mediated radiofluorination reactions for tracer synthesis, DoE provided more comprehensive process understanding with more than two-fold greater experimental efficiency than the OVAT approach [11]. Similar efficiency gains have been demonstrated in biosensor development, where DoE enabled researchers to optimize multiple fabrication and operational parameters simultaneously while capturing their interactions [12].

Table 2: Experimental Efficiency Comparison: DoE vs. OVAT

| Metric | DoE Approach | OVAT Approach |
|---|---|---|
| Number of Experiments Required | Significantly fewer (e.g., 50-70% reduction) [10] [11] | Increases linearly or exponentially with number of factors |
| Ability to Detect Interactions | Yes, explicitly models and quantifies interactions [14] [13] | No, cannot detect interactions between factors |
| Quality of Optimization | Locates the best settings across all factors within the studied domain [11] | Risk of finding local optimum due to interaction effects |
| Range of Conditions Explored | Systematically explores entire design space [12] [11] | Limited exploration around baseline conditions |
| Statistical Validity | Provides statistical significance of effects [13] [17] | Limited statistical basis for conclusions |

Experimental Protocols for DoE in Biosensor Research

General Workflow for Implementing DoE

Implementing DoE in biosensor development follows a structured workflow that ensures comprehensive understanding and optimization:

Define Clear Objectives: Specify the primary goals of the study, such as improving sensitivity, expanding dynamic range, or enhancing stability [17].

Identify Factors and Responses: Select input factors to investigate and output responses to measure. This should be informed by prior knowledge and risk assessment [10].

Determine Factor Ranges: Establish appropriate ranges for each factor based on preliminary experiments or literature values [12].

Select Experimental Design: Choose an appropriate design based on the number of factors, desired model complexity, and resource constraints [12] [11].

Randomize and Execute Experiments: Run experiments in randomized order to minimize confounding from external variables [14].

Analyze Data and Build Model: Use statistical analysis to identify significant factors and build a mathematical model relating factors to responses [13].

Validate Model: Confirm model predictions with additional verification experiments [17].

Establish Design Space: Define the ranges of critical factors that ensure acceptable product quality [10].

Case Study: DoE for Ultrasensitive Biosensor Optimization

A recent perspective review highlighted the application of DoE for optimizing ultrasensitive biosensors with sub-femtomolar detection limits [12]. The researchers employed a sequential approach:

Phase 1: Screening

- Used a 2^k factorial design to screen 5 potential factors affecting biosensor performance

- Identified 3 critical factors from the initial 5 through statistical analysis

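- Required only 16 experiments instead of the much larger number needed for OVAT; 16 runs for five two-level factors corresponds to a half-fraction (2^(5-1)) design, since a full 2^5 factorial would need 32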

Phase 2: Optimization

- Applied a Central Composite Design (CCD) to the 3 critical factors

- Developed a quadratic model describing the response surface

- Identified optimal factor settings that minimized detection limit while maintaining reproducibility

Phase 3: Verification

- Conducted confirmation runs at the predicted optimum conditions

- Validated model accuracy by comparing predicted and actual performance

- Established a design space for routine biosensor operation

This systematic approach reduced experimental effort by approximately 60% compared to traditional methods while providing deeper insight into factor interactions [12].
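The run counts in a sequential study of this kind follow from simple arithmetic, sketched below. Two points are inferences rather than statements from the source: 16 screening runs for five two-level factors matches a half-fraction (a full 2^5 design needs 32), and the six center points assumed for the CCD are only a common textbook choice.

```python
def two_level_runs(k, p=0):
    """Runs in a two-level design with k factors and fraction 1/2^p."""
    return 2 ** (k - p)

def ccd_runs(k, n_center):
    """Runs in a central composite design: factorial + axial + center."""
    return 2 ** k + 2 * k + n_center

screening_full = two_level_runs(5)        # full 2^5 factorial
screening_half = two_level_runs(5, p=1)   # half-fraction 2^(5-1)
optimization = ccd_runs(3, n_center=6)    # assumed six center points

print("Full 2^5 screening:", screening_full)
print("Half-fraction screening:", screening_half)
print("CCD on 3 factors:", optimization)
print("Screening + optimization:", screening_half + optimization)
```

Changing `n_center` or the fraction `p` shows how quickly the experimental budget responds to design choices made before any run is executed.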

Figure 1: DoE Implementation Workflow for Biosensor Optimization. This diagram illustrates the sequential approach for applying Design of Experiments in biosensor development, from initial planning through final implementation.

Essential Research Reagent Solutions for DoE in Biosensor Development

Successful implementation of DoE in biosensor research requires careful selection of reagents and materials. The following table outlines key research reagent solutions and their functions in experimental designs for biosensor optimization.

Table 3: Essential Research Reagent Solutions for Biosensor DoE Studies

| Reagent Category | Specific Examples | Function in Biosensor Development | Considerations for DoE |
|---|---|---|---|
| Biorecognition Elements | Antibodies, enzymes, aptamers, nucleic acids, whole cells [16] [18] | Provide molecular recognition for specific analytes | Quality, purity, and activity must be controlled; Often a categorical factor in DoE |
| Immobilization Materials | Glutaraldehyde, EDC/NHS, SAMs, PEG spacers, affinity tags [18] | Anchor biorecognition elements to transducer surface | Concentration, pH, and time often important continuous factors |
| Signal Transduction Materials | Redox mediators, fluorescent dyes, electrochemiluminescent compounds, enzyme substrates [16] [19] | Generate measurable signal from binding event | Stability and compatibility with detection system must be considered |
| Blocking Agents | BSA, casein, synthetic blocking peptides, commercial blocking buffers [18] | Reduce nonspecific binding on sensor surface | Type and concentration often optimized through DoE |
| Nanomaterials | Gold nanoparticles, graphene, quantum dots, carbon nanotubes [19] [18] | Enhance signal amplification and transducer performance | Size, shape, and functionalization can be factors in DoE |
| Buffer Components | PBS, HEPES, Tris, MES, carbonate-bicarbonate [18] | Maintain optimal pH and ionic strength for biorecognition | pH, ionic strength, and buffer capacity are common continuous factors |

Advanced Visualization of Factor-Response Relationships

The relationship between experimental factors and biosensor responses can be complex, particularly when interaction effects are present. The following diagram illustrates how multiple factors collectively influence critical biosensor performance metrics, highlighting the interconnected nature of these relationships.

Figure 2: Factor-Response Relationships in Biosensor Optimization. This diagram visualizes how multiple experimental factors both directly and interactively influence critical biosensor performance metrics.

Design of Experiments provides a powerful statistical framework for efficiently optimizing biosensor performance by systematically exploring factor-response relationships and their interactions. The methodology enables researchers to simultaneously investigate multiple parameters, significantly reducing experimental effort while providing deeper process understanding compared to traditional OVAT approaches [12] [11]. As biosensing technologies advance toward increasingly sophisticated applications, particularly in point-of-care diagnostics and ultrasensitive detection, the rigorous approach offered by DoE becomes increasingly essential [12] [10].

The core terminology of factors, responses, and interactions forms the foundation for implementing effective DoE strategies in biosensor research. By applying appropriate experimental designs—from initial screening to response surface optimization—researchers can develop robust biosensing platforms with defined design spaces that ensure consistent performance [10]. This systematic approach not only accelerates development timelines but also enhances regulatory compliance by building quality into the process from the earliest stages [10] [17]. As the field continues to evolve, DoE will remain an indispensable tool in the biosensor researcher's toolkit, enabling the development of next-generation sensing technologies through efficient, knowledge-driven optimization.

Design of Experiments (DoE) is a powerful statistical methodology used for planning, conducting, and analyzing controlled tests to evaluate the factors that influence a process or product. In biosensor research and development, DoE provides a structured approach to optimize complex multi-parameter systems efficiently, moving beyond traditional one-variable-at-a-time (OVAT) approaches that often miss critical factor interactions and can lead to suboptimal results [12] [20]. The systematic application of DoE enables researchers to understand both individual factor effects and their interactions while minimizing experimental effort and resources.

This comparative guide examines three prevalent DoE types—factorial, response surface, and mixture designs—within the context of biosensor development. These methodologies address different optimization challenges encountered throughout the biosensor development pipeline, from initial screening of significant factors to final formulation optimization. As the demand for more sensitive, reliable, and cost-effective biosensing platforms grows, particularly for point-of-care diagnostics, the strategic implementation of appropriate DoE methods becomes increasingly critical for accelerating development and enhancing performance [12] [21].

Comparative Analysis of DoE Methodologies

The table below summarizes the key characteristics, applications, and limitations of the three predominant DoE types discussed in this guide.

Table 1: Comprehensive Comparison of Prevalent DoE Types for Biosensor Research

| DoE Type | Primary Function | Typical Model | Key Features | Optimal Use Cases in Biosensor Development | Key Limitations |
|---|---|---|---|---|---|
| Factorial Designs [12] [20] | Factor screening & interaction analysis | First-order linear: $Y = \beta_0 + \sum_i \beta_i X_i + \sum_{i<j} \beta_{ij} X_i X_j + \varepsilon$ | Tests all factor combinations at 2+ levels; estimates main effects & interactions; foundation for more complex designs | Identifying significant factors (e.g., probe concentration, pH, temperature); preliminary optimization of fabrication steps | Requires many runs for many factors; cannot model curvature; optimal point may be outside design space |
| Response Surface Methodology (RSM) [22] [23] | Optimization & finding optimal conditions | Second-order quadratic: $Y = \beta_0 + \sum_i \beta_i X_i + \sum_i \beta_{ii} X_i^2 + \sum_{i<j} \beta_{ij} X_i X_j + \varepsilon$ | Models curvature; identifies stationary points (max, min, saddle); Central Composite (CCD) & Box-Behnken (BBD) are common designs | Fine-tuning analytical performance (LOD, sensitivity); optimizing incubation time/temp; final performance maximization | More complex model requiring more runs than factorial designs; assumes continuous factors |
| Mixture Designs [12] [23] | Optimizing component proportions | Special polynomial (e.g., Scheffé): Components sum to a constant (1 or 100%) | Proportions of components are dependent; experimental space is a simplex; models response based on relative proportions | Optimizing reagent cocktails; formulating immobilization matrices; developing nanocomposite sensing layers | Restricted to formulation problems; experimental space constrained by mixture constraint |
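To make the stationary points in the RSM row concrete, the sketch below locates and classifies the stationary point of a fitted two-factor second-order model. The coefficient values are hypothetical. The calculation sets both partial derivatives of the quadratic to zero and solves the resulting 2×2 linear system by Cramer's rule.

```python
# Hypothetical fitted coefficients of
# Y = b0 + b1*X1 + b2*X2 + b11*X1^2 + b22*X2^2 + b12*X1*X2 (coded units).
b0, b1, b2 = 80.0, 2.0, 4.0
b11, b22, b12 = -2.0, -4.0, 1.0

# Gradient = 0 gives:
#   2*b11*x1 +   b12*x2 = -b1
#     b12*x1 + 2*b22*x2 = -b2
det = (2 * b11) * (2 * b22) - b12 * b12
x1_s = ((-b1) * (2 * b22) - b12 * (-b2)) / det
x2_s = ((2 * b11) * (-b2) - b12 * (-b1)) / det

# Classify using the Hessian [[2*b11, b12], [b12, 2*b22]].
if det < 0:
    kind = "saddle"
elif det > 0 and b11 < 0:
    kind = "maximum"
elif det > 0 and b11 > 0:
    kind = "minimum"
else:
    kind = "degenerate"

y_s = (b0 + b1 * x1_s + b2 * x2_s
       + b11 * x1_s ** 2 + b22 * x2_s ** 2 + b12 * x1_s * x2_s)
print(kind, (x1_s, x2_s), y_s)
```

A stationary point only matters in practice if it falls inside the region where the model was fitted. A point far outside the coded range of about -1 to +1 signals that the experimental domain should be shifted.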

Factorial Designs: The Foundation for Factor Screening

Core Principles and Experimental Protocols

Factorial designs form the cornerstone of systematic experimentation, enabling the simultaneous study of multiple factors and their interactions. In a full factorial design, all possible combinations of the factor levels are investigated. For k factors each at 2 levels, this requires 2^k experiments [12]. For example, a 2^2 factorial design with factors X1 and X2 involves four experimental runs: (-1, -1), (+1, -1), (-1, +1), and (+1, +1), where -1 and +1 represent the low and high levels of each factor, respectively [12].
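As a minimal sketch of the enumeration described above, the following Python snippet lists the runs of a two-level full factorial design in coded units. The function name `full_factorial` is an illustrative choice, not part of any cited protocol.

```python
from itertools import product

def full_factorial(k):
    """Return all 2**k runs of a two-level design in coded units (-1, +1)."""
    # product() varies the last position fastest; reversing each tuple makes
    # the first factor alternate fastest, which gives the standard run order.
    return [tuple(reversed(run)) for run in product((-1, 1), repeat=k)]

design = full_factorial(2)
for run_number, levels in enumerate(design, start=1):
    print(run_number, levels)
```

For k = 2 this reproduces the four runs listed in the text, in the same order; for k = 3 it returns eight runs.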

The standard protocol for implementing a factorial design in biosensor development begins with identifying potential influential factors through literature review or preliminary experiments. These factors are then discretized into defined levels (typically two initially). The experimental matrix is constructed, and runs are randomized to minimize confounding effects of external variables. After conducting experiments and measuring responses, statistical analysis (typically ANOVA and regression analysis) identifies significant main effects and interactions [20] [24].
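The analysis step can be illustrated on a 2^2 design, where each effect is simply a contrast between the average response at the high and low levels. The response values below are hypothetical numbers chosen for illustration, not data from the cited studies, and the fixed random seed is only there to make the run order reproducible.

```python
import random

# Coded 2^2 design in standard order: (X1, X2)
design = [(-1, -1), (1, -1), (-1, 1), (1, 1)]
# Hypothetical responses (e.g., peak current in µA), one per run above
responses = [10.0, 14.0, 12.0, 22.0]

def effect(contrast, y):
    """Average response where contrast = +1 minus average where contrast = -1."""
    half = len(y) / 2
    return sum(c * v for c, v in zip(contrast, y)) / half

x1 = [run[0] for run in design]
x2 = [run[1] for run in design]
x1x2 = [a * b for a, b in zip(x1, x2)]  # interaction column

main_x1 = effect(x1, responses)
main_x2 = effect(x2, responses)
interaction = effect(x1x2, responses)

# Randomize the execution order to guard against time-related drift
run_order = list(range(len(design)))
random.Random(0).shuffle(run_order)

print("X1 effect:", main_x1)
print("X2 effect:", main_x2)
print("X1*X2 interaction:", interaction)
print("Execution order (indices into design):", run_order)
```

With replicated runs, the same contrasts would normally be tested for significance by ANOVA or regression, as the protocol describes.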

Application Case Study: Electrochemical Biosensor Optimization

Factorial designs have been successfully applied to optimize electrochemical biosensors. In one study, a fractional factorial design was employed to systematically evaluate the significance of five different factors affecting the analytical performance of an in-situ film electrode for detecting Zn(II), Cd(II), and Pb(II). The factors included the mass concentrations of Bi(III), Sn(II), and Sb(III) used to design the electrode, along with accumulation potential and accumulation time [24].

This approach enabled researchers to determine which factors significantly impacted key analytical parameters—including limit of quantification, linear concentration range, sensitivity, accuracy, and precision—simultaneously. The factorial design revealed significant factor interactions that would have been missed in traditional OVAT approaches, ultimately leading to an optimized electrode formulation with superior performance compared to single-element electrodes [24].

Response Surface Methodology: Modeling for Optimization

Core Principles and Experimental Protocols

Response Surface Methodology (RSM) comprises statistical techniques for designing experiments, building models, evaluating factor effects, and searching for optimal conditions. RSM is particularly valuable when the goal is to optimize a response influenced by several factors, especially when the relationship between factors and response may exhibit curvature [22] [23].

The most common RSM designs are the Central Composite Design (CCD) and the Box-Behnken Design (BBD). A CCD consists of 2^k factorial (or fractional factorial) points, 2k axial points, and center points, allowing efficient estimation of a second-order model [23]. The BBD is a three-level alternative that places runs at the midpoints of the edges of the factor space, together with center points. It contains neither the factorial corner points nor axial points, and it often requires fewer runs than a CCD for the same number of factors [22] [25].
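The structure of a CCD can be sketched in a few lines of Python. The function below is an illustrative helper, not a library API; it assumes a full 2^k factorial core and, when no axial distance is supplied, uses the rotatable choice alpha = (2^k)^(1/4). The number of center points is left to the user.

```python
from itertools import product

def central_composite(k, alpha=None, n_center=1):
    """Coded CCD points: 2**k factorial, 2*k axial, and n_center center runs."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable design for a full factorial core
    factorial = [tuple(float(v) for v in run)
                 for run in product((-1, 1), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha  # move along one axis only
            axial.append(tuple(point))
    center = [tuple([0.0] * k)] * n_center
    return factorial + axial + center

ccd = central_composite(3, n_center=6)
print(len(ccd), "runs for 3 factors")
```

With three factors and six center points this gives 8 + 6 + 6 = 20 runs.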

The experimental workflow for RSM begins with identifying factors and their ranges, typically informed by prior factorial experiments. An appropriate RSM design (CCD, BBD, etc.) is selected based on the number of factors and resource constraints. Experiments are conducted in randomized order, and data is collected for the response variable(s). A second-order model is then fitted to the data, and its adequacy is checked using statistical measures (R², adjusted R², lack-of-fit test) [22]. Finally, the fitted model is analyzed through contour plots and surface plots to locate optimal conditions [23].
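Once a second-order model has been fitted, the stationary point follows from setting both partial derivatives to zero. The sketch below does this in closed form for two factors; the coefficients are hypothetical values in coded units, chosen only to illustrate the calculation.

```python
def stationary_point(b1, b2, b11, b22, b12):
    """Stationary point of y = b0 + b1*x1 + b2*x2 + b11*x1^2 + b22*x2^2 + b12*x1*x2.

    Solves the 2x2 linear system obtained from dy/dx1 = 0 and dy/dx2 = 0.
    """
    det = 4 * b11 * b22 - b12 ** 2
    if det == 0:
        raise ValueError("no unique stationary point (ridge system)")
    x1 = (b12 * b2 - 2 * b22 * b1) / det
    x2 = (b12 * b1 - 2 * b11 * b2) / det
    if det < 0:
        kind = "saddle"
    elif b11 < 0:
        kind = "maximum"
    else:
        kind = "minimum"
    return x1, x2, kind

# Hypothetical fitted coefficients (coded units)
x1, x2, kind = stationary_point(b1=1.0, b2=0.5, b11=-2.0, b22=-1.0, b12=1.0)
print(round(x1, 3), round(x2, 3), kind)
```

A stationary point is only useful as an optimum if it lies inside the region actually covered by the design, so the coded coordinates should be checked against the design range before converting back to real units.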

Application Case Study: Tuberculosis DNA Biosensor

RSM has demonstrated significant utility in optimizing complex biosensor systems. In developing an electrochemical DNA biosensor for detecting Mycobacterium tuberculosis, researchers employed RSM to optimize eleven different factors simultaneously [21]. The process began with a Plackett-Burman screening design to identify the most influential factors, which were then further optimized using a central composite design.

This systematic approach enabled the researchers to efficiently navigate the complex multivariable system, accounting for interactions among factors such as probe concentration, immobilization time, and hybridization conditions. The RSM-guided optimization resulted in a biosensor with desirable analytical performance, including minimal analysis time coupled with high sensitivity and selectivity for detecting M. tuberculosis DNA targets [21].

Table 2: Key Research Reagent Solutions for Biosensor Optimization Studies

| Reagent/Material | Primary Function in DoE Studies | Exemplary Application |

|---|---|---|

| Multi-walled Carbon Nanotubes (MWCNTs) [21] | Enhance electrical conductivity & surface area for immobilization | Electrochemical DNA biosensor for Mycobacterium tuberculosis |

| Hydroxyapatite Nanoparticles (HAPNPs) [21] | Biomaterial substrate for reliable biomolecule immobilization | Improving biocompatibility in genosensors |

| Polypyrrole (PPY) [21] | Conductive polymer for increased biocompatibility & stability | Electrochemical biosensor fabrication |

| Heavy Metal Ions (Bi(III), Sn(II), Sb(III)) [24] | Formation of in-situ film electrodes for trace metal detection | Stripping voltammetry for Zn(II), Cd(II), Pb(II) detection |

Mixture Designs: Optimizing Component Proportions

Core Principles and Experimental Protocols

Mixture designs represent a specialized class of experimental designs used when the response depends on the relative proportions of components in a mixture rather than their absolute amounts. The critical constraint in mixture designs is that the sum of all component proportions must equal a constant, typically 1 or 100% [12] [23]. This constraint distinguishes mixture designs from standard factorial and RSM designs, as changing one component's proportion necessarily alters the proportions of other components.

Common mixture designs include simplex-lattice designs, simplex-centroid designs, and extreme-vertices designs (the latter being used when additional constraints on component proportions exist). The experimental space for a mixture design with q components can be represented as a (q-1) dimensional simplex—a line for two components, triangle for three components, tetrahedron for four components, and so forth [23].
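As an illustration of the simplex geometry, the following sketch enumerates a {q, m} simplex-lattice design, in which every component proportion is a multiple of 1/m. The function name is an assumption made for this example, and exact fractions are used so that each blend sums to exactly 1.

```python
from fractions import Fraction
from itertools import product

def simplex_lattice(q, m):
    """All q-component blends with proportions in {0, 1/m, ..., 1} that sum to 1."""
    points = []
    for counts in product(range(m + 1), repeat=q):
        if sum(counts) == m:  # enforce the mixture constraint
            points.append(tuple(Fraction(c, m) for c in counts))
    return points

for blend in simplex_lattice(3, 2):
    print([str(p) for p in blend])
```

For three components and m = 2 this yields six blends: the three pure components at the vertices of the triangle and the three 50:50 binary blends at the edge midpoints.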

The implementation protocol for mixture designs involves defining the components and any constraints on their proportions (minimum and/or maximum values). An appropriate mixture design is selected based on the number of components and the nature of constraints. Experiments are conducted according to the design, with careful preparation of mixtures with precise proportions. Specialized mixture models (typically Scheffé polynomials) are fitted to the data, and the model is used to optimize the mixture composition to achieve the desired response characteristics [12].
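The quadratic Scheffé polynomial mentioned above has no intercept: it contains one linear term per component and one blending term per pair of components. A minimal sketch of evaluating such a model follows; the coefficient values are hypothetical and serve only to show how a positive blending term produces a synergistic response.

```python
from itertools import combinations

def scheffe_quadratic(x, linear, binary):
    """Scheffé quadratic mixture model: sum(b_i*x_i) + sum(b_ij*x_i*x_j), no intercept."""
    if abs(sum(x) - 1.0) > 1e-9:
        raise ValueError("component proportions must sum to 1")
    y = sum(b * xi for b, xi in zip(linear, x))
    for i, j in combinations(range(len(x)), 2):
        y += binary[(i, j)] * x[i] * x[j]
    return y

# Hypothetical coefficients for a three-component sensing layer
linear = [10.0, 6.0, 8.0]
binary = {(0, 1): 12.0, (0, 2): 0.0, (1, 2): -4.0}

pure_first = scheffe_quadratic([1.0, 0.0, 0.0], linear, binary)
blend = scheffe_quadratic([0.5, 0.5, 0.0], linear, binary)
print(pure_first, blend)
```

Here the 50:50 blend of the first two components is predicted to respond more strongly than either pure component, which is the kind of synergy the linear terms alone cannot represent.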

Application Context in Biosensor Research

In biosensor development, mixture designs find particular utility in optimizing formulation parameters where component proportions are critical. This includes developing nanocomposite materials for electrode modification, where the relative amounts of conductive materials, binding agents, and bioactive components must be balanced to achieve optimal sensor performance [12]. Similarly, mixture designs can optimize the composition of reagent cocktails used in enzymatic biosensors or the formulation of immobilization matrices that preserve biomolecule functionality while ensuring stability and accessibility.

The unique advantage of mixture designs in these applications is their ability to model synergistic or antagonistic effects between components and identify the optimal balance between sometimes competing requirements, such as sensitivity, stability, and response time.

Integrated Workflow and Visual Guide

The strategic application of different DoE types throughout the biosensor development process creates an efficient optimization pipeline. The following diagram illustrates the typical sequential relationship between these methodologies and their primary roles in the optimization workflow.

Typical DoE Application Workflow in Biosensor Development

This sequential approach begins with factorial designs to screen numerous potential factors efficiently, identifying which parameters significantly affect biosensor performance. The knowledge gained then informs the selection of factors and their ranges for subsequent RSM studies, which model curvature and interaction effects to locate optimal operating conditions. When the optimization challenge involves formulating mixtures with components that must sum to a constant, mixture designs become the appropriate tool, often applied after critical components have been identified through earlier experimental phases [12] [22] [23].

Factorial, response surface, and mixture designs each offer distinct capabilities for addressing different optimization challenges in biosensor research and development. Factorial designs provide an efficient approach for screening multiple factors and identifying significant interactions. Response Surface Methodology enables detailed modeling of complex response surfaces with curvature, guiding researchers to optimal operating conditions. Mixture designs address the specialized challenge of optimizing component proportions in formulations where the total must sum to a constant.

The comparative analysis presented in this guide demonstrates that these DoE methodologies are not mutually exclusive but rather complementary tools that can be integrated into a powerful optimization strategy. By selecting the appropriate design based on the specific research question and stage of development, researchers can systematically navigate complex multivariable systems, ultimately accelerating the development of biosensors with enhanced analytical performance, reliability, and commercial viability. As biosensing technologies continue to advance toward more complex multi-parameter assays and point-of-care applications, the strategic implementation of these DoE approaches will become increasingly essential for achieving robust optimization with minimal experimental resources.

The development of high-performance biosensors is a complex, multivariate challenge that requires careful balancing of sensitivity, specificity, and robustness. Traditional optimization approaches, often referred to as "One Variable at a Time" (OVAT), systematically alter a single factor while holding others constant. While intuitively simple, this method is experimentally inefficient, prone to finding local optima rather than global optima, and critically, cannot detect factor interactions where the optimal level of one factor depends on the setting of another [11]. In contrast, Design of Experiments (DoE) is a statistical approach that varies all relevant factors simultaneously according to a predefined experimental matrix. This methodology enables researchers to not only identify critical factors with greater experimental efficiency but also to model complex interactions and generate detailed predictive maps of a process's behavior [11].

The adoption of DoE is particularly valuable in biosensor development, where performance is governed by the interplay of multiple biochemical and physical parameters. For genetically encoded biosensors, key performance metrics include dynamic range, operating range, response time, and signal-to-noise ratio [26]. Optimizing these metrics often involves tuning the stoichiometry of biosensor circuit components (e.g., promoters, ribosome binding sites) and host-biosensor interactions, creating a vast combinatorial design space [27]. The structured, fractional sampling approach of DoE algorithms is uniquely positioned to efficiently map this space, accelerating the development of biosensors with tailored performance characteristics for applications in diagnostics, biomanufacturing, and metabolic engineering [27] [26].

Comparative Analysis of DoE Methods for Biosensor Research

Different stages of the biosensor development workflow necessitate different DoE strategies. The choice of design is strategic, balancing experimental effort against the depth of information required.

Types of DoE Designs and Their Applications

The table below summarizes the primary DoE designs relevant to biosensor development.

Table 1: Key DoE Designs in Biosensor Development

| DoE Design Type | Primary Objective | Key Advantages | Typical Application in Biosensor Development |

|---|---|---|---|

| Screening Designs (e.g., Fractional Factorial, Plackett-Burman) | To efficiently identify the few critical factors from a large set of potential variables [11]. | High experimental efficiency; minimizes initial runs to find vital factors [11]. | Initial assessment of factors (e.g., promoter strength, RBS sequence, ligand concentration, host cell type) affecting biosensor dynamic range [27]. |

| Response Surface Optimization (RSO) Designs (e.g., Central Composite, Box-Behnken) | To model non-linear relationships and locate optimal factor settings after critical factors are known [11]. | Provides a detailed mathematical model of the process; can find a true optimum and map the response surface [11]. | Fine-tuning the performance of a selected biosensor configuration to maximize sensitivity or minimize response time [11]. |

| Mixture Designs | To optimize the proportions of components in a mixture that sum to a constant total. | Specifically designed for formulation challenges where component ratios are critical. | Optimizing the composition of a sensing cocktail or the electrolyte solution in an electrochemical biosensor [28]. |

DoE vs. OVAT: A Quantitative Comparison

The fundamental advantages of DoE over the OVAT approach can be quantified. A study on copper-mediated radiofluorination, a process analogous to complex biosensor optimization, demonstrated that DoE identified critical factors and modeled their behavior with more than two-fold greater experimental efficiency than the traditional OVAT approach [11]. Furthermore, while OVAT results are often misleading due to undetected factor interactions, DoE explicitly models these interactions. For instance, a biosensor's response time might be optimal at a specific combination of temperature and pH that would not be discovered if each factor were optimized independently.
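The effect of an undetected interaction can be shown with a small simulation. The response function below is entirely synthetic, constructed so that the best setting of one factor depends on the other; it does not represent any real biosensor or the radiofluorination study. The sketch compares a single OVAT pass against a four-run 2^2 factorial on the corners of the same region.

```python
def response(x, y):
    """Synthetic surface with a strong x-y interaction (illustration only)."""
    return 10 - 3 * (x - y) ** 2 - 0.25 * (x + y - 8) ** 2

levels = range(5)  # five settings per factor: 0, 1, 2, 3, 4

# OVAT: optimize x with y fixed at 0, then optimize y at the chosen x
best_x = max(levels, key=lambda x: response(x, 0))
best_y = max(levels, key=lambda y: response(best_x, y))
ovat = (best_x, best_y, response(best_x, best_y))

# 2^2 factorial on the corners of the same region (4 runs)
corners = [(0, 0), (4, 0), (0, 4), (4, 4)]
y = [response(a, b) for a, b in corners]
interaction = (y[0] - y[1] - y[2] + y[3]) / 2  # X1*X2 contrast
best_corner = max(corners, key=lambda p: response(*p))

print("OVAT result:", ovat)
print("Best factorial corner:", best_corner, response(*best_corner))
print("Interaction effect:", interaction)
```

On this surface the OVAT pass settles on a setting far from the best one, while the factorial runs both reach the better corner and expose a large interaction effect that signals the two factors must be tuned together.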

Experimental Protocols and Workflows

Implementing DoE involves a structured workflow, from initial screening to final validation. The following protocol outlines a generalized approach applicable to various biosensor types.

A Generalized DoE Workflow for Biosensor Optimization

The sequential DoE workflow moves from factor screening, through response surface optimization, to validation of the predicted optimum. Each stage is detailed below.

Detailed Experimental Methodology

Phase 1: Factor Screening

- Objective Definition: Clearly define the primary response variables (e.g., fluorescence output, electrochemical current, signal-to-noise ratio) and the desired performance targets [26].

- Factor Selection: Brainstorm and select all potential factors that could influence the responses. For a genetic biosensor, this could include plasmid copy number, inducer concentration, incubation temperature, and host cell strain [27] [26].

- Experimental Design: Select a screening design, such as a Resolution III or IV fractional factorial design. This allows for the evaluation of 5-10 factors in only 16-32 experimental runs, efficiently separating the vital few factors from the trivial many [11].

- Execution & Analysis: Execute the experiments in a randomized order to avoid confounding from lurking variables. Analyze the data using multiple linear regression (MLR) to identify factors and two-factor interactions that have a statistically significant effect on the responses [11].
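The screening phase above can be sketched with a 16-run fractional factorial for six factors. The generators used here (E = ABC, F = BCD) are a common textbook choice that gives a Resolution IV design; they are an assumption for illustration, and the factor letters are placeholders for whatever variables are being screened.

```python
from itertools import product

def fractional_factorial_6_2():
    """16-run 2^(6-2) design: base factors A-D, generated E = ABC and F = BCD."""
    runs = []
    for a, b, c, d in product((-1, 1), repeat=4):
        e = a * b * c  # generator for factor E
        f = b * c * d  # generator for factor F
        runs.append((a, b, c, d, e, f))
    return runs

design = fractional_factorial_6_2()
print(len(design), "runs for 6 factors")
for run in design[:4]:
    print(run)
```

In a Resolution IV design the main effects are not aliased with any two-factor interaction, although some two-factor interactions are aliased with each other, which is usually acceptable at the screening stage.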

Phase 2: Response Surface Optimization

- Factor Refinement: Select the 2-4 most critical factors identified in the screening phase for further study.

- Advanced Design: Employ an RSO design like a Central Composite Design (CCD). This design includes axial points that allow for the estimation of curvature, revealing non-linear effects and enabling the location of a true performance maximum or minimum [11].

- Model Building: Fit the data to a quadratic model. The resulting equation describes the response surface and can be visualized in 3D surface or 2D contour plots.

- Prediction & Validation: Use the model to predict the optimal factor settings. Conduct a small number of confirmation experiments (e.g., 3-5 replicates at the predicted optimum) to validate the model's accuracy and ensure the biosensor performance meets expectations [11].
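The confirmation step at the end of Phase 2 can be sketched as a simple check of whether the model's prediction falls inside a t-based confidence interval around the mean of the replicate runs. The replicate values and the predicted value are hypothetical, and the critical value 2.776 is the two-sided 95% t quantile for 4 degrees of freedom (five replicates). A more rigorous comparison would use the prediction interval of the fitted model.

```python
from math import sqrt
from statistics import mean, stdev

def confirm_prediction(replicates, predicted, t_crit):
    """Return (low, high, ok): a t-interval for the replicate mean and
    whether that interval covers the model prediction."""
    n = len(replicates)
    m = mean(replicates)
    half_width = t_crit * stdev(replicates) / sqrt(n)
    low, high = m - half_width, m + half_width
    return low, high, low <= predicted <= high

# Hypothetical confirmation runs at the predicted optimum
replicates = [41.2, 39.8, 40.5, 40.9, 40.1]
T_CRIT_95_DF4 = 2.776  # two-sided 95% t quantile, 4 degrees of freedom

low, high, ok = confirm_prediction(replicates, predicted=40.0, t_crit=T_CRIT_95_DF4)
print(f"95% CI for the mean: ({low:.2f}, {high:.2f}); prediction confirmed: {ok}")
```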

Essential Research Reagent Solutions for DoE in Biosensor Development

The following table catalogues key materials and reagents commonly employed in the experimental phases of biosensor development, highlighting their function within a DoE framework.

Table 2: Key Research Reagent Solutions for Biosensor DoE Studies

| Reagent / Material | Function in Biosensor Development & DoE |

|---|---|

| Allosteric Transcription Factors (TFs) | Protein-based sensor module; its concentration and identity are common factors to screen and optimize in genetic circuit DoE [26]. |

| Riboswitches / Toehold Switches | RNA-based sensors; their sequence and structure are design variables that can be tuned via DoE to alter sensitivity and dynamic range [26]. |

| Promoter & RBS Libraries | Provide a source of genetic variability; DoE is used to sample this library space efficiently to find optimal combinations for biosensor output [27]. |

| Electroactive Bacteria / Enzymes | Biological components in bioelectronic sensors (e.g., for microbial fuel cells); their type and concentration are key factors in a DoE to enhance signal generation [29]. |

| Organic Electrochemical Transistors (OECTs) | Signal amplification components; their material properties and integration configuration (anode-gate vs. cathode-gate) are factors for optimizing signal-to-noise ratio [29]. |

| High-Throughput Automation Platforms | Enables the practical execution of DoE by automating liquid handling, cell culture, and titration analyses, allowing for the rapid testing of dozens to hundreds of conditions [27]. |

Case Studies and Data Presentation

Case Study 1: Optimizing Genetically Encoded Biosensors

A protocol for biosensor development detailed the use of DoE to efficiently sample the vast combinatorial design space of genetic circuits [27]. The workflow involved creating promoter and ribosome binding site (RBS) libraries, which were transformed into structured dimensionless inputs. A DoE algorithm was then used to perform fractional sampling of this space, coupled with automated effector titration analysis. This approach successfully identified distinct biosensor configurations with both digital and analog dose-response curves, demonstrating the power of DoE in navigating complex biological design problems. The resulting data allows for the computational mapping of the full experimental design space, guiding the selection of optimal configurations without exhaustive testing.

Case Study 2: Amplifying Bioelectronic Sensor Signals

A breakthrough study utilized a DoE-like approach to enhance bioelectronic sensors, integrating enzymatic and microbial fuel cells with Organic Electrochemical Transistors (OECTs) [29]. Researchers explored different configurations (cathode-gate vs. anode-gate) and materials, which function as the "factors" in a DoE. The results were dramatic: signal amplification by factors of 1,000 to 7,000 and a significant improvement in the signal-to-noise ratio. This enabled the detection of arsenite in water at concentrations as low as 0.1 µmol/L. The quantitative outcomes are summarized in the table below, showcasing the performance gains achievable through systematic optimization.

Table 3: Performance Data from OECT-Amplified Biosensor Study [29]

| Sensor Configuration | Amplification Factor | Key Demonstrated Application | Detection Performance |

|---|---|---|---|

| Enzymatic Fuel Cell + OECT (Cathode-Gate) | 1,000 - 7,000 | Glucose / Lactate Sensing | High sensitivity in sweat for metabolic monitoring |

| Microbial Fuel Cell + OECT (Engineered E. coli) | ~1,000 | Arsenite Detection in Water | Limit of detection: 0.1 µmol/L |

The integration of Design of Experiments into the biosensor development workflow represents a paradigm shift from iterative, sequential testing to a holistic, model-based approach. DoE's superior experimental efficiency and its unique ability to quantify factor interactions provide researchers with a powerful toolkit for navigating the inherent complexity of biosensor systems. From initial screening of genetic components to the fine-tuning of electrochemical interfaces, DoE facilitates a more rapid and insightful path to optimized performance. As the demand for more sensitive, specific, and robust biosensors grows in fields from personalized medicine to environmental monitoring, the adoption of rigorous statistical methodologies like DoE will be crucial for accelerating innovation and translating promising concepts into practical, high-performance devices.

In the field of biosensor development, achieving optimal performance in sensitivity, specificity, and reproducibility is paramount for clinical and environmental applications. Traditional one-variable-at-a-time (OVAT) optimization approaches often fail to capture the complex interactions between multiple factors that influence biosensor performance. A systematic approach utilizing Design of Experiments (DoE) provides a powerful statistical framework for efficiently exploring these multidimensional experimental spaces. This methodology enables researchers to simultaneously investigate numerous factors and their interactions, leading to significantly enhanced biosensor characteristics while reducing experimental time and resources. For researchers and drug development professionals, adopting structured optimization methods represents a paradigm shift from iterative, intuitive tuning to data-driven, model-based development, ultimately accelerating the translation of biosensors from laboratory concepts to reliable point-of-care diagnostics [12] [3].

This guide objectively compares the performance outcomes of different DoE methodologies applied to biosensor optimization, presenting experimental data that demonstrates their respective advantages in enhancing critical performance parameters.

Comparative Analysis of DoE Methods for Biosensors

Fundamental DoE Methodologies in Biosensor Development

Systematic optimization in biosensor research employs several core DoE methodologies, each with distinct advantages for specific optimization goals. Factorial designs form the foundation, systematically investigating the effects of multiple factors and their interactions by testing each factor at two or more levels across all possible combinations. For more complex response surfaces with curvature, central composite designs extend factorial designs by adding center and axial points, enabling the fitting of second-order quadratic models. When dealing with mixture components where the total proportion must sum to 100%, mixture designs provide specialized methodologies for formulating optimal detection interfaces and immobilization matrices. These methodologies collectively enable researchers to move beyond univariate approaches that often miss critical factor interactions, instead providing comprehensive maps of the experimental domain that reveal global, rather than localized, optima [12].

The selection of appropriate DoE methodology depends heavily on the biosensor's development stage and optimization objectives. Screening designs efficiently identify the most influential factors from a large set of potential variables, while response surface methodologies precisely characterize optimal regions of the experimental space. For biosensors with complex, non-linear behaviors, sequential DoE approaches iteratively refine the experimental domain based on previous results, progressively moving toward the global optimum with minimal experimental effort [12].

Performance Comparison of DoE Methods

Table 1: Performance Outcomes of Different DoE Methods in Biosensor Optimization

| DoE Method | Key Applications in Biosensor Research | Impact on Sensitivity | Impact on Specificity | Impact on Reproducibility | Experimental Efficiency |

|---|---|---|---|---|---|

| Factorial Designs | Screening multiple factors (e.g., immobilization conditions, buffer composition) | Identifies factors with significant effects | Reveals interactions affecting binding selectivity | Establishes robust operating conditions | High efficiency for 2-5 factors; minimal runs for maximum information [12] |

| Central Composite Designs | Optimizing complex response surfaces (e.g., signal-to-noise ratio, detection limit) | Enables precise modeling of quadratic effects for LOD optimization | Models non-linear effects on molecular recognition | Characterizes curvature for robust operational windows | Moderate efficiency; requires more runs than factorial but provides comprehensive model [12] |

| Definitive Screening Designs | Evaluating genetic circuit components (promoters, RBS) with limited resources | Identifies optimal genetic configurations for signal amplification | Maintains specificity through proper regulatory control | Reduces biological variability through optimal expression balancing | Exceptional efficiency; screens many factors with minimal experimental runs [3] |

| Mixture Designs | Formulating optimal biorecognition layer compositions | Optimizes transducer-biolayer interface for signal transduction | Balances recognition element density to minimize non-specific binding | Ensures consistent layer fabrication through precise composition control | High efficiency for formulation optimization; accounts for dependency between components [12] |

Experimental Data Supporting DoE Advantages

Substantial experimental evidence demonstrates the advantages of systematic DoE approaches over traditional methods. In one notable study optimizing a whole-cell biosensor for protocatechuic acid (PCA), researchers applied a definitive screening design to systematically modify biosensor dose-response behavior. The results showed remarkable improvements: the maximum signal output increased by up to 30-fold, dynamic range improved by >500-fold, sensing range expanded by approximately 4 orders of magnitude, and sensitivity increased by >1500-fold compared to initial constructs. Furthermore, the systematic approach enabled modulation of the response curve slope to produce biosensors with both digital and analog dose-response characteristics suited for different applications [3].

Another advantage documented for systematic approaches is the reduction in experimental requirements. An OVAT optimization of a three-factor system needs a separate series of experiments for each factor and still cannot estimate interactions, whereas a two-level full factorial design covers the same three factors in 2^3 = 8 runs while capturing all interaction effects. This efficiency enables researchers to explore broader experimental spaces more thoroughly, increasing the likelihood of discovering truly optimal conditions rather than local maxima that represent suboptimal performance [12] [3].

Experimental Protocols for DoE in Biosensor Optimization

Protocol for DoE-Based Optimization of Whole-Cell Biosensors

The following protocol outlines the key steps for implementing a definitive screening design to optimize genetically encoded biosensors, based on established methodologies [3] [27]:

Define Optimization Objectives and Critical Quality Attributes: Identify key biosensor performance metrics including dynamic range (ON/OFF ratio), sensitivity (EC50), maximum output signal, and background expression (leakiness). Establish target values for each attribute based on the intended application.

Select Genetic Factors and Experimental Ranges: Create modular genetic libraries for regulatory components (promoters, RBS) with varying expression strengths. Characterize each library element's expression level quantitatively. Transform these discrete genetic variants into continuous factors by normalizing expression levels and assigning coded levels (-1, 0, +1) representing low, medium, and high expression.

Design Experimental Matrix: Select an appropriate DoE array (definitive screening design, fractional factorial, etc.) based on the number of factors and resources. The design matrix specifies the exact combination of factor levels for each experimental construct. For a three-factor system, a definitive screening design typically requires 10-15 constructs to efficiently explore the design space.

Construct Biosensor Variants and Characterize Performance: Assemble biosensor constructs according to the experimental matrix using automated cloning methods where possible. Measure biosensor response across a comprehensive concentration range of the target analyte (e.g., 0-1 mM PCA), with appropriate replicates (n≥3) to account for biological variability.

Statistical Analysis and Model Building: Fit response data to mathematical models using linear regression. Identify significant factors and interaction effects through ANOVA. Generate response surface models predicting biosensor performance across the entire experimental space.

Validation and Refinement: Select predicted optimal configurations from the model and validate experimentally. If necessary, perform iterative DoE rounds with refined experimental ranges to converge on the global optimum.
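The coding step in the protocol above, in which characterized library elements are mapped onto levels between -1 and +1, can be sketched as follows. The promoter names and strength values are hypothetical, and a linear scale is assumed. If measured expression levels span several orders of magnitude, a logarithmic transform before coding may be more appropriate.

```python
def code_levels(values):
    """Map measured strengths onto [-1, +1] using the range midpoint and half-range."""
    low, high = min(values), max(values)
    center = (high + low) / 2
    half_range = (high - low) / 2
    return [(v - center) / half_range for v in values]

# Hypothetical promoter strengths (arbitrary fluorescence units)
promoter_strengths = {"P_low": 100.0, "P_mid": 550.0, "P_high": 1000.0}

coded = dict(zip(promoter_strengths, code_levels(list(promoter_strengths.values()))))
print(coded)
```

Library elements whose measured strength does not fall exactly on a design level receive an intermediate coded value, which can still be used as a continuous regressor when fitting the model.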

Research Reagent Solutions for DoE Biosensor Studies

Table 2: Essential Research Reagents and Materials for DoE Biosensor Optimization

| Reagent/Material | Function in Biosensor Optimization | Application Examples |

|---|---|---|

| Modular Promoter Libraries | Provides tunable transcriptional control for regulatory components | Varying expression of allosteric transcription factors (e.g., PcaV) [3] |

| RBS Library | Modulates translation initiation rates for fine-tuning protein expression | Optimizing reporter gene (e.g., GFP) expression levels [3] |

| Allosteric Transcription Factors | Biological recognition element conferring specificity to target molecules | PCA detection (PcaV), ferulic acid detection [3] |

| Reporter Genes (GFP, Enzymatic) | Generates measurable output signal corresponding to analyte concentration | Quantitative fluorescence measurements, colorimetric assays [3] |

| Analytical Grade Target Analytes | Used for dose-response characterization and sensitivity assessment | Protocatechuic acid, ferulic acid for lignin-derived biomarker detection [3] |

| High-Throughput Cloning Systems | Enables rapid assembly of multiple biosensor genetic constructs | Golden Gate assembly, Gibson assembly for library construction [27] |

| Automated Cultivation and Assay Platforms | Provides reproducible, scalable experimental execution for multiple conditions | Robotic liquid handling, microplate readers for high-throughput characterization [27] |

Visualization of Systematic Optimization Workflows

DoE Optimization Process for Biosensors

Biosensor Architecture and Optimization Targets

The comparative analysis presented indicates that systematic DoE approaches can outperform traditional OVAT methods across the critical biosensor performance parameters examined. The experimental data show that proper implementation of structured optimization strategies can enhance biosensor sensitivity by several orders of magnitude, improve specificity through understanding of factor interactions, and support reproducibility through statistically defined optimal operating conditions. For researchers and drug development professionals, the adoption of these methodologies represents not merely a technical improvement but a fundamental shift toward more efficient, predictive, and robust biosensor development. The systematic mapping of experimental space enables both the achievement of performance targets and the discovery of novel biosensor configurations with emergent properties, ultimately accelerating the development of next-generation diagnostic and monitoring platforms.

Applied DoE Strategies for Optical and Electrochemical Biosensors

In the development and optimization of biosensors, researchers are often confronted with a large number of potential factors that could influence performance metrics such as sensitivity, selectivity, and detection limit. Screening designs serve as powerful statistical tools to efficiently identify which factors among many candidates have significant effects on the response variables, thereby focusing subsequent optimization efforts on the truly critical parameters. Within the framework of Design of Experiments (DoE), these screening methodologies enable scientists to navigate complex experimental spaces with structured approaches, minimizing experimental runs while maximizing information gain. This comparative analysis focuses on two fundamental screening designs: full factorial designs and Plackett-Burman (PB) designs, examining their theoretical foundations, practical applications, and performance within biosensor research [2] [30].

The strategic application of these designs is particularly crucial in biosensor technology, where multiple variables—including biological recognition elements, transducer materials, immobilization strategies, and detection conditions—can interact in complex ways. Traditional one-variable-at-a-time (OVAT) approaches fail to capture these interactions and require substantially more resources. As the demand for ultrasensitive, reliable, and point-of-care biosensing platforms grows, the implementation of efficient screening methodologies becomes indispensable for accelerating development cycles and enhancing analytical performance [2].

Theoretical Foundations of Factorial and Plackett-Burman Designs

Two-Level Full Factorial Designs

The two-level full factorial design is a cornerstone of experimental screening methodologies. This approach investigates $k$ factors simultaneously, each evaluated at two levels (typically coded as -1 for the low level and +1 for the high level). The design requires $2^k$ experimental runs to complete all possible combinations of factor levels. A key advantage of this configuration is its orthogonality, meaning the factor estimates are uncorrelated, which simplifies statistical interpretation [31] [2].

A primary strength of full factorial designs is their ability to estimate not only the main effects of each factor but also all possible interaction effects between factors. For example, in a $2^2$ factorial design (two factors, each at two levels), the model can be represented by the equation

$$Y = b_0 + b_1 X_1 + b_2 X_2 + b_{12} X_1 X_2$$

where $Y$ is the response, $b_0$ is the overall mean, $b_1$ and $b_2$ represent the main effects of factors $X_1$ and $X_2$, and $b_{12}$ quantifies their interaction effect [2]. This ability to detect interactions is critical in biosensor development, where factors such as pH and temperature often exhibit interdependent effects on sensor performance.
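As a minimal illustration of how the four coefficients are obtained, the sketch below fits this model to a coded two-factor, two-level design. The response values are hypothetical numbers invented for the example, not data from the cited studies. Because the design columns are orthogonal, each coefficient reduces to a signed average of the responses.

```python
import numpy as np

# Coded 2^2 design in standard order: -1 = low level, +1 = high level.
X1 = np.array([-1, 1, -1, 1])
X2 = np.array([-1, -1, 1, 1])

# Hypothetical responses (arbitrary signal units), invented for illustration.
y = np.array([10.0, 14.0, 12.0, 24.0])

# Model matrix for Y = b0 + b1*X1 + b2*X2 + b12*X1*X2.
M = np.column_stack([np.ones(4), X1, X2, X1 * X2])

# Orthogonal columns (M^T M = 4 I), so least squares reduces to M^T y / N.
b0, b1, b2, b12 = M.T @ y / len(y)
print(b0, b1, b2, b12)
```

For these invented responses the fit gives an overall mean of 15, main effects coefficients of 4 and 3, and an interaction coefficient of 2. The nonzero interaction term means the effect of X1 depends on the level of X2, which is exactly the information an OVAT experiment cannot provide.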

However, the main limitation of full factorial designs is their exponential growth in required experimental runs as factors increase. While studying 3 factors requires 8 runs, investigating 10 factors would necessitate 1024 runs, which is often impractical in resource-constrained research environments [31].
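The growth in run count can be made concrete with a short sketch that enumerates every level combination. The function name `full_factorial` is a hypothetical helper introduced here for illustration.

```python
from itertools import product


def full_factorial(k):
    """Return all 2**k runs of a two-level design as tuples of -1/+1."""
    return list(product((-1, 1), repeat=k))


# The eight runs of a three-factor design.
design = full_factorial(3)
for run in design:
    print(run)

# Run counts for the factor numbers discussed in the text.
for k in (3, 10):
    print(k, "factors ->", len(full_factorial(k)), "runs")
```

Each added factor doubles the number of runs, which is why full factorial designs are usually reserved for a handful of factors.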

Plackett-Burman Designs

Plackett-Burman (PB) designs represent a class of highly fractional factorial designs specifically developed for screening large numbers of factors with minimal experimental effort. These designs are based on two-level orthogonal arrays that allow the investigation of up to N-1 factors in only N experimental runs, where N is a multiple of 4 (e.g., 8, 12, 16, 20) [31] [30].

The exceptional economy of experimental runs makes PB designs particularly attractive for preliminary investigations. For instance, a 12-experiment PB design can screen up to 11 different factors, whereas a full factorial approach for 11 factors would require 2048 experiments [31]. This efficiency comes at a cost: PB designs are primarily intended for estimating main effects only and cannot reliably estimate interaction effects due to the complex confounding pattern where "every main factor is partially confounded with all possible two-factor interactions not involving the factor in question" [31].
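Both the orthogonality and the partial confounding of a 12-run PB design can be checked numerically. The sketch below assumes the commonly tabulated 12-run generator row (+ + - + + + - - - + -), builds the design by cyclic shifting plus a final row of low levels, and then correlates one main-effect column with every two-factor interaction that does not involve it. Variable and function names are illustrative choices, not taken from the cited sources.

```python
import numpy as np
from itertools import combinations

# Commonly tabulated generator row for the 12-run Plackett-Burman design (assumed here).
GENERATOR_12 = np.array([1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1])


def plackett_burman_12():
    """Build the 12 x 11 design: 11 cyclic shifts of the generator plus a row of -1."""
    rows = [np.roll(GENERATOR_12, shift) for shift in range(11)]
    rows.append(-np.ones(11, dtype=int))
    return np.array(rows)


design = plackett_burman_12()

# Orthogonality: distinct columns have zero dot product, so X^T X = 12 * I.
gram = design.T @ design
print(np.array_equal(gram, 12 * np.eye(11, dtype=int)))

# Partial confounding: correlate the first main-effect column with every
# two-factor interaction column that does not involve that factor.
main = design[:, 0]
aliases = [abs(main @ (design[:, i] * design[:, j])) / 12
           for i, j in combinations(range(1, 11), 2)]
print(len(aliases), min(aliases), max(aliases))
```

In this design each main effect is correlated with all 45 unrelated two-factor interactions at a magnitude of 1/3, so a large interaction leaks into many main-effect estimates rather than being cleanly aliased with one of them.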

The validity of PB designs as screening tools therefore rests on the sparsity-of-effects principle—the assumption that only a few factors will have substantial effects, while most will have negligible influence, and that interaction effects are sufficiently small to not significantly bias the main effect estimates [31] [30].

Table 1: Fundamental Characteristics of Screening Designs

| Design Feature | Full Factorial Design | Plackett-Burman Design |

|---|---|---|

| Experimental Runs for k Factors | $2^k$ | N (where k ≤ N-1) |

| Example: 6 Factors | 64 runs | 12 runs |

| Model Capability | Main effects + all interactions | Main effects only |

| Confounding Structure | No confounding between effects | Complex confounding of main effects with two-factor interactions |

| Primary Application | When interactions are suspected | Initial screening of many factors |

| Efficiency | Low for large k | High for large k |

| Interpretation | Straightforward | Requires caution due to confounding |

Comparative Experimental Analysis in Biosensor Development

Case Study 1: Optimizing Biosurfactant Production

In a study focused on enhancing glycolipopeptide biosurfactant production by Pseudomonas aeruginosa, researchers employed a sequential DoE approach. The initial phase utilized a Plackett-Burman design to screen 12 trace nutrients in only 20 experimental runs. This efficient screening identified five significant trace elements (nickel, zinc, iron, boron, and copper) that substantially influenced biosurfactant yield. The researchers noted that "PBD simply screens the design space to detect large main effects" without resolving interactions [30].

Following this screening phase, the research team applied Response Surface Methodology (RSM) with a central composite design to optimize the concentrations of the five significant factors identified by the PB design. This sequential approach culminated in an optimized medium that produced 84.44 g/L of glycolipopeptide, dramatically demonstrating the effectiveness of combining screening and optimization designs [30].

Case Study 2: Optimizing Enzyme Expression for Biosensor Application

In another biosensor-related application, researchers optimized the expression conditions for 2,3-dihydroxybiphenyl 1,2-dioxygenase (BphC_LA-4), an enzyme used in catechol biosensors. They initially employed a Plackett-Burman design to screen multiple factors affecting recombinant enzyme expression in E. coli, including pH, culture medium composition, induction time, and temperature [32].

The PB design successfully identified the most influential factors, which were subsequently optimized using RSM. The authors emphasized the importance of this statistical approach, noting that "not only each of these variables, but also the interactions of them play an important role in the high expression of recombinant enzyme." This case illustrates how PB designs can serve as an efficient preliminary screening step before more detailed optimization studies [32].

Case Study 3: Bioelectricity Production from Winery Residues

A study on bioelectricity production from winery residues illustrates how PB screening and response surface designs are used in sequence. Researchers initially screened eight factors using a Plackett-Burman design, which identified vinasse concentration, stirring, and NaCl addition as the three most influential variables. Subsequently, they employed a Box-Behnken design (a three-level response surface design) to optimize these critical parameters, achieving a peak bioelectricity production of 431.1 mV [33].

This study highlighted the complementary nature of these approaches, using PB designs for initial screening followed by more focused factorial-based designs for optimization. The authors described this sequential strategy as making "experimentation more efficient" by first reducing the number of variables before detailed optimization [33].

Table 2: Experimental Applications of Screening Designs in Biosensor and Bioprocess Development

| Application Context | Screening Design Used | Factors Screened | Significant Factors Identified | Key Outcome |

|---|---|---|---|---|

| Glycolipopeptide Biosurfactant Production [30] | Plackett-Burman | 12 trace nutrients | Ni, Zn, Fe, B, Cu | 5 significant factors identified; RSM optimization achieved 84.44 g/L yield |

| Enzyme Expression for Catechol Biosensor [32] | Plackett-Burman | 8 culture parameters | pH, seed age, inoculation amount, temperature | Enhanced enzyme specific activity to 0.58 U/mg |

| Bioelectricity from Winery Residues [33] | Plackett-Burman | 8 process variables | Vinasse concentration, stirring, NaCl addition | Peak bioelectricity production of 431.1 mV achieved after optimization |

Experimental Protocols and Methodologies

Standard Protocol for Plackett-Burman Screening Design

The implementation of a Plackett-Burman design for biosensor optimization typically follows a structured workflow. First, researchers must select factors and define levels based on preliminary knowledge, choosing appropriate high (+1) and low (-1) levels for each factor. The number of experimental runs (N) is then selected to accommodate the factors (k ≤ N-1), with 12, 20, or 24 runs being common choices [30] [32].

Next, the experimental matrix is generated according to the specific Plackett-Burman template, which ensures orthogonality. Experiments should be performed in randomized order to minimize confounding with external variables. After completing the experiments and measuring responses, statistical analysis is performed to identify significant factors, typically using linear regression with significance testing (e.g., p-values < 0.05) or Bayesian-Gibbs analysis for more robust estimation in the presence of complex confounding [31].
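The protocol above can be sketched end to end on simulated data. Everything numerical here is an assumption made for illustration: the "true" coefficients, the noise level, and the choice to assign seven factors to a 12-run design so that four degrees of freedom remain for estimating error (a design with 11 factors in 12 runs is saturated and leaves none). The critical value 2.776 is the two-sided 5% point of the t distribution with 4 degrees of freedom.

```python
import numpy as np

rng = np.random.default_rng(0)

# 12-run PB design from the commonly tabulated generator row (assumed here).
gen = np.array([1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1])
pb12 = np.vstack([np.roll(gen, s) for s in range(11)] + [-np.ones((1, 11), dtype=int)])

# Assign 7 real factors to the first 7 columns; the unused columns leave
# 12 - 1 - 7 = 4 degrees of freedom for the error estimate.
X = pb12[:, :7]

# Randomized order in which the 12 rows would be executed at the bench.
run_order = rng.permutation(12)
print("execution order of design rows:", run_order)

# Simulated responses: hypothetical system where only factors 0, 3 and 5 matter.
true_coef = np.array([5.0, 0.0, 0.0, -3.0, 0.0, 2.0, 0.0])
y = 50.0 + X @ true_coef + rng.normal(scale=0.2, size=12)

# Main-effects regression: intercept plus one coefficient per factor.
M = np.column_stack([np.ones(12), X])
coef, *_ = np.linalg.lstsq(M, y, rcond=None)

# Residual variance and coefficient standard error (M^T M = 12 I).
resid = y - M @ coef
dof = 12 - M.shape[1]
se = np.sqrt(resid @ resid / dof / 12)

# Flag factors whose |t| exceeds the 5% two-sided critical value for 4 dof.
T_CRIT = 2.776
t_stats = coef[1:] / se
significant = np.flatnonzero(np.abs(t_stats) > T_CRIT)
print("estimated effects (high minus low):", np.round(2 * coef[1:], 2))
print("factors flagged as significant:", significant)
```

The reported effect of a factor is twice its regression coefficient, because moving from the low to the high coded level spans two units. With real data the responses would be measured rather than simulated, and the interpretation would still be subject to the partial confounding discussed earlier.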

Standard Protocol for Full Factorial Screening Design

For a full factorial design, the initial steps mirror those of the PB design: select factors and define levels. However, the experimental plan requires $2^k$ runs without the fractional economy of PB designs. The experimental matrix includes all possible combinations of factor levels, typically organized in standard order but executed randomly [2].

The key analytical advantage emerges during statistical analysis, where researchers can estimate both main effects and all two-factor and higher-order interactions. The significance of effects is typically determined using Analysis of Variance (ANOVA) with appropriate F-tests. The full model including all interactions can be represented as

$$Y = \beta_0 + \sum_{i} \beta_i X_i + \sum_{i<j} \beta_{ij} X_i X_j + \dots + e$$

where $\beta_0$ is the intercept, $\beta_i$ are main effect coefficients, $\beta_{ij}$ are two-factor interaction coefficients, and $e$ represents error [31] [2].
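A sketch of this analysis for three factors is given below. The helper `model_matrix` and the coefficient values are hypothetical, introduced only to show the mechanics. Note one assumption that matters in practice: an unreplicated $2^3$ design with all eight model terms is saturated, so the F-tests mentioned above require replicate runs or pooling of negligible higher-order terms to obtain an error estimate.

```python
import numpy as np
from itertools import combinations, product


def model_matrix(k):
    """Full 2**k design with intercept, main-effect and all interaction columns."""
    runs = np.array(list(product((-1, 1), repeat=k)))
    labels, columns = ["1"], [np.ones(len(runs))]
    for order in range(1, k + 1):
        for idx in combinations(range(k), order):
            labels.append("".join(f"X{i + 1}" for i in idx))
            columns.append(np.prod(runs[:, list(idx)], axis=1))
    return labels, np.column_stack(columns)


labels, M = model_matrix(3)
n_runs = M.shape[0]

# Hypothetical coefficients used to simulate noise-free responses:
# intercept, X1, X2, X3, X1X2, X1X3, X2X3, X1X2X3.
beta_true = np.array([20.0, 3.0, -2.0, 0.0, 1.5, 0.0, 0.0, 0.0])
y = M @ beta_true

# Orthogonal columns: coefficient estimates are M^T y / N.
coef = M.T @ y / n_runs

# Sum of squares attributed to each term in a two-level design: N * b^2.
sum_sq = n_runs * coef[1:] ** 2
for label, b, ss in zip(labels[1:], coef[1:], sum_sq):
    print(f"{label:8s} coef={b:6.2f}  SS={ss:7.2f}")
```

The per-term sums of squares are the quantities an ANOVA table would compare against the error mean square once an error estimate is available.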

Research Reagent Solutions for DoE in Biosensor Development

The implementation of screening designs requires specific reagents and materials tailored to biosensor research. The following table summarizes key components referenced in experimental case studies:

Table 3: Essential Research Reagents for Biosensor DoE Studies

| Reagent/Category | Function in DoE Studies | Specific Examples from Literature |

|---|---|---|

| Trace Elements | Screening nutrient effects on bioproduction | NiCl2, ZnCl2, FeCl3, K3BO3, CuSO4 used in biosurfactant production optimization [30] |

| Enzyme Substrates | Response variable measurement in enzyme biosensors | 2,3-dihydroxybiphenyl, catechol derivatives for BphC_LA-4 enzyme activity determination [32] |

| Biological Recognition Elements | Factors affecting biosensor specificity | Antibodies, enzymes, nucleic acids, aptamers immobilized on transducer surfaces [34] [35] |

| Nanomaterial Labels | Signal amplification and detection | Gold nanoparticles, quantum dots, magnetic beads used for enhanced biosensor signals [34] [35] |

| Buffer Components | Optimization of biochemical environment | pH, ionic strength, blocking agents, detergents affecting biorecognition efficiency [34] |

| Electrode Materials | Transducer optimization in electrochemical biosensors | Carbon nanotubes, graphene, copper, zinc electrodes in bioelectricity production [33] |

Strategic Implementation and Best Practices

Selection Guidelines for Screening Designs

Choosing between full factorial and Plackett-Burman designs depends on several considerations. Plackett-Burman designs are recommended when: investigating a large number of factors (typically >5), available resources are limited, initial screening is needed to reduce factor space, and the assumption of negligible interactions is reasonable. Conversely, full factorial designs are preferable when: the number of factors is small (typically ≤5), interaction effects are suspected or must be estimated, and sufficient experimental resources are available [31] [36].
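These guidelines can be encoded as a simple decision helper. The sketch below is one possible reading of them, not a rule from the cited sources: the function names are hypothetical, the five-factor threshold is the heuristic quoted above, and `min_pb_runs` returns only the smallest admissible N. Practitioners often choose a larger N to leave unassigned columns for error estimation.

```python
def min_pb_runs(k):
    """Smallest multiple of 4 with k <= N - 1 (a lower bound, not a recommendation)."""
    return 4 * ((k + 4) // 4)


def suggest_screening_design(k, interactions_suspected, max_runs):
    """Hypothetical helper encoding the selection guidelines as heuristics."""
    full_runs = 2 ** k
    pb_runs = min_pb_runs(k)
    # Few factors and an affordable run count: estimate all interactions directly.
    if k <= 5 and full_runs <= max_runs:
        return ("full factorial", full_runs)
    # Otherwise screen main effects, flagging the risk when interactions are likely.
    if pb_runs <= max_runs:
        if interactions_suspected:
            return ("Plackett-Burman (interactions may bias main effects)", pb_runs)
        return ("Plackett-Burman", pb_runs)
    return ("reduce factors or increase budget", None)


print(suggest_screening_design(3, True, 16))
print(suggest_screening_design(11, False, 20))
print(suggest_screening_design(11, True, 20))
```

The helper deliberately returns a warning label rather than refusing a PB design when interactions are suspected, since a follow-up factorial or response surface study on the surviving factors can resolve them.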

For biosensor applications with complex biochemical systems where interactions are likely, a sequential approach often provides the most balanced strategy: beginning with a PB design to screen many factors, followed by a full factorial or response surface design to investigate significant factors and their interactions in greater depth [30] [33].

Advanced Analytical Approaches for Complex Confounding