A Systematic Protocol for Biosensor Optimization Using Factorial Design: Enhancing Sensitivity, Robustness, and Reproducibility for Biomedical Applications


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on applying factorial design of experiments (DoE) to optimize biosensor performance. It covers foundational principles, demonstrating how systematic optimization surpasses traditional one-variable-at-a-time approaches by efficiently capturing critical factor interactions. The protocol details methodological steps for designing and executing factorial experiments, supported by case studies from clinical diagnostics and pharmaceutical analysis. It further addresses troubleshooting common pitfalls and outlines rigorous validation strategies to ensure method robustness and comparability with gold-standard techniques. By integrating foundational knowledge with practical application, this guide empowers scientists to develop highly sensitive, reliable, and reproducible biosensors suitable for point-of-care testing and therapeutic drug monitoring.

Why Systematic Optimization? Mastering the Core Principles of Factorial Design for Biosensors

The Critical Limitation of One-Factor-at-a-Time (OFAT) Approaches in Complex Biosystems

The One-Factor-at-a-Time (OFAT) experimental approach, while historically prevalent, presents significant limitations for optimizing complex biosystems where multiple interacting factors govern outcomes. This application note details the critical drawbacks of OFAT, including its failure to detect factor interactions and its experimental inefficiency, and provides a structured protocol for implementing factorial Design of Experiments (DoE) as a superior alternative. Framed within biosensor optimization research, we demonstrate through a case study and detailed methodology how factorial designs enable researchers to systematically explore multifactor spaces, identify interaction effects, and develop robust, optimized systems with minimal experimental effort.

Critical Limitations of the OFAT Approach

The traditional OFAT method involves varying a single experimental factor while holding all others constant. Despite its intuitive appeal and historical widespread use, this approach is fundamentally inadequate for the optimization of complex biosystems, such as biosensors, for two primary reasons.

- Inefficiency and Resource Intensity: OFAT requires a large number of experimental runs to study multiple factors, leading to an inefficient use of time, reagents, and other valuable resources [1] [2]. This becomes prohibitive as the number of factors increases.

- Failure to Detect Factor Interactions: The most severe limitation of OFAT is its inability to detect interactions between factors [1] [3] [2]. In a biosystem, an interaction occurs when the effect of one factor (e.g., pH) depends on the level of another factor (e.g., temperature). OFAT assumes factors are independent, a dangerous and often incorrect assumption for biological systems. Consequently, conditions identified as "optimal" by OFAT are often suboptimal or unreliable, hindering the development of robust and high-performing biosensors [2] [4].
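The interaction problem can be made concrete with a toy calculation. The sketch below is illustrative only: the `response` function and its coefficients are hypothetical, chosen so that two coded factors (A and B, each at -1 or +1) interact strongly. It compares an OFAT search that starts from a baseline with a 2^2 full factorial that evaluates every corner.

```python
from itertools import product

def response(a, b):
    """Hypothetical biosensor signal with a strong A x B interaction (coded units)."""
    return 60 - 2 * a - 2 * b + 10 * a * b

def ofat_search(start):
    """Vary one factor at a time from a baseline, keeping a change only if it helps."""
    best = list(start)
    runs = {tuple(best)}
    for i in range(len(best)):
        for level in (-1, 1):
            trial = best.copy()
            trial[i] = level
            runs.add(tuple(trial))
            if response(*trial) > response(*best):
                best = trial
    return tuple(best), response(*best), len(runs)

# OFAT starting from the (+1, +1) baseline
ofat_point, ofat_value, ofat_runs = ofat_search(start=(1, 1))

# Full factorial: evaluate all four corners of the design space
grid = {point: response(*point) for point in product((-1, 1), repeat=2)}
best_point = max(grid, key=grid.get)

print("OFAT result:     ", ofat_point, ofat_value, f"({ofat_runs} runs)")
print("Factorial result:", best_point, grid[best_point], f"({len(grid)} runs)")
```

With this particular response, OFAT stays at its baseline because each single-factor change lowers the signal, while the factorial design also visits the corner where both factors are low and the signal is highest. A different baseline or a weaker interaction could lead OFAT to the same answer, so the example shows the risk rather than a guaranteed failure.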

The following diagram illustrates the fundamental difference in how OFAT and factorial DoE explore the experimental space, leading to the failure of OFAT to find the true optimum in the presence of factor interactions.

Case Study: Factorial Design for a COVID-19 Biosensor

A recent study on optimizing a fluorescent ZIF-8 biosensor for detecting COVID-19 RNA sequences provides a compelling example of DoE's superiority [5]. The researchers aimed to maximize the biosensor's fluorescence quenching efficiency, a critical performance parameter.

Experimental Factors and Design

A 2^3 full factorial design was employed to investigate three critical factors simultaneously, each at two levels. This design required only 8 experimental runs but provided information on all main effects and interaction effects.

Table 1: Experimental Factors and Levels for Biosensor Optimization

| Factor | Description | Low Level (-1) | High Level (+1) |

|---|---|---|---|

| A | ZIF-8 Concentration | 0.3 mg/mL | 0.7 mg/mL |

| B | Buffer pH | 6.0 | 8.0 |

| C | Solution Temperature | 25 °C | 37 °C |
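As a minimal sketch of how the coded levels in Table 1 translate into bench settings, the Python snippet below expands the 2^3 design into its 8 real-unit conditions. The low and high values come from Table 1; the dictionary keys and the `decode` helper are illustrative names, not part of the cited study.

```python
from itertools import product

# (low, high) settings taken from Table 1; key names are illustrative
LEVELS = {
    "zif8_mg_per_ml": (0.3, 0.7),
    "buffer_ph": (6.0, 8.0),
    "temperature_c": (25, 37),
}

def decode(coded_run):
    """Map a tuple of coded levels (-1 or +1) to real factor settings."""
    return {
        name: (low if code == -1 else high)
        for (name, (low, high)), code in zip(LEVELS.items(), coded_run)
    }

# All 2^3 = 8 combinations of coded levels, decoded to real units
runs = [decode(coded) for coded in product((-1, 1), repeat=len(LEVELS))]

for number, run in enumerate(runs, start=1):
    print(number, run)
```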

Results and Data Analysis

The results from the factorial design were analyzed to calculate the main effect of each factor and the interaction effects between them.

Table 2: Analysis of Effects on Quenching Efficiency

| Effect | Description | Impact on Quenching Efficiency |

|---|---|---|

| Main Effect A | ZIF-8 Concentration | Strong Positive |

| Main Effect B | Buffer pH | Moderate Positive |

| Main Effect C | Solution Temperature | Negative |

| Interaction A×B | Concentration × pH | Significant Synergistic |

| Optimal Conditions | A=+1, B=+1, C=-1 (0.7 mg/mL, pH 8.0, 25°C) | 72.41% Quenching |

The analysis revealed a significant interaction between ZIF-8 concentration and buffer pH (A×B), meaning the effect of pH was different at different concentrations. This type of interaction is completely undetectable by an OFAT approach. The model led to the identification of an optimal condition that yielded a high quenching efficiency of 72.41% and enabled the biosensor to achieve a detection limit of 12.02 pM for COVID-19 RNA [5].

Protocol: Implementing a 2^k Factorial Design for Biosensor Optimization

This protocol provides a step-by-step guide for using a 2^k factorial design to optimize a biosensor system, where k is the number of factors to be investigated.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Biosensor Optimization via DoE

| Item | Function in Experiment |

|---|---|

| Biorecognition Element | The core sensing component (e.g., antibody, enzyme, DNA probe) that confers specificity to the target analyte. |

| Transduction Platform | The material or surface (e.g., electrode, nanoparticle, MOF like ZIF-8) that translates molecular recognition into a measurable signal. |

| Buffer Components | Maintain the pH and ionic strength of the reaction environment, critically influencing biomolecular activity and stability. |

| DoE Software Package | Statistical software for generating the experimental design matrix and performing subsequent data analysis. |

| Microtiter Plates & Liquid Handler | Enable high-throughput execution of multiple experimental runs in parallel, ensuring consistency and facilitating randomization. |

Step-by-Step Experimental Workflow

Step 1: Define Objective and Select Factors

- Clearly define the primary response variable to be optimized (e.g., fluorescence intensity, limit of detection, signal-to-noise ratio).

- Select k critical factors (typically 2-4) believed to influence the response. Use prior knowledge or screening experiments for selection.

- Define two relevant levels for each factor (e.g., low/-1 and high/+1 for pH, temperature, concentration).

Step 2: Generate the Experimental Design Matrix

- Construct a 2^k factorial design matrix. This matrix specifies the factor level settings for each experimental run.

- For a 2-factor design, this creates 4 unique combinations (2^2). For a 3-factor design, it creates 8 combinations (2^3).

- Randomize the run order of all experiments to minimize the impact of confounding variables and systematic errors [2] [4].

Example Design Matrix for a 2^3 Design (8 runs):
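One way to build such a matrix programmatically is sketched below in Python. It lists the 8 coded runs of a 2^3 design in standard order (first factor alternating fastest) and then shuffles them to obtain a randomized run order. The function names and the fixed seed are assumptions made for illustration; dedicated DoE software would normally handle both steps.

```python
import random
from itertools import product

def full_factorial(k):
    """Return the 2^k design in standard order (first factor alternates fastest)."""
    return [tuple(reversed(levels)) for levels in product((-1, 1), repeat=k)]

def randomized(design, seed=None):
    """Return a shuffled copy of the design to use as the execution order."""
    rng = random.Random(seed)
    order = list(design)
    rng.shuffle(order)
    return order

standard = full_factorial(3)
run_order = randomized(standard, seed=42)  # seed fixed only for reproducibility

print("Std   A   B   C")
for number, (a, b, c) in enumerate(standard, start=1):
    print(f"{number:>3}  {a:+d}  {b:+d}  {c:+d}")

print("Randomized execution order:", run_order)
```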

Step 3: Execute Experiments and Collect Data

- Prepare reagents according to the factor levels specified for each run.

- Perform all experiments in the randomized order.

- Precisely measure and record the response variable for each run.

Step 4: Statistical Analysis and Model Interpretation

- Input the response data into DoE software or a statistical package.

- Calculate the main effects and interaction effects.

- Perform Analysis of Variance (ANOVA) to determine the statistical significance of the effects.

- Generate a statistical model (e.g., Y = b₀ + b₁A + b₂B + b₃C + b₁₂AB + b₁₃AC + b₂₃BC) that describes the relationship between the factors and the response [3] [4].
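The effect calculations in Step 4 can be sketched in a few lines of Python. In a two-level design, each effect is the mean response where the corresponding contrast column is +1 minus the mean response where it is -1. The response values below are hypothetical, generated from a known model so that the recovered effects can be checked by eye; `estimate_effects` is an illustrative helper, not a function from any DoE package.

```python
from itertools import product
from math import prod

def estimate_effects(design, responses, names):
    """Estimate main effects and two-factor interactions for a two-level design."""
    n = len(design)
    k = len(names)
    terms = [(i,) for i in range(k)]
    terms += [(i, j) for i in range(k) for j in range(i + 1, k)]
    effects = {}
    for term in terms:
        # Contrast column: product of the coded levels of the factors in the term
        contrast = [prod(run[i] for i in term) for run in design]
        label = "".join(names[i] for i in term)
        effects[label] = sum(c * y for c, y in zip(contrast, responses)) / (n / 2)
    return effects

# 2^3 design in standard order
design = [tuple(reversed(r)) for r in product((-1, 1), repeat=3)]

# Hypothetical responses generated from y = 50 + 5A + 2B - 3C + 4AB
responses = [50 + 5 * a + 2 * b - 3 * c + 4 * a * b for a, b, c in design]

effects = estimate_effects(design, responses, "ABC")
for label, value in effects.items():
    print(f"{label:>2}: {value:+.1f}")
```

Because the factors are coded as -1 and +1, each model coefficient is half of the corresponding effect (for example, an A effect of 10 corresponds to a coefficient of 5). Judging significance still requires replication or an ANOVA, which this sketch does not attempt.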

Step 5: Identify Optimal Conditions and Validate

- Use the model to predict the factor level combinations that will optimize the response.

- Conduct confirmation experiments at the predicted optimal conditions to validate the model's accuracy and the robustness of the biosensor performance.

Moving beyond the OFAT paradigm is not merely an option but a necessity for the efficient and effective development of advanced biosensors and complex biotechnological products. The factorial DoE approach provides a rigorous, statistically sound, and resource-efficient framework for navigating multi-factor experimental spaces. By adopting the protocols outlined in this application note, researchers and drug development professionals can systematically uncover critical interaction effects, accelerate development timelines, and ultimately achieve superior, more reliable biosystem performance.

The Fundamental Limitation of Traditional Methods

The "one variable at a time" (OVAT) approach, also known as one-factor-at-a-time (OFAT), has traditionally been a common method for process optimization in scientific research. This method involves holding all process variables constant while adjusting a single factor until an optimal response is observed, then repeating this process sequentially for each variable [6]. However, this approach possesses critical flaws that limit its effectiveness and efficiency.

OVAT is inherently incapable of detecting factor interactions, a common phenomenon where the effect of one factor depends on the level of another factor [7] [6]. In biosensor optimization, for instance, the effect of promoter strength may depend on the specific ribosome binding site being used. OVAT methodologies typically require more experimental resources, take longer to complete, and often identify only local optima rather than the true global optimum for a process [6]. The limitations of OVAT become particularly problematic when optimizing complex, multicomponent systems like genetically encoded biosensors, where multiple components and their interactions significantly impact performance [8].

Design of Experiments: Core Principles and Advantages

Design of Experiments is a statistical approach to process optimization that systematically varies all relevant factors simultaneously according to a predefined experimental matrix [6]. Rather than exploring one dimension at a time, DoE samples the experimental space in a structured way, so that both main effects and factor interactions can be estimated from a comparatively small number of runs.

Key Advantages of DoE

- Detection of Factor Interactions: DoE can identify and quantify how factors interact, providing crucial insights into system behavior that OVAT inevitably misses [7] [6]

- Experimental Efficiency: DoE typically requires fewer total experimental runs to characterize a system, saving time, resources, and potentially reducing researcher exposure to hazardous materials [6]

- Comprehensive Process Understanding: The methodology generates mathematical models that predict system behavior across the entire experimental space, not just at isolated points [6]

- Statistical Rigor: DoE analyses incorporate estimates of error and effect significance, providing confidence in the identified optimal conditions [6]

Factorial Designs: The Foundation of DoE

Factorial experiments form the basis of many DoE approaches. In factorial notation, a 2³ design has three factors, each with two levels, and 2³=8 experimental conditions [9]. Similarly, a 2⁴3² design has four two-level factors and two three-level factors, totaling 16×9=144 treatment combinations [7]. This notation conveniently conveys the number of factors, their levels, and the total experimental conditions [9].
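The arithmetic behind this notation is simply the product of the number of levels of every factor. A minimal Python check, using an illustrative helper name:

```python
from math import prod

def n_conditions(levels_per_factor):
    """Number of treatment combinations in a full factorial design."""
    return prod(levels_per_factor)

two_cubed = n_conditions([2, 2, 2])            # 2^3 design
mixed = n_conditions([2, 2, 2, 2, 3, 3])       # 2^4 x 3^2 design

print(two_cubed, mixed)
```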

Table 1: Comparison of Experimental Approaches for Optimizing a Three-Factor System

| Characteristic | OVAT Approach | DoE Approach |

|---|---|---|

| Total Experiments | 12-15 (estimated) | 8 (full factorial) |

| Information Gained | Main effects only | Main effects + all interactions |

| Ability to Detect Interactions | No | Yes |

| Statistical Confidence | Limited | Comprehensive error estimation |

| Optimization Outcome | Often a local optimum | Best settings within the explored design space |

In factorial designs, researchers can estimate main effects by comparing the means of all conditions where a factor is set to one level against all conditions where it is set to the other level, effectively "recycling" subjects across multiple comparisons [9]. This efficient use of data provides more statistical power for detecting effects than OVAT approaches requiring similar total sample sizes [9].

DoE Workflow for Biosensor Optimization

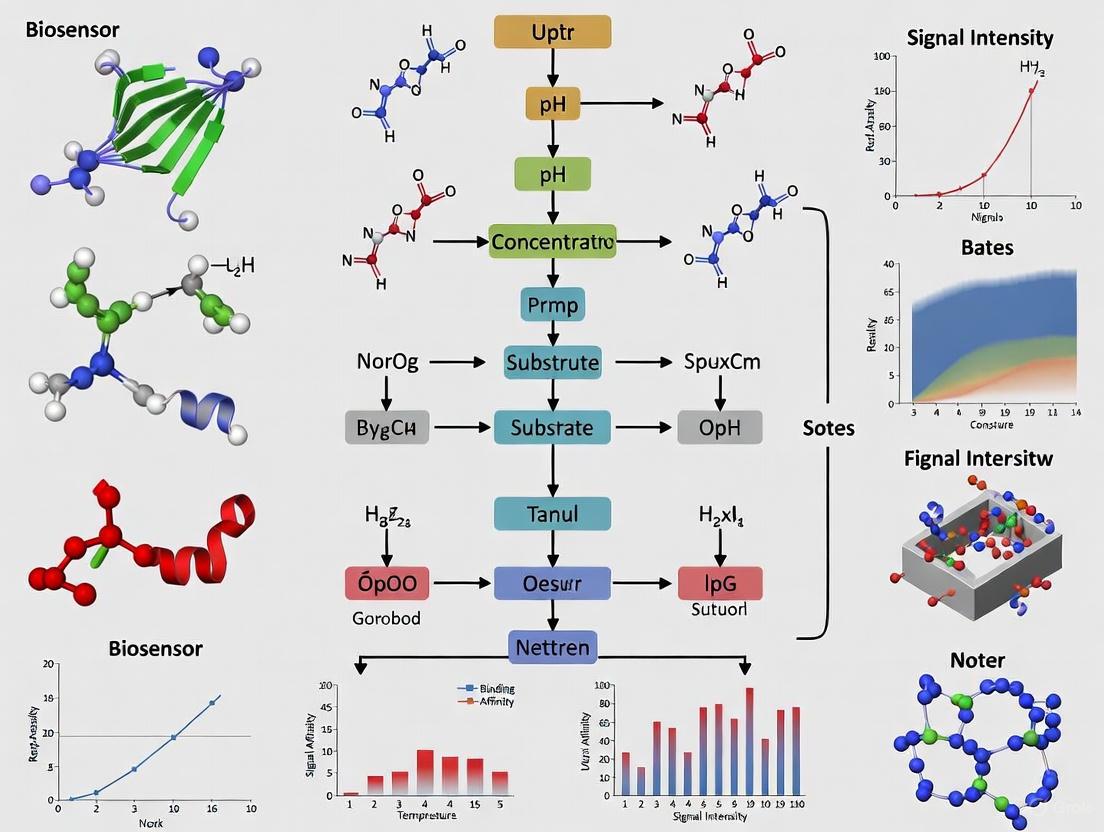

The following diagram illustrates the complete DoE workflow for biosensor optimization, from initial planning through final model validation:

Phase 1: Factor Screening

The initial phase employs efficient fractional factorial designs to screen many potential factors quickly [6]. These designs identify which factors have significant effects on biosensor performance with minimal experimental runs.
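As an illustration of what a fractional factorial screening design looks like, the sketch below builds a half fraction of a four-factor, two-level design (8 runs instead of 16) by setting the fourth factor equal to the product of the first three (generator D = ABC). The choice of four factors and of this generator is an assumption for the example; the source does not specify a particular screening design.

```python
from itertools import product

def half_fraction_four_factors():
    """Build a 2^(4-1) design: full factorial in A, B, C with D = A*B*C."""
    runs = []
    for c, b, a in product((-1, 1), repeat=3):
        runs.append((a, b, c, a * b * c))
    return runs

design = half_fraction_four_factors()

print("  A   B   C   D")
for a, b, c, d in design:
    print(f" {a:+d}  {b:+d}  {c:+d}  {d:+d}")
```

The price of halving the run count is aliasing: with this generator, each main effect is confounded with a three-factor interaction, and two-factor interactions are confounded with each other in pairs. That trade-off is usually acceptable for screening, where the goal is to find the few factors that matter before a more detailed design.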

Phase 2: Response Surface Optimization

Once significant factors are identified, more detailed response surface methodology (RSM) designs characterize factor effects and interactions more precisely, enabling the building of predictive mathematical models [6].

Protocol: Implementation of DoE for Biosensor Development

Stage 1: Experimental Planning and Design

Materials:

- Genetically encoded biosensor components (promoter libraries, RBS libraries, effector modules)

- Host organism (bacteria, yeast, mammalian cells)

- High-throughput measurement system (flow cytometer, plate reader)

- DoE software (JMP, Modde, R-based packages)

Procedure:

- Define Response Variables: Identify key biosensor performance metrics (e.g., dynamic range, sensitivity, selectivity, response time)

- Select Experimental Factors: Choose 3-5 critical factors to optimize (e.g., promoter strength, ribosome binding site variants, transporter expression levels)

- Establish Factor Ranges: Set appropriate high and low levels for each factor based on prior knowledge

- Choose Experimental Design: Select a fractional factorial design for screening or full factorial/response surface design for optimization

- Randomize Run Order: Randomize the execution order of experimental conditions to avoid bias

Stage 2: Library Creation and Transformation

Materials:

- Molecular biology reagents for DNA assembly

- Automated liquid handling systems

- Transformation equipment

Procedure:

- Create promoter and ribosome binding site libraries using automated assembly methods [8]

- Transform host organisms with biosensor variants according to the experimental design matrix

- Include appropriate controls for normalization and quality assessment

Stage 3: High-Throughput Characterization

Materials:

- Microtiter plates suitable for measurement devices

- Effector compounds for titration analysis

- Automated sampling and dilution systems

Procedure:

- Culture biosensor variants under standardized conditions

- Perform effector titration analysis using automated platforms [8]

- Measure response signals using appropriate detection methods (fluorescence, luminescence, etc.)

- Collect data in structured format for computational analysis

Stage 4: Computational Analysis and Model Building

Materials:

- Statistical analysis software

- Computational resources for data processing

Procedure:

- Transform expression data into structured dimensionless inputs [8]

- Perform multiple linear regression to build predictive models

- Evaluate model significance and lack-of-fit statistics

- Identify significant main effects and interaction terms

- Generate response surface plots to visualize factor effects

Stage 5: Model Validation and Implementation

Procedure:

- Perform confirmation experiments at predicted optimal conditions

- Compare predicted vs. actual biosensor performance

- Refine model if necessary with additional experiments

- Implement validated optimal biosensor configuration

Research Reagent Solutions for DoE Implementation

Table 2: Essential Materials for DoE-Based Biosensor Optimization

| Reagent Category | Specific Examples | Function in DoE Workflow |

|---|---|---|

| DNA Parts Libraries | Promoter variants, RBS sequences, coding sequences | Create genetic diversity for testing different biosensor configurations |

| Host Organisms | E. coli, yeast, mammalian cell lines | Provide cellular context for biosensor performance evaluation |

| Measurement Tools | Flow cytometers, plate readers, microscopes | Quantify biosensor performance parameters at high throughput |

| Automation Systems | Liquid handlers, colony pickers, microplate dispensers | Enable execution of complex experimental matrices with precision |

| Statistical Software | JMP, Modde, R with DoE packages | Design experiments and analyze results to build predictive models |

Case Study: DoE in Genetically Encoded Biosensor Development

A recent study demonstrated the power of DoE for sampling the design space of allosteric transcription factor-based biosensors [8]. The researchers combined high-throughput automation with computational approaches to efficiently map the combinatorial experimental design space, enabling them to identify biosensor configurations with both digital and analog dose-response characteristics.

The protocol began with creating promoter and ribosome binding site libraries, which were transformed into structured dimensionless inputs for computational mapping [8]. Fractional sampling using a DoE algorithm coupled with effector titration analysis on an automation platform enabled comprehensive characterization of the biosensor design space with unprecedented efficiency.

This approach provides an agnostic framework for developing and optimizing future biosensor systems and genetic circuits, significantly advancing the regulatory toolkit available to the synthetic biology community [8].

Comparative Efficiency: DoE Versus Traditional Methods

The efficiency advantages of DoE are substantial. In one radiochemistry optimization study, researchers identified critical factors and modeled their behavior with more than two-fold greater experimental efficiency than the traditional OVAT approach [6]. Similar efficiency gains are achievable in biosensor optimization, where the number of possible permutations creates complex combinatorial design spaces that would be practically impossible to explore comprehensively using OVAT [8].

Table 3: Efficiency Comparison for a Three-Factor Biosensor Optimization

| Metric | OVAT Approach | Full Factorial DoE |

|---|---|---|

| Experimental Runs | 15-20 | 8 |

| Information Obtained | Main effects only | Main effects + interactions |

| Time Requirement | 4-6 weeks | 2-3 weeks |

| Resource Consumption | High | Moderate |

| Optimization Confidence | Limited | Comprehensive |

For factorial experiments, when additional factors need to be studied, they can often be added without increasing the total sample size requirement, as the same subjects are "recycled" to estimate multiple effects [9]. This represents a fundamental advantage over approaches that require additional experimental arms for each new factor studied.

Design of Experiments represents a paradigm shift in optimization methodology for biosensor development and other complex biological systems. By enabling efficient, systematic exploration of multifactor experimental spaces while capturing crucial interaction effects, DoE provides researchers with a powerful framework for accelerating the development of optimized biosensor configurations. The integration of DoE with high-throughput automation and computational analysis creates a robust workflow for tackling the combinatorial complexity inherent in genetically encoded biosensor design, ultimately advancing the synthetic biology toolkit and enabling more rapid development of these powerful biological tools.

The development and optimization of high-performance biosensors represent a critical challenge in analytical chemistry and diagnostics. A primary obstacle to their widespread adoption as dependable point-of-care tests is the difficulty of systematic optimization, as biosensor performance is influenced by a complex interplay of multiple fabrication and operational parameters [3]. Traditional univariate optimization methods, which vary one factor at a time (OFAT), present significant limitations: they require extensive experimental work, fail to capture interaction effects between variables, and often identify local rather than global optima [10] [11]. Design of Experiments (DoE) provides a powerful chemometric solution to these challenges by enabling statistically guided, efficient exploration of complex experimental spaces [3].

Factorial designs offer a model-based optimization approach that establishes data-driven models connecting variations in input variables (e.g., materials properties, fabrication parameters) to sensor outputs [3]. This methodology allows researchers to simultaneously investigate multiple factors and their interactions with reduced experimental effort compared to univariate strategies. For ultrasensitive biosensing platforms with sub-femtomolar detection limits, where challenges like enhancing signal-to-noise ratio, improving selectivity, and ensuring reproducibility are particularly pronounced, DoE becomes especially crucial [3]. This article examines three fundamental factorial design models—Full Factorial, Central Composite, and Mixture Designs—within the context of biosensor optimization, providing detailed protocols for their implementation in research settings.

Full Factorial Design

Theoretical Foundations

Full Factorial Designs are first-order orthogonal designs that systematically investigate all possible combinations of factors across their specified levels [3]. In a full factorial design, each factor is assigned two or more levels, coded as -1 and +1 for two-level designs, which correspond to the variable's range selected based on the specific application [3]. The experimental matrix for a 2^k factorial design contains 2^k rows, each representing an individual experiment, and k columns, each representing a specific variable [3]. From a geometric perspective, the experimental domain for two factors forms a square, for three factors a cube, and for more than three factors, a hypercube [3].

The mathematical model for a two-factor full factorial design is expressed as:

Y = b₀ + b₁X₁ + b₂X₂ + b₁₂X₁X₂

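where Y is the predicted response, b₀ is the constant term (representing the overall mean), b₁ and b₂ are the coefficients for the main effects of factors X₁ and X₂, and b₁₂ is the coefficient for the interaction between X₁ and X₂ [3]. With factors coded as -1 and +1, each coefficient equals half of the corresponding effect, because an effect is defined as the change in response when a factor moves from its low to its high level. This model captures both the individual effects of each factor and their interactive effects, providing a comprehensive understanding of how factors influence the response variable.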
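A small worked example may help here. For an unreplicated 2^2 design with coded levels, the four coefficients can be obtained directly from the four measured responses, since the design columns are orthogonal. The response values below are hypothetical and the function names are illustrative.

```python
def fit_two_factor(responses):
    """Fit Y = b0 + b1*X1 + b2*X2 + b12*X1*X2 to a coded 2^2 design.

    `responses` maps (x1, x2) with levels -1/+1 to the measured response.
    """
    n = len(responses)
    b0 = sum(responses.values()) / n
    b1 = sum(x1 * y for (x1, _), y in responses.items()) / n
    b2 = sum(x2 * y for (_, x2), y in responses.items()) / n
    b12 = sum(x1 * x2 * y for (x1, x2), y in responses.items()) / n
    return b0, b1, b2, b12

def predict(coeffs, x1, x2):
    """Evaluate the fitted model at coded settings x1, x2."""
    b0, b1, b2, b12 = coeffs
    return b0 + b1 * x1 + b2 * x2 + b12 * x1 * x2

# Hypothetical measurements at the four corners of the design
measured = {(-1, -1): 20.0, (1, -1): 30.0, (-1, 1): 25.0, (1, 1): 45.0}

coeffs = fit_two_factor(measured)
print("b0, b1, b2, b12 =", coeffs)
print("Prediction at the centre (0, 0):", predict(coeffs, 0, 0))
```

With four runs and four coefficients the model reproduces the measured corners exactly, so it leaves no degrees of freedom for estimating error; replicates or center points are needed for that.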

Application Protocol for Biosensor Optimization

Protocol Title: Optimization of Biosensor Fabrication Parameters Using Two-Level Full Factorial Design

Purpose: To efficiently identify significant factors and their interactions affecting biosensor performance metrics (e.g., sensitivity, selectivity, limit of detection).


Step-by-Step Procedure:

Define Objective and Response: Clearly identify the primary response variable (e.g., oxidation current, limit of detection, signal-to-noise ratio) and the optimization goal (maximize, minimize, or target value) [12].

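Factor Selection: Identify k critical factors potentially influencing biosensor performance based on prior knowledge. For biosensor fabrication, these are typically fabrication parameters, such as the laser power, laser speed, and electrode width examined for laser-scribed graphene electrodes in this protocol [12].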

Level Setting: Establish appropriate low (-1) and high (+1) levels for each factor based on preliminary experiments or literature values [3].

Experimental Matrix Generation: Create a 2^k full factorial design matrix. The table below illustrates the experimental matrix for a 2^3 full factorial design investigating laser power (A), laser speed (B), and electrode width (C) for laser-scribed graphene electrodes [12]:

Table 1: Experimental Matrix for 2³ Full Factorial Design

| Run Order | Laser Power (A) | Laser Speed (B) | Electrode Width (C) | Response: Current Peak (Ip) |

|---|---|---|---|---|

| 1 | -1 | -1 | -1 | Measured Value |

| 2 | +1 | -1 | -1 | Measured Value |

| 3 | -1 | +1 | -1 | Measured Value |

| 4 | +1 | +1 | -1 | Measured Value |

| 5 | -1 | -1 | +1 | Measured Value |

| 6 | +1 | -1 | +1 | Measured Value |

| 7 | -1 | +1 | +1 | Measured Value |

| 8 | +1 | +1 | +1 | Measured Value |

Randomization and Execution: Randomize the run order to minimize systematic error and conduct experiments according to the matrix [3].

Data Analysis: Calculate main effects and interaction effects using statistical software. Main effects represent the average change in response when a factor moves from its low to high level. Interaction effects occur when the effect of one factor depends on the level of another factor [3].

Model Validation and Next Steps: Evaluate model adequacy using residual analysis. If significant curvature is detected or higher precision is required, proceed to a Response Surface Methodology (RSM) design such as Central Composite Design [3].
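One common way to check for curvature, assuming replicated center points were added to the two-level design, is to compare the mean of the factorial runs with the mean of the center runs. The sketch below uses hypothetical data and an illustrative function name; the t-like ratio uses the standard deviation of the center replicates as the estimate of pure error.

```python
from math import sqrt
from statistics import mean, stdev

def curvature_check(factorial_y, center_y):
    """Return (difference, t_ratio) comparing factorial and center-point means.

    Requires at least two center replicates with non-zero spread.
    """
    difference = mean(factorial_y) - mean(center_y)
    pure_error_sd = stdev(center_y)
    standard_error = pure_error_sd * sqrt(1 / len(factorial_y) + 1 / len(center_y))
    return difference, difference / standard_error

# Hypothetical responses: four factorial corners and four center replicates
factorial_y = [20, 30, 25, 45]
center_y = [36, 38, 37, 37]

difference, t_ratio = curvature_check(factorial_y, center_y)
print(f"Mean difference: {difference}, t-ratio: {t_ratio:.2f}")
```

A large ratio in absolute value suggests that a first-order model with interactions does not describe the region well, which is the situation in which moving to a response surface design is worthwhile.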

Central Composite Design (CCD)

Theoretical Foundations

Central Composite Design is a second-order experimental design widely used for response surface methodology and optimization of analytical methods [10]. CCD was introduced by Box and Wilson in the 1950s and has since been extensively applied across various technological domains due to its flexibility and robustness [10]. This design can be considered an evolution of the two-level factorial design, augmented with additional points to estimate curvature and quadratic effects [10].

A CCD comprises three distinct sets of points: (1) factorial points from a 2^k design, (2) axial (or star) points positioned at a distance α from the center along each factor axis, and (3) center points replicated to estimate pure error [10]. The value of α depends on the desired design properties, with |α| > 1 for rotatable or spherical designs [10]. The total number of experiments (N) required for a CCD with k factors is calculated as N = 2^k + 2k + nc, where nc is the number of center points [10].
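The structure described above can be sketched in Python as follows. The function generates the three sets of points in coded units and, when no α is supplied, uses the rotatable value α = (2^k)^(1/4). The function name and default number of center points are assumptions for illustration.

```python
from itertools import product

def ccd_points(k, n_center=3, alpha=None):
    """Return (points, alpha) for a central composite design in coded units."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable design
    factorial = [tuple(float(v) for v in run) for run in product((-1, 1), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1, 1):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(tuple(point))
    center = [(0.0,) * k] * n_center
    return factorial + axial + center, alpha

points, alpha = ccd_points(k=3, n_center=6)
print(f"alpha = {alpha:.4f}, total runs N = {len(points)}")
```

For k = 3 and six center points this gives N = 2^3 + 2(3) + 6 = 20 runs, in agreement with the formula above.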

The mathematical model for a CCD is a second-order polynomial:

Y = b₀ + Σ bᵢXᵢ + Σ bᵢᵢXᵢ² + Σ bᵢⱼXᵢXⱼ

This model can accurately capture nonlinear relationships and identify optimal conditions within the experimental domain [10].

Application Protocol for Biosensor Optimization

Protocol Title: Response Surface Optimization of Biosensor Performance Using Central Composite Design

Purpose: To model quadratic response surfaces and identify optimal factor settings for maximizing biosensor performance.


Step-by-Step Procedure:

Factor and Range Definition: Select 2-5 critical factors identified from previous factorial screening experiments. Define appropriate ranges covering the region of interest [14].

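Design Configuration: Choose the appropriate CCD type based on experimental constraints, for example a circumscribed design (axial points outside the factorial range, |α| > 1), a face-centered design (α = 1, so every factor needs only three levels), or an inscribed design (the whole design scaled so that the axial points sit at the factor limits).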

Experimental Matrix Generation: Create a CCD matrix with appropriate α value and center points. The table below shows a partial CCD matrix for optimizing silver nanoparticle biosynthesis for biosensing applications [14]:

Table 2: Partial CCD Matrix for Biosynthesis of Silver Nanoparticles [14]

| Standard Order | Temperature (°C) | pH | Extract Volume (mL) | AgNO₃ Volume (mL) | Time (min) | Response: SPR Intensity |

|---|---|---|---|---|---|---|

| 1 | -1 | -1 | -1 | -1 | -1 | Measured Value |

| ... | ... | ... | ... | ... | ... | ... |

| 16 | +1 | +1 | +1 | +1 | +1 | Measured Value |

| 17-26 | ±α or 0 | ±α or 0 | ±α or 0 | ±α or 0 | ±α or 0 | Measured Values (Axial: one factor at ±α, others at 0) |

| 27-32 | 0 | 0 | 0 | 0 | 0 | Measured Values (Center) |

Response Measurement: Measure all responses specified in the design matrix. For biosensor optimization, multiple response metrics may include sensitivity, selectivity, response time, and stability [15].

Model Fitting and Analysis: Use multiple regression to fit a second-order model. Evaluate model significance and lack-of-fit using analysis of variance (ANOVA). Identify significant linear, quadratic, and interaction terms [10].

Response Surface Visualization: Generate contour and 3D surface plots to visualize the relationship between factors and responses, identifying regions of optimal performance [10].

Optimization and Verification: Use desirability functions or numerical optimization to identify optimal factor settings. Conduct confirmation experiments at predicted optimal conditions to validate model predictions [14].
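When several responses must be balanced, a desirability function converts each response to a 0-1 score and combines the scores with a geometric mean. The sketch below implements the common one-sided forms for "larger is better" and "smaller is better" responses. The limits, targets, and response values are hypothetical and would be set from the assay requirements.

```python
from math import prod

def d_maximize(y, low, target, weight=1.0):
    """Desirability for a response to be maximized (0 at `low`, 1 at `target`)."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return ((y - low) / (target - low)) ** weight

def d_minimize(y, target, high, weight=1.0):
    """Desirability for a response to be minimized (1 at `target`, 0 at `high`)."""
    if y <= target:
        return 1.0
    if y >= high:
        return 0.0
    return ((high - y) / (high - target)) ** weight

def overall_desirability(scores):
    """Geometric mean of the individual desirabilities."""
    return prod(scores) ** (1 / len(scores))

# Hypothetical predicted responses at one candidate setting
d_sensitivity = d_maximize(75.0, low=50.0, target=100.0)   # signal, arbitrary units
d_response_time = d_minimize(20.0, target=10.0, high=30.0)  # seconds

overall = overall_desirability([d_sensitivity, d_response_time])
print(d_sensitivity, d_response_time, overall)
```

Because the scores are multiplied, a single unacceptable response (desirability 0) makes the overall desirability 0, which is usually the intended behavior when every specification must be met.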

Mixture Designs

Theoretical Foundations

Mixture designs represent a specialized class of experimental designs used when the response depends on the relative proportions of components in a mixture rather than their absolute amounts [3]. The fundamental constraint in mixture designs is that the sum of all component proportions must equal 100% (or 1.0) [3]. This constraint means that mixture components cannot be varied independently—changing the proportion of one component necessitates proportional adjustments to the others [3].

Unlike factorial designs where factors can be independently manipulated, mixture designs operate within a constrained experimental space that forms a simplex. For two components, this space is a straight line; for three components, it forms a triangle; and for four components, it creates a tetrahedron [3]. Common types of mixture designs include simplex-lattice designs, simplex-centroid designs, and extreme vertices designs, each appropriate for different experimental scenarios.
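To make the simplex geometry concrete, the sketch below enumerates a {q, m} simplex-lattice design, in which each of q components takes proportions 0, 1/m, ..., 1 and every blend sums to 1. The function name is illustrative, and exact fractions are used so that the mixture constraint can be checked without rounding error.

```python
from fractions import Fraction
from itertools import product

def simplex_lattice(q, m):
    """Return all blends of q components with proportions in steps of 1/m."""
    points = []
    for combo in product(range(m + 1), repeat=q):
        if sum(combo) == m:
            points.append(tuple(Fraction(c, m) for c in combo))
    return points

design = simplex_lattice(q=3, m=2)
for blend in design:
    print([str(x) for x in blend])
```

For three components and m = 2 this yields six blends: the three pure components (triangle vertices) and the three 50:50 binary blends (edge midpoints).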

The mathematical models for mixture designs differ from standard polynomial models because of the mixture constraint. Common models include:

- Linear: Y = Σ bᵢXᵢ

- Quadratic: Y = Σ bᵢXᵢ + Σ bᵢⱼXᵢXⱼ

- Special Cubic: Y = Σ bᵢXᵢ + Σ bᵢⱼXᵢXⱼ + Σ bᵢⱼₖXᵢXⱼXₖ

These models help understand how component proportions affect the response and identify optimal blend formulations.
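As a sketch of how such a model is used once fitted, the function below evaluates the quadratic mixture model for a given blend. The coefficients are hypothetical placeholders rather than fitted values, and the function rejects blends that violate the mixture constraint.

```python
from itertools import combinations

def predict_quadratic_mixture(x, linear, interaction):
    """Evaluate Y = sum(b_i * x_i) + sum(b_ij * x_i * x_j) for proportions x."""
    if abs(sum(x) - 1.0) > 1e-9:
        raise ValueError("component proportions must sum to 1")
    y = sum(b * xi for b, xi in zip(linear, x))
    for i, j in combinations(range(len(x)), 2):
        y += interaction[(i, j)] * x[i] * x[j]
    return y

# Hypothetical coefficients for a three-component blend
linear = [10.0, 6.0, 2.0]
interaction = {(0, 1): 8.0, (0, 2): 0.0, (1, 2): -4.0}

pure_first = predict_quadratic_mixture((1.0, 0.0, 0.0), linear, interaction)
binary_blend = predict_quadratic_mixture((0.5, 0.5, 0.0), linear, interaction)
print(pure_first, binary_blend)
```

In this form each linear coefficient is the predicted response of the pure component, and a positive interaction coefficient indicates that the blend performs better than a simple weighted average of its parts.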

Application Protocol for Biosensor Optimization

Protocol Title: Optimization of Biosensor Formulation Blends Using Mixture Design

Purpose: To determine optimal proportions of multiple components in biosensor formulations (e.g., electrode composites, immobilization matrices).


Step-by-Step Procedure:

Component Identification: Identify key components in the biosensor formulation that must sum to a constant total, such as the constituents of an electrode composite or an immobilization matrix.

Constraint Definition: Set upper and/or lower bounds for each component based on practical limitations or prior knowledge.

Design Selection: Choose an appropriate mixture design based on the number of components and experimental goals. For three components with upper and/or lower bounds, an extreme vertices design is often appropriate.

Experimental Matrix Generation: Create a mixture design matrix specifying the proportion of each component for each experimental run. The table below illustrates a constrained mixture design for a three-component biosensor electrode formulation:

Table 3: Mixture Design for Three-Component Biosensor Electrode Formulation

| Run Order | Carbon Nanotube (%) | Conductive Polymer (%) | Binder (%) | Response: Conductivity (S/m) | Response: Stability (days) |

|---|---|---|---|---|---|

| 1 | 70 | 20 | 10 | Measured Value | Measured Value |

| 2 | 60 | 30 | 10 | Measured Value | Measured Value |

| 3 | 50 | 40 | 10 | Measured Value | Measured Value |

| 4 | 60 | 20 | 20 | Measured Value | Measured Value |

| 5 | 50 | 30 | 20 | Measured Value | Measured Value |

| 6 | 40 | 40 | 20 | Measured Value | Measured Value |

| 7-10 | Center Points | Center Points | Center Points | Measured Values | Measured Values |
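
The six non-center runs in the table can be reproduced by enumerating blends that satisfy the mixture constraint on a coarse grid. The sketch below assumes a 10% grid step and bounds read off the table rows (conductive polymer 20-40%, binder 10-20%). The centroid it computes is only one plausible interpretation of the "Center Points" rows, which the table does not specify.

```python
from itertools import product

# Levels (in %) read off the table rows; the 10% step is an assumption.
polymer_levels = [20, 30, 40]
binder_levels = [10, 20]

runs = []
for binder, polymer in product(binder_levels, polymer_levels):
    cnt = 100 - polymer - binder  # mixture constraint: components sum to 100%
    runs.append((cnt, polymer, binder))

# Overall centroid of the enumerated blends (assumed meaning of "center point").
centroid = tuple(sum(run[i] for run in runs) / len(runs) for i in range(3))

for number, run in enumerate(runs, start=1):
    print(number, run)
print("centroid:", centroid)
```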

Formulation Preparation and Testing: Precisely prepare each formulation according to the design proportions and measure relevant performance metrics.

Model Fitting: Fit appropriate mixture models (linear, quadratic, or special cubic) to the experimental data. Evaluate model adequacy using statistical measures.

Optimization: Use contour plots (ternary plots for three components) and numerical optimization to identify component proportions that maximize desirable responses while meeting all specification constraints.

Comparative Analysis of Factorial Design Models

Selection Guide and Applications

Table 4: Comparative Analysis of Full Factorial, Central Composite, and Mixture Designs

| Design Characteristic | Full Factorial Design | Central Composite Design | Mixture Design |

|---|---|---|---|

| Primary Application | Factor screening and main effects analysis [3] | Response surface optimization and quadratic modeling [10] | Formulation optimization with proportional components [3] |

| Experimental Structure | All combinations of k factors at 2 levels (2^k runs) [3] | 2^k factorial + 2k axial points + center points [10] | Constrained proportions summing to 100% [3] |

| Model Type | First-order with interactions (linear) [3] | Second-order (quadratic) [10] | Special polynomials with mixture constraint [3] |

| Key Advantage | Identifies all main effects and interactions with minimal assumptions [3] | Models curvature and identifies optimal conditions precisely [10] | Handles component interdependence in formulations [3] |

| Key Limitation | Cannot model curvature within the factor range [3] | Requires more runs than factorial designs [10] | Limited to proportional mixture systems [3] |

| Typical Runs (k=3) | 8 [3] | 14-20 (with center points) [10] | 10-15 (depending on constraints) [3] |

| Biosensor Application Examples | Screening fabrication parameters (laser power, speed, focus) [12] | Optimizing biosensor sensitivity and detection limit [15] | Optimizing electrode composite formulations [13] |
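
To make the "Experimental Structure" and "Typical Runs" rows concrete, here is a small sketch that assembles a central composite design in coded units and counts its runs. The function name `central_composite`, the default rotatable axial distance (fourth root of the number of factorial points), and the choice of six center points are assumptions for illustration.

```python
from itertools import product

def central_composite(k, n_center=1, alpha=None):
    """Coded CCD: 2^k factorial points, 2k axial points, n_center center points."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable design with a full factorial core
    factorial = [list(point) for point in product((-1.0, 1.0), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(point)
    center = [[0.0] * k for _ in range(n_center)]
    return factorial + axial + center

design = central_composite(3, n_center=6)
print(len(design))  # 8 factorial + 6 axial + 6 center runs
```

For k = 3, the design without center points has 14 runs, and adding up to six center points gives the upper end of the range quoted in the table.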

Research Reagent Solutions for DoE Implementation

Table 5: Essential Research Reagents and Materials for Biosensor Optimization Studies

| Reagent/Material | Function in Biosensor Development | Application Context |

|---|---|---|

| Polyimide Films | Flexible substrate for laser-scribed graphene electrodes [12] | Fabrication of wearable biosensor platforms [12] |

| Silver Nitrate (AgNO₃) | Precursor for silver nanoparticle synthesis [14] | Signal enhancement in optical and electrochemical biosensors [14] |

| Plantago major Extract | Green reducing and capping agent for nanoparticle synthesis [14] | Environmentally friendly nanomaterial preparation for sensing applications [14] |

| Reduced Graphene Oxide (rGO) | High-surface-area conductive nanomaterial [13] | Electrode modification for enhanced electron transfer [13] |

| K₃Fe(CN)₆ | Electrochemical redox probe [12] | Electrode characterization and performance evaluation [12] |

| Specific Antibodies/Aptamers | Biorecognition elements [13] | Molecular recognition for pathogen or biomarker detection [13] |

| Nafion Polymer | Permselective membrane [13] | Interference rejection in complex samples [13] |

| Screen-Printed Electrodes | Disposable sensor platforms [13] | Point-of-care biosensor development [13] |

Full Factorial, Central Composite, and Mixture Designs provide complementary approaches to addressing different stages and types of optimization challenges in biosensor development. Full factorial designs offer an efficient strategy for initial factor screening, Central Composite Designs enable precise modeling of nonlinear response surfaces, and Mixture Designs address the unique constraints of formulation optimization. The sequential application of these methodologies—beginning with screening experiments and progressing to detailed optimization—represents a powerful framework for advancing biosensor performance while conserving resources. As biosensing technologies evolve toward increasingly complex multiparameter systems, the systematic application of these factorial design models will be essential for developing robust, high-performance biosensors suitable for clinical diagnostics, environmental monitoring, and food safety applications.

The performance and reliability of biosensors are governed by a complex interplay of physicochemical and biological factors, making the understanding of their interactions an unavoidable and critical challenge in device development. A biosensor's operational profile—encompassing its sensitivity, selectivity, stability, and reproducibility—is not determined by isolated parameters but rather by the multifactorial relationships between its constituent elements. These interactions occur between the biological recognition element (e.g., enzyme, antibody, aptamer), the transducer platform (e.g., electrochemical, optical), and the target analyte within a specific sample matrix. The optimization bottleneck in biosensor development frequently arises from unanticipated interactions between seemingly independent variables, such as the impact of surface chemistry on bioreceptor orientation and function, or the influence of nanomaterial properties on signal transduction efficiency.

Failure to systematically address these interactions during the design and fabrication phases inevitably leads to suboptimal performance in real-world applications. For instance, a biosensor optimized for buffer solutions may demonstrate significantly compromised functionality in complex biological matrices like blood or urine due to non-specific adsorption (NSA) and fouling phenomena [16]. Similarly, the mechanical mismatch between rigid sensor components and soft biological tissues creates interfacial stress concentrations that undermine long-term stability and signal fidelity in wearable and implantable devices [17]. These challenges necessitate a paradigm shift from one-factor-at-a-time (OFAT) experimentation to structured multivariate approaches that can efficiently elucidate interaction effects and identify optimal operational windows. This application note provides a structured framework for investigating, quantifying, and controlling critical factor interactions throughout the biosensor development pipeline, with particular emphasis on design of experiments (DoE) methodologies tailored for biosensor optimization.

Critical Factor Interactions in Biosensor Systems

Material-Biological Interface Interactions

The interface where synthetic materials meet biological systems represents a primary domain of critical factor interactions in biosensors. At this junction, multiple factors converge to determine overall device performance. Surface energy of substrate materials directly influences bioreceptor adsorption kinetics and conformation, ultimately affecting binding affinity and specificity. Concurrently, the mechanical modulus of device components must harmonize with target tissues to minimize inflammatory responses and maintain signal stability during long-term implantation or wear [17]. Research demonstrates that devices with engineered tissue-like mechanical properties significantly reduce immune responses and improve chronic stability through enhanced biocompatibility profiles.

The nanoscale architecture of transducer surfaces introduces another dimension of complexity, where porosity, roughness, and functional group density collectively govern molecular accessibility and binding efficiency. For instance, nanostructured composites incorporating highly porous gold with polyaniline and platinum nanoparticles have demonstrated exceptional glucose sensing performance (95.12 ± 2.54 µA mM−1 cm−2 sensitivity) due to optimal interaction between surface morphology and enzymatic activity [18]. Similarly, the application of polydopamine coatings—inspired by mussel adhesion proteins—provides a versatile platform for surface modification that improves biocompatibility and functionalization while modulating interfacial interactions with biological systems [18]. These examples underscore how deliberate engineering of material-biological interfaces can harness factor interactions to enhance biosensor performance.

Transduction-Bioreceptor Integration Challenges

The integration of biological recognition elements with signal transduction mechanisms represents another critical interaction domain where multiple factors converge. The orientation and density of immobilized bioreceptors (antibodies, aptamers, enzymes) directly influence both binding kinetics and the resulting signal magnitude. For electrochemical biosensors, the distance-dependent electron transfer between redox centers and electrode surfaces creates a fundamental interaction between bioreceptor placement and transduction efficiency. Recent advances in SERS-based immunoassays utilizing Au-Ag nanostars demonstrate how precisely engineered plasmonic properties can enhance Raman scattering signals through optimal interaction with vibrational modes of target biomarkers like α-fetoprotein [18].

The rise of multi-functional bioinks for 3D-bioprinted biosensors further illustrates the complexity of transduction-bioreceptor integration. These advanced materials must simultaneously maintain bioreceptor viability, provide electrical conductivity, and enable analyte diffusion—requirements that often present competing design constraints [19]. The development of stimuli-responsive and conductive bioinks represents efforts to balance these interacting factors by creating environments where biological and transduction functions coexist synergistically. Additionally, the incorporation of organic electrochemical transistors (OECTs) based on PEDOT:PSS in ultrathin flexible platforms demonstrates how material selection can optimize interactions between transistor operation and biomolecular detection, achieving high transconductance (>400 mS) while maintaining conformal contact with biological tissues [17].

Table 1: Critical Factor Interactions in Biosensor Systems

| Interaction Domain | Key Interacting Factors | Impact on Performance | Mitigation Strategies |

|---|---|---|---|

| Material-Biological Interface | Surface energy, Mechanical modulus, Nanotopography | Bioreceptor functionality, Non-specific adsorption, Inflammatory response | Polydopamine coatings [18], Tissue-like soft materials [17], Ultraflexible substrates [17] |

| Transduction-Bioreceptor Integration | Immobilization density, Bioreceptor orientation, Electron transfer distance | Binding affinity, Signal-to-noise ratio, Detection limit | Anisotropic nanomaterials [18], Site-specific immobilization, Conducting bioinks [19] |

| Sample Matrix-Device Interface | Ionic strength, Interfering species, Fouling agents | Sensitivity, Specificity, Operational stability | Anti-fouling coatings [16], Microfluidic separation, Selective membranes |

Quantitative Analysis of Factor Interactions: Experimental Data

Systematic investigation of factor interactions requires quantitative assessment of their effects on critical performance parameters. The following data, compiled from recent biosensor studies, illustrate the magnitude and direction of these interactions across different biosensor platforms.

Table 2: Quantitative Analysis of Factor Interactions in Representative Biosensor Platforms

| Biosensor Platform | Interacting Factors | Performance Metric | Optimal Range/Interaction Effect | Reference |

|---|---|---|---|---|

| SERS Immunoassay (α-fetoprotein) | Nanostar concentration (centrifugation time: 10-60 min), Antibody concentration | Limit of Detection (LOD) | LOD: 16.73 ng/mL; Signal intensity scaled with nanostar content | [18] |

| Glucose Sensor (Enzyme-free) | Porous gold structure, Polyaniline, Platinum nanoparticles | Sensitivity | 95.12 ± 2.54 µA mM−1 cm−2 in interstitial fluid | [18] |

| THz SPR Biosensor | Graphene conductivity, External magnetic field, Prism configuration | Phase Sensitivity | 3.1043×10⁵ deg RIU⁻¹ (liquid), 2.5854×10⁴ deg RIU⁻¹ (gas) | [18] |

| OECT Bioelectronics | PEDOT:PSS thickness (≤5 μm), Substrate flexibility (parylene-C), Channel structure | Transconductance, Signal Quality | >400 mS transconductance; High-quality ECG, EOG, EMG signals | [17] |

The data reveal several important patterns regarding factor interactions in biosensor systems. First, the relationship between nanomaterial properties and sensing performance often follows non-linear trends, requiring multidimensional optimization. For instance, in SERS-based platforms, the plasmonic enhancement factors depend critically on the sharpness, composition, and distribution of metallic nanostructures [18]. Second, the integration of flexible electronics with biological tissues involves competing demands between mechanical compliance and electrical performance, with ultrathin device geometries (1-5 μm) enabling optimal balance through reduced bending stiffness and conformal contact [17]. Third, the application of external modulation strategies, such as magnetic field tuning of graphene conductivity in THz SPR sensors, demonstrates how dynamic control can enhance sensitivity by exploiting specific factor interactions [18].

Experimental Protocols for Investigating Factor Interactions

Protocol: Two-Stage Optimization for Electrochemical Biosensor Fabrication

Objective: Systematically optimize multiple interacting factors in electrochemical biosensor fabrication to maximize sensitivity and minimize fouling in complex matrices.

Materials and Reagents:

- Electrode substrates: Glassy carbon, Gold, or FTO electrodes (3 mm diameter)

- Nanomaterial modifiers: Graphene oxide dispersion (2 mg/mL), CNT suspension (1 mg/mL), Au nanoparticles (10 nm, 0.1 mM)

- Bioreceptors: Target-specific aptamers (100 μM stock) or antibodies (1 mg/mL)

- Crosslinkers: EDC/NHS mixture (400 mM/100 mM), glutaraldehyde (2.5% v/v)

- Blocking agents: Bovine serum albumin (10 mg/mL), casein (5 mg/mL), PEG-thiol (1 mM)

- Electrochemical probes: Ferricyanide/ferrocyanide (5 mM each in PBS), methylene blue (1 mM)

Stage 1: Transducer Surface Optimization

- Electrode pretreatment: Polish electrodes with 0.05 μm alumina slurry, rinse with DI water, and perform electrochemical cleaning via cyclic voltammetry (CV) in 0.5 M H₂SO₄ (-0.2 to 1.5 V, 10 cycles).

- Nanomaterial modification: Drop-cast 10 μL of nanomaterial suspension and dry under ambient conditions. Optimize loading density using I-V characterization.

- Electrochemical characterization: Perform electrochemical impedance spectroscopy (EIS) in 5 mM Fe(CN)₆³⁻/⁴⁻ (0.1-100,000 Hz, 10 mV amplitude) and CV at 50 mV/s. Calculate electron transfer rate (kₑₜ).

- Factor interaction analysis: Using a full factorial design, investigate interactions between nanomaterial type, deposition method, and surface charge. Model response surfaces for kₑₜ and double-layer capacitance.

Stage 2: Bioreceptor Integration and Anti-fouling Strategies

- Surface functionalization: Apply oxygen plasma treatment (50 W, 1 min) to introduce carboxyl groups on nanomaterial surfaces.

- Immobilization optimization: Test EDC/NHS (2h, RT) versus glutaraldehyde (1h, RT) crosslinking. Vary bioreceptor concentration (0.1-10 μM) and incubation time (1-16h).

- Blocking strategy evaluation: Compare BSA (2h), casein (1h), and PEG-thiol (4h) for minimizing non-specific adsorption.

- Performance validation: Measure dose-response in buffer and spiked serum samples. Quantify signal reduction in serum versus buffer to assess fouling effects.

Data Analysis: Fit response surfaces for sensitivity, LOD, and fouling index. Identify regions where multiple performance metrics are simultaneously optimized.
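
One common way to locate conditions where several metrics are acceptable at once is a desirability function, which maps each response to a 0-1 score and combines the scores by a geometric mean. The sketch below is an illustration only: the protocol does not prescribe this method, and the candidate conditions, limits, and targets are hypothetical values rather than measured results.

```python
import numpy as np

def d_maximize(y, low, target, weight=1.0):
    """Score for a larger-is-better response: 0 at `low`, 1 at `target` and above."""
    return float(np.clip((y - low) / (target - low), 0.0, 1.0) ** weight)

def d_minimize(y, target, high, weight=1.0):
    """Score for a smaller-is-better response: 1 at `target` and below, 0 at `high`."""
    return float(np.clip((high - y) / (high - target), 0.0, 1.0) ** weight)

def overall_desirability(scores):
    """Geometric mean, so a single unacceptable response gives an overall 0."""
    scores = np.asarray(scores, dtype=float)
    return float(np.prod(scores) ** (1.0 / scores.size))

# Hypothetical candidates: (sensitivity, LOD, fouling index).
candidates = {"A": (80.0, 5.0, 0.35), "B": (60.0, 2.0, 0.10), "C": (95.0, 8.0, 0.50)}

results = {}
for name, (sensitivity, lod, fouling) in candidates.items():
    scores = [
        d_maximize(sensitivity, low=50.0, target=100.0),
        d_minimize(lod, target=1.0, high=10.0),
        d_minimize(fouling, target=0.0, high=0.5),
    ]
    results[name] = overall_desirability(scores)

best = max(results, key=results.get)
print(results, best)
```

The geometric mean is a deliberate choice here: candidate C has the highest sensitivity but an unacceptable fouling index, so its overall score collapses to zero.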

Protocol: Mechanical-Electrical Co-Optimization for Flexible Biosensors

Objective: Identify optimal conditions balancing mechanical compliance and electrical performance for flexible biosensors.

Materials and Reagents:

- Substrates: Parylene-C, Polyimide, PDMS, Ecoflex

- Conductive materials: PEDOT:PSS, Silver nanowires (AgNWs), Graphene ink

- Encapsulation: Silicone elastomer, Parylene-C, SU-8

- Characterization equipment: Profilometer, Universal mechanical tester, Semiconductor analyzer, Electrochemical workstation

Procedure:

Substrate selection and fabrication:

- Spin-coat or deposit flexible substrates at varying thicknesses (1-100 μm)

- Pattern electrode structures using photolithography or printing techniques

- Apply conductive layers via spin-coating, evaporation, or transfer methods

Mechanical characterization:

- Measure elastic modulus via nanoindentation or tensile testing

- Perform cyclic bending tests (1000+ cycles) at various radii (5-20 mm)

- Quantify adhesion strength using peel tests or tape tests

Electrical performance assessment:

- Measure sheet resistance and conductivity before/after mechanical stress

- Perform CV and EIS in physiological buffer

- Record signal-to-noise ratio for target biomarkers

Stability testing:

- Immerse devices in PBS (pH 7.4) at 37°C for extended periods

- Monitor electrical performance and mechanical integrity over time

- Assess biocompatibility through cell culture assays

Experimental Design:

- Use a Box-Behnken design with three factors: substrate thickness, conductive material concentration, and encapsulation thickness

- Response variables: sheet resistance after bending, signal-to-noise ratio, delamination probability

- Build predictive models for device lifetime under operational conditions
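
A three-factor Box-Behnken design can be written out directly in coded units: each pair of factors is run at its four low/high combinations while the third factor is held at its midpoint, and center points are added. The sketch below assumes three center points, and the function name `box_behnken_3` is hypothetical. The mapping of coded columns to substrate thickness, conductive material concentration, and encapsulation thickness is left to the user.

```python
from itertools import combinations, product

def box_behnken_3(n_center=3):
    """Coded three-factor Box-Behnken design: 12 edge midpoints plus center points."""
    runs = []
    for i, j in combinations(range(3), 2):
        for a, b in product((-1, 1), repeat=2):
            point = [0, 0, 0]
            point[i], point[j] = a, b
            runs.append(point)
    runs += [[0, 0, 0] for _ in range(n_center)]
    return runs

design = box_behnken_3()
for run in design:
    print(run)
```

No run places all three factors at an extreme simultaneously, which can be useful when the corner combinations are impractical to fabricate.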

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Research Reagents for Investigating Biosensor Factor Interactions

| Reagent Category | Specific Examples | Function in Biosensor Development | Key Considerations |

|---|---|---|---|

| Nanomaterial Modifiers | Graphene oxide, Carbon nanotubes, Au/Ag nanoparticles, MXenes | Enhance electron transfer, provide functional groups, increase surface area | Purity, size distribution, dispersion stability, functional group density |

| Bioreceptors | Monoclonal antibodies, DNA aptamers, engineered enzymes, molecularly imprinted polymers | Molecular recognition, target binding specificity | Affinity, stability, orientation, labeling efficiency, lot-to-lot consistency |

| Crosslinkers | EDC/NHS, glutaraldehyde, sulfo-SMCC, dopamine-based adhesives | Immobilize bioreceptors, create stable interfaces | Specificity, reaction efficiency, spacer arm length, side reactions |

| Anti-fouling Agents | PEG derivatives, zwitterionic polymers, bovine serum albumin, casein | Reduce non-specific binding, improve signal-to-noise | Compatibility with bioreceptors, stability, thickness, charge characteristics |

| Conductive Polymers | PEDOT:PSS, polyaniline, polypyrrole, multicomponent bioinks [19] | Facilitate signal transduction, provide flexible conductors | Conductivity, processability, biocompatibility, environmental stability |

| Substrate Materials | Parylene-C, polyimide, PDMS, thermoplastic polyurethanes | Provide mechanical support, enable flexibility | Young's modulus, surface energy, chemical resistance, biocompatibility |

Statistical Framework for Analyzing Factor Interactions

Experimental Design and Data Analysis Workflow

Implementing a structured approach to experimental design and data analysis is essential for efficiently elucidating factor interactions in biosensor development. The workflow proceeds from factor selection and design choice through response measurement, data analysis, and validation, as detailed in the guidelines that follow.

Implementation Guidelines for DoE in Biosensor Optimization

The effective implementation of design of experiments (DoE) for investigating biosensor factor interactions requires careful planning and execution:

Factor Selection and Range Definition:

- Identify 3-5 critical factors based on prior knowledge and screening experiments

- Set realistic ranges that cover both current operating conditions and potential improvements

- Include both continuous (e.g., concentration, temperature, time) and categorical (e.g., material type, immobilization method) factors

Experimental Design Selection:

- Use full factorial designs for 2-3 factors to capture all possible interactions

- Implement response surface methodologies (Box-Behnken, Central Composite) for optimization

- Consider D-optimal designs when facing constraints on factor combinations

Response Measurement:

- Measure multiple responses simultaneously (sensitivity, selectivity, stability, etc.)

- Include both primary performance metrics and secondary characterization data

- Replicate center points to estimate experimental error and to check model adequacy (e.g., curvature or lack of fit)

Data Analysis and Interpretation:

- Perform ANOVA to identify statistically significant factors and interactions

- Calculate interaction effects and visualize with interaction plots

- Develop empirical models linking factors to responses

- Use optimization algorithms to identify optimal factor combinations
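
The effect calculation mentioned in the data analysis steps can be sketched for a coded two-level, three-factor design. Each effect is the mean response at the high level of a contrast minus the mean at its low level. The response below is synthetic and noise-free, generated from made-up coefficients so that the expected effects are known. The factor labels A, B, and C are placeholders.

```python
import numpy as np
from itertools import product

# Coded 2^3 full factorial design; columns are factors A, B, C.
design = np.array(list(product((-1, 1), repeat=3)), dtype=float)
A, B, C = design.T

# Synthetic response with main effects of A and B and an A*B interaction.
y = 50 + 5 * A + 3 * B + 4 * A * B

def effect(contrast, response):
    """Mean response at contrast = +1 minus mean response at contrast = -1."""
    return float(contrast @ response) / (len(response) / 2)

effects = {
    "A": effect(A, y), "B": effect(B, y), "C": effect(C, y),
    "AB": effect(A * B, y), "AC": effect(A * C, y), "BC": effect(B * C, y),
    "ABC": effect(A * B * C, y),
}
print(effects)
```

Each effect is twice the corresponding regression coefficient in coded units, because moving from -1 to +1 spans two coded units.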

Validation and Implementation:

- Confirm model predictions with confirmation experiments

- Establish control strategies for critical process parameters

- Document design space boundaries for regulatory submissions

This structured approach enables efficient exploration of the complex factor interactions that inevitably arise in biosensor fabrication and operation, transforming this challenge from an unavoidable obstacle into a manageable development phase.

The difficulty of systematically optimizing biosensors remains a significant obstacle to their widespread adoption as dependable point-of-care tests [3]. Defining the experimental domain (the carefully selected factors, their experimental ranges, and the measured responses) is the foundational step in the design of experiments (DoE) framework. This strategic approach moves beyond traditional one-variable-at-a-time (OFAT) methodologies, which often fail to detect interactions between variables and may not identify true optimum conditions [3] [20] [21]. A well-defined experimental domain enables researchers to construct a data-driven model that connects variations in input variables to biosensor performance outputs, facilitating a more efficient and statistically reliable optimization process [3]. This protocol provides a comprehensive framework for defining the experimental domain within biosensor optimization projects, complete with practical applications across diverse biosensor technologies.

Theoretical Foundation: Key Concepts in Experimental Design

Fundamental Principles of Domain Selection

The experimental domain encompasses the multidimensional space defined by all factors under investigation and their respective ranges. Within this domain, experimental points are arranged according to a specific design (e.g., factorial, central composite) to efficiently explore how factor variations affect the response [3]. The process begins by identifying all factors that may have a causal relationship with the targeted output signal, referred to as the response [3]. This systematic approach provides comprehensive, global knowledge of the optimization space, offering maximum information for optimization purposes while considering potential interactions between variables [3].

Advantages Over Traditional Approaches

Traditional OFAT approaches optimize individual variables independently, a straightforward yet problematic method particularly when dealing with interacting variables [3]. The conditions established for sensor preparation and operation under OFAT may not represent the true optimum, hindering practical applications [3]. In contrast, the DoE approach accounts for interactions among variables, which occur when an independent variable exerts varying effects on the response based on the values of another independent variable [3]. Such interactions consistently elude detection in OFAT approaches but are efficiently captured through proper experimental domain definition and DoE application [3] [21].
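
A toy example can illustrate how an interaction hides the optimum from an OFAT search. The four response values below are hypothetical and chosen so that raising either factor alone lowers the response while raising both together improves it. The `ofat_search` helper is an illustrative assumption, not a procedure taken from the cited sources.

```python
# Hypothetical responses on a coded 2x2 grid: keys are (factor A, factor B).
response = {(-1, -1): 50.0, (1, -1): 40.0, (-1, 1): 45.0, (1, 1): 90.0}

def ofat_search(response, start=(-1, -1)):
    """Vary one factor at a time, keeping a change only if the response improves."""
    best = list(start)
    for i in range(len(best)):
        for level in (-1, 1):
            trial = best.copy()
            trial[i] = level
            if response[tuple(trial)] > response[tuple(best)]:
                best = trial
    return tuple(best)

ofat_best = ofat_search(response)
factorial_best = max(response, key=response.get)

# Interaction effect AB from the full 2x2 factorial.
interaction_ab = ((response[(-1, -1)] + response[(1, 1)])
                  - (response[(1, -1)] + response[(-1, 1)])) / 2

print(ofat_best, factorial_best, interaction_ab)
```

Starting from the low/low baseline, the OFAT search stays where it began, whereas the four-run factorial both finds the high/high optimum and quantifies the interaction responsible.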

Protocol: Defining Your Experimental Domain

Step 1: Factor Identification and Classification

Objective: Identify and categorize all potential factors that may influence biosensor performance.

- Assemble Multidisciplinary Team: Gather experts from biology, chemistry, engineering, and statistics to ensure comprehensive factor identification.

- Conduct Brainstorming Session: List all potential factors using techniques like mind mapping or process flow analysis. Consider factors across these categories:

- Biological Components: Biorecognition element concentration, immobilization method, incubation time, buffer composition.

- Transducer Elements: Electrode geometry, material composition, surface modification parameters.

- Detection Conditions: Temperature, pH, ionic strength, flow rate, detection time.

- Classify Factors: Categorize each factor as continuous (e.g., concentration, temperature) or categorical (e.g., buffer type, electrode material).

- Prioritize Factors: Use prior knowledge and preliminary experiments to identify the most influential factors. A Pareto analysis can help focus resources on the critical few.

Step 2: Establishing Factor Ranges

Objective: Define appropriate minimum and maximum levels for each continuous factor and select specific alternatives for categorical factors.

- Literature Review: Examine published research on similar biosensor systems to establish baseline ranges.

- Preliminary Experiments: Conduct univariate scouting experiments to determine feasible ranges where effects are expected. Avoid ranges that produce insignificant responses or physically impossible conditions.

- Consider Practical Constraints: Account for limitations such as reagent solubility, detector saturation, and physiological relevance when setting ranges.

- Document Rationale: Record the justification for each selected range to maintain methodological transparency and support future optimization cycles.
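
Once ranges are fixed, factor levels are usually expressed in coded units (-1 at the low limit, +1 at the high limit) so that effects of different factors are comparable. The helper names below are hypothetical, and the 60-300 s accumulation time range is used purely as an example.

```python
def to_coded(value, low, high):
    """Map an actual factor value onto the coded scale (-1 = low, +1 = high)."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return (value - center) / half_range

def to_actual(coded, low, high):
    """Map a coded level back to actual units."""
    return (high + low) / 2 + coded * (high - low) / 2

# Example: accumulation time with an assumed range of 60-300 s.
print(to_coded(180, 60, 300))   # midpoint of the range
print(to_actual(-1, 60, 300))   # low limit
print(to_actual(0.5, 60, 300))  # halfway between midpoint and high limit
```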

Step 3: Selection and Definition of Response Variables

Objective: Identify quantifiable metrics that accurately reflect biosensor performance.

- Identify Critical Performance Metrics: Select responses that directly correlate with biosensor efficacy. Common responses in biosensor optimization include:

- Sensitivity: Signal change per unit concentration change.

- Limit of Detection (LOD): Lowest detectable analyte concentration.

- Dynamic Range: Concentration range over which the biosensor responds.

- Selectivity: Ability to distinguish target from interferents.

- Reproducibility: Measurement precision under identical conditions.

- Ensure Measurability: Confirm that selected responses can be quantified reliably with available instrumentation.

- Define Measurement Protocol: Standardize procedures for response measurement to minimize variability.

- Prioritize Responses: If multiple responses are measured, determine their relative importance for eventual multi-objective optimization.

Step 4: Experimental Design Selection and Domain Mapping

Objective: Select an appropriate experimental design that efficiently explores the defined domain.

- Assume Linear Effects: For initial screening, employ two-level full factorial designs, which require 2^k experiments, where k is the number of factors studied [3]. Because the run count doubles with each added factor, full designs are practical mainly when k is small.

- Account for Curvature: If nonlinear responses are suspected, use response surface methodologies like central composite designs that augment initial factorial designs with additional points for estimating quadratic terms [3].

- Consider Fractional Factorials: When facing resource constraints with many factors, employ fractional factorial designs to estimate main effects and lower-order interactions with fewer experiments.

- Randomize Run Order: Randomize the sequence of experimental runs to mitigate the effects of lurking variables and systematic errors.
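
The design choices in this step can be sketched in a few lines: a two-level full factorial, a half fraction built by setting the last factor equal to the product of the others, and a randomized run order. The generator choice and the function names are assumptions for illustration. Other generators give different aliasing patterns, and for k = 3 this half fraction aliases main effects with two-factor interactions.

```python
import random
from itertools import product

def full_factorial(k):
    """All 2^k combinations of coded levels -1 and +1."""
    return [tuple(run) for run in product((-1, 1), repeat=k)]

def half_fraction(k):
    """2^(k-1) runs: the last factor is the product of the first k-1 factors."""
    runs = []
    for base in full_factorial(k - 1):
        last = 1
        for level in base:
            last *= level
        runs.append(base + (last,))
    return runs

def randomized(runs, seed=None):
    """Return the runs in random order; fix `seed` to reproduce the order."""
    rng = random.Random(seed)
    order = list(runs)
    rng.shuffle(order)
    return order

full = full_factorial(4)
half = half_fraction(4)
run_sheet = randomized(half, seed=1)
print(len(full), len(half))
```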

Step 5: Iterative Refinement

Objective: Use initial results to refine the experimental domain for subsequent optimization cycles.

- Analyze Initial Data: Identify insignificant factors that can be eliminated or fixed in subsequent rounds.

- Adjust Ranges: Narrow or shift ranges based on initial results to focus on promising regions of the experimental domain.

- Modify Model: Upgrade from linear to quadratic models if curvature is detected in the response.

- Allocate Resources: Do not allocate more than 40% of available resources to the initial set of experiments, reserving the majority for iterative refinement based on initial findings [3].

Application Examples Across Biosensor Technologies

Electrochemical Biosensors

In the optimization of an in-situ film electrode for heavy metal detection, researchers employed a fractional factorial design using five factors: mass concentrations of Bi(III), Sn(II), and Sb(III), accumulation potential, and accumulation time [20]. The experimental domain was defined with specific ranges for each factor, and the response was evaluated using a combination of analytical parameters including limit of quantification, linear concentration range, sensitivity, accuracy, and precision [20]. This approach enabled simultaneous consideration of multiple performance metrics, revealing factor interactions that would have been missed in OFAT approaches.

Optical Biosensors

For a photonic crystal fiber-based surface plasmon resonance (PCF-SPR) biosensor, machine learning and explainable AI were used to identify critical design parameters [15]. The experimental domain included factors such as wavelength, analyte refractive index, gold thickness, and pitch, with sensitivity and resolution as primary responses [15]. SHAP analysis revealed that these factors were the most influential on sensor performance, demonstrating how advanced statistical techniques can guide experimental domain definition in complex systems.

Transcription Factor-Based Biosensors

In developing a TphR-based terephthalate biosensor, researchers simultaneously engineered the core promoter and operator regions of the responsive promoter [22]. The experimental domain encompassed genetic sequence variations, and responses included dynamic range, sensitivity, and steepness of the biosensor response [22]. This approach enabled efficient sampling of complex sequence-function relationships, demonstrating how experimental domain definition applies to genetic circuit optimization.

Immunosensor Optimization

For a quantitative sandwich ELISA, researchers applied full factorial designs in successive steps of the assay, optimizing factors such as antibody concentration, buffer composition, incubation temperature, and plate type [21]. The experimental domain was refined iteratively, with each round incorporating the best combination of factors and levels from the previous stage. This stepwise approach to domain definition resulted in a 20-fold improvement in analytical sensitivity and a significant reduction in the lower limit of quantification from 156.25 to 9.766 ng/mL [21].

Figure 1: Experimental domain definition involves a systematic, iterative process from factor identification through refinement based on model adequacy assessment.

Experimental Factors and Responses in Biosensor Optimization

Table 1: Common Factors and Ranges in Biosensor Experimental Domains

| Biosensor Type | Factor Category | Specific Factors | Typical Ranges | Response Variables Measured |

|---|---|---|---|---|

| Electrochemical [20] | Biological Layer | Receptor concentration | 0.1-10 mg/mL | Sensitivity, LOD, Selectivity |

| Electrochemical [20] | Transducer | Electrode material, Geometry | 3-5 μm gap for interdigitated electrodes (IDE) [23] | Signal-to-noise ratio, Reproducibility |

| Electrochemical [20] | Detection | Accumulation potential, Time | -1.2 to -0.8 V, 60-300 s [20] | Peak current, Linear range |

| Optical [15] | Physical Structure | Gold thickness, Pitch | 30-50 nm, 1.5-2.5 μm [15] | Wavelength sensitivity, Resolution |

| Optical [15] | Detection | Wavelength, Analyte RI | 0.6-1.2 μm, 1.31-1.42 [15] | Amplitude sensitivity, FOM |

| Whole-Cell [22] [24] | Genetic Circuit | Promoter strength, RBS | Varies by system | Dynamic range, Signal steepness |

| Whole-Cell [22] [24] | Expression | TF concentration, Inducer | 0.1-10 μM | Response time, Sensitivity |

| Immunosensors [21] | Assay Conditions | Antibody concentration, Incubation time | 1-10 μg/mL, 30-120 min [21] | LLOQ, Analytical sensitivity |

| Immunosensors [21] | Surface Chemistry | Coating buffer, Plate type | Carbonate vs. PBS [21] | Background signal, Specificity |
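
Factorial designs are usually written in coded units, with -1 for the low level and +1 for the high level of each factor. The short sketch below shows one way to convert between coded and real units, using the gold thickness and pitch ranges from the optical row of the table as example inputs. The function and variable names are illustrative assumptions.

```python
def coded_to_real(coded, low, high):
    """Map a coded level (-1 = low, 0 = center, +1 = high) to real units."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return center + coded * half_range

def real_to_coded(value, low, high):
    """Map a value in real units back to the coded scale."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return (value - center) / half_range

# Example domain taken from the optical biosensor ranges above.
domain = {
    "gold_thickness_nm": (30.0, 50.0),
    "pitch_um": (1.5, 2.5),
}

for name, (low, high) in domain.items():
    levels = [coded_to_real(c, low, high) for c in (-1, 0, 1)]
    print(name, levels)
```

Working in coded units puts all factors on the same scale, so the fitted coefficients can be compared directly regardless of the original units.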

Table 2: Examples of Response Variables in Biosensor Optimization

| Response Variable | Definition | Measurement Method | Importance in Biosensor Performance |

|---|---|---|---|

| Sensitivity [15] | Signal change per unit concentration change | Slope of calibration curve | Determines ability to detect small concentration changes |

| Limit of Detection (LOD) | Lowest detectable analyte concentration | 3×standard deviation of blank/slope | Defines lowest measurable concentration |

| Dynamic Range [22] | Concentration range over which biosensor responds | Range of linear response in calibration | Determines applicability across concentration levels |

| Selectivity | Ability to distinguish target from interferents | Response ratio target vs. similar compounds | Ensures accuracy in complex samples |

| Reproducibility | Precision under identical conditions | Relative standard deviation (%RSD) | Determines reliability across repeated measurements |

| Response Time [24] | Time to reach stable signal | Time from exposure to stable reading | Critical for real-time monitoring applications |

| Linearity | Degree of proportional response | R² value of calibration curve | Affects quantification accuracy |
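
Several of the responses in the table follow directly from a calibration curve. The sketch below computes sensitivity (slope), linearity (R²), LOD (3 × standard deviation of the blank / slope), and %RSD with the Python standard library only. The calibration points, blank readings, and replicate values are invented purely for illustration, and the function names are assumptions.

```python
from statistics import mean, stdev

def linear_fit(x, y):
    """Ordinary least-squares line; returns (slope, intercept, r_squared)."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot

def limit_of_detection(blank_signals, slope):
    """LOD as 3 x sample standard deviation of the blank, divided by the slope."""
    return 3.0 * stdev(blank_signals) / slope

def percent_rsd(replicates):
    """Relative standard deviation of replicate measurements, in percent."""
    return 100.0 * stdev(replicates) / mean(replicates)

# Hypothetical calibration data (arbitrary concentration and signal units).
concentration = [0.0, 1.0, 2.0, 3.0, 4.0]
signal = [1.0, 3.0, 5.0, 7.0, 9.0]
blanks = [0.9, 1.0, 1.1]

slope, intercept, r_squared = linear_fit(concentration, signal)
lod = limit_of_detection(blanks, slope)
rsd = percent_rsd([9.0, 10.0, 11.0])
print(slope, intercept, r_squared, lod, rsd)
```

Because each response is a plain number, any of them can serve directly as the measured output of a factorial run.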

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for Biosensor Experimental Domain Definition

| Reagent/Material | Function in Experimental Domain | Application Examples | Considerations for Selection |

|---|---|---|---|

| Allosteric Transcription Factors [24] | Biological recognition element for genetic circuits | Whole-cell biosensors for metabolites | Ligand specificity, expression level, orthogonality |

| Monoclonal Antibodies [21] | Capture and detection elements for immunosensors | Sandwich ELISA for protein detection | Specificity, affinity, cross-reactivity, stability |

| Electrode Materials (Gold, Bismuth, Antimony) [20] [23] | Transducer surface for electrochemical detection | Heavy metal sensors, impedance biosensors | Conductivity, biocompatibility, fouling resistance |

| Buffer Components [21] | Maintain optimal biochemical conditions | All biosensor types | pH stability, ionic strength, compatibility with biological elements |

| Plasmid Vectors [22] [24] | Genetic backbone for circuit implementation | Transcription factor-based biosensors | Copy number, compatibility with host, selection markers |

| Signal Amplification Reagents (Enzyme conjugates, Protein G) [23] | Enhance detection signal | Immunosensors, optical detection | Turnover rate, stability, background signal |

| Surface Modification Reagents (SAMs, crosslinkers) | Immobilize biological recognition elements | All surface-based biosensors | Orientation control, density, stability, non-fouling properties |

Troubleshooting and Best Practices

Common Challenges in Domain Definition

- Overly Broad Ranges: Excessively wide factor ranges may miss subtle optimum regions and require more experimental runs to achieve sufficient resolution. Solution: Use preliminary experiments and literature data to set informed ranges.

- Ignoring Critical Factors: Omitting important factors limits model utility and may lead to suboptimal conditions. Solution: Conduct thorough process mapping and consult multidisciplinary experts during factor identification.

- Inadequate Response Selection: Responses that do not correlate with real-world performance metrics produce optima of little practical value. Solution: Align responses with the requirements of the intended application through stakeholder consultation.

- Resource Misallocation: Investing too heavily in initial designs leaves too few resources for refinement. Solution: Follow the 40% rule, committing no more than about 40% of the available resources to the initial experiments [3].

Best Practices for Effective Domain Definition

- Leverage Prior Knowledge: Utilize existing information from similar systems, literature, and preliminary experiments to inform factor selection and range setting.

- Embrace Iteration: View experimental domain definition as an iterative process rather than a one-time activity, with each cycle providing insights for refinement.

- Consider Practical Constraints: Account for technical limitations, budget constraints, and timeline requirements when defining the experimental domain.

- Document Thoroughly: Maintain detailed records of factor selection rationale, range justifications, and response measurement protocols to ensure reproducibility and support methodological decisions.

- Validate Models: Always confirm model adequacy through residual analysis and confirmation experiments before proceeding to optimization [3].
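
As a minimal illustration of the residual check recommended above, the sketch below fits a two-factor model with interaction, y = b0 + b1·x1 + b2·x2 + b12·x1·x2, to a duplicated 2² design and lists the residuals. The response values are invented for illustration. The shortcut used for the coefficients (averaging the signed responses) is valid only for balanced two-level designs in coded units; other designs would need a general least-squares fit.

```python
from math import sqrt

# Hypothetical duplicated 2x2 design: (x1, x2, response) in coded units.
runs = [
    (-1, -1, 10.0), (-1, -1, 12.0),
    (+1, -1, 20.0), (+1, -1, 22.0),
    (-1, +1, 14.0), (-1, +1, 16.0),
    (+1, +1, 40.0), (+1, +1, 42.0),
]

def fit_two_factor_model(runs):
    """Coefficients for a balanced two-level design via signed averages."""
    n = len(runs)
    return {
        "b0": sum(y for _, _, y in runs) / n,
        "b1": sum(x1 * y for x1, _, y in runs) / n,
        "b2": sum(x2 * y for _, x2, y in runs) / n,
        "b12": sum(x1 * x2 * y for x1, x2, y in runs) / n,
    }

def predict(coef, x1, x2):
    """Model prediction at a point given in coded units."""
    return coef["b0"] + coef["b1"] * x1 + coef["b2"] * x2 + coef["b12"] * x1 * x2

coef = fit_two_factor_model(runs)
residuals = [y - predict(coef, x1, x2) for x1, x2, y in runs]

# Residual standard deviation with n - p degrees of freedom (p = 4 coefficients).
residual_sd = sqrt(sum(r ** 2 for r in residuals) / (len(runs) - len(coef)))

print(coef)
print(residuals, residual_sd)
```

Residuals that scatter randomly around zero with no trend against run order or predicted value support the model; a confirmation run at the predicted optimum would then be compared with `predict(...)` at that point.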

Figure 2: Complex relationships between experimental factors and performance responses in biosensors, highlighting the importance of capturing factor interactions through proper experimental domain definition.

Defining the experimental domain through careful selection of factors, ranges, and response variables represents a critical first step in the systematic optimization of biosensors. This structured approach enables efficient exploration of the multi-dimensional parameter space while capturing interaction effects that traditional OFAT methodologies inevitably miss. The provided protocol, together with the illustrative examples across various biosensor platforms, offers researchers a practical framework for implementing these principles in their optimization projects. Proper experimental domain definition not only enhances optimization efficiency but also contributes to the development of more robust, reliable biosensors capable of meeting the demanding requirements of point-of-care diagnostics and other applications. Through iterative refinement and application of statistical principles, researchers can maximize information gain while conserving valuable resources, accelerating the development timeline for novel biosensing platforms.

From Theory to Bench: A Step-by-Step Protocol for Implementing Full Factorial Design

Pre-optimization and factor screening constitute a critical first phase in the biosensor development pipeline. This stage focuses on identifying the most influential genetic and environmental factors that dictate biosensor performance, such as sensitivity, dynamic range, and specificity. By employing structured preliminary experiments, researchers can efficiently allocate resources to the most significant variables in subsequent full-factorial optimization studies, ensuring a robust and effective final biosensor design [25]. This protocol outlines a detailed methodology for conducting these essential preliminary screens, using transcription factor (TF)-based biosensors as a primary example.

Factor Screening Case Studies

The following case studies illustrate the application of pre-optimization screens for different biosensor types, highlighting key performance metrics and the factors that influence them.

Table 1: Pre-Optimization of a Naringenin Biosensor Library. This study screened a library of FdeR-based biosensors in E. coli under different genetic and environmental contexts to identify optimal combinations for dynamic regulation [25].

| Factor Category | Specific Factors Screened | Performance Metrics Assessed | Key Screening Findings |

|---|---|---|---|

| Genetic Components | Promoters (P1, P3, P4), Ribosome Binding Sites (RBSs) | Normalized Fluorescence Output | Promoter P3 consistently produced the highest fluorescence output across various RBSs, media, and supplements [25]. |

| Environmental Conditions | Media (M0/M9, M2/SOB), Carbon Sources (S0/Glucose, S1/Glycerol, S2/Sodium Acetate) | Normalized Fluorescence Output | M9 medium (M0) yielded the highest signal; the carbon source Sodium Acetate (S2) significantly enhanced output compared to Glucose (S0) [25]. |

Table 2: Factor Screening for a TtgR-Based Flavonoid Biosensor. This research engineered the ligand-binding pocket of the TtgR transcription factor to develop biosensors with altered specificity and sensitivity [26].

| Factor Category | Specific Factors Screened | Performance Metrics Assessed | Key Screening Findings |

|---|---|---|---|

| Transcription Factor Mutations | TtgR ligand-binding pocket mutations (e.g., N110F, N110Y, V96S, H114N) | Specificity, Sensitivity, Quantitative Detection Accuracy | The N110F TtgR mutant enabled accurate quantification (>90% accuracy) of resveratrol and quercetin at 0.01 mM [26]. |

| Ligand Structure | Diverse flavonoids (e.g., naringenin, quercetin, phloretin) and resveratrol | Fluorescence Response | Biosensor response varied with ligand chemical structure, influenced by hydrogen bonding and van der Waals forces within the binding pocket [26]. |

Experimental Protocols

This section provides a detailed, step-by-step methodology for the construction, screening, and analysis of a TF-based biosensor library, as exemplified in the case studies.

Protocol: Construction of a Transcription Factor Biosensor Library

Objective: To assemble a combinatorial library of biosensor constructs by varying regulatory genetic elements [25] [26].

DNA Parts Preparation:

- Transcription Factor Module: Assemble a collection of genetic parts for the inducible expression of your transcription factor (e.g., FdeR, TtgR). This includes the promoters and ribosome binding sites (RBSs) that will be varied across the library.

- Reporter Module: Prepare a reporter construct where a TF-specific operator sequence (e.g., PttgABC, FdeR operator) controls the expression of a fluorescent reporter protein (e.g., enhanced Green Fluorescent Protein - eGFP) [25] [26].

Combinatorial Assembly:

- Use standard molecular biology techniques, such as restriction enzyme digestion and ligation or Golden Gate assembly, to pair every promoter-RBS combination from the TF module with the reporter module [26].

- Clone the assembled constructs into appropriate plasmid vectors with compatible origins of replication and antibiotic resistance markers. A common practice is to use a dual-plasmid system, with the TF on one plasmid and the reporter on another [26].

Transformation and Validation:

- Transform the assembled plasmid libraries into a suitable microbial chassis, typically E. coli BL21(DE3) or DH5α strains [26].

- Confirm successful assembly of the library by extracting plasmids from multiple colonies and verifying them via analytical digestion and DNA sequencing.

Protocol: High-Throughput Screening of Biosensor Performance

Objective: To characterize the dynamic response of biosensor variants to target ligands under different environmental conditions [25].

Cultivation and Induction:

- Inoculate deep-well plates containing a suitable growth medium (e.g., Lysogeny Broth - LB) with individual biosensor variants from the library [26].

- Grow the cultures to mid-exponential phase (OD₆₀₀ ≈ 0.5-0.6) under constant agitation in a controlled environment.

- Induce the biosensor response by adding a predetermined, saturating concentration of the target ligand (e.g., 400 μM naringenin for FdeR biosensors). Include negative control cultures that receive either no ligand or the solvent alone (e.g., DMSO) [25].

Contextual Screening:

- To assess environmental impact, grow and induce the same biosensor constructs in different media (e.g., M9, SOB) and with different carbon sources (e.g., Glucose, Glycerol, Sodium Acetate) [25].

Response Measurement:

- At regular intervals post-induction (e.g., hourly for 7 hours), measure both optical density (OD₆₀₀) and fluorescence (e.g., Ex/Em for eGFP: 488/510 nm) using a plate reader.

- Calculate the normalized fluorescence (Fluorescence/OD₆₀₀) for each variant and time point to account for differences in cell density.

Data Analysis:

- Plot the normalized fluorescence over time to visualize the dynamic response of each variant.

- Calculate key performance indicators, such as the maximum output signal and the response time, to identify the top-performing constructs for further optimization.
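
The response measurement and data analysis steps above can be sketched as follows. The time course is invented for illustration, the function names are assumptions, and the definition of response time as the first time point reaching 90% of the maximum normalized signal is one possible convention rather than the one used in [25].

```python
def normalized_fluorescence(fluorescence, od600):
    """Fluorescence divided by OD600 at each time point."""
    return [f / od for f, od in zip(fluorescence, od600)]

def response_time(times, signal, fraction=0.9):
    """First time at which the signal reaches `fraction` of its maximum (assumed convention)."""
    threshold = fraction * max(signal)
    for t, s in zip(times, signal):
        if s >= threshold:
            return t
    return None

# Hypothetical hourly plate-reader data for one biosensor variant.
times_h = [0, 1, 2, 3, 4, 5, 6, 7]
fluorescence = [100.0, 250.0, 600.0, 1500.0, 2500.0, 3300.0, 3850.0, 4300.0]
od600 = [0.5, 0.625, 0.75, 1.0, 1.25, 1.5, 1.75, 2.0]

norm = normalized_fluorescence(fluorescence, od600)
max_output = max(norm)
t_response = response_time(times_h, norm)

print(norm)
print(max_output, t_response)
```

Applying the same two functions to every well gives a table of maximum output and response time per variant and condition, which can then be ranked to select constructs for further optimization.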

Protocol: In Silico Analysis and Mutant Design

Objective: To use computational tools to understand ligand-TF interactions and guide the design of TFs with altered specificity [26].

Structural Modeling:

- Obtain or generate a 3D structural model of the wild-type transcription factor (e.g., TtgR) from a protein data bank or via homology modeling.

Ligand Docking:

- Perform molecular docking simulations to predict the binding poses and affinities of various target ligands (e.g., naringenin, quercetin, resveratrol) within the TF's binding pocket.

- Analyze the interactions (e.g., hydrogen bonds, hydrophobic contacts) that contribute to ligand binding and specificity [26].

Site-Directed Mutagenesis Design: