Advanced Signal Processing Techniques for Biosensor Baseline Drift Correction: From Foundations to AI Integration

Abstract

This article provides a comprehensive overview of signal processing techniques specifically designed for correcting baseline drift in biosensors, a critical challenge that impacts data accuracy and reliability. Tailored for researchers, scientists, and drug development professionals, it covers the foundational causes of drift, explores a range of algorithmic and digital correction methodologies, and offers practical troubleshooting guidance. It further delivers a comparative analysis of classical and modern techniques, including the role of artificial intelligence (AI), and validates performance through real-world case studies and metrics. The goal is to equip professionals with the knowledge to select, implement, and optimize drift correction strategies, thereby enhancing the quality of biosensor data in biomedical research and clinical applications.

Understanding Biosensor Baseline Drift: Causes, Impacts, and Fundamental Concepts

Defining Baseline Drift and Its Critical Impact on Quantitative Biosensing

What is Baseline Drift?

In quantitative biosensing, baseline drift refers to the slow, unwanted low-frequency change in the biosensor's output signal when no analyte is present or during a constant measurement condition. It is a deviation from the stable, expected baseline and appears as a gradual upward or downward trend in the sensorgram or measurement data [1].

This phenomenon is fundamentally different from abrupt signal changes such as spikes or jumps. Drift often indicates that the sensor system is not fully equilibrated, although environmental and aging effects also contribute. Common causes include [1]:

- System Inequilibration: The sensor surface is not fully adjusted to the running buffer, often seen after docking a new sensor chip or immobilizing a new ligand.

- Environmental Factors: During long-term operation, changes in light source, temperature, or humidity can cause the baseline to wander [2].

- Sensor Aging: The biological recognition element (e.g., an enzyme) can degrade over time, leading to a loss of sensitivity and a drifting signal [3].

- Buffer Changes: Inadequate system priming after a buffer change can cause mixing and a wavy baseline until equilibrium is re-established [1].

Why is Correcting Baseline Drift Critical?

The critical impact of baseline drift lies in its direct threat to the accuracy, reliability, and precision of quantitative biosensor data.

- Reduced Predictive Accuracy: When baseline-drifted spectra are used for quantitative and qualitative analysis, the prediction accuracy of the analytical model is significantly reduced, leading to inaccurate or even erroneous results [2].

- Compromised Data Interpretation: Drift affects the precision of results from pattern recognition algorithms, making it difficult to correctly identify and quantify analytes, especially in complex mixtures [3].

- False Positives/Negatives: Uncorrected drift is one of the underlying factors that can contribute to false diagnostic results in both conventional and AI-powered biosensors [4].

The following table summarizes the key challenges drift introduces.

| Challenge | Impact on Quantitative Biosensing |
|---|---|
| Quantification Errors | Inaccurate calculation of analyte concentration due to an incorrect baseline reference point. |
| Compromised Sensitivity | Reduced ability to detect low concentrations of analyte, as the drift can obscure small signal changes. |
| Impaired Kinetics Analysis | Incorrect determination of binding affinities and reaction rates in real-time monitoring assays. |
| Degraded Model Performance | Introduces noise and error into multivariate calibration models (e.g., PLS, PCA), reducing their robustness [3]. |

Troubleshooting Guide: Identifying and Minimizing Drift

This section addresses frequently asked questions to help you diagnose and prevent common sources of baseline drift.

Q: I've just immobilized a new ligand, and my baseline is drifting. What should I do? A: This is a common sign of a non-optimally equilibrated sensor surface. The surface may be rehydrating, or chemicals from the immobilization procedure may be washing out.

- Solution: Flow running buffer overnight to fully equilibrate the surface before beginning your analyte injections [1].

Q: My baseline is unstable after changing the running buffer. Why? A: The system likely contains a mixture of the old and new buffers, creating a concentration gradient and an unstable signal.

- Solution: Always prime the system after each buffer change and wait for a stable baseline before starting experiments [1].

Q: My biosensor's sensitivity is decreasing over time, causing a downward drift in signal. How can I manage this? A: Ageing of the biological element (e.g., enzyme deactivation) is a key cause of sensitivity loss.

- Solution: Implement mathematical sensitivity correction algorithms. One approach is to regularly analyze reference samples and use a multiplicative drift correction algorithm to compensate for the ageing effect within a measurement sequence [3].
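A minimal Python sketch of the reference-sample idea follows. It assumes the reference sample is measured at known positions in the run order, that drift acts as a per-sensor scale factor, and that this factor changes linearly between consecutive reference measurements. The function name and the linear-interpolation choice are illustrative assumptions, not the exact algorithm of [3].

```python
import numpy as np


def multiplicative_drift_correction(sample_pos, samples, ref_pos, refs):
    """Divide each sample response by a drift factor interpolated from reference runs.

    sample_pos, ref_pos : run-order positions (or times); ref_pos must be increasing
    samples : array (n_samples, n_sensors) of raw responses
    refs    : array (n_refs, n_sensors) of raw responses to the same reference sample
    """
    samples = np.asarray(samples, dtype=float)
    refs = np.asarray(refs, dtype=float)
    # Drift factor = reference response relative to the first reference measurement
    factors = refs / refs[0]
    corrected = np.empty_like(samples)
    for j in range(samples.shape[1]):
        # Assumption: the factor changes linearly between reference measurements
        f = np.interp(sample_pos, ref_pos, factors[:, j])
        corrected[:, j] = samples[:, j] / f
    return corrected
```

For example, if a reference read 10.0 at the start and 9.0 after five samples, a sample measured halfway between would be divided by an interpolated factor of 0.95.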

Q: What general practices can minimize baseline drift? A:

- Use Fresh Buffers: Prepare fresh, filtered (0.22 µm), and degassed buffers daily. Do not top up old buffer solutions [1].

- Add Start-up Cycles: Include at least three start-up cycles in your method that inject buffer instead of analyte. This "primes" the surface and stabilizes it before real data collection [1].

- Incorporate Blank Injections: Space blank (buffer alone) cycles evenly throughout your experiment (e.g., one every five to six analyte cycles). These are essential for robust data correction [1].
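Blank cycles are typically used in what is often called double referencing: first subtract a reference channel, then subtract the response recorded for buffer alone. The sketch below assumes sensorgrams stored as NumPy arrays of shape (cycles, time points). As an added assumption, it interpolates the blank response between neighboring blank cycles so that slow drift across the run is tracked; averaging all blanks is the simpler alternative. The function name is hypothetical.

```python
import numpy as np


def double_reference(active, reference, is_blank):
    """Reference-channel subtraction followed by blank-cycle subtraction.

    active, reference : arrays (n_cycles, n_points) from the active and reference channels
    is_blank          : boolean array (n_cycles,) marking buffer-only cycles
    """
    # Step 1: remove bulk refractive-index and other effects shared by both channels
    ref_sub = np.asarray(active, dtype=float) - np.asarray(reference, dtype=float)
    cycles = np.arange(ref_sub.shape[0])
    blank_idx = np.flatnonzero(is_blank)
    # Step 2 (assumption): at each time point, interpolate the blank response
    # across cycle number so that slow drift between blanks is followed
    blank_curve = np.empty_like(ref_sub)
    for j in range(ref_sub.shape[1]):
        blank_curve[:, j] = np.interp(cycles, blank_idx, ref_sub[blank_idx, j])
    return ref_sub - blank_curve
```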

Advanced Correction: Methodologies and Protocols

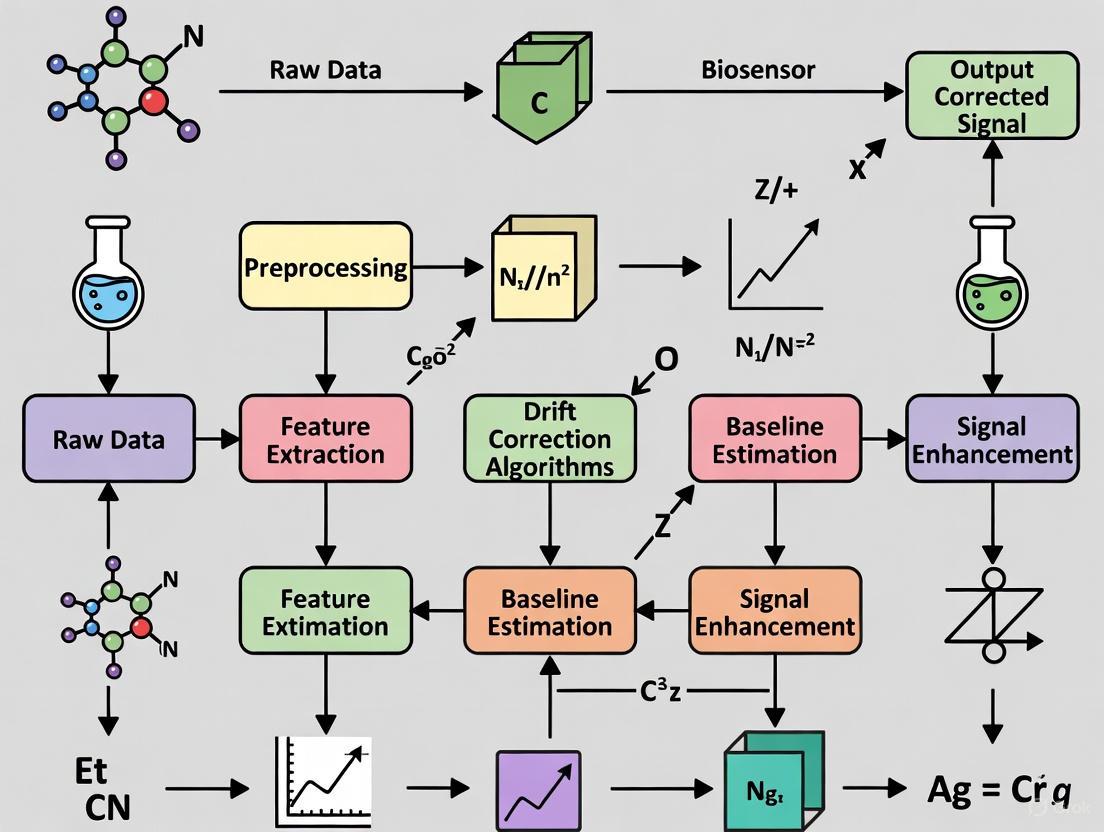

For advanced research and data processing, several algorithmic methods exist to correct for baseline drift post-measurement. The workflow for implementing these corrections generally follows a logical sequence, as outlined below.

Experimental Protocol: Correcting Drift with the erPLS Algorithm

The extended Range Penalized Least Squares (erPLS) method is an advanced, automatic technique for correcting baseline drift in spectroscopic biosensor data [2].

- Principle: The method balances the fidelity (how well the fitted baseline matches the original data in non-peak regions) and smoothness of the fitted baseline. It automatically selects the optimal smoothing parameter (λ), which is often a user-dependent hurdle in other methods [2].

- Procedure:

- Linear Expansion: The ends of the spectrum signal are linearly expanded.

- Gaussian Peak Addition: A Gaussian peak is added to the expanded range to create a known reference signal.

- Parameter Optimization: The asPLS algorithm is run with different λ values. The optimal λ is selected as the one that yields the minimal root-mean-square error (RMSE) in the extended range where the Gaussian peak was added.

- Baseline Estimation: The entire original signal's baseline is estimated using the asPLS method with this optimally selected λ.

- Subtraction: The estimated baseline is subtracted from the original signal to yield a corrected, drift-free spectrum [2].
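The erPLS steps can be sketched in Python as below. This is a simplified illustration under stated assumptions: it substitutes the classic asymmetric least squares (AsLS) smoother of Eilers and Boelens for asPLS, extends only the right-hand end of the signal, and uses a hypothetical Gaussian height (the signal range) and width (one eighth of the extension). Function names are illustrative, not taken from [2].

```python
import numpy as np
from scipy import sparse
from scipy.sparse.linalg import spsolve


def asls_baseline(y, lam, p=0.01, n_iter=10):
    """Asymmetric least squares baseline (Eilers & Boelens), standing in for asPLS."""
    n = y.size
    # Second-difference operator: penalizes roughness of the baseline
    D = sparse.diags([1.0, -2.0, 1.0], [0, -1, -2], shape=(n, n - 2))
    H = lam * D.dot(D.T)
    w = np.ones(n)
    for _ in range(n_iter):
        z = spsolve((sparse.diags(w) + H).tocsc(), w * y)
        # Points above the current baseline (likely peaks) get a small weight
        w = np.where(y > z, p, 1.0 - p)
    return z


def erpls_like_correction(y, lambdas, ext_frac=0.1, p=0.01):
    """Pick lambda by how well the baseline recovers a known, artificially extended end."""
    y = np.asarray(y, dtype=float)
    n = y.size
    m = max(int(ext_frac * n), 10)
    x = np.arange(n)
    # 1) Linear expansion: extrapolate a line fitted to the last m points
    slope, intercept = np.polyfit(x[-m:], y[-m:], 1)
    x_ext = np.arange(n, n + m)
    ext_baseline = slope * x_ext + intercept
    # 2) Gaussian peak addition (height and width are assumptions)
    height = y.max() - y.min()
    sigma = m / 8.0
    gauss = height * np.exp(-0.5 * ((x_ext - x_ext.mean()) / sigma) ** 2)
    y_ext = np.concatenate([y, ext_baseline + gauss])
    # 3) Parameter optimization: minimal RMSE against the known extended baseline
    rmse = [np.sqrt(np.mean((asls_baseline(y_ext, lam, p)[n:] - ext_baseline) ** 2))
            for lam in lambdas]
    lam_opt = lambdas[int(np.argmin(rmse))]
    # 4) Baseline estimation on the original signal; 5) subtraction
    baseline = asls_baseline(y, lam_opt, p)
    return y - baseline, baseline, lam_opt
```

The candidate λ grid is supplied by the caller (for example, powers of ten from 1e2 to 1e7); the extension trick only ranks those candidates, it does not search continuously.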

Experimental Protocol: Multivariate Drift Correction for Sensor Arrays

For biosensor arrays or electronic tongues, drift can be corrected using component correction, a multivariate method.

- Principle: This method assumes that sensor drift has a preferred direction in multivariate space. The correction is done by subtracting the drift direction component, identified from the responses of reference samples, from the entire dataset [3].

- Procedure:

- Reference Measurement: Regularly measure a stable reference sample throughout the analysis sequence.

- Model Drift: Use a multivariate method like Principal Component Analysis (PCA) or Partial Least Squares (PLS) to model the direction of the drift in the response data from the reference samples.

- Apply Correction: Subtract this modeled "drift component" from the analyte sample responses [3].
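A minimal component-correction sketch follows, assuming response matrices with one row per measurement and one column per sensor, and assuming (as the principle above states) that the leading principal component of the reference-sample responses captures the drift direction. PCA is done here with NumPy's SVD; PLS-based variants are not shown, and the function name is hypothetical.

```python
import numpy as np


def component_correction(samples, references, n_components=1):
    """Remove the dominant drift direction estimated from reference-sample responses."""
    samples = np.asarray(samples, dtype=float)
    references = np.asarray(references, dtype=float)
    ref_mean = references.mean(axis=0)
    # PCA of the reference responses via SVD.
    # Assumption: the leading loading vector(s) span the drift direction
    _, _, vt = np.linalg.svd(references - ref_mean, full_matrices=False)
    P = vt[:n_components].T  # (n_sensors, n_components), orthonormal columns
    # Subtract each sample's projection onto the drift subspace
    return samples - (samples - ref_mean) @ P @ P.T
```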

The Scientist's Toolkit: Key Reagent Solutions

The following table details key materials and their functions in managing and studying baseline drift.

| Research Reagent / Material | Function in Drift Investigation & Correction |
|---|---|
| Stable Reference Samples | Used for periodic calibration and to model drift direction in multivariate correction methods [3]. |
| Fresh, Degassed Buffers | Prevents bubble formation and chemical instability, which are common physical causes of baseline drift [1]. |
| Antifoaming Agents (Detergents) | Added to running buffer after degassing to prevent foam, which can cause spikes and baseline instability [1]. |
| Tyrosinase Enzyme with Stabilizing Polymers (e.g., Eastman AQ55D) | Used to create more stable enzymatic biosensors; studying its immobilization helps understand and reduce biological drift [3]. |
| Polynomial and Penalized Least Squares Algorithms (e.g., arPLS, asPLS) | Mathematical tools implemented in software (e.g., MATLAB, R) for automatic baseline estimation and subtraction from spectral data [2]. |

Quantitative Impact: Data at a Glance

The table below synthesizes data from various studies to illustrate the quantitative impact of baseline drift and the efficacy of correction methods.

| Study Focus / Method | Key Quantitative Finding / Performance Metric |
|---|---|
| General Impact of Drift | Using baseline-drifted spectra for analysis reduces the prediction accuracy of quantitative models [2]. |
| erPLS Correction Method | An automatic algorithm capable of handling diverse baseline drift types without user-tuned parameters, improving model accuracy [2]. |
| Multivariate Drift Correction | Applying multiplicative drift correction to a tyrosinase-based biosensor enabled accurate quantification of components in binary mixtures despite sensor ageing [3]. |
| AI-Enhanced Biosensors | AI biosensors can provide high prediction performance (r > 0.8) but are still susceptible to inaccuracies from underlying drift and noise [5]. |

Frequently Asked Questions (FAQs)

Q1: How do temperature fluctuations specifically lead to biosensor signal drift? Temperature fluctuations induce drift by directly altering the kinetics of biological interactions and the physical properties of the sensor materials. For evanescent-field silicon photonic (SiP) biosensors, temperature changes cause a shift in the refractive index of the analyte solution and the sensor waveguide itself, leading to a measurable shift in the resonance wavelength (Δλres) that is indistinguishable from a true binding signal [6]. In electrochemical biosensors, temperature affects enzyme activity and electron transfer rates, creating signal instabilities that complicate calibration [7].

Q2: What are the primary mechanisms of sensor aging that contribute to baseline drift over time? Sensor aging is primarily driven by the gradual degradation of the sensor's functional layers. Key mechanisms include:

- Bioreceptor Deactivation: Over time, immobilized antibodies, enzymes, or aptamers can lose their activity due to denaturation or chemical decomposition, reducing the sensor's response [6].

- Surface Fouling: The non-specific accumulation of biomolecules (biofouling) from complex samples like blood serum can block binding sites and alter the sensor surface properties, leading to a continuous drift in the baseline signal [8] [6].

- Material Instability: Degradation of sensitive nanomaterials (e.g., oxidation of conductive polymers or leaching of metal nanoparticles) used to enhance signal transduction can permanently alter the sensor's performance characteristics [7].

Q3: Which surface reactions beyond target binding can cause unwanted signal drift? Several non-specific surface reactions can cause drift:

- Non-specific Adsorption (NSA): Proteins, lipids, or other molecules in a sample can physisorb to the sensor surface, changing its mass and optical properties [8] [6].

- Off-target Binding: Molecules structurally similar to the target analyte may bind weakly to the bioreceptor or other surface sites [6].

- Chemical Alteration of the Surface: The functionalization chemistry (e.g., a self-assembled monolayer on a gold electrode) can degrade or react with components in the sample buffer, leading to signal instability [8].

Q4: What signal processing techniques can correct for drift caused by these factors? Machine learning (ML) techniques have proven highly effective for drift correction. A comprehensive study evaluating 26 regression models found that decision tree regressors, Gaussian Process Regression (GPR), and artificial neural networks (ANNs) can achieve near-perfect signal prediction (R² = 1.00, RMSE ≈ 0.1465) [7]; Table 2 (below) summarizes the top-performing models. Furthermore, a co-simulation framework that couples COMSOL Multiphysics for physics-based modeling with CODIS+ for real-time signal processing using a 1D Convolutional Neural Network (CNN) has been shown to reduce noise and signal errors (RMSE reduced from 7.8 to 2.1) [9].

Q5: How can I experimentally validate that observed drift is due to temperature and not other factors? A standard protocol involves performing a controlled temperature sweep experiment:

- Setup: Place the biosensor in a temperature-controlled chamber (e.g., a Peltier device) with a stable buffer solution and no analyte present.

- Measurement: Record the baseline signal while systematically varying the temperature (e.g., from 20°C to 40°C in 1°C increments).

- Analysis: Plot the signal output against temperature to establish a drift coefficient (e.g., signal change per °C). This calibration curve can later be used for software-based compensation [6] [7].

Troubleshooting Guides

Issue: Temperature-Induced Drift in Optical Biosensors

Symptoms: A steady, cyclical, or unpredictable shift in the baseline resonance wavelength or output signal that correlates with ambient temperature changes.

Step-by-Step Resolution:

- Confirm the Source: Monitor the sensor signal in a pure buffer solution while logging the room temperature. A direct correlation confirms thermal drift.

- Implement Physical Control:

- Use an incubator or a miniaturized Peltier device to maintain a constant temperature around the sensor and fluidic components.

- Use tubing with low thermal conductivity and pre-warm/cool all reagents to the assay temperature.

- Implement Signal Processing Correction:

- Reference Sensor: Use an on-chip reference sensor that is functionalized with a non-responsive molecule but is exposed to the same thermal environment. Subtract its signal from the active sensor's signal [6].

- ML Correction: Train a machine learning model (e.g., GPR or XGBoost) on data where temperature and sensor output are recorded. The model can then predict and subtract the thermal component of the signal in real-time [9] [7].
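As a hedged illustration of the ML route, the following implements a minimal Gaussian process regressor in plain NumPy. It learns baseline signal as a function of temperature from analyte-free recordings, then lets you subtract the predicted thermal component (relative to an assumed reference temperature) from later measurements. The kernel hyperparameters are fixed by hand rather than optimized, and the class name is hypothetical; a production model would tune them, for example by maximizing the marginal likelihood.

```python
import numpy as np


def rbf_kernel(a, b, length_scale, variance):
    # Squared-exponential covariance between two 1-D input arrays
    d = a[:, None] - b[None, :]
    return variance * np.exp(-0.5 * (d / length_scale) ** 2)


class ThermalGP:
    """Minimal GP regression of analyte-free baseline signal on temperature."""

    def __init__(self, length_scale=5.0, variance=10.0, noise=1e-3):
        # Assumption: hyperparameters are fixed by hand here, not optimized
        self.ls, self.var, self.noise = length_scale, variance, noise

    def fit(self, temps, baseline):
        self.x = np.asarray(temps, dtype=float)
        y = np.asarray(baseline, dtype=float)
        self.mean = y.mean()
        K = rbf_kernel(self.x, self.x, self.ls, self.var) + self.noise * np.eye(self.x.size)
        L = np.linalg.cholesky(K)
        # alpha = K^-1 (y - mean), via two solves with the Cholesky factor
        self.alpha = np.linalg.solve(L.T, np.linalg.solve(L, y - self.mean))
        return self

    def predict(self, temps):
        ks = rbf_kernel(np.asarray(temps, dtype=float), self.x, self.ls, self.var)
        return self.mean + ks @ self.alpha
```

In use, fit on blank data with `gp = ThermalGP().fit(T_blank, baseline_blank)`, then correct a run with `raw - (gp.predict(T_run) - gp.predict([T_ref]))`, where `T_ref` is whatever temperature you choose as the reference.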

Issue: Signal Degradation and Drift Due to Sensor Aging

Symptoms: A consistent downward trend in the maximum signal output upon exposure to a known analyte concentration over days or weeks; increased signal noise; longer time to reach signal stability.

Step-by-Step Resolution:

- Characterize Aging Rate: Periodically calibrate the sensor with a standard analyte solution to track the decay of its sensitivity and baseline over time.

- Optimize Storage Conditions:

- Store sensors in a stable, dry environment, often in a protective buffer (e.g., with sucrose or BSA) to prevent dehydration and preserve bioreceptor activity.

- Improve Surface Stability:

- For electrochemical sensors, use covalent immobilization strategies (e.g., via EDC-NHS chemistry) instead of physical adsorption to secure bioreceptors to the surface [8] [7].

- For optical sensors, employ antifouling surface chemistries like self-assembled monolayers (SAMs) with longer alkyl chains or polyethylene glycol (PEG) to minimize non-specific adsorption and biofouling [8] [6].

Issue: Drift from Non-Specific Surface Reactions and Fouling

Symptoms: A gradual signal increase in control channels or when exposed to complex sample matrices (e.g., serum, blood); poor washout; inconsistent calibration curves.

Step-by-Step Resolution:

- Include Robust Controls: Always run a parallel control with a sensor that lacks the specific bioreceptor or is blocked with an inert protein.

- Enhance Surface Passivation:

- After immobilizing the bioreceptor, incubate the sensor with a blocking agent like BSA, casein, or specialized commercial blockers to cover any remaining reactive sites.

- Optimize Microfluidic Design and Operation:

- Implement effective bubble mitigation strategies, as bubbles can damage functionalization and cause massive signal instability. This includes microfluidic device degassing, plasma treatment, and the use of surfactant solutions [6].

- Ensure consistent and stable flow rates to prevent fluctuations in mass transport to the sensor surface [6].

- Utilize Advanced Functionalization: Consider using polydopamine coatings or protein A layers to improve the orientation and stability of immobilized antibodies, which can reduce heterogeneity and non-specific interactions [6].

Table 1: Impact of Common Factors on Biosensor Signal and Variability

| Factor | Impact on Signal & Variability | Mitigation Strategy |
|---|---|---|
| Temperature Fluctuations | Alters reaction kinetics & transducer physics; major source of baseline drift [6] [7] | Use on-chip reference sensors; implement ML-based thermal compensation [9] [7] |
| Bioreceptor Immobilization | Inconsistent density/orientation causes inter-assay variability [6] | Use covalent chemistry (e.g., EDC-NHS); optimize via polydopamine or protein A [8] [6] |
| Non-Specific Binding | Gradual signal drift in complex samples; increases noise [8] [6] | Apply blocking agents (BSA, casein); use antifouling SAMs/PEG coatings [8] |
| Microfluidic Bubbles | Sudden signal artifacts and functionalization damage [6] | Degas devices & reagents; use plasma treatment & surfactants [6] |

Table 2: Performance of Machine Learning Models for Signal Prediction and Drift Correction [7]

| Model Family | Example Algorithm | RMSE | R² | Key Advantage for Drift Correction |
|---|---|---|---|---|
| Tree-Based | Decision Tree Regressor | 0.1465 | 1.00 | High accuracy & interpretability |
| Gaussian Process | Gaussian Process Regression (GPR) | 0.1465 | 1.00 | Provides uncertainty estimates |
| Artificial Neural Network | Wide Neural Network | 0.1465 | 1.00 | Models complex non-linearities |
| Stacked Ensemble | GPR + XGBoost + ANN | 0.1430 | 1.00 | Superior stability & generalization |

Experimental Protocols

Protocol 1: Characterizing Temperature Drift Coefficient

Objective: To quantify the baseline signal change of a biosensor per degree Celsius of temperature change.

Materials:

- Biosensor chip integrated with a microfluidic system.

- Temperature-controlled stage or incubator with high accuracy (±0.1°C).

- Data acquisition system for continuous signal monitoring.

- Phosphate Buffered Saline (PBS), pH 7.4.

Methodology:

- Flush the sensor microchannel with PBS at a constant flow rate until a stable baseline is achieved.

- Set the temperature controller to a starting point (e.g., 20°C) and allow the system to equilibrate for 15 minutes.

- Record the baseline signal (e.g., resonance wavelength, current, or impedance) for 5 minutes.

- Increase the temperature by a fixed increment (e.g., 1°C or 2°C).

- Repeat the recording and temperature-increment steps until the desired temperature range (e.g., up to 40°C) is covered.

- Plot the average baseline signal at each temperature versus the temperature. The slope of the linear fit is the temperature drift coefficient.
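The averaging and fitting steps can be sketched as follows, assuming each temperature step yields an array of baseline readings. The helper names are hypothetical, and the linear model is only appropriate if the response is close to linear over the tested range.

```python
import numpy as np


def temperature_drift_coefficient(temps, recordings):
    """Slope of mean baseline signal vs temperature (signal units per degree C).

    temps      : set-point temperatures, one per step
    recordings : list of 1-D arrays, the baseline readings logged at each step
    """
    mean_signal = np.array([np.mean(r) for r in recordings])
    slope, intercept = np.polyfit(np.asarray(temps, dtype=float), mean_signal, 1)
    return slope, intercept


def compensate(signal, temp, coeff, t_ref):
    # Remove the linear thermal contribution relative to a reference temperature
    return signal - coeff * (temp - t_ref)
```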

Protocol 2: Evaluating Sensor Aging via Accelerated Aging Study

Objective: To predict the long-term stability of a biosensor by studying its performance under stressed conditions.

Materials:

- Multiple biosensor units from the same production batch.

- High-temperature incubator.

- Calibration standard solution (known concentration of target analyte).

Methodology:

- Calibrate all fresh biosensors (Day 0) with the standard solution to establish initial sensitivity and baseline.

- Store one group of sensors at the recommended storage condition (control). Store other groups at elevated temperatures (e.g., 37°C, 45°C) in a dry environment or in a destabilizing buffer.

- At regular intervals (e.g., Day 1, 3, 7, 14), remove a sensor from each storage condition and perform the same calibration as on Day 0.

- Plot the normalized sensitivity (Sensitivity_t / Sensitivity_initial, i.e., the sensitivity at time t divided by the initial sensitivity) versus time for each condition.

- Use the Arrhenius equation to model the degradation rate and extrapolate the sensor's shelf-life at standard storage temperatures.
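A sketch of the Arrhenius analysis follows. It assumes, beyond what the protocol states, that sensitivity decays by first-order kinetics, S(t)/S(0) = exp(-kt). Each storage temperature then yields a rate constant k, ln k is fitted linearly against 1/T, and the fit is extrapolated to the storage temperature. Function names and the 90% retention criterion for shelf-life are illustrative.

```python
import numpy as np

R = 8.314  # gas constant, J/(mol*K)


def first_order_rate(days, normalized_sensitivity):
    # Assumption: S(t)/S(0) = exp(-k t), so the slope of ln(S/S0) vs t is -k
    slope, _ = np.polyfit(np.asarray(days, dtype=float), np.log(normalized_sensitivity), 1)
    return -slope


def arrhenius_extrapolate(temps_c, rates, target_c):
    # ln k = ln A - Ea / (R T) is linear in 1/T (T in kelvin)
    inv_t = 1.0 / (np.asarray(temps_c, dtype=float) + 273.15)
    slope, intercept = np.polyfit(inv_t, np.log(rates), 1)
    ea = -slope * R  # activation energy, J/mol
    k_target = np.exp(intercept + slope / (target_c + 273.15))
    return k_target, ea


def shelf_life(k, retained=0.9):
    # Time until sensitivity falls to `retained` of its initial value (first-order decay)
    return -np.log(retained) / k
```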

Research Reagent Solutions

Table 4: Key Reagents for Biosensor Functionalization and Drift Mitigation

| Reagent | Function | Example Application |
|---|---|---|
| EDC / NHS | Crosslinker pair for covalent immobilization of biomolecules to carboxylated surfaces [8] [7]. | Creating stable amide bonds between antibodies and graphene oxide electrodes. |
| Polydopamine | A versatile coating that facilitates a strong, universal adhesion layer for subsequent bioreceptor immobilization [6]. | Functionalizing silicon photonic microring resonators; shown to improve detection signal by 8.2x compared to some flow-based methods [6]. |
| Protein A | Binds the Fc region of antibodies, promoting a uniform, oriented immobilization on sensor surfaces [6]. | Improving antigen-binding efficiency and consistency on gold surfaces or optical sensors. |
| BSA / Casein | Blocking agents used to passivate unoccupied binding sites on the sensor surface after functionalization [8] [6]. | Reducing non-specific binding from serum proteins in immunoassays. |
| Pluronic F-127 | A non-ionic surfactant used in microfluidics to reduce bubble formation and minimize surface fouling [6]. | Adding to running buffers to improve wetting and prevent bubble-related artifacts in microfluidic channels. |

Signal Drift Analysis and Correction Workflow

How Drift Obscures Signals and Compromises Data Integrity in Biomedical Assays

Understanding Drift in Biomedical Data

What is drift and why is it a critical issue in biomedical assays?

In biomedical assays, drift refers to the unwanted change in a sensor's signal or a model's performance over time, which is not due to the target analyte but to external or systemic factors. It is a critical issue because it can obscure true biological signals, leading to inaccurate data, false positives/negatives, and ultimately, compromised diagnostic or research conclusions [10] [11].

It is important to distinguish between two key types of drift:

- Data Drift: This occurs when the statistical properties of the input data change. For example, variations in experimental setups, instrument calibration, or environmental conditions during data collection can cause data drift [12].

- Concept Drift: This is a more fundamental shift in the relationship between the input data (e.g., a biomarker) and the target output (e.g., a disease diagnosis). This can happen due to the emergence of new viral strains or changes in population demographics, making a previously reliable predictive model less accurate [13] [12].
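To make concept-drift monitoring concrete, the sketch below implements DDM (Drift Detection Method), one of the detectors that the materials table in this article names for metabolomic data [12]. DDM tracks a classifier's running error rate p and its standard deviation s = sqrt(p(1 - p)/n), remembers the lowest p + s seen, and signals a warning or a drift when p + s exceeds that minimum by 2 or 3 standard deviations. These thresholds and the 30-sample warm-up are the commonly quoted defaults; the class and method names are illustrative, not a specific library's API.

```python
import math


class DDM:
    """Drift Detection Method: monitors the running error rate of a deployed classifier."""

    def __init__(self, min_samples=30, warn_level=2.0, drift_level=3.0):
        # Assumed defaults: 30-sample warm-up, warning at 2 and drift at 3 std deviations
        self.min_samples = min_samples
        self.warn_level = warn_level
        self.drift_level = drift_level
        self.reset()

    def reset(self):
        self.n = 0
        self.p = 0.0  # running error rate
        self.s = 0.0  # binomial standard deviation of the error rate
        self.p_min = math.inf
        self.s_min = math.inf

    def update(self, error):
        """error: 1 if the prediction was wrong, 0 if right. Returns a status string."""
        self.n += 1
        self.p += (error - self.p) / self.n
        self.s = math.sqrt(self.p * (1.0 - self.p) / self.n)
        if self.n < self.min_samples:
            return "stable"
        # Remember the best (lowest) error level seen so far
        if self.p + self.s < self.p_min + self.s_min:
            self.p_min, self.s_min = self.p, self.s
        if self.p + self.s > self.p_min + self.drift_level * self.s_min:
            self.reset()  # drift confirmed: retrain or adapt the model
            return "drift"
        if self.p + self.s > self.p_min + self.warn_level * self.s_min:
            return "warning"
        return "stable"
```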

What are the common root causes of signal drift in electrochemical biosensors?

Research into Electrochemical Aptamer-Based (EAB) sensors has identified two primary mechanisms that cause signal degradation in complex biological environments like whole blood:

- Fouling by Blood Components: Proteins, cells, and other biomolecules adsorb onto the sensor surface, physically blocking the redox reporter from reaching the electrode and slowing the electron transfer rate. This causes an initial, rapid, exponential signal loss [11].

- Electrochemically Driven Desorption: The repeated electrochemical interrogation of the sensor can cause the breakage of the gold-thiol bonds that anchor the sensing molecule to the electrode. This leads to a slower, linear degradation of the signal over time [11].

Other contributing factors can include enzymatic degradation of biological recognition elements (e.g., DNA or enzymes) and irreversible reactions of the redox reporter molecule itself [11].

How does "model drift" in machine learning affect COVID-19 diagnostic tools?

The dynamic nature of the COVID-19 pandemic, with evolving viral strains and changing demographics, has led to a phenomenon known as model drift in machine learning-based diagnostic tools. A study on models designed to detect COVID-19 from cough audio data demonstrated this clearly.

A baseline model experienced a significant performance drop when applied to data collected after its development period. To mitigate this, researchers successfully applied adaptation techniques:

- Unsupervised Domain Adaptation (UDA), which aligns data distributions from different periods without needing new labels, improved balanced accuracy by up to 24% [13].

- Active Learning (AL), which selectively labels the most informative new data points for model retraining, yielded even greater improvements, increasing balanced accuracy by up to 60% for one dataset [13].

This underscores that without continuous monitoring and adaptation, the accuracy of AI-driven diagnostic models can degrade over time.
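Active learning depends on a query strategy for choosing which new samples to label. The cited study's exact strategy is not described here, so the sketch below shows one common choice as an assumption: least-confidence (uncertainty) sampling for a binary classifier, which requests labels for the samples whose predicted probability is closest to 0.5. The function name is hypothetical.

```python
import numpy as np


def uncertainty_sampling(probabilities, budget):
    """Indices of the `budget` unlabeled samples whose predicted positive-class
    probability is closest to 0.5, i.e. where a binary model is least confident."""
    p = np.asarray(probabilities, dtype=float)
    # Assumption: binary classifier, least-confidence criterion.
    # Smaller distance from 0.5 = more uncertain; stable sort keeps ties in input order
    order = np.argsort(np.abs(p - 0.5), kind="stable")
    return order[:budget]
```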

Troubleshooting Guides & FAQs

FAQ: Addressing Common Assay and Sensor Problems

Q: My ELISA has a weak or no signal. What should I check? A: This is often a reagent or procedural issue. Follow this checklist:

- Temperature: Ensure all reagents are at room temperature before starting the assay [14].

- Reagent Integrity: Confirm reagents are within their expiration date and have been stored correctly [14].

- Protocol Adherence: Verify that all reagents were added in the correct order, with correct dilutions and incubation times [14].

- Washing: Ensure thorough and consistent washing to remove unbound components [14].

- Equipment: Check that the plate reader is set to the correct wavelength [14].

Q: The signal in my microplate-based fluorescence assay is inconsistent across the plate. What could be wrong? A: Inconsistent signals can stem from several factors related to your experimental setup:

- Meniscus Formation: A curved liquid surface can distort readings. Use hydrophobic plates, avoid detergents like Triton X, and ensure consistent sample volumes. Using a path length correction tool, if available, can also help [15].

- Autofluorescence: Media components like phenol red can cause high background. Switch to phenol-red-free media or PBS for measurements [15].

- Reader Settings: Optimize the gain setting to avoid saturation and ensure the focal height is correctly adjusted to the sample layer [15].

- Well Scanning: If your sample is unevenly distributed, use a well-scanning function that takes multiple measurements across the well to get a representative average [15].

Q: My electrochemical biosensor signal is decaying rapidly during a measurement. Is this reversible? A: It depends on the cause. Research suggests that the initial rapid (exponential) signal loss is often due to fouling and can be at least partially reversed. One study showed that washing the sensor with a concentrated urea solution recovered over 80% of the initial signal. However, signal loss from electrochemical desorption or enzymatic degradation is typically irreversible [11].

Systematic Troubleshooting Methodology

When faced with an experimental failure, a structured approach is more efficient than random checks. The following workflow outlines a general troubleshooting methodology that can be adapted for various experimental types, from molecular biology to sensor development [16].

Experimental Insights & Data

The table below summarizes key quantitative findings from recent research on drift in different biomedical contexts.

Table 1: Quantifying Drift and Mitigation Efficacy Across Studies

| Assay/Model Type | Impact of Drift | Mitigation Method | Performance Improvement | Source |
|---|---|---|---|---|
| COVID-19 Cough Audio Model | Performance decline on post-development data | Unsupervised Domain Adaptation (UDA) | Balanced accuracy ↑ up to 24% | [13] |
| COVID-19 Cough Audio Model | Performance decline on post-development data | Active Learning (AL) | Balanced accuracy ↑ up to 60% | [13] |
| Electrochemical Biosensor | Biphasic signal loss in whole blood | Optimizing potential window | Signal loss reduced to ~5% (vs. significant loss) | [11] |
| Metabolomic Predictions | Prediction inaccuracy due to confounding factors | Concept Drift Detection (CDD) | Enhanced prediction accuracy, reduced false negatives | [12] |

Experimental Protocol: Investigating Drift Mechanisms in Electrochemical Biosensors

Objective: To systematically evaluate the mechanisms underlying signal drift of an electrochemical biosensor in a biologically relevant environment (e.g., whole blood) [11].

Materials:

- Electrochemical biosensors (e.g., EAB sensors with a thiol-on-gold monolayer).

- Potentiostat.

- Whole blood sample (undiluted), maintained at 37°C.

- Phosphate Buffered Saline (PBS), for control experiments.

- Concentrated urea solution, for fouling-reversal tests.

Methodology:

- Baseline Measurement: Place the sensor in PBS at 37°C and record the stable square-wave voltammetry (SWV) signal.

- Blood Challenge: Transfer the sensor to undiluted whole blood at 37°C and initiate continuous or frequent SWV interrogation.

- Signal Monitoring: Record the sensor signal over several hours. Note the characteristic biphasic decay: an initial exponential drop followed by a linear decline.

- Mechanism Isolation:

- Fouling Test: After signal decay in blood, wash the sensor with a concentrated urea solution and re-measure the signal in PBS to assess recovery.

- Electrochemical Desorption Test: In a separate experiment in PBS, vary the SWV potential window to avoid reductive (< -0.5 V) and oxidative (> 1.0 V) desorption limits. Monitor the signal stability.

- Data Analysis: Plot signal amplitude over time. Compare degradation rates and signal recovery under different conditions to attribute drift to specific mechanisms.
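The data-analysis step can be sketched by fitting the biphasic decay described above to the recorded signal. The model assumes the sum of an exponential term (the fast phase attributed to fouling) and a linear term (the slow phase attributed to desorption); the parameter names and starting guesses are illustrative assumptions, and SciPy's `curve_fit` performs the bounded nonlinear least-squares fit.

```python
import numpy as np
from scipy.optimize import curve_fit


def biphasic(t, a_exp, tau, b, m):
    # Fast exponential phase (attributed to fouling) + slow linear phase (desorption)
    return a_exp * np.exp(-t / tau) + b - m * t


def fit_biphasic(t, signal):
    """Fit the biphasic model; returns the four parameters as a dict."""
    t = np.asarray(t, dtype=float)
    signal = np.asarray(signal, dtype=float)
    # Rough starting guesses (assumptions): amplitude = overall drop,
    # tau = a tenth of the record length, offset = final value, no linear slope
    p0 = [max(signal[0] - signal[-1], 1e-3), t[-1] / 10.0, signal[-1], 0.0]
    # Bounds keep the amplitude and the time constant positive
    lower = [0.0, 1e-6, -np.inf, -np.inf]
    upper = [np.inf, np.inf, np.inf, np.inf]
    params, _ = curve_fit(biphasic, t, signal, p0=p0, bounds=(lower, upper))
    return dict(zip(["a_exp", "tau", "b", "m"], params))
```

Comparing the fitted `a_exp` and `m` across conditions (for example, before and after a urea wash, or with a narrower potential window) is one way to attribute signal loss to each mechanism.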

Research Reagent Solutions for Drift Studies

Table 2: Essential Materials for Investigating and Correcting Drift

| Item | Function / Application | Specific Example / Note |
|---|---|---|
| Electrochemical Aptamer-Based (EAB) Sensor | A platform for real-time, in vivo molecular monitoring; subject to drift from fouling and desorption. | Used to study mechanisms of drift in biological fluids [11]. |
| Urea Solution | A denaturant used to solubilize proteins; can reverse signal loss caused by biofouling. | Recovered >80% of signal in EAB sensor studies [11]. |
| Concept Drift Detection (CDD) Algorithms | Software methods to detect changes in the underlying data-model relationship in ML. | DDM and EDDM are effective for metabolomic data [12]. |
| Baseline Correction Algorithms (e.g., arPLS, ConvAuto) | Computational methods to remove instrumental baseline drift from spectral/analytical data. | Crucial for accurate quantification in spectroscopy/chromatography [17]. |

The Scientist's Toolkit: Diagrams & Workflows

Signaling Pathway of Sensor Degradation

The following diagram illustrates the two primary competing pathways that lead to signal loss in electrochemical biosensors deployed in biological environments, based on the mechanistic study cited [11].

Machine Learning Model Drift Correction Workflow

For machine learning models used in biomedical diagnostics, maintaining performance requires continuous monitoring and adaptation. This workflow outlines a proactive framework to combat model drift [13].

Frequently Asked Questions

Q1: What are the most common causes of baseline drift in biosensor signals? Baseline drift is a low-frequency trend that causes a signal's baseline to shift over time. Common causes include changes in electrode-skin impedance, physiological processes like respiration or perspiration in biological measurements, and environmental fluctuations in sensing equipment. This drift can distort key signal parameters such as peak height and area [18] [19].

Q2: My peak identification algorithm is detecting too many false positives from noise. How can I improve its accuracy? This is often due to the algorithm's inability to distinguish between true peaks and random noise fluctuations. You can improve accuracy by:

- Adjusting the SmoothWidth parameter in derivative-based methods (like findpeaksx). A larger value neglects small, sharp features, effectively reducing sensitivity to high-frequency noise [20].

- Increasing the SlopeThreshold. This discriminates based on peak width, making the algorithm less likely to flag broad, noise-induced features as peaks [20].

- Applying a pre-smoothing filter (e.g., a Savitzky-Golay filter) to your data before peak detection to suppress high-frequency noise [21].
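The pre-smoothing strategy can be sketched in Python with SciPy. Note that scipy.signal.find_peaks is not findpeaksx: in this sketch a minimum prominence plays a role loosely comparable to an amplitude threshold, and the Savitzky-Golay window length plays the role of a smoothing width. The window length, polynomial order, prominence value, and synthetic data are illustrative assumptions.

```python
import numpy as np
from scipy.signal import find_peaks, savgol_filter


def detect_peaks(y, window=21, polyorder=3, min_prominence=0.2):
    """Savitzky-Golay smoothing followed by prominence-based peak detection."""
    smoothed = savgol_filter(y, window_length=window, polyorder=polyorder)
    peaks, _ = find_peaks(smoothed, prominence=min_prominence)
    return peaks, smoothed


x = np.linspace(0, 100, 2001)
rng = np.random.default_rng(1)
clean = np.exp(-((x - 30) / 2) ** 2) + 0.6 * np.exp(-((x - 70) / 3) ** 2)
noisy = clean + rng.normal(0, 0.05, x.size)

raw_peaks, _ = find_peaks(noisy)      # every local maximum, mostly noise
peaks, _ = detect_peaks(noisy)        # only the two real peaks should survive
print(len(raw_peaks), x[peaks])
```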

Q3: What is the advantage of using a method that performs baseline correction and peak finding jointly? Joint methods, such as the Derivative Passing Accumulation (DPA) method, can provide a more robust and accurate analysis. By solving these two interdependent problems together, these methods prevent error propagation that can occur when the output of a standalone baseline correction step (which might be imperfect) is fed into a separate peak finding algorithm. Testing has shown that joint methods can achieve lower peak area loss rates compared to processing steps performed in isolation [18].

Q4: When should I use an asymmetric least squares (ALS) algorithm for baseline correction? ALS is particularly powerful when your signal has a broad, slowly varying baseline superimposed with sharp peaks, a common characteristic in Raman and X-ray fluorescence (XRF) spectra. Its key feature is asymmetric weighting: points lying above the current baseline estimate (the peaks) receive a much smaller weight than points below it, which allows the fitted baseline to ignore the peaks and follow the true baseline points closely [22].

Troubleshooting Guides

Problem 1: Ineffective Baseline Removal

Symptoms: The corrected signal does not have a flat baseline; significant low-frequency trends remain, or the baseline is over-corrected and distorts the signal peaks.

| Possible Cause | Diagnostic Steps | Solution |
|---|---|---|
| Incorrect method selection | Visually inspect your signal. Is the baseline linear, polynomial, or a complex, slow undulation? | For simple linear drift, use detrend or polynomial fitting. For complex, non-linear drift, use wavelet-based methods or Asymmetric Least Squares (ALS) [23] [22]. |
| Poor parameter tuning | Check the baseline fit generated by your algorithm. Does it follow the baseline valleys or get pulled up into the peaks? | For ALS, increase the lam (smoothing) parameter for a smoother baseline, or decrease the asymmetry parameter p if the baseline is pulled into the peaks. For wavelet methods, adjust the decomposition level or the coefficients being zeroed out [22]. |
| High-frequency noise interference | Apply a low-pass filter to your signal and attempt baseline correction again. If performance improves, noise is the issue. | Smooth the signal before baseline correction or use a baseline method that incorporates smoothing internally, such as the derivative-based methods used in findpeaksx [20]. |
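For the simple-drift case, a minimal Python sketch contrasts scipy.signal.detrend, which removes only a straight line, with a low-order polynomial fitted by numpy.polyfit and numpy.polyval to points assumed to be baseline. The quadratic drift, the single peak, and the assumption that the peak region is known in advance are all illustrative.

```python
import numpy as np
from scipy.signal import detrend


def polynomial_baseline(x, y, degree=2, mask=None):
    """Fit a low-order polynomial baseline, optionally using only baseline points."""
    if mask is None:
        mask = np.ones_like(y, dtype=bool)
    coeffs = np.polyfit(x[mask], y[mask], degree)
    return np.polyval(coeffs, x)


x = np.linspace(0, 10, 500)
drift = 0.5 + 0.08 * x - 0.01 * x**2          # curved drift
peak = np.exp(-((x - 5) / 0.3) ** 2)
y = drift + peak

linear_removed = detrend(y, type="linear")     # leaves the curvature behind
baseline_mask = np.abs(x - 5) > 1.5            # assumption: peak region is known
baseline = polynomial_baseline(x, y, degree=2, mask=baseline_mask)
corrected = y - baseline
```

Excluding the peak region from the fit keeps the polynomial from being pulled upward, and a low degree limits the overfitting risk noted for polynomial methods.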

Problem 2: Poor Peak Detection Accuracy

Symptoms: The algorithm misses valid peaks (low recall) or incorrectly identifies noise as peaks (low precision).

| Possible Cause | Diagnostic Steps | Solution |
|---|---|---|
| Insufficient smoothing | Zoom in on a region of baseline noise. If many small, sharp spikes are visible, the data is too noisy for direct peak detection. | Increase the SmoothWidth parameter in functions like findpeaksx. This smooths the first derivative, reducing false zero-crossings caused by noise [20]. |
| Poorly set amplitude or slope thresholds | Run your peak finder and plot the results. Are missed peaks small and broad? Are false peaks small and sharp? | Increase AmpThreshold to ignore small-amplitude noise. Increase SlopeThreshold to discriminate against broad, low-slope features [20]. |
| Overlapping peaks | Check if the detected peak width is much larger than expected or if the peak shape is asymmetric. | Use algorithms capable of deconvolution or those that fit multiple peak models (e.g., findpeaksfit). Fourier Self-Deconvolution (FSD) can also help resolve overlapping peaks [21]. |

Comparative Performance of Signal Processing Methods

The table below summarizes the performance of various algorithms tested on authentic biological and spectroscopic data, as reported in the literature [18].

| Method | Principle | Best For | Performance Notes |
|---|---|---|---|
| Derivative Passing Accumulation (DPA) | Uses accumulation of first-order derivatives | General-purpose, especially for joint baseline and peak finding | Accurate, flexible; outperforms others on ECG and EEG data. |
| airPLS | Penalized least squares with asymmetry | Spectroscopic data (Raman, IR) | Excellent for spectra; can produce "dental" baselines in mass spectrometry. |
| Wavelet Transform | Multi-scale decomposition by frequency | Signals with well-separated noise/baseline/peak features | Can produce undercut baselines; performance depends on wavelet type and level. |
| Empirical Mode Decomposition (EMD) | Adaptive decomposition into intrinsic mode functions | Non-stationary signals like ECG | Often generates overestimated baselines. |
| Asymmetric Least Squares (ALS) | Iterative fitting with asymmetric penalties | Complex, non-linear baselines in Raman/XRF | Highly effective; baseline adapts well to valleys, neglecting peaks. |

Detailed Experimental Protocols

Protocol 1: Implementing Derivative Passing Accumulation (DPA)

The DPA method is a joint baseline correction and peak extraction algorithm that uses only first-order derivative information [18].

- Input: Acquire the raw signal profile, denoted as vector y.

- Differentiation: Calculate the discrete first-order derivative of y. This is often done via simple differencing: dy = diff(y).

- Separation and Accumulation: Separate the positive and negative parts of the derivative vector. Accumulate these parts to build a new signal descriptor that amplifies peak-related features.

- Thresholding: Apply a threshold to this new descriptor. The threshold value separates regions belonging to the baseline from regions containing signal peaks.

- Output: The procedure outputs a corrected baseline and the locations (intervals) of the identified signal peaks simultaneously.

Protocol 2: Baseline Correction with Asymmetric Least Squares (ALS)

This protocol is effective for Raman and XRF spectra [22].

- Initialization: Start with the original spectrum z and an initial weight vector w = 1.

- Iteration Loop: For a specified number of iterations (e.g., niter = 5):

a. Solve Linear System: Compute the baseline b by solving the weighted penalized least squares system $(W + \lambda D^\top D)\,b = W z$, where D is a second-order difference matrix, W = diag(w) is the diagonal weight matrix, and λ is the smoothness parameter (e.g., lam = 1e6).

b. Update Weights: Compute new weights w from the residuals r = z - b. Points with positive residuals (above the baseline, likely peaks) receive the small asymmetry weight p (e.g., 0.01); points with negative residuals receive 1 - p, which is close to 1.

- Finalization: After the final iteration, subtract the fitted baseline b from the original signal z to obtain the baseline-corrected spectrum.
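The loop above can be sketched in Python with SciPy sparse matrices, following the commonly used Eilers-Boelens formulation of ALS. The function name, default values, and synthetic test signal are illustrative assumptions; the sparse solve keeps each iteration cheap even for long spectra.

```python
import numpy as np
from scipy import sparse
from scipy.sparse.linalg import spsolve


def als_baseline(z, lam=1e6, p=0.01, niter=10):
    """Asymmetric least squares baseline: repeatedly solve (W + lam * D'D) b = W z."""
    n = z.size
    # Second-order difference operator D with shape (n - 2, n)
    D = sparse.diags([1.0, -2.0, 1.0], [0, 1, 2], shape=(n - 2, n))
    penalty = lam * (D.T @ D)
    w = np.ones(n)
    for _ in range(niter):
        W = sparse.diags(w)
        b = spsolve((W + penalty).tocsc(), w * z)
        # Asymmetric reweighting: points above the baseline (likely peaks) get p
        w = np.where(z > b, p, 1.0 - p)
    return b


# Synthetic spectrum: gently curved baseline, two peaks, white noise
n = 1000
i = np.arange(n)
true_baseline = 1.0 + 0.5 * i / n + 0.1 * np.sin(2 * np.pi * i / n)
peaks = np.exp(-((i - 300) / 10.0) ** 2) + 0.7 * np.exp(-((i - 700) / 15.0) ** 2)
z = true_baseline + peaks + np.random.default_rng(2).normal(0, 0.01, n)
b = als_baseline(z, lam=1e7, p=0.01)
corrected = z - b
```

Because points above the baseline are down-weighted, the fitted baseline settles slightly below the middle of the noise band, so a small positive offset in the corrected baseline region is expected.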

The Scientist's Toolkit: Essential Computational Reagents

| Item | Function in Analysis | Example / Note |
|---|---|---|
| Savitzky-Golay Filter | Smoothing and calculating derivatives while preserving peak shape. | Ideal for pre-processing before peak finding; available in most data analysis software [21]. |
| Daubechies Wavelets (db6) | Multi-resolution analysis for denoising and baseline correction. | Used in wavelet transform methods to separate signal components by frequency [22]. |
| Asymmetric Least Squares (ALS) Code | Iterative baseline fitting for complex, non-linear drifts. | Key parameters are smoothness (lam, e.g., 1e5-1e8) and asymmetry (p, e.g., 0.001-0.1) [22]. |
| findpeaksG / findpeaksx Functions | Command-line peak detection with Gaussian fitting or derivative-based search. | Provides precise estimation of peak position, height, and width [20]. |
| Polynomial Fitting Functions | Modeling and removing simple linear or polynomial baseline trends. | Use polyfit and polyval; take care not to overfit with high polynomial degrees [23]. |

Algorithmic Solutions: From Classical Methods to AI-Enhanced Correction Techniques

Frequently Asked Questions (FAQs)

Q1: What are the typical symptoms of an incorrectly chosen baseline correction method? You may observe an underestimated or boosted baseline in peak regions, distorted peak shapes, or the introduction of artificial oscillations near the signal edges. For instance, the airPLS algorithm can tend to produce an underestimated baseline if the signal has additive noise, while a poorly configured wavelet method might not fully capture a complex, non-linear baseline drift [24] [25].

Q2: The airPLS algorithm is not converging. What could be the reason? Slow or non-convergence in airPLS is often due to an improperly set smoothness parameter (λ) or an insufficient number of maximum iterations. If λ is too small, the fitted baseline may be too flexible and fit the peaks. If it is too large, the baseline may be overly rigid. It is recommended to use the default maximum iteration count (e.g., 20) and monitor the termination criterion, which stops the iteration when the difference between successive fits is minimal [26] [27].

Q3: For wavelet-based correction, how do I select the right wavelet and decomposition level?

Selecting an optimal wavelet basis (e.g., sym8) and the number of decomposition layers is critical and depends on your signal. A higher decomposition level is needed for baselines with very low-frequency drift. However, there is no universal rule; it requires experimentation. The key disadvantage of wavelet methods is this difficulty in selecting the right parameters without prior knowledge of the signal, which reduces their adaptability [28] [24].

Q4: My signal has a highly non-stationary and non-linear baseline. Which method is most suitable? The Empirical Mode Decomposition (EMD) method is particularly well-suited for non-linear and non-stationary signals, such as those from biosensors. Its major advantage is that the decomposition is fully data-driven and does not require a predefined basis function, unlike Fourier or wavelet transforms. This makes it adaptive to the complex characteristics of your signal [28] [25].

Q5: How can I automatically determine the best parameters for a baseline correction algorithm like airPLS?

Some advanced methods have been proposed to automate parameter selection. For example, the erPLS method automatically selects the optimal smoothness parameter λ for the asPLS algorithm by linearly expanding the ends of the spectrum, adding a Gaussian peak, and choosing the λ that yields the minimal root-mean-square error (RMSE) in the extended range [24].

Troubleshooting Guides

Issue 1: Overfitting Baseline in airPLS

- Problem: The fitted baseline appears to follow the peaks of the signal rather than the underlying drift.

- Solutions:

- Increase the Smoothness Parameter (λ): A higher λ places more emphasis on the smoothness of the baseline. For airPLS, values can range from 10^3 to 10^9, with 10^7 often used as a starting point [27].

- Check the Weight Vector: The iterative reweighting in airPLS should set weights to zero for data points identified as peaks. Verify that the algorithm is correctly identifying and down-weighting these points by examining the weight vector over iterations [27].

- Use an Improved Variant: Consider using the arPLS or asPLS algorithms, which are designed to be less vulnerable to noise and avoid treating small peaks as part of the baseline, thus reducing the chance of an underestimated baseline [24].

Issue 2: Edge Effects and Signal Distortion in Wavelet and EMD

- Problem: The corrected signal shows significant distortions or artifacts at the beginning and end after applying Wavelet or EMD correction.

- Solutions:

- Signal Extension: Prior to decomposition, extend the signal symmetrically or periodically at both ends. After correction, remove the extended parts. MATLAB's cwt function, for example, has an ExtendSignal option to mitigate this [29].

- For EMD: Investigate IMFs: In EMD, the baseline wander is often contained in the higher-order Intrinsic Mode Functions (IMFs). Instead of simply discarding them, use an adaptive filtering approach (EEMD-AF) to process these IMFs and subtract the estimated baseline, which can reduce distortion [28].

- Avoid Short Epochs: Ensure your signal is long enough to contain multiple cycles of your lowest frequency of interest. Edge effects become more pronounced relative to the total signal length with shorter epochs [29].
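The signal-extension advice can be sketched generically in Python: pad the record with numpy.pad, run any baseline or decomposition routine on the padded copy, then crop back to the original length. Here a moving-average baseline stands in for the wavelet or EMD step, an assumption chosen only because its edge error is easy to see; the helper names and pad length are hypothetical.

```python
import numpy as np


def with_extension(routine, y, pad, mode="symmetric"):
    """Extend y at both ends, apply routine (same-length output), then crop the padding."""
    if pad <= 0:
        return routine(y)
    extended = np.pad(y, pad, mode=mode)   # "symmetric", "reflect", or "wrap" (periodic)
    return routine(extended)[pad:-pad]


def moving_average_baseline(y, width=101):
    """Centered moving average; np.convolve zero-pads, which drags the edges down."""
    return np.convolve(y, np.ones(width) / width, mode="same")


y = 2.0 + 0.001 * np.arange(1000)                  # offset plus slow linear drift
plain = moving_average_baseline(y)                 # first sample off by about 0.98
extended = with_extension(moving_average_baseline, y, pad=100)  # off by about 0.025
print(abs(plain[0] - y[0]), abs(extended[0] - y[0]))
```

The pad should be at least half the routine's effective window (or the longest period of interest) so that the cropped region never sees the artificial ends.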

Issue 3: Mode Mixing and Incomplete Decomposition in EMD

- Problem: In EMD, a single IMF contains oscillations of dramatically different scales, or a similar scale of oscillation is spread across different IMFs.

- Solutions:

- Use Ensemble EMD (EEMD): This improved method adds white noise of finite amplitude to the original signal and performs the decomposition multiple times. The final IMFs are obtained by averaging the respective components from each realization. This helps resolve the mode mixing problem [28].

- Adjust Sifting Parameters: The sifting process in EMD has a stopping criterion (threshold ε, typically between 0.2 and 0.3). Adjusting this threshold or the maximum number of sifting iterations can sometimes lead to more physically meaningful IMFs [28] [30].

Comparison of Classical Baseline Correction Algorithms

Table 1: Key Characteristics, Advantages, and Limitations of Classical Algorithms

| Algorithm | Key Principle | Typical Applications | Key Parameters | Primary Advantages | Main Limitations |
|---|---|---|---|---|---|
| airPLS [26] [24] [27] | Adaptive iteratively reweighted Penalized Least Squares. Iteratively changes weights of SSE. | Raman imaging, various spectra (IR), chromatography. | Smoothness (λ), maximum iteration. | Fast, flexible, requires no peak detection, only one parameter to optimize. | Can underestimate baseline with noise; sensitive to λ choice. |
| Wavelet-Based [28] [24] | Multi-resolution decomposition analysis using wavelet transforms. | GREATEM signals, spectroscopy, ECG denoising. | Wavelet basis (e.g., sym8), decomposition levels. | Good for non-stationary signals, can separate signal and noise in different frequency bands. | Poor adaptability; difficult to choose optimal wavelet and decomposition level. |
| EMD/EEMD [28] [30] [25] | Data-adaptive decomposition of a signal into Intrinsic Mode Functions (IMFs). | ECG BW removal, non-stationary signals (vibration, biomedical). | Number of IMFs (N), sifting stopping criterion (ε). | Fully adaptive, no pre-defined basis, excellent for non-linear and non-stationary signals. | Prone to mode mixing, can be computationally expensive, edge effects. |

Table 2: Algorithm Performance in Different Scenarios (Based on Published Studies)

| Algorithm | Signal-to-Noise Ratio (SNR) / Improvement | Mean-Square Error (MSE) | Qualitative Performance Notes |
|---|---|---|---|
| airPLS | N/A | N/A | Effective for various spectra; can be combined with machine learning (ML-airPLS) for parameter prediction [24]. |
| Wavelet-Based (sym8, 10 layers) | Result indicated higher SNR [28] | Result indicated lower MSE [28] | Practical but has poor adaptability; performance highly depends on parameter choice [28]. |
| EEMD-AF (Improved EEMD) | Higher SNR achieved in GREATEM signals [28] | Lower MSE achieved in GREATEM signals [28] | Outperformed standard EEMD and wavelet-based methods in suppressing baseline wander for specific applications [28]. |
| Median Window (MW) | N/A | N/A | Emerged as the best-performing method in correcting UPLC data of soil, based on prediction accuracy [31]. |
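A median-window baseline can be sketched in Python as a running median followed by a running mean. This is a minimal sketch that assumes two half-width parameters analogous to those of the median window method in the R baseline package (hwm for the median, hws for the smoothing); it is not a port of that implementation, and the values below are illustrative. The median window must be wide enough that peaks occupy well under half of it.

```python
import numpy as np
from scipy.ndimage import median_filter, uniform_filter1d


def median_window_baseline(y, hwm=50, hws=25):
    """Running median (half-width hwm) smoothed by a running mean (half-width hws)."""
    rough = median_filter(y, size=2 * hwm + 1, mode="nearest")       # ignores narrow peaks
    return uniform_filter1d(rough, size=2 * hws + 1, mode="nearest")  # smooths median steps


i = np.arange(2000)
true_baseline = 0.5 + 0.0005 * i
peaks = sum(np.exp(-((i - c) / 4.0) ** 2) for c in (400, 1000, 1600))
y = true_baseline + peaks + np.random.default_rng(4).normal(0, 0.005, i.size)
baseline = median_window_baseline(y)
corrected = y - baseline
```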

Detailed Experimental Protocols

Protocol 1: Implementing airPLS for Raman Spectral Data

This protocol is adapted from the method described by Zhang et al. [27].

- Initialization: Initialize the fidelity weight vector w^0 to 1 for all data points. Set the smoothness parameter λ (a common starting value is 10^7) and the maximum number of iterations (e.g., 20).

- Iterative Fitting:

a. Compute the fitted baseline z_t at iteration t by solving the weighted penalized least squares problem $(W + \lambda D^\top D)\,z_t = W x$, where x is the original signal, W is the diagonal weight matrix, and D is the difference matrix.

b. Form the termination vector d_t, which contains the negative elements of x - z_t.

c. Update the weight vector for the next iteration. Points where the signal x is greater than or equal to the candidate baseline z_t receive zero weight, effectively identifying them as peaks; points below it receive non-zero weights that are adapted from the size of their negative residuals relative to |d_t|.

- Termination Check: The iteration stops when |d_t|, the sum of absolute values in d_t, is less than 0.001 times the sum of absolute values of x, or when the maximum iteration count is reached.

- Baseline Subtraction: The final corrected data x* is obtained by subtracting the fitted baseline z from the original data x.
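The protocol can be sketched in Python with SciPy sparse matrices. This is a minimal sketch, not the authors' released code: the second-order difference penalty, the default parameters, and the exponential weight rule for points below the baseline follow the widely circulated airPLS reference implementation, which is an assumption to check against [27] before relying on the results.

```python
import numpy as np
from scipy import sparse
from scipy.sparse.linalg import spsolve


def whittaker_smooth(x, w, lam, order=2):
    """Solve (W + lam * D'D) z = W x with a sparse difference matrix D of the given order."""
    n = x.size
    D = sparse.eye(n, format="csr")
    for _ in range(order):
        D = D[1:] - D[:-1]                   # build the order-th difference operator
    A = sparse.diags(w) + lam * (D.T @ D)
    return spsolve(A.tocsc(), w * x)


def airpls(x, lam=1e7, order=2, itermax=20):
    """Adaptive iteratively reweighted penalized least squares baseline."""
    w = np.ones(x.size)
    z = x
    for t in range(1, itermax + 1):
        z = whittaker_smooth(x, w, lam, order)
        d = x - z
        neg = d < 0
        dssn = np.abs(d[neg]).sum()          # |d_t|: total size of negative residuals
        if dssn < 0.001 * np.abs(x).sum() or t == itermax:
            break
        w[~neg] = 0.0                        # at or above the baseline: treated as peaks
        w[neg] = np.exp(t * np.abs(d[neg]) / dssn)
        # Keep the end points in the fit (as in the reference implementation)
        w[0] = w[-1] = np.exp(t * d[neg].max() / dssn)
    return z


n = 1000
i = np.arange(n)
true_baseline = 1.0 + 0.5 * i / n + 0.1 * np.sin(2 * np.pi * i / n)
peaks = np.exp(-((i - 300) / 10.0) ** 2) + 0.7 * np.exp(-((i - 700) / 15.0) ** 2)
x = true_baseline + peaks + np.random.default_rng(5).normal(0, 0.01, n)
z = airpls(x, lam=1e6)
corrected = x - z
```

Because points at or above the baseline get zero weight, the fit tends to sit toward the lower edge of the noise, which is the underestimation tendency noted in the FAQ above.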

Protocol 2: EEMD with Adaptive Filtering (EEMD-AF) for Baseline Wander Correction

This protocol is based on the work by Li et al. for processing electromagnetic signals [28].

- Ensemble Decomposition:

a. Produce an ensemble of datasets by adding Gaussian white noise of finite amplitude (σ) to the original signal S(t).

b. Apply the standard EMD method to each noisy realization to obtain a set of IMFs for each run.

c. Obtain the final set of IMFs by averaging the respective components over all realizations: $\mathrm{IMF}_j(t) = \frac{1}{N_E}\sum_{i=1}^{N_E}\mathrm{IMF}_{i,j}(t)$, where N_E is the ensemble number and IMF_{i,j} is the j-th IMF of the i-th realization.

- Adaptive Filtering of IMFs:

a. Identify the higher-index IMF components (e.g., IMF5 and above) that primarily contain the baseline wander.

b. Apply an adaptive low-pass filter to these specific IMFs to obtain a refined estimate of the baseline wander.

- Signal Reconstruction: Subtract the filtered baseline wander estimate obtained in the adaptive filtering step from the original noisy signal to obtain the baseline-corrected signal.

Workflow and Signaling Pathways

Baseline Correction Algorithm Selection Workflow

EMD-based Baseline Wander Correction Pathway

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Computational Tools and Resources for Baseline Correction Research

| Item Name | Function / Purpose | Example / Note |
|---|---|---|
| R Statistical Software | Primary environment for implementing and testing algorithms like airPLS. | The airPLS R package is available on GitHub (zmzhang/airPLS) [26]. The baseline package in R provides implementations of AsLS, fill peak, and Median Window methods [31]. |
| MATLAB | Environment with built-in toolboxes for signal processing, including EMD and wavelet transforms. | The emd function is available in the Signal Processing Toolbox, providing empirical mode decomposition [30]. The cwt function performs continuous wavelet transform [29]. |
| C++/MFC Implementation | A high-performance version of airPLS for applications requiring real-time tuning. | Provides a user interface for easily tuning the lambda parameter via a slider, addressing parameter optimization issues found in the R and Matlab versions [26]. |
| Benchmark Datasets | Publicly available data for validating and comparing algorithm performance. | The MIT-BIH Arrhythmia Database is a common benchmark for ECG signal processing methods, including baseline wander correction [25]. |
| Python with SciPy/NumPy | A flexible platform for implementing custom baseline correction scripts and newer deep learning approaches. | scipy.sparse supports penalized least squares solvers (airPLS, ALS), scipy.interpolate provides spline fitting, and scipy.signal provides filtering and detrending; wavelet transforms are commonly done with the separate PyWavelets package. Custom implementations of airPLS, EMD, and other algorithms are also common. |

Frequently Asked Questions (FAQs)

Q1: What is the core principle behind the Derivative Passing Accumulation (DPA) method? The DPA method is a signal processing algorithm that uses only first-order derivative information to simultaneously perform baseline correction and signal peak extraction. The core principle involves dividing the vector representing the discrete first-order derivative into negative and positive parts, which are then accumulated to build a signal descriptor. This descriptor allows for easy separation of signals from background fluctuations via thresholding, enabling both baseline correction and peak identification in a single procedure [18].

Q2: On which types of biological signals has the DPA method been successfully tested? Testing on authentic data has demonstrated the proficiency of the DPA method across a range of biological and analytical signals, including [18]:

- Mass spectrometry data, where it effectively captured the basic trend of baseline drift.

- Raman spectroscopy curves, where its performance was very close to specialized baseline detection methods.

- Electrocardiogram (ECG) and Electroencephalogram (EEG) data, where it produced stable, waveform-corrected results.

- Audio signals (e.g., from animal monitoring) and infrared spectroscopy data.

Q3: How does the DPA method's performance compare to classical baseline correction algorithms? The DPA method has been compared against several classical algorithms, such as wavelet analysis, Empirical Mode Decomposition (EMD), and the airPLS method. Results indicate that DPA is a powerful and often better choice for practical processing. It reportedly outperforms EMD and wavelet methods on several data types and performs similarly to the specialized airPLS method on Raman spectra, while avoiding the "dental baseline" artifact that airPLS can produce on mass spectrometry data [18].

Q4: What are the main advantages of using a derivative-based approach like DPA? The primary advantages of the DPA method include [18]:

- Simplicity and Efficiency: It relies solely on simple first-order differences, making the algorithm cleaner and more computationally efficient.

- Joint Processing: It performs baseline computation and peak identification simultaneously.

- Automatic Operation: The procedure is fully automatic, requiring no user intervention for peak detection operations.

Troubleshooting Guide: Common DPA Implementation Challenges

Issue 1: Poor Separation of Signal Peaks from Background Noise

- Problem: After applying DPA, the baseline is not adequately corrected, or noise is still misinterpreted as signal peaks.

- Solution: The effectiveness of DPA relies on thresholding the accumulated derivative descriptor. Re-evaluate and adjust the thresholding criteria. Ensure that the first-order derivative is calculated correctly from your discrete signal data. Testing the algorithm on synthesized data with known peak positions and areas can help calibrate the threshold parameters for your specific instrument and signal type [18].

Issue 2: Inaccurate Peak Location or Area Calculation

- Problem: The positions or areas of the extracted signal peaks do not match expected values.

- Solution: This issue can arise from improper handling of the derivative accumulation steps. Verify the algorithm's logic for building the signal descriptor from the positive and negative derivative parts. On artificially synthesized data, the DPA method has been analyzed for peak area loss rate, confirming its accuracy when correctly implemented. Ensure that the signal peaks in your data conform to the model (like Gaussian peaks) that the algorithm is designed to handle [18].

Issue 3: Performance Variation Across Different Data Modalities

- Problem: The DPA method works well on one type of data (e.g., Raman spectra) but underperforms on another (e.g., mass spectrometry).

- Solution: The DPA method is a general-purpose algorithm, and its performance can vary across data types. Consult the comparative testing results [18]: for mass spectrometry data, where DPA performed well in those tests, it may be a suitable choice. For a specific application, however, other methods such as asymmetric least squares (ALS) variants [32] or deep learning models [33] might offer better performance. Always validate the method against a known benchmark for your specific data.

Experimental Protocols & Data Presentation

The DPA method was validated using artificially synthesized data comprising a softly fluctuating baseline, Gaussian signal peaks of different heights/widths, and added white noise. The table below summarizes key performance metrics based on this testing [18].

Table 1: Performance of DPA on Synthesized Data with Known Signals

| Performance Metric | Description | DPA Method Outcome |
|---|---|---|
| Peak Area Loss Rate | Measures the quantitative accuracy of the extracted signals by comparing the calculated peak area after correction with the preset known area. | The method demonstrated accurate calculation of peak area at the preset peak locations, with low loss rates. |
| Peak Identification | Assesses the algorithm's ability to correctly locate the position of the simulated signal peaks. | The DPA method was able to directly and successfully locate the signal peaks. |
| Baseline Removal | Evaluates how effectively the underlying slow baseline drift was removed from the signal. | The algorithm effectively separated and removed the simulated baseline drift. |
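The synthetic-data test can be reproduced in outline with a short Python sketch that builds a softly fluctuating baseline, Gaussian peaks of known height and width, and white noise, then scores a correction by its peak area loss rate. The formula (true area - measured area) / true area, the ±4-width integration window, and all numeric values are assumptions for illustration; [18] may define the metric differently. Any baseline-correction routine can replace the two trivial corrections used below.

```python
import numpy as np


def synthesize(peaks, n=4000, noise=0.01, seed=0):
    """Soft baseline + Gaussian peaks (center, height, width) + white noise on x in [0, 1]."""
    rng = np.random.default_rng(seed)
    x = np.linspace(0.0, 1.0, n)
    baseline = 0.3 + 0.2 * np.sin(2 * np.pi * 0.7 * x)
    clean = np.zeros(n)
    for center, height, width in peaks:
        clean += height * np.exp(-0.5 * ((x - center) / width) ** 2)
    y = baseline + clean + rng.normal(0.0, noise, n)
    true_areas = [h * w * np.sqrt(2 * np.pi) for _, h, w in peaks]
    return x, y, baseline, true_areas


def peak_area_loss_rate(x, corrected, center, width, true_area, k=4.0):
    """(true - measured) / true, integrating the corrected signal over center +/- k*width."""
    dx = x[1] - x[0]
    window = np.abs(x - center) <= k * width
    measured = corrected[window].sum() * dx
    return (true_area - measured) / true_area


peaks = ((0.25, 1.0, 0.004), (0.6, 0.5, 0.008))
x, y, true_baseline, areas = synthesize(peaks)
ideal = y - true_baseline                        # reference: perfect baseline removal
naive = y - np.linspace(y[0], y[-1], y.size)     # deliberately crude endpoint line
for (c, _, w), a in zip(peaks, areas):
    print(c, peak_area_loss_rate(x, ideal, c, w, a), peak_area_loss_rate(x, naive, c, w, a))
```

A negative loss rate means area was gained, which is typical when a baseline is underestimated beneath a peak.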

Protocol: Testing DPA on Your Own Signal Data

This protocol outlines the steps to implement and validate the DPA method for a generic one-dimensional biological profile.

Objective: To apply the Derivative Passing Accumulation (DPA) algorithm for baseline correction and peak extraction on a given signal.

Materials:

- Raw signal data (e.g., from a mass spectrometer, Raman spectroscope, or other biological instrument).

- Computational environment (e.g., MATLAB, Python, or R) for algorithm implementation.

Procedure:

- Data Input: Load the raw, digitized signal profile into your computational environment.

- First-Order Derivative Calculation: Compute the discrete first-order derivative of the signal. This is typically achieved by calculating the simple differences between consecutive data points: derivative[i] = signal[i+1] - signal[i] [18].

- Descriptor Construction: Split the derivative vector into its negative and positive components. Accumulate these parts to build the specific signal descriptor used for separation [18].

- Thresholding: Apply a threshold to the accumulated descriptor to distinguish regions containing true signal peaks from regions of background fluctuation.

- Baseline Correction & Peak Picking: Based on the thresholding result:

- Construct and subtract the estimated baseline from the original signal.

- Simultaneously, identify the intervals in the signal that correspond to genuine peaks.

- Output: The final outputs are the baseline-corrected signal and the coordinates (position, area) of the extracted peaks.

Validation:

- If available, validate the results against a dataset with known baseline and peak information.

- Visually inspect the corrected signal to ensure the baseline has been properly flattened without distorting the true signal peaks.

- Compare the peak areas and positions obtained from DPA with those from other established methods (e.g., airPLS, wavelet analysis) for consistency [18].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for a Strain Measurement System Utilizing Drift Correction

| Item | Function in the Context of Signal Acquisition & Drift Correction |
|---|---|
| Resistive Strain Gauge | The primary sensor that translates mechanical deformation (strain) into a small change in electrical resistance. This is the source of the signal [33]. |
| Wheatstone Bridge Circuit | Converts the minute resistance change from the strain gauge into a measurable voltage signal. This configuration is highly sensitive but susceptible to baseline drift [33]. |
| Signal Conditioning Circuit | Amplifies and filters the weak analog voltage signal from the bridge, preparing it for digitization. Proper design is crucial to minimize introduced noise [33]. |
| High-Precision ADC | The Analog-to-Digital Converter (ADC) transforms the conditioned analog signal into a discrete digital signal for computational processing and algorithm application [33]. |
| Computational Environment | The hardware (e.g., PC, embedded system) and software (e.g., MATLAB, Python) used to implement and run the DPA or other baseline correction algorithms on the digitized signal [18] [33]. |

Signaling Pathways & Workflow Visualizations

Diagram 1: DPA algorithm workflow.

Diagram 2: Baseline correction algorithm comparison.

Digital Calibration and Correction for Sensor Arrays (e.g., GMR Biosensors)

Giant Magnetoresistive (GMR) biosensors are highly sensitive devices capable of detecting proteins and nucleic acids by monitoring minute resistance changes, often as small as a few micro-ohms, when magnetic nanoparticle (MNP)-labeled analytes bind to the sensor surface [34] [35]. These sensors are typically deployed in array formats (e.g., 8x8 grids) for simultaneous monitoring of multiple biomarkers [34]. The core sensing mechanism involves measuring magnetoresistance (MR) changes proportional to the number of surface-bound MNPs, which are then translated into analyte concentration via calibration curves [35].

The fundamental requirement for digital calibration stems from several inherent challenges that affect measurement reproducibility and sensitivity. Process variations during manufacturing cause significant deviations in resistance, MR ratio, and transfer curves across individual sensors within an array [34] [35]. Additionally, GMR sensors exhibit substantial temperature dependence with temperature coefficients ranging from hundreds to thousands of parts per million per degree Celsius (°C) for both resistive and magnetoresistive components [35]. Magnetic field non-uniformity across the sensor array further compounds these issues, as the magnetic moment of superparamagnetic tags and sensor operating points are highly field-dependent [35]. Without sophisticated correction techniques, these factors severely hinder the utility and sensitivity of GMR biosensing systems, making digital calibration not merely beneficial but imperative for reliable operation [35].

Core Calibration and Correction Techniques

Dynamic Operating Point Adjustment

Principle: This technique maximizes sensor sensitivity and reproducibility by dynamically adjusting the magnetic "tickling field" amplitude to target a specific MR value, rather than applying a fixed magnetic field [35].

Methodology:

- Apply several different magnetic tickling fields to the sensor array

- Calculate the MR at each field using the equation $\mathrm{MR} = \frac{CT + 2\,ST}{CT - 2\,ST} - 1$, where CT is the carrier tone amplitude and ST is the side tone amplitude [35].

- Interpolate the measured values to determine the tickling field amplitude that yields the target MR ratio.
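Once carrier and side tone amplitudes are available at each trial field, the operating-point search reduces to a few lines of Python. The field values, tone amplitudes, target MR, and function names below are hypothetical, and the linear interpolation assumes that MR changes monotonically with field over the tested range.

```python
import numpy as np


def mr_from_tones(ct, st):
    """MR = (CT + 2*ST) / (CT - 2*ST) - 1 for carrier (CT) and side tone (ST) amplitudes."""
    ct = np.asarray(ct, dtype=float)
    st = np.asarray(st, dtype=float)
    return (ct + 2 * st) / (ct - 2 * st) - 1


def field_for_target_mr(fields, ct, st, target_mr):
    """Interpolate the tickling-field amplitude expected to give target_mr."""
    mr = mr_from_tones(ct, st)
    order = np.argsort(mr)                   # np.interp needs increasing sample points
    return float(np.interp(target_mr, mr[order], np.asarray(fields, dtype=float)[order]))


# Hypothetical sweep over four tickling-field amplitudes (arbitrary units)
fields = [10.0, 20.0, 30.0, 40.0]
ct = [1.0, 1.0, 1.0, 1.0]
st = [0.001, 0.002, 0.003, 0.004]
best_field = field_for_target_mr(fields, ct, st, target_mr=0.01)
```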

Benefits: This approach desensitizes the system to variability in sensor parameters, power amplifier characteristics, and electromagnet performance due to aging or temperature fluctuations. It ensures optimal operating points despite process variations [35].

MR Calibration for Magnetic Field Non-Uniformity

Principle: Corrects for magnetic field variations across the sensor array and sensor-to-sensor MR variations that would otherwise cause identical MNP counts to produce different signals [35].

Implementation Methods:

Table 1: MR Calibration Methods Comparison

| Method | Procedure | Advantages | Limitations |
|---|---|---|---|
| One-Point Calibration | Apply a tickling field step, calculate the resulting MR change, and compute each sensor's calibration coefficient as the inverse of its MR change relative to the array median [35] | Simple, rapid implementation | Assumes a linear response within the operating range |
| Absolute Amplitude Calibration | Use absolute side tone (ST) amplitudes rather than the response to field changes [35] | Enables verification via magnetic field steps; identifies defective sensors | Assumes identical transfer curves with different operating points |
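A one-point calibration along the lines of Table 1 might look like the sketch below. Two assumptions are made here: the calibration coefficient is taken as the array-median MR change divided by each sensor's own MR change (one reading of "inverse MR change relative to array median"), and sensors responding with less than a hypothetical fraction `min_fraction` of the median are flagged as defective, in the spirit of marking unresponsive sensors during calibration.

```python
from statistics import median


def one_point_calibration(delta_mr, min_fraction=0.2):
    """Return per-sensor calibration coefficients and the set of defective indices.

    delta_mr: MR change of each sensor in response to the same tickling-field step.
    min_fraction: hypothetical threshold; sensors whose MR change is below this
        fraction of the array median are treated as defective.
    """
    ref = median(delta_mr)
    coeffs, defective = [], set()
    for i, d in enumerate(delta_mr):
        if abs(d) < min_fraction * abs(ref):
            defective.add(i)
            coeffs.append(None)  # excluded from further analysis
        else:
            coeffs.append(ref / d)  # rescales this sensor to the array median
    return coeffs, defective


def apply_calibration(signals, coeffs):
    """Multiply each sensor's MNP signal by its coefficient; defective sensors give None."""
    return [s * c if c is not None else None for s, c in zip(signals, coeffs)]
```

If every sensor sees the same MNP count but responds in proportion to its own MR sensitivity, the calibrated signals collapse onto a common value, which is the uniformity the method aims for.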

Effectiveness: MR calibration markedly improves signal uniformity across the array. Combined with the other correction techniques described in this section, it has been shown to improve reproducibility more than threefold and the limit of detection by more than three orders of magnitude [34] [35].

Temperature Correction Algorithm

Principle: Compensates for temperature-induced signals without requiring precise temperature regulation or taking sensors offline, using the sensors themselves to detect relative temperature changes [35].

Technical Implementation: The double modulation scheme separates resistive and magnetoresistive components by modulating them to different frequencies. The output current of a GMR sensor using this scheme is represented by:

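$$I_{\mathrm{GMR}}(t) = \frac{V\cos(2\pi f_c t)}{R_0(1+\alpha\Delta T) + \dfrac{\Delta R_0(1+\beta\Delta T)}{2}\cos(2\pi f_f t)}$$

where:

- $V$ = amplitude of the sensor excitation voltage, applied at carrier frequency $f_c$
- $f_f$ = frequency of the magnetic (tickling) field
- $R_0$ = sensor resistance at the operating point
- $\Delta R_0$ = magnetoresistive component at the operating point
- $\alpha$ = temperature coefficient (TC) of the non-magnetoresistive portion
- $\beta$ = TC of the magnetoresistive portion
- $\Delta T$ = temperature change [35]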

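Because the relationship between α and β does not itself depend on temperature, the temperature change sensed through the resistive term R_0(1+αΔT), which dominates the carrier tone, can be used to predict and remove the temperature-induced part of the magnetoresistive term ΔR_0(1+βΔT), which sets the side tones. Temperature effects can therefore be corrected mathematically in the digital domain.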
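Expanding the current expression above to first order in ΔR_0/R_0 gives a carrier tone CT ≈ V/[R_0(1+αΔT)] and side tones ST ≈ VΔR_0(1+βΔT)/[4R_0²(1+αΔT)²]. The sketch below builds on that approximation, which is a derivation made here rather than the published algorithm, to show why only the ratio k = β/α is needed: the change in CT relative to a reference value CT_ref yields (1+αΔT), and k converts it into (1+βΔT). The function names, the reference-point convention, and the assumption that k has been characterized in advance are all hypothetical.

```python
def tones(v, r0, dr0, alpha, beta, dt):
    """First-order CT and ST amplitudes from the double-modulation current model."""
    a = r0 * (1 + alpha * dt)      # resistive term R_0(1 + alpha*dT)
    b = dr0 * (1 + beta * dt) / 2  # magnetoresistive term dR_0(1 + beta*dT)/2
    ct = v / a
    st = v * b / (2 * a * a)       # each side tone carries half of V*b/a^2
    return ct, st


def temperature_corrected_st(ct, st, ct_ref, k):
    """Refer a side-tone amplitude back to the reference temperature.

    ct_ref: carrier tone recorded at the reference temperature (assumed known).
    k: ratio beta/alpha of the two temperature coefficients (assumed known).
    """
    g_alpha = ct_ref / ct           # estimate of 1 + alpha*dT from the carrier tone
    g_beta = 1 + k * (g_alpha - 1)  # 1 + beta*dT = 1 + (beta/alpha) * (alpha*dT)
    return st * g_alpha ** 2 / g_beta
```

Because the correction is multiplicative, a change in ST caused by MNP binding (modelled as a change in ΔR_0) survives, while the temperature factor is divided out.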

Performance: This background correction technique effectively renders sensors temperature-independent without the need for physical temperature regulation systems [35].

Adaptive Filtering for Noise Reduction

Principle: Applied post-assay to decrease noise and improve signal-to-noise ratio after completing temperature correction and other calibration steps [35].

Workflow Integration: This represents the final signal processing step in the correction pipeline, further refining signal quality after addressing major sources of error and variation [35].

Troubleshooting Guide: Common Experimental Issues and Solutions

Table 2: Troubleshooting Guide for GMR Biosensor Experiments

| Problem | Possible Causes | Diagnostic Steps | Solution |
|---|---|---|---|
| Non-uniform responses across array | Magnetic field non-uniformity, process variations [35] | Apply magnetic field steps and observe response patterns | Implement MR calibration using one-point or absolute amplitude methods [35] |
| Signal drift during experiments | Temperature fluctuations [35] | Monitor carrier tone (CT) and side tone (ST) amplitudes over time | Apply the temperature correction algorithm using sensor-derived temperature data [35] |
| Poor reproducibility between assays | Uncorrected process variations, suboptimal operating points [34] | Compare transfer curves across sensors and experiments | Implement dynamic operating point adjustment and comprehensive calibration [34] |
| Low signal-to-noise ratio | Electronic flicker noise, environmental interference [34] | Analyze the frequency spectrum of output signals | Apply the double modulation scheme and post-assay adaptive filtering [34] [35] |
| False positive/negative results | Defective sensors, insufficient calibration [35] | Perform MR calibration and identify non-responsive sensors | Mark unresponsive sensors as defective during calibration procedures [35] |

Frequently Asked Questions (FAQs)

Q1: Why is digital calibration particularly important for GMR biosensor arrays compared to single sensors? As array size increases, statistical variations in sensor characteristics become more pronounced and significantly interfere with obtaining reproducible results. Digital correction techniques compensate for process variations across sensors, front-end electronics, temperature-induced signals, and magnetic field non-uniformity, which are exacerbated in array configurations [34].

Q2: Can temperature effects be compensated without physical temperature control systems? Yes, through a novel background correction technique that uses the sensors themselves to detect relative temperature changes. The double modulation scheme separates temperature-dependent parameters, enabling mathematical correction without taking sensors offline or requiring precise temperature regulation [35].

Q3: What performance improvements can be expected from implementing these correction techniques? Research demonstrates that comprehensive calibration and correction can improve reproducibility more than threefold and the limit of detection by more than three orders of magnitude. The techniques also effectively render sensors temperature-independent without physical cooling or heating systems [34] [35].

Q4: How is the optimal operating point for GMR sensors determined? Rather than applying a fixed tickling field, the system targets a specific MR value by applying several different magnetic fields, calculating MR at each field, and interpolating to find the field that yields the target MR. This maximizes sensitivity despite process variations [35].

Q5: What is the purpose of the double modulation scheme in GMR sensing? Double modulation shifts the signal from MNPs away from the low-frequency flicker (1/f) noise of both the sensor and the electronics. By modulating the magnetic field at frequency f_f and the sensor voltage at frequency f_c, the output contains a carrier tone at f_c and side tones at f_c ± f_f, effectively separating the desired signal from noise [34].
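To see where the tones land, the sketch below synthesizes the sensor current from the double-modulation expression for I_GMR(t) given earlier (with ΔT = 0) and takes its spectrum with NumPy. The sampling rate, the 1 kHz carrier, the 100 Hz field frequency and the sensor values are arbitrary illustrative choices, not parameters from [34] or [35], and the model contains no noise, so it only shows that the magnetoresistive information appears at f_c ± f_f, well above the low-frequency band where flicker noise dominates. It also checks the MR formula from the operating-point procedure against the known resistance swing.

```python
import numpy as np


def gmr_current(t, v, r0, dr0, f_c, f_f):
    """Sensor current from the double-modulation model at constant temperature (dT = 0)."""
    return v * np.cos(2 * np.pi * f_c * t) / (r0 + dr0 / 2 * np.cos(2 * np.pi * f_f * t))


def tone_amplitudes(fs=20_000, duration=1.0, f_c=1_000, f_f=100,
                    v=1.0, r0=1_000.0, dr0=50.0):
    """Return the carrier tone, both side tones, the amplitude spectrum and its frequencies."""
    n = int(fs * duration)
    t = np.arange(n) / fs
    current = gmr_current(t, v, r0, dr0, f_c, f_f)
    amp = 2 * np.abs(np.fft.rfft(current)) / n  # single-sided amplitude spectrum
    freqs = np.fft.rfftfreq(n, d=1 / fs)
    df = fs / n                                 # frequency resolution in Hz per bin
    ct = amp[int(round(f_c / df))]
    st_lo = amp[int(round((f_c - f_f) / df))]
    st_hi = amp[int(round((f_c + f_f) / df))]
    return ct, st_lo, st_hi, amp, freqs


ct, st_lo, st_hi, amp, freqs = tone_amplitudes()
st = (st_lo + st_hi) / 2
mr_est = (ct + 2 * st) / (ct - 2 * st) - 1  # MR from the tone amplitudes
mr_true = (1_000 + 25) / (1_000 - 25) - 1    # R_max / R_min - 1 for these values
print(f"CT={ct:.3e}  ST={st:.3e}  MR est={mr_est:.5f}  true={mr_true:.5f}")
```

The integer number of carrier and field periods in the 1 s record keeps every tone on an exact FFT bin, which avoids spectral leakage in this idealized example; real recordings would normally need windowing or lock-in style demodulation.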

Experimental Protocols and Workflows


Research Reagent Solutions and Essential Materials

Table 3: Essential Research Materials for GMR Biosensor Experiments

| Material/Reagent | Function/Purpose | Application Notes |
|---|---|---|
| GMR Spin-Valve Sensor Array | Detection platform for magnetic nanoparticles [34] | Typically configured as an 8×8 grid of individually addressable sensors [34] |
| Magnetic Nanoparticles (MNPs) | Magnetic labels for biomolecules [35] | Superparamagnetic nanoparticles (e.g., MACS beads) function as detectable tags [35] |
| Capture Antibodies | Immobilized recognition elements for target analytes [34] | Provide specificity through selective binding to target proteins or nucleic acids [34] |
| Detection Antibodies | Secondary binding elements conjugated to MNPs [34] | Form sandwich complexes with captured analytes for detection [34] |
| Transimpedance Amplifier | Converts sensor current to voltage [35] | Critical first-stage signal conditioning electronics [35] |
| Instrumentation Amplifier | Provides additional gain and carrier suppression [35] | Enhances signal quality and suppresses unwanted carrier components [35] |

In-Situ Calibration Approaches for Large-Scale Sensor Networks

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What is sensor calibration drift and why is it a critical problem for biosensor data in drug development? Sensor calibration drift is the gradual deviation of a sensor's readings from its true, calibrated state over time. It signifies a time-dependent alteration in the functional relationship between a sensor's input and its output signal [36]. In the context of biosensors and drug development, this is critical because uncorrected drift compromises the veracity and reliability of data sets used for scientific inquiry. It can lead to flawed conclusions about a drug's mechanism of action or a patient's physiological response during clinical trials, directly impacting the understanding of treatment efficacy and underlying biological mechanisms [37] [36].

Q2: My large-scale biosensor network is showing inconsistent data. How can I determine if the issue is calibration drift? Inconsistent data across a sensor network can stem from various issues. To diagnose calibration drift specifically, we recommend a multi-step verification process:

- Check Data Consistency: Analyze data from multiple biosensors measuring the same physiological construct (e.g., heart rate). Significant, sustained deviations from the group consensus in one sensor can indicate drift [38].

- Perform a Baseline Check: If possible, expose the biosensor to a known baseline condition. For example, an electrodermal activity (EDA) sensor should show a stable, low reading in a resting state. A deviation from this expected baseline is a strong indicator of zero drift [36].

- Review Historical Performance: Compare the current sensor data against its own historical performance under similar conditions. A gradual, monotonic shift in reported values over weeks or months is characteristic of drift [36].
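The consensus and historical-trend checks above can be combined in a short NumPy sketch. It assumes that all sensors report the same construct on a shared time base, uses the cross-sensor median as the consensus, and treats `threshold`, `window` and `min_fraction` as hypothetical tuning parameters; it is a screening aid, not a validated drift detector.

```python
import numpy as np


def flag_drifting_sensors(readings, threshold, window, min_fraction=0.8):
    """Flag sensors that deviate persistently from the network consensus.

    readings: array of shape (n_sensors, n_samples), same construct, shared time base.
    threshold: deviation from the consensus regarded as significant (same units).
    window: number of most recent samples checked for a sustained deviation.
    min_fraction: fraction of the window that must exceed the threshold.
    Returns (indices of flagged sensors, per-sensor linear trend of the residual).
    """
    readings = np.asarray(readings, dtype=float)
    consensus = np.median(readings, axis=0)          # cross-sensor median per sample
    residual = readings - consensus                  # deviation of each sensor
    exceed = np.abs(residual[:, -window:]) > threshold
    sustained = exceed.mean(axis=1) >= min_fraction  # persistent, not a one-off spike
    t = np.arange(readings.shape[1])
    slopes = np.polyfit(t, residual.T, 1)[0]         # residual drift per sample
    return np.flatnonzero(sustained), slopes


rng = np.random.default_rng(0)
hr = 70 + rng.normal(0, 0.5, size=(5, 200))  # five simulated heart-rate sensors
hr[2] += 0.05 * np.arange(200)               # sensor 2 drifts upward
flagged, slopes = flag_drifting_sensors(hr, threshold=3.0, window=50)
```

The sustained-deviation test mirrors the consensus check, while the fitted slope gives the gradual, monotonic signature described under historical performance; a sensor with a large slope but no sustained deviation yet may be worth watching.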

Q3: Are there remote calibration methods that do not require physically retrieving every biosensor? Yes, recent advances have led to several effective remote or in-situ calibration methods suitable for large-scale networks:

- In-situ Baseline Calibration (b-SBS): This method establishes a universal sensitivity value for a batch of similar sensors, requiring only the remote calibration of the baseline value. It has been shown to significantly improve data quality (e.g., 45.8% increase in median R² for NO2 sensors) without co-location with a reference monitor [39].

- Autoencoder with Virtual Samples: This machine learning approach uses an autoencoder trained on a large set of virtually generated faulty-normal sample pairs. Once trained, the model can perform end-to-end correction of faulty sensor data in-situ, leveraging correlations between different sensor variables in the network [40].

- Exploiting Network-Wide Uniformity: Some methods leverage periods where pollutant concentrations or physiological states are uniform across a network to establish concentration ranges for calibration [39].
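The shared-sensitivity idea behind b-SBS, one sensitivity for the whole batch plus a per-sensor baseline set remotely, can be illustrated with the sketch below. It is not the published b-SBS procedure. Taking the batch median as the universal sensitivity follows the batch-calibration practice described in this section, but the baseline estimate used here (matching each sensor's median raw output during a period assumed to be network-uniform to a reference value assumed to be known for that period) is an assumption made for illustration, and all function names are hypothetical.

```python
from statistics import median


def universal_sensitivity(batch_sensitivities):
    """Single sensitivity shared by a batch of similar sensors (median of lab values)."""
    return median(batch_sensitivities)


def remote_baseline(raw_uniform_period, reference_value, sensitivity):
    """Baseline that maps this sensor's output onto reference_value.

    raw_uniform_period: raw outputs recorded while the measured quantity is
        assumed to be uniform across the network and equal to reference_value.
    """
    return median(raw_uniform_period) - sensitivity * reference_value


def to_concentration(raw, baseline, sensitivity):
    """Linear model: raw = baseline + sensitivity * concentration."""
    return [(r - baseline) / sensitivity for r in raw]
```

Only the baseline has to be re-estimated in the field, which is what makes this kind of scheme attractive when sensors cannot be co-located with a reference instrument.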

Q4: What are the best practices for maintaining calibration in a large-scale deployment? Maintaining calibration at scale requires a proactive, layered strategy:

- Establish a Recalibration Schedule: Define a routine based on manufacturer recommendations and observed performance. For some electrochemical sensors, semi-annual recalibration may be sufficient, since their baseline drift has been observed to stay within ±5 ppb over 6 months [39].

- Utilize Batch Calibration: Group sensors with closely matching output behavior and calibrate them together using universal parameters (e.g., median sensitivity values) to reduce effort and increase consistency [39] [38].

- Incorporate Redundancy: Deploy multiple sensors to measure the same key parameter. This allows for statistical cross-verification (e.g., majority voting) to detect and isolate a miscalibrated sensor in real-time [38].

- Automate Where Possible: Use software and machine learning models to automate drift detection and correction, minimizing human error and operational costs [40] [38].

Troubleshooting Common Experimental Issues

Problem: Rapid performance degradation of electrochemical biosensors in a clinical trial.

- Possible Cause: Sensor poisoning or irreversible fouling from exposure to specific biological analytes or contaminants in the sample matrix [36].

- Solution:

  - Investigate the use of sensor-specific protective membranes or filters.

  - Implement a more frequent baseline checking protocol to monitor for sudden sensitivity changes [36].

  - If using a machine learning calibration model, ensure the training data (virtual or real) includes examples of fault conditions relevant to the clinical environment [40].

Problem: High inter-sensor variability in a distributed network measuring heart rate variability (HRV).

- Possible Cause: Sensitivity drift, where the response slope of individual sensors has changed at different rates [36].

- Solution:

  - Apply a batch calibration approach. Determine the median sensitivity coefficient from a representative sample of the sensors and apply it universally across the network [39].

  - Perform a multi-point calibration on a subset of sensors to fully characterize and correct for non-linear drift patterns [41].
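A multi-point calibration of the kind suggested above can be sketched as a low-order polynomial fit from sensor output to reference values, which is then applied to subsequent readings. The quadratic degree, the choice to regress the reference on the sensor output (an inverse calibration curve), and the function names are assumptions for illustration; the fitted curve is only trustworthy within the range spanned by the calibration points.

```python
import numpy as np
from numpy.polynomial import Polynomial


def fit_multipoint(sensor_output, reference, degree=2):
    """Fit reference = p(sensor_output) from a multi-point calibration run.

    degree=2 is an illustrative choice; use the lowest degree that
    captures the observed non-linearity.
    """
    return Polynomial.fit(sensor_output, reference, degree)


def correct_readings(raw, calibration):
    """Map raw sensor outputs onto the reference scale."""
    return calibration(np.asarray(raw, dtype=float))
```

Keeping the degree low limits overfitting when only a handful of calibration points are available per sensor.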

Problem: Inability to perform frequent physical recalibration of biosensors in a naturalistic study.

- Possible Cause: The logistical burden and cost of retrieving and redeploying sensors are too high.

- Solution: Implement a purely data-driven in-situ calibration method. Train an autoencoder model on the correlations between different physiological signals (e.g., EDA, HR, HRV) from your network. The model can then be used to correct faulty readings remotely, relying only on the data stream [40].

Detailed Methodology: In-situ Baseline Calibration (b-SBS)