Beyond OFAT: How Design of Experiments Optimizes Biosensor Development for Biomedical Research

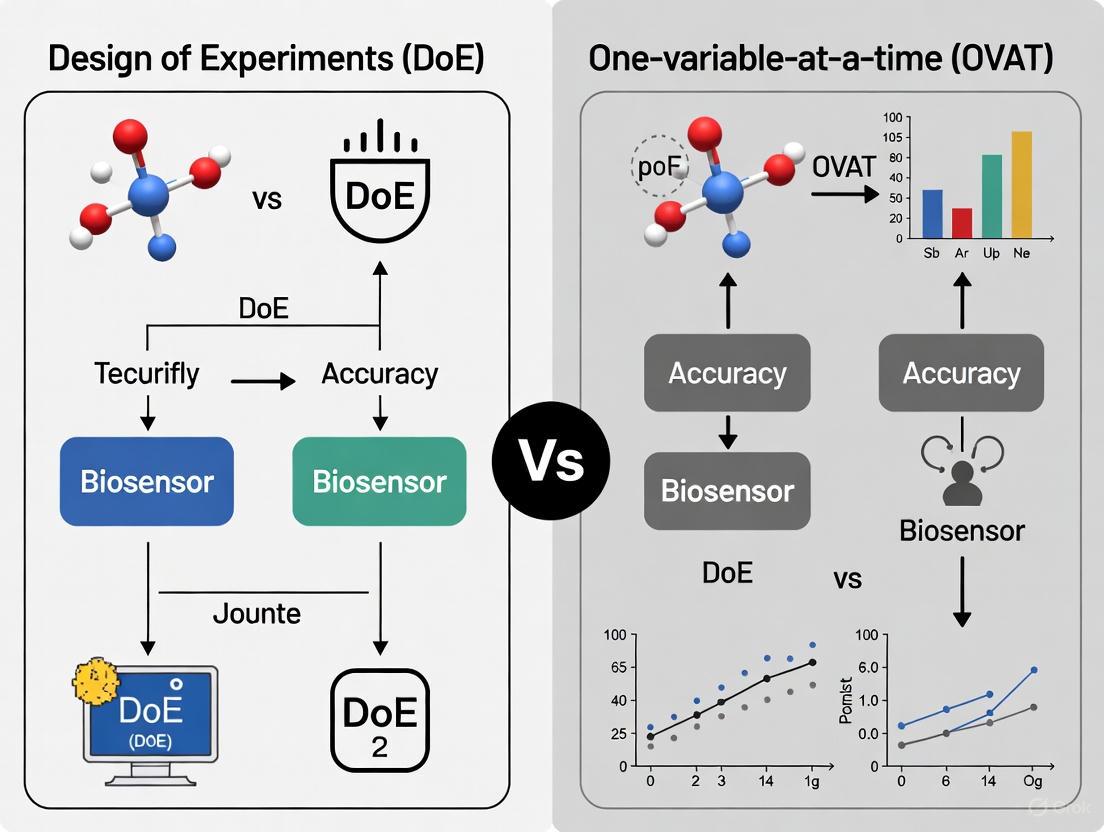



Abstract

This article explores the critical methodological shift from One-Factor-at-a-Time (OFAT) experimentation to Design of Experiments (DoE) in biosensor development. Aimed at researchers and drug development professionals, it analyzes how DoE's multivariate approach efficiently uncovers factor interactions, optimizes complex sensor parameters, and enhances performance metrics like sensitivity and specificity. Drawing on current literature and case studies, the content covers foundational principles, practical applications in electrochemical and optical biosensors, troubleshooting strategies, and a direct comparison of outcomes, offering an actionable framework for developing more reliable and robust sensing platforms for clinical and diagnostic use.

Foundations of Experimental Design: OFAT Limitations and the DoE Paradigm Shift in Biosensing

The development of high-performance biosensors is a complex endeavor, crucial for advancements in personalized healthcare, environmental monitoring, and food safety [1]. The analytical performance of these platforms—their sensitivity, selectivity, and reproducibility—is profoundly affected by the optimization of numerous experimental parameters [2]. Traditionally, this optimization has been dominated by the One-Factor-at-a-Time (OFAT) approach. However, this method is increasingly being supplanted by the statistically rigorous framework of Design of Experiments (DoE) [3] [4]. The choice between these two methodologies is not merely a technical preference but a strategic decision that influences the efficiency, cost, and ultimate success of biosensor development. This guide delineates the core principles of OFAT and DoE, providing researchers and drug development professionals with a clear understanding of their applications, limitations, and strengths within the context of modern biosensor research.

Unpacking the Core Principles

One-Factor-at-a-Time (OFAT): A Sequential Approach

The OFAT approach, also known as One-Variable-at-a-Time (OVAT), is a straightforward, sequential optimization strategy. It involves varying a single experimental factor while keeping all other parameters constant to observe its isolated effect on the response. Once the optimal level for that factor is identified, it is fixed, and the process repeats for the next factor.

- Underlying Logic: The process is based on the assumption that factors are independent and do not interact. The optimal condition for the system is believed to be the simple combination of the individual optimal levels found for each factor.

- Common Contexts: OFAT is widely used in preliminary studies or in systems presumed to be simple. It is often the default method in fields where statistical design is not routinely applied [2] [5].

Design of Experiments (DoE): A Multivariate Paradigm

DoE is a structured, statistical methodology for simultaneously investigating the effects of multiple factors and their interactions on one or more response variables. It is a model-based approach that strategically plans a set of experiments to efficiently explore the entire experimental domain [3].

- Underlying Logic: DoE recognizes that factors in complex systems like biosensors often interact. The effect of one factor (e.g., probe concentration) may depend on the level of another (e.g., ionic strength). DoE aims to build a mathematical model that describes this complex relationship, enabling the identification of a true optimum [2] [5].

- Common Contexts: DoE is the cornerstone of the Quality by Design (QbD) framework promoted by regulatory authorities for pharmaceutical development [4]. It is indispensable for optimizing complex processes with many variables, such as bioprocesses, sensor fabrication, and assay conditions [6] [4].

A Comparative Analysis: OFAT vs. DoE

The fundamental differences between OFAT and DoE lead to significant practical consequences in research outcomes. The table below provides a structured, quantitative comparison.

Table 1: A systematic comparison of OFAT and DoE core characteristics and outcomes.

| Aspect | One-Factor-at-a-Time (OFAT) | Design of Experiments (DoE) |
|---|---|---|
| Experimental Strategy | Sequential, univariate | Simultaneous, multivariate |
| Factor Interactions | Cannot be detected or quantified | Systematically measured and modeled |
| Number of Experiments | Increases linearly with factors; can be very high for complex systems [2] | Increases strategically; highly efficient for many factors [2] |
| Statistical Efficiency | Low; information gained per experiment is limited [7] | High; maximum information for a given number of runs [7] |
| Risk of Sub-Optimality | High; risks missing the true optimum due to ignored interactions [6] [5] | Low; maps the entire response surface to find a robust optimum [6] [5] |
| Foundational Assumption | Factor independence | Factor interdependence (interactions) |
| Model Output | No predictive model | A mathematical model for prediction and optimization |
| Example Efficiency | 486 runs for 6 factors [2] | 30 runs for the same 6 factors (D-optimal design) [2] |

The Critical Concept of Factor Interactions

Factor interactions are a primary reason for DoE's superiority in complex systems. An interaction occurs when the effect of one factor on the response depends on the level of another factor.

For instance, in optimizing a growth medium, the ideal concentration of a carbon source might be different at high and low nitrogen levels. OFAT would completely miss this nuance, while DoE would not only detect it but also quantify it [8]. In biosensor development, interactions are common between parameters like probe concentration, hybridization temperature, and ionic strength [2]. Ignoring them can lead to a sensor with significantly compromised performance.
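To make the definition concrete, the following minimal sketch computes main and interaction effects from a 2x2 factorial experiment. The four response values are invented for illustration (they are not taken from any cited study), and the factor names simply echo the probe concentration and ionic strength example above.

```python
import numpy as np

# Hypothetical responses (arbitrary signal units) from a 2x2 factorial design.
# Rows: probe concentration low/high; columns: ionic strength low/high.
y = np.array([[10.0, 12.0],
              [14.0, 30.0]])

# Effect of raising probe concentration at each ionic strength level.
probe_effect_low_ionic = y[1, 0] - y[0, 0]    # 4.0
probe_effect_high_ionic = y[1, 1] - y[0, 1]   # 18.0

# Main effects: average change in response when a factor goes low -> high.
main_probe = (probe_effect_low_ionic + probe_effect_high_ionic) / 2
main_ionic = ((y[0, 1] - y[0, 0]) + (y[1, 1] - y[1, 0])) / 2

# Interaction effect: half the difference between the two conditional effects.
interaction = (probe_effect_high_ionic - probe_effect_low_ionic) / 2

print(main_probe, main_ionic, interaction)  # 11.0 9.0 7.0
```

In this made-up data set the benefit of a higher probe concentration is more than four times larger at high ionic strength, which is exactly the kind of dependence an OFAT series run at a single ionic strength would never reveal.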

Experimental Protocols and Case Studies in Biosensor Optimization

Case Study: DoE for a Paper-Based Electrochemical miRNA Biosensor

A compelling example of DoE application is the optimization of a hybridization-based paper-based electrochemical biosensor for detecting miRNA-29c, a biomarker for triple-negative breast cancer [2].

- Objective: Optimize six key variables related to sensor manufacture (e.g., gold nanoparticles, DNA probe concentration) and working conditions (e.g., ionic strength, hybridization time) to improve sensitivity and repeatability.

- Challenge: A full OFAT approach for six variables was estimated to require 486 experiments, which is prohibitively time-consuming and resource-intensive [2].

- DoE Solution: A D-optimal design was selected, which is a computer-generated design that maximizes the information obtained from a limited number of experimental runs.

- Protocol Summary:

- Factor Selection: Six critical factors were identified.

- Experimental Design: A D-optimal design was generated, requiring only 30 experiments.

- Execution & Analysis: The 30 experiments were conducted, and the results (e.g., electrochemical signal intensity) were used to build a statistical model.

- Optimization: The model identified the optimal combination of factor levels and revealed significant interactions between them.

- Outcome: The DoE-optimized biosensor achieved a 5-fold improvement in the limit of detection (LOD) compared to a version optimized using an OFAT strategy. This dramatic enhancement was achieved using 94% fewer experiments [2].
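The reported saving can be checked with a line of arithmetic. The short sketch below only restates the two run counts quoted in this case study; nothing else is assumed.

```python
ofat_runs = 486  # estimated OFAT experiments for six variables, as quoted above
doe_runs = 30    # runs in the D-optimal design, as quoted above

reduction = 1 - doe_runs / ofat_runs
print(f"Run reduction: {reduction:.1%}")  # Run reduction: 93.8%
```

The result, about 93.8%, rounds to the 94% figure given in the outcome.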

Essential Research Reagent Solutions for Biosensor Optimization

The following table details key materials and reagents commonly used in biosensor optimization experiments, as exemplified in the cited literature.

Table 2: Key research reagents and their functions in biosensor development and optimization.

| Reagent / Material | Function in Biosensor Optimization | Example Context |
|---|---|---|
| Gold Nanoparticles (AuNPs) | Enhance electron transfer, increase surface area for bioreceptor immobilization. | Electrochemical biosensor base modification [2]. |
| Immobilized DNA Probe | Biorecognition element that hybridizes with the target analyte (e.g., miRNA). | miRNA biosensor; concentration is a critical optimized factor [2]. |
| Specific Antibodies | Biorecognition element for immunoassays; binds to target antigen. | Immunosensor for human epididymis protein 4 (HE4) [2]. |
| Nafion | Cation-exchange polymer membrane; improves selectivity and anti-fouling properties. | Modifying electrode surfaces in electrochemical sensors. |
| Magnetic Beads | Solid support for immobilizing bioreceptors; enable separation and concentration of analyte. | Functionalization of antibodies in a competition assay [2]. |

Implementing DoE: A Practical Workflow for Biosensor Development

Adopting a DoE methodology involves a series of logical steps. The following workflow diagram and elaboration provide a guide for its implementation in biosensor research.

- Define Objective and Responses: Clearly state the goal (e.g., "minimize the limit of detection for glucose") and identify the measurable responses that define performance (e.g., current density, signal-to-noise ratio) [2] [1].

- Identify Potential Factors: Brainstorm all possible factors (e.g., pH, temperature, nanomaterial concentration, probe density, incubation time) that could influence the responses [3].

- Screening Design: When dealing with many factors (e.g., >5), use a screening design like a Plackett-Burman or a fractional factorial design to efficiently identify the "vital few" factors that have the most significant impact on the response. This allows for the elimination of insignificant factors, saving resources for the next step [6] [4].

- Optimization Design: Focus on the critical factors identified in the previous step. Use a design like Central Composite Design (CCD), Box-Behnken Design (BBD), or D-optimal design to model the response surface. These designs are capable of estimating quadratic terms and interactions, enabling the location of a true optimum, whether it is a maximum, minimum, or a plateau [2] [3].

- Model Validation and Verification: The final, crucial step is to run a small number of additional experiments at the predicted optimal conditions to verify that the observed response matches the model's prediction. This confirms the model's robustness and validity [3].

The transition from OFAT to DoE represents a paradigm shift from a linear, assumption-heavy approach to a holistic, knowledge-driven one. While OFAT offers simplicity, its inability to account for factor interactions poses a severe risk in the development of complex, high-performance biosensors, often leading to suboptimal performance and a waste of resources [2] [5]. In contrast, DoE provides a systematic, efficient, and statistically sound framework for navigating complex experimental landscapes. It not only finds better optimal conditions but also generates a deeper understanding of the system through the quantification of factor effects and their interactions. As the demand for more sensitive, reliable, and rapidly developed biosensors grows, the adoption of DoE, particularly within the QbD framework, is no longer a luxury but a necessity for researchers and drug development professionals aiming to deliver robust and impactful diagnostic technologies.

In the field of biosensor research, the pursuit of optimal performance—whether in sensitivity, dynamic range, or specificity—often requires careful optimization of multiple experimental parameters. The One-Factor-at-a-Time (OFAT) approach, where variables are altered sequentially while others remain constant, has been a traditional method for this optimization. However, its fundamental inability to capture interactions between factors presents a critical pitfall, often leading researchers to suboptimal outcomes and misleading conclusions. This article details this limitation and contrasts OFAT with the more robust Design of Experiments (DoE) methodology, providing technical guidance and protocols for its implementation within biosensor development.

The Fundamental Flaw: What OFAT Misses

In complex biological systems, such as a functioning biosensor, factors rarely act in isolation. The interaction between two variables occurs when the effect of one factor depends on the level of another. OFAT methodology is inherently incapable of detecting these interactions because it only tests variables individually.

- The Trap of Local Maxima: OFAT involves altering one variable while keeping the others constant, finding its optimal level, and then moving to the next variable. The final combination of variable set points after an OFAT approach is often suboptimal because the outcome is highly dependent on the order in which variables were perturbed. This sequential process can easily trap researchers in a local performance maximum, missing the global optimum that a multivariate approach could find [6].

- Misleading Conclusions: In an OFAT protocol, the impact of a factor is assessed while all other parameters are held constant. This can lead to incorrect conclusions if the optimal level of one factor (e.g., enzyme concentration) shifts when another factor (e.g., buffer pH) is changed. Without testing the full factorial space, these dynamic relationships remain invisible [9].
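The local-maximum trap can be reproduced on a toy problem. The sketch below uses a hypothetical two-factor response function with a strong interaction (a diagonal ridge); the function, the coded levels 0 to 4, and the starting point are all assumptions chosen for illustration, not a model of any real sensor. The exhaustive grid serves only as a reference for where the true optimum lies; an actual DoE study would reach it with a designed subset of runs and a fitted model.

```python
import itertools

def response(x1, x2):
    """Hypothetical sensor response with a strong x1-x2 interaction (diagonal ridge)."""
    return -(x1 - x2) ** 2 - 0.1 * (x1 + x2 - 8) ** 2

levels = range(5)  # coded levels 0..4 for both factors

# OFAT: optimize x1 with x2 held at its starting level, then x2 with x1 fixed.
x2_start = 0
best_x1 = max(levels, key=lambda x1: response(x1, x2_start))
best_x2 = max(levels, key=lambda x2: response(best_x1, x2))
ofat_point = (best_x1, best_x2)

# Reference: evaluate every combination of the same levels.
grid_point = max(itertools.product(levels, levels), key=lambda p: response(*p))

print("OFAT result:", ofat_point, response(*ofat_point))   # (1, 2), -3.5
print("True optimum:", grid_point)                         # (4, 4)
```

Because the best level of x1 depends on where x2 sits, the single OFAT pass settles at (1, 2), far from the ridge top at (4, 4). Starting with x2 instead of x1 would give a different, equally suboptimal answer, which illustrates the order dependence described above.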

Table 1: Comparison of OFAT and DoE Approaches in Biosensor Development

| Feature | One-Factor-at-a-Time (OFAT) | Design of Experiments (DoE) |
|---|---|---|
| Factor Interactions | Cannot be detected, leading to suboptimal conditions | Explicitly measured and modeled |
| Experimental Efficiency | Low; requires many runs to explore few factors | High; screens or optimizes many factors with fewer runs |
| Statistical Power | Low; no estimate of experimental error for the full system | High; includes replication for robust error estimation |
| Nature of Solution | Often finds a local optimum | Aims to find the global optimum |
| Best Use Case | Preliminary, rough tuning of a single, dominant factor | Systematic optimization and robust model building |

Case Studies: The Cost of OFAT in Biosensor Research

Optimizing an Electrochemical Biosensor for Metal Ions

In developing a Pt/PPD/GOx amperometric biosensor for detecting heavy metal ions like Bi³⁺ and Al³⁺, researchers turned to DoE to overcome OFAT limitations. The performance was known to be influenced by multiple parameters: enzyme concentration, electropolymerization cycles, and flow rate [10].

An OFAT approach would have optimized one parameter at a time, for instance, finding the best enzyme concentration while keeping cycles and flow rate constant. However, a Central Composite Design (CCD) within a Response Surface Methodology (RSM) framework revealed how these factors interact. The analysis showed that the sensitivity (S, µA·mM⁻¹) was not a simple sum of individual effects but a product of their complex interactions. This allowed the team to identify a true optimal condition (50 U·mL⁻¹ enzyme, 30 cycles, 0.3 mL·min⁻¹ flow rate) that an OFAT search would likely have missed, ultimately achieving high reproducibility (RSD = 0.72%) [10].

Enhancing an RNA Integrity Biosensor

The optimization of an in vitro RNA biosensor highlights the inefficiency of OFAT. With eight different factors to optimize—including reporter protein concentration, poly-dT oligonucleotide concentration, and DTT concentration—an OFAT screen would have been prohibitively time-consuming and resource-intensive [11].

Instead, researchers employed a Definitive Screening Design (DSD), a three-level screening design that efficiently screens many factors with a minimal number of experimental runs. The DSD could model not only the main effects of each factor but also two-factor interactions. This systematic exploration led to an optimized protocol that resulted in a 4.1-fold increase in dynamic range and reduced the required RNA concentration by one-third. The study concluded that key modifications, such as reducing reporter protein and poly-dT concentrations, would have been difficult to identify without a multivariate approach that captured these interactive effects [11].

A Practical Guide to DoE Methodologies for Biosensors

Transitioning from OFAT to DoE involves understanding a suite of statistical tools. The following workflow and descriptions outline the core methodologies.

Core DoE Designs

Screening Designs: These are used when many factors (e.g., pH, temperature, concentration of multiple reagents, buffer ionic strength) are potentially relevant, and the goal is to identify the few most influential ones.

- Plackett-Burman Designs: A highly efficient two-level screening design that can examine a large number of factors (N) in as few as N+1 experimental runs, where the run count must be a multiple of 4 (for example, 11 factors in 12 runs). It estimates the main effects of factors but cannot reliably separate them from interaction effects [6].

- Definitive Screening Designs (DSD): A more advanced three-level screening design. DSDs can identify main effects and also model two-factor interactions without a dramatic increase in experimental runs, making them powerful for initial biosensor characterization [11].
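As an illustration of how compact a screening design can be, the sketch below builds the classic 12-run Plackett-Burman design for up to 11 factors by cyclically shifting a generator row. It uses plain NumPy rather than a dedicated DoE package, and the mapping of columns to real biosensor factors is left to the reader.

```python
import numpy as np

# Standard generator row for the 12-run Plackett-Burman design (+1 = high, -1 = low).
generator = np.array([1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1])

# Runs 1-11 are cyclic shifts of the generator; run 12 sets every factor low.
rows = [np.roll(generator, shift) for shift in range(11)]
rows.append(-np.ones(11, dtype=int))
design = np.array(rows)

print(design.shape)  # (12, 11)

# Every column is balanced and orthogonal to every other column,
# so the 11 main effects can be estimated independently of each other.
assert (design.sum(axis=0) == 0).all()
assert (design.T @ design == 12 * np.eye(11)).all()
```

With fewer than 11 real factors, the unused columns are simply left unassigned and can serve as a rough check on noise.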

Optimization Designs: Once the critical factors are identified, these designs map the response surface to find the optimum.

- Central Composite Design (CCD): A widely used response surface methodology design. It builds upon a two-level factorial design by adding axial (star) points and center points, allowing for the estimation of curvature and quadratic effects. This enables the model to find a maximum or minimum within the experimental region, which is crucial for finding a biosensor's peak performance [10] [9].

- Box-Behnken Design (BBD): Another efficient RSM design. Unlike CCD, Box-Behnken designs do not include points at the extremes (corners) of the factor space, which can be advantageous when testing at these extreme combinations is impractical or impossible [9] [6].
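The structure of a Central Composite Design is easy to see when the points are generated explicitly. The following sketch is one possible construction in coded units; the helper name `central_composite` and its defaults (a rotatable axial distance and a single center point) are assumptions made for this example, not the API of any particular package.

```python
import itertools
import numpy as np

def central_composite(k, n_center=1, alpha=None):
    """Return a CCD in coded units: 2^k factorial + 2k axial + n_center center runs."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # axial distance that makes the design rotatable
    factorial = np.array(list(itertools.product([-1.0, 1.0], repeat=k)))
    axial = np.vstack([sign * alpha * np.eye(k) for sign in (-1.0, 1.0)])
    center = np.zeros((n_center, k))
    return np.vstack([factorial, axial, center])

design = central_composite(3)
print(design.shape)  # (15, 3): 8 factorial + 6 axial + 1 center run
```

The axial points sit outside the factorial cube (at about ±1.68 for three factors), which is what lets the design estimate curvature. If those settings are not physically reachable, passing `alpha=1.0` gives a face-centered variant, or a Box-Behnken design may be the better fit.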

Table 2: Key DoE Designs for Biosensor Development

| DoE Design | Primary Goal | Key Strength | Typical Use in Biosensor Cycle |
|---|---|---|---|
| Full Factorial | Characterize all main effects and interactions | Provides complete data on all factor interactions | Studying a very small number (2-4) of critically important factors in depth |
| Plackett-Burman | Screen a large number of factors to find critical ones | High efficiency; minimal runs for many factors | Initial factor scoping after initial biosensor design |
| Definitive Screening (DSD) | Screen factors while being able to model interactions | Three-level design that captures curvature and interactions | A more robust alternative to Plackett-Burman for screening |
| Central Composite (CCD) | Model curvature and find an optimum | Excellent for building a strong predictive response model | Final performance optimization of critical parameters |
| Box-Behnken (BBD) | Model curvature and find an optimum | Avoids extreme factor combinations; often requires fewer runs than CCD | Optimization when extreme factor levels are undesirable |

Implementing a DoE strategy requires both physical reagents and software tools.

Table 3: Research Reagent Solutions for Biosensor Optimization

| Reagent / Material | Function in Biosensor Optimization |
|---|---|
| Glucose Oxidase (GOx) | Model enzyme used in electrochemical biosensor development; its inhibition by heavy metals is a common detection mechanism [10]. |
| Polymerization Monomers (e.g., o-Phenylenediamine) | Used to form selective polymer membranes on electrode surfaces via electrosynthesis, entrapping enzymes and controlling sensor selectivity [10]. |
| Streptavidin-Coated Magnetic Beads | Solid-phase support for immobilizing biotinylated capture probes (e.g., poly-dT oligonucleotides) in heterogeneous assay biosensors [11]. |
| Dithiothreitol (DTT) | Reducing agent that maintains a stable chemical environment, crucial for the functionality of protein-based biosensor components [11]. |
| Cap Analogs (e.g., ARCA) | Used in in vitro transcription to produce capped mRNA, a key target analyte for RNA integrity biosensors evaluating vaccine quality [11]. |

Software and Statistical Tools:

- Minitab, Design-Expert, JMP: Commercial software packages that provide comprehensive platforms for generating experimental designs, performing ANOVA, and visualizing response surfaces [10] [9].

- DoE Models for Bioreactors App (IDBS Polar): An example of integrated software that automatically calculates statistics and determines significant process parameters from experimental data, a functionality directly transferable to biosensor data analysis [12].

For researchers and drug development professionals working on the cutting edge of biosensor technology, clinging to the OFAT paradigm is a strategic liability. Its critical pitfall—the blindness to factor interactions—compromises performance, undermines reproducibility, and wastes precious resources. The adoption of DoE is no longer a niche advanced practice but a necessary component of rigorous, efficient, and successful biosensor research and development. By embracing the multivariate frameworks outlined in this guide, scientists can systematically navigate complex design spaces, unlock true optimal performance, and accelerate the development of robust, next-generation biosensors.

The development of high-performance biosensors is a quintessentially multidisciplinary challenge, intersecting fields of advanced materials, bioengineering, and nanotechnology [13]. Traditionally, biosensor optimization has relied heavily on the one-variable-at-a-time (OVAT) approach, where a single parameter is altered while all others are held constant. While straightforward, this method is fundamentally flawed for complex systems as it fails to capture interactions between variables and can lead to misleading optimal conditions [3]. The conditions established through OVAT may not represent the true optimum, ultimately hindering the practical application of biosensors in point-of-care diagnostic settings [3].

Design of Experiments (DoE) emerges as a powerful, systematic alternative. DoE is a model-based chemometric tool that enables the statistically reliable optimization of multiple parameters simultaneously [3]. By employing a structured experimental plan, DoE efficiently maps the relationship between input variables (e.g., material properties, fabrication parameters) and the desired sensor outputs (e.g., sensitivity, limit of detection). This approach not only reduces the total experimental effort required but also provides a global understanding of the system, capturing the critical interactions that OVAT inevitably misses [3]. For biosensors, where performance depends on the intricate interplay between the biochemical interface and the physical transducer, this holistic view is not just beneficial—it is essential.

Core Principles of Design of Experiments (DoE)

The DoE methodology hinges on the construction of a data-driven model from causal data collected across a predefined grid of experiments that cover the entire experimental domain of interest. Unlike OVAT, which provides only localized knowledge, DoE's a priori experimental plan allows for the prediction of responses across the entire domain, offering comprehensive, global knowledge for optimization [3].

The typical DoE workflow involves several key stages, as illustrated in the diagram below.

Key Experimental Designs for Biosensor Optimization

Several DoE frameworks are particularly relevant to biosensor development. The choice of design depends on the objective, whether it is screening for influential factors or modeling curvature in the response surface.

Full Factorial Designs: These are first-order orthogonal designs used to fit first-order approximating models. A 2^k factorial design, where k is the number of factors, investigates all possible combinations of factors at two levels (coded as -1 and +1). For example, a 2^2 design with factors X1 and X2 requires only 4 experiments (-1,-1; +1,-1; -1,+1; +1,+1) to estimate the main effects of each factor and their interaction effect [3]. This makes them highly efficient for screening a moderate number of factors.

Central Composite Designs (CCD): When a response follows a quadratic function, a second-order model is required. Two-level factorial designs cannot account for this curvature. A Central Composite Design augments a factorial design with additional axial points and center points, allowing for the estimation of quadratic terms and thus providing an accurate model for finding a true optimum [3].

Mixture Designs: These are used when the factors are components of a mixture (e.g., the composition of a sensing hydrogel or an electrode ink) and the total must sum to 100%. In such cases, the components cannot be varied independently; changing one proportion necessitates adjusting others. Mixture designs are tailored to this constraint [3].
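One common way to lay out mixture experiments is a simplex-lattice design, in which every component proportion is a multiple of 1/m and each blend sums to 1. The sketch below enumerates such a lattice; the function name `simplex_lattice` and the three-component example are illustrative assumptions, and real formulations often need extra constraints (minimum or maximum proportions) that this sketch ignores.

```python
from fractions import Fraction
from itertools import product

def simplex_lattice(n_components, m):
    """All blends whose proportions are multiples of 1/m and sum to exactly 1."""
    points = []
    for counts in product(range(m + 1), repeat=n_components):
        if sum(counts) == m:
            points.append(tuple(Fraction(c, m) for c in counts))
    return points

# Example: a three-component electrode ink explored at proportions 0, 1/2, and 1.
blends = simplex_lattice(3, 2)
for blend in blends:
    print([str(p) for p in blend])
```

For three components and m = 2 this yields six blends: the three pure components and the three 50:50 binary mixtures. Exact fractions are used so that the sum-to-one constraint holds without rounding error.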

Table 1: Comparison of Common Experimental Designs in Biosensor Development

| Design Type | Primary Use | Key Advantage | Typical Experimental Effort | Model Equation |
|---|---|---|---|---|
| Full Factorial (2^k) | Factor screening | Efficiently estimates main effects and interactions | 2^k runs | Y = β₀ + Σβ_iX_i + Σβ_ijX_iX_j |
| Central Composite (CCD) | Response surface optimization | Models curvature; finds true optimum | 2^k + 2k + center points (about 9-13 runs for 2 factors, 15-20 for 3, 25-31 for 4) | Y = β₀ + Σβ_iX_i + Σβ_ijX_iX_j + Σβ_iiX_i² |
| Mixture Design | Formulation optimization | Handles constrained factors that sum to 1 | Varies by design | Specialized Scheffé polynomials |

Implementing DoE in Biosensor Development: A Practical Guide

Defining the Optimization Objective and Variables

The first step in any DoE is to clearly define the objective. For an ultrasensitive biosensor, the key responses (Y) are often the Limit of Detection (LOD), sensitivity, and signal-to-noise ratio [3]. The factors (X) are the variables that can be controlled during biosensor fabrication and operation. These typically fall into three categories:

- Interface Formulation: This includes parameters like the concentration of the biorecognition element (e.g., antibody, enzyme, aptamer) for immobilization, the ratio of composite materials in a nanostructured ink, or the density of a self-assembled monolayer [3].

- Immobilization Strategy: Factors such as pH, ionic strength, and activation time of the surface can significantly impact the orientation and activity of immobilized biomolecules.

- Detection Conditions: Variables like temperature, pH of the running buffer, and incubation time can be optimized to maximize the assay performance [3].

Detailed Experimental Protocol: A Case Study on an Electrochemical Immunosensor

The following workflow and corresponding diagram outline a generalized protocol for optimizing a biosensor using a Central Composite Design.

Workflow:

- Define Objective: Enhance the sensitivity (Y1) and lower the LOD (Y2) of a label-free electrochemical immunosensor.

- Select Factors & Ranges:

  - X1: Antibody concentration (e.g., 10 - 50 µg/mL).

  - X2: Electrode activation time with EDC/NHS chemistry (e.g., 30 - 90 minutes).

  - X3: Incubation pH (e.g., 6.5 - 8.5).

- Choose Design: A Central Composite Design (CCD) is selected to model potential curvature.

- Execute Plan: Perform the 17-20 experiments dictated by the CCD in randomized order to minimize bias.

- Analyze Data & Build Model: Use statistical software to perform multiple linear regression. The output is a quadratic equation that predicts the responses for any combination of X1, X2, and X3.

- Validate Model: Conduct confirmation experiments at the predicted optimum conditions and compare the measured responses with the model's predictions.
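The analysis step of this workflow can be sketched with ordinary least squares. In the example below, the design is a rotatable three-factor CCD with three center points (17 runs, in coded units), and the "measurements" are generated from a made-up, noise-free quadratic so that the fit can be checked; the coefficient values in `true_beta` are pure placeholders, not results from any immunosensor. Dedicated statistical software would additionally report ANOVA tables and lack-of-fit tests.

```python
import itertools
import numpy as np

def quadratic_terms(X):
    """Model matrix columns: 1, X_i, X_i*X_j (i<j), X_i^2."""
    k = X.shape[1]
    pairs = list(itertools.combinations(range(k), 2))
    cols = [np.ones(len(X))]
    cols += [X[:, i] for i in range(k)]
    cols += [X[:, i] * X[:, j] for i, j in pairs]
    cols += [X[:, i] ** 2 for i in range(k)]
    return np.column_stack(cols)

# Rotatable three-factor CCD in coded units: 8 factorial, 6 axial, 3 center runs.
alpha = 8 ** 0.25
factorial = np.array(list(itertools.product([-1.0, 1.0], repeat=3)))
axial = np.vstack([alpha * np.eye(3), -alpha * np.eye(3)])
X = np.vstack([factorial, axial, np.zeros((3, 3))])

# Placeholder responses from an invented quadratic surface (no noise).
# Order: b0, b1, b2, b3, b12, b13, b23, b11, b22, b33
true_beta = np.array([50.0, 4.0, -2.0, 1.0, 3.0, 0.0, 0.0, -5.0, -4.0, -3.0])
y = quadratic_terms(X) @ true_beta

# Multiple linear regression by least squares.
beta, *_ = np.linalg.lstsq(quadratic_terms(X), y, rcond=None)

# Stationary point of the fitted surface: solve gradient b + 2 B x = 0.
b = beta[1:4]
B = np.diag(beta[7:10])
B[0, 1] = B[1, 0] = beta[4] / 2
B[0, 2] = B[2, 0] = beta[5] / 2
B[1, 2] = B[2, 1] = beta[6] / 2
x_opt = np.linalg.solve(-2 * B, b)

print(np.round(x_opt, 3))  # predicted optimum in coded units
```

The stationary point is a maximum only if `B` is negative definite, which holds for these placeholder coefficients; with real data that should be checked (for example via the eigenvalues of `B`) before running confirmation experiments at the decoded settings.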

The Scientist's Toolkit: Essential Research Reagent Solutions

The successful application of DoE relies on the use of well-characterized materials and reagents. The table below details key components commonly used in biosensor development and their functions within a DoE framework.

Table 2: Key Research Reagent Solutions for Biosensor Development and Optimization

| Reagent / Material | Function in Biosensor Development | Role in DoE Optimization |
|---|---|---|
| Biorecognition Elements (Antibodies, Aptamers, Enzymes) | Provides specificity by binding the target analyte. | A key factor (X) whose concentration and immobilization density are often optimized. |
| Cross-linkers (EDC, NHS, Glutaraldehyde) | Activates surfaces or creates covalent bonds for immobilizing biorecognition elements. | The concentration and reaction time are critical factors (X) to be varied. |
| Nanomaterials (Graphene Oxide, Gold Nanoparticles, CNTs) | Enhances electron transfer, increases surface area, and improves signal amplification. | The composition, concentration, and deposition method are prime candidates for DoE factors. |
| Self-Assembled Monolayer (SAM) Reagents (Alkanethiols) | Creates a well-defined, functionalized interface on gold surfaces for biomolecule attachment. | The chain length and terminal functional group can be optimized as factors. |
| Blocking Agents (BSA, Casein) | Reduces non-specific binding on the sensor surface, lowering background noise. | The type and concentration are often optimized to improve the signal-to-noise ratio (Y). |

Advantages of DoE over OVAT: A Comparative Analysis in Biosensor Context

The systematic nature of DoE provides several decisive advantages over the traditional OVAT approach, which are critical for developing robust and high-performance biosensors.

- Detection of Interactions: This is the most significant advantage. In biosensors, it is common for factors to interact. For example, the optimal antibody concentration (X1) might depend on the electrode activation time (X2). An OVAT approach would miss this interaction, potentially leading to a suboptimal configuration. DoE explicitly models and quantifies these interaction effects (e.g., through the β₁₂X₁X₂ term in the model) [3].

- Efficiency and Reduced Experimental Burden: Exhaustively testing every combination of 5 factors at 3 levels each would require 3^5 = 243 experiments, while a much shorter OVAT series would reveal nothing about how the factors interact. A fractional factorial design could screen the same factors in as few as 16-32 runs. This dramatic reduction in experimental effort saves time, resources, and valuable biological reagents [3].

- Global Optimization and Robustness: DoE models the entire experimental domain, allowing researchers to find a true optimum that may lie in the interior of the domain, not at the edge of the range tested for a single factor. Furthermore, the model can be used to find robust operating conditions where the biosensor's performance is insensitive to small, uncontrollable variations in the manufacturing process [3].

- Data-Driven Insight: The mathematical model generated from a DoE is not just a tool for prediction; it can offer physical insights into the underlying transduction and amplification processes. The significance of a factor or an interaction can reveal non-intuitive relationships about the biosensor's operation [3].
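The 16-run figure mentioned in the efficiency point corresponds to a half-fraction of a two-level, five-factor design. A minimal sketch of one standard construction follows; the choice of generator E = ABCD (which gives a resolution V design) is an assumption made for illustration, and the letters A to E are placeholders for whichever five factors are being screened.

```python
import itertools
import numpy as np

# Full 2^4 factorial in factors A-D (coded -1/+1): 16 runs.
base = np.array(list(itertools.product([-1, 1], repeat=4)))

# Set the fifth factor from the generator E = ABCD.
E = base.prod(axis=1, keepdims=True)
design = np.hstack([base, E])

print(design.shape)  # (16, 5)

# All five main-effect columns remain balanced and mutually orthogonal.
assert (design.sum(axis=0) == 0).all()
assert (design.T @ design == 16 * np.eye(5)).all()
```

With this generator, main effects and two-factor interactions are not confounded with one another, so five factors can be screened in 16 runs instead of the 32 needed for the full two-level factorial.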

Table 3: Quantitative Comparison of DoE vs. OVAT for a Hypothetical 3-Factor Biosensor Optimization

| Criterion | One-Variable-at-a-Time (OVAT) | Design of Experiments (DoE) |
|---|---|---|
| Total Experiments | 15 (3 factors × 5 levels each, serially) | 15 (e.g., via a Central Composite Design) |
| Information Gained | Main effects only; optimal point may be false. | Main effects, all 2-way interactions, and curvature. |
| Ability to Find True Optimum | Low | High |
| Identification of Factor Interactions | No | Yes |
| Statistical Reliability | Low | High (includes replication and randomization) |

The complexity of modern biosensing—with its demands for ultrasensitive, multiplexed, and continuous monitoring—renders the one-variable-at-a-time approach obsolete [13] [3]. The Design of Experiments provides a necessary, systematic, and statistically sound framework for navigating the multi-parameter optimization landscape. By embracing DoE, researchers and drug development professionals can accelerate the development cycle, enhance biosensor performance, and gain deeper insights into their systems, thereby bridging the critical gap between laboratory innovation and reliable, commercially viable point-of-care diagnostic devices [13] [3]. The future of robust biosensor design is, without a doubt, multivariate.

In biosensor research and development, optimizing performance parameters such as sensitivity, selectivity, and stability is paramount for creating reliable diagnostic tools [14]. Traditionally, this optimization has relied on the One-Variable-At-a-Time (OVAT) approach, where researchers systematically alter a single factor while holding all others constant [15]. While intuitively simple, this method possesses critical limitations for complex biosensing systems where factor interactions significantly influence outcomes [3] [15]. A study optimizing a terephthalate biosensor highlighted that OVAT approaches struggle to investigate multidimensional design spaces efficiently and often miss crucial interactions between variables [16].

Design of Experiments (DoE) represents a fundamentally superior statistical framework for biosensor optimization. DoE is a branch of applied statistics that deals with planning, conducting, analyzing, and interpreting controlled tests to evaluate the factors that control the value of a parameter or group of parameters [17]. By manipulating multiple input factors simultaneously, DoE can identify important interactions that would be missed in OVAT experimentation [17]. This approach is particularly valuable for ultrasensitive biosensors, where challenges like enhancing the signal-to-noise ratio and ensuring reproducibility are pronounced [3]. The systematic nature of DoE not only reduces experimental effort but also enhances information quality, providing a data-driven model that connects variations in input variables to sensor outputs [3].

This technical guide examines the three foundational principles of experimental design—Randomization, Replication, and Blocking—within the context of biosensor research, demonstrating how their proper application leads to more reliable, reproducible, and efficient development processes.

Core Principles of Experimental Design

The three core principles of Randomization, Replication, and Blocking form the bedrock of statistically sound experimentation. When properly implemented, they work in concert to reduce bias, control variability, and provide reliable estimates of experimental error [18] [19].

Randomization

Principle and Mechanism

Randomization refers to the practice of performing experimental runs in a random order to prevent systematic biases from being introduced into the experiment [18]. This principle extends beyond mere random sequencing to include resetting conditions between runs whenever possible [18]. The fundamental purpose of randomization is to average out the effects of uncontrolled or lurking variables—factors that may influence results but are not explicitly included in the experimental design [18] [17].

In practical application, randomization requires assigning treatments to experimental units through a random process. For example, in testing four different types of drill bits on metal sheets, researchers would randomly assign the bits to the metal sheets rather than testing all of one type first, then another type [18]. This approach prevents systematic patterns in uncontrolled variables from confounding the results.

Application to Biosensor Development

Consider a scenario where a researcher is studying a cleaning process for titanium parts used in biosensor fabrication, with two factors: Bath Time and Solution Type [18]. If the researcher conducts all trials with a 10-minute bath time in the morning and all 30-minute trials in the afternoon, while ambient temperature and humidity increase throughout the day, any observed effect of Bath Time becomes confounded with the effects of temperature and humidity [18]. The researcher might conclude that Bath Time is statistically significant when, in reality, the environmental factors caused the observed difference.

Randomization is equally critical in biological aspects of biosensor development. When optimizing the formulation of a detection interface or the immobilization strategy of biorecognition elements, uncontrolled variations in buffer composition, reagent purity, or ambient conditions can systematically bias results if experiments are not properly randomized [3]. By randomizing the order of experiments, these potential sources of bias are distributed randomly across all experimental conditions, allowing their effects to be accounted for in the experimental error rather than falsely attributed to the factors being studied.

Table: Randomization Implementation Guide for Biosensor Experiments

| Scenario | Randomization Challenge | Recommended Approach |

|---|---|---|

| Multi-day experiments | Day-to-day variation in environmental conditions or reagent batches | Randomize run order across all days rather than completing one condition per day |

| Hard-to-change factors | Practical limitations prevent full randomization (e.g., oven temperature) | Use split-plot or strip-plot designs that restrict randomization only where necessary [18] |

| High-throughput screening | Position effects in multi-well plates | Randomize assignment of treatments to well positions |

| Biological replicates | Cell passage number or tissue source variation | Randomize processing order across all biological replicates |
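As a rough illustration of run-order randomization, the sketch below builds a replicated plan for the cleaning example and shuffles it. The 10- and 30-minute bath times come from the example above; the solution labels "A" and "B", the number of replicates, and the seed are assumptions made for the sketch.

```python
import random
from itertools import product


def randomized_run_order(factors, replicates=2, seed=None):
    """Return every factor combination, replicated, in a random run order."""
    names = list(factors)
    runs = [
        dict(zip(names, combo))
        for combo in product(*factors.values())
        for _ in range(replicates)
    ]
    rng = random.Random(seed)  # a fixed seed makes the plan reproducible
    rng.shuffle(runs)
    return runs


factors = {"bath_time_min": [10, 30], "solution_type": ["A", "B"]}
plan = randomized_run_order(factors, replicates=2, seed=42)
for run_number, run in enumerate(plan, start=1):
    print(run_number, run)
```

Because bath times are interleaved across the day rather than grouped, a drift in temperature or humidity is spread over both levels instead of being confounded with one of them.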

Replication

Principle and Mechanism

Replication involves repeating the same experimental conditions one or more times and taking new measurements for these repeated settings [18]. Unlike repeated measurements on the same experimental unit, true replication means applying the same treatment to multiple independent experimental units [18]. This distinction is crucial—pseudoreplication occurs when researchers mistake multiple measurements from the same unit for true replication [18].

Replication serves two primary purposes in experimental design. First, it enables researchers to obtain an estimate of experimental error—the unexplained variation in the response that is not accounted for by changing the factors [18] [19]. This estimate of natural variation between experimental units is necessary for testing statistical significance [18]. Second, replication increases the accuracy of estimated effects by providing more data points for each treatment condition [19].

Application to Biosensor Development

In biosensor characterization, replication is essential for establishing reliable performance metrics. For example, when measuring the limit of detection (LOD) of an ultrasensitive biosensor, replicating measurements across multiple sensor batches and different days provides a more realistic estimate of performance under real-world conditions [3]. Without adequate replication, a researcher might report an optimistically low LOD based on a single favorable run, which doesn't represent the sensor's typical performance.

A critical consideration in biosensor research is identifying the appropriate experimental unit for replication. For instance, in developing the SweetTrac1 glucose biosensor, researchers expressed the biosensor in yeast cells and measured fluorescence response to glucose [20]. If a researcher measured the fluorescence response multiple times from the same cell culture, this would constitute repeated measurements rather than true replication. True replication would require preparing multiple independent cell cultures, each expressing the biosensor, and measuring the fluorescence response once from each [18] [20].

Table: Replication Strategies in Biosensor Development

| Replication Type | Definition | When to Use |

|---|---|---|

| Technical Replication | Multiple measurements of the same sample | Assessing measurement precision of analytical instruments |

| Biological Replication | Multiple biological sources (e.g., different cell cultures, animals) | Accounting for biological variability in sensor response |

| Experimental Replication | Completely independent repetitions of the entire experiment | Validating biosensor performance across different operators/labs |

| Material Replication | Multiple batches of sensor materials | Evaluating manufacturing consistency and shelf-life |
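The difference between true replication and repeated measurement can be sketched numerically. In the example below, the culture names and fluorescence readings are invented for illustration. Each independent culture is treated as one experimental unit, and its repeated readings are averaged before the error is estimated.

```python
from statistics import mean, stdev

# Hypothetical normalized fluorescence readings: three technical readings
# from each of three independently prepared cultures.
cultures = {
    "culture_A": [1.02, 1.01, 1.03],
    "culture_B": [1.20, 1.21, 1.19],
    "culture_C": [0.90, 0.91, 0.89],
}


def replicate_summary(data):
    """Estimate experimental error using one value per independent unit."""
    unit_means = [mean(readings) for readings in data.values()]
    n_units = len(unit_means)
    sd = stdev(unit_means)
    return {
        "n_units": n_units,
        "mean": mean(unit_means),
        "sd_between_units": sd,
        "sem": sd / n_units ** 0.5,
    }


summary = replicate_summary(cultures)

# Pseudoreplication: treating all nine readings as if they were independent.
all_readings = [r for readings in cultures.values() for r in readings]
naive_sem = stdev(all_readings) / len(all_readings) ** 0.5

print(summary)
print("naive SEM (pseudoreplicated):", naive_sem)
```

With these assumed numbers the pseudoreplicated standard error is noticeably smaller than the one based on independent cultures, which is the overconfidence that true replication is meant to prevent.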

Blocking

Principle and Mechanism

Blocking is a design technique used to reduce or control variability from nuisance factors—variables that are not of primary interest but may affect the response [18] [19]. By grouping similar experimental units together into blocks, researchers can account for systematic variation caused by these nuisance factors [18] [19]. The key idea is to make comparisons between treatments within relatively homogeneous blocks, thereby increasing the precision of those comparisons.

Blocking represents a restriction on randomization rather than its elimination. When randomizing a factor is impossible or too costly, blocking allows researchers to carry out all trials with one setting of the factor, then all trials with the other setting [17]. This approach systematically controls for known sources of variability that cannot be practically randomized.

Application to Biosensor Development

Biosensor research frequently involves nuisance factors that can be effectively managed through blocking. For example, if an experiment must be conducted across multiple days, uncontrolled day-to-day variation can add substantial unexplained variation to the results [18]. Including "Day" as a blocking variable in the experimental design allows researchers to account for this variation in their analysis, thereby improving their ability to detect significant effects of the factors of interest [18].

Another common application in biosensor development involves material sourcing. If biosensor components must be sourced from different batches or suppliers, these differences might introduce variability that obscures the effects of factors being studied. By creating blocks based on batch or supplier, researchers can statistically separate this nuisance variation from the treatment effects they wish to estimate.
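One common way to put blocking into practice is a randomized complete block layout, in which every treatment appears once in each block and the order is randomized only within the block. The sketch below assumes "day" is the blocking variable; the treatment names and the seed are hypothetical.

```python
import random


def randomized_block_plan(treatments, blocks, seed=None):
    """Run every treatment once per block, in a random order within each block."""
    rng = random.Random(seed)
    plan = {}
    for block in blocks:
        order = list(treatments)
        rng.shuffle(order)  # randomization is restricted to within the block
        plan[block] = order
    return plan


treatments = ["low_probe", "mid_probe", "high_probe"]
block_plan = randomized_block_plan(treatments, ["day_1", "day_2", "day_3"], seed=7)
for block, order in block_plan.items():
    print(block, order)
```

Because each day contains the full set of treatments, day-to-day differences can be separated from treatment effects in the analysis.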

Figure: Blocking Principle for Nuisance Factor Control

DoE Versus OVAT: A Comparative Framework for Biosensors

The fundamental differences between Design of Experiments and One-Variable-At-a-Time approaches become particularly significant in complex biosensor optimization, where multiple interacting factors determine overall performance.

Limitations of OVAT in Biosensor Research

The OVAT approach suffers from several critical limitations that hinder efficient biosensor development:

Failure to Detect Interactions: OVAT treats variables independently, meaning interaction effects between variables consistently elude detection [3]. In biosensor systems, factors such as immobilization strategy, detection interface formulation, and detection conditions frequently interact [3]. For example, the optimal pH for a biorecognition element might depend on the temperature, but this interaction would be missed in OVAT optimization.

Inefficient Exploration of Chemical Space: OVAT requires a minimum of 3 reactions (high, middle, low) to understand the effect of each variable independently [15]. With multiple variables, this approach probes only a minimal fraction of the possible chemical space, potentially missing the true optimum [15]. The explored space represents a limited grid rather than a comprehensive mapping of the response surface.

Suboptimal Compromise for Multiple Responses: Biosensors often require optimization of multiple responses simultaneously, such as sensitivity, dynamic range, and selectivity [16]. OVAT optimization of more than one response typically results in conditions that represent a compromise between different objectives rather than a true optimization [15].

Advantages of DoE in Biosensor Development

DoE methodology addresses these limitations through its systematic, multivariate approach:

Efficient Detection of Interactions: By simultaneously testing multiple variables in each experiment, DoE designs can account for and quantify effects between variables [17] [15]. This capability is particularly valuable when engineering transcriptional biosensors, where promoter regions, operator regions, and other genetic elements interact complexly to determine biosensor performance [16].

Comprehensive Model Building: DoE approaches develop a mathematical model through linear regression that elucidates the relationship between experimental conditions and outcomes [3]. This model enables prediction of the response at any point within the experimental domain, providing global rather than localized knowledge [3].

Systematic Multi-Response Optimization: DoE utilizes a statistical framework that determines the relationships between variables and their effects on multiple responses simultaneously [15]. This allows researchers to locate true optimum conditions that balance multiple performance characteristics, such as dynamic range, sensitivity, and selectivity in terephthalate biosensors [16].
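As a minimal sketch of the model-building idea, the code below fits a two-factor model with an interaction term to a 2^2 factorial and then predicts the response at an untested point. The response values are invented. The simple averaging formulas are equivalent to least squares only because the coded design is orthogonal, so they should not be reused for arbitrary designs.

```python
# Coded 2^2 design (X1 = pH, X2 = temperature, both hypothetical choices)
design = [(-1, -1), (1, -1), (-1, 1), (1, 1)]
signal = [12.0, 18.0, 14.0, 32.0]  # hypothetical measured responses


def fit_two_factor_model(design, y):
    """Least-squares coefficients for y = b0 + b1*x1 + b2*x2 + b12*x1*x2."""
    n = len(design)
    b0 = sum(y) / n
    b1 = sum(x1 * yi for (x1, _), yi in zip(design, y)) / n
    b2 = sum(x2 * yi for (_, x2), yi in zip(design, y)) / n
    b12 = sum(x1 * x2 * yi for (x1, x2), yi in zip(design, y)) / n
    return b0, b1, b2, b12


def predict(coeffs, x1, x2):
    """Predict the response anywhere inside the coded domain [-1, 1]^2."""
    b0, b1, b2, b12 = coeffs
    return b0 + b1 * x1 + b2 * x2 + b12 * x1 * x2


coeffs = fit_two_factor_model(design, signal)
print("coefficients:", coeffs)
print("prediction at (0.5, 0.5):", predict(coeffs, 0.5, 0.5))
```

The nonzero b12 coefficient is the quantity an OVAT study cannot estimate, and the prediction step shows what "global rather than localized knowledge" means in practice.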

Figure: OVAT vs DoE Experimental Workflow Comparison

Table: Quantitative Comparison of OVAT vs. DoE for Biosensor Optimization

| Characteristic | One-Variable-At-a-Time | Design of Experiments |

|---|---|---|

| Experimental Efficiency | Inefficient: Requires numerous runs to test variables independently | Highly efficient: Experiments test multiple factors simultaneously [15] |

| Interaction Detection | Cannot detect interactions between factors [3] | Systematically identifies and quantifies interactions [17] |

| Optimum Location | Often finds false or suboptimal conditions [15] | Higher probability of finding true optimum conditions [15] |

| Model Building | No comprehensive model of the system | Develops predictive mathematical model [3] |

| Multi-response Optimization | Sequential optimization leads to compromises [15] | Simultaneous optimization of multiple responses [15] |

Implementation Protocols for Biosensor Optimization

Factorial Designs for Initial Screening

Factorial designs serve as powerful tools for initial screening of factors affecting biosensor performance. The 2^k factorial designs are first-order orthogonal designs that require 2^k experiments, where k represents the number of variables being studied [3]. In these designs, each factor is assigned two levels (coded as -1 and +1) corresponding to the selected range for that variable [3].

The experimental matrix for a 2^2 factorial design (two factors, each at two levels) includes four experimental runs [3]. From a geometric perspective, the experimental domain can be visualized as a square with points at each corner [3]. These designs are particularly valuable in early-stage biosensor development when numerous factors (e.g., pH, temperature, immobilization density, reagent concentration) may influence performance, and researchers need to identify which factors warrant further investigation.
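A coded 2^k design matrix is straightforward to generate. The sketch below lists the runs in standard order (first factor changing fastest) and converts coded levels back to real units. The pH range used in the usage lines is a hypothetical example.

```python
from itertools import product


def full_factorial(k):
    """All 2^k combinations of coded levels, first factor changing fastest."""
    return [tuple(reversed(levels)) for levels in product((-1, 1), repeat=k)]


def decode(coded, low, high):
    """Map a coded level in [-1, 1] onto the real range [low, high]."""
    return low + (coded + 1) / 2 * (high - low)


matrix = full_factorial(2)
print(matrix)
# Hypothetical factor: pH studied between 6.0 (coded -1) and 8.0 (coded +1)
print([decode(x1, 6.0, 8.0) for x1, _ in matrix])
```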

Case Study: Terephthalate Biosensor Optimization

A recent study demonstrated the power of DoE for tuning the performance of a TphR-based terephthalate biosensor [16]. Researchers employed a DoE approach to build a framework for efficiently engineering activator-based biosensors with tailored performances, simultaneously engineering the core promoter and operator regions of the responsive promoter [16].

Experimental Design: Researchers used a dual refactoring approach to explore an enhanced biosensor design space and assign causative performance effects [16].

Outcomes: The DoE framework enabled development of tailored biosensors with enhanced dynamic range and diverse signal output, sensitivity, and steepness [16]. The optimized biosensors were successfully applied for primary screening of PET hydrolases and enzyme condition screening [16].

Advantages: The approach served as a foundational framework for engineering transcriptional biosensors and demonstrated the potential of statistical modeling in optimizing biosensors for tailored industrial and environmental applications [16].

Response Surface Methodology for Fine-Tuning

After identifying significant factors through factorial designs, Response Surface Methodology (RSM) provides powerful techniques for fine-tuning biosensor performance. Central composite designs and Box-Behnken designs are particularly valuable for estimating quadratic terms and modeling curvature in responses [3] [21].
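For orientation, the sketch below generates the points of a central composite design in coded units: the factorial corners, the axial ("star") points, and the center point. The rotatable axial distance alpha = (2^k)^(1/4) and the single center point are assumptions of this sketch; other choices of alpha and more center replicates are common in practice.

```python
from itertools import product


def central_composite(k, n_center=1):
    """Coded points of a rotatable central composite design for k factors."""
    alpha = (2 ** k) ** 0.25  # rotatable axial distance
    corners = [tuple(float(v) for v in p) for p in product((-1, 1), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-alpha, alpha):
            point = [0.0] * k
            point[i] = sign
            axial.append(tuple(point))
    center = [tuple([0.0] * k) for _ in range(n_center)]
    return corners + axial + center


ccd = central_composite(3)
print(len(ccd), "runs")
for point in ccd:
    print(point)
```

For three factors this gives 8 corner runs, 6 axial runs, and 1 center run, 15 in total, with each factor tested at five distinct levels, which is what allows curvature to be estimated.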

These designs become essential when the response follows a quadratic function with respect to the experimental variables [3]. For biosensors, this might involve optimizing around a pH optimum where performance decreases at both higher and lower values, or finding the ideal temperature that balances reaction rate with biorecognition element stability.
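The single-factor case shows why a quadratic term is needed to locate such an optimum. The sketch below fits y = b0 + b1·x + b11·x² exactly through three responses measured at coded levels -1, 0, and +1 and returns the stationary point x* = -b1 / (2·b11). The response values and the pH range in the usage lines are hypothetical.

```python
def quadratic_optimum(y_low, y_mid, y_high):
    """Locate the maximum of a quadratic through coded points -1, 0, +1."""
    b0 = y_mid
    b1 = (y_high - y_low) / 2
    b11 = (y_high + y_low) / 2 - y_mid
    if b11 >= 0:
        raise ValueError("no interior maximum: curvature is not negative")
    x_opt = -b1 / (2 * b11)  # where the first derivative is zero
    y_opt = b0 + b1 * x_opt + b11 * x_opt ** 2
    return x_opt, y_opt


# Hypothetical signals at pH 6, 7, and 8 (coded -1, 0, +1)
x_opt, y_opt = quadratic_optimum(60.0, 90.0, 80.0)
print("coded optimum:", x_opt, "predicted signal:", y_opt)
print("optimum in pH units:", 7.0 + x_opt * 1.0)
```

A two-level design alone could not reveal this optimum, because two points per factor only determine a straight line.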

Essential Research Reagent Solutions for Biosensor DoE

Implementing effective DoE strategies in biosensor research requires specific reagents and materials that enable precise control and measurement of experimental variables.

Table: Essential Research Reagents for Biosensor Development and Optimization

| Reagent/Material | Function in DoE | Application Examples |

|---|---|---|

| cpsfGFP (circularly permutated superfolded GFP) | Fluorescent reporter in genetically-encoded biosensors [20] | SweetTrac1 glucose biosensor construction [20] |

| Linker Peptides with Degenerate Codons | Optimization of structural connections in biosensor chimeras [20] | Creating gene libraries for linker optimization in SweetTrac1 [20] |

| Allosteric Transcription Factors | Biological recognition elements for synthetic biosensors [16] | TphR-based terephthalate biosensors [16] |

| Core Promoter and Operator Libraries | Engineering responsive genetic circuits [16] | Tuning dynamic range and sensitivity in transcriptional biosensors [16] |

| Screen-Printed Carbon Electrodes | Transducer platform for electrochemical biosensors [22] | Detection of organophosphate pesticides in milk [22] |

| Photocrosslinkable Polymers | Enzyme immobilization for stable biosensing interfaces [22] | Flow-based biosensors for pesticide quantification [22] |

The systematic application of Randomization, Replication, and Blocking principles through Design of Experiments represents a paradigm shift in biosensor research methodology. By embracing these statistical principles, researchers can overcome the limitations of traditional OVAT approaches, efficiently identifying optimal conditions while capturing crucial interaction effects between factors.

For biosensor researchers and drug development professionals, adopting DoE methodology translates to more efficient resource utilization, accelerated development timelines, and more robust, reproducible biosensor performance. As the field advances toward increasingly complex multiplexed detection systems and point-of-care applications, the rigorous experimental framework provided by proper DoE implementation will become increasingly essential for developing the next generation of biosensing technologies.

Implementing DoE in Biosensor Development: From Screening to Optimization

In biosensor research, the initial phase of identifying which factors critically influence performance is a fundamental step that can dictate the success or failure of the entire development process. Traditional One-Factor-at-a-Time (OFAT) experimentation, where a single factor is altered while all others are held constant, has been widely used due to its apparent simplicity [23]. However, this approach presents significant limitations for complex biosensing systems, including the inability to detect factor interactions, inefficient resource use, and a high risk of misleading conclusions [23]. These shortcomings are particularly problematic in biosensor optimization, where multiple fabrication and operational parameters often exhibit interdependent effects on the final analytical performance.

Design of Experiments (DoE) addresses these limitations through structured, multivariate approaches that systematically evaluate multiple factors simultaneously [24]. Screening designs, a specific class of DoE methodologies, are strategically employed to efficiently identify the few critical factors from a large set of potential variables with minimal experimental effort [2]. This technical guide examines the application of these powerful screening methodologies within biosensor research, providing researchers with practical frameworks for accelerating development timelines while enhancing the reliability of identified critical factors.

The Limitations of One-Factor-at-a-Time (OFAT) Approaches

The OFAT approach, while intuitively simple, suffers from fundamental statistical and practical deficiencies that limit its effectiveness for optimizing complex systems like biosensors [23].

- Failure to Capture Interaction Effects: OFAT inherently assumes that factors act independently on the response variable. In biosensor systems, this assumption is frequently violated. For example, the optimal concentration of an immobilized DNA probe may depend on the ionic strength of the hybridization buffer. Such interactions between factors remain undetectable in OFAT, potentially leading researchers to suboptimal conditions [24] [2].

- Inefficiency and Resource Intensity: OFAT requires a large number of experimental runs to study multiple factors. For k factors, each examined at n levels, OFAT requires n×k experiments, which quickly becomes impractical. More efficient DoE screening designs can identify vital factors in a fraction of these runs [2].

- Increased Risk of Misleading Conclusions: Without replication and randomization—cornerstones of DoE—OFAT results are vulnerable to systematic bias and experimental error, compromising their reliability and reproducibility [23].

The following diagram contrasts the experimental space exploration of OFAT versus a factorial screening design, highlighting how OFAT misses critical interaction information.

Fundamental Screening Designs in Practice

Screening designs provide a structured framework to efficiently sift through many factors. The choice of design depends on the number of factors to be investigated and the resources available.

Two-Level Full and Fractional Factorial Designs

Full factorial designs evaluate all possible combinations of factors and their levels. For k factors, each at 2 levels (typically coded as -1 for 'low' and +1 for 'high'), this requires 2^k experiments [24]. This design estimates all main effects and all interaction effects. A 2^2 full factorial design (2 factors, 2 levels each) requires 4 experiments, as shown in the experimental matrix below [24]:

Table 1: Experimental Matrix for a 2² Full Factorial Design

| Test Number | Factor X₁ | Factor X₂ |

|---|---|---|

| 1 | -1 | -1 |

| 2 | +1 | -1 |

| 3 | -1 | +1 |

| 4 | +1 | +1 |

When the number of factors increases, full factorial designs can become experimentally prohibitive. For example, with 6 factors, a full factorial would require 64 runs [2]. Fractional factorial designs resolve this by strategically examining only a fraction (e.g., half, quarter) of the full factorial combinations. While this reduces experimental effort, it comes at the cost of confounding (aliasing), where some interaction effects become statistically indistinguishable from main effects or other interactions. These designs are powerful for screening when higher-order interactions are assumed negligible.
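Confounding is easiest to see in the smallest case. The sketch below builds a half fraction of a three-factor design (a 2^(3-1) design) using the generator C = A·B, which is one conventional choice and an assumption of this example, and then checks which columns coincide.

```python
from itertools import product


def half_fraction_2_3():
    """Four-run half fraction of a 2^3 design with generator C = A*B."""
    return [(a, b, a * b) for a, b in product((-1, 1), repeat=2)]


runs = half_fraction_2_3()
for a, b, c in runs:
    print(f"A={a:+d}  B={b:+d}  C={c:+d}  AB={a * b:+d}  BC={b * c:+d}")

# The C column is identical to the A*B column, and A is identical to B*C,
# so these effects cannot be estimated separately from this fraction.
c_aliased_with_ab = all(c == a * b for a, b, c in runs)
a_aliased_with_bc = all(a == b * c for a, b, c in runs)
print(c_aliased_with_ab, a_aliased_with_bc)
```

The design halves the run count from 8 to 4, but any apparent effect of C could equally be the A×B interaction, which is why the negligible-interaction assumption matters.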

Plackett-Burman Designs

Plackett-Burman (PB) designs are a highly efficient class of screening designs used to examine N - 1 factors in just N experimental runs, where N is a multiple of 4 (e.g., 4, 8, 12, 16...) [2] [25]. Their primary strength is their ability to screen a large number of factors with a minimal number of experiments. A key application was demonstrated in the development of a colorimetric method for a herbicide, where a PB design screened seven factors—pH, HCl concentration, sulfanilic acid concentration, sodium nitrite concentration, reaction time, and reagent volumes—using only 12 experimental runs [25]. The primary limitation of PB designs is that they provide information only on main effects and assume all interactions are negligible.
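A 12-run Plackett-Burman matrix can be sketched from the commonly tabulated generator row by cyclic shifting and appending a row of low levels. Assigning seven factors to the first seven columns and leaving the rest unused is an assumption of this sketch, not a description of the cited herbicide study.

```python
# Commonly tabulated generator row for the 12-run Plackett-Burman design
GENERATOR = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]


def plackett_burman_12():
    """12 runs x 11 columns: cyclic shifts of the generator plus an all-low run."""
    n = len(GENERATOR)
    rows = [GENERATOR[-i:] + GENERATOR[:-i] for i in range(n)]
    rows.append([-1] * n)
    return rows


pb_design = plackett_burman_12()
columns = list(zip(*pb_design))

# Each column is balanced (six high, six low) and every pair is orthogonal,
# which is what lets main effects be estimated independently of one another.
balanced = all(sum(col) == 0 for col in columns)
orthogonal = all(
    sum(a * b for a, b in zip(columns[i], columns[j])) == 0
    for i in range(len(columns))
    for j in range(i + 1, len(columns))
)
print(balanced, orthogonal)

seven_factor_plan = [row[:7] for row in pb_design]  # use the first 7 columns
```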

Specialized Screening Designs: D-Optimal and Definitive Screening Designs

For more complex scenarios, advanced designs offer unique advantages:

D-Optimal Designs: These are computer-generated designs that maximize the determinant of the information matrix (X'X), thereby providing the most precise estimates of model coefficients for a given number of experimental runs [2]. They are particularly useful when the experimental region is constrained (i.e., not all factor combinations are feasible) or when a standard factorial design would require too many runs. In one case, a D-optimal design optimized six variables for a paper-based electrochemical biosensor using only 30 experiments, compared to the 486 required by an OFAT approach, leading to a 5-fold improvement in the detection limit for miRNA [2].

Definitive Screening Designs (DSDs): DSDs represent a modern advancement that efficiently screens multiple factors while retaining the ability to estimate second-order (quadratic) effects and some interactions without a dramatic increase in run size [26]. They are highly valuable for identifying critical factors when the relationship between a factor and the response is suspected to be non-linear. This was successfully applied to optimize a whole-cell biosensor for protocatechuic acid by systematically modifying promoter and RBS (Ribosome Binding Site) strengths, which resulted in a >500-fold improvement in dynamic range [26].
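The D-optimality criterion described above can be illustrated with a toy search. The sketch below exhaustively scores every 4-run subset of a 3×3 candidate grid for a first-order model (intercept plus two main effects) and keeps the subset with the largest det(X'X). Real D-optimal software typically uses exchange algorithms rather than exhaustive search, and the infeasible corner in the second call is a hypothetical constraint.

```python
from itertools import combinations, product


def det3(m):
    """Determinant of a 3x3 matrix by cofactor expansion."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def information_det(runs):
    """det(X'X) for the model y = b0 + b1*x1 + b2*x2."""
    X = [(1, x1, x2) for x1, x2 in runs]
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(3)] for i in range(3)]
    return det3(xtx)


def d_optimal(candidates, n_runs):
    """Exhaustively pick the n_runs candidates that maximize det(X'X)."""
    return max(combinations(candidates, n_runs), key=information_det)


grid = list(product((-1, 0, 1), repeat=2))
best = d_optimal(grid, 4)
print(best, information_det(best))

# Hypothetical constraint: the high/high corner cannot be run.
feasible = [p for p in grid if p != (1, 1)]
best_constrained = d_optimal(feasible, 4)
print(best_constrained, information_det(best_constrained))
```

Without constraints the search recovers the four corners of the factorial design. With the corner removed it still returns a usable, though less informative, set of runs, which is the situation in which computer-generated designs are most helpful.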

Table 2: Comparison of Common Screening Designs for Biosensor Development

| Design Type | Key Principle | Best Use Case | Advantages | Key Limitations |

|---|---|---|---|---|

| Full Factorial | All possible combinations of factor levels. | <6 factors to study main effects + all interactions. | Estimates all interaction effects. | Runs grow exponentially (2^k) with factors. |

| Fractional Factorial | A carefully chosen subset (fraction) of full factorial. | 5+ factors, assuming some interactions are negligible. | Highly efficient vs. full factorial. | Effects are confounded (aliased). |

| Plackett-Burman | N-1 factors in N runs (N multiple of 4). | Very large factor sets (>6), main effects only. | Extreme efficiency for screening. | Cannot detect any interactions. |

| D-Optimal | Computer-optimized for max. information per run. | Non-standard design regions or complex constraints. | Handles constraints; highly flexible. | Design is specific to a pre-defined model. |

| Definitive Screening | Efficiently estimates quadratics and interactions. | Screening when curvature is suspected. | Balances screening with modeling capability. | More runs than Plackett-Burman. |
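The run counts behind this comparison follow from simple arithmetic, sketched below for two-level designs. The half fraction is only one of several possible fractions, and the Plackett-Burman figure is the smallest multiple of 4 that accommodates k factors. D-optimal and definitive screening designs are left out because their run counts depend on the chosen model and software.

```python
import math


def screening_run_counts(k):
    """Minimum run counts for k two-level factors under three design types."""
    return {
        "full_factorial": 2 ** k,
        "half_fraction": 2 ** (k - 1),
        "plackett_burman": 4 * math.ceil((k + 1) / 4),  # needs N - 1 >= k
    }


for k in (4, 6, 11):
    print(k, screening_run_counts(k))
```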

Experimental Protocol: Implementing a Screening Design

The following workflow outlines the key stages for executing a successful screening experiment in biosensor development, from planning to validation.

Stage 1: Pre-Experimental Planning (Steps 1-3)

- Define Objective and Response Metric: Clearly state the goal (e.g., "identify factors most critical for improving the limit of detection (LOD) of an electrochemical biosensor"). Select a quantitative, reliable response for measurement (e.g., electrochemical current, fluorescence intensity, LOD value) [2].

- Select Factors and Ranges: Choose the factors (e.g., probe concentration, hybridization time, temperature, ionic strength) to be screened based on prior knowledge and literature. Define realistic "low" and "high" levels for each factor that are sufficiently spaced to provoke a measurable effect but remain within practical or plausible bounds [24].

- Choose Experimental Design: Based on the number of factors and the objective, select an appropriate screening design (e.g., Plackett-Burman for >6 factors with minimal runs, or a Definitive Screening Design to capture potential curvature) [26] [25].

Stage 2: Experimental Execution (Step 4)

- Execute Runs in Randomized Order: Conduct the experiments as specified by the design matrix. Randomization is critical to avoid systematic bias from lurking variables (e.g., instrument drift, reagent degradation) [23].

Stage 3: Data Analysis and Validation (Steps 5-7)

- Statistical Analysis: Analyze the collected response data using statistical software. Analysis of Variance (ANOVA) is used to determine the statistical significance of the factor effects. Pareto charts and half-normal plots are useful visual tools to identify which factors stand out from noise [27].

- Identify Critical Factors: Factors with p-values below a chosen significance level (e.g., α = 0.05) are considered statistically significant and are selected as "critical" for further optimization.

- Confirm with Validation Runs: Conduct additional confirmation experiments at the optimal settings predicted by the screening analysis to verify the findings and ensure the model's adequacy [24].
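The stages above can be sketched end to end on simulated data. In the example below, the seven factor names, the noise-free response function, and the choice of a 12-run Plackett-Burman design are all assumptions. A real analysis would also include replicate-based error estimates and significance tests such as ANOVA, which are omitted here.

```python
import random

GENERATOR = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]  # 12-run Plackett-Burman row
FACTORS = ["probe_conc", "hyb_time", "temperature", "ionic_strength",
           "pH", "wash_time", "incubation_time"]  # hypothetical factor names


def pb12(n_factors):
    """First n_factors columns of the 12-run Plackett-Burman design."""
    n = len(GENERATOR)
    rows = [GENERATOR[-i:] + GENERATOR[:-i] for i in range(n)]
    rows.append([-1] * n)
    return [row[:n_factors] for row in rows]


def simulated_response(run):
    """Hypothetical signal: only probe_conc and ionic_strength matter."""
    return 50 + 8 * run[0] - 5 * run[3]


def main_effects(design, y):
    """Effect = mean response at the high level minus mean at the low level."""
    half = len(design) / 2
    return {
        name: sum(row[j] * yi for row, yi in zip(design, y)) / half
        for j, name in enumerate(FACTORS)
    }


design = pb12(len(FACTORS))
run_order = random.Random(1).sample(range(len(design)), len(design))  # Step 4
responses = [simulated_response(row) for row in design]

effects = main_effects(design, responses)
ranked = sorted(effects.items(), key=lambda item: abs(item[1]), reverse=True)
for name, effect in ranked:  # Pareto-style ranking by absolute effect
    print(f"{name:16s} {effect:+.1f}")
```

In this simulation the two active factors stand out clearly from the five inert ones, which is the pattern a Pareto chart or half-normal plot is meant to reveal in real data.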

Research Reagent Solutions and Materials

The following table details key reagents and materials commonly employed in biosensor screening experiments, as cited in the literature.

Table 3: Essential Research Reagents and Materials for Biosensor Screening

| Reagent/Material | Function in Screening Experiments | Example Application |

|---|---|---|

| Allosteric Transcription Factors (aTFs) | Sensing component in whole-cell biosensors; binds ligand and transduces signal to regulate reporter gene expression [26] [28]. | Engineered bacterial biosensors for metabolites like protocatechuic acid [26]. |

| Reporter Genes (e.g., gfp) | Encodes a measurable output (e.g., green fluorescent protein) linked to biosensor activation [29] [28]. | High-throughput screening of microbial populations via fluorescence-activated cell sorting (FACS) [29]. |

| Cell-Free Transcription/Translation (IVTT) Systems | Enables rapid in vitro expression of biosensor protein variants without using living cells [30]. | Encapsulation in gel-shell beads (GSBs) for high-content biosensor screening [30]. |

| Gold Nanoparticles | Used to modify electrode surfaces to enhance signal transduction in electrochemical biosensors [2]. | Component of a paper-based electrochemical biosensor for miRNA detection [2]. |

| Immobilized DNA Probes | Capture strand for hybridization-based biosensors; surface density is a critical optimization factor [2]. | Detection of cancer-associated microRNAs (e.g., miR-29c) [2]. |

Screening designs provide a statistically rigorous and resource-efficient methodology for identifying critical factors in biosensor development, fundamentally superior to the traditional OFAT approach. By enabling the simultaneous evaluation of multiple factors, these designs not only accelerate the R&D timeline but also uncover crucial interaction effects that OFAT inevitably misses. As the complexity of biosensing platforms increases, the adoption of systematic screening strategies—such as Plackett-Burman, D-optimal, and Definitive Screening Designs—will be essential for developing the next generation of highly sensitive, robust, and reliable biosensors for diagnostics and drug development. Researchers are encouraged to integrate these powerful DoE tools early in their development workflow to maximize learning and optimization efficiency.

Optimization with Response Surface Methodology (RSM)

In the development of electrochemical biosensors, researchers traditionally relied on the "one factor at a time" (OFAT) approach for optimization. This method involves varying a single parameter while keeping all others constant, requiring significant experimental work and only providing local optima without revealing interaction effects between factors [31]. In contrast, Response Surface Methodology (RSM) represents a collection of statistical and mathematical techniques that enables researchers to efficiently model relationships between multiple independent variables and one or more responses, capturing complex interactions with reduced experimental workload [32] [33].

The limitations of OFAT become particularly problematic in biosensor development, where multiple factors such as probe concentration, immobilization time, and electrode modification parameters can interact in complex ways. RSM addresses these limitations through structured experimental designs that systematically explore the entire factor space, enabling researchers to build predictive models and identify optimal operational conditions with fewer resources [31] [34]. This technical guide explores the application of RSM within biosensor research, providing detailed methodologies and protocols for implementing this powerful optimization approach.

Theoretical Foundations of Response Surface Methodology

Core Principles and Historical Context

Response Surface Methodology is a specialized subset of Design of Experiments (DoE) focused on building empirical models and optimizing processes when multiple variables potentially influence the outcomes. Originating from the pioneering work of Box and Wilson in the 1950s, RSM was developed to link experimental design with optimization needs in chemical engineering and manufacturing [32]. The methodology employs a combination of statistical, graphical, and mathematical techniques to explore and model the shape of a response across the experimental region [35].

The fundamental concept underlying RSM is that any measurable response (Y) can be represented as a function of multiple input variables (X₁, X₂, ..., Xₖ). In its most common form, this relationship is approximated using a second-order polynomial model:

Y = β₀ + ∑βᵢXᵢ + ∑βᵢᵢXᵢ² + ∑βᵢⱼXᵢXⱼ + ε [32]

Where β₀ is the constant term, βᵢ represents linear coefficients, βᵢᵢ represents quadratic coefficients, βᵢⱼ represents interaction coefficients, and ε denotes the error term. This quadratic model can capture curvature in the response surface, which is essential for identifying optimum conditions [32] [36].
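As a minimal sketch of how this model is evaluated, the Python below expands one point into the intercept, linear, quadratic, and interaction terms and computes a prediction. The function names and the coefficient values are hypothetical, chosen only for illustration, and do not come from any of the cited studies.

```python
from itertools import combinations

def quadratic_terms(x):
    """Expand x = (X1, ..., Xk) into the terms of the second-order model.

    Order: intercept, linear terms, squared terms, two-factor interactions.
    """
    linear = list(x)
    squares = [xi * xi for xi in x]
    interactions = [xi * xj for xi, xj in combinations(x, 2)]
    return [1.0] + linear + squares + interactions

def predict(beta, x):
    """Predicted response (without the error term) for coefficients beta."""
    return sum(b * t for b, t in zip(beta, quadratic_terms(x)))

# Hypothetical coefficients for k = 2: [b0, b1, b2, b11, b22, b12]
beta = [10.0, 2.0, -1.0, -3.0, -2.0, 0.5]

print(len(quadratic_terms((0.0, 0.0, 0.0))))  # number of model terms for k = 3
print(predict(beta, (1.0, -1.0)))             # prediction at coded point (+1, -1)
```

For k factors the model has 1 + 2k + k(k - 1)/2 coefficients, which sets the minimum number of distinct design points needed to fit it.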

Key Advantages of RSM over OFAT

Table 1: Comparative analysis of RSM versus OFAT approaches

| Aspect | One-Factor-at-a-Time (OFAT) | Response Surface Methodology (RSM) |
|---|---|---|
| Factor Interactions | Cannot detect or quantify interactions between factors | Systematically identifies and quantifies interaction effects |
| Experimental Efficiency | Requires extensive experimental runs; inefficient use of resources | Optimizes information gain per experimental run; reduced resource requirements |
| Model Capability | Provides only local optima; limited predictive capability | Builds predictive mathematical models across the entire design space |
| Curvature Detection | Cannot adequately model curved surfaces | Explicitly models curvature through quadratic terms |
| Multiple Responses | Difficult to optimize for multiple responses simultaneously | Enables simultaneous optimization of multiple responses |

RSM demonstrates particular superiority over OFAT in complex systems like biosensor development, where factors often exhibit significant interactions. For instance, in optimizing an electrochemical DNA biosensor for Mycobacterium tuberculosis detection, researchers found that RSM efficiently captured interactions between probe concentration, immobilization time, and other parameters that would have been missed by OFAT [34].

Experimental Design Strategies for RSM

Preliminary Screening Designs

Before implementing a full RSM optimization, researchers often conduct preliminary screening experiments to identify which factors significantly impact the response variables. The Plackett-Burman (PB) design is particularly valuable for this purpose, allowing efficient screening of numerous factors with minimal experimental runs [34]. In the M. tuberculosis biosensor study, researchers employed a PB design to evaluate eleven different factors, ultimately identifying the most significant parameters for subsequent RSM optimization [34].
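To illustrate how eleven factors can be screened in only twelve runs, the sketch below builds a 12-run Plackett-Burman matrix from the standard cyclic generator row. It is an assumption-laden illustration: the function name is hypothetical, the mapping of real biosensor factors to columns is left to the user, and the design matrix actually used in the cited study is not reproduced here.

```python
# Standard generator row for the 12-run Plackett-Burman design
# (+1 = high level, -1 = low level).
GENERATOR_12 = [+1, +1, -1, +1, +1, +1, -1, -1, -1, +1, -1]

def plackett_burman_12():
    """Return a 12-run x 11-factor two-level screening design."""
    n = len(GENERATOR_12)
    # Rows 1-11: cyclic shifts of the generator row.
    rows = [[GENERATOR_12[(j - i) % n] for j in range(n)] for i in range(n)]
    # Row 12: every factor at its low level.
    rows.append([-1] * n)
    return rows

design = plackett_burman_12()
for run in design:
    print(" ".join("+" if level > 0 else "-" for level in run))
```

Each column is balanced (six high and six low runs) and orthogonal to every other column, which is what allows the main effects to be estimated independently of one another. Two-factor interactions are partially confounded with the main effects in this design, which is why it is used for screening rather than for final optimization.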

Core RSM Experimental Designs

Central Composite Design (CCD)

The Central Composite Design is the most widely used RSM design for process optimization [32] [33]. A CCD consists of:

- Factorial points: Represent all combinations of factor levels (as in a standard factorial design)

- Center points: Repeated runs at the midpoint of the experimental region to estimate experimental error and check model adequacy

- Axial (star) points: Positioned along each factor axis at a distance α from the center to capture curvature

CCDs can be arranged to be rotatable, meaning the variance of the predicted response depends only on the distance from the center and not on the direction, so prediction precision is the same at all points equidistant from the center [32]. Variations include circumscribed CCD, inscribed CCD, and face-centered CCD, which differ in how the axial points are positioned relative to the factorial cube [32].
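The three point types can be generated in a few lines. The sketch below is a minimal illustration in coded units, assuming a full two-level factorial core; the function name and defaults are hypothetical. For such a core, the rotatable axial distance is α = (2^k)^(1/4), and passing `alpha=1.0` gives a face-centered design instead.

```python
from itertools import product

def central_composite(k, n_center=1, alpha=None):
    """Return CCD runs in coded units for k factors.

    alpha=None uses the rotatable value (2**k) ** 0.25, which assumes a
    full two-level factorial core; alpha=1.0 gives a face-centered CCD.
    """
    if alpha is None:
        alpha = (2 ** k) ** 0.25
    # Factorial points: every combination of -1 and +1.
    factorial = [list(p) for p in product((-1.0, 1.0), repeat=k)]
    # Axial (star) points: one factor at -alpha or +alpha, the rest at 0.
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(point)
    # Center points: replicated runs at the midpoint of all factors.
    center = [[0.0] * k for _ in range(n_center)]
    return factorial + axial + center

ccd = central_composite(3, n_center=6)
print(len(ccd))  # 2**3 factorial + 2*3 axial + 6 center runs
for run in ccd:
    print([round(v, 3) for v in run])
```

The run count is 2^k + 2k + n_center, so the number of center-point replicates chosen accounts for the spread in quoted run counts for a given number of factors.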

Box-Behnken Design (BBD)

The Box-Behnken Design offers an efficient alternative to CCD when a full factorial experiment is impractical due to resource constraints [32]. BBDs place every non-center run at the midpoint of an edge of the factorial cube, so these points are all equidistant from the center (a radius of √2 in coded units for the standard three- to five-factor designs), and they typically require no more runs than CCDs for the same number of factors. For a three-factor system, a BBD requires only 13 runs (12 edge points plus one center point), compared to 15-20 runs for a CCD [32]. For three to five factors, the number of runs in a BBD is:

Number of runs = 2k × (k - 1) + nₚ

Where k is the number of factors, and nₚ is the number of center points [32]. Designs for six or more factors are built from different blocks and do not follow this formula.
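The run-count formula follows directly from how the design is built. The sketch below, with a hypothetical function name, uses the pairwise construction: each pair of factors is run at all four ±1 combinations while the remaining factors stay at 0. This construction is assumed here only for three to five factors, since larger Box-Behnken designs are built differently.

```python
from itertools import combinations, product

def box_behnken(k, n_center=1):
    """Return Box-Behnken runs in coded units (pairwise construction)."""
    if not 3 <= k <= 5:
        raise ValueError("this pairwise construction covers k = 3 to 5 only")
    runs = []
    # For every pair of factors, run the 2x2 factorial with others at 0.
    for i, j in combinations(range(k), 2):
        for a, b in product((-1, 1), repeat=2):
            point = [0] * k
            point[i], point[j] = a, b
            runs.append(point)
    # Replicated center points.
    runs.extend([0] * k for _ in range(n_center))
    return runs

bbd = box_behnken(3, n_center=1)
print(len(bbd))  # 2 * 3 * (3 - 1) + 1
for run in bbd:
    print(run)
```

No run sets all factors to an extreme at once, which is useful when corner conditions (for example, the highest probe concentration combined with the longest immobilization time) are impractical or risk damaging the sensor surface.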

Table 2: Comparison of common RSM experimental designs

| Design Type | Number of Factors | Typical Run Count | Key Advantages | Limitations |
|---|---|---|---|---|
| Central Composite Design (CCD) | 2-6 | 15-90 runs | Rotatable; estimates all quadratic effects; flexible α value | Higher run count compared to BBD |
| Box-Behnken Design (BBD) | 3-7 | 13-62 runs | Fewer runs than CCD; spherical design | Cannot include extreme factor combinations |
| Three-Level Full Factorial | 2-4 | 9-81 runs | Comprehensive data across factor space | Run count grows exponentially with factors |

Design Selection Considerations

Choosing an appropriate experimental design requires careful consideration of several factors:

- Number of factors: CCD generally handles 2-6 factors effectively, while BBD works well for 3-7 factors

- Resource availability: BBD typically requires fewer runs than CCD for the same number of factors

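- Region of interest: A face-centered CCD covers a cuboidal region, including its corners, while BBD avoids the corner combinations and suits cases where running every factor at an extreme simultaneously is impractical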

- Previous knowledge: When augmenting existing screening data, CCD can efficiently add the necessary points to estimate curvature

Implementing RSM: A Step-by-Step Protocol

Problem Definition and Response Selection

The initial step involves clearly defining the optimization objectives and identifying critical response variables. In biosensor research, typical responses include sensitivity, detection limit, signal-to-noise ratio, and response time [31] [34]. Researchers must establish whether the goal is to maximize, minimize, or achieve a target value for each response.

Factor Screening and Level Determination

Based on prior knowledge or preliminary screening experiments, researchers select the most influential factors and determine appropriate ranges for each. Each factor should be tested at three or more levels so that quadratic effects can be estimated [35]. Continuous factors (e.g., temperature, concentration) are coded to a common scale (typically -1, 0, +1) to reduce multicollinearity and improve model computation [36].
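Coding is a linear rescaling around the midpoint of each factor's range. The sketch below shows the conversion in both directions; the function names and the 30-90 minute immobilization-time range are hypothetical values used only for illustration.

```python
def to_coded(value, low, high):
    """Map a natural-unit value to the coded scale (low -> -1, high -> +1)."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return (value - center) / half_range

def to_natural(coded, low, high):
    """Map a coded value back to natural units."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return center + coded * half_range

# Hypothetical factor: probe immobilization time between 30 and 90 minutes.
LOW, HIGH = 30.0, 90.0
print(to_coded(60.0, LOW, HIGH))    # midpoint of the range
print(to_coded(90.0, LOW, HIGH))    # upper limit
print(to_natural(-1.0, LOW, HIGH))  # lower limit in minutes
```

Axial points of a circumscribed CCD have coded values beyond ±1, so the chosen low and high levels should leave room for those runs to remain physically feasible.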

Experimental Execution

Experiments should be conducted in randomized order to minimize the effects of extraneous variables. Replication, particularly at center points, provides an estimate of pure error and enables lack-of-fit testing [32] [36]. For biosensor studies, this may involve fabricating multiple electrode modifications under systematically varied conditions and measuring performance metrics [34].
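Randomizing the run order is straightforward to script. The sketch below is one hedged way to do it; the function name is hypothetical, and the fixed seed is an assumption made so the order can be recorded and reproduced in a lab notebook.

```python
import random

def randomized_run_order(n_runs, seed=None):
    """Return the design-row indices 0..n_runs-1 in random execution order."""
    rng = random.Random(seed)  # a fixed seed makes the order reproducible
    order = list(range(n_runs))
    rng.shuffle(order)
    return order

# Example: a 20-run design (e.g., a three-factor CCD with six center points).
order = randomized_run_order(20, seed=2024)
for position, design_row in enumerate(order, start=1):
    print(f"execute #{position}: design row {design_row}")
```

Because the center-point replicates are shuffled together with the other runs, they end up spread across the experimental sequence, which helps reveal drift over time (for example, reagent degradation or electrode aging).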

Model Development and Analysis

Experimental data are analyzed using multiple regression to fit a response surface model. The significance of model terms is evaluated using ANOVA, with non-significant terms (except those involved in higher-order terms) potentially removed to simplify the model [35] [33]. Model adequacy is checked through residual analysis, R² values, and lack-of-fit tests [36].
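The fitting step can be sketched with ordinary least squares via the normal equations. The example below is a simplified illustration on synthetic data: the "true" coefficients, the small fixed perturbations standing in for noise, and all function names are assumptions. It reports only coefficient estimates and R²; the ANOVA significance tests and the lack-of-fit test would normally come from a statistics package.

```python
from itertools import combinations, product

def quadratic_terms(x):
    """Intercept, linear, squared, and two-factor interaction terms."""
    return ([1.0] + list(x) + [xi * xi for xi in x]
            + [xi * xj for xi, xj in combinations(x, 2)])

def solve(A, b):
    """Solve A x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(n):
            if r != col:
                f = M[r][col] / M[col][col]
                for c in range(col, n + 1):
                    M[r][c] -= f * M[col][c]
    return [M[i][n] / M[i][i] for i in range(n)]

def fit_quadratic(points, y):
    """Least-squares coefficients from the normal equations (X'X) b = X'y."""
    X = [quadratic_terms(p) for p in points]
    n_terms = len(X[0])
    XtX = [[sum(row[a] * row[b] for row in X) for b in range(n_terms)]
           for a in range(n_terms)]
    Xty = [sum(row[a] * yi for row, yi in zip(X, y)) for a in range(n_terms)]
    return solve(XtX, Xty)

def r_squared(points, y, beta):
    """Coefficient of determination, 1 - SS_residual / SS_total."""
    fitted = [sum(b * t for b, t in zip(beta, quadratic_terms(p)))
              for p in points]
    mean_y = sum(y) / len(y)
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1 - ss_res / ss_tot

# Synthetic example: two coded factors on a 3x3 grid.
true_beta = [10.0, 2.0, -1.0, -3.0, -2.0, 0.5]  # hypothetical coefficients
points = list(product((-1.0, 0.0, 1.0), repeat=2))
noise = [0.1 if i % 2 == 0 else -0.1 for i in range(len(points))]
y = [sum(b * t for b, t in zip(true_beta, quadratic_terms(p))) + e
     for p, e in zip(points, noise)]

beta_hat = fit_quadratic(points, y)
print([round(b, 3) for b in beta_hat])
print(round(r_squared(points, y, beta_hat), 4))
```

A high R² alone does not establish adequacy; residual plots and the lack-of-fit test against pure error from replicated center points remain necessary checks.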

Optimization and Validation

Once an adequate model is developed, optimization techniques identify factor settings that produce the desired response values. For single responses, this may involve analytical or numerical methods to find maxima or minima. For multiple responses, approaches like desirability functions or overlaid contour plots help balance competing objectives [32] [35]. Validation through confirmation experiments at the predicted optimum conditions is essential to verify model predictions [36].
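For a single response with two factors, the analytical route amounts to setting both partial derivatives of the fitted quadratic to zero and solving the resulting 2×2 linear system. The sketch below illustrates this under stated assumptions: the function names are hypothetical and the coefficients are illustrative values, not results from any cited study.

```python
def stationary_point_2d(beta):
    """Stationary point of y = b0 + b1*x1 + b2*x2 + b11*x1^2 + b22*x2^2 + b12*x1*x2.

    Solves  2*b11*x1 + b12*x2 = -b1  and  b12*x1 + 2*b22*x2 = -b2,
    then classifies the point from the Hessian [[2*b11, b12], [b12, 2*b22]].
    """
    b0, b1, b2, b11, b22, b12 = beta
    det = 4 * b11 * b22 - b12 ** 2  # determinant of the Hessian
    if det == 0:
        raise ValueError("degenerate surface: no unique stationary point")
    x1 = (-2 * b22 * b1 + b12 * b2) / det
    x2 = (-2 * b11 * b2 + b12 * b1) / det
    if det > 0:
        kind = "maximum" if b11 < 0 else "minimum"
    else:
        kind = "saddle"
    return (x1, x2), kind

def gradient_2d(beta, x):
    """Partial derivatives of the fitted surface at x = (x1, x2)."""
    b0, b1, b2, b11, b22, b12 = beta
    x1, x2 = x
    return (b1 + 2 * b11 * x1 + b12 * x2, b2 + 2 * b22 * x2 + b12 * x1)

# Hypothetical fitted coefficients: [b0, b1, b2, b11, b22, b12]
beta = [10.0, 2.0, -1.0, -3.0, -2.0, 0.5]
optimum, kind = stationary_point_2d(beta)
print(kind, [round(v, 4) for v in optimum])
print(gradient_2d(beta, optimum))  # both components should be ~0
```

The stationary point is only a candidate optimum: it should lie inside the explored coded region (roughly -1 to +1 for each factor), and the predicted response there still needs a confirmation experiment.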

Case Study: RSM Optimization of an Electrochemical DNA Biosensor

Research Context and Objectives

A compelling example of RSM application in biosensor research comes from the development of an electrochemical DNA biosensor for detecting Mycobacterium tuberculosis [34]. The researchers aimed to create a sensitive, PCR-free detection platform using a nanocomposite of hydroxyapatite nanoparticles (HAPNPs), polypyrrole (PPY), and multi-walled carbon nanotubes (MWCNTs) [34].

Experimental Design and Implementation

The optimization process employed a two-stage approach:

- Screening phase: A Plackett-Burman design identified significant factors from eleven potential parameters

- Optimization phase: A Central Composite Design based on RSM determined optimal conditions for maximum biosensor performance

Key factors investigated included probe concentration, probe immobilization time, scan rate for electrodeposition, and Methylene Blue (MB) concentration. The response measured was the oxidation signal of MB, recorded using differential pulse voltammetry [34].

Research Reagent Solutions

Table 3: Key research reagents and materials for electrochemical biosensor development

| Reagent/Material | Function/Application | Significance in Biosensor Development |
|---|---|---|
| Multi-walled Carbon Nanotubes (MWCNTs) | Electrode modification | Enhances electrical conductivity and surface-to-volume ratio [34] |
| Polypyrrole (PPY) | Conductive polymer coating | Improves biocompatibility, conductivity, and chemical stability [34] |
| Hydroxyapatite Nanoparticles (HAPNPs) | Biomolecule immobilization substrate | Provides excellent bioactivity, biocompatibility, and multiple adsorption sites [34] |
| Methylene Blue (MB) | Electroactive indicator | Generates oxidation signal for DNA hybridization detection [34] |
| Screen-printed Electrodes | Biosensor platform | Enables disposable, portable biosensor devices [31] |

Results and Outcomes

The RSM approach enabled researchers to efficiently identify optimal conditions that maximized biosensor sensitivity. The resulting biosensor demonstrated a wide detection range (0.25 to 200.0 nM) with a low detection limit of 0.141 nM, successfully detecting M. tuberculosis in clinical sputum samples [34]. This case highlights how RSM can streamline biosensor optimization while capturing complex factor interactions that OFAT would miss.

Advanced RSM Applications and Hybrid Approaches

Multiple Response Optimization

Many biosensor development projects require balancing multiple, often competing, response objectives. For instance, a researcher might need to maximize sensitivity while minimizing response time and manufacturing cost. RSM addresses this challenge through several approaches:

- Desirability functions: Transform individual responses into a dimensionless desirability score (0-1 range) and combine them into an overall composite desirability

- Overlaid contour plots: Visually identify regions where all responses simultaneously meet their respective targets

- Numerical optimization: Use algorithms to find factor settings that maximize composite desirability [32] [35]
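The desirability approach listed above can be sketched as follows. This is a minimal illustration using one common linear (Derringer-type) form of the individual desirability functions and a geometric mean for the composite score; the function names, limits, targets, and response values are all hypothetical.

```python
import math

def desirability_maximize(y, low, target, weight=1.0):
    """Larger-is-better: 0 at or below `low`, 1 at or above `target`."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return ((y - low) / (target - low)) ** weight

def desirability_minimize(y, target, high, weight=1.0):
    """Smaller-is-better: 1 at or below `target`, 0 at or above `high`."""
    if y <= target:
        return 1.0
    if y >= high:
        return 0.0
    return ((high - y) / (high - target)) ** weight

def composite_desirability(scores):
    """Geometric mean of the individual desirabilities."""
    return math.prod(scores) ** (1.0 / len(scores))

# Hypothetical predicted responses at one candidate factor setting.
d_sensitivity = desirability_maximize(8.0, low=5.0, target=10.0)
d_response_time = desirability_minimize(30.0, target=10.0, high=60.0)

print(d_sensitivity, d_response_time)
print(composite_desirability([d_sensitivity, d_response_time]))
```

Because the composite score is a geometric mean, any single response with zero desirability drives the overall score to zero, so a setting cannot compensate for one unacceptable response with excellent performance on another.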

Integration with Artificial Intelligence

Recent advances combine RSM with artificial intelligence techniques, particularly Artificial Neural Networks (ANN). In a study comparing several RSM designs with an ANN model for optimizing oxidation conditions of a lignocellulosic blend, the ANN demonstrated superior prediction capability with higher regression coefficients and fewer required experiments [37]. Similarly, pharmaceutical research has successfully integrated RSM and ANN for Quality by Design development of rivaroxaban push-pull osmotic tablets [38].

This hybrid approach leverages the structured design and interpretability of RSM with the superior nonlinear modeling capability of ANN, particularly valuable for highly complex systems with strong interactive effects.