Correcting for Signal Drift in Continuous Monitoring: From Foundational Concepts to Advanced Applications in Biomedical Research

This article provides a comprehensive guide to signal drift in continuous monitoring systems, a critical challenge impacting data reliability in scientific and clinical applications.

Abstract

This article provides a comprehensive guide to signal drift in continuous monitoring systems, a critical challenge impacting data reliability in scientific and clinical applications. It explores the fundamental causes and consequences of drift across diverse fields, from terrestrial gravimetry and medical imaging to real-time drug monitoring. The content details a suite of advanced correction methodologies, including hybrid frameworks and path-optimized scanning, and offers practical strategies for troubleshooting and optimization. Finally, it establishes a rigorous framework for validating correction efficacy and comparing model performance, synthesizing key takeaways to enhance measurement precision in biomedical research and drug development.

Understanding Signal Drift: Foundations, Impact, and Multi-Domain Manifestations

Frequently Asked Questions

What is signal drift and why is it a problem in continuous monitoring? Signal drift refers to the degradation of a sensor or model's performance over time, leading to increasingly unreliable measurements or predictions [1]. In continuous monitoring applications, such as in vivo biomarker sensing or bioprocess control, this is a critical problem because it can render long-term data useless, compromise scientific conclusions, or disrupt automated systems [2] [3]. Unlike sudden failures, drift is often gradual and can go undetected without proper monitoring.

What is the difference between data drift and concept drift? While both are types of model drift, they originate from different changes in the underlying data statistics [4].

- Data Drift (Covariate Shift): This occurs when the distribution of the input data, P(X), changes, but the relationship between the inputs and the output, P(Y|X), remains the same [5] [6]. For example, an image recognition model trained on photos taken on sunny days may perform poorly if used on photos taken on cloudy days.

- Concept Drift: This occurs when the fundamental relationship between the input and output variables, P(Y|X), changes, even if the input distribution, P(X), stays the same [4] [6]. For instance, in finance, the relationship between economic indicators and stock prices may change after a major market event, making old predictive models less accurate.
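
The distinction can be illustrated with synthetic data. The following is a minimal sketch, not a method from the cited sources: the distributions, slopes, and helper names (`sample`, `fit_slope`) are assumptions chosen for illustration. The mean of X stands in for P(X), and a fitted slope stands in for P(Y|X).

```python
import random

def mean(values):
    return sum(values) / len(values)

def fit_slope(xs, ys):
    """Ordinary least-squares slope of y on x."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx

def sample(n, x_mean, slope, rng):
    """Draw X ~ N(x_mean, 1) and Y = slope * X + small Gaussian noise."""
    xs = [rng.gauss(x_mean, 1.0) for _ in range(n)]
    ys = [slope * x + rng.gauss(0.0, 0.1) for x in xs]
    return xs, ys

rng = random.Random(0)  # fixed seed so the sketch is reproducible
x_ref, y_ref = sample(2000, x_mean=0.0, slope=2.0, rng=rng)  # reference period
x_dd, y_dd = sample(2000, x_mean=3.0, slope=2.0, rng=rng)    # data drift: P(X) moves
x_cd, y_cd = sample(2000, x_mean=0.0, slope=0.5, rng=rng)    # concept drift: P(Y|X) moves

for label, xs, ys in [("reference", x_ref, y_ref),
                      ("data drift", x_dd, y_dd),
                      ("concept drift", x_cd, y_cd)]:
    print(f"{label:13s} mean(X) = {mean(xs):5.2f}   slope(Y|X) = {fit_slope(xs, ys):5.2f}")
```

Under data drift the input mean shifts while the fitted slope stays close to the reference; under concept drift the input mean is unchanged while the slope differs. Real monitoring would typically use a proper two-sample test on the inputs and a held-out performance metric rather than these simple proxies.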

What are common sources of drift in electrochemical biosensors? Research identifies several key mechanisms that cause signal degradation in electrochemical biosensors, such as Electrochemical Aptamer-Based (EAB) sensors [2]:

- Surface Fouling: The accumulation of proteins, cells, or other biological material on the sensor surface, which can slow electron transfer and reduce signal.

- Monolayer Desorption: The electrochemically driven desorption of the self-assembled monolayer (SAM) from the gold electrode surface.

- Enzymatic Degradation: The cleavage of DNA or RNA strands by nucleases present in biological fluids.

- Reporter Degradation: Irreversible chemical reactions that degrade the redox reporter molecule.

Troubleshooting Guides

Guide 1: Diagnosing and Correcting Sensor Drift in Biomedical Applications

This guide addresses the signal loss commonly encountered with in vivo biosensors.

Symptoms:

- Gradual, monotonic decrease in signal amplitude over time.

- Increased signal noise or a dropping signal-to-noise ratio.

- Biphasic signal loss: a rapid initial drop followed by a slower, linear decline [2].

Diagnostic Steps:

- Isolate the Mechanism: Deploy the sensor in a controlled buffer solution (e.g., PBS) and then in a complex biological fluid (e.g., whole blood). The absence of a rapid initial drift phase in the buffer suggests that fouling or enzymatic degradation (i.e., "biology") is a primary contributor [2].

- Test Potential Dependence: Monitor the drift rate while varying the electrochemical potential window. A strong dependence on the applied potential indicates that reductive or oxidative desorption of the sensor monolayer is a significant factor [2].

- Perform a Reversibility Test: Wash the drifted sensor with a denaturant like urea. A significant recovery of the signal suggests that surface fouling is a major, and at least partially reversible, cause of the drift [2].

Solutions:

- For Fouling and Enzymatic Degradation: Implement surface coatings or hydrogels that resist protein adsorption. To limit nuclease cleavage, use enzyme-resistant oligonucleotide backbones (e.g., 2'O-methyl RNA) or spiegelmers [2].

- For Monolayer Desorption: Optimize the electrochemical protocol to use the narrowest potential window that still captures the redox reaction. This minimizes stress on the gold-thiol bond [2].

- For Signal Correction: Implement a Multi Pseudo-Calibration (MPC) approach. This method uses periodic ground-truth measurements from the system (e.g., offline analyte concentration checks) as reference points to train a regression model that can non-linearly compensate for the drift [3].

Guide 2: Mitigating Model Drift in Intelligent Speed Assistance (ISA) Systems

This guide focuses on AI model drift in safety-critical automotive systems.

Symptoms:

- Misreading speed limits or traffic signs.

- Inconsistent interventions (e.g., unnecessary speed reduction).

- Discrepancies between different data sources (e.g., camera vs. GPS speed data) [1].

Detection Strategies:

- Real-Time Confidence Scoring: Monitor the model's confidence in its predictions. A series of low-confidence predictions can indicate drift [1].

- Sensor Fusion Cross-Validation: Use data from multiple independent sources (GPS, camera, HD maps) to cross-check for inconsistencies. Frequent conflicts signal potential drift in one of the sensors or models [1].

- Performance Feedback Loops: Implement a system where vehicles log anomalies and send them to a central backend for analysis, allowing for fleet-wide drift detection [1].
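
The confidence-scoring strategy above can be sketched as a rolling-window monitor. This is an illustrative assumption rather than an implementation from [1]: the class name `ConfidenceDriftMonitor`, the window length, and both thresholds are hypothetical and would need tuning against real fleet data.

```python
from collections import deque

class ConfidenceDriftMonitor:
    """Flags possible drift when too many recent predictions are low-confidence."""

    def __init__(self, window=50, low_conf=0.6, max_low_fraction=0.3):
        self.scores = deque(maxlen=window)        # most recent confidence scores
        self.low_conf = low_conf                  # below this, a prediction counts as "low"
        self.max_low_fraction = max_low_fraction  # tolerated share of low scores

    def update(self, confidence):
        """Add one score; return True if the full window suggests drift."""
        self.scores.append(confidence)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough history yet
        low = sum(1 for c in self.scores if c < self.low_conf)
        return low / len(self.scores) > self.max_low_fraction

monitor = ConfidenceDriftMonitor()
healthy = [monitor.update(0.9) for _ in range(50)]   # confident predictions
degraded = [monitor.update(0.4) for _ in range(20)]  # a run of low-confidence ones
print("flag during healthy period:", any(healthy))
print("flag after degraded run:", degraded[-1])
```

A flag from such a monitor is only a prompt for further checks, for example the sensor fusion cross-validation described above, since low confidence can also come from a genuinely ambiguous scene.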

Mitigation Techniques:

- Continuous Learning: Design models that can adapt online to new data and changing environments without full retraining [1].

- Redundant Systems: Rely on sensor fusion so that if one sensor drifts (e.g., a dirty camera), others can provide a reliable baseline [1].

- Dynamic Map Integration: Use frequently updated high-definition maps to provide a ground-truth reference for the AI system [1].

Experimental Protocols for Drift Characterization

Protocol 1: Characterizing Drift in Electrochemical Biosensors

Objective: To systematically evaluate the mechanisms of signal drift for an electrochemical biosensor in a biologically relevant environment.

Materials:

- Apparatus: Potentiostat, flow cell or sterile beaker, temperature-controlled bath (37°C).

- Biological Medium: Undiluted, heparinized whole blood.

- Control Medium: Phosphate Buffered Saline (PBS).

- Sensor Proxies: Thiol-modified DNA or RNA strands attached to a gold electrode, with an internal redox reporter (e.g., Methylene Blue).

Methodology:

- Sensor Preparation: Immobilize the DNA proxy onto a gold electrode via thiol-gold chemistry to form a self-assembled monolayer.

- Baseline Recording: Place the sensor in PBS at 37°C and record square-wave voltammetry (SWV) scans for 1-2 hours to establish a stable baseline.

- Experimental Challenge: Transfer the sensor to undiluted whole blood maintained at 37°C.

- Continuous Interrogation: Run successive SWV scans over a period of several hours (e.g., 8-10 hours), monitoring the peak current of the redox reporter.

- Parameter Variation: Repeat the challenge in blood while systematically varying the SWV potential window to probe its effect on drift rate.

- Post-Hoc Analysis: After a period of significant drift, wash the sensor with a concentrated urea solution (e.g., 6-8 M) and re-measure in PBS to assess signal recovery.

Expected Outcomes:

- A biphasic drift curve: a rapid exponential phase (driven by blood fouling) followed by a slow linear phase (driven by electrochemical desorption) [2].

- A strong correlation between the applied potential window and the rate of the linear drift phase [2].

- Significant signal recovery after a urea wash, confirming the role of fouling [2].

Data Analysis Table:

| Drift Phase | Primary Mechanism | Key Evidence | Potential Remediation |

|---|---|---|---|

| Exponential | Biofouling | Absent in PBS; reversible with urea wash; electron transfer rate decreases. | Use fouling-resistant materials; enzyme-resistant oligonucleotides. |

| Linear | Electrochemical Desorption | Present in PBS; rate depends on potential window; not reversible. | Optimize electrochemical protocol; use narrower potential windows. |
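
To separate the two phases numerically, one option is a simple "curve peeling" fit of the model s(t) ≈ A·exp(-t/τ) + (B - k·t). The sketch below is an assumption-laden illustration, not the analysis used in [2]: the function names, the split time `t_split`, the `min_frac` cutoff, and the synthetic signal (normalized peak current, time in hours) are all hypothetical.

```python
import math

def linfit(xs, ys):
    """Least-squares line through (xs, ys); returns (slope, intercept)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return slope, my - slope * mx

def fit_biphasic(t, s, t_split, min_frac=0.05):
    """Fit s(t) ~ A*exp(-t/tau) + (B - k*t) in two passes.

    t_split: time after which the exponential phase is assumed negligible.
    min_frac: early residuals below this fraction of the largest are ignored.
    """
    # Pass 1: the late data are assumed to contain only the linear phase.
    late = [(ti, si) for ti, si in zip(t, s) if ti >= t_split]
    slope, B = linfit([p[0] for p in late], [p[1] for p in late])
    k = -slope
    # Pass 2: subtract the linear phase; what remains early on is the exponential.
    early = [(ti, si - (B - k * ti)) for ti, si in zip(t, s) if ti < t_split]
    floor = min_frac * max(r for _, r in early)
    early = [(ti, r) for ti, r in early if r > floor]
    log_slope, log_A = linfit([p[0] for p in early], [math.log(p[1]) for p in early])
    return {"A": math.exp(log_A), "tau": -1.0 / log_slope, "B": B, "k": k}

# Synthetic, noise-free example: fast phase tau = 0.5 h, slow loss k = 0.01 per hour.
t = [i * 0.1 for i in range(101)]
s = [0.3 * math.exp(-ti / 0.5) + 0.7 - 0.01 * ti for ti in t]
params = fit_biphasic(t, s, t_split=4.0)
print({name: round(value, 4) for name, value in params.items()})
```

With noisy measurements, a joint non-linear least-squares fit of all four parameters is usually more robust; the two-pass version is shown because it makes the separation of the phases explicit.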

Protocol 2: Implementing the Multi Pseudo-Calibration (MPC) Drift Compensation

Objective: To compensate for sensor drift in a deeply embedded bioreactor monitor without interrupting the process.

Materials:

- Apparatus: Bioreactor, embedded cross-sensitive chemical sensor array, offline analyzer (e.g., HPLC, mass spectrometer).

- Software: Regression models (PLS, XGBoost, or MLP).

Methodology:

- Continuous Data Collection: The sensor array continuously collects measurements. Periodically, a small sample is extracted from the bioreactor.

- Offline Analysis: The sample is analyzed with the offline analyzer to obtain ground-truth analyte concentrations.

- Data Augmentation: Each new data point (sensor measurements S_current at time t_current and ground truth C_true) is paired with all previous pseudo-calibration samples. This creates an augmented dataset where each input is a vector containing:

  - The difference S_current - S_pseudo

  - The ground truth concentration C_pseudo of the past sample

  - The time difference t_current - t_pseudo

- Model Training & Prediction: A regression model is trained on this augmented dataset. To make a prediction at time t, the model uses the current sensor data paired with all available past pseudo-calibration points. The final prediction is the average of the predictions relative to each pseudo-point [3].
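
The augmentation and averaging steps can be sketched as follows. This is a simplified illustration under stated assumptions, not the implementation from [3]: it uses a single scalar sensor instead of an array, a plain linear least-squares model in place of PLS, XGBoost, or an MLP, and hypothetical helper names (`augment`, `fit_linear`, `predict`) with a synthetic sensor whose reading is 2·C + 0.3·t.

```python
from itertools import combinations

def augment(samples):
    """samples: time-ordered list of (t, S, C). Pairs each sample with every
    earlier one and returns (features, targets) with N(N-1)/2 rows."""
    X, y = [], []
    for (t_p, s_p, c_p), (t_c, s_c, c_c) in combinations(samples, 2):
        X.append([s_c - s_p, c_p, t_c - t_p])  # [S_current - S_pseudo, C_pseudo, dt]
        y.append(c_c)                          # target: current true concentration
    return X, y

def fit_linear(X, y):
    """Least-squares weights (no intercept) via normal equations and Gauss-Jordan."""
    n = len(X[0])
    A = [[sum(r[i] * r[j] for r in X) for j in range(n)] for i in range(n)]
    b = [sum(r[i] * t for r, t in zip(X, y)) for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(n):
            if row != col:
                factor = A[row][col] / A[col][col]
                A[row] = [a - factor * c for a, c in zip(A[row], A[col])]
                b[row] -= factor * b[col]
    return [b[i] / A[i][i] for i in range(n)]

def predict(weights, t_now, s_now, pseudo_points):
    """Average the predictions made relative to each past pseudo-calibration point."""
    preds = [sum(w * f for w, f in zip(weights, [s_now - s_p, c_p, t_now - t_p]))
             for t_p, s_p, c_p in pseudo_points]
    return sum(preds) / len(preds)

# Synthetic drifting sensor (assumption): S = 2*C + 0.3*t.
true_conc = [1.0, 3.0, 2.0, 5.0, 4.0, 6.0]
samples = [(t, 2.0 * c + 0.3 * t, c) for t, c in enumerate(true_conc)]
X, y = augment(samples)
weights = fit_linear(X, y)

s_now = 2.0 * 3.5 + 0.3 * 6           # reading at t = 6 when the true value is 3.5
estimate = predict(weights, 6, s_now, samples)
naive = s_now / 2.0                    # initial calibration that ignores drift
print(f"pairs: {len(X)}, MPC estimate: {estimate:.3f}, uncompensated: {naive:.3f}")
```

Because the synthetic drift is linear, the linear model recovers it exactly; a non-linear regressor would be needed for the non-linear drift described above.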

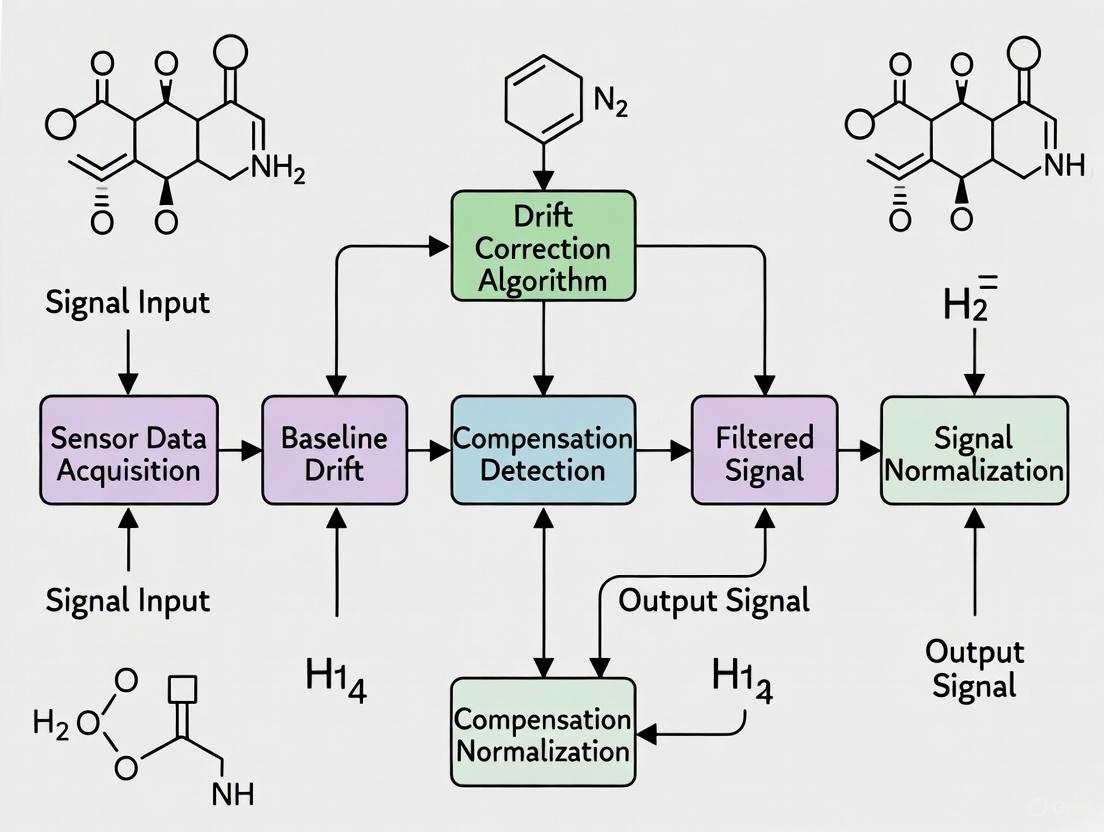

Visualization of the MPC Workflow:

Expected Outcomes:

- The MPC model should maintain significantly better prediction accuracy over time compared to a model without drift compensation [3].

- The technique allows for learning a non-linear model of the sensor drift.

- The quadratic augmentation of the training data improves model robustness.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Rationale |

|---|---|

| 2'O-Methyl RNA / Spiegelmers | Enzyme-resistant oligonucleotides used in place of DNA in aptamer-based sensors to reduce signal loss from enzymatic degradation by nucleases in biological fluids [2]. |

| Urea (6-8 M Solution) | A denaturant used in post-experiment washes to remove non-covalently adsorbed foulants (proteins, cells) from the sensor surface, helping to diagnose and partially reverse fouling-based drift [2]. |

| Hydrogel-based Magneto-resistive Sensors | A sensing platform used in bioprocess monitoring. Its cross-sensitive nature makes it suitable for advanced drift compensation techniques like the Multi Pseudo-Calibration (MPC) approach [3]. |

| Self-Assembled Monolayer (SAM) Components | Alkane-thiolates (e.g., in EAB sensors) form a well-ordered monolayer on gold electrodes, providing a stable interface for probe immobilization. Their stability is critical, as desorption is a key drift mechanism [2]. |

| Methylene Blue Redox Reporter | A common redox reporter used in electrochemical biosensors. It operates within a relatively narrow potential window, which helps minimize electrochemical desorption of the SAM, contributing to better sensor stability [2]. |

The Critical Impact of Drift on μGal-Level Precision and Pharmacokinetic Data

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: What are the primary sources of signal drift in high-precision MEMS gravimeters and how can they be mitigated? In Micro-Opto-Electro-Mechanical-System (MOEMS) gravimeters, drift originates from multiple sources. Fabrication tolerances and internal stress in the miniature spring-mass system are key contributors, and temperature fluctuations significantly alter the mechanical properties of the system. With careful design, drift rates as low as 153 μGal/day have been reported [7]. Mitigation involves dedicated manufacturing and packaging to minimize internal stress and external temperature effects, and the integration of Pt resistors for active temperature measurement and control [7].

Q2: Why does my electrochemical aptamer-based (EAB) sensor signal degrade in biological fluids, and what are the proven stabilization methods? Signal degradation in EAB sensors is primarily due to two mechanisms. First, fouling from blood components (cells, proteins) adsorbs to the sensor surface, reducing the electron transfer rate and causing an initial exponential signal loss [2]. Second, electrochemically driven desorption of the self-assembled monolayer (SAM) from the gold electrode surface causes a subsequent linear signal decrease [2]. Stabilization strategies include using a narrow electrochemical potential window (-0.4 V to -0.2 V) to prevent SAM desorption and employing enzyme-resistant oligonucleotide backbones (e.g., 2'O-methyl RNA) to reduce degradation [2].

Q3: How does respiratory motion corrupt pharmacokinetic parameters in free-breathing DCE-MRI studies and how is it corrected? Respiratory motion causes misalignment of the tissue of interest across image frames in free-breathing Dynamic Contrast-Enhanced MRI (DCE-MRI). This misalignment prohibits reliable measurement of signal intensity changes over time, which is crucial for generating accurate time-intensity curves for pharmacokinetic modeling [8]. Correction is achieved through retrospective non-rigid motion correction using B-spline image registration, which realigns all image frames to a reference frame, significantly increasing the percentage of reliable pixels for parameter estimation (Ktrans, ve, kep) [8].

Q4: What rigorous testing methodology can conclusively distinguish biomarker detection from signal drift in BioFETs? A conclusive testing methodology for BioFETs must incorporate control devices and a stable measurement configuration. This involves fabricating and testing a control device with no bioreceptors (e.g., antibodies) printed over the transducer channel within the same chip environment. A true positive detection event is confirmed only when a significant signal shift is observed in the functionalized device while the control device shows no change [9]. Furthermore, relying on infrequent DC sweeps rather than continuous static or AC measurements helps to mitigate the influence of drift on the measured signal [9].

Troubleshooting Guides

Problem: High drift rate in a newly deployed MOEMS gravimeter.

- Step 1: Verify temperature control. Check the operation of the integrated Pt resistors and the stability of the local environment. The sensing unit requires active temperature control to minimize thermally induced drift [7].

- Step 2: Check packaging integrity. Ensure the packaging designed to minimize the impact of external pressure variations and internal stress is intact and hermetic [7].

- Step 3: Benchmark against a known standard. Co-locate your sensor with a commercial gravimeter (e.g., a gPhone) to characterize and separate your instrument's drift from the true geophysical signal [7].

Problem: Rapid signal loss in an electrochemical biosensor during in vitro testing in whole blood.

- Step 1: Interrogate the mechanism. The biphasic signal loss (fast exponential then slow linear) indicates two simultaneous problems. The initial rapid drop is likely biofouling, while the subsequent steady decrease is electrochemical desorption [2].

- Step 2: Mitigate fouling. Introduce a fouling-resistant polymer brush layer (e.g., POEGMA) above the electrode. A post-experiment wash with a solubilizing agent like concentrated urea can recover most of the signal lost to fouling, confirming the diagnosis [2].

- Step 3: Optimize electrochemistry. Narrow the potential window of your square-wave voltammetry scan to avoid the reductive (below -0.5 V) and oxidative (above ~1 V) desorption limits of the gold-thiol bond. A window of -0.4 V to -0.2 V dramatically improves stability [2].

Problem: Poor "goodness-of-fit" in pixel-wise pharmacokinetic parameter maps from DCE-MRI data.

- Step 1: Suspect tissue misregistration. A low percentage of pixels passing the χ²-test is a strong indicator that respiratory motion has corrupted the time-intensity curves [8].

- Step 2: Apply non-rigid motion correction. Implement a B-spline image registration algorithm to align all image frames in the DCE-MRI series to a reference frame (typically an expiratory frame after contrast enhancement) [8].

- Step 3: Re-run pharmacokinetic analysis. Generate new parameter maps (Ktrans, ve, kep) from the motion-corrected data. You should observe a statistically significant increase in the percentage of reliable pixels within the region of interest [8].

The following tables consolidate key performance metrics and statistical results from the cited research.

Table 1: Performance Metrics of Drift-Critical Sensing Platforms

| Sensor Platform | Key Parameter Measured | Self-Noise / Sensitivity | Drift Rate | Primary Mitigation Strategy |

|---|---|---|---|---|

| MOEMS Gravimeter [7] | Gravity Variation | 1.1 μGal/√Hz @ 0.5 Hz | 153 μGal/day | Free-form anti-springs, optical readout, temperature control |

| D4-TFT BioFET [9] | Biomarker Concentration | Sub-femtomolar (aM) | Mitigated for conclusive detection | POEGMA polymer brush, control device, infrequent DC sweeps |

| EAB Sensor (in whole blood) [2] | Drug/Metabolite Concentration | Signal loss characterized | Biphasic (Exponential + Linear) | Narrow potential window, enzyme-resistant oligonucleotides |

Table 2: Impact of Motion Correction on Pharmacokinetic Analysis in DCE-MRI [8]

| Analysis Condition | Percentage of Reliable Pixels in SPNs | Statistical Significance (p-value) of Difference | Ability to Distinguish Benign vs. Malignant Nodules |

|---|---|---|---|

| Original (Misaligned) DCE-MRI | Significantly Lower | p = 4 × 10⁻⁷ | Not Significant |

| Motion-Corrected DCE-MRI | Significantly Higher | - | Significant (for Ktrans & kep) |

Experimental Protocols

Protocol 1: Multi-stage Design and Fabrication of a Low-Drift MOEMS Gravimeter This protocol outlines the creation of a chip-scale gravimeter with μGal stability.

- Mechanical Sensing Unit Design: Use a multi-stage algorithmic approach to design Freeform Anti-Springs (F-ASs).

- First Stage (Global Optimization): Define a freeform curve using B-spline control points to achieve a target resonant frequency (<2 Hz) and high acceleration-displacement sensitivity (>95 μm/Gal) [7].

- Second Stage (Local Fine-tuning): Adjust control points locally to meet constraints of fabrication tolerance and maximum stress without requiring a high etching aspect ratio [7].

- Fabrication: Fabricate the spring-mass system from a silicon wafer, using its full thickness for the proof mass. Integrate gold grid lines on the proof mass for optical readout [7].

- Optical Readout Integration: Assemble the mechanical unit opposite a fixed glass substrate with a matching gold grating. This creates an optical grating-based readout with pm-level displacement sensitivity [7].

- Packaging: Package the sensor with a supporting layer and adhesive, ensuring integrated Pt resistors are in place for temperature measurement and control. The package must minimize influence from external pressure and temperature [7].

Protocol 2: Validating Biomarker Detection in a BioFET While Accounting for Drift This protocol ensures observed signals originate from biomarker binding, not drift.

- Device Functionalization: Grow a non-fouling polymer brush layer (e.g., POEGMA) on the FET channel. Subsequently, inkjet-print capture antibodies (cAb) into this polymer matrix to create the sensing region [9].

- Control Device Fabrication: On the same chip, fabricate an identical device where the polymer brush layer is left unpatterned and contains no antibodies over the channel [9].

- Testing Configuration: Use a stable electrical setup, preferably with a palladium (Pd) pseudo-reference electrode to avoid bulky Ag/AgCl electrodes. Place the device in a biologically relevant solution (e.g., 1X PBS) [9].

- Data Acquisition: Perform measurements using infrequent DC current-voltage (I-V) sweeps rather than continuous monitoring. Simultaneously record signals from both the functionalized sensor and the control device [9].

- Signal Validation: A valid detection event is confirmed only when a significant on-current shift is recorded in the antibody-functionalized device, while the control device shows no concurrent change, thus ruling out system-wide drift [9].
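
The validation rule in the last step can be written as a small decision function. This is a hedged sketch only: the relative-shift definition, the function names, and the threshold values are hypothetical and are not taken from [9]; real thresholds would come from the measured noise and drift of the devices.

```python
def relative_shift(i_before, i_after):
    """Relative change in on-current between two DC I-V sweeps."""
    return (i_after - i_before) / i_before

def classify_event(sensor_shift, control_shift,
                   detect_threshold=0.10, control_tolerance=0.02):
    """Compare the functionalized device with the antibody-free control.

    detect_threshold and control_tolerance are illustrative placeholders.
    """
    sensor_hit = abs(sensor_shift) >= detect_threshold
    control_quiet = abs(control_shift) <= control_tolerance
    if sensor_hit and control_quiet:
        return "detection"        # shift seen only where antibodies are present
    if sensor_hit:
        return "drift_suspected"  # control moved too: likely system-wide drift
    return "no_detection"

sensor = relative_shift(2.0e-6, 2.5e-6)    # functionalized device, amperes
control = relative_shift(2.0e-6, 2.02e-6)  # control device on the same chip
print(classify_event(sensor, control))
```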

Experimental Workflow Visualizations

Diagram 1: MOEMS gravimeter design and fabrication.

Diagram 2: DCE-MRI motion correction workflow.

Diagram 3: Diagnosing and mitigating EAB sensor drift.

The Scientist's Toolkit: Essential Reagents & Materials

Table 3: Key Reagents and Materials for Drift Mitigation

| Item Name | Function / Application | Brief Rationale |

|---|---|---|

| Poly(oligo(ethylene glycol) methyl ether methacrylate) (POEGMA) [9] | Polymer brush interface for BioFETs. | Extends Debye length via Donnan potential, enabling biomarker detection in physiological saline and reducing biofouling. |

| B-Spline Curves (Algorithmic Design) [7] | Defining free-form anti-spring geometries in MEMS. | Enables local adjustability for optimizing mechanical sensitivity and robustness within fabrication constraints. |

| 2'O-methyl RNA Oligonucleotides [2] | Enzyme-resistant backbone for EAB sensors. | Provides enhanced stability against nucleases in biological fluids compared to native DNA, reducing one source of signal degradation. |

| Platinum (Pt) Resistors [7] | Integrated temperature sensing and control. | Critical for monitoring and compensating thermal drift in high-precision physical sensors like gravimeters. |

| Palladium (Pd) Pseudo-Reference Electrode [9] | Stable electrode for BioFETs in point-of-care formats. | Replaces bulky Ag/AgCl electrodes, enabling compact device design while maintaining a stable electrochemical potential. |

| B-Spline Image Registration Model [8] | Non-rigid motion correction in medical imaging. | Corrects complex respiratory motion in free-breathing DCE-MRI, enabling reliable pharmacokinetic analysis. |

Frequently Asked Questions

1. What are the primary sources of drift in continuous monitoring sensors? Drift in sensors used for continuous monitoring, such as in gravity surveys, arises from multiple factors. Time-dependent degradation due to environmental exposure (e.g., water ingress, biofouling, radiation) alters the sensor's physical and chemical properties [10]. Furthermore, environmental perturbations like varying temperature and humidity, as well as instrumental effects such as thermal drifts and attitude determination residuals, introduce systematic biases and noise into the data stream [3] [11].

2. How can I correct for drift without interrupting long-term monitoring? Recalibration using a stable external reference is often not feasible for deeply-embedded sensors. Effective strategies include:

- On-site Pseudo-Calibration: Using periodic ground-truth measurements (e.g., from offline analyzers) as "pseudo-calibration" points to update and train drift-correction models without process interruption [3].

- Data Redundancy and Credibility Weighting: Deploying multiple sensors to measure the same analyte and using algorithms to estimate the true signal by weighting each sensor's output based on its historical and current credibility [10].

- Iterative Data Preprocessing: Implementing automated frameworks that systematically detect and remove outliers, then compensate for data gaps using interpolation techniques to maintain data continuity [11].
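
As a rough illustration of credibility weighting, the sketch below fuses redundant readings with inverse-variance weights, which is the maximum-likelihood estimate of a shared value when each sensor has independent Gaussian noise of known variance. It is a simplified stand-in, not the algorithm from [10]; the function name `fuse` and the example variances are assumptions, and in practice each variance would be estimated from a sensor's past disagreement with reference measurements.

```python
def fuse(readings, variances):
    """Inverse-variance weighted mean and the variance of that estimate."""
    weights = [1.0 / v for v in variances]  # more credible sensors get larger weights
    total = sum(weights)
    estimate = sum(w * r for w, r in zip(weights, readings)) / total
    return estimate, 1.0 / total

readings = [10.0, 10.2, 14.0]   # third sensor has drifted
variances = [0.04, 0.04, 4.0]   # hypothetical credibility: the drifted sensor is noisy
fused, fused_var = fuse(readings, variances)
plain_mean = sum(readings) / len(readings)
print(f"weighted: {fused:.3f}, unweighted: {plain_mean:.3f}")
```

The weighted estimate stays close to the two credible sensors, whereas the unweighted mean is pulled toward the drifted one.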

3. My data shows both gradual drift and sudden spikes. How should I handle this? A combined approach is necessary. Iterative residual correction can be used to handle different types of anomalies [11]:

- For significant outliers and spikes, the data point and its neighboring points within a specified window are discarded.

- For gradual drift, the data is segmented and fitted (e.g., using Fourier series). A residual threshold is defined, and points exceeding this threshold are filtered out. The resulting gaps are then filled with values from the fitted model, and these filled points are assigned reduced weights during inversion to minimize their impact.

Troubleshooting Guide

| Problem Description | Possible Causes | Diagnostic Steps | Recommended Solutions |

|---|---|---|---|

| Gradual, monotonic signal shift over time | Sensor aging, biofouling, slow environmental changes (e.g., temperature). | Review long-term data trends. Check correlation of drift with environmental logs. | Apply the Multi Pseudo-Calibration (MPC) method [3] or the Maximum Likelihood Estimation (MLE) with drift correction [10]. |

| Sudden jumps or spikes in data (Discontinuities) | Power supply instability, hardware failure, transient external interference. | Plot data differentiation to identify discontinuities. Inspect instrument logs for events. | Use an iterative residual correction framework to detect and remove outliers, followed by spline interpolation for gap filling [11]. |

| High-frequency noise obscuring signal | Instrument noise, electronic interference, atmospheric effects. | Perform a frequency analysis (e.g., FFT) to identify noise components. | Implement a data preprocessing chain with filtering and regularization methods tailored to the noise characteristics [11]. |

| Loss of calibration in multiple sensors | Harsh deployment conditions, lack of reference points, simultaneous degradation. | Compare sensor outputs. Check if a majority of sensors show unreliable readings. | Employ a redundant sensor array with credibility-weighted data aggregation to estimate the true signal even when most sensors are unreliable [10]. |

Experimental Protocols for Drift Compensation

Protocol 1: Implementing the Multi Pseudo-Calibration (MPC) Approach

This methodology is designed for continuous monitoring systems where obtaining a ground-truth measurement is possible but physical recalibration is not [3].

- Data Collection: Continuously record sensor measurements and their timestamps.

- Ground-Truth Sampling: Periodically, extract samples and obtain accurate analyte concentrations using an offline analyzer. Record the timestamp of this sample.

- Data Augmentation: For a training set with N samples, create an augmented set by pairing each sample with every previous sample, resulting in N(N-1)/2 data points.

- Model Input Construction: For each pair, construct an input vector that includes:

  - The difference between current sensor readings and the pseudo-calibration sample readings.

  - The ground-truth concentration of the pseudo-sample.

  - The time difference between the current and pseudo-calibration sample.

- Model Training & Prediction: Train a regression model (e.g., PLS, XGB, MLP) on the augmented dataset. The model learns to predict current concentration, learning a non-linear model of the sensor drift.

MPC Workflow for On-Site Drift Compensation

Protocol 2: Data Preprocessing with Iterative Residual Correction

This protocol is crucial for preparing satellite gravimetry data (like GRACE-FO) and other time-series data for inversion, by addressing outliers and gaps [11].

- Data Segmentation: Partition the input time-series data into manageable segments.

- Model Fitting & Residual Calculation: Fit a model (e.g., Fourier series) to the data segment and calculate the residuals (R) between the data and the fitted model. Compute the Root Mean Square Error (RMSE).

- Outlier Detection and Removal:

  - If RMSE > threshold_a, classify as a High-Impact Segment. Identify the point with the maximum residual and remove it along with adjacent points within a defined window.

  - If RMSE < threshold_a, classify as a Low-Impact Segment. Define a residual threshold b. Remove any data points where R > b.

- Gap Compensation: For the data gaps created by outlier removal, apply a multivariate spline interpolation to fill in the missing values.

- Iteration and Output: Iterate steps 2-4 until the predefined accuracy criteria are met. Concatenate all processed segments to produce the final, cleaned output dataset.
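
The loop described in this protocol can be sketched for a single segment as follows. Several simplifications are assumptions made for brevity and are not part of [11]: a straight-line fit replaces the Fourier series, gaps are filled with fitted values instead of a multivariate spline, residuals are compared by absolute value, and the function names and thresholds are hypothetical.

```python
import math

def fit_line(ts, ys):
    """Least-squares line; returns (slope, intercept)."""
    n = len(ts)
    mt, my = sum(ts) / n, sum(ys) / n
    sxx = sum((t - mt) ** 2 for t in ts)
    slope = sum((t - mt) * (y - my) for t, y in zip(ts, ys)) / sxx
    return slope, my - slope * mt

def clean_segment(ts, ys, threshold_a, threshold_b, window=1, max_iter=20):
    """Iteratively remove outliers from one segment, then fill the gaps."""
    keep = [True] * len(ts)
    for _ in range(max_iter):
        idx = [i for i, k in enumerate(keep) if k]
        slope, icpt = fit_line([ts[i] for i in idx], [ys[i] for i in idx])
        res = {i: ys[i] - (slope * ts[i] + icpt) for i in idx}
        rmse = math.sqrt(sum(r * r for r in res.values()) / len(idx))
        if rmse > threshold_a:
            # High-impact: drop the worst point and its neighbours in the window.
            worst = max(idx, key=lambda i: abs(res[i]))
            drop = range(max(0, worst - window), min(len(ts), worst + window + 1))
        else:
            # Low-impact: drop only points whose residual exceeds threshold_b.
            drop = [i for i in idx if abs(res[i]) > threshold_b]
            if not drop:
                break  # accuracy criterion met for this segment
        for i in drop:
            keep[i] = False
    # Gap compensation: refit on the kept points and fill removed positions.
    idx = [i for i, k in enumerate(keep) if k]
    slope, icpt = fit_line([ts[i] for i in idx], [ys[i] for i in idx])
    filled = [ys[i] if keep[i] else slope * ts[i] + icpt for i in range(len(ts))]
    return filled, keep

ts = list(range(30))
ys = [0.1 * t for t in ts]
ys[10] += 50.0  # inject a spike
filled, keep = clean_segment(ts, ys, threshold_a=1.0, threshold_b=0.5)
print("removed indices:", [i for i, k in enumerate(keep) if not k])
print("value restored at t=10:", round(filled[10], 6))
```

Returning the `keep` mask alongside the filled series makes it straightforward to assign reduced weights to the filled points in a later inversion step.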

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| Sensor Array | A set of multiple cross-sensitive chemical sensors that provide redundant measurements of the same analyte, enabling drift compensation algorithms [3] [10]. |

| Offline Analyzer | A high-precision laboratory instrument used to establish ground-truth concentrations for pseudo-calibration points, serving as a reference for updating in-field sensors [3]. |

| Fiducial Markers | Stable references used in microscopy and other imaging techniques to track and correct for sample drift physically. Their use complicates sample preparation [12]. |

| Iterative Preprocessing Framework | A software-based tool that automatically detects outliers, removes them, and interpolates missing data, ensuring high-quality input for gravity field inversion and other analyses [11]. |

| Regression Models (PLS, XGB, MLP) | Machine learning models used to learn the complex, non-linear relationship between sensor readings, time, and actual analyte concentration, thereby modeling and correcting for drift [3]. |

Logical Data Correction Pipeline

Troubleshooting Guides

Guide 1: Identifying and Correcting Signal Drift in dMRI Data

Problem: Researchers observe inconsistent apparent diffusion coefficient (ADC) metrics or tractography results between scanning sessions, potentially due to a systematic signal decrease during acquisition.

Explanation: Signal drift is a manifestation of temporal instability in the MRI scanner system, often associated with gradient coil heating. It causes a global signal decrease over the course of a diffusion-weighted MRI (dMRI) acquisition [13]. This drift introduces systematic non-linearities that bias the quantification of ADC, a fundamental metric for all dMRI analysis, from tensor models to tractography [14] [15]. If uncorrected, it affects all subsequent quantitative parameters, including fractional anisotropy, mean diffusivity, mean kurtosis, and even the directional information used for tractography [13].

Detection Steps:

- Plot Signal Time Course: Extract the mean signal intensity from a consistent region of interest (ROI) in all interspersed non-diffusion-weighted (b0) volumes.

- Identify Trend: Plot these mean signals against their acquisition time point or volume index. A consistent downward (or upward) trend indicates signal drift. Studies have observed signal decreases of up to 5% over a 15-minute scan on various scanner vendors [13], and even over 10% in some phantom ROIs [14].

Solution: Apply a signal drift correction model that uses the interspersed b0 volumes to estimate and compensate for the signal change.

- Acquisition Requirement: Ensure your dMRI protocol intersperses b0 volumes throughout the acquisition, not just at the beginning and end. The frequency of b0 volumes (e.g., every 8, 16, 32, or 96 diffusion-weighted volumes) impacts the accuracy of the drift model [14] [15].

- Choose a Correction Model:

  - Temporal (T) Model: This method, proposed by Vos et al., fits a linear or quadratic curve to the mean signal of the b0 images across the entire ROI or brain [14] [13] [15].

  - Voxelwise Temporal (Tx) Model: A generalization of the T model that performs a linear or quadratic fit independently for each voxel's time course [14].

  - Temporal-Spatial (TS) Model: A more advanced model that captures interacting spatial and temporal patterns of drift, which has been shown to reduce error more effectively than temporal-only models [14].

Table 1: Comparison of Signal Drift Correction Methods

| Method | Spatial Modeling | Key Principle | Advantage | Consideration |
|---|---|---|---|---|
| Temporal (T) [13] | No | Fits a single global (linear/quadratic) trend to the mean signal of all b0s. | Simple to implement, robust for global drift. | Fails to account for spatially varying drift. |
| Voxelwise Temporal (Tx) [14] | Yes (Independent) | Fits a unique (linear/quadratic) trend to the time course of each individual voxel. | Accounts for spatial variation in drift. | May overfit noise in voxels with low SNR. |
| Temporal-Spatial (TS) [14] | Yes (Interactive) | Models drift using a low-order spatial basis set that interacts with a temporal trend. | Captures complex spatiotemporal patterns; can be more accurate and statistically robust. | More complex implementation. |
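To make the difference between the T and Tx rows concrete, the sketch below fits either one global trend or one trend per voxel and turns the fit into multiplicative correction factors. It is an illustration under stated assumptions, not the cited authors' implementation: the function name, the choice to rescale to the fitted value at volume 0, and the synthetic two-voxel data are all hypothetical. The TS model is not shown.

```python
import numpy as np

def drift_correction_factors(b0_data, b0_indices, n_volumes, order=2, voxelwise=False):
    """Return multiplicative correction factors from interspersed b0 signals.

    b0_data    : array (n_voxels, n_b0), b0 signal per voxel
    b0_indices : volume index of each b0 within the acquisition
    voxelwise  : False -> T-style fit to the voxel-averaged signal,
                          factors of shape (n_volumes,)
                 True  -> Tx-style fit per voxel,
                          factors of shape (n_voxels, n_volumes)
    """
    b0_data = np.asarray(b0_data, dtype=float)
    x = np.asarray(b0_indices, dtype=float)
    y = b0_data.T if voxelwise else b0_data.mean(axis=0)
    coeffs = np.polyfit(x, y, deg=order)        # a 2-D y fits each column separately
    n = np.arange(n_volumes, dtype=float)
    fitted = np.vander(n, order + 1) @ coeffs   # fitted drift curve at every volume
    factors = fitted[0] / fitted                # rescale to the fitted value at n = 0
    return factors.T if voxelwise else factors

# Synthetic example (assumed values): two voxels drifting by 2% and 8%.
b0_idx = np.arange(0, 97, 16)
rates = np.array([0.02, 0.08])
b0 = 1000.0 * (1.0 - rates[:, None] * b0_idx[None, :] / 96.0)

f_t = drift_correction_factors(b0, b0_idx, 97)
f_tx = drift_correction_factors(b0, b0_idx, 97, voxelwise=True)
corrected_t = b0 * f_t[b0_idx]        # global fit leaves voxel-specific residual drift
corrected_tx = b0 * f_tx[:, b0_idx]   # per-voxel fit flattens both time courses
print(np.ptp(corrected_t, axis=1), np.ptp(corrected_tx, axis=1))
```

On noiseless synthetic data the per-voxel fit always looks better; with real data the table's caveat applies, because a per-voxel polynomial can follow noise in low-SNR voxels.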

Guide 2: Unexplained Inconsistencies in Tractography Output

Problem: Tractography results show unexpected variations in streamline count or pathway reconstruction when comparing data from the same subject across different days or from different scanners.

Explanation: Signal drift can directionally bias ADC estimation [14] [15]. Since tractography algorithms are sensitive to the underlying directional diffusion profiles, a systematic bias introduced by drift can alter the estimated principal diffusion direction. This, in turn, can cause erroneous termination or deviation of tracked streamlines, leading to reduced reproducibility and accuracy of structural connectivity maps [13].

Detection Steps:

- Inspect Preprocessed Data: Before generating tractography, ensure that signal drift correction has been applied as part of your preprocessing pipeline.

- Quality Control: Use tools to visualize the coregistered and corrected diffusion data. Look for residual inconsistencies in signal intensity across the acquisition timeline.

Solution: Integrate signal drift correction into the standard dMRI preprocessing workflow.

- Pipeline Integration: Signal drift correction should be performed after initial data conversion but before other major steps like Gibbs ringing correction and eddy current correction [16].

- Software Implementation: Tools like ExploreDTI have built-in plugins for signal drift correction. The recommended approach is often a quadratic fit to the b0 signal time course [16].

- Comprehensive Correction: For best results, combine signal drift correction with other standard corrections (e.g., for susceptibility and eddy currents) and consider gradient nonlinearity correction, as their benefits are additive [17].

Diagram: Essential dMRI Preprocessing Workflow. Signal drift correction is an early, critical step [16].

Frequently Asked Questions (FAQs)

Q1: What is the fundamental cause of signal drift in dMRI? Signal drift is primarily caused by temporal instabilities in the MRI scanner hardware. A commonly cited cause is heating of the gradient coils during prolonged or demanding sequences like dMRI, leading to a phenomenon known as B0 drift. This results in a global, but often spatially varying, decrease in signal intensity as the scan progresses [14] [13].

Q2: How does signal drift quantitatively impact my dMRI metrics? Uncorrected signal drift systematically biases the estimation of the apparent diffusion coefficient (ADC). The magnitude of this effect can be significant. Studies on phantoms have shown:

- Signal Change: Drift can cause a global signal decrease of up to 5% in a 15-minute scan on various scanner vendors [13], with spatially varying effects in some ROIs exceeding 10% [14].

- Metric Accuracy: Incorporating signal drift correction in preprocessing has been shown to lead to a statistically significant decrease in error for mean diffusivity (MD) measurements [17].
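A short worked calculation shows how a signal decrease propagates into ADC. It assumes the standard two-point mono-exponential relation ADC = ln(S0/Sb)/b and a diffusion-weighted volume whose signal has dropped by a given fraction relative to its b0 reference. The true ADC of 1.0 × 10⁻³ mm²/s is an assumed illustrative value; the b-value of 2000 s/mm² and the 5% drift figure echo numbers quoted elsewhere in this guide.

```python
import math

def apparent_adc(true_adc, b_value, drift_fraction):
    """ADC estimated from one b0 and one diffusion-weighted signal when the
    diffusion-weighted signal has dropped by `drift_fraction` relative to the b0."""
    s0 = 1.0
    s_dw = s0 * math.exp(-b_value * true_adc) * (1.0 - drift_fraction)
    return math.log(s0 / s_dw) / b_value

true_adc = 1.0e-3  # mm^2/s, assumed for illustration
adc = apparent_adc(true_adc, b_value=2000.0, drift_fraction=0.05)
bias_percent = 100.0 * (adc - true_adc) / true_adc
print(f"Apparent ADC: {adc:.4e} mm^2/s, bias: {bias_percent:.2f}%")
```

The bias term is -ln(1 - drift)/b, so it does not depend on the true ADC in absolute terms; in relative terms it is larger for tissue or vials with low diffusivity and for lower b-values.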

Q3: What is the minimum number of interspersed b0 volumes needed for effective correction? While more b0 volumes allow for a more robust model (e.g., enabling a quadratic fit), effective correction can be achieved with a practical number. Experimental protocols have successfully characterized drift using a variable number of b0s interspersed every 8, 16, 32, 48, and 96 diffusion-weighted volumes [14] [15]. A general rule is that a linear model can be applied with as few as three b0s, while a quadratic fit is preferred when more b0s are available [14].

Q4: Can I perform signal drift correction if my protocol didn't include interspersed b0s? No. Reliable estimation of the signal drift time course is dependent on having non-diffusion-weighted (b0) measurements distributed throughout the acquisition. If b0s are only acquired at the beginning and end of the scan, it is impossible to model the potential non-linearity of the drift. Therefore, incorporating interspersed b0s is a mandatory part of any dMRI protocol concerned with quantitative accuracy [13] [15].

Q5: Is signal drift only a problem in research, or does it affect clinical applications too? It affects both. The quantitative accuracy of dMRI-derived metrics across sessions and scanners is critically important for broader clinical application. Signal drift compromises this reproducibility, impacting longitudinal monitoring of disease progression or treatment response. Furthermore, its effect on tractography is directly relevant to clinical tasks like neurosurgical planning for brain tumors [13] [18].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for dMRI Phantom Experiments

| Item Name | Function in Experiment | Technical Specification |
|---|---|---|
| Polyvinylpyrrolidone (PVP) Phantom [14] [15] [17] | Mimics the diffusion properties of brain tissue. Used to characterize scanner performance and validate correction methods without subject variability. | A spherical isotropic phantom with a single PVP concentration, or a multi-vial phantom (e.g., 13 vials) with varying concentrations to mimic a range of ADC values (e.g., 0.36-2.2 × 10⁻³ mm²/s). |
| Ice-Water Bath [14] [15] [17] | Stabilizes the temperature of the phantom during scanning. Temperature control is critical as diffusion is temperature-dependent. | A container to submerge the phantom in ice water, maintaining a temperature of zero degrees Celsius to ensure stable and known diffusivity values. |
| HPD Diffusion Phantom [17] | A commercially available, standardized phantom designed for quality control in diffusion imaging. | Contains multiple vials with different diffusivities, providing known reference values for validating ADC and FA measurements. |

Experimental Protocol: Characterizing Signal Drift

Objective: To characterize the spatial and temporal patterns of signal drift on a specific MRI scanner using a stable diffusion phantom.

Materials:

- Ice-water phantom with 13 vials of varying PVP concentrations (or a single-concentration PVP sphere) [14] [17].

- MRI scanner (e.g., 3T Philips, as used in the cited study).

- Ice-water bath for temperature stabilization.

Acquisition Parameters (Example):

- Sequence: Diffusion-weighted echo-planar imaging (EPI).

- B-values: Primary b-value = 2000 s/mm²; interspersed minimal b-value = 0.1 s/mm² [14] [15].

- Gradient Directions: 96 [14].

- Interspersed b0 Scheme: Acquire multiple scans, varying the number of b0 volumes interspersed throughout the 96 directions (e.g., place them every 8, 16, 32, 48, and 96 volumes) [14].

- Other Parameters: TR = 8394 ms, TE = 70 ms, slice thickness = 2.5 mm, in-plane resolution = 2.5 mm [14].
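The interspersed b0 scheme can be expressed as a simple volume ordering. The sketch below is one plausible layout (a b0 at the start, then one after every fixed number of diffusion-weighted volumes); the exact ordering used in the cited study is not specified here, so the function and its conventions are assumptions.

```python
def interspersed_schedule(n_dw, b0_every):
    """Return a list of volume labels: 'b0' or the diffusion direction index.
    One b0 opens the scan, one follows every `b0_every` diffusion-weighted
    volumes, and the scan always ends on a b0."""
    schedule = ["b0"]
    for i in range(n_dw):
        schedule.append(i)
        if (i + 1) % b0_every == 0:
            schedule.append("b0")
    if schedule[-1] != "b0":
        schedule.append("b0")
    return schedule

for spacing in (8, 16, 32, 48, 96):
    sched = interspersed_schedule(96, spacing)
    print(spacing, sched.count("b0"), len(sched))
```

With 96 directions, the sparsest spacing leaves only a b0 at each end of the scan, which is the case where curvature in the drift cannot be estimated.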

Processing and Analysis Steps:

- Preprocessing: Perform basic preprocessing including susceptibility and eddy current correction (e.g., using FSL's `topup` and `eddy`) [14].

- Signal Extraction: For each scan, manually define ROIs corresponding to each vial in the phantom. Extract the mean signal intensity from every b0 volume within each ROI.

- Model Fitting: For each ROI, fit the signal time course from the b0s using both a linear (Eq. 1) and quadratic (Eq. 2) model [14].

$$S(n) = d\,n + s_0 \qquad \text{(Eq. 1: Linear Model)}$$

$$S(n) = d_2\,n^2 + d_1\,n + s_0 \qquad \text{(Eq. 2: Quadratic Model)}$$

where $n$ is the volume index, $S(n)$ is the signal, $d$, $d_1$, and $d_2$ are drift coefficients, and $s_0$ is the signal offset.

- Validation: Apply the different correction models (Uncorrected, T, Tx, TS) and calculate the resulting ADC in each vial. Compare the consistency and accuracy of ADC metrics across the different protocols and correction methods [14].
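The model-fitting step can be sketched with ordinary least squares. The example fits both the linear and the quadratic model to one ROI's b0 time course, compares their residual sums of squares, and rescales the signal by s0/S(n). The rescaling convention, the helper names, and the synthetic drift coefficients are assumptions made for illustration.

```python
import numpy as np

def fit_drift_models(n_b0, s_b0):
    """Fit the linear and quadratic drift models to b0 signals.
    Returns (linear coeffs [d, s0], quadratic coeffs [d2, d1, s0],
    residual sum of squares for each)."""
    n_b0 = np.asarray(n_b0, dtype=float)
    s_b0 = np.asarray(s_b0, dtype=float)
    lin = np.polyfit(n_b0, s_b0, 1)
    quad = np.polyfit(n_b0, s_b0, 2)
    rss_lin = float(np.sum((np.polyval(lin, n_b0) - s_b0) ** 2))
    rss_quad = float(np.sum((np.polyval(quad, n_b0) - s_b0) ** 2))
    return lin, quad, rss_lin, rss_quad

def apply_correction(signal, n, coeffs):
    """Rescale each volume by s0 / S(n) so the fitted b0 signal becomes constant."""
    s0 = coeffs[-1]
    return np.asarray(signal, dtype=float) * s0 / np.polyval(coeffs, n)

# Synthetic ROI time course (assumed coefficients), b0 every 8 volumes.
n_b0 = np.arange(0, 97, 8)
s_b0 = 1000.0 - 0.3 * n_b0 - 0.002 * n_b0 ** 2
lin, quad, rss_lin, rss_quad = fit_drift_models(n_b0, s_b0)
corrected = apply_correction(s_b0, n_b0, quad)
print(rss_lin, rss_quad, corrected.round(3))
```

With real data the quadratic model will always have the lower residual, so the comparison is more informative when it is made on held-out b0 volumes or with a criterion that penalizes the extra parameter.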

Diagram: Signal Drift Characterization and Correction Protocol

FAQs: Understanding Multi-Physics Coupling and Signal Drift

Q1: What is signal drift in the context of continuous monitoring? Signal drift refers to the gradual deviation of an instrument's readings from the true, expected value over time. In continuous monitoring applications, this is a critical challenge as it compromises data reliability. Drift can manifest as a slow, consistent change or as erratic, unstable readings, and is often driven by environmental factors such as temperature fluctuations, carbon dioxide absorption, and changing electromagnetic conditions [19].

Q2: How does the multi-physics coupling effect cause instrument instability? Multi-physics coupling occurs when thermal, electrical, mechanical, and chemical domains interact within a system, creating a complex feedback loop that drives instability. For example, in a lab-scale combustor, the thermoacoustic feedback-loop is driven by the phase relationship between entropic and acoustic fluctuations at the injection point. Similarly, in electronic sensors, electrical losses generate heat, which elevates temperature and affects material properties, which in turn modifies electrical parameters [20] [21]. This interdependent relationship means a change in one physical domain (e.g., ambient temperature) can cause instability in another (e.g., sensor signal), leading to overall instrument drift.

Q3: What are the most common environmental factors leading to signal drift? The primary environmental factors are:

- Temperature Fluctuations: Rapid temperature changes shift hydrogen ion activity in solutions and affect electronic component performance [19].

- Carbon Dioxide (CO2) Absorption: In aqueous environments, CO2 absorption forms carbonic acid, releasing hydrogen ions and lowering pH [19].

- Electromagnetic Interference (EMI): Noise from motors, heaters, or power supplies can induce erratic signals in high-impedance sensors [22].

- Microbial Activity: Metabolic processes of microorganisms in aquatic environments release CO2, altering the chemical composition of the sample [19].

Q4: How can I diagnose if my sensor is suffering from drift versus a complete failure? A systematic diagnostic approach is recommended:

- Perform a Slope and Offset Check: For electrochemical sensors like pH probes, measure the sensor's response in standard buffer solutions. A functioning electrode typically has a slope between 92% and 102% of the ideal value and an offset within ±30 mV. Values outside these ranges indicate aging or drift [19].

- Analyze Response Time: A new sensor typically stabilizes in a buffer solution within 20-30 seconds. A response time longer than 60 seconds suggests a need for cleaning or potential replacement due to drift [19].

- Inspect for Physical Damage: Visually examine sensors for microscopic cracks, contamination, or clogged junctions that can degrade performance progressively [19].

- Conduct a Signal Drift Test: Monitor the signal magnitude of a reference standard over time. A consistent global signal decrease, as observed in diffusion MRI scanners, is a hallmark of signal drift [13].
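The slope and offset check can be computed from two buffer readings. The sketch assumes pH 7.0 and pH 4.0 buffers at 25 °C, where the ideal Nernstian response is about 59.16 mV per pH unit, and it takes the offset to be the millivolt reading in the pH 7 buffer. The function name and the example readings are hypothetical; the acceptance limits are the ones quoted above.

```python
NERNST_MV_PER_PH_25C = 59.16  # ideal slope magnitude at 25 °C

def electrode_health(mv_ph7, mv_ph4, slope_range=(92.0, 102.0), offset_limit_mv=30.0):
    """Return (slope as % of ideal, offset in mV, pass/fail flag) from
    readings in pH 7.0 and pH 4.0 buffers at 25 °C."""
    slope_mv_per_ph = (mv_ph4 - mv_ph7) / (7.0 - 4.0)
    slope_percent = 100.0 * slope_mv_per_ph / NERNST_MV_PER_PH_25C
    offset_mv = mv_ph7
    healthy = (slope_range[0] <= slope_percent <= slope_range[1]
               and abs(offset_mv) <= offset_limit_mv)
    return slope_percent, offset_mv, healthy

# Hypothetical readings: a healthy probe and an aged one.
print(electrode_health(mv_ph7=5.0, mv_ph4=175.0))
print(electrode_health(mv_ph7=40.0, mv_ph4=190.0))
```

If the buffers are not at 25 °C, the ideal slope changes with temperature and the constant above would need to be adjusted accordingly.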

Troubleshooting Guides

Guide to Resolving Signal Noise in Sensor PCBs

Signal noise, often manifested as erratic readings, is a frequent issue in sensitive monitoring equipment.

- Symptoms: Inaccurate, jumpy, or unstable sensor readings; data that appears "noisy."

- Root Causes: Electromagnetic Interference (EMI), poor PCB layout, improper grounding, or cable routing issues [22].

- Step-by-Step Solution:

- Inspect PCB Layout: Ensure analog and digital ground planes are separated. Route sensitive signal traces away from high-frequency components like switching regulators [22].

- Implement Shielding: Use a continuous ground plane beneath sensitive signal traces. Consider housing the PCB in a metal enclosure if operating in a high-EMI environment [22].

- Add Filtering: Incorporate low-pass filters on sensor output lines. A simple RC filter (e.g., 1 kΩ resistor and 0.1 μF capacitor) can effectively suppress high-frequency noise above 1.6 kHz [22].

- Check Cables: Use shielded cables for external sensor connections and ensure they are routed away from AC power lines [22].
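The 1.6 kHz figure in the filtering step follows from the first-order RC cutoff formula, f_c = 1/(2πRC). The short check below reproduces it and also evaluates the filter's attenuation at a given frequency; the function names are illustrative.

```python
import math

def rc_cutoff_hz(r_ohm, c_farad):
    """-3 dB cutoff frequency of a first-order RC low-pass filter."""
    return 1.0 / (2.0 * math.pi * r_ohm * c_farad)

def rc_attenuation_db(f_hz, r_ohm, c_farad):
    """Magnitude response of the same filter at f_hz, in dB (negative = attenuated)."""
    ratio = f_hz / rc_cutoff_hz(r_ohm, c_farad)
    return -10.0 * math.log10(1.0 + ratio ** 2)

fc = rc_cutoff_hz(1e3, 0.1e-6)  # 1 kΩ and 0.1 µF
print(f"Cutoff: {fc:.1f} Hz")
print(f"Attenuation at 16 kHz: {rc_attenuation_db(16e3, 1e3, 0.1e-6):.1f} dB")
```

A single RC stage rolls off at only about 20 dB per decade, so noise just above the cutoff is reduced rather than removed.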

Guide to Correcting Sensor Reading Drift

Sensor reading drift is a gradual deviation from accurate measurements, critical for long-term studies.

- Symptoms: Measurements gradually deviate from known references; consistent offset that increases over time.

- Root Causes: Aging sensor components, temperature changes, contamination, or clogged junctions [22] [19].

- Step-by-Step Solution:

- Verify Operating Environment: Confirm the sensor is operating within its specified temperature and humidity range. For example, many particulate matter sensors are rated for 0°C to 50°C [22].

- Clean the Sensor: Gently clean the sensor surface with compressed air or a soft brush to remove dust and debris, following the manufacturer's guidelines [22].

- Inspect and Unclog Junctions: For pH electrodes, a clogged junction is the most common cause of drift. Clean the junction according to the manufacturer's instructions to restore a stable electrical connection [19].

- Recalibrate: Recalibrate the sensor using a certified reference standard. If the sensor cannot be calibrated or its slope is outside the acceptable range, replacement may be necessary [22] [19].

Guide to Fixing Sensor Calibration Issues

Calibration issues prevent sensors from providing accurate readings even after adjustment.

- Symptoms: Inability to calibrate; readings remain inaccurate after calibration; calibration fails.

- Root Causes: Expired or contaminated buffer solutions, aged or damaged sensors, improper calibration procedure [19].

- Step-by-Step Solution:

- Use Fresh References: Always use fresh, certified reference standards or buffer solutions for calibration. Do not use expired buffers [19].

- Check Sensor Health: Before calibrating, perform a diagnostic check of the sensor's slope and offset to ensure it is capable of being calibrated [19].

- Follow Manufacturer Protocol: Adhere strictly to the manufacturer's calibration procedure, which often involves exposing the sensor to the reference condition and adjusting the output via software [22].

- Consider Replacement: If the sensor is beyond its usable life (typically 1-2 years for low-cost sensors) and cannot hold a calibration, it should be replaced [22].

Experimental Protocols for Drift Correction

Protocol: Correcting for Signal Drift in Diffusion MRI

This protocol, adapted from a methodology shown to reduce the detrimental effects of drift on diffusion MRI analysis, outlines the process for correcting signal drift in monitoring systems where a progressive signal decrease is observed [13].

Aim: To estimate and compensate for a global signal decrease over the duration of a scanning session.

Materials:

- Monitoring instrument (e.g., MRI scanner, continuous spectroscopic monitor)

- Stable reference standard or phantom

- Data analysis software (e.g., Python, MATLAB)

Workflow: The following diagram illustrates the experimental workflow for signal drift correction.

Procedure:

- Intersperse Reference Measurements: Throughout the continuous monitoring session, periodically measure a stable, non-drifting reference standard. In diffusion MRI, this involves interspersing non-diffusion-weighted images throughout the scan sequence [13].

- Monitor Signal Magnitude: Record the signal magnitude of these reference measurements over the entire time series.

- Estimate Drift Profile: Plot the reference signal against time. A global signal decrease (e.g., up to 5% over 15 minutes, as documented in MRI studies) indicates the presence and magnitude of signal drift [13].

- Apply Mathematical Compensation: Use the estimated drift profile to create a correction algorithm. Apply this algorithm to the entire dataset to compensate for the temporal signal decrease before proceeding with final analysis [13].
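For a generic monitoring instrument, the procedure above can be sketched as follows. The example assumes that drift is multiplicative, that it affects the reference and the sample equally, and that linear interpolation between reference readings is adequate; none of these assumptions comes from the cited study, and the function name and numbers are hypothetical.

```python
import numpy as np

def correct_with_reference(t, signal, t_ref, ref_signal):
    """Divide out drift estimated from interspersed reference readings.
    The drift factor at each reference time is ref / ref[0]; it is linearly
    interpolated to the sample times before the signal is rescaled."""
    ref_signal = np.asarray(ref_signal, dtype=float)
    factor_ref = ref_signal / ref_signal[0]
    factor = np.interp(t, t_ref, factor_ref)
    return np.asarray(signal, dtype=float) / factor

# Synthetic session (assumed values): 5% gain loss over 15 minutes.
t = np.linspace(0.0, 15.0, 16)              # minutes
gain = 1.0 - 0.05 * t / 15.0
signal = 200.0 * gain                       # true value is a constant 200
t_ref = np.array([0.0, 5.0, 10.0, 15.0])
ref_signal = 100.0 * (1.0 - 0.05 * t_ref / 15.0)
corrected = correct_with_reference(t, signal, t_ref, ref_signal)
print(corrected.round(3))
```

Replacing the interpolation with a fitted linear or quadratic curve gives a smoother estimate when the reference readings themselves are noisy.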

Protocol: Mitigating pH Drift in Aqueous Environments

This protocol provides a methodology to stabilize pH readings in systems vulnerable to environmental coupling, such as those affected by CO2 absorption or temperature shifts [19].

Aim: To achieve and maintain stable pH measurements in low-buffering capacity aqueous solutions.

Materials:

- Calibrated pH meter and electrode

- Appropriate pH buffer solutions (e.g., 4.0, 7.0)

- Chemical buffers or Cal/Mag supplements

- Temperature-controlled environment

Workflow: The logical relationship between causes, stabilization mechanisms, and outcomes in pH drift mitigation is shown below.

Procedure:

- Calibrate at Sample Temperature: Calibrate the pH electrode using fresh buffer solutions that are at the same temperature as the samples to be measured [19].

- Assess Buffering Capacity: For pure water or low-ionic-strength solutions, anticipate instability. Allow extra time for the reading to stabilize (at least 5 minutes at 25°C) [19].

- Apply a Stabilizing Agent: Introduce a chemical buffer with a pKa value close to your target pH. In hydroponics, for example, adding a Cal/Mag supplement increases water hardness and buffering capacity, which resists rapid pH swings [19].

- Utilize Automated Control: For continuous monitoring, employ a smart pH controller that can automatically dose small amounts of acid or base to maintain the pH within a set range, counteracting drift in real-time [19].
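The advice to pick a buffer with a pKa close to the target pH can be illustrated with the textbook buffer-capacity expression for a single monoprotic weak acid, β = ln(10) · C · Ka · [H⁺] / (Ka + [H⁺])², which ignores the contribution of water itself. The concentration and pKa below are assumed example values, not recommendations from the cited source.

```python
import math

def buffer_capacity(ph, pka, conc_molar):
    """Buffer capacity (mol per litre per pH unit) of a monoprotic weak-acid
    buffer, neglecting the water terms."""
    h = 10.0 ** (-ph)
    ka = 10.0 ** (-pka)
    return math.log(10.0) * conc_molar * ka * h / (ka + h) ** 2

pka = 6.0       # assumed buffer pKa
conc = 0.010    # assumed total buffer concentration, mol/L
at_pka = buffer_capacity(6.0, pka, conc)
one_unit_off = buffer_capacity(7.0, pka, conc)
print(f"Capacity at pH = pKa:     {at_pka:.5f}")
print(f"Capacity at pH = pKa + 1: {one_unit_off:.5f} ({100 * one_unit_off / at_pka:.0f}% of maximum)")
```

Capacity peaks when pH equals pKa and falls to roughly a third of that value one pH unit away, which is why a poorly matched buffer offers much less resistance to drift.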

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and reagents essential for experiments focused on diagnosing and correcting signal drift.

Table 1: Essential Research Reagents and Materials for Signal Drift Studies

| Item Name | Function/Brief Explanation | Example Application |
|---|---|---|
| Certified Buffer Solutions | Provides a known, stable reference point for calibrating sensors and verifying measurement accuracy. | Calibrating pH electrodes; verifying the slope and offset of electrochemical sensors [19]. |
| Reference Standard/Phantom | A stable material with known properties used to quantify instrument drift over time. | Measuring signal drift in MRI scanners [13] or validating the stability of other analytical instruments. |
| Low-Pass Filter Components | Electronic components (resistors, capacitors) used to build filters that suppress high-frequency electrical noise. | Creating RC filters on PCB signal lines to reduce EMI-induced noise in sensor data [22]. |
| Chemical Buffers (pKa ~ Target pH) | Substances that resist changes in pH when small amounts of acid or base are added, increasing solution stability. | Stabilizing the pH of low-ionic-strength solutions against drift caused by CO2 absorption [19]. |
| Cal/Mag Supplement | A solution of calcium and magnesium salts that increases water hardness (buffering capacity). | Reducing rapid pH swings in hydroponic growth systems by enhancing the solution's chemical stability [19]. |
| Sensor Storage Solution | A liquid formulation that keeps the sensing membrane (e.g., of a pH electrode) hydrated and prevents dehydration. | Properly storing electrochemical sensors to extend their lifespan and maintain calibration stability [19]. |
| Shielded Cables | Cables with a conductive layer that protects the internal signal wire from external electromagnetic interference. | Connecting external sensors to a data acquisition unit in electrically noisy environments [22]. |
| Decoupling Capacitors | Passive electronic components that filter out high-frequency noise from power supply lines on PCBs. | Stabilizing the voltage supply to sensitive microcontrollers and sensors, preventing power-related drift [22]. |

Data Presentation: Sensor Performance and Market Metrics

Table 2: Quantitative Data on Sensor Drift and Market Context

| Parameter | Reported Value / Specification | Context / Source |
|---|---|---|
| Typical pH Electrode Lifespan | 3 years (with proper maintenance) | General operational expectancy before aging causes significant drift [19]. |
| Acceptable pH Slope Range | 92% - 102% | Indicator of a properly functioning electrode; values outside this range suggest aging/decay [19]. |
| Acceptable pH Offset Range | Within ±30 mV | Indicator of a properly functioning electrode [19]. |
| MRI Signal Drift Magnitude | Up to 5% global signal decrease in a 15-min scan | Observed in phantom data across multiple scanners, affecting quantitative diffusion parameters [13]. |
| Sensor Current Draw | 50 - 100 mA (typical air quality sensor) | Important for calculating power supply requirements to prevent voltage-related instability [22]. |
| I2C Pull-up Resistor Values | 2.2 kΩ to 10 kΩ | Typical values required for stable I2C communication in sensor networks, dependent on bus speed and capacitance [22]. |
| Water Conductivity Threshold | Below 100 µS/cm | Low-conductivity samples like RO water are highly susceptible to pH drift from CO2 absorption [19]. |

Advanced Correction Methodologies: From Sensor Design to Computational Frameworks

SENSBIT Performance Specifications

The following table summarizes the key performance characteristics of the SENSBIT biosensor as reported in recent studies.

| Performance Parameter | Reported Result | Testing Condition |
|---|---|---|
| Functional Longevity (in vivo) | Up to 7 days [23] [24] [25] | Implanted in blood vessels of live rats [23] [24] |
| Signal Retention (in vivo) | >60% after 7 days [23] [25] | Implanted in blood vessels of live rats [23] |
| Signal Retention (in serum) | >70% after 30 days [23] [25] | Undiluted human serum [23] |
| Previous State-of-the-Art | ~11 hours in blood [23] [24] | Intravenous exposure for similar devices [24] |
| Key Demonstrated Capability | Real-time tracking of drug concentration profiles [24] | Monitoring of kanamycin antibiotic in live rats [23] |

Troubleshooting Guide: Addressing Common SENSBIT Experimental Challenges

This section addresses specific issues researchers might encounter when working with SENSBIT-type biosensors.

Q1: My biosensor signal is decreasing exponentially over the first few hours in whole blood. What is the primary cause and how can I address it?

A: An exponential signal decrease over the first 1-2 hours is typically caused by biofouling, where blood components like cells and proteins adsorb to the sensor surface, physically blocking electron transfer and reducing the signal [2]. This has been identified as a primary mechanism for the initial "biology-driven" drift phase.

- Solution: Implement a protective, fouling-resistant coating. The SENSBIT design addresses this by mimicking the gut's mucosal layer, using a hyperbranched polymer coating on a nanoporous gold structure to shield the sensing elements from immune attacks and protein buildup [23] [24] [25]. If signal drop occurs, one study showed that washing the sensor with a solubilizing agent like concentrated urea can recover up to 80% of the initial signal, confirming fouling as the culprit [2].

Q2: I am observing a slow, linear signal drift over time, even in controlled buffer solutions. What mechanism is responsible?

A: A slow, linear signal loss under constant electrochemical interrogation is primarily due to an electrochemical mechanism: the desorption of the alkane-thiolate self-assembled monolayer (SAM) from the gold electrode surface [2]. This is the main contributor to the "linear drift phase."

- Solution: Optimize your electrochemical interrogation parameters. Research has shown that this drift is strongly dependent on the applied potential window. By limiting the square-wave voltammetry scan to a narrow window (e.g., -0.4 V to -0.2 V), you can minimize redox-driven breakage of the gold-thiol bond and drastically improve stability, with one study showing only 5% signal loss after 1500 scans under such conditions [2].

Q3: How can I improve the stability of the molecular recognition element against enzymatic degradation?

A: While fouling is a major issue, enzymatic degradation of DNA-based aptamers can also contribute to signal loss.

- Solution: Utilize enzyme-resistant oligonucleotide backbones. Studies have shown that constructing the sensing element from non-natural analogs, such as 2'-O-methyl RNA, can provide resistance to nucleases like DNase I. Recent work with other enzyme-resistant constructs like spiegelmers supports this approach to enhancing longevity in biological fluids [2].

Q4: What are the best practices for data acquisition and processing to correct for residual signal drift?

A: Even with hardware improvements, software-based drift correction is often necessary for high-precision measurements.

- Solution: Employ empirical drift correction methods. A common and effective technique is signal normalization, where the changing electrochemical signal of the aptamer is normalized to a standardizing signal generated at a second, stable square-wave frequency [2]. This approach has been used to achieve good measurement precision over multi-hour in vivo deployments [2]. For general sensor drift, other software techniques include polynomial fitting to model non-linear drift or using look-up tables with pre-calibrated data for real-time interpolation [26].
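The normalization idea can be sketched as a simple ratio. The example assumes that both square-wave frequencies lose signal by the same multiplicative factor and that the second frequency does not respond to the target; these are simplifying assumptions for illustration, and the published normalization schemes may differ in detail. All names and numbers are hypothetical.

```python
import numpy as np

def normalize_to_standard(signal_responsive, signal_standard):
    """Divide the target-responsive signal by the standardizing signal so that
    drift common to both cancels."""
    return (np.asarray(signal_responsive, dtype=float)
            / np.asarray(signal_standard, dtype=float))

# Synthetic deployment (assumed values): shared biphasic drift plus a step
# increase in target-induced gain halfway through.
t = np.linspace(0.0, 10.0, 50)                        # hours
drift = 0.7 + 0.3 * np.exp(-t / 2.0) - 0.01 * t       # common signal loss
target_gain = 1.0 + 0.5 * (t > 5.0)                   # response to the target
responsive = 2.0 * target_gain * drift
standard = 1.0 * drift
normalized = normalize_to_standard(responsive, standard)
print(normalized[[0, 24, 25, 49]])
```

If the two frequencies drift at different rates, the ratio removes only the shared component and a residual trend remains to be modeled separately.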

Experimental Protocols for Key SENSBIT Evaluations

Protocol 1: In Vitro Stability Assessment in Human Serum

This protocol is used to determine the baseline stability and longevity of the biosensor in a complex biological fluid without live cells.

- Sensor Preparation: Fabricate the SENSBIT sensor with its nanoporous gold electrode and protective polymer coating [23] [24].

- Setup: Immerse the functionalized sensor in undiluted, cell-free human serum maintained at 37°C to simulate physiological temperature.

- Data Collection: Continuously interrogate the sensor using square-wave voltammetry (SWV) or another suitable electrochemical technique. Use a narrow potential window (e.g., -0.4 V to -0.2 V) to minimize monolayer desorption [2].

- Duration & Analysis: Run the experiment for an extended period (e.g., several weeks). Measure the signal amplitude at regular intervals and calculate the percentage of signal retention over time, with >70% retention after one month being a benchmark [23] [25].

Protocol 2: In Vivo Longevity and Drift Characterization

This protocol assesses sensor performance and drift correction in a live animal model, which is the most rigorous test.

- Animal Model: Select an appropriate model, such as a live rat.

- Sensor Implantation: Surgically implant the SENSBIT sensor directly into a major blood vessel (e.g., jugular vein) [24].

- Real-time Monitoring: Connect the sensor to a potentiostat for continuous electrochemical interrogation. Monitor the signal of a target molecule (e.g., the antibiotic kanamycin) in real-time as it is administered to the animal [23].

- Drift Analysis: Record the sensor signal over the duration of the implantation (e.g., 7 days). The data will typically show an initial exponential decay phase (driven by fouling) followed by a slower linear phase (driven by SAM desorption) [2]. Apply software drift correction algorithms (e.g., signal normalization) to the raw data.

- Endpoint Validation: After explantation, confirm the sensor's physical condition and remaining signal capability. Successful performance is indicated by >60% signal retention after one week in vivo [23].
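The two-phase drift described in the drift analysis step can be separated with a simple two-stage fit: a straight line on the late data, then a log-linear fit of what remains early on. This is a rough sketch, assuming the model S(t) = A·exp(−t/τ) + B − m·t, a hand-picked split time after which the exponential term is negligible, and noise-free synthetic data. The parameter values are invented for illustration.

```python
import numpy as np

def fit_biphasic(t, s, t_split):
    """Estimate A, tau, B, m in S(t) = A*exp(-t/tau) + B - m*t.
    Stage 1: linear fit on t >= t_split (linear phase).
    Stage 2: log-linear fit of the early residual (exponential phase)."""
    t = np.asarray(t, dtype=float)
    s = np.asarray(s, dtype=float)
    late = t >= t_split
    slope, intercept = np.polyfit(t[late], s[late], 1)
    resid = s - (intercept + slope * t)
    # Keep only early points where the residual is clearly above zero.
    early = (t < t_split) & (resid > 0.01 * resid[0])
    k, log_a = np.polyfit(t[early], np.log(resid[early]), 1)
    return {"A": float(np.exp(log_a)), "tau": float(-1.0 / k),
            "B": float(intercept), "m": float(-slope)}

# Synthetic 7-day record (assumed values), sampled every 15 minutes.
t = np.linspace(0.0, 168.0, 673)                        # hours
s = 0.3 * np.exp(-t / 1.5) + 0.7 - 0.0005 * t           # normalized signal
params = fit_biphasic(t, s, t_split=24.0)
print(params)
```

With noisy in vivo data a joint nonlinear least-squares fit would usually be more robust than this two-stage shortcut, which is sensitive to the choice of split time.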

The Scientist's Toolkit: Research Reagent Solutions

The table below details key materials and components essential for constructing and operating SENSBIT-like biosensors.

| Item Name | Function / Explanation |
|---|---|
| Nanoporous Gold Electrode | Creates a high-surface-area, 3D scaffold that mimics gut microvilli. It shields the molecular switches and provides the conductive substrate for electron transfer [23] [24] [25]. |
| Protective Hyperbranched Polymer Coating | Acts as an artificial mucosal layer. This coating protects the sensing elements from biofouling and immune system attacks, dramatically improving stability in whole blood [23] [24]. |
| DNA or RNA Aptamer | Serves as the molecular recognition element or "switch." It is a short sequence that folds into a specific shape to bind a target molecule (e.g., a drug), causing a conformational change that generates an electrical signal [27] [23]. |
| Methylene Blue Redox Reporter | A redox molecule attached to the aptamer. Its electron transfer rate to the electrode changes upon aptamer folding/unfolding, producing the measurable electrochemical signal. It is preferred for its stability within the safe potential window for thiol-on-gold monolayers [2]. |
| Alkane-thiolate Self-Assembled Monolayer (SAM) | Forms a dense, ordered layer on the gold electrode, providing a stable foundation for attaching the thiol-modified aptamers and helping to resist non-specific adsorption [2]. |

SENSBIT Workflow and Drift Mechanisms

The following diagrams illustrate the experimental workflow for SENSBIT deployment and the mechanisms behind signal drift.

SENSBIT In Vivo Deployment Workflow

Electrochemical Sensor Drift Mechanisms

FAQs on Hybrid Correction Frameworks

Q1: What is a hybrid correction framework, and why is it needed for continuous monitoring? A hybrid correction framework combines multiple computational techniques—often integrating local preprocessing steps with global adjustment strategies—to address complex artifacts in continuous data streams. These frameworks are essential because single-method approaches often excel in correcting only specific types of artifacts. For instance, in functional near-infrared spectroscopy (fNIRS), wavelet-based methods effectively handle high-frequency oscillations but perform poorly on baseline shifts, whereas spline interpolation correctly models baseline shifts but cannot deal with high-frequency spikes [28]. By hybridizing methods, researchers can achieve more comprehensive artifact correction, improving signal quality and reliability for long-term monitoring applications [28].

Q2: What are common data issues that hybrid frameworks address in sensor data? The primary issues include:

- Motion Artifacts: Caused by subject movement, resulting in signal oscillations (both slight and severe) and baseline shifts [28].

- Concept Drift: A machine learning phenomenon where the statistical properties of the target data stream change over time, leading to model performance degradation [29].

- Signal Decomposition Challenges: Difficulty in attributing variations in a composite signal, like Terrestrial Water Storage (TWS), to its individual components (e.g., groundwater, soil moisture) due to model and data uncertainties [30].

Q3: How do I choose between a model-based and a data-driven correction method? The choice depends on your data characteristics and the availability of mechanistic knowledge.

- Model-based methods (e.g., Wiener process degradation models) are suitable when the underlying physical or physiological degradation process is somewhat understood and can be described mathematically [31].

- Data-driven methods (e.g., neural networks) are powerful for capturing complex, non-linear patterns from large historical datasets without requiring explicit physical models [31].

- Hybrid approaches leverage the strengths of both. They use a physical model as a base (ensuring consistency) and employ data-driven techniques to model the residual errors or uncertain processes, thereby capturing both global degradation trends and local fluctuations [30] [31].
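The hybrid idea can be illustrated with a minimal Python sketch, assuming NumPy. Here a linear trend stands in for the physical model and a first-order autoregressive (AR(1)) fit on the residuals stands in for the data-driven component; both choices, the function names `fit_hybrid` and `predict_next`, and the synthetic data are illustrative assumptions, not the methods used in [30] or [31].

```python
import numpy as np

def fit_hybrid(t, y):
    """Fit a linear trend (model-based part), then an AR(1) model on its residuals."""
    # Model-based part: y ~ a + b*t, an assumed linear degradation trend.
    A = np.column_stack([np.ones_like(t), t])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    # Data-driven part: resid[k] ~ phi * resid[k-1], least-squares estimate of phi.
    phi = float(resid[:-1] @ resid[1:] / (resid[:-1] @ resid[:-1]))
    return coef, phi, resid

def predict_next(coef, phi, t_next, last_resid):
    """One-step forecast: global trend plus the predicted local fluctuation."""
    return coef[0] + coef[1] * t_next + phi * last_resid

# Synthetic example (hypothetical values): slow drift plus autocorrelated noise.
rng = np.random.default_rng(0)
t = np.arange(200, dtype=float)
noise = np.zeros(200)
for k in range(1, 200):
    noise[k] = 0.8 * noise[k - 1] + 0.1 * rng.standard_normal()
y = 1.0 + 0.05 * t + noise

coef, phi, resid = fit_hybrid(t, y)
forecast = predict_next(coef, phi, t[-1] + 1, resid[-1])
print(f"trend slope={coef[1]:.4f}, residual AR coefficient={phi:.2f}")
```

In practice the residual model would be replaced by whatever learner suits the data (for example a neural network); the point of the sketch is only the division of labor between a global trend and a residual model.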

Q4: How can hybrid frameworks improve uncertainty quantification in predictions? Many single-method approaches provide only point predictions for metrics like Remaining Useful Life (RUL). Hybrid frameworks can integrate probabilistic methods to offer both point and probability distribution predictions. For example, a hybrid method combining an Auxiliary Particle Filter (APF) with Conditional Kernel Density Estimation (CKDE) can estimate the degradation state and then provide a complete probability distribution for the RUL, effectively quantifying prediction uncertainty [31]. Techniques like measuring prediction uncertainty via softmax margins in classifiers can also serve as early warnings for model degradation due to concept drift [29].
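To make the difference between a point prediction and a distribution concrete, the sketch below simulates first-passage times of a Wiener degradation process by plain Monte Carlo. This is a deliberately simpler stand-in, not the APF and CKDE method of [31]; the function name `simulate_rul` and all parameter values (drift, diffusion, failure threshold) are hypothetical.

```python
import numpy as np

def simulate_rul(x0, drift, sigma, threshold, dt=0.01,
                 n_paths=2000, max_steps=5000, seed=0):
    """Sample the time at which x(t) = x0 + drift*t + sigma*B(t) first reaches threshold."""
    rng = np.random.default_rng(seed)
    x = np.full(n_paths, float(x0))
    rul = np.full(n_paths, np.nan)
    alive = np.ones(n_paths, dtype=bool)
    for step in range(1, max_steps + 1):
        n_alive = int(alive.sum())
        if n_alive == 0:
            break
        # Euler step of the Wiener process for paths that have not failed yet.
        x[alive] += drift * dt + sigma * np.sqrt(dt) * rng.standard_normal(n_alive)
        crossed = alive & (x >= threshold)
        rul[crossed] = step * dt
        alive &= ~crossed
    # Paths that never crossed within max_steps are dropped.
    return rul[~np.isnan(rul)]

samples = simulate_rul(x0=0.0, drift=1.0, sigma=0.5, threshold=10.0)
point_estimate = samples.mean()
p05, p50, p95 = np.percentile(samples, [5, 50, 95])
print(f"mean RUL={point_estimate:.2f}, 90% interval=({p05:.2f}, {p95:.2f})")
```

The mean alone corresponds to a point prediction; the percentiles (or a histogram of `samples`) express how uncertain that prediction is.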

Troubleshooting Guides

Problem: Baseline Drift and Oscillations in Physiological Signals

Application Context: Correcting motion artifacts in continuous fNIRS monitoring during long-term experiments like sleep studies [28].

Solution: A hybrid detection and correction pipeline.

Step 1: Artifact Detection Use an fNIRS-based detection strategy. Calculate the two-sided moving standard deviation $t(n)$ of the measured signal $x(n)$ over a window of width $W = 2k + 1$ centered on sample $n$ (so $n$ starts at $k + 1$) to identify segments containing oscillations and baseline shifts [28].

- Formula: $t(n) = \left[ \frac{1}{W} \left( \sum_{j=-k}^{k} x(n+j)^2 - \frac{1}{W} \left( \sum_{j=-k}^{k} x(n+j) \right)^2 \right) \right]^{1/2}$ [28].
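The detection step can be sketched in Python as follows. This is a minimal sketch, assuming NumPy: `moving_std` computes the windowed (population) standard deviation over W = 2k + 1 samples, while the thresholding rule in `flag_artifacts` (median plus a multiple of the scaled median absolute deviation) and the synthetic test signal are illustrative assumptions, not the criterion or data from [28].

```python
import numpy as np

def moving_std(x, k):
    """Two-sided moving standard deviation over a window of W = 2k + 1 samples."""
    x = np.asarray(x, dtype=float)
    W = 2 * k + 1
    kernel = np.ones(W)
    s1 = np.convolve(x, kernel, mode="valid")      # sum of x(n+j) over the window
    s2 = np.convolve(x * x, kernel, mode="valid")  # sum of x(n+j)^2 over the window
    var = (s2 - s1 * s1 / W) / W
    return np.sqrt(np.clip(var, 0.0, None))        # clip guards against tiny negatives

def flag_artifacts(x, k, n_sigma=3.0):
    """Mark samples whose local standard deviation is unusually high (assumed rule)."""
    t = moving_std(x, k)
    med = np.median(t)
    mad = np.median(np.abs(t - med))
    thresh = med + n_sigma * 1.4826 * mad
    mask = np.zeros(len(x), dtype=bool)
    mask[k:len(x) - k] = t > thresh                # edges without a full window stay False
    return mask

# Synthetic signal (hypothetical): slow oscillation, small noise, one spike at n = 500.
rng = np.random.default_rng(0)
n = np.arange(1000)
x = 0.1 * np.sin(2 * np.pi * n / 200) + 0.02 * rng.standard_normal(1000)
x[500] += 5.0
mask = flag_artifacts(x, k=10)
print("flagged samples:", int(mask.sum()))
```

Every window that contains the spike is flagged, so the marked segment is roughly W samples wide; the flagged segments are what Step 2 would then categorize.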

Step 2: Artifact Categorization Classify detected artifacts into three types for targeted correction:

- Severe Oscillation: High-frequency, large-amplitude fluctuations.

- Baseline Shift (BS): Slow, sustained deviation from the baseline.

- Slight Oscillation: Low-frequency, small-amplitude noise [28].

Step 3: Hybrid Correction Protocol Apply a sequential, multi-step correction tailored to the artifact category.

- Correct Severe Oscillations using cubic spline interpolation.

- Remove Baseline Shifts using spline interpolation.

- Reduce Slight Oscillations using a dual-threshold wavelet-based method [28].

Verification: Compare the processed signal to the original using Signal-to-Noise Ratio (SNR) and Pearson’s Correlation Coefficient (R). A successful correction will show significant improvement in both metrics [28].
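A minimal sketch of the two verification metrics, assuming NumPy. The SNR definition used here (reference power over residual power, in dB) requires a known reference signal, such as a simulated ground truth, and is an assumption; [28] may define SNR differently. The function names and test signals are hypothetical.

```python
import numpy as np

def snr_db(reference, signal):
    """SNR in dB, treating (signal - reference) as noise. Assumed definition."""
    reference = np.asarray(reference, dtype=float)
    noise = np.asarray(signal, dtype=float) - reference
    return 10.0 * np.log10(np.sum(reference ** 2) / np.sum(noise ** 2))

def pearson_r(a, b):
    """Pearson's correlation coefficient between two equal-length signals."""
    return float(np.corrcoef(a, b)[0, 1])

# Synthetic check (hypothetical data): a correction that leaves less residual noise.
rng = np.random.default_rng(0)
t = np.linspace(0, 10, 1000)
clean = np.sin(t)
raw = clean + 0.5 * rng.standard_normal(1000)
corrected = clean + 0.1 * rng.standard_normal(1000)

print(f"SNR raw={snr_db(clean, raw):.1f} dB, corrected={snr_db(clean, corrected):.1f} dB")
print(f"R raw={pearson_r(clean, raw):.3f}, corrected={pearson_r(clean, corrected):.3f}")
```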

Problem: Concept Drift in Real-Time Machine Learning Models

Application Context: Maintaining the performance of a machine learning model used for classifying data from a continuous stream, such as airline passenger information [29].

Solution: A hybrid Transformer-Autoencoder drift detection framework.

Step 1: Model Setup Train a baseline classifier (e.g., CatBoost) on initial data batches. In parallel, train a Hybrid Transformer-Autoencoder model to learn the underlying structure and contextual dependencies of the input feature space [29].

Step 2: Monitoring & Metric Calculation For each new batch of incoming data:

- Compute Statistical Drift Metrics:
  - Population Stability Index (PSI): $\mathrm{PSI} = \sum_i (A_i - E_i) \ln(A_i / E_i)$. A PSI > 0.2 indicates significant drift.
  - Jensen-Shannon Divergence (JSD): Measures the similarity between two probability distributions (e.g., training vs. current batch).

- Compute Reconstruction-based Drift Metrics:
  - Reconstruction Loss: $L_{AE} = \lVert x - \hat{x} \rVert_2^2$. A significant increase in the mean reconstruction error from the Transformer-Autoencoder indicates drift.

- Measure Prediction Uncertainty:
  - Calculate the softmax margin (difference between the top two predicted class probabilities) from the baseline classifier. Smaller margins indicate higher uncertainty [29].
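The per-batch metrics above can be sketched in Python as follows, assuming NumPy. In the PSI, A_i and E_i are taken as the actual (current batch) and expected (reference) proportions in bin i. The quantile binning, the `eps` floor for empty bins, the base-2 logarithm for JSD, and all function names are implementation assumptions rather than details given in [29].

```python
import numpy as np

def psi(expected, actual, n_bins=10, eps=1e-6):
    """Population Stability Index for one feature, with bins from reference quantiles."""
    expected = np.asarray(expected, dtype=float)
    actual = np.asarray(actual, dtype=float)
    cuts = np.quantile(expected, np.linspace(0, 1, n_bins + 1)[1:-1])  # interior edges
    e = np.bincount(np.searchsorted(cuts, expected, side="right"), minlength=n_bins) / len(expected)
    a = np.bincount(np.searchsorted(cuts, actual, side="right"), minlength=n_bins) / len(actual)
    e, a = np.clip(e, eps, None), np.clip(a, eps, None)  # avoid log(0) for empty bins
    return float(np.sum((a - e) * np.log(a / e)))

def jsd(p, q):
    """Jensen-Shannon divergence (base 2, range 0 to 1) between two histograms."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    p, q = p / p.sum(), q / q.sum()
    m = 0.5 * (p + q)

    def kl(a, b):
        nz = a > 0  # terms with a = 0 contribute nothing
        return np.sum(a[nz] * np.log2(a[nz] / b[nz]))

    return float(0.5 * kl(p, m) + 0.5 * kl(q, m))

def reconstruction_loss(x, x_hat):
    """Mean squared L2 reconstruction error over a batch of shape (n_samples, n_features)."""
    diff = np.asarray(x, dtype=float) - np.asarray(x_hat, dtype=float)
    return float(np.mean(np.sum(diff ** 2, axis=1)))

def softmax_margin(probs):
    """Difference between the two largest class probabilities, per sample."""
    top2 = np.sort(np.asarray(probs, dtype=float), axis=1)[:, -2:]
    return top2[:, 1] - top2[:, 0]

# Synthetic check (hypothetical data): same distribution vs. a shifted batch.
rng = np.random.default_rng(0)
ref = rng.normal(size=5000)
same = rng.normal(size=5000)
shifted = rng.normal(loc=1.0, size=5000)
print(f"PSI same={psi(ref, same):.4f}, shifted={psi(ref, shifted):.4f}")
```

An autoencoder is not included here; `reconstruction_loss` simply expects the batch and its reconstruction from whatever model is in use.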

Step 3: Drift Alerting A composite Trust Score that incorporates the above metrics, along with trends in classifier error and domain rule violations, is used to trigger a drift alert [29].

Problem: Decomposing Composite Signals for Attribution

Application Context: Attributing changes in total terrestrial water storage (TWS) to its component sources (groundwater, soil moisture, snowpack) using a hybrid model [30].

Solution: The Hybrid Hydrological Model (H2M).

Step 1: Model Architecture Develop a model that uses a physically based structure to ensure mass conservation and other physical laws. Within this structure, replace highly uncertain process representations with a trained recurrent neural network (RNN) that learns the water fluxes from data [30].
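The architectural idea, a mass-conserving structure with a learned component supplying the uncertain fluxes, can be illustrated with a toy single-bucket model. This is a minimal sketch, assuming NumPy; it is not the H2M model from [30]. The function `toy_flux` with fixed weights is a hypothetical placeholder for the trained RNN, and all names and forcing data are invented for illustration.

```python
import numpy as np

def toy_flux(storage, precip, pet, w=(0.02, -1.0)):
    """Placeholder for the learned component: returns (ET fraction, runoff fraction) in [0, 1]."""
    wetness = 1.0 / (1.0 + np.exp(-(w[0] * storage + w[1])))
    return wetness, 0.1 * wetness

def run_bucket(precip, pet, flux_fn, s0=100.0):
    """Single-bucket water balance: S[t+1] = S[t] + P[t] - ET[t] - Q[t]."""
    n = len(precip)
    storage = np.empty(n + 1)
    storage[0] = s0
    et = np.empty(n)
    q = np.empty(n)
    for t in range(n):
        avail = storage[t] + precip[t]
        f_et, f_q = flux_fn(storage[t], precip[t], pet[t])
        et[t] = min(f_et * pet[t], avail)     # cannot evaporate more water than exists
        q[t] = f_q * (avail - et[t])          # runoff is a fraction of what remains
        storage[t + 1] = avail - et[t] - q[t] # physical constraint: mass balance
    return storage, et, q

# Synthetic forcing (hypothetical): one year of daily precipitation and potential ET.
rng = np.random.default_rng(0)
precip = rng.gamma(shape=0.5, scale=4.0, size=365)
pet = 2.0 + np.sin(2 * np.pi * np.arange(365) / 365)
storage, et, q = run_bucket(precip, pet, toy_flux)
print(f"storage change={storage[-1] - storage[0]:.2f}")
```

Because the learned part only outputs fractions that are applied inside the balance equation, mass conservation holds regardless of how well that part is trained.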

Step 2: Multi-Task Training Train the H2M model simultaneously against multiple observational data streams to ensure a balanced and realistic simulation. The training constraints should include:

- Terrestrial Water Storage (TWS) variations

- Grid cell runoff (Q)

- Evapotranspiration (ET)

- Snow Water Equivalent (SWE) [30]

Step 3: Analysis and Validation Analyze the model outputs to attribute TWS variations to different components. Validate the plausibility of the simulated contributions by comparing them to the ranges and patterns reported by state-of-the-art global hydrological models [30].

Experimental Protocols & Data

Protocol 1: fNIRS Motion Artifact Correction

Objective: Validate a hybrid motion artifact correction approach against established methods [28].

Materials:

- fNIRS data acquired during whole-night sleep monitoring.

- Processing environment (e.g., MATLAB, Python) with capability for spline interpolation and wavelet analysis.

Methodology:

- Extract hemodynamic signals (Δ[HbO₂] and Δ[Hb]) from raw optical density data using the Modified Lambert-Beer Law [28].

- Apply the proposed hybrid correction framework (Detection → Categorization → Severe Correction → BS Removal → Slight Correction).

- Compare performance against standalone methods (e.g., spline interpolation only, wavelet filtering only) using quantitative metrics.

Quantitative Results from fNIRS Hybrid Correction Experiment

| Performance Metric | Proposed Hybrid Method | Spline Interpolation Only | Wavelet Filtering Only |
|---|---|---|---|
| Signal-to-Noise Ratio (SNR) | Significant improvement reported [28] | Not specified | Exacerbates BS artifacts [28] |
| Pearson's Correlation (R) | Significant improvement reported [28] | Not specified | Not specified |
| Key Strength | Strong stability & handles multiple artifact types [28] | Effective for Baseline Shifts [28] | Effective for motion spikes [28] |

Protocol 2: Concept Drift Detection

Objective: Evaluate the sensitivity of a Hybrid Transformer-Autoencoder in detecting synthetic drift in a time-sequenced airline passenger dataset [29].

Materials:

- Airline passenger dataset with synthetic drift (e.g., permuted ticket prices) injected from batch 5 onwards.

- A baseline classifier (CatBoost).

- A configured Transformer-Autoencoder model.

Methodology:

- Preprocess data: clean, standardize, and order by a synthetic timestamp.

- Train the CatBoost classifier and Transformer-Autoencoder on initial, clean batches.

- Stream data through the system in batches, calculating the Trust Score (PSI, JSD, reconstruction error, prediction uncertainty) for each batch.

- Record the batch number at which each method (TAE, standard AE, statistical tests) first triggers a drift alert.

Performance Comparison of Drift Detection Methods

| Detection Method | Early Detection Capability | Sensitivity to Subtle Drift | Interpretability |
|---|---|---|---|
| Hybrid Transformer-AE | Superior; detected drift earlier [29] | High; captures complex temporal dynamics [29] | Enhanced via SHAP analysis [29] |
| Standard Autoencoder (AE) | Lower than Transformer-AE [29] | Limited to reconstruction error [29] | Limited |
| Statistical Tests (e.g., PSI) | Reactive; generally slower [29] | Low; may miss complex changes [29] | Moderate |

The Scientist's Toolkit

Key Research Reagent Solutions

| Item / Technique | Function in Hybrid Correction |
|---|---|
| Spline Interpolation | Models and subtracts slow, sustained baseline shifts (BS) from signals [28]. |
| Wavelet-Based Methods | Effectively isolates and removes high-frequency spikes and slight oscillations [28]. |
| Recurrent Neural Network (RNN) | Used within physical models to learn complex, uncertain processes (e.g., water fluxes) from data [30]. |
| Transformer-Autoencoder | Models complex temporal dependencies and provides a sensitive reconstruction-based metric for detecting data distribution drift [29]. |
| Auxiliary Particle Filter (APF) | Estimates the state of equipment degradation within a Bayesian framework, helping to forecast Remaining Useful Life (RUL) [31]. |
| Conditional Kernel Density Estimation (CKDE) | A data-driven method used for probabilistic prediction of residuals or RUL, without assuming a specific data distribution [31]. |

Workflow and Signaling Diagrams

Diagrams: Hybrid fNIRS Artifact Correction Workflow; Concept Drift Detection Framework; Hybrid Model-Data Integration for Prognostics.

Welcome to the Technical Support Center

This resource is designed to assist researchers in implementing path-optimized scanning techniques to suppress low-frequency instrumentation drift in continuous monitoring applications. The following guides and FAQs address common experimental challenges, provide validated protocols, and present solutions based on recent research.

Frequently Asked Questions (FAQs)

Q1: What is the core principle behind path-optimized scanning for drift suppression?

The fundamental principle is shifting the strategy from simple temporal averaging to altering the frequency-domain characteristics of the drift itself. Instead of trying to average out drift effects, path-optimized scanning deliberately reorganizes the temporal sequence of spatial measurement points. This disrupts the spatiotemporal correspondence between the true surface profile signal and the time-dependent drift error, converting what is a low-frequency disturbance in the time domain into a high-frequency artifact in the spatial domain. Once transformed, these high-frequency components can be effectively separated from the true signal using low-pass filtering [32].
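The principle can be demonstrated with a small simulation, assuming NumPy. The path used here (even-indexed points scanned forward, then odd-indexed points scanned backward) is an assumed illustration of a forward-backward interleaved ordering, not necessarily the exact path defined in [32]; the linear drift rate, the sinusoidal profile, and the two-point moving-average filter are likewise hypothetical choices.

```python
import numpy as np

def interleaved_path(n):
    """Visit even indices in ascending order, then odd indices in descending order."""
    even = np.arange(0, n, 2)
    odd = np.arange(1, n, 2)[::-1]
    return np.concatenate([even, odd])

def measure(profile, path, drift_per_step):
    """Sample the profile in path order while a linear drift accumulates over time."""
    measured = np.empty_like(profile)
    times = np.arange(len(path))
    measured[path] = profile[path] + drift_per_step * times
    return measured

def low_pass(y, width=2):
    """Moving-average spatial low-pass filter."""
    return np.convolve(y, np.ones(width) / width, mode="valid")

def residual_rms(profile, measured):
    """RMS of the filtered error after removing its mean (a constant offset)."""
    err = low_pass(measured) - low_pass(profile)
    err = err - err.mean()
    return float(np.sqrt(np.mean(err ** 2)))

n = 200
x = np.linspace(0.0, 1.0, n)
profile = np.sin(2 * np.pi * x)   # smooth "true" surface profile
drift = 0.01                      # drift per measurement step

rms_seq = residual_rms(profile, measure(profile, np.arange(n), drift))
rms_opt = residual_rms(profile, measure(profile, interleaved_path(n), drift))
print(f"sequential RMS={rms_seq:.4f}, interleaved RMS={rms_opt:.4f}")
```

With the sequential path the drift appears as a slow ramp across position and passes straight through the filter. With the interleaved path, neighboring positions are measured early and late in the scan, so the drift alternates from point to point and the filter averages it to a nearly constant offset.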

Q2: My data still shows significant residual drift after using a simple random scan path. Why might this be?

The statistical guarantees of a truly random path hold only in the limit of many iterations, which is impractical in finite-duration experiments. Predefined "randomized" paths often fail to provide the consistent, optimal disruption of the temporal-spatial index needed for effective drift conversion, and for linear drift errors random scanning may offer no suppression benefit at all. The recommended solution is a deterministically optimized path, such as the forward-backward downsampled path, which is designed to modulate linear drift components and has been shown to outperform both random and traditional sequential scanning, especially for nonlinear drift [32].

Q3: How do I balance measurement accuracy with time efficiency when designing a scan path?

The sampling step size determines the trade-off between measurement accuracy and efficiency, so it is a key parameter in path optimization. Research indicates that an optimal sampling step balancing these competing demands can be determined. For instance, one experimental study using an optimized downsampled path scanning method achieved a 48.4% reduction in single-measurement cycles while holding drift errors to 18 nrad RMS. This demonstrates that path optimization can enhance precision and throughput at the same time [32].

Troubleshooting Guides

Issue 1: Poor Drift Suppression in Nonlinear Regimes

- Symptoms: Low-frequency drift remains coupled to your signal after applying traditional forward-backward sequential scanning.

- Root Cause: Traditional methods rely on averaging and have limited effectiveness against complex, nonlinear low-frequency drift [32].

- Solution:

- Implement Path-Optimized Scanning: Shift from a simple sequential path to an optimized forward-backward downsampled path or a heuristically optimal path (HOPS) [32] [33].

- Validate with Simulation: Before physical experiments, run simulations to compare the performance of your new scan path against traditional methods for your specific drift profile. Simulations have shown that path-optimized scanning can outperform traditional methods for nonlinear errors [32].

- Apply Low-Pass Filtering: After data acquisition using the optimized path, apply a spatial low-pass filter to remove the now-high-frequency drift components [32].

Issue 2: Inadequate Temporal Resolution for Dynamic Processes

- Symptoms: Inability to resolve the relative timing of fast events, such as neuronal action potentials or rapid chemical kinetics.

- Root Cause: Standard raster scanning techniques impose a severe limit on temporal resolution [33].

- Solution: