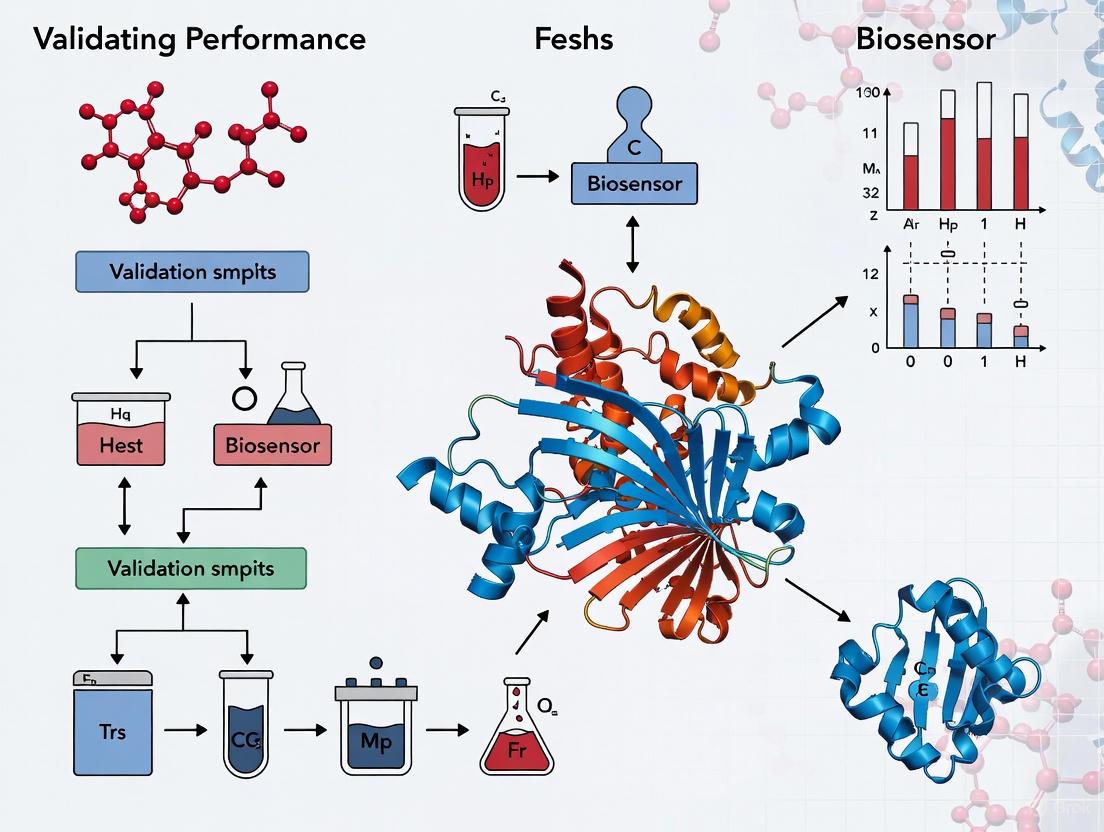

From Buffer to Bedside: A Strategic Framework for Validating Biosensor Performance in Fresh vs. Commercial Blood Samples

Abstract

This article addresses a critical challenge in translational biosensor research: bridging the performance gap between idealized laboratory validation and real-world clinical application. While biosensors often demonstrate high accuracy in buffer solutions and spiked commercial blood samples, their performance can significantly deteriorate when faced with the complex, variable matrix of fresh clinical blood. Tailored for researchers, scientists, and drug development professionals, this work provides a comprehensive framework for rigorous biosensor validation. We explore the foundational differences between sample types, present methodological best practices for application-specific testing, detail troubleshooting and optimization strategies to enhance robustness, and establish protocols for comparative validation against gold-standard methods. The goal is to equip developers with the knowledge to create biosensors that are not only sensitive and specific but also reliable and commercially viable for point-of-care diagnostics and personalized medicine.

The Sample Matrix Challenge: Understanding the Fundamental Differences Between Fresh and Commercial Blood

The validation of wearable biosensors represents a frontier in modern healthcare, promising real-time, non-invasive monitoring of physiological status [1]. The core challenge in this field lies in ensuring that the data generated from these devices is accurate and clinically relevant. A fundamental, yet often overlooked, aspect of this validation process is the type of blood sample used for calibration and testing. The physiological state of blood—ranging from fresh clinical whole blood to processed commercial blood components—varies dramatically in its composition and functional integrity. These variances can significantly impact the performance of biosensors that rely on specific biomarkers, such as glucose, metabolites, or coagulation factors [1] [2].

This guide provides an objective comparison between fresh and processed blood products, framing the discussion within the critical context of biosensor validation. For researchers and drug development professionals, understanding these compositional differences is not merely a methodological detail but a prerequisite for developing reliable diagnostic technologies that can gain regulatory approval. The following sections compare these sample types through quantitative data, detailed experimental protocols, and analytical frameworks to inform robust research design.

Comparative Analysis: Fresh Whole Blood vs. Processed Blood Components

The choice between fresh whole blood and processed components hinges on the balance between physiological fidelity and practical convenience. The table below summarizes the key characteristics of these sample types, highlighting critical variances that impact biosensor research.

Table 1: Compositional and Functional Comparison of Fresh vs. Processed Blood Samples

| Characteristic | Fresh Clinical Whole Blood | Processed/Commercial Blood Components |
|---|---|---|
| Definition & Source | Blood collected recently (ideally within hours) from a donor and used with minimal processing [3]. | Blood separated into components (e.g., RBCs, platelets, plasma) and stored for extended periods [4]. |
| Cellular Composition & Integrity | All cellular elements (RBCs, WBCs, platelets) remain intact and functional [5] [3]. Platelet and white blood cell viability is high. | Components are isolated; platelets and WBCs in stored whole blood show progressive functional decline. For instance, collagen-induced platelet aggregation begins to decline on Day 7 [5]. |
| Coagulation Profile | Preserves integrated, native coagulation function as measured by Thromboelastography (TEG) [5]. | Coagulation factors and platelet function degrade. TEG variables begin to show abnormalities after 11-14 days of refrigerated storage [5]. |
| Metabolic Environment | Physiological levels of pH, glucose, and electrolytes [5]. | Progressive metabolic derangement: pH and glucose decrease, while lactate and potassium increase significantly over time [5]. |
| Key Research Applications | Gold standard for validating biosensor accuracy [1]; coagulation studies and transfusion research [6]; immunological assays (e.g., CAR-T therapy) [3] | Convenience and standardization in some assays; blood banking and transfusion medicine [4]; research not requiring full cellular function |
| Impact on Biosensor Validation | Provides a true benchmark for analyte levels and correlations between blood and non-invasive biofluids [1]. | Risk of inaccuracy; degraded metabolites and cellular contents may not reflect true in vivo conditions, leading to faulty sensor calibration [1]. |

Experimental Data: Documenting the Decline in Processed Blood

Quantitative data from a controlled storage study illustrate how processed blood degrades over time, underscoring why freshness is a critical variable.

Coagulation Function Over Time

A pivotal in vitro study tracked the coagulation properties of refrigerated whole blood over 31 days. Thromboelastography (TEG) was used to measure integrated coagulation function, with key variables including R time (clot initiation), K time (clot kinetics), and MA (maximum clot strength) [5].

Table 2: Coagulation Function in Stored Whole Blood (TEG Analysis) [5]

| Storage Time | TEG Variable R (min) | TEG Variable K (min) | TEG Variable MA (mm) | Interpretation |
|---|---|---|---|---|
| Day 1 (Baseline) | Normal | Normal | Normal | Normal integrated coagulation function. |
| Day 11 | Normal | Normal | Normal | Coagulation function preserved to a minimum of 11 days. |
| Day 14 | Normal | Begins to increase in some units | Begins to decrease in some units | Abnormal values begin; indicates clot formation is slower and weaker. |
| Day 31 | Significantly prolonged | Significantly prolonged | Significantly decreased | Severe degradation of coagulation capacity. |

Platelet Function and Metabolic Stability

The same study also measured platelet function via Light Transmission Aggregometry (LTA) using various agonists, and tracked basic metabolic parameters [5].

Table 3: Platelet Function and Metabolic Changes in Stored Whole Blood [5]

| Parameter | Agonist/Measure | Key Findings | Functional Implication |
|---|---|---|---|
| Platelet Aggregation | Adenosine Diphosphate (ADP), Epinephrine | No change from Day 1 to Day 21. | Platelet response to some agonists is stable. |
| Platelet Aggregation | Collagen | Decline begins on Day 7. | Impaired response to collagen-induced activation. |
| Platelet Aggregation | Ristocetin | Decline begins on Day 17. | Indicates degrading platelet/von Willebrand factor interaction. |
| Metabolic Environment | pH | Progressive decline through Day 31. | Creates an increasingly acidic, non-physiological environment. |
| Metabolic Environment | Lactate | Progressive increase through Day 31. | Indicates ongoing cellular metabolism and waste accumulation. |
| Metabolic Environment | Potassium | Increased over time, exceeding 20 mmol/L after Day 14. | Critical for sensor electrochemistry; hyperkalemia is non-physiological. |
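The onset days reported in Tables 2 and 3 lend themselves to a simple screening check before a stored unit is used for sensor testing. The sketch below is illustrative only: the onset days are transcribed from the tables above, while the function name `storage_flags` and the flag wording are assumptions introduced here, not part of the cited study.

```python
from typing import List, Tuple

# Onset days transcribed from Tables 2 and 3 (refrigerated whole blood, ref. [5]).
# The labels are illustrative wording, not terminology from the study.
ONSET_DAYS: List[Tuple[int, str]] = [
    (7, "collagen-induced platelet aggregation declining"),
    (14, "TEG K and MA abnormal in some units"),
    (17, "ristocetin-induced platelet aggregation declining"),
]


def storage_flags(storage_day: int) -> List[str]:
    """Return the degradation flags that apply on a given day of storage."""
    if storage_day < 1:
        raise ValueError("storage_day must be >= 1")
    return [label for onset, label in ONSET_DAYS if storage_day >= onset]


# Example: a unit on Day 15 has passed the collagen and TEG onsets.
for flag in storage_flags(15):
    print(flag)
```

pH and lactate drift progressively from Day 1, so they are better tracked as measured values than as day-based flags.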

Experimental Protocols: Key Methodologies for Blood Function Analysis

The data presented in the previous section were generated using standardized, rigorous laboratory protocols. Reproducing these experiments is essential for researchers seeking to validate the quality of their own blood samples.

Thromboelastography (TEG) Protocol

Purpose: To assess the integrated coagulation function of a whole blood sample, including the kinetics of clot formation, its strength, and stability [5].

Workflow Diagram: Thromboelastography Analysis

Detailed Procedure:

- Sample Preparation: Gently mix the whole blood unit by tilting the bag horizontally and vertically for several minutes to ensure homogeneity. Aseptically withdraw a sample [5].

- Activation: Pipette 340 µL of the blood sample into a vial containing kaolin to activate the intrinsic coagulation pathway. Mix gently [5].

- Loading: Pipette 20 µL of 0.2 mol/L calcium chloride into a TEG sample cup to recalcify the blood. Then, add the kaolin-activated blood to the same cup [5].

- Run Assay: Place the cup in the pre-warmed TEG analyzer (37°C). A pin is suspended in the sample, and the cup begins a slow oscillation. As fibrin strands form between the cup and the pin, the torque is transmitted to the pin and recorded [5].

- Data Analysis: Run the test in duplicate. The TEG software generates a trace from which several parameters are calculated:

  - R time: The latency from start to initial fibrin formation.

  - K time: The time from R to a fixed level of clot strength.

  - MA (Maximum Amplitude): The ultimate strength of the clot, reflecting platelet function and fibrin interplay.

  - LY30: The percentage of clot lysis 30 minutes after MA, indicating fibrinolytic activity [5].

Light Transmission Aggregometry (LTA) Protocol

Purpose: To quantitatively measure platelet aggregation in response to specific agonists, providing a detailed view of platelet function [5].

Workflow Diagram: Platelet Aggregometry Analysis

Detailed Procedure:

- Sample Collection: Draw blood into sodium citrate tubes (0.129 mol/L) to anticoagulate [5].

- Prepare Platelet-Rich Plasma (PRP): Centrifuge the blood at 800 rpm for 10 minutes. The supernatant is the PRP. Carefully collect it [5].

- Prepare Platelet-Poor Plasma (PPP): Centrifuge the remaining blood at 3500 rpm for 10 minutes. The supernatant is the PPP, which serves as a blank with near-zero platelets [5].

- Standardize Platelet Count: Adjust the platelet count in the PRP to a range of 100-300 x 10⁹/L by diluting it with autologous PPP. This ensures consistent baseline conditions across tests [5].

- Run Aggregometry: Place the adjusted PRP in a cuvette within the aggregometer at 37°C with a constant stir bar. Set the instrument to 100% transmission for PPP and 0% for PRP. Add a chosen agonist (e.g., ADP, collagen, epinephrine, ristocetin) and record the increase in light transmission as platelets aggregate for several minutes [5].

- Data Analysis: The final aggregation level is reported as a percentage, indicating the maximum extent of aggregation achieved after agonist addition [5].
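Two of the steps above involve simple arithmetic: diluting PRP with autologous PPP to reach a target platelet count, and scaling a raw transmission reading between the PRP (0%) and PPP (100%) references. The following is a minimal sketch of both calculations. The function names are hypothetical, and PPP is treated as platelet-free, which is an approximation.

```python
def ppp_volume_for_target(prp_volume_ml: float, prp_count: float,
                          target_count: float) -> float:
    """Volume of PPP (mL) to add so the PRP reaches target_count.

    Counts are in 10^9 platelets/L. Assumes PPP contributes no platelets.
    """
    if prp_volume_ml <= 0 or prp_count <= 0 or target_count <= 0:
        raise ValueError("volume and counts must be positive")
    if target_count >= prp_count:
        return 0.0  # dilution cannot raise the platelet count
    return prp_volume_ml * (prp_count / target_count - 1.0)


def percent_aggregation(transmission: float, prp_baseline: float,
                        ppp_reference: float) -> float:
    """Scale a raw transmission reading so that PRP = 0% and PPP = 100%."""
    span = ppp_reference - prp_baseline
    if span <= 0:
        raise ValueError("PPP must transmit more light than PRP")
    return 100.0 * (transmission - prp_baseline) / span


# Hypothetical example: 1.0 mL of PRP at 450 x 10^9/L diluted to 300 x 10^9/L.
print(ppp_volume_for_target(1.0, 450.0, 300.0))
print(percent_aggregation(0.6, 0.2, 1.0))
```

Commercial aggregometers perform the scaling internally once the references are set; the explicit form is mainly useful when re-analysing exported raw traces.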

The Scientist's Toolkit: Essential Reagents and Materials

Successful experimentation with blood samples requires specific, high-quality reagents and materials. The following table details key items used in the featured protocols.

Table 4: Essential Research Reagents for Blood Function Analysis

| Item Name | Function/Description | Application in Protocol |
|---|---|---|
| Citrate-Phosphate-Dextrose (CPD) | Anticoagulant solution that chelates calcium and provides nutrients to cells during storage [5] [4]. | Primary anticoagulant in blood collection bags for whole blood studies [5]. |
| Sodium Citrate Tubes (0.129 mol/L) | Standard anticoagulant for coagulation studies; chelates calcium to prevent clotting in vitro [5]. | Blood collection for TEG and LTA assays [5]. |
| Kaolin | Fine particulate clay used to activate the contact pathway of coagulation [5]. | Activator for the intrinsic pathway in the TEG assay [5]. |
| Calcium Chloride (CaCl₂) | Source of divalent calcium ions (Ca²⁺) to reverse citrate anticoagulation [5]. | Recalcification agent added to the TEG cup to initiate the clotting process [5]. |
| Agonists (ADP, Collagen, Epinephrine, Ristocetin) | Chemical agents that bind to specific platelet surface receptors to trigger activation and aggregation [5]. | Used in LTA to stimulate and test different pathways of platelet function [5]. |
| Thromboelastograph (TEG/ROTEM) | Instrument that measures the viscoelastic properties of whole blood during clot formation and dissolution [5]. | Core instrument for the TEG protocol. |
| Platelet Aggregometer | Instrument that measures platelet aggregation in plasma by monitoring changes in light transmission [5]. | Core instrument for the LTA protocol. |

Implications for Biosensor Validation Research

The documented variances between fresh and processed blood have profound implications for the development and validation of biosensors. Wearable biosensors often rely on establishing a correlation between analyte concentrations in easily accessible biofluids (like sweat or interstitial fluid) and their levels in blood [1]. Using processed blood components with degraded metabolites or altered electrolyte balances (e.g., elevated potassium) for calibration can establish a fundamentally flawed baseline, leading to inaccurate readings in real-world use [5] [1].

Furthermore, the validation of biosensors intended to monitor coagulation status (for example, in patients on anticoagulant therapy) would be severely compromised if tested on stored blood with abnormal TEG parameters. Because collagen-induced platelet aggregation begins to decline after just 7 days of storage, a sensor designed to detect platelet-related hemorrhagic risks would not be evaluated under physiologically relevant conditions [5]. Therefore, for high-fidelity research aimed at clinical translation, fresh whole blood remains the indispensable gold standard. It ensures that the biosensor is calibrated and validated against a biologically accurate representation of the in vivo environment, which supports more reliable diagnostic devices that can gain regulatory approval [1] [3].

The validation of biosensor performance is a critical step in translating innovative research into reliable commercial and clinical applications. Within this process, the choice of blood sample—fresh or commercially sourced—represents a fundamental variable that can significantly influence the outcome and interpretation of validation studies. Commercial blood samples offer undeniable advantages in terms of standardization and stability, providing consistency for comparative assays and logistical convenience for distributed research efforts. However, this very stability may come at a cost, potentially limiting a biosensor's predictive accuracy in real-world conditions where samples are fresh and biologically active. This guide objectively compares the performance of biosensors validated with commercial versus fresh blood samples, framing the discussion within the broader thesis that a comprehensive validation strategy must acknowledge the distinct advantages and inherent limitations of each sample type. The aim is to provide researchers, scientists, and drug development professionals with the experimental data and protocols necessary to make informed decisions that enhance the reliability and clinical relevance of their biosensor platforms.

Commercial Samples: Engineered for Consistency

Commercial blood samples are biospecimens that have been processed, preserved, and stored by a specialized supplier for distribution to researchers. The core value proposition of these samples is the engineered consistency and reliability they bring to the early stages of biosensor development.

Key Advantages and Characteristics

- Standardization: Commercial suppliers provide meticulously characterized samples, often with detailed donor profiles and pre-defined analyte concentrations. This allows for a high degree of experimental reproducibility across different laboratories and testing dates, which is crucial for benchmarking new biosensor technologies against established methods [7].

- Stability and Shelf-Life: Through controlled processing and storage at temperatures like -20°C or -80°C, commercial samples offer extended stability for many analytes. Studies have demonstrated that a range of biochemical parameters, including sodium, potassium, urea, creatinine, and uric acid, remain stable in serum stored at -20°C for up to 30 days [8]. Similarly, trace elements in whole blood and plasma can be preserved at low temperatures (4°C and -20°C) for up to six months without substantial changes in concentration [9]. This stability decouples research activities from the logistical challenges of immediate sample processing.

- Logistical Convenience: The availability of commercial samples enables on-demand experimentation, facilitating experimental design and accelerating preliminary research and development cycles without the immediate need for clinical partnerships or ethical approvals for fresh blood draws.

Stability Profiles of Common Analytes in Stored Samples

The following table summarizes quantitative data on the stability of various analytes under different storage conditions, as reported in experimental studies. This data is critical for understanding the utility and limitations of commercial samples.

Table 1: Stability of Biochemical Analytes in Stored Serum and Plasma

| Analyte | Sample Type | Storage Condition | Storage Duration | Stability Outcome | Citation |
|---|---|---|---|---|---|
| Sodium, Potassium, Urea, Creatinine, Uric Acid, Total Calcium, Phosphorus, Bilirubin, Total Protein, Albumin | Serum | -20°C | 30 days | Stable (no clinically significant changes) | [8] |
| Amylase | Serum | -20°C | 7, 15, 30 days | Unstable (statistically and clinically significant decrease) | [8] |
| Glucose, Uric Acid, Creatinine, Total Bilirubin | Plasma & Serum | -20°C | 30 days | Instability detected (p<0.05), but clinical impact only for Total Bilirubin | [10] |
| Clinical Trace Elements (e.g., Ag, Al, As, Cd, Co, Cr, Cu, Mn, Mo, Ni, Pb, Se, Zn) | Whole Blood & Plasma | 4°C, -20°C | 6 months | Stable without substantial changes | [9] |
| Lactate Dehydrogenase (LD), Aspartate Aminotransferase (AST), Creatine Kinase MB (CK-MB), Troponin I | Serum & Plasma (BD RST & Barricor Tubes) | 4°C | 7 days | Unstable (unacceptable stability for re-analysis) | [11] |

Experimental Protocol: Validating Biosensor Linearity with Commercial Samples

A common application of commercial samples is in establishing the analytical linearity and detection range of a biosensor.

- Objective: To determine the relationship between the biosensor's output signal and the concentration of a target analyte across a specified range using commercial serum samples.

- Materials:

  - Biosensor platform (e.g., electrochemical, optical).

  - Commercial human serum samples with certified analyte concentrations or spiked with known quantities of the target analyte (e.g., glucose, a specific protein biomarker).

  - Reference instrument (e.g., clinical chemistry analyzer, HPLC) for method comparison.

- Methodology:

  - Sample Preparation: Acquire or prepare a series of commercial serum samples with the target analyte concentration spanning the expected physiological range (e.g., from low to high).

  - Measurement: Analyze each sample in triplicate with the biosensor under development, following the standard operating procedure.

  - Reference Analysis: Simultaneously, measure the analyte concentration in each sample using a validated reference method.

  - Data Analysis: Plot the biosensor's response (y-axis) against the reference concentration (x-axis). Perform linear regression analysis to calculate the slope, y-intercept, and coefficient of determination (R²).

- Interpretation: A strong linear relationship (R² > 0.99) indicates good analytical performance within the tested range. The use of commercial samples here ensures that the linearity assessment is performed with a consistent and well-defined matrix.
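The data-analysis step of this protocol can be sketched in a few lines. The example below averages triplicate readings and fits an ordinary least-squares line, returning the slope, intercept, and R². It is a minimal illustration under stated assumptions: the concentrations and sensor readings are hypothetical values invented for the example, and `linear_fit` is a helper name introduced here.

```python
from typing import Sequence, Tuple


def linear_fit(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float, float]:
    """Ordinary least-squares fit of y on x; returns (slope, intercept, r_squared)."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need at least three paired points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    if sxx == 0 or syy == 0:
        raise ValueError("x and y must each vary")
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy * sxy) / (sxx * syy)
    return slope, intercept, r_squared


# Hypothetical data: reference concentrations (mmol/L) and triplicate
# biosensor readings (arbitrary signal units) for each level.
reference = [2.0, 5.0, 10.0, 15.0, 20.0]
triplicates = [
    [0.42, 0.39, 0.41],
    [1.03, 0.97, 1.01],
    [2.06, 1.96, 2.02],
    [2.91, 3.08, 3.02],
    [4.12, 3.91, 4.03],
]
response = [sum(t) / len(t) for t in triplicates]

slope, intercept, r2 = linear_fit(reference, response)
print(f"slope={slope:.4f} intercept={intercept:.4f} R^2={r2:.4f}")
```

A high R² alone does not rule out curvature at the ends of the range, so inspecting the residuals at the lowest and highest levels is a sensible companion check.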

The Fresh Sample Imperative: Capturing Biological Fidelity

Despite the advantages of commercial samples, fresh blood remains the gold standard for many applications, particularly those assessing dynamic biological functions. Fresh blood is typically defined as blood processed and analyzed within hours of collection, preserving the native state of its cellular and molecular components [7].

Key Advantages and Characteristics

- Preservation of Cellular Viability and Function: Fresh blood ensures that cells remain viable and functionally active. This is paramount for assays investigating cell signaling, receptor activation, cytokine secretion, and neutrophil migration [7]. The freeze-thaw process used for commercial samples can rupture delicate cells like B cells and dendritic cells, making them undetectable or non-functional [7].

- Accurate Biomarker Representation: The levels and structural integrity of certain biomarkers, particularly labile proteins, enzymes, and cell surface markers, are best preserved in fresh blood. For instance, one study noted that amylase activity decreased significantly in serum stored at -20°C, underscoring the need for fresh analysis for this analyte [8].

- Real-World Predictive Validity: Validating a biosensor with fresh samples most closely mimics the intended use case for point-of-care or continuous monitoring devices. It accounts for the complex, active matrix of a freshly drawn sample, providing a more accurate prediction of clinical performance [12] [1].

Experimental Protocol: Functional Cell-Based Assay for Biosensor Validation

This protocol uses fresh blood to test a biosensor's ability to detect a functional cellular response, such as neutrophil activation.

- Objective: To validate a biosensor's performance in detecting a functional cellular response (e.g., activation markers) in fresh whole blood compared to flow cytometry.

- Materials:

  - Biosensor designed to detect a specific cell surface marker (e.g., CD11b on neutrophils).

  - Fresh human whole blood (anti-coagulated, e.g., with heparin or EDTA) from healthy donors, processed within 1-2 hours of collection.

  - Cell activation agent (e.g., fMLP for neutrophils).

  - Flow cytometer with appropriate antibodies (reference method).

- Methodology:

  - Sample Stimulation: Aliquot fresh whole blood. Treat one aliquot with the activation agent and keep another as an unstimulated control. Incubate at 37°C for a predetermined time.

  - Biosensor Analysis: Apply a small volume of fresh whole blood (stimulated and unstimulated) directly to the biosensor and record the signal.

  - Reference Analysis: Simultaneously, analyze the same blood samples by flow cytometry using fluorescently labeled antibodies against the target activation marker.

  - Data Analysis: Compare the biosensor's signal intensity between stimulated and unstimulated samples. Correlate the biosensor's response with the mean fluorescence intensity (MFI) obtained from flow cytometry.

- Interpretation: A successful biosensor will show a statistically significant increase in signal upon cell activation, correlating well with the flow cytometry data. This validates the biosensor's capability in a biologically relevant, complex medium.
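The two comparisons in the data-analysis step (stimulated versus unstimulated signal, and biosensor response versus flow-cytometry MFI) can be sketched as follows. This is a minimal example with hypothetical numbers. The helper names `fold_change` and `pearson_r` are introduced here for illustration, and a formal significance test, which the interpretation step calls for, is deliberately left out.

```python
import math
from typing import Sequence


def fold_change(stimulated: Sequence[float], unstimulated: Sequence[float]) -> float:
    """Ratio of mean stimulated signal to mean unstimulated signal."""
    base = sum(unstimulated) / len(unstimulated)
    if base == 0:
        raise ValueError("unstimulated mean must be non-zero")
    return (sum(stimulated) / len(stimulated)) / base


def pearson_r(a: Sequence[float], b: Sequence[float]) -> float:
    """Pearson correlation coefficient between paired measurements."""
    n = len(a)
    if n != len(b) or n < 3:
        raise ValueError("need at least three paired points")
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        raise ValueError("both series must vary")
    return cov / math.sqrt(var_a * var_b)


# Hypothetical paired readings from the same donor aliquots.
sensor_signal = [1.1, 1.3, 2.9, 3.4, 3.1, 1.2]   # arbitrary units
flow_mfi = [210.0, 250.0, 640.0, 720.0, 690.0, 230.0]

print(f"r = {pearson_r(sensor_signal, flow_mfi):.3f}")
print(f"fold change = {fold_change([2.9, 3.4, 3.1], [1.1, 1.3, 1.2]):.2f}")
```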

Direct Comparison: A Side-by-Side Analysis

The choice between fresh and commercial samples is not a matter of which is universally better, but which is more appropriate for a specific stage of biosensor development or a particular performance question. The following table provides a direct comparison.

Table 2: Objective Comparison of Fresh vs. Commercial Blood Samples

| Feature | Commercial Samples | Fresh Samples |
|---|---|---|
| Standardization & Reproducibility | High (Well-characterized, controlled matrix) [7] | Variable (Subject to donor biology and collection nuances) |
| Analyte Stability | High for many stable analytes (e.g., electrolytes, some metabolites) [8] [9] | Essential for labile analytes and enzymes (e.g., amylase, functional cells) [8] [7] |
| Logistical Convenience | High (Available on-demand, long shelf-life) | Low (Requires immediate access to donors and processing) |
| Cellular Viability & Function | Poor (Compromised by freeze-thaw cycle) [7] | Excellent (Preserves native cell state and activity) [7] |
| Cost & Accessibility | Variable, but readily accessible | Can be high, requires clinical collaboration or donor program |
| Ideal Use Case | Analytical validation (linearity, LOD, LOQ), assay reproducibility, pilot studies | Functional assays, cell-based validation, PoC device testing, assessing clinical correlation [7] |
| Real-World Predictive Power | Limited for functional biology | High, as it tests the biosensor in its intended matrix [12] |

Decision Framework for Biosensor Validation

The following diagram illustrates a logical workflow for selecting the appropriate sample type based on the research and development phase.
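The selection logic can also be expressed as a small lookup. The sketch below encodes only the "Ideal Use Case" row of Table 2. The question keys and the function name `recommend_sample_type` are hypothetical labels introduced for this example, not terms from the source framework.

```python
# Validation questions grouped by the "Ideal Use Case" row of Table 2.
# The string keys are illustrative labels chosen for this sketch.
COMMERCIAL_QUESTIONS = {
    "linearity", "lod", "loq", "assay_reproducibility", "pilot_study",
}
FRESH_QUESTIONS = {
    "functional_assay", "cell_based_validation",
    "poc_device_testing", "clinical_correlation",
}


def recommend_sample_type(question: str) -> str:
    """Return 'commercial' or 'fresh' for a given validation question."""
    key = question.strip().lower()
    if key in COMMERCIAL_QUESTIONS:
        return "commercial"
    if key in FRESH_QUESTIONS:
        return "fresh"
    raise ValueError(f"unrecognized validation question: {question!r}")


print(recommend_sample_type("linearity"))
print(recommend_sample_type("poc_device_testing"))
```

In practice a development program moves through both groups, typically starting with the commercial-sample questions and finishing with the fresh-sample ones.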

The Scientist's Toolkit: Essential Research Reagent Solutions

Selecting the right tools is critical for executing the experiments described in this guide. Below is a table of key materials and their functions in biosensor validation studies.

Table 3: Essential Reagents and Materials for Biosensor Validation

| Item | Function / Application | Key Considerations |
|---|---|---|
| BD Vacutainer Tubes (e.g., RST, Barricor) | Standardized blood collection for serum or plasma separation. Ensures consistent pre-analytical sample processing. [11] | Choice of tube (serum vs. plasma, gel separator) can affect analyte stability and test results. |
| Cobas c501 Autoanalyzer | High-throughput reference instrument for quantifying clinical chemistry analytes. Used for method comparison and validation. [10] | Provides gold-standard measurements for a wide range of metabolites, enzymes, and proteins. |
| Inductively Coupled Plasma Mass Spectrometry (ICP-MS) | Reference method for multi-element trace metal analysis in biological fluids like blood and plasma. [9] | Critical for validating biosensors targeting metal ions or for monitoring contamination. |
| Flow Cytometer | Reference method for cell-based assays. Provides quantitative data on cell surface markers, cell activation, and viability. [7] | Essential for validating biosensors that detect cellular targets or functional immune responses. |
| Stable Commercial Serum/Plasma Panels | Pre-characterized samples for assessing biosensor analytical performance (precision, linearity) across a range of concentrations. | Reduces variability and simplifies initial assay development and benchmarking. |
| Cell Activation Agents (e.g., fMLP, PMA) | Chemical stimulants used in functional cell-based assays to induce a cellular response (e.g., neutrophil activation). [7] | Allows for testing biosensor performance against a dynamic biological signal. |
| Microsampling Devices (e.g., DBS Cards) | Enable simplified collection, transport, and storage of blood samples by absorbing a small volume onto filter paper. [13] | Offers an alternative to venous draws; can stabilize some analytes at ambient temperature. |

The journey from a novel biosensor concept to a reliable tool for research or clinical decision-making is paved with rigorous validation. This guide has underscored that a critical component of this process is the strategic selection of blood samples. Commercial samples provide an unparalleled advantage in standardization and stability, making them indispensable for establishing the foundational analytical performance of a biosensor. However, an over-reliance on these stabilized samples can create a "commercial sample gap," where a biosensor performs excellently in controlled conditions but fails to predict real-world functionality. The integrity of cellular viability and labile biomarkers, best preserved in fresh blood, is non-negotiable for assessing biological relevance and functional accuracy. Therefore, a tiered validation strategy that leverages the consistency of commercial samples for initial development and the biological fidelity of fresh samples for final verification is paramount. By objectively understanding and utilizing the advantages and limitations of each sample type, researchers can ensure that their biosensors are not only precise instruments but also robust and predictive tools for advancing healthcare.

The validation of biosensor performance presents a significant translational gap between idealized laboratory conditions and real-world clinical environments. This gap is most pronounced when sensors encounter fresh, unprocessed clinical blood samples. Blood is a complex, dynamic matrix containing a high concentration of proteins, cells, and other biomolecules that actively interact with sensor surfaces, leading to phenomena such as biofouling and nonspecific binding that critically compromise analytical accuracy [14] [15]. These challenges are often underestimated when using commercially processed or pooled samples, which fail to capture the full spectrum of biological variability found in fresh clinical specimens from individual patients [15]. The "fresh sample reality" therefore represents a critical validation frontier where biosensor performance must be proven against complexity, biofouling, and dynamic interferents inherent in clinical blood matrices.

This guide objectively compares biosensor performance across these challenging sample types, providing experimental data and methodologies essential for researchers, scientists, and drug development professionals focused on robust biosensor validation.

Blood Matrix Complexity: Beyond the Buffer Solution

Composition of Blood and Major Interferents

Blood plasma is an extraordinarily complex medium comprising 91% water along with numerous proteins, nutrients, ions, lipids, and dissolved gases [14]. The high protein load (60–80 mg mL⁻¹) creates a competitive environment where nonspecific adsorption can overwhelm specific biorecognition events [15]. Understanding these components is essential for designing effective biosensing strategies.

Table 1: Major Protein Interferents in Blood Plasma

| Protein | Typical Concentration | Impact on Biosensing |
|---|---|---|
| Human Serum Albumin (HSA) | 35–60 mg mL⁻¹ | High abundance promotes nonspecific surface adsorption [14] |
| Immunoglobulin G (IgG) | 6–16 mg mL⁻¹ | Specific and nonspecific binding to sensor surfaces [14] |
| Fibrinogen | ~2 mg mL⁻¹ | Participates in coagulation cascades on sensor surfaces [14] |

Sample-to-Sample Variability: The Pooled Sample Fallacy

A critical consideration in biosensor validation is the significant variability between individual donors, which is often masked by using pooled samples. Research has demonstrated high sample-to-sample variability in background response: the same coating shows different levels of non-specific adsorption when blood plasma from different individual donors is analyzed [15]. This variability can be influenced by factors including health status, age, and genetic background, with distinct non-specific adsorption profiles reported even among patient subgroups such as type-1 diabetic patients [15]. Pooled biofluids minimize this variability but create an unrealistic testing environment that fails to predict real-world clinical performance [15].
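One way to make donor-to-donor variability visible is to report the coefficient of variation (CV) of the background response across individual donors alongside the CV of repeat measurements on a pooled sample. The sketch below uses hypothetical background values invented for illustration; `coefficient_of_variation` is a helper name introduced here.

```python
import statistics
from typing import Sequence


def coefficient_of_variation(values: Sequence[float]) -> float:
    """Sample standard deviation as a percentage of the mean."""
    mean = statistics.mean(values)
    if mean == 0:
        raise ValueError("mean must be non-zero")
    return 100.0 * statistics.stdev(values) / mean


# Hypothetical non-specific background responses on the same coating
# (arbitrary units): one reading per individual donor vs. repeat
# readings of a single pooled sample.
individual_donors = [12.0, 31.0, 8.5, 22.0, 45.0, 15.5]
pooled_repeats = [21.0, 22.5, 20.5, 21.8, 22.1, 21.4]

print(f"individual donors CV: {coefficient_of_variation(individual_donors):.1f}%")
print(f"pooled repeats CV:    {coefficient_of_variation(pooled_repeats):.1f}%")
```

A large gap between the two figures suggests that pooled-sample results understate the background spread the sensor will face with individual patient specimens.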

Experimental Approaches for Validation in Complex Matrices

Methodologies for Assessing Biofouling and Sensor Performance

Robust validation of biosensor performance in fresh blood matrices requires carefully controlled experimental protocols that can distinguish specific signal from nonspecific interference.

Table 2: Key Experimental Protocols for Blood Matrix Validation

| Methodology | Protocol Description | Key Outcome Measures |

|---|---|---|

| Reference Channel Subtraction | Using a parallel reference channel without specific biorecognition elements to subtract non-specific binding signal from total response [15] | Isolates specific binding signal; quantifies fouling background |

| Analyte-Spiked Validation | Adding known concentrations of target analyte to fresh clinical samples and comparing recovery with buffer-based calibrations [15] | Determines accuracy loss in complex matrix; measures matrix effects |

| Sample Dilution Series | Performing measurements at multiple dilution factors to assess concentration-dependent matrix effects [16] | Identifies optimal dilution that minimizes interference while maintaining sensitivity |

| Time-Dependent Fouling Studies | Monitoring signal stability during prolonged exposure to undiluted blood matrices [15] | Quantifies biofouling kinetics and sensor stability |
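
Two of the protocols in Table 2, reference channel subtraction and analyte-spiked recovery, reduce to simple arithmetic. The following is a minimal sketch under stated assumptions: the function names, the sensor traces, and the concentrations are hypothetical illustrations and are not taken from the cited studies.

```python
import numpy as np


def reference_subtract(active_signal, reference_signal):
    """Subtract the reference-channel response from the active-channel response."""
    active = np.asarray(active_signal, dtype=float)
    reference = np.asarray(reference_signal, dtype=float)
    if active.shape != reference.shape:
        raise ValueError("active and reference traces must have the same shape")
    return active - reference


def percent_recovery(measured_conc, spiked_conc, endogenous_conc=0.0):
    """Spike recovery in percent: (measured - endogenous) / spiked * 100."""
    if spiked_conc <= 0:
        raise ValueError("spiked concentration must be positive")
    return (measured_conc - endogenous_conc) / spiked_conc * 100.0


# Hypothetical sensor traces (arbitrary response units), not data from the cited studies.
active_channel = [0.0, 12.0, 25.0, 31.0]
reference_channel = [0.0, 4.0, 9.0, 11.0]
specific_signal = reference_subtract(active_channel, reference_channel)

# Hypothetical spike: 10 units added to a sample that already contains 0.5 units.
recovery = percent_recovery(measured_conc=8.2, spiked_conc=10.0, endogenous_conc=0.5)

print("Specific signal:", specific_signal)
print(f"Recovery: {recovery:.1f}%")
```

A recovery well below 100% in fresh blood, alongside near-complete recovery in buffer, would point to a matrix effect rather than a calibration error.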

Case Study: Multi-Test CRP Performance Comparison

A comprehensive 2023 study evaluated 17 commercially available point-of-care tests for C-reactive protein (CRP) compared to a central laboratory reference standard (Cobas 8000 Modular analyzer) [16]. The investigation used stored serum samples (n=660) with CRP values across the clinically relevant range (10–100 mg/L), representing real-world validation conditions. Among eight quantitative POC tests evaluated, QuikRead go and Spinit exhibited the best agreement with the reference method, showing slopes of 0.963 and 0.921, respectively [16]. Meanwhile, nine semi-quantitative tests showed poor percentage agreement for intermediate CRP categories (10–40 mg/L), with higher agreement only at the extreme lower and upper concentration ranges [16]. This performance disparity highlights how matrix effects differentially impact various sensing platforms and the superiority of quantitative approaches for measurements across broad concentration ranges in clinical matrices.

Antifouling Strategies for Blood-Based Biosensing

Material Solutions to Biofouling Challenges

Creating reliable biosensors for use with blood matrices requires sophisticated antifouling strategies that minimize nonspecific protein adsorption while maintaining sensor functionality and sensitivity.

Diagram 1: Biofouling mechanisms and antifouling strategies for blood-based biosensing.

Several advanced material strategies have emerged to address biofouling in complex biological matrices:

Polymer-Based Coatings: Poly(ethylene glycol) (PEG) and zwitterionic polymers form highly hydrated layers that create a physical and energetic barrier against protein adsorption [15]. These coatings can be applied through various methods including grafting, self-assembly, and in-situ polymerization.

Dynamic Hydrogels: Recent advances in multifunctional dynamic hydrogels have created materials that can adapt and respond to external stimuli, allowing them to withstand large changes in the biophysical microenvironment and trigger on-demand functionality [17]. These hydrogels can be designed with breakable and reversible covalent bonds as well as noncovalent interactions, providing self-healing and adaptive properties that resist biofouling [17].

Nanocomposite Materials: Integration of nanomaterials into dynamic hydrogels provides numerous functionalities for biomedical applications that cannot be achieved by conventional hydrogels [17]. These nanocomposites can be engineered with specific surface properties that minimize protein adsorption while maintaining biosensor functionality.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Blood Matrix Biosensing

| Reagent/Material | Function | Application Notes |

|---|---|---|

| Antifouling Polymers (PEG, Zwitterions) | Reduce nonspecific protein adsorption via hydrated layers [15] | Coating thickness (15–70 nm) critical for SPR sensing due to evanescent field decay [15] |

| Reference Serum Samples | Control for inter-individual variability in validation studies [15] | Pooled samples minimize variability but analyte-depleted individual sera better mimic real conditions [15] |

| Surface Plasmon Resonance (SPR) Chips | Label-free real-time monitoring of binding events and fouling [15] | Enable quantification of fouling kinetics in complex matrices |

| Blocking Agents (BSA, Tween 20) | Traditional surface blocking to minimize non-specific adsorption [15] | Can suffer from slow response times and diffusion-limited kinetics [15] |

| Microfluidic Sampling Systems | Controlled transport of blood samples to sensor surfaces [1] | Enable reproducible sample transport; minimize handling variability |

Emerging Technologies and Future Directions

The biosensor field is rapidly evolving to address the challenges of fresh blood matrix analysis through several promising approaches:

AI-Enhanced Biosensing: Integration of artificial intelligence with optical biosensors enables enhanced analytical performance through improved signal processing, pattern recognition, and automated decision-making [18]. Machine learning algorithms can help distinguish specific signals from fouling background in complex data sets.

Multimodal Sensing Platforms: Emerging implantable sensor technologies are converging material science, electronics, and neurobiology to create flexible, wireless, bioresorbable, and multimodal sensors [19]. These platforms combine multiple sensing modalities to cross-validate measurements and improve reliability in challenging matrices.

Advanced Nanomaterials: Two-dimensional MXene coatings amplify electron mobility, boosting electrochemical biosensor response times by approximately 30% compared with conventional carbon inks [20]. Gold-nanoparticle surface functionalization delivers femtomolar detection thresholds for cancer biomarkers, enabling early detection in complex samples [20].

The transition from controlled buffer solutions to fresh clinical blood matrices represents a critical validation challenge that reveals the true limitations and capabilities of biosensing platforms. The "fresh sample reality" of complexity, biofouling, and dynamic interferents demands rigorous experimental design incorporating individual sample variability, appropriate antifouling strategies, and validation against relevant clinical standards. Quantitative biosensing platforms generally outperform semi-quantitative alternatives in these challenging environments, particularly when integrated with advanced materials and data processing approaches. As biosensor technology continues to evolve, embracing rather than avoiding the complexity of fresh blood matrices will be essential for developing clinically meaningful diagnostic tools that succeed beyond laboratory conditions.

Biosensor performance validation in fresh blood samples versus commercial standards reveals critical pitfalls in sensitivity, selectivity, and stability that directly impact translational success. This guide compares biosensor performance through experimental data and outlines methodologies essential for researchers and drug development professionals.

# Critical Performance Pitfalls in Biosensor Translation

Sensitivity Loss: Challenges in Detection Limits

Sensitivity refers to a biosensor's ability to reliably detect low analyte concentrations in complex matrices like blood. Sensitivity is often lost when a sensor moves from controlled buffers to clinical samples.

Table 1: Experimental Sensitivity Data for Various Biosensor Platforms

| Biosensor Platform | Target Analyte | Reported Sensitivity | Sample Matrix | Key Challenge |

|---|---|---|---|---|

| SH-SAW HIV Biosensor [21] | Anti-gp41 antibodies | 100% clinical sensitivity | Human plasma | Maintaining sensitivity in viscous blood samples |

| SH-SAW HIV Biosensor [21] | Anti-p24 antibodies | 64.5% clinical sensitivity | Human plasma | Variable antibody expression across patients |

| SPR Cancer Biosensor [22] | Cancer cells (Blood cancer) | 342.14 deg/RIU | Buffer simulation | Translating high theoretical sensitivity to clinical samples |

| Electrochemical H₂O₂ Platform [23] | Hydrogen peroxide | LOD: 0.43 µM | Buffer | Signal amplification in biological matrices |

Supporting Experimental Data: A pilot clinical study of a Surface Acoustic Wave (SAW) biosensor for HIV diagnosis demonstrated varying sensitivity depending on the biomarker targeted. While detection of anti-gp41 antibodies achieved 100% sensitivity in 31 patient plasma samples, detection of anti-p24 antibodies showed only 64.5% sensitivity, highlighting how the same platform can exhibit different clinical performance based on analyte selection [21].
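
With only 31 samples, a reported clinical sensitivity carries considerable statistical uncertainty. The sketch below attaches a Wilson score interval to each figure. It rests on two assumptions that are not stated in the source: that 64.5% corresponds to 20 of 31 detected samples (20/31 ≈ 64.5%), and that a 95% Wilson interval is an appropriate summary. The function names are illustrative.

```python
import math


def clinical_sensitivity(true_positives, total_positives):
    """Fraction of known-positive samples that the sensor detects."""
    if total_positives <= 0 or not 0 <= true_positives <= total_positives:
        raise ValueError("counts must satisfy 0 <= true_positives <= total_positives")
    return true_positives / total_positives


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 for ~95%)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("counts must satisfy 0 <= successes <= n")
    p = successes / n
    denom = 1.0 + z**2 / n
    centre = (p + z**2 / (2.0 * n)) / denom
    half_width = z * math.sqrt(p * (1.0 - p) / n + z**2 / (4.0 * n**2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Counts: 31/31 follows from the reported 100%; 20/31 is inferred from 64.5%.
markers = {"anti-gp41": (31, 31), "anti-p24": (20, 31)}

results = {}
for name, (detected, total) in markers.items():
    sens = clinical_sensitivity(detected, total)
    low, high = wilson_interval(detected, total)
    results[name] = (sens, low, high)
    print(f"{name}: {100 * sens:.1f}% (95% CI {100 * low:.1f}-{100 * high:.1f}%)")
```

Even a 100% observed sensitivity leaves a lower confidence bound noticeably below 100% at this sample size, which is one reason pilot figures should be confirmed in larger cohorts.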

Selectivity Issues: Interference in Complex Matrices

Selectivity problems arise when a biosensor responds to substances other than the target analyte. This is particularly problematic in blood because of its complex composition.

Key Interference Sources:

- Protein Fouling: Nonspecific adsorption of proteins like human serum albumin (HSA, 35–60 mg/mL), immunoglobulin G (IgG, 6–16 mg/mL), and fibrinogen (~2 mg/mL) can decrease sensitivity and functionality [14].

- Matrix Effects: Sample viscosity, pH variations, and ionic strength differences between fresh blood and commercial standards can generate false signals [24] [21].

- Structural Similarities: Cross-reactivity with molecules sharing structural similarities to the target analyte [12].

Experimental Protocol for Selectivity Assessment:

- Prepare Interferent Solutions: Create separate solutions of common blood interferents (ascorbic acid, uric acid, acetaminophen, lactate) at physiologically relevant concentrations [1].

- Spike Samples: Add these interferents to both buffer and blood samples containing the target analyte.

- Measure Response: Test biosensor response against interferent-only samples and analyte-plus-interferent samples.

- Calculate Selectivity Coefficient: Compare signal changes to establish interference thresholds [23].
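
The final step of the protocol above can be sketched as a percent-interference calculation. The source does not define a specific selectivity coefficient, so the formula below (relative signal change when an interferent is added to a fixed analyte concentration), the 10% acceptance threshold, and all signal values are assumptions chosen for illustration.

```python
def percent_interference(signal_analyte_only, signal_with_interferent):
    """Relative signal change (%) caused by adding an interferent."""
    if signal_analyte_only == 0:
        raise ValueError("analyte-only signal must be non-zero")
    change = signal_with_interferent - signal_analyte_only
    return change / signal_analyte_only * 100.0


def flag_interferents(signal_analyte_only, signals_with_interferent, threshold_pct=10.0):
    """Return {interferent: (percent change, exceeds threshold?)}."""
    report = {}
    for name, signal in signals_with_interferent.items():
        pct = percent_interference(signal_analyte_only, signal)
        report[name] = (pct, abs(pct) > threshold_pct)
    return report


# Hypothetical amperometric readings (microamps) at one fixed analyte concentration.
baseline_signal = 2.00
with_interferent = {
    "ascorbic acid": 2.30,
    "uric acid": 2.08,
    "acetaminophen": 2.24,
    "lactate": 1.98,
}

report = flag_interferents(baseline_signal, with_interferent, threshold_pct=10.0)
flagged = {name for name, (_, exceeds) in report.items() if exceeds}

for name, (pct, exceeds) in report.items():
    print(f"{name}: {pct:+.1f}% {'FLAG' if exceeds else 'ok'}")
```

Running the same calculation separately for buffer and for blood shows whether an interferent matters only in the complex matrix.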

Stability Failures: Operational and Shelf-Life Challenges

Stability encompasses both operational stability (performance consistency during use) and shelf stability (long-term storage viability).

Table 2: Stability Challenges Across Biosensor Types

| Biosensor Type | Primary Stability Challenge | Impact on Performance | Experimental Validation Method |

|---|---|---|---|

| Enzyme-based Electrochemical | Enzyme denaturation over time [12] | Reduced catalytic activity & sensitivity | Accelerated aging studies at different temperatures |

| Wearable Epidermal Sensors | Biofouling at body-sensor interface [1] | Signal drift during continuous monitoring | Continuous operation in simulated/real biofluids |

| Affinity-based Biosensors | Receptor degradation during storage [12] | Increased response time & false negatives | Periodic testing of stored sensors with control samples |

| Whole Blood Biosensors | Surface passivation by blood components [14] | Progressive sensitivity loss | Sequential testing in multiple blood samples |

Experimental Protocol for Stability Testing:

- Accelerated Aging: Store biosensors under controlled temperature and humidity conditions (e.g., 4°C, 25°C, 37°C) for predefined periods [12].

- Periodic Performance Assessment: Test stored biosensors at regular intervals using standardized samples.

- Real-Time Stability Monitoring: For continuous monitors, assess operational stability through extended testing in target biofluids [1].

- Statistical Analysis: Calculate degradation rates and determine half-life of biosensor activity.
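
The statistical analysis step can be illustrated with a simple decay fit. This sketch assumes that sensor activity decays by first-order (exponential) kinetics, which the source does not specify and which should be checked against the measured data before use. The time points and activity values are synthetic.

```python
import numpy as np


def first_order_half_life(times, activity):
    """Fit ln(activity) = ln(A0) - k*t and return (k, half_life)."""
    t = np.asarray(times, dtype=float)
    a = np.asarray(activity, dtype=float)
    if np.any(a <= 0):
        raise ValueError("activity values must be positive for a log-linear fit")
    slope, _intercept = np.polyfit(t, np.log(a), 1)
    k = -slope
    if k <= 0:
        raise ValueError("no decay detected; half-life is undefined")
    return k, np.log(2.0) / k


# Synthetic example: relative activity (%) measured every 7 days of storage,
# generated from an assumed decay constant of 0.05 per day.
storage_days = np.array([0.0, 7.0, 14.0, 21.0, 28.0])
relative_activity = 100.0 * np.exp(-0.05 * storage_days)

decay_constant, half_life_days = first_order_half_life(storage_days, relative_activity)
print(f"k = {decay_constant:.3f} per day, half-life = {half_life_days:.1f} days")
```

Repeating the fit for each storage temperature gives one decay constant per condition, which is the input needed for any later temperature-dependence analysis.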

# The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Biosensor Validation

| Research Reagent/Material | Function in Biosensor Development | Application Example |

|---|---|---|

| Quinoline-5,8-dione (QD) [25] | Water-soluble quinone mediator with high enzyme reactivity | Glucose sensor strips with enhanced sensitivity |

| Flavin-Adenine Dinucleotide-dependent Glucose Dehydrogenase (FAD-GDH) [25] | Oxygen-insensitive enzyme for improved selectivity | Mediator-type glucose biosensors |

| Cholesterol Oxidase (ChOx) [23] | Flavoenzyme with remarkable stability at extreme conditions | H₂O₂ detection platform for clinical applications |

| Transition-Metal Dichalcogenides (TMDCs) [22] | 2D materials enhancing plasmonic responses | SPR biosensors for cancer cell detection |

| Non-Animal Protein (NAP) [21] | Synthetic reference protein for control channel | SAW biosensors compensating for non-specific binding |

| Finite Element Method (FEM) Simulation [25] | Computational modeling of diffusion and reaction layers | Predicting biosensor performance before fabrication |

Successful biosensor translation requires rigorous validation in biologically relevant matrices. The performance pitfalls of sensitivity loss, selectivity issues, and stability failures can be mitigated through comprehensive testing protocols that directly compare performance in fresh blood samples versus idealized commercial standards. Implementation of the experimental frameworks and reagent solutions outlined here provides a foundation for robust biosensor validation, ultimately enhancing the reliability and commercial potential of novel biosensing platforms.

Building a Robust Testing Protocol: Methodologies for Cross-Sample Performance Evaluation

The validation of biosensor performance across different sample matrices is a critical step in the transition from laboratory research to clinical and point-of-care applications. This guide establishes a structured, paired-sample validation workflow to objectively compare biosensor performance between fresh and commercial blood samples—a key methodological consideration in biomarker research and drug development. The fundamental hypothesis is that identical biosensors may yield divergent results when challenged with fresh clinical specimens versus processed, stabilized commercial samples due to differences in metabolite integrity, matrix effects, and sample preparation artifacts. This guide provides a standardized experimental framework to quantify these differences, ensuring data reliability and supporting regulatory submissions for novel diagnostic platforms.

Experimental Design and Methodology

Core Principle: Paired-Sample Analysis

The foundational principle of this workflow is the parallel analysis of matched sample pairs from the same biological source, processed under different conditions. For each donor, blood is collected and simultaneously processed into multiple sample types:

- Fresh Whole Blood (WB): Analyzed immediately after collection to preserve the native metabolome.

- Fresh Plasma/Serum: Processed from fresh blood via centrifugation.

- Commercial Sample Equivalents: Typically lyophilized or stabilized samples reconstituted according to manufacturer specifications.

This paired design allows researchers to isolate the effect of sample processing and storage from biological variation, providing a controlled assessment of matrix effects on biosensor performance [26].

Sample Collection and Preparation Protocol

A standardized protocol is essential for generating comparable and reproducible data.

- Blood Collection: For a single donor, collect blood via three methods to account for collection variability [26]:

  - Venipuncture: Venous blood drawn from the arm with a hypodermic needle.

  - Fingerstick: Capillary blood from a finger.

  - Microblade Device: Capillary blood from the shoulder (e.g., Tasso+ devices).

- Sample Processing:

  - Fresh Whole Blood: Aliquot and analyze immediately.

  - Fresh Plasma: Collect blood in anticoagulant-containing tubes (e.g., EDTA, heparin), centrifuge at 4°C, and aliquot the supernatant plasma for immediate analysis.

  - Fresh Serum: Collect blood in clot-activator tubes, allow to clot for 30 minutes, centrifuge, and aliquot the supernatant serum.

  - Commercial Samples: Reconstitute lyophilized quality control samples or stabilized panel samples as per the manufacturer's protocol.

- Sample Storage: Flash-freeze fresh sample aliquots in liquid nitrogen and store at -80°C if not analyzed immediately. Avoid repeated freeze-thaw cycles.

Biosensor Calibration and Validation Experiment

The following experiment is designed to quantify biosensor performance metrics across the different sample types.

- Step 1: Calibration Curve Generation: Spike a pure solution of the target analyte (e.g., a metabolite or protein) into a synthetic buffer or a pooled, characterized sample matrix. Run the biosensor across a range of known concentrations to establish a reference calibration curve. This defines the ideal performance.

- Step 2: Limit of Detection (LOD) and Limit of Quantification (LOQ): Calculate the LOD and LOQ from the calibration curve using the formulas LOD = 3σ/k and LOQ = 10σ/k, where σ is the standard deviation of the blank response and k is the slope of the calibration curve [27].

- Step 3: Paired-Sample Analysis: Analyze the matched pairs of fresh and commercial samples from multiple donors (recommended n ≥ 5). For each sample, record the biosensor's output signal (e.g., impedance change, fluorescence intensity, colorimetric readout).

- Step 4: Cross-Validation with Reference Method: Analyze all samples using a gold-standard reference method, such as Liquid Chromatography-Mass Spectrometry (LC-MS) for metabolites [26] or Immunoradiometric Assay (IRMA) for proteins like Prostate-Specific Antigen (PSA) [28]. This provides ground-truth values for calculating accuracy.
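
Steps 1 and 2 above can be sketched in a few lines: fit a linear calibration curve, take the slope k, and apply LOD = 3σ/k and LOQ = 10σ/k. The calibration points and blank readings below are hypothetical, and the sketch assumes the response is linear over the calibrated range.

```python
import numpy as np


def lod_loq(concentrations, signals, blank_signals):
    """Return (LOD, LOQ, slope k) from a linear calibration and blank replicates."""
    slope, _intercept = np.polyfit(concentrations, signals, 1)
    if slope == 0:
        raise ValueError("calibration slope is zero; LOD and LOQ are undefined")
    sigma = np.std(blank_signals, ddof=1)  # sample standard deviation of the blank
    k = abs(slope)
    return 3.0 * sigma / k, 10.0 * sigma / k, slope


# Hypothetical calibration: signal = 2.0 * concentration + 0.5 (arbitrary units).
calib_conc = np.array([0.0, 1.0, 2.0, 5.0, 10.0])
calib_signal = 2.0 * calib_conc + 0.5

# Hypothetical replicate blank measurements.
blank_readings = np.array([0.48, 0.52, 0.50, 0.51, 0.49])

lod, loq, k = lod_loq(calib_conc, calib_signal, blank_readings)
print(f"slope k = {k:.3f}, LOD = {lod:.4f}, LOQ = {loq:.4f} (concentration units)")
```

Repeating the calculation with blanks measured in each matrix (buffer, commercial sample, fresh blood) shows directly how much of any LOD shift comes from increased blank noise versus reduced slope.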

Key Performance Metrics and Data Analysis

Quantitative Comparison of Biosensor Performance

The following table summarizes the core quantitative metrics that should be calculated for each sample type to enable objective comparison.

Table 1: Key Performance Metrics for Paired-Sample Validation

| Performance Metric | Description | Calculation Method | Interpretation in Paired Context |

|---|---|---|---|

| Dynamic Range | The range of analyte concentrations over which the biosensor provides a quantifiable signal. | From the calibration curve; from the lowest detectable concentration to the point of signal saturation. | A reduction in fresh samples may indicate matrix interference. |

| Limit of Detection (LOD) | The lowest analyte concentration that can be reliably distinguished from a blank. | LOD = 3σ/k, where σ is the std. dev. of the blank, and k is the calibration curve slope [27]. | Higher LOD in commercial samples could indicate analyte degradation. |

| Sensitivity | The change in biosensor signal per unit change in analyte concentration. | The slope (k) of the linear portion of the calibration curve. | A significant difference suggests matrix-specific effects on the sensing mechanism. |

| Signal-to-Noise Ratio | The ratio of the mean specific signal to the standard deviation of the background noise. | Mean Signal / Standard Deviation of Noise. | Lower ratios in fresh samples may indicate interference from complex biological matrices. |

| Correlation with Reference Method | The agreement between biosensor results and gold-standard measurements. | Linear regression (slope, intercept, R²) or Bland-Altman analysis. | Measures accuracy; a consistent bias between sample types indicates a systematic error. |

| Intra-assay Precision | The reproducibility of repeated measurements within the same run. | Calculated as the Coefficient of Variation (CV = Standard Deviation / Mean). | High CV in one sample type can indicate instability or incompatibility. |
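
Two of the metrics in Table 1 follow directly from replicate measurements. The sketch below computes the coefficient of variation (standard deviation over mean) and the signal-to-noise ratio (mean signal over the standard deviation of the blank) as the table defines them. The replicate values are hypothetical.

```python
import statistics


def coefficient_of_variation(replicates):
    """CV = sample standard deviation / mean (multiply by 100 for percent)."""
    mean = statistics.mean(replicates)
    if mean == 0:
        raise ValueError("mean is zero; CV is undefined")
    return statistics.stdev(replicates) / mean


def signal_to_noise(signal_replicates, blank_replicates):
    """SNR = mean signal / sample standard deviation of the blank."""
    noise_sd = statistics.stdev(blank_replicates)
    if noise_sd == 0:
        raise ValueError("blank noise is zero; SNR is undefined")
    return statistics.mean(signal_replicates) / noise_sd


# Hypothetical triplicate readings for one sample type (arbitrary units).
sample_replicates = [10.2, 9.8, 10.0]
blank_replicates = [0.1, -0.1, 0.0]

cv = coefficient_of_variation(sample_replicates)
snr = signal_to_noise(sample_replicates, blank_replicates)
print(f"CV = {100 * cv:.1f}%, SNR = {snr:.0f}")
```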

Expected Results and Data Interpretation

Research indicates that when identical biofluid types are compared, minimal metabolome differences are observed across blood collection methods (venous, microblade, fingerstick) [26]. This supports the validity of using microsampling in validation workflows. The primary differences are expected between whole blood and plasma/serum, regardless of collection method, due to the removal of cellular components [26].

A well-validated biosensor should show:

- High correlation between results from fresh and commercial samples for the same analyte.

- A consistent, minimal bias between sample types in Bland-Altman analysis.

- Comparable precision (CV) and dynamic range across all tested matrices.
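
The bias criterion above can be checked with a Bland-Altman calculation on paired results. This is a minimal sketch: the five paired values are hypothetical, and the conventional 1.96 × SD limits of agreement assume that the differences are approximately normally distributed.

```python
import numpy as np


def bland_altman(method_a, method_b):
    """Return (bias, lower limit, upper limit) for paired measurements."""
    a = np.asarray(method_a, dtype=float)
    b = np.asarray(method_b, dtype=float)
    if a.shape != b.shape or a.size < 2:
        raise ValueError("need two equally sized arrays with at least 2 pairs")
    diff = a - b
    bias = diff.mean()
    sd = diff.std(ddof=1)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical biosensor readings for five donors (same analyte, same units).
fresh_results = [4.8, 6.1, 7.9, 10.2, 12.1]
commercial_results = [5.0, 6.0, 8.0, 10.0, 12.0]

bias, lower_loa, upper_loa = bland_altman(fresh_results, commercial_results)
print(f"bias = {bias:+.3f}, limits of agreement = [{lower_loa:.3f}, {upper_loa:.3f}]")
```

A bias close to zero with narrow limits of agreement supports treating the two sample types as interchangeable for that analyte. A consistent offset suggests a systematic matrix effect.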

Workflow Visualization

The following diagram illustrates the logical flow and decision points in the paired-sample validation workflow.

Diagram 1: Paired-Sample Validation Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of this validation workflow requires specific, high-quality materials. The following table details key reagent solutions and their functions.

Table 2: Essential Research Reagents and Materials for Biosensor Validation

| Category | Item | Specification / Example | Critical Function in Workflow |

|---|---|---|---|

| Sample Collection | Blood Collection Kits | Hypodermic needles, EDTA/heparin tubes, Tasso+ microblade devices [26], lancets. | Standardizes the initial sample quality and minimizes pre-analytical variation from different draw methods. |

| Biosensor Components | Reporter Protein | Engineered chimeric proteins (e.g., B4E fusion of eIF4E and β-lactamase) [29]. | Generates the detectable signal (e.g., colorimetric change) upon target binding. |

| Biosensor Components | Solid Support | Functionalized beads (e.g., Streptavidin T1 Dynabeads) [29] or electrode arrays. | Immobilizes capture probes (e.g., poly-dT oligonucleotides or antibodies) to isolate the target. |

| Assay Reagents | Buffer Systems | HEPES, KCl, Dithiothreitol (DTT) [29], PBS. | Maintains optimal pH and ionic strength; DTT creates a reducing environment for protein stability. |

| Assay Reagents | Signal Substrate | Nitrocefin [29] or fluorogenic/electroactive substrates. | Compound that is converted by the reporter to generate a measurable signal (color, light, current). |

| Reference Analytics | Gold-Standard Kits | LC-MS metabolite kits [26], IRMA or ELISA kits for specific proteins (e.g., PSA) [28]. | Provides the reference "ground truth" values for calculating biosensor accuracy and correlation. |

| Cell-Based Sensors | Engineered Cells | Vero cells electroinserted with anti-target antibodies (e.g., via MIME technology) [28]. | Serves as the living sensing element in bioelectric impedance biosensors for detecting antigens. |

This paired-sample validation workflow provides a rigorous, standardized framework for evaluating biosensor performance against the benchmark of fresh clinical samples. By systematically quantifying differences against commercial standards and gold-standard reference methods, researchers can confidently identify and address matrix-related interferences. The application of this design, utilizing the outlined protocols, metrics, and essential tools, will enhance the reliability of biosensor data, accelerate development cycles, and strengthen the case for clinical adoption of novel diagnostic platforms.

The validation of biosensor performance critically depends on the sample matrix, presenting a significant challenge for researchers developing diagnostic tools for complex biological fluids. A notable gap exists between the high volume of academic research on biosensors and the limited number of commercially successful products, largely due to difficulties in translating laboratory proof-of-concept devices into robust, reliable systems that perform consistently in real-world samples [12] [30]. While many biosensors demonstrate exceptional analytical performance with purified targets in buffer solutions, their functionality often deteriorates in clinically relevant matrices like blood, sweat, saliva, and interstitial fluid due to fouling, interference, and variable composition [12]. This comparison guide objectively evaluates electrochemical, optical, and wearable biosensor platforms within the specific context of validating performance in fresh versus commercial blood samples, providing researchers with critical insights for selecting appropriate sensing modalities for their diagnostic development workflows.

Technical Comparison of Biosensing Modalities

The selection of an appropriate biosensing platform requires careful consideration of transduction mechanisms, material compatibility, and operational requirements, particularly for applications involving complex biological fluids. Each platform offers distinct advantages and limitations for integration into wearable formats and performance in real-world matrices.

Table 1: Core Characteristics of Major Biosensor Platforms for Complex Fluid Analysis

| Parameter | Electrochemical Biosensors | Optical Biosensors | Wearable Biosensors |

|---|---|---|---|

| Fundamental Principle | Measures electrical changes (current, potential, impedance) from biological recognition events [31] | Detects optical signal changes (absorption, fluorescence, SPR) from analyte interaction [32] | Integrated platforms (electrochemical/optical) for continuous, on-body monitoring [33] |

| Key Sensing Mechanisms | Amperometric, voltammetric, potentiometric, impedimetric [31] [34] | Surface Plasmon Resonance (SPR), fluorescence, chemiluminescence, SERS [32] | Skin patches, microneedles, textiles, wrist-worn devices [33] [35] |

| Typical Sensitivity Range | Attomolar to picomolar (for advanced nanostructured sensors) [34] | Picomolar (fluorescence, SPR) [34] | Varies with transduction method; often micromolar for continuous monitoring in sweat [31] [35] |

| Sample Volume Requirements | Low (microliters) [12] | Low to moderate [32] | Continuous, non-invasive sampling (nanoliter to microliter per hour) [33] [35] |

| Compatibility with Blood Samples | High (established with blood glucose meters) [12] | Moderate (can be affected by sample turbidity and light absorption) [32] | Primarily targets surrogate fluids (sweat, ISF); direct blood contact less common [33] |

| Susceptibility to Matrix Effects | Moderate to High (dependent on surface fouling and interferents) [12] [30] | Low to Moderate (immune to electromagnetic interference) [32] | High (dynamic composition of sweat/saliva; motion artifacts) [32] [33] |

Electrochemical biosensors leverage biological recognition elements (e.g., enzymes, antibodies) coupled to electrochemical transducers to convert biological interactions into quantifiable electrical signals [30]. Their commercial success in blood glucose monitoring demonstrates a proven pathway for blood-based analysis, though challenges remain in achieving similar success for other targets [12]. These sensors are particularly valued for their high sensitivity, portability, and compatibility with mass manufacturing techniques [30]. Nanomaterials like graphene, carbon nanotubes, and metal nanoparticles have been extensively incorporated to enhance electron transfer and increase the electroactive surface area, pushing detection limits to exceptionally low concentrations [31] [34].

Optical biosensors utilize various light-matter interactions to detect target analytes, offering advantages such as high sensitivity, immunity to electromagnetic interference, and capability for multiplexing through spectral separation [32]. While not all optical sensing is non-invasive, these platforms are particularly promising for wearable applications that target biofluids like sweat, tears, or saliva, as they can often be engineered to function without direct blood contact [32] [35]. However, their performance in turbid media like whole blood can be compromised by light scattering and absorption, requiring sophisticated sample processing or optical designs to mitigate these effects [32].

Wearable biosensors represent an integrated systems-level approach, incorporating either electrochemical or optical transduction mechanisms into miniaturized, body-worn form factors [33] [35]. These devices facilitate continuous, real-time monitoring of biomarkers, moving beyond snapshot measurements to dynamic health assessment. A significant research focus in this area is establishing robust correlations between analyte concentrations in easily accessible biofluids (e.g., sweat, interstitial fluid) and their levels in blood, which remains the clinical gold standard for many diagnostics [33].

Performance Analysis in Complex Fluids

Validating biosensor performance in complex biological matrices is a critical step in translational development. This process requires rigorous testing against standardized reference methods and careful assessment of potential interferents.

Table 2: Analytical Performance and Validation Data for Biosensor Platforms

| Sensor Platform | Reported LoD (Buffer) | Reported LoD (Complex Fluid) | Key Interferents | Validation Approach |

|---|---|---|---|---|

| Electrochemical (Lactate) | ~0.05 nM (DPV in buffer) [31] | Not specified; signal attenuation common in blood [31] | Ascorbic acid, uric acid, acetaminophen [31] | Amperometric comparison with standard clinical analyzers [31] |

| Optical (SPR-based Immunosensor) | ~pM range for proteins [32] | Signal degradation in undiluted serum/blood [32] | Non-specific protein adsorption, cellular components [32] | Cross-validation with ELISA on split patient samples [32] |

| Wearable (Sweat Zinc Patch) | N/A (on-body calibration) | 1–200 µM (in human sweat) [33] | Other metal ions (e.g., Cu²⁺, Cd²⁺) [33] | On-body testing during exercise; reference method not specified [33] |

| Electrochemical (Glucose Meter) | N/A | ~5–10% deviation from lab standard in capillary blood [12] | Maltose, galactose (varies by sensor chemistry) [12] | Extensive clinical trials following FDA/ISO guidelines [12] |

A critical challenge in biosensor development is the discrepancy between performance in clean buffers versus complex biological samples. Many highly sensitive detection methods, including some electrochemical and optical platforms, experience significant performance degradation when transitioning from purified analyte solutions to heterogeneous clinical samples like blood, plasma, or serum [12]. This performance gap often arises from the "matrix effect," where other components in the sample interfere with the sensing mechanism through fouling, non-specific binding, or generating overlapping signals [12] [30]. Consequently, a new sensor must be tested on various unmodified, unspiked samples and cross-validated with a reference method to establish clinical credibility [12].

The distinction between fresh and commercial blood samples is particularly relevant for validation workflows. Commercial quality control samples and biobanked specimens provide consistency for initial benchmarking but may lack the full complexity and dynamic cellular components of freshly drawn blood. For instance, red blood cells can alter the viscosity and optical properties of fresh blood, potentially affecting sensor readings in ways that are not apparent in processed commercial samples [12]. Researchers must therefore incorporate fresh clinical samples early in the validation pipeline to identify matrix-related challenges and refine sensor interfaces accordingly.

Experimental Protocols for Biosensor Validation

Protocol for Cross-Validation in Fresh vs. Commercial Blood Samples

Objective: To systematically compare the performance of a biosensor platform using fresh human blood and commercial blood samples to quantify matrix-induced deviations.

Step 1: Sample Preparation

- Collect fresh human whole blood via venipuncture using anticoagulant tubes (e.g., K2EDTA) from consented donors following institutional review board (IRB) protocols.

- Source commercially available human whole blood quality control materials from certified vendors.

- Spike both sample types with a known concentration gradient of the target analyte (e.g., glucose, lactate, cardiac troponin) and allow for equilibration at room temperature for 30 minutes.

Step 2: Sensor Measurement

- For electrochemical sensors, perform measurements using a potentiostat in the relevant mode (e.g., amperometry for continuous monitoring, DPV for specific biomarkers) [31] [34].

- For optical sensors, acquire signals using the appropriate reader (e.g., fluorescence microscope, SPR spectrometer) [32].

- Analyze all samples in triplicate across at least three independent experimental runs so that within-run and between-run variability can be estimated.

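Step 3: Reference Method Analysis

- Analyze aliquots of the same samples with an established reference method (e.g., a standard clinical analyzer or ELISA) to obtain the comparison values used in Step 4.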

Step 4: Data Analysis

- Calculate key performance metrics: Limit of Detection (LoD), sensitivity, and recovery efficiency for both fresh and commercial blood matrices.

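- Perform statistical analysis to compare sensor results with the reference method and to compare data obtained from fresh versus commercial samples: use a Bland-Altman plot to assess agreement (bias and limits of agreement) and Pearson's correlation to assess linear association, since a high correlation alone does not demonstrate agreement.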
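
The Step 4 metrics can be sketched in a few lines of Python. This is a minimal illustration rather than a validated analysis pipeline: the function names are hypothetical, the LoD uses the 3.3 × SD(blank) / slope convention (one common choice, as in ICH Q2; the protocol above does not specify a formula), and recovery assumes the endogenous analyte level of each matrix has been measured separately.

```python
from statistics import mean, stdev


def fit_line(x, y):
    """Ordinary least-squares slope and intercept of a calibration curve."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def limit_of_detection(blank_signals, slope):
    """LoD as 3.3 * SD(blank) / slope (assumed convention, see lead-in)."""
    return 3.3 * stdev(blank_signals) / slope


def recovery_percent(measured, endogenous, spiked):
    """Percentage of the spiked analyte that the sensor actually reports."""
    return 100.0 * (measured - endogenous) / spiked


def bland_altman(sensor, reference):
    """Mean bias and 95% limits of agreement between paired measurements."""
    diffs = [s - r for s, r in zip(sensor, reference)]
    bias = mean(diffs)
    half_width = 1.96 * stdev(diffs)
    return bias, bias - half_width, bias + half_width
```

Running the same functions once on the fresh-blood data and once on the commercial-blood data gives directly comparable slopes (sensitivity), LoDs, and recoveries, and the difference between the two sets is the matrix-induced deviation the protocol aims to quantify.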

Protocol for Assessing Biofouling in Complex Fluids

Objective: To evaluate the susceptibility of the sensor surface to fouling and its impact on long-term signal stability.

Step 1: Sensor Functionalization

- Modify sensor surfaces with appropriate biorecognition elements (e.g., enzymes, antibodies, aptamers) and anti-fouling coatings (e.g., PEG, zwitterionic polymers) [33].

Step 2: Exposure to Complex Matrices

- Incubate functionalized sensors in undiluted blood plasma, serum, or artificial sweat for predetermined intervals (e.g., 1, 6, 24 hours) at 37°C.

Step 3: Signal Measurement

- Measure the sensor response to a fixed concentration of the target analyte before and after exposure to the complex fluid.

- Quantify signal drift and change in sensitivity.

Step 4: Surface Characterization

- Use techniques like Scanning Electron Microscopy (SEM) or Atomic Force Microscopy (AFM) to inspect the sensor surface for adsorbed proteins or other foulants post-incubation.
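
The signal drift and sensitivity change from Step 3 can be expressed as a percent change relative to the pre-exposure value. The sketch below is illustrative only; the function names and the dictionary layout (incubation time in hours mapped to the post-exposure signal) are assumptions, not part of the protocol.

```python
def percent_change(before, after):
    """Signed percent change relative to the pre-exposure value."""
    if before == 0:
        raise ValueError("pre-exposure value must be non-zero")
    return 100.0 * (after - before) / before


def fouling_summary(baseline_signal, signals_by_hour):
    """Map each incubation time (hours) to the percent signal change.

    baseline_signal: response to the fixed analyte concentration before exposure.
    signals_by_hour: {hours_of_incubation: response after exposure}.
    """
    return {
        hours: percent_change(baseline_signal, signal)
        for hours, signal in sorted(signals_by_hour.items())
    }
```

The same `percent_change` function applies to calibration slopes measured before and after incubation, which separates a loss of sensitivity from a simple baseline offset.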

Diagram 1: Biosensor validation workflow for comparing fresh and commercial blood samples, highlighting parallel measurement paths and data comparison steps.

Essential Research Reagent Solutions

Successful development and validation of biosensors for complex fluids require a carefully selected toolkit of reagents and materials. The following table details essential components and their functions in a typical biosensor research pipeline.

Table 3: Research Reagent Solutions for Biosensor Development and Validation

| Reagent/Material | Function | Example Application |
|---|---|---|
| Biorecognition Elements | Provides specificity for the target analyte | Enzymes (e.g., Glucose Oxidase, Lactate Oxidase), antibodies, aptamers [31] [12] |
| Conductive Polymers | Enhances electron transfer, provides immobilization matrix | Polypyrrole, polyaniline, PEDOT:PSS for electrode modification [34] |
| Nanomaterials | Increases surface area, improves sensitivity & catalytic activity | Graphene, carbon nanotubes, metal nanoparticles (Au, Pt) [31] [32] |
| Anti-Fouling Agents | Reduces non-specific adsorption in complex fluids | Polyethylene glycol (PEG), hydrogels, zwitterionic polymers [33] |
| Electrochemical Mediators | Shuttles electrons between enzyme and electrode surface | Ferrocene derivatives, Prussian Blue, potassium ferricyanide [12] [30] |
| Flexible Substrates | Enables conformable, wearable sensor design | Polydimethylsiloxane (PDMS), polyimide (PI), waterborne polyurethane (PU) [32] [33] |
| Reference Blood Materials | Serves as a consistent matrix for benchmarking | Commercial quality control blood samples (lyophilized or liquid) [12] |

Electrochemical, optical, and wearable biosensor platforms each present a unique profile of advantages and limitations for applications in complex fluids like blood. Electrochemical sensors offer high sensitivity and a proven commercial pathway but can be susceptible to fouling and electrochemical interferents. Optical biosensors provide high specificity and immunity to electromagnetic noise but may struggle with turbid samples. Wearable platforms enable continuous monitoring but require further validation of the correlation between surrogate fluid and blood analyte levels. The critical differentiator for successful translation is rigorous, holistic validation that explicitly tests sensor performance against reference methods using fresh clinical samples early in the development process. By adopting a validation workflow that accounts for the matrix differences between fresh and commercial blood, researchers can substantially de-risk the development pathway and improve the translational potential of their biosensor technologies.

The core of any biosensor is its biorecognition element, the biological or biomimetic component responsible for the specific sequestration of a target bioanalyte [36]. The selection of this element is paramount, as it directly dictates the biosensor's performance in terms of sensitivity, selectivity, reproducibility, and reusability [36]. These characteristics are critically evaluated during the validation of biosensor performance, especially when comparing results from fresh clinical samples against those from commercial or processed blood samples. Biorecognition elements can be broadly classified into natural (e.g., antibodies, enzymes), pseudo-natural (e.g., aptamers), and synthetic (e.g., Molecularly Imprinted Polymers) categories, each with distinct advantages and limitations for detecting proteins, enzymes, and other metabolites [36]. This guide provides a comparative analysis of detection techniques and presents case studies highlighting the experimental protocols and data critical for researchers validating biosensor performance in complex matrices like blood.

Comparative Analysis of Biomolecular Detection Techniques

While ELISA has long been the gold standard for biomolecular detection, modern techniques like Surface Plasmon Resonance (SPR) offer significant advantages for characterizing binding interactions in real time [37].

Table 1: Side-by-Side Comparison of ELISA and SPR Techniques [37]

| Criterion | ELISA | SPR |
|---|---|---|
| Data Measurement | End-point assay; quantifies presence but not kinetics. | Real-time; provides both affinity (KD) and kinetics (ka, kd) data. |
| Label Requirement | Requires tagged antibodies and substrates for signal generation. | Label-free; detection via changes in refractive index. |
| Experiment Length | Long process including coating, incubation, washing, and blocking (>1 day). | Simplified, automated protocols; significantly faster time-to-answer. |
| Low-Affinity Interactions | Poor performance; multiple washing steps can remove low-affinity binders, risking false negatives. | Effectively quantifies both low- and high-affinity interactions. |
| Cost & Maintenance | Highly cost-effective and accessible; uses standard lab equipment. | Higher upfront costs, although modern benchtop systems lower ongoing maintenance. |
| Learning Curve | Short learning curve based on transferable pipetting skills. | Traditionally steep; newer systems feature intuitive software and automation. |

This comparison indicates that SPR outperforms ELISA on most technical criteria, particularly for applications requiring detailed kinetic profiling or the detection of low-affinity interactions, which are common in complex biofluids [37]. For instance, in detecting low-affinity anti-drug antibodies (ADAs), one study found an SPR positivity rate of 4%, compared to only 0.3% by ELISA, a difference that matters for clinical sensitivity [37].
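
To make the affinity and kinetics terms in Table 1 concrete, the sketch below relates the association rate constant (ka), the dissociation rate constant (kd), and the equilibrium dissociation constant (KD = kd / ka). It assumes a simple 1:1 Langmuir binding model, which is a standard textbook description of SPR sensorgrams but is not stated in [37]; the function names and parameter values are hypothetical.

```python
from math import exp


def dissociation_constant(ka, kd):
    """KD in M from ka in 1/(M*s) and kd in 1/s."""
    return kd / ka


def association_response(t, conc, ka, kd, r_max):
    """SPR response at time t (s) during analyte injection, 1:1 model.

    conc is the analyte concentration in M and r_max the response at
    full surface saturation (same units as the returned response).
    """
    k_obs = ka * conc + kd                 # observed rate constant
    r_eq = r_max * ka * conc / k_obs       # plateau (equilibrium) response
    return r_eq * (1.0 - exp(-k_obs * t))


# Illustrative values only: a weak binder with fast dissociation.
kd_weak = dissociation_constant(ka=1e4, kd=1e-1)
print(f"KD = {kd_weak:.1e} M")
```

Under this model, a binder with a large kd dissociates within seconds once the analyte is removed, which is consistent with the table's point that repeated ELISA wash steps can strip low-affinity complexes while a real-time measurement still records them.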

Case Study 1: Validation of a Novel Blood Test for Alzheimer's Disease

Experimental Protocol and Workflow

The recent FDA clearance of the Lumipulse G pTau217/β-Amyloid 1-42 Plasma Ratio test exemplifies a successful transition from biomarker research to a validated clinical blood test [38]. The validation relied on samples from longitudinal cohort studies like the Wisconsin Registry for Alzheimer's Prevention (WRAP). Key steps involved:

- Sample Collection: Paired biofluids (cerebrospinal fluid - CSF - and blood plasma) were collected from registered participants [38].

- Biomarker Correlation: Researchers demonstrated that levels of Alzheimer's-associated proteins (p-tau217 and Aβ42) measured in CSF were congruent with their levels in blood plasma from the same individuals [38].

- Assay Validation: The clinical performance of the blood-based test was validated against the amyloid pathology in the brain, with WRAP and the Wisconsin Alzheimer's Disease Research Center (ADRC) contributing 40% of the validation samples [38].

The logical workflow from discovery to clinical validation is outlined below.

Research Reagent Solutions

- Lumipulse Instrument: An automated, immunoassay-based analyzer used for precise measurement of protein biomarkers in biofluids [38].

- Paired Clinical Samples: Carefully collected and curated CSF and blood plasma samples from well-characterized patient cohorts are essential for correlating blood-based biomarkers to central nervous system pathology [38].

- Specific Antibodies: Immunoassays require highly specific antibodies that recognize the target epitopes on phosphorylated tau (p-tau217) and amyloid-beta (Aβ42) proteins.

Case Study 2: An AI-Based Blood Test for Sepsis Diagnosis and Prognosis

Experimental Protocol and Workflow

The TriVerity test, run on the Myrna instrument, represents a breakthrough in host-response diagnostics for acute infection and sepsis [39]. Its validation was detailed in the prospective, multi-center SEPSIS-SHIELD study.

- Patient Enrollment: 1,441 adult patients presenting to emergency departments with suspected acute infection or sepsis were enrolled [39].

- Sample Testing: The TriVerity test uses isothermal amplification of 29 host immune mRNAs from a blood sample. Machine learning algorithms then process this data to generate three scores: Bacterial, Viral, and Severity [39].

- Data Analysis: Each score (0-50) is placed into an interpretation band (Very Low to Very High). The primary endpoints were clinically adjudicated infection status (bacterial vs. viral vs. non-infectious) and the need for critical care interventions within 7 days [39].

The following diagram illustrates the streamlined experimental workflow.

Performance Data and Comparison to Standard Biomarkers

The TriVerity test was benchmarked against traditional protein biomarkers, demonstrating superior accuracy [39].

Table 2: Diagnostic and Prognostic Accuracy of the TriVerity Test [39]

| TriVerity Score | Target Assessment | AUROC | Comparison to Standard Biomarkers |
|---|---|---|---|
| Bacterial Score | Likelihood of Bacterial Infection | 0.83 | More accurate than CRP, procalcitonin, or white blood cell count. |
| Viral Score | Likelihood of Viral Infection | 0.91 | Superior to standard biomarkers. |
| Severity Score | Need for ICU-Level Care within 7 days | 0.78 | Allows for risk reclassification compared to qSOFA alone. |

The study reported rule-in specificities >92% and rule-out sensitivities >95% for each score. A preliminary utility analysis suggested that using TriVerity could reduce inappropriate antibiotic use by 60-70% [39].
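
For readers less familiar with the metrics in Table 2, the sketch below shows how AUROC, sensitivity, and specificity are computed from a set of scores and adjudicated labels. It is a generic illustration, not the SEPSIS-SHIELD analysis: the function names are hypothetical, and any threshold passed in is a placeholder because the TriVerity band cutoffs are not given here.

```python
def auroc(scores_pos, scores_neg):
    """Probability that a positive case scores higher than a negative one.

    Ties count as one half. This pairwise form is equivalent to the
    area under the ROC curve.
    """
    wins = 0.0
    for p in scores_pos:
        for n in scores_neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(scores_pos) * len(scores_neg))


def sensitivity_specificity(scores_pos, scores_neg, threshold):
    """Sensitivity and specificity when scores >= threshold are called positive."""
    true_pos = sum(s >= threshold for s in scores_pos)
    true_neg = sum(s < threshold for s in scores_neg)
    return true_pos / len(scores_pos), true_neg / len(scores_neg)
```

A test reported with separate rule-in and rule-out performance is evaluated at two thresholds: a high cutoff chosen for specificity and a low cutoff chosen for sensitivity, with intermediate scores left as indeterminate.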

Research Reagent Solutions

- Myrna Instrument: A cartridge-based, automated instrument that performs isothermal amplification and analysis, with an operator hands-on time of under one minute [39].

- Host mRNA Panel: A predefined set of 29 host immune mRNAs associated with infection status, type, and severity.

- Machine Learning Algorithms: Proprietary algorithms that convert the quantitative mRNA data into clinically actionable scores for bacterial infection, viral infection, and illness severity.

Challenges in Biosensor Commercialization and Validation

Despite technological advances, translating biosensor research into commercially successful products remains challenging. Key obstacles identified in the literature, which directly impact studies comparing fresh versus commercial samples, include [12]:

- Stability: The shelf-stability of biorecognition elements (e.g., enzymes, antibodies) is a major concern, particularly for single-use, disposable biosensors [12].

- Specificity and Selectivity: Ensuring high specificity in complex matrices like blood is difficult. Cross-reactivity with non-target analytes must be eliminated [12].

- Reproducibility and Mass Production: Fabricating multiple identical sensors with predictable performance is a significant hurdle for large-scale manufacturing [12].

- Validation with Real Samples: Biosensors must be tested on various unmodified, unspiked samples and cross-validated with a reference method. Performance should not only be confirmed with the target analyte in a clean buffer but also in samples containing all possible interfering substances found in body fluids [12].

The exceptional success of the glucose biosensor is frequently attributed to the intrinsic properties of glucose oxidase, which is inexpensive, has a rapid turnover, and is highly stable at physiological pH and temperature, setting a high bar for other biosensors to achieve [12].

Integrating Microfluidic Devices for Automated Sample Processing and Analysis

The integration of microfluidic devices with biosensors represents a transformative advancement in analytical science, enabling the development of compact, automated systems capable of sophisticated sample processing and detection. A central challenge in this field, however, lies in the validation of biosensor performance across different sample types, particularly when comparing fresh clinical samples with processed commercial blood products. The fundamental thesis guiding this comparison is that the sample matrix—influenced by processing, anticoagulants, and storage conditions—profoundly affects analytical outcomes. Reliable technology translation beyond research settings requires a deep understanding of how these factors influence key performance metrics such as sensitivity, replicability, and operational yield [40].