Implementing Sentinel Sensors for Background Subtraction: Advanced Methodologies for Biomedical and Clinical Research

Abstract

This comprehensive article explores the implementation of Sentinel sensor technology for background subtraction, a critical technique for isolating dynamic signals from complex datasets. Tailored for researchers, scientists, and drug development professionals, we cover foundational principles of background subtraction and Sentinel sensor capabilities, detail methodological implementations including SAR-SIFT-Logarithm Background Subtraction and time-series analysis, provide troubleshooting and optimization strategies for data reliability, and present rigorous validation frameworks. By synthesizing remote sensing innovations with biomedical research needs, this guide enables enhanced precision in detecting subtle biological changes, supporting applications from high-content screening to longitudinal clinical monitoring.

Background Subtraction Fundamentals and Sentinel Sensor Technology

Core Principles of Background Subtraction in Signal Processing

Background subtraction (BS) is a foundational technique in computer vision and signal processing, serving as a critical pre-processing step for isolating moving objects of interest from their surroundings in a sequence of video frames [1]. For researchers implementing sentinel sensor systems, whether for security monitoring, pharmaceutical process tracking, or behavioral observation in drug development, mastering background subtraction is essential for accurate foreground detection. The core principle involves comparing each new video frame against a reference or dynamically updated background model to generate a binary mask where pixels corresponding to moving objects are labeled as foreground [1]. This process enables sentinel systems to focus computational resources on relevant changes while ignoring static or slowly varying environmental elements. Despite its conceptual simplicity, effective background subtraction must overcome significant challenges including dynamic backgrounds with moving elements (e.g., foliage, water), gradual and sudden illumination changes, camera jitter, and the introduction of shadows [1]. This document outlines the core principles, methodologies, and practical protocols for implementing robust background subtraction within sentinel sensor research frameworks.

Theoretical Foundations

Algorithmic Approaches

Background subtraction techniques span from simple statistical models to sophisticated machine learning-based approaches, each with distinct advantages for specific sentinel sensor applications.

Traditional Statistical Methods include frame differencing, which calculates absolute differences between consecutive frames but struggles with slow-moving objects [1]. The running Gaussian average models each pixel as a Gaussian distribution that updates incrementally, providing computational efficiency but limited effectiveness for multi-modal backgrounds [1]. Mixture of Gaussians (MoG) addresses this limitation by representing each pixel with multiple Gaussian distributions to handle complex, multi-modal backgrounds common in outdoor sentinel deployments [2] [1]. Kernel Density Estimation (KDE) offers a non-parametric approach that models background probability density using kernel functions, adapting well to dynamic backgrounds at increased computational cost [1].
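
To make the contrast between the first two methods concrete, the following is a minimal NumPy sketch, not a reference implementation. The class name `RunningGaussianBackground`, the learning rate `alpha`, the decision factor `k`, and the variance floor `min_var` are illustrative assumptions rather than values taken from the cited sources. The synthetic scene shows why frame differencing misses an object that has stopped moving while a per-pixel Gaussian model still flags it.

```python
import numpy as np

def frame_difference(prev_frame, curr_frame, threshold=25.0):
    """Flag pixels whose absolute change between consecutive frames exceeds threshold."""
    diff = np.abs(curr_frame.astype(np.float64) - prev_frame.astype(np.float64))
    return diff > threshold

class RunningGaussianBackground:
    """One Gaussian (mean, variance) per pixel, updated incrementally."""

    def __init__(self, first_frame, alpha=0.05, k=2.5, init_var=25.0, min_var=4.0):
        self.mean = first_frame.astype(np.float64)
        self.var = np.full(first_frame.shape, init_var, dtype=np.float64)
        self.alpha = alpha      # learning rate (assumed value)
        self.k = k              # foreground if |x - mean| > k * std
        self.min_var = min_var  # floor so a perfectly static pixel keeps a usable std

    def apply(self, frame):
        frame = frame.astype(np.float64)
        diff = frame - self.mean
        foreground = np.abs(diff) > self.k * np.sqrt(self.var)
        background = ~foreground
        # Selective update: only pixels classified as background adapt the model.
        self.mean[background] += self.alpha * diff[background]
        updated_var = (1 - self.alpha) * self.var[background] + self.alpha * diff[background] ** 2
        self.var[background] = np.maximum(updated_var, self.min_var)
        return foreground

# Synthetic demo: a static 8x8 scene, then an object that stops moving.
scene = np.full((8, 8), 100, dtype=np.uint8)
model = RunningGaussianBackground(scene)
for _ in range(20):
    model.apply(scene)

with_object = scene.copy()
with_object[2:4, 2:4] = 200  # a 2x2 object that stays put for two frames

diff_mask = frame_difference(with_object, with_object)  # consecutive frames are identical
gauss_mask = model.apply(with_object)                   # compared against the learned model
```

Here `diff_mask` is empty because the two consecutive frames are identical, while `gauss_mask` marks the four object pixels. A multi-modal background would need the mixture or kernel approaches described above.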

Advanced Modern Algorithms include the Visual Background Extractor (ViBe), which uses a non-parametric pixel-level model that maintains a set of background samples for each pixel and updates randomly to preserve temporal consistency [1]. The Pixel-Based Adaptive Segmenter (PBAS) combines statistical modeling with feedback-based adaptation mechanisms that dynamically adjust decision thresholds and learning rates for each pixel [1]. The Codebook model represents each pixel with a codebook of codewords encoding various background states, effectively handling both static and dynamic background elements while enabling efficient memory usage [1]. Recent research has also introduced graph-based approaches such as GraphBGS, which utilizes concepts from graph signal processing and semi-supervised learning, demonstrating particular promise for both static and moving camera scenarios [3]. Morphological methods like the Mathematical Morphology Background Subtraction (MMBS) algorithm analyze texture information in discrete spaces using erosion, dilation, opening, and closing operations to create models robust to global luminance variations [4].

Critical Performance Metrics

Quantitative evaluation of background subtraction algorithms requires multiple metrics to provide a comprehensive assessment of performance characteristics relevant to sentinel sensor applications.

Table 1: Key Performance Metrics for Background Subtraction Algorithms

| Metric | Calculation | Interpretation | Optimal Value |
|---|---|---|---|
| Precision | TP / (TP + FP) | Proportion of correctly identified foreground pixels among all detected foreground pixels | 1 (higher is better) |
| Recall (Sensitivity) | TP / (TP + FN) | Proportion of correctly identified foreground pixels among all actual foreground pixels | 1 (higher is better) |
| F1 Score | 2 × (Precision × Recall) / (Precision + Recall) | Harmonic mean balancing precision and recall | 1 (higher is better) |
| Intersection over Union (IoU) | (Area of Intersection) / (Area of Union) | Overlap between predicted foreground mask and ground truth | 1 (higher is better) |

TP = True Positives, FP = False Positives, FN = False Negatives

These metrics enable objective comparison between different techniques and parameter settings, helping researchers select the most suitable algorithm for specific sentinel applications [1]. The F1 score is particularly valuable when a single performance metric is desired, especially with imbalanced datasets where foreground pixels are substantially outnumbered by background pixels [1]. IoU provides a spatial measure of accuracy that complements pixel-wise metrics, making it especially useful for object detection and segmentation tasks in pharmaceutical research environments [1].
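
The formulas in Table 1 can be computed directly from a predicted mask and a ground-truth mask. The sketch below assumes boolean NumPy masks of equal shape. The function name `mask_metrics` and the convention of returning 0.0 when a denominator is zero are illustrative choices, not part of any cited benchmark. For pixel masks, IoU reduces to TP / (TP + FP + FN).

```python
import numpy as np

def mask_metrics(pred, truth):
    """Pixel-wise precision, recall, F1 and IoU for two boolean masks."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    tp = int(np.sum(pred & truth))    # foreground in both
    fp = int(np.sum(pred & ~truth))   # predicted foreground, actually background
    fn = int(np.sum(~pred & truth))   # missed foreground
    # Assumed convention: an undefined ratio (zero denominator) is reported as 0.0.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if (precision + recall) else 0.0
    iou = tp / (tp + fp + fn) if (tp + fp + fn) else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "iou": iou}

# Ground truth: a 2x2 object. Prediction: the same object shifted one column right.
truth = np.zeros((4, 4), dtype=bool)
truth[1:3, 1:3] = True
pred = np.zeros((4, 4), dtype=bool)
pred[1:3, 2:4] = True

metrics = mask_metrics(pred, truth)
```

In this example TP = FP = FN = 2, so precision, recall, and F1 are all 0.5 while IoU is 1/3, which illustrates that IoU penalizes spatial misplacement more strongly than F1.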

Experimental Protocols

Implementation of Background Subtraction Algorithms

This protocol details the implementation of MoG and KNN background subtraction algorithms using OpenCV, suitable for initial sentinel sensor deployment.

Materials and Equipment:

- Static video sensor or camera system

- Computing hardware with OpenCV 4.0+

- Video sequence data (minimum 100 frames for initialization)

- Ground truth annotations for performance validation

Procedure:

Sensor Calibration and Video Acquisition:

- Position the sentinel sensor in a fixed location with a stable field of view.

- Configure video resolution and frame rate according to monitoring requirements (e.g., 1080p at 30 fps).

- Capture initial video sequences representing various environmental conditions expected during monitoring.

Background Model Initialization:

- Select appropriate algorithm based on environmental conditions:

- For environments with dynamic backgrounds (e.g., vegetation movement, water surfaces), implement KNN (cv2.createBackgroundSubtractorKNN in OpenCV).

- For environments with consistent lighting but multiple background states, implement MOG2 (cv2.createBackgroundSubtractorMOG2 in OpenCV).

- Initialize the model with at least 50-100 frames without foreground objects for stable background learning [5].

Foreground Mask Processing:

- Apply the background subtractor to each frame to obtain the initial foreground mask.

- Refine the mask with morphological post-processing: opening (erosion followed by dilation) removes small noise regions, while closing (dilation followed by erosion) fills small holes in foreground objects [5] [1].

Detection and Tracking:

- Perform connected component analysis on the refined mask to identify distinct foreground objects.

- Apply non-maximum suppression to eliminate overlapping detections [5].

- Implement object tracking by correlating detections across consecutive frames.

Model Update and Adaptation:

- Enable continuous model updating to adapt to gradual environmental changes.

- For MOG2, maintain default learning rate or adjust based on scene dynamics.

- Monitor performance metrics and recalibrate if precision/recall degradation exceeds 15%.

Morphological Background Modeling for Challenging Environments

This protocol implements the Mathematical Morphology Background Subtraction (MMBS) approach, particularly suited for sentinel sensors in outdoor environments with varying luminance conditions [4].

Materials and Equipment:

- Sentinel sensor system with texture capture capability

- Computing hardware supporting morphological operations

- Dataset with global luminance variations for validation

Procedure:

Texture Characterization:

- Convert input frames to grayscale while preserving texture information.

- Apply multi-scale morphological filters to extract texture features invariant to global luminance changes.

Background Model Construction:

- Establish discrete probability density functions for texture intensity at each pixel location.

Foreground-Background Labeling:

- Implement thresholding based on morphological residuals to classify pixels as foreground or background.

Model Update Procedure:

- Implement selective updating strategy that preserves static background elements while incorporating permanent scene changes.

- Adjust model parameters based on global luminance measurements to maintain consistency across lighting conditions.
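
The following sketch illustrates only the underlying idea that a morphological texture feature can be insensitive to a global luminance offset. It is not the MMBS algorithm from [4]: the 3x3 window, the use of the morphological gradient (dilation minus erosion) as the texture feature, and the threshold of 20 are assumptions chosen for illustration.

```python
import numpy as np

def _windows(img, size):
    """Stack every size x size neighborhood offset of img (edge-padded)."""
    pad = size // 2
    padded = np.pad(img, pad, mode="edge")
    h, w = img.shape
    return np.stack([padded[dy:dy + h, dx:dx + w]
                     for dy in range(size) for dx in range(size)])

def erode(img, size=3):
    return _windows(img, size).min(axis=0)

def dilate(img, size=3):
    return _windows(img, size).max(axis=0)

def morphological_gradient(img, size=3):
    # Adding a constant to img shifts dilation and erosion equally, so it cancels here.
    return dilate(img, size) - erode(img, size)

def texture_foreground(model_texture, frame, threshold=20.0):
    residual = np.abs(morphological_gradient(frame) - model_texture)
    return residual > threshold

# Checkerboard-like texture as the static background.
yy, xx = np.indices((32, 32))
scene = 50.0 + 30.0 * ((xx + yy) % 2)
model_texture = morphological_gradient(scene)

brighter = scene + 40.0                  # global luminance change, no new object
with_object = brighter.copy()
with_object[10:14, 10:14] += 80.0        # an object appears under the new lighting

intensity_fg = np.abs(brighter - scene) > 20.0                   # plain differencing
texture_fg_light = texture_foreground(model_texture, brighter)
texture_fg_object = texture_foreground(model_texture, with_object)
```

Plain intensity differencing flags every pixel after the lighting change, while the texture residual flags none. When the object is added, the residual responds along the object boundary rather than in its uniform interior, which is why a practical pipeline would still need region filling or multi-scale filters.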

Performance Evaluation Protocol

This protocol establishes standardized procedures for quantifying background subtraction algorithm performance in sentinel sensor applications.

Materials and Equipment:

- Annotated dataset with pixel-wise ground truth (e.g., CDNet2014 [2])

- Computing environment for metric calculation

- Benchmark video sequences representing specific challenges

Procedure:

Dataset Preparation:

- Select benchmark sequences that represent challenges relevant to the deployment environment (dynamic backgrounds, camera jitter, intermittent motion, shadows).

- Ensure pixel-wise ground truth annotations are available for at least 20% of frames.

Algorithm Execution:

- Process each video sequence through the background subtraction pipeline.

- Generate binary foreground masks for each frame.

Metric Calculation:

- For each frame, compute TP, FP, TN, FN by comparing output masks with ground truth.

- Calculate precision, recall, F1 score, and IoU using the formulas in Table 1.

- Generate aggregate statistics (mean, standard deviation) for each sequence and across all sequences.

Challenge-Specific Evaluation:

- For camera jitter: Evaluate performance degradation specifically on frames after jitter occurs.

- For ghosting artifacts: Measure the number of frames required for the artifact to disappear.

- For high-speed foreground movement: Assess whether "hangover" phenomena appear and their duration [6].

Comparative Analysis:

- Rank algorithms based on composite performance across all metrics.

- Document computational requirements (processing time, memory usage) for resource-constrained sentinel deployments.

Visualization of Background Subtraction Workflows

Core Background Subtraction Process

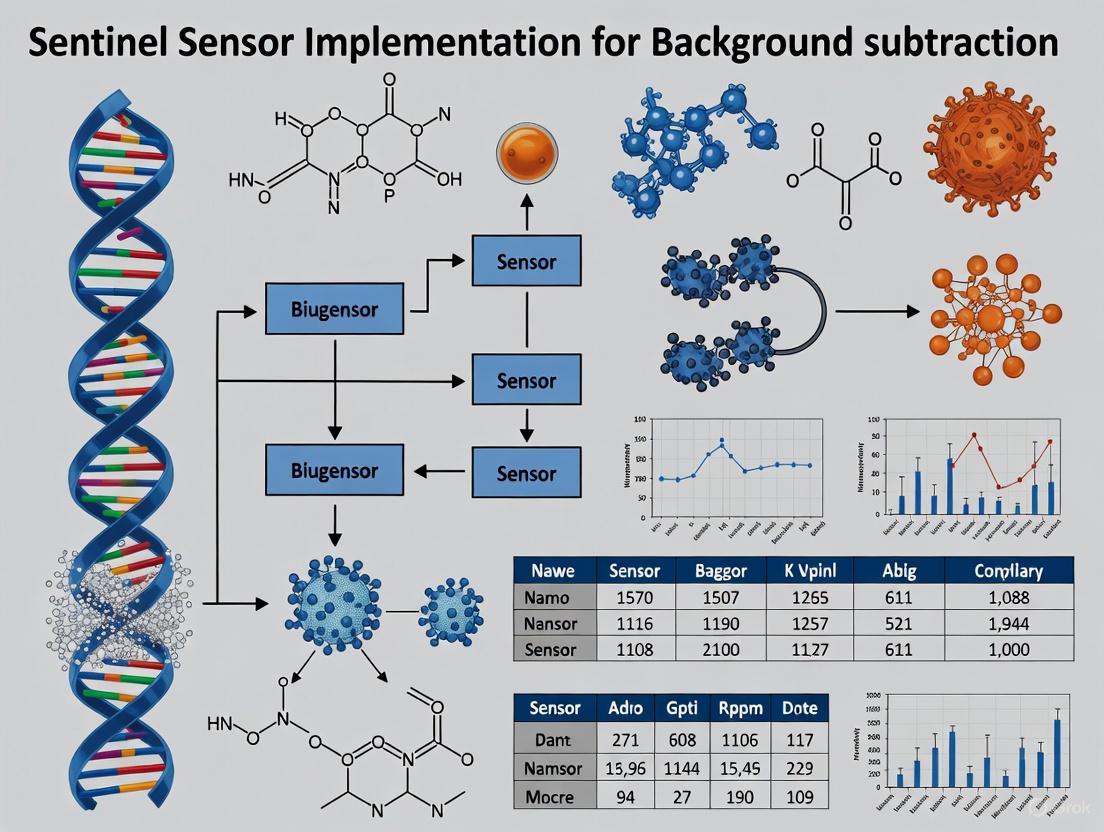

Diagram 1: Core background subtraction workflow with feedback

Morphological Background Subtraction (MMBS) Architecture

Diagram 2: Morphological background subtraction architecture

The Scientist's Toolkit

Research Reagent Solutions

Table 2: Essential Research Materials and Computational Tools for Background Subtraction Research

| Item | Function/Application | Implementation Notes |
|---|---|---|
| OpenCV BackgroundSubtractor Classes | Pre-implemented algorithms (MOG2, KNN, GMG) for rapid prototyping | MOG2 suitable for dynamic backgrounds; KNN effective for shadow detection [5] |
| CDNet2014 Dataset | Benchmark dataset with diverse challenge categories and pixel-wise ground truth | Contains 11 categories including bad weather, low frame-rate, night, PTZ [2] |
| Structural Elements (λ) | Define neighborhood relationships for morphological operations | Size and shape impact sensitivity to noise and object detection capability [4] |
| Graph Signal Processing Tools | Framework for graph-based background subtraction (GraphBGS) | Requires less labeled data than deep learning methods; effective for static and moving cameras [3] |
| Morphological Operators (Erosion, Dilation) | Fundamental operations for noise reduction and mask refinement | Erosion removes small noise regions; dilation fills holes in detected objects [4] [1] |
| Remote Scene IR Dataset | Specialized dataset for infrared video analysis with pixel-wise ground truth | Contains 12 video sequences with 1263 total frames representing specific BS challenges [6] |
| Precision-Recall Evaluation Framework | Quantitative assessment of algorithm performance | Essential for objective comparison between different techniques and parameter settings [1] |

Sentinel-Specific Implementation Considerations

For sentinel sensor deployment in pharmaceutical research and drug development environments, background subtraction systems must address several specialized requirements:

Environmental Adaptation: Sentinel sensors monitoring laboratory environments, production facilities, or animal research areas must accommodate specific challenges including sterile environments with uniform lighting, controlled access areas with intermittent human presence, and regulatory requirements for data integrity and audit trails. Background models should incorporate temporal awareness to distinguish between normal cyclic variations (e.g., lighting changes, scheduled activities) and anomalous events requiring intervention.

Multi-Camera Synchronization: Large-scale sentinel deployments require coordinated background subtraction across multiple sensors. Hardware-based synchronization using external triggers ensures temporal alignment, while software approaches employ timestamp matching or feature-based alignment [1]. View-invariant techniques utilizing homography transformations or 3D scene reconstruction create unified background representations across distributed sensor networks [1].
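
As a small illustration of the software-based timestamp matching mentioned above, the sketch below pairs each frame of a reference camera with the nearest frame of a second camera, rejecting pairs that are too far apart in time. The function name `match_timestamps`, the millisecond units, and the 10 ms tolerance are hypothetical choices for the example.

```python
from bisect import bisect_left

def match_timestamps(reference, other, tolerance):
    """For each reference timestamp, return the index of the nearest timestamp in
    `other` (which must be sorted ascending), or None if none lies within `tolerance`."""
    matches = []
    for t in reference:
        i = bisect_left(other, t)
        # The nearest neighbor is either just before or just after the insertion point.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(other)]
        best = min(candidates, key=lambda j: abs(other[j] - t), default=None)
        if best is not None and abs(other[best] - t) <= tolerance:
            matches.append(best)
        else:
            matches.append(None)
    return matches

# Frame timestamps in milliseconds from two unsynchronized cameras (made-up values).
camera_a_ms = [0, 33, 67, 100]
camera_b_ms = [5, 40, 120]
pairs = match_timestamps(camera_a_ms, camera_b_ms, tolerance=10)
```

Frames without a partner (the `None` entries) can be skipped or interpolated before the per-camera foreground masks are fused. Hardware triggering avoids this step entirely.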

Robustness to Pharmaceutical Workflows: Effective background subtraction in drug development environments must accommodate specific workflow patterns including periodic high-activity periods, varying personnel density, equipment movement, and specialized monitoring conditions such as dark rooms for light-sensitive compounds. Algorithm selection should prioritize adaptability to these specialized conditions while maintaining detection accuracy for security and process monitoring applications.

In this guide, the term "Sentinel sensor" covers two distinct technologies: the Microsoft Sentinel cloud security platform and the Sentinel Earth observation satellites. In the context of background subtraction research, these systems provide data acquisition and processing capabilities that support foreground-background separation across applications ranging from video surveillance to cybersecurity analytics. Microsoft Sentinel operates as a cloud-native SIEM (Security Information and Event Management) system that ingests, correlates, and analyzes security data across enterprise environments using a connector ecosystem of over 350 integrations [7]. Its architectural strength lies in processing heterogeneous data streams to identify threats by distinguishing malicious signals (foreground) from normal system activity (background).

Complementarily, Sentinel satellite platforms, such as those referenced in multispectral imaging research, provide remote sensing capabilities using advanced optical and radar sensors to monitor terrestrial and atmospheric conditions [8]. The implementation of these Sentinel systems for background subtraction research represents a paradigm shift toward multisensor data fusion, where complementary sensing modalities overcome limitations inherent in single-source approaches. This technological convergence enables researchers to address classic background subtraction challenges—including illumination changes, dynamic backgrounds, and camouflage—through robust, multi-dimensional data analysis [9] [10].

Technical Capabilities and Data Specifications

Microsoft Sentinel Platform Architecture

Microsoft Sentinel's sensor capabilities are centered around its log ingestion framework and analytics engine. The platform processes security data through specialized connectors that normalize heterogeneous formats into a unified schema for analysis. Key architectural innovations include the Sentinel graph for visualizing entity relationships, User Entity and Behavior Analytics (UEBA) with expanded support for cross-platform data sources (including AWS, GCP, and Okta), and a Model Context Protocol (MCP) server that standardizes context-aware security automation [11]. These capabilities provide the analytical foundation for implementing sophisticated background subtraction methodologies in cybersecurity threat detection.

The platform's data characteristics are defined by its multi-tiered storage architecture, which includes Analytics and Data Lake tiers optimized for different query patterns and retention requirements. A significant capability enhancement is the introduction of summary rules, which perform real-time data aggregation to create condensed representations of verbose log data. These rules execute precompiled queries at defined intervals, storing results in custom log tables that support efficient historical analysis while reducing storage costs [12]. This functionality is particularly valuable for background subtraction research dealing with high-volume data streams, as it enables persistent querying of summarized security patterns beyond standard retention windows.

Satellite Remote Sensing Capabilities

Sentinel satellite systems provide complementary sensing capabilities through multispectral imaging technologies. The Sentinel-2 mission, for example, delivers optical imagery at spatial resolutions ranging from 10m to 60m across 13 spectral bands, capturing data from visible and near-infrared to shortwave infrared wavelengths [8]. These characteristics enable sophisticated environmental monitoring applications where background subtraction techniques isolate specific phenomena from complex terrestrial backgrounds.

The data characteristics of satellite-based Sentinel sensors include temporal resolution defined by revisit frequency, radiometric resolution determining sensitivity to reflectance variations, and atmospheric penetration capabilities that vary across spectral bands. Research demonstrates that fusion of Sentinel-1 (SAR) and Sentinel-2 (optical) datasets significantly enhances soil moisture assessment by combining the advantages of both sensor types—the vegetation penetration capability of radar with the spectral richness of optical imagery [8]. This multi-sensor approach effectively addresses the classic background subtraction challenge of distinguishing subtle moisture variations from vegetative background interference.

Table 1: Comparative Capabilities of Sentinel Sensor Platforms

| Feature | Microsoft Sentinel | Sentinel Satellite Systems |
|---|---|---|
| Primary Data Type | Security event logs | Multispectral imagery |
| Sensing Methodology | Connector ecosystem | Optical/SAR remote sensing |
| Spatial Characteristics | Logical network topology | 10m-60m ground resolution |
| Temporal Resolution | Real-time streaming | 5-day revisit (Sentinel-2) |
| Key Innovation | Summary rules & UEBA | Cross-sensor data fusion |
| Background Subtraction Application | Threat detection | Environmental change detection |

Experimental Protocols for Background Subtraction

Multi-Modal Sensor Fusion Protocol

The integration of multiple sensing modalities addresses fundamental limitations in single-source background subtraction. This protocol leverages the complementary strengths of different Sentinel sensors to achieve robust foreground detection under challenging conditions.

Materials and Reagents:

- Microsoft Sentinel Workspace: Configured with required permissions and data connectors

- Sentinel Data Lake: Enabled for cost-effective long-term storage

- Summary Rules: Implemented for data aggregation

- Threat Intelligence Feeds: Integrated for IoC matching

Procedure:

- Sensor Configuration: Initialize Microsoft Sentinel data connectors for target security data sources (e.g., firewall logs, identity management systems, cloud platform audits)

- Data Ingestion: Establish streaming of security telemetry into the Sentinel analytics tier using the Codeless Connector Framework

- Background Modeling: Implement summary rules to create baseline profiles of normal system behavior using KQL queries aggregated by time bins

- Foreground Detection: Configure analytics rules to identify anomalous deviations from behavioral baselines

- Multi-Sensor Correlation: Fuse threat intelligence signals with internal telemetry to reduce false positives

- Validation: Compare detection results against ground truth incident data to calculate precision and recall metrics

This protocol specifically addresses the background subtraction challenge of distinguishing true threats from benign anomalies by implementing a layered sensing approach that combines internal behavioral analysis with external threat context [12] [7].
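
The background modeling and foreground detection steps can be mimicked outside the platform to reason about thresholds. The sketch below is a plain-Python analogue, not KQL and not a Microsoft Sentinel API: events are binned by hour, a mean and standard deviation form the baseline, and bins exceeding the baseline by `k` standard deviations are flagged. The function names, `k = 3`, and the `min_sigma` floor are assumptions for illustration.

```python
from statistics import mean, pstdev

def summarize(event_times, bin_seconds, n_bins):
    """Count events per fixed-width time bin (the role a summary rule plays)."""
    counts = [0] * n_bins
    for t in event_times:
        index = int(t // bin_seconds)
        if 0 <= index < n_bins:
            counts[index] += 1
    return counts

def baseline(counts):
    """Background model: mean and population standard deviation of bin counts."""
    return mean(counts), pstdev(counts)

def detect(counts, mu, sigma, k=3.0, min_sigma=1.0):
    """Foreground: bins whose count exceeds mu + k * sigma (sigma floored at min_sigma)."""
    limit = mu + k * max(sigma, min_sigma)
    return [i for i, c in enumerate(counts) if c > limit]

HOUR = 3600
# Made-up history: six hourly bins with 10, 12, 11, 9, 10 and 8 events.
history_events = [b * HOUR + j
                  for b, c in enumerate([10, 12, 11, 9, 10, 8])
                  for j in range(c)]
history_counts = summarize(history_events, HOUR, 6)
mu, sigma = baseline(history_counts)

new_counts = [11, 9, 40, 10]             # hourly counts observed after the baseline
anomalous_bins = detect(new_counts, mu, sigma)
```

Only the third new bin (40 events) exceeds the limit of roughly 13.9. In practice the baseline would be segmented by entity and time of day, and flagged bins would be correlated with threat intelligence before raising an incident.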

Background Subtraction with Color and Depth Fusion

This protocol adapts the Codebook background subtraction algorithm for multi-modal sensing, combining color and depth information to overcome limitations of single-modality approaches. The methodology is based on research demonstrating that depth information is less affected by classic color segmentation issues such as shadows and camouflage [10].

Materials and Reagents:

- Active Depth Sensor: Kinect or similar RGB-D sensor (e.g., a ToF camera) capable of simultaneous RGB and depth capture

- Codebook Algorithm Implementation: With extensions for depth channel processing

- Calibration Targets: For sensor alignment and color correction

- Test Sequences: With ground truth annotations for performance validation

Procedure:

- Sensor Calibration: Align color and depth coordinate spaces to ensure pixel correspondence

- Background Initialization: Acquire training sequence of N frames to construct initial background model

- Codebook Construction: For each pixel, create codewords containing both color (RGB) and depth (D) information:

- Color components: $v_i = (\bar{R}_i, \bar{G}_i, \bar{B}_i)$

- Depth component: $d_i$ = depth value

- Brightness bounds: $I_{\min}$, $I_{\max}$

- Frequency and temporal statistics: $f_i$, $\lambda_i$, $p_i$, $q_i$ [10]

- Background Maintenance: Update codewords using matching criteria combining color distortion and depth similarity:

- Color distortion: $\delta = \sqrt{\|x_t\|^2 - p^2}$, where $p^2 = \langle x_t, v_i \rangle^2 / \|v_i\|^2$

- Depth similarity: $|d_t - d_i| < \varepsilon_d$

- Foreground Detection: Classify pixels as foreground if no matching codeword found in both color and depth dimensions

- Performance Evaluation: Calculate precision, recall, and F-measure using ground truth annotations

This protocol demonstrates significantly improved robustness to illumination changes, shadows, and color-based camouflage compared to single-modality approaches [10].
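
The matching test at the heart of this protocol can be sketched for a single pixel as follows. The color distortion is taken as the distance from the pixel color to the line through the codeword color, i.e. the square root of ‖xt‖² − p², as in the original Codebook formulation. The brightness range uses the common bounds α·Imax and min(β·Imax, Imin/α). The parameter values (`eps_color`, `eps_depth`, `alpha`, `beta`), the dictionary layout, and the rule that a codeword must agree in both color and depth are assumptions for illustration, not settings from [10].

```python
import math

def color_distortion(x, v):
    """Distance from color x to the line through the origin and codeword color v."""
    x_norm2 = sum(c * c for c in x)
    v_norm2 = sum(c * c for c in v)
    if v_norm2 == 0:
        return math.sqrt(x_norm2)
    dot = sum(a * b for a, b in zip(x, v))
    p2 = dot * dot / v_norm2                     # squared projection of x onto v
    return math.sqrt(max(x_norm2 - p2, 0.0))     # clamp tiny negative rounding errors

def matches_codeword(x, depth, codeword, eps_color=10.0, eps_depth=50.0,
                     alpha=0.6, beta=1.3):
    brightness = math.sqrt(sum(c * c for c in x))
    low = alpha * codeword["i_max"]
    high = min(beta * codeword["i_max"], codeword["i_min"] / alpha)
    return (color_distortion(x, codeword["v"]) <= eps_color
            and low <= brightness <= high
            and abs(depth - codeword["d"]) < eps_depth)

def is_foreground(x, depth, codebook):
    """Foreground if no codeword matches in both color and depth (assumed rule)."""
    return not any(matches_codeword(x, depth, cw) for cw in codebook)

# One background codeword: mid-gray surface about 2000 depth units away (made-up values).
codebook = [{"v": (100.0, 100.0, 100.0), "d": 2000.0, "i_min": 170.0, "i_max": 176.0}]

shadowed = is_foreground((80.0, 80.0, 80.0), 2000.0, codebook)       # darker, same depth
camouflaged = is_foreground((100.0, 100.0, 100.0), 1200.0, codebook) # same color, closer
```

The shadowed pixel stays background because its color is collinear with the codeword and its brightness lies inside the allowed range, while the camouflaged object is caught by the depth term alone. That is the complementary behavior the protocol relies on.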

Diagram 1: Background subtraction workflow

Research Reagents and Computational Tools

Table 2: Essential Research Reagent Solutions for Sentinel Sensor Experiments

| Reagent/Tool | Function | Implementation Example |
|---|---|---|
| Microsoft Sentinel Summary Rules | Data aggregation for background modeling | KQL queries with scheduled execution |
| Sentinel Graph | Entity relationship visualization | Interactive attack path analysis |
| Codebook Algorithm | Multi-modal background modeling | RGB-D background subtraction |
| Active Depth Sensors | 3D spatial data acquisition | Kinect, ToF cameras |
| Codeless Connector Framework | Sensor data ingestion | Partner integration to Sentinel |
| Threat Intelligence Feeds | Foreground indicator sources | TI integration with Sentinel |

Data Analysis and Visualization Methodologies

Quantitative Performance Metrics

Background subtraction performance in Sentinel sensor applications requires comprehensive evaluation across multiple dimensions. For cybersecurity implementations, key metrics include detection accuracy (true positive rate), false positive rate, and mean time to respond (MTTR). Microsoft Sentinel's integration with SOAR platforms like BlinkOps has demonstrated MTTR reductions through automated playbook execution [11] [7]. For satellite-based applications, performance is measured through change detection accuracy, temporal consistency, and robustness to environmental factors such as atmospheric conditions and seasonal variations.

The integration of cross-platform UEBA in Microsoft Sentinel has expanded analytical capabilities to include behavioral anomaly detection across diverse data sources including AWS, GCP, and Okta [11]. This multi-source approach addresses the fundamental background subtraction challenge of distinguishing subtle threat signals from noisy system activity across complex enterprise environments.

Visualization of Multi-Sensor Data Relationships

Diagram 2: Multi-sensor data fusion architecture

Sentinel sensor systems represent a significant advancement in background subtraction research through their implementation of multi-modal sensing architectures and adaptive learning capabilities. Microsoft Sentinel's evolution into a unified security platform with graph analytics, expanded UEBA, and summary rules provides a robust framework for distinguishing relevant security events from background system noise [11]. Similarly, the fusion of Sentinel satellite datasets demonstrates how complementary sensing modalities can overcome fundamental limitations in environmental monitoring applications [8].

The continuing development of Sentinel sensor capabilities—particularly in the areas of real-time analytics, cross-platform correlation, and automated response—promises to address persistent challenges in background subtraction research. These include adaptive background maintenance in dynamic environments, disambiguation of foreground entities in crowded scenes, and minimization of false positives without compromising detection sensitivity. As these sensor platforms continue to evolve, they offer increasingly sophisticated foundations for implementing next-generation background subtraction methodologies across diverse application domains.

The Critical Role of Image Registration in Preprocessing Pipelines

Image registration is the computational process of aligning multiple images to a common coordinate system, enabling meaningful comparison, integration, and analysis of data obtained at different times, from different sensors, or from different viewpoints [13]. This process serves as a foundational step in preprocessing pipelines across diverse scientific domains, from medical imaging to remote sensing. In the context of Sentinel sensor implementation for background subtraction research, registration corrects for temporal, spatial, and sensor-specific variations that would otherwise confound the accurate detection of meaningful change against a modeled background.

The essential purpose of registration is to establish spatial correspondence between images, allowing researchers to distinguish genuine scene changes from artifacts induced by variations in acquisition geometry. For Sentinel-based background subtraction research—which aims to detect moving objects, monitor environmental changes, or identify anomalous activities—precise registration is the critical enabler that makes subsequent quantitative analysis scientifically valid [4]. Without proper registration, even sophisticated background models would generate excessive false positives from misaligned scene elements and fail to detect subtle changes of scientific interest.

Theoretical Foundations and Methodologies

Core Registration Principles

Image registration operates on several fundamental principles that transcend specific application domains. The process typically involves four key components: feature detection, where distinctive structures are identified in the images; feature matching, where correspondences between features are established; transform model estimation, where the mathematical mapping between images is determined; and image resampling, where the moving image is transformed to align with the fixed reference [13].

In mathematical terms, registration seeks to find an optimal spatial transformation T that maps coordinates from a moving image I to a reference image R, minimizing a dissimilarity metric D: $\hat{T} = \arg\min_T D(R, I \circ T)$. The complexity of transformation models ranges from simple rigid transformations (rotation and translation only) to affine and complex non-rigid deformations that accommodate local distortions [14]. For Sentinel satellite imagery, the transformation must typically account for orbital variations, terrain relief, and Earth curvature, necessitating sophisticated geometric models that incorporate digital elevation data and precise orbital parameters [15].
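
The minimization above can be made concrete with the simplest possible transformation model. In the sketch below, T is restricted to integer translations, D is the mean squared difference over the image interior, and the optimum is found by exhaustive search. These are simplifying assumptions for illustration. Operational Sentinel registration uses far richer geometric models, and `np.roll` is used only as a convenient cyclic shift.

```python
import numpy as np

def dissimilarity(reference, candidate, margin):
    """D: mean squared difference over the interior, ignoring wrapped-around borders."""
    r = reference[margin:-margin, margin:-margin]
    c = candidate[margin:-margin, margin:-margin]
    return float(np.mean((r - c) ** 2))

def register_translation(reference, moving, max_shift=4):
    """Exhaustive search for the integer shift (dy, dx) that minimizes D."""
    best_shift, best_cost = (0, 0), float("inf")
    for dy in range(-max_shift, max_shift + 1):
        for dx in range(-max_shift, max_shift + 1):
            candidate = np.roll(moving, shift=(dy, dx), axis=(0, 1))  # apply T
            cost = dissimilarity(reference, candidate, margin=max_shift)
            if cost < best_cost:
                best_shift, best_cost = (dy, dx), cost
    return best_shift, best_cost

# Synthetic test: the moving image is the reference displaced by (-2, +3) pixels.
rng = np.random.default_rng(0)
reference = rng.random((40, 40))
moving = np.roll(reference, shift=(-2, 3), axis=(0, 1))

shift, cost = register_translation(reference, moving)
```

The search recovers the correcting shift (2, -3) with zero residual. Rigid, affine, and non-rigid models replace the two-parameter search with gradient-based or feature-based optimization over more parameters.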

Registration in Multi-Modal Contexts

A particular challenge in registration arises when aligning images from different sensor modalities, such as combining synthetic aperture radar (SAR) data from Sentinel-1 with optical imagery from Sentinel-2. In such cases, intensity-based similarity measures commonly used in mono-modal registration often fail due to different sensor-specific representations of the same scene structures [15]. Successful multi-modal registration instead often relies on feature-based methods that extract and match geometrically distinctive elements recognizable across modalities, or information-theoretic measures like mutual information that capture statistical dependencies between different image representations of the same underlying scene [16].
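
Mutual information can be estimated from a joint intensity histogram. The sketch below is a minimal NumPy estimate, assuming 16 histogram bins and natural logarithms. The synthetic "radar-like" image is just a nonlinear, inverted function of the "optical" image, standing in for two modalities that encode the same structure with different intensities. It is not real Sentinel data.

```python
import numpy as np

def mutual_information(a, b, bins=16):
    """Estimate MI (in nats) between two equally sized images from their joint histogram."""
    joint, _, _ = np.histogram2d(a.ravel(), b.ravel(), bins=bins)
    pxy = joint / joint.sum()
    px = pxy.sum(axis=1, keepdims=True)   # marginal of a, shape (bins, 1)
    py = pxy.sum(axis=0, keepdims=True)   # marginal of b, shape (1, bins)
    nonzero = pxy > 0                     # skip empty cells to avoid log(0)
    return float(np.sum(pxy[nonzero] * np.log(pxy[nonzero] / (px @ py)[nonzero])))

rng = np.random.default_rng(0)
optical = rng.random((64, 64))
radar_like = 1.0 - optical ** 2           # same structure, different intensity mapping
misaligned = np.roll(radar_like, 7, axis=1)

mi_aligned = mutual_information(optical, radar_like)
mi_misaligned = mutual_information(optical, misaligned)
```

Although the two images are anti-correlated in intensity, mutual information is high when they are aligned and drops toward zero when one is shifted, which is why it can drive multi-modal registration where intensity differences cannot.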

Image Registration in Sentinel Sensor Pipelines

Sentinel-1 SAR Specific Processing

Sentinel-1 Synthetic Aperture Radar (SAR) data requires specialized preprocessing to correct for geometric distortions inherent to side-looking radar geometry before registration can be effective. The standard preprocessing workflow for Sentinel-1 Ground Range Detected (GRD) products involves a crucial Range Doppler Terrain Correction step that orthorectifies the SAR imagery using orbit state vectors, radar timing annotations, and reference digital elevation models to correct topographic distortions [15]. This process geocodes the SAR scene from radar to geographic geometry, establishing the foundation for precise registration with other data sources.

The preprocessing chain for Sentinel-1 GRD data involves multiple steps that collectively support accurate registration [17] [15]:

- Orbit Correction: Application of precise orbit files to accurately determine satellite position and velocity

- Thermal Noise Removal: Reduction of instrument noise to improve image quality

- Radiometric Calibration: Conversion to radiometrically calibrated sigma nought values

- Terrain Correction: Correction of geometric distortions using the Range Doppler method with a digital elevation model

This standardized workflow ensures that Sentinel-1 products from different acquisition times or tracks can be precisely co-registered for time-series analysis or integrated with other data sources in virtual constellations [15].

Sentinel-2 Processing and Registration

Sentinel-2 multispectral imagery undergoes systematic processing to Level-1C (top-of-atmosphere reflectance) and Level-2A (bottom-of-atmosphere reflectance) products, with geometric correction using a global reference digital elevation model and ground control points [18]. The Processing Baseline (PB) version indicates the algorithm version applied, with successive improvements enhancing geometric performance through refined DEM usage and optimized radiometric and geometric calibrations [18]. For background subtraction research, maintaining consistent Processing Baselines across the dataset is essential for registration stability.

Table 1: Key Sentinel-2 Processing Baseline Improvements Affecting Registration

| Processing Baseline | Acquisition Dates | Geometric Registration Improvements |

|---|---|---|

| PB 05.00 | 4 July 2015 – 31 December 2021 | Geometric refining using Copernicus DEM; Harmonized radiometry between S2A/S2B |

| PB 05.10 | 1 January 2022 – 13 December 2023 | Computing optimizations for processing efficiency |

| PB 05.11 | 4 July 2015 – 13 December 2023 | Optimized geometric refining for improved geolocation accuracy |
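One simple way to check baseline consistency across a dataset is to read the processing baseline from each product name, where it appears as an `Nxxyy` field (for example `N0509` for PB 05.09). The sketch below assumes the standard compact naming convention; the product names listed are illustrative examples, not real downloads.

```python
import re
from collections import defaultdict

# The processing baseline appears in Sentinel-2 product names as "_Nxxyy_".
PB_PATTERN = re.compile(r"_N(\d{2})(\d{2})_")

def group_by_baseline(product_names):
    """Group product names by processing baseline, e.g. '05.09'."""
    groups = defaultdict(list)
    for name in product_names:
        match = PB_PATTERN.search(name)
        key = f"{match.group(1)}.{match.group(2)}" if match else "unknown"
        groups[key].append(name)
    return dict(groups)

# Illustrative product names (hypothetical acquisitions).
products = [
    "S2A_MSIL2A_20230105T101411_N0509_R022_T32TQM_20230105T162253.SAFE",
    "S2B_MSIL2A_20230110T101309_N0509_R022_T32TQM_20230110T130012.SAFE",
    "S2A_MSIL2A_20210611T101031_N0300_R022_T32TQM_20210611T131510.SAFE",
]

groups = group_by_baseline(products)
is_consistent = len(groups) == 1
for baseline, names in sorted(groups.items()):
    print(baseline, len(names))
print("consistent baseline:", is_consistent)
```

A mixed result such as this one suggests reprocessing or re-downloading the older scenes before building a background model from the series.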

Registration for Background Subtraction Research

Theoretical Framework

Background subtraction represents a fundamental computer vision approach for detecting moving objects or changes in image sequences by creating a model that differentiates between static background elements and dynamic foreground elements [4]. The efficacy of any background subtraction methodology is critically dependent on precise image registration, as even sub-pixel misalignments can cause significant artifacts in the foreground/background segmentation.

In mathematical morphology-based background subtraction approaches, registration ensures that the structural elements and morphological operators are applied consistently across the spatial domain [4]. The background model initialization assumes spatial consistency across frames, requiring that corresponding pixels across the image sequence represent the same geographic location. Registration errors manifest as false foreground detections where misaligned background structures are interpreted as scene changes, while simultaneously causing missed detections of actual changes due to spatial smearing in the background model.
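The effect of misregistration on foreground detection can be illustrated with a small synthetic experiment. The sketch below assumes a static scene with one sharp-edged structure, a median background model, and a fixed difference threshold; all values are illustrative.

```python
import numpy as np

rng = np.random.default_rng(1)

# Synthetic static scene: uniform surface with one sharp-edged "building".
scene = np.full((64, 64), 0.3)
scene[20:40, 20:40] = 1.0

def observe(shift_px):
    """Return a noisy view of the static scene, shifted horizontally by shift_px."""
    return np.roll(scene, shift_px, axis=1) + 0.01 * rng.standard_normal(scene.shape)

# Background model: per-pixel median over nine well-registered observations.
background = np.median([observe(0) for _ in range(9)], axis=0)
threshold = 0.1

aligned_mask = np.abs(observe(0) - background) > threshold
shifted_mask = np.abs(observe(1) - background) > threshold

print("false foreground pixels, aligned:", int(aligned_mask.sum()))
print("false foreground pixels, 1 px shift:", int(shifted_mask.sum()))
```

Nothing in the scene changes, yet a one-pixel shift flags both vertical edges of the structure as foreground, which is the false-detection mechanism described above.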

Implementation in Down Syndrome Research

The interdependence between registration and subsequent analysis is illustrated by medical imaging research on Down syndrome, where standardized quantification of brain amyloid deposition using the Centiloid method requires precise registration of T1-weighted MRI and amyloid PET scans to the Montreal Neurological Institute (MNI) 152 template space [19]. The initially low success rate of Centiloid processing in Down syndrome participants (61.3%) rose substantially (to 95.6%) once optimized preprocessing pipelines improved registration performance [19].

This medical imaging case study demonstrates a universal principle applicable to Sentinel background subtraction research: domain-specific anatomical differences (in this case, Down syndrome brain morphology) or scene characteristics can challenge registration algorithms trained on standard templates, necessitating customized preprocessing approaches to achieve reliable results [19]. The research team implemented alternative preprocessing methodologies including image origin reset, filtering, MRI bias correction, and skull stripping to improve registration success, highlighting how targeted preprocessing enables robust registration even with challenging datasets.

Experimental Protocols and Workflows

Sentinel-1 to Sentinel-2 Registration Protocol

This protocol enables precise registration of Sentinel-1 SAR data to Sentinel-2 multispectral imagery grids, facilitating multi-sensor data fusion for enhanced background modeling and change detection.

Table 2: Research Reagent Solutions for Sentinel Registration

| Resource/Tool | Function in Registration | Implementation Notes |

|---|---|---|

| Sentinel Application Platform (SNAP) | Primary processing environment for SAR data | Open-source; contains specialized toolboxes for Sentinel data |

| Copernicus DEM | Digital elevation model for terrain correction | 30m resolution; critical for geometric accuracy |

| Precise Orbit Files | Accurate satellite position and velocity data | Available days/weeks after acquisition; improves geolocation |

| Python (skimage, torchio) | Custom registration algorithm development | Flexible implementation of complex registration transforms |

Procedure:

- Preprocess Sentinel-1 GRD Data using the standard workflow in SNAP: Apply Orbit File → Remove Thermal Noise → Remove Border Noise → Radiometric Calibration (Sigma nought) → Speckle Filtering (Refined Lee) [15].

- Apply Range Doppler Terrain Correction in SNAP, selecting the target Coordinate Reference System to match the Sentinel-2 granule's UTM zone. Set the output pixel spacing to 10m to match Sentinel-2 resolution and use cubic convolution resampling for optimal precision [15].

- Preprocess Sentinel-2 Data by performing atmospheric correction to generate Bottom-of-Atmosphere reflectance (Level-2A product) using the Sen2Cor processor or similar tool.

- Extract Ground Control Points (GCPs) using feature matching algorithms between the terrain-corrected Sentinel-1 image and the Sentinel-2 reference. Suitable features include permanent structures with distinct radar and optical signatures: bridges, airport runways, coastline features, or major infrastructure.

- Calculate Polynomial Transformation based on matched GCPs. For most Sentinel applications, a second-order polynomial sufficiently accounts for residual geometric differences after terrain correction.

- Apply Final Transformation to the Sentinel-1 data using the calculated transformation parameters, resampling to the Sentinel-2 grid using cubic convolution for amplitude data.

- Validate Registration Accuracy by measuring the root mean square error (RMSE) of independent check points not used in transformation calculation. Target accuracy should be sub-pixel (RMSE < 10m for 10m resolution data).
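The polynomial fitting and validation steps above can be sketched with a least-squares fit in NumPy. This is a minimal illustration under stated assumptions: the GCP coordinates are synthetic, expressed in kilometres relative to the scene centre for numerical stability, and the function names are hypothetical.

```python
import numpy as np

def design_matrix(xy):
    """Second-order polynomial terms: 1, x, y, x*y, x^2, y^2."""
    x, y = xy[:, 0], xy[:, 1]
    return np.column_stack([np.ones_like(x), x, y, x * y, x**2, y**2])

def fit_polynomial(src_xy, dst_xy):
    """Least-squares coefficients (6 x 2) mapping source to reference coordinates."""
    coeffs, *_ = np.linalg.lstsq(design_matrix(src_xy), dst_xy, rcond=None)
    return coeffs

def apply_polynomial(coeffs, xy):
    return design_matrix(xy) @ coeffs

def rmse(pred_xy, true_xy):
    """Root mean square of the 2-D point residuals."""
    return float(np.sqrt(np.mean(np.sum((pred_xy - true_xy) ** 2, axis=1))))

rng = np.random.default_rng(2)
# Synthetic matched points (km): small shift, scale and quadratic distortion,
# plus 2 m of feature-matching noise.
src_km = rng.uniform(-5.0, 5.0, size=(40, 2))
dst_km = src_km + np.array([0.012, -0.008]) + 1e-3 * src_km + 1e-4 * src_km**2
dst_km += rng.normal(0.0, 0.002, size=dst_km.shape)

# 30 points estimate the transformation; 10 independent check points validate it.
coeffs = fit_polynomial(src_km[:30], dst_km[:30])
check_rmse_m = 1000.0 * rmse(apply_polynomial(coeffs, src_km[30:]), dst_km[30:])
print(f"check-point RMSE: {check_rmse_m:.2f} m (target < 10 m)")
```

Holding back check points matters because the RMSE of the points used for fitting is optimistic; only the independent residuals indicate whether the sub-pixel target is met.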

Temporal Series Registration for Background Modeling

This protocol establishes a standardized approach for registering multi-temporal Sentinel image sequences to support robust background model initialization and maintenance.

Procedure:

- Select Reference Image from the temporal series based on optimal cloud cover, acquisition geometry, and image quality.

- Perform Pairwise Registration of all temporal images to the reference using feature-based registration. For optical imagery (Sentinel-2), use SIFT or ORB feature detectors; for SAR (Sentinel-1), use SAR-SIFT or similar radar-appropriate detectors.

- Apply Consistent Resampling to all images in the series, transforming them to the reference image's grid using a single resampling operation to minimize quality degradation.

- Validate Temporal Consistency by measuring alignment accuracy across the entire series, paying particular attention to areas with challenging topography where misregistration is most likely.

- Initialize Background Model using the registered temporal series. For each pixel, compute statistical measures (mean, median, variance) across the temporal stack to characterize the background state [4].

- Implement Model Update Mechanism that accommodates both gradual environmental changes and abrupt scene modifications, with registration accuracy determining the update rate and sensitivity parameters.
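The background initialization step can be sketched as per-pixel statistics over the registered stack. The example below is illustrative only: the stack is synthetic, and the deviation test (median plus or minus k standard deviations) is one assumed way of using those statistics, not a prescribed method.

```python
import numpy as np

def background_statistics(stack):
    """Per-pixel statistics over a registered stack shaped (time, rows, cols)."""
    return {
        "mean": stack.mean(axis=0),
        "median": np.median(stack, axis=0),
        "variance": stack.var(axis=0, ddof=1),
    }

def deviation_mask(image, stats, k=6.0, min_std=1e-3):
    """Flag pixels deviating from the median by more than k standard deviations."""
    std = np.sqrt(np.maximum(stats["variance"], min_std**2))
    return np.abs(image - stats["median"]) > k * std

rng = np.random.default_rng(5)
static = rng.uniform(0.2, 0.6, size=(48, 48))               # unchanged scene
stack = static + 0.02 * rng.standard_normal((20, 48, 48))   # 20 registered dates
stats = background_statistics(stack)

# A new acquisition with a changed 5 x 5 patch.
new_image = static + 0.02 * rng.standard_normal((48, 48))
new_image[5:10, 30:35] += 0.5
mask = deviation_mask(new_image, stats)
print("flagged pixels:", int(mask.sum()))
```

The median is used as the background estimate because it tolerates a minority of contaminated dates (clouds, transient objects) better than the mean does.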

Figure 1: Sentinel-1 SAR Preprocessing and Registration Workflow. Critical registration-focused steps highlighted in red and blue.

Quantitative Assessment and Validation

Registration Accuracy Metrics

Systematic quantification of registration accuracy is essential for validating preprocessing pipelines and ensuring the reliability of subsequent background subtraction analyses. The following metrics provide comprehensive assessment of registration performance:

Geometric Accuracy Measures:

- Root Mean Square Error (RMSE): Computed from the residuals of ground control points after transformation. Target values should be sub-pixel (e.g., <10m for 10m resolution Sentinel data).

- Mean Absolute Error (MAE): Less sensitive to outliers than RMSE, providing a robust measure of typical registration error.

- Peak Signal-to-Noise Ratio (PSNR): Particularly useful for evaluating registration quality in homogeneous regions where feature-based measures may be unreliable.

Application-Specific Validation: For background subtraction research, registration quality should additionally be assessed through:

- Background Model Stability: Measuring temporal consistency in static regions of the scene.

- False Positive Rate: Quantifying detection errors in known static areas attributable to misregistration.

- Change Detection Sensitivity: Evaluating the minimum detectable change size as a function of registration accuracy.
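The metrics listed above are straightforward to compute. The sketch below shows one possible formulation with NumPy; the residual values and image arrays are made up for illustration, and residuals are assumed to be scalar point distances in metres.

```python
import numpy as np

def rmse(residuals_m):
    return float(np.sqrt(np.mean(np.square(residuals_m))))

def mae(residuals_m):
    return float(np.mean(np.abs(residuals_m)))

def psnr(reference, registered, data_range=1.0):
    """Peak signal-to-noise ratio in dB between two co-registered images."""
    mse = float(np.mean((reference - registered) ** 2))
    return float("inf") if mse == 0 else float(10.0 * np.log10(data_range**2 / mse))

def false_positive_rate(foreground_mask, static_mask):
    """Share of known-static pixels that were flagged as foreground."""
    return float(foreground_mask[static_mask].mean())

# Illustrative check-point residuals (m) with a single gross outlier.
residuals = np.array([2.0, 3.0, 1.0, 4.0, 2.0, 30.0])
print(f"RMSE = {rmse(residuals):.2f} m, MAE = {mae(residuals):.2f} m")

reference = np.linspace(0.0, 0.8, 100).reshape(10, 10)
print(f"PSNR = {psnr(reference, reference + 0.1):.1f} dB")

foreground = np.zeros((10, 10), dtype=bool)
foreground[0, :5] = True                       # five spurious detections
static_area = np.ones((10, 10), dtype=bool)    # whole tile known to be static
print(f"FPR = {false_positive_rate(foreground, static_area):.2f}")
```

The single outlier pulls the RMSE well above the MAE, which shows why reporting both gives a clearer picture of typical versus worst-case alignment.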

Table 3: Registration Accuracy Requirements for Background Subtraction Applications

| Application Scenario | Required Accuracy | Critical Factors | Validation Approach |

|---|---|---|---|

| Urban traffic monitoring [4] | < 1 pixel | Handling of tall structures; parallax effects | Manual inspection of vehicle detections |

| Agricultural change detection | < 2 pixels | Phenological consistency; field boundaries | Comparison with ground truth crop calendars |

| Flood mapping | < 1.5 pixels | Water boundary precision; temporal urgency | Comparison with high-resolution reference data |

| Forest disturbance | < 2 pixels | Handling of terrain; shadow effects | Correlation with lidar-based change maps |

Image registration represents an indispensable component in the preprocessing pipeline for Sentinel-based background subtraction research, forming the geometric foundation upon which reliable change detection and analysis are built. Through specialized preprocessing workflows that account for sensor-specific characteristics—including terrain correction for SAR data and consistent processing baselines for optical imagery—registration enables the precise spatial alignment required for robust background modeling and accurate foreground detection. The protocols and methodologies presented provide researchers with standardized approaches for implementing registration within their preprocessing pipelines, while the quantitative assessment frameworks offer mechanisms for validating performance against application-specific requirements. As Sentinel constellations continue to generate unprecedented volumes of Earth observation data, sophisticated registration methodologies will remain essential for transforming raw imagery into scientifically valid information for environmental monitoring, urban studies, and security applications.

Adapting Remote Sensing Methodologies for Biomedical Applications

The convergence of remote sensing (RS) methodologies and biomedical analysis represents a frontier in quantitative biology and diagnostic innovation. This paradigm applies algorithms and analytical frameworks originally developed for interpreting satellite, aerial, and unmanned aerial vehicle (UAV) imagery to biomedical data, particularly for isolating signals of interest from complex backgrounds. The core challenge in both fields is identical: to detect meaningful, often subtle, "foreground" signals against a pervasive and variable "background." In ecology, this might be detecting a diseased tree in a forest; in biomedicine, it is identifying a pathological cell in a tissue sample or a specific molecular signature in a complex biofluid [20] [21]. This document outlines detailed application notes and protocols for adapting background subtraction and change detection techniques, framing them within a broader thesis on sentinel sensor implementation for intelligent, automated biomedical analysis.

Quantitative Foundations: Core Remote Sensing Concepts and Biomedical Analogues

The table below summarizes key remote sensing concepts and their direct analogues in biomedical research, establishing a common lexicon for interdisciplinary translation.

Table 1: Translation of Remote Sensing Concepts to Biomedical Applications

| Remote Sensing Concept | Description in RS Context | Biomedical Analogue & Application |

|---|---|---|

| Background Subtraction | Separating static scene (background) from moving or novel objects (foreground) in video or image sequences [9]. | Isolating static or healthy tissue architecture from dynamic pathological features (e.g., circulating tumor cells in blood flow, abnormal cells in histology). |

| Multi-Sensor Data Fusion | Combining data from different sensors (e.g., SAR, optical, infrared) to create a more comprehensive scene understanding and improve change detection [22]. | Integrating multi-modal data (e.g., MRI, CT, genomics) for a holistic patient profile and more sensitive diagnostic classification. |

| Spectral/Spatial Resolution | The fineness of detail in the spectral (wavelength) and spatial (physical area) dimensions of an image [20]. | The level of molecular detail (e.g., proteomic, genomic) and the physical scale of analysis (e.g., tissue, cellular, sub-cellular). |

| Change Detection | Identifying significant alterations in a scene over time by comparing multi-temporal images [22]. | Monitoring disease progression (e.g., tumor growth/regression in serial MRI), or tracking treatment efficacy over time. |

| Vegetation Indices (e.g., NDVI) | Spectral indices calculated from different bands to highlight specific vegetation properties [20]. | "Molecular Phenotypes" or algorithmic combinations of biomarkers (e.g., from transcriptomic data) to classify cell states or disease subtypes. |

| Sentinel Sensor | A dedicated sensor or platform (e.g., Sentinel-1, -2) for continuous, systematic monitoring of the Earth's surface [22]. | A deployed biosensor or diagnostic platform for continuous, automated monitoring of a specific biomarker or physiological parameter in a clinical or lab setting. |

Application Notes & Experimental Protocols

The following protocols detail the practical implementation of adapted remote sensing methodologies.

Protocol 1: Background Subtraction for Cellular Dynamics Analysis

This protocol adapts video background subtraction techniques [9] for analyzing time-lapse microscopy data, such as tracking cell migration or division.

I. Research Reagent Solutions & Essential Materials

Table 2: Essential Materials for Cellular Dynamics Analysis

| Item | Function & Specification |

|---|---|

| Live-Cell Imaging Chamber | Maintains physiological conditions (temperature, CO₂, humidity) for long-term microscopy. |

| Inverted Fluorescence Microscope | Equipped with a high-sensitivity camera (sCMOS recommended) and automated stage. |

| Cell Line with Fluorescent Tag | e.g., H2B-GFP for nucleus labeling, enabling clear foreground (cell) segmentation. |

| Image Acquisition Software | e.g., MetaMorph, µManager, for automated, multi-position, time-lapse acquisition. |

| Computing Workstation | High RAM (>32 GB) and multi-core CPU/GPU for processing large image datasets. |

II. Experimental Workflow

The following diagram illustrates the core computational workflow for adapting background subtraction to cellular time-lapse data.

III. Step-by-Step Methodology

Data Acquisition:

- Seed cells in an appropriate live-cell imaging dish.

- Mount the dish on the pre-equilibrated imaging chamber.

- Program the acquisition software to capture images at multiple positions at defined intervals (e.g., every 10 minutes for 48 hours) using a 10x or 20x objective.

Computational Analysis:

- Background Initialization: Load the first N frames (e.g., N = 50) of the time series. Compute the median or Gaussian average intensity for each pixel across these frames to generate the initial background model [9].

- Foreground Detection: For each subsequent frame, perform a pixel-wise subtraction of the background model. Apply a threshold to the resulting difference image to create a binary mask where foreground pixels (likely cells) are 1 and background pixels are 0.

- Noise Reduction & Segmentation: Apply morphological "closing" (dilation followed by erosion) to the binary mask to fill small holes within cells. Apply "opening" (erosion followed by dilation) to remove small, noise-induced foreground pixels [9].

- Object Tracking & Quantification: Use a tracking algorithm (e.g., nearest-neighbor) to link cell centroids across frames. Quantify parameters like trajectory, displacement, and speed.

- Background Maintenance: Implement a model update strategy. A common method is to slowly update the background model for pixels not classified as foreground, e.g., BG(t+1) = α * BG(t) + (1-α) * I(t), where I(t) is the current frame and α is a learning rate between 0 and 1 [9].
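The computational steps above can be sketched on synthetic data as follows. This is a minimal illustration, assuming a single bright "cell" drifting across a dim field; the threshold, learning rate, and image sizes are arbitrary, and the morphological clean-up and multi-object tracking steps are omitted for brevity.

```python
import numpy as np

def init_background(frames):
    """Per-pixel median over the initialization frames."""
    return np.median(frames, axis=0)

def detect_foreground(frame, background, threshold):
    return np.abs(frame - background) > threshold

def update_background(background, frame, foreground, alpha=0.95):
    """BG(t+1) = alpha * BG(t) + (1 - alpha) * I(t), for non-foreground pixels only."""
    updated = alpha * background + (1.0 - alpha) * frame
    return np.where(foreground, background, updated)

rng = np.random.default_rng(6)
n_frames, n_init = 80, 50
frames = 0.1 + 0.01 * rng.standard_normal((n_frames, 48, 48))
for t in range(n_frames):
    col = t // 2                          # the cell moves one pixel every two frames
    frames[t, 20:24, col:col + 4] = 0.8   # 4 x 4 fluorescent "cell"

background = init_background(frames[:n_init])
centroids = []
for frame in frames[n_init:]:
    fg = detect_foreground(frame, background, threshold=0.2)
    if fg.any():
        rows, cols = np.nonzero(fg)
        centroids.append((float(rows.mean()), float(cols.mean())))
    background = update_background(background, frame, fg)

print("first centroid:", centroids[0], "last centroid:", centroids[-1])
```

Because the cell never occupies any pixel for more than a small fraction of the initialization frames, the median recovers the empty background even though the cell is present throughout.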

Protocol 2: Multi-Sensor Anomalous Change Detection for Multi-Omics Integration

This protocol adapts Multi-Sensor Anomalous Change Detection (MSACD) [22] for identifying significant outliers in integrated multi-omics datasets (e.g., transcriptomic and proteomic data from the same patient cohort).

I. Research Reagent Solutions & Essential Materials

Table 3: Essential Materials for Multi-Omics Change Detection

| Item | Function & Specification |

|---|---|

| Biospecimens | Matched patient samples (e.g., tumor vs. normal tissue) for multi-assay analysis. |

| RNA-Seq Platform | For generating genome-wide transcriptomic data. |

| Proteomics Platform | e.g., Mass spectrometry, for generating protein abundance data. |

| High-Performance Computing Cluster | For computationally intensive matrix operations and distribution analysis. |

| Bioinformatics Software | R or Python environment with libraries for multivariate statistics (e.g., NumPy, scikit-learn). |

II. Analytical Workflow

The workflow for integrating heterogeneous data types to find anomalous samples mirrors the MSACD approach used in satellite imagery.

III. Step-by-Step Methodology

Data Preprocessing:

- Obtain normalized and batch-corrected transcriptomic (X) and proteomic (Y) datasets from N matched samples.

- Perform log-transformation and standardization (z-score) on both datasets to ensure comparability.

Joint Distribution Modeling:

- The core of MSACD is to model the expected relationship between the two data modalities. Use Canonical Correlation Analysis (CCA) to find the linear combinations of transcripts and proteins that are maximally correlated [22].

- Project the original data onto the canonical components to define a joint feature space.

Anomalous Change Detection:

- For each sample i, calculate the residual between its actual data and the data predicted by the joint model. In the CCA space, this can be the difference between the actual and predicted canonical scores.

- Compute the Mahalanobis distance of the residuals for each sample. This distance measures how far a sample's multi-omics relationship deviates from the normative relationship established by the cohort, accounting for covariance [22].

- This Mahalanobis distance is the Anomaly Score.

Outlier Identification & Validation:

- Rank samples by their anomaly score. Set a statistical threshold (e.g., top 5% or values beyond 3 standard deviations) to identify significant outliers.

- Biologically validate these outlier patients by examining their clinical records, survival outcomes, or conducting pathway enrichment analysis on their discordant features to understand the biological basis of the anomaly.
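The joint modeling and scoring steps of this protocol can be sketched with NumPy alone. The example below is an illustrative assumption rather than the MSACD reference implementation: CCA is computed by whitening each block and taking an SVD of the cross-covariance, the predicted canonical score is taken as the canonical correlation times the paired score, and the "omics" matrices are synthetic (already on a comparable scale, so the log-transform is skipped). A library routine such as scikit-learn's `CCA` could be substituted.

```python
import numpy as np

def zscore(a):
    return (a - a.mean(axis=0)) / a.std(axis=0, ddof=1)

def inv_sqrt(cov, ridge=1e-6):
    """Inverse matrix square root of a symmetric covariance (small ridge for stability)."""
    w, v = np.linalg.eigh(cov + ridge * np.eye(cov.shape[0]))
    return v @ np.diag(1.0 / np.sqrt(w)) @ v.T

def cca_anomaly_scores(x, y, n_components=2):
    """Mahalanobis distance of CCA residuals, one score per sample."""
    x, y = zscore(x), zscore(y)
    n = x.shape[0]
    kx = inv_sqrt(x.T @ x / (n - 1))
    ky = inv_sqrt(y.T @ y / (n - 1))
    u, rho, vt = np.linalg.svd(kx @ (x.T @ y / (n - 1)) @ ky)
    k = n_components
    x_scores = x @ kx @ u[:, :k]             # canonical variates of X
    y_scores = y @ ky @ vt.T[:, :k]          # canonical variates of Y
    residuals = x_scores - rho[:k] * y_scores
    residuals = residuals - residuals.mean(axis=0)
    cov_inv = np.linalg.inv(np.atleast_2d(np.cov(residuals, rowvar=False)))
    return np.sqrt(np.einsum("ij,jk,ik->i", residuals, cov_inv, residuals))

rng = np.random.default_rng(3)
n = 200
latent = rng.standard_normal((n, 2))         # shared biology driving both layers
wx, wy = rng.standard_normal((2, 5)), rng.standard_normal((2, 4))
x = latent @ wx + 0.1 * rng.standard_normal((n, 5))   # "transcripts"
y = latent @ wy + 0.1 * rng.standard_normal((n, 4))   # "proteins"

# Sample 0 is made discordant: its protein profile contradicts its transcript profile.
z0 = np.array([2.5, -2.5])
x[0], y[0] = z0 @ wx, -z0 @ wy

scores = cca_anomaly_scores(x, y)
top = int(np.argmax(scores))
flagged = np.flatnonzero(scores > np.quantile(scores, 0.95))   # top 5% rule
print("highest anomaly score at sample", top, "| flagged:", flagged.size)
```

Note that the discordant sample is not extreme in either data layer on its own; it stands out only because the relationship between the two layers is broken, which is the property this protocol is designed to detect.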

The Scientist's Toolkit: Research Reagent Solutions

The following table expands on the essential tools and reagents for implementing these adapted methodologies.

Table 4: Comprehensive Research Reagent Solutions for Sentinel Sensor Implementation

| Category / Item | Specific Example / Technology | Function in Protocol |

|---|---|---|

| Imaging & Sensing | | |

| High-Content Screening System | PerkinElmer Operetta, ImageXpress Micro | Automated, high-throughput version of Protocol 1 for drug discovery. |

| Sentinel Microfluidic Device | Custom-designed PDMS chip with integrated sensors | Acts as the "sentinel sensor" for continuous, automated monitoring of cells or biomarkers in a micro-environment. |

| Computational Frameworks | | |

| Dynamic Cultural-Environmental Network (DCEN) [23] | Custom graph-based model (Python/TensorFlow) | A framework for modeling complex, bidirectional interactions, adaptable to cell-signaling pathways or host-pathogen interactions. |

| Optimized Attention Residual Network (OARN) [21] | Custom deep learning model (PyTorch) | For image super-resolution in biomedical imaging, enhancing detail in low-resolution MRI or histology scans. |

| Background Subtraction Algorithms | Gaussian Mixture Model (GMM) [9] | The core computational engine for distinguishing foreground cells from background in Protocol 1. |

| Data Types | | |

| Multispectral/Hyperspectral Imagery | Satellite data (Landsat, Sentinel-2) [20] | The original RS data; its analysis inspires the feature extraction and classification techniques used for complex biomedical images. |

| Synthetic Aperture Radar (SAR) Data | Sentinel-1 [22] | Provides all-weather, surface structure data; analogous to ultrasound or OCT in biomedicine for structural analysis independent of "optical" conditions. |

The implementation of Sentinel sensor data, particularly from the MultiSpectral Instrument (MSI) onboard Sentinel-2 satellites, has inaugurated a new era in high-to-moderate resolution imaging of Earth's resources [24]. Background subtraction stands as a fundamental low-level operation in the processing workflow of this data, aimed at separating persistent scene elements (background) from unexpected or moving entities (foreground) [9]. Within the broader context of a thesis on Sentinel sensor implementation for background subtraction research, this document addresses three interconnected pillars crucial for data quality and algorithmic performance: noise reduction, radiometric calibration, and data fidelity. These components are essential for developing robust applications in environmental monitoring, change detection, and moving object identification using satellite imagery.

Technical Challenges in Sentinel Data Processing

Noise Reduction

Noise in Sentinel imagery manifests from various sources, including sensor electronics, atmospheric interference, and varying illumination conditions. This noise presents significant challenges for background subtraction algorithms, which rely on stable statistical models of the background scene [25] [10].

- Environmental Noise: Dynamic backgrounds such as oscillating tree branches, water surfaces, and changing weather conditions contravene the static background assumption, leading to frequent false positives in foreground detection [9].

- Sensor-Induced Noise: Electronic noise from the MSI sensor and transmission artifacts can introduce spatial and temporal inconsistencies that corrupt the background model initialization and maintenance phases [24].

Radiometric Calibration

Radiometric calibration ensures that the digital numbers recorded by the Sentinel MSI sensor accurately represent the physical properties of the observed scene. This process is fundamental for generating reliable remote sensing reflectance products (Rrs), which are essential for retrieving near-surface concentrations of water constituents [24].

- Vicarious Calibration: Following vicarious calibrations using reference in-situ water-leaving radiances, studies have demonstrated overall absolute relative differences of <7% and root mean squared differences (RMSD) of <0.0012 1/sr for the blue and green bands of Sentinel-2A MSI data [24].

- Inter-Sensor Consistency: Calibration validation through intercomparisons with Landsat-8's Operational Land Imager (OLI) products has indicated reasonable product consistency, enabling the combined use of these missions for time-series analysis [24].

Data Fidelity

Data fidelity refers to the accuracy and reliability of the information extracted from the raw sensor data. Challenges to data fidelity directly impact the validity of background models and subsequent foreground detections.

- Atmospheric Corrections: Imperfect atmospheric correction, particularly over water bodies rich in dissolved organic matter or suspended particles, remains a significant source of error, affecting the fidelity of derived products [24].

- Artifact Mitigation: Image artifacts, such as those caused by haze or sea surface-reflected solar radiation at low solar zenith angles, must be minimized to maximize the utility of multi-mission products [24].

Table 1: Key Performance Metrics from Sentinel-2A MSI Validation for Aquatic Applications

| Metric | Blue Band Performance | Green Band Performance | Measurement Context |

|---|---|---|---|

| Absolute Relative Difference | < 7% | < 7% | Post-vicarious calibration [24] |

| Root Mean Squared Difference (RMSD) | < 0.0012 1/sr | < 0.0012 1/sr | Comparison with in-situ water-leaving radiances [24] |

| Product Consistency | Reasonable agreement | Reasonable agreement | Intercomparison with Landsat-8 OLI products [24] |

Experimental Protocols

Protocol 1: Radiometric Calibration and Atmospheric Correction of Sentinel-2 MSI Data

This protocol outlines the procedure for processing Level-1 Sentinel-2 data to atmospherically corrected, radiometrically calibrated surface reflectance products, suitable for background model initialization.

1. Principle: Raw top-of-atmosphere radiance is corrected for atmospheric effects to derive accurate surface reflectance, which is a fundamental input for robust background subtraction algorithms [24].

2. Reagents and Materials:

- Input Data: Sentinel-2 Level-1C Top-of-Atmosphere product.

- Software: SeaWiFS Data Analysis System (SeaDAS) with implemented MSI processing or equivalent radiative transfer model software [24].

- Validation Data: In-situ water-leaving radiance measurements from concurrent field campaigns (for validation purposes).

3. Equipment:

- High-performance computing workstation capable of processing large satellite imagery datasets.

- In-situ spectroradiometers for field validation.

4. Procedure:

1. Data Acquisition: Download the Sentinel-2 Level-1C product for the area and time of interest.

2. Radiometric Calibration: Within the processing software (e.g., SeaDAS), apply the sensor-specific calibration parameters to convert digital numbers to top-of-atmosphere radiance.

3. Atmospheric Correction: Execute an atmospheric correction algorithm to compensate for scattering and absorption by gases and aerosols. This step retrieves the remote sensing reflectance (Rrs).

4. Vicarious Calibration (Optional but Recommended): Adjust the calibration coefficients using match-ups with in-situ radiance measurements from ground truth sites to minimize systematic biases [24].

5. Product Generation: Output the final surface reflectance product for use in background modeling.

5. Analysis: Quantify the calibration accuracy by comparing the satellite-derived Rrs with synchronized in-situ measurements. The target performance is an absolute relative difference of <7% and an RMSD of <0.0012 1/sr for visible bands [24].
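The accuracy check in the analysis step can be sketched as below. The match-up values are hypothetical placeholders rather than real measurements, and the absolute relative difference is assumed here to be the mean of |satellite - in situ| / in situ, expressed in percent; the source does not specify whether a mean or median is used.

```python
import numpy as np

def absolute_relative_difference(satellite, in_situ):
    """Mean absolute relative difference in percent (assumed definition)."""
    return float(100.0 * np.mean(np.abs(satellite - in_situ) / in_situ))

def rmsd(satellite, in_situ):
    """Root mean squared difference, in the units of the inputs (1/sr)."""
    return float(np.sqrt(np.mean((satellite - in_situ) ** 2)))

# Hypothetical Rrs match-ups (1/sr) for a single band.
in_situ = np.array([0.0050, 0.0062, 0.0048, 0.0071, 0.0055])
satellite = np.array([0.0052, 0.0060, 0.0050, 0.0069, 0.0057])

ard = absolute_relative_difference(satellite, in_situ)
diff = rmsd(satellite, in_situ)
meets_target = ard < 7.0 and diff < 0.0012

print(f"ARD = {ard:.2f} %, RMSD = {diff:.5f} 1/sr, meets target: {meets_target}")
```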

Protocol 2: Multi-Modal Background Model Initialization with Color and Depth

This protocol describes an advanced background subtraction method that fuses color (RGB) and depth information to improve robustness against illumination changes, shadows, and camouflage. While designed for active sensors like Kinect, the conceptual framework of multi-sensor fusion is highly relevant for Sentinel data analysis [10].

1. Principle: By integrating complementary data channels (e.g., multispectral bands from Sentinel), background models can overcome limitations inherent to a single data type. Depth information, or its proxy from topographic data, is less affected by color-based challenges like shadows [10].

2. Reagents and Materials:

- Input Data: A sequence of video frames or multi-temporal satellite images containing both color and depth information (or a suitable proxy).

- Software: Programming environment (e.g., C++, Python) with the BGSLibrary or custom implementation of the Codebook algorithm [25] [10].

3. Equipment:

- A sensor capable of providing synchronized color and depth data (e.g., Microsoft Kinect, stereo camera) for protocol validation. For satellite applications, this implies access to co-registered multispectral and topographic datasets.

4. Procedure:

1. Model Construction: For each pixel, construct a codebook C = {c_1, c_2, ..., c_L} from a training sequence of N frames. Each codeword c_i contains an RGB vector v_i = (R̅_i, G̅_i, B̅_i) and auxiliary data aux_i = ⟨I_min,i, I_max,i, f_i, λ_i, p_i, q_i⟩ [10].

2. Depth Integration: Modify the codebook matching function to include a depth channel. A pixel x_t matches a codeword c_m if it satisfies three conditions:

* Color Distance: colordist(x_t, v_m) ≤ ϵ_1

* Brightness Condition: brightness(I, ⟨I_min,m, I_max,m⟩) = true

* Depth Compatibility: |depth_xt - depth_cm| ≤ ϵ_depth [10]

3. Foreground Detection: Pixels not matching any codeword in the fused color-depth model are classified as foreground.

4. Model Maintenance: Periodically update the codebooks to adapt to slow changes in the background scene (e.g., gradual illumination changes).
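The three-condition matching test from the procedure can be sketched as follows. The text does not define `colordist` or `brightness`, so the forms used here follow the commonly cited codebook formulation (chromatic distortion from the codeword's colour axis, and a brightness band controlled by two factors alpha and beta); these definitions, the `Codeword` fields, and all tolerance values are assumptions for illustration.

```python
import numpy as np
from dataclasses import dataclass

@dataclass
class Codeword:
    rgb: np.ndarray   # mean colour vector v_m
    i_min: float      # minimum brightness observed for this codeword
    i_max: float      # maximum brightness observed for this codeword
    depth: float      # representative depth (the added channel)

def colordist(x, v):
    """Distance of x from the line through the origin and v (chromatic distortion)."""
    v_sq = float(v @ v)
    if v_sq == 0.0:
        return float(np.linalg.norm(x))
    proj_sq = float(x @ v) ** 2 / v_sq
    return float(np.sqrt(max(float(x @ x) - proj_sq, 0.0)))

def brightness_ok(x, cw, alpha=0.6, beta=1.3):
    """True if the pixel brightness lies within the codeword's tolerated band."""
    low = alpha * cw.i_max
    high = min(beta * cw.i_max, cw.i_min / alpha)
    return low <= float(np.linalg.norm(x)) <= high

def matches(x, depth, cw, eps_color=10.0, eps_depth=0.1):
    return (colordist(x, cw.rgb) <= eps_color
            and brightness_ok(x, cw)
            and abs(depth - cw.depth) <= eps_depth)

def is_foreground(x, depth, codebook):
    """A pixel is foreground when it matches no codeword."""
    return not any(matches(x, depth, cw) for cw in codebook)

base = np.array([100.0, 120.0, 90.0])
codebook = [Codeword(rgb=base, i_min=170.0, i_max=190.0, depth=2.0)]

print("same colour, same depth:", is_foreground(base, 2.02, codebook))
print("shadowed background:", is_foreground(0.8 * base, 2.0, codebook))
print("camouflaged object (closer):", is_foreground(base, 1.2, codebook))
print("different colour:", is_foreground(np.array([200.0, 40.0, 40.0]), 2.0, codebook))
```

The third case is the one colour-only models miss: an object with the same colour as the background is still separated because its depth differs.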

5. Analysis: Evaluate the foreground masks against manually annotated ground truth. Calculate performance metrics such as F-measure, Percentage of Wrong Classifications (PWC), and Structural Similarity Index (SSIM) to quantify improvement over color-only methods [25].
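Two of the metrics named above, F-measure and PWC, follow directly from pixel-level confusion counts. The sketch below uses a small made-up mask pair; SSIM is omitted because it is usually taken from an image-processing library rather than written by hand.

```python
import numpy as np

def confusion_counts(pred, truth):
    """Return (TP, FP, FN, TN) for two boolean masks of equal shape."""
    pred, truth = pred.astype(bool), truth.astype(bool)
    tp = int(np.sum(pred & truth))
    fp = int(np.sum(pred & ~truth))
    fn = int(np.sum(~pred & truth))
    tn = int(np.sum(~pred & ~truth))
    return tp, fp, fn, tn

def f_measure(tp, fp, fn):
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return 2 * precision * recall / (precision + recall) if precision + recall else 0.0

def pwc(tp, fp, fn, tn):
    """Percentage of Wrong Classifications."""
    return 100.0 * (fp + fn) / (tp + fp + fn + tn)

truth = np.zeros((10, 10), dtype=bool)
truth[2:6, 2:6] = True    # annotated object
pred = np.zeros((10, 10), dtype=bool)
pred[3:7, 2:6] = True     # detection offset by one row

tp, fp, fn, tn = confusion_counts(pred, truth)
print(f"F-measure = {f_measure(tp, fp, fn):.2f}, PWC = {pwc(tp, fp, fn, tn):.1f} %")
```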

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Background Subtraction Experiments

| Item Name | Function / Application | Relevance to Sentinel Research |

|---|---|---|

| SeaDAS Software | Processing and analysis of ocean color data, including atmospheric correction of Sentinel-2 MSI data [24]. | Generates calibrated surface reflectance (Rrs) from raw Sentinel-2 data, the foundational input for background models. |

| BGSLibrary | An open-source C++ library providing 29+ implemented background subtraction algorithms for experimental comparison [25]. | Allows researchers to benchmark new algorithms against established methods using standardized metrics. |

| Codebook Algorithm | A background modeling technique that constructs a quantized representation of a pixel's historical states [10]. | Forms the basis for robust, multi-modal background models that can be extended with spectral and topographic data. |

| Vicarious Calibration Site | A ground-truth location with known reflectance properties used for sensor calibration validation [24]. | Critical for ensuring the radiometric accuracy of Sentinel-2 data, directly impacting data fidelity. |

| Active Depth Sensor (e.g., Kinect) | Provides synchronized color and depth data for developing and testing multi-sensor fusion algorithms [10]. | Serves as a proxy for understanding how to integrate complementary data types (e.g., multispectral + topographic). |

Implementation Strategies: From SAR-SIFT-Logarithm to Biomedical Adaptation

Step-by-Step SAR-SIFT-Logarithm Background Subtraction Methodology

Synthetic Aperture Radar (SAR) change detection is a critical application in remote sensing, enabling the monitoring of environmental changes, urban development, and resource management using satellite imagery. Traditional methods for analyzing spaceborne SAR time-series images typically employ pairwise comparison strategies, which can lose overall change information and require substantial processing time. To address these limitations, the SAR-SIFT-Logarithm Background Subtraction method combines SAR-SIFT image registration technology with logarithm background subtraction, providing an effective approach for detecting changes in multi-temporal SAR datasets from Sentinel-1 and similar SAR sensors. This methodology is particularly valuable for monitoring dynamic scenes such as vehicle movement in parking lots, urban development, and other temporal changes in terrestrial landscapes [26].

Principle of the Methodology

The SAR-SIFT-Logarithm Background Subtraction algorithm integrates robust image registration with temporal background modeling. The core principle is to construct a static background model from a time-series of SAR images and then identify changes by subtracting this background from each image in the sequence. Because every image is compared against a background built from the whole series, the approach captures change information across the entire observation period, unlike traditional pairwise methods that only compare consecutive images [26].

The methodology leverages the fact that for static scenes, pixel values in subaperture image sequences vary slowly, while moving targets or changes cause significant variations. By modeling the unchanged components throughout the time period using a median filter, the algorithm obtains a reliable static background representation. Change information is then enhanced through logarithmic subtraction operations and detected using Constant False Alarm Rate (CFAR) detection and clustering techniques [26] [27].

Experimental Workflow

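Workflow diagram (not reproduced here). The workflow proceeds through five stages, each detailed in the protocol below: data preprocessing, SAR-SIFT image registration, background modeling, logarithm background subtraction, and change detection and refinement.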

Detailed Step-by-Step Protocol

Data Preprocessing

- Input Requirements: Collect spaceborne SAR time-series data (e.g., Sentinel-1 GRD products) covering the same geographical area across different acquisition times. A minimum of 10-15 images is recommended for robust background modeling [26].

- Orbit Correction: Download precise orbit files (e.g., Sentinel Precise .osv files) and apply them with a tool such as Apply Orbit Correction in ArcGIS Pro. This replaces the orbital information in the SAR data with precise position and velocity data, which is crucial for accurate geometric processing [17].

- Thermal Noise Removal: Process the SAR data with thermal noise removal to correct backscatter disturbances caused by internal satellite circuitry, which is particularly important for areas of low backscatter like water bodies [17].

- Radiometric Calibration: Convert digital pixel values to radiometrically calibrated radar cross-section values using sigma nought (σ°) or beta nought (β°) calibration to ensure meaningful backscatter measurements [26] [17].

- Speckle Filtering: Apply speckle reduction filters (e.g., Lee, Gamma Map, or Refined Lee filters) to mitigate the inherent speckle noise in SAR imagery while preserving important feature details [17].
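To make the speckle filtering step concrete, the following is a minimal sketch of a simplified Lee-type filter written with NumPy only. It is not the implementation used in [17] or [26]; the function name `lee_filter`, the window size, and the `looks` parameter (equivalent number of looks) are assumptions chosen for illustration, and the input is assumed to be a calibrated intensity image in linear units rather than dB.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def lee_filter(intensity, size=5, looks=4.0):
    """Simplified Lee-type speckle filter for a 2-D intensity image (linear units).

    size  : odd side length of the square local window (assumed value).
    looks : assumed equivalent number of looks of the product.
    """
    img = np.asarray(intensity, dtype=np.float64)
    pad = size // 2
    padded = np.pad(img, pad, mode="reflect")
    windows = sliding_window_view(padded, (size, size))

    # Local first- and second-order statistics around every pixel.
    local_mean = windows.mean(axis=(-2, -1))
    local_var = windows.var(axis=(-2, -1))

    # Variance expected from multiplicative speckle alone in a homogeneous area.
    noise_var = local_mean ** 2 / looks

    # Weight is near 0 in homogeneous areas (use the local mean) and
    # approaches 1 near strong features (keep the observed pixel value).
    signal_var = np.maximum(local_var - noise_var, 0.0)
    weight = signal_var / np.maximum(local_var, 1e-12)

    return local_mean + weight * (img - local_mean)


# Usage on synthetic data: a flat scene corrupted by 4-look gamma speckle.
rng = np.random.default_rng(0)
clean = np.full((64, 64), 10.0)
noisy = clean * rng.gamma(shape=4.0, scale=1.0 / 4.0, size=clean.shape)
filtered = lee_filter(noisy, size=5, looks=4.0)
```

In practice the filter would be applied to every image in the time-series with identical settings so that the later background model is not biased by inconsistent smoothing.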

SAR-SIFT Image Registration

- Feature Detection: Implement the SAR-SIFT (Scale-Invariant Feature Transform adapted for SAR) algorithm to detect stable keypoints in all images of the time-series. SAR-SIFT is specifically modified to handle the characteristics of SAR imagery, unlike traditional SIFT designed for optical images [26].

- Feature Matching: Establish correspondences between keypoints in the reference image and each subsequent image in the time-series.

- Transform Estimation: Compute spatial transformation models (affine or polynomial) based on matched keypoints to align all images to a common coordinate system with sub-pixel accuracy.

- Image Resampling: Apply the estimated transformation to all input images using appropriate interpolation methods (e.g., bilinear or cubic convolution) to create a precisely coregistered image stack [26].
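The transform estimation step can be sketched as a least-squares fit, assuming the matched keypoint coordinates are already available. The sketch below does not implement SAR-SIFT detection or matching; the helper names `estimate_affine` and `apply_affine` and the synthetic points are hypothetical, and a production pipeline would typically add outlier rejection (for example RANSAC) before the fit.

```python
import numpy as np


def estimate_affine(src_pts, dst_pts):
    """Least-squares 2-D affine transform mapping src_pts onto dst_pts.

    Both inputs are (N, 2) arrays of matched keypoint coordinates, N >= 3.
    Returns a (2, 3) matrix [A | t] such that dst ~= A @ src + t.
    """
    src = np.asarray(src_pts, dtype=np.float64)
    dst = np.asarray(dst_pts, dtype=np.float64)
    if src.shape != dst.shape or src.ndim != 2 or src.shape[1] != 2:
        raise ValueError("src_pts and dst_pts must both have shape (N, 2)")
    if src.shape[0] < 3:
        raise ValueError("at least three matched points are required")

    # Each row of the design matrix is [x, y, 1]; solve design @ params = dst.
    design = np.hstack([src, np.ones((src.shape[0], 1))])
    params, *_ = np.linalg.lstsq(design, dst, rcond=None)
    return params.T


def apply_affine(matrix, pts):
    """Apply a (2, 3) affine matrix to an (N, 2) array of points."""
    pts = np.asarray(pts, dtype=np.float64)
    return pts @ matrix[:, :2].T + matrix[:, 2]


# Usage with synthetic matches: small rotation/scale plus a sub-pixel shift.
rng = np.random.default_rng(0)
true_matrix = np.array([[1.0, 0.02, 3.5],
                        [-0.01, 0.99, -2.0]])
keypoints_ref = rng.uniform(0, 500, size=(40, 2))
keypoints_img = apply_affine(true_matrix, keypoints_ref)

estimated = estimate_affine(keypoints_ref, keypoints_img)
residual = apply_affine(estimated, keypoints_ref) - keypoints_img
rms_residual = float(np.sqrt(np.mean(residual ** 2)))
```

The RMS residual over the matched points gives a quick check of whether the alignment is plausibly at the sub-pixel level before resampling the full image.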

Background Modeling

- Temporal Analysis: For each pixel location across the coregistered image stack, extract the temporal profile representing backscatter values over time.

- Median Filter Application: Apply a temporal median filter to each pixel's temporal profile. The median value across the time-series represents the static background, effectively ignoring transient changes [26].

- Background Image Generation: Construct the background image by compiling the median values for all pixel locations, representing the persistent components of the scene throughout the observation period [26] [27].
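The background modeling steps above reduce to a per-pixel median along the time axis. The sketch below assumes the coregistered images are stacked into a NumPy array of shape (T, H, W); the function name `median_background` is hypothetical, and `np.nanmedian` is used on the assumption that no-data pixels are stored as NaN.

```python
import numpy as np


def median_background(stack):
    """Per-pixel temporal median of a coregistered stack with shape (T, H, W)."""
    stack = np.asarray(stack, dtype=np.float64)
    if stack.ndim != 3:
        raise ValueError("stack must have shape (T, H, W)")
    # NaN-aware median so that masked/no-data samples do not bias the model.
    return np.nanmedian(stack, axis=0)


# Usage: 11 acquisitions of a uniform scene; one pixel holds a transient
# bright target in 3 of the 11 images and should not appear in the background.
stack = np.full((11, 4, 4), 2.0)
stack[3:6, 1, 1] = 50.0
background = median_background(stack)
```

Because the transient target occupies fewer than half of the acquisitions at that pixel, the median ignores it, which is the property the protocol relies on.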

Logarithm Background Subtraction

- Logarithm Transformation: Apply a natural logarithm transformation to both the current image and the background model. This operation helps in converting the multiplicative speckle noise to additive noise and enhances the contrast between changed and unchanged areas [26] [27].

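- Subtraction Operation: Perform pixel-wise subtraction of the log-transformed background image from each log-transformed input image in the time-series. With notation introduced here for illustration, this can be written as:

$$D_t(x, y) = \ln I_t(x, y) - \ln B(x, y) = \ln \frac{I_t(x, y)}{B(x, y)}$$

where $I_t$ is the coregistered image acquired at time $t$, $B$ is the median background image, and $D_t$ is the resulting difference image.

- Result Interpretation: In the resulting difference image, pixels with values approaching zero represent unchanged areas, while significant positive or negative deviations indicate potential changes [26].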
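A minimal sketch of the logarithm subtraction step follows, assuming a coregistered stack of shape (T, H, W) and a background image of shape (H, W) in linear intensity units. The function name `log_background_difference` and the small offset `eps`, added to avoid taking the logarithm of zero, are assumptions made for illustration.

```python
import numpy as np


def log_background_difference(stack, background, eps=1e-6):
    """Log-domain difference between every image in the stack and the background.

    stack      : (T, H, W) coregistered intensity images (linear units).
    background : (H, W) static background, e.g. the temporal median.
    eps        : assumed small offset guarding against log(0).
    """
    stack = np.asarray(stack, dtype=np.float64)
    background = np.asarray(background, dtype=np.float64)
    # Broadcasting subtracts the same background from each time step.
    return np.log(stack + eps) - np.log(background + eps)


# Usage: a scene with backscatter 2.0 everywhere; in image 4 one pixel
# becomes ten times brighter than the background.
stack = np.full((8, 4, 4), 2.0)
stack[4, 2, 2] = 20.0
background = np.median(stack, axis=0)
difference = log_background_difference(stack, background)
```

Unchanged pixels map to values near zero, while the brightened pixel maps to roughly ln(10), so a multiplicative change becomes an additive offset that is independent of the absolute backscatter level.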

Change Detection and Refinement

- CFAR Detection: Implement Constant False Alarm Rate detection on the difference image. CFAR automatically determines an adaptive threshold based on the local statistical properties of the background clutter, maintaining a constant probability of false alarms [26] [28].

- Background Window Selection: For each pixel under test, select a surrounding background window excluding a guard area.

- Statistical Modeling: Estimate parameters of the background distribution (typically assuming a Gaussian or Gamma distribution).

- Threshold Calculation: Compute the detection threshold based on the desired false alarm probability and background statistics.

- Target Identification: Classify pixels as changed if their intensity exceeds the calculated threshold [28].

- Spatial Clustering: Apply clustering algorithms (e.g., Density-Based Spatial Clustering - DBSCAN) to group detected pixels into coherent changed regions. This helps eliminate isolated false alarms and provides more meaningful change objects [26] [29].

- Change Map Generation: Compile the final change map by labeling detected change clusters, optionally with timestamp information indicating when changes occurred.
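The CFAR steps listed above can be sketched as a two-sided cell-averaging detector under a Gaussian clutter assumption. This is an illustrative simplification rather than the detector configuration used in [26] or [28]: the function name `ca_cfar`, the window sizes, and the false alarm probability are assumed values, the sliding-window sums are not optimized for large scenes, and the subsequent spatial clustering (e.g., DBSCAN) is not shown.

```python
from statistics import NormalDist

import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def _box_sum(img, half):
    """Sum over a (2*half+1) x (2*half+1) window centred on every pixel."""
    size = 2 * half + 1
    padded = np.pad(img, half, mode="reflect")
    return sliding_window_view(padded, (size, size)).sum(axis=(-2, -1))


def ca_cfar(diff, guard=2, train=4, pfa=1e-3):
    """Two-sided cell-averaging CFAR on a log-difference image.

    guard : half-width of the guard area excluded around the pixel under test.
    train : additional half-width of the surrounding training ring.
    pfa   : desired false alarm probability under the Gaussian assumption.
    """
    x = np.asarray(diff, dtype=np.float64)
    outer, inner = guard + train, guard
    n_train = (2 * outer + 1) ** 2 - (2 * inner + 1) ** 2

    # Training-ring statistics = outer window minus guard window.
    s1 = _box_sum(x, outer) - _box_sum(x, inner)
    s2 = _box_sum(x ** 2, outer) - _box_sum(x ** 2, inner)
    mean = s1 / n_train
    std = np.sqrt(np.maximum(s2 / n_train - mean ** 2, 0.0))

    # Threshold multiplier for a two-sided test at the requested pfa.
    k = NormalDist().inv_cdf(1.0 - pfa / 2.0)
    return np.abs(x - mean) > k * std


# Usage: Gaussian clutter with a small 2x2 changed region.
rng = np.random.default_rng(0)
diff_image = 0.1 * rng.standard_normal((64, 64))
diff_image[30:32, 30:32] = 3.0
change_mask = ca_cfar(diff_image, guard=2, train=4, pfa=1e-3)
```

The guard area keeps the changed pixels themselves out of the clutter estimate, which is why it should be at least as wide as the largest change object expected in the scene.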

Research Reagent Solutions

Table 1: Essential Research Reagents and Materials for SAR-SIFT-Logarithm Background Subtraction

| Category | Specific Solution/Tool | Function in Methodology |
|---|---|---|
| SAR Datasets | Sentinel-1 GRD Products [26]; PAZ-1 Products [26] | Provides core input data with repeat-pass observations, all-weather capability, and appropriate resolution for change detection applications. |
| Software Platforms | ArcGIS Pro with Image Analyst [17]; Custom MATLAB/Python Scripts | Offers specialized SAR processing tools for preprocessing steps and enables implementation of specialized algorithms for SAR-SIFT and background subtraction. |
| Registration Algorithm | SAR-SIFT [26] | Performs accurate image coregistration to avoid mismatches that would degrade change detection performance, specifically adapted for SAR imagery characteristics. |
| Detection Components | CFAR Detector [26] [28]; DBSCAN Clustering [29] | Adaptively identifies changed pixels based on local statistics while maintaining constant false alarm rate; groups detected pixels into coherent change regions. |
| Validation Data | Ground Truth Field Measurements [26]; High-Resolution UAV Imagery [30] | Provides reference data for quantitative accuracy assessment of change detection results. |

Experimental Validation and Results

Dataset Specifications

Table 2: Experimental Dataset Parameters for Methodology Validation

| Parameter | Sentinel-1 Dataset | PAZ-1 Dataset |
|---|---|---|
| Sensor Type | C-band SAR [26] | X-band SAR [26] |
| Application Scenario | Vehicle counting in parking lots [26] | Vehicle counting in CCTV Tower parking lot [26] |
| Temporal Span | 5 March 2020 to 14 November 2022 [26] | 14 February 2023 to 31 August 2023 [26] |
| Number of Images | 82 images [26] | 12 images [26] |
| Ground Truth | 6 sets of field-collected data [26] | Not specified in available sources |

Validation Metrics and Performance

The methodology was quantitatively evaluated using root mean square error (RMSE) between detected changes and ground truth data. Experimental results demonstrated that the SAR-SIFT-Logarithm Background Subtraction method effectively detects overall change information while reducing processing time compared to traditional pairwise comparison methods [26].
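For reference, the RMSE metric mentioned above can be computed as in the following sketch. The function name `rmse` is hypothetical, and the vehicle counts in the usage example are made-up placeholders for illustration only; they are not results reported in [26].

```python
import numpy as np


def rmse(estimated, reference):
    """Root mean square error between estimated and reference values."""
    est = np.asarray(estimated, dtype=np.float64)
    ref = np.asarray(reference, dtype=np.float64)
    if est.shape != ref.shape:
        raise ValueError("estimated and reference must have the same shape")
    return float(np.sqrt(np.mean((est - ref) ** 2)))


# Usage with placeholder counts (NOT data from the cited study):
# detected vehicle counts versus field-collected counts on six dates.
detected_counts = [42, 55, 38, 61, 47, 50]
field_counts = [40, 57, 35, 60, 49, 52]
error = rmse(detected_counts, field_counts)
```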

In practical applications involving vehicle counting in parking lots, the method successfully tracked temporal variations in vehicle presence, with validation showing strong correlation with field-collected ground truth data. The integration of SAR-SIFT registration proved crucial for handling geometric positioning errors caused by orbital offsets in spaceborne SAR platforms [26].

Technical Considerations

Advantages Over Traditional Methods

The SAR-SIFT-Logarithm Background Subtraction approach offers several significant advantages: (1) It captures holistic change information across the entire time-series rather than just between consecutive acquisitions; (2) It reduces processing time compared to exhaustive pairwise comparison methods; (3) The background subtraction framework effectively suppresses static clutter while highlighting temporal changes; (4) The method is particularly effective for detecting transient targets and changes in dynamic environments [26].

Implementation Challenges

Key challenges in implementing this methodology include: (1) The requirement for accurate coregistration to avoid false changes due to misalignment; (2) Sensitivity to radiometric variations across acquisitions that must be properly normalized; (3) The need for sufficient temporal sampling to build a reliable background model; (4) Computational demands when processing large time-series datasets [26] [17].

The SAR-SIFT-Logarithm Background Subtraction methodology represents a robust framework for change detection in spaceborne SAR time-series imagery. By integrating sophisticated image registration with temporal background modeling and log-ratio-based change enhancement, the approach effectively addresses limitations of traditional pairwise change detection methods. The protocol detailed in this document provides researchers with a comprehensive guide for implementing this advanced technique, particularly within the context of Sentinel sensor data utilization for environmental monitoring, urban observation, and other remote sensing applications requiring temporal change analysis.

Time-Series Analysis for Dynamic Biological Process Monitoring

Time-series analysis of sensor data enables the monitoring of dynamic biological processes, capturing critical changes and trends over time. Within the broader context of sentinel sensor implementation for background subtraction research, this methodology provides a powerful framework for distinguishing significant biological signals from static or slowly varying backgrounds. The core principle, as demonstrated in remote sensing, involves analyzing a sequence of observations to model the unchanging "background" and subsequently identify meaningful "foreground" changes [26]. This approach is directly transferable to biological sentinel systems, such as those used in bioreactor monitoring or live-cell imaging, where detecting deviations from a baseline state is crucial. This document outlines detailed application notes and protocols for implementing these techniques, providing researchers in drug development with the tools to extract actionable insights from complex, temporal biological data.

Application Notes

Core Principles and Analogous Applications

The foundational concept for dynamic monitoring in biological systems can be adapted from advanced change detection methods developed for geospatial analysis. In remote sensing, Background Subtraction is a technique used to identify changes across a time-series of satellite images. One specific implementation, the SAR-SIFT-Logarithm Background Subtraction algorithm, is designed to detect changes in spaceborne Synthetic Aperture Radar (SAR) time-series imagery [26]. This method's workflow provides a robust analog for biological process monitoring:

- Input Time-Series Data: A sequence of observations of the same target is acquired over time.

- Preprocessing: Data undergoes noise reduction and calibration to ensure consistency and quality.

- Coregistration: Sequential data points are aligned to a common reference frame to avoid misinterpretation of changes.

- Background Modeling: The static components of the scene, which remain unchanged throughout the time period, are modeled. This is often achieved using a statistical operator like a median filter to obtain the background [26].

- Change Detection: The current observation is compared against the modeled background. Changes are identified via subtraction and further refined using detection algorithms.

In a biological context, this allows researchers to model the baseline state of a system (e.g., a cell culture's metabolic profile) and automatically highlight significant deviations (e.g., a metabolic shift indicating product formation or stress).
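The same background-then-subtract logic can be sketched for a one-dimensional biological sensor trace. The example below is a minimal illustration under stated assumptions, not a validated protocol: the function name `detect_deviations`, the use of the median absolute deviation (MAD) as a robust noise scale, the threshold multiplier `k`, and the synthetic "metabolic" signal are all hypothetical choices.

```python
import numpy as np


def detect_deviations(signal, k=5.0):
    """Flag samples that deviate from a median baseline.

    signal : 1-D array of sensor readings over time.
    k      : assumed threshold in units of the robust standard deviation.
    Returns (baseline, flags), where flags is a boolean array.
    """
    x = np.asarray(signal, dtype=np.float64)

    # Background model: the median over the whole series.
    baseline = float(np.median(x))

    # Robust noise scale: 1.4826 * MAD approximates the standard
    # deviation for Gaussian noise while ignoring transient excursions.
    mad = np.median(np.abs(x - baseline))
    scale = max(1.4826 * mad, np.finfo(np.float64).eps)

    # Foreground: samples whose residual exceeds k robust standard deviations.
    flags = np.abs(x - baseline) > k * scale
    return baseline, flags


# Usage: a stable synthetic readout near 5.0 with a transient shift of +1.0
# lasting ten samples, standing in for a metabolic excursion.
rng = np.random.default_rng(1)
readout = 5.0 + 0.05 * rng.standard_normal(200)
readout[150:160] += 1.0
baseline, flags = detect_deviations(readout, k=5.0)
event_indices = np.flatnonzero(flags)
```

A global median suits a system whose baseline is stationary; for slowly drifting cultures, a rolling median over a trailing window would be the analogous choice, at the cost of needing a window longer than the events of interest.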

Quantitative Data and Sensor Selection