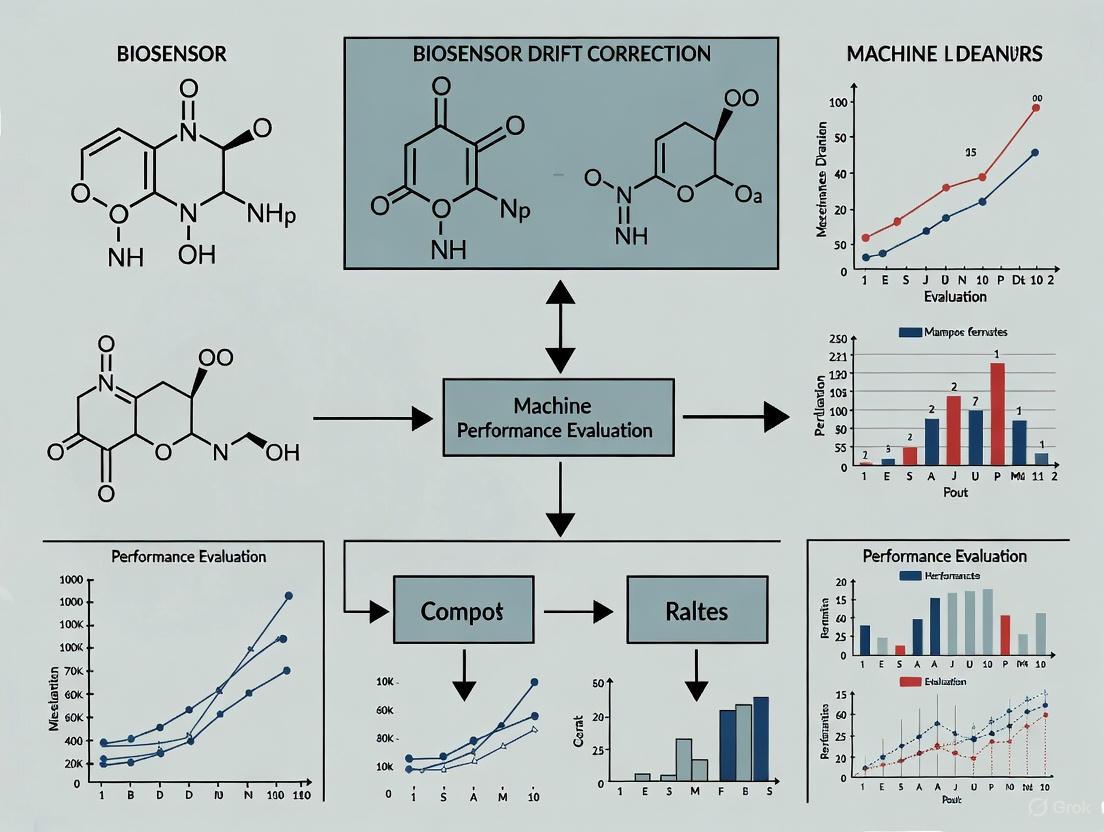

Machine Learning for Biosensor Drift Correction: Performance Evaluation of AI-Driven Calibration and Stability Solutions

Abstract

Biosensor reliability is critically challenged by signal drift and performance degradation over time, posing significant obstacles in drug development and clinical diagnostics. This article provides a comprehensive performance evaluation of machine learning (ML) methodologies for biosensor drift correction, tailored for researchers and scientists in biomedical fields. We explore the foundational causes of drift, systematically review and compare advanced ML algorithms—from ensemble methods to deep learning architectures—and present rigorous validation frameworks. The analysis covers real-world application case studies, addresses key implementation challenges, and outlines optimization strategies to enhance the accuracy, stability, and longevity of biosensing systems, ultimately supporting the development of robust, intelligent diagnostic tools.

Understanding Biosensor Drift: Causes, Impacts, and the Imperative for Machine Learning

Biosensor drift, the gradual and unintended change in a sensor's output signal over time despite a constant input, represents a critical challenge in pharmaceutical research and diagnostic development. This phenomenon can compromise data integrity, leading to inaccurate kinetic parameters for biomolecular interactions and potentially derailing drug discovery pipelines. This guide provides a comparative evaluation of how drift manifests across major biosensor platforms and examines the emerging machine learning-based strategies developed to correct it, providing scientists with a framework for performance evaluation.

What is Biosensor Drift? A Fundamental Definition

In the context of biosensors, drift is defined as a time-dependent deviation in a sensor's calibration curve, resulting in systematic measurement inaccuracies [1]. It is not a sudden failure but a gradual degradation that can arise from a complex interplay of factors, which can be broadly categorized as follows:

- Environmental Stressors: Changes in temperature, humidity, and pressure can induce physical and chemical changes in sensor materials [1].

- Component Aging: The natural degradation of electronic components or the sensing element itself over prolonged use [1].

- Biofouling: The non-specific adsorption of proteins, cells, or other biomolecules from a sample onto the sensor surface, which can insulate the sensor and alter its signal [2].

- Electrochemical Instability: For electrochemical biosensors, key mechanisms include the desorption of self-assembled monolayers from electrode surfaces and irreversible reactions of the redox reporter molecule used for detection [2].
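To make the definition concrete, assume a linear calibration curve. This is an illustrative simplification, not a model taken from [1]. At a constant analyte concentration $c$, the sensor output $y$ depends on a time-varying sensitivity (slope) $s(t)$, a time-varying baseline (intercept) $b(t)$, and measurement noise $\varepsilon(t)$:

$$
y(t) = s(t)\,c + b(t) + \varepsilon(t), \qquad \hat{c}(t) = \frac{y(t) - \hat{b}(t)}{\hat{s}(t)}
$$

In this picture, drift means that $s(t)$, $b(t)$, or both change over time while $c$ does not. A sensor calibrated once at $t_0$ reports $(y(t) - b(t_0))/s(t_0)$ and is therefore biased. Drift correction amounts to keeping current estimates $\hat{s}(t)$ and $\hat{b}(t)$ up to date.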

Uncontrolled drift can have severe consequences. In one documented scenario, drift in a temperature sensor at a chemical plant produced a dangerously inaccurate reading that ultimately caused a reaction vessel to overheat and explode, resulting in significant financial and reputational damage [3]. In research settings, drift compromises the reliability of collected data, leading to flawed analyses and decisions. It also increases costs and downtime because of the need for frequent recalibration [1].

A Comparative Look at Biosensor Platform Drift

A direct comparison of biosensor platforms reveals an inherent trade-off between data reliability and operational throughput. A benchmark study evaluated a panel of monoclonal antibodies across four platforms. It found that the rank orders of association and dissociation rate constants were highly correlated between instruments, indicating that relative kinetic trends can remain consistent across platforms [4]. However, the platforms exhibited distinct strengths and weaknesses:

- GE Healthcare's Biacore T100 and Bio-Rad's ProteOn XPR36 demonstrated excellent data quality and consistency, making them suitable for applications where accuracy is critical [4].

- ForteBio's Octet RED384 and Wasatch Microfluidics' IBIS MX96 offered higher flexibility and throughput, but with noted compromises in data accuracy and reproducibility. This may reflect greater susceptibility to drift, or to its effects on the output data [4].

The following table summarizes this performance comparison:

Table 1: Comparison of Biosensor Platform Characteristics

| Biosensor Platform | Data Quality & Consistency | Throughput & Flexibility | Primary Strengths | Noted Compromises |
|---|---|---|---|---|
| Biacore T100 | Excellent [4] | Moderate [4] | High data reliability [4] | None noted |
| ProteOn XPR36 | Excellent [4] | Moderate [4] | Excellent consistency [4] | None noted |
| Octet RED384 | Compromised [4] | High [4] | High flexibility and throughput [4] | Data accuracy and reproducibility [4] |
| IBIS MX96 | Compromised [4] | High [4] | High flexibility and throughput [4] | Data accuracy and reproducibility [4] |

Machine Learning Solutions for Drift Correction

Traditional drift compensation methods, such as periodic manual recalibration, baseline correction, and Principal Component Analysis (PCA), are often inadequate for the complex, nonlinear drift patterns seen in long-term deployments [5]. Machine learning (ML) offers a more adaptive approach. One study systematically evaluated 26 regression algorithms for modeling biosensor signal response. It found that Gaussian Process Regression (GPR), XGBoost, and Artificial Neural Networks (ANNs) delivered the highest predictive accuracy for sensor signal optimization [6]. The study also introduced a stacked ensemble framework that combines GPR, XGBoost, and ANN. This ensemble improved performance further and provided interpretable insights into key fabrication parameters [6].
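The stacking idea can be sketched using only the Python standard library. This is not the framework from [6]. Two simple base learners, ordinary least squares and k-nearest-neighbour regression, stand in for GPR, XGBoost, and ANN. The data are synthetic and hypothetical.

The meta-learner is a no-intercept linear combination fitted on out-of-fold predictions. Fitting on out-of-fold predictions means base models are not rewarded for memorizing their own training data.

```python
import math
import random


def fit_linear(xs, ys):
    """Base learner 1 (stand-in): ordinary least-squares line y = a + b*x."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    return lambda x: a + b * x


def fit_knn(xs, ys, k=5):
    """Base learner 2 (stand-in): k-nearest-neighbour regression on one input feature."""
    pairs = list(zip(xs, ys))

    def predict(x):
        nearest = sorted(pairs, key=lambda p: abs(p[0] - x))[:k]
        return sum(y for _, y in nearest) / len(nearest)

    return predict


BASE_LEARNERS = [fit_linear, fit_knn]


def out_of_fold(xs, ys, n_folds=5):
    """Each sample's base-model predictions, made by models that never saw that sample."""
    n = len(xs)
    oof = [[0.0] * len(BASE_LEARNERS) for _ in range(n)]
    for fold in range(n_folds):
        train = [i for i in range(n) if i % n_folds != fold]
        held_out = [i for i in range(n) if i % n_folds == fold]
        for j, fit in enumerate(BASE_LEARNERS):
            model = fit([xs[i] for i in train], [ys[i] for i in train])
            for i in held_out:
                oof[i][j] = model(xs[i])
    return oof


def fit_meta(oof, ys):
    """Meta-learner: least-squares weights (no intercept) for the two base predictions."""
    s11 = sum(r[0] * r[0] for r in oof)
    s22 = sum(r[1] * r[1] for r in oof)
    s12 = sum(r[0] * r[1] for r in oof)
    t1 = sum(r[0] * y for r, y in zip(oof, ys))
    t2 = sum(r[1] * y for r, y in zip(oof, ys))
    det = s11 * s22 - s12 * s12
    return (s22 * t1 - s12 * t2) / det, (s11 * t2 - s12 * t1) / det


def fit_stack(xs, ys):
    """Stacked ensemble: meta-weights from out-of-fold predictions, base models refit on all data."""
    w_lin, w_knn = fit_meta(out_of_fold(xs, ys), ys)
    lin, knn = fit_linear(xs, ys), fit_knn(xs, ys)
    return lambda x: w_lin * lin(x) + w_knn * knn(x)


def make_demo_data(n=200, seed=0):
    """Hypothetical nonlinear 'sensor response': y = sin(x) + Gaussian noise."""
    rng = random.Random(seed)
    xs = [rng.uniform(0.0, 6.0) for _ in range(n)]
    return xs, [math.sin(x) + rng.gauss(0.0, 0.1) for x in xs]
```

On this nonlinear toy response, the meta-learner puts most of its weight on the k-NN model, so the stack clearly beats the linear base model on its own. Real stacks such as the one in [6] use stronger and more diverse base models.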

More recent advances include deep learning approaches like the Incremental Domain-Adversarial Network (IDAN), which integrates domain-adversarial learning with an incremental adaptation mechanism to manage temporal variations in sensor data effectively [5]. When combined with real-time correction algorithms like iterative random forest, such frameworks significantly enhance data integrity over extended periods, demonstrating robust accuracy even in the presence of severe drift [5].

Table 2: Comparison of Machine Learning Approaches for Drift Compensation

| Method Category | Specific Example(s) | Key Mechanism | Advantages |
|---|---|---|---|
| Traditional Chemometrics | Linear Regression, PCA [6] [5] | Linear calibration curves, statistical signal processing | Simple, interpretable [6] |
| Tree-Based Models | Random Forest, XGBoost [6] [5] | Ensemble learning with multiple decision trees | High robustness against noise, good generalization [6] |
| Kernel-Based Models | Support Vector Regression (SVR) [6] | Maps data to high-dimensional space to find linear relationships | Effective for nonlinear drift patterns like temperature drift [6] |
| Probabilistic Models | Gaussian Process Regression (GPR) [6] | Non-parametric, Bayesian approach | Provides uncertainty estimates for predictions [6] |
| Neural Networks | ANN, Incremental Domain-Adversarial Network (IDAN) [6] [5] | Learns complex hierarchical data representations | High accuracy, models complex temporal dependencies, enables adaptive learning [6] [5] |
| Stacked Ensembles | GPR + XGBoost + ANN [6] | Combines predictions from multiple models to improve performance | State-of-the-art predictive accuracy and robustness [6] |

Experimental Protocols for Drift Analysis

To rigorously evaluate drift, researchers employ controlled experimental protocols. A clear example comes from a study on Electrochemical Aptamer-Based (EAB) sensors, which systematically investigated signal loss mechanisms [2].

Protocol 1: Investigating Drift Mechanisms in Electrochemical Biosensors [2]

- Sensor Proxy: Use a simple, EAB-like proxy sensor (e.g., a methylene-blue-modified, single-stranded DNA sequence attached to a gold electrode via a thiol-on-gold monolayer).

- Challenge Conditions: Expose the sensor to two environments:
  - Complex Medium: Undiluted whole blood at 37°C to mimic in vivo conditions.
  - Control Medium: Phosphate buffered saline (PBS) at 37°C.

- Continuous Interrogation: Perform repeated square-wave voltammetry scans over several hours to monitor the signal decay.

- Mechanism Isolation:
  - Biology vs. Electrochemistry: Compare signal loss in blood vs. PBS. A rapid exponential phase seen only in blood indicates biofouling or enzymatic activity. A linear phase in both indicates an electrochemical mechanism [2].
  - Fouling vs. Enzymatic Degradation: Wash drifted sensors with a denaturant (e.g., concentrated urea). Significant signal recovery points to reversible biofouling as the dominant mechanism [2].
  - Electrochemical Specifics: Vary the applied potential window. A strong dependence of drift rate on potential indicates monolayer desorption, whereas independence suggests degradation of the redox reporter [2].

The logical flow of this experimental investigation can be visualized as follows:
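The same decision flow can be written as a small function. This is an illustrative sketch only. The boolean fields summarize the comparisons listed in Protocol 1. The 50% urea-recovery cut-off for "significant" recovery is a hypothetical threshold, not a value reported in [2].

```python
from dataclasses import dataclass


@dataclass
class DriftObservations:
    """Hypothetical summary of the proxy-sensor experiments in Protocol 1."""
    exponential_phase_in_blood: bool       # fast initial decay seen in whole blood
    exponential_phase_in_pbs: bool         # fast initial decay also seen in PBS
    linear_phase_in_both: bool             # slow linear decay in both blood and PBS
    urea_recovery_fraction: float          # fraction of lost signal recovered after urea wash
    drift_rate_depends_on_potential: bool  # drift rate changes with the potential window


def classify_drift(obs: DriftObservations, recovery_threshold: float = 0.5) -> list[str]:
    """Map the observations to candidate drift mechanisms (recovery_threshold is illustrative)."""
    mechanisms = []
    if obs.exponential_phase_in_blood and not obs.exponential_phase_in_pbs:
        # Biology-driven phase: separate reversible fouling from enzymatic degradation.
        if obs.urea_recovery_fraction >= recovery_threshold:
            mechanisms.append("biofouling (reversible)")
        else:
            mechanisms.append("enzymatic degradation")
    if obs.linear_phase_in_both:
        # Electrochemistry-driven phase: separate the two electrochemical routes.
        if obs.drift_rate_depends_on_potential:
            mechanisms.append("monolayer desorption")
        else:
            mechanisms.append("redox reporter degradation")
    return mechanisms
```

The function returns a list because the biological and electrochemical phases can coexist in the same sensor.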

The Scientist's Toolkit: Key Reagents & Materials

The following table details essential materials used in the development and stabilization of biosensors, as featured in the cited research.

Table 3: Key Research Reagent Solutions for Biosensor Development

| Research Reagent / Material | Function in Biosensor Development & Drift Mitigation |
|---|---|
| Carbon Nanotubes (CNTs) | Nanomaterial used as a high-sensitivity transducer in field-effect transistor (BioFET) biosensors due to high electrical conductivity and surface-to-volume ratio [7] [8]. |
| Poly(oligo(ethylene glycol) methyl ether methacrylate) (POEGMA) | A polymer brush layer that acts as a non-fouling interface and a Debye length extender, enabling sensitive detection in biological solutions and reducing surface fouling [7]. |
| Self-Assembled Monolayer (SAM) | A layer of organic molecules (e.g., alkane thiols) that forms on an electrode surface (e.g., gold), providing a well-defined interface for bioreceptor immobilization and reducing non-specific binding [2]. |
| Methylene Blue (MB) | A redox reporter molecule used in electrochemical biosensors. Its stability within a specific potential window helps minimize electrochemical drift [2]. |
| 2'O-methyl RNA | An enzyme-resistant analog of DNA used in aptamer-based sensors to reduce signal loss caused by enzymatic degradation in biological fluids [2]. |
| Palladium (Pd) Pseudo-Reference Electrode | A stable alternative to bulky Ag/AgCl reference electrodes, facilitating the miniaturization and point-of-care application of biosensors [7]. |

The fight against biosensor drift is evolving from traditional calibration to intelligent, data-driven correction. While platform choice involves a trade-off between throughput and data reliability, the integration of advanced machine learning models like stacked ensembles and incremental domain-adversarial networks offers a powerful path forward. These ML frameworks not only compensate for drift but also transform it into a solvable variable, paving the way for more reliable, long-term biosensing in drug discovery and diagnostics. Future progress will hinge on the continued development of self-calibrating sensors and the creation of standardized, open-source datasets for benchmarking new algorithms, ultimately closing the gap between laboratory prototypes and robust clinical deployment.

Data integrity is the cornerstone of pharmaceutical development and clinical practice. Drift, the gradual degradation of data quality over time, poses a significant and often insidious threat to this integrity. In the context of ML-based biosensor drift correction, drift refers to the systematic deviation of a sensor's or model's output from its true or initially calibrated value. This deviation compromises the reliability of the data used for critical decisions, from patient safety in clinical trials to the accuracy of diagnostic tools. This guide compares the performance of several ML-driven approaches designed to combat drift, with a detailed analysis of their experimental protocols and efficacy.

Understanding Drift and Its High-Stakes Impact

Drift is a pervasive challenge that manifests differently across pharmaceutical and clinical settings. In clinical trials, a similar concept is observed as "protocol deviations," where any departure from the approved study protocol can introduce bias and affect data validity. Modern complex trials average over 100 such deviations, impacting roughly one-third of subjects and constituting a key finding in 30% of FDA warning letters [9]. For biosensors and predictive models, drift is more technical but equally detrimental. It can stem from sensor aging, material degradation, changes in environmental conditions, or shifts in the underlying patient population data that a model was trained on [5] [10] [11].

The stakes for managing drift are exceptionally high. In drug development, the failure to account for calibration drift in clinical prediction models can lead to periods of insufficient accuracy, potentially obscuring safety signals or efficacy endpoints [10]. For AI tools in the medicinal product lifecycle, regulators like the EMA and FDA now emphasize the importance of monitoring for performance changes, including "model drift," to ensure ongoing reliability [12]. The economic and health costs are significant, as unreliable data can lead to faulty regulatory decisions, compromised patient safety, and the costly failure of clinical programs.

Performance Comparison of Drift Correction Methods

Researchers have developed numerous machine learning strategies to detect and correct for drift. The table below summarizes the performance of several advanced methods as demonstrated in recent experimental studies.

Table 1: Performance Comparison of ML-Based Drift Correction Methods

| Method Name | Core Algorithm(s) | Reported Accuracy/Performance | Key Advantage | Primary Use Case |
|---|---|---|---|---|
| Incremental Domain-Adversarial Network (IDAN) with Iterative Random Forest [5] | Domain-Adversarial Training, Random Forest | ~91% accuracy in gas classification despite severe drift; ~30% improvement over non-adaptive baselines | Handles both abrupt and gradual drift via incremental learning | Sensor array data correction (e.g., E-noses) |
| Stacked Ensemble Framework [6] | GPR, XGBoost, ANN Stacking | R²: 0.978; Outperformed 26 individual regression models | Superior predictive accuracy for sensor signal optimization | Predicting biosensor responses during fabrication |
| Dynamic Calibration Curves with Adaptive Sliding Window (Adwin) [10] | Online Stochastic Gradient Descent (Adam), Adaptive Sliding Window | Accurately detected calibration drift onset in simulations and real-world clinical data | Provides actionable alerts and data windows for model updating | Clinical prediction model monitoring |
| Machine Learning-Optimized Graphene Biosensor [13] | Machine Learning (for design optimization) | Peak sensitivity of 1785 nm/RIU | Enhanced sensitivity and reproducibility through design-phase ML optimization | Optical biosensing for disease detection |

These methods can be broadly categorized. Model-centric approaches, like the Dynamic Calibration Curves, focus on continuously monitoring and updating software models to maintain their alignment with shifting data [10]. In contrast, sensor-hardware-centric approaches, such as the ML-optimized graphene biosensor, leverage ML to enhance the intrinsic stability and sensitivity of the physical sensor itself, making it more robust to drift from the outset [13]. Hybrid frameworks like IDAN combine real-time error correction with long-term model adaptation to address drift at multiple levels [5].

Experimental Protocols for Key Drift Correction Studies

To evaluate and compare these methods, researchers employ rigorous experimental protocols. Below are the detailed methodologies for two prominent studies.

Protocol: Incremental Domain-Adversarial Network (IDAN) for Sensor Drift

- Objective: To evaluate the efficacy of a novel framework combining an Iterative Random Forest for real-time error correction and an IDAN for long-term drift compensation on a benchmark sensor array dataset [5].

- Dataset: The Gas Sensor Array Drift (GSAD) dataset was used. This public benchmark contains data from 16 metal-oxide gas sensors exposed to six gases over 36 months, comprising 13,910 samples across 10 batches that capture chronological drift [5].

- Methodology:
  - Data Preprocessing: The 128-dimensional feature vectors per sample (including features like response amplitude and recovery time) were normalized.
  - Error Correction: An iterative Random Forest algorithm was applied to identify and correct abnormal sensor responses in real time by leveraging data from all sensor channels.
  - Drift Compensation: The processed data was fed into the IDAN. This network uses a domain-adversarial component to learn features that are invariant across different time domains (batches), while an incremental learning mechanism allows it to continuously adapt to new data without forgetting previously learned knowledge.
  - Evaluation: The model was trained on earlier batches and tested on later batches to simulate a real-world deployment. Performance was measured by classification accuracy for gas types and compared against static models and other drift-compensation methods.

- Outcome: The combined framework achieved a high classification accuracy (~91%) on later batches, demonstrating robust compensation for severe, long-term sensor drift [5].
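The chronological evaluation scheme in this protocol (train on early batches, test on later ones) can be sketched on synthetic data. This sketch is not IDAN or the iterative Random Forest:

- A nearest-centroid classifier stands in for the model.
- Two hypothetical gas classes drift along one feature axis.
- The "adaptive" variant simply re-fits its centroids on each batch after that batch has been scored. This test-then-train scheme assumes labels eventually become available.

```python
import random
from statistics import mean


def make_batch(rng, shift, n_per_class=50, sigma=0.5):
    """Hypothetical batch: two gas classes whose 2-D feature centroids drift by `shift` along x."""
    batch = []
    for label, cx in ((0, 0.0), (1, 4.0)):
        for _ in range(n_per_class):
            point = (cx + shift + rng.gauss(0.0, sigma), rng.gauss(0.0, sigma))
            batch.append((point, label))
    return batch


def fit_centroids(batch):
    """Nearest-centroid 'model': the mean feature vector of each class."""
    model = {}
    for label in {y for _, y in batch}:
        points = [x for x, y in batch if y == label]
        model[label] = (mean(p[0] for p in points), mean(p[1] for p in points))
    return model


def predict(model, x):
    return min(model, key=lambda c: (x[0] - model[c][0]) ** 2 + (x[1] - model[c][1]) ** 2)


def accuracy(model, batch):
    return sum(predict(model, x) == y for x, y in batch) / len(batch)


def chronological_eval(n_batches=6, drift_per_batch=1.0, seed=0):
    """Train on batch 0, then score every later batch in time order.

    static: never updated. adaptive: re-fitted on each batch after that batch is scored.
    """
    rng = random.Random(seed)
    batches = [make_batch(rng, b * drift_per_batch) for b in range(n_batches)]
    static = fit_centroids(batches[0])
    adaptive = static
    static_acc, adaptive_acc = [], []
    for batch in batches[1:]:
        static_acc.append(accuracy(static, batch))
        adaptive_acc.append(accuracy(adaptive, batch))
        adaptive = fit_centroids(batch)  # test-then-train update
    return static_acc, adaptive_acc
```

With these settings, the static model falls to roughly chance level on the last batches, while the re-fitted model stays accurate. This is the kind of gap a static-versus-adaptive comparison is meant to expose. Re-fitting only on the newest batch also discards older knowledge, which is what the incremental mechanism in IDAN is described as avoiding.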

Protocol: Stacked Ensemble for Biosensor Response Prediction

- Objective: To develop and validate a stacked ensemble ML framework for accurately predicting electrochemical biosensor responses based on fabrication parameters, thereby reducing experimental optimization time [6].

- Dataset: Experimental data from a previous study on an enzymatic glucose biosensor, featuring parameters like enzyme amount, crosslinker (glutaraldehyde) concentration, and pH values [6].

- Methodology:
  - Feature Definition: Five key fabrication parameters were defined as input features: enzyme amount, crosslinker amount, scan number of the conducting polymer, glucose concentration, and pH.
  - Model Training and Validation: A total of 26 regression algorithms from six families (linear, tree-based, kernel-based, Gaussian Process Regression (GPR), ANN, and stacked ensembles) were trained and evaluated using 10-fold cross-validation.
  - Ensemble Construction: A novel stacked ensemble was created by combining the predictions of the top-performing models, including GPR, XGBoost, and ANN.
  - Interpretability Analysis: Permutation feature importance and SHAP (SHapley Additive exPlanations) analysis were employed to interpret the model and understand the impact of each fabrication parameter on the sensor's signal.

- Outcome: The stacked ensemble model outperformed all individual models, achieving an R² value of 0.978, providing a highly accurate and interpretable tool for biosensor optimization [6].
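Permutation feature importance, one of the two interpretability methods named in this protocol, is simple enough to sketch directly. Shuffle one input column, re-score the model, and record how much the error grows. The sketch below is a generic, model-agnostic version, not the code used in [6]; SHAP is not shown.

Any callable that maps a feature row (a list, e.g. hypothetical "enzyme amount" and "pH" values) to a prediction can be passed as `model`. In practice, importances are best computed on held-out data.

```python
import random


def mse(model, X, y):
    """Mean squared error of `model` (a callable mapping a feature row to a prediction)."""
    return sum((model(row) - target) ** 2 for row, target in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=10, seed=0):
    """Average increase in MSE when each feature column is randomly shuffled.

    X is a list of feature rows, each a list. A larger increase means the model relies
    more heavily on that feature.
    """
    rng = random.Random(seed)
    baseline = mse(model, X, y)
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the target
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            increases.append(mse(model, X_perm, y) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances
```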

Visualizing Drift Correction Workflows

The following diagrams illustrate the logical workflows of two primary drift correction strategies, highlighting the role of ML in maintaining data integrity.

Model-Centric Clinical Prediction Monitoring

Diagram 1: Monitoring clinical prediction models for calibration drift. This model-centric workflow shows how a clinical prediction model is continuously monitored. A Dynamic Calibration Curve is updated in real-time using online gradient descent as new patient outcomes are observed. The associated error is fed to an Adaptive Sliding Window detector, which triggers an alert the moment a statistically significant increase in miscalibration is detected, prompting model updating [10].
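The detection half of this workflow can be sketched with a naive ADWIN-style change detector. This is a simplified re-implementation for illustration, not the code used in [10]. The published ADWIN algorithm uses compressed buckets for efficiency, whereas this version rescans the whole window on every update.

The error stream here is synthetic. The dynamic calibration curve that would produce real per-observation errors is not reproduced.

```python
import math
import random


class SimpleAdwin:
    """Naive O(n^2) variant of the ADWIN change detector (Bifet & Gavalda, 2007).

    Keeps a window of recent values assumed to lie in [0, 1] and drops its older part whenever
    two sub-windows have means differing by more than a Hoeffding-style bound.
    """

    def __init__(self, delta=0.002):
        self.delta = delta  # confidence parameter: smaller means fewer false alarms
        self.window = []

    def _find_cut(self):
        """Return the first split index whose sub-window means differ significantly, else None."""
        n = len(self.window)
        total = sum(self.window)
        head = 0.0
        for split in range(1, n):
            head += self.window[split - 1]
            n0, n1 = split, n - split
            m = 1.0 / (1.0 / n0 + 1.0 / n1)
            eps_cut = math.sqrt(math.log(4.0 * n / self.delta) / (2.0 * m))
            if abs(head / n0 - (total - head) / n1) > eps_cut:
                return split
        return None

    def update(self, value):
        """Add one observation; return True if a change was detected on this step."""
        self.window.append(value)
        detected = False
        cut = self._find_cut()
        while cut is not None:
            self.window = self.window[cut:]  # forget data from before the change
            detected = True
            cut = self._find_cut()
        return detected


def demo_error_stream(seed=1, n_before=200, n_after=200):
    """Hypothetical per-patient calibration errors that jump upward after n_before observations."""
    rng = random.Random(seed)
    return ([rng.uniform(0.0, 0.1) for _ in range(n_before)]
            + [rng.uniform(0.35, 0.45) for _ in range(n_after)])
```

The bound makes false alarms on a stationary stream rare. The price is a detection lag of several dozen observations after a shift of this size. The window retained after an alert plays the role of the data window offered for model updating.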

Sensor-Centric Data Correction Framework

Diagram 2: Correcting drift in physical sensor arrays. This sensor-centric framework processes data from a physical sensor array (e.g., an electronic nose). Raw, drifting data first passes through an Iterative Random Forest model for real-time error correction. The cleaned data is then fed into an Incremental Domain-Adversarial Network (IDAN), which performs long-term drift compensation and final classification, ensuring reliable output over time [5].

The Scientist's Toolkit: Essential Research Reagents and Materials

The development and validation of drift-resistant biosensors and models rely on a suite of specialized materials and computational tools.

Table 2: Key Research Reagents and Solutions for Drift Correction Studies

| Item Name | Function/Description | Application Context |
|---|---|---|
| Metal-Oxide Semiconductor (MOS) Sensor Array | A collection of sensors (e.g., TGS series) with partial specificity that generates multi-dimensional response data prone to drift. | Serves as a benchmark platform (e.g., in the GSAD dataset) for developing and testing drift compensation algorithms [5]. |
| Graphene-Based Sensing Platform | A sensing layer with exceptional electrical conductivity and surface area, often optimized by ML for enhanced initial sensitivity and stability [13]. | Used in high-sensitivity biosensors for disease detection (e.g., breast cancer), where drift can compromise diagnostic accuracy. |
| Enzymatic Biosensor Construct | A biosensor incorporating a biological element (e.g., glucose oxidase) immobilized on a transducer (e.g., with conducting polymers). | Provides experimental data for ML models that predict how fabrication parameters (enzyme amount, crosslinker concentration) affect sensor output and drift [6]. |
| Gas Sensor Array Drift (GSAD) Dataset | A publicly available benchmark dataset containing long-term (36 months) sensor data from 16 MOS sensors exposed to six gases. | A widely used benchmark for evaluating the long-term performance and adaptability of drift compensation algorithms [5]. |
| SHAP (SHapley Additive exPlanations) | A game-theoretic method for interpreting the output of any ML model, explaining the contribution of each input feature. | Used in model interpretability to understand which sensor parameters or input features are most responsible for predictions and potential drift [6]. |

The fight against data drift is a continuous process, not a one-time fix. As regulatory bodies like the FDA and EMA increase their scrutiny of AI and sensor-based tools, the ability to demonstrate robust, ML-powered drift management will become a critical component of regulatory submissions [12]. The methods compared here—from model monitoring to hardware optimization—provide a powerful toolkit for researchers to ensure that the data driving pharmaceutical innovation and clinical decisions remains trustworthy from the first measurement to the last.

Biosensors are analytical devices that combine a biological recognition element with a physicochemical transducer to detect target analytes, playing vital roles in medical diagnostics, environmental monitoring, and food quality control [14]. Despite their utility, biosensors suffer from several reliability challenges that can compromise data integrity, including sensor aging, environmental interference, and biofouling [15] [16]. These factors collectively contribute to sensor drift—the gradual deviation from a baseline signal despite constant analyte concentration—resulting in inaccurate measurements, reduced sensitivity, and false positives/negatives [17] [18].

Traditional approaches to mitigating drift rely on hardware improvements or frequent recalibration, which are often costly, time-consuming, and impractical for deployed sensors [15] [16]. The emergence of machine learning (ML) offers a transformative approach to drift correction by leveraging algorithms that identify complex patterns in sensor data, compensate for signal variations, and maintain accuracy over time [14] [19] [18]. This review systematically analyzes the root causes of biosensor drift and compares the performance of ML-driven correction methods against conventional alternatives, providing researchers with a framework for selecting appropriate mitigation strategies.

Root Causes of Biosensor Drift: Mechanisms and Impacts

Sensor Aging and Material Degradation

Sensor aging refers to the gradual deterioration of sensor components through electrochemical fatigue, material depletion, and bioreceptor denaturation. In electrochemical biosensors, repeated potential cycling causes electrode fouling through the accumulation of non-conductive reaction products, reducing electron transfer efficiency and active surface area [18]. Bioreceptors such as enzymes and antibodies lose activity over time due to thermal instability and conformational changes, diminishing binding affinity and specificity [14]. Nanomaterial-enhanced sensors, while offering improved sensitivity, exhibit unique aging patterns where nanoparticle aggregation or dissolution alters electrochemical properties [18]. Studies report that unmitigated aging can reduce signal amplitude by 30-60% over 2-4 weeks of continuous operation, severely impacting long-term reliability [18].

Environmental Shifts and Matrix Effects

Environmental factors—including temperature fluctuations, pH variations, humidity changes, and complex sample matrices—introduce significant signal variability. Temperature changes as small as 2-5°C can alter bioreceptor kinetics and binding affinities, leading to signal deviations of 10-25% in biosensors lacking thermal compensation [19]. In food safety applications, electrochemical sensors face matrix effects from proteins, lipids, and salts that non-specifically adsorb to sensor surfaces, creating diffusion barriers and interfering with target detection [20]. Optical biosensors experience refractive index changes in response to salinity or solvent composition, generating false signals in label-free detection systems [21]. These environmental interferences are particularly challenging for point-of-care and field-deployable sensors operating in uncontrolled conditions [18] [20].

Biofouling in Aqueous Environments

Biofouling involves the colonization of sensor surfaces by microorganisms (bacteria, microalgae) and the subsequent accumulation of extracellular polymeric substances (EPS). Together these form a complex biofilm that physically blocks sensing elements and reduces analyte access [15] [16]. The biofouling process occurs in distinct stages: initial molecular conditioning, microbial adhesion, EPS production, biofilm maturation, and macrofouling settlement [16].

In marine environments, moored observatory systems experience severe biofouling at depths of up to 50 meters. Conductivity-temperature sensors show 47% failure rates, primarily due to fouling-induced drift [15]. Fouling layers up to 30 mm thick dramatically increase hydrodynamic drag on sensor housings. They also degrade measurement accuracy through mechanisms that depend on the sensor type: optical sensors experience light scattering and absorption, electrochemical sensors exhibit modified diffusion kinetics, and conductivity sensors show altered cell constant values [15] [16].

Table 1: Comparative Impact of Different Drift Mechanisms on Biosensor Performance

| Drift Mechanism | Primary Effects | Typical Signal Variation | Time Scale |
|---|---|---|---|
| Sensor Aging | Reduced sensitivity, increased noise | 30-60% decrease | Weeks to months |
| Environmental Shifts | Signal baseline drift, specificity loss | 10-25% deviation | Minutes to hours |
| Biofouling | Sensitivity loss, response time increase | Up to 50% false readings | Days to weeks |

Machine Learning Approaches for Drift Correction

ML Algorithms for Different Drift Types

Machine learning techniques address biosensor drift through pattern recognition, predictive modeling, and signal compensation. Algorithm selection depends on drift characteristics and data availability.

For sensor aging, recurrent neural networks (RNNs) and Long Short-Term Memory (LSTM) networks effectively model temporal degradation patterns, learning from historical data to predict and correct age-related signal decay [14] [18]. Transfer learning approaches adapt models trained under laboratory conditions to field-deployed sensors, compensating for performance variations across individual devices [17].

Environmental shift correction employs supervised learning algorithms, including Support Vector Machines (SVM) and Random Forests (RF), which correlate auxiliary measurements (temperature, pH, conductivity) with signal variations to isolate and remove environmental effects [19] [20]. These models trained on multi-parameter datasets achieve 85-92% accuracy in compensating for matrix effects in complex samples like food extracts and wastewater [20].
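The core idea can be sketched in a few lines:

1. Fit the sensor signal as a function of both analyte concentration and an auxiliary measurement, here temperature.
2. Invert the fit to recover concentration from a new reading.

A linear least-squares model stands in for the SVM and Random Forest models named above. A linear temperature effect is assumed, and the coefficients used in testing are hypothetical.

```python
def solve_linear_system(a, b):
    """Solve a small dense system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_compensation(conc, temp, signal):
    """Least-squares fit of signal = b0 + b1*conc + b2*temp via the normal equations."""
    rows = [(1.0, c, t) for c, t in zip(conc, temp)]
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    aty = [sum(r[i] * s for r, s in zip(rows, signal)) for i in range(3)]
    return solve_linear_system(ata, aty)


def compensate(coef, signal, temp):
    """Invert the fitted model: estimate concentration from a signal and its measured temperature."""
    b0, b1, b2 = coef
    return (signal - b0 - b2 * temp) / b1
```

Nonlinear learners such as SVM or Random Forest replace the linear model when temperature interacts with concentration. The principle stays the same: the auxiliary measurement enters the model so that its effect can be removed.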

Biofouling mitigation utilizes unsupervised learning methods such as Principal Component Analysis (PCA) and k-means clustering to detect anomalous signal patterns indicative of fouling onset before significant accuracy degradation occurs [17] [16]. Convolutional Neural Networks (CNNs) analyze microscopic images of sensor surfaces to quantify biofilm coverage and trigger cleaning mechanisms [16].
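PCA-based fouling detection works in two steps. First, learn the dominant correlation structure of feature vectors from clean sensors. Then flag new vectors whose squared prediction error (SPE, or Q statistic) off that structure exceeds a limit.

Several choices in the sketch below are illustrative assumptions:

- The feature layout: three electrode responses that normally co-vary.
- Retaining a single principal component.
- A limit set at 1.5x the worst training SPE. A real monitor would usually set the limit statistically.

```python
import math


def _center(rows):
    """Column means and mean-centred copies of the rows."""
    mu = [sum(col) / len(rows) for col in zip(*rows)]
    return mu, [[x - m for x, m in zip(row, mu)] for row in rows]


def first_component(centred, iters=200):
    """Leading principal axis of centred data via power iteration on X^T X."""
    d = len(centred[0])
    v = [1.0 / math.sqrt(d)] * d
    for _ in range(iters):
        scores = [sum(a * b for a, b in zip(row, v)) for row in centred]            # X v
        w = [sum(s * row[j] for s, row in zip(scores, centred)) for j in range(d)]  # X^T X v
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return v


def spe(row, mu, v):
    """Squared prediction error (Q statistic): distance from the one-component PCA reconstruction."""
    xc = [x - m for x, m in zip(row, mu)]
    score = sum(a * b for a, b in zip(xc, v))
    return sum((x - score * b) ** 2 for x, b in zip(xc, v))


def fit_fouling_monitor(clean_rows, margin=1.5):
    """Return a function that flags rows whose SPE exceeds margin x the worst clean-training SPE."""
    mu, centred = _center(clean_rows)
    v = first_component(centred)
    limit = margin * max(spe(row, mu, v) for row in clean_rows)
    return lambda row: spe(row, mu, v) > limit
```

A row in which one electrode's response falls out of step with the others lands far from the learned axis, so it is flagged even if its overall magnitude looks normal.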

Comparative Performance of ML Correction Methods

Table 2: Performance Comparison of ML Algorithms for Biosensor Drift Correction

| ML Algorithm | Drift Type Addressed | Reported Performance | Limitations |
|---|---|---|---|
| PCA-SVM | Environmental shifts | 85-90% signal recovery | Requires labeled training data |
| LSTM Networks | Sensor aging | 75-88% long-term stability | Computationally intensive |
| Transfer Learning | Cross-device variations | 80-85% transfer accuracy | Needs substantial initial data |
| CNN | Biofouling detection | 90-95% classification accuracy | Limited to visual fouling assessment |
| Random Forest | Multi-factor drift | 87-93% compensation | Risk of overfitting without regularization |

Experimental Protocols for Drift Evaluation

Standardized Aging Assessment Protocol

Objective: Quantify signal degradation due to sensor aging under accelerated stress conditions.

Materials: Biosensors (n≥10 per group), potentiostat/impedance analyzer, environmental chamber, reference electrodes, buffer solutions.

Methodology:

- Baseline Characterization: Measure initial sensitivity, limit of detection, response time, and signal-to-noise ratio using standard analyte solutions.

- Accelerated Aging: Subject sensors to stress conditions (elevated temperature 37-45°C, continuous potential cycling, or extended storage).

- Periodic Performance Testing: At defined intervals (24h, 48h, 1 week, 2 weeks), recalibrate and compare current performance metrics against baseline.

- ML Model Training: Use time-series data from aging sensors to train LSTM networks, validating predictions against held-out test sensors.

- Effectiveness Evaluation: Quantify ML correction by comparing corrected signals against ground truth analyte concentrations using metrics like Mean Absolute Error (MAE) and R² values.

Using this protocol, ML-corrected sensors were reported to maintain 85% of their initial accuracy after 30 days, versus 40% for uncorrected sensors [18].
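The ML-training and evaluation steps of this protocol can be sketched with a deliberately simple stand-in for the LSTM. The sketch proceeds in three steps:

1. Fit an exponential sensitivity decay $s(t) = s_0 e^{-kt}$ to the periodic recalibrations (24 h, 48 h, 1 week, 2 weeks).
2. Use the fitted decay to correct later readings.
3. Score the corrected readings with MAE and R².

The decay model, its parameters, and the data are hypothetical. Extrapolating beyond the last recalibration assumes the fitted form continues to hold.

```python
import math


def fit_exponential_decay(times, sensitivities):
    """Fit s(t) = s0 * exp(-k t) by ordinary least squares on log(s); returns (s0, k)."""
    logs = [math.log(s) for s in sensitivities]
    mt = sum(times) / len(times)
    ml = sum(logs) / len(logs)
    slope = (sum((t - mt) * (lg - ml) for t, lg in zip(times, logs))
             / sum((t - mt) ** 2 for t in times))
    return math.exp(ml - slope * mt), -slope


def correct_reading(signal, t, s0, k, baseline=0.0):
    """Convert a raw signal at time t to concentration using the fitted, time-dependent sensitivity."""
    return (signal - baseline) / (s0 * math.exp(-k * t))


def mae(pred, truth):
    """Mean absolute error."""
    return sum(abs(p - y) for p, y in zip(pred, truth)) / len(truth)


def r_squared(pred, truth):
    """Coefficient of determination; can be negative when predictions are worse than the mean."""
    mean_y = sum(truth) / len(truth)
    ss_res = sum((y - p) ** 2 for p, y in zip(pred, truth))
    ss_tot = sum((y - mean_y) ** 2 for y in truth)
    return 1.0 - ss_res / ss_tot
```

Comparing `mae` and `r_squared` for corrected readings against readings converted with the day-0 sensitivity alone reproduces the corrected-versus-uncorrected comparison in the protocol.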

Environmental Interference Testing Protocol

Objective: Evaluate sensor resilience to environmental variables and ML compensation efficacy.

Materials: Biosensor array, environmental parameter controls (temperature, pH, ionic strength), data acquisition system, reference analytical method (e.g., HPLC for validation).

Methodology:

- Multivariate Testing: Systematically vary environmental parameters while measuring sensor response to known analyte concentrations.

- Interference Database Construction: Record sensor outputs across the parameter space to create a training dataset.

- Model Development: Train SVM or Random Forest models to predict true analyte concentration from sensor signals and environmental measurements.

- Cross-Validation: Assess model performance using k-fold cross-validation under previously unseen environmental conditions.

- Field Validation: Deploy ML-corrected sensors in real-world settings alongside reference methods to quantify practical improvement.

Studies implementing this approach demonstrated 90% reduction in temperature-induced drift and 80% reduction in matrix effects from complex samples [20].
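The Model Development and Cross-Validation steps can be sketched as follows, assuming a linear temperature effect on the signal. To keep the example dependency-free, it substitutes ordinary least squares for the SVM or Random Forest models named above. It also uses leave-one-temperature-out splitting as one way of testing on previously unseen environmental conditions. The interference database is synthetic.

```python
import itertools


def solve(a, b):
    """Solve the small dense system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back-substitution on the upper-triangular system
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_ols(features, targets):
    """Least squares via the normal equations (X^T X) w = X^T y, with an intercept term."""
    rows = [[1.0, *f] for f in features]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, targets)) for i in range(k)]
    return solve(xtx, xty)


def predict(w, f):
    return w[0] + sum(wi * fi for wi, fi in zip(w[1:], f))


# Hypothetical interference database: signal = 2.0 * conc + 0.05 * (T - 25) + small noise.
records = []  # (signal, temperature_C, true_conc_mM)
for i, (temp, conc) in enumerate(itertools.product((20, 25, 30, 35, 40), (1, 2, 3, 4, 5))):
    noise = 0.01 * ((i * 7) % 5 - 2)
    records.append((2.0 * conc + 0.05 * (temp - 25) + noise, float(temp), float(conc)))


def leave_one_condition_out(records):
    """Hold out each temperature in turn, so every test fold is an unseen condition."""
    model_err, naive_err = [], []
    for held in sorted({r[1] for r in records}):
        train = [r for r in records if r[1] != held]
        test = [r for r in records if r[1] == held]
        w = fit_ols([(s, t) for s, t, _ in train], [c for _, _, c in train])
        for s, t, c in test:
            model_err.append(abs(predict(w, (s, t)) - c))
            naive_err.append(abs(s / 2.0 - c))  # calibration that ignores temperature
    return sum(model_err) / len(model_err), sum(naive_err) / len(naive_err)


model_mae, naive_mae = leave_one_condition_out(records)
print(f"temperature-compensated MAE: {model_mae:.3f} mM; uncompensated MAE: {naive_mae:.3f} mM")
```

A non-linear learner such as a Random Forest would slot in where `fit_ols` and `predict` are used. Tree ensembles do not extrapolate beyond the training range, however, so holding out the extreme temperatures is a stricter test for them than for this linear sketch.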

Controlled Biofouling Evaluation Protocol

Objective: Quantify biofouling impact and test ML-enabled detection/compensation strategies.

Materials: Sensors with transparent viewing windows, flow cell system, bacterial cultures (e.g., Pseudomonas aeruginosa), microscopy imaging, nutrient media.

Methodology:

- Biofilm Development: Immerse sensors in nutrient-rich aqueous environments inoculated with relevant microorganisms under controlled flow conditions.

- Continuous Monitoring: Record sensor signals while simultaneously documenting biofilm accumulation via microscopic imaging and biomass quantification.

- Feature Extraction: Identify signal characteristics (response time, amplitude reduction, noise patterns) correlated with fouling progression.

- ML Model Training: Develop CNN models to classify fouling state from sensor signals and/or images, or PCA models to detect anomalous patterns indicating fouling onset.

- Compensation Testing: Implement ML-based signal correction and compare accuracy against unfouled baseline performance.

This protocol enabled early detection of biofouling 24-48 hours before significant signal degradation, with ML models achieving 92% accuracy in fouling state classification [15] [16].
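The Feature Extraction step might look like the sketch below. It assumes a first-order step response and extracts three of the signal characteristics listed above: plateau amplitude, t90 response time, and plateau noise. The fouling thresholds in `fouling_suspected` are hypothetical. In practice they would be learned, for example by the CNN or PCA models mentioned in the protocol, which are not shown here.

```python
import math
import statistics


def step_response(amplitude, tau, n=80, ripple=0.005):
    """Hypothetical first-order response to an analyte step (one sample per minute),
    with a small deterministic ripple standing in for measurement noise."""
    return [amplitude * (1 - math.exp(-k / tau)) + ripple * math.sin(1.7 * k) for k in range(n)]


def extract_features(signal, tail=10):
    """Plateau amplitude, t90 response time (in samples), and plateau noise (std dev)."""
    plateau = statistics.fmean(signal[-tail:])
    t90 = next(k for k, v in enumerate(signal) if v >= 0.9 * plateau)
    return {"amplitude": plateau, "t90": t90, "noise": statistics.stdev(signal[-tail:])}


def fouling_suspected(baseline, current, max_amp_loss=0.2, max_t90_ratio=1.5):
    """Flag fouling when amplitude drops or the response slows beyond illustrative limits."""
    amp_loss = 1 - current["amplitude"] / baseline["amplitude"]
    return amp_loss > max_amp_loss or current["t90"] > max_t90_ratio * baseline["t90"]


clean = extract_features(step_response(amplitude=1.0, tau=5))
fouled = extract_features(step_response(amplitude=0.7, tau=12))  # biofilm: smaller, slower response
print(clean)
print(fouled)
print("fouling suspected:", fouling_suspected(clean, fouled))
```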

Visualization of Drift Mechanisms and ML Correction

ML Correction for Biosensor Drift. This diagram illustrates the relationship between primary drift mechanisms, their signal manifestations, and the machine learning approaches most effective for their correction.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Materials for Biosensor Drift Studies

| Item | Function | Application Examples |
|---|---|---|
| Standard Analyte Solutions | Reference materials for calibration and accuracy assessment | Glucose, hydrogen peroxide, specific antigens for biomarker detection |
| Artificial Test Matrices | Simulate complex sample environments to evaluate matrix effects | Synthetic wastewater, artificial serum, food extracts |
| Reference Sensors | Provide ground truth measurements for ML model training | Commercial pH, conductivity, temperature loggers |
| Microbial Cultures | Generate controlled biofouling for evaluation studies | Pseudomonas aeruginosa, Escherichia coli, marine diatoms |
| Nanomaterial Modifications | Enhance sensor stability and reduce aging effects | Graphene, carbon nanotubes, metal nanoparticles |
| Antifouling Coatings | Physical/chemical barriers against biofilm formation | PEG-based polymers, zwitterionic coatings, copper surfaces |
| Data Acquisition Systems | Collect high-frequency sensor data for ML analysis | Potentiostats, impedance analyzers, optical detectors |

This analysis indicates that sensor aging, environmental shifts, and biofouling are distinct but interconnected challenges to biosensor reliability, each calling for a specialized ML approach. Sensor aging benefits from temporal modeling with LSTM networks. Environmental interference is best addressed by multivariate algorithms such as Random Forests. Biofouling calls for anomaly detection methods such as PCA, or for CNN-based fouling classification. Integrating explainable AI (XAI) techniques improves model interpretability, allowing researchers to understand the rationale behind each correction and to build trust in ML-corrected outputs [14].

Future directions include developing hybrid models that simultaneously address multiple drift mechanisms, creating standardized drift databases for algorithm benchmarking, and implementing edge AI for real-time correction in resource-limited settings [19] [18]. As ML-powered biosensors evolve toward greater autonomy and reliability, they hold immense potential to transform long-term monitoring applications across healthcare, environmental science, and food safety, provided researchers continue to advance both algorithmic sophistication and fundamental understanding of drift phenomena.

In machine learning (ML)-enhanced biosensing, model drift is a critical challenge: analytical performance degrades over time, leading to faulty decisions and inaccurate predictions [22]. Biosensors, particularly those operating in dynamic biological environments, are inherently susceptible to such drift. For researchers and drug development professionals, understanding and mitigating drift is paramount for developing robust, clinically viable diagnostic and monitoring systems. Drift occurs when the statistical properties of the data, or the underlying relationships a model learned during training, change in the real world. This situation is often described as a mismatch between the model and the data it currently encounters [23].

This guide objectively compares the performance of different algorithmic and engineering strategies designed to correct for three primary types of drift: concept drift, data drift, and the broader process-model mismatch. We frame this comparison within a broader thesis on performance evaluation, focusing on experimental data from recent scientific literature to provide a clear, evidence-based resource for scientists developing the next generation of intelligent biosensors.

Defining the Drift Spectrum

In machine learning for biosensing, it is crucial to distinguish between the different types of drift, as their causes and remedies differ. The table below summarizes the core definitions and characteristics.

Table 1: Types of Model Drift in Biosensing

| Drift Type | Core Definition | Mathematical Description | Common Causes in Biosensing |
|---|---|---|---|
| Concept Drift | Change in the relationship between input features and the target variable [24] [25]. | $P_{t_1}(Y \mid X) \neq P_{t_2}(Y \mid X)$ [25] | Changing biological pathways, evolving pathogen strains, altered host responses [24]. |
| Data Drift (Covariate Shift) | Change in the distribution of the input data itself, while the input-output relationship remains the same [26] [25]. | $P_{\text{train}}(X) \neq P_{\text{test}}(X)$ [26] | Sensor fouling, reagent lot variation, environmental condition changes (e.g., temperature) [22] [17]. |
| Process-Model Mismatch | A discrepancy between a mathematical model's predictions and the actual bioprocess dynamics [27] [28]. | N/A (a systems biology challenge) | Unmodeled cellular dynamics, unexpected metabolic burdens, genetic circuit inefficiencies [27]. |

Concept Drift

Concept drift refers to a change in the statistical relationship between the input features and the target variable a model is trying to predict, which invalidates the model's initial assumptions [24]. In security analytics, for instance, it appears when malware authors change their obfuscation techniques, making models trained on past malware families less effective [24]. In biosensing, a similar phenomenon can occur if the relationship between a biomarker concentration and a disease state shifts. It can also occur if a bacterial strain evolves and changes the spectroscopic or electrochemical signature that a model was trained to recognize [17].

Data Drift

Data drift, also known as covariate shift, happens when the distribution of the input features changes between the training and deployment phases, but the conditional distribution of the output given the input remains consistent [26] [25]. For a biosensor, this could be caused by the gradual degradation of a sensor's physical components, leading to a baseline shift in the electrochemical signal, or by changes in the sample matrix that affect the background signal [17] [6]. The model's fundamental logic may still be sound, but its performance degrades because it is receiving input data that is statistically different from what it was trained on.
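Data drift of this kind can be screened by comparing the distribution of a deployment-time signal with the training distribution. The sketch below implements two of the distribution-based measures in Table 4, the two-sample Kolmogorov-Smirnov statistic and the 1-D Wasserstein distance, in plain Python. The Gaussian baseline currents are synthetic. For p-values and unequal sample sizes, `scipy.stats.ks_2samp` and `scipy.stats.wasserstein_distance` provide library implementations.

```python
import bisect
import random


def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between the two empirical CDFs."""
    a, b = sorted(a), sorted(b)
    gap = 0.0
    for x in a + b:  # the supremum is attained at one of the observed values
        fa = bisect.bisect_right(a, x) / len(a)
        fb = bisect.bisect_right(b, x) / len(b)
        gap = max(gap, abs(fa - fb))
    return gap


def wasserstein_1d(a, b):
    """Wasserstein-1 distance between two equal-size 1-D samples:
    the mean absolute difference between their sorted values."""
    if len(a) != len(b):
        raise ValueError("this sketch only handles equal sample sizes")
    return sum(abs(x - y) for x, y in zip(sorted(a), sorted(b))) / len(a)


rng = random.Random(42)
training = [rng.gauss(1.00, 0.10) for _ in range(500)]  # baseline currents seen in training
same = [rng.gauss(1.00, 0.10) for _ in range(500)]      # deployment data without drift
drifted = [rng.gauss(1.15, 0.10) for _ in range(500)]   # deployment data with a baseline shift

for name, sample in (("no drift", same), ("drifted", drifted)):
    print(f"{name}: KS = {ks_statistic(training, sample):.3f}, "
          f"W1 = {wasserstein_1d(training, sample):.3f}")
```

The two measures complement each other. The KS statistic is scale-free (a fraction between 0 and 1), while the Wasserstein distance is expressed in the signal's own units, which makes it easier to relate to a tolerable baseline offset.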

Process-Model Mismatch

While related, process-model mismatch (PMM) is often discussed in the context of controlling biological systems, such as in bioreactor optimization or synthetic biology. It describes a significant discrepancy between a mathematical model's predictions and the actual bioprocess [27] [28]. For example, in a microbial bioprocess engineered for isopropanol production, a PMM can arise from prediction errors in cell growth rates, leading to suboptimal timing for pathway activation and, consequently, reduced product yield [27]. This represents a systemic mismatch at the process level, which can be mitigated through hybrid control strategies that combine in-silico models with in-cell genetic circuits.

Experimental Comparisons of Drift Correction Strategies

Researchers have developed various computational and biological strategies to combat drift. The following section compares the experimental performance of these approaches, providing key data on their efficacy.

Algorithmic Performance for Signal Correction

A comprehensive 2025 study systematically evaluated 26 regression algorithms for their ability to model and predict electrochemical biosensor responses, a key step in compensating for data drift [6]. The following table summarizes the performance of the top-performing model categories.

Table 2: Performance Comparison of ML Algorithms for Biosensor Signal Prediction [6]

| Model Category | Key Algorithms Tested | Best Performing Model | Reported R² | Key Advantage for Biosensing |
|---|---|---|---|---|
| Tree-Based | Random Forest, XGBoost, LightGBM | XGBoost | >0.95 [6] | High predictive accuracy, handles complex parameter interactions. |
| Kernel-Based | Support Vector Regression (SVR) | SVR | >0.90 [6] | Effective in high-dimensional spaces, good generalization. |
| Gaussian Process | Gaussian Process Regression (GPR) | GPR | >0.92 [6] | Provides uncertainty estimates with predictions. |
| Neural Networks | Multi-Layer Perceptron (MLP) | MLP (with single hidden layer) | >0.90 [6] | Models complex non-linear relationships. |
| Stacked Ensemble | Stack of GPR, XGBoost, and ANN | Novel Stacked Ensemble | >0.97 [6] | Highest accuracy, leverages strengths of multiple models. |

Experimental Protocol: The study used a 10-fold cross-validation on a dataset of enzymatic glucose biosensor responses. The features included fabrication and operational parameters such as enzyme amount, crosslinker (glutaraldehyde) amount, and pH. The target variable was the electrochemical current response. Performance was evaluated using R², RMSE, MAE, and MSE [6].

Key Insight: The stacked ensemble model demonstrated superior performance by combining the strengths of GPR, XGBoost, and ANN, achieving an R² value greater than 0.97. This highlights the potential of hybrid ML approaches to create highly robust software-based drift correction systems [6].
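The core idea of stacking is to train a meta-learner on out-of-fold predictions, so it never sees predictions a base model made on its own training data. That idea can be sketched without external libraries. This is a simplified stand-in, not the study's model. The base learners are straight-line regression and k-nearest-neighbour regression instead of GPR, XGBoost, and ANN. The meta-learner is a single convex weight chosen by grid search, and the data are synthetic.

```python
import math


def fit_linear(xs, ys):
    """Base model 1: straight-line least squares."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    return lambda x: a + b * x


def fit_knn(xs, ys, k=3):
    """Base model 2: k-nearest-neighbour regression (mean of the k closest targets)."""
    pairs = list(zip(xs, ys))

    def predict(x):
        nearest = sorted(pairs, key=lambda p: abs(p[0] - x))[:k]
        return sum(y for _, y in nearest) / k

    return predict


def out_of_fold(xs, ys, fit, n_folds=10):
    """Predict every sample with a model that never saw it (k-fold cross-validation)."""
    preds = [0.0] * len(xs)
    for fold in range(n_folds):
        train = [i for i in range(len(xs)) if i % n_folds != fold]
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        for i in range(fold, len(xs), n_folds):
            preds[i] = model(xs[i])
    return preds


def mse(y, p):
    return sum((a - b) ** 2 for a, b in zip(y, p)) / len(y)


# Hypothetical response surface: a linear trend plus a non-linear component.
xs = [0.25 * i for i in range(80)]
ys = [0.5 * x + math.sin(2 * x) for x in xs]

oof_lin = out_of_fold(xs, ys, fit_linear)
oof_knn = out_of_fold(xs, ys, fit_knn)

# Meta-learner: convex weight w minimising out-of-fold MSE of w*linear + (1 - w)*kNN.
weights = [i / 20 for i in range(21)]
best_w = min(weights, key=lambda w: mse(ys, [w * a + (1 - w) * b for a, b in zip(oof_lin, oof_knn)]))
stacked = [best_w * a + (1 - best_w) * b for a, b in zip(oof_lin, oof_knn)]

print(f"linear MSE={mse(ys, oof_lin):.3f}  kNN MSE={mse(ys, oof_knn):.3f}  "
      f"stacked (w={best_w:.2f}) MSE={mse(ys, stacked):.3f}")
```

The weight is selected on the same out-of-fold predictions it is scored on, so the reported stacked MSE is slightly optimistic. Wrapping the selection in an outer cross-validation loop would give an unbiased estimate. In scikit-learn, `StackingRegressor` implements the general version of this pattern.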

Bio-Hybrid Controller Performance for Process-Model Mismatch

Beyond pure computational methods, synthetic biology offers innovative "bio-hybrid" solutions. The Hybrid In Silico/In-Cell Controller (HISICC) architecture combines model-based optimization with autonomous genetic circuits inside engineered cells to correct for PMM [27] [28]. The table below compares strains with and without this technology.

Table 3: Performance of HISICC vs. No-Feedback Systems in Engineered E. coli

| Engineered System / Strain | Control Strategy | Target Product | Key Performance Metric | Robustness to PMM |
|---|---|---|---|---|
| TA1415 / FA2 (No-Feedback) | In-silico feedforward only [27] [28] | Isopropanol / Fatty Acids | Baseline Yield | Low: Yield drops significantly under growth-rate PMM [27] [28]. |
| TA2445 (HISICC) | In-silico + Cell Density Feedback [27] | Isopropanol | Improved Yield | High: Effectively compensates for PMM by modifying pathway activation timing [27]. |
| FA3 (HISICC) | In-silico + Malonyl-CoA Feedback [28] | Fatty Acids | 27% Higher Yield vs. FA2 [28] | High: Slows cytotoxic enzyme accumulation before it reaches critical levels [28]. |

Experimental Protocol for HISICC:

- Strain Engineering: Engineer producer strains (e.g., E. coli) with genetic circuits. For example, TA2445 includes a metabolic toggle switch (MTS) and a quorum-sensing circuit to autonomously activate it based on cell density [27]. FA3 incorporates a sensor device using the FapR transcription factor to respond to malonyl-CoA and regulate enzyme expression [28].

- Mathematical Modeling: Construct mechanistic models (e.g., two-compartment or Monod-based growth models) of the strains to design in-silico feedforward controllers that optimize inducer inputs (e.g., IPTG) [27] [28].

- Simulation & Validation: Conduct multi-round simulations assuming various magnitudes of PMM (e.g., in cell growth rates or enzyme expression). Compare the product yields of HISICC-equipped strains against no-feedback control strains to evaluate robustness [27] [28].
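The effect of growth-rate PMM on pathway-activation timing can be illustrated with a short simulation. This sketch substitutes a closed-form logistic growth curve for the Monod-based models used in the cited work. It idealizes the in-cell feedback as a switch that fires exactly when the true cell density reaches a threshold. All parameter values are hypothetical.

```python
import math

K, X0 = 10.0, 0.1     # carrying capacity and inoculum density (OD units; hypothetical)
X_SWITCH = 5.0        # density at which the pathway should be switched on (hypothetical)
MU_NOMINAL = 0.5      # growth rate assumed by the in-silico model, 1/h (hypothetical)


def density(t, mu):
    """Closed-form logistic growth curve, a simple stand-in for Monod-type models."""
    return K / (1 + (K / X0 - 1) * math.exp(-mu * t))


def time_to_reach(x, mu):
    """Invert the logistic curve: the time at which density equals x."""
    return math.log((K / X0 - 1) / (K / x - 1)) / mu


# Feedforward: the switching time is fixed in advance from the nominal model.
t_feedforward = time_to_reach(X_SWITCH, MU_NOMINAL)

for mismatch in (0.7, 1.0, 1.3):  # true growth rate = mismatch * nominal (PMM)
    mu_true = mismatch * MU_NOMINAL
    x_ff = density(t_feedforward, mu_true)   # density when the open-loop schedule fires
    t_fb = time_to_reach(X_SWITCH, mu_true)  # idealized density-triggered switch
    print(f"growth x{mismatch}: feedforward fires at X={x_ff:.2f}; "
          f"feedback fires at X={density(t_fb, mu_true):.2f} (t={t_fb:.1f} h)")
```

Under these assumed parameters, the feedforward schedule fires at the intended density only when the real growth rate matches the model. The density-triggered switch fires on target regardless of the mismatch, which is the intuition behind adding cell-density feedback in TA2445.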

The following diagram illustrates the logical workflow and components of a HISICC system for regulating a metabolic pathway, as demonstrated in the fatty acid production strain FA3 [28].

Diagram 1: HISICC for Metabolic Regulation

Detection and Mitigation Methodologies

A robust drift management strategy requires both detecting the presence of drift and implementing a mitigation protocol. The following table outlines standard methods used in the field.

Table 4: Drift Detection Methods and Mitigation Protocols

| Method Category | Specific Methods & Algorithms | Brief Description | Best for Drift Type |
|---|---|---|---|
| Statistical Process Control | DDM (Drift Detection Method), EDDM (Early DDM) [24] | Monitors the model's error rate over time; triggers warning/drift phase upon passing set thresholds [24]. | Concept Drift |
| Windowing & Change Detection | ADWIN (ADaptive WINdowing) [24] [26], KSWIN (Kolmogorov-Smirnov Windowing) [24] | Maintains a window of recent data and detects significant statistical changes between older and newer data in the window [24]. | Concept & Data Drift |
| Distribution-based Tests | Kolmogorov-Smirnov (K-S) Test [22], Wasserstein Distance [22] | Measures whether two data sets originate from the same distribution or quantifies the "distance" between them [22]. | Data Drift |

| Mitigation Strategy | Protocol Details | Resource Intensity | References |
|---|---|---|---|
| Periodic Retraining | Retrain models on a fixed schedule using the most recent data. | Medium (Requires labeled data and compute) | [22] [25] |
| Automated Drift Detection & Retraining | Use detection algorithms (e.g., ADWIN) to trigger retraining automatically only when drift is detected. | High (Requires integrated MLOps pipeline) | [22] [26] |
| Online Learning | Update models incrementally with each new data point as it arrives. | Low to Medium | [22] |
| Hybrid Control (HISICC) | Implement a bio-hybrid system with in-cell feedback controllers to handle intracellular PMM. | Very High (Requires genetic engineering) | [27] [28] |
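The DDM entry in Table 4 can be sketched in a few lines. The version below follows the usual formulation. It tracks the running error rate p and its standard deviation s = sqrt(p(1 - p)/n), and remembers the minimum of p + s. It signals a warning when p + s ≥ p_min + 2 s_min and drift when p + s ≥ p_min + 3 s_min. The 30-sample burn-in, the zero-variance guard, and the synthetic error stream are assumptions of this sketch. The reported warning is simply the first one seen; production libraries also reset their statistics after a detected drift.

```python
import math


def ddm(errors, min_samples=30, warn_k=2.0, drift_k=3.0):
    """Drift Detection Method over a stream of 0/1 prediction errors.

    Returns (index of first warning, index of first drift); None where not triggered.
    """
    n = n_err = 0
    p_min = s_min = math.inf
    warning = None
    for i, e in enumerate(errors):
        n += 1
        n_err += e
        p = n_err / n                   # running error rate
        s = math.sqrt(p * (1 - p) / n)  # its binomial standard deviation
        if n < min_samples or s == 0.0:
            continue  # wait for a burn-in period and a non-degenerate estimate
        if p + s < p_min + s_min:
            p_min, s_min = p, s         # lowest error level seen so far
        if p + s >= p_min + drift_k * s_min:
            return warning, i
        if warning is None and p + s >= p_min + warn_k * s_min:
            warning = i
    return warning, None


# Hypothetical stream: a 10% error rate for 500 samples, then 50% after the concept changes.
stream = [1 if i % 10 == 0 else 0 for i in range(1, 501)] + [i % 2 for i in range(200)]
warning_at, drift_at = ddm(stream)
print(f"warning at sample {warning_at}, drift at sample {drift_at}")
```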

The Scientist's Toolkit: Research Reagent Solutions

Implementing the experimental protocols described in this guide requires specific biological and computational reagents. The following table details key solutions used in the cited research.

Table 5: Essential Research Reagents for Drift Correction Studies

| Reagent / Material | Function in Experiment | Example Usage |
|---|---|---|
| Engineered E. coli Strains | Production chassis with integrated genetic circuits for feedback control. | Strains TA1415, TA2445 (for IPA production) [27]; Strains FA2, FA3 (for fatty acid production) [28]. |
| Inducer Molecules (e.g., IPTG) | External input to tune genetic circuit activity and enzyme expression; optimized by the in-silico controller. | Used to induce metabolic toggle switch in TA1415 [27] and initiate ACC expression in FA3 [28]. |
| Acylated Homoserine Lactone (AHL) | Intercellular signaling molecule for quorum sensing; enables cell-density feedback. | Used in strain TA2445 to autonomously activate the metabolic pathway at a critical cell density [27]. |
| Transcription Factors (e.g., FapR) | Intracellular biosensing components that detect metabolite levels and regulate gene expression. | FapR in FA3 senses malonyl-CoA concentration and triggers LacI expression to repress ACC, creating negative feedback [28]. |
| ML Drift Detection Libraries (Python) | Software packages for implementing statistical drift detection and monitoring. | The Kolmogorov-Smirnov test, ADWIN, and the Population Stability Index (PSI) are popular methods implemented in open-source Python drift-detection tools [22]. |
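PSI compares binned frequencies of a reference sample and a current sample, PSI = Σ (a_i - e_i) ln(a_i / e_i). The sketch below assumes decile bins taken from the reference sample and a small floor on empty bins. The frequently quoted rule of thumb (below 0.1 stable, above 0.25 a significant shift) is an industry convention, not a threshold from the cited work, and the signals are synthetic.

```python
import bisect
import math
import random


def psi(expected, actual, n_bins=10, eps=1e-6):
    """Population Stability Index using bin edges from the reference sample's quantiles."""
    ref = sorted(expected)
    edges = [ref[len(ref) * q // n_bins] for q in range(1, n_bins)]

    def fractions(sample):
        counts = [0] * n_bins
        for v in sample:
            counts[bisect.bisect_right(edges, v)] += 1
        return [max(c / len(sample), eps) for c in counts]  # floor avoids log(0)

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


rng = random.Random(7)
reference = [rng.gauss(1.0, 0.1) for _ in range(1000)]  # training-period signal (synthetic)
stable = [rng.gauss(1.0, 0.1) for _ in range(1000)]     # no drift
shifted = [rng.gauss(1.1, 0.1) for _ in range(1000)]    # baseline shift of one standard deviation
print(f"PSI stable = {psi(reference, stable):.3f}, PSI shifted = {psi(reference, shifted):.3f}")
```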

The global biosensors market, projected to grow from USD 31.8 billion in 2025 to USD 76.2 billion by 2035, is experiencing a paradigm shift driven by stringent regulatory requirements and the demand for reliable, real-time data across healthcare, environmental monitoring, and food safety [29]. A significant challenge impeding this growth is sensor drift, where a biosensor's output gradually deviates from its true value over time due to environmental interference, biofouling, or component degradation. This drift poses substantial risks, particularly in medical diagnostics and continuous monitoring, where inaccuracies can directly impact patient health and regulatory compliance [30] [29].

Machine learning (ML) is emerging as a transformative solution, moving biosensors from static measurement tools to self-correcting, intelligent systems. This guide objectively compares the performance of various ML-driven drift correction methodologies, providing researchers and drug development professionals with experimental data and protocols to evaluate these advanced systems within a rigorous performance evaluation framework [19].

Market and Regulatory Landscape

Growth Drivers and Application Segments

The strong market momentum is sustained by the rising burden of chronic diseases, an increased emphasis on preventive care, and the integration of biosensors into point-of-care diagnostics and wearable health technologies [29]. The medical biosensor segment dominates, holding a 62.0% revenue share, with glucose sensors alone accounting for over 55% of this segment's value due to their critical role in diabetes management [29]. Non-medical applications in food safety, environmental monitoring, and agriculture are also expanding rapidly, further amplifying the need for reliable, long-term sensing [31].

Table: Global Biosensors Market Overview (2025-2035)

| Metric | Value | Context |
|---|---|---|
| Market Size (2025) | USD 31.8 Billion | Initial baseline market value [29] |
| Projected Market Size (2035) | USD 76.2 Billion | Forecasted value at end of period [29] |
| CAGR (2025-2035) | 9.1% | Compound Annual Growth Rate [29] |
| Leading Segment | Blood Glucose Biosensors | Driven by global diabetes prevalence [29] |
| Key Growth Region | Asia-Pacific | Rapidly expanding market [29] |
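As a consistency check on the table, the growth rate implied by the 2025 and 2035 market sizes follows from the standard compound-annual-growth-rate formula over the 10-year span, where $V_{2025}$ and $V_{2035}$ denote the market values in those years:

```latex
\mathrm{CAGR} = \left(\frac{V_{2035}}{V_{2025}}\right)^{1/10} - 1
             = \left(\frac{76.2}{31.8}\right)^{1/10} - 1
             \approx 2.396^{0.1} - 1 \approx 0.091
```

The result, about 9.1% per year, matches the reported CAGR.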

The Regulatory Hurdle of Sensor Drift

A primary challenge for commercial and clinical adoption is the stringent regulatory environment for medical devices, which requires extensive testing and validation to ensure safety and effectiveness [29]. Sensor drift introduces a dynamic variable that can compromise device accuracy throughout its operational lifespan, creating a significant barrier to regulatory approval. Furthermore, the stability and reproducibility of biosensors under fluctuating environmental conditions remain significant technical obstacles [29]. Overcoming these hurdles necessitates robust, embedded correction mechanisms, making ML-based drift compensation not just a technical improvement but a critical enabler for market entry and regulatory compliance.

Comparative Analysis of ML-Driven Drift Correction Methodologies

This section compares emerging intelligent calibration approaches against traditional methods, with performance data summarized from recent studies.

AutoML Calibration for Indoor Air Quality Sensors

A novel automated machine learning (AutoML) framework was developed to calibrate low-cost indoor PM2.5 sensors, which are highly susceptible to interference from environmental variables like humidity [30].

- Experimental Protocol: The study was conducted in a controlled indoor chamber using two different sensor models exposed to diverse pollution sources. The multi-stage calibration connected low-cost field sensors to intermediate drift-correction reference sensors and a reference-grade instrument. Crucially, it applied separate calibration models for low and high concentration ranges to handle the non-linear sensor response [30].

- Performance Data: The AutoML-driven calibration significantly improved sensor performance, achieving a strong correlation with reference measurements (R² > 0.90). Error metrics were substantially reduced, with the root-mean-square error (RMSE) and mean absolute error (MAE) roughly halved relative to uncalibrated data. Bias was effectively minimized, yielding calibrated readings closely aligned with the reference instrument [30].

AI-Enhanced Data Processing and Signal Interpretation

Beyond specific calibration frameworks, AI and ML algorithms are being deeply integrated into the biosensor data pipeline to enhance signal integrity [19].

- Algorithm Diversity: ML subsets like supervised learning (using labeled data for classification/regression), unsupervised learning (for uncovering hidden structures in unlabeled data), and reinforcement learning (where an agent learns optimal actions through trial and error in a dynamic environment) are all being applied to biosensor data [19].

- Common ML Models: Key algorithms demonstrating success in biosensor applications include:

  - Support Vector Machines (SVM): Effective for classification tasks, such as identifying healthy versus diseased states from complex sensor data [19].

  - Random Forests (RF): An ensemble method that reduces overfitting and improves generalization by aggregating multiple decision trees [19].

  - k-Nearest Neighbors (k-NN): A simple yet effective method for classification and regression in scenarios with complex decision boundaries [19].
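To make the k-NN description concrete, the sketch below classifies a new measurement by majority vote among its k nearest training points. The two features and all values are hypothetical. The features are assumed to be already normalized, because k-NN relies on raw distances and an unscaled feature would dominate the vote.

```python
import math
from collections import Counter


def knn_classify(train, query, k=3):
    """Return the majority label among the k training points nearest (Euclidean) to `query`.

    `train` is a list of (feature_tuple, label) pairs.
    """
    nearest = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


# Hypothetical normalized features: (peak current, impedance change).
train = [
    ((0.20, 0.10), "healthy"), ((0.25, 0.15), "healthy"), ((0.30, 0.05), "healthy"),
    ((0.80, 0.70), "diseased"), ((0.75, 0.85), "diseased"), ((0.90, 0.75), "diseased"),
]
print(knn_classify(train, (0.28, 0.12)))  # → healthy
```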

Table: Performance Comparison of ML-Driven Biosensor Correction Systems

| Correction Method / Technology | Key Advantage | Reported Performance Uplift | Example Application |
|---|---|---|---|
| AutoML Multi-Stage Calibration [30] | Automated model selection; Handles non-linearity via range-specific models | R² > 0.90; RMSE & MAE reduced by ~50% | Low-cost PM2.5 sensor calibration |
| Support Vector Machines (SVM) [19] | Powerful non-linear classification via kernel functions | High accuracy in healthy vs. diseased state classification | Medical diagnostics from complex sensor data |
| Random Forests (RF) [19] | Reduces overfitting; robust generalization | Improved prediction accuracy & stability on unseen data | Analytical chemistry, complex mixture analysis |
| Deep Learning (DL) [19] | Automated feature extraction from raw data | Enhanced sensitivity & specificity by filtering noise | Image-based sensors, EEG signal processing |
| Reinforcement Learning (RL) [19] | Adaptive, real-time optimization in dynamic environments | Maximizes long-term accuracy and sensor lifetime | Implantable sensors for continuous monitoring |

Experimental Protocols for Drift Correction Validation

For researchers aiming to validate ML-based drift correction methods, the following detailed protocols provide a foundation for rigorous experimental design.

Protocol for Environmental Sensor Calibration

This protocol is adapted from the AutoML PM2.5 calibration study [30].

Setup and Instrumentation:

- Device Under Test (DUT): Deploy the low-cost biosensor(s) to be calibrated.

- Reference Network: Co-locate the DUT with intermediate drift-correction reference sensors and a primary reference-grade instrument (e.g., a gravimetric sampler for PM2.5).

- Environmental Control: Combine tests in a controlled chamber (e.g., for temperature and humidity) with exposure to natural ambient conditions and diverse, relevant pollution sources to reproduce real-world variability.

Data Collection:

- Collect simultaneous, time-synchronized data from the DUT and the reference instrument across the entire intended measurement range of the sensor.

- Ensure the dataset captures a wide variety of environmental conditions and pollution concentrations.

Model Training and Validation:

- Data Segmentation: Split the collected dataset into training and validation sets. Further, segment the data into low (clean air) and high (pollution events) concentration ranges.

- Model Application: Employ an AutoML platform to automatically select and train the optimal ML model (e.g., SVM, RF) for each concentration range.

- Performance Metrics: Validate the model by comparing the DUT's calibrated output against the reference instrument using metrics like R², RMSE, MAE, and bias.
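The segmentation and model-selection steps can be sketched as below. This is a toy stand-in for an AutoML platform, not the one used in the cited study. For each concentration range, it chooses between two candidate corrections (constant offset and linear) by validation MAE, then reports R², RMSE, MAE, and bias. The co-location data, the non-linear sensor response, and the split point are all invented for illustration.

```python
import math


def fit_offset(xs, ys):
    """Candidate 1: constant offset correction, y = x + c."""
    c = sum(y - x for x, y in zip(xs, ys)) / len(xs)
    return lambda x: x + c


def fit_linear(xs, ys):
    """Candidate 2: linear correction y = a + b*x by least squares."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    return lambda x: a + b * x


CANDIDATES = {"offset": fit_offset, "linear": fit_linear}


def select_and_fit(readings, refs):
    """Toy stand-in for AutoML: pick the candidate with the lowest validation MAE
    (even indices train, odd indices validate), then refit it on all data in the range."""
    tr, va = range(0, len(refs), 2), range(1, len(refs), 2)

    def val_mae(fit):
        model = fit([readings[i] for i in tr], [refs[i] for i in tr])
        return sum(abs(model(readings[i]) - refs[i]) for i in va) / len(va)

    name = min(CANDIDATES, key=lambda k: val_mae(CANDIDATES[k]))
    return name, CANDIDATES[name](readings, refs)


def metrics(y_true, y_pred):
    err = [p - t for t, p in zip(y_true, y_pred)]
    n, mean_t = len(err), sum(y_true) / len(y_true)
    ss_tot = sum((t - mean_t) ** 2 for t in y_true)
    return {"R2": 1 - sum(e * e for e in err) / ss_tot,
            "RMSE": math.sqrt(sum(e * e for e in err) / n),
            "MAE": sum(abs(e) for e in err) / n,
            "bias": sum(err) / n}


# Hypothetical co-location data: the low-cost sensor responds differently below and
# above ~35 ug/m3 of reference concentration (values invented for illustration).
refs = [float(r) for r in range(5, 100, 3)]
readings = [(1.3 * r + 1 if r < 35 else 0.9 * r + 15) + 0.5 * math.sin(r) for r in refs]
SPLIT = 46.5  # raw reading separating the low and high ranges (assumed)

models = {}
for range_name, keep in (("low", lambda x: x < SPLIT), ("high", lambda x: x >= SPLIT)):
    idx = [i for i, x in enumerate(readings) if keep(x)]
    models[range_name] = select_and_fit([readings[i] for i in idx], [refs[i] for i in idx])

calibrated = [models["low" if x < SPLIT else "high"][1](x) for x in readings]
print("chosen:", {k: v[0] for k, v in models.items()})
print("raw:       ", metrics(refs, readings))
print("calibrated:", metrics(refs, calibrated))
```

Routing by the raw reading rather than by the reference value reflects deployment conditions, where only the low-cost sensor's output is available to decide which range model applies.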

Protocol for Validating AI-Enhanced Biomedical Sensors

This general protocol is suited for clinical or biomedical applications, such as validating a new implantable or wearable biosensor [32].

Verification and Analytic Validation:

- Verification: Confirm the sensor's raw signal is physiologically plausible. This involves testing the sensor in controlled solutions with known analyte concentrations and benchmarking the output.

- Analytic Validation: Assess the performance of the algorithms used for noise filtering, artifact correction, and scoring of raw data. Determine the stability and accuracy of the resulting metrics (e.g., heart rate variability, glucose concentration) against a gold standard.
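For glucose sensors, one widely used way to express accuracy against a reference method during analytic validation is the mean absolute relative difference (MARD). The protocol above does not name this metric; the sketch adds it as an example. It also computes the fraction of readings within an illustrative ±15% tolerance, using made-up paired readings in mg/dL.

```python
def mard(sensor, reference):
    """Mean absolute relative difference, in percent."""
    return 100.0 * sum(abs(s - r) / r for s, r in zip(sensor, reference)) / len(reference)


def fraction_within(sensor, reference, tol=0.15):
    """Share of readings whose relative error is at most `tol` (illustrative tolerance)."""
    return sum(abs(s - r) <= tol * r for s, r in zip(sensor, reference)) / len(reference)


reference = [80.0, 100.0, 150.0, 200.0]  # gold-standard values (hypothetical, mg/dL)
sensor = [88.0, 95.0, 150.0, 240.0]      # AI-corrected sensor output (hypothetical)
print(f"MARD = {mard(sensor, reference):.2f}%")                   # → MARD = 8.75%
print(f"within ±15%: {fraction_within(sensor, reference):.0%}")  # → within ±15%: 75%
```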

Clinical Validation:

- Design a study to evaluate whether the sensor's AI-corrected output accurately predicts or correlates with a clinically relevant outcome or state.

- For example, in a study on exposure therapy, biosensor data (e.g., heart rate, electrodermal activity) should correlate with the patient's subjective units of distress and observed habituation during therapeutic sessions [32].

Contextual Testing:

- Test the biosensor in the intended environment (lab, clinic, naturalistic setting) to evaluate factors like battery life, ease of use, and data storage/transmission, which are critical for real-world reliability and regulatory approval [32].

The Scientist's Toolkit: Key Research Reagent Solutions

The development and validation of self-correcting biosensors rely on a suite of specialized materials and technologies. The following table details key components and their functions in advanced biosensor systems.

Table: Essential Research Reagents and Materials for Intelligent Biosensor Development

| Reagent / Material | Function in Biosensor Development | Example Application |
|---|---|---|
| Covalent Organic Frameworks (COFs) [33] | Porous, tunable materials that enhance reticular electrochemiluminescence and sensing performance. | Signal amplification in electrochemical biosensors. |
| Aptamers [34] | Single-stranded DNA or RNA molecules acting as synthetic biorecognition elements; offer high stability and specificity. | Target capture in implantable biosensors for continuous biomarker monitoring (e.g., in IBD) [34]. |
| Triboelectric Nanogenerators (TENGs) [35] | Self-powering technology that harvests ambient energy to create battery-free devices. | Powering all-in-one, self-powered wearable biosensor systems [35]. |
| Streptavidin-Functionalized Nanoparticles [33] | Provide a high-density signal amplification platform and enable specific binding to biotinylated proteins. | Labels in time-resolved luminescent immunoassays [33]. |
| Universal Stress Protein (UspA) Promoter [33] | A biological element in whole-cell biosensors that gets activated in response to specific stressors. | Engineered bacterial systems for detecting cobalt contamination in food [33]. |
| Nanostructured Electrodes [29] | Electrodes engineered at the nanoscale to increase surface area, improving sensitivity and detection limits. | Key component in high-performance electrochemical biosensors. |

Visualizing Workflows and System Architectures

The following diagrams illustrate the core concepts, workflows, and system architectures discussed in this guide, providing a visual reference for the development of intelligent biosensors.

ML-Driven Drift Correction Workflow

ML Biosensor Correction Workflow

Biosensor Data Processing with AI

AI Data Processing Pipeline

The Self-Correcting Biosensor Feedback Loop

Self Correction Feedback Loop

AI in Action: A Technical Deep Dive into ML Algorithms for Drift Compensation

Ensemble machine learning methods are increasingly used for data correction in biosensing. By combining multiple models to improve stability and accuracy, these techniques directly address critical barriers, such as signal drift and false responses, that limit biosensor reliability [36]. This guide provides a performance-focused comparison of two leading ensemble algorithms, Random Forest (RF) and eXtreme Gradient Boosting (XGBoost), for error correction and drift compensation in biosensor applications, drawing on recent experimental studies.

Performance Comparison at a Glance

The following table summarizes the quantitative performance of Random Forest and XGBoost against other common machine learning algorithms as reported in recent scientific literature for sensor data correction tasks.

Table 1: Comparative Performance of Machine Learning Algorithms in Sensor Data Correction

| Application Context | Key Performance Metrics | Random Forest (RF) Performance | XGBoost Performance | Other Algorithms (for context) |
|---|---|---|---|---|
| Machine Failure Prediction [37] | Classification Accuracy, F1-Score | Accuracy: 99.5%, Excellent balance between recall and precision [37] | Evaluated, but RF was top performer [37] | SVM, KNN, Logistic Regression, Naive Bayes |
| COVID-19 Mortality Forecasting [38] | R², MAE, RMSE | R²: 0.983, MAE: 0.61, RMSE: 2.79 [38] | Very close performance to RF [38] | Decision Tree, K-Nearest Neighbors (KNN) |
| Low-Cost Air Quality Sensor Calibration [39] | R², RMSE, MAE | Evaluated, but Gradient Boosting and kNN were top performers [39] | Evaluated for PM sensors; Random Forest and XGBoost were top performers [39] | Gradient Boosting, kNN, Decision Tree, SVM |
| Electrochemical Biosensor Optimization [6] | RMSE, MAE, R² | Among the best-performing models in systematic evaluation [6] | Part of a novel stacked ensemble that showed high performance [6] | GPR, ANN, Stacked Ensembles |

Detailed Experimental Protocols

To ensure reproducibility and provide a clear understanding of the experimental groundwork behind these comparisons, here are the detailed methodologies from two key studies.

Table 2: Key Experimental Protocols from Cited Research

| Protocol Element | Theory-Guided Biosensor Error Correction [36] | Systematic Regression Framework for Biosensors [6] |
|---|---|---|
| Primary Objective | Reduce false results and time delay in cantilever biosensors for microRNA detection. [36] | Predict electrochemical current response from biosensor fabrication parameters to reduce experimental burden. [6] |
| Data Preprocessing | Normalized the dynamic biosensor signal change. Used data augmentation (jittering, scaling, warping) to address data sparsity and class imbalance. [36] | Enzymatic glucose biosensor data. Features included enzyme amount, crosslinker amount, and pH. [6] |
| Feature Engineering | Theory-guided features: 14 features from biosensor binding theory (e.g., rate of change during the initial transient). Traditional features: 511 features via TSFRESH. [36] | Not explicitly detailed, but feature importance and SHAP analysis were used for model interpretability. [6] |
| Model Training & Evaluation | Classification of target concentration bins. Stratified 5-fold cross-validation. Performance assessed via F1 score, precision, and recall. [36] | 10-fold cross-validation across 26 regression models. Evaluated with RMSE, MAE, MSE, and R². [6] |
| Key Outcome | Theory-guided features improved model performance and efficiency, enabling accurate quantification from the initial transient response alone and reducing data acquisition time. [36] | A stacked ensemble (GPR, XGBoost, ANN) demonstrated high predictive accuracy. Models provided actionable design insights (e.g., enzyme loading thresholds). [6] |
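The augmentation and stratified cross-validation steps listed for [36] can be sketched in NumPy. This is a minimal illustration under stated assumptions, not the study's code: the functions `jitter`, `scale`, `time_warp`, and `stratified_folds`, their default parameters, and the toy signals are all hypothetical, and the random piecewise-linear warp is only one simple variant of time warping. Augmented copies are generated inside each training fold, so no synthetic sample derived from a test signal leaks into training.

```python
import numpy as np

rng = np.random.default_rng(0)

def jitter(x, sigma=0.01):
    """Jittering: add small Gaussian noise to every sample."""
    return x + rng.normal(0.0, sigma, size=x.shape)

def scale(x, sigma=0.1):
    """Scaling: multiply the whole signal by one random gain near 1."""
    return x * rng.normal(1.0, sigma)

def time_warp(x, strength=0.2):
    """Warping: resample x along a random, strictly increasing time axis."""
    n = len(x)
    steps = 1.0 + strength * rng.uniform(-1.0, 1.0, size=n - 1)  # all steps > 0
    warped = np.concatenate([[0.0], np.cumsum(steps)])
    warped *= (n - 1) / warped[-1]  # keep the first and last time points fixed
    return np.interp(warped, np.arange(n), x)

def stratified_folds(labels, k=5):
    """Give each sample a fold index so every class is spread evenly over k folds."""
    labels = np.asarray(labels)
    folds = np.empty(len(labels), dtype=int)
    for cls in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == cls))
        folds[idx] = np.arange(len(idx)) % k
    return folds

# Hypothetical toy data: 20 random-walk transients, imbalanced across 2 concentration bins
signals = rng.normal(size=(20, 100)).cumsum(axis=1)
labels = np.repeat([0, 1], [14, 6])
folds = stratified_folds(labels, k=5)

for f in range(5):
    train = folds != f
    X_tr, y_tr = signals[train], labels[train]
    # Oversample the minority bin with augmented copies of training signals only
    extra = np.array([time_warp(scale(jitter(s))) for s in X_tr[y_tr == 1]])
    extra = extra.reshape(-1, X_tr.shape[1])
    X_tr = np.vstack([X_tr, extra])
    y_tr = np.concatenate([y_tr, np.ones(len(extra), dtype=int)])
    # ...fit a classifier on (X_tr, y_tr) and score F1 on signals[folds == f]
```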

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table catalogues key materials and computational tools essential for conducting experiments in machine learning-based biosensor error correction.

Table 3: Essential Research Reagents and Computational Tools

| Item Name | Function/Application | Relevant Context |
|---|---|---|
| Cantilever Biosensor | A piezoelectric transducer that measures the resonant frequency shift upon target analyte (e.g., microRNA) binding. [36] | Used for dynamic response data acquisition in time-series classification tasks. [36] |
| Enzymatic Glucose Biosensor | An electrochemical sensor with a biological recognition element (enzyme) for detecting glucose. [6] | Serves as a source of experimental data for predicting signal intensity from fabrication parameters. [6] |
| Low-Cost Air Quality Sensors (LCS) | Affordable sensors for pollutants (e.g., PM2.5, CO2) used in IoT-based monitoring systems. [39] | Require ML calibration to correct inaccuracies caused by sensitivity to environmental factors such as temperature and humidity. [39] |
| TSFRESH (Python Package) | A tool for automated generation of a large number of time-series features. [36] | Used for "traditional feature engineering" to provide a baseline for comparison with theory-guided features. [36] |
| SHAP (SHapley Additive exPlanations) | A game-theoretic method to explain the output of any machine learning model. [37] [6] | Used for model interpretability, identifying influential features, and supporting transparent decision-making. [37] [6] |
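SHAP attributes a prediction to input features using Shapley values from cooperative game theory. For a model with only a handful of features, these values can be computed exactly by enumerating feature subsets, which makes the idea concrete. The sketch below is illustrative and is not the SHAP library's API: `shapley_values` and the toy function `toy_response` (with enzyme amount, crosslinker amount, and pH as inputs, echoing [6]) are hypothetical, and "absent" features are replaced by a single baseline value, which is only one of several possible conventions.

```python
import itertools
import math
import numpy as np

def shapley_values(f, x, baseline):
    """Exact Shapley values of f at x; features outside a coalition take baseline values."""
    x = np.asarray(x, dtype=float)
    baseline = np.asarray(baseline, dtype=float)
    n = len(x)
    phi = np.zeros(n)
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size that exclude feature i
            weight = math.factorial(size) * math.factorial(n - size - 1) / math.factorial(n)
            for subset in itertools.combinations(others, size):
                z = baseline.copy()
                idx = np.array(subset, dtype=int)
                z[idx] = x[idx]
                without_i = f(z)
                z[i] = x[i]
                phi[i] += weight * (f(z) - without_i)  # marginal contribution of feature i
    return phi

def toy_response(v):
    """Hypothetical current response from (enzyme, crosslinker, pH)."""
    enzyme, crosslinker, ph = v
    return 2.0 * enzyme + 0.5 * crosslinker * enzyme - 0.3 * (ph - 7.0) ** 2

x = np.array([1.5, 0.8, 6.5])
baseline = np.array([1.0, 1.0, 7.0])
phi = shapley_values(toy_response, x, baseline)
print(phi)
```

The attributions sum to f(x) minus f(baseline) (the efficiency property). Exact enumeration needs O(2^n) model evaluations, which is why SHAP relies on approximations or model-specific algorithms, such as TreeSHAP for tree ensembles, at realistic feature counts.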

Workflow Visualization

The diagram below illustrates the core comparative workflow for implementing Random Forest and XGBoost for biosensor error correction, from data preparation to model deployment.

ML Correction Workflow

Key Insights for Practitioners

Random Forest performs strongly in classification tasks such as fault prediction and concentration binning [37] [38], owing to its relative robustness against overfitting and its reported ability to handle imbalanced data. Because its trees are built independently, training parallelizes easily, and the method is relatively straightforward to implement.

XGBoost often matches or closely approaches Random Forest's performance, particularly on structured (tabular) data [38]. Its key strengths are sequential error correction, in which each new tree is fit to the gradients of the loss (the residuals, for squared error) of the current ensemble, and built-in regularization; together these can help it generalize well. It is also a common component of high-performing stacked ensembles [6].

Algorithm selection depends on the primary goal. For interpretable, robust classification, Random Forest is an excellent choice. When the aim is to maximize predictive accuracy on regression or ranking tasks, or when computational efficiency is a priority, XGBoost is a strong contender. The most advanced approaches may stack both into a hybrid ensemble [6].

Beyond Algorithm Choice: Success heavily relies on domain-informed feature engineering. Integrating biosensor theory to create features (e.g., initial binding rate) can significantly boost performance and reduce data needs compared to purely data-driven feature extraction [36]. Furthermore, tools like SHAP are critical for explaining model decisions, building trust, and providing actionable insights for biosensor redesign [37] [6].
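As an illustration of a theory-guided feature, consider a 1:1 (Langmuir) binding model, where the response is R(t) = R_max * C / (C + K_D) * (1 - exp(-(k_a C + k_d) t)) with K_D = k_d / k_a. Its slope at t = 0 equals R_max * k_a * C, so the initial binding rate scales with concentration and can be estimated from the first seconds of data. The sketch below assumes this model; the functions `langmuir_response` and `initial_transient_features`, the 60 s window, and the kinetic constants are hypothetical and are not the 14 features used in [36].

```python
import numpy as np

def langmuir_response(t, conc, r_max=100.0, ka=1e5, kd=1e-3):
    """Hypothetical 1:1 binding response; ka in 1/(M*s), kd in 1/s, conc in M."""
    kobs = ka * conc + kd
    r_eq = r_max * conc / (conc + kd / ka)
    return r_eq * (1.0 - np.exp(-kobs * t))

def initial_transient_features(t, signal, window_s=60.0):
    """Theory-guided features computed from the initial transient only."""
    early = t <= t[0] + window_s
    rate = np.polyfit(t[early], signal[early], 1)[0]       # initial binding rate
    curvature = np.polyfit(t[early], signal[early], 2)[0]  # onset of saturation (negative)
    return {
        "initial_rate": rate,
        "early_amplitude": signal[early][-1] - signal[early][0],
        "early_curvature": curvature,
    }

t = np.arange(0.0, 600.0, 1.0)  # 10 min at 1 Hz, though only the first 60 s are used
for conc in (1e-9, 5e-9, 1e-8):
    feats = initial_transient_features(t, langmuir_response(t, conc))
    print(f"{conc:.0e} M -> initial rate {feats['initial_rate']:.4f} per s")
```

Because the feature uses only the first minute of the curve, it mirrors the idea that quantification from the initial transient can shorten data acquisition.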

Sequential drift, the gradual and often unpredictable change in sensor signal response over time, presents a fundamental challenge to the reliability and long-term stability of biosensing systems. In sensitive applications from medical diagnostics to environmental monitoring, this drift can compromise data integrity, leading to inaccurate readings and potentially severe real-world consequences. Traditional compensation methods, including manual recalibration and linear algorithmic corrections, often prove inadequate for the complex, nonlinear nature of drift observed in real-world conditions. Consequently, advanced temporal modeling techniques have emerged as a critical solution. Among these, Long Short-Term Memory (LSTM) networks, a specialized form of recurrent neural network (RNN), have demonstrated a remarkable capacity for learning complex temporal dependencies and forecasting sequential patterns. This guide provides a performance-focused comparison of LSTM-based drift compensation methods against other leading machine learning and statistical approaches, offering researchers a data-driven foundation for model selection.

Core Technologies in Drift Compensation

LSTM Networks: Architecture and Strengths

LSTM networks are explicitly designed to overcome the limitations of traditional RNNs in capturing long-range temporal dependencies. Their core innovation lies in a gated memory cell architecture, which regulates the flow of information through three specialized gates:

- Forget Gate: Determines what information from the previous cell state should be discarded.

- Input Gate: Controls the extent to which new information should be stored in the cell state.

- Output Gate: Governs what information from the current cell state is output to the hidden state.

This gating mechanism allows an LSTM to retain information over long sequences, making it well suited to modeling the slow, cumulative process of sensor drift. The model can learn to separate the underlying drift component from the true signal and other noise, enabling accurate compensation [40] [41]. Its primary strength is modeling complex, nonlinear drift dynamics without pre-specified assumptions about the drift's functional form [42].
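The three gates can be written out as a single time step of an LSTM cell. This NumPy sketch follows the standard formulation with a candidate cell update `g`; the function name `lstm_step`, the stacked weight layout, and the gate order (forget, input, output, candidate) are choices made here for illustration, and deep learning frameworks use their own parameter layouts.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h_prev, c_prev, W, U, b):
    """One LSTM step. W: (4H, D), U: (4H, H), b: (4H,), rows stacked as f, i, o, g."""
    H = h_prev.shape[0]
    z = W @ x_t + U @ h_prev + b
    f = sigmoid(z[0:H])            # forget gate: fraction of c_prev to keep
    i = sigmoid(z[H:2 * H])        # input gate: fraction of new content to write
    o = sigmoid(z[2 * H:3 * H])    # output gate: fraction of the cell exposed as h_t
    g = np.tanh(z[3 * H:4 * H])    # candidate content for the cell state
    c_t = f * c_prev + i * g       # additive update lets information persist over long spans
    h_t = o * np.tanh(c_t)
    return h_t, c_t

# Usage: run a randomly initialized cell over a toy drifting sensor trace
rng = np.random.default_rng(0)
D, H = 1, 8
W = rng.normal(0, 0.3, (4 * H, D))
U = rng.normal(0, 0.3, (4 * H, H))
b = np.zeros(4 * H)
trace = 0.001 * np.arange(500) + 0.05 * rng.normal(size=500)  # slow linear drift + noise
h, c = np.zeros(H), np.zeros(H)
for value in trace:
    h, c = lstm_step(np.array([value]), h, c, W, U, b)
```

With a large forget-gate bias and a strongly negative input-gate bias, the cell copies its state forward almost unchanged; this is the mechanism that lets an LSTM carry slowly accumulating information, such as a drifting baseline, across many steps.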

Alternative Modeling Approaches

Several other modeling paradigms are commonly applied to the drift compensation problem, each with distinct operational principles.

- Classical Time-Series Models (e.g., ARIMA, SARIMA): These statistical models are effective for data with clear linear trends and strong seasonality. However, they struggle with the nonlinearities and complex dependencies inherent in many biosensor drift scenarios, often resulting in inferior performance compared to deep learning models [43].

- Temporal Convolutional Networks (TCNs): TCNs use causal, dilated convolutions to process sequential data. They offer advantages in computational efficiency and parallelization, making them strong candidates for resource-constrained, real-time applications like embedded drift compensation on microcontrollers [44].

- Hybrid LSTM-Ensemble Models: These approaches combine the predictive power of LSTM with the robustness of other classifiers. A prominent example integrates an LSTM with a Support Vector Machine (SVM) within a multi-class ensemble learning framework. The LSTM learns temporal, drift-invariant features, which are then classified by the SVM, enhancing overall robustness [41].
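The causal, dilated convolution behind TCNs is compact enough to write directly. In this minimal NumPy sketch, output y[t] depends only on x[t], x[t - d], x[t - 2d], and so on, and stacking layers with dilations 1, 2, 4, 8 grows the receptive field exponentially with depth. The function names, the ReLU between layers, and the omission of residual connections and learned weights are simplifying assumptions, not the architecture used in [44].

```python
import numpy as np

def causal_dilated_conv1d(x, kernel, dilation=1):
    """y[t] = sum_k kernel[k] * x[t - k*dilation], treating samples before t=0 as zero."""
    x = np.asarray(x, dtype=float)
    pad = (len(kernel) - 1) * dilation
    xp = np.concatenate([np.zeros(pad), x])  # left padding only, so no future samples leak in
    y = np.zeros(len(x))
    for k, w in enumerate(kernel):
        start = pad - k * dilation           # xp[start + t] == x[t - k*dilation]
        y += w * xp[start:start + len(x)]
    return y

def tcn_stack(x, kernels, dilations):
    """Apply causal dilated convolutions in sequence with ReLU between layers."""
    for kernel, d in zip(kernels, dilations):
        x = np.maximum(causal_dilated_conv1d(x, kernel, d), 0.0)
    return x

def receptive_field(kernel_size, dilations):
    """Number of past samples (including the current one) that can influence y[t]."""
    return 1 + (kernel_size - 1) * sum(dilations)

print(causal_dilated_conv1d([1, 0, 0, 0, 0], [1, 2, 3], dilation=2))  # [1. 0. 2. 0. 3.]
print(receptive_field(2, [1, 2, 4, 8]))  # 16
```

Each output sample is computed from a fixed-size window of past inputs with no recurrence, which is why TCN layers parallelize well on hardware that cannot afford a sequential recurrent loop.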

Table 1: Comparison of Core Drift Compensation Modeling Approaches

| Model Type | Key Mechanism | Strengths | Weaknesses |
|---|---|---|---|
| LSTM [40] [41] | Gated memory cell & internal state | Excels at capturing long-term, nonlinear dependencies; models complex drift dynamics. | Can be computationally intensive; requires careful hyperparameter tuning. |
| TCN [44] | Causal, dilated 1D convolutions | Stable gradients, faster training, efficient for real-time/embedded use. | May require more layers to capture very long-range dependencies. |
| SARIMA [43] | Autoregression & moving averages with seasonal components | Highly interpretable; good for data with strong, linear seasonal patterns. | Poor performance on nonlinear data; assumes stationary data after differencing. |
| LSTM-SVM Ensemble [41] | LSTM for feature extraction, SVM for classification | Improved classification accuracy under drift; combines temporal and discriminative learning. | Increased model complexity; requires integration of two different model types. |

Experimental Performance Comparison

Empirical studies across various domains provide quantitative evidence of the performance of these models in temporal forecasting and drift compensation tasks.

Forecasting Accuracy

In a comparative study of renewable energy forecasting for Dhaka city, the LSTM model substantially outperformed classical time-series models, achieving an R² of 0.9860 compared with -0.0008 for ARIMA and -0.1104 for SARIMA. Because a negative R² means a model predicts worse than simply using the mean of the observed data, this result underscores LSTM's ability to learn complex temporal patterns where linear models fail [43]. A Monte Carlo simulation study comparing nine neural network architectures further supported the robustness of LSTM and its hybrids (LSTM-RNN, LSTM-GRU), which performed consistently at the top across diverse time-series datasets, including sunspot activity and dissolved oxygen concentrations [45].
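Negative R² values such as those reported for ARIMA and SARIMA can look surprising, so a small worked example helps. R² = 1 - SS_res / SS_tot compares a model's squared error with that of a constant forecast equal to the mean of the observations; the toy series below is hypothetical.

```python
import numpy as np

def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)          # squared error of the model
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)   # squared error of the mean forecast
    return 1.0 - ss_res / ss_tot

y = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
print(r2_score(y, y))                     # 1.0  (perfect forecast)
print(r2_score(y, np.full(5, y.mean())))  # 0.0  (always predicts the mean)
print(r2_score(y, y[::-1]))               # -3.0 (worse than predicting the mean)
```

R² has no lower bound: any forecast whose squared error exceeds that of the constant mean forecast scores below zero.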

Drift Compensation and Anomaly Correction

Specialized LSTM variants have been developed to directly address data quality issues. The Corrector LSTM (cLSTM) introduces a "Read & Write" paradigm that dynamically adjusts training data during the learning process. It forecasts cell states and refines input data based on discrepancies between actual and predicted states. This architecture has demonstrated superior forecasting accuracy and anomaly detection capabilities on standard benchmarks like the Numenta Anomaly Benchmark (NAB) and the M4 competition dataset when compared to standard, "read-only" LSTM models [46].

For gas sensor drift compensation, a lightweight Temporal CNN (TCNN) enhanced with a Hadamard spectral transform achieved a mean absolute error below 1 mV (equivalent to <1 ppm) on long-term recordings. While not an LSTM, this TCNN approach highlights the effectiveness of advanced temporal models and the potential for deployment on low-power, embedded systems (TinyML) after model quantization [44].
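The Hadamard transform mentioned here projects a signal frame onto orthogonal ±1 square-wave basis functions and needs only additions and subtractions, which suits low-power hardware. The sketch below is a generic fast Walsh-Hadamard transform in natural (Sylvester) order, offered only to illustrate the transform; the function name `fwht`, the 64-sample frame length, and the use of the coefficients as features are assumptions, not details of the pipeline in [44].

```python
import numpy as np

def fwht(x):
    """Unnormalized fast Walsh-Hadamard transform (Sylvester order); len(x) must be a power of 2."""
    a = np.array(x, dtype=float)
    n = len(a)
    if n & (n - 1):
        raise ValueError("length must be a power of two")
    h = 1
    while h < n:
        # Butterfly: combine pairs of blocks of size h into sums and differences
        for start in range(0, n, 2 * h):
            left = a[start:start + h].copy()
            right = a[start + h:start + 2 * h].copy()
            a[start:start + h] = left + right
            a[start + h:start + 2 * h] = left - right
        h *= 2
    return a

# Usage: orthonormal Hadamard coefficients of consecutive 64-sample frames
rng = np.random.default_rng(0)
signal = np.cumsum(rng.normal(size=512))  # hypothetical drifting sensor trace
frames = signal.reshape(-1, 64)
coeffs = np.array([fwht(frame) / np.sqrt(64) for frame in frames])  # shape (8, 64)
```

Dividing by the square root of the frame length makes the transform orthonormal, so each frame's energy is preserved, and the transform runs in O(n log n) operations without multiplications.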

Table 2: Summary of Quantitative Performance Metrics from Experimental Studies

| Study & Application | Model(s) | Key Performance Metric(s) | Result |
|---|---|---|---|
| Renewable Energy Forecasting [43] | LSTM | R² Score | 0.9860 |
| Renewable Energy Forecasting [43] | ARIMA | R² Score | -0.0008 |
| Renewable Energy Forecasting [43] | SARIMA | R² Score | -0.1104 |
| Gas Sensor Drift Compensation [44] | Spectral-Temporal TCNN | Mean Absolute Error | < 1 mV (< 1 ppm) |
| Monte Carlo NN Benchmark [45] | LSTM, LSTM-RNN, LSTM-GRU | Consistent ranking | Top-tier performance across multiple datasets |
| Remaining Useful Life Prediction [42] | LSTM-Wiener Process | Prognostic performance | Superior accuracy and uncertainty quantification for mechanical systems |

Experimental Protocols and Methodologies

To ensure reproducibility, this section outlines the standard workflow and key methodologies cited in the performance comparisons.

Standard LSTM Workflow for Drift Compensation

The typical pipeline for developing an LSTM-based drift compensation model involves several critical stages, from data preparation to deployment.

LSTM Drift Compensation Workflow

- Raw Sensor Data Acquisition: Collect time-series data from the biosensor system over a sufficiently long period to capture drift behavior. This often requires exposure to varying environmental conditions (e.g., humidity, temperature) to model their impact [44] [42].

- Data Preprocessing: This stage involves normalizing the sensor signals to a consistent scale, handling missing values, and potentially performing initial noise filtering [42].

- Feature Engineering/Extraction: For complex data, relevant features may be extracted from the raw signal. In some frameworks, Principal Component Analysis (PCA) or Kernel PCA (KPCA) is used to reduce the dimensionality of the feature space, isolating the most informative components related to drift and analyte concentration [42].

- LSTM Model Training: The processed sequential data is used to train the LSTM network. The model learns to predict the subsequent values in the sensor's signal. Hyperparameter tuning, potentially using methods like Bayesian Optimization, is conducted to optimize learning rates, number of layers, and units [42].

- Drift Prediction & Compensation: The trained LSTM model forecasts the future trajectory of the sensor signal, including the learned drift component. This predicted drift is then subtracted from the actual sensor reading to yield a corrected, drift-compensated signal [44] [46].

- Model Validation & Deployment: The model's performance is rigorously evaluated on a held-out test set not seen during training. Metrics like Mean Absolute Error (MAE) and Root Mean Square Error (RMSE) are calculated. For resource-constrained settings, the model may be quantized to reduce its size and power consumption before deployment [44].
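The workflow above can be sketched end to end with NumPy. To keep the example short and dependency-free, a linear autoregressive predictor fit by least squares stands in for the LSTM; in practice it would be replaced by a trained LSTM. The synthetic blank-channel recording (the true analyte signal is zero, so the slow trend is pure drift) and all names and parameters (`make_windows`, a window of 20 samples, a 1500/500 chronological split) are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)

# 1. Acquisition (simulated): blank channel, so the slowly varying part of the reading is drift
t = np.arange(2000)
drift = 0.8 * (1.0 - np.exp(-t / 600.0)) + 0.1 * np.sin(2 * np.pi * t / 500.0)
reading = drift + rng.normal(0.0, 0.02, size=t.size)

# 2. Preprocessing: chronological split, z-score using training statistics only
split = 1500
mu, sd = reading[:split].mean(), reading[:split].std()
z = (reading - mu) / sd

# 3. Windowing: the past w samples are the features, the next sample is the target
def make_windows(series, w):
    X = np.lib.stride_tricks.sliding_window_view(series[:-1], w)
    return X, series[w:]

w = 20
X, y = make_windows(z, w)
target_idx = np.arange(len(y)) + w          # time index of each target sample
train = target_idx < split

# 4. Training: least-squares linear predictor (stand-in for the LSTM)
A = np.column_stack([X, np.ones(len(X))])
coef, *_ = np.linalg.lstsq(A[train], y[train], rcond=None)

# 5. Drift prediction and compensation on the held-out segment, in original units
pred_drift = (A[~train] @ coef) * sd + mu
observed = reading[target_idx[~train]]
corrected = observed - pred_drift

# 6. Validation: error against the known true signal (zero) before and after correction
def mae(e):
    return np.mean(np.abs(e))

def rmse(e):
    return np.sqrt(np.mean(e ** 2))

raw_mae, corr_mae = mae(observed), mae(corrected)
print(f"MAE raw {raw_mae:.3f}, corrected {corr_mae:.3f}; RMSE corrected {rmse(corrected):.3f}")
```

The split is chronological rather than shuffled, and normalization statistics come from the training segment only, so no information from the held-out period reaches the model.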

Protocol for LSTM-Ensemble Models

The methodology for the LSTM-SVM multi-class ensemble model, as described for gas recognition under drift, combines the two models in sequence [41]:

- Feature Learning with LSTM: The LSTM network is trained on the sequential sensor data. Its hidden states at each time step serve as high-level, temporal feature representations of the input.