Machine Learning for Electrochemical Biosensor Drift Compensation: Algorithm Comparison and Implementation Strategies

Abstract

This article provides a comprehensive comparison of drift compensation algorithms for electrochemical biosensors, addressing a critical challenge that limits their reliability in research and clinical applications. We explore the fundamental causes of sensor drift, including signal instability, calibration drift, and low reproducibility in large-scale fabrication. The review systematically categorizes and evaluates offline and online compensation methodologies, from traditional domain adaptation to advanced active learning frameworks. Practical troubleshooting guidance is offered for optimizing algorithm performance under real-world constraints like limited labeling budgets. Finally, we present a rigorous validation framework using standardized metrics and benchmark datasets, empowering researchers and drug development professionals to select and implement optimal drift compensation strategies for enhanced sensor accuracy and longevity.

Understanding Biosensor Drift: Fundamental Challenges and Impact on Biomedical Applications

Sensor drift is a critical phenomenon in electrochemical systems: a gradual, often unidirectional change in a sensor's output signal over time despite constant input conditions. This instability compromises measurement accuracy and reliability, presenting a major obstacle for applications requiring long-term stability, from continuous health monitoring to environmental sensing [1] [2]. Drift manifests through two primary mechanisms: signal instability, characterized by random fluctuations or progressive deviation of the baseline signal, and calibration shift, in which the relationship between analyte concentration and sensor output changes [2] [3]. These changes can result from sensor aging, fluctuating environmental parameters (e.g., temperature, humidity), biofouling, and electrolyte degradation [2] [3]. Understanding and compensating for these mechanisms is essential for reliable electrochemical biosensors, particularly for point-of-care diagnostics and field-deployed sensors where frequent manual recalibration is impractical [1] [4].

Experimental Protocols for Studying Sensor Drift

Protocol for Long-Term Drift Assessment in Environmental Sensors

A comprehensive approach for evaluating long-term drift in electrochemical sensors was demonstrated through a six-month field study monitoring nitrogen dioxide (NO₂) [2]. The methodology provides a robust template for assessing sensor stability under real-world conditions.

Sensor Setup and Data Collection: The experiment used NO₂-B41F electrochemical sensors (Alphasense LTD) installed in a monitoring station beside a highway. A key feature was the dynamic air-sampling mode: a pump and mass flow controller maintained a constant airflow of 500 mL/min, removing wind-speed variation as a confounding variable. The working electrode (WE) and auxiliary electrode (AE) voltages were recorded at 200 Hz, averaged over 10-second intervals, and then aggregated into 15-minute averages to align with reference data from a high-precision chemiluminescence analyzer [2].
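The two-stage averaging described above (200 Hz to 10 s means to 15 min means) can be sketched with non-overlapping block means. This is a minimal illustration assuming uniformly sampled, gap-free data; the helper names (`block_mean`, `aggregate`) and the synthetic voltage trace are hypothetical, and how the study handled missing samples or clock alignment with the reference analyzer is not described here.

```python
import numpy as np

FS_HZ = 200            # raw sampling rate reported for the field study
STAGE1_S = 10          # first averaging window, seconds
STAGE2_S = 15 * 60     # second averaging window, seconds

def block_mean(x: np.ndarray, block: int) -> np.ndarray:
    """Average consecutive non-overlapping blocks of `block` samples; an incomplete tail is dropped."""
    n_full = len(x) // block
    return x[: n_full * block].reshape(n_full, block).mean(axis=1)

def aggregate(raw: np.ndarray) -> np.ndarray:
    """200 Hz samples -> 10 s means -> 15 min means."""
    ten_s = block_mean(raw, FS_HZ * STAGE1_S)          # 2000 raw samples per 10 s mean
    return block_mean(ten_s, STAGE2_S // STAGE1_S)     # 90 ten-second means per 15 min mean

# One hour of synthetic WE voltage (hypothetical values): slow ramp plus noise.
rng = np.random.default_rng(0)
t = np.arange(3600 * FS_HZ) / FS_HZ
we_raw = 0.250 + 1e-6 * t + rng.normal(0, 0.002, t.size)
we_15min = aggregate(we_raw)
print(we_15min.shape)  # (4,)
```

Because every stage averages equal-sized blocks, each 15-minute value equals the plain mean of its 180,000 raw samples; the intermediate 10 s stage mainly reduces storage and keeps a finer-grained record available for inspection.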

Environmental Factor Monitoring: Temperature and relative humidity data were continuously collected alongside sensor readings to quantify their influence on the signal response. The monitoring station maintained a controlled internal temperature of 22°C to mitigate extreme external temperature variations, though the influence was not entirely eliminated [2].

Baseline and Sensitivity Tracking: The fundamental relationship for calculating concentration was expressed as \( \mathrm{NO_2} = WE \times a - AE \times b + c \), where the coefficients \( a \), \( b \), and \( c \) are determined via multiple linear regression. Long-term drift was observed as a gradual change in these coefficients over the multi-month deployment, specifically in the baseline (related to coefficient \( c \)) and the sensitivity (related to coefficients \( a \) and \( b \)) [2].
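Estimating \( a \), \( b \), and \( c \) is an ordinary least-squares problem once WE/AE readings are paired with reference concentrations. The sketch below, assuming NumPy and entirely synthetic data, refits the equation on an early and a late window; a shift in the fitted coefficients between windows is the kind of signature described above as sensitivity and baseline drift. The function names are illustrative, not taken from [2].

```python
import numpy as np

def fit_no2_model(we, ae, ref_no2):
    """Least-squares estimate of (a, b, c) in NO2 = WE*a - AE*b + c,
    using co-located reference-analyzer concentrations as the target."""
    X = np.column_stack([we, -ae, np.ones_like(we)])   # minus sign keeps b positive as written
    (a, b, c), *_ = np.linalg.lstsq(X, ref_no2, rcond=None)
    return a, b, c

def predict_no2(we, ae, a, b, c):
    return we * a - ae * b + c

# Synthetic 15-minute averages (hypothetical units and coefficients).
rng = np.random.default_rng(1)
n = 2000
we = rng.uniform(200, 400, n)
ae = rng.uniform(180, 260, n)
early_ref = we * 0.50 - ae * 0.40 + 10.0 + rng.normal(0, 1.0, n)
late_ref = we * 0.45 - ae * 0.40 + 25.0 + rng.normal(0, 1.0, n)  # changed sensitivity and baseline

for label, ref in [("early window", early_ref), ("late window", late_ref)]:
    a, b, c = fit_no2_model(we, ae, ref)
    print(f"{label}: a={a:.3f}, b={b:.3f}, c={c:.1f}")
```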

Protocol for Drift Mitigation in BioFETs

Research on Carbon Nanotube (CNT)-based BioFETs (Field-Effect Transistors) outlines a rigorous methodology to mitigate signal drift for ultrasensitive biomarker detection [1].

Polymer Brush Interface: A polyethylene glycol-like polymer brush (POEGMA) was grafted above the CNT channel. This interface serves a dual purpose: it increases the sensing distance (Debye length) in high ionic strength solutions (like 1X PBS) to overcome charge screening, and it provides a non-fouling surface for antibody immobilization [1].

Stable Electrical Testing Configuration: Drift was minimized by employing a stable palladium (Pd) pseudo-reference electrode, eliminating the need for a bulky Ag/AgCl reference electrode. This contributes to a more compact, point-of-care compatible form factor [1].

Infrequent DC Sweep Methodology: Rather than relying on static measurements or AC techniques, which are more susceptible to drift, the protocol used infrequent DC current-voltage sweeps. This reduces the exposure time and the cumulative effect of ion diffusion into the sensing region, a primary cause of signal drift in solution-gated transistors [1].

Comparison of Drift Compensation Algorithms

The table below summarizes the core principles, advantages, and limitations of different algorithmic approaches for sensor drift compensation.

Table 1: Comparison of Sensor Drift Compensation Algorithms

| Algorithm/Strategy | Fundamental Principle | Key Methodological Features | Advantages | Limitations |
|---|---|---|---|---|
| Piecewise Direct Standardization (PDS) | Transfers a calibration model from a primary condition to a secondary condition by mapping response variables between instruments or time periods [5] [6]. | Uses a moving window and multivariate regression to relate a small number of transfer samples from the new condition to the original calibration model [5]. | Corrects for both signal intensity variations and peak shifts; reduces need for full recalibration with many samples [5]. | Requires a set of transfer samples; performance depends on the selection of an appropriate window size [5]. |
| Multi-Sensor MLE with Credibility Index | Estimates true signal from multiple redundant low-cost sensors using Maximum Likelihood Estimation (MLE), weighted by dynamically updated sensor credibility [3]. | Calculates a time-varying credibility index for each sensor based on its historical and current agreement with the estimated truth. Allows on-the-fly drift correction [3]. | Can estimate true signal even when the majority (~80%) of sensors are unreliable; enables use of low-cost sensor arrays [3]. | Requires multiple sensors measuring the same analyte; increased system complexity and power for data fusion. |
| Particle Swarm Optimization (PSO) | An unsupervised, empirical method that models drift as a linear function of time and optimizes correction parameters using a bio-inspired search algorithm [2]. | Optimizes the slope (sensitivity change) and intercept (baseline change) of a linear correction model to compensate for aging effects without labeled data [2]. | Does not require reference (labeled) data for recalibration; demonstrated effectiveness over 3-month periods in field studies [2]. | Model is empirical and may not generalize to all drift behaviors; assumes drift is a linear function of time. |
| Signal Fidelity Index (SFI)-Aware Calibration | A data-centric approach that quantifies diagnostic data quality at the patient level and uses it to adjust machine learning model predictions [7]. | Derives an SFI from components like diagnostic specificity, temporal consistency, and medication alignment. Applies a multiplicative adjustment to model outputs [7]. | Improves model generalizability across different healthcare systems without needing outcome labels; addresses data quality drift [7]. | Primarily tested on simulated EHR data for dementia; clinical real-world validation is pending. |
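A minimal PDS sketch, assuming the transfer samples are measured under both conditions and each response is a vector of channels (for example, potential steps in a voltammogram). Each primary-condition channel is regressed on a small moving window of secondary-condition channels, and the local coefficients are assembled into a banded transform. This uses plain minimum-norm least squares per window; practical PDS implementations usually use PLS or PCR in each window because transfer sets are small. All data and names are hypothetical.

```python
import numpy as np

def pds_fit(X_primary, X_secondary, half_window=2):
    """Piecewise direct standardization (sketch).

    X_primary, X_secondary: (n_transfer, n_channels) responses of the SAME transfer
    samples recorded under the primary (calibration) and secondary (drifted) condition.
    Returns a banded transform F and an offset such that X_secondary @ F + offset
    approximates what the primary condition would have recorded."""
    _, p = X_primary.shape
    mu_p, mu_s = X_primary.mean(axis=0), X_secondary.mean(axis=0)
    Xp_c, Xs_c = X_primary - mu_p, X_secondary - mu_s
    F = np.zeros((p, p))
    for j in range(p):
        lo, hi = max(0, j - half_window), min(p, j + half_window + 1)
        # Local regression: primary channel j from a moving window of secondary channels.
        coef, *_ = np.linalg.lstsq(Xs_c[:, lo:hi], Xp_c[:, j], rcond=None)
        F[lo:hi, j] = coef
    offset = mu_p - mu_s @ F
    return F, offset

def pds_apply(X_secondary_new, F, offset):
    return X_secondary_new @ F + offset

# Synthetic 60-channel responses with two peaks (hypothetical); the secondary condition
# has 20 % lower gain, a one-channel peak shift, and a baseline offset.
channels = np.arange(60)

def response(amp1, amp2, shift=0.0, gain=1.0, baseline=0.0):
    peak = lambda center: np.exp(-0.5 * ((channels - center - shift) / 4.0) ** 2)
    return gain * (amp1 * peak(20) + amp2 * peak(40)) + baseline

rng = np.random.default_rng(2)
amps = rng.uniform(0.5, 2.0, size=(10, 2))              # 10 transfer samples
X_prim = np.array([response(a1, a2) for a1, a2 in amps])
X_sec = np.array([response(a1, a2, shift=1.0, gain=0.8, baseline=0.05) for a1, a2 in amps])

F, offset = pds_fit(X_prim, X_sec, half_window=2)
new_sec = response(1.3, 0.7, shift=1.0, gain=0.8, baseline=0.05)
target = response(1.3, 0.7)
err_before = np.abs(new_sec - target).max()
err_after = np.abs(pds_apply(new_sec, F, offset) - target).max()
print(f"max deviation before PDS: {err_before:.3f}, after: {err_after:.2e}")
```

The window half-width must cover the largest expected peak shift; here a shift of one channel is handled by a five-channel window, which illustrates why window size is listed as a tuning limitation.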

Signaling Pathways and System Workflows

Fundamental Sensor Drift Pathways

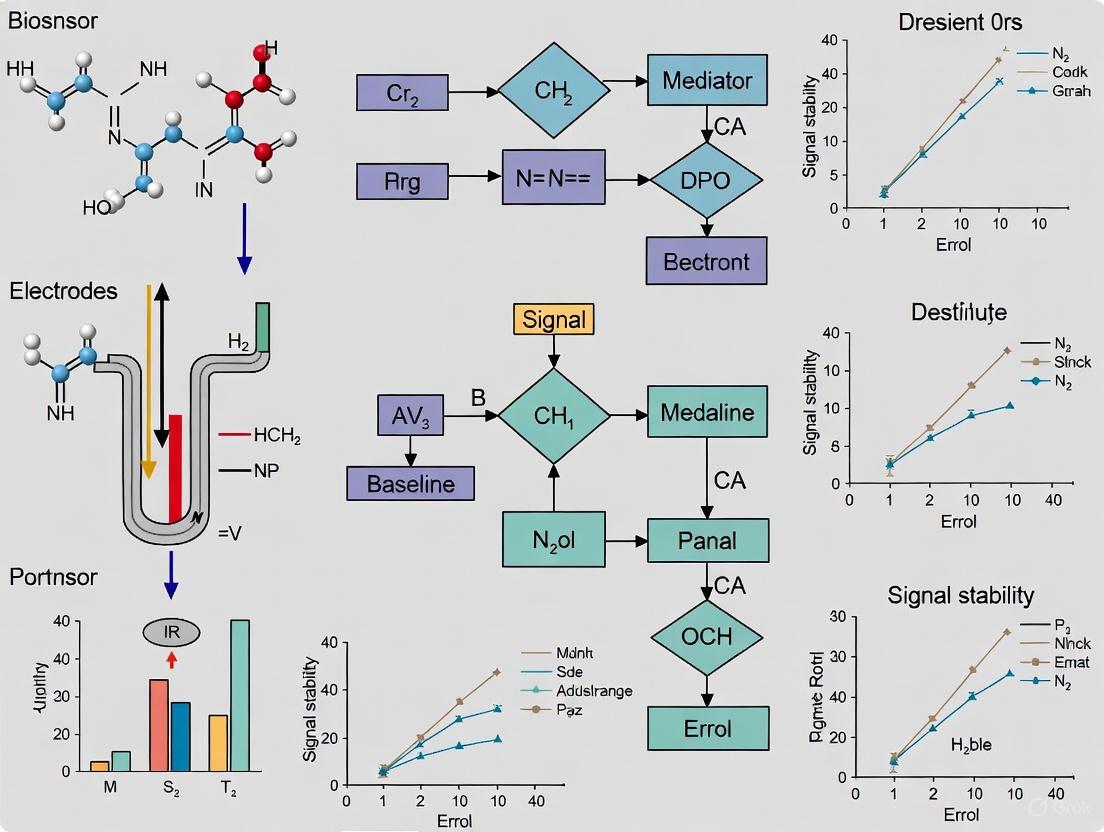

The following diagram illustrates the primary causes and effects that define the problem of sensor drift in electrochemical systems.

Multi-Sensor Data Fusion Workflow

This diagram outlines the workflow for the MLE and credibility-based data fusion approach, a modern strategy for combating drift in sensor networks.
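The credibility-weighted MLE idea can be illustrated under a simple assumption: each sensor reads the true value plus Gaussian noise whose variance is inversely proportional to its credibility, so the MLE at each step is the credibility-weighted mean. The credibility update (exponential smoothing of a Gaussian agreement score) and the parameters `sigma`, `alpha`, and `eps` are hypothetical choices for illustration; the exact index used in [3] is not reproduced here, and this sketch makes no claim about tolerating a majority of faulty sensors.

```python
import numpy as np

def fuse_stream(readings, sigma=1.0, alpha=0.1, eps=1e-3):
    """Credibility-weighted fusion of redundant sensors (illustrative sketch).

    readings: (n_steps, n_sensors) simultaneous measurements of one analyte.
    sigma: assumed noise scale of a healthy sensor.
    alpha: smoothing factor of the credibility update, 0 < alpha <= 1.
    eps: floor that keeps every credibility strictly positive.
    Returns the fused estimate at each step and the final credibility vector."""
    n_steps, n_sensors = readings.shape
    cred = np.ones(n_sensors)                  # start by trusting every sensor equally
    fused = np.empty(n_steps)
    for t in range(n_steps):
        x = readings[t]
        # Gaussian model with variance sigma^2 / cred_i: the MLE is the credibility-weighted mean.
        est = np.dot(cred, x) / cred.sum()
        fused[t] = est
        # Agreement score in (0, 1]: 1 when a sensor matches the estimate, near 0 when far off.
        agreement = np.exp(-0.5 * ((x - est) / sigma) ** 2)
        cred = np.maximum((1 - alpha) * cred + alpha * agreement, eps)
    return fused, cred

rng = np.random.default_rng(3)
steps = np.arange(500)
truth = 5.0 + np.sin(steps / 40.0)
readings = truth[:, None] + rng.normal(0, 0.1, (500, 5))
readings[:, 3:] += 0.02 * steps[:, None]      # sensors 3 and 4 drift upward (synthetic)

fused, cred = fuse_stream(readings)
mae_fused = np.abs(fused[-100:] - truth[-100:]).mean()
mae_naive = np.abs(readings[-100:].mean(axis=1) - truth[-100:]).mean()
print(f"last-100-step MAE: fused {mae_fused:.3f}, plain mean {mae_naive:.3f}")
print("final credibility:", np.round(cred, 3))
```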

The Scientist's Toolkit: Key Research Reagents and Materials

The table below details essential materials and reagents frequently employed in the development and stabilization of electrochemical biosensors, as identified in the research.

Table 2: Key Research Reagents and Materials for Electrochemical Biosensor Development

| Material/Reagent | Primary Function in Biosensor Development | Specific Role in Addressing Drift & Stability |
|---|---|---|
| Carbon Nanotubes (CNTs) | Transducer material for Field-Effect Transistors (BioFETs) due to high electrical sensitivity [1]. | High mobility and chemical inertness can improve baseline stability, but devices require passivation and specific measurement protocols to mitigate ion diffusion drift [1]. |
| Poly(oligo(ethylene glycol) methyl ether methacrylate) (POEGMA) | A polymer brush interface immobilized on the sensor surface [1]. | Extends the Debye length in biological solutions to overcome charge screening; provides a non-fouling layer that reduces biofouling-induced drift [1]. |
| Gold Nanoparticles (AuNPs) | Nanomaterial for electrode modification to enhance signal amplification and bioreceptor immobilization [8]. | Excellent electrical conductivity and chemical stability provide a robust platform for biomolecule attachment, contributing to consistent sensor response and reduced signal noise [8]. |
| Metal-Organic Frameworks (MOFs) | Porous crystalline materials used to modify electrode surfaces [8]. | High surface area increases loading capacity of recognition elements; can be decorated with signal amplifiers (e.g., AgNPs) to enhance sensitivity and stability [8]. |
| Pseudo-Reference Electrodes (e.g., Pd) | Alternative to traditional Ag/AgCl reference electrodes in miniaturized systems [1]. | Enables stable potential in a compact form factor, crucial for point-of-care device stability and mitigating drift associated with bulky, unreliable reference systems [1]. |
| Particle Swarm Optimization (PSO) Algorithm | A computational algorithm, not a wet-lab reagent, used for model parameter identification [2]. | Serves as an "in-silico reagent" to empirically identify optimal parameters for unsupervised drift correction models without requiring labeled calibration data [2]. |
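To make the PSO entry concrete, the sketch below pairs a minimal global-best PSO with a linear-in-time drift model (baseline slope `beta`, fractional sensitivity slope `gamma`). Because the correction is unsupervised, it needs an objective that does not use reference data; here it is assumed, for illustration only, that the analyte's distribution is stationary, so the corrected signal should keep the same mean and spread in every window. That objective, the parameter bounds, the PSO constants, and the synthetic data are assumptions, not the formulation in [2].

```python
import numpy as np

def pso_minimize(f, bounds, n_particles=30, n_iter=200, w=0.72, c1=1.49, c2=1.49, seed=0):
    """Minimal global-best particle swarm optimizer over a box; returns (best_x, best_value)."""
    rng = np.random.default_rng(seed)
    lo, hi = np.asarray(bounds, dtype=float).T
    dim = lo.size
    pos = rng.uniform(lo, hi, size=(n_particles, dim))
    vel = np.zeros_like(pos)
    pbest, pbest_val = pos.copy(), np.array([f(p) for p in pos])
    gbest = pbest[pbest_val.argmin()].copy()
    for _ in range(n_iter):
        r1, r2 = rng.random((2, n_particles, dim))
        # Inertia + pull toward each particle's best + pull toward the swarm's best.
        vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
        pos = np.clip(pos + vel, lo, hi)
        vals = np.array([f(p) for p in pos])
        better = vals < pbest_val
        pbest[better], pbest_val[better] = pos[better], vals[better]
        gbest = pbest[pbest_val.argmin()].copy()
    return gbest, pbest_val.min()

def correct(raw, t_days, params):
    beta, gamma = params   # baseline drift per day, fractional sensitivity drift per day
    return (raw - beta * t_days) / (1.0 + gamma * t_days)

def stationarity_loss(params, raw, t_days, n_windows=6):
    """Hypothetical unsupervised objective: every later window of the corrected
    signal should match the mean and standard deviation of the first window."""
    chunks = np.array_split(correct(raw, t_days, params), n_windows)
    m0, s0 = chunks[0].mean(), chunks[0].std()
    return sum((c.mean() - m0) ** 2 + (c.std() - s0) ** 2 for c in chunks[1:])

rng = np.random.default_rng(4)
t_days = np.arange(90 * 96) / 96.0                               # 90 days of 15-min samples
conc = 20 + 10 * np.sin(2 * np.pi * t_days) + rng.normal(0, 3, t_days.size)
raw = (1 - 0.002 * t_days) * conc + 0.05 * t_days                # synthetic aging
(beta_hat, gamma_hat), loss = pso_minimize(
    lambda p: stationarity_loss(p, raw, t_days), bounds=[(-0.2, 0.2), (-0.005, 0.005)])
print(f"beta={beta_hat:.4f}/day, gamma={gamma_hat:.5f}/day")
```

The stationarity assumption is the weak point: a genuine long-term trend in concentration would be absorbed into the drift estimate, which is consistent with the limitation noted in the comparison table that the empirical model may not generalize.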

Electrochemical biosensors represent a powerful tool for diagnostic applications, yet their performance and longevity are critically limited by three interconnected challenges: material degradation at the electrode interface, interference from environmental factors, and biofouling in complex biological samples. These root causes collectively contribute to signal drift, reduced sensitivity, and ultimately, unreliable data in both clinical and research settings. Material degradation involves the physical and chemical breakdown of sensor components, environmental factors encompass variables like temperature and pH that affect sensor performance, and biofouling refers to the nonspecific adsorption of biomolecules onto sensor surfaces. Understanding these mechanisms is essential for developing effective drift compensation algorithms, as the most sophisticated computational approaches must account for the underlying physical and chemical processes that generate signal artifacts. This review examines the fundamental mechanisms of these failure modes and provides a comparative analysis of experimental methodologies for their investigation, with particular emphasis on implications for algorithmic compensation strategies in electrochemical biosensing systems.

Material Degradation Mechanisms and Experimental Analysis

Material degradation in electrochemical biosensors encompasses various chemical and physical processes that deteriorate electrode materials and sensing interfaces over time. Common degradation mechanisms include electrode oxidation, catalyst poisoning, loss of recognition element activity, and delamination of functional coatings. These processes directly impact sensor performance by increasing background noise, reducing signal-to-noise ratio, and altering the sensor's electrochemical response characteristics.

At the molecular level, material degradation often begins with surface oxidation of electrode materials. For noble metal electrodes like gold and platinum, repeated potential cycling in electrochemical measurements can lead to the formation of oxide layers that alter electron transfer kinetics. A study investigating electrochemical gas sensors observed that prolonged exposure to operational potentials significantly changed the baseline current and sensitivity of the sensors [9]. Similarly, carbon-based electrodes can undergo oxidative degradation that creates additional oxygen-containing functional groups, modifying their electrochemical properties and binding characteristics for biomolecules.

The degradation of recognition elements represents another critical failure mechanism. Enzymes used in biosensors can undergo denaturation or lose cofactors, while antibodies may experience structural changes that reduce their binding affinity. Aptamers can suffer from nuclease degradation or undergo conformational changes that diminish their specificity. This degradation is often accelerated by environmental factors such as temperature fluctuations, pH extremes, or exposure to reactive oxygen species. Research on electrochemical diagnostics has highlighted how these processes contribute to signal drift in biosensors designed for continuous monitoring applications [10].

Table 1: Experimental Techniques for Material Degradation Analysis

| Technique | Measured Parameters | Degradation Insights | Applicable Sensor Types |
|---|---|---|---|
| Electrochemical Impedance Spectroscopy (EIS) | Charge transfer resistance (Rct), Solution resistance (Rs), Double-layer capacitance (Cdl) | Changes in electrode surface properties, coating integrity, and interface stability | All electrochemical biosensors, particularly aptasensors and immunosensors |
| Cyclic Voltammetry (CV) | Peak current, Peak potential separation, Electroactive surface area | Evolution of electron transfer kinetics, surface fouling, catalyst degradation | Enzyme-based sensors, metal and carbon electrodes |
| Scanning Electron Microscopy (SEM) | Surface morphology, cracks, delamination, pitting | Physical degradation of electrode materials and functional layers | Sensors with nanostructured materials and multilayer fabrication |
| X-ray Photoelectron Spectroscopy (XPS) | Elemental composition, chemical states, surface contaminants | Chemical changes in electrode materials, oxidation state evolution | Solid-state sensors, metal and metal oxide electrodes |
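The EIS parameters listed above are commonly interpreted through a simplified Randles equivalent circuit, \( Z(\omega) = R_s + R_{ct} / (1 + j\omega R_{ct} C_{dl}) \), with the diffusion (Warburg) element omitted. The sketch below, using hypothetical component values, shows why a growing \( R_{ct} \) appears as a wider semicircle: the low-frequency real intercept approaches \( R_s + R_{ct} \) and the high-frequency intercept approaches \( R_s \). In practice \( R_{ct} \) is obtained by fitting a full equivalent circuit, not by the crude intercept difference used here.

```python
import numpy as np

def randles_impedance(freq_hz, r_s, r_ct, c_dl):
    """Simplified Randles cell (no Warburg element): Rs in series with (Rct parallel Cdl)."""
    omega = 2 * np.pi * np.asarray(freq_hz, dtype=float)
    return r_s + r_ct / (1 + 1j * omega * r_ct * c_dl)

def estimate_rct(z):
    """Crude Rct estimate: semicircle diameter from the lowest- and highest-frequency real parts."""
    return z.real[0] - z.real[-1]

freqs = np.logspace(-1, 5, 61)                                   # 0.1 Hz to 100 kHz
fresh = randles_impedance(freqs, r_s=100.0, r_ct=2e3, c_dl=1e-6)  # hypothetical new electrode
aged = randles_impedance(freqs, r_s=100.0, r_ct=5e3, c_dl=0.8e-6) # hypothetical degraded electrode
print(f"Rct fresh ~ {estimate_rct(fresh):.0f} ohm, aged ~ {estimate_rct(aged):.0f} ohm")
```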

Experimental protocols for investigating material degradation typically employ accelerated aging studies combined with detailed materials characterization. A standard approach involves subjecting sensors to extreme conditions (elevated temperature, voltage, or chemical exposure) while monitoring performance degradation through regular electrochemical measurements. For example, researchers might cycle sensors through extended potential windows while tracking changes in redox peak positions and currents using cyclic voltammetry. Simultaneously, surface analysis techniques such as SEM-EDS and XPS provide correlative information about physical and chemical changes at the electrode interface [11].

The degradation kinetics of functional materials can follow different patterns depending on the dominant mechanism. Some materials exhibit linear degradation rates, while others show exponential decay or more complex behaviors influenced by multiple simultaneous processes. Understanding these patterns is crucial for developing accurate drift compensation algorithms that can project performance degradation over time [12].
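Choosing between linear and exponential degradation can be sketched by fitting both models to normalized sensitivity data, keeping the one with the lower residual sum of squares, and projecting when sensitivity crosses a threshold. The 80 % retention threshold, the synthetic data, and the function names are illustrative assumptions; when candidate models differ in parameter count, an information criterion would be preferable to raw residuals.

```python
import numpy as np

def fit_linear(t, s):
    k, s0 = np.polyfit(t, s, 1)                       # s ~ s0 + k*t
    return (lambda tt: s0 + k * tt), (s0, k)

def fit_exponential(t, s):
    slope, log_s0 = np.polyfit(t, np.log(s), 1)       # log s ~ log s0 + slope*t (needs s > 0)
    return (lambda tt: np.exp(log_s0 + slope * tt)), (np.exp(log_s0), slope)

def select_model(t, s):
    """Return the name and predictor of the model with the lower residual sum of squares."""
    candidates = {"linear": fit_linear(t, s)[0], "exponential": fit_exponential(t, s)[0]}
    rss = {name: float(np.sum((s - f(t)) ** 2)) for name, f in candidates.items()}
    best = min(rss, key=rss.get)
    return best, candidates[best], rss

rng = np.random.default_rng(5)
t = np.linspace(0, 60, 25)                                 # days of accelerated aging (synthetic)
s = np.exp(-t / 30.0) * (1 + rng.normal(0, 0.01, t.size))  # normalized sensitivity
name, model, rss = select_model(t, s)
grid = np.linspace(0, 120, 1201)
t80 = float(grid[np.argmax(model(grid) <= 0.8)])           # first time the projection hits 80 %
print(name, round(t80, 1))
```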

Environmental Interference Factors and Compensation Methodologies

Environmental factors represent a significant source of variability and drift in electrochemical biosensor performance. Key interfering parameters include temperature, relative humidity, pH, ionic strength, and the presence of electroactive interferents in complex samples. These factors influence sensor response through multiple mechanisms, including alteration of reaction kinetics, changes in diffusion rates, modulation of biomolecule activity, and shifts in electrochemical potentials.

Temperature effects are particularly pervasive, affecting nearly all aspects of sensor performance. Research on electrochemical gas sensors demonstrated that temperature variations can cause significant signal drift, with some sensors exhibiting response changes of 2-5% per °C [9]. Temperature influences electrochemical reactions through Arrhenius-type kinetics, affects membrane permeability in mediated systems, and alters the conformation of biological recognition elements. Similarly, pH variations can profoundly impact enzyme activity, alter the charge state of functional groups, and shift the formal potential of redox reactions.
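A first-order temperature correction, assuming the response scales linearly as \( r(T) = r_{ref}[1 + \alpha (T - T_{ref})] \) over the operating range, can be calibrated from chamber measurements at constant concentration and then inverted. The 3 %/°C coefficient is a synthetic value chosen within the 2-5 %/°C range quoted above; the 25 °C reference and the function names are assumptions. Over wide temperature ranges an Arrhenius-type (exponential) form may fit better than this linear approximation.

```python
import numpy as np

T_REF = 25.0  # °C, assumed reference temperature of the calibration

def estimate_alpha(temps_c, responses, t_ref=T_REF):
    """Fit response = r_ref * (1 + alpha * (T - t_ref)) at constant analyte concentration.
    Returns (r_ref, alpha)."""
    slope, intercept = np.polyfit(np.asarray(temps_c) - t_ref, responses, 1)
    return intercept, slope / intercept

def compensate(raw, temp_c, alpha, t_ref=T_REF):
    """Rescale a raw response to its equivalent at the reference temperature."""
    return raw / (1 + alpha * (np.asarray(temp_c) - t_ref))

temps = np.arange(15, 41, 5.0)                   # chamber set points, 15-40 °C
resp = 100 * (1 + 0.03 * (temps - T_REF))        # synthetic response, 3 %/°C
r_ref, alpha = estimate_alpha(temps, resp)
print(round(float(alpha), 4), round(float(compensate(118.0, 31.0, alpha)), 2))  # 0.03 100.0
```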

Electrochemical biosensors operating in complex biological matrices like blood, serum, or urine face additional challenges from electroactive interferents such as ascorbic acid, uric acid, and acetaminophen. These compounds can undergo oxidation or reduction at similar potentials to the target analyte, generating non-specific signals that obscure the desired measurement. The problem is particularly acute in continuous monitoring applications where sensors are exposed to undiluted biological samples for extended periods.

Table 2: Environmental Interference Factors and Experimental Characterization Methods

| Interference Factor | Impact on Sensor Performance | Standard Test Protocol | Typical Compensation Approaches |
|---|---|---|---|
| Temperature Fluctuations | Alters reaction kinetics, diffusion rates, and biomolecule stability; typically 2-5%/°C effect | Performance characterization across operational range (e.g., 15-40°C) with controlled analyte concentrations | Integrated temperature sensors with algorithm-based correction; thermostatted systems |
| pH Variations | Affects enzyme activity, antibody affinity, and redox potentials; can cause signal shifts up to 10%/pH unit | Buffer systems with varying pH but constant analyte concentration; real-time pH monitoring | pH-stat systems; dual-sensor designs with pH correction; pH-resistant recognition elements |
| Electroactive Interferents | Non-specific signals from ascorbate, urate, acetaminophen; false positive readings | Spiked interference studies measuring sensor response to interferents without target analyte | Permselective membranes; potential stepping protocols; multivariate calibration |
| Ionic Strength Changes | Alters double-layer structure and charge transfer kinetics; affects extraction efficiency | Solutions with varying salt concentration but constant analyte level | Ionic strength adjustment buffers; constant background electrolyte; calibration in matched matrices |

Experimental characterization of environmental interference follows systematic protocols that isolate individual variables while holding others constant. For temperature studies, sensors are typically placed in environmental chambers where temperature can be precisely controlled while analyte concentrations are maintained at known levels. Similarly, pH interference is quantified using buffer systems that span the expected operational range while keeping ionic strength and analyte concentration constant. These controlled studies generate interference models that can be incorporated into compensation algorithms.

Advanced compensation approaches for environmental interference increasingly leverage machine learning techniques. Multivariate regression models can simultaneously account for multiple interfering factors by incorporating signals from additional sensors that monitor the interfering parameters themselves. For instance, research on AI-enhanced electrochemical sensing has demonstrated how machine learning algorithms can learn complex relationships between environmental parameters and sensor response, enabling real-time compensation without requiring explicit physical models of each interference mechanism [13].
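A minimal sketch of multivariate compensation, assuming co-located temperature and humidity readings and a reference concentration for training: the calibration includes the raw signal, both covariates, and a temperature x humidity interaction, and it is compared with a signal-only calibration on held-out data. This is plain least squares standing in for the machine-learning models cited in [13]; the data-generating model and its coefficients are synthetic.

```python
import numpy as np

def design(signal, temp, rh):
    """Feature matrix: intercept, raw signal, environmental covariates, and a T x RH interaction."""
    return np.column_stack([np.ones_like(signal), signal, temp, rh, temp * rh])

def fit(X, y):
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef

rng = np.random.default_rng(6)
n = 4000
conc = rng.uniform(0, 50, n)
temp = 20 + 8 * rng.random(n)
rh = 30 + 50 * rng.random(n)
# Synthetic sensor: concentration response plus temperature and humidity artefacts.
signal = 2.0 * conc + 1.5 * (temp - 20) + 0.02 * (temp - 20) * (rh - 50) + rng.normal(0, 1, n)

tr, te = slice(0, n // 2), slice(n // 2, n)           # train on first half, test on second
X_env = design(signal, temp, rh)
X_sig = np.column_stack([np.ones(n), signal])
coef_env, coef_sig = fit(X_env[tr], conc[tr]), fit(X_sig[tr], conc[tr])

rmse = lambda pred: float(np.sqrt(np.mean((pred - conc[te]) ** 2)))
rmse_env, rmse_sig = rmse(X_env[te] @ coef_env), rmse(X_sig[te] @ coef_sig)
print(f"RMSE signal-only {rmse_sig:.2f}, with T/RH terms {rmse_env:.2f}")
```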

Biofouling Mechanisms and Antifouling Strategies

Biofouling refers to the nonspecific adsorption of proteins, cells, and other biological materials onto sensor surfaces, creating a diffusion barrier and potentially passivating the electrode interface. This process represents a particularly challenging form of degradation for biosensors operating in complex biological fluids like blood, serum, or interstitial fluid. Fouling typically begins with rapid protein adsorption within seconds to minutes of exposure, followed by slower reorganization of the protein layer and, potentially, cellular attachment.

The biofouling mechanism involves complex interactions between sensor surface properties and biological components. Key factors influencing fouling include surface energy, charge, roughness, and hydrophobicity. Proteins typically adsorb to surfaces through a combination of hydrophobic interactions, electrostatic forces, and van der Waals interactions. This initial protein layer then mediates subsequent attachment of cells and other biological components, leading to the formation of a complex fouling layer that impedes analyte access to the sensor interface.

Research on marine sensors and biomedical devices has revealed that biofouling follows characteristic kinetic profiles, often with an initial rapid phase followed by a slower continuous accumulation. Studies on marine engineering equipment have shown that biofilm thickness can reach 50-150 μm in natural seawater environments, with similar processes occurring in biological fluids [14]. The resulting fouling layer acts as a diffusion barrier, increasing response time and reducing sensitivity to the target analyte. In severe cases, complete signal loss can occur.

Antifouling strategies have evolved to address these challenges through both material and algorithmic approaches. Surface modification with antifouling polymers represents a primary materials-based strategy. Research on biosensors for blood analysis demonstrated that dual-loop constrained antifouling peptides (DLC-AP) exhibit exceptional resistance to fouling in complex biological media [15]. These peptides form tightly packed surfaces that resist protein adsorption through a combination of steric repulsion and hydration layers. Other material approaches include surface grafting of polyethylene glycol, zwitterionic polymers, and hydrogels that create a physical and energetic barrier to protein adsorption.

Table 3: Experimental Biofouling Characterization and Antifouling Strategies

| Method Category | Specific Techniques | Experimental Outputs | Algorithmic Compensation Potential |
|---|---|---|---|
| Surface Characterization | SEM, Quartz Crystal Microbalance with Dissipation (QCM-D), Surface Plasmon Resonance | Fouling layer thickness, viscoelastic properties, adsorption kinetics | Input for diffusion-limited response models; time-dependent sensitivity correction |
| Electrochemical Assessment | EIS, Chronoamperometry, Open Circuit Potential Monitoring | Charge transfer resistance increase, diffusion-limited current reduction | Direct input for drift compensation algorithms; signal decay modeling |
| Antifouling Materials | PEGylation, zwitterionic polymers, peptide-based coatings, natural antifoulants | Fouling resistance, stability in biological media, non-specific signal reduction | Reduced need for compensation; extended operational lifetime |
| Active Antifouling | Electrochemical cleaning, ultrasound, magnetic activation | On-demand fouling removal, restored sensor functionality | Event-based reset in calibration models; discontinuous operation protocols |

Experimental evaluation of biofouling typically involves exposing sensor surfaces to relevant biological fluids while monitoring the accumulation of fouling material and its impact on sensor performance. Quartz crystal microbalance with dissipation (QCM-D) provides detailed information about mass adsorption and viscoelastic properties of the fouling layer. Electrochemical impedance spectroscopy tracks the increasing charge transfer resistance associated with fouling layer formation. Additionally, SEM imaging of fouled surfaces reveals the morphology and distribution of adsorbed material [16] [14].

For biosensors where complete fouling prevention remains challenging, algorithmic approaches can partially compensate for fouling effects. These typically model the fouling process as a time-dependent decrease in sensitivity coupled with an increase in response time. By characterizing the fouling kinetics under controlled conditions, compensation algorithms can project the progression of fouling effects and adjust readings accordingly. However, severe fouling typically requires physical cleaning or sensor replacement, highlighting the importance of materials-based antifouling strategies as a first line of defense [13].
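The fouling model described above can be sketched as a two-phase sensitivity loss, a rapid saturating term plus slow linear accumulation, echoing the kinetic profile noted earlier: \( S(t)/S_0 = 1 - A(1 - e^{-t/\tau}) - kt \). For a fixed \( \tau \) the model is linear in \( A \) and \( k \), so a grid search over \( \tau \) with least squares inside is sufficient. The functional form, parameter values, time unit (hours), and helper names are assumptions for illustration; the correction is only trustworthy within the range where the fouling kinetics were characterized.

```python
import numpy as np

def fouling_basis(t, tau):
    """Columns such that S(t)/S0 - 1 = A * col0 + k * col1."""
    return np.column_stack([-(1 - np.exp(-t / tau)), -t])

def fit_fouling(t, rel_sens, taus=np.linspace(0.1, 10, 100)):
    """Grid-search tau; for each candidate tau, (A, k) follow from linear least squares."""
    best = None
    for tau in taus:
        X = fouling_basis(t, tau)
        (A, k), *_ = np.linalg.lstsq(X, rel_sens - 1, rcond=None)
        rss = np.sum((X @ np.array([A, k]) - (rel_sens - 1)) ** 2)
        if best is None or rss < best[0]:
            best = (rss, A, k, tau)
    _, A, k, tau = best
    return A, k, tau

def rel_sensitivity(t, A, k, tau):
    return 1 - A * (1 - np.exp(-t / tau)) - k * t

rng = np.random.default_rng(7)
t_cal = np.arange(0, 49, 2.0)                              # hours in serum (synthetic)
rel = rel_sensitivity(t_cal, 0.15, 0.004, 2.0) + rng.normal(0, 0.005, t_cal.size)
A, k, tau = fit_fouling(t_cal, rel)
# Project to 72 h and rescale a raw reading by the predicted sensitivity loss.
s72 = rel_sensitivity(72.0, A, k, tau)
raw_72h = 10.0 * rel_sensitivity(72.0, 0.15, 0.004, 2.0)  # true value 10 (arbitrary units)
print(f"A={A:.3f}, k={k:.4f}/h, tau={tau:.1f} h, corrected={raw_72h / s72:.2f}")
```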

Experimental Protocols for Root Cause Investigation

Accelerated Degradation Testing

Standardized experimental protocols are essential for systematic investigation of material degradation, environmental interference, and biofouling. Accelerated degradation studies subject sensors to elevated stress conditions to observe failure mechanisms within practical timeframes. A typical protocol involves exposing multiple sensor replicates to conditions such as elevated temperature (e.g., 37-50°C), continuous potential application, or extended cycling in relevant biological matrices. Performance metrics including sensitivity, selectivity, response time, and baseline stability are monitored at regular intervals throughout the accelerated aging process.

The field comparison of electrochemical gas sensors employed a comprehensive approach by collecting data over six months with one-minute time resolution, comparing sensor performance against reference instruments [9]. Similar longitudinal studies are valuable for biosensors, though the timeframe can be compressed through accelerated conditions. Control sensors stored under ideal conditions provide a baseline for distinguishing natural variation from genuine degradation.
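Comparing stressed sensors against stored controls can be sketched as follows: sensitivity is normalized to each sensor's own day-0 value, the stressed-group mean is expressed relative to the control mean, and time points where the stressed mean falls more than three control standard deviations below the control mean are flagged. The 3-SD rule, weekly schedule, group sizes, and synthetic sensitivities are illustrative assumptions rather than a standard protocol.

```python
import numpy as np

def retention(sens):
    """Sensitivity at each time point relative to each sensor's own first value.
    sens: (n_sensors, n_timepoints)."""
    sens = np.asarray(sens, dtype=float)
    return sens / sens[:, :1]

def degradation_vs_control(stressed, control):
    """Mean stressed retention divided by mean control retention, plus a crude flag where
    the stressed mean falls below (control mean - 3 control SDs)."""
    r_s, r_c = retention(stressed), retention(control)
    ratio = r_s.mean(axis=0) / r_c.mean(axis=0)
    flag = r_s.mean(axis=0) < r_c.mean(axis=0) - 3 * r_c.std(axis=0, ddof=1)
    return ratio, flag

rng = np.random.default_rng(8)
weeks = np.arange(5)                                   # weekly checks, weeks 0-4
s0_stress = rng.normal(50, 3, (6, 1))                  # hypothetical day-0 sensitivities
s0_ctrl = rng.normal(50, 3, (4, 1))
stressed = s0_stress * (1 - 0.03 * weeks) * (1 + rng.normal(0, 0.005, (6, 5)))
control = s0_ctrl * (1 - 0.005 * weeks) * (1 + rng.normal(0, 0.005, (4, 5)))
ratio, flag = degradation_vs_control(stressed, control)
print(np.round(ratio, 3), flag)
```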

Environmental Interference Characterization

Systematic characterization of environmental interference requires controlled variation of individual parameters while maintaining others constant. For temperature studies, sensors are placed in environmental chambers with precise temperature control (±0.1°C), with testing conducted across the entire anticipated operational range (e.g., 15-40°C for physiological monitoring). Similar approaches apply to pH studies using appropriately buffered solutions, and ionic strength variations using solutions with fixed analyte concentration but varying background electrolyte.

Multivariate experimental designs are particularly efficient for characterizing interactions between multiple environmental factors. These approaches vary multiple parameters simultaneously according to statistical design principles, enabling efficient modeling of complex interference behavior. The resulting models can directly inform compensation algorithms that account for coupled interference effects [13].
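The text does not name a particular design; as one simple example, a three-level full factorial over temperature, pH, and ionic strength can be generated and analyzed with a model containing main effects and two-factor interactions. The factor levels, the synthetic response, and the helper names are hypothetical; fractional factorial or response-surface designs would reduce the run count when more factors are involved.

```python
import itertools
import numpy as np

FACTORS = {"temp_c": [25.0, 32.0, 39.0], "pH": [6.8, 7.4, 8.0], "ionic_mM": [50.0, 150.0, 300.0]}

def full_factorial(factors):
    """All combinations of the listed levels (3 x 3 x 3 = 27 runs here)."""
    names = list(factors)
    runs = np.array(list(itertools.product(*factors.values())), dtype=float)
    return names, runs

def coded(runs):
    """Scale each factor to [-1, +1] so fitted effect sizes are comparable."""
    lo, hi = runs.min(axis=0), runs.max(axis=0)
    return 2 * (runs - lo) / (hi - lo) - 1

def interaction_model(x):
    """Columns: intercept, main effects, and all two-factor interactions."""
    cols = [np.ones(len(x))] + [x[:, i] for i in range(x.shape[1])]
    cols += [x[:, i] * x[:, j] for i, j in itertools.combinations(range(x.shape[1]), 2)]
    return np.column_stack(cols)

names, runs = full_factorial(FACTORS)
x = coded(runs)
rng = np.random.default_rng(9)
# Synthetic normalized response with a temperature x pH interaction (hypothetical).
y = 100 + 6 * x[:, 0] - 4 * x[:, 1] + 2 * x[:, 2] + 3 * x[:, 0] * x[:, 1] + rng.normal(0, 0.5, len(x))
coef, *_ = np.linalg.lstsq(interaction_model(x), y, rcond=None)
labels = ["intercept"] + names + [f"{a}*{b}" for a, b in itertools.combinations(names, 2)]
for label, c in zip(labels, coef):
    print(f"{label:>16s} {c:+.2f}")
```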

Biofouling Assessment Protocols

Biofouling evaluation requires exposure to relevant biological fluids under controlled conditions. For medical biosensors, protocols typically involve exposure to undiluted serum, plasma, or whole blood under physiological temperature with gentle agitation to simulate in vivo conditions. Performance assessment before, during, and after exposure quantifies the progression of fouling effects. Control experiments with buffer solutions help distinguish fouling-specific effects from general sensor degradation.

Advanced biofouling assessment incorporates multiple complementary techniques. Electrochemical impedance spectroscopy provides sensitive detection of initial fouling layer formation through changes in charge transfer resistance. Quartz crystal microbalance with dissipation monitoring offers detailed information about mass adsorption and viscoelastic properties. Finally, surface analysis techniques including SEM, AFM, and XPS characterize the morphology and composition of fouling layers after exposure [15] [14].

Diagram 1: Interrelationships between root causes, mechanisms, and compensation approaches in electrochemical biosensor degradation.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagents and Materials for Degradation Studies

| Category | Specific Items | Research Applications | Experimental Function |
|---|---|---|---|
| Electrode Materials | Gold, platinum, glassy carbon, screen-printed carbon electrodes, indium tin oxide | Substrate fabrication, comparative studies | Provide conductive surfaces for biomolecule immobilization and electron transfer |
| Recognition Elements | Glucose oxidase, horseradish peroxidase, specific antibodies, DNA aptamers | Biosensor development, stability assessment | Target capture and signal generation; stability testing under various conditions |
| Antifouling Agents | Polyethylene glycol, zwitterionic polymers, antifouling peptides, Tween 20 | Surface modification, fouling resistance evaluation | Reduce nonspecific adsorption; extend functional lifetime in complex media |
| Characterization Reagents | Potassium ferricyanide, ruthenium hexamine, redox probes | Electrode characterization, degradation monitoring | Benchmark electron transfer kinetics; track surface changes over time |
| Biological Matrices | Fetal bovine serum, human plasma, whole blood, artificial sweat | Real-world performance testing, biofouling studies | Simulate operational environment; accelerate degradation processes |
| Stabilization Compounds | BSA, trehalose, sucrose, glycerol, hydrogel matrices | Recognition element stabilization, shelf-life studies | Preserve biomolecule activity during storage and operation |

Implications for Drift Compensation Algorithm Development

The systematic investigation of root causes in sensor degradation directly informs the development of more effective drift compensation algorithms. Material degradation studies provide essential data on failure modes and progression rates that can be incorporated into model-based compensation approaches. Understanding whether degradation follows linear, exponential, or more complex patterns enables selection of appropriate mathematical frameworks for projection and correction.

Environmental interference characterization generates the multivariate response models needed for sophisticated compensation algorithms. Research on electrochemical gas sensors demonstrated that algorithms incorporating temperature and humidity corrections significantly outperformed uncorrected sensors, particularly when the correction models were trained on extensive field data [9]. Similar approaches apply to biosensors, where interference from pH, ionic strength, and competing electroactive species can be modeled and compensated.

Biofouling presents particular challenges for algorithmic compensation due to its progressive nature and potential for abrupt changes. Effective compensation typically requires combined hardware and software approaches, where antifouling materials slow the fouling process while algorithms project and correct for the remaining gradual performance decline. Research on AI-enhanced electrochemical sensing demonstrates how machine learning can detect fouling-induced pattern changes in multidimensional signal data, enabling adaptive compensation that evolves with the sensor's condition [13].
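Abrupt fouling events can be flagged with a simple statistical change detector before any model is re-fitted. The sketch below applies a one-sided CUSUM to a normalized sensitivity series. This is a swapped-in classical technique, not the machine learning approach of [13]. The slack and threshold values are assumptions that would need tuning on real data.

```python
def cusum_drop(x, target, slack, threshold):
    """One-sided (lower) CUSUM: return the index of the first alarm for a
    downward shift of the signal below `target`, or None if no alarm.

    `slack` sets the smallest shift worth detecting; `threshold` trades
    detection delay against false alarms. Both are tuning assumptions.
    """
    s = 0.0
    for idx, value in enumerate(x):
        # Accumulate only deviations below target that exceed the slack
        s = max(0.0, s + (target - value) - slack)
        if s > threshold:
            return idx
    return None


# Hypothetical normalized sensitivity: stable, then an abrupt 30% drop at index 20
series = [1.0] * 20 + [0.7] * 20
print(cusum_drop(series, target=1.0, slack=0.05, threshold=0.5))
```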

The most robust compensation strategies employ multimodal sensing that incorporates additional measurements to inform the correction process. Reference sensors that monitor environmental parameters, nonspecific binding, or electrode integrity provide valuable inputs for discrimination between true analyte signals and artifactual drift. This approach aligns with research showing that electrochemical diagnostic systems incorporating multiple measurement modalities and AI-based data fusion achieve superior stability in complex real-world environments [10].
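One simple way to use a reference channel is sketched below under stated assumptions. A gain is estimated from analyte-free (blank) recordings, in which the working and reference electrodes see only drift and interference. The scaled reference signal is then subtracted from the working signal. The through-origin gain and the function names are hypothetical. Real systems may need offsets, time lags, or nonlinear terms.

```python
import numpy as np


def fit_reference_gain(working_blank, reference_blank):
    """Least-squares gain g (through the origin) such that working ~ g * reference
    in blank recordings, where both channels see only drift and interference."""
    w = np.asarray(working_blank, dtype=float)
    r = np.asarray(reference_blank, dtype=float)
    return float(np.dot(r, w) / np.dot(r, r))


def compensate(working, reference, gain):
    """Remove the reference-tracked component from the working-electrode signal."""
    return np.asarray(working, dtype=float) - gain * np.asarray(reference, dtype=float)
```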

Material degradation, environmental interference, and biofouling represent fundamental challenges that must be addressed through combined materials science, sensor design, and algorithmic innovation. Systematic experimental characterization of these root causes provides the essential foundation for developing effective compensation strategies. Accelerated degradation studies, environmental interference mapping, and biofouling assessment generate the quantitative relationships needed for model-based drift correction. The integration of these physical insights with advanced machine learning approaches represents the most promising path toward electrochemical biosensors with the stability and reliability required for demanding applications in clinical diagnostics and drug development. Future research should focus on developing standardized characterization protocols, multimodal sensing architectures, and adaptive algorithms that can continuously learn and compensate for evolving sensor performance throughout the operational lifetime.

Electrochemical biosensors have emerged as powerful tools in biomedical diagnostics and therapeutic monitoring, enabling the detection of specific biomarkers through electrical signals generated by electrochemical reactions [10]. These sensors are integral to point-of-care devices, from continuous glucose monitors for diabetic patients to systems for detecting cancer biomarkers in blood [17] [10]. However, a significant challenge impeding their reliability is sensor drift, a phenomenon where the sensor's output gradually deviates over time despite unchanged target analyte concentrations [18]. This drift arises from complex factors, including the physical and chemical aging of sensor materials, environmental variations in temperature and humidity, and the inherent instability of biological recognition elements such as enzymes and antibodies [2] [18] [19].

In biomedical applications, the consequences of uncorrected drift are severe. For diagnostic accuracy, drift can lead to both false positives and false negatives, potentially misdirecting clinical diagnoses. In therapeutic monitoring, such as tracking drug concentrations like vancomycin, drift can result in inaccurate dosage adjustments, compromising patient safety [19]. The escalating focus on personalized medicine and the need for early disease diagnosis demand data of exceptional quality [17]. Therefore, developing effective drift compensation algorithms is not merely a technical exercise but a critical prerequisite for the clinical adoption of electrochemical biosensors. This guide objectively compares the performance of state-of-the-art drift compensation algorithms, providing researchers with the experimental data and protocols needed to evaluate these methods for their specific applications.

Comparison of Drift Compensation Algorithms

Drift compensation strategies can be broadly categorized into model-based and AI-driven approaches. The following table summarizes the key performance metrics of several advanced algorithms as demonstrated in recent experimental studies.

Table 1: Performance Comparison of Drift Compensation Algorithms

| Algorithm Name | Core Mechanism | Reported Performance Metrics | Task Demonstrated | Experimental Duration/Scope |
|---|---|---|---|---|
| Online Domain-adaptive Extreme Learning Machine (ODELM) [20] | Active learning query strategy + online model updating | ~9-14% accuracy improvement; achieves >95% classification accuracy with minimal labeling cost | Gas classification, Concentration prediction | Long-term sensor data with evolving drift |
| Intrinsic Characteristics Method [18] | Leverages invariant relationship between transient and steady-state response features | ~20% increase in correct classification rate (SVM); efficacy maintained for 22 months | Gas classification (Ethanol, Ethylene) | 36-month dataset |
| Spectral-Temporal TCNN [21] | Temporal Convolutional Neural Network + Hadamard spectral transform | Mean Absolute Error <1 mV (<1 ppm); >70% model compression via quantization | Ethylene concentration prediction | Long-term deployments in agricultural settings |
| Empirical Unsupervised Correction [2] | Multiple Linear Regression + Particle Swarm Optimization | Maintained adequate accuracy for 3+ months without labeled data | NO₂ concentration monitoring | 6-month field deployment |
| Kinetic Differential Measurement (KDM) [19] | Differential measurement at two square-wave frequencies to correct for signal drift | Accuracy better than ±10% in whole blood at body temperature | Vancomycin concentration measurement | In-vitro calibration |

A critical differentiator among these algorithms is their labeling cost: the amount of fresh, experimentally obtained calibration data they require, which is often expensive and labor-intensive to collect. ODELM addresses this with a query strategy that selects the most informative samples for labeling, minimizing this cost while maintaining high performance [20]. In contrast, the Empirical Unsupervised method operated for extended periods without any labeled data after initial calibration, using particle swarm optimization (PSO) to identify correction parameters [2].

Furthermore, the computational demand and deployability of these algorithms vary significantly. The Spectral-Temporal TCNN is notable for its TinyML implementation, in which the model is quantized to run on resource-constrained microcontrollers, enabling real-time, embedded drift compensation without cloud dependency [21]. Computationally heavier models, by contrast, may not be suitable for edge devices such as wearable medical sensors.
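Quantization of this kind can be illustrated with a minimal sketch of symmetric per-tensor int8 quantization. Storing 8-bit integers instead of 32-bit floats shrinks the weights by 75%, which is one common route to compression figures of the size reported for [21]. Whether [21] used exactly this scheme is an assumption, and the function names are hypothetical.

```python
import numpy as np


def quantize_int8(w):
    """Symmetric per-tensor int8 quantization: w is approximated by scale * q."""
    w = np.asarray(w, dtype=np.float32)
    max_abs = float(np.max(np.abs(w)))
    scale = np.float32(max_abs / 127.0 if max_abs > 0 else 1.0)
    q = np.clip(np.round(w / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize(q, scale):
    """Recover approximate float32 weights from int8 values."""
    return q.astype(np.float32) * scale


rng = np.random.default_rng(0)
w = rng.normal(0.0, 0.1, size=(64, 32)).astype(np.float32)  # hypothetical layer
q, scale = quantize_int8(w)
print(1 - q.nbytes / w.nbytes)  # 0.75: 8-bit storage instead of 32-bit
```

The rounding error per weight is bounded by half the scale. This is why accuracy loss is usually small for well-conditioned layers.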

Experimental Protocols for Drift Compensation

To ensure the validity and reproducibility of drift compensation research, standardized experimental protocols are essential. The following workflows detail the methodologies from key studies, providing a template for future investigations.

Protocol for Online Drift Compensation with Active Learning

This protocol, adapted from [20], outlines the steps for validating an online drift compensation framework like ODELM.

Table 2: Key Research Reagent Solutions

| Reagent/Material | Function in Experiment |
|---|---|
| Electrochemical Sensor Array | Core sensing element; provides raw signal data in response to target analytes. |
| Target Gases (e.g., Ethanol, Ethylene) | Analytes used to challenge the sensor and simulate real-world detection scenarios. |
| Synthetic Air | Used as a background gas and for sensor cleaning/recovery between analyte exposures. |
| Data Acquisition System | Hardware for recording sensor responses (e.g., voltage, current) at a specified sampling rate. |
| Reference Instrument/Labeled Data | Provides ground truth measurements for target analyte concentration for supervised learning. |

Workflow:

- Data Collection: Expose the sensor array to target analytes across a range of concentrations. Record the sensor responses (e.g., current, voltage, impedance) over an extended period (months to years) to capture natural drift. The data is divided into a source domain (initial, non-drifted data) and a target domain (later, drifted data) [20] [18].

- Query Strategy Implementation: For each new batch of data from the target domain, employ a query strategy (e.g., QSGC for classification) to select the most "valuable" unlabeled sample. This sample is typically one where the model's prediction is most uncertain and thus most informative for model updating [20].

- Sample Labeling: The selected sample is then labeled, which involves obtaining its true concentration or class from a reference instrument. This step incurs the "labeling cost" [20].

- Model Update: The ODELM model is updated using only this single newly labeled sample. The update process adjusts the model's parameters to better align with the drifted data distribution without requiring storage of past data [20].

- Performance Evaluation: The updated model is used to predict the classes or concentrations of the remaining samples in the batch. Performance metrics (e.g., classification accuracy, mean absolute error) are calculated against the ground truth to evaluate the effectiveness of the drift compensation [20].
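The query-label-update loop above can be sketched with two pieces. The first is a regularized extreme learning machine whose output weights are updated one sample at a time by recursive least squares (the OS-ELM update). The second is margin-based uncertainty sampling as the query strategy. This is a hypothetical simplification for illustration. It omits the domain-adaptation terms and the QSGC strategy of the published ODELM [20], and the class and function names are assumptions.

```python
import numpy as np


class OnlineELM:
    """Regularized extreme learning machine with single-sample recursive updates.

    Hypothetical sketch: a fixed random tanh hidden layer, output weights from
    ridge regression, then OS-ELM style Sherman-Morrison updates.
    """

    def __init__(self, n_inputs, n_hidden, n_classes, reg=1e-2, seed=0):
        rng = np.random.default_rng(seed)
        self.W = rng.normal(size=(n_inputs, n_hidden))
        self.b = rng.normal(size=n_hidden)
        self.n_classes = n_classes
        self.reg = reg

    def _hidden(self, X):
        return np.tanh(np.atleast_2d(X) @ self.W + self.b)

    def _targets(self, y):
        return np.eye(self.n_classes)[np.asarray(y)]  # one-hot rows

    def fit(self, X, y):
        H, T = self._hidden(X), self._targets(y)
        # P = (H^T H + reg * I)^-1 is retained for later recursive updates
        self.P = np.linalg.inv(H.T @ H + self.reg * np.eye(H.shape[1]))
        self.beta = self.P @ H.T @ T
        return self

    def scores(self, X):
        return self._hidden(X) @ self.beta

    def update(self, x, label):
        """Absorb one newly labeled sample without storing any past data."""
        h, t = self._hidden(x), self._targets([label])  # shapes (1, L), (1, C)
        Ph = self.P @ h.T
        self.P -= (Ph @ Ph.T) / (1.0 + (h @ Ph).item())
        self.beta += self.P @ h.T @ (t - h @ self.beta)


def query_most_uncertain(model, X_batch):
    """Margin sampling: index of the sample whose two highest class scores are closest."""
    s = np.sort(model.scores(X_batch), axis=1)
    return int(np.argmin(s[:, -1] - s[:, -2]))


# Hypothetical usage on synthetic 5-channel features with 3 classes
rng = np.random.default_rng(1)
X_src, y_src = rng.normal(size=(40, 5)), rng.integers(0, 3, size=40)
model = OnlineELM(n_inputs=5, n_hidden=20, n_classes=3, reg=0.1).fit(X_src, y_src)
X_batch = rng.normal(size=(10, 5)) + 0.5   # simulated drifted target-domain batch
j = query_most_uncertain(model, X_batch)
model.update(X_batch[j], label=2)          # label from the reference instrument
```

The recursive update is an exact Sherman-Morrison step. The updated weights therefore match a batch refit on all data seen so far, while only the matrix P, not the past samples, needs to be stored.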

Protocol for Intrinsic Characteristics-Based Drift Compensation

This protocol, based on [18], describes a method that leverages the inherent properties of the sensor response curve.

Workflow:

- Feature Extraction: For each sensor response curve during the gas adsorption phase, extract both a steady-state feature (e.g., Fs = Max(R) - Min(R)) and a transient feature (e.g., the average rising amplitude derived from an exponential moving average calculation) [18].

- Model the Invariant Relationship: Establish a mathematical relationship (e.g., a linear regression) between the transient feature and the steady-state feature using the non-drifted data from the first month. This relationship is considered an intrinsic characteristic of the sensor that remains stable over time [18].

- Apply Drift Compensation: For new, drifted data from subsequent months, use the established model to predict what the steady-state feature should be based on the measured transient feature. The difference between the predicted and the actual measured steady-state feature is used to calculate a compensation factor [18].

- Adjust Sensor Output: Apply the compensation factor to adjust the drifted steady-state feature value back to its expected value, effectively compensating for the drift [18].

- Validation: Use the compensated features for downstream tasks like gas classification with an SVM classifier and compare the accuracy with the results obtained using uncompensated features [18].
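The workflow above can be sketched as follows. The steady-state feature follows the definition Fs = Max(R) - Min(R). The transient feature is assumed here to be the peak of an exponential moving average of the first difference of the response. A single median compensation factor is applied per drifted batch. These choices, the smoothing constant `alpha`, and all function names are illustrative assumptions, not the exact procedure of [18].

```python
import numpy as np


def steady_state_feature(r):
    """Fs = max(R) - min(R) over the adsorption phase."""
    r = np.asarray(r, dtype=float)
    return float(r.max() - r.min())


def ema_transient_feature(r, alpha=0.1):
    """Assumed transient feature: peak of an exponential moving average of the
    sample-to-sample rise of the response (a smoothed rising-rate measure)."""
    y, peak = 0.0, 0.0
    for step in np.diff(np.asarray(r, dtype=float)):
        y = (1.0 - alpha) * y + alpha * step
        peak = max(peak, y)
    return float(peak)


def fit_intrinsic_relation(ft_ref, fs_ref):
    """Linear model Fs = a * Ft + b fitted on non-drifted (first-month) data."""
    a, b = np.polyfit(ft_ref, fs_ref, 1)
    return float(a), float(b)


def compensate_batch(ft, fs, a, b):
    """Rescale a drifted batch's Fs values by one median factor so they agree
    with the Fs predicted from their transient features."""
    fs = np.asarray(fs, dtype=float)
    predicted = a * np.asarray(ft, dtype=float) + b
    factor = float(np.median(predicted / fs))
    return fs * factor, factor
```

A batch-level median factor is less sensitive to single noisy responses than correcting each sample to its own prediction.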

Implications for Diagnostic Accuracy and Therapeutic Monitoring

The performance of drift compensation algorithms has direct and profound implications for biomedical applications. In therapeutic drug monitoring, the KDM method used in Electrochemical Aptamer-Based (EAB) sensors demonstrates the critical importance of matching calibration conditions to the measurement environment. Studies show that calibrating vancomycin sensors in fresh, body-temperature whole blood yields an accuracy better than ±10%, which is clinically acceptable. In contrast, using a calibration curve obtained at room temperature can lead to significant underestimation of drug concentration, potentially leading to dangerous dosage errors [19].
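The KDM idea can be sketched numerically. The sketch assumes the form commonly used for EAB sensors, in three steps:

- Peak currents at a signal-on and a signal-off square-wave frequency are normalized to their initial values.
- The two normalized currents are combined as their difference divided by their mean.
- The result is converted to concentration by inverting a Hill-Langmuir calibration.

The calibration parameters in the usage lines are hypothetical. In practice they must be measured under the same conditions as the deployment, for example body-temperature whole blood.

```python
import numpy as np


def kdm(i_on, i_off, i_on_0, i_off_0):
    """Kinetic differential measurement from peak currents at the signal-on and
    signal-off frequencies, each normalized to its initial (baseline) value."""
    s_on = np.asarray(i_on, dtype=float) / i_on_0
    s_off = np.asarray(i_off, dtype=float) / i_off_0
    return (s_on - s_off) / ((s_on + s_off) / 2.0)


def hill_kdm(conc, kdm_min, kdm_max, k_half, n_h):
    """Hill-Langmuir calibration curve: KDM as a function of target concentration."""
    c = np.asarray(conc, dtype=float) ** n_h
    return kdm_min + (kdm_max - kdm_min) * c / (c + k_half ** n_h)


def conc_from_kdm(k, kdm_min, kdm_max, k_half, n_h):
    """Invert the Hill-Langmuir curve to estimate concentration from a KDM value."""
    f = (np.asarray(k, dtype=float) - kdm_min) / (kdm_max - kdm_min)
    return k_half * (f / (1.0 - f)) ** (1.0 / n_h)


# Hypothetical calibration parameters (concentration units: µM)
cal = dict(kdm_min=0.0, kdm_max=0.6, k_half=20.0, n_h=0.8)

# A 30% signal loss that affects both frequencies equally leaves KDM unchanged
fresh = kdm(1.2, 0.9, 1.0, 1.0)
drifted = kdm(1.2 * 0.7, 0.9 * 0.7, 1.0, 1.0)
print(fresh, drifted, conc_from_kdm(fresh, **cal))
```

A drift that scales both normalized currents by the same factor cancels in the ratio, so KDM is insensitive to that drift component. Drift that affects the two frequencies differently is not removed.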

For disease diagnosis, where biomarkers for conditions like cancer, stroke, or cardiovascular diseases are often present at trace concentrations, sustained sensor accuracy is paramount [17]. Algorithms like the Intrinsic Characteristics Method [18] and the Online Domain-adaptive ELM [20], which maintain high classification accuracy over many months, are essential for ensuring that diagnostic results are reliable long after a sensor is deployed or calibrated. This long-term stability is a key enabler for the deployment of sensors in resource-limited settings where frequent recalibration is not feasible.

The move towards wearable and implantable sensors for continuous health monitoring creates a pressing need for lightweight, low-power compensation algorithms that can run directly on the device. The TinyML-based TCNN approach, which compensates for drift in real-time with minimal power consumption and without cloud connectivity, represents a significant step forward in making intelligent, self-correcting medical devices a practical reality [21]. As the field progresses, the integration of these robust, adaptive algorithms will be the cornerstone of building trustworthy biomedical sensing systems that can provide accurate data for both clinical diagnostics and personalized therapeutic interventions.

The transition of electrochemical biosensors from a research prototype in a controlled laboratory to a reliable, approved product in a clinical or field setting is fraught with challenges. This gap, often termed the translational "Valley of Death," is where many promising technologies fail due to technical, regulatory, and economic hurdles [22]. For electrochemical biosensors, a primary technical challenge that emerges in real-world use is sensor drift—the gradual change in sensor output over time despite constant analyte concentration [23]. This phenomenon severely compromises the long-term stability and reliability required for clinical decision-making, continuous health monitoring, and decentralized point-of-care testing [22] [24]. This review objectively compares the performance of contemporary drift compensation algorithms, framing their evaluation within the critical context of overcoming the Valley of Death. We provide a structured comparison of algorithmic methodologies, supported by experimental data and detailed protocols, to guide researchers and developers in selecting and optimizing strategies that enhance the real-world viability of electrochemical diagnostic platforms.

Comparative Analysis of Drift Compensation Algorithms

Drift compensation strategies can be broadly categorized into signal preprocessing approaches and machine learning-based models. The following tables provide a structured comparison of their core methodologies and documented performance.

Table 1: Comparison of Signal Preprocessing and Machine Learning Drift Compensation Algorithms

| Algorithm Category | Specific Technique | Underlying Principle | Key Advantages | Documented Limitations |
|---|---|---|---|---|
| Signal Preprocessing | Principal Component Analysis (PCA) | Decomposes signal into components, filtering out drift-like variances [23]. | Simplicity; does not require frequent recalibration with labeled data. | Assumes drift is the primary source of variance; may remove biologically relevant signal components. |
| | Orthogonal Signal Correction | Removes signal components orthogonal to the analyte of interest [23]. | Directly targets data variance unrelated to the target analyte. | Performance is highly dependent on the initial model and data quality. |
| | Wavelet Analysis | Multi-resolution analysis to separate baseline drift from high-frequency analytical signal [23]. | Adaptable to different types of drift profiles and frequencies. | Choice of wavelet base and decomposition level can significantly impact results. |
| Machine Learning Models | Active Learning (AL) with Uncertainty Sampling | Selects the most informative samples from a data stream for expert labeling to update the model [23]. | Reduces labeling effort; enables continuous model adaptation to slow drift. | Highly reliant on expert annotation accuracy; susceptible to "noisy label" problem [23]. |
| | Gaussian Mixture Model (GMM) | Models drifted data distribution per class, assuming slow temporal variation [23]. | Robust to slow data distortion; can automatically determine relabeling budget. | Performance may degrade with rapid or abrupt drift changes. |
| | Multi-objective Optimization | Seeks a projection that balances classification accuracy and drift invariance [23]. | Explicitly optimizes for drift compensation as a primary objective. | Computationally intensive; can be complex to implement and tune. |
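As a sketch of the PCA entry in the table, the code below implements a simple component correction. The drift direction is taken as the first principal component of repeated measurements of a reference sample, and that direction is projected out of every sample. The assumption that the reference sample varies only because of drift, and the function names, are illustrative. As the table notes, any analyte information lying along the drift direction is removed too.

```python
import numpy as np


def drift_direction(reference_measurements):
    """First principal component (unit-norm loading vector) of repeated
    measurements of one reference sample, assumed to vary only through drift."""
    X = np.asarray(reference_measurements, dtype=float)
    Xc = X - X.mean(axis=0)
    _, _, vt = np.linalg.svd(Xc, full_matrices=False)
    return vt[0]


def remove_component(X, p):
    """Project the drift direction p out of every sample (rows of X)."""
    X = np.asarray(X, dtype=float)
    return X - np.outer(X @ p, p)
```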

Table 2: Quantitative Performance Metrics of Drift Compensation Algorithms

| Algorithm / Study | Sensor / Application | Key Performance Metrics Before Compensation | Key Performance Metrics After Compensation | Reference |
|---|---|---|---|---|
| Active Learning (AL) with MPEGMM | Electronic Nose (Gas Sensor Array) | N/A | Accuracy: ~85-90% (higher than reference methods); Computation: Lower than reference methods [23]. | [23] |
| Traditional Active Learning | Electronic Nose (Gas Sensor Array) | N/A | Accuracy: Lower than MPEGMM-enhanced method due to "noisy labels" [23]. | [23] |
| AI-/ML-Integrated Biosensors | General Electrochemical Biosensors | Varies with application. | Sensitivity/Selectivity: "Greatly enhanced," "significantly improved"; Accuracy: Increased for pattern recognition in complex signals [25] [10]. | [25] [10] |

Experimental Protocols for Drift Compensation Evaluation

To ensure fair and reproducible comparison of drift compensation algorithms, standardized experimental protocols are essential. The following details a core methodology for generating and evaluating sensor drift.

Protocol for Generating a Drift-Calibration Dataset

This protocol is adapted from methodologies used to create benchmark datasets for evaluating E-nose drift compensation, which are directly applicable to electrochemical biosensors [23].

- Sensor System Setup: Utilize a multi-electrode electrochemical sensor array. The specific electrode composition (e.g., gold, carbon, modified with nanomaterials) should be documented.

- Analyte Selection and Sample Preparation: Define a set of target analytes or samples (e.g., specific biomarkers, pathogen cultures, chemical solutions). For each measurement cycle, prepare samples with known, fixed concentrations.

- Longitudinal Data Acquisition: Place the sensor system in its operational environment (e.g., controlled temperature, humidity). At regular, frequent intervals (e.g., daily, weekly) over an extended period (e.g., several months), expose the sensor array to the predefined set of samples and record the electrochemical responses (e.g., voltammetric, amperometric, impedimetric). The same samples at the same concentrations are measured at every cycle.

- Data Labeling: Each recorded response is labeled with its corresponding analyte class and time stamp. This creates a dataset where the "ground truth" is known, but the sensor signals gradually change over time due to drift.

Protocol for Algorithm Performance Benchmarking

- Data Partitioning: Split the longitudinal drift dataset chronologically. Use the earliest data (e.g., from the first few weeks) as the initial training set. The subsequent data is used as the test set to evaluate algorithm performance over time.

- Algorithm Implementation: Implement the drift compensation algorithms to be compared (e.g., PCA, AL, GMM). For learning-based methods, the initial training set is used to train the model.

- Performance Metrics and Evaluation:

- Recognition Accuracy: The primary metric is the classification accuracy of the sensor system on the chronological test set over time. A robust algorithm will maintain a high, stable accuracy.

- Computational Cost: Measure the time and processing resources required for the algorithm to update the model when new (calibration) data is available.

- Label Efficiency: For active learning methods, report the number of data samples that required expert labeling to achieve a target accuracy level.
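Here is a minimal sketch of the chronological evaluation described above, assuming the longitudinal dataset is stored as a time-ordered list of (features, labels) batches. A nearest-centroid classifier trained only on the earliest batches serves as a hypothetical uncompensated baseline. A drift compensation algorithm would be judged by how much it raises the per-batch accuracies this function returns.

```python
import numpy as np


def chronological_benchmark(batches, n_train_batches=1):
    """Train on the earliest batches, then report accuracy on each later batch.

    `batches` is a time-ordered list of (X, y) arrays. A nearest-centroid
    classifier stands in for whatever model (compensated or not) is evaluated.
    """
    X_tr = np.vstack([X for X, _ in batches[:n_train_batches]])
    y_tr = np.concatenate([y for _, y in batches[:n_train_batches]])
    classes = np.unique(y_tr)
    centroids = np.array([X_tr[y_tr == c].mean(axis=0) for c in classes])

    accuracies = []
    for X, y in batches[n_train_batches:]:
        # Euclidean distance from every sample to every class centroid
        dist = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        pred = classes[np.argmin(dist, axis=1)]
        accuracies.append(float(np.mean(pred == y)))
    return accuracies


# Toy example: the third batch is shifted by simulated drift
X0 = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 0.0], [10.0, 1.0]])
y0 = np.array([0, 0, 1, 1])
print(chronological_benchmark([(X0, y0), (X0, y0), (X0 + [6.0, 0.0], y0)]))  # [1.0, 0.5]
```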

Workflow Visualization of an Active Learning-Based Drift Compensation System

The diagram below illustrates the logical workflow of an Active Learning (AL) system, enhanced with a class-label appraisal mechanism to correct for expert labeling errors, a key innovation for robust real-world deployment [23].

Figure: Active Learning Drift Compensation with Label Appraisal
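The label-appraisal step in such a system can be sketched as follows. Each class is modeled by a diagonal Gaussian fitted to trusted labeled samples. An expert label is overridden when its log-likelihood falls far below that of the best class. This is a hypothetical simplification, not the MPEGMM method of [23]; the variance floor and the `max_gap` threshold are assumptions.

```python
import numpy as np


def fit_class_gaussians(X, y, var_floor=1e-6):
    """Per-class diagonal Gaussian (mean, variance) from trusted labeled samples."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    return {c: (X[y == c].mean(axis=0), X[y == c].var(axis=0) + var_floor)
            for c in np.unique(y)}


def log_likelihood(x, mean, var):
    """Log density of x under a diagonal Gaussian."""
    return float(-0.5 * np.sum(np.log(2 * np.pi * var) + (x - mean) ** 2 / var))


def appraise_label(x, expert_label, params, max_gap=10.0):
    """Return (label_to_use, flagged). The expert label is overridden when its
    log-likelihood trails the best class by more than max_gap."""
    x = np.asarray(x, dtype=float)
    ll = {c: log_likelihood(x, m, v) for c, (m, v) in params.items()}
    best = max(ll, key=ll.get)
    if ll[best] - ll[expert_label] > max_gap:
        return best, True
    return expert_label, False
```

Flagged samples could be withheld from the model update or sent back for re-annotation rather than silently relabeled. The right policy depends on how much the class models themselves are trusted after drift.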

The Scientist's Toolkit: Essential Reagents and Materials

The development and implementation of robust electrochemical biosensors and their drift compensation algorithms rely on a suite of key materials and reagents.

Table 3: Key Research Reagent Solutions for Electrochemical Biosensor Development

| Item | Function in Biosensor Development | Specific Application in Drift & Stability Research |
|---|---|---|
| Gold Nanoparticles (AuNPs) | Signal amplification; enhance electron transfer efficiency; platform for bioreceptor immobilization [8]. | Used to modify electrode surfaces to improve conductivity and stability, potentially mitigating drift caused by poor signal strength [22] [8]. |
| Carbon Nanotubes (CNTs) | Increase electrode surface area; improve electrical conductivity; enhance loading of biorecognition elements [22] [24]. | Integrated into electrode substrates to create a more robust and electrochemically stable interface, reducing baseline noise and drift. |
| Ion-Selective Electrodes (ISEs) | Potentiometric sensing of specific ions (e.g., K⁺, Na⁺, Ca²⁺) in clinical samples [10]. | Serve as a model system for studying and compensating for drift in potentiometric measurements, a common issue for these sensors [10]. |
| Bioreceptors (Antibodies, Aptamers) | Provide high specificity for binding to target analytes (biomarkers, pathogens) [22] [24]. | The stability and longevity of these layers are critical. Their degradation is a major biological source of sensor drift and performance loss over time. |
| Metal-Organic Frameworks (MOFs) | Porous materials for high-density immobilization of enzymes or other receptors; can enhance sensitivity [8]. | Investigated for creating more stable and reproducible sensing interfaces, addressing a key source of variability and drift. |
| Drift Calibration Samples | Samples with known, fixed analyte concentrations measured over time. | Essential for generating the datasets required to train, validate, and benchmark the performance of drift compensation algorithms [23]. |

The journey from a laboratory prototype to a clinically viable electrochemical biosensor hinges on directly addressing the practical challenge of sensor drift. While advanced materials can improve baseline sensor stability, sophisticated drift compensation algorithms are indispensable for ensuring long-term analytical reliability. As demonstrated, Active Learning frameworks, especially those with integrated class-label appraisal like MPEGMM, offer a promising path forward by enabling continuous calibration with minimal expert intervention [23]. The integration of artificial intelligence and machine learning further enhances the ability to identify complex, non-linear patterns in drifted data, improving both sensitivity and selectivity [25] [10]. However, overcoming the Valley of Death requires more than algorithmic excellence. It demands a concerted effort toward standardized validation using real-world samples—a practice notably absent in most current research [24]—and the development of integrated systems that combine robust sensing hardware with intelligent, adaptive software. By prioritizing these areas, the research community can accelerate the translation of electrochemical biosensors into tools that truly impact healthcare, food safety, and environmental monitoring.

Electrochemical biosensors are powerful tools in drug development and diagnostic research, but their long-term reliability is often compromised by signal drift, a phenomenon where the sensor's output gradually changes over time despite constant analyte concentration. This drift stems from a complex interplay of factors, including the aging of electrochemical components, passivation or fouling of electrode surfaces, and changes in the properties of the biorecognition layer [26] [27]. For researchers and scientists relying on these sensors for critical data, understanding, quantifying, and correcting for drift is not merely an academic exercise but a practical necessity for ensuring data integrity. The manifestation and impact of drift vary significantly across different electrochemical techniques, necessitating a comparative approach to developing effective compensation algorithms.

This guide provides a structured comparison of how drift manifests and is addressed in three foundational techniques: Electrochemical Impedance Spectroscopy (EIS), Amperometry, and Voltammetry. By summarizing key quantitative data, detailing experimental protocols, and outlining available correction methodologies, this resource aims to inform the selection and optimization of biosensor platforms within the context of advanced drift compensation algorithm research.

Comparative Analysis of Drift Across Techniques

Table 1: Comparative Manifestations of Drift in Key Electrochemical Techniques

| Technique | Primary Drift Manifestations | Key Quantitative Metrics | Common Causes & Contributing Factors | Typical Correction/Compensation Methods |
|---|---|---|---|---|
| Electrochemical Impedance Spectroscopy (EIS) | Baseline signal drift in charge-transfer resistance (Rct) [26]; distortion in low-frequency data points [28] [29] | Coefficient of variation of Rct < 3% in stable systems [26]; significant deviation in the low-frequency arc of the Nyquist plot | Gold electrode etching by cyanide/chloride ions [26]; spontaneous dissociation of Au-S bonds in SAMs [26]; system not at steady state [28] | "Drift Correction" algorithms in software (e.g., EC-Lab, Gamry) [28] [29]; pre-conditioning protocols (voltage cycling & incubation) [26] |
| Amperometry | Continuous current decrease or increase over time [30]; unstable baseline in amperograms | Linear drift in current signal under constant potential | Fouling of the working electrode surface [31]; depletion of analyte in the diffusion layer; changes in double-layer capacitance | Interrupted Amperometry (IA) to utilize capacitive current [30]; background subtraction |
| Voltammetry (Cyclic) | Shift in peak potentials (Ep) [32]; decrease in peak current (ip) [32]; change in charge transfer (Qn) [32] | Polarization resistance (RP); effective capacitance (Ceff) [32] | Progressive electrode activation/deactivation [32]; degradation of electrode modifiers (e.g., Pt/C) [32] | In-situ tracking with EIS and Principal Component Analysis (PCA) [32] |
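Background subtraction, listed above for amperometry, can be sketched in two steps. A straight line is fitted to baseline-only segments of the amperogram, then subtracted from the whole trace. The sketch assumes the drift is linear over the record and that baseline segments can be identified, here with a hypothetical boolean mask.

```python
import numpy as np


def subtract_linear_baseline(t, i, baseline_mask):
    """Fit a straight line to baseline-only points and subtract it from the whole trace."""
    t = np.asarray(t, dtype=float)
    i = np.asarray(i, dtype=float)
    mask = np.asarray(baseline_mask, dtype=bool)
    slope, intercept = np.polyfit(t[mask], i[mask], 1)
    return i - (slope * t + intercept)
```

Curved drift, such as the decay that often follows electrode fouling, would need a higher-order or exponential baseline instead of a line.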

Table 2: Summary of Experimental Data on Drift from Key Studies

| Study Focus | Technique Used | Sensor/System Details | Observed Drift & Key Findings | Compensation Method Evaluated |
|---|---|---|---|---|
| COVID-19 Antibody Detection [26] | Faradaic EIS | MHA SAM-modified Au-Interdigitated Electrode (IDA) | Faradaic biosensors ~17x more sensitive than non-Faradaic; baseline drift compromises reliability | Pre-incubation in redox probe with intermittent EIS scanning; achieved stable region with Rct CV < 3% |
| Li-Ion Battery & Supercapacitor [28] [29] | EIS | 18650 Li-Ion Battery; 3 F Commercial Supercapacitor | Induced drift (DC current) caused significant distortion at low frequencies | Proprietary drift correction algorithm (Gamry Framework); corrected data aligned with steady-state measurements |
| Benzenediol Sensor Diagnostics [32] | EIS & Cyclic Voltammetry (CV) | Unmodified & Pt/C-modified Screen-Printed Electrodes (SPEs) | Unmodified SPEs: progressive activation; Pt/C-SPEs: early improvement followed by degradation | Multivariate diagnostics via PCA of RP, Ceff, and Qn |
| Heavy Metal Detection [30] | Interrupted Amperometry (IA) | Static Mercury Drop Electrode (SMDE) | Continuous current drift from dissolved oxygen interference; required careful deaeration | IA method using capacitive and faradaic current; achieved LOD of 0.26 nM for Cd²⁺ |

Detailed Experimental Protocols for Drift Analysis

Protocol for Investigating EIS Baseline Drift in Biosensors

This protocol, adapted from a study on SARS-CoV-2 antibody sensors, is designed to investigate and mitigate baseline drift in Faradaic EIS systems using redox probes such as [Fe(CN)₆]³⁻/⁴⁻ [26].

Key Research Reagent Solutions:

- Redox Probe Solution: 5 mM [Fe(CN)₆]³⁻/⁴⁻ in 1x Tris-buffered saline.

- SAM Formation Solution: 1-10 mM solution of mercaptohexanoic acid (MHA) or similar thiol in ethanol.

- Biorecognition Element: Recombinant SARS-CoV-2 spike protein or other relevant antigen/antibody, prepared in a suitable buffer like PBS.

Methodology:

- Electrode Functionalization: Clean the gold interdigitated electrode (IDA) thoroughly. Immerse the electrode in the MHA solution for several hours to form a dense self-assembled monolayer (SAM). Rinse with pure ethanol and dry under a gentle nitrogen stream.

- Baseline Drift Mitigation (Pre-conditioning): Incubate the MHA SAM-coated IDA in the 5 mM [Fe(CN)₆]³⁻/⁴⁻ redox probe solution. Apply an intermittent bias voltage by repeatedly recording EIS spectra. A typical setup uses an AC sinusoidal excitation of ±10 mV superimposed on a 0.4 V DC bias, scanning frequencies from 0.1 MHz to 1 Hz. Monitor the charge-transfer resistance (Rct) until it stabilizes.

- Biorecognition Immobilization: Covalently conjugate the biorecognition element (e.g., spike protein) to the carboxylic acid terminal of the MHA SAM using standard carbodiimide crosslinking chemistry (e.g., EDC/NHS).

- EIS Measurement & Data Analysis: Perform EIS measurements in the redox probe solution after each modification step (bare electrode, after SAM formation, after biorecognition conjugation) and upon exposure to the target analyte. The system is considered stable when the coefficient of variation (CV) of Rct stays below 3% once the baseline has relaxed to a plateau [26].
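The CV < 3% stability criterion can be checked automatically during pre-conditioning. The minimal sketch below slides a window over successive Rct values. It returns the first point at which the window's coefficient of variation drops below the limit. The window length of five spectra is an assumption, not a value from [26], and the function name is hypothetical.

```python
import numpy as np


def first_stable_index(rct, window=5, cv_max=0.03):
    """Index of the last spectrum in the first sliding window whose coefficient of
    variation (sample std / mean) of Rct is below cv_max, or None if never reached."""
    rct = np.asarray(rct, dtype=float)
    for end in range(window, len(rct) + 1):
        w = rct[end - window:end]
        if w.std(ddof=1) / w.mean() < cv_max:
            return end - 1
    return None


# Hypothetical Rct series (ohms) recorded during pre-conditioning
rct_series = [2000, 1700, 1400, 1200, 1100, 1050, 1040, 1045, 1042, 1041, 1043]
print(first_stable_index(rct_series))  # 8
```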

Protocol for Multivariate Drift Diagnostics using EIS and CV

This protocol employs EIS and Cyclic Voltammetry (CV) in tandem, analyzed via multivariate methods, to track sensor health and diagnose drift in a model system, providing a rich dataset for algorithm training [32].

Key Research Reagent Solutions:

- Electrolyte/Analyte Solution: 1.0 mM benzenediol isomers (catechol, resorcinol, hydroquinone) in 0.1 M H₂SO₄.

- Electrodes: Unmodified and Pt/C-modified Screen-Printed Electrodes (SPEs).

Methodology:

- System Setup: Use a standard three-electrode configuration, with the SPE providing the working, reference, and counter electrodes, connected to a potentiostat capable of EIS and CV.

- Accelerated Aging via Cycling: Perform repeated Cyclic Voltammetry cycles (e.g., 50 cycles) in the benzenediol solution. A typical CV range is -0.2 V to +0.8 V vs. Ag/AgCl at a scan rate of 50 mV/s.

- In-situ Impedance Monitoring: At regular intervals during the CV cycling (e.g., every 10 cycles), pause and perform an EIS measurement. A standard setup is a frequency range from 100 kHz to 0.1 Hz with a 10 mV AC amplitude at the open circuit potential.

- Data Extraction and Modeling: After the experiment, extract key parameters from the data:

- From EIS: Fit the spectra to an equivalent circuit to extract the polarization resistance (RP) and effective capacitance (Ceff).

- From CV: Calculate the net charge transfer (Qn) for each cycle by integrating the current over time; equivalently, divide the area under the current-potential curve by the scan rate.

- Multivariate Analysis: Compile the extracted parameters (RP, Ceff, Qn) into a data matrix. Input this matrix into a Principal Component Analysis (PCA) model. The scores plot of the first two principal components will reveal the directional evolution (drift) of the sensor's performance over time, distinguishing between different degradation patterns [32].
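The data-extraction and PCA steps can be sketched as follows. The sketch assumes current and time arrays are available for each CV cycle, and that RP and Ceff have already been obtained from equivalent-circuit fits. Each feature column is autoscaled before PCA so that parameters with different units contribute comparably. The function names are hypothetical.

```python
import numpy as np


def net_charge(t, i):
    """Net charge (C) for one CV cycle: trapezoidal integral of current (A) over time (s)."""
    t = np.asarray(t, dtype=float)
    i = np.asarray(i, dtype=float)
    return float(np.sum(0.5 * (i[1:] + i[:-1]) * np.diff(t)))


def pca_scores(features, n_components=2):
    """Autoscale feature columns (e.g. RP, Ceff, Qn per cycle) and return PC scores
    plus the fraction of variance explained by each retained component.
    Assumes no column is constant (its standard deviation would be zero)."""
    X = np.asarray(features, dtype=float)
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    u, s, _ = np.linalg.svd(Z, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]
    explained = s ** 2 / np.sum(s ** 2)
    return scores, explained[:n_components]
```

Plotting the first two score columns in cycle order traces the sensor's trajectory. A steady drift appears as a consistent direction of travel in the scores plot.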

The Scientist's Toolkit: Essential Reagents & Materials

Table 3: Key Research Reagent Solutions for Drift Studies

| Reagent/Material | Function in Drift Research | Example Application |
|---|---|---|
| Redox Probes (e.g., [Fe(CN)₆]³⁻/⁴⁻) | Provides a Faradaic current for enhanced signal sensitivity. Used to monitor changes in charge-transfer resistance (Rct), a key indicator of drift. | EIS biosensors for protein/antibody detection [26]. |
| Self-Assembled Monolayer (SAM) Reagents (e.g., MHA, MUA) | Creates a well-defined, organized layer on gold electrodes. Used to immobilize biorecognition elements and study drift originating from monolayer defects. | Investigating origin of baseline drift and developing mitigation protocols [26]. |
| Screen-Printed Electrodes (SPEs) | Disposable, low-cost, and mass-producible platforms. Ideal for studying unit-to-unit variability and drift in modified electrodes. | Multivariate diagnostics of sensor drift using EIS and CV in model systems [32]. |
| Model Analyte Systems (e.g., Benzenediols) | Well-characterized redox-active compounds used as benchmark analytes. Allow for controlled studies of sensor performance and degradation without biorecognition complexity. | Validating diagnostic frameworks for tracking sensor health [32]. |
| Deaeration Agents (e.g., Ultrapure Argon) | Removes dissolved oxygen from solutions. Oxygen is a common electroactive impurity that can cause significant current drift in amperometric and voltammetric measurements. | Essential for achieving low detection limits in sensitive techniques like Interrupted Amperometry [30]. |

Drift Compensation Algorithms and Data Processing

A critical component of modern drift management is the implementation of algorithmic corrections, which can be broadly categorized into instrumental and data-driven approaches.

Instrumental algorithms are often embedded in potentiostat software. For EIS, a common example is a "drift correction" option that compensates for a system that is not at steady state during the measurement. This is particularly useful for systems with long relaxation times, such as batteries or corroding samples. A version patented by BioLogic is implemented in its EC-Lab software, and Gamry Framework also offers a drift-correction option for EIS [28] [29]. These corrections are typically Fourier-transform based: the discrete Fourier transforms of the potential and current signals are computed, and the drift contribution at the excitation frequency is estimated from adjacent frequencies and removed, isolating the periodic impedance response from the non-stationary drift [28] [29].
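The adjacent-frequency idea can be illustrated with a toy simulation. This is not BioLogic's or Gamry's algorithm, whose details are not given here; it is a minimal sketch assuming a single-sine excitation with an integer number of periods in the record, with the drift leakage at the excitation bin estimated as the mean of the two neighbouring DFT bins. The function names and simulated values are hypothetical.

```python
import numpy as np

def bin_value(x, k):
    """Uncorrected DFT coefficient of signal x at bin k."""
    return np.fft.fft(x)[k]

def drift_corrected_bin(x, k):
    """Bin k minus a drift-leakage estimate: the mean of bins k-1 and k+1.

    Assumes the excitation occupies exactly bin k (integer number of periods)
    and that the drift spectrum varies smoothly across neighbouring bins.
    """
    spectrum = np.fft.fft(x)
    return spectrum[k] - 0.5 * (spectrum[k - 1] + spectrum[k + 1])

def impedance(v, i, k, correct=True):
    get = drift_corrected_bin if correct else bin_value
    return get(v, k) / get(i, k)

# Simulated record (hypothetical values): 10 mV, 1 Hz sine excitation.
fs, n = 100.0, 1000                       # 10 s record = 10 full periods at 1 Hz
t = np.arange(n) / fs
k0 = 10                                   # excitation bin: f0 * n / fs
z_true = 50.0 * np.exp(-1j * np.pi / 6)   # assumed impedance: 50 ohm, -30 degrees
v = 0.01 * np.sin(2 * np.pi * 1.0 * t)
i_amp = 0.01 / abs(z_true)
# Current response plus a linear drift equal to twice its amplitude over the record.
i = i_amp * np.sin(2 * np.pi * 1.0 * t - np.angle(z_true)) + 2 * i_amp * np.arange(n) / n

z_raw = impedance(v, i, k0, correct=False)
z_corr = impedance(v, i, k0, correct=True)
print(abs(z_raw - z_true) / abs(z_true), abs(z_corr - z_true) / abs(z_true))
```

In this simulation the ramp leaks into every low-frequency bin with a smoothly decaying magnitude, so its neighbours give a good estimate of the leakage at the excitation bin; the relative impedance error drops from several percent to well below one percent.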

For data-driven approaches, multivariate analysis is powerful. As demonstrated in the benzenediol study, combining EIS and CV data into a PCA model allows for the visualization of sensor drift as a smooth trajectory in the principal component space. This not only quantifies drift but can also help identify its root cause based on the direction of the trajectory [32]. In the realm of predictive maintenance for sensor networks, unsupervised anomaly detection algorithms—such as Robust Covariance, One-Class Support Vector Machines, and Isolation Forests—are being evaluated. These algorithms define a confidence region around calibration data and monitor incoming sensor signals during operation. A signal drifting outside this pre-defined region can trigger a recalibration alert, enabling dynamic maintenance schedules tailored to each sensor's unique drift behavior [27].
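A confidence region around calibration data can be sketched with the classical Mahalanobis distance, the non-robust counterpart of the Robust Covariance (elliptic envelope) approach named above. In practice a robust estimator such as minimum covariance determinant, a One-Class SVM, or an Isolation Forest would replace the `fit` step. The class name, the 99th-percentile threshold, and the simulated drift are assumptions for illustration, not the settings used in [27].

```python
import numpy as np

class CalibrationEnvelope:
    """Elliptical confidence region around calibration data (hypothetical helper).

    Uses the classical mean and covariance; a robust estimator (e.g. MCD)
    would replace them to resist outliers in the calibration set.
    """

    def __init__(self, quantile=0.99):
        self.quantile = quantile  # assumed coverage of the confidence region

    def _distance(self, x):
        d = np.asarray(x, dtype=float) - self.mean_
        return np.sqrt(np.einsum("ij,jk,ik->i", d, self.precision_, d))

    def fit(self, x_cal):
        x_cal = np.asarray(x_cal, dtype=float)
        self.mean_ = x_cal.mean(axis=0)
        self.precision_ = np.linalg.inv(np.cov(x_cal, rowvar=False))
        self.threshold_ = np.quantile(self._distance(x_cal), self.quantile)
        return self

    def needs_recalibration(self, x_new):
        """True for each incoming sample that falls outside the calibration region."""
        return self._distance(x_new) > self.threshold_

# Usage with simulated data: calibration features, then a slowly drifting stream.
rng = np.random.default_rng(0)
x_cal = rng.normal(size=(500, 3))
ramp = np.linspace(0.0, 6.0, 200)[:, None] * np.array([1.0, 0.5, 0.0])
x_stream = rng.normal(size=(200, 3)) + ramp
env = CalibrationEnvelope(quantile=0.99).fit(x_cal)
alerts = env.needs_recalibration(x_stream)
first_alert = int(np.argmax(alerts)) if alerts.any() else None
```

Because the threshold is derived from each sensor's own calibration data, the time of the first alert adapts to that sensor's drift rate, which is the basis for the per-sensor maintenance schedules described above.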

Drift is an inherent challenge in electrochemical biosensing, but, as this guide illustrates, its impact can be quantified, understood, and mitigated. The choice of electrochemical technique shapes how drift is observed: in EIS it appears as baseline instability in Rct, in amperometry as instability of the Faradaic current, and in cyclic voltammetry as evolving redox peaks. The experimental protocols and tools outlined here provide a foundation for rigorous drift analysis.

The future of robust electrochemical biosensors, particularly for long-term studies in drug development, lies in the sophisticated integration of physical sensor design (e.g., stable SAMs), instrumental correction techniques, and advanced data analytics like PCA and anomaly detection. Research into drift compensation algorithms must, therefore, be grounded in a clear understanding of these technique-specific manifestations, leveraging the comparative insights and structured methodologies presented here to develop next-generation solutions that ensure data reliability and sensor longevity.

Algorithm Deep Dive: From Traditional Calibration to AI-Powered Compensation Frameworks

Sensor drift is a pervasive challenge that undermines the long-term reliability and accuracy of electrochemical biosensors. This phenomenon refers to the gradual change in a sensor's response over time despite constant analyte concentrations, resulting from factors such as sensor aging, environmental parameter variations, and physicochemical alterations in sensing materials [2]. Drift compensation through algorithmic post-processing has emerged as a cost-effective strategy to extend the usable lifespan of biosensors without requiring hardware modifications. Among the most promising approaches are domain adaptation and subspace learning techniques, which leverage machine learning to correct for distributional shifts in sensor data [33] [34]. This guide provides an objective comparison of these methodological families, supported by experimental data and implementation protocols to inform researcher selection for specific applications.

Domain adaptation approaches treat drift as a domain shift problem, where data distributions differ between source (training) and target (deployment) domains [33] [35]. These methods transfer knowledge from the source domain while adapting to the target domain's characteristics. Subspace learning methods, conversely, aim to find a common latent subspace where the distributional differences between source and target domains are minimized [36]. Both approaches offer distinct advantages and limitations for offline compensation scenarios where batch processing of collected sensor data is feasible.
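The distribution difference that both families try to reduce can be quantified with the Maximum Mean Discrepancy (MMD), the alignment criterion also mentioned for DAELM in the protocol below. The following is a minimal NumPy sketch of the biased squared-MMD estimate with a Gaussian (RBF) kernel; the kernel width `gamma` and the simulated batches are assumptions.

```python
import numpy as np

def rbf_kernel(a, b, gamma):
    """Gaussian kernel matrix k(a_i, b_j) = exp(-gamma * ||a_i - b_j||^2)."""
    sq = np.sum(a**2, axis=1)[:, None] + np.sum(b**2, axis=1)[None, :] - 2.0 * a @ b.T
    return np.exp(-gamma * np.maximum(sq, 0.0))  # clip tiny negative round-off

def mmd2(x_src, x_tgt, gamma=0.5):
    """Biased estimate of the squared MMD between source and target samples."""
    return float(rbf_kernel(x_src, x_src, gamma).mean()
                 + rbf_kernel(x_tgt, x_tgt, gamma).mean()
                 - 2.0 * rbf_kernel(x_src, x_tgt, gamma).mean())

# Simulated feature batches (hypothetical): no drift vs. gain-and-offset drift.
rng = np.random.default_rng(1)
source = rng.normal(size=(200, 4))
same = rng.normal(size=(200, 4))
drifted = 1.3 * rng.normal(size=(200, 4)) + 1.5
print(mmd2(source, same), mmd2(source, drifted))
```

A batch drawn from the same distribution gives a value close to zero, while the drifted batch gives a clearly larger one; domain adaptation methods that use MMD try to learn a representation in which this quantity is small.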

Comparative Analysis of Algorithmic Performance

The table below summarizes key performance metrics for prominent domain adaptation and subspace learning methods evaluated on standardized drift datasets.

Table 1: Performance Comparison of Drift Compensation Algorithms

| Method | Algorithm Type | Reported Accuracy | Dataset Duration | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| DAEL (Domain Adaptation Extreme Learning Machine) [34] | Domain Adaptation | 85.7%-91.9% (across batches) | 36 months | Fast execution, handles high-dimensional data | Requires some labeled target data |
| DAEL-C (Cross Domain Adaptation) [33] | Domain Adaptation with Ensemble Learning | ~90% (average) | 36 months | Effective for long-term drift, robust classification | Computationally intensive for large arrays |
| DAEL-D (Discriminative Domain Adaptation) [33] | Domain Adaptation with Ensemble Learning | >91% (average) | 36 months | Prioritizes target domain representatives | Complex weight updating strategy |
| Subspace Alignment with LFDA [36] | Subspace Learning | ~80% (after 22 months) | 22 months | Utilizes label information, preserves local structure | Performance degrades with extreme drift |
| MPEGMM (Mislabel Probability Estimation) [23] | Active Learning with Gaussian Models | >85% (with noisy labels) | Not specified | Handles label noise, adaptive relabeling budget | Assumes slow-varying drift pattern |

Table 2: Experimental Conditions and Sensor Types in Validation Studies

| Study | Sensor Type | Target Analytes | Experimental Conditions | Comparison Baselines |
|---|---|---|---|---|
| Zhang et al. [34] | Metal Oxide Semiconductor (MOS) | Ammonia, Acetaldehyde, Acetone, Ethylene, Ethanol, Toluene | Laboratory setting with controlled gas exposures | Traditional ELM, SVM, no drift compensation |
| Yan et al. [33] | Chemical Sensor Array | Six volatile organic compounds | UCSD drift dataset spanning three years | Component Correction, SVM, ELM |
| Sun et al. [36] | Electrochemical Gas Sensors | Multiple gases (unspecified) | 22 months of continuous monitoring | Standard SVM without compensation |

Key Performance Insights

The quantitative results indicate that domain adaptation methods outperform traditional machine learning approaches and, in the reported studies, subspace learning techniques in long-term drift scenarios. Note that the subspace learning result comes from a different, 22-month dataset, so that comparison is indirect. DAEL frameworks maintain classification accuracy above 85% even after 36 months of sensor deployment, a substantial improvement over uncompensated baselines, whose accuracy can fall to 56.2% [33]. The ensemble approach employed in DAEL-C and DAEL-D provides particular robustness against extreme distribution shifts, though at the cost of increased computational complexity during training.

Subspace learning methods offer a more computationally efficient alternative with moderate performance, maintaining approximately 80% accuracy over 22 months of continuous monitoring [36]. These approaches are particularly useful when label information is scarce in the target domain, because they can exploit the geometric structure of the data to align distributions. However, their advantage diminishes markedly under abrupt drift or strongly non-linear distribution shifts.

Experimental Protocols and Methodologies

Domain Adaptation Implementation

The Domain Adaptation Extreme Learning Machine (DAELM) follows a structured experimental protocol to compensate for sensor drift. The methodology begins with data collection from both source and target domains, where the source domain contains labeled data from initial sensor deployments, and the target domain comprises both labeled and unlabeled data from drifted sensors [34]. The labeled target samples are typically limited, simulating realistic scenarios where comprehensive recalibration is impractical.

The algorithmic workflow involves several key stages. First, random feature mapping transforms the input data using random weights and biases in the hidden layer. Next, output weight calculation determines the initial model using the source domain data. The core adaptation occurs through domain alignment, where the model minimizes the distribution difference between source and target domains using Maximum Mean Discrepancy (MMD) or similar metrics. Finally, model updating incorporates target domain labels to refine predictions, often through iterative optimization [34].
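The source/target weighting in the final stage can be sketched as a regularized ELM whose output weights are fitted jointly to source data and a few labeled target samples, each with its own penalty (`c_src`, `c_tgt`). This is a minimal illustration in the spirit of DAELM, not the exact formulation of [34]: it omits any MMD-based alignment term, and the sigmoid hidden layer, penalty values, and toy data are assumptions. Minimizing ½‖β‖² + (c_src/2)‖H_s β − T_s‖² + (c_tgt/2)‖H_t β − T_t‖² over the output weights β gives the closed-form linear system solved below.

```python
import numpy as np

def hidden(x, w, b):
    """Random-feature hidden layer with sigmoid activation."""
    return 1.0 / (1.0 + np.exp(-(x @ w + b)))

def fit_da_elm(xs, ys, xt, yt, n_hidden=100, c_src=1.0, c_tgt=50.0, seed=0):
    """Hypothetical helper: weighted ridge ELM on source + labeled target samples."""
    rng = np.random.default_rng(seed)
    w = rng.uniform(-1.0, 1.0, size=(xs.shape[1], n_hidden))  # random input weights, never trained
    b = rng.uniform(-1.0, 1.0, size=n_hidden)
    n_cls = int(max(ys.max(), yt.max())) + 1
    ts, tt = np.eye(n_cls)[ys], np.eye(n_cls)[yt]              # one-hot targets
    hs, ht = hidden(xs, w, b), hidden(xt, w, b)
    lhs = np.eye(n_hidden) + c_src * hs.T @ hs + c_tgt * ht.T @ ht
    rhs = c_src * hs.T @ ts + c_tgt * ht.T @ tt
    beta = np.linalg.solve(lhs, rhs)                           # output weights
    return lambda x: np.argmax(hidden(x, w, b) @ beta, axis=1)

def blobs(rng, n, shift):
    """Toy data: two classes centred at x = -1 and x = +1, moved by `shift` along x."""
    y = np.repeat([0, 1], n)
    x = rng.normal(scale=0.3, size=(2 * n, 2))
    x[:, 0] += np.where(y == 0, -1.0, 1.0) + shift
    return x, y

rng = np.random.default_rng(0)
xs, ys = blobs(rng, 100, shift=0.0)          # labeled source batch
xt, yt = blobs(rng, 10, shift=2.0)           # a few labeled drifted samples
x_test, y_test = blobs(rng, 200, shift=2.0)  # drifted evaluation batch
source_only = fit_da_elm(xs, ys, xt, yt, c_tgt=0.0)
adapted = fit_da_elm(xs, ys, xt, yt, c_tgt=50.0)
acc_src = np.mean(source_only(x_test) == y_test)
acc_da = np.mean(adapted(x_test) == y_test)
```

Setting `c_tgt=0` recovers a source-only ELM with the same random features, which makes the effect of the few labeled target samples easy to isolate. A large `c_tgt` lets those samples override source labels where the drifted classes overlap the original ones.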

Subspace Learning Methodology

Subspace learning approaches for drift compensation operate on the principle of identifying a common latent space where distribution differences between source and target domains are minimized. The experimental protocol typically involves several methodical steps. First, feature extraction derives relevant characteristics from the raw sensor responses, which may include steady-state values, transient features, or spectral components [36]. Next, subspace projection maps both source and target domain data into a lower-dimensional space using techniques such as Local Fisher Discriminant Analysis (LFDA) or Principal Component Analysis (PCA).
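The feature extraction step can be sketched for a single response transient. The choice of features, a steady-state change plus the extrema of an exponential moving average (EMA) of the sample-to-sample change, is an assumption loosely modelled on transient features used with MOS gas-sensor arrays; the smoothing factors, the helper name, and the simulated response are hypothetical.

```python
import numpy as np

def transient_features(response, baseline, alphas=(0.1, 0.01, 0.001)):
    """Hypothetical helper: steady-state and EMA-based transient features."""
    r = np.asarray(response, dtype=float)
    steady = r[-max(1, r.size // 10):].mean()  # mean of the last 10% of samples
    feats = {"delta_steady": steady - baseline,
             "delta_norm": (steady - baseline) / baseline}
    dr = np.diff(r)
    for a in alphas:
        y, ema = 0.0, np.empty_like(dr)
        for k, d in enumerate(dr):
            y = (1.0 - a) * y + a * d          # EMA of the sample-to-sample change
            ema[k] = y
        feats[f"ema_max_{a}"] = float(ema.max())  # captures the rising transient
        feats[f"ema_min_{a}"] = float(ema.min())  # captures a decaying transient
    return feats

# Simulated first-order rise from a baseline of 100 to 150 (arbitrary units).
t = np.arange(300)
resp = 100.0 + 50.0 * (1.0 - np.exp(-t / 20.0))
feats = transient_features(resp, baseline=100.0)
```

Several smoothing factors are used because drift can change the speed of the transient as well as its amplitude, and features computed at different time scales respond to those changes differently.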

The core of the methodology involves subspace alignment, where the source subspace is transformed to align with the target subspace through a linear transformation matrix. This alignment minimizes the divergence between domains while preserving the discriminative structure of the data. Finally, classification or regression occurs in the aligned subspace using traditional machine learning models such as Support Vector Machines (SVM) [36]. The entire process focuses on maintaining the intrinsic data structure while mitigating distributional shifts caused by sensor drift.
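Subspace alignment of this kind can be sketched with PCA bases, one of the two projection options mentioned above (LFDA would need a separate implementation). Each domain is centred and projected onto its own top-k principal directions, P_s and P_t. The source coordinates are then mapped through the alignment matrix M = P_sᵀP_t, giving X_s P_s M for the source and X_t P_t for the target. A nearest-centroid classifier stands in for the SVM here to keep the sketch NumPy-only. Function names and the simulated offset drift are assumptions; because the toy drift is a pure offset, much of the benefit in this example comes from centring each domain separately.

```python
import numpy as np

def pca_basis(x, k):
    """Centred data and its top-k principal directions (d x k, orthonormal columns)."""
    xc = x - x.mean(axis=0)
    _, _, vt = np.linalg.svd(xc, full_matrices=False)
    return xc, vt[:k].T

def subspace_align(x_src, x_tgt, k):
    """Return aligned source features X_s P_s M and projected target features X_t P_t."""
    xs_c, ps = pca_basis(x_src, k)
    xt_c, pt = pca_basis(x_tgt, k)
    m = ps.T @ pt  # alignment matrix; also absorbs sign flips between the two PCA bases
    return xs_c @ ps @ m, xt_c @ pt

def nearest_centroid(train_x, train_y, test_x):
    """Stand-in classifier: assign each test point to the closest class mean."""
    classes = np.unique(train_y)
    cents = np.stack([train_x[train_y == c].mean(axis=0) for c in classes])
    d = np.linalg.norm(test_x[:, None, :] - cents[None, :, :], axis=2)
    return classes[np.argmin(d, axis=1)]

def make_batch(rng, n, offset):
    """Toy data: two classes separated along the first feature, plus a drift offset."""
    y = np.repeat([0, 1], n)
    x = rng.normal(size=(2 * n, 3)) * np.array([0.5, 0.8, 0.3])
    x[:, 0] += np.where(y == 0, -1.5, 1.5)
    return x + offset, y

rng = np.random.default_rng(0)
xs, ys = make_batch(rng, 100, offset=np.zeros(3))
xt, yt = make_batch(rng, 100, offset=np.array([3.0, 1.0, 0.0]))  # yt used only for scoring
zs, zt = subspace_align(xs, xt, k=2)
acc_sa = np.mean(nearest_centroid(zs, ys, zt) == yt)
acc_raw = np.mean(nearest_centroid(xs, ys, xt) == yt)
```

No target labels are needed to fit the alignment, which is why this family suits scenarios where labeled recalibration samples are scarce.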

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Materials for Drift Compensation Studies

| Reagent/Resource | Specifications | Research Function | Example Applications |
|---|---|---|---|
| Sensor Array (electrochemical or MOS) | 16-32 sensors with varied selectivity | Generate drift-affected data streams | MOS sensors (TGS series) for VOC detection [33] |
| Standardized Gas Delivery System | Precision mass flow controllers | Ensure consistent analyte exposure | Dynamic air-sampling at 500 mL/min [2] |
| Reference Analyzer | Certified environmental monitors | Provide ground truth measurements | Chemiluminescence NO2 analyzers [2] |
| Data Acquisition Hardware | High-resolution ADC (≥16-bit) | Digitize analog sensor signals | 10 s averaging followed by 15-min recording [2] |
| Drift Validation Dataset | Long-term temporal records (≥3 years) | Algorithm benchmarking | UCSD sensor drift dataset [33] |

Technical Implementation Considerations

Computational Requirements

The computational demands of drift compensation algorithms vary significantly between methodological families. Domain adaptation approaches typically require substantial processing resources during the training phase, particularly for ensemble methods like DAEL-C and DAEL-D that maintain multiple classifier instances [33]. However, once trained, their inference time is generally minimal, making them suitable for applications where computational resources are available during calibration but limited during deployment.

Subspace learning methods generally offer more favorable computational characteristics, with most complexity concentrated in the initial subspace projection phase [36]. The alignment transformation is typically computationally efficient, making these approaches suitable for embedded systems with limited processing capabilities. For both approaches, memory requirements scale with the number of sensors in the array and the dimensionality of the feature space, with domain adaptation generally demanding more memory to store multiple model instances.

Parameter Optimization Strategies

Effective implementation of both domain adaptation and subspace learning methods requires careful parameter optimization. For domain adaptation approaches, key parameters include the number of base classifiers in ensemble methods, the regularization strength for domain alignment, and the ratio of source to target domain influence [33]. Systematic optimization using techniques such as particle swarm optimization (PSO) has been shown to enhance model performance, particularly for long-term drift compensation [2].
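A bare-bones particle swarm optimizer illustrates how such a search works. The objective below is a hypothetical stand-in for a validation-error surface over two log-scaled hyperparameters (for example, log10 of a source and a target penalty); in practice it would wrap model training and validation. The inertia and acceleration coefficients (w = 0.72, c1 = c2 = 1.49) are common textbook values, not settings from [2].

```python
import numpy as np

def pso_minimize(objective, bounds, n_particles=30, n_iter=100,
                 w=0.72, c1=1.49, c2=1.49, seed=0):
    """Minimal global-best PSO over a box given as [(lo, hi), ...]."""
    rng = np.random.default_rng(seed)
    lo, hi = np.asarray(bounds, dtype=float).T
    x = rng.uniform(lo, hi, size=(n_particles, lo.size))  # particle positions
    v = np.zeros_like(x)                                   # particle velocities
    pbest = x.copy()
    pbest_f = np.array([objective(p) for p in x])
    g = pbest[np.argmin(pbest_f)].copy()                   # swarm-best position
    for _ in range(n_iter):
        r1, r2 = rng.random(x.shape), rng.random(x.shape)
        # Inertia + pull toward each particle's best + pull toward the swarm best.
        v = w * v + c1 * r1 * (pbest - x) + c2 * r2 * (g - x)
        x = np.clip(x + v, lo, hi)
        f = np.array([objective(p) for p in x])
        improved = f < pbest_f
        pbest[improved], pbest_f[improved] = x[improved], f[improved]
        g = pbest[np.argmin(pbest_f)].copy()
    return g, float(pbest_f.min())

def validation_error(p):
    # Hypothetical smooth error surface with its minimum at (1.0, -0.5).
    return (p[0] - 1.0) ** 2 + 2.0 * (p[1] + 0.5) ** 2

best, best_val = pso_minimize(validation_error, bounds=[(-3.0, 3.0), (-3.0, 3.0)])
```

Searching in log space is a design choice that suits regularization-type parameters, which typically matter over several orders of magnitude; each objective evaluation is a full train-and-validate cycle, so the particle count and iteration budget dominate the cost.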