Multi Pseudo-Calibration (MPC): A Robust Drift Compensation Framework for Sensor Arrays in Biomedical Monitoring

Abstract

This article explores the Multi Pseudo-Calibration (MPC) approach, a novel strategy for compensating time-dependent drift in sensor arrays used for continuous biomedical monitoring. Tailored for researchers, scientists, and drug development professionals, this work addresses a critical challenge in long-term, uninterrupted bioprocess and physiological monitoring. We cover the foundational principles of MPC, its methodological implementation on platforms like hydrogel-based magneto-resistive sensor arrays, and its integration with regression models such as PLS, XGB, and MLPs. The scope extends to troubleshooting common issues like sensor cross-sensitivity, optimizing performance through data augmentation, and a comparative validation against established methods like the Drift Correction Autoencoder (DCAE). By synthesizing recent research, this article provides a comprehensive guide for deploying MPC to enhance the accuracy and reliability of sensor data in complex, real-world biomedical applications.

Understanding Sensor Drift and the Multi Pseudo-Calibration (MPC) Paradigm

The Critical Challenge of Sensor Drift in Continuous Biomedical Monitoring

Sensor drift, the gradual and often unpredictable deviation of a sensor's output from its true calibrated baseline over time, represents one of the most significant challenges in continuous biomedical monitoring systems [1] [2]. This phenomenon is particularly problematic in biomedical applications where high-fidelity data is essential for clinical decision-making, drug development research, and long-term patient monitoring. Drift can manifest as a gradual shift in baseline (offset drift) or a change in sensor sensitivity (gain drift), both of which compromise data integrity and can lead to erroneous interpretations of physiological parameters [3] [4].

The critical impact of sensor drift is magnified in implanted or intravascular biosensors, where direct physical access for recalibration is limited or nonexistent [4]. For instance, in continuous glucose monitoring for diabetes management or real-time tracking of cardiovascular parameters, undetected drift can directly impact therapeutic decisions and patient outcomes [4]. Similarly, in pharmaceutical development, drifted sensor data from bioreactors can compromise the accuracy of metabolic studies and process optimization, potentially delaying drug development timelines [3]. Understanding, quantifying, and compensating for sensor drift is therefore not merely a technical exercise but a fundamental requirement for reliable biomedical monitoring systems.

Sensor drift in biomedical environments arises from multiple interrelated factors. Aging-related drift occurs as sensor components degrade over time, while temperature-induced drift results from thermal fluctuations in the physiological environment [1]. Chemical drift is particularly relevant for biosensors exposed to complex biological matrices (e.g., blood, interstitial fluid), where biofouling, protein adsorption, and enzymatic degradation can alter sensor characteristics [1] [4]. Additionally, mechanical drift may affect sensors with moving parts or those subject to physiological stresses [1].

The table below categorizes the primary types of sensor drift and their characteristics in biomedical monitoring contexts.

Table 1: Classification of Sensor Drift in Biomedical Monitoring Systems

| Drift Type | Primary Causes | Impact on Sensor Signal | Commonly Affected Sensors |
|---|---|---|---|
| Aging-Related Drift | Material degradation, component aging | Slow, often monotonic change in baseline or sensitivity | All long-term implantable sensors [1] |
| Temperature-Induced Drift | Changes in body temperature or local environment | Changes in offset and/or gain, often reversible | Electrochemical sensors, thermal sensors [1] |
| Chemical Drift | Biofouling, protein adsorption, enzyme inactivation | Altered sensitivity, reduced response dynamics, signal attenuation | Intravascular biosensors, enzyme-based sensors [1] [4] |
| Mechanical Drift | Stress, encapsulation, material fatigue | Hysteresis, baseline instability | Pressure sensors, flow sensors [1] |

The Multi Pseudo-Calibration (MPC) Approach

The Multi Pseudo-Calibration (MPC) approach presents a novel strategy for drift compensation specifically designed for applications where traditional recalibration using external references is impractical [3]. This method is particularly valuable for deeply-embedded chemical sensor arrays, such as those in bioreactors or implantable devices, where interruption for calibration is not feasible [3].

Core Principles of MPC

The MPC framework operates on the principle that periodic samples with known ground-truth concentrations (obtained via offline analysis) can serve as "pseudo-calibration" points [3]. Rather than discarding historical data, the MPC approach aggregates all previous sensor measurements and leverages these pseudo-calibration points as additional input features for a regression model. The model's input vector is constructed by concatenating several key pieces of information: the difference between current sensor readings and historical pseudo-calibration measurements, the ground-truth concentration of the pseudo-calibration sample, and the time elapsed since that pseudo-calibration was obtained [3]. This input structure enables the model to learn and compensate for non-linear drift patterns over time.
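As a minimal sketch, assuming NumPy and one reading per sensor element, the concatenation described above could look like this. The function name `mpc_input` and its argument order are illustrative, not taken from [3]; in this sketch the regression target for such a vector would be the concentration of the current sample.

```python
import numpy as np

def mpc_input(s_current, t_current, s_ref, c_ref, t_ref):
    """Concatenate the three MPC input components for one (current, reference) pair.

    s_current, s_ref: readings of every sensor element at the two times
    c_ref: offline ground-truth concentration(s) of the pseudo-calibration sample
    t_current, t_ref: timestamps in a consistent unit (e.g. hours)
    """
    delta_s = np.asarray(s_current, dtype=float) - np.asarray(s_ref, dtype=float)
    c_ref = np.atleast_1d(np.asarray(c_ref, dtype=float))
    delta_t = np.array([t_current - t_ref], dtype=float)
    return np.concatenate([delta_s, c_ref, delta_t])

# Hypothetical 3-sensor array: reference sample at 24 h (concentration 5.0), current reading at 48 h
x = mpc_input([1.2, 0.8, 2.0], 48.0, [1.0, 0.9, 1.5], 5.0, 24.0)
print(x)  # 3 signal differences, then the reference concentration, then the 24 h lag
```

With several analytes, `c_ref` simply contributes one entry per analyte, so the vector length is the number of sensors plus the number of analytes plus one.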

Key Advantages of the MPC Framework

The MPC methodology offers three distinct advantages over conventional drift-compensation techniques. First, it can learn complex, non-linear models of sensor drift without requiring pre-defined assumptions about the drift characteristics [3]. Second, it quadratically increases the effective training data; with N training samples, pairing each sample with all previous samples creates an augmented training set with N(N-1)/2 data points, significantly enhancing model robustness [3]. Third, when multiple pseudo-calibration samples are available, MPC can generate and average predictions relative to each reference point, thereby reducing prediction variance and improving overall reliability without interrupting the continuous monitoring process [3].
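The pair count is easy to check. The plain-Python sketch below (`augmented_pairs` is a hypothetical helper) enumerates each sample together with every earlier sample and confirms the N(N-1)/2 total:

```python
from itertools import combinations

def augmented_pairs(n_samples):
    """Return (current, reference) index pairs, pairing each sample with every earlier one."""
    # combinations(range(n), 2) yields (a, b) with a < b, so the reference a precedes b
    return [(current, reference) for reference, current in combinations(range(n_samples), 2)]

for n in (5, 20, 100):
    pairs = augmented_pairs(n)
    assert len(pairs) == n * (n - 1) // 2
    print(n, "samples ->", len(pairs), "training pairs")
# prints 10, 190 and 4950 pairs for 5, 20 and 100 samples
```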

Experimental Workflow for MPC Implementation

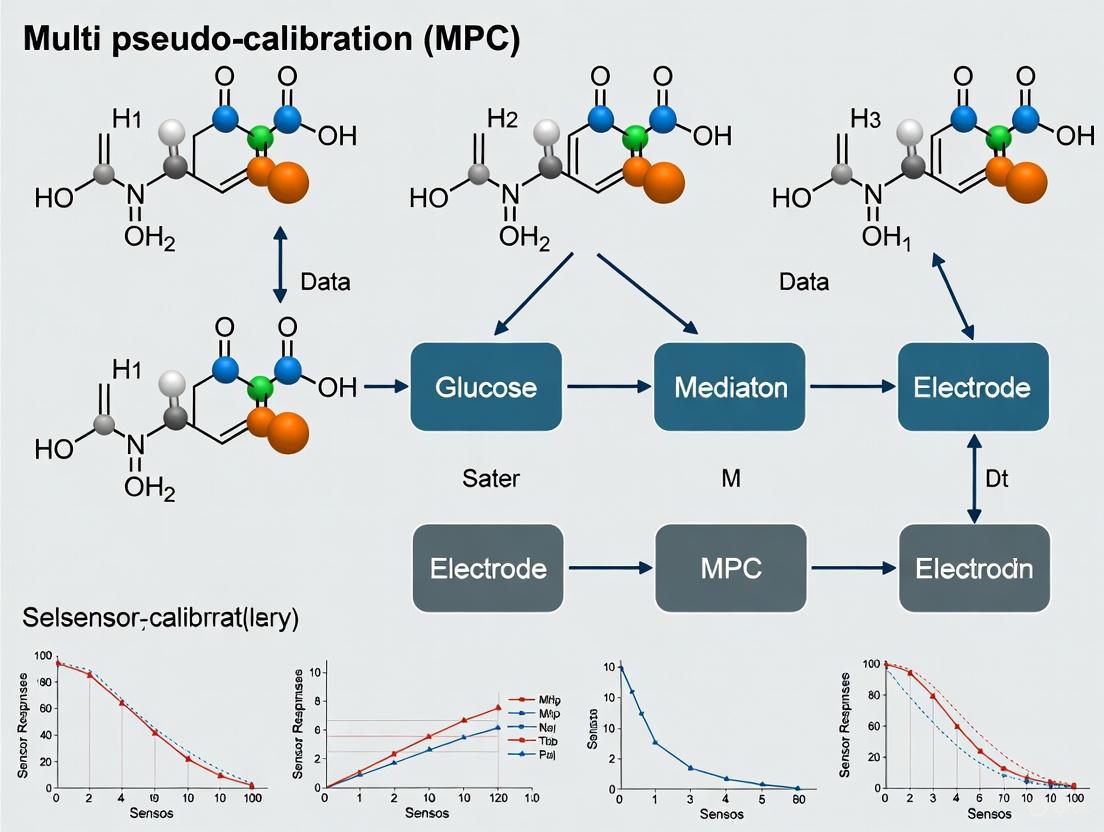

The following diagram illustrates the sequential workflow for implementing the Multi Pseudo-Calibration approach in a continuous monitoring system.

Advanced Drift Compensation Techniques

While MPC provides a powerful framework, several complementary advanced techniques have emerged for handling sensor drift, particularly leveraging recent advances in artificial intelligence and machine learning.

AI and Machine Learning Approaches

Deep learning architectures have shown remarkable success in modeling complex temporal drift patterns. The Incremental Domain-Adversarial Network (IDAN) integrates domain-adversarial learning with an incremental adaptation mechanism to handle temporal variations in sensor data, effectively aligning data distributions across different time periods to combat drift [2]. Similarly, Temporal Convolutional Neural Networks (TCNNs) employing causal convolutions have demonstrated effective real-time drift compensation while being lightweight enough for embedded deployment [5]. These models can be enhanced with spectral transformations, such as the Hadamard transform, which decorrelates sensor signals and separates slow drift components from faster-varying physiological signals [5].
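To make the decorrelation idea concrete, the NumPy sketch below builds a Hadamard matrix with Sylvester's construction and applies it to a hypothetical window containing a slow ramp plus a fast alternation. It only illustrates the transform itself, not the TCNN layer described in [5]; `sylvester_hadamard` and `hadamard_features` are illustrative names.

```python
import numpy as np

def sylvester_hadamard(n):
    """n x n Hadamard matrix (entries +/-1, n a power of two) via Sylvester's construction."""
    if n < 1 or n & (n - 1):
        raise ValueError("n must be a power of two")
    h = np.array([[1.0]])
    while h.shape[0] < n:
        h = np.block([[h, h], [h, -h]])
    return h

def hadamard_features(window):
    """Normalised Walsh-Hadamard coefficients of one window of samples."""
    window = np.asarray(window, dtype=float)
    h = sylvester_hadamard(len(window))
    return h @ window / len(window)

# Hypothetical 8-sample window: slow linear drift plus a fast alternating component
t = np.arange(8)
window = 0.05 * t + 0.3 * (-1.0) ** t
coeffs = hadamard_features(window)
# Sign changes per row ("sequency"): few changes = slowly varying basis function
sign_changes = (np.diff(sylvester_hadamard(8), axis=1) != 0).sum(axis=1)
for row, (sc, c) in enumerate(zip(sign_changes, coeffs)):
    print(f"row {row}: {sc} sign changes, coefficient {c:+.3f}")
```

In this example the ramp contributes to the mean (row 0) and to the low-sequency rows 4 and 2, while the alternating component falls entirely into row 1, the row with 7 sign changes; only a small part of the ramp (-0.025) leaks into that row.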

Ensemble methods combine multiple models or sensor readings to produce more robust predictions. The iterative random forest algorithm leverages collective data from multiple sensor channels to identify and correct abnormal sensor responses in real time, providing a powerful approach for fault-tolerant systems [2].

Integrated Drift Compensation Framework

A comprehensive strategy for managing sensor drift in biomedical monitoring systems often combines multiple techniques. The following diagram illustrates how these components work together within an integrated framework.

Experimental Protocols and Methodologies

Protocol 1: Evaluating MPC Performance with Sensor Arrays

This protocol outlines the procedure for validating the Multi Pseudo-Calibration approach using a chemical sensor array, as described in foundational MPC research [3].

Objective: To evaluate the efficacy of MPC in compensating for time-dependent drift in cross-sensitive chemical sensor arrays deployed for continuous monitoring.

Materials and Equipment:

Table 2: Research Reagent Solutions and Essential Materials for MPC Evaluation

| Item | Function/Application | Specifications |
|---|---|---|
| Hydrogel-based Magneto-resistive Sensor Array | Primary sensing element for analyte detection | Cross-sensitive sensors capable of detecting multiple analytes [3] |
| Bioreactor System | Continuous monitoring environment | Provides biologically relevant conditions for testing [3] |
| Offline Analyzer | Ground truth measurement | Reference method for obtaining accurate analyte concentrations (e.g., HPLC, mass spectrometry) [3] |
| Regression Algorithms (PLS, XGB, MLP) | Drift compensation modeling | Implemented in Python/R with appropriate libraries (scikit-learn, XGBoost, PyTorch/TensorFlow) [3] |

Procedure:

- Sensor Array Deployment: Deploy the cross-sensitive sensor array within the bioreactor system for continuous monitoring of target analytes.

- Pseudo-Calibration Sampling: Periodically extract samples from the bioreactor at predetermined intervals (e.g., every 24-72 hours) for offline analysis using the reference analyzer.

- Data Collection: Record sensor array measurements with precise timestamps alongside the corresponding ground-truth concentrations from offline analysis.

- Model Training: Implement the MPC approach by:

- Storing all historical sensor measurements and their corresponding ground truths.

- Constructing input vectors that concatenate: (a) the difference between current sensor readings and historical pseudo-calibration measurements, (b) the ground-truth concentration of the pseudo-calibration sample, and (c) the time difference.

- Training regression models (PLS, XGB, or MLP) using the augmented dataset.

- Performance Evaluation: Assess model performance using leave-one-probe-out cross-validation. Divide the dataset chronologically, using the first 75% of measurements for training and the last 25% for testing to evaluate drift compensation effectiveness.

Validation Metrics: Calculate the Root Mean Square Error (RMSE) and Mean Absolute Error (MAE) between model predictions and ground truth measurements. Compare MPC performance against baseline models without drift compensation and against state-of-the-art methods like Drift Correction Autoencoders (DCAE) [3].
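The evaluation scheme and metrics can be sketched in NumPy as follows. The split follows one reading of the description in this protocol and in [3] (train on the earliest 75% of the other probes' measurements, test on the latest 25% of the held-out probe); the helper names are hypothetical, and samples are assumed to be stored in time order within each probe.

```python
import numpy as np

def lopo_chronological_splits(probe_ids, train_fraction=0.75):
    """Leave-one-probe-out with a chronological cut (hypothetical helper).

    For each held-out probe, train on the earliest `train_fraction` of every other
    probe's samples and test on the latest samples of the held-out probe.
    """
    probe_ids = np.asarray(probe_ids)
    probes = np.unique(probe_ids)
    for held_out in probes:
        train_parts, test_idx = [], None
        for p in probes:
            idx = np.flatnonzero(probe_ids == p)   # ascending, i.e. time-ordered
            cut = int(round(len(idx) * train_fraction))
            if p == held_out:
                test_idx = idx[cut:]
            else:
                train_parts.append(idx[:cut])
        yield held_out, np.concatenate(train_parts), test_idx

def rmse(y_true, y_pred):
    diff = np.asarray(y_true, float) - np.asarray(y_pred, float)
    return float(np.sqrt(np.mean(diff ** 2)))

def mae(y_true, y_pred):
    diff = np.asarray(y_true, float) - np.asarray(y_pred, float)
    return float(np.mean(np.abs(diff)))

probe_ids = np.repeat([0, 1, 2, 3], 8)   # 4 probes, 8 time-ordered samples each
for probe, train_idx, test_idx in lopo_chronological_splits(probe_ids):
    print(probe, len(train_idx), len(test_idx))   # each line: probe id, 18, 2
```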

Protocol 2: Real-Time Drift Compensation with TinyML

This protocol describes the implementation of a lightweight drift compensation model for resource-constrained embedded medical devices, based on recent advances in TinyML [5].

Objective: To implement and validate a real-time, on-device drift compensation algorithm for biomedical sensors using quantized neural networks.

Materials and Equipment:

- Low-power microcontroller unit (e.g., ARM Cortex-M series)

- Target gas sensor (e.g., catalytic CMOS-SOI-MEMS sensor)

- Data acquisition system with ADC (16-bit recommended)

- TensorFlow Lite Micro or similar TinyML framework

Procedure:

- Data Acquisition: Collect long-term sensor data under controlled conditions, capturing both response signals and drift patterns.

- Model Architecture Design: Implement a Temporal Convolutional Neural Network (TCNN) with the following specifications:

- Causal convolutions to ensure real-time operation

- Hadamard transform layer for spectral feature extraction

- Residual gated connections for adaptive feature weighting

- Model Training: Train the TCNN model to predict drift-compensated sensor values from raw input sequences.

- Model Quantization: Apply post-training quantization, aiming to reduce model size by over 70% while keeping the mean absolute error below 1 mV.

- Deployment: Compile the quantized model using TensorFlow Lite Micro and deploy to the microcontroller.

- Validation: Continuously monitor model performance in real-time operation, comparing against periodic reference measurements.
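The property that makes causal convolutions suitable for real-time operation is that each output depends only on current and past inputs. A minimal NumPy sketch of a dilated causal 1-D convolution follows. It illustrates the primitive only, without the Hadamard layer, gating, or quantization of the TCNN in [5]; `causal_conv1d` is a hypothetical name, and a deployed model would use its framework's convolution layer rather than explicit loops.

```python
import numpy as np

def causal_conv1d(x, kernel, dilation=1):
    """Dilated causal 1-D convolution: y[t] = sum_i kernel[i] * x[t - i*dilation].

    Samples before the start of the sequence are treated as zeros (left padding only),
    so no output ever depends on future inputs.
    """
    x = np.asarray(x, dtype=float)
    pad = (len(kernel) - 1) * dilation
    xp = np.concatenate([np.zeros(pad), x])
    y = np.zeros_like(x)
    for t in range(len(x)):
        for i, w in enumerate(kernel):
            y[t] += w * xp[pad + t - i * dilation]   # tap i looks i*dilation steps back
    return y

print(causal_conv1d(np.arange(6.0), [0.5, 0.5], dilation=2))

# Causality check: perturbing a future sample leaves earlier outputs unchanged
x = np.random.default_rng(0).normal(size=10)
x_future = x.copy()
x_future[7] += 5.0
k = [0.2, 0.3, 0.5]
assert np.allclose(causal_conv1d(x, k)[:7], causal_conv1d(x_future, k)[:7])
```

With kernel [0.5, 0.5] and dilation 2, each output averages the current sample with the one two steps earlier, giving [0, 0.5, 1, 2, 3, 4] for the input 0 to 5.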

Application in Biomedical Monitoring Systems

The challenge of sensor drift is particularly acute in specific biomedical monitoring applications where accuracy and reliability are paramount.

Intravascular Biosensors: These devices face unique challenges due to direct exposure to blood components, which can lead to rapid biofouling and chemical drift [4]. For continuous glucose monitoring systems deployed in intravascular configurations, drift compensation is essential for accurate glycemic control. Studies have demonstrated that systems such as the GluCath System, which measures blood glucose optically via fluorescence quenching, can maintain acceptable accuracy during 48-hour placement in the radial artery of post-surgical patients when proper drift compensation is employed [4].

Bioprocess Monitoring: In pharmaceutical manufacturing, sensor arrays embedded in bioreactors require uninterrupted operation throughout lengthy batch processes. The MPC approach is particularly valuable here, as it can utilize periodic offline samples as pseudo-calibration points without interrupting the bioprocess [3]. This enables continuous monitoring of critical biomarkers, metabolites, and process variables essential for optimizing biopharmaceutical production.

Implantable Diagnostic Devices: Long-term implantable sensors for continuous monitoring of physiological parameters (e.g., oxygen, pH, electrolytes) face progressive aging-related drift compounded by the body's foreign body response [4]. Advanced drift compensation algorithms that can operate within the strict power constraints of implantable electronics are essential for the viability of these devices.

Sensor drift remains a critical challenge in continuous biomedical monitoring, but emerging approaches like Multi Pseudo-Calibration and AI-based compensation techniques offer promising solutions. The MPC framework specifically addresses the practical constraint of inaccessible sensors by leveraging opportunistic ground-truth measurements, making it particularly valuable for implanted and embedded monitoring applications.

Future research should focus on several key areas. First, adaptive MPC systems could automatically optimize the frequency and timing of pseudo-calibration based on the observed drift dynamics. Second, hybrid models could combine the explicit ground-truth referencing of MPC with the continuous adjustment capabilities of AI-based methods such as TCNNs and domain-adaptive networks. Finally, standardizing drift characterization protocols across the biomedical sensor community would enable more meaningful comparisons between compensation techniques and accelerate progress in this field.

As biomedical monitoring systems continue to evolve toward greater miniaturization, longer deployment durations, and higher-stakes clinical applications, robust drift compensation methodologies will remain an essential component of reliable healthcare monitoring and pharmaceutical development.

Continuous monitoring using sensor arrays is critical in various fields, including healthcare and industrial bioprocessing. A significant challenge in these applications is the degradation of sensor accuracy over time due to drift and aging effects. Traditional recalibration methods, which rely on periodic exposure to stable reference analytes, become impractical in deeply-embedded systems such as sensors integrated within a bioreactor. Physical interruption for recalibration is often not feasible, necessitating alternative strategies that operate without process interruption [3]. This document frames these limitations and solutions within the broader research on the Multi Pseudo-Calibration (MPC) approach, detailing specific protocols and experimental validations for the scientific community.

The Multi Pseudo-Calibration (MPC) Approach

The MPC approach is designed to compensate for sensor drift without requiring physical recalibration or process interruption. It operates on the principle of using historical sample measurements with known ground-truth concentrations as "pseudo-calibration" points. The core mechanism involves constructing an input vector that incorporates the difference between current sensor readings and those from a pseudo-calibration sample, the ground-truth concentration of that sample, and the time elapsed between measurements [3].

Key Advantages:

- Non-Linear Drift Modeling: Learns complex, time-dependent drift patterns.

- Data Augmentation: Quadratically increases the training set size by pairing all available samples, enhancing model robustness. Given N training samples, the augmented set contains N(N-1)/2 samples.

- Variance Reduction: When multiple pseudo-calibration points are available, predictions relative to each are generated and averaged, reducing prediction variance [3].

Implementation Framework: The MPC method can be implemented on top of various regression models. Studies have successfully deployed it using:

- Partial Least Squares (PLS)

- Extreme Gradient Boosting (XGB)

- Multi-Layer Perceptrons (MLP) [3]

The following workflow diagram illustrates the MPC process from data acquisition to final prediction.

Comparative Analysis of Drift Compensation Techniques

Multiple drift-compensation strategies exist, each with distinct advantages and limitations, particularly for deeply-embedded systems. The following table summarizes the key techniques identified in the literature.

Table 3: Drift Compensation Techniques for Sensor Arrays

| Technique | Core Principle | Key Advantage | Key Limitation for Deeply-Embedded Systems |
|---|---|---|---|
| Periodic Recalibration [3] | Periodic exposure to a stable reference analyte. | High accuracy if reference is reliable. | Not feasible without interrupting the ongoing process. |
| Multi Pseudo-Calibration (MPC) [3] | Uses historical ground-truth samples as internal calibration points. | No process interruption; utilizes available offline data. | Requires occasional offline analysis for ground truth. |
| Drift Correction Autoencoder (DCAE) [3] | Uses a transfer learning approach with autoencoders to correct for instrumental variation and drift. | Does not require explicit reference measurements during deployment. | Performance may depend on the initial calibration data and drift characteristics. |
| Simultaneous Calibration & Detection [6] | Uses a linear regression algorithm on data from analyte-added samples to offset environmental interference. | Compensates for pH, temperature, and co-pollutants; reduces batch fabrication deviations. | Primarily demonstrated for specific electrochemical sensors (e.g., sensors read out by differential pulse voltammetry, DPV). |
| Model-Free Predictive Control (MFPC) [7] | Replaces the physical system model with an ultra-local model estimated online. | Robust to system parameter variations and unmodeled dynamics. | Primarily applied in power electronics control; estimation of unknown parts can be complex. |

Experimental Validation and Performance Metrics

Evaluation of MPC on Chemical Sensor Arrays

- Objective: To validate the performance of the MPC approach against baseline methods in the presence of sensor drift.

- Sensor Platform: An array of hydrogel-based magneto-resistive sensors was used for bioprocess monitoring [3].

- Evaluation Procedure:

- Validation Method: Leave-one-probe-out cross-validation.

- Data Splitting: For each of the 4 sensor probes in turn, the first 75% of measurements from the other 3 probes were used for training and the last 25% of measurements from the held-out probe for testing. This tests drift compensation on unseen data from a temporally later period [3].

- Compared Models:

- Baseline 1: Standard regression models (PLS, XGB, MLP) without drift compensation.

- Baseline 2: Drift Correction Autoencoder (DCAE), a state-of-the-art method.

- Proposed Method: MPC implemented on top of PLS, XGB, and MLP.

- Performance Metrics: The primary metric was the Root Mean Square Error (RMSE) of predicted analyte concentrations against ground truth, with lower values indicating better performance and drift compensation [3].

- Key Findings: The MPC approach demonstrated superior drift compensation compared to both baselines across all tested regression techniques, showing a significant reduction in prediction error on the temporally later test data [3].

Performance in Simulated Environments

A thorough characterization was performed on a synthetic dataset that simulated varying degrees of sensor cross-sensitivity and sensor drift. The results confirmed the robustness of the MPC method under controlled conditions where the drift parameters were known, reinforcing its applicability for long-term deployments [3].
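A synthetic dataset of this kind can be generated with a few lines of NumPy. The generator below is a hypothetical sketch, not the simulation used in [3]: each sensor responds mainly to one analyte and, scaled by `cross_sensitivity`, to the others, while drift is modelled as a linear loss of gain plus a linear, sensor-specific baseline offset over the deployment.

```python
import numpy as np

def simulate_array(n_samples=200, n_sensors=4, n_analytes=2, cross_sensitivity=0.3,
                   gain_drift=0.1, offset_drift=1.0, noise=0.01, seed=0):
    """Hypothetical sensor-array data with cross-sensitivity, gain drift and offset drift."""
    rng = np.random.default_rng(seed)
    t = np.linspace(0.0, 1.0, n_samples)                     # normalised deployment time
    conc = rng.uniform(0.0, 10.0, size=(n_samples, n_analytes))
    # Sensitivity matrix: weight 1 for the primary analyte, cross_sensitivity elsewhere
    sens = np.full((n_sensors, n_analytes), cross_sensitivity)
    sens[np.arange(n_sensors), np.arange(n_sensors) % n_analytes] = 1.0
    clean = conc @ sens.T                                    # (n_samples, n_sensors)
    gain = 1.0 - gain_drift * t[:, None]                     # sensitivity decays over time
    offset = offset_drift * t[:, None] * rng.uniform(0.5, 1.5, size=n_sensors)
    readings = gain * clean + offset + rng.normal(0.0, noise, size=clean.shape)
    return t, conc, readings

t, conc, drifted = simulate_array()
_, _, clean = simulate_array(gain_drift=0.0, offset_drift=0.0, noise=0.0)   # same seed, no drift
err = np.abs(drifted - clean).mean(axis=1)
print(f"mean |drift| first 10%: {err[:20].mean():.3f}, last 10%: {err[-20:].mean():.3f}")
```

Varying `cross_sensitivity`, `gain_drift`, and `offset_drift` gives the kind of controlled sweep described above, since the true drift parameters are known by construction.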

Detailed Experimental Protocols

Protocol 1: Validating MPC for Bioprocess Monitoring

This protocol outlines the steps to replicate the experimental validation of the MPC approach described in [3].

- 1. Sensor Array Setup & Data Acquisition:

- Deploy a cross-sensitive chemical sensor array (e.g., hydrogel-based magneto-resistive) into the bioreactor or monitoring environment.

- Continuously log sensor measurements from all array elements at a defined sampling frequency.

- Periodically (e.g., once per day or at key process stages), extract a physical sample from the system.

- Analyze the extracted sample using a high-precision offline analyzer (e.g., HPLC, mass spectrometry) to obtain the ground-truth concentration of the target analytes. Record the timestamp of this sample.

- 2. Data Preparation & Pseudo-Calibration Point Creation:

- Synchronize the sensor data with the offline analysis results using timestamps.

- For each offline sample, create a pseudo-calibration data point, i.e., a tuple `[sensor_measurements, ground_truth_concentrations, timestamp]`.

- Store all pseudo-calibration points in a chronological database.

- 3. Model Training with MPC Augmentation:

- For a current sensor measurement `S_current` taken at time `t_current`, pair it with each stored pseudo-calibration point `i` (with data `S_i`, `C_i`, `t_i`) to form the input vector `[S_current - S_i, C_i, t_current - t_i]`.

- The target output is the ground-truth concentration of the current sample, `C_current`. Because `C_i` is already part of the input, training pairs must be drawn from samples that both have offline ground truth.

- Use this augmented dataset to train the chosen regression model (PLS, XGB, or MLP). The model learns to predict concentration from the differential signal, the reference concentration, and the time lag.

- 4. Model Testing & Validation:

- To evaluate performance, use a leave-one-probe-out or a similar temporal cross-validation scheme.

- Use the first portion of the dataset (e.g., 75%) for training and the latter portion (e.g., 25%) for testing to simulate and evaluate drift compensation.

- Generate predictions for the test set and calculate performance metrics like RMSE.
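The protocol can be exercised end to end on toy data. The sketch below assumes NumPy, uses ordinary least squares as a stand-in for PLS, XGB, or MLP, and simulates a drift that shifts all sensors along the same direction as a concentration change, so a static calibration cannot separate the two. All names and numbers are hypothetical. Training pairs come from the first 75% of samples, and each later sample is predicted relative to every training pseudo-calibration point, with the predictions averaged.

```python
import numpy as np

def build_pairs(S, C, t):
    """Pair each labelled sample i with every earlier sample j (j < i).

    Input row: [S_i - S_j, C_j, t_i - t_j]; target: C_i, the later sample's concentration.
    """
    X, y = [], []
    for i in range(len(S)):
        for j in range(i):
            X.append(np.concatenate([S[i] - S[j], [C[j], t[i] - t[j]]]))
            y.append(C[i])
    return np.array(X), np.array(y)

def fit_linear(X, y):
    """Ordinary least squares with an intercept (stand-in for PLS, XGB or MLP)."""
    A = np.column_stack([X, np.ones(len(X))])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def predict_mpc(coef, s_new, t_new, S_ref, C_ref, t_ref):
    """Predict relative to every pseudo-calibration point and average the results."""
    preds = [np.concatenate([s_new - s_j, [c_j, t_new - t_j, 1.0]]) @ coef
             for s_j, c_j, t_j in zip(S_ref, C_ref, t_ref)]
    return float(np.mean(preds))

# Hypothetical data: drift shifts all sensors exactly like a concentration increase would
rng = np.random.default_rng(1)
n = 40
t = np.linspace(0.0, 10.0, n)                      # days
C = rng.uniform(1.0, 10.0, n)                      # true concentration
sens = np.array([1.0, 0.6, 0.3])                   # cross-sensitive array response
S = np.outer(C + 0.3 * t, sens) + rng.normal(0.0, 0.01, (n, 3))

cut = int(0.75 * n)                                # first 75% for training
X, y = build_pairs(S[:cut], C[:cut], t[:cut])
coef = fit_linear(X, y)
pred = np.array([predict_mpc(coef, S[i], t[i], S[:cut], C[:cut], t[:cut])
                 for i in range(cut, n)])
rmse_mpc = float(np.sqrt(np.mean((pred - C[cut:]) ** 2)))

# Static calibration for comparison: regress C on S once and ignore time
A = np.column_stack([S[:cut], np.ones(cut)])
w, *_ = np.linalg.lstsq(A, C[:cut], rcond=None)
static = np.column_stack([S[cut:], np.ones(n - cut)]) @ w
rmse_static = float(np.sqrt(np.mean((static - C[cut:]) ** 2)))
print(f"MPC RMSE {rmse_mpc:.3f} vs static RMSE {rmse_static:.3f}")
```

On this toy data the MPC model recovers the drift term through the time-difference input, whereas the static calibration's error grows with time since calibration. Real sensor drift is rarely this linear, which is where non-linear regressors such as XGB or an MLP come in.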

Protocol 2: Anti-Interference Validation for Electrochemical Sensors

This protocol is adapted from recent research on simultaneous calibration and detection, which shares the core principle of using internal data for calibration against interference [6].

- 1. Sensor Preparation & Solution Setup:

- Utilize electrochemical sensors, such as those designed for differential pulse voltammetry (DPV).

- Prepare a series of standard solutions with known, varying concentrations of the target analytes (e.g., 40-100 μM nitrite and 100-400 μM sulfite).

- Prepare interfering substance solutions and buffer solutions to adjust pH.

- 2. Data Collection under Interference:

- For each standard solution, perform DPV measurements under:

- Normal conditions.

- pH fluctuations (e.g., ±1 pH unit).

- Temperature changes (e.g., ±5°C).

- With high concentrations of interfering substances added to the solution.

- Record the full DPV response for each condition.

- 3. Calibration Model Development:

- Develop a multi-analyte linear regression algorithm (or similar).

- The model should use features from the DPV scans (e.g., peak currents, potentials) to predict concentration, inherently compensating for the variations introduced by the controlled interferences.

- 4. Performance Assessment:

- Calculate the relative error for repeat measurements and across different sensor batches.

- Validate the model on actual water samples and compare its accuracy against standard laboratory methods.
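Step 3 can be sketched as a classical least-squares calibration: peak currents from the standards are modelled as a linear function of the analyte concentrations, and the fitted sensitivity matrix is inverted for unknown samples. The data, sensitivity values, and helper names below are hypothetical and NumPy-based; this is not the algorithm of [6]. To compensate for pH, temperature, or interferents as the protocol describes, the calibration set would need to include measurements taken under those perturbed conditions.

```python
import numpy as np

def fit_calibration(conc, peaks):
    """Fit peaks ~ conc @ K.T + b by least squares; returns K (n_peaks x n_analytes) and b."""
    A = np.column_stack([conc, np.ones(len(conc))])
    coef, *_ = np.linalg.lstsq(A, peaks, rcond=None)   # shape (n_analytes + 1, n_peaks)
    return coef[:-1].T, coef[-1]

def predict_concentrations(K, b, peaks):
    """Invert the calibration: solve K @ c = peaks - b for c in the least-squares sense."""
    rhs = (np.atleast_2d(np.asarray(peaks, dtype=float)) - b).T
    c, *_ = np.linalg.lstsq(K, rhs, rcond=None)
    return c.T

# Hypothetical standards (uM): columns = nitrite, sulfite
conc_std = np.array([[40, 100], [60, 200], [80, 300], [100, 400],
                     [40, 400], [100, 100], [70, 250]], dtype=float)
# Hypothetical sensitivities (uA per uM) with mutual peak overlap, plus baselines (uA)
K_true = np.array([[0.050, 0.004],
                   [0.006, 0.020]])
b_true = np.array([0.3, 0.1])
rng = np.random.default_rng(2)
peaks_std = conc_std @ K_true.T + b_true + rng.normal(0.0, 0.01, size=(7, 2))

K, b = fit_calibration(conc_std, peaks_std)
unknown = K_true @ np.array([55.0, 150.0]) + b_true   # simulated sample peaks
print(predict_concentrations(K, b, unknown))           # close to [[55, 150]]
```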

The logical flow of the experimental validation process, from hypothesis to conclusion, is summarized in the following diagram.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Reagents and Materials for Sensor Array Drift Compensation Studies

| Item | Function & Application | Specific Example |
|---|---|---|
| Cross-Sensitive Sensor Array | The core sensing element; provides a multi-dimensional signal response to target analytes and interferents. | Hydrogel-based magneto-resistive sensor array [3]. |
| Offline Analytical Reference | Provides ground-truth data for pseudo-calibration points; essential for model training and validation. | High-performance liquid chromatography (HPLC), mass spectrometer, or other certified analytical instruments [3]. |
| Standard Analytic Solutions | Used for preparing known concentrations of target analytes for initial calibration and creating simulated drift scenarios. | Certified reference materials (CRMs) for nitrite (NO₂⁻), sulfite (SO₃²⁻), or other relevant analytes [6]. |
| Interferent Substances | Used in validation experiments to test the robustness and anti-interference performance of the calibration model. | Common co-pollutants or specific salts in water analysis [6]. |
| Buffer Solutions | Used to control and vary pH levels during experimental validation to test model performance under environmental fluctuations. | Phosphate or carbonate buffers at different pH levels [6]. |
| Regression Modeling Software | Platform for implementing the MPC data augmentation and training the machine learning models. | Python with scikit-learn (for PLS, MLP), XGBoost library (for XGB) [3]. |

Core Concept and Principle

The Multi Pseudo-Calibration (MPC) approach is an advanced on-site calibration technique designed to compensate for time-dependent drift in arrays of cross-sensitive chemical sensors during continuous, long-term monitoring. The methodology is particularly vital for applications where traditional periodic recalibration using external references is impossible, such as in deeply-embedded sensors within bioreactors or other uninterrupted industrial processes [3].

The foundational principle of MPC is the use of historical sensor measurements for which ground-truth analyte concentrations are later obtained via offline analysis. These data points are treated as "pseudo-calibration" samples. The MPC framework incorporates these samples into a regression model, enabling the system to learn a non-linear model of the sensor drift without interrupting the ongoing process [3].

Table 5: Core Problem and MPC Solution Overview

| Aspect | Challenge in Continuous Monitoring | MPC Solution |
|---|---|---|
| Drift & Aging | Degrades sensor accuracy over time, leading to inaccurate quantification of analytes [3]. | Uses pseudo-calibration points to model and correct for time-dependent drift. |
| Recalibration | Often infeasible without interrupting the process (e.g., in embedded bioreactor sensors) [3]. | Leverages offline analyte concentration measurements from periodically extracted samples. |
| Data Scarcity | Limited labeled data for training robust models in long-term deployments. | Quadratically increases training data by pairing all historical samples with each other. |

The following diagram illustrates the core logical workflow and relationships of the MPC approach.

Implementation and Workflow

The MPC technique is implemented by constructing a specialized input vector for the regression model. This input concatenates three key pieces of information: the difference between current sensor readings and those from a past pseudo-calibration sample, the ground-truth concentration for that pseudo-sample, and the time elapsed between the two measurements [3]. This approach allows the model to dynamically correct predictions based on known anchor points.

Table 6: Components of the MPC Input Vector

| Input Component | Symbol | Description | Role in Drift Compensation |
|---|---|---|---|
| Sensor Measurement Delta | Δs = s(t_current) - s(t_pseudo) | Difference between current sensor readings and sensor readings at the pseudo-calibration time. | Provides the raw signal change that the model must correct. |
| Ground Truth Concentration | C_pseudo | Analytically measured reference concentration of the pseudo-calibration sample. | Serves as an absolute reference point for recalibration. |
| Time Difference | Δt = t_current - t_pseudo | Time elapsed since the pseudo-calibration sample was taken. | Enables the model to learn and account for time-dependent drift dynamics. |
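Written out, the input vector in the table above and the averaged prediction can be expressed as follows, where f_θ denotes the chosen regressor (PLS, XGB, or MLP), K is the number of pseudo-calibration points available at time t, and C_k and t_k are the ground-truth concentration and timestamp of point k. The training objective assumes a squared-error loss, which is an illustrative assumption rather than a detail stated in [3].

$$
\mathbf{x}_k(t) = \big[\,\mathbf{s}(t) - \mathbf{s}(t_k),\; C_k,\; t - t_k\,\big],
\qquad
\hat{C}(t) = \frac{1}{K}\sum_{k=1}^{K} f_\theta\big(\mathbf{x}_k(t)\big)
$$

$$
\theta^\ast = \arg\min_\theta \sum_{i}\sum_{j<i}\Big(C_i - f_\theta\big(\big[\,\mathbf{s}(t_i) - \mathbf{s}(t_j),\; C_j,\; t_i - t_j\,\big]\big)\Big)^2
$$

In the table's notation, Δs = s(t) - s(t_k) and Δt = t - t_k.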

This framework integrates with standard regression techniques. Research has demonstrated successful implementation using Partial Least Squares (PLS), eXtreme Gradient Boosting (XGB), and Multi-Layer Perceptrons (MLP) [3].

The MPC approach offers three distinct algorithmic advantages [3]:

- Non-Linear Drift Modeling: It can learn complex, non-linear relationships between sensor drift, time, and analyte concentration.

- Quadratic Data Augmentation: For a training set with N samples, MPC can generate N(N-1)/2 training pairs by pairing each sample with every previous sample, drastically increasing the effective training data.

- Variance Reduction: When multiple pseudo-calibration samples are available, MPC can generate predictions relative to each and average the results, reducing prediction variance.
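The variance-reduction argument can be checked numerically. The sketch assumes, for illustration only, that each reference-specific prediction equals the true concentration plus an independent Gaussian error; in that idealized case the standard deviation of the averaged prediction falls as 1/√K. In practice the errors relative to different pseudo-calibration points share the same current reading and are correlated, so the actual reduction is smaller. `averaged_prediction` and `combined_std` are hypothetical helpers.

```python
import numpy as np

def averaged_prediction(per_reference_preds):
    """Combine predictions made relative to each pseudo-calibration point."""
    return float(np.mean(per_reference_preds))

def combined_std(k, sigma=0.4, n_trials=20000, seed=3):
    """Empirical std of the average of k predictions with independent errors (idealized)."""
    rng = np.random.default_rng(seed)
    preds = 5.0 + rng.normal(0.0, sigma, size=(n_trials, k))   # true value 5.0
    return float(np.mean(preds, axis=1).std())

for k in (1, 4, 16):
    print(f"K={k:2d}: std {combined_std(k):.3f} (theory {0.4 / np.sqrt(k):.3f})")
```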

The complete operational workflow for implementing MPC is outlined below.

Experimental Validation and Performance

The performance of the MPC approach was rigorously evaluated against established baselines, including standard regression models and a state-of-the-art Drift Correction Autoencoder (DCAE) [3]. The validation utilized an experimental dataset from an array of hydrogel-based magneto-resistive sensors used for bioprocess monitoring, as well as synthetic datasets to characterize performance under controlled conditions [3].

The evaluation employed a leave-one-probe-out cross-validation technique. The dataset was split, using the first 75% of measurements from training probes for model development and the last 25% from all probes for testing. This methodology specifically targets the evaluation of model performance under drift conditions [3].

Table 7: Key Regression Models Used with MPC

| Model | Type | Key Characteristics | Suitability for MPC |
|---|---|---|---|
| Partial Least Squares (PLS) | Linear Projection | Models relationships between observed variables via latent structures. Reduces dimensionality. | Effective for linear relationships and multi-collinear sensor data. A strong, interpretable baseline. |
| eXtreme Gradient Boosting (XGB) | Ensemble Tree | Builds sequential decision trees, correcting errors from previous ones. Handles non-linearities well. | High performance for complex, non-linear drift dynamics. Often provides high accuracy. |
| Multi-Layer Perceptron (MLP) | Neural Network | A class of feedforward artificial neural network with multiple layers. Universal function approximator. | Excellent for learning highly complex and non-linear drift patterns. Requires more data and tuning. |

Detailed Experimental Protocol

This section provides a step-by-step protocol for implementing and validating the MPC approach, based on the methodology outlined in the research [3].

Sensor Array Setup and Data Acquisition

- Sensor Preparation: Deploy an array of cross-sensitive chemical sensors (e.g., hydrogel-based magneto-resistive sensors) into the target environment (e.g., a bioreactor).

- Data Collection:

- Record sensor measurements from all array elements at a defined sampling frequency (e.g., every minute).

- For the training set, apply open-loop actuations or expose the system to normal operational variations to generate a rich dataset. This is analogous to generating random control signals to excite the system's dynamics, as performed in other sensor system modeling work [8].

- Time-stamp all sensor readings.

Generation of Pseudo-Calibration Points

- Sample Extraction: At periodic, pre-defined intervals (e.g., every 4-8 hours), extract a physical sample from the monitoring environment without interrupting the process.

- Offline Analysis: Analyze the extracted sample using a high-precision offline analytical method (e.g., HPLC, GC-MS, or reference analyte analyzer) to obtain the ground-truth concentration of the target analyte(s).

- Data Logging: Log the obtained ground-truth concentration with the corresponding sensor measurements and the exact timestamp of sample extraction. This forms one pseudo-calibration point.
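A pseudo-calibration point can be held as a small record pairing the logged sensor vector with the offline result. The sketch below assumes a single target analyte and timestamps in hours; the record type and the helper `reading_at`, which picks the logged sensor vector closest in time to the sample extraction, are hypothetical.

```python
from dataclasses import dataclass

import numpy as np


@dataclass(frozen=True)
class PseudoCalibrationPoint:
    timestamp: float              # extraction time, e.g. hours since process start
    sensor_readings: np.ndarray   # array readings at the extraction time
    concentration: float          # ground truth from the offline analyzer


def reading_at(log_times, log_readings, t_sample):
    """Return the logged sensor vector closest in time to t_sample."""
    k = int(np.argmin(np.abs(np.asarray(log_times, dtype=float) - t_sample)))
    return np.asarray(log_readings, dtype=float)[k]


# Hypothetical log of a 2-element array sampled once per hour.
log_times = [0.0, 1.0, 2.0, 3.0]
log_readings = [[0.10, 0.20], [0.12, 0.21], [0.15, 0.25], [0.18, 0.27]]
point = PseudoCalibrationPoint(
    timestamp=2.1,
    sensor_readings=reading_at(log_times, log_readings, 2.1),
    concentration=4.7,  # hypothetical offline (e.g., HPLC) result
)
```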

Model Training with MPC

- Data Preparation for MPC:

- Compile a dataset of N samples, each containing sensor readings and any available ground-truth concentrations.

- For MPC training, create an augmented dataset by pairing each sample i with every previous sample j (where j < i) for which ground truth is available.

- For each pair (i, j), construct the MPC input vector [s_i - s_j, C_j, t_i - t_j], where s is the sensor measurement vector, C is the ground-truth concentration, and t is the timestamp.

- The target output for this input vector is the ground-truth concentration of sample i, C_i.

- Model Training:

- Choose a regression algorithm (PLS, XGB, MLP).

- Split the augmented dataset into training and validation sets, ensuring temporal consistency (e.g., using the first 75% of data chronologically for training).

- Train the model to predict the target concentration from the MPC input vector.
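The data-preparation and training steps can be sketched in NumPy. This is a minimal illustration under stated assumptions: samples are sorted by time, missing ground truth is encoded as NaN, and ordinary least squares stands in for the regression model (in practice one would substitute PLS, for example scikit-learn's `PLSRegression`, or XGB or an MLP). The helper names and the synthetic drift model are hypothetical.

```python
import numpy as np


def build_mpc_dataset(S, C, t):
    """Build MPC pairs from chronologically sorted samples.

    S: (N, n_sensors) readings; C: (N,) ground truth, NaN where unknown;
    t: (N,) timestamps. Returns inputs [s_i - s_j, C_j, t_i - t_j] and targets C_i.
    """
    S, C, t = np.asarray(S, float), np.asarray(C, float), np.asarray(t, float)
    X, y = [], []
    for i in range(len(S)):
        if np.isnan(C[i]):
            continue  # the target C_i must be known to train on this pair
        for j in range(i):
            if np.isnan(C[j]):
                continue  # the reference j must be a pseudo-calibration point
            X.append(np.concatenate([S[i] - S[j], [C[j], t[i] - t[j]]]))
            y.append(C[i])
    return np.array(X), np.array(y)


def fit_ols(X, y):
    """Least-squares fit with an intercept (stand-in for PLS/XGB/MLP)."""
    A = np.column_stack([X, np.ones(len(X))])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef


def predict_ols(coef, X):
    return np.column_stack([X, np.ones(len(X))]) @ coef


# Synthetic single sensor: gain 2.0 plus a baseline drift of 0.05 units per hour.
rng = np.random.default_rng(0)
t = np.arange(20, dtype=float)
C = rng.uniform(1.0, 5.0, size=20)
S = (2.0 * C + 0.05 * t)[:, None]
X, y = build_mpc_dataset(S, C, t)  # 20 * 19 / 2 = 190 pairs
coef = fit_ols(X, y)
```

In this noise-free example the fit recovers C_i = C_j + 0.5(s_i - s_j) - 0.025(t_i - t_j): differencing against the reference cancels the shared baseline, and the Δt term absorbs the drift accumulated between the two samples.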

System Evaluation and Validation

- Test Set Configuration: Use a leave-one-probe-out or similar temporal cross-validation strategy. Reserve the last 25% of data from the test probe(s) for final evaluation to assess performance on drifted data [3].

- Performance Metrics: Evaluate model performance using standard metrics:

- Root Mean Square Error (RMSE)

- Mean Absolute Error (MAE)

- Integral of Time-weighted Absolute Error (ITAE) - useful for emphasizing persistent errors over time [9].

- Coefficient of Determination (R²)

- Comparison to Baselines: Compare the MPC-enabled model against two baselines:

- A standard regression model using only current sensor measurements.

- A state-of-the-art drift-correction method, such as a Drift Correction Autoencoder (DCAE).
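The four metrics can be computed as follows. This is a sketch: ITAE is approximated here with the trapezoidal rule over the sample times, and measuring time from the first test timestamp is an assumption that affects its absolute value.

```python
import numpy as np


def evaluate(y_true, y_pred, t):
    """RMSE, MAE, ITAE and R² for one test segment."""
    y_true = np.asarray(y_true, float)
    y_pred = np.asarray(y_pred, float)
    t = np.asarray(t, float)
    t = t - t[0]  # measure time from the start of the test segment (assumption)
    err = y_pred - y_true
    rmse = float(np.sqrt(np.mean(err ** 2)))
    mae = float(np.mean(np.abs(err)))
    # ITAE = integral of t * |e(t)| dt, trapezoidal approximation
    w = t * np.abs(err)
    itae = float(np.sum(0.5 * (w[1:] + w[:-1]) * np.diff(t)))
    r2 = float(1.0 - np.sum(err ** 2) / np.sum((y_true - y_true.mean()) ** 2))
    return {"RMSE": rmse, "MAE": mae, "ITAE": itae, "R2": r2}


print(evaluate([1, 2, 3, 4], [1, 2, 3, 5], [0, 1, 2, 3]))
```

Because ITAE weights each error by elapsed time, a single late error (as in the example) counts more than the same error at the start, which is why it highlights persistent drift.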

The Scientist's Toolkit

Table 4: Essential Research Reagents and Materials for MPC Implementation

| Item | Function/Description | Example/Notes |
|---|---|---|
| Cross-Sensitive Sensor Array | The core sensing element; provides multivariate response to analytes and interferents. | Hydrogel-based magneto-resistive sensors; metal-oxide semiconductor arrays; electrochemical sensor arrays. |
| Offline Reference Analyzer | Provides ground-truth analyte concentration for pseudo-calibration samples. | HPLC, GC-MS, UV-Vis Spectrophotometer, or other validated analytical instrumentation. |
| Data Acquisition System | Logs time-synchronized sensor measurements from the array. | National Instruments DAQ, or other systems capable of multi-channel, timestamped data logging. |
| Regression Modeling Software | Platform for implementing and training MPC-enabled regression models. | Python (Scikit-learn, XGBoost, PyTorch/TensorFlow), MATLAB, R. |
| Bioreactor or Process Vessel | The application environment for continuous, uninterrupted monitoring. | Bench-top or pilot-scale bioreactor with ports for sensor insertion and sample extraction. |

This application note details the theoretical foundation and experimental protocols for learning non-linear drift models from historical data, contextualized within the multi pseudo-calibration (MPC) framework for sensor arrays. Concept drift, the phenomenon where input data distributions change over time, significantly degrades the predictive performance of models in long-term sensor deployments [10]. Recurring drifts are particularly common in sensor systems due to cyclical environmental factors or operational regimes. This work posits that by identifying and modeling these non-linear drifts from historical data, MPC systems can autonomously trigger calibration routines or adjust sensor readings, thereby maintaining data integrity and reducing reliance on physical calibration standards. We present DriftGAN, an unsupervised method based on Generative Adversarial Networks (GANs) that detects concept drifts and identifies whether a specific drift configuration has occurred previously [10]. This approach minimizes the data and time required for the system to adapt to recurring drift patterns, enhancing the resilience and autonomy of sensor array networks.

Theoretical Foundation

The Problem of Concept Drift in Sensor Arrays

In real-world sensor applications, input data distributions are rarely static over extended periods [10]. This concept drift adversely affects model performance, necessitating robust detection and adaptation mechanisms. For sensor arrays, drifts can originate from various sources:

- Sensor degradation: Physical wear altering sensor response characteristics.

- Environmental changes: Cyclical variations in temperature, humidity, or pressure.

- Operational regime changes: Shifts in measurement contexts or target phenomena.

Unlike traditional drift detection methods that merely identify distribution changes, our approach specifically addresses recurring drifts—patterns that reappear periodically or under specific conditions [10]. In MPC, recognizing these recurrences enables proactive calibration by matching current drift patterns to historical instances where calibration parameters were successfully established.

DriftGAN Architecture for Unsupervised Recurring Drift Detection

The DriftGAN framework implements a multiclass-discriminator GAN architecture containing a discriminator module that simultaneously distinguishes between real and artificial examples while classifying current input data into previously encountered drift categories [10]. Key architectural components include:

- Growing Discriminator Network: As new drift distributions are identified, the discriminator incrementally expands by adding output classes, enabling recognition of an increasing repertoire of drift patterns.

- Historical Distribution Memory: The system maintains a repository of previously encountered data distributions, allowing rapid augmentation of retraining datasets when recurring drifts are detected.

- Unsupervised Operation: Unlike supervised methods that require immediate label availability, DriftGAN operates solely on input distribution changes, making it suitable for sensor environments where ground truth is sporadically available [10].
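The growing-discriminator idea can be sketched as an output layer whose weight matrix gains one row per newly identified drift distribution. The NumPy class below is a hypothetical illustration of that mechanism only (no GAN training and no feature extractor); it is not the DriftGAN implementation from [10].

```python
import numpy as np


class GrowingDriftHead:
    """Output layer with one real/fake unit plus a growing set of drift-class units."""

    def __init__(self, n_features, seed=0):
        self.rng = np.random.default_rng(seed)
        self.n_features = n_features
        # Row 0 scores real vs. generated data; rows 1.. score known drift classes.
        self.W = self.rng.normal(0.0, 0.01, size=(1, n_features))
        self.b = np.zeros(1)

    @property
    def n_drift_classes(self):
        return self.W.shape[0] - 1

    def add_drift_class(self):
        """Append one output unit, keeping the weights of existing classes."""
        new_row = self.rng.normal(0.0, 0.01, size=(1, self.n_features))
        self.W = np.vstack([self.W, new_row])
        self.b = np.append(self.b, 0.0)
        return self.n_drift_classes - 1  # index of the new drift class

    def forward(self, h):
        """h: (batch, n_features) discriminator features.

        Returns real/fake logits and softmax probabilities over drift classes
        (None while no drift class has been registered).
        """
        logits = h @ self.W.T + self.b
        real_logit, drift_logits = logits[:, 0], logits[:, 1:]
        if drift_logits.shape[1] == 0:
            return real_logit, None
        z = drift_logits - drift_logits.max(axis=1, keepdims=True)  # numerical stability
        p = np.exp(z)
        return real_logit, p / p.sum(axis=1, keepdims=True)
```

In a full model, a newly added unit would be trained on data from the new drift period, while replaying stored historical distributions keeps earlier classes from being forgotten.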

Integration with Multi Pseudo-Calibration (MPC)

The MPC framework leverages multiple reference sources or statistical signatures for self-calibration. Integrating non-linear drift modeling enhances MPC by:

- Drift-Aware Sensor Fusion: Adjusting fusion weights based on recognized drift patterns.

- Calibration Triggering: Automatically initiating calibration cycles when novel or recurring drifts exceed thresholds.

- Historical Parameter Recall: Retrieving previously successful calibration parameters for recurring drift scenarios, reducing calibration time and resources.

For complex sensor arrays with direction-dependent gains, multi-source self-calibration approaches using weighted alternating least squares (WALS) can be extended with drift detection to handle time-varying calibration parameters [11].
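The alternating principle behind WALS can be shown on a deliberately simplified model in which each reading is the product of an unknown sensor gain and an unknown reference level, Y[k, m] ≈ g[k] · x[m], with per-entry weights W (e.g., inverse noise variances). This rank-1 sketch illustrates weighted alternating least squares in general; it is not the direction-dependent algorithm of [11] and omits offsets and noise covariance.

```python
import numpy as np


def wals_rank1(Y, W, n_iter=200, tol=1e-12):
    """Fit Y[k, m] ~ g[k] * x[m] by weighted alternating least squares.

    Y: (K sensors, M reference events) readings; W: nonnegative weights of the
    same shape. Returns gains g and levels x, with the scale fixed by g[0] = 1.
    """
    Y, W = np.asarray(Y, float), np.asarray(W, float)
    g, x = np.ones(Y.shape[0]), np.ones(Y.shape[1])
    for _ in range(n_iter):
        # Weighted LS update of the reference levels with the gains held fixed.
        x_new = (W * Y).T @ g / (W.T @ g ** 2)
        # Weighted LS update of the gains with the levels held fixed.
        g_new = (W * Y) @ x_new / (W @ x_new ** 2)
        # Remove the (c * g, x / c) scale ambiguity by pinning the first gain to 1.
        x_new = x_new * g_new[0]
        g_new = g_new / g_new[0]
        converged = np.max(np.abs(g_new - g)) < tol
        g, x = g_new, x_new
        if converged:
            break
    return g, x


g_true = np.array([1.0, 1.5, 0.8])
x_true = np.array([2.0, 4.0, 6.0, 8.0])
Y = np.outer(g_true, x_true)
g_est, x_est = wals_rank1(Y, np.ones_like(Y))
```

Each half-step is a closed-form weighted least-squares solve, which is what makes the alternating scheme cheap; the gain/offset variant listed in the toolkit table would add an offset term to each update.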

Experimental Protocols

Protocol 1: Data Preparation and Feature Engineering

Objective: Prepare sensor historical data for non-linear drift modeling.

Materials and Reagents:

- Historical sensor readings with timestamps

- Environmental contextual data (temperature, humidity, etc.)

- Computational resources for time-series processing

Procedure:

- Data Collection: Gather continuous sensor readings spanning multiple operational cycles and environmental conditions. Include periods with known calibration events for validation.

- Temporal Segmentation: Divide data into fixed-interval windows (e.g., 1-hour segments) with 50% overlap to capture temporal patterns.

- Feature Extraction: For each window, calculate:

- Statistical moments (mean, variance, skewness, kurtosis)

- Spectral features (dominant frequencies, spectral entropy)

- Cross-sensor correlation coefficients

- Distribution similarity measures relative to baseline

- Feature Normalization: Apply z-score normalization to all features to ensure uniform scaling.

- Dataset Construction: Create labeled examples where each data point represents a temporal window with features, labeled with drift category if known.
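Steps 2 to 4 of this procedure can be sketched in NumPy. The window length is given in samples, the dominant frequency is reported as an FFT bin index, spectral entropy is scaled by the log of the number of bins, and the "distribution similarity to baseline" features are omitted; all of these are simplifying assumptions.

```python
import numpy as np


def sliding_windows(x, win, overlap=0.5):
    """Split x (n_samples, n_sensors) into windows of `win` samples with the given overlap."""
    step = max(1, int(win * (1 - overlap)))
    return [x[s:s + win] for s in range(0, len(x) - win + 1, step)]


def window_features(w):
    """Statistical, spectral and cross-sensor features for one window."""
    mu, sd = w.mean(axis=0), w.std(axis=0)
    z = (w - mu) / np.where(sd > 0, sd, 1.0)
    skew = (z ** 3).mean(axis=0)
    kurt = (z ** 4).mean(axis=0) - 3.0  # excess kurtosis
    power = np.abs(np.fft.rfft(w - mu, axis=0)) ** 2
    total = power.sum(axis=0)
    p = power / np.where(total > 0, total, 1.0)
    entropy = -(p * np.log(p + 1e-12)).sum(axis=0) / np.log(p.shape[0])
    dominant_bin = np.argmax(power[1:], axis=0) + 1  # skip the DC bin
    iu = np.triu_indices(w.shape[1], k=1)
    corr = np.corrcoef(w, rowvar=False)[iu]  # pairwise sensor correlations
    return np.concatenate([mu, sd ** 2, skew, kurt, dominant_bin, entropy, corr])


def zscore(F):
    """Column-wise z-score normalization across windows."""
    sd = F.std(axis=0)
    return (F - F.mean(axis=0)) / np.where(sd > 0, sd, 1.0)


rng = np.random.default_rng(1)
readings = rng.normal(size=(100, 3))         # hypothetical 3-sensor log
windows = sliding_windows(readings, win=20)  # 50% overlap -> step of 10 samples
features = zscore(np.array([window_features(w) for w in windows]))
```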

Quality Control:

- Validate temporal alignment across sensor channels

- Remove outliers resulting from sensor malfunctions or communication errors

- Ensure balanced representation across operational conditions

Protocol 2: DriftGAN Model Training

Objective: Train DriftGAN model to identify and categorize recurring drift patterns.

Materials and Reagents:

- Prepared feature dataset from Protocol 1

- Deep learning framework (TensorFlow/PyTorch) with GAN implementations

- GPU-accelerated computing resources

Procedure:

- Network Initialization:

- Generator: 3 fully connected hidden layers (256, 512, 256 units) with ReLU activation

- Discriminator: 3 convolutional layers with increasing filters (64, 128, 256) followed by multiclass classification head

- Training Configuration:

- Batch size: 64

- Learning rate: 0.0002 (Generator), 0.0001 (Discriminator)

- Loss function: Wasserstein loss with gradient penalty

- Optimizer: Adam (β₁=0.5, β₂=0.999)

- Training Loop:

- For each epoch:

- Sample minibatch of real sensor features {x} from historical data

- Sample minibatch of random noise {z}

- Generate fake samples {G(z)}

- Update Discriminator to classify real vs. fake and assign drift categories

- Update Generator to fool Discriminator's real/fake discrimination

- Monitor training stability using gradient norms and loss trajectories


- Model Selection: Save model checkpoints based on discriminator accuracy and generator diversity metrics.

Quality Control:

- Monitor for mode collapse in generator

- Validate discriminator performance on held-out validation set

- Ensure balanced training across drift categories

Protocol 3: MPC Integration and Validation

Objective: Integrate trained drift model with MPC system and validate performance.

Materials and Reagents:

- Trained DriftGAN model from Protocol 2

- Sensor array system with programmatic calibration interface

- Validation dataset with known ground truth

Procedure:

- Real-time Monitoring:

- Extract features from incoming sensor data using sliding windows

- Pass features through trained DriftGAN discriminator for drift classification

- Compute confidence scores for drift category assignments

- Calibration Triggering:

- When novel drift detected with high confidence, trigger full calibration cycle

- When recurring drift identified, retrieve historical calibration parameters

- Implement graduated response based on drift magnitude and confidence

- Performance Validation:

- Compare sensor accuracy with and without drift-adaptive MPC

- Measure time to correct calibration after drift onset

- Quantify the reduction in physical calibration events

- Model Updates:

- Periodically retrain DriftGAN with newly acquired data

- Implement continuous learning protocol to incorporate new drift patterns
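The calibration-triggering step amounts to a small decision rule. The sketch below is hypothetical: the thresholds, the action names, and the dictionary used as the calibration-parameter memory are illustrative assumptions, not values from [10].

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DriftAssessment:
    drift_class: Optional[int]  # index of a known drift pattern, or None if novel
    confidence: float           # classifier confidence in [0, 1]
    magnitude: float            # drift size, e.g. normalized feature distance from baseline


def calibration_action(a, memory, conf_threshold=0.8, minor_magnitude=0.2):
    """Return (action, parameters) for a drift assessment.

    `memory` maps drift-class index -> previously successful calibration parameters.
    """
    if a.magnitude < minor_magnitude:
        return "no_action", None  # graduated response: ignore small drifts
    if a.confidence < conf_threshold:
        return "increase_monitoring", None  # uncertain classification: do not act yet
    if a.drift_class is not None and a.drift_class in memory:
        return "recall_parameters", memory[a.drift_class]  # recurring drift
    return "full_calibration", None  # novel drift (or no stored parameters)
```

Placing the magnitude and confidence checks first is one way to limit the false-positive calibrations mentioned under Quality Control.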

Quality Control:

- Establish performance baselines against standard calibration protocols

- Validate calibration decisions against ground truth measurements

- Monitor for false positive drift detections that trigger unnecessary calibrations

Data Presentation

Performance Comparison of Drift Detection Methods

Table 1: Comparison of unsupervised drift detection methods on sensor array datasets. Performance measured by F1 score for drift detection accuracy.

| Method | Principle | Average F1 Score | Recurring Drift Identification | Computation Load |
|---|---|---|---|---|
| DriftGAN (Proposed) | GAN-based multiclass discrimination | 0.89 | Yes | Medium-High |
| OCDD | One-Class SVM with sliding windows | 0.82 | No | Medium |
| Discriminative Drift Detector | Linear regressor on two windows | 0.76 | No | Low |
| Incremental K-S Test | Statistical test with treap data structure | 0.71 | No | Medium |
| ADWIN | Adaptive windowing with statistical tests | 0.79 | No | Low-Medium |

Sensor Data Recovery Metrics with MPC Integration

Table 2: Performance metrics for sensor array data quality with and without DriftGAN-enhanced MPC across different drift scenarios.

| Drift Scenario | Standard MPC: MAE | Standard MPC: Time to Recovery (hr) | MPC + DriftGAN: MAE | MPC + DriftGAN: Time to Recovery (hr) | Improvement: MAE Reduction | Improvement: Time Savings |
|---|---|---|---|---|---|---|
| Slow Linear Drift | 0.32 | 12.4 | 0.15 | 8.2 | 53.1% | 33.9% |
| Abrupt Distribution Shift | 1.24 | 24.7 | 0.58 | 14.3 | 53.2% | 42.1% |
| Recurring Seasonal Pattern | 0.87 | 18.5 | 0.31 | 5.1 | 64.4% | 72.4% |
| Complex Non-linear Drift | 1.52 | 36.2 | 0.79 | 22.6 | 48.0% | 37.6% |
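The "MAE Reduction" and "Time Savings" columns are relative reductions with respect to standard MPC, (baseline - improved) / baseline × 100. The short check below recomputes them from the table's own values.

```python
def relative_reduction(baseline, improved):
    """Percentage reduction of `improved` relative to `baseline`."""
    return 100.0 * (baseline - improved) / baseline


# (MAE, time to recovery in hr) for standard MPC and for MPC + DriftGAN, from Table 2.
table2 = {
    "Slow Linear Drift": ((0.32, 12.4), (0.15, 8.2)),
    "Abrupt Distribution Shift": ((1.24, 24.7), (0.58, 14.3)),
    "Recurring Seasonal Pattern": ((0.87, 18.5), (0.31, 5.1)),
    "Complex Non-linear Drift": ((1.52, 36.2), (0.79, 22.6)),
}
for scenario, ((mae_std, ttr_std), (mae_dg, ttr_dg)) in table2.items():
    print(f"{scenario}: MAE -{relative_reduction(mae_std, mae_dg):.1f}%, "
          f"recovery time -{relative_reduction(ttr_std, ttr_dg):.1f}%")
```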

Visualization of Workflows

[Figure placeholders: DriftGAN Model Architecture and MPC Integration (DriftGAN-MPC Integration Workflow); Multi Pseudo-Calibration with Drift Awareness (Drift-Aware MPC Decision Process)]

The Scientist's Toolkit

Table 3: Essential research reagents and computational tools for implementing non-linear drift models in sensor MPC.

| Item | Function | Implementation Example |
|---|---|---|
| TensorFlow/PyTorch | Deep learning framework for implementing DriftGAN | Flexible GAN architecture with custom discriminator heads |
| Historical Sensor Database | Repository of sensor readings under various conditions | Time-series database with drift period annotations |
| Weighted Alternating Least Squares (WALS) | Multi-source calibration algorithm | Sensor gain and offset estimation using multiple references [11] |
| Feature Extraction Library | Computational tools for signal characterization | Statistical, spectral, and cross-correlation feature calculators |
| Drift Pattern Memory | Storage and retrieval of historical drift patterns | Database of drift features with associated calibration parameters |
| Ensemble Learning Framework | Combination of multiple models for robust prediction | Integration of LSTM forecasts with drift classification [12] |
| Validation Dataset | Ground truth data for model evaluation | Sensor readings with known calibration states and drift events |

In the fields of chemical sensing, environmental monitoring, and bioprocess control, sensor arrays are indispensable for the continuous, real-time measurement of multiple analytes. However, two persistent challenges compromise the accuracy and reliability of these systems: sensor cross-sensitivity and environmental fluctuations. Cross-sensitivity occurs when a sensor responds not only to its target analyte but also to interfering substances, leading to inaccurate readings [13] [14]. Environmental fluctuations—such as changes in temperature, pH, and humidity—can cause signal drift, further degrading sensor performance over time [3] [6]. Traditional calibration methods, which rely on periodic exposure to reference standards, are often ineffective or impractical for systems that require uninterrupted, long-term monitoring, such as deeply-embedded bioreactors [3].

The Multi Pseudo-Calibration (MPC) approach presents a robust solution to these challenges. MPC is an on-site calibration technique designed for situations where traditional recalibration is not feasible. Its core principle involves using historical sensor measurements for which ground-truth analyte concentrations are known (from offline analysis) as "pseudo-calibration" points. These points are fed into a regression model, enabling the system to learn and compensate for non-linear sensor drift and cross-sensitivities without interrupting the monitoring process [3]. This application note details how the MPC framework specifically addresses cross-sensitivity and environmental fluctuations, providing researchers with structured protocols and data to support its implementation.

Core Mechanisms: How MPC Mitigates Interference and Drift

The MPC Architecture for Handling Cross-Sensitivity

Cross-sensitivity is common in sensor arrays, where a sensor's response is influenced by multiple analytes in a complex mixture [13] [14]. The MPC architecture turns this challenge into an advantage: instead of treating cross-sensitivity as mere noise, it uses the composite "fingerprint" response pattern generated across the entire sensor array to identify and quantify individual analytes [15]. When a pseudo-calibration sample becomes available, the model learns the relationship between this multi-sensor fingerprint and the known ground-truth concentration. Subsequent predictions use an input vector that concatenates the difference between the current sensor readings and the stored pseudo-calibration readings with the pseudo-calibration ground truth and the elapsed time; taking the difference removes the component of the cross-sensitive response that is common to both measurements [3].

The MPC Architecture for Handling Environmental Fluctuations

Environmental factors such as temperature and pH are major sources of signal drift. The core MPC input vector includes not only the sensor differentials and the pseudo-calibration ground truth but also the time difference between the current measurement and the pseudo-calibration point [3], which allows the model to capture and correct time-dependent drift. Research on advanced sensor systems further demonstrates that calibration functions can be significantly improved by using cross-sensitive parameters that influence the parameter of interest [13]. The MPC framework is compatible with adding such auxiliary environmental readings (e.g., from a colocated temperature or pH sensor) as extra inputs, allowing the model to learn and compensate for their specific effects on the primary sensor array [14].
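Extending the MPC input with auxiliary environmental channels amounts to appending extra terms to the pair vector. The encoding below is an assumption about how this could be done (it appends the current environmental readings and their change since the pseudo-calibration point); the function name is hypothetical.

```python
import numpy as np


def mpc_input_with_env(s_i, s_j, c_j, t_i, t_j, env_i=None, env_j=None):
    """Core MPC vector [s_i - s_j, C_j, t_i - t_j], optionally extended with
    current environmental readings env_i (e.g., temperature, pH) and their
    change env_i - env_j since the pseudo-calibration sample."""
    core = np.concatenate([np.asarray(s_i, float) - np.asarray(s_j, float), [c_j, t_i - t_j]])
    if env_i is None or env_j is None:
        return core  # no auxiliary data: plain MPC input
    env_i, env_j = np.asarray(env_i, float), np.asarray(env_j, float)
    return np.concatenate([core, env_i, env_i - env_j])
```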

Table 1: Summary of Challenges and MPC Countermeasures

| Challenge | Impact on Sensor Data | MPC Countermeasure | Key Mechanism |
|---|---|---|---|
| Cross-Sensitivity | Non-selective sensor response; inaccurate quantification of target analytes in mixtures [13] [14]. | Array Fingerprinting & Multivariate Regression | Uses the collective response pattern from a cross-sensitive array as a unique identifier, learned against pseudo-calibration ground truths [3] [15]. |
| Environmental Fluctuations (Drift) | Time-varying signal drift due to temperature, pH, humidity, or sensor aging [3] [6]. | Temporal Modeling & Auxiliary Data Integration | Incorporates time difference and environmental data (T, pH) into the model to learn and correct for non-linear drift [3] [13]. |
| Infeasible Recalibration | Performance degradation in embedded systems (e.g., bioreactors) where reference access is impossible [3]. | On-Site Pseudo-Calibration | Uses historical, off-line analyzed samples as internal reference points, eliminating need for external recalibration [3]. |

Figure 1: MPC Workflow for Handling Interference and Drift. The diagram illustrates how environmental fluctuations and cross-sensitivity introduce error, and how the MPC model uses pseudo-calibration samples to correct the sensor signal.

Experimental Validation and Performance Data

The efficacy of the MPC approach in handling real-world complexities has been validated through both targeted experiments and deployments in operational settings. The following tables summarize quantitative evidence of its performance.

Table 2: Performance of Calibration Strategies Against Cross-Sensitivity and Drift

| Calibration Strategy | Experimental Setup | Key Performance Metrics | Outcome in Handling Cross-Sensitivity/Drift |
|---|---|---|---|
| MPC with PLS/XGB/MLP [3] | Bioprocess monitoring with hydrogel-based magneto-resistive sensor array. | Compared against baseline and Drift Correction Autoencoder (DCAE). | Successfully learned non-linear drift model; significantly reduced prediction variance by averaging over multiple pseudo-calibration points. |
| Multi-Pollutant Simultaneous Calibration and Detection (MSCD) [6] | Simultaneous detection of Nitrite (NO₂⁻) and Sulfite (SO₃²⁻) in water with pH/temperature fluctuations. | Relative error ≤ 8.3%; RSD < 3.9% across sensor batches. | Effectively offset interference from pH, temperature, and co-pollutants; reduced batch-to-batch sensor deviation. |
| Multiple Linear Regression (MLR) for Low-Cost Gas Sensors [14] | Year-long field deployment of multi-pollutant monitors (PM2.5, CO, O₃, NO₂, NO). | Pearson correlation (r) > 0.85; RMSE within 0.5 ppb for gas models. | Corrected for identified cross-sensitivities (e.g., NO₂ sensor response to O₃) using other colocated sensor data as predictors. |

Table 3: Quantitative Results from Anti-Interference Electrochemical Sensing (MSCD Strategy) [6]

| Interference Condition | Target Analyte | Concentration Range | Relative Error | Key Achievement |
|---|---|---|---|---|
| pH Fluctuations | Nitrite (NO₂⁻) | 40-100 μM | ≤ 8.2% | Acceptable anti-interference performance without manual recalibration. |
| Temperature Fluctuations | Sulfite (SO₃²⁻) | 100-400 μM | ≤ -8.3% | High accuracy under changing environmental conditions. |
| High Concentration of Interfering Substances | Nitrite & Sulfite | - | < ±7.8% (in actual water samples) | Significantly more accurate than commonly used electrochemical methods. |
| Different Sensor Fabrication Batches | Nitrite & Sulfite | - | < -11.6% and 3.9% | Offsets deviation between fabrication batches, ensuring consistency. |

Detailed Experimental Protocols

Protocol 1: Implementing MPC for a Deeply-Embedded Bioreactor Sensor Array

This protocol is adapted from the foundational work on MPC for chemical sensor arrays in bioprocess monitoring [3].

1. Sensor Array Initialization and Baseline Data Collection:

- Procedure: Integrate the cross-sensitive chemical sensor array (e.g., hydrogel-based magneto-resistive sensors) into the bioreactor system. Begin continuous data acquisition from all sensor elements.

- Key Consideration: Ensure data logging captures raw sensor outputs along with precise timestamps.

2. Pseudo-Calibration Sampling:

- Procedure: Periodically extract a small sample from the bioreactor. Analyze this sample using an offline reference method (e.g., HPLC, mass spectrometry) to obtain ground-truth concentrations for all target analytes.

- Data Integration: Log the sensor array readings from the exact time the sample was extracted. Store this data pair, {sensor_readings(t_sample), ground_truth(t_sample)}, as a pseudo-calibration point in a dedicated database.

3. MPC Model Training:

- Procedure: Construct an augmented training set. For each data point, create input vectors that concatenate:

- The difference between current sensor measurements and stored pseudo-calibration sensor measurements.

- The ground-truth concentration of the pseudo-calibration sample.

- The time difference between the current measurement and the pseudo-calibration sample.

- Model Selection: Implement the MPC approach on top of a regression model (e.g., Partial Least Squares - PLS, Extreme Gradient Boosting - XGB, or a Multi-Layer Perceptron - MLP). Train the model to predict the current analyte concentration.

4. Validation and Prediction:

- Procedure: Evaluate the model using a leave-one-probe-out cross-validation technique. For deployment, when a new sensor measurement is taken, the MPC system generates predictions relative to all available pseudo-calibration points and averages the results to produce a final, robust concentration value.
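The deployment step, predicting relative to every stored pseudo-calibration point and averaging, can be sketched as below. `predict_fn` is a placeholder for any fitted MPC regressor that maps one input vector to a concentration; returning the spread across references as well is an assumption, offered as a simple consistency check.

```python
import numpy as np


def mpc_predict(predict_fn, s_now, t_now, references):
    """Average MPC predictions over all pseudo-calibration references.

    references: iterable of (s_ref, c_ref, t_ref) tuples.
    Returns (mean prediction, sample standard deviation, individual predictions).
    """
    s_now = np.asarray(s_now, float)
    preds = np.array([
        predict_fn(np.concatenate([s_now - np.asarray(s_ref, float), [c_ref, t_now - t_ref]]))
        for s_ref, c_ref, t_ref in references
    ])
    spread = float(preds.std(ddof=1)) if len(preds) > 1 else 0.0
    return float(preds.mean()), spread, preds
```

A large spread relative to the mean suggests that the references disagree, for example because one pseudo-calibration point was logged incorrectly.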

Protocol 2: Simultaneous Calibration for Multi-Pollutant Detection in Water

This protocol is based on the MSCD strategy, which shares the core MPC philosophy of in-situ calibration against multiple variables [6].

1. Sensor and Solution Preparation:

- Procedure: Prepare the electrochemical sensor array (e.g., for nitrite and sulfite). Prepare a series of standard solutions with varying, known concentrations of all target pollutants. Also prepare solutions with varying levels of potential interferents (e.g., different pH, temperature, foreign ions).

2. Data Acquisition under Interference:

- Procedure: Using differential pulse voltammetry (DPV), scan the series of standard and interferent-added solutions. Record the full voltammetric response of the sensor array for each solution.

3. Calibration Model Development:

- Procedure: Develop a linear regression algorithm. The model uses the characteristic peak currents (or potentials) from the DPV scans as inputs. It is trained to map these inputs to the known concentrations, while inherently learning to correct for the patterns caused by the interfering conditions.

4. Field Deployment and Testing:

- Procedure: Deploy the calibrated sensor system for actual water sample testing. The model's predictions are compared against reference methods to validate its anti-interference performance and accuracy in real-world scenarios.
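A linear calibration of the kind described in step 3 can be sketched with ordinary least squares. This is an illustration under strong assumptions, not the MSCD algorithm of [6]: it assumes the peak currents respond linearly to both analytes and to pH and temperature, so that including pH and temperature as inputs lets a single linear map recover both concentrations. The sensitivities and synthetic standards are hypothetical.

```python
import numpy as np


def fit_linear_calibration(peaks, env, conc):
    """Fit conc ~ [peaks, env, 1] @ B by least squares.

    peaks: (n, n_peaks) DPV peak currents; env: (n, n_env), e.g. pH and temperature;
    conc: (n, n_analytes) known concentrations of the standard solutions.
    """
    A = np.column_stack([peaks, env, np.ones(len(peaks))])
    B, *_ = np.linalg.lstsq(A, conc, rcond=None)
    return B


def predict_concentrations(B, peaks, env):
    return np.column_stack([peaks, env, np.ones(len(peaks))]) @ B


# Synthetic standards: two analytes, peak currents with cross-talk plus pH/temperature effects.
rng = np.random.default_rng(2)
conc = rng.uniform([40, 100], [100, 400], size=(30, 2))  # µM, e.g. nitrite and sulfite ranges
env = np.column_stack([rng.uniform(5, 9, 30), rng.uniform(15, 35, 30)])  # pH, °C
M = np.array([[0.020, 0.002], [0.003, 0.010]])  # hypothetical sensitivities with cross-talk
peaks = conc @ M.T + env @ np.array([[0.05, -0.02], [0.01, 0.03]]) + 0.1
B = fit_linear_calibration(peaks, env, conc)
```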

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagents and Materials for MPC-Based Studies

| Item Name | Function/Description | Application Context |
|---|---|---|
| Cross-Sensitive Sensor Array (e.g., hydrogel-based magneto-resistive; electrochemical) [3] [6] | A group of sensor elements that exhibit partially overlapping responses to different analytes, generating unique fingerprint patterns. | Core sensing element in bioprocess monitoring, environmental water quality, and gas/vapor detection. |
| Pseudo-Calibration Sample | A sample extracted from the monitoring environment and analyzed by a reference-grade offline analyzer to establish ground-truth [3]. | Provides the critical reference data point for the MPC model to learn drift and interference without process interruption. |
| AlphaSense Electrochemical Gas Sensors (e.g., CO-A4, NO2-A43F) [14] | Low-cost gas sensors that output a voltage proportional to gas concentration, often with known cross-sensitivities. | Used in low-cost air quality monitoring networks for pollutants like CO, NO, and NO₂. |
| Plantower PMS A003 Particulate Matter Sensor [14] | A low-cost optical particle counter that estimates PM2.5 mass concentration. | Deployment in multipollutant environmental monitoring stations. |
| Reference-Grade Analyzer (e.g., HPLC, Mass Spectrometer, Teledyne API gas analyzers) [3] [14] | Instruments providing high-precision, high-accuracy concentration measurements for validation and ground-truthing. | Used for analyzing pseudo-calibration samples and for colocation during initial sensor calibration. |
| Microcontroller & Data Logger (e.g., Arduino, custom-built systems) [13] | Hardware for acquiring, processing, and transmitting raw sensor data in real-time. | Enables continuous data collection and the implementation of real-time calibration models. |

Implementing MPC: From Workflow to Real-World Biomedical Applications

Sensor drift presents a fundamental challenge to the reliability of continuous monitoring systems in pharmaceutical development and bioprocess manufacturing. Traditional calibration methods require periodic interruptions to expose sensor arrays to reference analytes, a process that is often impractical for deeply embedded sensors in bioreactors. The Multi Pseudo-Calibration (MPC) approach overcomes this limitation by leveraging historical measurements with known ground-truth concentrations as "pseudo-calibration" points. This method constructs an input vector that concatenates the difference between current sensor measurements and archived pseudo-calibration sample measurements, the ground-truth concentration for the pseudo-sample, and the time difference between measurements. This framework enables the system to learn non-linear sensor drift dynamics without process interruption, significantly enhancing long-term measurement accuracy for critical quality attributes and process parameters [3].

The MPC workflow offers three distinct advantages over conventional calibration techniques. First, it models complex, non-linear drift patterns that simple baseline correction cannot address. Second, it quadratically increases available training data by pairing each sample with previous pseudo-calibration samples, transforming N samples into N(N-1)/2 training instances. Third, when multiple pseudo-calibration samples are available, MPC generates predictions relative to each reference point and averages the results, substantially reducing prediction variance and enhancing measurement reliability for extended pharmaceutical manufacturing campaigns [3].

Quantitative Performance of MPC Methodology

Classification Accuracy Under Drift Conditions

Table 1: Classification Accuracy Improvement with MPC Drift Compensation

| Compensation Method | Baseline Accuracy (%) | Post-Compensation Accuracy (%) | Accuracy Gain (%) | Experimental Context |
|---|---|---|---|---|
| MPC with MLP [3] | ~80 (estimated) | ~95 (estimated) | ~15 | Chemical sensor array, bioprocess monitoring |
| Intrinsic Feature Method [16] | ~70 | ~90 | ~20 | MOS gas sensors, 36-month dataset |
| SVM Ensemble [5] | Not reported | >90 (drift-corrected) | Significant | MOX sensor arrays, long-term deployment |

Sensor System Specifications and Performance Metrics

Table 2: Sensor Array Configurations for MPC Implementation

| Sensor Type | Array Size | Target Analytes | Sampling Duration | Key Performance Metrics |
|---|---|---|---|---|
| Metal-Oxide Semiconductor (MOS) [16] | 8 sensors | Ethanol, Ethylene | Adsorption: 600s, Recovery: 500s | Correct classification rate: >90% after compensation |
| Hydrogel-based Magneto-resistive [3] | Array configuration | Biochemical markers | Continuous, long-term | Mean absolute error reduction >70% with MPC |
| Catalytic CMOS-SOI-MEMS (GMOS) [5] | Multi-pixel | Ethylene, Combustible gases | Real-time, continuous | MAE <1 mV (<1 ppm equivalent) |

Experimental Protocols for MPC Implementation

Protocol 1: Establishing Pseudo-Calibration Database

Purpose: To create a reference database of pseudo-calibration samples for ongoing drift compensation.

Materials:

- Sensor array system (e.g., MOS, electrochemical, or optical sensors)

- Offline analyzer for ground-truth concentration determination

- Data storage system with timestamp capability

- Standardized sample extraction protocol

Procedure:

- Initial System Characterization: Operate the sensor array under standard process conditions for a minimum stabilization period (e.g., 7 days of preheating for MOS sensors) [16].

- Sample Collection and Analysis:

  - Extract periodic samples from the bioreactor or process stream

  - Measure analyte concentrations using a reference offline analyzer (e.g., HPLC, GC-MS, or a reference spectrophotometer)

  - Record the corresponding sensor array measurements with precise timestamps

- Data Structuring:

  - Store each pseudo-calibration sample as a tuple: {timestamp, sensor_readings_array, ground_truth_concentration}

  - Maintain a database with samples spanning the expected operational conditions

- Validation:

  - Verify measurement precision across multiple pseudo-calibration samples

  - Establish acceptable variance thresholds for pseudo-calibration inclusion
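The database and inclusion check of Protocol 1 can be sketched in Python. This is a minimal sketch under stated assumptions. Each offline reference value is assumed to come from replicate measurements. The coefficient of variation (CV) of those replicates serves as the variance threshold for inclusion. The class names, the replicate scheme, and the 5% `max_cv` default are all hypothetical, chosen for illustration rather than taken from the cited protocol.

```python
from dataclasses import dataclass, field
from datetime import datetime
from statistics import mean, stdev
from typing import List, Sequence


@dataclass(frozen=True)
class PseudoCalibSample:
    """One record: {timestamp, sensor_readings_array, ground_truth_concentration}."""
    timestamp: datetime
    sensor_readings: tuple              # one reading per sensor in the array
    ground_truth_concentration: float


@dataclass
class PseudoCalibDatabase:
    """Hypothetical in-memory store; a real deployment would persist the records."""
    max_cv: float = 0.05                # assumed inclusion threshold: replicate CV <= 5 %
    samples: List[PseudoCalibSample] = field(default_factory=list)

    def add(self, timestamp: datetime, sensor_readings: Sequence[float],
            offline_replicates: Sequence[float]) -> bool:
        """Store a sample only if its offline replicate measurements are precise enough."""
        if len(offline_replicates) < 2:
            return False                # precision cannot be assessed from one measurement
        m = mean(offline_replicates)
        if m == 0 or stdev(offline_replicates) / abs(m) > self.max_cv:
            return False                # reject imprecise reference values
        self.samples.append(PseudoCalibSample(timestamp, tuple(sensor_readings), m))
        self.samples.sort(key=lambda s: s.timestamp)   # keep chronological order
        return True
```

Storing the mean of the accepted replicates as the ground truth is itself an assumption. A protocol with single offline measurements would need a different precision criterion, such as repeatability established during method validation.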

Protocol 2: MPC Model Training and Implementation

Purpose: To develop and deploy drift-compensated prediction models using the pseudo-calibration database.

Materials:

- Historical sensor data with ground-truth measurements

- Machine learning framework (e.g., Python with scikit-learn, TensorFlow)

- Computational resources for model training

- Validation dataset independent of training data

Procedure:

- Feature Engineering:

  - Extract both steady-state and transient features from sensor response curves [16]

  - Calculate differential features: ΔSensors = Current_Readings - PseudoCalib_Readings

  - Compute temporal feature: Δt = Current_Time - PseudoCalib_Time

- Input Vector Construction:

  - For each current measurement, create multiple input vectors by pairing it with different pseudo-calibration samples

  - Construct the input vector: [ΔSensors, PseudoCalib_Concentration, Δt]

  - This approach generates N(N-1)/2 training instances from N samples, one per unordered pair of samples [3]

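- Model Selection and Training:

  - Train a regression model (e.g., PLS, XGB, or an MLP) on the paired input vectors to predict the analyte concentration at the time of the current measurement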

- Drift-Compensated Prediction:

  - For new sensor measurements, generate multiple predictions using different pseudo-calibration references

  - Compute the final prediction as the average of the individual predictions to reduce variance [3]

  - Implement confidence metrics based on prediction consistency across references
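The feature engineering, input-vector construction, and averaged prediction steps can be sketched together with NumPy. Several assumptions apply:

- Each sample is paired only with earlier samples acting as pseudo-calibration references. This yields exactly N(N-1)/2 instances from N samples.
- The training target is the concentration of the later sample.
- The trained regressor (PLS, XGB, an MLP, or another model) is available as a plain callable that maps one input vector to a concentration.
- The standard deviation across references is used as one possible consistency-based confidence metric.

All function names are hypothetical.

```python
import numpy as np


def build_mpc_pairs(times, readings, concentrations):
    """Training instances from N samples: rows [ΔSensors..., PseudoCalib_Concentration, Δt]."""
    times = np.asarray(times, dtype=float)
    readings = np.asarray(readings, dtype=float)           # shape (N, n_sensors)
    concentrations = np.asarray(concentrations, dtype=float)
    order = np.argsort(times)                               # chronological order
    times, readings, concentrations = times[order], readings[order], concentrations[order]

    # Index pairs (ref, cur) with ref < cur: the reference precedes the current sample.
    ref, cur = np.triu_indices(len(times), k=1)
    X = np.column_stack([
        readings[cur] - readings[ref],                      # ΔSensors = Current - PseudoCalib
        concentrations[ref],                                # PseudoCalib_Concentration
        times[cur] - times[ref],                            # Δt = Current_Time - PseudoCalib_Time
    ])
    y = concentrations[cur]                                 # assumed target: current concentration
    return X, y


def mpc_predict(model, current_time, current_readings, ref_times, ref_readings, ref_concs):
    """Average the predictions obtained with every earlier pseudo-calibration reference.

    Returns (mean prediction, standard deviation across references).
    """
    current_readings = np.asarray(current_readings, dtype=float)
    preds = []
    for t_ref, r_ref, c_ref in zip(ref_times, ref_readings, ref_concs):
        if t_ref > current_time:
            continue                                        # future references are unavailable online
        x = np.concatenate([current_readings - np.asarray(r_ref, dtype=float),
                            [c_ref, current_time - t_ref]])
        preds.append(float(model(x)))
    if not preds:
        raise ValueError("no pseudo-calibration reference precedes the current measurement")
    preds = np.asarray(preds)
    return float(preds.mean()), float(preds.std())
```

Restricting references to earlier samples mirrors deployment, where only past pseudo-calibration samples exist. In use, a regressor would first be fitted to the `X` and `y` returned by `build_mpc_pairs` and then passed to `mpc_predict`. A large spread across references would flag a low-confidence prediction.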

Protocol 3: Validation and Continuous Model Refinement

Purpose: To validate MPC performance and establish protocols for model updating during long-term deployment.

Materials:

- Independent validation dataset with known concentrations

- Statistical analysis software

- Model versioning system

Procedure:

- Performance Validation:

  - Assess model accuracy on temporally separated test sets (last 25% of each probe's data) [3]

  - Compare MPC performance against baseline models without drift compensation

  - Evaluate across different drift severity conditions

- Model Monitoring:

  - Track prediction variance across different pseudo-calibration references as a quality metric

  - Monitor residual patterns for evidence of model degradation

  - Establish criteria for model retraining

- Incremental Learning:

  - As new pseudo-calibration samples become available, update the training dataset

  - Periodically retrain models to capture evolving drift characteristics

  - Implement change detection to identify significant drift pattern shifts
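The temporally separated evaluation and a residual-based retraining trigger can be sketched with the Python standard library. The per-probe split follows the last-25% rule above. For change detection, the sketch uses a one-sided CUSUM (Page's cumulative-sum test) on absolute residuals. This is a generic technique swapped in for illustration, not the method of the cited work. The record layout, function names, and the CUSUM allowance `k` and threshold `h` are assumptions that would need tuning for a given deployment.

```python
import math
from collections import defaultdict


def split_last_fraction(records, test_fraction=0.25):
    """Per-probe chronological split: the last `test_fraction` of each probe's records is the test set.

    records: iterable of (probe_id, timestamp, payload) tuples.
    """
    by_probe = defaultdict(list)
    for rec in records:
        by_probe[rec[0]].append(rec)
    train, test = [], []
    for probe_records in by_probe.values():
        probe_records.sort(key=lambda r: r[1])              # chronological order within a probe
        cut = len(probe_records) - math.ceil(len(probe_records) * test_fraction)
        train.extend(probe_records[:cut])
        test.extend(probe_records[cut:])
    return train, test


def mean_absolute_error(y_true, y_pred):
    """MAE between reference and predicted concentrations."""
    return sum(abs(a - b) for a, b in zip(y_true, y_pred)) / len(y_true)


def cusum_alarm(abs_residuals, target_mae, k, h):
    """One-sided upper CUSUM on absolute residuals from new pseudo-calibration samples.

    target_mae: expected absolute error while the model is valid (e.g., validation MAE).
    Returns the index of the first alarm (statistic > h), or None.
    """
    s = 0.0
    for i, r in enumerate(abs_residuals):
        s = max(0.0, s + r - (target_mae + k))              # accumulate only excess error
        if s > h:
            return i
    return None
```

The evaluation and monitoring steps map onto these functions as follows:

- Comparing `mean_absolute_error` on the test split for the MPC model and for a baseline without drift compensation gives the comparison the protocol calls for.
- An alarm from `cusum_alarm` is one possible retraining criterion once new pseudo-calibration samples have been added to the training set.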

Workflow Visualization: MPC Implementation

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Materials for MPC Implementation

| Reagent/Material | Function in MPC Workflow | Application Context |
|---|---|---|
| Reference Gas Mixtures [16] | Provide known concentration samples for pseudo-calibration | Gas sensor array validation and calibration |
| Hydrogel-based Sensor Arrays [3] | Continuous monitoring of biochemical analytes | Bioprocess monitoring in pharmaceutical production |
| Metal-Oxide Semiconductor (MOS) Sensors [16] | Detect volatile organic compounds and gases | Environmental monitoring, food quality assessment |
| Offline Analyzer (HPLC, GC-MS) [3] | Establish ground-truth concentration for pseudo-samples | Method validation and reference measurement |
| Magneto-resistive Sensing Elements [3] | Transduce chemical signals to electrical measurements | Embedded bioprocess monitoring systems |
| Catalytic Nanoparticle Layers [5] | Enhance sensor selectivity through catalytic combustion | Multi-gas detection in agricultural monitoring |

Within sensor array research, the multi pseudo-calibration (MPC) approach provides a robust framework for managing the complex, non-stationary environments in which these arrays typically operate. A cornerstone of this methodology is the acquisition of high-fidelity ground-truth data, a role fulfilled by offline analyzers. These regulatory-grade instruments provide the reference concentrations against which the responses of lower-cost, higher-frequency sensor arrays are calibrated and validated [17] [18]. The integrity of any MPC model depends fundamentally on the quality of this ground truth, because it is the reference that enables correction for sensor drift, environmental interferents, and cross-sensitivities [2]. This application note details protocols for the integrated use of offline analyzers in MPC-based research, with the aim of obtaining reliable, laboratory-grade data from field deployments.

Experimental Protocols for Co-Location and Data Acquisition

The following section outlines the core experimental procedures for establishing a co-location setup between a sensor array and an offline analyzer, which is critical for collecting the synchronized data required for MPC model development.

Co-Location Experimental Setup

The objective of this protocol is to generate a high-quality dataset where sensor array responses are temporally aligned with accurate concentration measurements from an offline analyzer. This dataset serves as the foundation for initial calibration and subsequent periodic recalibration within the MPC framework.

Materials and Reagents

- Sensor Array Platform: A multi-sensor device (e.g., employing Metal Oxide (MOX) or Electrochemical (EC) sensors) for measuring target analytes (e.g., CO, NO2, O3) and covariate factors (Temperature, Relative Humidity) [18].

- Offline Analyzer: A regulatory-grade reference analyzer (e.g., Teledyne API, Thermo Scientific 48i-TLE) to provide ground-truth concentrations [18].

- Data Logging System: A centralized system capable of recording time-synchronized data from both the sensor array and the offline analyzer.

- Environmental Shelter: A weatherproof enclosure to co-locate the sensor array and the analyzer inlet, protecting them from direct sunlight and precipitation.

Step-by-Step Procedure

- Site Selection: Identify a deployment location representative of the environmental conditions to be monitored, with secure access to power and, if necessary, a regulated air supply for the offline analyzer.

- Instrument Installation: Co-locate the sensor array and the inlet of the offline analyzer in close proximity (typically within 1-2 meters) to ensure they are sampling the same air mass. Mount the inlets at a standard height (e.g., 2-3 meters above ground) to avoid direct vehicle exhaust and ground-level dust [17].

- Synchronization: Synchronize the internal clocks of the sensor array data logger and the offline analyzer to a common time server (e.g., UTC). This is critical for accurate temporal alignment of the datasets.

- Pre-deployment Calibration: According to manufacturer specifications, perform a full calibration of the offline analyzer using certified standard gases. Document the calibration certificates.

- Data Collection Initiation: Begin continuous data collection from both the sensor array (raw signals, T, RH) and the offline analyzer (gas concentrations). The data should be averaged over a common interval (e.g., 1-hour averages) to mitigate the effects of short-term noise and account for the different response times of the instruments [18].

- Routine Maintenance: Perform regular maintenance checks as per the analyzer's operational manual. This includes, but is not limited to, checking for inlet blockages, replacing particle filters, and verifying zero/span settings weekly or bi-weekly.

- Data Export: After a predetermined deployment period (e.g., 3-6 months), export the time-stamped data from both systems for the subsequent alignment and analysis phase.
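The common-interval averaging step can be sketched with the Python standard library. The same code also covers the later alignment step, since hours missing from either stream are dropped. The sketch makes four assumptions:

- Each stream is a list of (timestamp, value) pairs for one channel.
- Invalid readings are already marked as `None`.
- Clocks are synchronized.
- Any analyzer latency is a known constant.

The function names are hypothetical. In practice a dataframe library's resampling and join functions would typically do this work.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import mean
from typing import Dict, Iterable, List, Optional, Tuple


def hourly_means(samples: Iterable[Tuple[datetime, Optional[float]]],
                 latency: timedelta = timedelta(0)) -> Dict[datetime, float]:
    """Average (timestamp, value) samples into clock-hour bins.

    latency: known instrument delay subtracted from timestamps before binning (assumed constant).
    """
    bins = defaultdict(list)
    for ts, value in samples:
        if value is None:
            continue                                        # skip missing/invalid readings
        hour = (ts - latency).replace(minute=0, second=0, microsecond=0)
        bins[hour].append(value)
    return {hour: mean(values) for hour, values in bins.items()}


def align_hourly(sensor_samples, analyzer_samples,
                 analyzer_latency: timedelta = timedelta(0)) -> List[Tuple[datetime, float, float]]:
    """Join hourly sensor and analyzer averages; hours missing from either stream are dropped."""
    sensor = hourly_means(sensor_samples)
    reference = hourly_means(analyzer_samples, latency=analyzer_latency)
    common = sorted(sensor.keys() & reference.keys())
    return [(hour, sensor[hour], reference[hour]) for hour in common]
```

Averaging before joining, rather than joining raw samples, sidesteps the different native sampling rates and response times of the two instruments. This is the rationale the procedure gives for using 1-hour averages.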

Data Preprocessing and Alignment

This protocol ensures the raw data from the co-location experiment is correctly formatted and synchronized for MPC model training.

Procedure

- Data Cleaning: Remove any samples where data from either the sensor array or the offline analyzer is missing or flagged as invalid by the instrument's internal diagnostics [18].

- Temporal Alignment: Merge the sensor array dataset and the offline analyzer dataset using the synchronized timestamps. Account for any known system latency between the instruments.

- Feature Engineering: From the raw sensor array signals, extract relevant features for each sensor. As in the Gas Sensor Array Drift (GSAD) dataset, these can include the steady-state response change (ΔR) and exponential moving averages of the response and recovery curves (e.g., ema0.001I_S1, ema0.01D_S1) to capture dynamic response characteristics [2].

- Dataset Partitioning: Split the final aligned dataset into training, validation, and testing sets. For time-series data, preserve temporal order (train on earlier data, validate and test on later data) to avoid data leakage.
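The ΔR and exponential-moving-average features can be sketched with NumPy. The EMA recursion used here is y[k] = (1 - α)y[k-1] + α(r[k] - r[k-1]), with y[0] = 0. The maximum is taken over the rising (exposure) phase and the minimum over the decaying (recovery) phase. This is our reading of how the GSAD features are usually described, not the dataset authors' code. The baseline definition of ΔR and the function names are likewise assumptions. α corresponds to the numeric part of names such as ema0.001I_S1. The I and D suffixes presumably denote the increasing and decaying phases.

```python
import numpy as np


def ema_feature(r, alpha, rising=True):
    """Extremum of the exponential moving average of the first difference of a response curve.

    Assumed definition: y[k] = (1 - alpha) * y[k-1] + alpha * (r[k] - r[k-1]), y[0] = 0.
    Returns max(y) for the rising phase, min(y) for the decaying phase.
    """
    r = np.asarray(r, dtype=float)
    y = 0.0
    extremum = 0.0                                   # includes y[0] = 0
    for k in range(1, len(r)):
        y = (1.0 - alpha) * y + alpha * (r[k] - r[k - 1])
        extremum = max(extremum, y) if rising else min(extremum, y)
    return extremum


def steady_state_delta(r, baseline_samples=10):
    """ΔR, assumed here as the maximum response minus the mean of the first baseline samples."""
    r = np.asarray(r, dtype=float)
    return float(r.max() - r[:baseline_samples].mean())
```

A small α reacts slowly and captures the overall trend of the transient. A larger α tracks faster changes. Computing the features at several α values, as the GSAD feature names suggest, therefore summarizes the response kinetics on more than one time scale.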

Data Analysis and Modeling for MPC

With a curated dataset from the co-location experiment, the following protocols can be applied to build and validate the MPC models.

Model Training for Calibration and Drift Compensation

The goal is to train a machine learning model that maps the multi-dimensional sensor array responses to the ground-truth concentrations provided by the offline analyzer.

Methods

- Define Calibration Function: Frame the problem as a supervised regression task, Analyte_Calibrated = Φ(Analyte_Raw, X_covariates), where X_covariates includes raw signals from other sensors in the array, temperature, and relative humidity [18].

- Model Selection: Several machine learning models have proven effective for this task. Recent research highlights the consistent performance across multiple datasets of a One-Dimensional Convolutional Neural Network (1DCNN), which can learn relevant features directly from the sensor data streams [18]. Alternatively, Iterative Random Forest algorithms can leverage collective data from multiple sensor channels to identify and correct abnormal sensor responses in real time [2].

- Model Training: Train the selected model using the training dataset. For neural networks, this involves backpropagation and gradient descent. For tree-based methods, it involves recursive partitioning of the feature space.
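The regression framing of Φ can be made concrete with NumPy. The sketch below fits Φ as an ordinary least-squares linear model, with temperature and relative humidity among the covariates. Linear least squares is a deliberately simple stand-in chosen for illustration, not the 1DCNN or Iterative Random Forest discussed above. The function name and array layout are assumptions.

```python
import numpy as np


def fit_linear_calibration(analyte_raw, covariates, reference):
    """Least-squares fit of Analyte_Calibrated = Φ(Analyte_Raw, X_covariates) with a linear Φ.

    analyte_raw: shape (n,); covariates: shape (n, p), e.g. other sensors, T, RH;
    reference: shape (n,), co-located analyzer concentrations.
    Returns a function that applies the fitted calibration.
    """
    def design(raw, cov):
        raw = np.asarray(raw, dtype=float).reshape(-1, 1)
        cov = np.asarray(cov, dtype=float).reshape(raw.shape[0], -1)
        return np.hstack([raw, cov, np.ones((raw.shape[0], 1))])   # last column: intercept

    coef, *_ = np.linalg.lstsq(design(analyte_raw, covariates),
                               np.asarray(reference, dtype=float), rcond=None)

    def phi(raw, cov):
        return design(raw, cov) @ coef
    return phi
```

The returned `phi` keeps the same train-then-apply interface a nonlinear model would have. Swapping in a tree ensemble or neural network would change the fitting step but not how calibrated concentrations are produced from new raw signals and covariates.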

Addressing Long-Term Drift with Incremental Learning

Sensor drift is a major challenge that can be mitigated within the MPC framework by using ground-truth data from offline analyzers for periodic model updates.

Protocol for Incremental Domain-Adversarial Network (IDAN)

- Concept: The IDAN integrates domain-adversarial learning with an incremental adaptation mechanism to manage temporal variations in sensor data [2].

- Implementation:

- The model contains a feature extractor, a label predictor (for concentration regression), and a domain classifier.

- The feature extractor is trained to produce features that are predictive of the gas concentration (based on the label predictor) but indistinguishable between different time periods or "domains" (based on the domain classifier). This forces the model to learn drift-invariant features.

- As new, periodically collected ground-truth data becomes available from the offline analyzer, the model is updated incrementally, adapting to the new data distribution without forgetting previously learned information [2].

The workflow for establishing the co-location experiment and its role in the MPC framework is summarized in the diagram below.

Diagram 1: Co-location experiment workflow for MPC.

The Scientist's Toolkit: Research Reagent Solutions

The table below catalogues the essential materials and instruments required for the experiments described in this application note.

Table 1: Key Research Reagents and Materials for MPC Experiments

| Item Name | Function/Description | Example Use Case in Protocol |
|---|---|---|
| Regulatory-Grade Analyzer | Provides high-accuracy, certified ground-truth concentrations for target analytes. Serves as the reference for sensor array calibration. | Co-located with sensor arrays to generate labeled training data for initial calibration and model updates [18]. |
| Metal Oxide (MOX) Sensor Array | A group of MOX sensor elements that react to various gases, producing a multi-dimensional response pattern for pattern recognition. | Used as the primary, lower-cost sensing platform in electronic noses for gas detection and identification [19] [18]. |