Optimizing Biosensor Fabrication: A Practical Guide to Factorial Design for Enhanced Performance

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the application of factorial design to optimize biosensor fabrication parameters. It covers foundational principles, practical methodologies, advanced troubleshooting techniques, and rigorous validation protocols. By systematically exploring factor interactions and leveraging modern computational tools, this review demonstrates how factorial design can significantly enhance biosensor sensitivity, selectivity, and reproducibility while reducing development time and costs. The content bridges theoretical concepts with real-world applications, offering actionable strategies for developing next-generation biosensing platforms for biomedical research and clinical diagnostics.

Understanding Factorial Design: Core Principles for Biosensor Development

The fabrication of high-performance biosensors is a complex multivariate process in which numerous parameters, from the composition of the sensing interface to the immobilization of biological recognition elements, interact to determine the final device's sensitivity, selectivity, and reproducibility. Traditional one-variable-at-a-time (OVAT) optimization approaches are inefficient, time-consuming, and, critically, incapable of detecting interactions between variables [1] [2]. In response, Design of Experiments (DoE) has emerged as a powerful, statistically rigorous framework that enables researchers to systematically investigate multiple factors and their interactions simultaneously, leading to more robust and better-performing biosensors with reduced experimental effort [1] [3].

This guide provides an in-depth introduction to the application of DoE in biosensor fabrication, framed within the context of factorial design. It covers fundamental principles, presents concrete case studies with quantitative outcomes, and offers detailed experimental protocols to equip researchers with the tools needed to implement these methodologies in their own work, ultimately accelerating the development of reliable biosensing platforms for point-of-care diagnostics and other applications [1] [4].

Fundamental Principles of Factorial Design

At its core, DoE is a model-based optimization strategy. It involves a pre-defined set of experiments that allows for the construction of a data-driven model linking variations in input parameters (e.g., material properties, fabrication conditions) to the sensor's output performance (the response) [1]. The most foundational DoE approach is the 2^k full factorial design, where 'k' represents the number of factors being investigated.

In a 2^k design, each factor is studied at two levels, conventionally coded as -1 (low) and +1 (high). The experimental matrix consists of 2^k unique runs, covering all possible combinations of these factor levels. This design is orthogonal, meaning the factors are varied independently, which allows for the independent estimation of both the main effects of each factor and their interaction effects [1] [3]. Interaction effects occur when the influence of one factor on the response depends on the level of another factor—a phenomenon that invariably escapes detection in OVAT approaches [2].
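As a minimal sketch (not taken from the cited works), the coded design matrix of a 2^k experiment can be generated and its orthogonality checked numerically. The helper name `full_factorial_2k` is hypothetical and NumPy is assumed to be available.

```python
import itertools
import numpy as np

def full_factorial_2k(k):
    """Return the 2^k coded design matrix (rows = runs, columns = factors)."""
    # itertools.product varies the last position fastest; reversing each row
    # gives standard order, where the first factor alternates fastest.
    runs = [row[::-1] for row in itertools.product([-1, 1], repeat=k)]
    return np.array(runs)

design = full_factorial_2k(3)
print(design.shape)   # (8, 3): 2^3 runs, 3 factors

# Orthogonality: X^T X is diagonal, so every pair of factor columns
# has a zero dot product and the effects can be estimated independently.
gram = design.T @ design
print(gram)
```

Because the off-diagonal entries of `gram` are zero, changing one factor's column never shifts the estimate of another factor's effect, which is the property the paragraph above refers to as orthogonality.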

The data collected from the factorial design are used to fit a linear regression model. The significance of each effect is typically determined using Analysis of Variance (ANOVA). For a 2^3 factorial design, the full model, first-order in each factor and including all interaction terms, is:

Y = β₀ + β₁X₁ + β₂X₂ + β₃X₃ + β₁₂X₁X₂ + β₁₃X₁X₃ + β₂₃X₂X₃ + β₁₂₃X₁X₂X₃ + ε

Where Y is the response, β₀ is the overall mean, β₁, β₂, β₃ are the main-effect coefficients, β₁₂, β₁₃, β₂₃ are the two-factor interaction coefficients, β₁₂₃ is the three-factor interaction coefficient, and ε is the error [1]. For systems where the response exhibits curvature, second-order models (e.g., using Central Composite Designs) are required [1] [5].
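For an unreplicated two-level design in coded ±1 units, orthogonality gives the least-squares estimates in closed form. The worked relation below is a sketch under that assumption; the symbols N (number of runs, here 2^k), x_{ij} (coded level of model term j in run i), and y_i (measured response in run i) are notation introduced here for illustration.

```latex
\hat{\beta}_0 = \frac{1}{N}\sum_{i=1}^{N} y_i, \qquad
\hat{\beta}_j = \frac{1}{N}\sum_{i=1}^{N} x_{ij}\, y_i, \qquad
\mathrm{Effect}_j = \bar{y}_{x_j = +1} - \bar{y}_{x_j = -1} = 2\,\hat{\beta}_j
```

The same expressions hold for interaction terms when x_{ij} is taken as the product of the corresponding factor columns, so a regression coefficient in coded units is half the conventional effect estimate.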

Application of DoE in Biosensor Development: Case Studies and Quantitative Outcomes

The systematic application of DoE can dramatically enhance biosensor performance, as demonstrated in the following case studies which highlight the quantification of factor effects and the achievement of superior detection limits.

Case Study 1: Ultrasonic Pyrolytic Deposition of SnO₂ Thin Films

A study optimizing SnO₂ thin films for sensing applications used a 2^3 full factorial design to analyze the effects of suspension concentration (X₁), substrate temperature (X₂), and deposition height (X₃) on the intensity of the main XRD diffraction peak, a proxy for film quality [3]. The statistical analysis, summarized in the table below, identified suspension concentration as the most influential factor and revealed significant interaction effects.

Table 1: Statistical Analysis of a 2^3 Full Factorial Design for SnO₂ Thin Film Deposition [3]

| Factor | Effect Estimate | p-value | Conclusion |

|---|---|---|---|

| Suspension Concentration (X₁) | +125.8 | < 0.001 | Most significant positive effect |

| Substrate Temperature (X₂) | -15.2 | 0.02 | Significant negative effect |

| Deposition Height (X₃) | +8.5 | 0.08 | Not statistically significant |

| X₁*X₂ Interaction | -22.1 | 0.01 | Significant interaction |

| X₁*X₃ Interaction | +10.3 | 0.06 | Not statistically significant |

| Model R² | 0.9908 | - | Excellent predictive capability |

The optimal conditions were found at a high suspension concentration (0.002 g/mL), low substrate temperature (60°C), and short deposition height (10 cm). The model's high coefficient of determination (R² = 0.9908) confirmed its accuracy for predicting deposition outcomes [3].

Case Study 2: A Femtomolar Enzymatic Glucose Biosensor

In a groundbreaking study, a complex electrochemical biosensor was fabricated for glucose determination in 3D cell cultures. The biosensor structure was GO/AuPtPd NPs/Ch-IL/MWCNTs-IL/GCE. A two-step experimental design was employed to optimize the biosensor, which was then evaluated using multiple first-order multivariate calibration algorithms [6].

Table 2: Performance of an Optimized Glucose Biosensor using Different Calibration Algorithms [6]

| Performance Metric | Value | Conditions / Algorithm |

|---|---|---|

| Linear Detection Range | 0.5 to 35 fM | |

| Limit of Detection (LOD) | 0.21 fM | |

| Sensitivity | 0.9931 μA/fM | |

| Michaelis-Menten Constant (K_m) | 0.38 fM | Showcasing high affinity |

| Best-performing Algorithm | RBF-ANN and LS-SVM | |

The exploitation of the first-order advantage allowed for accurate glucose measurement despite interfering substances in the cell culture matrix. This case highlights how DoE guides not only the physical fabrication but also the optimal data processing strategy for the biosensor [6].

Detailed Experimental Protocol: A Representative DoE Workflow

The following protocol outlines the key steps for implementing a full factorial design in a biosensor fabrication process, using the optimization of a laser-scribed graphene (LSG) electrode as a representative example [5].

Step 1: Define the Objective and Response

Clearly state the goal. For example: "To optimize the manufacturing parameters of LSG electrodes to maximize the electrochemical active surface area (EASA)." The primary response (Y) is the calculated EASA, determined via cyclic voltammetry in a 20 mM K₃[Fe(CN)₆] solution using the Randles-Ševčík equation [5].
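To make the response calculation concrete, the sketch below rearranges the Randles-Ševčík equation (in its common 25 °C form, i_p = 2.69×10⁵ · n^(3/2) · A · D^(1/2) · C · ν^(1/2)) to solve for the area A. The function name, the peak current, the scan rate, and the diffusion coefficient used here are illustrative assumptions and are not values reported in [5].

```python
def easa_randles_sevcik(peak_current_a, scan_rate_v_s, conc_mol_cm3,
                        diffusion_cm2_s, n_electrons=1):
    """Electroactive area (cm^2) from the Randles-Sevcik equation at 25 C.

    i_p = 2.69e5 * n^(3/2) * A * D^(1/2) * C * v^(1/2)
    with i_p in A, D in cm^2/s, C in mol/cm^3 and v in V/s.
    """
    denom = (2.69e5 * n_electrons ** 1.5 * diffusion_cm2_s ** 0.5
             * conc_mol_cm3 * scan_rate_v_s ** 0.5)
    return peak_current_a / denom

# Illustrative inputs only: the peak current and scan rate are made up, and
# the diffusion coefficient is an assumed value that should be checked
# against the conditions actually used.
conc = 20e-3 / 1000.0   # 20 mM expressed in mol/cm^3
area = easa_randles_sevcik(peak_current_a=5.0e-4, scan_rate_v_s=0.05,
                           conc_mol_cm3=conc, diffusion_cm2_s=7.6e-6)
print(f"EASA = {area:.3f} cm^2")
```

Keeping the unit conversion explicit (mM to mol/cm³) avoids the most common source of error when this equation is used as a DoE response.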

Step 2: Select Factors and Levels

Identify critical controllable factors and assign two levels for each based on preliminary knowledge.

- Factor A (Laser Speed): Low = 15%, High = 25% of maximum speed.

- Factor B (Laser Power): Low = 12%, High = 18% of maximum power.

- Factor C (Electrode Width): Low = 0.7 mm, High = 1.4 mm.

Keep other parameters, such as laser focus and substrate material, constant [5].

Step 3: Establish the Experimental Design Matrix

For this 2^3 design, the matrix consists of 8 unique runs. It is good practice to include replicates (e.g., 2 replicates for a total of 16 runs) to estimate experimental error.

Table 3: Experimental Design Matrix for LSG Electrode Optimization [5]

| Standard Order | Run Order | A: Laser Speed | B: Laser Power | C: Electrode Width | Response: EASA (cm²) |

|---|---|---|---|---|---|

| 1 | 5 | -1 (15%) | -1 (12%) | -1 (0.7 mm) | ... |

| 2 | 2 | +1 (25%) | -1 (12%) | -1 (0.7 mm) | ... |

| 3 | 7 | -1 (15%) | +1 (18%) | -1 (0.7 mm) | ... |

| 4 | 8 | +1 (25%) | +1 (18%) | -1 (0.7 mm) | ... |

| 5 | 1 | -1 (15%) | -1 (12%) | +1 (1.4 mm) | ... |

| 6 | 3 | +1 (25%) | -1 (12%) | +1 (1.4 mm) | ... |

| 7 | 6 | -1 (15%) | +1 (18%) | +1 (1.4 mm) | ... |

| 8 | 4 | +1 (25%) | +1 (18%) | +1 (1.4 mm) | ... |

Step 4: Execute Experiments and Measure Responses

Perform the runs in a randomized order to avoid confounding the effects of factors with systematic external influences. Fabricate the LSG electrodes according to each run's parameters and measure the EASA for each [5].
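A randomized run sheet for the replicated 2^3 design can be produced with the standard library alone. This is a minimal sketch: the factor levels come from Step 2, while the dictionary keys, the two-replicate choice, and the fixed seed are assumptions made for illustration.

```python
import itertools
import random

levels = {
    "laser_speed_pct": (15, 25),
    "laser_power_pct": (12, 18),
    "electrode_width_mm": (0.7, 1.4),
}
n_replicates = 2

# All 2^3 level combinations, repeated once per replicate.
conditions = list(itertools.product(*levels.values()))
runs = [dict(zip(levels, combo), replicate=rep + 1)
        for rep in range(n_replicates) for combo in conditions]

rng = random.Random(42)   # fixed seed so the run sheet is reproducible
rng.shuffle(runs)

for run_order, run in enumerate(runs, start=1):
    print(run_order, run)
```

Here all 16 runs are shuffled together (complete randomization). If the replicates must be made on different days or with different reagent batches, shuffling within each replicate and treating the replicate as a block may be the better choice.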

Step 5: Analyze Data and Build Model

Use statistical software (e.g., JMP, Minitab) to perform ANOVA on the collected EASA data. Identify which main effects and interactions are statistically significant (typically p < 0.05). Construct a regression model to predict EASA based on the factor levels.

Step 6: Validate the Model and Determine Optimum

Perform confirmation experiments at the optimal settings predicted by the model. Compare the measured response with the predicted value to validate the model's accuracy. The optimized LSG electrode can then be used for its intended biosensing application, such as the label-free detection of L-histidine in artificial sweat [5].

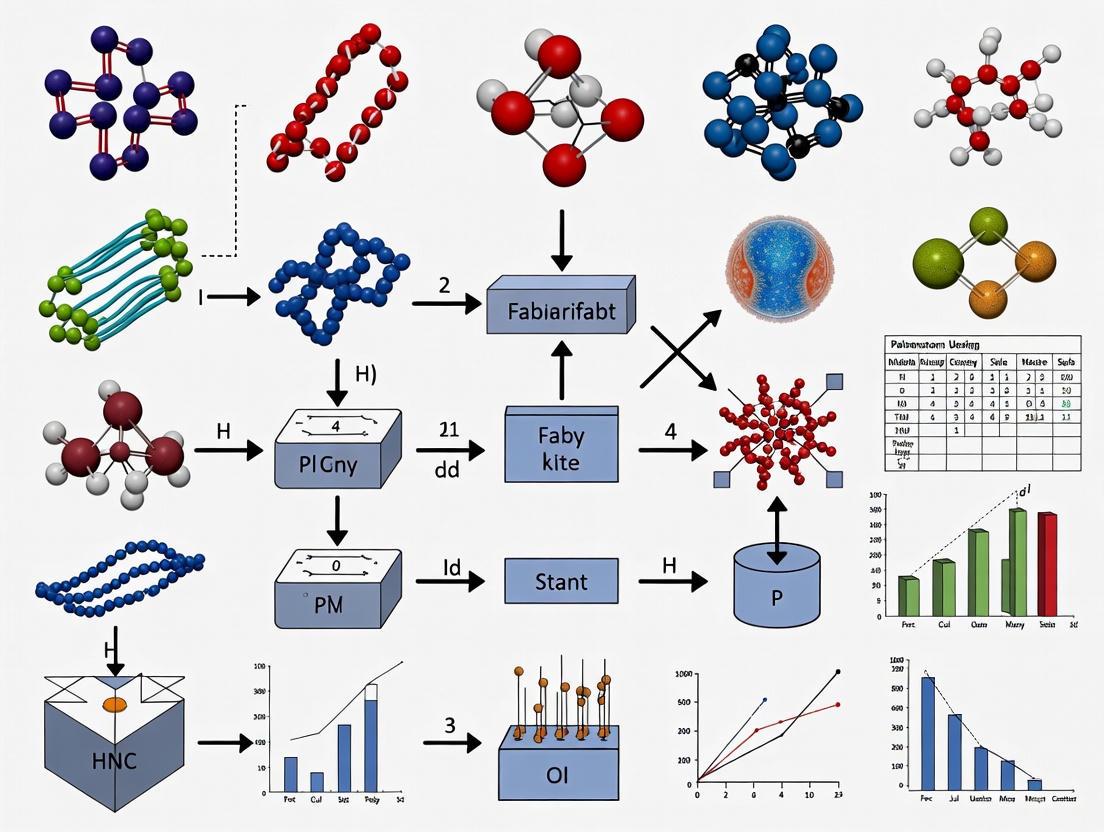

Visualizing the DoE Workflow for Biosensor Fabrication

The following diagram illustrates the iterative, model-based process of using Design of Experiments to optimize a biosensor, from initial planning to final validation.

Diagram: DoE Optimization Process

Essential Research Reagent Solutions for DoE in Biosensor Fabrication

The table below lists key materials and reagents commonly employed in the fabrication and characterization of biosensors, as referenced in the case studies.

Table 4: Key Research Reagents and Materials for Biosensor Fabrication [6] [3] [5]

| Reagent / Material | Function / Application | Example from Literature |

|---|---|---|

| Multi-walled Carbon Nanotubes (MWCNTs) | Enhances electron transfer and provides a high-surface-area platform for biolayer immobilization. | Used in a composite with ionic liquid for a glucose biosensor [6]. |

| Ionic Liquids (e.g., Ch-IL, MWCNTs-IL) | Improve electrochemical stability, conductivity, and serve as a dispersing agent for nanomaterials. | Component of the composite electrode for glucose sensing [6]. |

| Noble Metal Nanoparticles (Au, Pt, Pd) | Catalyze electrochemical reactions, enhance signal amplification, and facilitate biomolecule immobilization. | AuPtPd nanoparticles were electro-synthesized in the glucose biosensor [6]. |

| Glucose Oxidase (GOx) | Biological recognition element for glucose; catalyzes its oxidation. | Immobilized on the nanocomposite for the final biosensor structure [6]. |

| Tin(IV) Oxide (SnO₂) | n-type semiconductor used in thin-film-based sensors. | Optimized via DoE for deposition via ultrasonic spray pyrolysis [3]. |

| Polyimide Film | Flexible, thermally stable substrate for fabricating electrodes. | Used as the substrate for laser-scribed graphene (LSG) electrodes [5]. |

| Potassium Ferricyanide (K₃[Fe(CN)₆]) | Redox probe for electrochemical characterization of electrode surfaces. | Used in cyclic voltammetry to measure EASA of LSG electrodes [5]. |

Design of Experiments is an indispensable methodology that moves biosensor development from an artisanal, trial-and-error process to a systematic, efficient, and data-driven engineering discipline. By leveraging full factorial and other statistical designs, researchers can comprehensively explore complex fabrication parameter spaces, quantify interaction effects, and rapidly converge on optimal configurations. This approach not only enhances key performance metrics like sensitivity and detection limit but also improves the reproducibility and robustness of biosensors, paving the way for their successful translation into reliable point-of-care diagnostic devices [6] [1] [4].

In the field of biosensor fabrication, optimizing multiple parameters simultaneously is crucial for developing high-performance devices. Factorial designs provide a systematic and efficient experimental framework for this purpose, allowing researchers to study the effects of multiple fabrication factors and their interactions concurrently [7] [8]. Unlike the traditional one-factor-at-a-time (OFAT) approach, which can miss critical interactions between parameters, factorial designs enable scientists to explore how factors like substrate materials, bioreceptor concentration, and fabrication temperature work together to influence biosensor performance [8]. This methodology is particularly valuable in biosensor development where complex relationships between material properties, biological elements, and transduction mechanisms determine the final device characteristics such as sensitivity, stability, and reproducibility [9].

Core Terminology and Definitions

Fundamental Concepts

Factorial design operates on several key concepts that form the foundation for experimental planning and analysis:

- Factor: A major independent variable that the researcher controls or manipulates during the experiment. In biosensor fabrication, factors represent critical fabrication parameters that may influence the final device performance [7].

- Level: The specific values or settings chosen for each factor [7]. A factor must have at least two levels to be included in a factorial design.

- Response: The measured outcome or dependent variable that quantifies the experimental results [8]. In biosensor research, responses typically relate to device performance metrics.

- Interaction: When the effect of one factor on the response depends on the level of another factor [7]. Interactions indicate that factors do not operate independently.

Notation and Structure

Factorial designs are described using a shorthand notation where the number of digits indicates how many factors are being studied, and the value of each digit indicates how many levels each factor has [7]. For example, a 2×3 factorial design has two factors, with the first factor having two levels and the second having three levels, requiring 2×3=6 experimental runs [7]. A 2³ design indicates three factors, each with two levels, requiring 8 experimental runs [8].

Table: Factorial Design Notation Examples

| Design Notation | Number of Factors | Number of Levels per Factor | Total Experimental Runs |

|---|---|---|---|

| 2² | 2 | 2 each | 4 |

| 2³ | 3 | 2 each | 8 |

| 2×3 | 2 | 2 and 3 | 6 |

| 3³ | 3 | 3 each | 27 |
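The run counts in the table follow directly from multiplying the number of levels of each factor. A short sketch (the helper name `n_runs` is hypothetical):

```python
import math

def n_runs(levels_per_factor):
    """Number of runs in a full factorial: the product of the level counts."""
    return math.prod(levels_per_factor)

designs = {"2^2": [2, 2], "2^3": [2, 2, 2], "2x3": [2, 3], "3^3": [3, 3, 3]}
for name, levels in designs.items():
    print(name, n_runs(levels))   # 4, 8, 6 and 27 runs respectively
```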

Application in Biosensor Fabrication Research

Critical Factors in Biosensor Development

Biosensor fabrication involves numerous parameters that can be optimized through factorial designs. These factors typically correspond to the three fundamental components of a biosensor [9]:

- Substrate Factors: Material type, thickness, flexibility, and surface modification parameters.

- Bioreceptor Factors: Immobilization methods, concentration, orientation, and stability parameters.

- Transduction Factors: Active material properties, electrode design, and signal processing parameters.

The flexibility of biosensors presents unique design challenges, as substrates must withstand mechanical deformation while maintaining the function of bioreceptors and active elements [9]. Factorial designs are particularly valuable for navigating these complex parameter spaces efficiently.

Example: Bioink Formulation Optimization

Consider a biosensor development project focusing on 3D-bioprinted electrodes. Researchers might investigate two critical factors: bioink composition (with three levels: alginate-based, gelatin-based, or multicomponent) and crosslinking method (with two levels: ionic or UV) [10]. This would constitute a 3×2 factorial design requiring six experimental conditions. The responses might include electrical conductivity, printability, and long-term stability of the printed electrodes. Through such experimental structures, researchers can identify not only which bioink performs best overall but also whether the optimal crosslinking method depends on the specific bioink composition, which is valuable interaction information that would be missed in OFAT approaches [10].

Experimental Protocols and Methodologies

Designing a Factorial Experiment

Implementing a factorial design for biosensor optimization involves several methodical steps:

Factor Selection: Identify critical parameters likely to influence biosensor performance based on theoretical understanding and preliminary experiments [9]. Common factors in biosensor fabrication include material composition, surface treatment conditions, and bioreceptor immobilization parameters.

Level Determination: Establish appropriate levels for each factor that span a realistic operational range. For quantitative factors like temperature or concentration, levels should represent meaningful extremes (e.g., low and high values) that are practically achievable [8].

Experimental Randomization: Randomize the order of experimental runs to prevent confounding from extraneous variables [8]. This is particularly critical in biosensor fabrication where environmental conditions or reagent batches might introduce variability.

Response Measurement: Define precise protocols for measuring response variables relevant to biosensor function, such as sensitivity, limit of detection, response time, and stability [9].

Data Analysis: Employ appropriate statistical methods to quantify main effects and interaction effects, typically using analysis of variance (ANOVA) techniques.

Detailed Protocol: Optimizing Flexible Electrode Formulation

Table: Experimental Design for Electrode Formulation Optimization

| Factor | Level 1 | Level 2 | Level 3 | Control Parameters |

|---|---|---|---|---|

| Conductive Filler (%) | 15% | 25% | 35% | Base polymer: PDMS |

| Substrate Thickness (µm) | 100 | 200 | - | Curing temp: 70°C |

| Curing Time (min) | 30 | 60 | - | Mixing speed: 200 rpm |

Procedure:

- Prepare electrode formulations according to the 3×2×2 factorial design, resulting in 12 experimental conditions.

- Randomize the preparation order to minimize batch effects.

- Fabricate three biosensor replicates for each condition using standardized deposition techniques.

- Characterize the electrochemical performance of each biosensor using impedance spectroscopy.

- Subject samples to mechanical testing to assess flexibility and durability.

- Analyze data to identify significant main effects and interactions between factors.
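The first three steps of the procedure can be sketched as follows. The factor levels are taken from the table above; the dictionary keys, the seed, and the choice to randomize all builds together are assumptions made for illustration.

```python
import itertools
import random

factors = {
    "filler_pct": [15, 25, 35],
    "thickness_um": [100, 200],
    "curing_time_min": [30, 60],
}
replicates = 3

# Every combination of levels: 3 x 2 x 2 = 12 conditions.
conditions = [dict(zip(factors, combo))
              for combo in itertools.product(*factors.values())]

# One entry per sensor to fabricate: (condition index, replicate number).
build_list = [(cond_id, rep) for cond_id in range(len(conditions))
              for rep in range(1, replicates + 1)]
random.Random(0).shuffle(build_list)   # randomized preparation order

print(len(conditions), len(build_list))   # 12 conditions, 36 sensors
```

Each entry of `build_list` can be looked up in `conditions` to obtain the formulation for that build, which keeps the randomized order and the factor settings in one traceable record.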

Visualization of Factorial Design Concepts

Factorial Design Structure: This diagram illustrates the fundamental components of a factorial design and their relationships. Factors (independent variables) and their Levels (specific settings) combine to form the Experimental Structure. The measured Responses (dependent variables) are analyzed to identify both Main Effects (individual factor impacts) and Interactions (combined effects), which are then evaluated through Statistical Analysis to draw meaningful conclusions about the system being studied [7] [8].

Interpreting Results: This diagram outlines the three primary outcomes possible in factorial experiments. After testing all Experimental Conditions (combinations of factor levels), researchers may find: Main Effects Only (indicating factors act independently), Interaction Present (where the effect of one factor depends on another factor's level), or Null Result (where no factors significantly affect the response). Each outcome requires different interpretation and leads to distinct conclusions about the system [7].

Research Reagent Solutions for Biosensor Fabrication

Table: Essential Materials for Biosensor Fabrication Research

| Material Category | Specific Examples | Function in Biosensor Development |

|---|---|---|

| Substrate Materials | PET, Polyimide, PDMS, Graphene | Provides mechanical support and flexibility; forms the primary structure of the biosensor [9]. |

| Biorecognition Elements | Antibodies, Aptamers, Enzymes, DNA/RNA | Specifically binds to target analytes; provides detection specificity [9]. |

| Transduction Materials | Conductive polymers, Metal nanoparticles, Carbon nanomaterials | Converts biological recognition events into measurable signals [9]. |

| Bioink Components | Alginate, Gelatin, Multicomponent hydrogels | Enables 3D bioprinting of biosensor structures; provides environment for bioreceptor immobilization [10]. |

| Immobilization Reagents | Glutaraldehyde, EDC/NHS, SAMs | Fixes biorecognition elements to substrate while maintaining functionality [9]. |

Advantages Over Traditional Experimental Approaches

Factorial designs offer several significant advantages for biosensor research compared to one-factor-at-a-time approaches:

Interaction Detection: The ability to identify interactions between fabrication parameters is perhaps the most valuable feature of factorial designs [7] [8]. For instance, the optimal temperature for bioreceptor immobilization might depend on the substrate material being used—a critical insight that would be missed in OFAT experiments.

Efficiency: Factorial designs extract more information per experimental run than OFAT approaches [8]. A full factorial design with k factors each at 2 levels requires 2^k runs, and every run contributes to the estimate of every effect, whereas OFAT needs additional runs to estimate each effect with comparable precision.

Generalizability: Results from factorial designs apply across a broader range of conditions since each factor is tested at multiple levels of other factors [8]. This leads to more robust biosensor fabrication protocols that are less sensitive to minor variations in process conditions.

Statistical Power: Factorial designs allow for more precise estimation of main effects because each effect is estimated across the varying conditions of other factors, providing a better representation of real-world variability [8].

These advantages make factorial designs particularly suitable for complex biosensor optimization problems where multiple interacting parameters determine final device performance and where experimental resources including specialized materials and characterization equipment are often limited [9].

In the development of high-performance biosensors, the optimization of fabrication parameters—such as probe concentration, immobilization time, and substrate chemistry—is paramount. A systematic approach to experimentation is required to navigate this multi-factor space efficiently. Factorial designs provide a powerful statistical framework for this purpose, enabling researchers to understand complex factor effects and interactions. This whitepaper details three core methodologies—Full Factorial, Fractional Factorial, and Response Surface Methodologies—within the context of optimizing biosensor fabrication for enhanced sensitivity and specificity.

Full Factorial Designs

A full factorial design investigates every possible combination of factors and their levels. For k factors, each at 2 levels (typically denoted as -1 for low and +1 for high), this requires 2^k experimental runs.

2.1. Application in Biosensor Fabrication

A study aimed to optimize the signal-to-noise ratio of an electrochemical DNA biosensor. The three factors investigated were:

- A: Probe DNA Concentration (nM)

- B: Immobilization Time (minutes)

- C: Hybridization Temperature (°C)

A 2³ full factorial design was employed, requiring 8 experiments.

2.2. Experimental Protocol

- Substrate Preparation: Clean gold electrodes via electrochemical cycling in sulfuric acid.

- Probe Immobilization: For each run, apply the specified probe DNA concentration (A) to the electrode surface and allow immobilization for the set time (B).

- Hybridization: Introduce the target DNA sequence and incubate at the designated temperature (C) for 60 minutes.

- Signal Measurement: Measure the electrochemical current (e.g., via Differential Pulse Voltammetry) for each biosensor. The response is the recorded current in microamps (µA).

2.3. Data Analysis

The quantitative results from the hypothetical experiment are summarized below.

Table 1: 2³ Full Factorial Design Matrix and Results for DNA Biosensor Optimization

| Standard Order | A: Probe (nM) | B: Time (min) | C: Temp (°C) | Signal (µA) |

|---|---|---|---|---|

| 1 | 25 (-1) | 30 (-1) | 25 (-1) | 1.2 |

| 2 | 100 (+1) | 30 (-1) | 25 (-1) | 2.1 |

| 3 | 25 (-1) | 120 (+1) | 25 (-1) | 1.8 |

| 4 | 100 (+1) | 120 (+1) | 25 (-1) | 3.0 |

| 5 | 25 (-1) | 30 (-1) | 50 (+1) | 0.8 |

| 6 | 100 (+1) | 30 (-1) | 50 (+1) | 1.5 |

| 7 | 25 (-1) | 120 (+1) | 50 (+1) | 1.1 |

| 8 | 100 (+1) | 120 (+1) | 50 (+1) | 2.4 |

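Analysis of these data through ANOVA (Analysis of Variance) would reveal the main effects of each factor and their two- and three-way interactions. For instance, the data suggest a strong positive effect of increasing Probe Concentration (A), a positive effect of longer Immobilization Time (B), and a negative effect of high Hybridization Temperature (C). Because each condition was run only once, formal significance testing requires either replicates or pooling the higher-order interactions into the error term.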
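The effect estimates behind this reading of the table can be computed directly from the eight signals. The sketch below assumes NumPy and uses the standard contrast definition (mean response at the +1 level minus mean response at the -1 level); the names `design`, `signal`, and `effect` are illustrative.

```python
import itertools
import numpy as np

# Coded design in standard order (A alternates fastest), matching the table.
design = np.array([(a, b, c)
                   for c, b, a in itertools.product([-1, 1], repeat=3)])
signal = np.array([1.2, 2.1, 1.8, 3.0, 0.8, 1.5, 1.1, 2.4])   # µA

def effect(columns):
    """Mean signal where the contrast is +1 minus mean where it is -1."""
    contrast = np.prod(design[:, columns], axis=1)
    return signal[contrast == 1].mean() - signal[contrast == -1].mean()

names = "ABC"
effects = {}
for order in (1, 2, 3):
    for cols in itertools.combinations(range(3), order):
        label = "".join(names[i] for i in cols)
        effects[label] = effect(list(cols))

for label, value in effects.items():
    print(label, round(value, 3))
```

For these data the main effects come out at about +1.03 µA (A), +0.68 µA (B), and -0.58 µA (C), with AB (about +0.23 µA) the largest interaction. With a single run per condition these are point estimates only, with no independent estimate of experimental error.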

Diagram 1: Full Factorial Experimental Workflow

Fractional Factorial Designs

When the number of factors is large, a full factorial design becomes prohibitively expensive. Fractional factorial designs use a carefully selected fraction (e.g., 1/2, 1/4) of the full factorial runs, sacrificing the ability to estimate some higher-order interactions, which are often negligible.

3.1. Application in Biosensor Fabrication

For screening 5 factors affecting a nanoparticle-enhanced optical biosensor, a 2^(5-1) fractional factorial design (Resolution V) can be used. This requires only 16 runs instead of 32.

3.2. Experimental Protocol

- Factors: A) Nanoparticle Size, B) Coating Thickness, C) Laser Power, D) Flow Rate, E) Buffer pH.

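- Design: Generate a 16-run design matrix using a defining relation (e.g., I = ABCDE). This design aliases each main effect with a four-factor interaction and each two-factor interaction with a three-factor interaction, so all main effects and two-factor interactions can be estimated clearly when higher-order interactions are negligible.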

- Execution: Fabricate biosensors and measure the optical response (e.g., shift in resonance wavelength) for each of the 16 experimental conditions.
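The design step above can be sketched by building a full 2^4 design in A to D and generating the fifth column as E = ABCD, which is equivalent to the defining relation I = ABCDE. NumPy is assumed and the variable names are illustrative.

```python
import itertools
import numpy as np

# Full 2^4 design in A, B, C, D; the fifth factor is generated as E = ABCD.
base = np.array(list(itertools.product([-1, 1], repeat=4)))
e_column = base.prod(axis=1, keepdims=True)
design = np.hstack([base, e_column])     # 16 runs x 5 factors
A, B, C, D, E = design.T

print(design.shape)                      # (16, 5)

# Main-effect columns remain mutually orthogonal.
orthogonal = np.array_equal(design.T @ design, 16 * np.eye(5, dtype=int))
print(orthogonal)                        # True

# Alias check: the AB contrast is identical to the CDE contrast.
ab_aliased_with_cde = np.array_equal(A * B, C * D * E)
print(ab_aliased_with_cde)               # True
```

The alias check shows what a Resolution V design gives up: the AB estimate cannot be separated from CDE, which is acceptable only if three-factor interactions are assumed to be negligible.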

Table 2: Comparison of Full vs. Fractional Factorial Designs

| Feature | Full Factorial | Fractional Factorial (Resolution V) |

|---|---|---|

| Runs for 5 Factors | 32 | 16 |

| Main Effects | Unambiguously estimated | Unambiguously estimated |

| Two-Factor Interactions | All estimated | All estimated, each aliased with a three-factor interaction |

| Aliasing | None | Present, but controlled by design resolution |

| Primary Use | Detailed study of few factors | Screening many factors to identify vital few |

| Efficiency | Low | High |

Diagram 2: Fractional Factorial Screening Workflow

Response Surface Methodologies (RSM)

Once the critical factors are identified via fractional factorial designs, RSM is used to model curvature and find the true optimum. Central Composite Design (CCD) is the most common RSM design.

4.1. Application in Biosensor Fabrication

After identifying Probe Concentration (X1) and Immobilization Time (X2) as vital factors, a CCD is used to model the response surface and find the parameter set that maximizes the biosensor's current response.

4.2. Experimental Protocol

- Design: A CCD includes factorial points, axial (star) points, and center points. For 2 factors, this typically requires 9-13 runs.

- Execution: Conduct experiments according to the CCD matrix, which includes levels beyond the original -1/+1 range (e.g., -α, +α).

- Modeling: Fit the data to a second-order polynomial model:

Y = β₀ + β₁X₁ + β₂X₂ + β₁₁X₁² + β₂₂X₂² + β₁₂X₁X₂ + ε

- Optimization: Use the fitted model to generate a 3D response surface plot and contour plot to visually identify the optimum.

Table 3: Central Composite Design (CCD) Matrix and Results

| Run Type | X1: Probe (nM) | X2: Time (min) | Signal (µA) |

|---|---|---|---|

| Factorial | 25 (-1) | 30 (-1) | 1.2 |

| Factorial | 100 (+1) | 30 (-1) | 2.1 |

| Factorial | 25 (-1) | 120 (+1) | 1.8 |

| Factorial | 100 (+1) | 120 (+1) | 3.0 |

| Axial | 10 (-α) | 75 (0) | 0.9 |

| Axial | 115 (+α) | 75 (0) | 2.8 |

| Axial | 62.5 (0) | 15 (-α) | 1.5 |

| Axial | 62.5 (0) | 135 (+α) | 2.2 |

| Center | 62.5 (0) | 75 (0) | 2.5 |

| Center | 62.5 (0) | 75 (0) | 2.6 |

| Center | 62.5 (0) | 75 (0) | 2.4 |
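As a minimal sketch of the modeling and optimization steps, the second-order polynomial can be fitted to the eleven runs in the table by ordinary least squares and its stationary point located analytically. NumPy is assumed; the coding constants (center 62.5 nM and 75 min, half-ranges 37.5 nM and 45 min) are inferred from the factorial points in the table, and the variable names are illustrative.

```python
import numpy as np

probe = np.array([25, 100, 25, 100, 10, 115, 62.5, 62.5, 62.5, 62.5, 62.5])
time_min = np.array([30, 30, 120, 120, 75, 75, 15, 135, 75, 75, 75])
signal = np.array([1.2, 2.1, 1.8, 3.0, 0.9, 2.8, 1.5, 2.2, 2.5, 2.6, 2.4])

# Code each factor so the factorial points sit at -1 and +1.
x1 = (probe - 62.5) / 37.5
x2 = (time_min - 75.0) / 45.0

# Model columns: 1, x1, x2, x1^2, x2^2, x1*x2
X = np.column_stack([np.ones_like(x1), x1, x2, x1**2, x2**2, x1 * x2])
coef, *_ = np.linalg.lstsq(X, signal, rcond=None)
b0, b1, b2, b11, b22, b12 = coef

# Stationary point: set the gradient of the fitted quadratic to zero.
hessian = np.array([[2 * b11, b12], [b12, 2 * b22]])
stationary = np.linalg.solve(hessian, -np.array([b1, b2]))
eigenvalues = np.linalg.eigvalsh(hessian)   # all negative -> a maximum

print("coefficients:", np.round(coef, 3))
print("stationary point (coded):", np.round(stationary, 2))
print("Hessian eigenvalues:", np.round(eigenvalues, 3))
```

The sign of the Hessian eigenvalues indicates whether the stationary point is a maximum, a minimum, or a saddle. If the point falls outside the coded range covered by the axial runs, it is an extrapolation and should be confirmed experimentally before being treated as the optimum.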

Diagram 3: Response Surface Methodology Optimization Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Biosensor Fabrication Experiments

| Item | Function in Experiment |

|---|---|

| Functionalized Substrate (e.g., Gold slide, Graphene oxide) | Provides a surface for the immobilization of biorecognition elements (probes). |

| Biorecognition Element (e.g., DNA probe, Antibody, Enzyme) | The core component that confers specificity by binding to the target analyte. |

| Crosslinking Reagents (e.g., EDC/NHS) | Facilitates covalent bonding between the probe and the substrate surface. |

| Blocking Agents (e.g., BSA, Ethanolamine) | Reduces non-specific binding to the sensor surface, improving signal-to-noise ratio. |

| Target Analyte | The molecule of interest (e.g., a specific DNA sequence, protein, or small molecule) whose detection is the goal. |

| Signal Transduction Reagent (e.g., Redox mediator, Fluorescent dye) | Generates a measurable signal (electrical, optical) upon target binding. |

| Buffer Solutions (e.g., PBS, SSC) | Maintains stable pH and ionic strength, which are critical for biomolecular interactions. |

Advantages Over One-Variable-at-a-Time (OVAT) Optimization

In the field of biosensor fabrication and metabolic engineering, optimization of multiple parameters is crucial for achieving peak performance. Traditional One-Variable-at-a-Time (OVAT) approaches have been widely used due to their straightforward implementation, where researchers optimize a single factor while keeping all others constant. However, this method presents significant limitations, especially in complex, multivariate systems where factors interact in non-linear ways. The emergence of systematic optimization approaches, particularly factorial design and Response Surface Methodology (RSM), represents a paradigm shift, enabling researchers to efficiently navigate complex experimental spaces and uncover optimal conditions that would remain hidden with OVAT approaches [11] [12].

The fundamental weakness of OVAT optimization lies in its inability to detect interactions between variables. In biosensor systems, where fabrication parameters, biological recognition elements, and detection conditions often exhibit interdependent effects, this limitation becomes critical. Experimental design (DoE) addresses this deficiency by systematically varying all factors simultaneously, allowing for the construction of mathematical models that accurately predict system behavior across the entire experimental domain [11]. This technical guide explores the distinct advantages of multivariate optimization approaches over OVAT methods, providing researchers with the theoretical foundation and practical protocols needed to implement these powerful strategies in biosensor development and related fields.

Theoretical Foundations and Limitations of OVAT

The OVAT Methodology and Its Inherent Flaws

The OVAT approach follows a sequential optimization path where each factor is optimized individually while other parameters remain fixed. This method appears logically sound initially but contains fundamental flaws that become apparent in complex systems. The procedure typically begins with a baseline condition, after which Factor A is varied while Factors B, C, and D remain constant. Once the "optimal" value for Factor A is determined, it remains fixed at that value while Factor B is varied, and so on throughout all parameters of interest [12] [2].

The primary limitation of this approach is its inability to detect interaction effects between variables. In biological and sensor systems, it is common for one factor to influence the effect of another—a phenomenon that consistently eludes detection in OVAT approaches [11]. Additionally, the so-called optimum identified through OVAT is highly dependent on the starting conditions and the order in which variables are optimized, often resulting in suboptimal performance [12] [2]. As the number of variables increases, OVAT becomes increasingly resource-intensive while providing diminishing returns in optimization quality. For systems with numerous interacting components, such as multi-gene metabolic pathways or complex biosensor architectures, OVAT may never reach the true global optimum, instead becoming trapped in local performance maxima [2].
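The way an interaction can mislead a sequential search is easy to reproduce on a toy problem. The sketch below is illustrative only: the four response values are invented, not taken from any cited study, and `ovat_search` and `factorial_search` are hypothetical helper names.

```python
from itertools import product

# Hypothetical responses (arbitrary units) at the four corners of a
# two-factor domain, coded -1 (low) / +1 (high). The values are invented
# so that the two factors interact strongly.
RESPONSE = {
    (-1, -1): 5.0,
    (+1, -1): 4.0,
    (-1, +1): 4.0,
    (+1, +1): 9.0,
}

def ovat_search(start, levels=(-1, +1)):
    """Optimize one factor at a time, freezing each factor at its best level."""
    current = list(start)
    for i in range(len(current)):
        best_level = max(
            levels,
            key=lambda lv: RESPONSE[tuple(current[:i] + [lv] + current[i + 1:])],
        )
        current[i] = best_level
    return tuple(current)

def factorial_search(levels=(-1, +1), k=2):
    """Evaluate every corner of the 2^k design and return the best one."""
    return max(product(levels, repeat=k), key=lambda pt: RESPONSE[pt])

ovat_best = ovat_search(start=(-1, -1))
grid_best = factorial_search()
print("OVAT settles at", ovat_best, "with response", RESPONSE[ovat_best])
print("Factorial finds", grid_best, "with response", RESPONSE[grid_best])
```

Starting from the low/low baseline, each single-factor change lowers the response, so the sequential search never leaves its starting corner, while the four-run factorial sees the high/high corner directly. Calling `ovat_search(start=(+1, +1))` reaches the better corner, which shows how much the OVAT result depends on where the search begins.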

Quantitative Comparison: Experimental Efficiency

The experimental burden of OVAT increases multiplicatively with additional factors, while multivariate approaches like factorial design offer more efficient exploration of the parameter space. The table below illustrates this dramatic difference in experimental requirements.

Table 1: Experimental Effort Comparison: OVAT vs. Factorial Design

| Number of Variables | Number of Levels | OVAT Experiments Required | Full Factorial Design Experiments | Efficiency Ratio |
|---|---|---|---|---|
| 3 | 2 | 12 | 8 | 1.5× |
| 4 | 2 | 20 | 16 | 1.25× |
| 6 | 2 | 44 | 64 | 0.69× |
| 6 | 3 | 728 | 729 | ~1× |
| 6 | Mixed (2-4 levels) | 486 | 30 (D-optimal design, not full factorial) | 16.2× [12] |

As demonstrated in the table, while full factorial designs can sometimes require more experiments than OVAT for systems with many factors and levels, strategic experimental designs like D-optimal designs can dramatically reduce the experimental burden. In one documented case, optimizing a paper-based electrochemical biosensor for miRNA detection required only 30 experiments with a D-optimal design compared to 486 experiments with an OVAT approach—a 94% reduction in experimental effort [12].
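The arithmetic behind these comparisons can be checked in a few lines. This is a minimal sketch: the helper names are hypothetical, and only the 486-run and 30-run figures come from the text.

```python
from math import prod

def full_factorial_runs(levels_per_factor):
    """Number of runs in a full factorial: the product of the level counts."""
    return prod(levels_per_factor)

def percent_reduction(baseline_runs, design_runs):
    """Relative saving of a design versus a baseline, in percent."""
    return 100.0 * (1.0 - design_runs / baseline_runs)

for k in (3, 4, 6):
    print(k, "factors at 2 levels ->", full_factorial_runs([2] * k), "runs")
print("6 factors at 3 levels ->", full_factorial_runs([3] * 6), "runs")

# Run counts quoted in the text for the miRNA biosensor case [12].
print(f"Reduction: {percent_reduction(486, 30):.1f}%")
print(f"Ratio: {486 / 30:.1f}x")
```

The full factorial count grows as the product of the level counts, which is why reduced designs become attractive once six or more factors are involved.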

Multivariate Optimization Approaches: Methodologies and Protocols

Fundamental Concepts of Factorial Design

Factorial designs form the foundation of multivariate optimization, systematically exploring how multiple factors simultaneously affect a response variable. The most basic is the 2^k factorial design, where k represents the number of factors, each investigated at two levels (typically coded as -1 for low level and +1 for high level) [11]. These designs allow researchers to estimate not only the main effects of each factor but also interaction effects between factors.

For a 2^2 factorial design (two factors, each at two levels), the mathematical model takes the form:

Y = b₀ + b₁X₁ + b₂X₂ + b₁₂X₁X₂ [11]

Where Y is the predicted response, b₀ is the overall mean response, b₁ and b₂ represent the main effects of factors X₁ and X₂, and b₁₂ quantifies the interaction effect between X₁ and X₂. The experimental matrix for this design consists of four experiments (2^2), with responses measured at each corner of the experimental domain [11].
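For readers who want to see where the coefficients come from, the four responses determine them directly. The expressions below assume a run labelling that is not in the original text: y₁ is measured at (X₁, X₂) = (-1, -1), y₂ at (+1, -1), y₃ at (-1, +1), and y₄ at (+1, +1).

```latex
\begin{aligned}
b_0    &= \tfrac{1}{4}\,(y_1 + y_2 + y_3 + y_4) \\
b_1    &= \tfrac{1}{4}\,(-y_1 + y_2 - y_3 + y_4) \\
b_2    &= \tfrac{1}{4}\,(-y_1 - y_2 + y_3 + y_4) \\
b_{12} &= \tfrac{1}{4}\,(y_1 - y_2 - y_3 + y_4)
\end{aligned}
```

Each coefficient is the average of the responses weighted by the sign of the corresponding model term in each run. In this coded form a coefficient equals half of the classical effect, that is, half of the mean change in Y when the factor moves from its low to its high level.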

When system curvature is suspected, second-order models become necessary. Central composite designs (CCD) augment initial factorial designs with additional points (axial and center points) to estimate quadratic terms, thereby enhancing the predictive capability of the model [11] [13]. These designs are particularly valuable when approaching optimal conditions where response surfaces often exhibit curvature.

Key Experimental Protocols

Protocol 1: Screening Significant Factors Using Full Factorial Design

Objective: Identify factors with significant effects on biosensor performance from a large set of potential variables [11] [2].

Procedure:

- Select 3-5 factors suspected to influence the critical response (e.g., sensitivity, limit of detection).

- Define practical high (+1) and low (-1) levels for each factor based on preliminary knowledge.

- Execute all 2^k experiments in randomized order to minimize systematic error.

- Measure responses for each experimental combination.

- Calculate main effects and interaction effects using statistical software.

- Identify statistically significant factors (typically using ANOVA) for further optimization.
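The design-generation and effect-calculation steps above can be sketched without specialized software. The factor names and the response formula below are hypothetical placeholders used only to exercise the code. A real study would use measured responses and a proper ANOVA.

```python
import random
from itertools import product

def two_level_design(factor_names):
    """All 2^k combinations of coded levels (-1, +1) as a list of dicts."""
    return [dict(zip(factor_names, combo))
            for combo in product((-1, +1), repeat=len(factor_names))]

def randomized(runs, seed=0):
    """Return the runs in a reproducibly shuffled execution order."""
    order = list(runs)
    random.Random(seed).shuffle(order)
    return order

def main_effect(runs, responses, factor):
    """Mean response at the high level minus mean response at the low level."""
    high = [y for run, y in zip(runs, responses) if run[factor] == +1]
    low = [y for run, y in zip(runs, responses) if run[factor] == -1]
    return sum(high) / len(high) - sum(low) / len(low)

# Hypothetical factor names and a made-up response, for illustration only.
factors = ["probe_conc", "incubation_time", "pH"]
runs = randomized(two_level_design(factors), seed=42)
responses = [10 + 3 * r["probe_conc"] - 1 * r["pH"] for r in runs]

for name in factors:
    print(name, main_effect(runs, responses, name))
```

Because the design is balanced, each main effect is estimated from all eight runs, and the shuffled order does not change the estimates.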

Application Example: This approach was used to identify significant nutrient factors affecting recombinant protein production in E. coli, leading to 18-fold higher enzyme activity compared to previous reports [2].

Protocol 2: Response Surface Optimization with Central Composite Design

Objective: Locate optimal factor levels and characterize the response surface near the optimum [13] [14].

Procedure:

- Select 2-4 most significant factors identified from screening designs.

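- Define five levels for each factor (-α, -1, 0, +1, +α), where α depends on the number of factors and on the design properties required (for a rotatable design built on a full 2^k factorial, α = (2^k)^(1/4)).

- Execute the experimental sequence comprising 2^k factorial points, 2k axial points, and 3-6 center points (about 11-14 runs for two factors, 17-20 for three, and 27-30 for four).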

- Fit experimental data to a second-order polynomial model.

- Validate model adequacy through residual analysis and lack-of-fit tests.

- Generate response surface plots and contour plots to visualize factor relationships.

- Determine optimal factor levels using numerical optimization or ridge analysis.
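The point layout of a central composite design can be generated directly from its definition. The sketch below assumes the rotatable choice α = (2^k)^(1/4) and five center points. Both are common defaults rather than values prescribed by the cited studies, and `central_composite` is a hypothetical helper name.

```python
from itertools import product

def central_composite(k, n_center=5):
    """Coded points of a circumscribed CCD: factorial + axial + center points."""
    alpha = (2 ** k) ** 0.25  # rotatable choice; other alpha values are also used
    factorial = [tuple(float(v) for v in pt) for pt in product((-1, +1), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1.0, +1.0):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(tuple(point))
    center = [tuple([0.0] * k)] * n_center
    return alpha, factorial + axial + center

for k in (2, 3, 4):
    alpha, design = central_composite(k, n_center=5)
    print(f"k={k}: alpha={alpha:.3f}, runs={len(design)}")
```

With these settings the run count is 2^k + 2k + 5, so the design stays compact for two or three factors and grows quickly beyond four.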

Application Example: Researchers optimized an amperometric immunosensor for tetanus antibody detection using a circumscribed central composite design (CCCD), efficiently optimizing four key parameters (BSA concentration, incubation times, and antibody dilution) that would have required extensive experimentation with OVAT [13].

Protocol 3: D-Optimal Design for Constrained Experimental Space

Objective: Optimize multiple factors with different numbers of levels when classical designs are inefficient or the experimental space is constrained [12].

Procedure:

- Identify all factors to be optimized, noting the number of levels for each.

- Define any constraints or forbidden combinations based on practical limitations.

- Specify the desired mathematical model (typically quadratic).

- Use statistical software to generate a design that maximizes the determinant of the information matrix (X'X).

- Execute the experiments in randomized order.

- Analyze data using regression modeling to identify optimal conditions.
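To make the D-optimality criterion concrete, the sketch below picks runs from a small candidate grid by brute force so that det(X'X) is as large as possible. It is a toy illustration under stated assumptions: two factors, the interaction model introduced earlier, and exhaustive search instead of the exchange algorithms that statistical packages typically use. The forbidden combination is hypothetical.

```python
from itertools import combinations, product

def model_row(x1, x2):
    """Model terms for Y = b0 + b1*X1 + b2*X2 + b12*X1*X2."""
    return [1, x1, x2, x1 * x2]

def determinant(m):
    """Determinant by cofactor expansion (fine for small integer matrices)."""
    if len(m) == 1:
        return m[0][0]
    total = 0
    for j, value in enumerate(m[0]):
        minor = [row[:j] + row[j + 1:] for row in m[1:]]
        total += (-1) ** j * value * determinant(minor)
    return total

def information_det(points):
    """det(X'X) for the design whose runs are the given points."""
    x = [model_row(*pt) for pt in points]
    n_terms = len(x[0])
    xtx = [[sum(row[a] * row[b] for row in x) for b in range(n_terms)]
           for a in range(n_terms)]
    return determinant(xtx)

def d_optimal(candidates, n_runs, forbidden=()):
    """Exhaustively pick the n_runs candidates that maximize det(X'X)."""
    excluded = set(forbidden)
    allowed = [c for c in candidates if c not in excluded]
    return max(combinations(allowed, n_runs), key=information_det)

candidates = list(product((-1, 0, 1), repeat=2))  # 3 x 3 grid of coded levels
best = d_optimal(candidates, n_runs=4)
print(sorted(best), information_det(best))

# Hypothetical constraint: the high/high combination cannot be fabricated.
constrained = d_optimal(candidates, n_runs=5, forbidden=[(1, 1)])
print(sorted(constrained), information_det(constrained))
```

Without constraints the search recovers the four corners of the 2^2 factorial. Once a corner is forbidden, it returns the most informative set among the remaining candidates, which is the situation D-optimal designs are meant for.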

Application Example: A hybridization-based paper electrochemical biosensor for miRNA-29c detection was optimized using a D-optimal design, evaluating six variables with only 30 experiments instead of the 486 required by OVAT, resulting in a 5-fold improvement in detection limit [12].

Comparative Analysis: OVAT vs. Multivariate Approaches

Performance and Efficiency Outcomes

Direct comparisons between OVAT and multivariate approaches demonstrate clear advantages for designed experiments across multiple performance metrics.

Table 2: Documented Performance Improvements with Multivariate Optimization

| Application Domain | Optimization Method | Key Improvement Over OVAT | Reference |
|---|---|---|---|
| Electrochemical biosensor for miRNA-29c | D-optimal design | 5-fold improvement in LOD; 94% reduction in experiments | [12] |
| Glucose biosensor | Full factorial design | 93% reduction in nanoconjugate usage; operational stability improved from 50% to 75% current retention | [12] |
| Pigment production in T. albobiverticillius | Central Composite Design | Identified optimal nutrient concentrations (3 g/L yeast extract, 1 g/L K₂HPO₄, 0.2 g/L MgSO₄·7H₂O) that significantly increased yield | [14] |
| Heavy metal detection sensor | Central Composite Design | Lower detection limit (1 nM vs. 12 nM with OVAT) with only 13 experiments | [12] |
| Recombinant protein production | Full factorial design | 18-fold higher enzyme activity and product titers | [2] |

Advantages of Multivariate Approaches

The documented case studies reveal several consistent advantages of multivariate optimization over OVAT:

Detection of Interaction Effects: Multivariate approaches can identify and quantify interactions between factors, which is impossible with OVAT. For instance, the effect of gold nanoparticle concentration in a biosensor might depend on the immobilization method used—a critical insight that would be missed with sequential optimization [11].

Reduced Experimental Burden: By testing factors simultaneously rather than sequentially, multivariate approaches typically require fewer experiments to reach optimum conditions, saving time and resources [12].

Comprehensive Process Understanding: The mathematical models generated from designed experiments provide predictive capability across the entire experimental domain, not just at the tested points [11].

Identification of True Optima: By considering the simultaneous effects of all factors, multivariate approaches are more likely to identify global optima rather than being trapped in local performance maxima [2].

Robustness to Factor Interdependence: Biological systems typically exhibit complex interdependencies between factors. Multivariate approaches explicitly model these relationships, leading to more robust optimization [11] [2].

Implementation Framework and Technical Considerations

Research Reagent Solutions for Experimental Design

Successful implementation of multivariate optimization requires specific reagents and materials tailored to the experimental system.

Table 3: Essential Research Reagents for Biosensor Optimization Studies

| Reagent/Material Category | Specific Examples | Function in Optimization | Considerations |
|---|---|---|---|
| Conductive Inks/Nanomaterials | Carbon nanoparticles, silver nanoparticles, graphene solutions [15] | Electrode modification to enhance signal transduction | Concentration, deposition method, compatibility with substrate |
| Biological Recognition Elements | Antibodies, DNA probes, enzymes, aptamers [16] [17] | Target capture and specific binding | Immobilization method, concentration, orientation, stability |
| Blocking Agents/Passivation | Bovine Serum Albumin (BSA), casein, synthetic blockers [13] | Reduce non-specific binding | Concentration, incubation time, compatibility with detection method |
| Signal Generation Components | Enzymes (HRP, AP), redox mediators, electrochemical reporters [13] | Convert biological event to measurable signal | Concentration, stability, kinetic parameters |
| Substrate Materials | Polyimide, screen-printed electrodes, fabric substrates [15] [18] | Physical support for biosensor construction | Surface chemistry, compatibility with biological elements |
| Surface Modification Reagents | EDC/NHS, glutaraldehyde, dopamine [17] [18] | Covalent immobilization of recognition elements | Concentration, reaction time, effect on biorecognition |

Workflow Integration and Decision Framework

Implementing multivariate optimization requires strategic planning and integration with existing research workflows. The following diagram illustrates a systematic approach for transitioning from OVAT to multivariate optimization methods:

Diagram: Experimental Design Selection Workflow.

This decision framework helps researchers select the appropriate experimental design based on their specific optimization goals, number of factors, and resource constraints. The systematic approach ensures efficient resource allocation while maximizing information gain from the optimization process.

The limitations of One-Variable-at-a-Time optimization become increasingly evident as biosensor systems grow more complex. The inability to detect factor interactions, the tendency to converge on local optima, and the inefficient use of experimental resources make OVAT unsuitable for modern biosensor development and related biotechnology applications. In contrast, multivariate optimization approaches including factorial designs, response surface methodology, and D-optimal designs provide a rigorous framework for efficient, comprehensive system optimization.

The documented evidence demonstrates that systematic experimental design can reduce experimental effort by over 90% while simultaneously improving key performance metrics such as detection limits, sensitivity, and stability. By adopting these methodologies, researchers can not only accelerate development timelines but also gain deeper insights into their systems through predictive mathematical models. As the field of biosensing continues to advance toward increasingly sophisticated multiplexed detection systems and point-of-care applications, the implementation of robust multivariate optimization strategies will become increasingly essential for developing competitive, high-performance diagnostic platforms.

The Role of DoE in Systematic Parameter Screening

The fabrication of high-performance biosensors is a complex, multi-parameter process where factors such as biorecognition element concentration, immobilization time, and detection conditions interact in ways that are difficult to predict. Traditional one-factor-at-a-time (OFAT) optimization approaches, while straightforward, are fundamentally flawed for such multi-factorial systems as they cannot detect interaction effects between variables and often lead to the identification of local, rather than global, optimum conditions [19]. This methodological limitation hinders the widespread adoption of biosensors as dependable point-of-care tests [11] [1].

Design of Experiments (DoE) is a powerful chemometric tool that provides a systematic, statistically sound framework for optimizing such complex processes. Unlike OFAT, a pre-planned DoE approach varies multiple factors simultaneously according to a predetermined experimental matrix. This enables the development of a data-driven model that connects variations in input variables to the sensor's output performance, efficiently revealing both main effects and critical interactions with minimal experimental effort [11] [1]. For ultrasensitive biosensors targeting sub-femtomolar detection limits—where enhancing the signal-to-noise ratio and ensuring reproducibility are paramount—the rigorous application of DoE is particularly crucial [1].

This guide details the core principles of DoE and provides actionable protocols for its application in the systematic screening and optimization of biosensor fabrication parameters, framed within the context of advanced factorial design research.

Core DoE Methodologies and Quantitative Comparisons

Selecting the appropriate experimental design is the first critical step in a DoE workflow. The choice depends on the optimization goal—whether it is initial factor screening or detailed response surface mapping.

Fundamental Designs for Factor Screening

Full Factorial Designs are the foundation for many screening studies. A 2^k full factorial design involves testing k factors, each at two levels (commonly coded as -1 and +1). This requires 2^k experimental runs and is efficient for fitting first-order models and estimating all two-factor interactions [11] [19]. For example, with 3 factors, 8 experiments are needed; with 5 factors, 32 are required. The experimental matrix for a 2^2 factorial design is shown in [11].

Fractional Factorial Designs are used when the number of factors is large, and running a full factorial design is prohibitively expensive. These designs sacrifice the ability to estimate some higher-order interactions to significantly reduce the number of required runs, making them ideal for initial screening to identify the most influential factors [19].
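The price paid for the reduced run count is aliasing, which a small half-fraction makes visible. The sketch below builds a 2^(3-1) design with the generator C = A·B. This is a standard textbook construction chosen here for illustration, not a design taken from the cited work.

```python
from itertools import product

def half_fraction_3_factors():
    """2^(3-1) design: full factorial in A and B, with C set by C = A*B."""
    return [(a, b, a * b) for a, b in product((-1, +1), repeat=2)]

design = half_fraction_3_factors()
for run in design:
    print(run)

# Aliasing check: in every run the C column equals the A*B column, so the
# main effect of C cannot be separated from the A x B interaction.
aliased = all(c == a * b for a, b, c in design)
print("C aliased with AB:", aliased, "| runs:", len(design), "instead of", 2 ** 3)
```

Four runs replace eight, but any effect attributed to factor C could equally be the A×B interaction, which is acceptable at the screening stage only if such interactions are expected to be small.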

Advanced Designs for Response Surface Optimization

Once the critical few factors are identified, more complex designs are employed to model curvature in the response and locate the true optimum.

Central Composite Designs (CCD) are the most popular class of designs for fitting second-order (quadratic) models. A CCD augments a factorial design (full or fractional) with additional axial (star) points and center points, allowing for the estimation of curvature in the response surface [1].

Mixture Designs are used when the factors are components of a mixture (e.g., the formulation of a sensing layer) and their proportions must sum to 100%. In these designs, changing one component's proportion necessarily changes the proportions of others [1].
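The sum-to-one constraint is what distinguishes a mixture design from a factorial grid. As a minimal sketch, the function below enumerates a {q, m} simplex-lattice, in which each of q components takes proportions in steps of 1/m. The function name and the three-component example are illustrative assumptions.

```python
from fractions import Fraction
from itertools import product

def simplex_lattice(q, m):
    """{q, m} simplex-lattice: proportions in steps of 1/m that sum to 1."""
    points = []
    for counts in product(range(m + 1), repeat=q):
        if sum(counts) == m:
            points.append(tuple(Fraction(c, m) for c in counts))
    return points

blends = simplex_lattice(q=3, m=2)
for blend in blends:
    print([str(x) for x in blend], "sum =", sum(blend))
```

For three components in half-steps this yields six blends: the three pure components and the three 50:50 binary mixtures. Exact fractions are used so the sum constraint holds without rounding error.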

Table 1: Comparison of Common Experimental Designs for Biosensor Optimization

| Design Type | Primary Objective | Model Order | Key Advantages | Typical Experimental Effort |
|---|---|---|---|---|
| Full Factorial | Factor screening & interaction analysis | First-Order | Identifies all main effects and interaction effects. | 2^k runs (e.g., 4 runs for 2 factors; 8 for 3) [11] |
| Fractional Factorial | Screening many factors efficiently | First-Order | Drastically reduces runs when many factors are involved. | 2^(k-p) runs (e.g., 8 runs for 5-7 factors) [19] |
| Central Composite (CCD) | Response surface mapping & optimization | Second-Order | Models curvature; finds optimal factor settings. | Higher than factorial (e.g., 14-20 runs for 3 factors) [1] |
| Mixture Design | Optimizing component proportions | Specialized Mixture | Handles the constraint of a fixed total mixture. | Varies (e.g., Simplex-Lattice) [1] |

Practical Implementation and Workflow

Implementing DoE is an iterative process that moves from broad screening to focused optimization, maximizing learning while conserving resources.

The Sequential DoE Workflow

A single experimental design is rarely sufficient for final process optimization. A sequential approach is recommended [1] [19]:

- Screening: Use a fractional factorial design to identify the few critical factors from a long list of potential variables.

- Optimization: Apply a response surface methodology (RSM) design, like a CCD, to the critical factors to model the response and locate the optimum.

- Verification: Conduct confirmatory experiments at the predicted optimal conditions to validate the model.

It is advisable not to allocate more than 40% of the total experimental budget to the initial screening design [1].

Data Analysis and Model Building

The responses from the experimental runs are used to build a mathematical model via linear regression. For a 2-factor screening design, the postulated first-order model with interaction is:

Y = b₀ + b₁X₁ + b₂X₂ + b₁₂X₁X₂ [11]

Where:

- Y is the predicted response (e.g., sensor sensitivity).

- b₀ is the constant term (overall mean).

- b₁ and b₂ are the coefficients for the main effects of factors X₁ and X₂.

- b₁₂ is the coefficient for the interaction effect between X₁ and X₂.

The model's adequacy must be checked by analyzing the residuals (the differences between measured and predicted values). If the model fit is poor, the experimental domain or the model itself may need to be redefined [1].
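One practical detail is that a four-term model fitted to four corner runs reproduces those runs exactly, so residuals only become informative when extra runs are available. The sketch below assumes replicated center points as those extra runs, which is a common choice but not one specified in the text, and all numbers are invented for illustration.

```python
def fit_two_factor_model(y):
    """Coefficients of Y = b0 + b1*X1 + b2*X2 + b12*X1*X2 from the corner runs.

    `y` maps coded corners (x1, x2) to measured responses.
    """
    corners = [(-1, -1), (+1, -1), (-1, +1), (+1, +1)]
    b0 = sum(y[c] for c in corners) / 4
    b1 = sum(c[0] * y[c] for c in corners) / 4
    b2 = sum(c[1] * y[c] for c in corners) / 4
    b12 = sum(c[0] * c[1] * y[c] for c in corners) / 4
    return b0, b1, b2, b12

def predict(coeffs, x1, x2):
    """Evaluate the fitted model at coded coordinates (x1, x2)."""
    b0, b1, b2, b12 = coeffs
    return b0 + b1 * x1 + b2 * x2 + b12 * x1 * x2

# Hypothetical measurements: four corner runs plus three center replicates.
corner_y = {(-1, -1): 5.0, (+1, -1): 4.0, (-1, +1): 4.0, (+1, +1): 9.0}
center_replicates = [7.1, 6.9, 7.0]

coeffs = fit_two_factor_model(corner_y)
center_mean = sum(center_replicates) / len(center_replicates)
residual = center_mean - predict(coeffs, 0, 0)
print("coefficients:", coeffs)
print("center-point residual:", round(residual, 3))
```

A center-point residual that is large relative to the scatter of the replicates suggests curvature the first-order model cannot capture, which is the usual cue to move to a second-order design.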

Figure 1: The iterative cycle of Design of Experiments, highlighting its data-driven and reflective nature [11] [1] [19].

Case Study: Optimizing a Nanoparticle-Functionalized Gas Sensor

A recent study on a multi-sensor screening platform provides an excellent example of a systematic, DoE-like approach to optimizing sensor materials [20].

Research Goal and Experimental Protocol

- Objective: To systematically screen how the type and areal density of metallic nanoparticles (NPs) affect the performance of SnO₂-based gas sensors.

- Platform: A custom Si-based chip integrating 16 individual sensor structures, allowing for parallel testing [20].

- Factors and Levels:

  - Factor A - NP Type: 3 levels (Au, Ni₀.₃Pt₀.₇, Pd).

  - Factor B - NP Areal Density: 5 levels (controlled via concentration of NP solution during ESJET printing).

- Constant Parameters: Base material (50 nm ultrathin SnO₂ film), target gases (50 ppm CO, 50 ppm HC mix), operating temperature (300°C) [20].

- Response Variables: Sensor response (change in electrical conductance) under three different humidity conditions (25%, 50%, 75% r.h.).

- Protocol:

  - Deposit and structure SnO₂ film on the platform chip.

  - Functionalize individual sensor structures with different NP types and densities via ESJET printing.

  - Mount the chip in a custom gas test chamber with controlled temperature and gas flow.

  - Expose all 16 sensors simultaneously to target gases and record conductance responses.

  - Analyze data to determine the NP type and density that maximize sensor response and minimize humidity interference [20].
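The factor grid described above is small enough to enumerate. The sketch below is only a bookkeeping aid: the density levels are placeholder indices because the actual concentrations are not given here, and the slot assignment is hypothetical rather than the layout used in [20].

```python
from itertools import product

np_types = ["Au", "Ni0.3Pt0.7", "Pd"]   # Factor A levels, as listed above
density_levels = [1, 2, 3, 4, 5]        # Factor B: placeholder indices only
N_SENSORS = 16                          # sensor structures on the chip

combinations = list(product(np_types, density_levels))

# Hypothetical assignment of each combination to a numbered sensor slot.
layout = {slot: combo for slot, combo in enumerate(combinations, start=1)}

print(len(combinations), "combinations for", N_SENSORS, "sensor structures")
unused = N_SENSORS - len(combinations)
print("unassigned structures:", unused)
```

The full 3 × 5 grid needs 15 positions, so it fits on a single 16-structure chip and every combination can be tested under identical gas and temperature conditions.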

Key Findings and The Scientist's Toolkit

The study successfully identified non-intuitive optimal conditions: both Au and NiPt nanoparticles enhanced sensor responses towards CO and the hydrocarbon mixture, with performance reaching a maximum at a specific, type-dependent NP concentration. Pd nanoparticles, by contrast, did not show this enhancement [20].

Table 2: Research Reagent Solutions for Nanomaterial-Based Sensor Optimization

| Material / Reagent | Function in the Experiment | Application Note |
|---|---|---|
| SnO₂ (Tin Dioxide) | Base metal oxide sensing layer; its conductance changes upon gas exposure. | Deposited as a 50 nm ultrathin film via spray pyrolysis for high surface-area-to-volume ratio [20]. |
| Au, NiPt, Pd Nanoparticles | Catalytic functionalization to enhance sensitivity and selectivity. | Synthesized as colloidal solutions and printed via ESJET for precise control over type and density [20]. |
| ESJET Printing System | Non-contact, high-resolution dispensing technology for nanomaterial solutions. | Enables precise functionalization of multiple sensor areas with different NP types/densities on a single chip [20]. |
| Custom Si Platform Chip | Substrate with 16 integrated sensor structures and heating element. | Allows high-throughput, parallel testing of multiple material combinations under identical conditions [20]. |

Advanced Applications: Machine Learning and DoE

The principles of systematic optimization are being extended through integration with machine learning (ML). In one advanced study, researchers introduced a machine learning-optimized graphene-based biosensor for breast cancer detection [21]. The sensor employed a multilayer architecture (Ag–SiO₂–Ag) to amplify optical response. ML models were used to systematically refine the sensor's structural parameters, a task analogous to a complex DoE optimization. This hybrid approach led to a peak sensitivity of 1785 nm/RIU, demonstrating superior performance compared to conventional designs and underscoring the potential of data-driven strategies to push the boundaries of biosensor capabilities [21].

Figure 2: Machine learning augments the DoE paradigm by efficiently navigating complex parameter spaces to find optimal sensor configurations [21].

The adoption of Design of Experiments is a critical step toward maturing biosensor technology from promising laboratory prototypes to robust, commercially viable diagnostic tools. By replacing inefficient OFAT methods with a structured, model-based approach, researchers can comprehensively understand the complex interplay of fabrication parameters, ultimately achieving higher sensitivity, stability, and reproducibility. The integration of DoE with high-throughput screening platforms and machine learning algorithms represents the cutting edge of biosensor optimization, paving the way for the next generation of personalized healthcare and point-of-care diagnostics.

Implementing Factorial Design: Step-by-Step Protocols and Case Studies

Defining Optimization Objectives and Critical Quality Attributes

In the field of biosensor fabrication, moving from empirical, trial-and-error development to a systematic, science-based approach is crucial for achieving robust, reliable, and commercially viable devices. This paradigm shift is anchored in two foundational concepts: the precise definition of optimization objectives and the identification of Critical Quality Attributes (CQAs). Within the broader context of factorial design research for biosensor parameters, these elements provide the necessary framework for guiding experimental efforts, ensuring that the resulting biosensors meet stringent performance requirements for sensitivity, selectivity, and stability.

Optimization objectives define the specific, measurable goals of the biosensor development process, such as achieving a sub-femtomolar limit of detection or maintaining performance under mechanical stress. CQAs, on the other hand, are the key physical, chemical, biological, or microbiological properties that must be controlled within an appropriate limit, range, or distribution to ensure the desired product quality [22]. For a biosensor, typical CQAs include analytical sensitivity, specificity, signal-to-noise ratio, and reproducibility. The relationship between these elements is integral to the Quality by Design (QbD) framework, a systematic approach to development that begins with predefined objectives and emphasizes product and process understanding and control [22] [23]. This guide provides a detailed technical roadmap for defining these critical elements within a factorial design framework, enabling researchers to efficiently optimize biosensor fabrication parameters.

The Quality by Design (QbD) Framework and Biosensor Development

Core Principles of QbD

The QbD framework, as formalized by the International Council for Harmonisation (ICH) Q8 guidelines, is defined as "a systematic approach to development that begins with predefined objectives and emphasizes product and process understanding and process control, based on sound science and quality risk management" [22]. Its implementation in pharmaceutical development has demonstrated a 40% reduction in batch failures and enhanced process robustness through real-time monitoring [22]. These same principles are directly transferable and highly beneficial for biosensor fabrication, which often faces similar challenges of complexity, reproducibility, and scalability.

The core principles of QbD include:

- A Proactive Approach: Quality is built into the product and process through deliberate design, rather than being confirmed solely through retrospective testing of the final product.

- Science-Based and Risk-Based: Decisions are based on sound scientific rationale and quality risk management to identify parameters that critically impact product quality.

- The Design Space: A multidimensional combination of input variables (e.g., material attributes and process parameters) that have been demonstrated to provide assurance of quality [22] [23]. Operating within the approved design space offers regulatory flexibility.

- Control Strategy: A planned set of controls, derived from current product and process understanding, that ensures process performance and product quality.

The QbD Workflow for Biosensor Fabrication

The implementation of QbD follows a structured workflow. The following diagram illustrates the sequential stages, from defining target profiles to continuous improvement, providing a logical roadmap for development.

Diagram 1: The QbD Workflow for Systematic Development. This workflow transitions from defining quality targets to implementing lifecycle management.

Defining the Quality Target Product Profile (QTPP)

The Quality Target Product Profile (QTPP) is a prospective summary of the quality characteristics of a biosensor that will ideally be achieved to ensure the desired quality, taking into account safety and efficacy. It forms the foundation for all subsequent development steps [22]. The QTPP is a strategic document that outlines the "user's wishlist" and serves as the compass for the entire development effort.

Key Elements of a Biosensor QTPP

For a biosensor, the QTPP should include, but not be limited to, the following elements:

- Intended Use and Application: The specific analyte (e.g., glucose, dopamine, a specific DNA sequence, a pathogenic antigen) and the sample matrix (e.g., blood, serum, urine, environmental sample). This directly influences the required selectivity and robustness.

- Dosage Form/Design: This translates to the physical form of the biosensor—whether it is a flexible epidermal sensor [9], an implantable microelectrode [24], a cartridge-based system, or a paper-based strip.

- Bio-recognition Element: The specific reagent or mechanism for target capture (e.g., immobilized ssDNA probe, enzyme like glucose oxidase, antibody, aptamer) [25] [24] [26].

- Delivery System/Platform: The technology platform used (e.g., electrochemical, optical, field-effect transistor).

- Performance Attributes: Target values for key performance indicators such as Limit of Detection (LOD), Limit of Quantification (LOQ), dynamic range, and response time.

- Stability/Shelf-Life: The required stability of the biosensor under defined storage conditions.

- Safety and Biocompatibility: For sensors used in vivo or on skin, biocompatibility of all materials is a critical attribute [9].
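One way to keep a QTPP actionable is to record it as structured data that measured results can be checked against. The sketch below is a hypothetical illustration: the class, field names, and target values are assumptions made for this example and do not come from the ICH guidelines or the cited studies.

```python
from dataclasses import dataclass, field

@dataclass
class QTPP:
    """Hypothetical container for the QTPP elements listed above."""
    analyte: str
    sample_matrix: str
    form_factor: str
    biorecognition_element: str
    platform: str
    # Performance targets as {attribute: (limit, "max" or "min")}.
    targets: dict = field(default_factory=dict)

    def unmet_targets(self, measured):
        """Return the attributes whose measured value misses its target."""
        failures = []
        for name, (limit, kind) in self.targets.items():
            value = measured.get(name)
            if value is None:
                failures.append(name)  # not measured counts as unmet
            elif kind == "max" and value > limit:
                failures.append(name)
            elif kind == "min" and value < limit:
                failures.append(name)
        return failures

# All values below are placeholders chosen for illustration.
profile = QTPP(
    analyte="glucose",
    sample_matrix="serum",
    form_factor="paper-based strip",
    biorecognition_element="glucose oxidase",
    platform="electrochemical",
    targets={"lod_uM": (10.0, "max"), "shelf_life_days": (90, "min")},
)
print(profile.unmet_targets({"lod_uM": 4.2, "shelf_life_days": 60}))
```

Writing the targets down in this form makes it straightforward to use them later as the responses of a factorial design.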

Identifying Critical Quality Attributes (CQAs)

With the QTPP as a guide, the next step is to identify the Critical Quality Attributes (CQAs). CQAs are physical, chemical, biological, or microbiological properties or characteristics that should be within an appropriate limit, range, or distribution to ensure the desired product quality [22]. In simpler terms, CQAs are the metrics that, if controlled, will ensure your biosensor meets the goals laid out in the QTPP.

CQA Classification and Examples

CQAs can be categorized based on the aspect of the biosensor they describe. The following table provides a structured overview of common biosensor CQAs, their definitions, and illustrative examples from recent research.

Table 1: Classification and Examples of Critical Quality Attributes (CQAs) in Biosensors

| CQA Category | Definition | Exemplary Biosensor CQAs | Research Example |
|---|---|---|---|
| Analytical Performance | Attributes defining the core sensing capability and accuracy. | Limit of Detection (LOD): the lowest analyte concentration that can be reliably detected; Selectivity/Specificity: the ability to distinguish the target analyte from interferents; Dynamic Range: the interval between the upper and lower analyte concentrations for which the sensor provides a quantifiable response; Linearity: the ability to obtain results directly proportional to analyte concentration; Accuracy & Precision: closeness to the true value and reproducibility of the measurement. | LOD lower than femtomolar for early disease diagnosis [11]. Selective co-detection of dopamine and glucose using unique voltammetric signatures [24]. |
| Physical/Chemical Properties | Attributes related to the material composition and structure of the biosensor. | Surface Morphology: the physical structure and roughness of the sensing layer; Bioreceptor Density & Orientation: the amount and activity of immobilized recognition elements on the sensor surface; Electrochemical Properties: characteristics like charge transfer resistance and double-layer capacitance [24]. | Hydrogel membrane quality and uniformity on carbon-fiber microelectrodes [24]. Ink-jet printed electrode geometry and CNT network structure [25]. |
| Performance in Use | Attributes defining behavior under operational conditions, including mechanical stress. | Stability & Shelf-Life: the ability to maintain performance over time under specified storage conditions; Robustness: the capacity of the method to remain unaffected by small, deliberate variations in method parameters; Mechanical Flexibility: for flexible biosensors, the ability to function before, during, and after bending without performance degradation [25] [9]. | Quantitative performance analysis of flexible CNT-based DNA sensors under bending stress [25]. Stable, sensitive, and selective co-detection of glucose and DA using a chitosan matrix [24]. |

The Role of Factorial Design (DoE) in Optimization

Moving Beyond One-Factor-at-a-Time (OFAT)

Traditional OFAT optimization, where one variable is changed while all others are held constant, is inefficient and fundamentally flawed for complex systems. It ignores interactions between factors, which occur when the effect of one independent variable on the response depends on the value of another variable [11] [19]. This can lead to finding a local optimum instead of the global optimum, as illustrated in the diagram below.

Diagram 2: OFAT vs. DoE Optimization Path. OFAT approaches risk finding local optima, while DoE efficiently maps the experimental space to find the global optimum.
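
The effect of an interaction on an OFAT search can be shown with a small numerical sketch. The response function below is hypothetical (its coefficients are invented for illustration and are not taken from any cited study); it has two coded factors at levels -1 and +1 and a strong x1·x2 interaction term.

```python
from itertools import product

LEVELS = (-1, 1)

def signal(x1, x2):
    # Hypothetical response in coded units; 4*x1*x2 is the interaction term.
    return 10 + x1 + x2 + 4 * x1 * x2

def ofat(start):
    """Vary one factor at a time, holding the other at its current best level."""
    best = list(start)
    for i in range(len(best)):
        candidates = []
        for level in LEVELS:
            trial = best.copy()
            trial[i] = level
            candidates.append((signal(*trial), level))
        best[i] = max(candidates)[1]  # keep the level with the highest response
    return tuple(best), signal(*best)

def full_factorial():
    """Evaluate every combination of levels and return the best run."""
    runs = list(product(LEVELS, repeat=2))
    best = max(runs, key=lambda run: signal(*run))
    return best, signal(*best)

ofat_point, ofat_value = ofat(start=(-1, -1))
ff_point, ff_value = full_factorial()
print("OFAT result:          ", ofat_point, ofat_value)
print("Full factorial result:", ff_point, ff_value)
```

Starting from (-1, -1), changing either factor alone lowers the response, so the OFAT search stays where it began. The factorial grid also tests the run in which both factors are raised together, and that run gives the highest response.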

Design of Experiments (DoE) Fundamentals

Design of Experiments (DoE) is a powerful chemometric tool that provides a systematic and statistically reliable methodology for optimization [11]. It involves strategically designing a set of experiments where multiple parameters are varied simultaneously. This approach allows for:

- Efficiency: Maximizing the amount of information gained with a minimal number of experimental runs [23] [19].

- Interaction Detection: Uncovering and quantifying interactions between fabrication parameters.

- Model Building: Developing a mathematical model (e.g., a linear or quadratic function) that describes the relationship between input factors and output responses (CQAs) [11] [19].

- Global Knowledge: The experimental plan is established a priori, enabling the prediction of the response at any point within the experimental domain, providing comprehensive, global knowledge for optimization [11].
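
As a minimal sketch of the model-building and prediction steps listed above, the following fits a first-order model with an interaction term to a 2^2 factorial by least squares. The four response values are made up for illustration, and the variable names are assumptions rather than part of any cited protocol.

```python
import numpy as np

# Coded 2^2 full factorial; columns are x1 and x2.
X = np.array([[-1, -1], [1, -1], [-1, 1], [1, 1]], dtype=float)
# Hypothetical measured responses, one per run (illustrative values only).
y = np.array([20.0, 30.0, 24.0, 46.0])

# Model matrix columns: intercept, x1, x2, x1*x2.
M = np.column_stack([np.ones(len(X)), X[:, 0], X[:, 1], X[:, 0] * X[:, 1]])
coef, *_ = np.linalg.lstsq(M, y, rcond=None)
b0, b1, b2, b12 = coef

def predict(x1, x2):
    """Predicted response at any coded point inside the experimental domain."""
    return b0 + b1 * x1 + b2 * x2 + b12 * x1 * x2

print("coefficients:", np.round(coef, 3))
print("prediction at centre:", round(predict(0.0, 0.0), 3))
```

Because the design is orthogonal, each coefficient is estimated independently of the others, and the fitted function can be evaluated at settings that were never run.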

Selecting and Executing a DoE

A typical DoE process involves multiple stages, from initial screening to detailed optimization. The workflow below outlines this iterative process and the key designs used at each stage.

Diagram 3: Iterative DoE Process for Biosensor Optimization. The process typically begins with screening designs to identify critical factors, followed by optimization designs to model responses and define the design space.

Common Experimental Designs

- Screening Designs: Used when many potential factors exist. The goal is to identify the "vital few" factors that have the most significant impact on the CQAs.

- Full Factorial Designs: A basic design in which every combination of factor levels is run. For k factors, each at 2 levels, this requires 2^k runs (for example, 2^5 = 32 runs for five factors). It is effective for fitting first-order models and identifying interactions [11] [19].

- Definitive Screening Design (DSD): A modern, highly efficient design that requires only 2k+1 or 2k+3 runs. It can screen many factors and simultaneously identify main effects, quadratic effects, and two-factor interactions, often serving as a single-step alternative to traditional screening and optimization [27].

- Optimization Designs: Used after critical factors are identified to model the response surface and find the optimal region.

- Response Surface Methodology (RSM): A collection of statistical and mathematical techniques used to develop, improve, and optimize processes where the response of interest is influenced by several variables [19].

- Central Composite Design (CCD): A popular RSM design that augments a factorial or fractional factorial design with axial points and center points to allow for estimation of curvature (quadratic effects) in the response model [11].
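
The structure of a CCD can be sketched by building its run list in coded units. This is an illustrative construction, not the procedure of any cited study: the function name, the default of three center points, and the rotatable choice of axial distance (the fourth root of the number of factorial runs) are assumptions.

```python
from itertools import product
import numpy as np

def central_composite(k, n_center=3, alpha=None):
    """Return CCD runs in coded units: 2^k corners, 2k axial points, n_center center points."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable axial distance for a full-factorial core
    corners = np.array(list(product((-1.0, 1.0), repeat=k)))
    axial = np.zeros((2 * k, k))
    for i in range(k):
        axial[2 * i, i] = -alpha      # low axial point for factor i
        axial[2 * i + 1, i] = alpha   # high axial point for factor i
    center = np.zeros((n_center, k))
    return np.vstack([corners, axial, center])

design = central_composite(k=2)
print(design.shape)
print(design)
```

The axial points extend beyond the factorial corners, which gives each factor more than two levels and makes the quadratic coefficients estimable.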

Table 2: Comparison of Common Experimental Designs for Biosensor Development

| Design Type | Primary Purpose | Key Advantages | Typical Number of Runs for k=5 | Model Fitted |

|---|---|---|---|---|

| Full Factorial (2^k) | Screening & Interaction Analysis | Identifies all main effects and interactions. | 32 | First-Order + Interactions |

| Definitive Screening Design (DSD) | High-Efficiency Screening & Initial Optimization | Minimal runs; main effects are uncorrelated with two-factor interactions; identifies quadratic effects [27]. | 11-13 | First-Order + Some Quadratics & Interactions |

| Central Composite Design (CCD) | Response Surface Mapping & Optimization | Accurately models curvature in the response surface. | ~32 - 48 (depends on replicates) | Full Second-Order |
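
The run counts in the table follow from simple formulas, sketched below. The CCD count assumes the standard construction (a two-level factorial core, optionally a 2^(k-p) fraction, plus 2k axial points and a chosen number of center points); the value of six center points is an assumption picked for illustration.

```python
def full_factorial_runs(k):
    """Two-level full factorial: every combination of k factors."""
    return 2 ** k

def dsd_runs(k):
    """The two DSD run counts quoted in the text: 2k+1 and 2k+3."""
    return (2 * k + 1, 2 * k + 3)

def ccd_runs(k, n_center, fraction_exponent=0):
    """Factorial core (2^(k-p)) + 2k axial points + n_center center points."""
    return 2 ** (k - fraction_exponent) + 2 * k + n_center

k = 5
print("Full factorial:", full_factorial_runs(k))
print("DSD:", dsd_runs(k))
print("CCD, half-fraction core, 6 center points:", ccd_runs(k, 6, fraction_exponent=1))
print("CCD, full core, 6 center points:", ccd_runs(k, 6))
```

Under these assumptions the CCD needs 32 runs with a half-fraction core and 48 with a full core, which is one way to arrive at the range given in the table.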

Practical Application: A Case Study in DNA Vaccine Fermentation

A study of the fermentation process for DNA vaccine production provides an example of QbD and DoE applied to a bioprocess analogous to biosensor bioreceptor production. The CQA was the supercoiled plasmid DNA content (target ≥80%), and the performance attributes (PAs) included volumetric and specific yield [27].

Experimental Protocol for Process Characterization

1. Define QTPP and CQAs: The QTPP was a DNA vaccine with high supercoiled DNA content. The CQA was the supercoiled plasmid DNA content, with a target of ≥80%.

2. Risk Assessment & Parameter Selection: Based on prior knowledge, five critical Process Parameters (PPs) were selected: Temperature, pH, Dissolved Oxygen (%DO), Cultivation Time, and Feed Rate [27].

3. DoE Selection and Execution: A Definitive Screening Design (DSD) was employed with 5 factors, requiring only 13 experimental runs (including 3 center points for error estimation) [27].

4. Model Building and Analysis: Predictive models for the CQA and PAs were built using data from the DSD runs. Model selection was based on statistical criteria (AICc and BIC). The relationship was described by a quadratic model:

y = β₀ + Σβᵢxᵢ + ΣΣβᵢⱼxᵢxⱼ + Σβᵢᵢxᵢ² + ε

where y is the response, xᵢ and xⱼ are the factor settings, β₀ is the intercept, βᵢ, βᵢⱼ, and βᵢᵢ are the coefficients of the linear, interaction, and quadratic terms, and ε is the error term [27].

5. Establishment of Design Space and Control Strategy: The model was used to simulate 100,000 runs via Monte Carlo simulation, predicting the tolerance intervals for the CQA and PAs. This defined the operational ranges (Proven Acceptable Ranges - PARs) for the PPs to ensure the CQA (supercoiled content) consistently met the 80% specification [27].
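
The Monte Carlo step in the protocol above can be sketched as follows. Everything numerical here is an assumption made for illustration: the two-factor quadratic model, its coefficients, the ±0.5 coded operating range, and the residual standard deviation are invented, and the simple percentile range stands in for the formal tolerance intervals used in the study. Only the 100,000-run count and the 80% specification come from the text.

```python
import numpy as np

rng = np.random.default_rng(seed=0)
N_RUNS = 100_000      # number of simulated runs, as in the case study
SPEC_LIMIT = 80.0     # % supercoiled plasmid DNA, the CQA specification

def predict_cqa(x1, x2):
    """Hypothetical quadratic model in coded units (coefficients are invented)."""
    return 88.0 + 2.0 * x1 - 1.5 * x2 + 1.0 * x1 * x2 - 3.0 * x1**2 - 2.0 * x2**2

# Candidate operating range: each coded parameter varies uniformly within +/-0.5.
x1 = rng.uniform(-0.5, 0.5, N_RUNS)
x2 = rng.uniform(-0.5, 0.5, N_RUNS)
# Residual noise standing in for the model error term (assumed SD of 1.0).
noise = rng.normal(0.0, 1.0, N_RUNS)

simulated = predict_cqa(x1, x2) + noise
fraction_in_spec = np.mean(simulated >= SPEC_LIMIT)
lower, upper = np.percentile(simulated, [0.5, 99.5])

print(f"Fraction of simulated runs meeting spec: {fraction_in_spec:.4f}")
print(f"Central 99% of simulated CQA values: {lower:.1f} to {upper:.1f}")
```

If too many simulated runs fell below the specification, the candidate range would be narrowed and the simulation repeated until the acceptable range is found.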

The Scientist's Toolkit: Essential Reagents and Materials

The successful fabrication and optimization of biosensors rely on a suite of specialized materials and reagents. The following table details key items and their functions in a typical biosensor research and development setting.

Table 3: Key Research Reagent Solutions for Biosensor Fabrication and Optimization

| Category / Item | Function in Biosensor Development | Exemplary Application |

|---|---|---|

| Biorecognition Elements | Provides specificity by binding the target analyte. | Glucose Oxidase (GOx): Enzyme for glucose biosensors [24]. Lactate Oxidase (LacOx): Enzyme for lactate detection [24]. Single-Stranded DNA (ssDNA) probes: For DNA hybridization sensors [25]. Antibodies: For immunosensors detecting proteins (e.g., Tau-441) [26]. Aptamers: For specific recognition of targets like Salmonella [26]. |

| Substrate Materials | Forms the primary mechanical support for the biosensor. | Polyethylene Terephthalate (PET): Flexible, transparent substrate for electrodes [25]. Polyimide: Flexible, thermally stable substrate [9]. |

| Conductive & Sensing Materials | Transduces the biological binding event into a measurable signal. | Carbon Nanotubes (CNTs): Create a high-surface-area network for sensing [25]. Graphene Foam / 3D Graphene: High-conductivity electrode material for electrochemical detection [26]. Silver (Ag) Ink: For ink-jet printing of conductive electrodes [25]. Liquid Metal (e.g., EGaIn): For stretchable and conductive composites in wearable sensors [26]. |

| Immobilization & Encapsulation | Entraps or attaches biorecognition elements to the transducer surface. | Chitosan Hydrogel: A biopolymer electrodeposited to entrap oxidase enzymes on electrode surfaces [24]. Covalent Organic Frameworks (COFs): Porous materials for immobilizing enzymes or antibodies in immunoassays [26]. EDC-NHS Chemistry: A standard carbodiimide chemistry for covalent immobilization of biomolecules onto carboxyl-functionalized surfaces [26]. |

| Analytical Tools | Characterizes and validates biosensor performance. | Fast-Scan Cyclic Voltammetry (FSCV): Electrochemical method for detecting electroactive neurochemicals like dopamine [24]. Electrochemical Impedance Spectroscopy (EIS): Characterizes the physical nature of the electrode/solution interface and monitors binding events [24]. Surface-Enhanced Raman Spectroscopy (SERS): Provides highly sensitive optical detection [26]. |

Defining precise optimization objectives and Critical Quality Attributes is not merely a regulatory formality but a cornerstone of efficient and successful biosensor development. By adopting the QbD framework and leveraging the power of factorial Design of Experiments, researchers can transition from ad-hoc, OFAT experimentation to a predictive, science-driven paradigm. This systematic approach enables a deeper understanding of the complex interactions between fabrication parameters and the resulting biosensor CQAs, ultimately leading to the establishment of a robust design space. The result is a more efficient development pathway, reduced costs, and the reliable production of high-performance biosensors capable of meeting the rigorous demands of modern diagnostics, environmental monitoring, and research.

Selecting Fabrication Factors and Appropriate Ranges

The performance of a biosensor (its sensitivity, selectivity, stability, and reproducibility) is intrinsically governed by the complex interplay of numerous fabrication parameters. Optimizing these factors in isolation overlooks critical interactions, which makes factorial Design of Experiments (DoE) a powerful and efficient methodology for biosensor development [16]. This guide provides an in-depth technical framework for identifying key fabrication factors and their applicable ranges, structured within a factorial design context to enable systematic optimization for researchers and drug development professionals.

Core Components of a Biosensor and Their Fabrication Factors

A biosensor typically consists of three fundamental components: a biological recognition element, a transducer, and a substrate that provides mechanical support [9] [16]. Each component introduces specific, tunable fabrication factors that directly influence the final device's performance.

Table 1: Core Biosensor Components and Key Fabrication Factors

| Biosensor Component | Function | Key Fabrication Factors |

|---|---|---|

| Biological Recognition Element | Binds specifically to the target analyte [16]. | Type (enzyme, antibody, aptamer), immobilization method, surface density, orientation, activity. |

| Transducer | Converts the biological recognition event into a measurable signal [9] [16]. | Material (Au, Pt, graphene, CNTs), geometry (2D, 3D), surface area/porosity, functionalization. |

| Substrate | Provides the primary mechanical support for the entire system [9]. | Material (PDMS, PET, PI), flexibility, stiffness, surface energy, biocompatibility. |

Critical Fabrication Factors and Experimentally-Determined Ranges

Substrate and Mechanical Properties

The substrate forms the foundational skeleton of the biosensor, and its properties are critical for non-planar, soft, or dynamic biological interfaces [9].

Table 2: Substrate and Mechanical Fabrication Factors

| Factor | Impact on Performance | Typical Ranges & Materials |

|---|---|---|

| Substrate Material | Determines biocompatibility, flexibility, and chemical/thermal stability [9]. | Polydimethylsiloxane (PDMS), Polyethylene Terephthalate (PET), Polyimide (PI), conductive polymers. |

| Stiffness/Elastic Modulus | Affects conformal contact with soft tissues; mismatch can cause signal drift [9]. | 0.1 MPa to 3 MPa (to match biological tissues like skin). |

| Surface Energy & Roughness | Influences adhesion for subsequent layers and bioreceptor immobilization efficiency [9]. | Water contact angle: 30°-110°; Roughness (Ra): 1 nm - 1 µm. |

Biorecognition Element Immobilization

The method and quality of immobilizing the biorecognition layer are paramount for assay sensitivity and specificity.

Table 3: Biorecognition Immobilization Factors

| Factor | Impact on Performance | Typical Ranges & Methods |

|---|---|---|

| Immobilization Method | Controls orientation, activity, and stability of the recognition element [16]. | Physical Adsorption, Covalent Bonding (EDC/NHS), Avidin-Biotin, Affinity Binding. |

| Surface Density | Directly affects signal magnitude; too high a density can cause steric hindrance [16]. | 10¹ to 10⁵ molecules per µm². |

| Bioink Formulation (3D Printing) | Enables spatial control and can enhance signal by creating a porous, high-surface-area matrix [10]. | Alginate, GelMA, or PEG-based hydrogels with 1-20% (w/v) polymer concentration. |
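
To use ranges like those tabulated above in a two-level factorial design, each factor's low and high settings are mapped to coded levels -1 and +1. The sketch below is illustrative: combining these three factors in one design, the dictionary keys, and the use of a logarithmic scale for surface density (because its range spans four orders of magnitude) are all assumptions.

```python
import math
from itertools import product

# Low/high settings taken from the ranges in the tables above.
factors = {
    "stiffness_MPa": {"low": 0.1, "high": 3.0, "log": False},
    "polymer_pct_wv": {"low": 1.0, "high": 20.0, "log": False},
    "density_per_um2": {"low": 1e1, "high": 1e5, "log": True},
}

def decode(coded, low, high, log=False):
    """Map a coded level in [-1, 1] to the actual setting."""
    if log:
        low, high = math.log10(low), math.log10(high)
    centre = (high + low) / 2
    half_range = (high - low) / 2
    value = centre + coded * half_range
    return 10 ** value if log else value

# Full 2^3 factorial expressed in actual units.
runs = [
    {name: decode(c, **spec) for c, (name, spec) in zip(coded, factors.items())}
    for coded in product((-1, 1), repeat=len(factors))
]

for run in runs:
    print(run)
```

With the logarithmic scale, the coded center point for surface density corresponds to 10³ molecules per µm² rather than the arithmetic midpoint, which would sit very close to the upper limit.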

Transducer Material and Nanostructuring

The transducer's composition and morphology are primary levers for enhancing electrochemical and optical signals.

Table 4: Transducer Fabrication Factors

| Factor | Impact on Performance | Typical Ranges & Materials |

|---|---|---|

| Nanomaterial Type | Defines electrical conductivity, catalytic activity, and plasmonic properties [16] [17]. | Gold Nanoparticles (AuNPs), Graphene, Carbon Nanotubes (CNTs), Metal-Organic Frameworks (MOFs). |