Performance Verification in Real Agricultural Samples: A Framework for Robust and Holistic Analysis

Abstract

This article provides a comprehensive guide for researchers and scientists on establishing robust performance verification protocols for analytical methods used with real agricultural samples. It addresses the critical need to move beyond idealized conditions, covering foundational principles, methodological application, troubleshooting of common pitfalls, and rigorous validation strategies. By integrating insights from agricultural science, data analytics, and quality management systems, this resource aims to equip professionals with the knowledge to generate reliable, reproducible, and actionable data that accounts for the inherent complexity and variability of agricultural matrices, thereby supporting confident decision-making in research and development.

Laying the Groundwork: Core Principles of Performance Verification in Complex Agricultural Matrices

Defining Performance Verification vs. Validation in an Agricultural Context

In agricultural research, particularly when analyzing real-world samples, the concepts of verification and validation (V&V) form the bedrock of scientific credibility. Though sometimes used interchangeably, they represent fundamentally different processes. A clear understanding and implementation of both are crucial for ensuring that research findings are not only methodologically sound but also relevant and applicable to real agricultural settings [1] [2].

Verification answers the question, "Are we building the system right?" It is an internal process checking that a product, service, or system complies with regulations, requirements, specifications, or imposed conditions. In contrast, validation answers the question, "Are we building the right system?" It is an external process ensuring that the system meets the needs and requirements of its intended users and the intended use environment [1]. This distinction is critical for research on real agricultural samples, where environmental variability and complex biological systems can create significant gaps between theoretical specifications and practical efficacy.

The low level of consensus on science-based approaches to monitoring and verifying the efficacy of agricultural solutions has left many initiatives vulnerable to allegations of greenwashing [3]. A clear, objective comparison of the two concepts helps fortify research practices against such criticism.

Conceptual Framework: Verification vs. Validation

Core Definitions and Relationships

The following table distills the key differences between verification and validation, providing a quick-reference guide for researchers.

Table 1: Core Definitions and Comparisons of Verification and Validation

| Aspect | Verification | Validation |
|---|---|---|
| Core Question | "Are we building it right?" [1] | "Are we building the right thing?" [1] |
| Primary Focus | Compliance with specifications, regulations, or imposed conditions [1]. | Fitness for purpose, meeting user needs in the intended environment [1]. |
| Nature of Process | Often an internal process [1]. | Often an external process involving end-users [1]. |
| Context in HACCP | Activities that determine the validity of the HACCP plan and that the system is operating according to the plan [2]. | The element of verification focused on collecting and evaluating scientific and technical information to determine if the HACCP plan, when properly implemented, will effectively control the hazards [2]. |
| Analogy | Confirming a pesticide is mixed to the exact concentration specified in the protocol. | Confirming that the applied pesticide effectively controls the target pest under real field conditions. |

It is entirely possible for a product or method to pass verification but fail validation. This occurs when a product is built as per specifications, but the specifications themselves fail to address the user's actual needs [1]. In agriculture, a soil sensor might be verified to detect nitrogen at a specified precision in the lab (meeting its design specs), but fail validation if it cannot function reliably in the varied soil types and moisture conditions of a real farm.

The V&V Workflow in Agricultural Research

The relationship between verification, validation, and the research lifecycle can be visualized as a cohesive workflow. The diagram below illustrates how these processes ensure both technical correctness and real-world relevance.

Application in Agricultural Domains

Food Safety and HACCP Systems

The Hazard Analysis and Critical Control Point (HACCP) system provides a clear example of V&V in practice. Here, validation is actually a component of the broader verification process [2].

- HACCP Validation Objective: To establish that implemented process controls are capable of providing control of the identified hazards. It provides a measure of the amount of control and ensures the HACCP plan will perform as expected when implemented [2].

- HACCP Verification Objective: To determine that an establishment is able to consistently apply their HACCP plan as designed [2].

A concrete example from beef safety illustrates the peril of confusing these terms. One establishment might validate an organic acid spray by demonstrating it achieves a specific log reduction of a pathogen on carcasses in a lab study. A second establishment might use a different, ineffective chemical compound but verify that it is applied consistently at the correct concentration and coverage. The second HACCP system is verified but not validated, rendering it unsuccessful at controlling the identified hazard. Only a system that is both validated and verified will perform optimally [2].
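The log reduction reported by a validation study is the base-10 logarithm of the ratio of pathogen counts before and after treatment. The minimal sketch below uses made-up plate counts and a hypothetical 2-log target (an illustrative value, not a regulatory requirement) to show why consistent application cannot rescue a treatment whose measured reduction falls short.

```python
import math

def log_reduction(cfu_before: float, cfu_after: float) -> float:
    """Log10 reduction in pathogen count: log10(N0 / N)."""
    if cfu_before <= 0 or cfu_after <= 0:
        raise ValueError("counts must be positive (substitute the detection limit for zero counts)")
    return math.log10(cfu_before / cfu_after)

# Hypothetical target for illustration only; a real target comes from the hazard analysis
TARGET_LOG_REDUCTION = 2.0

# Hypothetical counts (CFU/cm^2) on carcass surfaces before and after each spray
treatments = {
    "organic_acid_spray": (1.0e5, 5.0e2),
    "ineffective_compound": (1.0e5, 4.0e4),
}

for name, (n0, n) in treatments.items():
    lr = log_reduction(n0, n)
    status = "meets" if lr >= TARGET_LOG_REDUCTION else "fails"
    print(f"{name}: {lr:.2f} log reduction, {status} target")
```

Verification records (concentration, coverage) would look identical for both sprays; only the validation data separate them.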

Analytical Method Development

For analytical methods, such as those used to detect pesticide residues in food products, validation is a fundamental requirement to prove the method is "fit-for-purpose" [4]. This process provides evidence that when correctly applied, the method produces reliable and accurate results with an acceptable degree of certainty.

Table 2: Key Attributes Tested During Analytical Method Validation [4]

| Attribute | Function in Validation |
|---|---|
| Selectivity/Specificity | Ensures the method can distinguish and quantify the analyte in the presence of other components. |
| Accuracy and Precision | Accuracy measures closeness to the true value; precision measures the closeness of agreement among replicate results. |
| Repeatability | Consistency of results under the same operating conditions over a short period. |
| Reproducibility | The precision between different laboratories, a crucial indicator of robustness. |
| Limit of Detection (LOD) | The lowest amount of analyte that can be detected, but not necessarily quantified. |
| Limit of Quantification (LOQ) | The lowest amount of analyte that can be quantitatively determined with acceptable precision and accuracy. |
| Linearity and Range | The ability to obtain results directly proportional to analyte concentration, within a given range. |
| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters. |

Field Research and Environmental Markets

In field research, the confirmation of findings is described by the terms repeatability, replicability, and reproducibility [5]. These concepts align closely with the principles of V&V.

- Repeatability: The ability of a research group to obtain consistent results when an analysis or experiment is repeated within a study or under the same conditions. This is akin to internal verification.

- Replicability: The ability of a single research group to obtain consistent results from a previous study using the same methods in different environments (e.g., multiple seasons or locations). This strengthens internal validation.

- Reproducibility: The ability of an independent research team to obtain comparable results from a study directed at the same research question, often using different data, cultivars, or locations [5]. This is the highest form of external validation, confirming that results are robust and broadly applicable.

In emerging environmental services markets, such as those for climate-smart agriculture (CSA), independent third-party V&V is critical for integrity. For example, under the USDA's guidelines for biofuels, validation confirms a project meets all program rules, while verification confirms that the projected outcomes (e.g., greenhouse gas reductions) have been achieved and quantified according to the standard [6] [7]. This independent check is essential for building trust in environmental claims and market-traded credits.

Experimental Protocols for V&V

Protocol for Validating an Analytical Method

The following workflow outlines the key steps for validating an analytical method, such as one for pesticide residue analysis, incorporating both verification and validation principles.

Detailed Methodologies:

- Determining Accuracy and Precision: Spike a blank sample matrix with known concentrations of the analyte (e.g., a pesticide) across the validated range (e.g., low, mid, high). Analyze a minimum of five replicates per concentration level. Calculate accuracy as percent recovery and precision as the relative standard deviation (RSD) of the replicates [4].

- Establishing LOD and LOQ: Fortify samples at progressively lower concentrations. The LOD is typically the concentration that yields a signal-to-noise ratio of 3:1. The LOQ is the lowest concentration that can be quantified with acceptable accuracy and precision, typically with a signal-to-noise ratio of 10:1 and an RSD ≤ 20% [4].

- Robustness Testing: Deliberately vary key method parameters (e.g., mobile phase pH ± 0.2 units, column temperature ± 5°C) and observe the impact on results. This verifies the method's resilience to minor, expected fluctuations in routine use.
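The recovery, precision, and LOQ criteria described above can be sketched as follows. The replicate results and signal-to-noise values are invented for illustration, and the 70 to 120% mean-recovery window is an assumed acceptance range (typical of pesticide-residue guidance) rather than a value taken from [4]; the RSD ≤ 20% and S/N ≥ 10 criteria follow the text.

```python
import statistics

def recovery_and_rsd(measured, spiked):
    """Mean percent recovery and relative standard deviation (%) at one spike level."""
    recoveries = [100.0 * m / spiked for m in measured]
    mean_recovery = statistics.fmean(recoveries)
    rsd = 100.0 * statistics.stdev(recoveries) / mean_recovery
    return mean_recovery, rsd

def lowest_acceptable_level(levels, rec_range=(70.0, 120.0), max_rsd=20.0, min_sn=10.0):
    """Lowest spike level meeting recovery, RSD and S/N criteria (an LOQ candidate)."""
    for spiked in sorted(levels):
        measured, sn = levels[spiked]
        mean_rec, rsd = recovery_and_rsd(measured, spiked)
        if rec_range[0] <= mean_rec <= rec_range[1] and rsd <= max_rsd and sn >= min_sn:
            return spiked
    return None  # no tested level qualifies; fortify at higher levels

# spike level (mg/kg): (five replicate results in mg/kg, signal-to-noise ratio), all hypothetical
levels = {
    0.005: ([0.0031, 0.0052, 0.0040, 0.0068, 0.0029], 6.0),
    0.01: ([0.0092, 0.0098, 0.0105, 0.0088, 0.0101], 14.0),
    0.05: ([0.048, 0.051, 0.046, 0.050, 0.049], 60.0),
}

for spiked, (measured, sn) in sorted(levels.items()):
    rec, rsd = recovery_and_rsd(measured, spiked)
    print(f"{spiked} mg/kg: recovery {rec:.1f}%, RSD {rsd:.1f}%, S/N {sn}")
print("LOQ candidate:", lowest_acceptable_level(levels))
```

An LOD estimate would apply only the 3:1 signal-to-noise criterion, without the recovery and precision checks.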

Protocol for Verifying and Validating a Field Experiment

Robust field experiments are the foundation of applied agricultural research. The following protocol ensures their integrity from design through to conclusion.

Table 3: Essential Research Reagent Solutions for Field Experimentation

| Research 'Reagent' | Function in Experimental Protocol |
|---|---|
| Experimental Units (Plots) | The physical areas to which treatments are applied; the fundamental unit for replication and randomization [8]. |
| Treatment List | The specific interventions (e.g., fertilizers, cultivars) being tested, including necessary controls [8]. |
| Controls (Positive/Negative) | Provides a baseline for comparison. A negative control (e.g., no nematicide) shows the minimal effect, while a positive control (e.g., current standard nematicide) shows the expected effect [8]. |
| Replication | The application of individual treatments to more than one plot. This accounts for uncontrolled variation (experimental error) and allows for a more accurate estimate of treatment performance [8]. |
| Randomization | The assignment of treatments to plots with no discernible pattern. This prevents unintentional bias from environmental gradients or neighboring plot effects [8]. |

Detailed Methodologies:

Treatment Selection and Replication:

- Precisely define the objective of the study. For example, "to determine the effect of two nitrogen fertilizers (A and B) and a control on the yield of Corn Hybrid X."

- Select all treatments necessary, including controls. A factorial arrangement may be needed for complex questions (e.g., testing fertilizers across multiple hybrids) [8].

- Apply each treatment to a minimum of four replicated plots to mitigate experimental error. More replications (five or six) are better for detecting smaller differences or when variability is high [8].

Randomization and Layout:

- Assign each treatment a number. For each block of replicates, randomize the order of treatments by drawing numbers from a hat or using a random number generator.

- Arrange the plots within each block according to the randomized order. This process must be repeated for every block to ensure no systematic bias in treatment placement [8].
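The hat-drawing step above can be reproduced with Python's standard `random` module. This is a minimal sketch, assuming three treatments, four blocks, and a hypothetical plot-numbering scheme (block number followed by position in the block); recording the seed lets anyone regenerate and audit the layout.

```python
import random

def randomize_rcbd(treatments, n_blocks, seed=None):
    """Independently shuffle the treatment order within each block (randomized complete block design)."""
    rng = random.Random(seed)  # a recorded seed makes the layout reproducible
    layout = []
    for block in range(1, n_blocks + 1):
        order = list(treatments)
        rng.shuffle(order)  # a fresh randomization for every block
        layout.append((block, order))
    return layout

treatments = ["Control", "Fertilizer A", "Fertilizer B"]
for block, order in randomize_rcbd(treatments, n_blocks=4, seed=2024):
    plots = ", ".join(f"plot {block}{i:02d}: {t}" for i, t in enumerate(order, start=1))
    print(f"Block {block}: {plots}")
```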

Data Collection and Statistical Verification:

- Collect data (e.g., yield, pest counts) consistently from each plot.

- Use analysis of variance (ANOVA) to determine if differences among treatment means are statistically significant, thus verifying that observed effects are unlikely to be due to random chance alone [8].
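For a randomized complete block layout, the ANOVA removes block-to-block variation before testing treatments. The sketch below computes the sums of squares by hand for hypothetical yields (bu/acre). It returns the F statistic and its degrees of freedom rather than a p-value; the p-value could be read from an F table or, if SciPy is available, from `scipy.stats.f.sf(F, df_trt, df_error)`.

```python
def rcbd_anova(data):
    """ANOVA for a randomized complete block design (treatment + block, no interaction).

    data maps each treatment to its plot values, listed in the same block order.
    """
    treatments = list(data)
    t = len(treatments)
    b = len(data[treatments[0]])
    if any(len(data[trt]) != b for trt in treatments):
        raise ValueError("every treatment needs exactly one value per block")

    values = [v for trt in treatments for v in data[trt]]
    grand_mean = sum(values) / (t * b)

    ss_total = sum((v - grand_mean) ** 2 for v in values)
    ss_trt = b * sum((sum(data[trt]) / b - grand_mean) ** 2 for trt in treatments)
    block_means = [sum(data[trt][j] for trt in treatments) / t for j in range(b)]
    ss_block = t * sum((m - grand_mean) ** 2 for m in block_means)
    ss_error = ss_total - ss_trt - ss_block  # residual after treatments and blocks

    df_trt, df_block = t - 1, b - 1
    df_error = df_trt * df_block
    ms_error = ss_error / df_error
    return {"F": (ss_trt / df_trt) / ms_error, "df_trt": df_trt,
            "df_error": df_error, "MSE": ms_error}

# Hypothetical yields (bu/acre), one value per block in blocks 1-4
yields = {
    "Control": [150, 155, 148, 152],
    "Fertilizer A": [165, 170, 160, 168],
    "Fertilizer B": [172, 175, 169, 178],
}
print(rcbd_anova(yields))
```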

In agricultural research, verification and validation are not synonymous; they are complementary processes that together form an indispensable framework for scientific rigor. Verification ensures that research is conducted correctly according to its plan and specifications, while validation confirms that the research is solving the right problem and that its outcomes are meaningful in a real-world context.

Mastering this distinction is crucial for researchers, scientists, and drug development professionals working with real agricultural samples. It strengthens the defensibility of research findings, enhances credibility with stakeholders, and protects against allegations of insufficient evidence or greenwashing. As agricultural challenges grow more complex, a disciplined approach to V&V will be paramount in developing solutions that are both scientifically sound and practically effective.

Why Real Agricultural Samples Present Unique Verification Challenges

Performance verification forms the critical backbone of reliable agricultural research, ensuring that data collected from field trials and sample analyses accurately reflects real-world conditions and can be trusted to inform decisions. However, the path to verification is fraught with challenges unique to the agricultural context. Unlike controlled laboratory settings, agricultural research must account for immense variability in environmental conditions, biological diversity, and operational logistics. This guide examines these unique verification challenges, compares current methodological approaches, and provides a detailed framework for validating performance in agricultural studies.

The Inherent Complexity of Agricultural Sample Verification

Verifying the performance of measurements, sensors, or treatments in agricultural research is fundamentally more complex than in many other fields. This complexity stems from the dynamic, heterogeneous, and often unpredictable nature of agricultural environments.

The core challenge lies in the contextual variability of real agricultural samples. As highlighted in agro-informatics research, data from field trials is often recorded in disparate locations and formats, sometimes even using outdated pen-and-paper methods, which creates significant bottlenecks in data flow and standardization [9]. This lack of standardization directly impacts verification by making it difficult to compare results across different trials or seasons.

Furthermore, agricultural samples are inherently temporally dynamic. Soil properties, plant physiology, and pest pressures change not only from season to season but within single growing cycles. This dynamism means that a verification protocol valid at one timepoint may not be applicable weeks later. The push for more automated metadata collection, as seen in tools like the Meta Ag app, aims to capture this spatiotemporal context by using geofence-based event detection and structured input validation [10]. Without this precise contextual logging, verifying that a measurement was taken under consistent conditions becomes exceptionally challenging.
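The geofence check behind this kind of event detection can be approximated with a simple distance test. The sketch below is not the Meta Ag implementation; it assumes a hypothetical circular geofence around a plot center, uses the haversine great-circle distance, and applies two illustrative structured-input checks (valid coordinates and a non-empty operator ID) before emitting a who/what/where/when record.

```python
import math
from dataclasses import dataclass
from datetime import datetime

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres

@dataclass
class Geofence:
    """Hypothetical circular geofence around a plot center."""
    name: str
    lat: float
    lon: float
    radius_m: float

def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

def log_event(fence, lat, lon, operator, activity, when):
    """Validate the inputs, then return a structured who/what/where/when record."""
    if not (-90 <= lat <= 90 and -180 <= lon <= 180):
        raise ValueError("coordinates out of range")
    if not operator.strip():
        raise ValueError("operator ID is required")
    distance = haversine_m(fence.lat, fence.lon, lat, lon)
    return {
        "plot": fence.name,
        "inside_geofence": distance <= fence.radius_m,
        "distance_m": round(distance, 1),
        "operator": operator,
        "activity": activity,
        "timestamp": when.isoformat(),
    }

fence = Geofence("Plot 101", 40.0, -83.0, radius_m=30.0)
event = log_event(fence, 40.0001, -83.0, "op-17", "soil sampling", datetime(2025, 5, 14, 9, 30))
print(event)
```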

Biological variability adds another layer of complexity. Individual plants, even within the same field, exhibit genetic and phenotypic differences that affect how they respond to treatments. This variability necessitates large sample sizes and sophisticated statistical methods to distinguish true treatment effects from natural variation, a verification hurdle less pronounced in more predictable industrial or clinical settings.
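The link between variability and sample size can be made concrete with the standard normal-approximation formula for comparing two means, n = 2(z₁₋α/₂ + z₁₋β)²σ²/δ² replicates per treatment. The sketch below is illustrative: the standard deviation and detectable differences are hypothetical, and because the normal approximation ignores t-distribution corrections it slightly underestimates n for small experiments.

```python
import math
from statistics import NormalDist

def replicates_per_treatment(sd, min_difference, alpha=0.05, power=0.80):
    """Approximate replicates per treatment to detect `min_difference` between two means."""
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = z.inv_cdf(power)
    n = 2 * (z_alpha + z_beta) ** 2 * sd ** 2 / min_difference ** 2
    return math.ceil(n)

# Hypothetical plot-to-plot SD of 10 bu/acre
for delta in (10, 5):
    print(f"detect {delta} bu/acre: {replicates_per_treatment(10, delta)} plots per treatment")
```

Halving the difference to be detected quadruples the required replication, which is why high biological variability quickly drives sample sizes up.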

Comparative Analysis of Verification Methodologies

The table below summarizes the core challenges of agricultural sample verification and compares how different methodological approaches perform in addressing them.

Table 1: Comparison of Verification Approaches for Agricultural Research

| Verification Challenge | Traditional Lab-Based Approach | Digital Field-Based Approach | Integrated Agro-Informatics Platform |
|---|---|---|---|
| Data Standardization | Low; relies on manual, inconsistent recording [9] | Medium; uses digital forms but limited interoperability | High; ensures data harmonization and collaborative sharing [9] |
| Contextual Metadata Capture | Poor; often incomplete or missing | Good; automated via GPS, timestamps, and chatbots [10] | Excellent; integrates automated context with operational data [10] |
| Spatial Variability Management | Limited; small, non-representative samples | Moderate; GPS-enabled data points | High; geofence triggers and spatial data integration [10] |
| Temporal Consistency | Low; delayed data processing and analysis | Medium; real-time capture but disjointed analysis | High; real-time data collation and validation [9] |
| Performance Verification Capability | Manual, ad hoc, and error-prone [11] | Semi-automated for data collection | Automated output-based verification and predictive modeling [9] [11] |
| Impact on Trial Efficiency | Inefficient; can waste 4-5 days per trial on data wrangling [9] | Moderate efficiency gains | Up to 20% improvement in trial efficiencies [9] |

The comparison suggests that integrated platforms outperform the other approaches because they address several verification challenges at once. For instance, they can improve data accuracy by up to 25% through real-time validation and standardized parameters [9]. This is a critical verification metric, as accurate raw data is the foundation of any valid performance conclusion.

Experimental Protocols for Performance Verification

Establishing robust experimental protocols is essential for overcoming verification challenges. The following section outlines detailed methodologies for key experiments cited in this field.

Protocol 1: Field Trial Management for Agronomic Product Testing

This protocol is designed to generate verifiable and statistically sound data on the efficacy of agronomic products like fertilizers or crop protection agents.

- Trial Design and Planning: Use software capabilities to pre-define trial protocols, including treatment assignments, randomization patterns, and replication schemes (typically a minimum of 4 replications per treatment). Clearly document the research question and primary endpoints [9].

- Implementation and Data Collection:

  - In-Field Assessments: Utilize mobile applications with offline capabilities to assign and track field assessments and sampling. Data is captured using customizable electronic notebook templates to ensure protocol adherence [9].

  - Metadata Automation: Employ a framework like Meta Ag to automatically capture critical contextual metadata (e.g., precise location via GPS, time, operator ID, environmental conditions) for every action [10].

  - Real-Time Validation: Enable real-time data collation and validation at the trial level to identify and correct outliers or sensor defects immediately [9].

- Data Analysis and Verification:

  - Conduct both single-trial and cross-trial analysis to assess product performance and identify optimal market conditions based on soil, climate, and crop type [9].

  - Employ advanced analytics, including predictive modeling and dynamic crop models, to move beyond basic comparisons and generate deeper performance insights [9].

  - Perform automated output-based verification of model performance against pre-defined operational requirements, a method adapted from building energy modeling frameworks [11].
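Two of the checks in this protocol, flagging suspect readings during real-time validation and comparing model output with observations, can be sketched as below. The outlier rule is the modified z-score (0.6745 × deviation from the median / MAD, flagged above 3.5); the model check uses NMBE and CV(RMSE), metrics commonly used to calibrate building energy models (e.g., in ASHRAE Guideline 14). Whether [11] uses these exact metrics is an assumption, and the readings and the |NMBE| ≤ 10% / CV(RMSE) ≤ 30% requirement are hypothetical.

```python
import math
import statistics

def flag_outliers(values, threshold=3.5):
    """Indices whose modified z-score, 0.6745 * (x - median) / MAD, exceeds the threshold."""
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values])
    if mad == 0:
        return []  # no spread around the median, so the score is undefined
    return [i for i, v in enumerate(values) if abs(0.6745 * (v - med) / mad) > threshold]

def nmbe_cvrmse(observed, predicted, n_params=1):
    """Normalized mean bias error and CV(RMSE), both as percent of the observed mean."""
    dof = len(observed) - n_params
    mean_obs = statistics.fmean(observed)
    residuals = [o - p for o, p in zip(observed, predicted)]
    nmbe = 100 * sum(residuals) / (dof * mean_obs)
    cvrmse = 100 * math.sqrt(sum(r * r for r in residuals) / dof) / mean_obs
    return nmbe, cvrmse

# Hypothetical soil-moisture readings (%); index 5 looks like a sensor fault
soil_moisture = [21.4, 22.0, 21.7, 22.3, 21.9, 48.0, 22.1]
print("Flag for review:", flag_outliers(soil_moisture))

# Hypothetical observed vs. model-predicted plot yields (bu/acre)
observed_yield = [152.0, 168.0, 175.0, 160.0, 171.0]
predicted_yield = [149.0, 170.0, 171.0, 163.0, 168.0]
nmbe, cv = nmbe_cvrmse(observed_yield, predicted_yield)
print(f"NMBE {nmbe:.1f}%, CV(RMSE) {cv:.1f}%, passes: {abs(nmbe) <= 10 and cv <= 30}")
```

Flagged readings are routed for review rather than deleted, so that genuine extreme values are not silently discarded.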

Protocol 2: Soil Contamination and Nutrient Analysis

This protocol focuses on verifying the safety and fertility of soils, a prerequisite for sustainable crop production.

- Systematic Grid Sampling: Establish a sampling grid across the field to account for spatial heterogeneity. The density of the grid should be informed by historical variability or preliminary remote sensing.

- Multi-Parameter Sensor Deployment: Use portable sensors or lab analysis to measure key parameters:

  - Contaminants: Test for heavy metals (e.g., lead, cadmium) and pesticide residues.

  - Macronutrients: Analyze levels of nitrogen (N), phosphorus (P), and potassium (K).

  - Soil Health Indicators: Measure pH, organic matter content, and electrical conductivity [12].

- Data Integration and Interpretation:

  - Feed sensor data into a digital agriculture platform that layers soil test results with other data, such as yield maps from previous seasons.

  - Generate precision nutrient management maps that prescribe variable rate fertilizer applications, thereby verifying the economic and agronomic value of the testing protocol [12].
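The systematic grid in the first step can be laid out as cell-center sampling points. This minimal sketch assumes a rectangular field in local metre coordinates measured from one corner and a hypothetical 100 m spacing (about 1 ha cells); in practice the spacing would follow historical variability as described above, and points would be projected to GPS coordinates before field use.

```python
import math

def grid_sample_points(width_m, length_m, spacing_m):
    """Return (x, y) cell-center sampling points for a rectangular field.

    Edge cells narrower than the spacing are kept, with the point placed
    at the center of the partial cell.
    """
    if spacing_m <= 0:
        raise ValueError("spacing must be positive")

    def centers(extent):
        n = math.ceil(extent / spacing_m)
        return [(i * spacing_m + min((i + 1) * spacing_m, extent)) / 2 for i in range(n)]

    return [(x, y) for y in centers(length_m) for x in centers(width_m)]

# Hypothetical 250 m x 400 m field sampled on a 100 m grid
points = grid_sample_points(250, 400, 100)
print(len(points), "sampling points:", points)
```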

Visualizing Workflows and Relationships

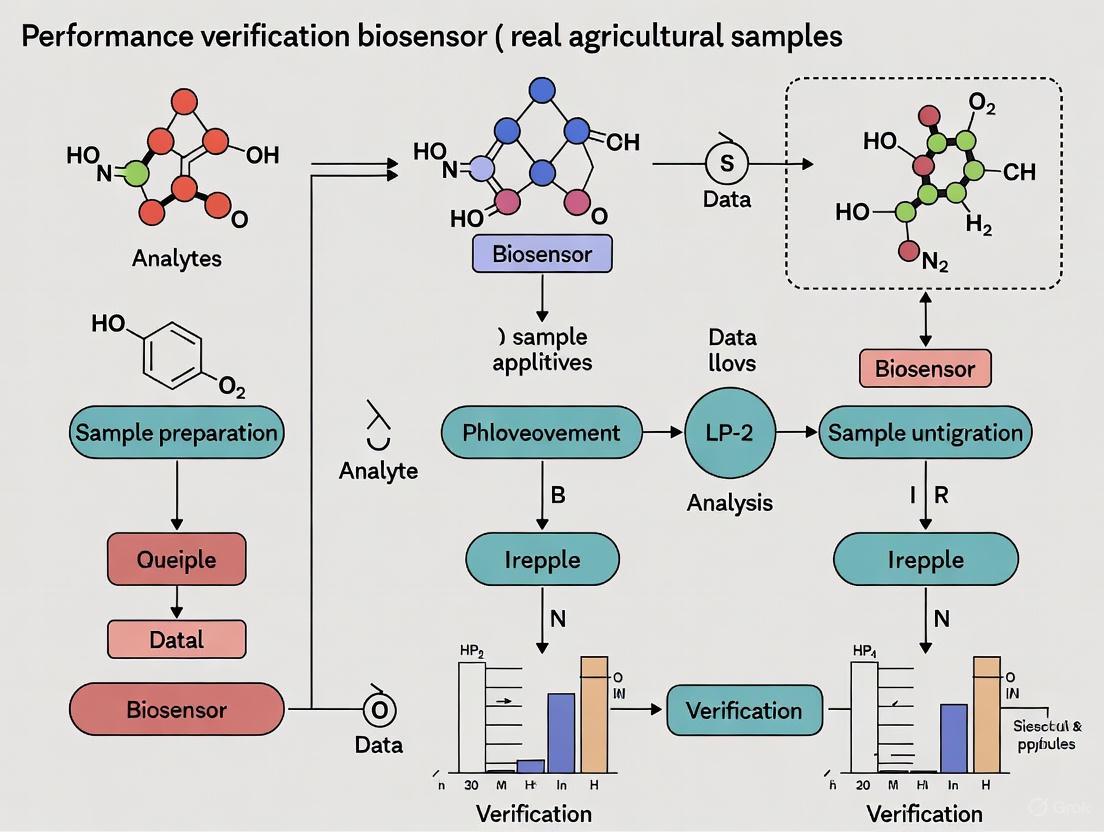

The following diagrams illustrate key workflows and logical relationships in agricultural performance verification.

Agricultural Verification Workflow

Data Standardization Challenge

The Scientist's Toolkit: Key Research Reagent Solutions

For researchers designing experiments involving real agricultural samples, having the right tools is paramount for ensuring verifiable results. The following table details essential solutions and their functions.

Table 2: Essential Research Tools for Agricultural Sample Verification

| Research Tool / Solution | Primary Function | Role in Performance Verification |
|---|---|---|
| Agronomic Trial Management Software | Centralized platform for planning, operating, and analyzing field trials [9]. | Provides standardized data parameters and governance, ensuring consistency and auditability across all trial operations. |
| Automated Metadata Collection App | Smartphone-based framework for capturing spatiotemporal context and operational data [10]. | Reduces human error, creates validated activity logs, and ensures the "who, what, where, when" of field operations is intact for later analysis. |
| Portable Sensor & Testing Kits | In-field analysis of soil nutrients, water quality, and crop health [12]. | Enables real-time, on-site measurement validation and rapid response, bypassing the delays of lab analysis. |
| Cloud-Based Data Analytics Platform | System for collaborative data sharing, analysis, and visualization [9]. | Facilitates cross-trial analysis and the use of predictive models to verify trends and patterns against larger datasets. |
| Geofencing Technology | Virtual perimeter that triggers an automated response when a device enters/exits [10]. | Automatically verifies that data collection and field activities occur at the correct, pre-defined locations. |

The verification of performance in real agricultural samples remains a formidable challenge due to the sector's inherent variability and complexity. However, the evolution from traditional, manual methods toward integrated, data-driven platforms marks a significant advancement. These modern solutions, which emphasize data standardization, automated metadata capture, and sophisticated analytics, are directly addressing the core verification hurdles. By adopting the detailed experimental protocols and tools outlined in this guide, researchers and agricultural professionals can enhance the reliability, efficiency, and impact of their work, ultimately driving innovation in global agriculture.

Key Metrics and Dimensions for Holistic Assessment

Evaluating agricultural performance requires moving beyond singular productivity metrics to a multidimensional framework that captures economic, environmental, and social dimensions. In agricultural research, particularly when verifying the performance of real samples such as crop varieties or management practices, a holistic assessment is critical for understanding true system impacts. This approach recognizes that agricultural systems are complex and interconnected, where improvements in one dimension (e.g., yield) may create trade-offs in others (e.g., environmental sustainability) [13]. The transformation toward sustainable agrifood systems depends on assessment methods that can capture these interactions and support informed decision-making for researchers, policymakers, and practitioners.

Performance verification in research on agricultural samples, whether for new crop cultivars, sustainable farming practices, or innovative technologies, increasingly demands this comprehensive perspective. While traditional research often prioritized yield and economic metrics, contemporary approaches must balance these with environmental impacts, resource efficiency, and social considerations [14]. This guide compares the key dimensions, metrics, and methodologies for holistic performance assessment, providing researchers with structured frameworks for comprehensive evaluation of agricultural innovations using real-world samples and experimental data.

Theoretical Frameworks for Holistic Assessment

Core Characteristics of Holistic Assessment

A truly holistic assessment extends beyond simply measuring multiple dimensions. Based on a systematic review of 206 assessment approaches, four key characteristics define holistic systems assessment [13]:

- Multidimensional Performance Measurement: Assessing performance across environmental, economic, and social domains simultaneously, moving beyond isolated single-dimension evaluation.

- Multiple Stakeholder Perspectives: Incorporating viewpoints from various actors in the food system, recognizing that different stakeholders may assess performance differently.

- Evaluation of Emergent System Properties: Examining properties that arise from system interactions rather than from individual components alone.

- Analysis of Synergies and Trade-offs: Collecting and presenting data to reveal interactions between metrics, enabling better understanding of system dynamics when designing solutions.

This comprehensive framing addresses the limitations of conventional assessments that focus predominantly on productivity and economic outcomes while neglecting social dimensions and system properties [13]. The holistic approach is particularly valuable for comparing alternative agricultural systems, such as conventional versus agroecological practices, where the full benefits and trade-offs may only become apparent through multidimensional assessment.

Performance Dimensions and Indicator Categories

Agricultural performance assessment frameworks typically organize indicators into several interconnected dimensions. Table 1 summarizes the primary dimensions and their corresponding indicator categories used in holistic assessment.

Table 1: Key Dimensions and Indicator Categories for Holistic Agricultural Assessment

| Dimension | Indicator Categories | Specific Metric Examples |
|---|---|---|
| Productivity & Efficiency [14] | Yield Efficiency; Labor Efficiency; Equipment Utilization; Resource Use Efficiency | Yield per acre; Output per labor hour; Machine downtime versus utilization; Water usage per unit output |
| Economic & Financial [14] | Profitability; Cost Structure; Financial Health; Market Performance | Net income per acre; Cost of production per unit; Debt-to-asset ratio; Gross margin per unit |
| Environmental & Resource Management [14] [15] | Soil Health; Water Management; Biodiversity & Ecosystem Services; Climate Impact | Soil organic matter; Water usage efficiency; Habitat diversity; Carbon footprint |
| Quality & Safety [14] | Product Quality; Food Safety; Animal Welfare (where applicable) | Protein content, baking quality; Contaminant levels, mycotoxins; Mortality rates, disease incidence |
| Social & Governance [15] | Social Equity; Community Benefits; Governance; Knowledge & Innovation | Labor conditions; Local employment generation; Decision-making processes; Investment in agricultural knowledge systems |

The integration of these dimensions creates a comprehensive picture of agricultural system performance. While many existing assessments now incorporate multiple dimensions, most still neglect the critical analysis of synergies, trade-offs, and emergent properties [13]. For instance, research on wheat breeding has demonstrated significant negative correlations between grain yield and nutritional quality, illustrating the type of trade-offs that holistic assessment can reveal [16].

Experimental Approaches for Multidimensional Assessment

Methodologies for Field-Based Performance Trials

Robust experimental design is fundamental for credible performance verification of agricultural samples. Well-structured field trials follow standardized protocols to ensure reproducibility and comparability of results across different environments and growing conditions [17]. The Ohio Wheat Performance Test provides an exemplary methodology for multidimensional crop assessment, incorporating the following experimental protocols [17]:

Site Selection and Replication: Trials are conducted across multiple geographically diverse locations (typically 5+ sites) with different soil types and microclimates to account for environmental variability. Each location utilizes a randomized complete block design with four replications per site to reduce spatial variability effects.

Standardized Plot Management: Plots consist of 7 rows spaced 7.5 inches apart and 25 feet long. Cultural practices including planting dates, fertilization, and pest management are standardized across sites while following regional recommended practices. Planting occurs within a defined window relative to biological benchmarks (e.g., within 17 days of the fly-free date for wheat).

Data Collection Protocols: Researchers collect comprehensive data across multiple dimensions:

- Yield: Harvested grain weight converted to bushels per acre at standardized moisture content (13.5%)

- Agronomic Traits: Plant height, lodging percentage, heading date

- Disease Resistance: Visual assessment of major diseases (e.g., Fusarium head blight, powdery mildew) under natural and inoculated conditions using standardized rating scales

- Quality Parameters: Test weight, protein content, milling yield, baking characteristics

- Environmental Data: Soil characteristics, weather conditions, input applications
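The yield conversion in the first data-collection step can be sketched as follows. It assumes the harvested area equals the full plot described above (7 rows × 7.5 in × 25 ft, taking plot width as rows × row spacing), the standard 60 lb wheat bushel, and a dry-matter adjustment to 13.5% moisture; the harvested area and procedure actually used in [17] may differ, and the plot weight is hypothetical.

```python
SQ_FT_PER_ACRE = 43_560
LB_PER_BUSHEL_WHEAT = 60  # standard bushel weight for wheat

def plot_area_sq_ft(rows=7, row_spacing_in=7.5, length_ft=25.0):
    """Plot area, assuming width = number of rows x row spacing."""
    return rows * row_spacing_in / 12 * length_ft

def yield_bu_per_acre(grain_lb, harvest_moisture_pct, area_sq_ft, standard_moisture_pct=13.5):
    """Convert plot grain weight to bushels per acre at the standard moisture content."""
    # Keep dry matter constant and re-express the weight at the standard moisture
    adjusted_lb = grain_lb * (100 - harvest_moisture_pct) / (100 - standard_moisture_pct)
    return adjusted_lb / LB_PER_BUSHEL_WHEAT * SQ_FT_PER_ACRE / area_sq_ft

area = plot_area_sq_ft()
# Hypothetical plot: 15.0 lb of grain harvested at 14% moisture
print(f"plot area {area} sq ft, yield {yield_bu_per_acre(15.0, 14.0, area):.1f} bu/acre")
```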

Statistical Analysis: Data analysis includes calculation of least significant difference (LSD) values to determine statistical significance between treatments or varieties. Multi-year and multi-location analyses provide more reliable performance estimates than single-season, single-location trials.

This comprehensive approach to performance verification ensures that results reflect real-world conditions while enabling meaningful comparisons between agricultural samples.
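The LSD comparison described under Statistical Analysis can be sketched for two means with equal replication as LSD = t × sqrt(2 × MSE / r), where MSE is the error mean square from the ANOVA and r the number of replications behind each mean. The values below are hypothetical: an MSE of 25 (bu/acre)², four replications, and 30 error degrees of freedom, with 2.042 as the two-sided 5% t value for 30 df from standard tables (`scipy.stats.t.ppf(0.975, 30)` returns the same value if SciPy is available). Published multi-location tests use their own error terms, so this is illustrative only.

```python
import math

def fisher_lsd(mse, reps, t_crit):
    """Least significant difference between two means, each based on `reps` observations."""
    return t_crit * math.sqrt(2 * mse / reps)

def significantly_different(mean_a, mean_b, lsd):
    """Two means differ at the chosen level if their gap exceeds the LSD."""
    return abs(mean_a - mean_b) > lsd

# Hypothetical single-location test: MSE = 25, 4 replications, 30 error df
lsd = fisher_lsd(mse=25.0, reps=4, t_crit=2.042)  # t(0.975, 30 df) from a t table
print(f"LSD(0.05) = {lsd:.1f} bu/acre")
print("98 vs 90 bu/acre differ:", significantly_different(98.0, 90.0, lsd))
print("98 vs 93 bu/acre differ:", significantly_different(98.0, 93.0, lsd))
```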

Assessment Workflow for Holistic Evaluation

The following diagram illustrates the integrated workflow for holistic performance assessment of agricultural samples, from experimental design through data interpretation:

Diagram: Holistic Assessment Workflow for Agricultural Samples

This workflow emphasizes the sequential yet integrated nature of holistic assessment, where data from multiple dimensions are collected systematically and analyzed to reveal interactions and trade-offs.

Comparative Performance Data for Agricultural Systems

Wheat Cultivar Assessment Case Study

Large-scale experimental data from wheat breeding programs provides valuable insights into the practical application of holistic assessment. Research evaluating 282 bread wheat cultivars across seven decades of breeding examined 63 different traits related to agronomy, quality, and nutrients [16]. The findings demonstrate both the feasibility and necessity of multidimensional assessment for meaningful performance verification.

Table 2 presents selected data from this comprehensive study, illustrating performance trends and correlations across different trait categories:

Table 2: Wheat Performance Trends Across Multiple Dimensions (Based on 282 Cultivars) [16]

| Trait Category | Specific Trait | Performance Trend | Key Correlations / Notes |
|---|---|---|---|
| Agronomic Traits | Grain yield | Significant improvement over decades | Negative correlation with protein content |
| Agronomic Traits | Plant height | Reduction over time | Negative correlation with lodging |
| Agronomic Traits | Disease resistance | Substantial improvement | Positive correlation with yield stability |
| Quality Traits | Protein content | Slight decrease over time | Negative correlation with grain yield |
| Quality Traits | Sedimentation volume | Improvement for baking quality | Positive correlation with loaf volume |
| Quality Traits | Falling number | Maintained or slightly improved | Associated with starch quality |
| Nutritional Traits | Mineral content | Slight decrease over time | Negative correlation with grain yield |
| Nutritional Traits | Sugar content | Variable by specific compound | Low to moderate heritability |
| Nutritional Traits | Oligosaccharides | Variable by specific compound | Low heritability for some compounds |

This multidimensional analysis revealed significant negative correlations between grain yield and both baking quality and mineral content, highlighting critical trade-offs that breeders must navigate [16]. Such findings underscore the importance of holistic assessment rather than single-trait optimization in agricultural research.

Regional Performance Trial Data

Regional performance trials provide another valuable source of comparative data for agricultural samples. The Ohio Wheat Performance Test evaluates numerous varieties across multiple locations, generating comprehensive data on yield, quality, and disease resistance [17]. Table 3 summarizes key findings from the 2025 trial, demonstrating the range of performance across varieties:

Table 3: Ohio Wheat Performance Test Results (2025) - Selected Varietal Data [17]

| Performance Dimension | Measurement Method | Range Across Varieties | Statistical Significance (LSD) |
|---|---|---|---|
| Grain Yield | Bushels per acre at 13.5% moisture | 42.7-116.6 bu/acre | Varies by location (4.8-9.1 bu/acre) |
| Test Weight | Pounds per bushel | 53.8-58.1 lb/bu | 0.3-0.7 lb/bu |
| Disease Resistance | Visual rating scales (% infection) | 5-95% for leaf blight; susceptible to resistant for Fusarium head blight (FHB) | Qualitative categories |
| Quality Parameters | Flour yield percentage; flour softness | Varies by variety | Not specified |

These regional trials highlight the significant variability in performance across different environments and the importance of multi-location testing for robust conclusions. The data provides researchers with comparative information for selecting varieties best suited to specific production systems and markets [17].

Research Reagent Solutions for Agricultural Assessment

Essential Tools and Technologies

Comprehensive performance assessment of agricultural samples requires specialized research reagents, equipment, and methodologies. The following table details key solutions used in advanced agricultural research:

Table 4: Research Reagent Solutions for Agricultural Performance Assessment

| Research Solution | Function/Application | Specific Use Cases | References |
|---|---|---|---|
| Near-Infrared Spectroscopy (NIR) | Rapid determination of protein content, moisture, and other composition parameters | High-throughput screening of grain quality traits in breeding programs | [16] |
| Falling Number Apparatus | Measures α-amylase activity in grain samples through viscometry | Assessment of pre-harvest sprouting damage and baking quality | [16] [18] |
| Solvent Retention Capacity (SRC) Profile | Evaluates flour functionality by measuring solvent absorption | Predicting performance for specific end-uses (cookies, bread, cakes) | [18] |
| X-ray Fluorescence (XRF) Detection | Rapid elemental analysis for mineral content | High-throughput measurement of nutritional quality traits in breeding programs | [16] |
| Disease Screening Assays | Inoculated disease nurseries with mist irrigation | Controlled evaluation of disease resistance under high pressure | [17] |
| Rapid Visco-Analyzer (RVA) | Measures pasting properties of flour-water suspensions | Starch quality assessment for various food applications | [16] |
| Molecular Markers | DNA-based markers linked to traits of interest | Marker-assisted selection for complex traits in breeding programs | [16] |

These research solutions enable precise, efficient measurement of the diverse traits included in holistic assessment frameworks. The trend in agricultural research is toward developing faster, more accurate methods that can handle the high throughput needed for effective selection in breeding programs and quality verification in production systems [16].

Analysis of Synergies and Trade-offs in Agricultural Systems

Integration of Multiple Performance Dimensions

A critical component of holistic assessment is analyzing how different performance dimensions interact within agricultural systems. Research consistently demonstrates that optimizing for a single metric often creates trade-offs in other dimensions. The correlation network analysis from wheat research visually represents these complex relationships between agronomic, quality, and nutritional traits [16].

The following diagram illustrates the key relationships and trade-offs between different performance dimensions in agricultural systems:

Diagram: Key Relationships Between Agricultural Performance Dimensions

These relationship patterns demonstrate why holistic assessment is essential for sustainable agricultural innovation. For instance, the well-documented negative correlation between grain yield and protein/nutritional content presents a significant challenge for breeding programs [16]. Similarly, tensions between productivity and environmental impacts require careful management through innovative practices that can maintain yields while reducing environmental footprints.

Methodologies for Trade-off Analysis

Advanced statistical methods enable researchers to quantify and analyze these trade-offs:

- Correlation Network Analysis: Mapping relationships between multiple traits to identify clusters of associated characteristics and potential conflicts [16]

- Multivariate Analysis: Techniques such as principal component analysis that can visualize the positioning of different agricultural systems or varieties across multiple performance dimensions

- Economic-Environmental Trade-off Analysis: Calculating the economic costs of environmental improvements or the environmental costs of economic gains

- Multi-criteria Decision Analysis: Formal frameworks for evaluating alternatives when multiple, often conflicting, criteria must be considered simultaneously

These analytical approaches help researchers and decision-makers navigate the complex trade-offs inherent in agricultural systems and identify solutions that offer the best balance across multiple performance dimensions.

Holistic assessment of agricultural performance requires integrated evaluation across productivity, economic, environmental, and social dimensions. The frameworks, methodologies, and data presented in this guide provide researchers with evidence-based approaches for comprehensive performance verification of agricultural samples. By adopting these multidimensional assessment strategies, agricultural scientists can generate more meaningful comparisons between alternatives, identify significant trade-offs, and contribute to the development of truly sustainable agricultural systems.

The future of agricultural research will increasingly demand this holistic perspective as stakeholders recognize the interconnectedness of agricultural outcomes. Continuing to refine assessment methodologies, develop new research tools, and implement integrated analysis will be essential for addressing the complex challenges facing global food systems while meeting productivity, sustainability, and nutritional goals.

The Critical Role of Cross-Validation and Data Structure

In data-driven agricultural research, the reliability of a predictive model is not determined solely by the algorithm chosen but by the rigor of its validation. The structure of agricultural data—often spatial, temporal, and hierarchical—poses unique challenges that conventional random validation methods fail to address, leading to over-optimistic performance estimates and models that break down when deployed in real-world settings. This guide objectively compares cross-validation (CV) strategies, demonstrating that the choice of validation method can have a greater impact on real-world performance than the choice of model itself. Framed within the critical thesis of performance verification using real agricultural samples, we provide experimental data and protocols to guide researchers toward more robust and generalizable model evaluation.

Comparative Analysis of Cross-Validation Strategies

The following table summarizes the performance outcomes of various cross-validation strategies when applied to real agricultural prediction tasks, highlighting the critical influence of data structure on model generalizability.

Table 1: Comparison of Cross-Validation Strategies on Agricultural Prediction Performance

| Cross-Validation Strategy | Key Principle | Application Context in Agriculture | Reported Impact on Predictive Performance |
|---|---|---|---|
| Random k-Fold CV | Randomly splits the entire dataset into k folds. | Common baseline method; assumes data is independent and identically distributed. | Poor error tracking for out-of-distribution data; creates over-optimistic performance estimates [19] [20]. |
| Spatial CV (e.g., Cluster-Based) | Splits data based on spatial clusters to keep locations together. | Yield prediction using UAV remote sensing; managing spatial autocorrelation. | Provides a more realistic expectation of model performance when applied to new, unseen spatial domains (e.g., new fields) [19]. |
| Leave-One-Field-Out CV | Uses all data from one entire field as the test set. | Multi-field experiments; evaluating model transferability across distinct geographic locations. | Yields better predictive performance on independent test fields compared to random CV, ensuring robust extrapolation [19]. |
| Farm-Fold CV | Uses all data from one entire farm as the test set. | Animal health monitoring (e.g., lameness detection) using accelerometer data from multiple farms. | Likely to give a more robust, realistic estimate of general model performance across different farms, preventing overfitting to farm-specific conditions [21]. |
| k-Fold n-Step Forward CV | Sorts data by a key property (e.g., logP) and uses time-series-like forward validation. | Mimics real-world optimization of molecular structures in drug discovery for agriculture-relevant compounds (e.g., biopesticides) [22]. | More helpful than conventional CV in describing real-world accuracy and applicability for out-of-distribution data [22]. |
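The forward-stepping idea in the last row of the table also applies to other ordered properties, such as sampling year. The sketch below is one minimal interpretation, not the implementation used in [22]. It assumes equal-size contiguous blocks and trains on all blocks below the test block. The function name is hypothetical.

```python
def forward_splits(keys: list[float], k: int):
    """Yield (train_idx, test_idx) for a sorted, forward-stepping validation.

    Samples are ordered by `keys` (e.g., logP or harvest year) and cut into k
    contiguous blocks; split i trains on blocks 0..i and tests on block i + 1,
    so every test block lies at or above the key range seen during training.
    """
    n = len(keys)
    if not 2 <= k <= n:
        raise ValueError("need 2 <= k <= number of samples")
    order = sorted(range(n), key=lambda i: keys[i])
    bounds = [j * n // k for j in range(k + 1)]  # integer cut points, no empty blocks
    blocks = [order[bounds[j]:bounds[j + 1]] for j in range(k)]
    for i in range(k - 1):
        train = [idx for block in blocks[: i + 1] for idx in block]
        yield train, blocks[i + 1]


keys = [3.1, 0.2, 2.2, 1.5, 4.0, 0.9]  # hypothetical property values
for train, test in forward_splits(keys, k=3):
    print("train:", train, "test:", test)
```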

Experimental Protocols for Robust Validation

To ensure the validity and applicability of research findings, it is essential to follow experimentally robust protocols. Below are detailed methodologies for key validation experiments cited in this guide.

Protocol: Evaluating Model Transferability with Spatial and Leave-One-Field-Out CV

This protocol is based on experiments evaluating UAV-based soybean yield prediction models [19].

- 1. Objective: To establish and validate a yield prediction model that is robust and transferable across different spatial domains (fields).

- 2. Materials & Data Collection:

- Platform: Unmanned Aerial Vehicle (UAV).

- Sensors: Multispectral or hyperspectral sensors.

- Data Output: High-resolution imagery used to calculate a suite of Vegetation Indices (VIs).

- Ground Truth: Georeferenced yield monitor data collected from multiple fields.

- 3. Model Training:

- Feature Set: Use derived VIs as features for the model.

- Algorithms: Train multiple models, including Random Forest, XGBoost, LASSO regression, and a stacked ensemble of these learners.

- 4. Cross-Validation & Testing:

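- Random k-Fold CV: Implement as a baseline. Randomly partition the dataset into k folds (e.g., k = 5, which gives 80/20 training/test splits). Train on k - 1 folds and test on the held-out fold, rotating until every fold has served once as the test set.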

- Spatial CV: Perform clustering (e.g., k-means) on the spatial coordinates of the data points. Assign entire clusters to different folds for testing.

- Leave-One-Field-Out CV: Designate all data points from a single, entire field as the test set, using data from all other fields for training. Repeat this for every field.

- Independent Test: Finally, evaluate all models trained under the different CV strategies on a completely independent field not used in any previous step.

- 5. Analysis: Compare the performance metrics (e.g., R², RMSE) of the models from the different CV strategies on the independent test set. The strategy whose performance metrics most closely match the final independent test performance is the most reliable for extrapolation objectives [19].
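The spatial CV step in the protocol above can be sketched with plain k-means on the sample coordinates. This dependency-free sketch is not the exact procedure of [19]. Its assumptions are farthest-first initialisation (a deterministic alternative to the k-means++ seeding that scikit-learn's `KMeans` uses by default), treating each cluster as one fold, and hypothetical function names. Coordinates should be in a projected, metric system, because Euclidean distance on raw latitude/longitude is distorted.

```python
import math


def kmeans_labels(coords: list[tuple[float, float]], k: int,
                  max_iter: int = 100) -> list[int]:
    """Cluster projected (x, y) coordinates with Lloyd's k-means.

    Uses farthest-first initialisation; the returned cluster labels are used
    directly as fold ids, so spatially autocorrelated neighbours share a fold.
    """
    if not 1 <= k <= len(coords):
        raise ValueError("need 1 <= k <= number of points")
    centers = [coords[0]]
    while len(centers) < k:
        # next centre: the point farthest from all centres chosen so far
        centers.append(max(coords, key=lambda p: min(math.dist(p, c) for c in centers)))
    labels: list[int] = []
    for _ in range(max_iter):
        new_labels = [min(range(k), key=lambda c: math.dist(p, centers[c])) for p in coords]
        if new_labels == labels:
            break  # assignments are stable, so the clustering has converged
        labels = new_labels
        for c in range(k):
            members = [p for p, lab in zip(coords, labels) if lab == c]
            if members:  # an empty cluster keeps its previous centre
                centers[c] = (sum(x for x, _ in members) / len(members),
                              sum(y for _, y in members) / len(members))
    return labels


def cluster_splits(labels: list[int]):
    """Yield (train_idx, test_idx), holding out one spatial cluster at a time."""
    for g in sorted(set(labels)):
        test = [i for i, lab in enumerate(labels) if lab == g]
        train = [i for i, lab in enumerate(labels) if lab != g]
        yield train, test


# Hypothetical sample locations in metres: two blocks about 140 m apart.
coords = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0),
          (100.0, 100.0), (100.0, 101.0), (101.0, 100.0), (101.0, 101.0)]
labels = kmeans_labels(coords, k=2)
for train, test in cluster_splits(labels):
    print("train:", train, "test:", test)
```

Farthest-first seeding is sensitive to isolated outlier points, so screen the coordinates before clustering. Passing field identifiers instead of cluster labels to `cluster_splits` gives the leave-one-field-out variant.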

Protocol: Assessing Generalizability with Farm-Fold Cross-Validation

This protocol is derived from research on automated lameness detection in dairy cattle using accelerometer data [21].

- 1. Objective: To develop a machine learning model for detecting foot lesions in dairy cows that performs reliably across different herds and farms.

- 2. Materials & Data Collection:

- Sensors: 3-axis accelerometers (e.g., AX3 Logging accelerometer) attached to a hind limb of dairy cows.

- Population: 383 dairy cows from 11 commercial, pasture-based dairy herds.

- Data: Continuous recording of accelerometer data in 3 perpendicular axes (x, y, z) over a trial period.

- Ground Truth: Binary outcome for severe foot lesions, determined by standardized clinical assessment of each claw by veterinarians.

- 3. Data Preprocessing:

- Data Reduction: To ease computational cost, sub-sample the high-frequency data (e.g., retain one measurement per 30 seconds).

- Standardization: Standardize each feature to have a mean of zero and a standard deviation of one.

- Dimensionality Reduction: Apply techniques like Principal Component Analysis (PCA) or functional PCA (fPCA) to the high-dimensional accelerometer data to reduce the number of features while retaining key information [21].

- 4. Model Training & Validation:

- Apply machine learning models (e.g., Random Forests) to both the raw data and the dimensionally-reduced data.

- n-Fold CV (nCV): Perform a standard k-fold cross-validation where data from all farms are randomly mixed and split into folds.

- Farm-Fold CV (fCV): Hold out all data from one entire farm as the test set, and use data from the remaining 10 farms for training. Repeat this process for each farm.

- 5. Analysis: Compare the performance metrics (e.g., AUC, accuracy) between the nCV and fCV approaches. The fCV approach will provide a more realistic and conservative estimate of how the model is expected to perform when deployed on a new, previously unseen farm [21].
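The difference between nCV and fCV in the protocol above comes down to how rows are grouped into folds. This minimal, dependency-free sketch of farm-fold splitting fits the standardisation from step 3 on the training farms only. That within-fold fitting is an assumption of the sketch, though it is standard practice to avoid leaking information from the held-out farm, and PCA or fPCA should be handled the same way. Function names and data are hypothetical. In scikit-learn, `LeaveOneGroupOut` combined with a `Pipeline` would typically cover the same ground.

```python
from statistics import mean, pstdev


def leave_one_group_out(groups: list[str]):
    """Yield (held_out_group, train_idx, test_idx), one split per farm or field."""
    for g in sorted(set(groups)):
        test = [i for i, x in enumerate(groups) if x == g]
        train = [i for i, x in enumerate(groups) if x != g]
        yield g, train, test


def standardize_fold(X: list[list[float]], train: list[int], test: list[int]):
    """Z-score every feature using means and SDs from the training rows only."""
    n_feat = len(X[0])
    mus = [mean([X[i][j] for i in train]) for j in range(n_feat)]
    # a constant training feature gets SD 1.0 to avoid dividing by zero
    sds = [pstdev([X[i][j] for i in train]) or 1.0 for j in range(n_feat)]

    def scale(rows: list[int]) -> list[list[float]]:
        return [[(X[i][j] - mus[j]) / sds[j] for j in range(n_feat)] for i in rows]

    return scale(train), scale(test)


# Hypothetical summary features (e.g., mean and SD of one accelerometer axis)
# for six cows on three farms.
X = [[1.0, 10.0], [2.0, 12.0], [3.0, 11.0], [4.0, 13.0], [50.0, 90.0], [52.0, 95.0]]
farms = ["A", "A", "B", "B", "C", "C"]
for farm, train, test in leave_one_group_out(farms):
    X_train, X_test = standardize_fold(X, train, test)
    # fit the classifier on X_train and score it on X_test here
    print(farm, [round(v, 1) for v in X_test[0]])
```

After scaling, the held-out farm C sits far from the training farms. That is the kind of domain shift that random nCV hides, because rows from every farm appear in every training fold.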

The following workflow diagram synthesizes these protocols into a unified process for developing and validating robust predictive models in agricultural research.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational tools and methodological solutions essential for conducting rigorous cross-validation studies in agricultural science.

Table 2: Essential Research Toolkit for Cross-Validation in Agricultural Science

| Tool / Solution | Category | Primary Function | Relevance to Robust Validation |
|---|---|---|---|
| Scikit-learn [22] | Software Library | Provides a unified interface for a wide range of machine learning models and utilities. | Offers standard k-fold CV and group-aware splitters (e.g., GroupKFold, LeaveOneGroupOut) that can implement leave-one-field-out or farm-fold CV; foundational for building custom validation splitters (e.g., spatial). |
| R Statistical Software [21] | Software Environment | A comprehensive environment for statistical computing and graphics. | Used for complex data manipulation, statistical analysis, and implementing specialized validation strategies like farm-fold CV. |
| Stratified Cross-Validation [23] | Methodological Technique | Ensures that each fold of the data has the same proportion of outcome classes as the whole dataset. | Critical for classification problems with imbalanced classes (e.g., rare disease detection) to prevent biased performance estimates. |
| Principal Component Analysis (PCA) [21] | Dimensionality Reduction | Reduces the number of features in a high-dimensional dataset (e.g., accelerometer data) while preserving variance. | Mitigates overfitting in models trained on wide data (many features, few samples), leading to more generalizable results. |
| Functional PCA (fPCA) [21] | Dimensionality Reduction | An extension of PCA designed specifically for time-series or functional data. | Retains key temporal patterns in sensor data, improving model performance for dynamic agricultural processes. |
| Nested Cross-Validation [23] | Methodological Technique | Uses an outer loop for performance estimation and an inner loop for model/hyperparameter selection. | Reduces optimistic bias introduced when using the same data for both model tuning and final performance assessment. |
| Spatial Clustering Algorithms [19] | Preprocessing Tool | Groups data points based on their geographic coordinates (e.g., k-means clustering). | Enables the creation of folds for spatial cross-validation, which is essential for realistic estimation of model transferability to new locations. |
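Stratified cross-validation, listed in the table, can be sketched by dealing each class's samples round-robin across the folds. This is a minimal sketch with hypothetical names and data. scikit-learn implements the same idea as `StratifiedKFold`, and `StratifiedGroupKFold` combines it with grouping by farm or field.

```python
import random
from collections import defaultdict


def stratified_fold_ids(labels: list, k: int, seed: int = 0) -> list[int]:
    """Return a fold id (0..k-1) per sample so each fold keeps the class mix."""
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    rng = random.Random(seed)
    fold_of = [0] * len(labels)
    offset = 0
    for y in sorted(by_class, key=str):
        members = by_class[y]
        rng.shuffle(members)  # random assignment within each class
        for pos, i in enumerate(members):
            fold_of[i] = (pos + offset) % k
        offset += len(members)  # continue the rotation so leftovers spread across folds
    return fold_of


# Hypothetical imbalanced outcome: 10 cows with severe lesions (1) among 100.
labels = [1] * 10 + [0] * 90
folds = stratified_fold_ids(labels, k=5)
for f in range(5):
    members = [i for i, fo in enumerate(folds) if fo == f]
    print(f, len(members), sum(labels[i] for i in members))
```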

The experimental data and protocols presented in this guide point to a clear conclusion: in agricultural research, random data splitting is inadequate for estimating how a model will perform on new fields, farms, or seasons. As evidenced by studies in crop yield prediction [19] and animal health monitoring [21], spatially aware and group-based cross-validation strategies (e.g., leave-one-field-out, farm-fold CV) are necessary practices rather than academic exercises. They provide a critical reality check, exposing models to the variation actually encountered across agricultural systems and preventing the deployment of overfitted, unreliable tools. For researchers committed to performance verification with real agricultural samples, adopting these structured validation methods should be the default standard for ensuring that predictive models deliver genuine value and robustness in practice.

From Theory to Field: Methodologies for Effective Verification in Agricultural Samples

In agricultural and environmental research, the analysis of complex samples like soil, water, and crops presents significant analytical challenges. These matrices contain numerous interfering compounds that can compromise accuracy, making robust verification protocols for sample selection and preparation not merely beneficial but essential for data integrity. The primary goal of such protocols is to ensure that analytical methods consistently produce reliable, accurate, and reproducible results for monitoring pesticides, herbicides, and other agrochemicals in real-world samples [24]. This guide objectively compares leading sample preparation techniques, providing the experimental data and methodological details needed for researchers to design verification protocols that stand up to scientific and regulatory scrutiny.

A well-designed verification protocol establishes documented evidence that provides a high degree of assurance that a specific process will consistently produce results meeting predetermined specifications and quality attributes [25]. In the context of agricultural samples, this involves rigorous testing of precision, accuracy, linear range, detection limit, and reportable range specific to the matrix being analyzed [25].

Core Principles of Analytical Verification

Before comparing specific techniques, it is crucial to establish the fundamental principles that underpin any verification protocol. Verification and validation, though sometimes used interchangeably, serve distinct purposes. Verification confirms through objective evidence that specified requirements have been fulfilled—answering "Did we implement the method correctly?" In contrast, validation provides objective evidence that the method meets the needs for its intended use—answering "Did we develop the right method for our analytical problem?" [26].

For analytical methods applied to agricultural samples, verification must confirm several key performance characteristics [25]:

- Precision: The closeness of agreement between independent test results obtained under stipulated conditions. This includes repeatability (within-run) and intermediate precision (long-term, inter-assay).

- Accuracy: The agreement between the test result and an accepted reference value or the closeness of the measured value to the true value.

- Linearity and Reportable Range: The ability of the method to obtain test results directly proportional to the concentration of analyte in the sample within a given range, including the Analytical Measurement Range (AMR) and Clinically Reportable Range (CRR).

- Limit of Detection (LOD) and Limit of Quantitation (LOQ): The lowest amount of analyte that can be detected and reliably quantified, respectively.

- Analytical Specificity: The ability of the method to measure the analyte unequivocally in the presence of other components, including interferents.

Comparative Analysis of Sample Preparation Techniques

Sample preparation is the most critical pre-analytical step for complex agricultural matrices. The ideal technique effectively extracts target analytes while minimizing co-extraction of interfering compounds, is compatible with the analytical instrument, and offers practical efficiency for the laboratory's throughput needs. For pesticide analysis in food and feed, multi-residue methods that can comprehensively screen for hundreds of compounds are increasingly essential for cost-effective monitoring [27].

The following table compares the primary sample preparation techniques used in agricultural and environmental analysis, based on their application to herbicide and pesticide extraction.

Table 1: Comparison of Sample Preparation Techniques for Agricultural Analysis

| Technique | Key Principle | Optimal Use Cases | Advantages | Limitations |
|---|---|---|---|---|
| QuEChERS [27] | Quick, Easy, Cheap, Effective, Rugged, and Safe extraction using acetonitrile partitioning and dispersive SPE cleanup. | Multi-residue pesticide analysis in food, feed, and soil; high-throughput screening. | Rapid; minimal solvent use; effective for a wide polarity range; easily adaptable. | May require method optimization for different matrices; potential for matrix effects. |
| Solvent Extraction (Dichloromethane) [24] | Extraction with an organic solvent that partitions analytes out of a liquid (liquid-liquid) or solid (solid-liquid) matrix. | Targeted analysis of specific compounds like acetochlor in soil; less complex matrices. | High extraction efficiency for non-polar analytes; simple methodology. | Uses hazardous chlorinated solvents; often requires evaporation and reconstitution; less environmentally friendly. |
| Immunoassay Extraction [24] | Extraction optimized for compatibility with antibody-based detection, often involving buffer reconstitution. | Single-analyte or single-class analysis where high specificity is needed; screening applications. | High specificity; can tolerate some matrix interferences due to antibody specificity. | Primarily for single analytes/classes; requires specialized immunoreagents; limited quantitative scope. |

Performance Data from Experimental Studies

Objective performance data is crucial for selecting a sample preparation technique. A 2025 study on acetochlor analysis in soil provides a direct comparison of QuEChERS and traditional solvent extraction, offering key metrics for verification protocols [24].

Table 2: Experimental Performance Data for Acetochlor Extraction from Soil [24]

| Extraction Technique | Extraction Solvent/Protocol | Average Recovery (%) | Limit of Detection (LOD) in Soil | Working Range in Soil |
|---|---|---|---|---|
| Solvent Extraction | Dichloromethane, evaporation, and reconstitution in phosphate buffer with gelatin. | 74 - 124% | 0.3 µg/g | 0.66 - 5.7 µg/g |
| QuEChERS | Acetonitrile extraction with commercial kit (Copure). | Data reported, but optimal recovery was achieved with the solvent extraction method above. | Not reported here | Not reported here |

This data highlights a critical point for verification: even standardized techniques like QuEChERS may require matrix-specific optimization. The study found that a traditional solvent extraction protocol, followed by careful evaporation and reconstitution in a buffered solution with a stabilizer like gelatin, provided the most effective approach for the immunoassay of acetochlor in gray forest soil, delivering acceptable recovery rates and a well-defined working range [24].

For multi-residue analysis in food commodities, QuEChERS demonstrates robust performance. An application note using Quality Control materials (strawberry purée, baby food, animal feed) showed that a QuEChERS-based LC-MS/MS method for over 200 pesticides met SANTE guideline tolerances. The method demonstrated trueness in the range of 100-130% and all calculated %RSDs (Relative Standard Deviations) were less than 20%, confirming its precision and accuracy for complex matrices [27].

Detailed Experimental Protocols for Verification

Verification of a QuEChERS Protocol for Multi-Residue Analysis

This protocol is adapted from a validated method for pesticide analysis in food and feed using Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) [27].

1. Sample Description and Preparation:

- Obtain representative samples (e.g., 10 g strawberry purée, 5 g cereal-based baby food, 2 g animal feed).

- For homogeneous samples (like purées), proceed directly. For heterogeneous solids, freeze-dry and grind to a fine, homogeneous powder.

2. Extraction (QuEChERS CEN Method):

- Weigh the prepared sample into a 50 mL centrifuge tube.

- Add 10 mL of acetonitrile and shake vigorously for 1 minute.

- Add a pre-packaged salts mixture from a DisQue QuEChERS kit to induce partitioning (the CEN formulation contains MgSO4, NaCl, and citrate buffer salts).

- Shake immediately and vigorously for 1 minute to prevent salt aggregation.

- Centrifuge at >4000 RCF for 5 minutes.

3. Clean-up (For complex matrices like baby food and feed):

- Use a pass-through clean-up with Oasis HLB Plus Short cartridges.

- Load the acetonitrile (upper) layer from the centrifuge tube onto the cartridge and collect the eluent.

4. Analysis:

- Inject 1 µL of the pure acetonitrile extract without dilution. The use of a post-injector extension loop is recommended to improve peak shape for early-eluting compounds.

- Analyze via LC-MS/MS with Multiple Reaction Monitoring (MRM). The analytical column is an ACQUITY Premier HSS T3 (2.1 x 100 mm, 1.8 µm) at 40°C. The mobile phase is (A) water with 0.1% formic acid and 5 mM ammonium formate and (B) a 1:1 mix of methanol and acetonitrile with 0.1% formic acid and 5 mM ammonium formate, run with a gradient.

5. Data Review:

- Use software with exception-focused review (e.g., waters_connect for Quantitation) to automatically flag results outside pre-set tolerances (e.g., SANTE guidelines), increasing review efficiency [27].

The following workflow diagram illustrates the complete QuEChERS process for multi-residue analysis:

Diagram 1: QuEChERS Sample Preparation Workflow

Verification of a Targeted Solvent Extraction Protocol

This protocol is derived from a 2025 study for the extraction of the herbicide acetochlor from soil for immunoenzyme assay [24].

1. Soil Sample Preparation:

- Air-dry the gray forest soil sample at room temperature and sieve it through a 1 mm mesh.

2. Extraction:

- Weigh 1 g of the prepared soil into a glass tube.

- Add 5 mL of dichloromethane.

- Shake the mixture for 30 minutes on a mechanical shaker.

- Centrifuge the sample at 1500 RCF for 5 minutes.

3. Extract Processing for Immunoassay:

- Transfer the organic (dichloromethane) supernatant to a new tube.

- Carefully evaporate the dichloromethane extract to dryness under a stream of nitrogen or in a vacuum evaporator.

- Reconstitute the dry residue in 1 mL of a 10 mM phosphate-buffered solution (pH 7.4) containing 0.1% gelatin. This step is critical for transferring the hydrophobic analyte into an aqueous solution compatible with the immunoassay.

4. Analysis:

- Analyze the reconstituted extract using the developed enzyme immunoassay. The assay uses specific rabbit antibodies against an acetochlor derivative, with detection via horseradish peroxidase-labeled anti-species antibodies and a 3,3',5,5'-tetramethylbenzidine substrate.

Verification Metrics from the Study [24]:

- Recovery: Test the protocol by spiking soil samples with known concentrations of acetochlor. Recoveries obtained in the study ranged from 74% to 124%.

- Limit of Detection (LOD): The LOD for the overall method (including extraction) was determined to be 0.3 µg/g of soil.

- Linearity/Working Range: The working range in soil was verified from 0.66 to 5.7 µg/g.
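The recovery and working-range checks above require converting the assay result back to a soil basis. This minimal sketch takes the study's 1 g soil mass, 1 mL reconstitution volume, LOD, and working range as defaults. It assumes that the whole dichloromethane supernatant is evaporated, with a hypothetical `aliquot_fraction` below 1 covering partial transfer, and that the soil contained no acetochlor before spiking. The function names and example values are hypothetical.

```python
def soil_conc_ug_per_g(extract_ug_per_ml: float,
                       recon_volume_ml: float = 1.0,
                       soil_mass_g: float = 1.0,
                       aliquot_fraction: float = 1.0) -> float:
    """Convert an assay result (ug/mL of reconstituted extract) to ug/g of soil."""
    return extract_ug_per_ml * recon_volume_ml / (soil_mass_g * aliquot_fraction)


def recovery_pct(measured_ug_per_g: float, spiked_ug_per_g: float) -> float:
    """Spike recovery, assuming the unspiked soil contained no acetochlor."""
    return 100.0 * measured_ug_per_g / spiked_ug_per_g


def classify(conc_ug_per_g: float, lod: float = 0.3,
             low: float = 0.66, high: float = 5.7) -> str:
    """Place a soil result relative to the reported LOD and working range."""
    if conc_ug_per_g < lod:
        return "below LOD"
    if conc_ug_per_g < low:
        return "detected, below working range"
    if conc_ug_per_g > high:
        return "above working range"
    return "within working range"


# Hypothetical spike: 2.0 ug/g added to soil, reconstituted extract reads 1.8 ug/mL.
found = soil_conc_ug_per_g(1.8)
print(found, round(recovery_pct(found, 2.0), 1), classify(found))
```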

The Scientist's Toolkit: Essential Research Reagent Solutions

Selecting the right reagents and materials is fundamental to executing a successful verification protocol. The following table details key solutions used in the featured experiments.

Table 3: Essential Research Reagents and Materials for Sample Preparation

| Item | Function in Protocol | Example from Cited Studies |
|---|---|---|
| Acetonitrile (ACN) & Buffers | Primary extraction solvent (ACN) and medium for reconstitution or immunoassay (buffers). | Phosphate buffer (10 mM, pH 7.4) with 0.1% gelatin for reconstitution [24]; acetonitrile for QuEChERS [27]. |
| QuEChERS Kits | Standardized salts and sorbents for extraction and clean-up, ensuring reproducibility. | DisQue QuEChERS kits for extraction [27]; Copure kits for acetochlor extraction [24]. |
| Solid-Phase Extraction (SPE) Cartridges | Clean-up step to remove interfering matrix components from the extract. | Oasis HLB Plus Short cartridges for pass-through clean-up of baby food and animal feed extracts [27]. |
| Analytical Columns | Stationary phase for chromatographic separation of analytes prior to detection. | ACQUITY Premier HSS T3 Column (1.8 µm) for LC-MS/MS separation of pesticides [27]. |
| Antibodies & Conjugates | Key immunoreagents for the specific recognition and detection of target analytes in immunoassays. | Rabbit antibodies specific to acetochlor; horseradish peroxidase-labeled anti-species antibodies [24]. |
| Reference and Quality Control Materials | Materials with certified or assigned analyte concentrations used for method validation, verification, and quality control. | FAPAS Quality Control Materials (strawberry purée, baby food, animal feed) [27]. |

Statistical Considerations for Sample Size and Verification

A robust verification protocol must include statistical rationale for sample size, especially when dealing with natural variations in agricultural samples. Statistical techniques for design verification provide frameworks for making pass/fail decisions based on risk [28].

For quantitative tests, variable sampling plans can be employed. These require fewer samples than attribute (pass/fail) plans—sometimes as few as 15-50 samples, assuming normality—while still providing a high degree of confidence [28]. The required sample size is linked to the confidence statement the verification aims to make. For instance, a common requirement is to demonstrate with 95% confidence that more than 99% of units meet the specification (denoted 95%/99%). The appropriate sampling plan is selected based on the risk level of the product or decision [28].

Table 4: Linking Risk to Statistical Confidence in Verification [28]

| Product Risk/Harm Level | Design Verification Confidence | Reduced Level (e.g., Stress Tests) |
|---|---|---|
| High | 95% Confidence / 99% Reliability | 95% Confidence / 95% Reliability |
| Moderate | 95% Confidence / 97% Reliability | 95% Confidence / 90% Reliability |
| Low | 95% Confidence / 90% Reliability | 95% Confidence / 80% Reliability |
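The cost of an attribute (pass/fail) plan at each level in Table 4 can be estimated with the success-run relation. If n units are tested with zero failures, reliability R is demonstrated at confidence C when R^n ≤ 1 − C. The sketch below assumes a zero-failure attribute plan. It is offered as a comparison point for the variables plans described above, not as the specific procedure of [28].

```python
import math


def zero_failure_sample_size(confidence: float, reliability: float) -> int:
    """Smallest n whose all-pass result demonstrates `reliability` at `confidence`.

    Success-run relation: reliability**n <= 1 - confidence.
    """
    return math.ceil(math.log(1 - confidence) / math.log(reliability))


# Confidence/reliability pairs taken from Table 4
for c, r in [(0.95, 0.99), (0.95, 0.97), (0.95, 0.95), (0.95, 0.90), (0.95, 0.80)]:
    print(f"{c:.0%}/{r:.0%}: n = {zero_failure_sample_size(c, r)}")
```

These pairs require 299, 99, 59, 29, and 14 passing units respectively. This shows why a 95%/99% demonstration is usually made with a variables plan when the measurements are approximately normal.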

In practice, testing 20 replicates of a single sample is a common way to verify intra-assay (within-run) precision. For inter-assay precision, running abnormal samples multiple times per run over at least 5 days is recommended to capture day-to-day variability [25]. For verifying a reference interval, 20 representative samples from healthy individuals are considered sufficient. The interval is accepted if no more than 2 of the 20 results (10%) fall outside the proposed limits [25].
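Both checks reduce to simple arithmetic, sketched below. The helper names are hypothetical. The coefficient of variation is computed as 100 × SD / mean. Applying it to results pooled across days gives a combined within-laboratory CV rather than a formally partitioned between-day component, which would need an ANOVA-style analysis.

```python
from statistics import mean, stdev


def cv_percent(values) -> float:
    """Coefficient of variation in percent: 100 * sample SD / mean."""
    return 100 * stdev(values) / mean(values)


def reference_interval_verified(values, lower, upper, max_outside=2) -> bool:
    """Accept a proposed interval if at most `max_outside` results fall outside [lower, upper]."""
    outside = sum(1 for v in values if v < lower or v > upper)
    return outside <= max_outside
```

For a 20-sample verification, `reference_interval_verified(results, low, high)` implements the "no more than 2 of 20" rule. `cv_percent` can be applied to the 20 intra-assay replicates.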

The following diagram outlines the logical decision process for establishing a statistically sound sample size in a verification protocol:

Diagram 2: Sample Size Selection Logic for Verification

Designing a verification protocol for sample selection and preparation in agricultural research is a systematic process that demands a clear understanding of analytical principles, matrix effects, and statistical rigor. As demonstrated by the comparative data, no single preparation technique is universally superior; the choice between QuEChERS, targeted solvent extraction, or other methods depends on the specific analytes, matrices, and analytical endpoints. A successful protocol is one that is thoroughly documented, provides objective evidence of performance against pre-defined criteria (precision, accuracy, LOD, etc.), and is grounded in a statistical framework appropriate for the decision's risk. By adhering to these principles and leveraging the detailed protocols and comparisons provided, researchers and drug development professionals can ensure their analytical data for real agricultural samples is both reliable and defensible.

Selecting and Benchmarking Against Appropriate Reference Methods

In agricultural research, the selection and validation of appropriate reference methods form the critical foundation for reliable scientific advancement. As the sector increasingly embraces data-driven approaches through Agriculture 4.0 technologies, robust benchmarking protocols ensure that new methodologies generate accurate, reproducible, and actionable insights [29] [4]. Performance verification against validated references separates meaningful innovation from merely novel techniques, particularly when analyzing complex agricultural samples with inherent biological variability.

This guide examines the frameworks, experimental approaches, and analytical considerations essential for selecting and benchmarking reference methods in agricultural research contexts. By addressing both theoretical foundations and practical applications, we provide researchers with structured protocols for establishing method credibility across diverse agricultural testing scenarios.

Theoretical Frameworks for Reference Method Selection

Hierarchical Framework for Reference Measures

A structured approach to reference method selection prioritizes scientific rigor through a hierarchical framework that classifies potential comparators based on key attributes. This system, developed for sensor-based digital health technologies but applicable to agricultural contexts, guides investigators toward the most appropriate reference standard for their specific validation needs [30].

Table 1: Hierarchy of Reference Measures for Method Validation

| Category | Definition | Key Attributes | Examples in Agricultural Context | Rationale for Hierarchy Position |
|---|---|---|---|---|
| Defining | Sets the medical/scientific definition for a physiological process or construct | Objective data capture without human measurement; source data retainable | Polysomnography for sleep staging in animal studies | Highest standard with associated professional guidelines |
| Principal | Directly and objectively measures the physiological process or construct of interest | Objective data capture; possible human analysis of acquired data | Respiration monitoring systems for animal respiratory rate | Superior to manual methods due to elimination of observer bias |
| Manual | Relies on observation or measurement by trained professionals | Can be seen, heard, or felt; may involve equipment; potential for source data retention | Visual assessment of seed germination status | Standardization through trained professionals |
| Reported | Based on reports from patients or observers about health status | Subjective identification or quantification; typically single measurement per timepoint | Grower-reported crop health assessments | Higher subjectivity and interpretation variability |

This hierarchical approach emphasizes that not all potential reference measures offer equivalent scientific validity. Defining reference measures represent the gold standard when available, while principal reference measures provide acceptable alternatives when validated according to established protocols [30]. The framework sequentially guides investigators through compiling preliminary information, then selecting existing references, developing novel comparators, or identifying multiple anchor measures when direct comparators are unavailable.
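The core ranking rule of the hierarchy can be sketched as "prefer the highest-ranked comparator that is actually available." The sketch is a deliberate simplification. The framework in [30] also weighs practical constraints and validation status, which are not modeled here. The enum, function, and example comparator names are hypothetical.

```python
from enum import IntEnum
from typing import Dict, Optional


class ReferenceCategory(IntEnum):
    """Hierarchy positions from Table 1; a lower value ranks higher."""
    DEFINING = 1
    PRINCIPAL = 2
    MANUAL = 3
    REPORTED = 4


def select_reference(available: Dict[str, ReferenceCategory]) -> Optional[str]:
    """Return the highest-ranked available comparator, or None if there is none."""
    if not available:
        # No existing comparator: the framework moves on to developing a novel
        # comparator or combining several anchor measures.
        return None
    return min(available, key=lambda name: available[name])
```

For example, if only visual germination scoring (manual) and a grower survey (reported) are available, the manual measure is selected.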

Method Validation in Regulatory Contexts

Standards organizations such as ISO specify technical requirements for establishing or revising reference methods, particularly in food safety and agricultural microbiology. According to ISO 17468, the validation required when a method is revised depends directly on the nature of the technical changes [31]. Major technical changes, such as modifications in detection technology or substantial procedural alterations, require full revalidation. Minor changes, such as editorial corrections, may not affect validated performance characteristics [31].

The standardization process typically involves six technical stages, with interlaboratory studies representing the final validation step where performance characteristics are formally established. This structured approach ensures that reference methods maintain consistency and reliability across different laboratory environments and agricultural sample types [31].

Experimental Approaches for Method Benchmarking

Performance Assessment Through Experimental Data Manipulation

Robust benchmarking requires exposing both reference and novel methods to controlled challenges that simulate real-world variability. Research on hyperspectral classification of tomato seeds demonstrates three strategic data manipulations for thorough performance assessment [32]:

- Object Assignment Error: Introducing 0-50% misclassifications in training data quantifies method resilience to labeling inaccuracies

- Spectral Repeatability: Adding 0-10% stochastic noise to reflectance values tests stability under measurement variability

- Training Data Set Size: Reducing observations by 0-50% evaluates performance dependency on sample size
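The three manipulations can be sketched as small perturbation functions applied to a training set before refitting a classifier. The exact noise model in [32] is not specified here. This sketch assumes multiplicative uniform noise of up to ±level on each reflectance value, and all function names are hypothetical.

```python
import random


def flip_labels(labels, fraction, classes, rng):
    """Object assignment error: reassign `fraction` of labels to a different class."""
    labels = list(labels)
    n_flip = round(fraction * len(labels))
    for i in rng.sample(range(len(labels)), n_flip):
        labels[i] = rng.choice([c for c in classes if c != labels[i]])
    return labels


def add_relative_noise(spectrum, level, rng):
    """Spectral repeatability: perturb each value by up to +/- `level` (0.10 = 10%)."""
    return [x * (1 + rng.uniform(-level, level)) for x in spectrum]


def subsample(rows, reduction, rng):
    """Training set size: drop `reduction` of the observations (0.2 = 20% fewer)."""
    keep = len(rows) - round(reduction * len(rows))
    return rng.sample(rows, keep)
```

Sweeping `fraction`, `level`, and `reduction` over the ranges listed above and recording the classifier's accuracy at each step gives response curves like those described for the tomato seed data.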

In tomato seed germination studies, these manipulations revealed that classification accuracy decreased linearly with both increasing assignment errors and reduced spectral repeatability [32]. Interestingly, reducing training data by 20% had negligible impact on classification accuracy, suggesting potential efficiency improvements in data collection protocols.

Cross-Validation Strategies for Agricultural Data

The choice of cross-validation strategy strongly affects the reliability of performance estimates in agricultural studies. For example, leave-one-out cross-validation systematically underestimates correlation-based metrics even though it is unbiased for error-based metrics [20]. More importantly, ignoring experimental block effects (e.g., seasonal variation or differences between herds) biases performance measures upward. Block cross-validation is therefore necessary when predictions target new, unseen environments [20].
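Block cross-validation holds out an entire block (for example, a season or a herd) in each fold. Observations from the test environment therefore never appear in training. The pure-Python sketch below generates such splits; the function name and block labels are illustrative. In scikit-learn, `sklearn.model_selection.LeaveOneGroupOut` provides the same splitting strategy.

```python
from collections import defaultdict


def leave_one_block_out(blocks):
    """Yield (held_out_block, train_idx, test_idx), holding out each block once."""
    index_by_block = defaultdict(list)
    for i, b in enumerate(blocks):
        index_by_block[b].append(i)
    for held_out, test_idx in index_by_block.items():
        train_idx = [i for i, b in enumerate(blocks) if b != held_out]
        yield held_out, train_idx, test_idx
```

With `blocks = ["2021", "2021", "2022", "2023", "2022"]`, this yields three folds, one per season. The fold for "2022" tests on indices 2 and 4 and trains on indices 0, 1, and 3.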

Table 2: Method Comparison in Agricultural Analysis Studies

| Study Focus | Methods Compared | Performance Metrics | Key Findings | Agricultural Application |
|---|---|---|---|---|
| Serum Protein Analysis [33] | Capillary electrophoresis (CE; 3 methods) vs. high-resolution agarose gel electrophoresis (HRAGE) | Correlation coefficient, band sharpness, quantitative comparison | All CE methods showed correlation ≥0.92 with HRAGE for monoclonal bands; JG-CE method preferred due to superior band sharpness | Animal health monitoring and disease detection |
| Hemoglobin A1 Measurement [34] | Liquid chromatography (HPLC) vs. agar gel electrophoresis | Coefficient of variation (CV), regression analysis | HPLC showed better precision (CV 2-4.4%) than electrophoresis (CV 4.6-9%); excellent agreement between methods | Livestock health assessment and monitoring |
| Farming Sustainability [35] | Data envelopment analysis benchmarking | Holistic sustainability scores | 50% of flocks achieved maximum scores; breed and human factors identified as performance drivers | Sustainable agricultural system evaluation |
| Seed Germination Prediction [32] | LDA vs. SVM classification | RMSE, response to data manipulations | SVM showed better performance (RMSE 10.44-12.58) than LDA (RMSE 10.56-26.15) for validation samples | Seed quality assessment and prediction |

Common methodological pitfalls include reusing test data during model selection and relying on single metrics for classification tasks with imbalanced class distributions [20]. Proper separation of training, validation, and test sets remains fundamental to avoiding over-optimistic performance estimates in agricultural research.
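The single-metric pitfall is easy to demonstrate. On an imbalanced seed lot, a model that always predicts the majority class scores high accuracy while detecting none of the minority class. Balanced accuracy (the mean of per-class recall) exposes this. The data below are hypothetical and chosen only to illustrate the effect.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall, so each class counts equally regardless of frequency."""
    recalls = []
    for c in set(y_true):
        idx = [i for i, t in enumerate(y_true) if t == c]
        recalls.append(sum(y_pred[i] == c for i in idx) / len(idx))
    return sum(recalls) / len(recalls)


# Hypothetical lot: 90 germinating and 10 non-germinating seeds; model always says "germ"
y_true = ["germ"] * 90 + ["non"] * 10
y_pred = ["germ"] * 100
print(accuracy(y_true, y_pred), balanced_accuracy(y_true, y_pred))  # 0.9 0.5
```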

Implementation in Agricultural Research Contexts

Integrated Assessment Frameworks

Agricultural method validation benefits from integrated frameworks that capture multiple sustainability dimensions simultaneously. Research on free-range laying hen flocks demonstrated how data envelopment analysis combines multiple sustainability objectives into a single efficiency score, revealing that approximately half of the studied flocks achieved maximum scores across animal welfare, productivity, and environmental measures [35]. This One Health approach, linking human, animal, and environmental wellbeing, provides a template for comprehensive agricultural method assessment that goes beyond single-metric validation [35].

Analytical Method Validation Standards

For analytical chemistry applications in agriculture, method validation provides evidence that a procedure, when correctly applied, produces fit-for-purpose results [4]. Key validation parameters include:

- Selectivity: Ability to distinguish target analytes from interferents

- Trueness and Precision: Closeness of the mean result to the reference value, and agreement among repeated measurements

- Linearity and Range: Concentration-response relationship and the working limits over which it holds

- Limit of Detection/Quantification: Lowest concentrations that can be reliably detected or quantified
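Linearity and the detection limits can both be estimated from one calibration series. The sketch below fits an ordinary least-squares line. It then derives LOD = 3.3σ/S and LOQ = 10σ/S, where σ is the residual standard deviation and S is the slope. This is one of the approaches described in the ICH Q2 guideline. Pesticide residue guidance often defines the LOQ differently (as the lowest validated spike level), so treat this as an illustration under that assumption. The function name is hypothetical.

```python
import math


def calibration_summary(conc, response):
    """Least-squares calibration line plus LOD/LOQ from the residual standard deviation."""
    n = len(conc)
    mx = sum(conc) / n
    my = sum(response) / n
    sxx = sum((x - mx) ** 2 for x in conc)
    syy = sum((y - my) ** 2 for y in response)
    sxy = sum((x - mx) * (y - my) for x, y in zip(conc, response))
    slope = sxy / sxx
    intercept = my - slope * mx
    residuals = [y - (slope * x + intercept) for x, y in zip(conc, response)]
    s_res = math.sqrt(sum(r * r for r in residuals) / (n - 2))  # residual SD, n-2 dof
    return {
        "slope": slope,
        "intercept": intercept,
        "r_squared": sxy**2 / (sxx * syy),
        "lod": 3.3 * s_res / slope,  # 3.3 * sigma / S
        "loq": 10 * s_res / slope,   # 10 * sigma / S
    }
```

A high R² alone does not prove linearity. Inspecting the residuals for curvature across the working range is a useful complement.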

In pesticide residue analysis, regulatory guidelines further require evaluation of matrix effects, method robustness, interlaboratory testing, and storage stability [4]. These comprehensive requirements acknowledge the complex sample matrices encountered in agricultural analyses and the need for methods that maintain performance across diverse agricultural products.
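Matrix effects and trueness are often summarized with two simple ratios. The matrix effect compares the slope of a matrix-matched calibration with that of a solvent calibration; negative values indicate signal suppression and positive values enhancement. Recovery compares the amount found in a spiked sample with the amount added. The sketch assumes these common definitions. The ±20% matrix-effect threshold is a widely used convention in residue work and is passed in as a parameter; it is not taken from [4]. All function names are hypothetical.

```python
def matrix_effect_percent(slope_matrix: float, slope_solvent: float) -> float:
    """Matrix effect (%): <0 means suppression, >0 means enhancement."""
    return (slope_matrix / slope_solvent - 1) * 100


def needs_matrix_matched_calibration(me_percent: float, threshold: float = 20.0) -> bool:
    """Flag matrix effects larger in magnitude than `threshold` percent (assumed convention)."""
    return abs(me_percent) > threshold


def recovery_percent(measured: float, spiked: float, blank: float = 0.0) -> float:
    """Recovery (%) of a spiked analyte after subtracting any native (blank) level."""
    return (measured - blank) / spiked * 100
```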

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Agricultural Method Validation

| Reagent/Solution | Function in Validation | Application Examples |
|---|---|---|
| Hyperspectral Imaging Systems | Non-destructive quality trait assessment | Seed germination prediction, composition analysis [32] |
| Reference Standards (Certified) | Establishing accuracy and calibration | Pesticide residue quantification, metabolite detection [4] |
| Quality Control Materials | Monitoring precision and reproducibility | Interlaboratory study validation [31] |
| Electrophoresis Kits (HRAGE, CE) | Biomolecule separation and quantification | Serum protein analysis, genetic marker detection [33] |
| Chromatography Columns and Buffers | Compound separation and identification | Hemoglobin variant analysis, metabolic profiling [34] |
| Sensor Verification Tools | Validating digital measurement systems | Precision agriculture monitoring, animal health sensing [30] |

Method Selection Workflow

Selecting and benchmarking appropriate reference methods requires systematic approaches that acknowledge methodological hierarchies, contextual constraints, and performance requirements specific to agricultural research. The frameworks and experimental protocols presented provide structured pathways for establishing method validity across diverse agricultural applications. As Agriculture 4.0 technologies continue to evolve, maintaining rigorous validation standards ensures that scientific advances translate to reliable practical applications in complex agricultural systems. By adopting comprehensive benchmarking strategies that address both technical performance and real-world applicability, researchers can advance agricultural science with confidence in their methodological foundations.

Leveraging AI and Machine Learning for Data Analysis and Pattern Recognition