Real-Time Bioprocess Monitoring: Foundational Concepts, PAT Tools, and Implementation Strategies for Biopharma

Abstract

This article provides a comprehensive overview of the foundational concepts and practical applications of real-time bioprocess monitoring for researchers, scientists, and drug development professionals. It explores the core principles and economic drivers behind the shift from offline to real-time monitoring and details the specific technologies and methodologies, including spectroscopy and flow cytometry, used for in-line, on-line, and at-line analysis. It further addresses common implementation challenges and optimization strategies for integrated continuous bioprocessing, and concludes with an analysis of validation requirements, regulatory trends, and a comparative evaluation of emerging analytical techniques shaping the future of biomanufacturing.

The Fundamentals of Real-Time Bioprocess Monitoring: From Offline Analysis to PAT

The ability to track critical process parameters (CPPs) and critical quality attributes (CQAs) in real time has become fundamental to advancing bioprocess research and drug development. The move from traditional laboratory-based, offline analysis to integrated, real-time monitoring changes how scientists and researchers approach process understanding and control. This transition is driven by the need for improved product consistency, reduced cycle times, and more effective control of complex biological systems [1]. Within this context, understanding the precise definitions, operational mechanisms, and appropriate applications of in-line, on-line, and at-line monitoring configurations is crucial for designing robust and efficient bioprocesses.

The foundational concepts of these monitoring strategies extend across various industries but hold particular significance in biopharmaceutical manufacturing and advanced therapy development. The selection of an appropriate monitoring strategy directly impacts data frequency, process control capability, and ultimately, the success of research and development activities. This guide provides a comprehensive technical examination of these configurations, framed within the broader thesis that strategic implementation of real-time monitoring is indispensable for modern bioprocess research and the development of next-generation therapeutics [2] [1].

Defining the Monitoring Configurations

The terms in-line, on-line, at-line, and offline describe the physical and functional relationship between the analytical sensor or instrument and the process stream. Each configuration offers distinct advantages and limitations related to data latency, automation, and integration.

In-line Monitoring

In-line measurement involves the direct integration of a sensor or probe into the bioreactor or material stream itself, allowing for analysis without any modification to the process fluid [2] [3]. The sensor is in direct contact with the process medium, providing continuous, real-time data on parameters such as pH, dissolved oxygen, temperature, and chemical composition under actual operating conditions [2]. This method is non-invasive to the process flow and is particularly well-suited for monitoring lean phase flows with low particle concentrations [3].

- Key Feature: The sensor is embedded within the vessel or process line.

- Primary Advantage: Provides uncompromised, real-time data from the exact process environment.

- Typical Applications: Common in chemical synthesis and bioreactor monitoring for tracking properties like composition, temperature, and physical changes [2].

On-line Monitoring

On-line measurement involves automatically diverting a representative sample from the main process stream to an external analyzer through a bypass loop or sample line [2] [3]. This sample is then analyzed, and the data is fed back to the control system in real-time or near-real-time. After analysis, the sample can be either returned to the process or discarded [3]. This approach is ideal for dense phase flows with high particle concentrations and for techniques that require specific preparation or conditioning not possible in the main line [3].

- Key Feature: An external analyzer receives a continuous or semi-continuous sample stream.

- Primary Advantage: Enables real-time analysis with instruments that cannot be placed directly in the process stream, offering greater flexibility for maintenance and calibration without disrupting the process [2].

- Typical Applications: Widely used for bioprocess, water, and solid material analysis where automated, real-time evaluation is needed without interrupting the process [2].

At-line Monitoring

At-line measurement requires an operator, or in some setups an automated sampler, to extract a sample from the process and transfer it to a nearby analyzer located in the production area or an adjacent lab [2] [3]. While faster than offline analysis, there is an inherent delay, ranging from minutes to an hour, between sampling and result generation. This method strikes a balance between the immediacy of in-line/on-line systems and the depth of offline laboratory analysis.

- Key Feature: Rapid analysis performed close to the process, but not directly integrated.

- Primary Advantage: Offers quicker results than offline methods while allowing for more complex sample preparation or analysis that may not be feasible in an on-line setup.

- Typical Applications: Ideal for measurements that demand high precision or specialized sample preparation, but where real-time data is not critical for immediate process control [2].

Offline Monitoring

Offline analysis represents the traditional approach, where samples are manually removed from the process and transported to a distant, centralized laboratory for analysis [2] [3]. This process can take hours or even days to yield results. While it often provides highly precise and comprehensive data using sophisticated laboratory equipment, its lack of real-time capability means it is unsuitable for immediate process control. It remains essential for complex analyses, regulatory assays, and validating other monitoring methods [2].

Comparative Analysis of Monitoring Strategies

Selecting the optimal monitoring configuration requires a multi-faceted analysis of technical requirements, process constraints, and research objectives. The following tables provide a structured comparison to guide this decision-making process.

Table 1: Technical and Operational Characteristics Comparison

| Factor | In-line | On-line | At-line | Offline |
|---|---|---|---|---|
| Sensor Location | Directly in process stream [3] | External analyzer, sample diverted from stream [3] | Near-process analyzer [2] | Remote laboratory [2] |
| Sample Handling | No removal; direct measurement [2] | Automated transfer via sample line [3] | Manual transfer [2] | Manual transport to distant lab [2] |
| Data Frequency | Continuous, real-time [2] | Continuous / real-time [2] | Periodic, dependent on manual sampling [2] | Low, delayed by hours or days [2] |
| Degree of Automation | Fully automated [2] | Fully automated [2] | Manual intervention required [2] | Fully manual process [2] |
| Process Control Capability | Real-time, fully automated control [2] | Real-time adjustments possible [2] | Limited, manual adjustments needed [2] | Reactive, after-the-fact changes [2] |

Table 2: Strategic Evaluation and Application Suitability

| Factor | In-line | On-line | At-line | Offline |
|---|---|---|---|---|
| Reproducibility | High, continuous real-time results [2] | High, automated and frequent [2] | Moderate, manual intervention [2] | Low, manual sampling and delays [2] |
| Flexibility & Maintenance | Low, difficult to replace/maintain (embedded) [2] | High, external instruments allow easier maintenance [2] | Moderate, requires manual handling [2] | Low, manual processes dominate [2] |
| Implementation & Operational Cost | High capital cost, lower operational cost | High capital cost, moderate operational cost | Moderate cost | Low capital cost, high recurring labor cost |
| Safety | High, reduces human exposure [2] | High, automation limits exposure [2] | Moderate, manual handling needed [2] | Low, manual intervention required [2] |
| Ideal Application Context | Critical, fast-changing parameters (e.g., pH, DO) [2] | Automated, real-time analysis where sensor cannot be in-line (e.g., Raman) [4] | Quality checks, method development, backup analysis [2] | Reference methods, complex assays, regulatory testing [2] |

Market data reflect the same shift toward real-time configurations. The global market for advanced real-time monitoring technologies, such as real-time bioprocess Raman analyzers, is projected to grow from USD 22.1 million in 2025 to USD 35.3 million by 2035, reflecting a compound annual growth rate (CAGR) of 4.8% [4]. This growth is driven by the rising demand for Process Analytical Technology (PAT) in biopharmaceutical manufacturing [4]. The instruments segment (which includes in-line probes and on-line analyzers) dominates this market with a 75% share, while the bioprocess analysis application holds a 69% share, highlighting the industrial shift towards integrated, real-time monitoring solutions [4].
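As a quick arithmetic check, the quoted growth rate can be reproduced from the two market figures. The sketch below assumes the standard compound-growth formula over the ten years from 2025 to 2035; the function name `cagr` is illustrative and does not come from the cited report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.05 means 5% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the text: USD 22.1 million (2025) to USD 35.3 million (2035).
raman_cagr = cagr(22.1, 35.3, years=2035 - 2025)
print(f"Implied CAGR: {raman_cagr:.1%}")
```

The result rounds to the 4.8% stated above, so the start value, end value, and growth rate are mutually consistent.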

Experimental Protocols for Monitoring Methodologies

Implementing these monitoring configurations requires rigorous methodological protocols. Below are detailed experimental frameworks for key techniques cited in contemporary bioprocess research.

Protocol for Real-Time Bioprocess Raman Analysis

Raman spectroscopy is a powerful real-time tool for monitoring cell culture processes, providing multivariate data on nutrients, metabolites, and product titer. In this protocol it is deployed in-line through an immersion probe.

1. Objective: To implement real-time Raman spectroscopy for the monitoring and prediction of key process variables (e.g., glucose, lactate, viable cell density) in a mammalian cell bioreactor.

2. Research Reagent Solutions & Essential Materials:

Table 3: Key Materials for Raman-Based Bioprocess Monitoring

| Item | Function/Description |
|---|---|
| Raman Analyzer | The core instrument (e.g., from Kaiser Optical Systems or Thermo Fisher Scientific); includes a laser source, spectrometer, and detector for collecting spectroscopic fingerprints [4]. |
| Raman Probe | An immersion probe, compatible with clean-in-place (CIP) and steam-in-place (SIP) procedures, that is inserted directly into the bioreactor. It delivers laser light to the sample and collects the scattered light [4]. |
| Bioreactor System | A controlled vessel (e.g., bench-top or single-use bioreactor) for cell culture, equipped with standard probes (pH, DO) and ports for probe insertion. |
| Calibration Standards | Solutions with known concentrations of analytes of interest (e.g., glucose, glutamine) for building initial calibration models. |
| Chemometric Software | Advanced software for developing multivariate calibration models (e.g., PLS regression) that correlate Raman spectra with reference data from at-line or offline analyzers [1]. |

3. Methodology:

- Step 1: System Installation & Sterilization: Install the Raman probe into a dedicated port on the bioreactor. Follow the manufacturer's and site-specific CIP and SIP procedures to clean and sterilize the probe in place.

- Step 2: Data Acquisition: Initiate spectral acquisition at the start of the bioreactor run. Spectra are typically collected every 5-15 minutes throughout the entire batch or fed-batch process.

- Step 3: Reference Sampling: Concurrently with spectral acquisition, manually withdraw at-line samples from the bioreactor. Analyze these immediately using a reference method (e.g., bioanalyzer for metabolites, cell counter for VCD).

- Step 4: Model Development & Maintenance: Use the collected spectra and corresponding reference data to build or refine a PLS regression model. The model is validated using an independent set of data not used for calibration.

- Step 5: Real-Time Prediction: Once validated, the model is deployed to predict process variables in real-time from each new spectrum acquired, enabling continuous monitoring without manual sampling.
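Steps 4 and 5 can be illustrated with a minimal sketch of a single-response PLS calibration, written here with a plain NumPy implementation of the NIPALS algorithm. Everything about the data is an assumption made for illustration: the spectra are simulated from two overlapping Gaussian bands standing in for glucose and lactate, and the names `pls1_fit`, `pls1_predict`, and `simulate` are hypothetical. A real workflow would use measured spectra, spectral preprocessing, and validated chemometric software.

```python
import numpy as np


def pls1_fit(X, y, n_components):
    """Fit a single-response PLS model using the NIPALS algorithm."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xr, yr = X - x_mean, y - y_mean           # mean-centred residuals
    W, P, q = [], [], []
    for _ in range(n_components):
        w = Xr.T @ yr
        w = w / np.linalg.norm(w)             # weight vector
        t = Xr @ w                            # scores
        tt = t @ t
        p = Xr.T @ t / tt                     # spectral loadings
        qa = yr @ t / tt                      # response loading
        Xr = Xr - np.outer(t, p)              # deflate spectra
        yr = yr - qa * t                      # deflate response
        W.append(w)
        P.append(p)
        q.append(qa)
    W, P, q = np.array(W).T, np.array(P).T, np.array(q)
    coef = W @ np.linalg.solve(P.T @ W, q)    # regression vector
    return coef, x_mean, y_mean


def pls1_predict(model, X):
    """Predict the response for new spectra from a fitted model."""
    coef, x_mean, y_mean = model
    return (X - x_mean) @ coef + y_mean


# Simulated data (assumption): two overlapping bands plus detector noise.
rng = np.random.default_rng(0)
channels = np.arange(200)
glucose_band = np.exp(-0.5 * ((channels - 60) / 6) ** 2)
lactate_band = np.exp(-0.5 * ((channels - 75) / 8) ** 2)


def simulate(n_samples):
    glucose = rng.uniform(0.5, 10.0, n_samples)   # g/L, reference values
    lactate = rng.uniform(0.0, 5.0, n_samples)    # g/L, interferent
    spectra = np.outer(glucose, glucose_band) + np.outer(lactate, lactate_band)
    spectra += rng.normal(0.0, 0.02, spectra.shape)
    return spectra, glucose


X_cal, y_cal = simulate(40)   # calibration set (Step 4)
X_val, y_val = simulate(20)   # independent validation set (Step 4)
model = pls1_fit(X_cal, y_cal, n_components=2)
rmsep = float(np.sqrt(np.mean((pls1_predict(model, X_val) - y_val) ** 2)))
print(f"RMSEP on validation set: {rmsep:.3f} g/L")
```

Keeping the validation spectra out of the fit mirrors the independent-data requirement in Step 4; once the error is acceptable, `pls1_predict` would be called on each newly acquired spectrum as in Step 5.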

Protocol for In-line vs. At-line Comparison Study

A critical experiment for validating a new in-line sensor is a direct comparison against the established at-line method.

1. Objective: To validate the performance of a new in-line sensor (e.g., a capacitance probe measuring VCD) against the standard at-line method (e.g., automated cell counter).

2. Methodology:

- Step 1: Parallel Data Collection: Over the course of multiple bioreactor runs, collect data from the in-line sensor and the at-line analyzer simultaneously at predefined intervals.

- Step 2: Statistical Analysis: Perform a statistical comparison (e.g., linear regression, Bland-Altman analysis) between the in-line and at-line measurements to determine correlation, bias, and precision.

- Step 3: Dynamic Response Testing: Introduce a process perturbation (e.g., a nutrient feed or temperature shift) to assess the dynamic response time and sensitivity of the in-line sensor compared to the slower, discrete at-line method.
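The statistical comparison in Step 2 can be sketched with a basic Bland-Altman calculation: the mean difference between paired readings estimates bias, and the 95% limits of agreement are taken as bias ± 1.96 standard deviations of the differences. The paired viable cell density values below are hypothetical and exist only to make the example run.

```python
from statistics import mean, stdev


def bland_altman(inline, atline):
    """Return bias and 95% limits of agreement for paired measurements."""
    if len(inline) != len(atline) or len(inline) < 2:
        raise ValueError("need at least two paired measurements")
    diffs = [a - b for a, b in zip(inline, atline)]
    bias = mean(diffs)
    sd = stdev(diffs)                 # sample standard deviation of differences
    return {
        "bias": bias,
        "sd": sd,
        "loa_lower": bias - 1.96 * sd,
        "loa_upper": bias + 1.96 * sd,
    }


# Hypothetical paired VCD readings (1e6 cells/mL) taken at the same time points.
inline_vcd = [1.1, 2.0, 3.9, 6.2, 8.1, 10.3]
atline_vcd = [1.0, 2.1, 4.0, 6.0, 8.0, 10.0]

result = bland_altman(inline_vcd, atline_vcd)
print(f"bias = {result['bias']:.3f}, "
      f"limits of agreement = [{result['loa_lower']:.3f}, {result['loa_upper']:.3f}]")
```

Whether the resulting limits are acceptable depends on the study's predefined criteria; the calculation only summarizes the agreement and does not replace that judgment.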

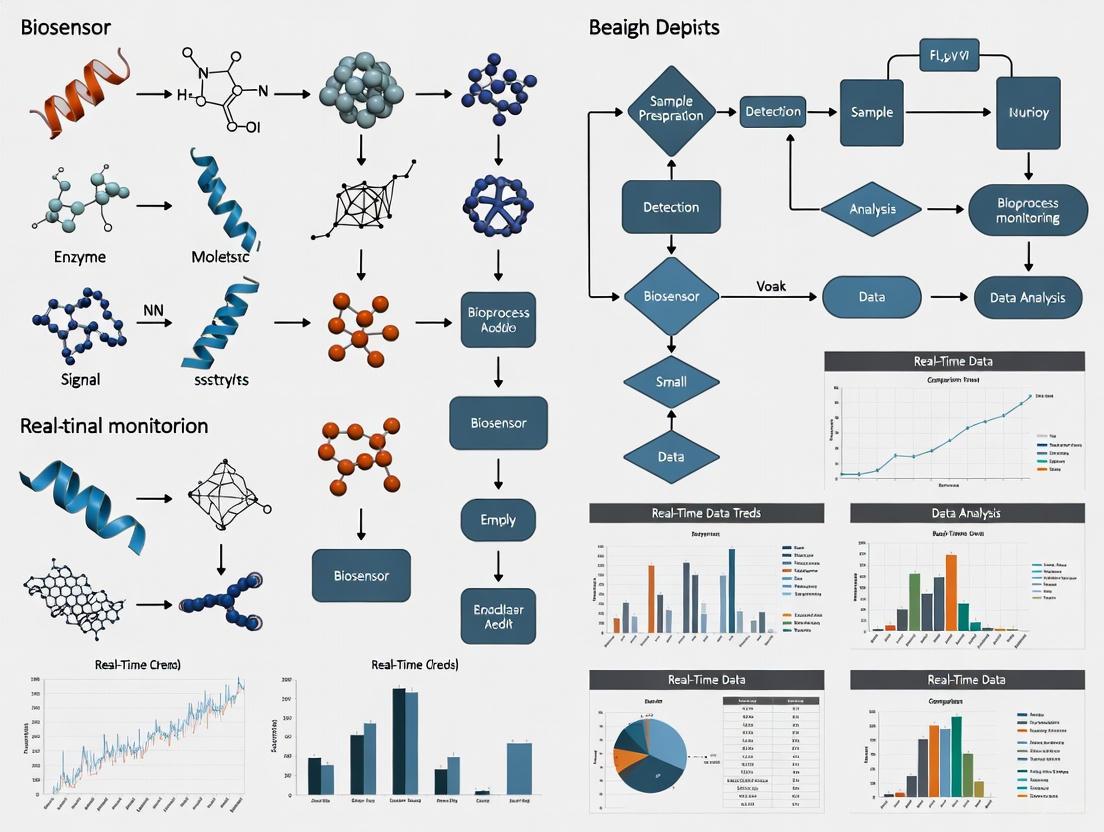

Visualization of Monitoring Configurations

The following diagram illustrates the logical relationship and data flow between the different monitoring configurations within a bioprocess unit, such as a bioreactor.

Diagram 1: Data flow in bioprocess monitoring configurations.

The diagram above visually summarizes the core concepts:

- In-line monitoring establishes a direct, two-way interaction with the bioreactor.

- On-line monitoring is connected via an automated sample stream from a bypass loop.

- At-line and Offline monitoring both rely on manual sampling from a common sample port, with the key difference being the location and sophistication of the subsequent analysis, leading to varying degrees of data delay.

The strategic implementation of in-line, on-line, and at-line monitoring configurations forms the bedrock of advanced, data-driven bioprocess research. As the industry moves inexorably towards continuous processing and heightened regulatory expectations for quality-by-design, the role of real-time analytics becomes increasingly critical [1]. Each configuration—from the direct immersion of in-line sensors to the flexible externality of on-line analyzers and the rapid feedback of at-line systems—offers a unique set of capabilities that can be leveraged to de-risk process development, accelerate timelines, and enhance product quality. The foundational knowledge of these systems empowers researchers and drug development professionals to design more intelligent, responsive, and efficient bioprocesses, ultimately contributing to the accelerated delivery of novel therapeutics to patients.

The Process Analytical Technology (PAT) initiative, as defined by the U.S. Food and Drug Administration (FDA), is a regulatory framework designed to encourage innovation in pharmaceutical development, manufacturing, and quality assurance [5]. PAT enables manufacturers to measure and control a process based on the Critical Quality Attributes (CQAs) of the product in real time, thereby optimizing quality while reducing the cost and time of product development and manufacturing [6]. This framework is intrinsically linked to Quality by Design (QbD), a systematic approach to drug development that begins with predefined objectives and emphasizes product and process understanding and process control, all based on sound science and quality risk management [7]. The core philosophy of both PAT and QbD is that "quality should be built into a product" with a thorough understanding of both the product and the process, rather than relying solely on end-product testing [6] [5].

In the context of modern biopharmaceuticals, which include complex molecules like monoclonal antibodies and gene therapies, the limitations of traditional Quality by Testing (QbT) have become pronounced [8]. QbT involves batch testing where quality is only confirmed after manufacture, leaving little scope for corrective action and potentially leading to rejected batches [8]. In contrast, the PAT-enabled QbD approach provides a framework for real-time monitoring and control, facilitating Real-Time Release (RTR) of products and representing a fundamental shift towards more intelligent, efficient, and robust biomanufacturing paradigms [8]. This is particularly crucial given the biopharmaceutical industry's shift towards continuous processing and the production of increasingly complex therapeutics [9] [8].

Core Principles and Regulatory Framework

The Ten Guiding Principles of QbD

The QbD approach is built upon a foundation of ten guiding principles that ensure a comprehensive and science-based framework for drug development [7]:

- A clear line of sight from clinical to product release and stability: This ensures that all product requirements and performance characteristics are clearly defined and traceable from clinical needs through to final product release.

- Quality Risk Management (QRM) in every aspect of development: QRM, as detailed in ICH Q9, is designed to ensure that drug CQAs are defined and maintained throughout the product lifecycle.

- Enhanced product understanding: Manufacturers must understand all critical and key factors influencing the product and all primary sources of variation.

- Assay understanding: Analytical methods for measuring CQAs must be fit for use, with understood key factors and process steps that influence method variation.

- Process understanding and characterization: Factors influencing the production process and associated variations must be thoroughly understood.

- Generation of transfer functions: The understanding of how process factors (X) influence responses (Y) should be expressed in the form of equations, derived from scientific knowledge or structured experimentation.

- Improved product specification limits and justification: Specification limits must be part of an overall control strategy and linked to CQAs, based on scientific knowledge and transfer functions.

- Robust design space and edge of failure: The multidimensional combination of input variables and process parameters that provide assurance of quality must be defined, including understanding of the edges of failure.

- Use of modern control strategies and PAT: Controls should include in-process, post-process, and closed-loop process measurement and adjustment during processing.

- Continuous improvement and validation throughout a product’s lifecycle: Processes should be continuously monitored and validated using measures of process capability, variation, and controllability.
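The last principle refers to measures of process capability. One common way to quantify this is with the Cp and Cpk indices, sketched below under the usual assumption of approximately normal, in-control data. The pH readings and specification limits are hypothetical values chosen for illustration.

```python
from statistics import mean, stdev


def process_capability(values, lsl, usl):
    """Return (Cp, Cpk) for measurements against lower/upper specification limits."""
    mu, sigma = mean(values), stdev(values)
    cp = (usl - lsl) / (6 * sigma)                # potential capability (spread only)
    cpk = min(usl - mu, mu - lsl) / (3 * sigma)   # penalizes an off-centre mean
    return cp, cpk


# Hypothetical in-line pH readings and specification limits.
ph_readings = [7.02, 6.98, 7.01, 7.00, 6.99, 7.03, 6.97, 7.00]
cp, cpk = process_capability(ph_readings, lsl=6.90, usl=7.10)
print(f"Cp = {cp:.2f}, Cpk = {cpk:.2f}")
```

Cpk can never exceed Cp; the two are equal only when the process mean sits exactly midway between the limits, which is the case for this illustrative data set.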

The PAT Framework and Its Components

PAT provides the technological and methodological backbone to implement QbD principles in a manufacturing environment. The key goal of PAT is the integration of analytical technologies in-line, on-line, or at-line with manufacturing equipment for process monitoring and control [8]. The PAT framework as outlined by the FDA involves [5] [8]:

- Timely measurements during processing of CQAs and performance attributes of raw and in-process materials.

- A system for designing, analyzing, and controlling manufacturing through these measurements.

- The ultimate goal of ensuring final product quality.

The implementation of PAT is a key driver for QbD and is essential for achieving real-time release of products [8]. It relies on a holistic framework where each element—sensor technology, data analysis techniques, control strategies, and process optimization routines—must be carefully selected and integrated [10].

Table 1: PAT Measurement Approaches and Their Characteristics

| PAT Approach | Description | Common Technologies | Advantages |
|---|---|---|---|
| In-line/In-situ | Sensor placed directly in the process stream | pH, DO, Raman spectroscopy [5] [11] | Real-time data; no sample removal; minimal risk of contamination |
| On-line | Automated sample diversion from process stream to analyzer | Process mass spectrometry [11] | Near real-time data; continuous monitoring |
| At-line | Manual sample removal to nearby analyzer | HPLC, wet chemistry [8] | Rapid analysis; off-the-shelf equipment |
| Off-line | Sample removal to remote laboratory for analysis | Traditional lab assays | High accuracy; extensive analysis capabilities |

Figure 1: The Logical Workflow of QbD and PAT Implementation

Implementation in Bioprocessing: Methodologies and Protocols

Defining the Foundation: QTPP, CQAs, and CPPs

The implementation of QbD begins with defining the Quality Target Product Profile (QTPP), which is "a prospective summary of the quality characteristics of a drug product that ideally will be achieved to ensure desired quality, taking into account safety and efficacy of the drug product" [8]. The QTPP forms the basis for listing all potential Critical Quality Attributes (CQAs), which are physical, chemical, or biological properties that must remain within a specified range or limit to ensure the QTPP is met [8]. Subsequently, Critical Process Parameters (CPPs) are identified—these are process parameters whose variability impacts the CQAs and therefore must be monitored or controlled to ensure the product meets the desired quality [8].

In practical terms, for an upstream bioprocess such as a mammalian cell culture, the QTPP would define the required potency, purity, and safety of the therapeutic protein [12]. The CQAs might include critical glycosylation patterns, protein titer, and aggregate formation [5] [12]. The CPPs would then encompass parameters such as dissolved oxygen, pH, temperature, and nutrient levels that directly influence those CQAs [12].

Design of Experiments (DoE) and Process Characterization

A fundamental methodology in QbD implementation is Design of Experiments (DoE), which systematically tests the influence of different parameters on bioprocess outcomes [12]. DoE is used during process characterization studies to relate the CQAs to process variables and understand the effects of different factors and their interactions [8]. Based on this understanding, multidimensional models are built to link CQAs to various factors, enabling the definition of acceptable ranges for process parameters [8].

A typical DoE protocol for bioprocess characterization involves:

- Screening Experiments: Initial low-resolution screening to identify the most influential factors from a large set of potential parameters.

- Response Surface Methodology: Exploration of the relationship between the influential factors (identified in screening) and the CQAs to determine optimal parameter ranges.

- Robustness Testing: Verification that the process remains within control limits when small, intentional variations are introduced to the CPPs.
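To make the screening idea concrete, the sketch below builds a two-level full factorial design in coded units (-1/+1) and estimates main effects as the difference between the mean response at the high and low level of each factor. The factor ranges and the titer response are invented for illustration, and a full factorial is used for simplicity where a real screening study with many factors would more likely use a fractional design.

```python
from itertools import product


def full_factorial(factors):
    """All coded (-1/+1) runs of a two-level full factorial design."""
    names = list(factors)
    return [dict(zip(names, levels))
            for levels in product((-1, 1), repeat=len(names))]


def decode(run, factors):
    """Translate coded levels back into actual set points."""
    return {name: factors[name][0 if level == -1 else 1]
            for name, level in run.items()}


def main_effects(runs, responses):
    """Main effect = mean response at +1 minus mean response at -1."""
    effects = {}
    for name in runs[0]:
        high = [r for run, r in zip(runs, responses) if run[name] == 1]
        low = [r for run, r in zip(runs, responses) if run[name] == -1]
        effects[name] = sum(high) / len(high) - sum(low) / len(low)
    return effects


# Hypothetical factor ranges (low, high) for a cell culture process.
factors = {"pH": (6.8, 7.2), "temperature_C": (35.0, 37.0), "DO_percent": (30.0, 50.0)}
runs = full_factorial(factors)


def simulated_titer(run):
    """Stand-in for measured titer (g/L); a real study would use experimental data."""
    return (3.0 + 0.4 * run["pH"] - 0.1 * run["temperature_C"]
            + 0.05 * run["pH"] * run["DO_percent"])


responses = [simulated_titer(run) for run in runs]
effects = main_effects(runs, responses)
for name, effect in effects.items():
    print(f"{name}: main effect = {effect:+.2f} g/L")
```

In this simulated response, dissolved oxygen has no main effect but does interact with pH, which is the kind of structure that the follow-up response surface experiments are meant to resolve.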

This approach allows for the creation of a design space, which ICH Q8 defines as "the multidimensional combination and interaction of input variables (e.g., material attributes) and process parameters that have been demonstrated to provide assurance of quality" [7]. Operating within the design space is not considered a change, thus providing flexibility in process adjustments [7].

PAT Tool Integration and Real-Time Monitoring

With the design space established, PAT tools are integrated for real-time monitoring and control. The selection of appropriate PAT tools depends on the specific process and the attributes being monitored. Common PAT tools in bioprocessing include:

- Spectroscopic Techniques: Raman analyzers are increasingly critical for real-time process control, enabling enhanced process understanding and improved product consistency [4] [13]. They are used to monitor key parameters such as glucose, lactate, and amino acids in fermentation and cell culture processes [13]. Near-Infrared (NIR) and Mid-Infrared (MIR) spectroscopy are also employed for similar applications [8].

- Process Mass Spectrometry: Used for off-gas analysis to monitor oxygen and carbon dioxide levels in bioreactors, providing invaluable information on the physiological state of the culture [11].

- Biosensors: Offer high specificity for monitoring specific CQAs, such as metabolite concentrations [8].
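As an illustration of how off-gas composition can be turned into physiological information, the sketch below derives the oxygen uptake rate (OUR), carbon dioxide evolution rate (CER), and their ratio (the respiratory quotient) from inlet and outlet gas mole fractions. It assumes dry gas, steady state, and an inert-gas balance to estimate the outlet flow; the function name and all numerical inputs are hypothetical and are not taken from the cited sources.

```python
def off_gas_rates(f_in, volume, y_o2_in, y_co2_in, y_o2_out, y_co2_out):
    """Return (OUR, CER, RQ) from gas mole fractions.

    f_in   : inlet molar gas flow (mol/h)
    volume : culture volume (L)
    y_*    : mole fractions of O2 and CO2 in inlet and outlet gas
    """
    inert_in = 1.0 - y_o2_in - y_co2_in
    inert_out = 1.0 - y_o2_out - y_co2_out
    f_out = f_in * inert_in / inert_out            # inert gas is conserved
    our = (f_in * y_o2_in - f_out * y_o2_out) / volume     # mol O2 / (L h)
    cer = (f_out * y_co2_out - f_in * y_co2_in) / volume   # mol CO2 / (L h)
    return our, cer, cer / our


# Hypothetical readings: air in, slightly O2-depleted and CO2-enriched gas out.
our, cer, rq = off_gas_rates(
    f_in=1.0, volume=1.0,
    y_o2_in=0.2095, y_co2_in=0.0004,
    y_o2_out=0.19, y_co2_out=0.02,
)
print(f"OUR = {our:.4f} mol/(L h), CER = {cer:.4f} mol/(L h), RQ = {rq:.2f}")
```

A shift in the respiratory quotient over a run is one example of the physiological signal that off-gas analysis can expose, although interpreting it depends on the organism and process.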

Table 2: Key PAT Technologies for Bioprocess Monitoring

| Technology | Measurement Principle | Typical Applications in Bioprocessing | Implementation Mode |
|---|---|---|---|
| Raman Analyzer | Inelastic light scattering for molecular fingerprinting | Glucose, lactate, amino acids, protein concentration [13] | In-line |
| Process Mass Spectrometer | Magnetic sector technology for gas analysis | O₂ and CO₂ in bioreactor off-gas [11] | On-line |
| NIR/MIR Spectroscopy | Molecular overtone and combination vibrations | Moisture content, protein structure, concentration [8] | At-line/In-line |
| Biosensors | Biological recognition element with transducer | Specific metabolites (e.g., glucose, lactate) [8] | In-line |

A specific protocol for implementing Raman spectroscopy for glucose monitoring in a bioreactor would involve:

- System Configuration: Installation of a Raman analyzer (e.g., Kaiser Optical Systems, Thermo Fisher Scientific) with an immersion probe designed for SIP/CIP compatibility [4] [5].

- Calibration Model Development: Collection of Raman spectra across a wide range of glucose concentrations during process development, coupled with off-line reference measurements (e.g., HPLC) to build a multivariate calibration model using techniques like Partial Least Squares (PLS) regression.

- Model Validation: Validation of the prediction model against a separate set of process data not used in calibration to ensure accuracy and robustness.

- Integration with Control System: Feeding the real-time glucose predictions from the Raman model into the bioreactor control system to automatically adjust nutrient feed pumps, maintaining optimal glucose levels throughout the cultivation.
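The final integration step can be sketched as a simple feedback loop. The example below is a minimal proportional-integral controller that converts each Raman-predicted glucose value into a feed pump rate, clamped between zero and a maximum. The class name, gains, units, and set point are all hypothetical; an actual implementation would run inside the bioreactor control system with its own tuning, interlocks, and validation.

```python
from dataclasses import dataclass


@dataclass
class GlucoseFeedController:
    """Minimal PI controller sketch; all parameters are illustrative."""
    setpoint_g_per_l: float
    kp: float                   # mL/h of feed per g/L of error
    ki: float                   # mL/h of feed per (g/L x h) of accumulated error
    max_rate_ml_per_h: float
    _integral: float = 0.0

    def update(self, predicted_glucose_g_per_l: float, dt_h: float) -> float:
        """Return the feed rate (mL/h) for the latest model prediction."""
        error = self.setpoint_g_per_l - predicted_glucose_g_per_l
        candidate_integral = self._integral + error * dt_h
        raw_rate = self.kp * error + self.ki * candidate_integral
        rate = min(max(raw_rate, 0.0), self.max_rate_ml_per_h)
        # Anti-windup: only accumulate the integral while the pump is not saturated.
        if rate == raw_rate:
            self._integral = candidate_integral
        return rate


controller = GlucoseFeedController(
    setpoint_g_per_l=4.0, kp=10.0, ki=2.0, max_rate_ml_per_h=50.0
)
# Hypothetical sequence of Raman predictions, one every 15 minutes (0.25 h).
for glucose in (3.0, 3.5, 4.5):
    rate = controller.update(glucose, dt_h=0.25)
    print(f"predicted glucose {glucose:.1f} g/L -> feed rate {rate:.2f} mL/h")
```

Clamping the output at zero reflects that a feed pump can add glucose but cannot remove it, so the controller simply stops feeding when the prediction is above the set point.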

Figure 2: PAT Implementation Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of PAT and QbD requires a suite of specialized tools and reagents. The selection of these tools is critical for ensuring robust process monitoring and control.

Table 3: Essential Research Reagent Solutions for PAT and QbD

| Tool Category | Specific Examples | Function in PAT/QbD | Key Characteristics |
|---|---|---|---|
| PAT Sensors | Raman spectrometer probes, In-line pH and DO sensors [5] [11] | Real-time monitoring of CPPs and CQAs | CIP/SIP compatibility, calibration stability, robust signal |
| Cell Culture Media | Chemically defined media, Feed concentrates [12] | Provides nutrients for cell growth and product formation; DoE for optimization | Lot-to-lot consistency, free of animal-derived components |
| Calibration Standards | Gas mixtures for MS, Buffer solutions for pH [11] | Ensures accuracy and precision of PAT measurements | Traceable certification, stability, appropriate concentration ranges |
| Data Analysis Software | Multivariate Analysis (MVA) software, Chemometric tools [6] [8] | Converts raw sensor data into actionable process information | 21 CFR Part 11 compliance, compatibility with data formats |
| Chromatography Resins | Protein A affinity media, Ion exchangers [8] | Purification of target biologic; critical for DSP CQAs | High binding capacity, consistent performance, reuse stability |

Current Landscape and Future Perspectives

Market Trends and Adoption

The real-time bioprocess Raman analyzer market, a key segment of the PAT landscape, is projected to grow from USD 22.1 million in 2025 to USD 35.3 million by 2035, reflecting a compound annual growth rate (CAGR) of 4.8% [4]. This growth is driven by the increasing demand for process analytical technologies in biopharmaceutical manufacturing, advancements in Raman spectroscopy technology, and the growing need for real-time monitoring capabilities in bioprocess optimization [4]. Regionally, China leads in growth with a projected CAGR of 6.0%, followed by India at 5.8%, reflecting the rapid expansion of biopharmaceutical manufacturing in these regions [4].

The adoption of PAT is further accelerated by the biopharmaceutical industry's shift toward continuous processing and process intensification [9]. Single-use bioreactors, now used for more than 85% of pre-commercial pharmaceutical production, have lower maximum operating volumes, making process intensification and continuous manufacturing necessary to increase output while reducing costs [9]. PAT sensors and advanced data analytics are essential elements for the success of continuous process manufacturing [9].

Technological Advancements and Future Directions

The future of PAT is closely tied to the digital transformation of biomanufacturing, often referred to as Biopharma 4.0 [9]. Key technological advancements shaping this future include:

- Integration of AI and Machine Learning: The use of artificial intelligence and machine learning in Raman data interpretation enhances accuracy and predictive capabilities [13]. These technologies enable more sophisticated modeling of complex process relationships and predictive analytics for preventing process deviations.

- Digital Twins: The creation of digital replicas of bioprocesses enables virtual testing of process parameters, prediction of outcomes, and optimization without disrupting actual manufacturing [8].

- Advanced Data Analytics: Multivariate Data Analysis (MVDA) is becoming integral to all PAT technologies, essential for rapid scale-up and process understanding [9]. Companies are leveraging vast quantities of bioprocessing data to predict and prevent future process deviations [9].

- Miniaturization and Portability: Development of compact, portable analyzers (e.g., the MarqMetrix All-In-One Process Analyzer) increases flexibility and allows for deployment in various process scales [11].

These advancements are paving the way for fully automated, closed-loop control systems that can self-optimize in real-time, ultimately leading to more robust processes, higher product quality, and reduced manufacturing costs for biopharmaceuticals.

Real-time bioprocess monitoring has emerged as a foundational pillar of modern biopharmaceutical manufacturing, representing a paradigm shift from traditional offline analytical methods to dynamic, data-driven process control. This transformation is propelled by three interconnected drivers: robust regulatory support for advanced process analytical technologies, the imperative for enhanced product quality assurance, and the relentless pursuit of operational cost efficiency. Within the context of industrial biomanufacturing, these drivers collectively foster an environment where real-time monitoring is no longer optional but essential for producing complex biologics, vaccines, and advanced therapies consistently and sustainably [1]. This technical guide examines the core principles, experimental evidence, and implementation frameworks that underpin these key drivers, providing researchers and drug development professionals with a comprehensive resource for advancing bioprocess research and development.

The transition to real-time monitoring is supported by technological advancements in spectroscopic sensors, artificial intelligence (AI), and machine learning (ML) algorithms that enable unprecedented visibility into process parameters. The global bioprocess validation market, projected to grow from USD 537.30 million in 2025 to approximately USD 1,179.55 million by 2034 at a CAGR of 9.13%, reflects the critical importance of these technologies in modern biomanufacturing [14]. This growth is further evidenced by the expanding market for specialized monitoring tools like real-time bioprocess Raman analyzers, which are expected to reach USD 35.3 million by 2035 [4]. This guide delves into the technical specifics of how regulatory frameworks, quality-by-design (QbD) principles, and efficiency optimization strategies are fundamentally reshaping bioprocess monitoring through concrete experimental data, validated protocols, and scalable implementation models.

Regulatory Support for Real-Time Monitoring

Regulatory support constitutes the primary enabler for widespread adoption of real-time bioprocess monitoring technologies. Agencies including the U.S. Food and Drug Administration (FDA) and European Medicines Agency (EMA) have established clear frameworks encouraging the implementation of Process Analytical Technology (PAT) and Quality by Design (QbD) principles [15]. These initiatives are not merely guidelines but represent a fundamental shift in regulatory philosophy toward lifecycle management and real-time quality monitoring, emphasizing the importance of building quality into processes rather than testing it in final products [1].

Evolving Regulatory Frameworks

The regulatory landscape in 2025 is characterized by collaborative, data-driven approaches that harmonize requirements across international jurisdictions. Key regulatory highlights include:

- ICH Q13 Adoption: Global implementation of ICH Q13 guidelines for continuous manufacturing provides a standardized pathway for regulatory approval of advanced manufacturing processes [1].

- Annex 1 (EU GMP) Implementation: Stricter contamination control strategies driven by Annex 1 requirements are pushing manufacturers toward closed-system processing with integrated real-time monitoring [1].

- Computer Software Assurance (CSA) Guidance: FDA's CSA guidance facilitates faster validation of digital tools and AI-driven monitoring systems, reducing implementation barriers for advanced analytics [1].

These frameworks collectively support the transition from discrete batch testing to continuous verification, wherein real-time monitoring data serves as evidence of consistent product quality throughout the manufacturing process. Regulatory agencies now recognize that real-time monitoring provides superior process understanding compared to traditional offline methods, which inherently introduce delays and potential sampling errors [16]. This evolution aligns with the FDA's 2004 PAT guidance but expands its application to increasingly complex modalities like cell and gene therapies, where conventional end-product testing is insufficient to ensure safety and efficacy [15].

PAT Implementation and Validation

Successful PAT implementation requires rigorous validation to demonstrate analytical method accuracy, precision, and robustness. The following table summarizes key validation parameters for spectroscopic PAT methods commonly used in real-time monitoring:

Table 1: Validation Requirements for Spectroscopic PAT Methods in Bioprocess Monitoring

| Validation Parameter | Requirements for PAT Applications | Reference Methodology |
|---|---|---|
| Specificity | Ability to detect and quantify specific analytes in complex broth | Comparison with reference analytical methods (HPLC, enzymatic assays) [16] |
| Linearity | Demonstrated across the operational range of the process | Serial dilutions of standard solutions across expected concentration range [15] |
| Accuracy | Mean recovery of 90-110% for key process analytes | Comparison with offline reference measurements [16] |
| Precision | RSD ≤ 5% for repeatability; RSD ≤ 10% for intermediate precision | Multiple measurements of quality control samples [15] |
| Range | Span covering normal operating ranges and expected deviations | Validation across minimum, target, and maximum expected values [16] |
| Robustness | Insensitive to minor variations in process parameters | Deliberate variation of factors like temperature, flow rate, and pressure [17] |

The validation approach must demonstrate that the monitoring system maintains performance throughout the intended process lifecycle. For AI and ML models used in spectral analysis, this includes validation of training datasets, algorithm selection, and ongoing performance monitoring [18]. Regulatory submissions now increasingly include digital validation packages that comprehensively document the development, training, and performance of AI-driven monitoring systems [14] [1].
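The accuracy and repeatability criteria in Table 1 reduce to two simple statistics: mean recovery against the offline reference and relative standard deviation (RSD) of replicates. The following is a minimal sketch of such a check; the function names and the glucose values are hypothetical and serve only to illustrate the arithmetic.

```python
from statistics import mean, stdev


def mean_recovery_pct(predicted, reference):
    """Mean recovery (%) of PAT predictions against offline reference values."""
    return 100.0 * mean(p / r for p, r in zip(predicted, reference))


def rsd_pct(replicates):
    """Relative standard deviation (%) of replicate measurements."""
    return 100.0 * stdev(replicates) / mean(replicates)


def meets_table1_limits(predicted, reference, replicates,
                        recovery_limits=(90.0, 110.0), max_rsd=5.0):
    """Check accuracy (90-110% recovery) and repeatability (RSD <= 5%)."""
    recovery = mean_recovery_pct(predicted, reference)
    rsd = rsd_pct(replicates)
    low, high = recovery_limits
    return (low <= recovery <= high and rsd <= max_rsd), recovery, rsd


# Hypothetical glucose data in g/L (not taken from any cited study).
predicted = [4.9, 5.1, 10.2]        # spectroscopic model output
reference = [5.0, 5.0, 10.0]        # offline reference method
replicates = [5.0, 5.1, 4.9, 5.0]   # repeated reads of one QC sample

passed, recovery, rsd = meets_table1_limits(predicted, reference, replicates)
```

For intermediate precision, the same `rsd_pct` calculation would be applied to measurements pooled across days, operators, or instruments, with the 10% limit from Table 1.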

Product Quality Enhancement Through Advanced Monitoring

Product quality represents the central imperative driving real-time monitoring adoption, particularly for complex biologics where consistent critical quality attributes (CQAs) determine therapeutic efficacy and safety. Advanced monitoring technologies enable unprecedented control over bioprocess parameters, directly impacting product titer, purity, and homogeneity.

Spectroscopic Monitoring Technologies

Multiple spectroscopic techniques have emerged as cornerstone technologies for real-time quality monitoring, each offering distinct advantages for specific applications:

Table 2: Comparative Analysis of Spectroscopic Techniques for Real-Time Bioprocess Monitoring

| Technique | Analytical Principle | Key Applications in Bioprocessing | Limitations |
|---|---|---|---|
| Raman Spectroscopy | Inelastic scattering of monochromatic light | Monitoring of substrate concentrations, metabolite levels, and product titer in upstream processes [4] [16] | Fluorescence interference; weak signal for low-concentration analytes [16] [15] |
| Near-Infrared (NIR) Spectroscopy | Molecular overtone and combination vibrations | Real-time measurement of glucose, ammonium ions, and biomass in fermentation processes [16] [17] | Overlapping absorption bands in complex mixtures; lower sensitivity [16] |
| Fluorescence Spectroscopy | Emission of light from electron energy transitions | Monitoring of intrinsic fluorophores (NADH, tryptophan) for cell metabolism and protein folding assessment [15] | Limited to fluorescent molecules; background interference [15] |

The integration of multiple spectroscopic techniques, known as combinatorial spectroscopy, has demonstrated superior performance compared to single-technique approaches. Recent research shows that combining NIR and Raman spectroscopy with AI-driven data fusion improves predictive model performance by 9.2–100.4% in terms of the coefficient of determination (R²) for key parameters including glucose, ammonium ions, biomass, and gentamicin C1a titer [16]. This multi-source spectral integration approach effectively compensates for the limitations of individual techniques, providing comprehensive monitoring capability across the entire bioprocess spectrum.
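To make the R² comparison concrete, the sketch below computes the coefficient of determination for two sets of predictions and the percentage gain between them. It assumes the reported improvement is expressed relative to the single-technique R², which the text does not state explicitly; all numbers are hypothetical.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def relative_improvement_pct(r2_single, r2_fused):
    """Percentage gain of the fused model's R² over a single-technique model."""
    return 100.0 * (r2_fused - r2_single) / r2_single


# Hypothetical offline glucose values and model predictions (g/L).
reference = [2.0, 4.0, 6.0, 8.0]
single_sensor = [3.0, 3.0, 7.0, 7.0]   # e.g. one spectrometer alone
fused = [2.0, 4.5, 5.5, 8.0]           # e.g. combined spectral model

r2_single = r_squared(reference, single_sensor)
r2_fused = r_squared(reference, fused)
gain = relative_improvement_pct(r2_single, r2_fused)
```

Under the relative-gain assumption, a figure above 100% is only possible when the single-technique R² is below 0.5, because R² cannot exceed 1.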

Experimental Protocol: AI-Driven Multi-Spectral Monitoring

A recent landmark study exemplifies the application of advanced monitoring for quality enhancement in antibiotic production [16]. The methodology provides a reproducible template for implementing AI-enhanced monitoring systems:

1. Experimental Setup and Strain Preparation

- The study utilized Micromonospora echinospora 49-92S KL01 strain, metabolically engineered for gentamicin C1a production.

- Seed culture was prepared in 250 mL flasks containing 60 mL of seed medium (cornmeal 20 g/L, soluble starch 15 g/L, defatted soybean meal 15 g/L, calcium carbonate 4 g/L, peptone 3 g/L, cobalt chloride 0.005 g/L, pH 7.2-7.4).

- Fed-batch fermentation was conducted in a 5 L bioreactor with an initial working volume of 2.5 L.

2. Spectral Data Acquisition and Integration

- NIR and Raman spectrometers were interfaced with the bioreactor via flow cells for continuous sampling.

- NIR spectra were collected in the range of 800-2500 nm with 2 nm resolution; Raman spectra were acquired with 785 nm excitation laser and spectral resolution of 2 cm⁻¹.

- Orthogonal feature selection algorithms were applied to reduce redundant spectral features and enhance model performance.

3. Machine Learning Model Development and Training

- Thirteen different ML algorithms were evaluated, including ridge regression, gradient boosting, and multilayer perceptron.

- Training datasets comprised 6,960 annotated spectra generated using an automated pipetting system to ensure broad concentration representation [18].

- Model performance was validated against offline reference methods (HPLC for gentamicin C1a, enzymatic assay for glucose).

4. Integration with Automated Control System

- Predictions from the combinatorial spectral model were fed to an automated control system that dynamically adjusted glucose feeding rates.

- The system maintained glucose concentrations at 5 g/L, with both the deviation from setpoint and the coefficient of variation below 2%.

5. Performance Metrics and Quality Outcomes

- The AI-driven system increased gentamicin C1a concentration to 346.5 mg/L, representing a 33.0% improvement over traditional intermittent feeding.

- Predictive models achieved R² > 0.99 in external validation for all key parameters.

- The system enabled real-time predictions within 1 minute, compared to >2 hours with conventional offline methods [16].

This experimental protocol demonstrates the tangible quality benefits achievable through integrated monitoring and control systems, highlighting the critical relationship between real-time data acquisition and enhanced product quality.
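The feedback step of the protocol, in which a model prediction drives the glucose feed, can be sketched as a simple proportional-integral (PI) loop. This is not the control law used in the cited study, which is not described here; the uptake rate, feed concentration, gains, and constant-volume simplification are all hypothetical choices made for illustration.

```python
def simulate_glucose_control(
    setpoint=5.0,       # g/L, target glucose concentration from the text
    consumption=1.0,    # g/L/h, assumed constant uptake (hypothetical)
    feed_conc=500.0,    # g/L glucose in the feed solution (hypothetical)
    volume=2.5,         # L, treated as constant for simplicity
    kp=5.0,             # 1/h, proportional gain (hypothetical)
    ki=5.0,             # 1/h^2, integral gain (hypothetical)
    dt=1.0 / 60.0,      # h, one model prediction per minute
    hours=24.0,
):
    """Simulate PI control of glucose; return concentrations and feed rates."""
    glucose = setpoint
    integral = 0.0
    trajectory, feed_rates = [], []
    for _ in range(int(round(hours / dt))):
        predicted = glucose                 # stand-in for the spectral prediction
        error = setpoint - predicted
        integral += error * dt
        # Required glucose addition rate (g/L/h); a pump cannot run backwards.
        addition = max(0.0, kp * error + ki * integral)
        feed_rate = addition * volume / feed_conc   # pump rate in L/h
        glucose += (addition - consumption) * dt    # simple mass balance
        trajectory.append(glucose)
        feed_rates.append(feed_rate)
    return trajectory, feed_rates


trajectory, feed_rates = simulate_glucose_control()
```

The integral term is what removes the steady-state offset that a purely proportional controller would leave under continuous consumption. In a real system, `predicted` would come from the spectral model rather than from the simulated state.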

Figure 1: Integrated workflow for real-time multi-spectral bioprocess monitoring showing the pathway from data acquisition through AI analysis to precision control and quality outcomes.

Cost Efficiency Drivers and Implementation

Cost efficiency represents the third pivotal driver for real-time monitoring adoption, with economic considerations spanning capital investment, operational expenses, and overall productivity enhancements. The business case for implementing advanced monitoring technologies increasingly demonstrates compelling return on investment through multiple mechanisms.

Economic Analysis of Monitoring Technologies

The financial implications of real-time monitoring implementation must be evaluated through both direct and indirect cost benefits:

Table 3: Cost-Benefit Analysis of Real-Time Bioprocess Monitoring Implementation

| Cost Component | Traditional Approach | Real-Time Monitoring | Economic Impact |
|---|---|---|---|
| Capital Investment | Lower initial hardware costs | Significant instrumentation investment (Raman analyzer: $150,000-$500,000) [4] | Higher initial capital outlay with a typical payback period of 3-5 years |
| Labor Requirements | High manual sampling and analysis | Automated monitoring reduces labor by 30-50% [1] | Annual operational savings of $100,000-$500,000 depending on scale |
| Process Yields | Batch failures 5-15% depending on process complexity | 20-35% yield improvement through precise control [16] | Value of increased output typically exceeds monitoring costs |
| Batch Failure Rates | Reactive quality control leads to 3-7% batch loss | Proactive control reduces failures to <1% [14] | Avoided losses of $500,000-$2M per failed batch (therapeutics) |
| Validation Costs | Extensive offline method validation | Higher initial PAT validation offset by reduced ongoing QC | 15-25% reduction in total quality costs over product lifecycle [14] |

The implementation of continuous processing enabled by real-time monitoring demonstrates particularly compelling economics. Studies show that continuous bioprocessing can reduce capital and operating costs by 30-50% compared to batch processes, primarily through reduced facility footprint, lower buffer consumption, and increased productivity [1] [19]. These economic advantages are particularly significant for advanced therapies such as cell and gene therapies, where manufacturing costs represent a substantial barrier to patient access.
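A first-pass view of the capital and labor figures in Table 3 is a simple payback calculation. The sketch below pairs the table's range endpoints in arbitrary, illustrative combinations; it ignores validation costs, yield gains, avoided batch losses, and discounting, so it is not a substitute for a full business case.

```python
def simple_payback_years(capital_cost: float, annual_savings: float) -> float:
    """Years needed for cumulative savings to equal the capital outlay."""
    if annual_savings <= 0:
        raise ValueError("annual_savings must be positive")
    return capital_cost / annual_savings


# (capital in USD, annual labor savings in USD); pairings are illustrative only.
scenarios = {
    "low capital, low savings": (150_000, 100_000),
    "high capital, low savings": (500_000, 100_000),
    "high capital, high savings": (500_000, 500_000),
}

payback = {
    name: simple_payback_years(capital, savings)
    for name, (capital, savings) in scenarios.items()
}
```

The spread across scenarios shows how strongly the outcome depends on process scale, which is why the table qualifies the savings as scale-dependent.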

Implementation Framework for Cost-Effective Monitoring

Successful implementation of real-time monitoring for cost efficiency requires a structured approach:

1. Technology Selection and Scalability Assessment

- Evaluate monitoring technologies based on process needs, scalability, and integration requirements.

- Consider modular systems that support technology transfer across scales [17].

- Prioritize multi-parameter systems that maximize information per capital dollar.

2. Integration with Existing Infrastructure

- Implement flow cells compatible with single-use and stainless-steel systems [17].

- Ensure compatibility with existing control systems and data architecture.

- Utilize standardized interfaces to minimize customization costs.

3. Lifecycle Cost Optimization

- Balance initial capital investment against long-term operational benefits.

- Implement predictive maintenance schedules to minimize downtime.

- Leverage cloud-based analytics to reduce IT infrastructure costs [14].

The expanding CDMO ecosystem provides additional cost-efficient implementation pathways, with specialized service providers offering access to advanced monitoring technologies without substantial capital investment [14] [1]. This outsourcing model particularly benefits smaller biotech companies and academic research institutions, democratizing access to sophisticated monitoring capabilities that would otherwise require prohibitive investment.

The Scientist's Toolkit: Essential Research Reagents and Technologies

Implementation of effective real-time monitoring requires specific research tools and technologies tailored to bioprocess applications. The following table catalogues essential solutions for researchers developing and optimizing monitoring systems:

Table 4: Essential Research Reagent Solutions for Real-Time Bioprocess Monitoring

| Technology/Reagent | Function | Application Example | Key Providers/References |
|---|---|---|---|
| Raman Analyzer Systems | In-line monitoring of substrate and metabolite concentrations | Real-time monitoring of fermentation processes; concentration prediction via ML models [4] [18] | Kaiser Optical Systems, Thermo Fisher Scientific, Tornado Spectral Systems [4] |
| NIR Flow Cells with Temperature Control | Precise optical measurements with thermal stability | Real-time, model-free quantitation in UF/DF processes; ensures data consistency [17] | Nirrin Technologies (patented flow-cell technology) [17] |
| Multi-Spectral Data Fusion Platforms | Integration of complementary spectroscopic data sources | Combined NIR and Raman monitoring for enhanced prediction accuracy [16] | Custom AI platforms as described in research [16] |
| Automated Sampling Systems | Sterile extraction of samples for at-line analysis | Numera system for continuous bioprocess monitoring in up- and downstream production [19] | Securecell AG (Numera system) [19] |
| Reference Standard Materials | Calibration and validation of spectroscopic models | Certified reference materials for method development and transfer | Bioprocess International reference standards [20] |
| Residual DNA Testing Kits | Monitoring of critical quality attributes in biologics | AccuRes qPCR kits for host cell DNA clearance verification [21] | Cygnus Technologies (AccuRes qPCR kits) [21] |
| Digital Twin Software Platforms | Virtual process modeling and predictive control | Process optimization through simulation; proactive deviation detection [1] | Various commercial and proprietary platforms [1] |

Integrated Data Analysis and Process Control

The full potential of real-time monitoring is realized only through sophisticated data analysis and closed-loop control systems that transform raw sensor data into automated process adjustments. This integration represents the convergence of monitoring technologies with Industry 4.0 principles in what is termed "Bioprocessing 4.0" [14].

AI and Machine Learning Integration

Artificial intelligence has revolutionized bioprocess monitoring by shifting validation from retrospective analysis to real-time, predictive, and automated methods [14]. The implementation pathway for AI integration involves several critical steps:

1. Data Preprocessing and Feature Selection

- Application of orthogonal feature selection methods to reduce redundant spectral features; in the combined NIR-Raman workflow, this contributed to the reported R² improvements of 9.2-100.4% [16].

- Noise reduction through multiple spectrum averaging and application of data preprocessing algorithms [15].

- Handling of collinearity in spectroscopic data through specialized chemometric approaches.

2. Model Selection and Benchmarking

- Systematic comparison of machine learning approaches including convolutional neural networks, attention-based transformers, and ensemble methods.

- Recent benchmarking studies demonstrate that deep learning approaches significantly outperform traditional partial least squares regression in terms of coefficient of determination and mean absolute error [18].

- Evaluation of in-context learning approaches like Tabular Prior-data Fitted Networks for specific analyte prediction tasks.

3. Continuous Model Improvement

- Implementation of continuous learning systems that incorporate new process data.

- Regular model performance monitoring and recalibration protocols.

- Cross-validation against offline reference methods to maintain accuracy.

Figure 2: Multi-source spectral data integration process showing the pathway from raw data through preprocessing and AI analysis to critical process parameter prediction.
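Two of the preprocessing ideas listed above, replicate averaging and removal of redundant (collinear) spectral features, can be sketched in a few lines. The correlation-threshold filter below is a simple stand-in chosen for illustration; it is not the orthogonal feature selection algorithm of the cited study, and the threshold and data are hypothetical.

```python
from math import sqrt


def average_spectra(replicates):
    """Average replicate spectra channel by channel to reduce random noise."""
    n = len(replicates)
    return [sum(channel) / n for channel in zip(*replicates)]


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (non-constant inputs)."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def drop_redundant_features(columns, threshold=0.95):
    """Greedy filter: keep a channel only if its |r| with every
    already-kept channel is below the threshold. Returns kept indices."""
    kept = []
    for idx, col in enumerate(columns):
        if all(abs(pearson(col, columns[k])) < threshold for k in kept):
            kept.append(idx)
    return kept


# Two hypothetical replicate scans of the same sample (three channels).
averaged = average_spectra([[1.0, 2.0, 3.0], [3.0, 4.0, 5.0]])

# Three hypothetical channels observed across four samples.
features = [
    [1.0, 2.0, 3.0, 4.0],   # channel A
    [2.0, 4.0, 6.0, 8.0],   # channel B, perfectly collinear with A
    [1.0, 0.0, 1.0, 0.0],   # channel C, only weakly correlated with A
]
kept = drop_redundant_features(features)
```

A greedy filter like this depends on channel order. Established chemometric alternatives, such as projecting onto principal components or PLS latent variables, handle collinearity without discarding channels outright.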

Implementation in Continuous Processing

The most advanced application of real-time monitoring occurs in continuous bioprocessing environments, where monitoring directly enables process control:

Integrated Continuous Bioprocessing Architecture

- Upstream Integration: Real-time monitoring of cell density, viability, and metabolite concentrations enables automated perfusion control [1] [19].

- Downstream Integration: Flow-through particle monitoring and product titer measurement facilitate continuous chromatography optimization [1].

- Process-Wide Control: Advanced monitoring tools extract process-relevant information along the entire continuous bioprocess, enabling system-wide control strategies [19].

The implementation of end-to-end integrated operations requires robustly designed process steps, advanced monitoring tools, and adaptive process-wide control to progress from proof-of-concept to reliable production facilities [19]. The regulatory support for continuous manufacturing, particularly through ICH Q13 adoption, has created a favorable environment for these integrated implementations [1].

Future Directions and Emerging Applications

The evolution of real-time monitoring continues to accelerate, with several emerging trends shaping future research and implementation directions:

Technology Development Pathways

- Hyper-personalization: Real-time manufacturing of patient-specific therapies requiring ultra-rapid monitoring and control systems [1].

- AI-designed Biologics: Integration of monitoring data with AI-driven biologics design to accelerate discovery and manufacturability assessment [1].

- Cell-free Biomanufacturing: Portable, on-demand monitoring systems for distributed manufacturing in remote locations [1].

- Decentralized Production: Microfactory configurations with compact monitoring technologies near point-of-care for critical biologics [1].

Strategic Implementation Considerations

For researchers and drug development professionals planning monitoring implementation, several strategic considerations emerge:

- Interoperability Standards: Selection of monitoring technologies that support data exchange through standardized interfaces.

- Workforce Development: Investment in cross-disciplinary training combining bioprocess engineering with data science competencies [1].

- Regulatory Engagement: Early collaboration with regulatory agencies to establish acceptable monitoring-based control strategies.

- Cost-Benefit Optimization: Balanced approach to technology investment that matches monitoring capability with process criticality.

The convergence of real-time monitoring with other Industry 4.0 technologies, particularly digital twins and predictive analytics, creates opportunities for unprecedented process understanding and control [14] [1]. As these technologies mature, real-time monitoring will evolve from a process verification tool to the central nervous system of intelligent biomanufacturing facilities, capable of autonomous optimization and continuous quality assurance.

The successful production of biologics hinges on the precise monitoring and control of a hierarchy of parameters, from fundamental physical measurements to complex product characteristics. This framework is built upon two foundational pillars: Critical Process Parameters (CPPs)—the measurable inputs and environmental conditions of the production process—and Critical Quality Attributes (CQAs)—the final product's quality characteristics that directly impact safety and efficacy [22] [23]. In modern bioprocessing, the connection between these two pillars is governed by the Quality by Design (QbD) paradigm, a systematic approach that emphasizes building quality into the product through process understanding and control, rather than relying solely on final product testing [24]. This principle is operationalized through Process Analytical Technology (PAT), a framework encouraging real-time monitoring of CPPs to ensure CQAs are consistently met [25] [26].

The drive towards advanced bioprocessing is further accelerated by Industry 4.0, which introduces smart technologies like Digital Twins—virtual replicas of physical processes that use real-time data for simulation, prediction, and optimization [25]. The convergence of QbD, PAT, and Industry 4.0 technologies represents the future of bioprocess monitoring, enabling a proactive and data-driven approach to manufacturing complex biologics. This guide details the essential parameters within this ecosystem, the methodologies for their measurement, and the advanced tools shaping the field.

Essential Process Parameters: The Foundation of Process Control

Critical Process Parameters are the controllable variables of a bioprocess that, when maintained within a defined range, ensure the process produces the desired product quality. The most fundamental CPPs consistently monitored across bioprocesses are physical and chemical environmental factors.

The Top Five Critical Process Parameters

1. Dissolved Oxygen (DO): Dissolved oxygen is a critical parameter for aerobic microorganisms, directly influencing cell growth, metabolism, and product formation [22]. It represents the amount of oxygen dissolved in the liquid medium. Insufficient DO levels can lead to decreased cell viability and compromised process efficiency, making continuous monitoring and control paramount for aerobic bioprocesses [22]. Measurement is traditionally done via probes that measure the partial pressure of oxygen or through non-invasive optical methods such as fluorescence-based sensors [22].

2. pH: The acidity or alkalinity of the solution, measured as pH, profoundly influences microbial growth, enzyme activity, and the stability of the product [22]. Different organisms have specific pH ranges in which they thrive; deviations can inhibit growth or shift metabolism toward undesirable pathways [22]. Precise control of pH is achieved using electrodes and automated systems that add acid or base to maintain the setpoint, ensuring an environment favorable for producing the target compounds [22] [27].

3. Biomass: Biomass refers to the concentration of cells in a culture and is a direct indicator of microbial or cellular growth [22]. Monitoring biomass provides insights into the health and viability of the culture and can serve as an indicator of contamination. The trajectory of biomass accumulation, often depicted as a growth curve, is pivotal for assessing process reproducibility and performance between fermentation runs [22].

4. Temperature: Temperature is a central parameter that governs cell growth, metabolism, and the production of target compounds [22]. It strongly influences the rates of enzymatic reactions and microbial activity. Both excessively high and low temperatures can impede these cellular activities, leading to reduced productivity or the formation of undesirable by-products. Temperature also affects the solubility of gases, such as oxygen, which are crucial for aerobic processes [22].

5. Substrate and Nutrient Concentration: Substrates (e.g., sugars) and nutrients (e.g., vitamins, minerals) are the raw materials and fuel for cellular activities and product synthesis [22]. Achieving the right balance is fundamental; insufficient concentrations can limit growth and yields, while excesses can lead to wasteful metabolic pathways or the accumulation of inhibitory by-products. Monitoring these concentrations allows for tracking consumption and analyzing the efficiency of the bioprocess [22].
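The automated acid/base addition described for pH control is often implemented as a dead-band rule: dose only when the reading leaves a tolerance window around the setpoint. The sketch below shows that decision logic in isolation; the function name, action labels, and the 0.05 pH unit dead band are assumptions for illustration, not values from the cited sources.

```python
def ph_dosing_action(measured_ph: float, setpoint: float,
                     deadband: float = 0.05) -> str:
    """Return the dosing action for one control cycle.

    Inside the dead band nothing is dosed, which avoids alternating
    acid and base additions in response to sensor noise.
    """
    if measured_ph < setpoint - deadband:
        return "add_base"
    if measured_ph > setpoint + deadband:
        return "add_acid"
    return "hold"


if __name__ == "__main__":
    for reading in (6.90, 7.02, 7.10):
        print(reading, ph_dosing_action(reading, setpoint=7.00))
```

In practice such logic runs in the PLC or bioreactor controller, and base addition is frequently paired with CO₂ sparging rather than liquid acid in mammalian cell culture.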

Monitoring Methodologies for CPPs

The methodology for measuring CPPs directly impacts the speed of data acquisition and the potential for real-time control. The PAT framework classifies these approaches as follows [26]:

Table: Bioprocess Monitoring and Control Methods

| Method | Description | Advantages | Disadvantages | Common Applications |
|---|---|---|---|---|
| Off-Line | Sample is removed and analyzed in a lab after physical pretreatment. | Can use sophisticated lab equipment (e.g., HPLC, mass spectrometry). | Significant time delay; manual handling prone to error; not suitable for PAT control. | Product titer, detailed quality attribute analysis. |
| At-Line | Sample is removed and analyzed automatically or manually near the process. | Shorter delay than off-line; potential for some automation. | Results may be too slow for fast-growing cultures; requires sterile sampling. | Parameters not suited for in-line measurement. |
| On-Line | Sample is diverted via a by-pass loop, measured automatically, and may be returned. | Enables real-time monitoring and control; simple sterilization. | Requires specific bioreactor design; added system complexity. | Automated sampling for complex sensors. |
| In-Line/In-Situ | Measurement occurs directly inside the bioreactor with a process sensor. | Real-time data; minimal delay; ideal for automated control. | Sensor must withstand process conditions (CIP/SIP); potential for drift. | pH, DO, temperature, pressure, DCO₂. |

In-line and on-line methods are the cornerstones of PAT, as they provide real-time data that can be fed directly to a Programmable Logic Controller (PLC) or Supervisory Control and Data Acquisition (SCADA) system for automated process control [27] [26]. This allows for immediate adjustments to input variables, keeping the process within the optimal operating range and ensuring consistency.

Critical Quality Attributes: Defining Product Quality

While CPPs relate to the process, Critical Quality Attributes (CQAs) are the measurable properties of the drug substance or drug product that must be within appropriate limits, ranges, or distributions to ensure the desired product quality [23]. They are directly linked to the safety and efficacy of the biologic medicine.

The Nature and Challenge of CQAs in Biologics

The complex and heterogeneous nature of biologics, which are often produced in living systems, introduces a wide array of potential quality attributes that are not a concern for small-molecule drugs [23]. Protein modifications, such as post-translational modifications (e.g., glycosylation) and degradation products (e.g., aggregation), can simultaneously affect multiple factors, including potency, pharmacokinetics, and immunogenicity [23]. This makes defining the criticality of each variant extremely challenging and places a premium on consistency of product quality [23].

Key CQAs for Biologics

For a typical biologic, such as a monoclonal antibody, key CQAs can be categorized as follows [28] [24]:

- Potency: The biological activity of the molecule, ensuring it performs its intended therapeutic function (e.g., target binding, cell killing) [24].

- Purity and Impurities: The level of the desired product and the minimization of process-related impurities like Host Cell Proteins (HCPs), DNA, and media components, as well as product-related impurities like aggregates [28] [24].

- Structural Integrity: Attributes including amino acid sequence, molecular size and charge variants, and glycosylation patterns. The specific glycosylation profile of a monoclonal antibody, for instance, is a well-known CQA that can impact its effector function and immunogenicity [28] [24].

- Obligatory Attributes: Certain attributes are always considered critical, such as endotoxin levels, mycoplasma levels, pH, and osmolality, as they directly impact patient safety [28]. Regulatory authorities typically specify acceptable ranges for these.

The Critical Link: Integrating CPPs and CQAs

The core objective of modern bioprocess development is to establish a definitive link between the process parameters (CPPs) and the final product quality (CQAs). This is achieved through a structured, risk-based workflow.

The CQA Identification and Risk Assessment Workflow

The process for identifying and ranking CQAs is iterative and knowledge-driven, evolving throughout a product's lifecycle [28]. The key stages, from initial identification to final categorization, are as follows:

1. Define Quality Target Product Profile (QTPP): The process begins by defining the QTPP, a prospective summary of the quality characteristics of the drug product necessary to ensure the desired safety and efficacy [28].

2. Identify Potential CQAs (pCQAs): Based on the QTPP and prior knowledge (e.g., from platform molecules or literature), a list of potential CQAs is created. This includes product-specific attributes (e.g., glycosylation, charge variants), process-related impurities (e.g., HCPs), and obligatory CQAs (e.g., endotoxins) [28].

3. Risk Assessment and Scoring: Each pCQA is evaluated and scored based on two primary factors [28]:

- Impact: The severity of the pCQA's effect on safety and efficacy.

- Uncertainty: The level of confidence in the available information.

A risk score is calculated (e.g., Impact × Uncertainty), creating a criticality continuum.

4. Filter pCQAs: The risk ranking is used to filter the list of pCQAs. Attributes with a high-risk score are designated as CQAs, while those with low scores may be considered non-critical [28].

5. Implement Control Strategy: The final CQAs are monitored through a defined control strategy, which includes specifying the CPPs that influence them, setting in-process controls, and defining the final product release specifications [24].
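The scoring and filtering steps of this workflow can be sketched as a small ranking routine. The numeric scales, the threshold, and the example attributes below are hypothetical; actual scales and cut-offs are defined within each organization's risk assessment procedure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PotentialCQA:
    name: str
    impact: int        # severity of effect on safety/efficacy (hypothetical scale)
    uncertainty: int   # lack of confidence in available evidence (hypothetical scale)

    @property
    def risk_score(self) -> int:
        """Risk score as the product Impact x Uncertainty."""
        return self.impact * self.uncertainty


def rank_and_filter(pcqas, threshold):
    """Rank pCQAs by descending risk score and split them at the threshold."""
    ranked = sorted(pcqas, key=lambda a: a.risk_score, reverse=True)
    critical = [a for a in ranked if a.risk_score >= threshold]
    non_critical = [a for a in ranked if a.risk_score < threshold]
    return critical, non_critical


# Hypothetical scores for illustration only.
candidates = [
    PotentialCQA("Afucosylation", impact=16, uncertainty=3),
    PotentialCQA("Aggregates", impact=20, uncertainty=2),
    PotentialCQA("C-terminal lysine", impact=2, uncertainty=2),
]

critical, non_critical = rank_and_filter(candidates, threshold=20)
```

Obligatory attributes such as endotoxin levels would bypass this scoring, since they are treated as critical regardless of the calculated score. Scores are also expected to be revisited as uncertainty falls with accumulating product knowledge.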

From Process Parameter to Product Attribute: An Experimental Framework

Establishing the cause-effect relationship between a CPP and a CQA requires targeted experimental protocols. A common approach involves forced degradation studies and enrichment studies [23] [28].

Protocol: Assessing the Impact of Bioreactor pH on Glycosylation CQA

1. Objective: To determine the impact of bioreactor pH (a CPP) on the distribution of glycan species (a CQA) of a monoclonal antibody.

2. Hypotheses:

- H₀: Variations in bioreactor pH within a defined range have no significant impact on the critical glycosylation profile.

- H₁: Bioreactor pH significantly alters the distribution of critical glycan species (e.g., high-mannose, afucosylation, galactosylation).

3. Experimental Design:

- A series of fed-batch bioreactor runs are performed using a representative mammalian cell line (e.g., CHO cells).

- The independent variable is the bioreactor pH setpoint. Multiple runs are conducted with pH controlled at different levels within a relevant range (e.g., 6.8, 7.0, 7.2, 7.4).

- All other CPPs (e.g., temperature, dissolved oxygen, feeding strategy) are kept constant.

- At harvest, the expressed antibody is purified using a standard protein A chromatography method.

4. Analytical Methods:

- Glycan Analysis: Released N-glycans are analyzed using Hydrophilic Interaction Liquid Chromatography with Fluorescence Detection (HILIC-FLD) or Liquid Chromatography-Mass Spectrometry (LC-MS). The relative percentages of key glycan species are quantified.

- Potency Assay: A cell-based or binding assay (e.g., ELISA, SPR) is performed to determine if the observed glycan changes correlate with altered biological activity (e.g., FcγRIIIa binding for ADCC).

5. Data Analysis and Criticality Determination:

- Statistical analysis (e.g., ANOVA) is used to determine if changes in glycan distribution across pH setpoints are significant.

- If a specific glycan variant (e.g., afucosylated G0) known to impact potency (e.g., enhanced ADCC) shows a statistically significant and meaningful change with pH, then:

  - That glycan variant is confirmed as a CQA.

  - Bioreactor pH is designated as a CPP for that CQA, and a proven acceptable range (PAR) for pH is established.
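As a minimal sketch of the statistical step in this protocol, the following computes a one-way ANOVA F statistic by hand using only the standard library. The glycan percentages are invented for illustration (three hypothetical runs per pH setpoint), and the critical value 4.07 is the tabulated F value for (3, 8) degrees of freedom at α = 0.05; a real study would typically use a statistics package and report an exact p-value.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total

    # Variation of group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of individual runs around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)

    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical % afucosylated glycan, three runs per pH setpoint.
afucosylation_by_ph = {
    6.8: [4.1, 4.3, 4.0],
    7.0: [5.0, 5.2, 4.9],
    7.2: [6.1, 5.9, 6.3],
    7.4: [7.2, 7.0, 7.4],
}

f_stat, df_b, df_w = one_way_anova_f(list(afucosylation_by_ph.values()))
F_CRITICAL_3_8 = 4.07  # tabulated F(3, 8) at alpha = 0.05
print(f"F({df_b}, {df_w}) = {f_stat:.1f}")
print("Reject H0" if f_stat > F_CRITICAL_3_8 else "Fail to reject H0")
```

Statistical significance alone does not establish criticality; as the protocol notes, the change must also be meaningful with respect to potency.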

The Scientist's Toolkit: Key Research Reagent Solutions

Successfully executing bioprocess monitoring and CQA analysis requires a suite of specialized reagents, tools, and platforms.

Table: Essential Tools for Bioprocess Monitoring and CQA Analysis

| Tool / Reagent | Function / Description | Key Applications |
|---|---|---|
| Reference Biologic Aliquots | Small-quantity consumables of original, approved biologic drugs [28]. | Analytical method development; benchmarking for biosimilar development; in-vitro/in-vivo research controls. |
| Process Analytical Technology (PAT) Tools | Advanced sensors and spectrometers for real-time, in-line monitoring [25]. | Monitoring CPPs (pH, DO) and some CQAs (e.g., product titer, glycosylation) using NIR, Raman, or UV-Vis spectroscopy. |
| Smart pH/DO Sensors | In-line sensors with digital signal processing and direct PLC/SCADA connectivity [27] [26]. | Real-time monitoring and automated control of pH and dissolved oxygen in bioreactors. |
| Host Cell Protein (HCP) Assays | Immunoassays (e.g., ELISA) using polyclonal antibodies against host cell proteins [28]. | Quantification of HCP impurities, a key safety-related CQA, in drug substance and product. |
| Chromatography Systems (HPLC/UPLC) | High-/Ultra-Performance Liquid Chromatography for separation and analysis [29]. | Purity analysis, charge variant profiling (e.g., ion-exchange chromatography), and quantification of product-related impurities. |
| Cell-Based Potency Assays | Bioassays that measure the biological activity of the biologic on living cells or tissues. | Determining the potency CQA; demonstrating lot-to-lot consistency and stability. |

Advanced Concepts: The Future of Real-Time Monitoring

The future of bioprocess monitoring lies in the deeper integration of data, models, and automation.

Digital Twins (DTs) are virtual replicas of a physical bioprocess that are updated in real-time with data from sensors [25]. They use hybrid models combining first principles (mechanistic knowledge) and Machine Learning (ML) to simulate, predict, and optimize process outcomes. A DT can act as a "soft sensor," predicting difficult-to-measure CQAs (like glycosylation) in real-time based on CPP data, enabling proactive control [25].
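To make the "soft sensor" idea concrete, here is a deliberately small sketch of only the data-driven part: an ordinary least-squares line that predicts a hard-to-measure attribute from a single CPP reading. The calibration data are invented, and a digital twin as described above would combine a model like this with mechanistic terms and many more inputs.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x_new):
    """Apply a fitted (slope, intercept) model to a new CPP reading."""
    slope, intercept = model
    return slope * x_new + intercept


# Hypothetical calibration set: pH setpoint vs. offline % afucosylation.
ph_history = [6.8, 7.0, 7.2, 7.4]
afucosylation_history = [4.0, 5.0, 6.0, 7.0]

soft_sensor = fit_line(ph_history, afucosylation_history)

# "Real-time" use: estimate the CQA from the current in-line pH reading.
current_ph = 7.1
print(f"Estimated afucosylation: {predict(soft_sensor, current_ph):.2f} %")
```

A model of this kind is only trustworthy inside the range it was calibrated on, which is one reason hybrid models add first-principles structure.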

Artificial Intelligence (AI) and Machine Learning (ML) algorithms are being integrated directly into sensor systems and data analytics platforms [29] [30]. These tools can identify complex patterns in multivariate data, predict future process behavior, detect anomalies for early fault detection, and ultimately enable adaptive, self-optimizing bioprocesses. This represents the realization of the Industry 4.0 vision in biomanufacturing [25].
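Early fault detection does not have to start with a complex model. The sketch below flags sensor readings that deviate from a baseline window by more than a chosen number of standard deviations; the dissolved-oxygen values and the limit of three standard deviations are illustrative assumptions, and production systems would more likely use multivariate methods.

```python
from statistics import mean, stdev


def detect_anomalies(baseline, new_values, z_limit=3.0):
    """Return indices of new_values lying more than z_limit sample
    standard deviations away from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    return [
        i for i, value in enumerate(new_values)
        if abs(value - mu) > z_limit * sigma
    ]


# Hypothetical dissolved-oxygen readings (% air saturation).
baseline_do = [40.1, 39.8, 40.3, 40.0, 39.9, 40.2]
incoming_do = [40.0, 40.4, 35.0, 39.7]

flagged = detect_anomalies(baseline_do, incoming_do)
print("Anomalous reading indices:", flagged)
```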

Tools and Techniques: A Deep Dive into Real-Time Monitoring Technologies

Vibrational spectroscopy, encompassing Raman and infrared (IR) techniques, has emerged as a powerful analytical methodology for real-time bioprocess monitoring. These techniques provide non-destructive, reagent-free analysis of biological samples and produce characteristic chemical "fingerprints" whose signature profiles are essential for modern bioprocessing applications [31] [32]. The foundational principle of vibrational spectroscopy is the transition between quantized vibrational energy states that occurs when molecules interact with electromagnetic radiation [31]. This section examines the application of mid-infrared (mid-IR) and Raman spectroscopy to the analysis of metabolites and biomass in real-time bioprocess monitoring, addressing the critical need for rapid, reproducible detection methods in biological systems [31].

The growing adoption of Process Analytical Technology (PAT) in biopharmaceutical manufacturing and other bioprocessing industries has accelerated the implementation of vibrational spectroscopy for real-time monitoring capabilities [4]. Unlike conventional analytical methods such as mass spectrometry (MS) and nuclear magnetic resonance (NMR) spectroscopy, which require costly instrumentation, complex sample pretreatment, and well-trained technicians, vibrational spectroscopy techniques offer simplified operational workflows that are more amenable to implementation in various bioprocessing environments [31] [32]. This technical guide explores the fundamental principles, experimental protocols, and applications of Raman and IR spectroscopy for metabolite and biomass analysis, providing researchers and drug development professionals with comprehensive methodologies for enhancing bioprocess monitoring and optimization.

Fundamental Principles and Technical Comparisons

Infrared Spectroscopy Fundamentals

Mid-infrared spectroscopy (4000-400 cm⁻¹) operates on the principle of infrared absorption by functional groups in samples, resulting in vibrational motions including stretching, bending, deformation, or combination vibrations [31] [32]. When molecules absorb IR radiation, they undergo a change in dipole moment resulting from induced vibrational motion that rearranges their charge distribution [32]. The resulting absorption spectra provide detailed information about the chemical and biochemical substances present in the sample, making it particularly valuable for functional group identification and quantitative analysis [33].

A significant technical challenge in FT-IR spectroscopy is the strong interference from water in the mid-IR region, which masks key biochemical information, particularly in the Amide I (~1650 cm⁻¹) and lipids (3000-3500 cm⁻¹) absorption regions [31] [32]. This limitation can be mitigated through several approaches: mathematical removal of pure or scaled water spectra from acquired spectra, sample dehydration, using D₂O solution, or significantly lowering the effective path length using attenuated total reflectance (ATR) as a sampling technique [31] [32]. ATR-IR systems allow samples to be directly pressed against a crystal for spectral analysis, enabling the development of hand-held IR instruments suitable for field applications [34].
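The first mitigation listed, subtracting a scaled water spectrum, can be sketched as follows. The scale factor is estimated by least squares over a reference region in which water is assumed to be the only absorber; that assumption, the choice of region, and the toy absorbance values are all illustrative.

```python
def water_scale_factor(sample, water, region):
    """Least-squares scale k minimising sum((sample - k * water)**2)
    over the index range `region` (a slice)."""
    s = sample[region]
    w = water[region]
    return sum(si * wi for si, wi in zip(s, w)) / sum(wi * wi for wi in w)


def subtract_water(sample, water, region):
    """Return the sample spectrum with the scaled water spectrum removed."""
    k = water_scale_factor(sample, water, region)
    return [si - k * wi for si, wi in zip(sample, water)]


# Toy absorbance vectors on a shared wavenumber axis (hypothetical values).
water_spectrum = [1.0, 2.0, 3.0, 0.5]
analyte_only = [0.0, 0.0, 0.0, 0.7]
sample_spectrum = [2.0 * w + a for w, a in zip(water_spectrum, analyte_only)]

# Assume the first three points contain water absorption only.
corrected = subtract_water(sample_spectrum, water_spectrum, slice(0, 3))
print([round(v, 3) for v in corrected])
```

If the analyte also absorbs inside the reference region, the scale factor is biased, so the region has to be chosen with care.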

Raman Spectroscopy Fundamentals

Raman spectroscopy is based on inelastic light scattering: photons incident on a sample are scattered by its molecules [31] [32]. Most of the scattered light has the same frequency as the incident light, but a small fraction is shifted in frequency through interaction between the oscillating light field and molecular vibrations, a phenomenon known as Raman scattering [32]. Unlike IR spectroscopy, Raman spectroscopy exhibits minimal water interference, providing a significant advantage for analyzing biological samples [31] [4].
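The frequency shift is conventionally reported as a Raman shift in wavenumbers, Δν̃ = 10⁷ × (1/λ_excitation − 1/λ_scattered), with wavelengths in nm and the shift in cm⁻¹. The short sketch below converts between the two; the function names are illustrative, and the 1000 cm⁻¹ shift is an arbitrary example value.

```python
def raman_shift_cm1(excitation_nm, scattered_nm):
    """Raman shift in cm^-1 from excitation and scattered wavelengths in nm."""
    return 1e7 * (1.0 / excitation_nm - 1.0 / scattered_nm)


def scattered_wavelength_nm(excitation_nm, shift_cm1):
    """Stokes-scattered wavelength in nm for a given Raman shift in cm^-1."""
    return 1e7 / (1e7 / excitation_nm - shift_cm1)


# Where does a 1000 cm^-1 band appear for two common excitation lasers?
for laser_nm in (785.0, 1064.0):
    wavelength = scattered_wavelength_nm(laser_nm, 1000.0)
    print(f"{laser_nm:.0f} nm excitation -> {wavelength:.1f} nm")
```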

The Raman effect is inherently weak, with only approximately 1 in 10⁸ photons undergoing Raman scattering [31]. This limitation can be addressed through longer acquisition times (which may cause sample damage due to laser exposure) or signal enhancement techniques such as Surface-Enhanced Raman Scattering (SERS) [31] [32]. SERS uses nanoscale roughened metallic surfaces (typically gold or silver) to enhance Raman signals by a factor of approximately 10⁸ (about eight orders of magnitude), with further amplification to 10¹¹ possible using surface-enhanced resonance Raman spectroscopy (SERRS) [31]. Another challenge in Raman spectroscopy is fluorescence interference, particularly when using visible wavelength lasers [32]. This interference can be addressed through mathematical correction, photobleaching pre-treatment, or using longer wavelength lasers (e.g., 1064 nm) [31] [32].

Complementary Techniques and Applications

IR and Raman spectroscopy are complementary analytical techniques due to their different molecular interaction mechanisms [32]. IR absorption is active for asymmetrical vibrations that change the dipole moment, while Raman scattering is active for symmetric vibrations that change polarizability [32]. This complementary relationship enables comprehensive molecular characterization, making vibrational spectroscopy particularly valuable for complex biological samples containing diverse molecular structures and functional groups.

Table 1: Comparative Analysis of Vibrational Spectroscopy Techniques

| Parameter | Mid-IR Spectroscopy | Raman Spectroscopy |
|---|---|---|
| Fundamental Principle | Absorption of IR radiation | Inelastic light scattering |
| Molecular Requirement | Change in dipole moment | Change in polarizability |
| Water Interference | Strong, masks key regions | Minimal, advantageous for biofluids |
| Key Limitations | Water absorption issues | Weak signal strength; Fluorescence interference |
| Enhancement Techniques | ATR sampling | SERS, SERRS |
| Typical Laser Excitation | N/A | 785 nm, 830 nm, 1064 nm |
| Spatial Resolution | ~10-20 μm (microspectroscopy) | ~1 μm (confocal microscopy) |
| Sample Preparation | Minimal to moderate | Minimal |

Experimental Protocols and Methodologies

Online FT-Raman Spectroscopy for Fermentation Monitoring

Protocol Objective: Real-time monitoring of biomass production, intracellular metabolites, and carbon substrates during submerged fermentation of oleaginous and carotenogenic microorganisms [35].

Materials and Equipment:

- FT-Raman spectrometer with 1064 nm excitation laser

- Bioreactor system (e.g., Infors Minifors 2)

- Flow cell integrated into recirculatory loop

- Sterilizable sampling interface

- Reference analytical equipment (HPLC for substrates, GC for metabolites)

Experimental Workflow:

- System Calibration: Develop multivariate regression models using reference measurements correlated with spectral data

- Sterile Installation: Connect flow cell to bioreactor via recirculatory loop while maintaining sterile barrier

- Spectral Acquisition: Collect spectra continuously (e.g., every 15-30 minutes) throughout fermentation

- Real-time Analysis: Process spectral data using pre-calibrated models to quantify parameters

- Model Validation: Verify predictions against offline reference measurements

Key Parameters Monitored:

- Carbon substrate utilization (glucose, glycerol)

- Biomass concentration

- Intracellular metabolites (triglycerides, free fatty acids, carotenoid pigments)

Performance Metrics: The methodology demonstrated excellent correlation with reference measurements, with coefficients of determination (R²) ranging from 0.94 to 0.99 for Rhodotorula fermentation and from 0.89 to 0.99 for Schizochytrium fermentation across all concentration parameters [35].
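For readers who want to reproduce this kind of validation on their own data, the sketch below computes the coefficient of determination and the root-mean-square error between offline reference values and model predictions. The glucose numbers are invented for illustration and are not taken from [35].

```python
import math


def r_squared(reference, predicted):
    """Coefficient of determination of predictions against reference values."""
    mean_ref = sum(reference) / len(reference)
    ss_res = sum((r - p) ** 2 for r, p in zip(reference, predicted))
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)
    return 1.0 - ss_res / ss_tot


def rmse(reference, predicted):
    """Root-mean-square error, in the same units as the measurements."""
    n = len(reference)
    return math.sqrt(sum((r - p) ** 2 for r, p in zip(reference, predicted)) / n)


# Hypothetical glucose concentrations (g/L): offline HPLC vs. Raman model.
hplc_glucose = [80.0, 62.0, 41.0, 20.0, 5.0]
raman_glucose = [78.5, 63.0, 42.5, 18.0, 6.0]

print(f"R^2  = {r_squared(hplc_glucose, raman_glucose):.3f}")
print(f"RMSE = {rmse(hplc_glucose, raman_glucose):.2f} g/L")
```

Reporting an error in concentration units alongside R² is useful because R² alone can look favourable when the concentration range is wide.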

Vibrational Spectroscopy for Biofluid Analysis

Protocol Objective: Rapid, non-invasive analysis of metabolite biomarkers in biofluids for diagnostic applications [31] [32].

Materials and Equipment:

- Hand-held Raman spectrometer (785 nm or 830 nm excitation) or ATR-IR spectrometer

- Appropriate sample containers (quartz cuvettes for Raman, ATR crystal for IR)

- Software for spectral processing and multivariate analysis

- Reference standards for metabolite identification

Sample Preparation - Urine Analysis:

- Collect urine samples using minimally invasive procedures

- For Raman spectroscopy: minimal preparation required, possible dilution if necessary

- For IR spectroscopy: may require dehydration or use of D₂O solution to minimize water interference

- Transfer to appropriate measurement platform

Spectral Acquisition Parameters:

- Raman: 785 nm laser, 5-20 second acquisition time, multiple accumulations

- ATR-IR: 4 cm⁻¹ resolution, 32-64 scans per spectrum

- Background spectra collection before sample measurement

Data Analysis Workflow:

- Pre-processing: cosmic ray removal (Raman), atmospheric compensation, vector normalization

- Peak assignment to specific metabolites (Raman bands):

  - Uric acid (567 cm⁻¹)

  - Creatinine (692 cm⁻¹ in normal samples; 1336 and 1427 cm⁻¹ in malignant samples)

  - Glucose (1046 cm⁻¹)

  - Tryptophan (1417 cm⁻¹ in normal samples; 1547 cm⁻¹ in premalignant and malignant samples)

- Multivariate statistical analysis (PCA, PLS) for classification and quantification
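Two of the pre-processing steps in this workflow can be sketched with the standard library alone. The spike filter below is a deliberately simple single-point version (real cosmic-ray artefacts can span several pixels), and vector normalization is implemented as division by the Euclidean norm; some software mean-centres the spectrum first, so treat both choices, the threshold, and the toy spectrum as assumptions.

```python
import math


def remove_spikes(spectrum, threshold):
    """Replace isolated single-point spikes with the mean of their neighbours.

    A point counts as a spike when it exceeds the mean of its two
    neighbours by more than `threshold` intensity units.
    """
    cleaned = list(spectrum)
    for i in range(1, len(spectrum) - 1):
        neighbour_mean = (spectrum[i - 1] + spectrum[i + 1]) / 2.0
        if spectrum[i] - neighbour_mean > threshold:
            cleaned[i] = neighbour_mean
    return cleaned


def vector_normalize(spectrum):
    """Scale a spectrum to unit Euclidean length."""
    norm = math.sqrt(sum(v * v for v in spectrum))
    return [v / norm for v in spectrum]


# Toy Raman intensities with one cosmic-ray-like spike at index 2.
raw = [1.0, 1.0, 50.0, 1.0, 1.0]
despiked = remove_spikes(raw, threshold=10.0)
normalized = vector_normalize(despiked)
print(despiked)
print([round(v, 3) for v in normalized])
```

The cleaned, normalized spectra would then be passed to the multivariate step (PCA or PLS), which is normally done with a numerical library rather than by hand.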

Performance Characteristics: This approach enables simultaneous detection of multiple metabolites, providing rapid, highly specific, and non-invasive sample characterization suitable for clinical diagnostics and therapeutic monitoring [32].

Plant Stress Detection and Phenotyping

Protocol Objective: Non-destructive detection of biotic and abiotic stresses in plants using portable vibrational spectroscopy [34].

Materials and Equipment:

- Hand-held Raman spectrometer (830 nm excitation recommended) or ATR-IR instrument

- Plant leaf samples or in-field measurement capability

- Standard reference materials for calibration

- SERS substrates (if implementing enhanced detection)

Experimental Procedure: