Specific vs. Selective Sensing: A Strategic Guide for Enhanced Drug Discovery and Development

Abstract

This article provides a comprehensive comparison of specific and selective sensing approaches, crucial for researchers and professionals in drug development. It explores the foundational definitions, distinguishing the theoretical ideal of specificity from the practical spectrum of selectivity. The content delves into methodological applications across various stages of drug discovery, from target identification to clinical trials, and offers practical guidance for troubleshooting and optimizing sensing protocols. Finally, it examines validation frameworks and comparative analyses, empowering scientists to strategically select and implement the most effective sensing approach for their specific research and development goals.

Core Concepts: Demystifying Specificity and Selectivity in Pharmacological Sensing

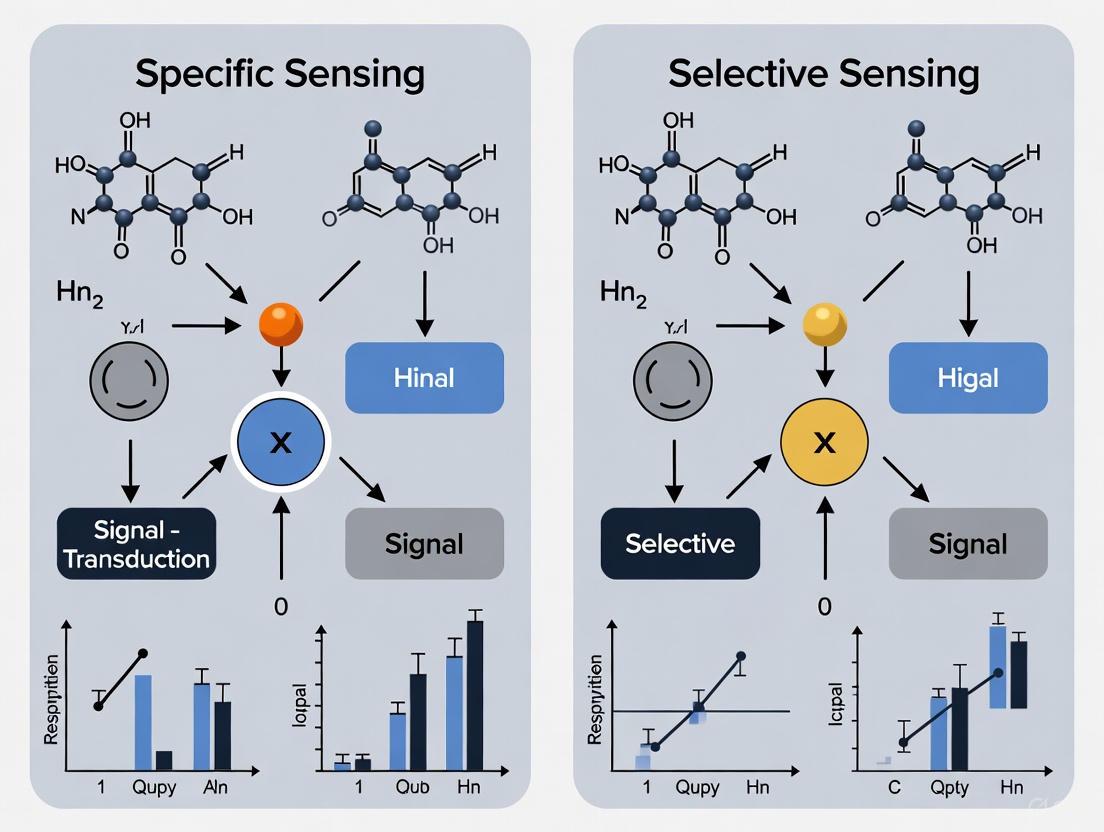

In the fields of chemical sensing and pharmaceutical development, the terms "selectivity" and "specificity" are often used interchangeably, yet they represent fundamentally distinct concepts in analytical science. Selectivity refers to a sensor's or method's preferential response to a target analyte among a group of similar interferents, operating on a spectrum of relative discrimination. In contrast, specificity describes an absolute, binary recognition capability where a sensing mechanism responds exclusively to a single target based on unique structural or mechanistic complementarity.

This distinction carries profound implications for diagnostic accuracy, drug discovery, and environmental monitoring. As sensing technologies evolve to address increasingly complex analytical challenges, understanding this spectrum from preferential to absolute recognition becomes crucial for researchers selecting appropriate methodologies for their specific applications. This whitepaper examines the theoretical foundations, experimental manifestations, and practical implications of this critical distinction through contemporary research examples, providing a framework for the strategic implementation of these complementary approaches in scientific research.

Theoretical Foundations and Definitions

The conceptual divide between selectivity and specificity originates from fundamental differences in recognition mechanisms and their practical implementations in sensing systems.

Selectivity emerges from differential affinity, where a recognition element interacts with multiple related compounds but exhibits a measurable preference for the target analyte. This preferential binding is quantifiable through ratios of response factors, binding constants, or inhibition coefficients. Selectivity is inherently relative and context-dependent, influenced by the composition of the sample matrix and the presence of structurally similar compounds. In sensor arrays, selectivity often arises from differential response patterns across multiple sensing elements rather than exclusive recognition at a single site [1].

Specificity implies a lock-and-key mechanism where molecular recognition depends on exact complementarity between the target and recognition element. This absolute recognition typically stems from unique structural features that prevent binding even to closely related analogs. Specificity is often binary—a response either occurs or does not—and is less susceptible to matrix effects when the recognition mechanism is truly specific [2].

The distinction manifests practically in the design and validation of analytical methods. Selective methods require comprehensive interference testing to establish the degree of preference, while specific methods demand demonstration of exclusive recognition under defined conditions.

Experimental Manifestations in Sensing Technologies

Molecular Imprinting: The Selective Approach

Molecularly Imprinted Polymers (MIPs) exemplify the selective approach through synthetic recognition sites complementary to target molecules in shape, size, and functional group orientation. The preparation of MIP-based sensors involves a multi-step process that creates preferential rather than absolute recognition capabilities.

Table 1: Key Stages in MIP Sensor Development [3]

| Stage | Process Description | Function |
|---|---|---|
| Template-Monomer Complexation | Functional monomers (e.g., methacrylic acid) form pre-polymerization complexes with template molecules (e.g., donepezil) via non-covalent interactions | Creates molecular memory through complementary binding sites |
| Cross-linking Polymerization | Ethylene glycol dimethacrylate (EGDMA) forms a highly cross-linked polymer matrix around template-monomer complexes | Stabilizes recognition cavities and maintains structural integrity |
| Template Extraction | Template molecules are removed from the polymer matrix by solvent extraction | Liberates recognition sites for subsequent analyte binding |
| Sensor Integration | MIP particles are incorporated into electrode membranes (e.g., PVC-based ion-selective membranes) | Transduces binding events into measurable signals (e.g., potentiometric) |

The experimental protocol for MIP-based sensor development follows a rigorous pathway:

Preparation of MIPs: Dissolve 0.5 mmol template drug (donepezil or memantine) in 40 mL dimethylsulfoxide (DMSO) porogenic solvent. Add 2.0 mmol methacrylic acid functional monomer and sonicate 15 minutes. Introduce 8.0 mmol ethylene glycol dimethacrylate cross-linker and 0.6 mmol azobisisobutyronitrile initiator, followed by nitrogen purging for 15 minutes. Incubate at 60°C for 24 hours for polymerization [3].

Template Removal: Extract template molecules through repeated washing with methanol:acetic acid (9:1 v/v) until no template is detectable in washings by HPLC.

Sensor Fabrication: Incorporate resulting MIP particles into ion-selective membrane composition containing polyvinyl chloride (PVC), plasticizer (e.g., 2-nitrophenyl octyl ether), and ionic additive. Dissolve components in tetrahydrofuran, cast on electrode surfaces (e.g., graphene-modified glassy carbon), and evaporate solvent to form sensing membrane.
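The preparation step specifies every reagent in mmol, which has to be turned into weighable masses and pipettable volumes before anything reaches the bench. The sketch below does that conversion and confirms the 1:4:16 template:monomer:cross-linker molar ratio implied by the recipe. The molar masses and room-temperature densities are typical literature values that we supply as assumptions; they do not come from the cited protocol. The recipe dictionary is written for the donepezil template, and a memantine template would need its own molar mass.

```python
# Sketch: turn the MIP recipe's mmol amounts into masses, volumes and ratios.
# Molar masses and densities are typical literature values supplied here as
# assumptions for illustration; confirm them against your own reagent lots.

RECIPE_MMOL = {"donepezil": 0.5, "MAA": 2.0, "EGDMA": 8.0, "AIBN": 0.6}

MOLAR_MASS_G_PER_MOL = {
    "donepezil": 379.5,  # free base; the hydrochloride salt is heavier
    "MAA": 86.09,        # methacrylic acid, functional monomer
    "EGDMA": 198.22,     # ethylene glycol dimethacrylate, cross-linker
    "AIBN": 164.21,      # azobisisobutyronitrile, initiator
}

# Only the two liquid reagents are dispensed by volume.
DENSITY_G_PER_ML = {"MAA": 1.015, "EGDMA": 1.051}


def reagent_amounts(recipe_mmol):
    """Return {reagent: (mass_mg, volume_uL or None)}."""
    amounts = {}
    for name, mmol in recipe_mmol.items():
        mass_mg = mmol * MOLAR_MASS_G_PER_MOL[name]  # mmol x g/mol = mg
        density = DENSITY_G_PER_ML.get(name)
        # g/mL is numerically equal to mg/uL, so mg / density gives uL.
        volume_ul = mass_mg / density if density else None
        amounts[name] = (mass_mg, volume_ul)
    return amounts


def molar_ratio(recipe_mmol, reference):
    """Express every amount as a multiple of the reference reagent."""
    base = recipe_mmol[reference]
    return {name: mmol / base for name, mmol in recipe_mmol.items()}


if __name__ == "__main__":
    for name, (mg, ul) in reagent_amounts(RECIPE_MMOL).items():
        extra = f" ({ul:.0f} uL)" if ul is not None else ""
        print(f"{name}: {mg:.1f} mg{extra}")
    print(molar_ratio(RECIPE_MMOL, "donepezil"))
```

Under these assumed constants, the recipe calls for roughly 172 mg (about 170 µL) of methacrylic acid and about 1.59 g (about 1.51 mL) of EGDMA.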

The selectivity of MIP sensors is quantitatively demonstrated through potentiometric selectivity coefficients (log Kᵖᵒᵗ) determined via separate solution or mixed solution methods. For donepezil MIP sensors, selectivity coefficients of -3.42 against memantine and -3.75 against acetylcholinesterase demonstrate preferential recognition rather than absolute specificity [3].
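Selectivity coefficients like the ones quoted above are commonly obtained with the separate solution method (SSM). In this method the electrode potential is recorded in separate solutions of the primary ion A and the interferent B at equal activity. The coefficient then follows from log K = (E_B − E_A)/S + (1 − z_A/z_B)·log a_A, where S = 2.303RT/(z_A·F) is the Nernstian slope.

The sketch below implements that standard expression. The potentials used in the check are invented. The charges default to +1 on the assumption that the drugs are measured as monovalent protonated cations. A measured electrode slope can replace the theoretical one.

```python
import math

R = 8.314462618   # gas constant, J mol^-1 K^-1
F = 96485.33212   # Faraday constant, C mol^-1


def nernst_slope_mv(z, temp_k=298.15):
    """Theoretical Nernstian slope (mV per decade of activity) for charge z."""
    return 1000.0 * math.log(10) * R * temp_k / (z * F)


def log_k_pot_ssm(e_primary_mv, e_interferent_mv, log_activity,
                  z_primary=1, z_interferent=1, slope_mv=None, temp_k=298.15):
    """Separate solution method at equal activities of primary ion A and interferent B.

    log K(A,B) = (E_B - E_A) / S_A + (1 - z_A / z_B) * log a_A
    Negative values mean the electrode prefers A over B.
    """
    if slope_mv is None:
        slope_mv = nernst_slope_mv(z_primary, temp_k)
    return ((e_interferent_mv - e_primary_mv) / slope_mv
            + (1 - z_primary / z_interferent) * log_activity)
```

With equal charges the activity term vanishes. A value of −3.42 therefore corresponds to the interferent solution reading about 3.42 slope-units (roughly 202 mV at 25 °C for a monovalent ion) below the primary-ion solution.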

Diagram 1: MIP sensor workflow demonstrating selective recognition

Aptamer-Based Recognition: The Specific Approach

Aptamers represent the specific approach through oligonucleotide sequences selected for exclusive binding to particular molecular targets. The GO-SELEX (Graphene Oxide-Systematic Evolution of Ligands by Exponential Enrichment) process exemplifies the development of highly specific recognition elements:

Library Incubation: Incubate initial single-stranded DNA library (∼10¹⁵ random sequences) with target molecule (e.g., azamethiphos) in binding buffer.

Partitioning: Add graphene oxide to mixture; unbound sequences adsorb to GO surface while target-bound aptamers remain in supernatant.

Amplification: Recover target-bound sequences from supernatant and amplify via PCR for next selection round.

Counter-Selection: Introduce non-target structural analogs (e.g., malathion, chlorpyrifos) during intermediate rounds to eliminate cross-reactive sequences [4].
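A deliberately simple toy model shows why repeated partitioning plus counter-selection enriches for specific sequences. The model is ours, not the cited work's. It tracks three hypothetical populations: target-specific binders, cross-reactive binders that also recognize an analog, and non-binders. All retention fractions are invented, and PCR is assumed to re-amplify every survivor without bias.

```python
def simulate_selex(pool, retention, n_rounds, counter_rounds=(), counter_keep=1.0):
    """Return the normalised pool composition after each selection round.

    pool: starting fractions, e.g. {"specific": 1e-6, "cross": 1e-6, "nonbinder": ...}
    retention: fraction of each population surviving target partitioning
        (for GO-SELEX, non-binders are the ones lost to graphene oxide adsorption)
    counter_rounds: 1-based round numbers that include a counter-selection step,
        in which only counter_keep of the cross-reactive population survives
    """
    history = []
    current = dict(pool)
    for rnd in range(1, n_rounds + 1):
        # Partitioning against the target.
        current = {k: v * retention[k] for k, v in current.items()}
        # Counter-selection against structural analogs in selected rounds.
        if rnd in counter_rounds:
            current["cross"] *= counter_keep
        # PCR restores pool size; only relative composition matters here.
        total = sum(current.values())
        current = {k: v / total for k, v in current.items()}
        history.append(current)
    return history


# Hypothetical parameters, for illustration only.
start = {"specific": 1e-6, "cross": 1e-6, "nonbinder": 1 - 2e-6}
keep = {"specific": 0.5, "cross": 0.5, "nonbinder": 0.005}
rounds = simulate_selex(start, keep, 6, counter_rounds=(3, 4), counter_keep=0.1)
```

Because the specific and cross-reactive sequences are given the same target retention here, only the counter-selection rounds separate them. Each such round multiplies the specific-to-cross-reactive ratio by 1/counter_keep.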

The resulting aptamers achieve specificity through unique three-dimensional structures complementary to their targets. For the azamethiphos-specific aptamer, this approach yielded a dissociation constant (Kd) of 26.27 ± 1.27 nM with minimal cross-reactivity to structurally similar organophosphates [4].

Dual-Recognition Systems: Bridging the Spectrum

Hybrid systems combining MIPs and aptamers leverage both selective and specific mechanisms for enhanced analytical performance. The experimental protocol for such systems involves:

Aptamer Functionalization: Immobilize selected aptamer onto sensor surface (e.g., gold nanoparticle-modified electrode) via thiol or amino linkage.

MIP Formation: Perform electropolymerization of functional monomers (e.g., o-phenylenediamine) around aptamer-target complexes to create complementary recognition sites.

Synergistic Recognition: Employ aptamer for primary specific recognition and MIP for secondary selective enrichment, significantly improving sensitivity and robustness in complex matrices [4].

Table 2: Performance Comparison of Recognition Approaches [3] [4] [2]

| Parameter | MIP-Based Selective Sensors | Aptamer-Based Specific Sensors | Dual-Recognition Systems |
|---|---|---|---|
| Recognition Mechanism | Shape/complementarity-based cavities | 3D structure molecular fit | Combined mechanisms |
| Cross-reactivity Profile | Preferential with measurable interferent response | Minimal to non-detectable cross-reactivity | Enhanced discrimination |
| Development Time | Weeks | Months (including selection) | Several months |
| Stability | High thermal/chemical stability | Moderate (nuclease sensitivity) | High (MIP protects aptamer) |
| Detection Limit | nM to μM range | pM to nM range | pM range |
| Matrix Tolerance | Moderate (improved with design) | High for specific applications | Excellent in complex samples |

Pharmaceutical Case Study: Sodium Channel Inhibition

The distinction between selectivity and specificity is powerfully illustrated by sodium channel inhibitors in pain management, where mechanism of action directly correlates with therapeutic profile.

Non-selective sodium channel blockers (e.g., local anesthetics, anticonvulsants) inhibit multiple NaV subtypes (NaV1.1-NaV1.9) through interaction with conserved channel regions. This lack of discrimination produces dose-limiting side effects including CNS toxicity and cardiovascular impairment [2].

In contrast, suzetrigine exemplifies specific targeting through allosteric inhibition of NaV1.8 channels. The experimental protocol for demonstrating this absolute specificity involves:

Electrophysiology Studies: Express human NaV subtypes (NaV1.1-NaV1.9) in heterologous cell systems (HEK, CHO). Apply voltage clamp protocols to determine IC₅₀ values for suzetrigine across subtypes.

Binding Site Mapping: Construct NaV1.8/1.2 chimeras with exchanged voltage-sensing domains (VSDs). Identify critical VSD2 region as suzetrigine binding site through chimera inhibition profiling.

CNS Expression Analysis: Evaluate SCN10A (NaV1.8) gene expression in human CNS tissues using RNA-seq data from GTEx and Human Protein Atlas databases [2].

This comprehensive approach demonstrated suzetrigine's >31,000-fold selectivity for NaV1.8 over other subtypes. It also showed that NaV1.8 is not expressed in the CNS. Together, these findings explain suzetrigine's analgesic efficacy without addictive potential or CNS side effects [2].
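A fold-selectivity figure such as ">31,000-fold" is a ratio of potencies: the off-target IC₅₀ divided by the primary-target IC₅₀, read at the least favourable off-target. The minimal sketch below shows that arithmetic. The IC₅₀ values are made up for illustration and are not the published suzetrigine data.

```python
def fold_selectivity(ic50_nm, primary):
    """Fold selectivity of each off-target relative to the primary target.

    fold = IC50(off-target) / IC50(primary); larger means more selective.
    If an off-target never reached 50 % inhibition, passing the highest tested
    concentration instead makes the corresponding fold a lower bound.
    """
    ref = ic50_nm[primary]
    return {name: ic50 / ref for name, ic50 in ic50_nm.items() if name != primary}


# Hypothetical IC50 values in nM, for illustration only.
example = {"NaV1.8": 0.5, "NaV1.5": 20000.0, "NaV1.7": 18000.0, "NaV1.2": 25000.0}
folds = fold_selectivity(example, "NaV1.8")
worst_case = min(folds.values())  # the margin a ">N-fold" claim rests on
```

Reporting the minimum over all subtypes, rather than an average, keeps the claim conservative. One poorly discriminated subtype is enough to cause the side effects described above for non-selective blockers.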

Diagram 2: Specific versus non-specific sodium channel targeting

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagents for Selectivity and Specificity Research [3] [4] [2]

| Reagent/Category | Function in Research | Specific Examples |
|---|---|---|
| Molecular Imprinting Components | Creates selective recognition cavities | Methacrylic acid (functional monomer), EGDMA (cross-linker), AIBN (initiator) |
| Aptamer Development Tools | Generates specific recognition elements | ssDNA library, graphene oxide (partitioning), Taq polymerase (PCR amplification) |
| Sensor Transduction Materials | Converts binding events to measurable signals | Graphene nanoplatelets (ion-to-electron transducer), PVC (membrane matrix), NPOE (plasticizer) |
| Cell-Based Assay Systems | Evaluates specificity in biological contexts | HEK/CHO cells expressing ion channels, primary human DRG neurons |
| Characterization Reagents | Quantifies binding and recognition parameters | Tetrodotoxin (NaV channel reference), radiolabeled ligands (binding assays) |

The distinction between selectivity as a preferential characteristic and specificity as an absolute property represents a fundamental paradigm in sensing science with far-reaching implications for research and development. Selective systems, exemplified by molecularly imprinted polymers, offer practical advantages for applications where preferential recognition among structural analogs suffices, providing robust, cost-effective solutions for many analytical challenges. Specific systems, embodied by high-affinity aptamers and targeted pharmaceuticals, deliver uncompromised recognition essential for applications where cross-reactivity carries significant consequences.

The evolving landscape of sensing technologies demonstrates that both approaches have distinct and complementary roles in advancing analytical science. Rather than representing opposing philosophies, selectivity and specificity define a spectrum of recognition capabilities that researchers can strategically leverage based on their specific analytical requirements, matrix complexities, and performance expectations. The most innovative solutions increasingly integrate both principles, creating hybrid systems that harness the practical advantages of selective materials with the exceptional discrimination of specific recognition elements, pushing the boundaries of what is analytically possible in complex biological and environmental matrices.

The pursuit of compounds that interact with high affinity towards a specific biological target is a cornerstone of drug discovery and molecular sensing. This endeavor is fundamentally governed by two parallel, yet distinct, conceptual frameworks: specificity and selectivity. A specific interaction, often the ideal in sensor design, is characterized by a receptor (like an antibody or aptamer) that binds exclusively to a single target analyte, recognizing no other [5]. In contrast, a selective interaction describes a compound that binds to a primary target with the highest affinity but may also interact with a range of secondary, off-targets to varying degrees [6] [5]. This reality is particularly acute in the field of protein kinase inhibitors, where a highly conserved ATP-binding site across more than 500 family members makes achieving specificity exceptionally difficult [6]. Consequently, the quantitative assessment of selectivity—measuring a compound's ability to discriminate between the primary target and off-targets—becomes paramount for understanding potential efficacy and toxicity liabilities early in the drug discovery process [6]. This guide details the mathematical models and experimental protocols used to quantify binding affinity and selectivity, providing researchers with the tools to navigate this critical landscape.

Mathematical Foundations of Binding Affinity and Selectivity

Core Affinity Metrics: IC₅₀ and Kᵢ

The foundation of quantifying molecular interactions lies in measuring binding affinity. Two of the most critical metrics are:

- IC₅₀ (Half-Maximal Inhibitory Concentration): This is the concentration of an inhibitor required to reduce a specific biological or biochemical activity by half. It is a functional assay metric, determined from dose-response curves. While it directly indicates potency, its value can be influenced by experimental conditions such as substrate concentration and assay duration.

- Kᵢ (Inhibition Constant): This is the equilibrium dissociation constant of the enzyme-inhibitor complex. It is a thermodynamic measure of the inhibitor's affinity for the enzyme. It is independent of substrate concentration and, for a reversible inhibitor measured at equilibrium, of incubation time. Kᵢ is typically derived from IC₅₀ values using established equations, such as the Cheng-Prusoff equation for competitive inhibitors.
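For a competitive, reversible inhibitor, the Cheng-Prusoff relation is Kᵢ = IC₅₀ / (1 + [S]/Kₘ). It removes the substrate dependence that makes raw IC₅₀ values hard to compare between assays. A minimal sketch:

```python
def cheng_prusoff_ki(ic50, substrate_conc, km):
    """Ki for a competitive, reversible inhibitor: Ki = IC50 / (1 + [S]/Km).

    ic50 and the returned Ki share units; substrate_conc and km must share units.
    Not valid for non-competitive, uncompetitive, or tight-binding inhibitors.
    """
    if ic50 <= 0 or km <= 0 or substrate_conc < 0:
        raise ValueError("concentrations must be positive")
    return ic50 / (1.0 + substrate_conc / km)
```

For ATP-competitive kinase inhibitors, the relation has a practical consequence. In an assay run at [ATP] = Kₘ(ATP), Kᵢ is half the measured IC₅₀. At higher ATP concentrations, the same inhibitor's IC₅₀ rises.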

The binding curve, which plots the measured effect (e.g., percentage of inhibition) against the logarithm of the compound concentration, is the primary source for deriving these values. Mathematical models, ranging from simple one-site binding to more complex multiple-binding-site models, are then fitted to these data to derive the binding affinity [7].
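As a minimal example of fitting a one-site model, the sketch below estimates IC₅₀ and the Hill slope using Hill-plot linearization: a straight-line fit of log(y/(100 − y)) against log concentration. This is a simpler stand-in for nonlinear least squares. It assumes the curve runs from 0 % to 100 % inhibition, and it is reliable only for clean data, because the logit transform distorts measurement error near the plateaus.

```python
import math


def one_site_inhibition(conc, ic50, hill=1.0):
    """Percent inhibition predicted by the logistic (Hill) one-site model."""
    return 100.0 / (1.0 + (ic50 / conc) ** hill)


def hill_plot_fit(concs, inhibition_pct):
    """Estimate (IC50, Hill slope) by linear regression on a Hill plot.

    Model: log10(y / (100 - y)) = h * (log10(C) - log10(IC50)).
    Points at or beyond 0 % or 100 % carry no information and are skipped.
    """
    xs, ys = [], []
    for c, y in zip(concs, inhibition_pct):
        if c > 0 and 0 < y < 100:
            xs.append(math.log10(c))
            ys.append(math.log10(y / (100.0 - y)))
    n = len(xs)
    if n < 2:
        raise ValueError("need at least two informative points")
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    hill = sxy / sxx
    intercept = my - hill * mx          # equals -h * log10(IC50)
    ic50 = 10 ** (-intercept / hill)
    return ic50, hill


# Noise-free synthetic data (IC50 = 50 nM, Hill slope 1.2), for illustration.
concs_nm = [1, 3, 10, 30, 100, 300, 1000]
responses = [one_site_inhibition(c, 50.0, 1.2) for c in concs_nm]
```

On real, noisy data, a nonlinear fit of the same model with free top and bottom plateaus is usually preferred.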

Quantitative Selectivity Metrics

Moving beyond affinity for a single target, selectivity metrics provide a numerical profile of a compound's interaction across multiple targets. The following table summarizes key traditional and novel metrics.

Table 1: Key Metrics for Quantifying Compound Selectivity

| Metric | Formula / Description | Interpretation | Pros & Cons |
|---|---|---|---|
| Standard Selectivity Score (S(x)) | $S(x) = \frac{\text{number of values} \geq x}{\text{total number of values}}$ [6] | Measures the fraction of a profiling panel inhibited at or above a threshold x (e.g., a pKd of 6, or 80% inhibition); a lower score indicates a more selective compound. | Pro: Simple, quantitative. Con: Highly dependent on an arbitrary threshold; loses the nuance of the affinity distribution [6]. |
| Gini Coefficient | Based on the Lorenz curve of sorted affinities. Calculated as $G = \frac{A}{A+B}$, where A is the area between the line of equality and the Lorenz curve, and B is the area below the Lorenz curve. | Ranges from 0 (perfect non-selectivity, equal affinity for all) to 1 (absolute selectivity for one target). | Pro: Single, standardized metric; widely used. Con: Can be insensitive to the number of targets inhibited [6]. |
| Selectivity Entropy | Derived from information theory, calculating the entropy of the affinity distribution. | A lower entropy value indicates a more selective profile. | Pro: Accounts for the entire distribution of activities. Con: Less intuitive than other scores [6]. |
| Partition Index (PI) | $PI = \frac{(n-1) \times K_{d,\text{primary}}}{\sum K_{d,\text{off-targets}}}$ [6] | Estimates the theoretical dilution factor required to abolish all off-target binding while maintaining primary target binding. | Pro: Offers a direct practical interpretation. Con: Requires accurate Kd values for all targets. |
| Window Score (WS) | $WS = \text{Activity}_{\text{primary}} - \frac{\sum \text{Activities}_{\text{off-targets}}}{n_{\text{off-targets}}}$ [6] | The difference between the activity on the primary target and the average activity on all off-targets. | Pro: Intuitive, easy to compute, offers a different viewpoint from S(x). Con: Sensitive to extreme off-target values. |
| Ranking Score (RS) | Based on the difference in rank between the primary target and other targets when activities are sorted. | A higher score indicates a larger activity gap between the primary target and the nearest off-targets. | Pro: Complements WS by focusing on the rank order of potency [6]. |

The Window Score (WS) and Ranking Score (RS) are two novel metrics designed to address the limitations of the standard selectivity score. The WS gives a direct measure of the activity gap, while the RS ensures that a compound with a large drop in activity after the first target is recognized as highly selective, even if its WS is affected by a long tail of very weak off-target interactions [6].
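The sketch below computes four of the tabulated metrics on a single compound's panel profile. It follows the table's definitions for S(x) and WS. For the Gini coefficient it uses the standard sorted-values formula on any non-negative activity values, such as percent inhibition. For selectivity entropy it uses one common formulation: the Shannon entropy of association constants Ka = 1/Kd normalised to probabilities. The Ranking Score is omitted because its exact definition is not given here. The example profile is hypothetical.

```python
import math


def selectivity_score(pkd_values, threshold):
    """S(x): fraction of the panel with pKd >= threshold (lower = more selective)."""
    return sum(v >= threshold for v in pkd_values) / len(pkd_values)


def window_score(primary, off_targets):
    """WS: primary activity minus the mean off-target activity (same units, e.g. pKd)."""
    return primary - sum(off_targets) / len(off_targets)


def gini(values):
    """Gini coefficient of non-negative values: 0 if all equal, near 1 if one dominates."""
    xs = sorted(values)
    n, total = len(xs), sum(xs)
    if total == 0:
        return 0.0
    weighted = sum((i + 1) * x for i, x in enumerate(xs))
    return 2.0 * weighted / (n * total) - (n + 1) / n


def selectivity_entropy(kd_values):
    """Shannon entropy of Ka = 1/Kd shares across the panel (lower = more selective)."""
    ka = [1.0 / kd for kd in kd_values]
    total = sum(ka)
    return -sum((k / total) * math.log(k / total) for k in ka if k > 0)


# Hypothetical profile: primary target first, three off-targets after it.
profile_pkd = [9.0, 6.0, 5.0, 5.0]
print(selectivity_score(profile_pkd, 6.0))            # share of panel at pKd >= 6
print(window_score(profile_pkd[0], profile_pkd[1:]))  # gap to mean off-target pKd
print(selectivity_entropy([10 ** -p for p in profile_pkd]))
```

Running several metrics side by side is the point of the sketch. A profile can look selective on a threshold score yet less so on the entropy or Gini scale, which is why the section recommends combining them.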

Experimental Protocols for Selectivity Assessment

Experimental Workflow for Kinase Profiling

A standardized workflow is essential for generating robust and comparable selectivity data. The following diagram outlines the key stages in a typical kinase selectivity profiling study.

Detailed Methodologies for Key Experiments

Protocol 1: Competition Binding Assay for Kd Determination (as used by Davis et al. [6])

- Objective: To measure the dissociation constant (Kd) for a compound against a large panel of kinases.

- Materials:
  - Kinase panel (e.g., 439 wild-type and mutant protein kinases)
  - Test compounds
  - Immobilized ligand beads

- Procedure:
  - Incubation: Incubate the kinase with the test compound at a single, high concentration (e.g., 10 µM).
  - Binding: Add the mixture to immobilized ligand beads that bind the kinase.
  - Detection: Quantify the kinase captured on the beads. A compound that binds the kinase competes with the immobilized ligand and reduces the amount captured.
  - Kd Measurement: Determine a Kd, from a full dose-response series, only for kinase-compound pairs that show significant displacement at the initial screening concentration.

- Data Output: A matrix of Kd values for each compound across the entire kinase panel.
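Protocol 1 uses two-stage logic: screen every pair once, then spend dose-response effort only on the hits. That logic can be sketched as below. The 35 % cut-off is a hypothetical value, not one from the cited study. The single-point Kd estimate assumes simple one-site competition in which bead capture is proportional to free kinase. It ignores the immobilized ligand's own affinity, so it is useful only for choosing a dose-response range.

```python
def triage_for_kd(percent_of_control, threshold_pct=35.0):
    """Pick kinase-compound pairs that advance to a full Kd dose-response.

    percent_of_control: {(compound, kinase): % of kinase still captured on the
    beads at the single screening concentration}; lower = stronger competition.
    threshold_pct is a hypothetical cut-off chosen for illustration.
    """
    return sorted(pair for pair, poc in percent_of_control.items() if poc < threshold_pct)


def single_point_kd_estimate(poc, screen_conc_um=10.0):
    """Rough Kd (same units as screen_conc_um) from one screening point.

    Assumes poc = 100 * Kd / (Kd + [I]), rearranged to Kd = [I] * poc / (100 - poc).
    """
    if not 0 < poc < 100:
        raise ValueError("poc must lie strictly between 0 and 100")
    return screen_conc_um * poc / (100.0 - poc)


# Hypothetical screening results at 10 uM.
screen = {("cmpd_A", "KIN1"): 5.0, ("cmpd_A", "KIN2"): 80.0, ("cmpd_B", "KIN1"): 20.0}
hits = triage_for_kd(screen)
```

Pairs that are not hits stay empty in the final Kd matrix. This is why panel-wide metrics computed from such data must treat missing values as "weaker than the screening concentration" rather than as zero.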

Protocol 2: "HotSpot" Enzymatic Assay for Percentage Inhibition (as used by Anastassiadis et al. [6])

- Objective: To measure the percentage inhibition of enzyme activity by a compound at a fixed concentration.

- Materials:
  - Kinase panel (e.g., 300 kinases)
  - Test compounds
  - ATP, substrate, and detection reagents

- Procedure:
  - Reaction Setup: Combine kinase, a fixed concentration of compound (e.g., 0.5 µM), ATP, and substrate.
  - Reaction: Allow the enzymatic reaction to proceed.
  - Detection: Quantify the phosphorylated substrate. HotSpot is a radiometric filter-binding format that uses ³³P-labeled ATP; non-radioactive formats instead detect ADP production.
  - Calculation: Calculate the percentage of inhibition relative to a DMSO control.

- Data Output: A matrix of percentage inhibition values for each compound across the kinase panel at the specified concentration.
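The calculation step of Protocol 2 reduces to a one-line normalisation against the uninhibited (DMSO) control. The minimal sketch below also accepts an optional background control, such as a no-enzyme well. That subtraction is our assumption, since the protocol names only the DMSO control.

```python
def percent_inhibition(signal, dmso_control, background=0.0):
    """Percent inhibition relative to the uninhibited (DMSO) control.

    background: optional no-enzyme control subtracted from both signals
    (an assumption of this sketch; leave at 0 to use the DMSO control alone).
    """
    window = dmso_control - background
    if window <= 0:
        raise ValueError("DMSO control must exceed background")
    return 100.0 * (1.0 - (signal - background) / window)
```

Values slightly below 0 % or above 100 % are normal assay noise. It is usually better to keep them than to clip them, so that the noise remains visible when profiles are compared.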

Protocol 3: Cellular Potency Assay (NCI-60 Screen) [6]

- Objective: To determine the cellular potency (e.g., EC₅₀) of compounds against a panel of human tumor cell lines.

- Materials:
  - NCI-60 cell line panel (60 cell lines from 9 different cancer types)
  - Test compounds

- Procedure:
  - Cell Treatment: Treat cells with the test compound across a range of concentrations.
  - Viability Measurement: Measure cell viability or growth inhibition after a set period (e.g., 48 hours).
  - Dose-Response Curve: Generate a dose-response curve for each cell line.
  - EC₅₀ Calculation: Calculate the EC₅₀ (concentration for 50% of maximal effect) for each compound-cell line pair.

- Data Output: A profile of cellular potency across 60 different cancer cell lines, allowing for assessment of selectivity in a more physiologically relevant context.
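One way to read the Protocol 3 output as a selectivity profile follows the spirit of the NCI "mean graph". Each cell line's log EC₅₀ is expressed as a deviation from the panel mean, so that lines standing out as unusually sensitive become visible. The sketch below is our illustration of that idea. The sign convention (positive = more sensitive than average) and the cell-line names are hypothetical.

```python
import math


def mean_graph_deltas(ec50_molar):
    """Deviation of each cell line's log10 EC50 from the panel mean.

    Positive values mark lines more sensitive than the panel average; a compound
    with a few large positive deltas is acting selectively across the panel,
    whereas uniformly small deltas suggest broad, non-selective cytotoxicity.
    """
    logs = {line: math.log10(v) for line, v in ec50_molar.items()}
    mean_log = sum(logs.values()) / len(logs)
    return {line: mean_log - lv for line, lv in logs.items()}


# Hypothetical EC50 values (mol/L) for three placeholder cell lines.
deltas = mean_graph_deltas({"line_A": 1e-8, "line_B": 1e-6, "line_C": 1e-6})
```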

The Scientist's Toolkit: Essential Reagents and Materials

Table 2: Key Research Reagent Solutions for Selectivity Screening

| Item | Function in Selectivity Assessment |
|---|---|
| Kinase Profiling Panels | Pre-configured sets of hundreds of purified human kinases (wild-type and mutant) used for high-throughput screening to generate comprehensive interaction data [6]. |
| Immobilized Ligand Beads | Used in competition binding assays (e.g., Ambit) to capture kinases not bound by the test inhibitor, enabling Kd measurement [6]. |
| ATP & Substrate Peptides | Essential components of kinase enzymatic assays. The compound's ability to inhibit the transfer of phosphate from ATP to the substrate is the measured readout [6]. |
| ADP-Glo / HTRF Kinase Kits | Homogeneous, luminescence- or fluorescence-based detection kits that report kinase activity through ADP production or substrate phosphorylation, suitable for HTS [6]. |
| Cell-Based Assay Panels (e.g., NCI-60) | Panels of diverse human cell lines used to assess compound potency and selectivity in a cellular context, providing data on permeability, toxicity, and pathway engagement [6]. |

Data Visualization and Interpretation

Visualizing Selectivity Data

Effective visualization is key to interpreting complex selectivity data. The following diagram illustrates the logical relationship between raw data, calculated metrics, and the final selectivity assessment, which bridges the concepts of specific and selective sensing.

Selectivity data is often presented as heatmaps, where colors represent affinity or inhibition levels across a kinome tree, or as bar charts comparing the primary target's potency against key off-targets [8]. The choice of metric significantly influences the perceived selectivity profile. For instance, a compound may appear highly selective with a threshold-based metric like S(pKd7) but show a less promising profile with the Gini coefficient, underscoring the need to use multiple metrics for a comprehensive assessment [6].

Case Study: Applying Metrics in Kinase Research

Analysis of large kinase profiling datasets has demonstrated the complementary nature of different selectivity metrics. For example, when applied to the dataset from Davis et al., the novel Window Score (WS) and Ranking Score (RS) provided additional viewpoints for prioritizing compounds that might be overlooked using the standard selectivity score alone [6]. These metrics are particularly useful because they can be applied to diverse data types—including Kd, IC₅₀, and cellular EC₅₀—offering a universal tool for comparing selectivity profiles regardless of the experimental origin [6]. This is crucial, as selectivity profiles determined in enzymatic assays do not always correlate perfectly with those observed in cellular environments [6].

The quantitative assessment of binding selectivity is a critical component of modern drug discovery and sensor development. Moving beyond simple affinity measurements, robust mathematical frameworks comprising multiple metrics—such as the Window Score, Ranking Score, and Gini coefficient—provide a nuanced and multi-faceted profile of compound behavior [6]. This mathematical rigor allows researchers to frame their work within the broader context of specific versus selective sensing, strategically choosing the appropriate approach for their application [5]. By integrating these quantitative selectivity assessments early and throughout the discovery pipeline, and by employing standardized experimental protocols, scientists can make more informed decisions, de-risk the development of therapeutics, and ultimately increase the likelihood of clinical success.

The concept of the "magic bullet" (Zauberkugel), pioneered by German Nobel laureate Paul Ehrlich in the early 20th century, represents one of the most influential paradigms in the history of therapeutics [9]. Ehrlich envisioned a therapeutic agent that could selectively target and destroy disease-causing pathogens without harming the host's body [10]. This seminal idea laid the intellectual foundation for the entire field of chemotherapy and established the core principle of selective molecular targeting that drives modern drug discovery [11] [12].

Ehrlich's concept was inspired by both his immunological research and the observed selectivity of synthetic dyes used in biological staining [10]. His work established the fundamental principle that therapeutic efficacy depends on the precise molecular interaction between a drug and its target, encapsulated in his famous postulate: "wir müssen chemisch zielen lernen" ("we must learn to aim chemically") [9]. A century later, this principle has evolved into the sophisticated landscape of modern drug design, where computational methods and quantitative approaches enable unprecedented precision in developing targeted therapies [11] [13].

Table: Key Historical Milestones from Magic Bullet to Modern Drug Design

| Time Period | Key Development | Primary Approach | Representative Example |
|---|---|---|---|
| 1900-1910 | Concept of Magic Bullet & Chemotherapy | Empirical screening of synthetic compounds | Salvarsan (Compound 606) for syphilis [9] |
| 1910-1950 | Serum Therapy & Early Antibiotics | Biological agents & natural product isolation | Penicillin, Diphtheria antitoxin [10] |
| 1950-1980 | Rational Drug Design & High-Throughput Screening | Structure-activity relationships (SAR) | AZT for HIV [14] |
| 1980-2000 | Computational Drug Design & Combinatorial Chemistry | Ligand-based & structure-based design | Statins, ACE inhibitors [13] |
| 2000-Present | Systems Pharmacology & Polypharmacology | Network analysis & multi-target drug design | Targeted cancer therapies [13] |

Paul Ehrlich's Foundational Work

Theoretical Foundations and Predecessor Concepts

Ehrlich's magic bullet concept emerged from his systematic investigations across multiple scientific domains. His early work with aniline dyes demonstrated that certain chemicals could selectively stain specific tissues, cells, and cellular components, suggesting the possibility of analogous therapeutic selectivity [10]. This was complemented by his research in immunology. There he observed that antibodies produced in response to toxins could specifically neutralize those toxins without damaging host tissues, an early biological model of targeted therapy [9].

Ehrlich's side-chain theory (later revised as the receptor theory) provided the first theoretical framework explaining this selectivity [9] [10]. He proposed that chemical structures called "side chains" on cells and pathogens could form specific interactions with therapeutic compounds. This theory represented a radical departure from previous empirical approaches and established the fundamental principle that drug action depends on specific molecular complementarity between therapeutic agents and their targets [10].

Salvarsan: The First Magic Bullet

The practical realization of Ehrlich's concept emerged from his systematic search for a syphilis treatment. Together with Sahachiro Hata, Ehrlich tested hundreds of arsenic compounds, ultimately identifying Compound 606 (arsphenamine), which they named Salvarsan [9]. The discovery process exemplified both the potential and challenges of early targeted therapy development:

- Systematic Screening: Ehrlich and his team methodically synthesized and tested over 600 compounds in what was, for its time, an unprecedentedly large-scale drug development program [10].

- Experimental Validation: Hata's critical observation on August 31, 1909, that a single dose of Compound 606 cured syphilis-infected rabbits without adverse effects demonstrated its efficacy and selectivity [9].

- Clinical Translation: When administered to human patients with advanced syphilis, Salvarsan produced remarkable recoveries, particularly when administered during early disease stages [9].

Despite its success, Salvarsan also revealed the limitations of early magic bullets. The compound required difficult intravenous administration and carried significant side effects, including limb loss and multisystem failure in some cases [14]. This highlighted the challenge of achieving perfect selectivity and foreshadowed the ongoing balancing act between efficacy and toxicity in drug development.

Diagram Title: Theoretical and Experimental Path to Salvarsan

Evolution from Empirical to Targeted Approaches

Refinement of the Magic Bullet Concept

Following Ehrlich's initial breakthrough, the magic bullet concept evolved through several distinct phases. The mid-20th century saw the rise of antibiotic therapies, which represented a new class of magic bullets targeting bacterial pathogens [14]. However, subsequent decades revealed the limitations of single-target approaches, particularly for complex diseases like cancer, HIV/AIDS, and chronic conditions [15].

The failure of single-drug "miracle cures" became apparent with several high-profile cases:

- Azidothymidine (AZT) for HIV/AIDS: Initially heralded as a breakthrough treatment, AZT demonstrated severe side effects and limited long-term efficacy, disappointing patients who had hoped for a complete cure [14].

- Hydroxychloroquine for COVID-19: In 2020, this antimalarial drug was promoted as a miracle cure despite limited evidence, following a familiar pattern of public desperation for simple solutions to complex diseases [14].

These examples underscore a critical evolution in understanding: true magic bullets remain elusive for many complex diseases, and effective therapy often requires multi-target approaches or combination therapies that address disease complexity [13].

The Rise of Quantitative and Computational Methods

Modern drug discovery has progressively incorporated quantitative approaches that enable more precise targeting. The introduction of randomized clinical trials in the mid-20th century established a rigorous methodology for evaluating therapeutic efficacy and safety [15]. Simultaneously, advances in analytical chemistry and pharmacokinetics provided tools to quantitatively measure drug distribution, metabolism, and target engagement [16].

The late 20th century witnessed the emergence of Computer-Aided Drug Discovery (CADD), which applies computational power to systematically explore the relationship between chemical structure and biological activity [12]. Key computational approaches include:

- Quantitative Structure-Activity Relationship (QSAR): Builds mathematical models that relate chemical structural features to biological activity [12].

- Molecular Docking: Predicts the binding mode and affinity of ligands to target proteins [12].

- Chemical Similarity Searching: Identifies compounds with structural similarity to known active molecules [13].

These methods have progressively shifted drug discovery from Ehrlich's empirical screening toward prediction-driven design, dramatically improving the efficiency of identifying selective therapeutic agents [13] [12].
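As a minimal sketch of the QSAR idea, the snippet below fits a linear model relating two molecular descriptors to activity by ordinary least squares. The descriptor values, the choice of descriptors (a logP-like term and a steric term), and the coefficients used to generate the activities are all hypothetical; real QSAR work uses curated data, many more descriptors, and external validation.

```python
import numpy as np

# Hypothetical training set: two descriptors per compound
# (column 0: a logP-like hydrophobicity term, column 1: a steric term).
X = np.array([
    [1.2, 0.5],
    [2.0, 0.8],
    [2.8, 0.4],
    [3.5, 1.1],
    [4.1, 0.9],
])
# Hypothetical activities generated from pIC50 = 0.8*x0 - 0.5*x1 + 4.0 (noise-free)
y = 0.8 * X[:, 0] - 0.5 * X[:, 1] + 4.0

def fit_qsar(X, y):
    """Ordinary least squares for y = X @ w + b; returns (w, b)."""
    A = np.column_stack([X, np.ones(len(X))])  # extra column of ones for the intercept
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef[:-1], coef[-1]

def predict(X, w, b):
    """Predicted activity for each row of descriptors."""
    return X @ w + b

w, b = fit_qsar(X, y)
candidate = np.array([[3.0, 0.6]])  # a hypothetical new analogue
print(w, b, predict(candidate, w, b))
```

Because the toy activities were generated without noise, the fit recovers the generating coefficients exactly; with real data, the interest lies in how well such a model predicts compounds it was not trained on.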

Table: Evolution of Quantitative Methods in Drug Development

| Method Category | Era of Prominence | Key Principles | Impact on Selectivity & Specificity |
|---|---|---|---|
| Empirical Compound Screening | 1900-1980 | Test compound libraries in biological assays | Low specificity; dependent on chance discovery |
| Structure-Activity Relationships (SAR) | 1960-1990 | Systematic modification of lead compounds | Medium specificity; incremental optimization |
| Quantitative Structure-Activity Relationship (QSAR) | 1970-Present | Mathematical modeling of chemical-biological activity relationships | High specificity; predictive optimization |
| Molecular Docking & Structure-Based Design | 1980-Present | Computational prediction of ligand-receptor interactions | Very high specificity; rational design |
| Systems Pharmacology & Polypharmacology | 2000-Present | Network analysis of multi-target drug interactions | Designed multi-target selectivity; controlled polypharmacology |

Modern Drug Design: Principles and Methodologies

Ligand-Based Drug Design

Ligand-based drug design (LBDD) represents a direct evolution of Ehrlich's principle that chemical structure determines biological activity [13]. This approach is particularly valuable when the three-dimensional structure of the target is unknown. The fundamental principle underpinning LBDD is the chemical similarity principle, which states that structurally similar molecules tend to have similar biological properties [13].

Key methodologies in modern LBDD include:

- Chemical Fingerprinting: Molecular structures are encoded as binary fingerprints representing the presence or absence of specific structural features. Common implementations include:
  - Path-based fingerprints (Daylight, Obabel FP2) that capture bond paths of different lengths
  - Substructure-based fingerprints (MACCS keys) that use predefined structural fragments [13]

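- Similarity Metrics: The Tanimoto index quantifies chemical similarity as the number of bits set in both fingerprints divided by the number of bits set in either; values above about 0.7 are commonly taken to indicate significant similarity, although the appropriate threshold depends on the fingerprint type [13].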

- Similarity-Based Screening: Known active compounds are used as queries to search chemical databases for structurally similar candidates with potentially improved properties [13].
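The Tanimoto index and similarity-based screening described above can be sketched in a few lines of Python, assuming each fingerprint is represented as a set of "on" bit positions. The fingerprints and compound names are hypothetical; in practice they would be generated by a cheminformatics toolkit, and the 0.7 cut-off would be tuned to the fingerprint type.

```python
def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto coefficient on sets of set bits: |A & B| / |A | B|."""
    union = fp_a | fp_b
    if not union:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(fp_a & fp_b) / len(union)

def similarity_search(query: set[int], library: dict[str, set[int]], threshold: float = 0.7):
    """Return (name, score) pairs with Tanimoto >= threshold, most similar first."""
    scored = [(name, tanimoto(query, fp)) for name, fp in library.items()]
    hits = [(name, score) for name, score in scored if score >= threshold]
    return sorted(hits, key=lambda hit: hit[1], reverse=True)

# Hypothetical fingerprints (indices of set bits), not real molecules
query = {1, 4, 7, 9, 12, 15, 20, 22, 30, 31}
library = {
    "cmpd_A": {1, 4, 7, 9, 12, 15, 20, 22, 30},
    "cmpd_B": {1, 4, 7, 9, 12, 18, 25, 40},
    "cmpd_C": {2, 3, 5, 6},
}
print(similarity_search(query, library))
```

Only the close analogue clears the threshold here: cmpd_A shares 9 of 10 bits with the query (0.9), while cmpd_B shares 5 of 13 (about 0.38).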

LBDD enables researchers to leverage existing chemical and biological knowledge to guide the discovery of new therapeutic agents, efficiently transitioning from initial hits to optimized leads [13].

Structure-Based Drug Design

Structure-based drug design (SBDD) represents the modern realization of Ehrlich's vision of molecular complementarity [13] [12]. This approach directly utilizes the three-dimensional structure of target proteins to design ligands with optimal shape and chemical complementarity. SBDD has been revolutionized by advances in structural biology (particularly X-ray crystallography and cryo-electron microscopy) and computational power [13].

The SBDD workflow typically involves:

- Target Identification and Validation: Selection of biologically relevant proteins with disease-modifying potential [13].

- Structure Determination: Experimental resolution of the target protein's three-dimensional structure [13].

- Binding Site Analysis: Identification and characterization of potential ligand binding pockets [13].

- De Novo Ligand Design or Screening: Computational generation or selection of compounds complementary to the binding site [12].

- Molecular Dynamics and Binding Affinity Optimization: Refinement of lead compounds through simulation and free energy calculations [12].

SBDD has produced numerous successful therapeutics, particularly for well-characterized enzyme targets, demonstrating the power of structure-guided approaches for achieving high selectivity [13].
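The link between the binding free energies that SBDD tries to optimize and the resulting selectivity can be made concrete with the standard thermodynamic relation ΔG° = RT ln K_d (1 M standard state). The sketch below converts free energies into dissociation constants and a fold-selectivity. The free-energy values are hypothetical and do not come from any particular calculation method.

```python
import math

R = 8.314462618e-3  # gas constant, kJ/(mol*K)

def kd_from_dg(dg_kj_per_mol: float, temp_k: float = 298.15) -> float:
    """Dissociation constant (M) from binding free energy via dG = RT ln(Kd)."""
    return math.exp(dg_kj_per_mol / (R * temp_k))

def selectivity_ratio(dg_target: float, dg_offtarget: float, temp_k: float = 298.15) -> float:
    """Kd(off-target) / Kd(target) = exp(ddG / RT), with ddG = dG_off - dG_target."""
    return math.exp((dg_offtarget - dg_target) / (R * temp_k))

# Hypothetical binding free energies (kJ/mol) of one ligand for two related proteins
dg_target, dg_off = -50.0, -44.3
print(f"Kd target     = {kd_from_dg(dg_target):.2e} M")
print(f"Kd off-target = {kd_from_dg(dg_off):.2e} M")
print(f"selectivity   = {selectivity_ratio(dg_target, dg_off):.1f}-fold")
```

At 25 °C, RT ln 10 is about 5.7 kJ/mol, so the ΔΔG of 5.7 kJ/mol used here corresponds to roughly 10-fold selectivity, and each further 5.7 kJ/mol adds another factor of ten.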

Systems Pharmacology and Polypharmacology

Contemporary drug discovery has begun to transcend Ehrlich's single-target paradigm through systems pharmacology and polypharmacology [13]. These approaches recognize that many complex diseases involve multiple pathological pathways and that therapeutic efficacy often requires modulation of multiple targets [13].

Key concepts in this evolving paradigm include:

- Network Pharmacology: Analysis of drug-target interactions using bipartite networks that capture the complex relationships between multiple drugs and multiple targets [13].

- Chemical Similarity Networks: Clustering of diverse chemical structures into distinct scaffolds (chemotypes) that can be correlated with specific target profiles [13].

- Selective Polypharmacology: Intentional design of compounds that interact with a specific set of targets to achieve therapeutic efficacy while minimizing adverse effects [13].

This systems-level approach represents a sophisticated evolution beyond the single magic bullet toward targeted multi-specific therapies that address disease complexity while maintaining selectivity against critical off-targets [13].
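A drug-target bipartite network and a simple selective-polypharmacology check can be sketched with plain Python sets. The drugs, targets, and the criterion used here (a drug must hit every desired target and avoid every listed off-target) are hypothetical illustrations, not a published method.

```python
from collections import defaultdict

# Hypothetical drug -> target edges of a bipartite interaction network
edges = [
    ("drug1", "T1"), ("drug1", "T2"),
    ("drug2", "T1"),
    ("drug3", "T2"), ("drug3", "T3"), ("drug3", "OFF1"),
]
desired = {"T1", "T2"}   # hypothetical disease-relevant targets
avoid = {"OFF1"}         # hypothetical off-target linked to adverse effects

def build_bipartite(edges):
    """Adjacency maps for both node types: drug -> targets and target -> drugs."""
    drug_to_targets, target_to_drugs = defaultdict(set), defaultdict(set)
    for drug, target in edges:
        drug_to_targets[drug].add(target)
        target_to_drugs[target].add(drug)
    return drug_to_targets, target_to_drugs

def profile_matches(targets, desired, avoid):
    """True if the drug engages all desired targets and none of the avoided ones."""
    return desired <= targets and not (targets & avoid)

d2t, t2d = build_bipartite(edges)
for drug, targets in sorted(d2t.items()):
    print(drug, sorted(targets), profile_matches(targets, desired, avoid))
```

Keeping both adjacency directions makes it equally easy to ask "what does this drug hit?" and "which drugs share this target?", the two questions a bipartite view is meant to answer.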

Diagram Title: Modern Drug Design Approaches Workflow

Advanced Quantitative and Analytical Techniques

Complex Generic Drug Development

Modern drug development increasingly relies on sophisticated quantitative comparative approaches to establish therapeutic equivalence, particularly for complex drug products [17]. These methods address significant challenges in applying conventional statistical bioequivalence methods to complex data sets:

- API Sameness Assessment: Advanced analytical techniques including NMR, AFFF-MALS, and mass spectrometry are employed to establish active pharmaceutical ingredient (API) equivalence for complex generics [17].

- Particle Size Distribution Analysis: Equivalence testing of complex particle size distribution profiles using advanced metrics like Earth Mover's Distance [17].

- Dissolution Profile Similarity: Application of bootstrap bias-corrected similarity factors (f₂) for robust comparison of dissolution profiles [17].

These methodologies represent the cutting edge of quantitative pharmaceutical analysis, enabling precise characterization and comparison of complex drug products while maintaining regulatory standards [17].
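The dissolution and particle-size comparisons listed above can be sketched with NumPy. The f₂ function follows the standard definition, f₂ = 50·log₁₀(100/√(1 + mean squared difference)). The bias correction shown is the generic bootstrap estimate (2·f₂,obs minus the mean bootstrap f₂), which may differ from the exact procedure in [17], and regulatory rules on time-point selection and unit numbers are not implemented. The EMD function assumes both particle size histograms share the same equally spaced bins. All profiles are hypothetical.

```python
import numpy as np

def f2(ref_mean, test_mean):
    """Similarity factor: f2 = 50 * log10(100 / sqrt(1 + mean((R_t - T_t)^2)))."""
    r = np.asarray(ref_mean, dtype=float)
    t = np.asarray(test_mean, dtype=float)
    msd = np.mean((r - t) ** 2)  # mean squared difference over time points
    return float(50.0 * np.log10(100.0 / np.sqrt(1.0 + msd)))

def bootstrap_bc_f2(ref_units, test_units, n_boot=2000, seed=0):
    """Generic bootstrap bias correction: 2 * f2_obs - mean(bootstrap f2).
    ref_units, test_units: % dissolved, shape (n_units, n_timepoints)."""
    rng = np.random.default_rng(seed)
    ref = np.asarray(ref_units, dtype=float)
    test = np.asarray(test_units, dtype=float)
    f2_obs = f2(ref.mean(axis=0), test.mean(axis=0))
    boot = np.empty(n_boot)
    for i in range(n_boot):
        # Resample dosage units with replacement, independently for each product
        r = ref[rng.integers(0, len(ref), len(ref))]
        t = test[rng.integers(0, len(test), len(test))]
        boot[i] = f2(r.mean(axis=0), t.mean(axis=0))
    return 2.0 * f2_obs - float(boot.mean())

def emd_1d(p, q, bin_width=1.0):
    """Earth Mover's Distance between two histograms on the same equally spaced bins:
    sum over bins of |CDF_p - CDF_q|, times the bin width (after normalizing)."""
    p = np.asarray(p, dtype=float) / np.sum(p)
    q = np.asarray(q, dtype=float) / np.sum(q)
    return float(np.sum(np.abs(np.cumsum(p) - np.cumsum(q))) * bin_width)

# Hypothetical mean dissolution profiles (% dissolved at four time points)
ref_profile = [20.0, 45.0, 70.0, 90.0]
test_profile = [30.0, 55.0, 80.0, 100.0]  # uniformly 10 points higher
print(round(f2(ref_profile, test_profile), 2))  # a uniform 10-point gap gives f2 just under 50
```

The uniform 10-point example reproduces the familiar rule of thumb that f₂ ≥ 50 corresponds to an average difference of roughly 10% or less between profiles.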

Selective Sensing and Molecular Recognition

The principles of molecular recognition central to Ehrlich's magic bullet concept have found direct application in pharmaceutical analysis through molecularly imprinted polymers (MIPs) [3]. MIPs are synthetic polymers with specific recognition sites complementary to target molecules in shape, size, and functional group orientation [3].

Key applications in pharmaceutical analysis include:

- Potentiometric Ion-Selective Electrodes: MIPs enhance selectivity by creating specific recognition cavities that minimize interference from ions with similar charges or lipophilicity [3].

- Solid-Contact Ion-Selective Electrodes (SC-ISEs): Incorporation of graphene nanoplatelets as ion-to-electron transducer layers prevents water layer formation and enhances sensor stability [3].

- Multi-Analyte Determination: Concurrent quantification of drug combinations (e.g., donepezil and memantine for Alzheimer's disease) using MIP-based sensors with minimal cross-reactivity [3].

These sensing technologies directly operationalize the principle of molecular complementarity for analytical purposes, creating tools with antibody-like specificity through synthetic chemistry [3].
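How an interfering ion degrades a potentiometric response is usually described by the Nikolsky-Eisenman equation, in which each interferent j adds K_ij·a_j^(z_i/z_j) to the apparent activity of the primary ion i. The sketch below assumes that model. The activities and selectivity coefficients are hypothetical. A smaller K_ij, which is what a well-imprinted recognition cavity aims for, produces a smaller potential shift.

```python
import math

R = 8.314462618    # gas constant, J/(mol*K)
F = 96485.33212    # Faraday constant, C/mol

def nernst_slope(z, temp_k=298.15):
    """Theoretical response slope in mV per decade of activity: 1000 * ln(10) * RT / (zF)."""
    return 1000.0 * math.log(10) * R * temp_k / (z * F)

def nikolsky_eisenman(e0_mv, a_primary, z_primary, interferents, temp_k=298.15):
    """Electrode potential (mV) in a mixture, per the Nikolsky-Eisenman equation:
    E = E0 + S * log10(a_i + sum_j K_ij * a_j ** (z_i / z_j)).
    interferents: iterable of (activity_j, charge_j, K_ij) tuples."""
    apparent = a_primary + sum(k * a_j ** (z_primary / z_j) for a_j, z_j, k in interferents)
    return e0_mv + nernst_slope(z_primary, temp_k) * math.log10(apparent)

# Hypothetical case: 1e-5 M drug cation with a 1e-3 M monovalent interferent
clean = nikolsky_eisenman(0.0, 1e-5, 1, [])
for k_ij in (1e-3, 1e-1):  # highly selective vs poorly selective membrane
    shifted = nikolsky_eisenman(0.0, 1e-5, 1, [(1e-3, 1, k_ij)])
    print(f"K_ij = {k_ij:.0e}: interference shift {shifted - clean:.1f} mV")
```

With a 100-fold excess of interferent, K_ij = 10⁻³ shifts the reading by only a few millivolts, whereas K_ij = 10⁻¹ shifts it by about a full decade's worth (roughly 60 mV), enough to make the measurement meaningless.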

Table: Research Reagent Solutions for Selective Drug Development

| Reagent/Chemical | Category | Function in Research & Development |
|---|---|---|
| Molecularly Imprinted Polymers (MIPs) | Synthetic Receptor | Create specific molecular recognition sites for target analytes [3] |
| Graphene Nanoplatelets | Nanomaterial Transducer | Enhance electron transfer and prevent water layer formation in sensors [3] |
| Potassium Tetrakis(p-chlorophenyl) Borate | Ionic Additive | Cation exchanger in ion-selective membranes [3] |
| Ethylene Glycol Dimethacrylate (EGDMA) | Cross-linking Monomer | Creates rigid polymer structure with molecular memory in MIPs [3] |
| Methacrylic Acid (MAA) | Functional Monomer | Provides complementary functional groups for template binding in MIPs [3] |
| Azobisisobutyronitrile (AIBN) | Polymerization Initiator | Generates free radicals to initiate thermal polymerization [3] |

Experimental Protocols and Methodologies

Molecularly Imprinted Polymer Synthesis Protocol

The preparation of MIPs for selective drug sensing follows a well-established precipitation polymerization methodology [3]:

- Template-Monomer Complexation: Dissolve 0.5 mmol of the target drug (template) in 40.0 mL of dimethylsulfoxide (DMSO) as porogenic solvent. Add 2.0 mmol of methacrylic acid (MAA) as functional monomer and sonicate for 15 minutes to allow complex formation through non-covalent interactions [3].

- Polymerization Mixture Preparation: Add 8.0 mmol of ethylene glycol dimethacrylate (EGDMA) as cross-linking monomer and 0.6 mmol of azobisisobutyronitrile (AIBN) as radical initiator to the template-monomer solution. Sonicate the mixture for 1 minute to ensure homogeneity [3].

- Polymerization Process: Purge the mixture with nitrogen gas for 15 minutes to remove oxygen, then incubate in a thermostatic oil bath at 60°C for 24 hours to complete the polymerization reaction [3].

- Template Removal: After polymerization, extract the template molecules using appropriate washing solvents (typically methanol:acetic acid mixtures) to create specific recognition cavities [3].

- Polymer Characterization: Characterize the resulting MIPs using scanning electron microscopy (SEM), Brunauer-Emmett-Teller (BET) surface area analysis, and infrared spectroscopy to confirm structural properties and template removal [3].
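The reagent quantities in the protocol above correspond to a template:monomer:cross-linker molar ratio of 1:4:16. A small helper can turn the millimole amounts into masses and, for the liquid monomers, pipetting volumes. The molar masses and densities are standard literature values, used here as assumptions to check against the supplier's data sheet, and the 300 g/mol template molar mass is hypothetical.

```python
# Molar masses (g/mol) and densities (g/mL near 25 °C) for the named reagents.
# Literature values assumed for illustration; confirm against the supplier's data.
REAGENTS = {
    "MAA":   {"mw": 86.09,  "density": 1.015},   # methacrylic acid, liquid
    "EGDMA": {"mw": 198.22, "density": 1.051},   # ethylene glycol dimethacrylate, liquid
    "AIBN":  {"mw": 164.21, "density": None},    # azobisisobutyronitrile, solid (weighed)
}

# Amounts taken from the protocol (mmol)
RECIPE_MMOL = {"template": 0.5, "MAA": 2.0, "EGDMA": 8.0, "AIBN": 0.6}

def reagent_amounts(template_mw):
    """Return {name: (mass_mg, volume_uL or None)}; template_mw in g/mol."""
    out = {}
    for name, mmol in RECIPE_MMOL.items():
        if name == "template":
            mw, density = template_mw, None   # template assumed to be weighed as a solid
        else:
            mw, density = REAGENTS[name]["mw"], REAGENTS[name]["density"]
        mass_mg = mmol * mw                    # mmol x g/mol = mg
        volume_ul = mass_mg / density if density else None  # mg / (g/mL) = uL
        out[name] = (round(mass_mg, 1), None if volume_ul is None else round(volume_ul, 1))
    return out

# Molar ratio relative to the template (1 : 4 : 16 for template:MAA:EGDMA)
ratio = {name: mmol / RECIPE_MMOL["template"] for name, mmol in RECIPE_MMOL.items()}
print(ratio)
print(reagent_amounts(template_mw=300.0))  # 300 g/mol is a hypothetical template
```

Under these assumptions, the recipe calls for about 170 µL of MAA, about 1.51 mL of EGDMA, and about 98.5 mg of AIBN, alongside the 40.0 mL of DMSO porogen.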

Sensor Preparation and Drug Quantification Protocol

The development of MIP-based sensors for pharmaceutical analysis involves the following methodological steps [3]:

- Electrode Modification: Prepare graphene-modified glassy carbon electrodes (GCE) by applying graphene nanoplatelets as a hydrophobic transducer layer to prevent water layer formation and enhance charge transfer [3].

- Membrane Formulation: Incorporate the prepared MIPs into ion-selective membranes along with appropriate plasticizers (e.g., 2-nitrophenyl octyl ether) and polymeric matrices (e.g., polyvinyl chloride) [3].

- Sensor Calibration: Characterize sensor performance by measuring potential response across a concentration range of the target drug (typically 10⁻² to 10⁻⁷ M). Determine slope, detection limit, and working range for each sensor [3].

- Selectivity Assessment: Evaluate sensor specificity by measuring potential response in the presence of structurally similar compounds and co-formulated drugs. Calculate selectivity coefficients to quantify interference levels [3].

- Pharmaceutical Application: Apply the validated sensors to quantify target drugs in pharmaceutical formulations and biological samples (e.g., spiked human plasma) without prior separation, demonstrating practical utility [3].
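The calibration and selectivity steps can be sketched as follows, assuming that (i) the slope is obtained by linear regression of potential on log activity over the linear range, (ii) the detection limit is taken, IUPAC-style, where the extrapolated linear segment meets the low-activity baseline, and (iii) selectivity coefficients are estimated by the separate solution method (SSM). The source does not state which selectivity method was used, and all data below are hypothetical.

```python
import numpy as np

def fit_calibration(log_a, e_mv):
    """Least-squares line E = intercept + slope * log10(a) over the linear range."""
    slope, intercept = np.polyfit(log_a, e_mv, 1)  # polyfit returns highest power first
    return float(slope), float(intercept)

def detection_limit(slope, intercept, e_baseline):
    """Activity at which the extrapolated linear segment meets the low-activity
    baseline potential (the IUPAC-style definition for potentiometric sensors)."""
    return 10 ** ((e_baseline - intercept) / slope)

def ssm_log_k(e_primary, e_interferent, slope, log_a, z_primary=1, z_interferent=1):
    """Separate solution method: primary ion A and interferent B are measured in
    separate solutions of equal activity a. Then
    log K_AB = (E_B - E_A) / S + (1 - z_A / z_B) * log10(a)."""
    return (e_interferent - e_primary) / slope + (1 - z_primary / z_interferent) * log_a

# Hypothetical calibration data (mV) for a monovalent drug cation, 1e-2 to 1e-6 M;
# activity is approximated by concentration here for simplicity.
log_a = np.array([-2.0, -3.0, -4.0, -5.0, -6.0])
e_mv = np.array([234.0, 176.0, 118.0, 60.0, 2.0])
slope, intercept = fit_calibration(log_a, e_mv)
print(f"slope {slope:.1f} mV/decade, LOD {detection_limit(slope, intercept, -30.0):.1e} M")
print("log K =", ssm_log_k(e_primary=176.0, e_interferent=2.0, slope=slope, log_a=-3.0))
```

A log K of −3 in this toy example means the interferent must be about 1000 times more active than the drug to produce the same response; more negative values indicate better selectivity.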

The evolution from Paul Ehrlich's original magic bullet concept to modern drug design represents a continuous refinement of the fundamental principle of molecular specificity [11] [9]. While Ehrlich's vision of perfectly selective therapies has proven more complex than initially imagined, his core insight—that therapeutic efficacy depends on specific molecular recognition—has been overwhelmingly validated [11] [12].

Contemporary drug discovery has transcended Ehrlich's empirical screening through the development of sophisticated quantitative and computational methods that enable rational design of therapeutic agents [13] [12]. The paradigm has shifted from single-target magic bullets toward selective polypharmacology, where drugs are designed to engage multiple specific targets in a controlled manner to address disease complexity [13].

The most significant advances have emerged at the intersection of multiple disciplines: structural biology provides atomic-resolution targets, computational chemistry enables rational design, analytical technology permits precise quantification, and systems biology contextualizes drug action within complex biological networks [13] [12]. This integrated approach represents the contemporary realization of Ehrlich's vision—not as a simple magic bullet, but as a sophisticated toolkit for molecular targeting that continues to evolve toward greater precision, efficacy, and safety in pharmaceutical interventions [11].

In the realm of biosensing and therapeutic development, the concepts of specificity and selectivity represent two distinct paradigms for molecular recognition. Specificity refers to the ideal scenario in which a recognition element interacts exclusively with a single target analyte, through a classic "lock-and-key" mechanism that recognizes no other molecule [5]. This ideal is approached by certain biological recognition pairs, such as antibodies and their antigens, aptamers and their targets, and streptavidin and biotin. In contrast, selectivity describes a practical approach in which recognition elements cross-react with multiple related analytes, creating differential interaction patterns that collectively identify samples through a "fingerprint" rather than by isolating a single target [5].

The fundamental thesis of this whitepaper is that while true biological specificity remains largely theoretical, pragmatic selectivity-based approaches offer more feasible and often more informative pathways for both sensing applications and drug development. Molecular interactions operate along a spectrum of binding affinities rather than as absolute binary recognition, which makes perfect specificity an elusive goal, whereas selective recognition provides powerful tools for navigating complex biological systems.

Theoretical Foundations: The Specificity Fallacy in Biological Systems

The Myth of the Perfect Lock and Key

The concept of absolute biological specificity originates from Emil Fischer's 1894 "lock and key" analogy, which suggested precise molecular complementarity between enzymes and substrates. However, modern structural biology has revealed that protein binding sites exhibit considerable flexibility and adaptability, employing induced fit and conformational selection mechanisms that inherently allow for some degree of cross-reactivity [5]. Even highly specific biological recognition pairs such as antibodies demonstrate measurable cross-reactivity with structurally similar molecules, particularly when tested against large panels of potential interactors.

The theoretical ideal of a completely specific sensor—one that binds only its intended target with zero cross-reactivity—remains largely unattainable in practice due to several biological constraints. First, the conserved structural motifs across protein families and metabolite classes create inherent recognition similarities. Second, the dynamic nature of biological structures allows for transient interactions that may not be detected in initial validation studies but emerge in different biological contexts. Third, the limited chemical diversity of biological recognition elements compared to the vast array of potential analytes ensures that some degree of cross-reactivity is inevitable [5].

Selectivity as an Evolutionary Pragmatism

From an evolutionary perspective, selective rather than perfectly specific recognition provides significant advantages. Biological systems have evolved promiscuous interactions that enable functional adaptability, regulatory networks, and metabolic efficiency. This pragmatic approach is evident in immune recognition, where a limited repertoire of antibodies must recognize a virtually infinite array of potential antigens, and in signal transduction, where related kinase families share substrates while maintaining pathway fidelity [5].

The olfactory system provides a compelling biological example of selective sensing par excellence. Rather than employing specific receptors for each possible odorant molecule, the olfactory system uses a combination of broadly tuned receptors that generate unique activation patterns across the receptor array. This "combinatorial coding" strategy enables the recognition of vastly more odorants than the number of receptors available, demonstrating the power and efficiency of selective sensing in biological systems [5].

Quantitative Comparison: Specificity vs. Selectivity in Experimental Systems

Table 1: Performance Comparison of Specific vs. Selective Sensing Approaches

| Parameter | Specific Sensing | Selective Sensing | Measurement Basis |
|---|---|---|---|
| Cross-reactivity | Minimal (theoretical) to Low | Moderate to High | Ratio of signal for target vs. non-target analytes |
| Multiplexing Capability | Low (requires dedicated elements per target) | High (single array for multiple analytes) | Number of distinct analytes detectable simultaneously |
| Development Time | Long (months to years) | Moderate (weeks to months) | Time from concept to validated recognition |
| Tolerance to Environmental Variation | Low | High | Performance maintenance across pH, temperature, matrix changes |
| Unknown Analyte Detection | None | High | Ability to identify unanticipated analytes |
| Data Richness | Individual analyte concentration | Pattern-based sample fingerprint | Information content per experiment |

Table 2: Analytical Performance Metrics of Representative Sensing Platforms

| Platform | Sensitivity | Discriminatory Power | Complex Matrix Performance | Reference |
|---|---|---|---|---|
| Antibody-based (ELISA) | High (pM-nM) | Target-specific | Moderate (subject to interference) | [5] |
| Aptamer-based | High (pM-nM) | Target-specific | Moderate | [5] |
| Lectin Arrays | Moderate (nM-μM) | High for glycan patterns | High (tolerates biological fluids) | [5] |
| Quantum Sensors | Very High (fM-pM) | Pattern-based | Emerging | [18] |
| Sulfur Quantum Dot Probes | Moderate (μM range) | Selective for metal ions | High in complex plant extracts | [19] |

Experimental Paradigms: Methodologies for Selective Sensing

Array-Based Sensing Platforms

Array-based sensing employs multiple cross-reactive recognition elements that collectively generate response patterns for sample identification and classification. The experimental workflow typically involves:

- Array Design and Fabrication: Selection and immobilization of multiple cross-reactive receptors (e.g., lectins, synthetic receptors, peptides) in defined spatial patterns [5].

- Sample Exposure and Binding: Application of the sample to the array under controlled conditions (buffer composition, temperature, time) to allow differential binding to array elements.

- Signal Detection and Acquisition: Measurement of binding events through various transduction mechanisms (fluorescence, colorimetric, electrochemical, magnetic).

- Pattern Recognition and Data Analysis: Application of statistical and machine learning algorithms (principal component analysis, linear discriminant analysis, neural networks) to convert response patterns into sample classifications [5].

The critical advantage of this approach is that a relatively small number of sensing elements (n) can theoretically discriminate between a much larger number of analytes: even if each element is read only as "on" or "off", n elements yield up to 2^n distinct response patterns, and graded responses allow more. This makes array-based sensing highly efficient for complex sample analysis [5].
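The pattern-recognition step can be sketched with a hypothetical four-element array. Class response patterns are simulated with noise, reduced by principal component analysis (computed via SVD), and unknown samples are assigned to the nearest class centroid. Nearest-centroid classification is a deliberately simple stand-in for the LDA or neural-network methods mentioned above, and the response patterns are invented for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical mean response patterns of a 4-element cross-reactive array
# (arbitrary units); no single element is specific to one analyte class.
patterns = {
    "A": np.array([1.0, 0.6, 0.2, 0.4]),
    "B": np.array([0.3, 0.9, 0.7, 0.2]),
    "C": np.array([0.5, 0.4, 0.9, 0.8]),
}

def simulate(pattern, n, noise=0.05):
    """n replicate measurements: the class pattern plus Gaussian noise."""
    return pattern + noise * rng.standard_normal((n, pattern.size))

# Training set: 10 replicates per class
X = np.vstack([simulate(p, 10) for p in patterns.values()])
y = np.repeat(list(patterns), 10)

# PCA via SVD of the mean-centred data; keep two components
mu = X.mean(axis=0)
_, _, vt = np.linalg.svd(X - mu, full_matrices=False)
components = vt[:2]

def project(samples):
    """Scores of samples (shape (m, 4)) on the retained principal components."""
    return (samples - mu) @ components.T

# Class centroids in PC space for a nearest-centroid classifier
centroids = {c: project(X[y == c]).mean(axis=0) for c in patterns}

def classify(sample):
    """Assign one response vector to the class with the nearest centroid."""
    score = project(sample[np.newaxis, :])[0]
    return min(centroids, key=lambda c: np.linalg.norm(score - centroids[c]))

unknown = simulate(patterns["C"], 1)[0]  # a new, noisy "C" measurement
print(classify(unknown))
```

No individual element identifies any class on its own; the classification relies entirely on the combined response pattern, which is the core idea of selective array sensing.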

Fluorescence-Enhanced Sensing with Sulfur Quantum Dots

A recent example of selective sensing development demonstrates the detection of lead (II) ions in complex plant extracts using sulfur quantum dots (SQDs) in deep eutectic solvent (DES) micelles [19]. The detailed methodology includes:

Synthesis of Sulfur Quantum Dots:

- Prepare SQDs through sonication-assisted H₂O₂ etching approach

- Characterize SQDs using transmission electron microscopy for size distribution and UV-Vis and fluorescence spectroscopy for optical properties

DES Micelle Formation and SQD Incorporation:

- Form surfactant-like deep eutectic solvents with long alkyl chains

- Incorporate SQDs into DES micelles, noting significant aggregation that enhances luminescent intensity

- Optimize DES composition for maximum fluorescence enhancement

Sensor Calibration and Validation:

- Expose DES-sensitized SQDs to Pb²⁺ standards across concentration range (20-100 μM)

- Measure fluorescence quenching ("turn-off" response) with increasing Pb²⁺ concentration

- Establish calibration curve with limit of detection (1.61 μM) and linear range

- Validate method in Paris polyphylla extracts with recovery studies [19]

This approach illustrates the selectivity principle: the DES-sensitized SQD probe responds preferentially to Pb²⁺ over other metal ions without being absolutely specific for it.
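A turn-off calibration of this kind is often analysed with the Stern-Volmer relation, F₀/F = 1 + K_SV[Q], together with a detection limit of 3σ_blank/slope. Whether [19] used exactly this form is an assumption. The sketch below only shows the arithmetic on hypothetical data spanning the quoted 20-100 μM range and does not reproduce the published 1.61 μM value.

```python
import numpy as np

def stern_volmer_fit(conc_um, f0, f):
    """Fit F0/F = 1 + K_SV * [Q]; returns (K_SV in 1/uM, intercept)."""
    ratio = f0 / np.asarray(f, dtype=float)
    k_sv, intercept = np.polyfit(np.asarray(conc_um, dtype=float), ratio, 1)
    return float(k_sv), float(intercept)

def lod_3sigma(sd_blank, slope):
    """Detection limit = 3 * standard deviation of the blank signal / calibration slope."""
    return 3.0 * sd_blank / slope

# Hypothetical quenching data across the 20-100 uM Pb2+ range (not the published data)
conc = np.array([20.0, 40.0, 60.0, 80.0, 100.0])
f0 = 1000.0                          # unquenched intensity, arbitrary units
f = f0 / (1.0 + 0.01 * conc)         # generated with a hypothetical K_SV = 0.01 /uM
k_sv, intercept = stern_volmer_fit(conc, f0, f)
# sd_blank = 0.005 is a hypothetical SD of replicate F0/F blank readings
print(f"K_SV = {k_sv:.3f} /uM, intercept = {intercept:.2f}, LOD = {lod_3sigma(0.005, k_sv):.2f} uM")
```

An intercept close to 1 is a quick sanity check that the data follow the Stern-Volmer form; marked curvature would suggest combined static and dynamic quenching or an aggregation effect.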

The Researcher's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Selective Sensing Development

| Reagent/Material | Function | Example Application | Technical Notes |
|---|---|---|---|
| Lectin Arrays | Selective recognition of carbohydrate motifs | Glycan profiling of cell surfaces and glycoproteins | Multiple lectins with overlapping specificities create discriminatory patterns [5] |
| Aptamer Libraries | Nucleic acid-based recognition elements | Detection of proteins, small molecules, cells | SELEX process generates recognition elements; more stable than antibodies [5] |
| Sulfur Quantum Dots | Fluorescent sensing probes | Heavy metal ion detection in complex samples | DES-sensitized SQDs show enhanced fluorescence and selective quenching [19] |
| Nitrogen-Vacancy (NV) Centers | Quantum sensing of magnetic fields | Nanoscale NMR, single-cell spectroscopy | Unprecedented spatial resolution; works under ambient conditions [18] |
| Deep Eutectic Solvents | Bio-compatible media for enhanced sensing | Improve quantum dot fluorescence and stability | Surfactant-like properties enable micelle formation for analyte concentration [19] |
| Optically Pumped Magnetometers | Ultrasensitive magnetic field detection | Magnetoencephalography, magnetocardiography | Does not require cryogenics; wearable sensor designs possible [18] |

Signaling Pathways and Molecular Interactions in Selective Recognition

Applications in Biomedical Research and Drug Development

Disease Diagnosis Through Pattern Recognition

The selective sensing approach has demonstrated particular utility in diagnostic applications where disease states are characterized by complex biomarker patterns rather than single analyte alterations. For example, diseases such as cancer, neurodegenerative disorders, and autoimmune conditions often involve subtle multiparameter changes in biomarker profiles that may not include pathognomonic single markers [5]. Selective sensor arrays can detect these patterns without requiring prior knowledge of all relevant biomarkers, operating in a "hypothesis-less" discovery mode that can reveal previously unrecognized diagnostic signatures [5].

This approach has been successfully applied to distinguish between disease and healthy states through patterns in:

- Serum glycoprotein profiles using lectin arrays

- Volatile organic compound patterns in breath using chemical sensor arrays

- Metabolomic fingerprints using spectroscopic methods with multivariate analysis

- Protein phosphorylation patterns using peptide substrate arrays [5]

Therapeutic Development and Validation

In drug development, selective sensing approaches provide powerful tools for:

- Mechanism of action studies through multiparameter cellular response profiling

- Toxicity screening using pattern-based responses in cell models

- Target engagement verification through downstream signaling pattern analysis

- Polypharmacology assessment for drugs with multiple targets [5]

The pharmaceutical industry increasingly recognizes that many effective drugs act through selective rather than specific interactions with multiple targets, creating a "therapeutic footprint" that corresponds to efficacy while minimizing side effects. Selective sensing platforms are ideally suited to characterize these complex interaction profiles during drug development.

Emerging Technologies and Future Directions

Quantum Sensing Platforms

Quantum technologies represent a frontier in selective sensing with potential applications across biomedical research. Two promising platforms include:

Optically Pumped Magnetometers (OPMs):

- Detect magnetic fields with sensitivities approaching 10 fT/√Hz without cryogenic requirements

- Enable wearable magnetoencephalography with superior spatial resolution and patient comfort

- Allow vector (multi-axis) magnetic field detection, whereas conventional SQUID sensors typically measure a single field component [18]

Nitrogen-Vacancy (NV) Centers in Diamond:

- Provide nanoscale spatial resolution for magnetic field detection

- Function across wide temperature, pressure, and magnetic field ranges

- Enable single-molecule NMR and single-cell spectroscopy

- Can be applied to local temperature sensing in biological systems [18]

These quantum platforms demonstrate how advances in physics and engineering are creating new opportunities for selective sensing with unprecedented sensitivity and spatial resolution.

Integrated Specific and Selective Sensing

The most promising future direction involves hybrid approaches that combine elements of both specific and selective sensing to create more powerful and informative platforms. Integration strategies include:

- Class-specific selectivity that confines cross-reactivity to biologically relevant analyte groups

- Hybrid arrays in which specific sensors quantify known biomarkers while selective elements discover novel patterns

- Multi-modal platforms that combine specific molecular recognition with selective physical parameter sensing [5]

This integrated approach leverages the complementary strengths of both paradigms: the quantitative precision of specific sensing for validated biomarkers and the discovery power of selective sensing for novel pattern recognition.

The biological reality of molecular recognition firmly establishes selectivity as the pragmatic approach for navigating complex biological systems, while true specificity remains largely theoretical. The experimental evidence from diverse fields including glycan sensing, metal ion detection, and quantum sensing demonstrates that selective approaches provide robust, informative, and practical solutions for biomedical research and therapeutic development.

As sensing technologies continue to advance, the integration of specific and selective elements will create increasingly powerful platforms for understanding biological complexity and developing effective interventions for human disease. By embracing the inherent selectivity of biological systems rather than pursuing the elusive goal of perfect specificity, researchers can develop more effective diagnostic tools and therapeutic strategies that acknowledge and exploit the complexity of living systems.

The development of β-adrenergic receptor antagonists (beta-blockers) represents a pivotal case study in the evolution of targeted therapeutic agents, mirroring the core principles of specific versus selective sensing approaches. Early first-generation beta-blockers were non-selective agents, inhibiting both β1 and β2 adrenergic receptors with similar affinity. This lack of selectivity, while therapeutically beneficial for certain cardiac conditions, produced off-target effects in organ systems expressing β2 receptors, particularly the pulmonary and vascular systems [20]. Second-generation beta-blockers, including metoprolol, marked a significant advance through their cardioselective properties, binding preferentially to β1 receptors, which predominate in cardiac tissue [21]. This case study examines metoprolol as a paradigm for receptor-selective drug design, analyzing its mechanistic basis, clinical consequences, and the experimental methodologies essential for quantifying its selectivity profile. The transition from non-selective to cardioselective agents exemplifies the broader thesis that strategic selectivity in biological targeting can optimize therapeutic efficacy while mitigating adverse effects.

Beta-Blocker Generations and Receptor Selectivity

Beta-blockers are classified into three generations based on their receptor affinity and additional pharmacological properties [20]. This evolution reflects the pharmaceutical industry's ongoing pursuit of greater receptor selectivity to enhance clinical utility.

First-generation beta-blockers (e.g., propranolol, sotalol) are non-selective, meaning they antagonize both β1 and β2 adrenergic receptors with comparable potency [20]. While effective for many cardiac indications, their β2-blockade in bronchial smooth muscle can induce bronchoconstriction, making them potentially hazardous for patients with asthma or COPD [22] [23].

Second-generation beta-blockers (e.g., metoprolol, atenolol, bisoprolol) were developed to provide cardioselectivity. These agents have a higher affinity for β1-adrenergic receptors, which are predominantly located in the heart, over β2-receptors found in the lungs, blood vessels, and other tissues [21] [20]. Metoprolol, one of the first selective β1-antagonists, was specifically designed to have fewer side effects than its non-selective predecessors, particularly with regard to bronchospasm and peripheral vasoconstriction [21].

Third-generation beta-blockers (e.g., carvedilol, nebivolol) extend the concept by combining β-blockade with vasodilatory properties, achieved through additional mechanisms such as α1-adrenoceptor blockade (carvedilol, which also blocks β2 receptors) or nitric oxide-mediated vasodilation (nebivolol, which is highly β1-selective) [20].

Table 1: Classification of Beta-Blockers by Generation and Selectivity

| Generation | Key Examples | Primary Receptor Target(s) | Distinguishing Pharmacological Properties |
|---|---|---|---|
| First (Non-selective) | Propranolol, Sotalol, Nadolol | β1, β2 (non-selective) | No preferential affinity; foundation class. |
| Second (Cardioselective) | Metoprolol, Atenolol, Bisoprolol | β1 (selective) | Preferentially blocks cardiac β1 receptors; reduced risk of bronchospasm. |
| Third (Vasodilatory) | Carvedilol, Nebivolol, Labetalol | β1 and β2 plus α1 (carvedilol, labetalol); β1 with β3-mediated NO release (nebivolol) | Additional mechanisms cause vasodilation; may have antioxidant/antifibrotic effects. |

The degree of cardioselectivity is dose-dependent. At lower therapeutic doses, metoprolol exhibits strong β1 preference, but this selectivity diminishes at higher doses as β2-receptor blockade becomes more significant [21].
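The dose dependence of cardioselectivity follows from simple receptor-occupancy arithmetic. For a competitive antagonist at equilibrium, ignoring competing catecholamines, fractional occupancy is C/(C + K_i). The K_i values below are hypothetical, chosen to give a 30-fold β1 preference, and are not measured metoprolol constants. The point is only that the β1:β2 occupancy ratio shrinks as concentration rises.

```python
def occupancy(conc_nm, ki_nm):
    """Equilibrium fractional occupancy by a competitive antagonist with no agonist present."""
    return conc_nm / (conc_nm + ki_nm)

# Hypothetical affinities giving a 30-fold beta1 preference (NOT measured metoprolol values)
KI_BETA1_NM = 10.0
KI_BETA2_NM = 300.0

for conc in (3.0, 10.0, 100.0, 1000.0):  # free drug concentration, nM
    b1 = occupancy(conc, KI_BETA1_NM)
    b2 = occupancy(conc, KI_BETA2_NM)
    print(f"{conc:6.0f} nM  beta1 {b1:6.1%}  beta2 {b2:6.1%}  beta1/beta2 {b1 / b2:5.1f}")
```

Under these assumptions, the β1:β2 occupancy ratio falls from about 23 at 3 nM to about 1.3 at 1000 nM: once β1 receptors approach saturation, further dose increases mainly add β2 blockade.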

Metoprolol: A Closer Look at a Cardioselective Agent

Mechanism of Action and Pharmacokinetics

Metoprolol is a moderately lipophilic, second-generation beta-blocker that competitively antagonizes catecholamines at β1-adrenergic receptors. Its aryloxypropanolamine scaffold, bearing a para-substituted (4-(2-methoxyethyl)) phenyl ring, provides the configuration associated with selective β1 blockade [21].

Its mechanism of action in cardiomyocytes involves several precise steps [21]:

- Receptor Binding: Metoprolol binds competitively to β1-adrenergic receptors on the surface of cardiac cells, particularly in the sinoatrial (SA) node, atrioventricular (AV) node, and ventricular myocardium.

- Inhibition of Intracellular Signaling: By occupying the receptor, it inhibits catecholamine-driven activation of the intracellular cyclic adenosine monophosphate (cAMP) and protein kinase A (PKA) pathway.

- Modulation of Action Potentials: In the SA and AV nodes, metoprolol reduces the slope of phase 4 depolarization, thereby decreasing the firing rate of pacemaker cells and lowering heart rate (negative chronotropy).

- Reduced Contractility: In the ventricular myocardium, it decreases contractility (negative inotropy) by reducing PKA-mediated phosphorylation of L-type calcium channels, which limits the calcium influx that drives the force of cardiac muscle contraction.
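The competitive binding in the first step can be expressed with Gaddum's equation for an agonist and a competitive antagonist that share one binding site. This sketch uses hypothetical affinity constants and models receptor occupancy only, not the amplified cAMP or contractile response. It illustrates that a competitive antagonist shifts the agonist occupancy curve to the right by the factor 1 + [B]/KB without lowering its ceiling.

```python
# Gaddum equation for competitive antagonism at a single receptor population.
# K values are equilibrium dissociation constants; concentrations share units.


def agonist_occupancy(agonist: float, antagonist: float,
                      k_agonist: float, k_antagonist: float) -> float:
    """Fraction of receptors occupied by the agonist in the presence of a
    competitive antagonist: ([A]/KA) / (1 + [A]/KA + [B]/KB)."""
    a = agonist / k_agonist
    b = antagonist / k_antagonist
    return a / (1.0 + a + b)


def agonist_conc_for_half_occupancy(antagonist: float,
                                    k_agonist: float, k_antagonist: float) -> float:
    """Agonist concentration giving 50% agonist occupancy. It scales with
    1 + [B]/KB, so the curve shifts right in parallel as antagonist rises."""
    return k_agonist * (1.0 + antagonist / k_antagonist)


if __name__ == "__main__":
    # Hypothetical values (nM): agonist KA = 100, antagonist KB = 50.
    for b in (0.0, 50.0, 450.0):
        a50 = agonist_conc_for_half_occupancy(b, 100.0, 50.0)
        ceiling = agonist_occupancy(1e7, b, 100.0, 50.0)
        print(f"[B] = {b:>5} nM  A50 = {a50:7.1f} nM  "
              f"occupancy at 10 mM agonist = {ceiling:.4f}")
```

Raising the assumed antagonist concentration from 0 to 450 nM moves the half-occupancy point from 100 nM to 1,000 nM, while a large agonist excess still drives occupancy close to 1. This surmountability is the property that the dose-ratio and pA2 analyses in the experimental protocols rely on.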

The net hemodynamic effects are a reduction in heart rate, cardiac output, and blood pressure, which lower myocardial oxygen demand and underlie its efficacy in conditions like hypertension, angina, and heart failure [21].

Metoprolol is primarily formulated as two salts: the immediate-release metoprolol tartrate and the extended-release metoprolol succinate. The succinate formulation is designed for once-daily dosing and provides more stable plasma concentrations, which is particularly advantageous in managing chronic heart failure [21] [24].

Clinical Impact of Selectivity: Efficacy and Safety

The cardioselectivity of metoprolol translates directly into its clinical efficacy and safety profile, particularly when compared to non-selective agents.

Hemodynamic and Symptomatic Efficacy: In hypertrophic obstructive cardiomyopathy (HOCM), a systematic review of 21 studies demonstrated that beta-blockers, including metoprolol, significantly reduce the left ventricular outflow tract (LVOT) gradient (SMD -1.57; 95% CI -2.07 to -1.07) and heart rate (SMD -1.19; 95% CI -2.24 to -0.14) [25]. These hemodynamic improvements were consistently associated with symptomatic benefits, including improved New York Heart Association (NYHA) functional class and exercise tolerance [25]. In heart failure with reduced ejection fraction (HFrEF), metoprolol succinate has proven to be a cornerstone therapy, improving survival and reducing hospitalizations [21] [26]. Furthermore, a recent meta-analysis confirms that beta-blockers significantly improve outcomes in post-myocardial infarction patients with mildly reduced ejection fraction (LVEF 40-49%), lowering the hazard of a composite endpoint of all-cause death, new MI, or heart failure by 25% (HR 0.75) compared with no beta-blocker therapy [27].

Safety Advantages in Comorbid Conditions: The primary clinical advantage of metoprolol's selectivity becomes evident in patients with comorbid respiratory disease. A 2025 systematic review and meta-analysis specifically investigated the impact of beta-blockers on respiratory function, measured by forced expiratory volume in 1 second (FEV1), in asthma patients [22] [23]. The analysis revealed a statistically significant subgroup effect, demonstrating that cardio-selective beta-blockers are better tolerated than non-selective agents. While non-selective beta-blockers significantly decreased FEV1 compared to placebo (SMD -0.74), the effect of cardio-selective agents was less pronounced [22] [23]. This supports the conclusion that cardio-selective beta-blockers may be cautiously considered in patients with asthma and strong cardiovascular indications, provided appropriate monitoring is in place [23].
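The SMD values quoted above express a difference in means in units of the pooled standard deviation, which is what allows studies that measured LVOT gradient, heart rate, or FEV1 on different scales to be combined. Below is a minimal sketch of one common per-study estimator, Hedges' g with its small-sample correction. Whether the cited meta-analyses used this exact estimator is an assumption, the input numbers are hypothetical, and pooling across studies (e.g., with a random-effects model) is not shown.

```python
import math


def hedges_g(mean_treat: float, sd_treat: float, n_treat: int,
             mean_ctrl: float, sd_ctrl: float, n_ctrl: int) -> float:
    """Bias-corrected standardized mean difference for one two-arm study.
    Negative values mean the treated arm scored lower than control."""
    df = n_treat + n_ctrl - 2
    pooled_sd = math.sqrt(((n_treat - 1) * sd_treat ** 2 +
                           (n_ctrl - 1) * sd_ctrl ** 2) / df)
    cohens_d = (mean_treat - mean_ctrl) / pooled_sd
    correction = 1.0 - 3.0 / (4.0 * df - 1.0)  # Hedges' small-sample factor J
    return correction * cohens_d


if __name__ == "__main__":
    # Hypothetical LVOT gradients (mmHg): treated 40 +/- 20 vs control 70 +/- 20, n = 20 each.
    print(round(hedges_g(40.0, 20.0, 20, 70.0, 20.0, 20), 2))
```

For these hypothetical inputs the script prints -1.47: a 30 mmHg reduction is 1.5 pooled standard deviations, shrunk slightly by the small-sample correction. A negative SMD for FEV1 likewise means lower lung function in the treated arm.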

Table 2: Quantitative Clinical Outcomes of Beta-Blocker Therapy Across Conditions

| Clinical Condition | Outcome Measured | Quantitative Measure of Effect | Context & Comparison |
|---|---|---|---|
| HOCM [25] | Reduction in LVOT Gradient | SMD: -1.57 (95% CI: -2.07 to -1.07) | Robust effect on hemodynamic obstruction. |
| HOCM [25] | Reduction in Heart Rate | SMD: -1.19 (95% CI: -2.24 to -0.14) | Effect remained heterogeneous. |
| Post-MI (LVEF 40-49%) [27] | Composite of Death, New MI, or HF | HR: 0.75 (95% CI: 0.58 to 0.97); Absolute Risk: 10.7% vs 14.4% | 25% relative reduction in hazard with beta-blockers. |
| Asthma (FEV1) [23] | FEV1 after Non-selective β-blocker | SMD: -0.74 vs. Placebo (95% CI: -1.15 to -0.34) | Significant decrease in lung function. |
| Asthma (FEV1) [23] | FEV1 after Cardio-selective β-blocker | Smaller decrease than with non-selective agents (test for subgroup differences: P = 0.03, I² = 80%) | Significantly better tolerated; the high I² for the subgroup test indicates the between-class difference is unlikely to reflect chance alone. |
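The post-MI row reports both a relative measure (HR 0.75) and absolute event rates. The short calculation below derives the absolute risk reduction and the number needed to treat from the absolute rates in the table. It is a crude, unadjusted calculation, so its risk ratio will not exactly match the model-based hazard ratio, and the follow-up period over which those rates apply is not stated here.

```python
import math


def risk_summary(risk_treated: float, risk_control: float) -> dict:
    """Crude effect measures from two event proportions (0-1 scale)."""
    arr = risk_control - risk_treated       # absolute risk reduction
    risk_ratio = risk_treated / risk_control
    rrr = 1.0 - risk_ratio                  # relative risk reduction
    nnt = math.ceil(1.0 / arr)              # rounded up to a whole patient by convention
    return {"arr": arr, "risk_ratio": risk_ratio, "rrr": rrr, "nnt": nnt}


if __name__ == "__main__":
    # Composite endpoint rates from Table 2: 10.7% with vs 14.4% without beta-blockers.
    summary = risk_summary(0.107, 0.144)
    print(f"ARR {summary['arr']:.1%}, crude RR {summary['risk_ratio']:.2f}, "
          f"RRR {summary['rrr']:.0%}, NNT {summary['nnt']}")
```

This gives an absolute risk reduction of 3.7 percentage points and an NNT of 28, meaning roughly 28 patients treated to prevent one composite event over the study's follow-up. The crude relative reduction (about 26%) is close to the 25% implied by the hazard ratio.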

Experimental Protocols for Evaluating Receptor Selectivity

Determining the receptor selectivity profile of a compound like metoprolol requires a multi-faceted experimental approach, ranging from isolated receptor assays to whole-organ physiology.

In Vitro Receptor Binding and Functional Assays

Objective: To quantify the binding affinity (Ki) and functional antagonist potency (IC50 or pA2) of metoprolol at β1- and β2-adrenergic receptors and to calculate its selectivity ratio.

Methodology:

- Membrane Preparation: Isolate plasma membranes from cell lines stably expressing human β1-adrenergic receptors (e.g., CHO cells) and, separately, β2-adrenergic receptors.

- Radioligand Binding Assay:

  - Incubate the membrane preparations with a fixed concentration of a radioactive antagonist (e.g., [³H]-Dihydroalprenolol) and increasing concentrations of unlabeled metoprolol.

  - Perform the assay in a binding buffer, incubate to equilibrium, and then separate the bound from free radioligand via rapid filtration.

  - Quantify the radioactivity in the filter plates using a scintillation counter.

  - Data Analysis: Plot the percentage of radioligand displaced versus the logarithm of metoprolol concentration. Use non-linear regression to determine the IC50 (concentration that inhibits 50% of specific binding). Calculate the inhibition constant (Ki) using the Cheng-Prusoff equation: Ki = IC50 / (1 + [L]/Kd), where [L] is the radioligand concentration and Kd is its dissociation constant. The β1/β2 selectivity ratio is calculated as Ki(β2) / Ki(β1).

- Functional Assay (cAMP Accumulation):

  - Use the same transfected cell lines. Stimulate the cells with a non-selective β-agonist like isoprenaline to induce cAMP production.

  - Pre-treat cells with increasing concentrations of metoprolol to antagonize the agonist response.

  - Measure intracellular cAMP levels using a cAMP immunoassay or a reporter gene assay.

  - Data Analysis: Generate concentration-response curves for isoprenaline in the presence and absence of metoprolol. Calculate the apparent pA2 value (the negative logarithm of the antagonist concentration that requires a twofold increase in agonist concentration to produce the same effect) for each receptor subtype. A higher pA2 value at β1 vs. β2 receptors confirms functional selectivity.
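Once IC50 values and dose ratios have been obtained, the two data-analysis steps of this protocol reduce to short calculations (the non-linear curve fitting itself is not shown). This sketch applies the Cheng-Prusoff correction and the Ki(β2)/Ki(β1) ratio as defined in the text, then estimates pA2 by Schild regression, a linear fit of log10(DR - 1) against log10[B] across several antagonist concentrations. All numbers are hypothetical, and the function names are illustrative only.

```python
import math


def cheng_prusoff_ki(ic50: float, radioligand_conc: float, radioligand_kd: float) -> float:
    """Ki = IC50 / (1 + [L]/Kd); all three inputs in the same concentration units."""
    return ic50 / (1.0 + radioligand_conc / radioligand_kd)


def beta1_selectivity_ratio(ki_beta2: float, ki_beta1: float) -> float:
    """Selectivity as defined in the protocol: Ki(beta2) / Ki(beta1)."""
    return ki_beta2 / ki_beta1


def schild_pa2(antagonist_conc_molar, dose_ratios):
    """Least-squares Schild regression of log10(DR - 1) on log10[B].
    Returns (slope, pA2); pA2 is -log10[B] where DR = 2, i.e. the x-intercept."""
    xs = [math.log10(b) for b in antagonist_conc_molar]
    ys = [math.log10(dr - 1.0) for dr in dose_ratios]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # The line crosses y = 0 at log10[B] = -intercept / slope, so pA2 = intercept / slope.
    return slope, intercept / slope


if __name__ == "__main__":
    # Hypothetical binding data (nM): IC50 at each subtype, with [L] = Kd = 1 nM.
    ki_b1 = cheng_prusoff_ki(200.0, 1.0, 1.0)
    ki_b2 = cheng_prusoff_ki(6000.0, 1.0, 1.0)
    print(f"Ki beta1 {ki_b1:.0f} nM, Ki beta2 {ki_b2:.0f} nM, "
          f"selectivity {beta1_selectivity_ratio(ki_b2, ki_b1):.0f}-fold")
    # Hypothetical dose ratios for an ideal competitive antagonist with KB = 100 nM.
    slope, pa2 = schild_pa2([1e-7, 1e-6, 1e-5], [2.0, 11.0, 101.0])
    print(f"Schild slope {slope:.2f}, pA2 {pa2:.2f}")
```

A Schild slope close to 1 is consistent with simple competitive antagonism; only then is pA2 usually taken as an estimate of pKB. Repeating the fit with β1- and β2-expressing cells gives the subtype comparison the protocol calls for.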

Ex Vivo and In Vivo Confirmation of Selectivity

Objective: To confirm the functional cardioselectivity of metoprolol in integrated physiological systems.

Methodology:

- Isolated Tissue Bath:

  - Mount an isolated guinea pig or rat right atrial strip (rich in β1 receptors) and an isolated tracheal strip or bronchial ring (relaxation mediated by β2 receptors) in organ baths containing oxygenated physiological solution.

  - Pre-contract the trachea with a muscarinic agonist (e.g., carbachol). For both tissues, establish a concentration-response curve to a non-selective β-agonist (isoprenaline), recording the increase in beating rate (positive chronotropy) in the atria and the relaxation of the pre-contracted trachea.

  - Repeat the concentration-response curves after incubating the tissues with a fixed concentration of metoprolol.

  - Data Analysis: Determine the dose-ratio (shift of the agonist curve) caused by metoprolol in each tissue. A significantly greater dose-ratio in the atrial tissue versus the tracheal tissue demonstrates functional cardioselectivity.

- In Vivo Hemodynamic and Pulmonary Model:

  - Use anesthetized, instrumented animals (e.g., dogs or pigs). Implant a flow probe on the ascending aorta to measure cardiac output and a pressure transducer in the left ventricle to measure contractility (dP/dt).

  - Instrument the airway to measure airway resistance.

  - Administer increasing intravenous doses of metoprolol. After each dose, challenge with a non-selective β-agonist (e.g., isoprenaline) and record heart rate and cardiac contractility (β1-mediated) together with the fall in airway resistance in pre-constricted airways (β2-mediated); a β2-selective agonist (e.g., salbutamol) can be used to probe the airway response on its own.

  - Data Analysis: Compare the degree to which metoprolol inhibits the cardiac (β1-mediated) versus pulmonary (β2-mediated) responses to agonist challenge. A cardioselective agent will antagonize the cardiac effects at doses that have minimal impact on the agonist-induced bronchodilation.
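For the isolated-tissue experiment, the dose ratios from the atrial and tracheal preparations can be converted into tissue-level affinity estimates with the single-concentration (Gaddum) form of the Schild equation, KB = [B]/(DR - 1). The EC50 values below are hypothetical, and this shortcut assumes simple competitive antagonism (a Schild slope near 1). It is a sketch, not a validated analysis pipeline.

```python
import math


def dose_ratio(ec50_with_antagonist: float, ec50_control: float) -> float:
    """Rightward shift of the agonist curve produced by the antagonist."""
    return ec50_with_antagonist / ec50_control


def pkb_from_single_dose_ratio(dr: float, antagonist_conc_molar: float) -> float:
    """Gaddum estimate: KB = [B] / (DR - 1), returned as pKB = -log10(KB)."""
    if dr <= 1.0:
        raise ValueError("dose ratio must exceed 1 to estimate KB")
    return math.log10(dr - 1.0) - math.log10(antagonist_conc_molar)


if __name__ == "__main__":
    metoprolol_m = 1e-6  # hypothetical fixed bath concentration (1 uM)
    # Hypothetical isoprenaline EC50s (nM) before and after metoprolol.
    dr_atria = dose_ratio(31.0, 1.0)    # rate response, beta1-dominated tissue
    dr_trachea = dose_ratio(4.0, 2.0)   # relaxation response, beta2-dominated tissue
    pkb_atria = pkb_from_single_dose_ratio(dr_atria, metoprolol_m)
    pkb_trachea = pkb_from_single_dose_ratio(dr_trachea, metoprolol_m)
    print(f"DR atria {dr_atria:.0f}, DR trachea {dr_trachea:.0f}")
    print(f"pKB atria {pkb_atria:.2f}, pKB trachea {pkb_trachea:.2f}, "
          f"tissue selectivity {10 ** (pkb_atria - pkb_trachea):.0f}-fold")
```

Under these assumed values the atrial pKB exceeds the tracheal pKB by about 1.5 log units (about 30-fold), which is the pattern the protocol treats as functional cardioselectivity.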

Visualization of Signaling Pathways and Experimental Workflows

Beta-Blocker Selectivity and Signaling Pathways

Diagram 1: Metoprolol's Selective Antagonism of the β1-Adrenergic Signaling Pathway. This diagram illustrates the canonical cAMP-PKA signaling pathway activated by catecholamines. Metoprolol (red) competitively and preferentially inhibits the β1-receptor in cardiac tissue, thereby blunting the downstream effects on heart rate and contractility. Its relative sparing of the β2-receptor in pulmonary tissue underlies its improved safety profile in patients with reactive airways. AC, adenylate cyclase; PKA, protein kinase A.

Experimental Workflow for Assessing Selectivity

Diagram 2: A Tiered Experimental Workflow for Profiling Beta-Blocker Selectivity. The workflow progresses from molecular-level in vitro assays to confirm direct receptor interaction, through tissue-level ex vivo experiments to measure functional antagonism, and finally to integrated in vivo models that confirm the physiological manifestation of selectivity. This multi-tiered approach is critical for comprehensive drug characterization. HR, heart rate.

The Scientist's Toolkit: Key Research Reagents and Materials

The experimental protocols for evaluating beta-blocker selectivity rely on a standardized set of research tools and reagents.

Table 3: Essential Research Reagent Solutions for Beta-Blocker Selectivity Studies

| Reagent / Material | Function and Application in Research |
|---|---|
| Cloned Cell Lines (e.g., CHO, HEK293) | Engineered to stably express high levels of human β1 or β2 adrenergic receptors. Essential for in vitro binding and functional assays to determine compound affinity and selectivity without interference from other receptor subtypes. |
| Radiolabeled Ligands (e.g., [³H]-DHA, [¹²⁵I]-Iodocyanopindolol) | Allow direct quantification of receptor binding. The degree to which a test compound (e.g., metoprolol) displaces a known radioligand is used to calculate its binding affinity (Ki). |
| cAMP Detection Kits (e.g., ELISA, FRET, HTRF) | Measure intracellular cAMP accumulation, the primary second messenger in β-receptor signaling. Used in functional assays to determine whether receptor blockade by an antagonist inhibits agonist-induced cAMP production. |
| Isolated Tissue Bath/Myograph System | Maintains viable isolated tissue specimens (atria, trachea) in a controlled physiological environment. Allows direct measurement of tissue contraction and relaxation in response to drugs, providing ex vivo confirmation of functional selectivity. |
| Selective Agonists & Antagonists (e.g., Isoprenaline, ICI 118,551, CGP 20712A) | Pharmacological tools used as reference controls. Isoprenaline (non-selective agonist) stimulates all β-receptors. CGP 20712A (β1-selective antagonist) and ICI 118,551 (β2-selective antagonist) are used to benchmark the selectivity of novel compounds. |

Metoprolol stands as a clear case study in the successful application of receptor selectivity to advance drug therapy. Its development as a cardioselective β1-antagonist was a direct response to the clinical limitations of non-selective predecessors, embodying the core research thesis that selective (rather than absolutely specific) targeting yields superior outcomes. The quantitative data from clinical studies and meta-analyses support the conclusion that this pharmacological selectivity preserves hemodynamic efficacy while mitigating the risk of bronchoconstriction, although the selectivity is relative and diminishes at higher doses. For researchers and drug development professionals, the structured experimental frameworks for quantifying selectivity, from molecular assays to integrated physiological models, provide a validated roadmap for the future development of ever-more-precise therapeutic agents. The journey from propranolol to metoprolol illustrates that in the intricate balance of biological systems, strategic selectivity is not merely an advantage but a fundamental principle of effective and safe pharmacologic intervention.

Strategic Implementation: Applying Specific and Selective Sensing in Drug Development Pipelines

The efficacy of diagnostic assays and therapeutic monitoring hinges on the precise molecular recognition offered by specific sensing tools. Antibodies, aptamers, and enzymatic lock-and-key assays represent three cornerstone classes of these tools, each with distinct mechanisms and applications in biomedical research and drug development. Specificity, the ability to bind a single target, and selectivity, the ability to discriminate the target from similar interferents, are paramount for reliable results. This guide provides an in-depth technical comparison of these biorecognition elements, framing them within the critical context of specific versus selective sensing approaches. It is designed to equip researchers and scientists with the knowledge to select and optimize the appropriate tool for their specific application, from basic research to clinical diagnostics.