Statistical Methods for Biosensor Design: A Data-Driven Approach to Optimization


Abstract

This article explores the transformative role of statistical methods and machine learning in navigating the complex design space of biosensors, a critical technology for healthcare, diagnostics, and biomanufacturing. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive guide from foundational concepts to advanced applications. We cover the principles of tuning biosensor parameters like dynamic range and sensitivity, detail practical methodologies such as Design of Experiments (DoE) and automated high-throughput screening, and address troubleshooting for context-dependent performance. The piece concludes with validation strategies and comparative analyses of different biosensor architectures, highlighting how data-driven approaches accelerate the development of robust, high-performance biosensing systems for precision medicine and point-of-care diagnostics.

Navigating the Biosensor Design Space: Core Parameters and Challenges

A biosensor is an analytical device that integrates a biological recognition element with a physicochemical transducer to convert a biological event into a measurable signal [1]. This integrated system allows for the sensitive and specific detection of target analytes, ranging from simple metabolites to complex biomolecules and whole cells [1]. The performance and reliability of any biosensor are determined by a set of core, tunable parameters that define its operational characteristics and suitability for specific applications, whether in drug development, clinical diagnostics, or bioprocess monitoring [2] [1].

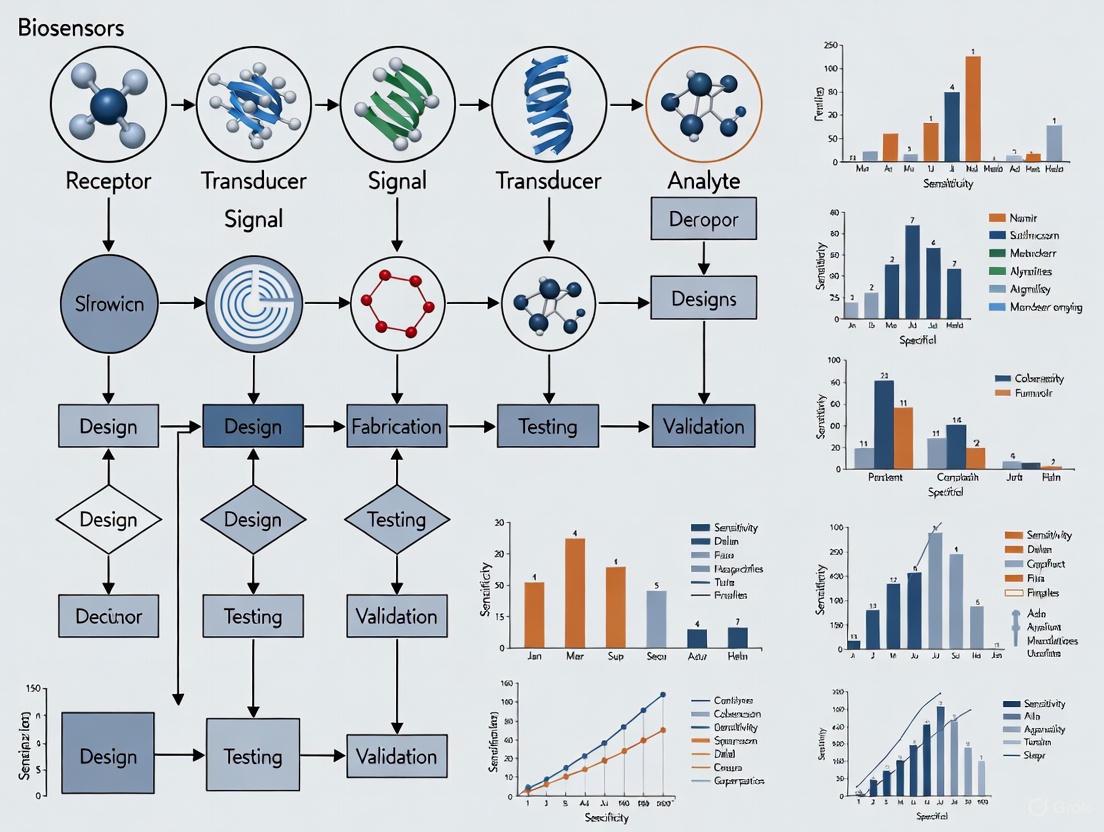

The design space of a biosensor encompasses all the variable elements that can be systematically modified to optimize its function. Understanding this design space is critical for researchers aiming to develop robust biosensors for precise biomolecular analysis. Key performance metrics include sensitivity (the change in output signal per unit change in analyte concentration), specificity (the ability to distinguish the target from interferents), dynamic range (the span between minimal and maximal detectable signals), operating range (the concentration window for optimal performance), and response time (the speed of reaction to analyte changes) [2]. Additional characteristics such as signal-to-noise ratio and stability under operational conditions further complete the performance profile [2] [1]. The following diagram illustrates the fundamental architecture of a biosensor and the flow of information from recognition to output.

Core Tunable Parameters in Biosensor Design

The performance of a biosensor is governed by the careful balancing of multiple interdependent parameters. These can be broadly categorized into parameters related to the biorecognition element, the transducer, and the overall system integration.

Biorecognition Element Selection and Properties

The choice of biorecognition element primarily determines the sensor's specificity and the range of analytes it can detect.

Table 1: Types of Biosensors and Their Characteristics

| Category | Biosensor Type | Sensing Principle | Key Advantages | Typical Analytes |
|---|---|---|---|---|
| Protein-Based | Transcription Factors (TFs) | Ligand binding induces DNA interaction to regulate gene expression [2]. | Suitable for high-throughput screening; broad analyte range [2]. | Metabolites, ions, small molecules [2]. |
| Protein-Based | Two-Component Systems (TCSs) | Sensor kinase autophosphorylates and transfers signal to a response regulator [2]. | High adaptability; environmental signal detection [2]. | Extracellular ions, nutrients [2]. |
| Protein-Based | Enzyme-based Sensors | Substrate-specific catalytic activity generates a measurable output [2]. | High specificity; rapid response [2]. | Sugars, lactate, glutamate [1]. |
| RNA-Based | Riboswitches | Ligand-induced RNA conformational change affects translation [2]. | Compact; integrates well into metabolic regulation [2]. | Nucleotides, amino acids [2]. |
| RNA-Based | Toehold Switches | Base-pairing with trigger RNA activates translation of downstream genes [2]. | Programmable; enables logic-gated pathway control [2]. | Specific RNA sequences [2]. |

Performance and Operational Parameters

Beyond the core recognition element, a suite of performance parameters must be characterized and tuned for optimal function.

Table 2: Key Performance Metrics for Biosensor Characterization

| Parameter | Definition | Tuning Methods | Impact on Performance |
|---|---|---|---|
| Dose-Response & Dynamic Range | The relationship between analyte concentration and output signal, defining the minimal and maximal detectable signals [2]. | Modifying promoter strength, ribosome binding sites (RBS), and operator regions [2]. | Defines the useful detection window; must match expected analyte concentrations [2]. |
| Sensitivity | The slope of the dose-response curve; the change in output per unit change in analyte concentration [2]. | Adjusting plasmid copy number, tuning protein expression levels [2]. | Determines the ability to detect small concentration changes; high sensitivity can reduce false negatives [2]. |
| Response Time | The speed at which the biosensor reacts to changes in analyte concentration [2]. | Using faster-acting components (e.g., riboswitches) or hybrid systems [2]. | Critical for real-time monitoring; slow response hinders dynamic control [2]. |
| Signal-to-Noise Ratio | The ratio of the specific output signal to the background variability [2]. | Directed evolution, optimizing immobilization methods, using antifouling coatings [2] [1]. | Affects resolution and reliability; low SNR can obscure true signal in high-throughput screens [2]. |
| Specificity | The ability to respond only to the intended target analyte and not to structurally similar compounds [1]. | Engineering substrate binding pockets (e.g., chimeric fusions), using high-affinity aptamers [2] [1]. | Reduces false positives in complex samples like serum or cell lysates [1]. |

Engineering Methodologies and Experimental Protocols

Engineering an effective biosensor involves iterative cycles of design, build, test, and analysis. The workflow below outlines a generalized protocol for developing and optimizing a biosensor, incorporating both rational design and high-throughput screening strategies.

Detailed Protocol: Development of a Protein-Based Biosensor (SweetTrac1)

The development of SweetTrac1, a biosensor derived from the Arabidopsis SWEET1 sugar transporter, provides a concrete example of a biosensor engineering pipeline [3].

Identification of Insertion Site:

- A circularly permuted green fluorescent protein (cpsfGFP) was inserted into the intracellular loop connecting the third and fourth transmembrane helices of the transporter [3].

- A homology model based on a related protein structure was used to select six potential insertion sites.

- Functional Screening: The optimal insertion site (after K93) was identified using a yeast complementation assay. Chimeras were expressed in a Saccharomyces cerevisiae strain (EBY4000) that lacks endogenous hexose carriers. The site that best restored growth on glucose media was selected, as it indicated retained transport functionality [3].

Linker Optimization via High-Throughput Screening:

- A gene library was created with degenerate codons to randomize the amino acid sequences of the linkers connecting the split transporter to the cpsfGFP.

- Fluorescence-Activated Cell Sorting (FACS): Approximately 450,000 yeast cells expressing the biosensor library were screened via FACS to remove non-fluorescent variants and isolate the top ~900 cells with the highest green fluorescence [3].

- Response-Based Selection: The sorted cells were regrown and individually tested for fluorescence change in response to glucose addition. Forty-four outliers with the largest responses were sequenced to identify successful linker combinations [3].

- Consensus Design: Statistical analysis of the winning linker sequences was performed. The most frequent amino acids at each position were combined to create the final variant, SweetTrac1, with linkers DGQ and LTR [3].
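The consensus step described above (taking the most frequent amino acid at each linker position) can be sketched in a few lines. This is a minimal illustration, not the analysis code used in [3]: the function name and the example linker sequences are hypothetical, and ties are simply resolved in favor of the residue seen first.

```python
from collections import Counter


def consensus_sequence(sequences):
    """Return the most frequent residue at each position of equal-length sequences."""
    if not sequences:
        raise ValueError("need at least one sequence")
    length = len(sequences[0])
    if any(len(seq) != length for seq in sequences):
        raise ValueError("all sequences must have the same length")
    # zip(*sequences) yields one tuple of residues per linker position.
    return "".join(
        Counter(column).most_common(1)[0][0] for column in zip(*sequences)
    )


# Hypothetical linker hits, invented for illustration only.
hits = ["DGQ", "DGQ", "DAQ", "EGQ", "DGK"]
print(consensus_sequence(hits))  # prints DGQ
```

A per-position majority vote ignores correlations between positions, so a consensus built this way would still need to be tested experimentally, as was done for the final variant.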

Functional Characterization and Validation:

- Transport Assay: The biosensor's ability to transport glucose was confirmed using [14C]-glucose influx assays in the EBY4000 yeast strain, verifying that its kinetics were similar to the wild-type transporter [3].

- Specificity Control: Key amino acids near the substrate-binding site were mutated (e.g., P23A, N73A, N192A). Mutants that lost transport capability also lost the fluorescence response, confirming that the signal was correlated with substrate binding and transport [3].

- Photophysical Characterization: Excitation and emission spectra of SweetTrac1 were recorded, showing a major excitation peak at ~490 nm and an emission peak at ~515 nm, with intensity increasing upon glucose addition [3].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagent Solutions for Biosensor Development and Implementation

| Reagent / Material | Function / Description | Example Application |
|---|---|---|
| Circularly Permuted GFP (cpsfGFP) | A fluorescent protein variant where the original N- and C-termini are linked and new termini are created elsewhere, making it sensitive to conformational changes [3]. | Core component in FRET-based and conformation-based biosensors like SweetTrac1 [3]. |
| Fluorescence-Activated Cell Sorter (FACS) | An instrument that rapidly analyzes and sorts individual cells based on their fluorescent properties [3]. | High-throughput screening of biosensor variant libraries for fluorescence intensity and dynamic range [2] [3]. |
| Spherical Nucleic Acids (SNAs) | Gold nanoparticles densely functionalized with a shell of oligonucleotides (DNA or RNA) [4]. | Used as probes in colorimetric and lateral flow biosensors; density of DNA can be tuned to modulate binding accessibility [4]. |
| CRISPR/Cas System (e.g., Cas12a) | A programmable gene-editing system that can also be repurposed for diagnostics due to its collateral cleavage activity upon target recognition [5]. | Provides extreme specificity for nucleic acid detection; can be coupled with isothermal amplification for portable biosensors [5] [4]. |
| Nanomaterials (e.g., MXenes, Quantum Dots) | Engineered materials with high surface-to-volume ratio and unique optical, electrical, or catalytic properties [5] [6]. | Used to enhance signal transduction, improve sensitivity, and facilitate miniaturization in electrochemical and optical biosensors [6]. |

Data Interpretation and Analysis Frameworks

The final, critical phase of working with biosensors involves converting raw data into reliable, quantitative information. A mathematical model based on mass action kinetics can be formulated to correlate the fluorescence response of a biosensor (e.g., SweetTrac1) with the net transport rate of its substrate [3]. Such models allow researchers to move beyond qualitative observations to precise, quantitative measurements of analyte flux and concentration.

Statistical and machine learning approaches are increasingly vital for analyzing complex biosensor data, especially in multiplexed formats. For instance, deep learning models can be trained to analyze images from experiments where over 100 different fluorescent biosensors are tracked concurrently in barcoded cell populations [7]. This enables the deciphering of complex signaling network interactions and temporal relationships that would be impossible to unravel manually. The diagram below conceptualizes this data interpretation pipeline.

The process typically begins with raw signal preprocessing, including baseline correction and noise reduction, to isolate the specific biosensor response [1]. The cleaned data is then fitted to a mathematical model (e.g., a dose-response curve or kinetic transport model) to extract key parameters such as binding affinity ($K_d$), maximum response ($V_{max}$), and half-life [2] [3]. For highly multiplexed systems, advanced computational tools like deep learning are required to deconvolute signals from multiple biosensors and reveal underlying network structures [7]. This structured approach to data analysis ensures that the tunable parameters defined in the design phase are accurately measured and validated, closing the loop on the biosensor engineering cycle.
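The first two preprocessing steps named above, baseline correction and noise reduction, might look like the following. This is a minimal sketch under stated assumptions rather than the pipeline of any cited study: the function names, the choice of a pre-stimulus mean as the baseline, the centered moving average, and the example trace are all hypothetical.

```python
from statistics import mean


def baseline_correct(trace, n_baseline):
    """Subtract the mean of the first n_baseline (pre-stimulus) samples."""
    if not 0 < n_baseline <= len(trace):
        raise ValueError("n_baseline must be between 1 and len(trace)")
    offset = mean(trace[:n_baseline])
    return [value - offset for value in trace]


def moving_average(trace, window):
    """Smooth with a centered moving average; the window shrinks at the edges."""
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    smoothed = []
    for i in range(len(trace)):
        lo = max(0, i - half)
        hi = min(len(trace), i + half + 1)
        smoothed.append(mean(trace[lo:hi]))
    return smoothed


# Hypothetical fluorescence trace: three pre-stimulus samples, then a response.
raw = [2.0, 2.0, 2.0, 5.0, 6.0, 6.5, 6.4]
cleaned = moving_average(baseline_correct(raw, n_baseline=3), window=3)
print(cleaned)
```

Smoothing trades temporal resolution for noise suppression, so the window should stay short relative to the response time being measured.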

In the field of biosensing, the ability to quantitatively measure biological and chemical analytes with precision and reliability is paramount. Performance metrics provide the essential framework for evaluating, comparing, and optimizing biosensor designs, bridging the gap between theoretical potential and practical application. For researchers and drug development professionals exploring the biosensor design space with statistical methods, a deep understanding of these metrics is not merely academic—it is a fundamental requirement for innovation. These metrics, including sensitivity, dynamic range, specificity, and cooperativity, serve as quantifiable indicators of a biosensor's operational quality and determine its suitability for specific applications, from point-of-care diagnostics to real-time process monitoring in biomanufacturing [8]. The systematic evaluation of these parameters enables the data-driven selection and refinement of biosensors, ensuring they meet the stringent demands of modern biotechnology and pharmaceutical development.

This whitepaper provides an in-depth technical examination of these four core performance metrics. It delineates their precise definitions, establishes their quantitative significance, and presents structured experimental methodologies for their determination. Furthermore, it illustrates the practical application of these concepts through a contemporary case study and provides a foundational toolkit for researchers embarking on biosensor characterization. The integration of these metrics within a statistical research framework allows for the development of robust, predictable, and highly optimized sensing systems, ultimately accelerating the translation of novel biosensor technologies from laboratory benches to real-world applications.

Defining the Core Performance Metrics

A biosensor's performance is characterized by its ability to reliably detect and quantify a target analyte within a complex sample matrix. The following metrics form the cornerstone of this characterization.

Sensitivity refers to the magnitude of the output signal change for a given change in analyte concentration. A highly sensitive biosensor produces a significant signal shift in response to a small concentration change, which is critical for detecting low-abundance biomarkers or toxins. Sensitivity is often derived from the slope of the calibration curve within its linear range and directly influences the limit of detection (LOD), the lowest analyte concentration that can be reliably distinguished from zero [9] [10].

Dynamic Range describes the span of analyte concentrations over which the biosensor provides a usable quantitative response. It is bounded at the lower end by the LOD and at the upper end by the point of signal saturation, beyond which increases in concentration no longer produce a significant change in output. A wide dynamic range is essential for applications requiring the quantification of analytes that can vary widely in concentration, such as glucose in blood or metabolites in fermentation broths [9].

Specificity is the biosensor's ability to distinguish the target analyte from other non-target substances in the sample. High specificity minimizes false positives caused by interference from structurally similar molecules, matrix effects, or nonspecific binding. This metric is a direct reflection of the molecular recognition element's affinity for its intended ligand [9].

Cooperativity describes the nature of the binding interaction between the analyte and the recognition element, which influences the shape of the dose-response curve. Positive cooperativity results in a sigmoidal response curve, where the binding of the first analyte molecule facilitates the binding of subsequent molecules. This can sharpen the biosensor's response within a specific concentration window, which is advantageous for applications requiring a binary decision. Cooperativity is quantitatively described by the Hill coefficient [9].

Table 1: Key Performance Metrics for Biosensor Evaluation

| Metric | Definition | Quantitative Descriptor | Impact on Performance |
|---|---|---|---|
| Sensitivity | Change in output signal per unit change in analyte concentration. | Slope of the linear region of the calibration curve. | Determines the limit of detection and ability to measure small concentration changes. |
| Dynamic Range | Range of analyte concentrations between the lower and upper detection limits. | Concentration at LOD to concentration at signal saturation. | Defines the operational window for quantitative measurement without sample dilution. |
| Specificity | Ability to respond only to the target analyte and not to interferents. | Signal ratio (Target vs. Non-target analyte); Cross-reactivity data. | Reduces false positives and ensures measurement accuracy in complex samples. |
| Cooperativity | Degree to which binding of one analyte molecule influences subsequent binding. | Hill coefficient (nH); Shape of dose-response curve (sigmoidal vs. hyperbolic). | Affects the sharpness of the response transition and the effective switching range. |

Quantitative Analysis of Biosensor Performance

A rigorous, quantitative approach is necessary to compare biosensor performance across different designs and platforms. The data is typically generated from dose-response experiments and visualized through standardized curves.

Dose-Response Curves and Metric Extraction: The relationship between analyte concentration and biosensor output is fundamental. A typical dose-response curve for a biosensor, particularly one based on a transcription factor, is often sigmoidal when plotted on a semi-logarithmic axis [9]. From this curve, all key metrics can be derived. The dynamic range is visualized as the concentration range over which the signal transitions from its baseline to its maximum value. The sensitivity is highest in the linear portion of the curve's mid-section, corresponding to the steepest slope. The presence and degree of cooperativity are indicated by the steepness of this sigmoidal curve, which is quantified by the Hill slope. A Hill slope greater than 1 indicates positive cooperativity, a slope of 1 indicates non-cooperative (Michaelis-Menten) behavior, and a slope less than 1 suggests negative cooperativity [9].
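The link between Hill slope and peak sensitivity can be made explicit with a short derivation. It assumes the response follows a four-parameter logistic form; the shorthand $u$ and the symbol $h$ for the Hill slope are introduced here only for this illustration.

$$
Y(X) = \text{Bottom} + \frac{\text{Top} - \text{Bottom}}{1 + u}, \qquad u = \left(\frac{EC_{50}}{X}\right)^{h}
$$

$$
\frac{dY}{d\ln X} = (\text{Top} - \text{Bottom})\, h\, \frac{u}{(1 + u)^{2}}
$$

$$
\left.\frac{dY}{d\ln X}\right|_{X = EC_{50}} = \frac{(\text{Top} - \text{Bottom})\, h}{4}
$$

Because $u/(1+u)^2$ peaks at $u = 1$, the slope on a semi-logarithmic plot is steepest at $X = EC_{50}$ and scales linearly with $h$. Under this model, a sensor with a Hill slope of 2 is twice as steep at its midpoint as a non-cooperative sensor with the same signal span.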

Quantifying Specificity: Specificity is evaluated by challenging the biosensor with a panel of potential interferents that are structurally similar or commonly found in the intended sample matrix. The output signals are then compared to the signal generated by the target analyte. This data is often presented as a bar chart or tabulated as cross-reactivity percentages, calculated as (Signal from Interferent / Signal from Target) × 100% at equivalent concentrations. A highly specific biosensor will show minimal response to non-target molecules [9].

Table 2: Representative Performance Data from Various Biosensor Technologies

| Biosensor Technology / Target | Sensitivity (LOD) | Dynamic Range | Specificity (Key Interferents Tested) | Cooperativity (Hill Slope) | Source Context |
|---|---|---|---|---|---|
| Transcription Factor (TF)-Based Sensor (General) | Defined by calibration curve slope | Range between lower and upper limits | Difference in output for target vs. alternative ligands | High cooperativity from multi-step binding or TF multimerization | [9] |
| Gold Nanorod Molecular Probe (IgG) | Low nanomolar (10⁻⁹ M) | 10⁻⁹ M to 10⁻⁶ M | High; minimal non-specific binding after functionalization | Not Specified | [10] |
| cdGreen2 (c-di-GMP Biosensor) | Kd = 214 nM | Responds to conc. from <50 nM to >5 µM | High ligand specificity; validated via binding site mutation | Sigmoidal response; Hill slope = 2.30 (Positive) | [11] |
| SERS-based α-Fetoprotein Sensor | 16.73 ng/mL | 0 - 500 ng/mL | High; uses monoclonal anti-α-fetoprotein antibodies | Not Applicable | [12] |

Experimental Protocols for Metric Characterization

Standardized experimental protocols are crucial for generating reproducible and comparable data on biosensor performance. The following sections outline general methodologies for characterizing the core metrics.

Protocol for Determining Sensitivity and Dynamic Range

This protocol describes a general procedure for establishing the calibration curve of a biosensor, from which sensitivity and dynamic range are derived.

- Sample Preparation: Prepare a dilution series of the target analyte in an appropriate buffer. The series should span a concentration range expected to cover from below the anticipated LOD to well above the saturation point. Use a minimum of 8-10 data points, spaced logarithmically, for accurate curve fitting. Include a blank sample (zero analyte) for background subtraction.

- Signal Acquisition: For each concentration in the dilution series, introduce the sample to the biosensor and measure the output signal according to the biosensor's standard operating procedure (e.g., measure fluorescence, electrochemical current, or optical shift). For each concentration, perform a minimum of n=3 technical replicates to assess variability.

- Data Analysis:

  - Plot the mean signal value (Y-axis) against the analyte concentration (X-axis). The X-axis is typically on a logarithmic scale for sigmoidal curves.

  - Fit an appropriate mathematical model to the data. For many biosensors, a four-parameter logistic (4PL) sigmoidal model is used:

    $$Y = \text{Bottom} + \frac{\text{Top} - \text{Bottom}}{1 + (EC_{50}/X)^{\text{HillSlope}}}$$

  - The sensitivity at any point is the first derivative of the fitted curve. The linear dynamic range is often defined as the concentration range between EC20 and EC80 (the concentrations eliciting 20% and 80% of the maximum response, respectively).

  - The Limit of Detection (LOD) is typically calculated as the concentration corresponding to the signal of the blank plus three times the standard deviation of the blank.
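Once the 4PL parameters have been fitted, the quantities in the data analysis step follow from closed-form expressions. The sketch below assumes the fit has already been done with a tool of your choice. The function names and all numeric values are hypothetical, and the LOD calculation assumes the threshold signal lies strictly between Bottom and Top.

```python
from statistics import mean, stdev


def four_pl(x, bottom, top, ec50, hill):
    """Four-parameter logistic response at concentration x (x > 0)."""
    return bottom + (top - bottom) / (1.0 + (ec50 / x) ** hill)


def inverse_four_pl(y, bottom, top, ec50, hill):
    """Concentration that produces signal y; valid only for bottom < y < top."""
    if not bottom < y < top:
        raise ValueError("signal must lie strictly between bottom and top")
    return ec50 * ((y - bottom) / (top - y)) ** (1.0 / hill)


def ec_fraction(fraction, ec50, hill):
    """Concentration eliciting a given fraction (0 to 1) of the response span."""
    return ec50 * (fraction / (1.0 - fraction)) ** (1.0 / hill)


def sensitivity(x, bottom, top, ec50, hill):
    """Analytical first derivative dY/dX of the 4PL curve at concentration x."""
    u = (ec50 / x) ** hill
    return (top - bottom) * hill * u / (x * (1.0 + u) ** 2)


def lod_concentration(blank_signals, bottom, top, ec50, hill):
    """Concentration at mean(blank) + 3 * SD(blank), read off the fitted curve."""
    threshold = mean(blank_signals) + 3.0 * stdev(blank_signals)
    return inverse_four_pl(threshold, bottom, top, ec50, hill)


# Hypothetical fitted parameters and blank replicates (arbitrary units).
params = dict(bottom=100.0, top=1100.0, ec50=50.0, hill=1.5)
blanks = [98.0, 101.0, 103.0, 99.0, 104.0]

print("EC20:", ec_fraction(0.2, params["ec50"], params["hill"]))
print("EC80:", ec_fraction(0.8, params["ec50"], params["hill"]))
print("Slope at EC50:", sensitivity(50.0, **params))
print("LOD:", lod_concentration(blanks, **params))
```

Keeping the inverse function separate makes it reusable for converting any measured signal back to a concentration, not only the LOD threshold.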

Protocol for Assessing Specificity and Cross-Reactivity

This protocol evaluates the biosensor's response to non-target molecules to confirm specificity.

- Interferent Selection: Compile a list of potential interferents, including molecules structurally analogous to the target, metabolites, and salts or proteins expected in the sample matrix.

- Sample Preparation: Prepare solutions of the target analyte at a concentration near its EC50. Separately, prepare solutions of each potential interferent at the same concentration, and optionally, at a higher physiological or environmentally relevant concentration. Also, prepare a mixture of the target and each interferent.

- Signal Acquisition and Analysis: Measure the biosensor's response to the target, each interferent alone, and the mixtures. The response to each interferent alone should be negligible compared to the target. The response to the mixture should not significantly differ from the target alone. Calculate the cross-reactivity percentage for each interferent as:

(Signal_Interferent / Signal_Target) * 100%.
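The cross-reactivity formula can be applied to a whole interferent panel in a short loop. This is a minimal sketch: the function name, the interferent labels, and the readings are hypothetical, and the optional blank subtraction is an added assumption that is not part of the formula as stated.

```python
def cross_reactivity_percent(signal_interferent, signal_target, blank=0.0):
    """(Signal_Interferent / Signal_Target) * 100, after optional blank subtraction."""
    net_target = signal_target - blank
    if net_target <= 0:
        raise ValueError("target signal must exceed the blank")
    return (signal_interferent - blank) / net_target * 100.0


# Hypothetical readings at equal concentrations (arbitrary fluorescence units).
target_signal = 1200.0
blank_signal = 100.0
panel = {"analog_A": 150.0, "analog_B": 105.0, "matrix_salt": 100.0}

for name, signal in panel.items():
    percent = cross_reactivity_percent(signal, target_signal, blank_signal)
    print(f"{name}: {percent:.1f}% cross-reactivity")
```

Without blank subtraction, background signal inflates every percentage by the same offset, which can make a specific sensor look cross-reactive.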

Protocol for Evaluating Cooperativity

Cooperativity is assessed by analyzing the shape of the dose-response curve.

- Data Collection: Use the comprehensive dose-response data collected in the protocol for determining sensitivity and dynamic range above.

- Curve Fitting: Fit the data to the Hill equation, a variant of the 4PL model:

  $$Y = \text{Bottom} + \frac{\text{Top} - \text{Bottom}}{1 + (K_d/X)^{n_H}}$$

  where $n_H$ is the Hill coefficient.

- Interpretation: The value of the Hill coefficient ($n_H$) quantifies cooperativity. $n_H > 1$ indicates positive cooperativity, $n_H = 1$ indicates no cooperativity (Michaelis-Menten kinetics), and $n_H < 1$ indicates negative cooperativity. The steepness of the curve is directly related to the $n_H$ value.
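One simple way to estimate the Hill coefficient is the linearized Hill plot, which follows from rearranging the Hill equation above: with θ = (Y − Bottom)/(Top − Bottom), ln(θ/(1 − θ)) = nH·ln(X) − nH·ln(Kd). The sketch below fits that line by ordinary least squares. It assumes Bottom and Top are already known and that noise is small. For real data a nonlinear fit of all four parameters is usually preferable. The function names are hypothetical, and the data are synthetic, generated from illustrative parameters.

```python
import math


def hill_response(x, bottom, top, kd, n_h):
    """Hill-equation response at concentration x (x > 0)."""
    return bottom + (top - bottom) / (1.0 + (kd / x) ** n_h)


def fit_hill_plot(concentrations, signals, bottom, top):
    """Estimate (n_h, kd) by least squares on ln(theta/(1-theta)) vs ln(x)."""
    xs, ys = [], []
    for x, y in zip(concentrations, signals):
        theta = (y - bottom) / (top - bottom)
        if 0.0 < theta < 1.0:  # the logit is undefined at or beyond the plateaus
            xs.append(math.log(x))
            ys.append(math.log(theta / (1.0 - theta)))
    if len(xs) < 2:
        raise ValueError("need at least two points between the plateaus")
    x_bar = sum(xs) / len(xs)
    y_bar = sum(ys) / len(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx                   # slope of the Hill plot equals n_h
    intercept = y_bar - slope * x_bar   # intercept equals -n_h * ln(kd)
    return slope, math.exp(-intercept / slope)


# Synthetic, noise-free data from illustrative parameters (concentrations in nM).
concs = [20.0, 50.0, 100.0, 200.0, 500.0, 1000.0, 2000.0]
signals = [hill_response(c, 0.0, 1.0, 214.0, 2.3) for c in concs]

n_h, kd = fit_hill_plot(concs, signals, bottom=0.0, top=1.0)
print(f"n_H = {n_h:.2f}, K_d = {kd:.0f} nM")
```

The logit transform amplifies noise near the plateaus, so points with θ close to 0 or 1 are often excluded or down-weighted in practice.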

Case Study: Dissecting Performance in a Genetically Encoded c-di-GMP Biosensor

The development and characterization of "cdGreen2," a genetically encoded ratiometric biosensor for the bacterial second messenger c-di-GMP, serves as an exemplary model for applying the core performance metrics in a real-world research scenario [11].

Sensor Design and Objective: The researchers aimed to create a biosensor that could monitor dynamic changes of c-di-GMP with high temporal resolution in single bacterial cells. They started with a circularly permuted EGFP (cpEGFP) scaffold sandwiched between two c-di-GMP-binding domains from the protein BldD. A directed evolution approach using iterative fluorescence-activated cell sorting (FACS) under alternating c-di-GMP regimes was employed to select variants with improved performance [11].

Performance Characterization:

- Sensitivity and Dynamic Range: The purified cdGreen2 biosensor was tested in vitro with a dilution series of c-di-GMP. The resulting dose-response curve exhibited a sigmoidal profile, yielding a fitted dissociation constant (Kd) of 214 nM, indicating high ligand affinity and therefore sensitivity to sub-micromolar c-di-GMP. The sensor was functional across a physiologically relevant range, responding to c-di-GMP concentrations from below 50 nM to over 5 µM in vivo [11].

- Cooperativity: The sigmoidal shape of the dose-response curve and a calculated Hill slope of 2.30 provided strong evidence for positive cooperativity in ligand binding. This is consistent with the intended design, as the BldD domains dimerize upon c-di-GMP binding, a process that inherently involves cooperative interactions [11].

- Specificity: To validate that the observed signal was specific to c-di-GMP, the researchers performed a critical control experiment. They introduced point mutations (Arg and Asp substitutions) into the ligand-binding motif of the BldD domain, which were known to abrogate c-di-GMP binding. The mutant sensor failed to respond to c-di-GMP, confirming that the signal generation was specifically dependent on ligand binding to the intended site and not an artifact [11].

This case study demonstrates a comprehensive performance evaluation, where quantitative metrics were used to validate the success of the engineering strategy and establish the biosensor's reliability for biological applications.

The Scientist's Toolkit: Research Reagent Solutions

The following table outlines key reagents and materials essential for the development and characterization of biosensors, as exemplified by the research in the cited literature.

Table 3: Essential Research Reagents for Biosensor Development and Characterization

| Reagent / Material | Function / Role | Example from Literature |
|---|---|---|
| Circularly Permuted Fluorescent Proteins (cpFP) | Scaffold for intensiometric biosensors; optical properties change upon analyte binding. | cpEGFP used as the core scaffold in the cdGreen2 biosensor [11]. |
| Ligand-Binding Domains | Provide specificity for the target analyte. | C-terminal domains (CTDs) of the BldD transcription factor used to bind c-di-GMP [11]. |
| Flexible / Rigid Peptide Linkers | Genetically encoded connections between protein domains; tuning length and rigidity affects sensor performance. | Linker libraries with Gly-Ser (flexible) and Pro-Ala (stiff) repeats were engineered in cdGreen2 [11]. |
| Reference Fluorescent Protein | Enables ratiometric measurement, normalizing for sensor concentration and environmental variability. | mScarlet-I inserted into cdGreen2 to create the "Matryoshka" ratiometric version [11]. |
| Directed Evolution System | Platform for iterative screening and improvement of biosensor variants. | E. coli strain with tunable c-di-GMP levels used for FACS-based selection [11]. |
| Gold Nanorods (GNRs) | Nanoscale transducers for optical biosensors; plasmonic properties are sensitive to local environment. | Functionalized with antibodies to create gold nanorod molecular probes (GNrMPs) [10]. |
| Alkanethiols (e.g., MUA) | Form a self-assembled monolayer on gold surfaces for subsequent biomolecule immobilization. | 11-mercaptoundecanoic acid (MUA) used to replace CTAB coating on GNRs to reduce nonspecific binding [10]. |

The rigorous characterization of sensitivity, dynamic range, specificity, and cooperativity is not the final step in biosensor development but a guiding principle throughout the design process. These metrics provide a common language for researchers to communicate performance, compare technologies, and identify areas for improvement. As the field advances, the integration of statistical modeling and machine learning with biosensor design is poised to further refine our understanding and control over these parameters [13] [14]. For drug development professionals and scientists, a mastery of these metrics is essential for selecting the right tool for the task, validating its performance, and ultimately, trusting the data it generates. By anchoring biosensor evaluation in these fundamental, quantitative principles, the scientific community can continue to push the boundaries of what is measurable, driving innovation in diagnostics, biomanufacturing, and fundamental biological research.

The engineering of synthetic genetic circuits represents a cornerstone of synthetic biology, enabling the reprogramming of cellular behavior for applications ranging from living therapeutics to advanced biomanufacturing [15]. A fundamental challenge that consistently emerges in this field is the pervasive interdependence of genetic components, where the function and performance of any single part are inextricably linked to its genetic context and the host's cellular machinery. This interdependency violates the ideal of modularity that underpins traditional engineering disciplines, making the predictable design of complex circuits exceptionally difficult. The "synthetic biology problem" is precisely this discrepancy between qualitative design intentions and quantitative performance outcomes in living systems [16]. These interdependencies manifest across multiple layers, from direct protein-DNA interactions to resource competition for the host's transcriptional and translational machinery. This technical guide explores the nature of these interdependencies within the context of advanced biosensor design, providing researchers with a framework for understanding, quantifying, and mitigating these challenges through statistical methods and robust design principles. The implications are significant for drug development professionals seeking to create reliable cellular biosensors or implement complex genetic programs in therapeutic cells.

Core Classes of Interdependencies

Component-Level Interdependencies

At the most fundamental level, interdependencies arise from the physical and functional interactions between genetic components. Transcriptional regulators, including DNA-binding proteins, invertases, and CRISPR-based systems, exhibit context-dependent behavior that complicates their modular composition [15].

DNA-Binding Proteins: Classic repressors like TetR, LacI, and CI, along with newer variants such as zinc finger proteins and TALEs, function by binding specific operator sequences to block RNA polymerase progression. While conceptually simple, their effective binding affinity and leakage levels are highly dependent on promoter architecture and the specific chromosomal context [15]. This context dependence means a repressor that functions optimally in one circuit may exhibit significantly different dynamics in another.

CRISPRi Systems: CRISPR interference systems utilize a catalytically dead Cas9 protein (dCas9) complexed with guide RNAs (gRNAs) to target specific DNA sequences. Although celebrated for their designability through complementary guide RNA sequences, these systems introduce additional interdependencies between the guide RNA expression level, stability, and Cas9 binding kinetics [15]. Furthermore, the requirement for multiple gRNAs in complex circuits can lead to competition for a limited pool of dCas9, introducing hidden coupling between seemingly independent regulatory pathways.

Invertases and Recombinases: Site-specific recombinases such as Cre, Flp, and serine integrases facilitate irreversible genetic changes ideal for memory circuits. However, their reaction kinetics are comparatively slow (2-6 hours) and can generate mixed populations when targeting multicopy plasmids [15]. This stochasticity introduces interdependence between circuit state and copy number dynamics, making quantitative prediction challenging.

System-Level Interdependencies

As circuit complexity increases, new system-level interdependencies emerge that extend beyond direct component interactions:

Metabolic Burden: The engineered genetic circuit operates within a living cell that has finite resources. Heterologous gene expression necessarily competes with native cellular processes for energy, nucleotides, amino acids, and ribosomal machinery. This competition creates a hidden coupling between all circuit components, where overexpression of one gene can indirectly diminish the expression of others by draining shared cellular resources [16]. This effect becomes particularly pronounced in large circuits, ultimately limiting their design capacity.

Cross-Talk and Orthogonality: True orthogonality—where components function without unintended interactions—remains an elusive goal in genetic circuit engineering. Despite efforts to create orthogonal regulatory systems, unexpected cross-talk frequently occurs between synthetic components and native cellular networks, or between supposedly insulated synthetic modules [15]. This cross-talk can manifest as promoter leakage, non-specific transcription factor binding, or metabolic interference.

Context-Dependent Expression: The expression level of a genetic part is influenced by its surrounding sequence context. Upstream and downstream sequences can affect RNA stability, translation initiation, and transcription termination in ways that are difficult to predict from part characterization in isolation [16]. This context dependence means that the same promoter can exhibit different apparent strengths when placed in different positions within a circuit.

Table 1: Classification of Genetic Circuit Interdependencies

| Interdependency Class | Primary Manifestation | Impact on Circuit Function |
|---|---|---|
| Component-Level | Altered binding kinetics in new contexts | Unpredictable transfer functions, leakage |
| Resource Competition | Metabolic burden, shared polymerase pools | Growth coupling, reduced dynamic range |
| Genetic Context | Varying expression efficiency | Altered part performance between designs |
| Cross-Talk | Non-specific molecular interactions | Reduced signal-to-noise ratio, false activation |

Quantitative Analysis of Interdependencies

Measuring Context-Dependent Performance

The quantitative impact of interdependencies can be measured through systematic characterization of genetic parts across different contexts. Key performance metrics that exhibit context dependence include:

Transfer Functions: The relationship between input signal concentration and output expression level often shifts when regulators are placed in different circuit contexts. These shifts manifest as changes in the dynamic range, response coefficient, and leakage levels of the regulatory element [15].

Expression Noise: Both intrinsic and extrinsic noise profiles are sensitive to genetic context. The cell-to-cell variability in gene expression can change significantly when a part is moved from a characterization vector to its final circuit context, affecting circuit reliability [15].

Kinetic Parameters: The response time of genetic components, including their activation and deactivation rates, often shows context dependence due to differences in transcription factor abundance, mRNA stability, and translation efficiency in different circuit environments.

Table 2: Quantitative Metrics for Assessing Interdependencies

| Metric | Standard Measurement | Context-Dependent Variation | Experimental Assessment |
|---|---|---|---|
| Dynamic Range | Ratio of ON/OFF states | Can vary by >10-fold | Flow cytometry of reporter expression |
| Response Coefficient | Hill coefficient from dose response | Often changes between contexts | Titration of inducer with fluorescence measurement |
| Leakage Level | OFF-state expression | Highly dependent on context | Fluorescence in uninduced state |
| Response Time | Time to reach 50% maximal output | Affected by cellular resource availability | Time-course measurements after induction |

Circuit Compression as a Mitigation Strategy

A promising approach to mitigating interdependencies is circuit compression, which reduces the number of genetic components required to implement a given logical function. The Transcriptional Programming (T-Pro) approach leverages synthetic transcription factors and promoters to achieve complex logic with fewer parts, thereby reducing opportunities for adverse interactions [16].

Recent work has demonstrated the complete set of 3-input Boolean logic operations (256 distinct functions) using engineered repressor/anti-repressor systems responsive to orthogonal signals (IPTG, D-ribose, and cellobiose). This compression approach reduces metabolic burden and context effects by minimizing the genetic footprint of complex circuits. On average, compression circuits are approximately four times smaller than canonical inverter-based genetic circuits while maintaining predictable performance [16].
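The figure of 256 follows from counting truth tables: n binary inputs give 2^n input states, and each state can map to 0 or 1, so there are 2^(2^n) possible functions. The short sketch below checks this count by brute force; the function names are illustrative and are not taken from [16].

```python
from itertools import product

def count_boolean_functions(n_inputs: int) -> int:
    """Number of distinct truth tables over n binary inputs: 2 ** (2 ** n)."""
    return 2 ** (2 ** n_inputs)

def enumerate_truth_tables(n_inputs: int):
    """Yield every truth table as a tuple of output bits, one per input state."""
    n_rows = 2 ** n_inputs
    return product((0, 1), repeat=n_rows)

# Three inducers (e.g. IPTG, D-ribose, cellobiose) give 8 input states.
print(count_boolean_functions(3))                 # 256
print(sum(1 for _ in enumerate_truth_tables(3)))  # 256
```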

Algorithmic enumeration methods have been developed to automatically identify maximally compressed circuit designs for any desired truth table. These algorithms model circuits as directed acyclic graphs and systematically enumerate solutions in order of increasing complexity, guaranteeing identification of the minimal implementation [16].
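The idea of enumerating designs in order of increasing complexity can be illustrated with a toy search. The sketch below is not the T-Pro enumeration algorithm of [16]; as a stand-in it uses generic 2-input NOR gates, represents each signal as a truth-table bitmask, and searches feed-forward wirings breadth-first, so the first hit uses the fewest gates. The function names and the `max_gates` cutoff are assumptions made for illustration.

```python
from itertools import combinations_with_replacement

def input_masks(n):
    """Truth-table bitmask of each input over all 2**n input states.
    Bit r of a mask is the signal's value in state r."""
    rows = range(2 ** n)
    return [sum(((r >> i) & 1) << r for r in rows) for i in range(n)]

def min_nor_gates(target, n, max_gates=6):
    """Fewest 2-input NOR gates (wired as a DAG) that produce `target`.
    Returns None if no solution exists within max_gates."""
    full = (1 << (2 ** n)) - 1
    start = frozenset(input_masks(n))
    if target in start:
        return 0
    frontier = {start}  # each state is the set of signals available so far
    for gates in range(1, max_gates + 1):
        next_frontier = set()
        for signals in frontier:
            for a, b in combinations_with_replacement(sorted(signals), 2):
                out = full & ~(a | b)  # NOR of two available signals
                if out == target:
                    return gates
                if out not in signals:
                    next_frontier.add(signals | {out})
        frontier = next_frontier
    return None

# Two inputs: state r encodes (A, B) as bits 0 and 1 of r.
print(min_nor_gates(0b1000, 2))  # AND -> 3
print(min_nor_gates(0b1110, 2))  # OR  -> 2
```

Because the search expands all circuits with g gates before any circuit with g + 1 gates, the returned count is minimal for this gate set; the state space grows quickly, which is why practical tools rely on more specialized enumeration.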

Experimental Protocols for Characterizing Interdependencies

Characterization of Part Performance in Context

Protocol 1: Context-Dependent Transfer Function Analysis

Cloning and Assembly: For each genetic part (promoter, RBS, etc.), create multiple construct variants using Golden Gate or Gibson assembly, placing the part in different contextual environments (different upstream/downstream sequences, vector backbones, and copy numbers) [16].

Transformation: Transform each construct into the target host organism (e.g., E. coli MG1655) using electroporation or heat shock, with at least three biological replicates per construct.

Cultivation and Induction: Inoculate 2 mL of LB medium with antibiotic and grow overnight. Dilute cultures 1:100 in fresh medium and grow to mid-log phase (OD600 ≈ 0.5). For inducible systems, create a dilution series of the inducer molecule (e.g., 0, 0.1, 1, 10, 100 μM) and incubate for 4-6 hours to reach steady state.

Flow Cytometry Analysis: Dilute cells 1:10 in PBS and analyze using a flow cytometer with appropriate laser/filter sets for fluorescent reporters (e.g., 488 nm laser with 530/30 filter for GFP). Collect at least 10,000 events per sample.

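Data Processing: Calculate the mean fluorescence intensity for each sample after subtracting the autofluorescence measured in a reporter-free control strain, so that OFF-state leakage in uninduced samples is retained rather than subtracted away. Fit the dose-response data to a Hill function to extract the dynamic range, Hill coefficient, and EC50 (apparent Kd) values.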

Context Impact Quantification: Compare fitted parameters across different contexts to quantify the magnitude of context dependence for each part.
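The data-processing step of Protocol 1 can be sketched as follows. This is a minimal, dependency-free illustration on synthetic data: it assumes an activating Hill function and replaces a nonlinear optimizer (in practice something like `scipy.optimize.curve_fit` would typically be used) with a grid search over K and n, solving the basal and maximal levels by linear least squares at each grid point. The parameter grids, dose series, and "true" values are all hypothetical.

```python
def hill(x, basal, top, k, n):
    """Activating Hill function; returns `basal` at zero inducer."""
    if x <= 0:
        return basal
    return basal + (top - basal) * x ** n / (k ** n + x ** n)

def fit_hill(doses, signal, k_grid, n_grid):
    """Grid search over (K, n); basal and top come from a linear fit."""
    m = len(doses)
    y_mean = sum(signal) / m
    best = None
    for k in k_grid:
        for n in n_grid:
            h = [0.0 if x <= 0 else x ** n / (k ** n + x ** n) for x in doses]
            h_mean = sum(h) / m
            shh = sum((hi - h_mean) ** 2 for hi in h)
            if shh == 0:
                continue
            slope = sum((hi - h_mean) * (yi - y_mean)
                        for hi, yi in zip(h, signal)) / shh
            basal = y_mean - slope * h_mean
            sse = sum((basal + slope * hi - yi) ** 2
                      for hi, yi in zip(h, signal))
            if best is None or sse < best[0]:
                best = (sse, basal, basal + slope, k, n)
    _, basal, top, k, n = best
    return {"basal": basal, "top": top, "K": k, "n": n,
            "dynamic_range": top / basal}

# Synthetic, noise-free dose response (inducer in uM, fluorescence in a.u.).
doses = [0, 0.1, 0.3, 1, 3, 10, 30, 100]
signal = [hill(x, basal=100.0, top=5000.0, k=5.0, n=2.0) for x in doses]

k_grid = [0.5 * i for i in range(1, 41)]  # 0.5 ... 20 uM
n_grid = [0.5 * i for i in range(1, 9)]   # 0.5 ... 4
fit = fit_hill(doses, signal, k_grid, n_grid)
print(fit)
```

Running the same fit on each construct and comparing the returned `K`, `n`, and `dynamic_range` values gives the context comparison described in the final protocol step.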

Protocol 2: Metabolic Burden Assessment

Strain Construction: Create isogenic strains carrying circuits of varying complexity (e.g., 1-gene, 3-gene, and 5-gene circuits) along with a control strain containing no circuit.

Growth Rate Measurement: Inoculate 5 mL cultures in biological triplicate and monitor OD600 every 30 minutes for 12-16 hours using a plate reader or spectrophotometer.

Data Analysis: Calculate the maximum growth rate (μmax) for each strain by fitting the exponential phase of growth. Compute the burden as the percentage reduction in μmax relative to the control strain.

Correlation Analysis: Plot circuit burden against the number of genes, promoter strength, or total coding sequence length to identify key determinants of metabolic load.
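The growth-rate and burden calculations in Protocol 2 can be sketched in a few lines. The example below assumes that the maximum growth rate is taken as the steepest slope of ln(OD600) against time over a sliding window; the window length and the synthetic growth curves are hypothetical choices, not values from the source.

```python
import math

def max_growth_rate(times_h, od600, window=4):
    """Largest slope of ln(OD600) vs time over `window` consecutive points."""
    best = float("-inf")
    for start in range(len(times_h) - window + 1):
        t = times_h[start:start + window]
        y = [math.log(v) for v in od600[start:start + window]]
        t_mean, y_mean = sum(t) / window, sum(y) / window
        slope = (sum((ti - t_mean) * (yi - y_mean) for ti, yi in zip(t, y))
                 / sum((ti - t_mean) ** 2 for ti in t))
        best = max(best, slope)
    return best

def burden_percent(mu_circuit, mu_control):
    """Percentage reduction in mu_max relative to the circuit-free control."""
    return 100.0 * (1.0 - mu_circuit / mu_control)

# Synthetic exponential growth sampled every 30 minutes.
times = [0.5 * i for i in range(12)]
control = [0.05 * math.exp(0.8 * t) for t in times]  # no circuit
circuit = [0.05 * math.exp(0.6 * t) for t in times]  # hypothetical 3-gene circuit

mu_control = max_growth_rate(times, control)
mu_circuit = max_growth_rate(times, circuit)
print(round(burden_percent(mu_circuit, mu_control), 2))  # 25.0
```

With real plate-reader data the window should be restricted to the exponential phase, and the burden values for each strain can then be plotted against gene count or coding sequence length.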

Predictive Design Workflows

Modern approaches to managing interdependencies incorporate predictive design workflows that explicitly account for context effects:

Parts Characterization: Systematically measure all basic parts (promoters, RBSs, terminators) in multiple contexts to build a data set of context-dependent parameters [16].

Model Training: Use machine learning or statistical models to predict part performance in new contexts based on sequence features and previously measured context effects.

Circuit Design: Utilize algorithmic tools to select part combinations that minimize adverse interactions while achieving target circuit functions [16].

Iterative Refinement: Employ directed evolution or rational design to refine parts for improved orthogonality and context independence.

These workflows enable quantitative prediction of circuit performance with average errors below 1.4-fold across multiple test cases, significantly improving first-pass success rates in genetic circuit construction [16].

Visualization of Interdependencies and Design Workflows

Diagram 1: Network of Interdependencies in Genetic Circuits

Diagram 2: Circuit Compression Reduces Interdependencies

Research Reagent Solutions for Managing Interdependencies

Table 3: Essential Research Reagents for Addressing Genetic Circuit Interdependencies

| Reagent / Tool | Primary Function | Utility in Managing Interdependencies |
|---|---|---|
| Orthogonal TF Systems (TetR, LacI, CelR variants) | Transcriptional regulation with minimal cross-talk | Enables parallel regulation pathways without interference [16] |
| Synthetic Anti-Repressors (EA1TAN, EA2TAN, EA3TAN) | Implementation of NOT/NOR logic without inversion | Reduces part count and context effects through circuit compression [16] |
| CRISPR-dCas9 Systems | Programmable transcription regulation | Allows design of large orthogonal regulator sets through guide RNA programming [15] |
| Algorithmic Enumeration Software | Automated circuit design with minimal part count | Identifies maximally compressed implementations to reduce interdependencies [16] |
| Context Characterization Vectors | Standardized measurement of part context dependence | Quantifies context effects for predictive modeling [16] |
| Fluorescent Protein Reporters (GFP, YFP, RFP) | Quantitative measurement of gene expression | Enables precise characterization of part performance across contexts [15] |
| Flow Cytometry | Single-cell resolution measurement of expression | Reveals cell-to-cell variability caused by context effects [16] |

The interdependencies within genetic circuit components represent a fundamental challenge in synthetic biology that cannot be eliminated but can be systematically managed. Through quantitative characterization of context effects, implementation of circuit compression strategies, and application of predictive design workflows, researchers can mitigate the adverse impacts of these interdependencies. The integration of statistical methods and computational tools with robust experimental characterization enables the design of genetic circuits with predictable performance, even as complexity increases. For researchers exploring biosensor design space, acknowledging and explicitly addressing these interdependencies is essential for creating reliable, high-performance systems for drug development and diagnostic applications. Future advances will likely come from continued development of orthogonal biological parts, improved predictive models of cellular resource allocation, and novel circuit architectures that inherently minimize component interference.

Foundational Statistical Concepts for Systematic Design Exploration

The systematic optimization of biosensors remains a primary obstacle limiting their widespread adoption as dependable point-of-care tests [17]. Traditional one-variable-at-a-time approaches often fail to detect critical interactions between factors and may not identify true optimal conditions, hindering the practical application of these biosensors in diagnostic settings [17]. Experimental design, or Design of Experiments (DoE), provides a powerful chemometric solution by enabling the systematic and statistically reliable optimization of multiple parameters simultaneously [17] [18]. This approach is particularly crucial for ultrasensitive biosensing platforms with sub-femtomolar detection limits, where challenges like enhancing the signal-to-noise ratio, improving selectivity, and ensuring reproducibility are most pronounced [17].

Within the broader context of exploring biosensor design space, statistical methods offer a structured framework for navigating complex parameter landscapes. By applying these methodologies, researchers can develop data-driven models that connect variations in input variables—such as materials properties and production parameters—to sensor outputs, thereby facilitating more efficient development and performance enhancement of biosensing devices [17]. This technical guide examines the core statistical concepts essential for systematic design exploration in biosensor research, providing researchers with both theoretical foundations and practical implementation protocols.

Core Statistical Concepts for Experimental Design

Fundamental Principles of Design of Experiments (DoE)

The experimental design approach hinges on developing data-driven models constructed using causal data collected across a comprehensive grid of experiments covering the entire experimental domain [17]. Unlike traditional univariate methods where each experiment is defined based on previous outcomes, DoE establishes an experimental plan a priori, enabling prediction of responses at any point within the experimental domain and providing global knowledge for optimization purposes [17]. A key advantage of DoE approaches is their ability to account for potential interactions among variables—when an independent variable exerts varying effects on the response based on the values of another independent variable—which consistently elude detection in one-variable-at-a-time approaches [17].

The DoE workflow typically involves multiple iterations, beginning with identifying all factors that may exhibit causality with the targeted output signal (response), establishing their experimental ranges, and determining the distribution of experiments within the experimental domain [17]. It is advisable not to allocate more than 40% of available resources to the initial set of experiments, as subsequent DoE iterations are often necessary to refine the problem by eliminating insignificant variables, redefining experimental domains, or adjusting hypothesized models [17].

Key Experimental Design Models

Factorial Designs

The 2^k factorial designs are first-order orthogonal designs requiring 2^k experiments, where k represents the number of variables being studied [17]. In these models, each factor is assigned two levels coded as -1 and +1, corresponding to the lower and upper limits of the variable's range, which is selected based on the specific application [17]. The experimental matrix defines the grid of experiments used to compute the coefficients of the model and contains 2^k rows (each representing an individual experiment) and k columns (each representing a specific variable) [17].

For a 2^2 factorial design involving two variables (X1 and X2), the postulated mathematical model is defined as:

$$Y = b_0 + b_1 X_1 + b_2 X_2 + b_{12} X_1 X_2$$

This includes a constant term ($b_0$) corresponding to the response at the center point, two linear terms ($b_1$, $b_2$), and a two-factor interaction term ($b_{12}$) [17]. Geometrically, the experimental domain for two variables forms a square with responses recorded at each corner, while three variables create a cubic domain and higher dimensions form hypercubes [17].

Table 1: Experimental Matrix for a 2^2 Factorial Design

| Test Number | X1 | X2 |
|---|---|---|
| 1 | -1 | -1 |
| 2 | +1 | -1 |
| 3 | -1 | +1 |
| 4 | +1 | +1 |
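The experimental matrix above generalizes to any k. The sketch below generates the coded 2^k matrix in the same standard order as the table (the first factor alternates fastest) and builds the model matrix for the two-variable interaction model; the function names are illustrative.

```python
from itertools import product

def full_factorial(k):
    """2**k coded runs in standard order (first factor alternates fastest)."""
    return [tuple(reversed(levels)) for levels in product((-1, 1), repeat=k)]

def model_matrix_2x2(design):
    """Columns: intercept, X1, X2, X1*X2 for the two-factor interaction model."""
    return [(1, x1, x2, x1 * x2) for x1, x2 in design]

design = full_factorial(2)
print(design)  # [(-1, -1), (1, -1), (-1, 1), (1, 1)]
for row in model_matrix_2x2(design):
    print(row)
```

Every pair of columns in the model matrix has a zero dot product, which is the orthogonality property that lets each coefficient be estimated independently of the others.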

Advanced Design Configurations

When response functions demonstrate approximate linearity with respect to independent variables, first-order orthogonal designs can yield substantial information with minimal experimental effort [17]. However, when responses follow quadratic functions, second-order models become essential [17]. Central composite designs address this need by augmenting initial factorial designs to estimate quadratic terms, thereby enhancing the predictive capacity of the model [17].
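As a sketch of how a central composite design augments a factorial design, the code below lists the coded points: the 2^k factorial corners, 2k axial points at distance alpha from the center, and replicated center runs. It assumes the common rotatable choice alpha = (2^k)^(1/4); other choices of alpha (for example face-centered, alpha = 1) are equally valid, and the number of center runs here is arbitrary.

```python
from itertools import product

def central_composite(k, n_center=1):
    """Coded CCD points: factorial corners, axial points, and center runs.
    Uses alpha = (2**k) ** 0.25, the rotatable choice for a full factorial core."""
    alpha = (2 ** k) ** 0.25
    corners = [tuple(float(v) for v in p) for p in product((-1, 1), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(tuple(point))
    center = [tuple([0.0] * k)] * n_center
    return corners + axial + center

for point in central_composite(2, n_center=3):
    print(point)
```

For two factors this gives 4 + 4 + 3 = 11 runs, enough to estimate the intercept, two linear terms, one interaction, and two quadratic terms with degrees of freedom left for assessing lack of fit.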

For formulations whose component proportions must sum to 100%, mixture designs are employed instead of standard factorial designs [17]. In these specialized designs, components cannot be varied independently, since changing one component's proportion necessitates compensating adjustments to the others [17].
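One standard way to lay out mixture experiments is a simplex-lattice design, in which each of q components takes proportions in steps of 1/m and every blend sums to one. The source does not specify which mixture design is used, so the sketch below is only an illustration of the constraint; the function name is hypothetical.

```python
from itertools import product
from fractions import Fraction

def simplex_lattice(q, m):
    """{q, m} simplex-lattice: q proportions in steps of 1/m that sum to 1."""
    return [tuple(Fraction(i, m) for i in combo)
            for combo in product(range(m + 1), repeat=q)
            if sum(combo) == m]

# Three components (e.g. three matrix ingredients), proportions 0, 1/2, or 1.
for blend in simplex_lattice(3, 2):
    print([str(p) for p in blend])
```

Exact fractions are used so that the sum-to-one constraint holds without floating-point error.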

Multi-Objective Optimization Framework

Biosensor design often requires balancing multiple, sometimes competing, objectives. A multi-objective H2/H∞ performance criterion has been developed for design specifications of biosensors to achieve H2 optimal matching of desired input/output responses alongside H∞ optimal filtering of intrinsic parameter fluctuations and external cellular noise [19]. This approach employs a Takagi-Sugeno (T-S) fuzzy model to interpolate several local linear stochastic systems to approximate nonlinear stochastic biosensor systems, transforming the design problem into a linear matrix inequality (LMI)-constrained multi-objective optimization problem [19].

Multi-objective evolutionary algorithms (MOEAs) provide effective solutions for these complex optimization scenarios, determining non-dominated Pareto optimal solutions by mimicking biological evolution events such as mutation, crossover, and selection [19]. This approach is particularly valuable when considering tradeoffs between design factors in multi-objective H2/H∞ design problems [19].
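The notion of a non-dominated (Pareto optimal) solution can be made concrete with a small filter. This is not an MOEA and not the H2/H∞ formulation of [19]; it only shows the dominance test that such algorithms apply when ranking candidates. Both objectives are assumed to be maximized, and the candidate scores are hypothetical.

```python
def dominates(a, b):
    """True if `a` is at least as good as `b` in every objective and strictly
    better in at least one (all objectives are maximized)."""
    return (all(x >= y for x, y in zip(a, b))
            and any(x > y for x, y in zip(a, b)))

def pareto_front(points):
    """Return the non-dominated subset of `points`."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical designs scored as (response matching, noise attenuation).
candidates = [(10, 0.2), (8, 0.5), (6, 0.9), (7, 0.4), (9, 0.1)]
print(pareto_front(candidates))  # [(10, 0.2), (8, 0.5), (6, 0.9)]
```

Designs (7, 0.4) and (9, 0.1) are dropped because another candidate is at least as good on both objectives; the remaining three represent genuine tradeoffs.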

Experimental Protocols and Methodologies

Protocol for Full Factorial Design Implementation

Objective: To systematically optimize biosensor fabrication parameters using a full factorial design approach.

Materials and Equipment:

- Biosensor substrate components

- Biolayer reagents (antibodies, aptamers, or enzymes)

- Immobilization chemistry reagents

- Detection instrumentation (optical or electrochemical)

- Statistical analysis software

Procedure:

- Identify Critical Factors: Select k factors that may significantly influence biosensor performance (e.g., biorecognition element concentration, immobilization time, temperature, pH).

- Define Factor Levels: Establish two levels for each factor (-1 and +1) representing practical operating ranges based on preliminary experiments or literature values.

- Randomize Experimental Order: Generate a randomized test sequence to mitigate systematic effects.

- Execute Experimental Matrix: Perform all 2^k experiments according to the randomized sequence.

- Record Responses: Measure relevant performance metrics (sensitivity, selectivity, response time, stability) for each experimental run.

- Calculate Model Coefficients: Use linear regression to determine coefficients for the mathematical model.

- Analyze Significance: Apply statistical tests (e.g., ANOVA) to identify significant factors and interactions.

- Validate Model: Conduct confirmation experiments at predicted optimal conditions.

Data Analysis: For a biosensor optimization case with factors A (bioreceptor concentration) and B (incubation time), the model would be:

$$\text{Response} = b_0 + b_1 A + b_2 B + b_{12} A B$$

The coefficients quantify how each factor affects the response, with the interaction term $b_{12}$ indicating whether the effect of one factor depends on the level of the other.
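The randomization and coefficient-calculation steps of this protocol can be sketched for the two-factor case. Because the coded design is orthogonal, the least-squares coefficients reduce to the dot product of each model column with the responses, divided by the number of runs. The response values below are hypothetical, and significance testing (e.g. ANOVA), which requires replicated runs, is not shown.

```python
import random

# Coded levels of A (bioreceptor concentration) and B (incubation time).
design = [(-1, -1), (1, -1), (-1, 1), (1, 1)]

def randomized_order(n_runs, seed=None):
    """Shuffled run indices, so drift is not confounded with any factor."""
    order = list(range(n_runs))
    random.Random(seed).shuffle(order)
    return order

def fit_2x2(design, response):
    """Coefficients (b0, b1, b2, b12) of the two-factor interaction model.
    For an orthogonal coded design each is (column . response) / N."""
    n = len(design)
    b0 = sum(response) / n
    b1 = sum(a * y for (a, _), y in zip(design, response)) / n
    b2 = sum(b * y for (_, b), y in zip(design, response)) / n
    b12 = sum(a * b * y for (a, b), y in zip(design, response)) / n
    return b0, b1, b2, b12

print(randomized_order(len(design), seed=1))  # order in which to run the tests

# Hypothetical signals, listed in the same order as `design`.
response = [20.0, 30.0, 25.0, 55.0]
b0, b1, b2, b12 = fit_2x2(design, response)
print(b0, b1, b2, b12)  # 32.5 10.0 7.5 5.0
```

In this example raising A increases the signal by 2(b1 - b12) = 10 units at the low level of B but by 2(b1 + b12) = 30 units at the high level, which is exactly the kind of dependence a nonzero interaction coefficient signals.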

Protocol for Multi-Objective Biosensor Optimization

Objective: To design a metal ion biosensor with optimal I/O response matching and noise filtering capabilities using multi-objective optimization.

Materials and Equipment:

- Component libraries (promoters, RBS sequences, reporter genes)

- Molecular biology reagents for genetic construction

- Host cells (e.g., E. coli)

- Metal ion solutions of varying concentrations

- Fluorescence or other detection equipment

Procedure:

- System Modeling: Develop a nonlinear stochastic model representing the biosensor system dynamics.

- Specify Design Requirements: Define desired input/output response characteristics and noise attenuation specifications.

- Formulate Multi-Objective Problem: Establish H2 performance criteria for I/O response matching and H∞ criteria for robust noise filtering.

- Component Selection: Choose appropriate biological parts from libraries (e.g., metal ion-induced promoters, constitutive promoters, reporter genes).

- Solve LMI-Constrained Optimization: Apply mathematical programming to identify parameter sets satisfying both objectives.

- Pareto Front Analysis: Use MOEA to generate and evaluate tradeoffs between competing objectives.

- Construct and Validate: Build the optimized biosensor design and experimentally verify performance.

Application Example: A metal ion biosensor was systematically designed by selecting promoter-RBS components from corresponding libraries: a metal ion-induced promoter-RBS component (Mi), a constitutive promoter-RBS component (Cj), and a quorum sensing-dependent promoter-RBS component (Ak) [19]. The dynamic model of this biosensor was described using differential equations accounting for concentrations of autoinducer synthase, autoinducer, transcriptional activator protein, and immature reporter protein [19].

Visualization of Statistical Design Workflows

DoE Iterative Optimization Process

Multi-Objective Biosensor Optimization

Research Reagent Solutions for Biosensor Optimization

Table 2: Essential Research Reagents for Biosensor Design and Optimization

| Reagent Category | Specific Examples | Function in Biosensor Development |
|---|---|---|
| Biolayer Components | Antibodies, aptamers, enzymes, molecularly imprinted polymers | Provide specific recognition capabilities for target analytes; crucial for biosensor specificity and sensitivity [17] |
| Transduction Elements | Electroactive mediators, fluorophores, quantum dots, nanoparticles | Enable conversion of biological recognition events into measurable optical or electrical signals [17] [20] |
| Immobilization Matrices | Hydrogels, sol-gels, self-assembled monolayers, conducting polymers | Facilitate stable attachment of biorecognition elements to transducer surfaces while maintaining biological activity [17] |
| Genetic Circuit Components | Promoters (metal ion-induced, constitutive), RBS sequences, reporter genes | Enable construction of synthetic biological biosensors using standardized biological parts [19] |
| Signal Amplification Reagents | Enzymes (HRP, AP), nanoparticles, dendrimers | Enhance detection sensitivity through catalytic or physical amplification of output signals [17] |

Implementation Considerations for Biosensor Researchers

Practical Guidelines for Experimental Design Application

Successful implementation of statistical design strategies requires careful consideration of several practical aspects. Researchers should begin with screening designs to identify the most influential factors before progressing to more comprehensive optimization designs [17]. Resource allocation should follow the 40% guideline, committing no more than 40% of available resources to the initial set of experiments and reserving sufficient budget and experimental capacity for iterative design improvements [17]. For biosensors intended for point-of-care applications, environmental factors such as temperature, pH, and sample matrix effects should be incorporated as design factors to ensure robustness under real-world conditions [20].

When working with biological components exhibiting natural variability, replication becomes crucial to account for this inherent variation. Randomized run orders help minimize the impact of uncontrolled environmental factors or systematic measurement drift. For multi-objective optimization scenarios, clearly defining acceptable tradeoff ranges between competing objectives before beginning the optimization process facilitates more efficient decision-making when analyzing Pareto fronts [19].

Analytical Methodologies for Data Interpretation

The analysis of data from designed experiments extends beyond determining optimal parameter settings to provide insights into underlying biological and physical mechanisms [17]. Residual analysis validates model adequacy by examining discrepancies between measured and predicted responses [17]. For factorial designs, the magnitude and sign of model coefficients directly indicate both the direction and relative impact of each factor on the response [17].

In multi-objective optimization, the Pareto front visualization enables researchers to make informed decisions about tradeoffs between competing performance criteria, such as sensitivity versus response time or specificity versus detection range [19]. For nonlinear biosensor systems, the T-S fuzzy modeling approach facilitates controller and observer design while maintaining mathematical tractability through linear matrix inequalities [19].

Systematic design exploration through statistical methods provides an essential framework for advancing biosensor technology, particularly as applications expand into point-of-care diagnostics, environmental monitoring, and space exploration [20]. The foundational concepts of experimental design—including factorial designs, response surface methodology, and multi-objective optimization—offer powerful approaches for navigating complex parameter spaces and interaction effects that routinely challenge conventional optimization strategies [17] [19].

As biosensor research progresses toward increasingly sophisticated applications, including lab-on-a-chip platforms for space exploration [20] and ultrasensitive detection systems [17], these statistical methodologies will play an increasingly vital role in ensuring reliable performance while minimizing development time and resources. By integrating these foundational statistical concepts into their research workflows, scientists and engineers can more effectively tackle the multifaceted challenges of biosensor design space exploration, accelerating the development of next-generation biosensing technologies.

A Practical Guide to DoE and Machine Learning for Biosensor Optimization

Implementing Design of Experiments (DoE) for Efficient Fractional Sampling

The development of advanced genetically encoded biosensors represents a cornerstone of modern synthetic biology, with applications spanning enzyme optimization, strain development, and microbial process control. However, the vast number of possible biosensor permutations creates a complex combinatorial design space that necessitates careful optimization of screening strategies [21]. This complexity is further compounded by biosensor performance traits, such as tunability, which require effector titration analysis under monoclonal screening conditions [21]. In this context, Design of Experiments (DoE) has emerged as a powerful statistical framework that enables researchers to efficiently navigate this intractably large design space through structured fractional sampling methods.

Traditional one-factor-at-a-time (OFAT) optimization approaches suffer from significant limitations in biosensor engineering. They are time- and resource-intensive because of the large number of experimental iterations required, and for systems in which variables are not perfectly independent, the final combination of variable set points reached by an OFAT approach is likely to be suboptimal [22]. The degree of suboptimality depends on the order in which variables were perturbed, potentially leaving researchers trapped in local maxima of performance [22].
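A toy example makes the order dependence concrete. The response values below are hypothetical and were chosen so that the two factors interact strongly: the best setting requires changing both factors together, which a one-at-a-time search can miss.

```python
# Hypothetical responses on a 2x2 grid of coded settings (X1, X2).
response = {(-1, -1): 50, (1, -1): 40, (-1, 1): 45, (1, 1): 90}

def ofat(start, order):
    """Vary one factor at a time in the given order, keeping the best level
    found for each factor before moving on to the next."""
    current = list(start)
    for factor in order:
        candidates = []
        for level in (-1, 1):
            trial = current.copy()
            trial[factor] = level
            candidates.append((response[tuple(trial)], trial))
        current = max(candidates)[1]
    return tuple(current), response[tuple(current)]

print(ofat((-1, -1), order=(0, 1)))  # ((-1, -1), 50)
print(ofat((1, -1), order=(0, 1)))   # ((-1, -1), 50)
print(ofat((1, -1), order=(1, 0)))   # ((1, 1), 90)
print(max(response.items(), key=lambda kv: kv[1]))  # ((1, 1), 90)
```

Starting from the same point, perturbing X1 first ends at a response of 50 while perturbing X2 first reaches 90; testing all four combinations, as a factorial design does, finds the optimum regardless of order.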

DoE overcomes these limitations by allowing for the simultaneous analysis of multiple variables (factors) through carefully designed fractional factorial experiments. This approach provides a systematic method for exploring the relationship between factors and their effects on biosensor performance, capturing interaction effects that OFAT approaches inevitably miss. As the number of genes encoded in a designed metabolic pathway increases, the size of the total genetic design space quickly becomes intractable, making DoE not just beneficial but essential for efficient optimization [22].

Fundamental Principles of DoE for Biosensor Engineering

Key Variables in Biosensor Design Space

In DoE for biosensor optimization, variables play a pivotal role in shaping the experimental design and analysis process. These variables can be broadly classified as either categorical or continuous. Categorical variables delineate qualitative attributes into distinct groups, which can be further divided into nominal variables (representing categories without inherent ranking, such as promoter types or media components) and ordinal variables (showing a specific order or ranking but lacking consistent intervals between categories, such as the order of genes in a gene cluster) [22].

Continuous variables are quantitative and can take any value within a defined range; examples include pH, temperature, and the strength of regulatory elements [22]. Recent advances in the design and measurement of promoter and ribosome binding site (RBS) strengths now allow these factors to be considered as continuous variables rather than as ordinal values, enabling more precise optimization of biosensor performance [22].

During a DoE experiment, these biological and physical factors of interest are discretized into a set of values referred to as levels. These levels are then tested in different combinations, and a model predicting the response of the system is generated based on input data gathered through iterative experimentation [22]. The total "design space" of the system represents all possible combinations of factor levels that are then used for optimization.
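As a small illustration of how factors, levels, and the design space relate, the sketch below enumerates every combination of levels for three factors. The factor names and level values are hypothetical.

```python
from itertools import product
from math import prod

# Hypothetical factors for a transcription-factor biosensor.
factors = {
    "promoter": ["weak", "medium", "strong"],  # nominal categorical
    "rbs_strength": [0.1, 0.5, 1.0],           # continuous, discretized
    "temperature_c": [30, 37],                 # continuous, discretized
}

def design_space(factors):
    """All combinations of factor levels (the full design space)."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]

space = design_space(factors)
print(len(space))  # 18
print(space[0])
```

The size of the space is the product of the number of levels per factor, so each added factor multiplies the number of candidate designs; this is the growth that fractional designs are meant to avoid sampling exhaustively.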

Types of DoE Approaches

DoE can be classified into several distinct methodologies based on the experimental goals and the nature of the system being optimized. The table below summarizes the primary DoE approaches relevant to biosensor development:

Table 1: Classification of DoE Approaches for Biosensor Optimization

| DoE Type | Primary Purpose | Key Characteristics | Typical Applications |
|---|---|---|---|
| Screening Designs | Identify significant factors from many variables | Tests a fraction of full factorial space; efficient for large variable sets | Initial phase of biosensor development; identifying critical genetic elements [22] |
| Full Factorial Designs | Characterize all possible factor combinations | Tests every possible combination of factors at all levels; comprehensive but resource-intensive | Small-scale systems with limited variables; understanding complete interaction effects [22] |
| Plackett-Burman Designs | Screening many factors with minimal experimental runs | Highly fractionalized designs; identifies most influential factors | Early-stage screening of promoter libraries, RBS variants, and transcription factor combinations [22] |
| Response Surface Methodology (RSM) | Optimization of critical factors | Models relationship between factors and responses; finds optimal factor settings | Fine-tuning dynamic range, sensitivity, and specificity of biosensors [23] |
| Definitive Screening Designs (DSD) | Combined screening and optimization | Efficiently screens many factors while capturing curvature effects | Comprehensive biosensor optimization with limited experimental resources [22] |

The selection of an appropriate DoE methodology depends on the specific stage of biosensor development, the number of factors being investigated, and the available experimental resources. For initial screening phases where many factors must be evaluated quickly, Plackett-Burman or other highly fractional factorial designs are most appropriate. Once critical factors have been identified, Response Surface Methodology (RSM) approaches such as Box-Behnken Design (BBD) or Central Composite Design (CCD) can be applied to fine-tune biosensor performance characteristics [22].
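To make the screening stage concrete, the following sketch builds the classic 12-run Plackett-Burman design, which can screen up to 11 two-level factors. It is written in plain Python rather than with a dedicated DoE package, and the factor names assigned to the columns are hypothetical.

```python
# Standard 12-run Plackett-Burman generator row (+1 = high level, -1 = low level).
PB12_GENERATOR = [+1, +1, -1, +1, +1, +1, -1, -1, -1, +1, -1]

def plackett_burman_12():
    """Build the 12-run, 11-column design: 11 cyclic shifts plus one all-low run."""
    n = len(PB12_GENERATOR)
    rows = [PB12_GENERATOR[-s:] + PB12_GENERATOR[:-s] for s in range(n)]
    rows.append([-1] * n)
    return rows

def columns_are_orthogonal(design):
    """Check that every pair of distinct columns has a zero dot product."""
    k = len(design[0])
    return all(
        sum(row[i] * row[j] for row in design) == 0
        for i in range(k)
        for j in range(i + 1, k)
    )

design = plackett_burman_12()

# Hypothetical factor names; unused columns can be left unassigned.
factor_names = ["promoter", "rbs", "tf_copy_number", "temperature", "induction_time"]
runs = [dict(zip(factor_names, row)) for row in design]

print(len(runs), columns_are_orthogonal(design))
```

Because the columns are mutually orthogonal, each main effect can be estimated independently of the others. The trade-off is that main effects are partially confounded with two-factor interactions, which is why such designs are suited to screening rather than final optimization.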

Experimental Framework for DoE in Biosensor Development

Automated Workflow for Biosensor Design Space Exploration

The implementation of DoE for biosensor optimization requires a structured experimental workflow that combines computational design with automated laboratory execution. The following diagram illustrates this integrated approach:

Diagram Title: DoE Biosensor Optimization Workflow

This workflow begins with the clear definition of biosensor performance objectives, which may include dynamic range, sensitivity, specificity, or operational stability. Subsequent steps involve identifying key genetic factors and their practical ranges, selecting an appropriate DoE framework based on the number of factors and experimental constraints, and generating an experimental design matrix that specifies which factor combinations will be tested [21].

Library construction is then performed using automated molecular biology techniques, followed by high-throughput characterization of biosensor variants. The resulting data undergoes statistical analysis to build predictive models that describe the relationship between genetic factors and biosensor performance. Finally, model predictions are validated through targeted experimentation, leading to the selection of optimized biosensor configurations [21] [23].

Key Experimental Protocols

Promoter and RBS Library Construction

The creation of diverse promoter and ribosome binding site (RBS) libraries forms the foundation for biosensor optimization through DoE. This protocol involves:

Library Design: Computational identification of natural promoter/RBS sequences with predicted variation in strength, followed by design of synthetic variants with systematic sequence modifications. Bioinformatic tools are employed to mine allosteric transcription factors that can serve as the sensing components of biosensors [23].

Automated Library Synthesis: Implementation of automated DNA assembly methods to generate comprehensive libraries of genetic constructs. This process typically utilizes robotic platforms for PCR assembly, Golden Gate assembly, or other standardized cloning techniques to ensure high-fidelity construction of variant libraries [21].

Library Quality Control: Verification of library diversity and sequence accuracy through next-generation sequencing of representative samples. This step is critical to ensure that the experimental library adequately represents the intended design space [21].

The resulting libraries and their corresponding expression data are transformed into structured dimensionless inputs, enabling computational mapping of the full experimental design space and facilitating the application of DoE algorithms [21].
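One common way to obtain dimensionless inputs is to rescale each factor to coded units, where the lowest tested value maps to -1 and the highest to +1. The sketch below assumes this convention; the source does not specify the exact transformation used in [21], and the factor ranges shown are hypothetical.

```python
def to_coded(value, low, high):
    """Map a natural-unit value to coded units (low -> -1, high -> +1)."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return (value - center) / half_range

def from_coded(coded, low, high):
    """Inverse of to_coded: recover the natural-unit value."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return center + coded * half_range

# Hypothetical factor ranges in natural units.
ranges = {
    "temperature_C": (30.0, 37.0),
    "log10_promoter_strength": (-2.0, 0.0),
}

coded_temperature = to_coded(33.5, *ranges["temperature_C"])           # midpoint -> 0.0
coded_promoter = to_coded(-0.5, *ranges["log10_promoter_strength"])    # -> 0.5
```

Coding puts factors with very different units on a common scale, so model coefficients can be compared directly.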

Effector Titration Analysis

Characterization of biosensor response to varying effector concentrations is essential for quantifying performance metrics. The effector titration protocol includes:

Graded Effector Preparation: Preparation of a dilution series of the target effector molecule (e.g., terephthalate for PET hydrolase biosensors) across a concentration range spanning several orders of magnitude [23].

High-Throughput Screening: Implementation of automated cultivation and sampling systems to expose biosensor variants to different effector concentrations under controlled conditions. This process is coupled with high-throughput measurement of output signals (typically fluorescence or luminescence) using plate readers or flow cytometry systems [21].

Response Curve Modeling: Fitting of dose-response data to appropriate mathematical models (e.g., Hill equation) to extract quantitative performance parameters such as dynamic range, EC50, Hill coefficient, and background expression levels [23].

This fractional sampling approach, coupled with effector titration analysis using a high-throughput automation platform, enables comprehensive characterization of biosensor performance across the defined design space [21].
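The response curve modeling step can be sketched as follows. This example uses a linearized Hill plot with an ordinary least-squares line, which is a simplification; nonlinear least squares (for instance `scipy.optimize.curve_fit`) is the more usual choice for real, noisy data. The titration values are synthetic, the background and maximum are assumed to be known from uninduced and saturated controls, and dynamic range is taken here as the fold change between those two outputs.

```python
import math

def hill(x, background, maximum, ec50, n):
    """Hill-type dose-response: output signal at effector concentration x."""
    return background + (maximum - background) * x**n / (ec50**n + x**n)

def fit_hill_linearized(concs, signals, background, maximum):
    """Estimate EC50 and the Hill coefficient from a Hill plot.

    Uses log((y - bg) / (max - y)) = n*log(x) - n*log(EC50), fitted by
    ordinary least squares. Points at or beyond the plateaus are skipped.
    """
    xs, ys = [], []
    for c, y in zip(concs, signals):
        if c > 0 and background < y < maximum:
            xs.append(math.log(c))
            ys.append(math.log((y - background) / (maximum - y)))
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    slope = sum((a - mean_x) * (b - mean_y) for a, b in zip(xs, ys)) / sum(
        (a - mean_x) ** 2 for a in xs
    )
    intercept = mean_y - slope * mean_x
    return {"hill_coefficient": slope, "ec50": math.exp(-intercept / slope)}

# Synthetic, noise-free titration spanning several orders of magnitude
# (hypothetical parameter values, arbitrary units).
concs = [10.0**e for e in range(-3, 4)]
signals = [hill(c, 100.0, 2100.0, 5.0, 1.8) for c in concs]

fit = fit_hill_linearized(concs, signals, background=100.0, maximum=2100.0)
dynamic_range = 2100.0 / 100.0  # fold change, one common definition
print(fit, dynamic_range)
```

With noisy measurements the linearized fit weights points near the plateaus too heavily, so it is best treated as a quick estimate or as a starting guess for a nonlinear fit.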

Data Analysis and Modeling Approaches

Statistical Analysis of DoE Results

The analysis of data generated from DoE experiments requires specialized statistical approaches to extract meaningful insights about factor effects and interactions. The key steps in this analytical process include:

Response Modeling: Development of mathematical models that describe the relationship between experimental factors (e.g., promoter strength, RBS strength, transcription factor concentration) and biosensor performance metrics (e.g., dynamic range, sensitivity). These models typically take the form of polynomial equations that include main effects and interaction terms [22].

Significance Testing: Application of statistical tests (e.g., ANOVA) to determine which factors and factor interactions have statistically significant effects on biosensor performance. This analysis helps identify the most critical elements for further optimization [22].

Model Diagnostics: Evaluation of model adequacy through analysis of residuals, checking for patterns that might indicate model misspecification or the presence of outliers that could distort results [22].

The statistical modeling process enables researchers to learn and optimize biosensor genetic circuits in a systematic, data-driven manner, moving beyond intuitive approaches that struggle to investigate multidimensional sequence/design space efficiently [23].
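As an illustration of the response modeling step, the sketch below estimates main-effect and two-factor interaction coefficients for a two-level full factorial in coded units. It relies on the orthogonality of such designs, so each least-squares coefficient reduces to a simple average; it is not a general regression routine. The factor names and response values are hypothetical and generated from a known model so the estimates can be checked.

```python
from itertools import combinations, product

def factorial_coefficients(factor_names, responses):
    """Estimate intercept, main-effect, and two-factor interaction coefficients
    for a two-level full factorial in coded (-1/+1) units.

    `responses` maps each tuple of coded levels to its measured response.
    Because the design columns are orthogonal, each least-squares coefficient
    equals mean(column * response).
    """
    runs = list(responses)
    n = len(runs)
    coeffs = {"intercept": sum(responses[r] for r in runs) / n}
    for i, name in enumerate(factor_names):
        coeffs[name] = sum(r[i] * responses[r] for r in runs) / n
    for (i, a), (j, b) in combinations(enumerate(factor_names), 2):
        coeffs[f"{a}:{b}"] = sum(r[i] * r[j] * responses[r] for r in runs) / n
    return coeffs

# Hypothetical responses from a known model: 10 + 3*promoter + 2*rbs + 1*promoter*rbs.
names = ["promoter", "rbs"]
responses = {
    (p, r): 10.0 + 3.0 * p + 2.0 * r + 1.0 * p * r
    for p, r in product([-1, 1], repeat=2)
}

coeffs = factorial_coefficients(names, responses)
print(coeffs)
```

Significance testing with ANOVA additionally requires an estimate of experimental error, typically from replicate runs or center points, which this sketch omits.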

Response Surface Methodology for Optimization

For biosensor optimization, Response Surface Methodology (RSM) provides a powerful framework for identifying factor settings that maximize or minimize specific performance metrics. The implementation of RSM involves:

Experimental Design: Selection of an appropriate RSM design (e.g., Central Composite Design or Box-Behnken Design) that efficiently explores the design space around a promising baseline configuration identified during screening experiments [22].

Surface Modeling: Development of second-order polynomial models that capture the curvature in the response surface, enabling prediction of biosensor performance at untested factor combinations [22].

Optimization: Application of numerical optimization algorithms to identify factor settings that produce the desired biosensor performance characteristics. For multi-response optimization, desirability functions are often employed to balance potentially competing objectives [23].
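The three RSM steps above can be sketched in a few lines. The example generates a rotatable central composite design in coded units, then combines two responses with Derringer-Suich style desirability functions and searches a grid for the best compromise. The two second-order models and all limits and targets are hypothetical stand-ins for models that would be fitted to real data.

```python
from itertools import product

def central_composite(k, n_center=3):
    """Coded points of a rotatable central composite design for k factors."""
    alpha = (2**k) ** 0.25  # axial distance that makes the design rotatable
    factorial = [list(p) for p in product([-1.0, 1.0], repeat=k)]
    axial = []
    for i in range(k):
        for value in (-alpha, alpha):
            point = [0.0] * k
            point[i] = value
            axial.append(point)
    center = [[0.0] * k for _ in range(n_center)]
    return factorial + axial + center

def desirability_maximize(y, low, target, weight=1.0):
    """Desirability for a response to be maximized (0 at `low`, 1 at `target`)."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return ((y - low) / (target - low)) ** weight

def desirability_minimize(y, target, high, weight=1.0):
    """Desirability for a response to be minimized (1 at `target`, 0 at `high`)."""
    if y <= target:
        return 1.0
    if y >= high:
        return 0.0
    return ((high - y) / (high - target)) ** weight

def overall_desirability(values):
    """Geometric mean of the individual desirabilities."""
    result = 1.0
    for d in values:
        result *= d
    return result ** (1.0 / len(values))

# Hypothetical fitted models in coded units (x1, x2).
def predicted_dynamic_range(x1, x2):
    return 20.0 + 4.0 * x1 + 2.0 * x2 - 3.0 * x1**2 - 2.0 * x2**2 + 1.0 * x1 * x2

def predicted_background(x1, x2):
    return 5.0 + 2.0 * x1 + 1.0 * x2

def score(point):
    return overall_desirability([
        desirability_maximize(predicted_dynamic_range(*point), low=10.0, target=25.0),
        desirability_minimize(predicted_background(*point), target=3.0, high=8.0),
    ])

design = central_composite(2)
grid = [i / 10 for i in range(-10, 11)]
best = max(((x1, x2) for x1 in grid for x2 in grid), key=score)
print(len(design), best, round(score(best), 3))
```

A grid search is used here only because it is easy to read; for more factors a numerical optimizer would be the practical choice. The geometric mean ensures that a setting scoring zero on any single response is rejected overall.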

The following diagram illustrates the conceptual process of statistical modeling and optimization in biosensor development:

Diagram Title: Statistical Modeling Process Flow

This modeling approach was demonstrated in the development of TphR-based terephthalate biosensors, where researchers used a DoE framework to simultaneously engineer the core promoter and operator regions of the responsive promoter [23]. Through a dual refactoring approach, they explored an enhanced biosensor design space and attributed the observed performance effects to the design changes that caused them, ultimately developing tailored biosensors with enhanced dynamic range and diverse signal output, sensitivity, and steepness [23].

Research Reagent Solutions for DoE Implementation

The successful implementation of DoE for biosensor optimization relies on a suite of specialized research reagents and tools. The table below catalogues essential materials and their functions in fractional sampling workflows:

Table 2: Essential Research Reagents for DoE in Biosensor Development

| Reagent/Tool Category | Specific Examples | Function in DoE Workflow | Application Notes |
|---|---|---|---|
| Library Construction Tools | Promoter libraries, RBS libraries, plasmid vectors | Generation of genetic diversity for experimental sampling | Automated selection systems enhance throughput and reproducibility [21] |
| Allosteric Transcription Factors | TphR (for terephthalate), Rex (for NADH/NAD+) | Biosensor input modules that respond to specific effector molecules | Bioinformatic mining expands available sensing capabilities [23] [24] |
| Reporter Systems | Fluorescent proteins (GFP, YFP), luciferases | Quantitative measurement of biosensor output signals | Time-resolved fluorescence enables ratiometric measurements with one excitation/emission wavelength [24] |
| Microfluidic Platforms | Lab-on-a-chip devices, automated culture systems | Miniaturization and parallelization of biosensor characterization | Essential for real-time monitoring and high-throughput effector titration analyses [20] |
| Statistical Software | JMP, R, Python with DoE packages | Experimental design generation and data analysis | Enables efficient mapping of complex sequence-function relationships [22] |

These research reagents collectively enable the implementation of the integrated computational and experimental workflow necessary for efficient fractional sampling of biosensor design space. The selection of appropriate tools and reagents should be guided by the specific biosensor architecture and performance objectives.

Applications and Case Studies

Terephthalate Biosensor Optimization

A compelling case study in the application of DoE for biosensor optimization comes from the development of TphR-based terephthalate biosensors for monitoring polyethylene terephthalate (PET) plastic degradation. Researchers used a DoE approach to build a framework for efficiently engineering activator-based biosensors with tailored performance [23]. By simultaneously engineering the core promoter and operator regions of the responsive promoter, and by employing a dual refactoring approach, they explored an enhanced biosensor design space and attributed the observed performance effects to the design changes that caused them [23].

This approach enabled the development of tailored biosensors with enhanced dynamic range and diverse signal output, sensitivity, and steepness. The optimized biosensors were subsequently applied to primary screening of PET hydrolases and enzyme condition screening, demonstrating the potential of statistical modeling in optimizing biosensors for tailored industrial and environmental applications [23].

NADH/NAD+ Biosensor Development

Another significant application of systematic biosensor optimization appears in the development of genetically encoded fluorescent NADH/NAD+ biosensors such as Peredox, SoNar, and Frex [24]. These biosensors, which utilize circularly permuted fluorescent proteins (cpFPs) coupled with bacterial NADH-binding proteins, enable monitoring of cellular redox states through ratiometric measurement approaches.

Research in this area has led to the development of novel analysis methods that use the fractional intensities of time-resolved fluorescence. When the conformations of these biosensors change upon NADH/NAD+ binding, the fractional intensities (αᵢτᵢ) of the decay components change in opposite directions, and their ratios can be used to quantify NADH/NAD+ levels with a larger dynamic range and higher resolution than the commonly used fluorescence intensity and lifetime methods [24]. This approach requires only one excitation and one emission wavelength, simplifying the design while achieving highly sensitive analyte quantification.
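A minimal sketch of the fractional-intensity ratio follows, assuming a two-component exponential decay with amplitudes αᵢ and lifetimes τᵢ and the standard normalization fᵢ = αᵢτᵢ / Σⱼ αⱼτⱼ. The amplitudes and lifetimes are hypothetical, and the choice of which component goes in the numerator is an assumption rather than the convention of [24].

```python
def fractional_intensities(amplitudes, lifetimes):
    """Fractional intensity of each decay component: a_i*tau_i / sum_j(a_j*tau_j)."""
    weighted = [a * t for a, t in zip(amplitudes, lifetimes)]
    total = sum(weighted)
    return [w / total for w in weighted]

def fractional_intensity_ratio(amplitudes, lifetimes):
    """Ratio f_1 / f_2 for a two-component decay (component order is an assumption)."""
    f1, f2 = fractional_intensities(amplitudes, lifetimes)
    return f1 / f2

# Hypothetical biexponential fit results (amplitudes unitless, lifetimes in ns)
# for two sensor states; only the amplitudes differ between the states here.
ratio_state_a = fractional_intensity_ratio([0.7, 0.3], [0.5, 3.0])
ratio_state_b = fractional_intensity_ratio([0.4, 0.6], [0.5, 3.0])
print(ratio_state_a, ratio_state_b)
```

Because one fraction rises as the other falls, their ratio changes more steeply than either quantity alone, which is consistent with the larger dynamic range described above.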

The integration of Design of Experiments with automated biosensor engineering workflows represents a paradigm shift in genetic circuit optimization. This approach provides an agnostic framework for the development and optimization of future biosensor systems and genetic circuits, contributing a valuable regulatory toolkit for the synthetic biology community [21]. As the field advances, several emerging trends are likely to shape future applications of DoE in biosensor development:

First, the increasing adoption of machine learning approaches, particularly artificial neural networks, as alternatives or complements to traditional RSM methods promises to enhance the efficiency of design space exploration [22]. These techniques may prove particularly valuable for modeling complex, non-linear relationships between genetic factors and biosensor performance.

Second, the continued development of lab-on-a-chip technologies and microfluidic platforms will further enhance the throughput of biosensor characterization, enabling more comprehensive sampling of complex design spaces with reduced resource requirements [20]. These advancements are particularly relevant for space exploration and extreme environment monitoring, where portable, efficient biosensing platforms are essential [20].

Finally, the growing repository of well-characterized genetic parts and their quantitative performance data will facilitate more predictive biosensor design, potentially reducing the experimental burden required for optimization. As these databases expand, in silico design and optimization will play an increasingly prominent role in the biosensor development pipeline.