Statistical Validation of Biosensor Calibration Curves: A Framework for Accuracy, Compliance, and Clinical Translation


Abstract

This article provides a comprehensive guide to the statistical validation of biosensor calibration curves, a critical process for ensuring the accuracy, reliability, and regulatory compliance of biosensing technologies in drug development and clinical diagnostics. We explore the foundational principles of calibration, including key parameters like Limit of Detection (LOD), sensitivity, and linearity. The content details methodological approaches for constructing and analyzing curves across electrochemical, optical, and genetically encoded biosensors. A significant focus is placed on troubleshooting common issues such as signal drift and non-specific binding, and on leveraging machine learning for optimization. Finally, the article outlines rigorous validation protocols and comparative analyses of statistical models to equip researchers and scientists with the tools needed for robust biosensor deployment and successful clinical translation.

The Fundamentals of Biosensor Calibration: Principles, Parameters, and Purpose

In the field of biosensing, the calibration curve serves as the fundamental bridge connecting a biological recognition event to a quantifiable analytical signal. It is the mathematical model that transforms raw sensor output—whether electrochemical current, optical shift, or fluorescence intensity—into a reliable concentration measurement of the target analyte. The statistical validation of this curve is paramount, as it directly determines the accuracy, precision, and ultimate utility of any biosensor for applications in research, clinical diagnostics, and drug development. This guide provides a comparative evaluation of how different biosensor architectures and biorecognition elements influence the construction and performance of calibration curves, supported by experimental data and detailed methodologies.

A biosensor's performance is fundamentally governed by the interaction between its biological element (e.g., enzyme, antibody, aptamer, or whole cell) and the transducer. The calibration curve is the functional representation of this interaction, and its characteristics—linear range, limit of detection (LOD), sensitivity, and stability—vary significantly based on the underlying technology. Understanding these differences is crucial for selecting the appropriate biosensor for a given application and for the rigorous statistical validation required in regulated environments.

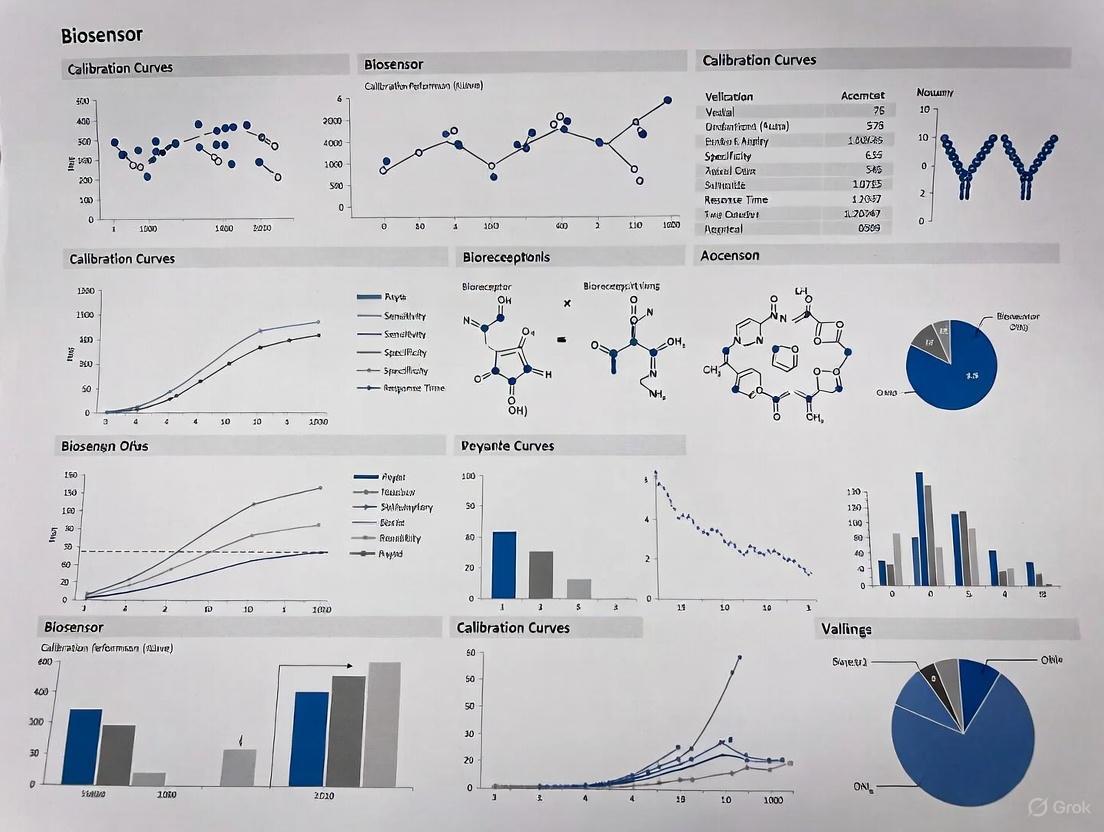

Comparative Analysis of Biosensor Calibration Performance

The following table summarizes the key analytical performance parameters of different biosensor types, as evidenced by recent experimental studies.

Table 1: Comparative Analytical Performance of Different Biosensor Platforms

| Biosensor Type / Biorecognition Element | Target Analyte | Linear Range | Limit of Detection (LOD) | Sensitivity | Key Observation |
|---|---|---|---|---|---|
| Amperometric (POx-based) [1] | Alanine Aminotransferase (ALT) | 1–500 U/L | 1 U/L | 0.75 nA/min at 100 U/L | Higher sensitivity, lower detection limit [1] |
| Amperometric (GlOx-based) [1] | Alanine Aminotransferase (ALT) | 5–500 U/L | 1 U/L | 0.49 nA/min at 100 U/L | Greater stability in complex solutions [1] |
| Electrochemical Aptamer-based (EAB) [2] | Vancomycin | Clinical Range (e.g., 6–42 µM) | N/R | N/R | Accuracy better than ±10% in whole blood at 37°C [2] |
| Genetically Engineered Microbial (GEM) [3] | Cd²⁺, Zn²⁺, Pb²⁺ | 1–6 ppb | N/R | R²: 0.9809 (Cd²⁺) | Specific detection of bioavailable heavy metals [3] |
| Silicon Photonic Microring (WGM) [4] | Cytokines | Sub-picomolar | Sub-picomolar | N/R | Achieved via enzymatically enhanced sandwich immunoassay [4] |
| Electrochemical Immunosensor [5] | Tau-441 Protein | 1 fM – 1 nM | 0.14 fM | N/R | High selectivity in human serum [5] |

N/R: Not explicitly reported in the context of the study.

Experimental Protocols for Biosensor Calibration

Protocol 1: Calibration of Amperometric Enzyme Biosensors for ALT Detection

This protocol details the methodology for constructing and calibrating biosensors for liver enzyme ALT, comparing two different oxidase-based biorecognition pathways [1].

- Biosensor Fabrication: Platinum disc working electrodes are first modified with a semi-permeable poly(meta-phenylenediamine) membrane via electrochemical polymerization to block interferents. The biorecognition layer is then immobilized using one of two methods:

- Pyruvate Oxidase (POx) Entrapment: An enzyme gel containing POx, glycerol, BSA, and HEPES buffer (pH 7.4) is mixed with a photopolymer (PVA-SbQ) and applied to the electrode. The layer is photopolymerized under UV light (365 nm) [1].

- Glutamate Oxidase (GlOx) Crosslinking: An enzyme gel containing GlOx, glycerol, and BSA in phosphate buffer (pH 6.5) is mixed with glutaraldehyde (GA) and applied to the electrode. The layer is air-dried to form covalent crosslinks [1].

- Calibration Curve Generation: Measurements are conducted in a stirred cell at room temperature with an applied potential of +0.6 V vs. Ag/AgCl. The biosensor is exposed to standard solutions of known ALT activity. The steady-state response (reported in nA/min) is plotted against the corresponding ALT activity (U/L) to generate the calibration curve. Key parameters such as linear range, LOD, and sensitivity are derived from these data [1].

Protocol 2: Calibration of Electrochemical Aptamer-Based (EAB) Sensors

This protocol outlines the calibration of EAB sensors for real-time, in-vivo measurement of molecules like the antibiotic vancomycin, highlighting the critical importance of matching calibration conditions to the measurement environment [2].

- Sensor Interrogation: The EAB sensor is interrogated using square wave voltammetry (SWV). The voltammogram peak currents are collected over a range of target concentrations. To correct for drift and enhance gain, voltammograms are collected at two frequencies and converted into a "Kinetic Differential Measurement" (KDM) value [2].

- Calibration Curve Fitting: The averaged KDM values are fitted to a Hill-Langmuir isotherm to generate the calibration curve. The equation used is:

$$\mathrm{KDM} = \mathrm{KDM}_{\min} + \frac{(\mathrm{KDM}_{\max} - \mathrm{KDM}_{\min})\,[\mathrm{Target}]^{n_H}}{[\mathrm{Target}]^{n_H} + K_{1/2}^{\,n_H}}$$

where $\mathrm{KDM}_{\min}$ and $\mathrm{KDM}_{\max}$ are the minimum and maximum KDM values, $n_H$ is the Hill coefficient, and $K_{1/2}$ is the midpoint of the binding curve [2].

- Critical Calibration Parameters: For accurate quantification, calibration must be performed in a medium that closely mimics the in-vivo environment. Using freshly collected, undiluted whole blood at body temperature (37°C) as the calibration matrix is essential, as sensor response (gain and binding curve midpoint) is highly sensitive to temperature and media composition [2].
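Once the four Hill-Langmuir parameters have been fitted, turning a measured KDM value back into a concentration only requires solving the isotherm for [Target]. The sketch below illustrates that forward and inverse relationship. The function names and the parameter values are hypothetical and chosen for illustration only; they are not taken from [2].

```python
def hill_langmuir(target, kdm_min, kdm_max, k_half, n_h):
    """Forward model: KDM predicted at a given target concentration."""
    t = target ** n_h
    return kdm_min + (kdm_max - kdm_min) * t / (t + k_half ** n_h)


def invert_hill_langmuir(kdm, kdm_min, kdm_max, k_half, n_h):
    """Inverse model: target concentration that produces the observed KDM.

    Rearranging the isotherm gives
    [Target] = K_half * ((KDM - KDM_min) / (KDM_max - KDM)) ** (1 / n_H).
    """
    low, high = min(kdm_min, kdm_max), max(kdm_min, kdm_max)
    if not (low < kdm < high):
        raise ValueError("KDM lies outside the fitted range and cannot be inverted")
    fraction = (kdm - kdm_min) / (kdm_max - kdm)
    return k_half * fraction ** (1.0 / n_h)


# Hypothetical fitted parameters (illustrative values, not from the cited study).
params = dict(kdm_min=0.05, kdm_max=0.90, k_half=35.0, n_h=1.3)

observed = hill_langmuir(20.0, **params)          # simulate a reading at 20 units
estimate = invert_hill_langmuir(observed, **params)
print(f"KDM = {observed:.4f} -> estimated concentration = {estimate:.2f}")
```

The inversion is only defined strictly between KDM_min and KDM_max, which is why readings near either plateau translate into large concentration uncertainty.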

Visualizing Biosensor Calibration Workflows

The following diagrams illustrate the logical workflow for comparative biosensor evaluation and the general process of calibration curve generation.

Figure 1: A logical workflow for the comparative evaluation of different biosensor designs, leading to the generation of performance data.

Figure 2: The generalized workflow for generating and validating a biosensor calibration curve.

The Scientist's Toolkit: Essential Reagents and Materials

Successful biosensor development and calibration rely on a suite of specialized reagents and materials. The following table details key components and their functions in the experimental process.

Table 2: Key Research Reagent Solutions for Biosensor Development and Calibration

| Reagent / Material | Function in Biosensor Development & Calibration |
|---|---|
| Biorecognition Elements (Enzymes, Antibodies, Aptamers) | The core of biosensor specificity; binds the target analyte to initiate the signaling cascade [1] [2] [4]. |
| Cross-linking Reagents (Glutaraldehyde, BS³) | Covalently immobilizes the biorecognition element onto the transducer surface, ensuring stability and reusability [1] [4]. |
| Polymer Matrices (PVA-SbQ) | Entraps enzymes for immobilization via photopolymerization, forming a stable, permeable hydrogel layer [1]. |
| Electrode Modifiers (Multi-walled Carbon Nanotubes, Ionic Liquids) | Enhances the electroactive surface area and electron transfer kinetics of electrochemical transducers, improving sensitivity [6]. |
| Signal Probes (Streptavidin-Horseradish Peroxidase, SA-HRP) | Used in sandwich-type assays for enzymatic signal amplification, drastically improving the limit of detection [4]. |
| Blocking Agents (BSA, StartingBlock Buffer) | Minimizes non-specific binding to the sensor surface, thereby improving signal-to-noise ratio and assay specificity [4]. |

The process of defining a biosensor's calibration curve is a critical exercise in statistical validation, directly impacted by the choice of biological recognition element and transduction mechanism. As demonstrated, a Pyruvate Oxidase-based amperometric biosensor offers superior sensitivity for ALT detection, whereas a Glutamate Oxidase-based configuration trades some sensitivity for enhanced robustness in complex media [1]. For in-vivo applications, EAB sensors underscore the non-negotiable requirement that calibration conditions must rigorously match the measurement environment in terms of matrix, temperature, and sample freshness to achieve clinical-grade accuracy [2]. Ultimately, the selection of a biosensor platform and the validation of its calibration model must be guided by the specific analytical requirements of the application, including the required detection limits, operational environment, and the need for multiplexing. A deep understanding of the interplay between biorecognition chemistry and signal transduction is essential for transforming a raw biosensor signal into a reliable, quantifiable measure of biological activity.

In biosensor research and development, the analytical validation of a sensing platform is paramount to establishing its reliability and utility for practical applications. The process involves statistically rigorous evaluation of core performance parameters to ensure the device produces accurate, reproducible, and meaningful data. Among these parameters, sensitivity, limit of detection (LOD), limit of quantification (LOQ), and linear range form the foundation for assessing biosensor capability [7]. These figures of merit determine whether a biosensor is suitable for detecting target analytes at clinically, environmentally, or industrially relevant concentrations. Proper characterization of these parameters through established statistical methods allows researchers to objectively compare different biosensing platforms and provides regulatory bodies with standardized metrics for approval.

The calibration curve serves as the central element in this validation process, providing the mathematical relationship between the biosensor's response and the analyte concentration. According to established analytical chemistry principles, the correct evaluation of sensor measurements requires strict adherence to definitions outlined in authoritative sources such as the Compendium of Analytical Nomenclature [7]. In the biosensing literature, these terms are sometimes misused, particularly sensitivity, which is properly defined as the slope of the calibration curve, and LOD, which is the lowest concentration distinguishable from background noise. This guide systematically examines each core parameter, provides standardized methodologies for their determination, and compares performance across diverse biosensor technologies to establish a framework for rigorous statistical validation.

Defining the Core Statistical Parameters

Sensitivity

In analytical chemistry and biosensing, sensitivity is formally defined as the slope of the calibration curve, representing the change in sensor response per unit change in analyte concentration [7]. This parameter should not be confused with the limit of detection, though these terms are sometimes mistakenly used interchangeably in literature. Sensitivity is quantitatively expressed with units of signal per concentration (e.g., μA·mL/ng, nm/RIU, or Hz/decade) and reflects how effectively a biosensor translates molecular recognition events into measurable signals. Higher sensitivity enables detection of smaller concentration changes, which is particularly crucial for applications requiring measurement of trace analytes such as disease biomarkers or environmental contaminants.

The sensitivity of a biosensor depends on multiple factors including the transduction mechanism, biorecognition element affinity, and surface functionalization quality. For instance, in an electrochemical impedance biosensor developed for monitoring Systemic Lupus Erythematosus, the sensitivity allowed detection of vascular cell adhesion molecule-1 (VCAM-1) in the range of 8 fg/ml to 800 pg/ml [8]. In optical biosensors, such as a graphene metasurface COVID-19 biosensor, sensitivity can reach 4000 nm/RIU (nanometers of spectral shift per refractive index unit), indicating a substantial spectral shift per unit change in refractive index [9]. These examples highlight how different transduction principles yield different sensitivity values and measurement units.

Limit of Detection (LOD)

The limit of detection (LOD) is defined as the lowest concentration of an analyte that can be reliably distinguished from the blank or background signal, but not necessarily quantified as an exact value [7]. Statistically, the LOD is typically determined using the formula LOD = 3.3 × σ/S, where σ is the standard deviation of the blank measurements (or, alternatively, the standard deviation of the y-intercept of the calibration curve) and S is the sensitivity (slope) of the calibration curve [10]. The LOD is a critical parameter for assessing biosensor utility in early disease diagnosis or trace contaminant monitoring, where target analytes appear at very low concentrations.

The pursuit of increasingly lower LODs has driven substantial innovation in biosensor research, particularly through nanomaterials and signal amplification strategies. However, a significant paradox has emerged where extremely low LODs sometimes exceed practical requirements for specific applications [11]. For example, a biosensor capable of detecting picomolar concentrations of a biomarker represents a technical achievement, but becomes redundant if the biomarker's clinical relevance occurs in the nanomolar range. This emphasizes that LOD requirements must be guided by the intended application rather than technological capability alone.

Limit of Quantification (LOQ)

The limit of quantification (LOQ) represents the lowest concentration at which the analyte can not only be reliably detected but also quantified with acceptable precision and accuracy [10]. Statistically, the LOQ is calculated as LOQ = 10 × σ/S, where σ is the standard deviation of the blank and S is the sensitivity. While the LOD establishes the detection threshold, the LOQ defines the quantification threshold, making it a more stringent parameter for analytical applications requiring precise concentration measurements.

Together, the LOD and LOQ define the lower end of a biosensor's working range: concentrations between the two values can be detected but not precisely quantified. In a Genetically Engineered Microbial (GEM) biosensor for detecting Cd²⁺, Zn²⁺, and Pb²⁺, the linear quantification range was established between 1-6 ppb, with LOQ values ensuring reliable quantification within this interval [12]. For electronic noses (eNoses) used in beer maturation monitoring, determining LOQ for compounds like diacetyl was essential for assessing the technology's suitability for process control [10].

Linear Range

The linear range defines the concentration interval over which the biosensor response demonstrates a linear relationship with analyte concentration, typically evaluated through the coefficient of determination (R²) of the calibration curve [12]. This parameter determines the operational range where quantitative analysis can be performed without additional curve fitting or dilution protocols. The linear range is bounded at the lower end by the LOQ and at the upper end by signal saturation or nonlinear response.

A wide linear range is advantageous for applications where analyte concentration can vary significantly, such as therapeutic drug monitoring or environmental pollutant tracking. In the impedance biosensor for VCAM-1 detection, the linear range spanned from 8 fg/ml to 800 pg/ml, covering several orders of magnitude and making it suitable for clinical monitoring [8]. The dynamic range should encompass the physiologically or environmentally relevant concentrations for the target application, with considerations for potential dilution or concentration steps in sample preparation.

Table 1: Core Statistical Parameters for Biosensor Validation

| Parameter | Definition | Statistical Determination | Practical Significance |
|---|---|---|---|
| Sensitivity | Slope of the calibration curve | S = ΔSignal/ΔConcentration | Determines magnitude of response to concentration change |
| Limit of Detection (LOD) | Lowest detectable concentration | LOD = 3.3 × σ/S | Defines detection capability for trace analysis |
| Limit of Quantification (LOQ) | Lowest quantifiable concentration | LOQ = 10 × σ/S | Establishes lower limit for precise quantification |
| Linear Range | Concentration interval with linear response | Range between LOQ and signal saturation | Defines operational range for quantitative analysis |

Experimental Protocols for Parameter Determination

Calibration Curve Development

The foundation for determining all core statistical parameters is establishing a robust calibration curve. The standard protocol involves preparing a series of standard solutions with known analyte concentrations spanning the expected working range. For a novel GEM biosensor detecting heavy metals, researchers prepared stock solutions of Cd²⁺, Pb²⁺, and Zn²⁺ at 100 ppm, followed by serial dilution to create standards of 0.1, 0.5, 1.0, 2.0, 3.0, 4.0, and 5.0 ppm [12]. Each concentration should be measured with multiple replicates (typically n ≥ 3) in random order to account for experimental variability and potential drift. The biosensor response is recorded for each standard, and the data is plotted as response versus concentration.

The relationship between signal and concentration is then modeled mathematically, most commonly with linear regression, though other models may be appropriate for nonlinear systems. For the impedance biosensor detecting VCAM-1, calibration was performed with n = 5 replicates, with error calculated as the standard deviation divided by the mean (the coefficient of variation) [8]. The resulting curve should include error bars representing the variability at each concentration point, giving a visual indication of measurement precision across the range.
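As a minimal sketch of the calibration step described above, the following example summarizes replicate measurements at each standard (mean, standard deviation, and coefficient of variation) and fits an ordinary least-squares line to all individual readings. The replicate values, units, and function names are hypothetical and serve only to illustrate the calculation.

```python
from statistics import mean, stdev


def fit_line(x, y):
    """Ordinary least-squares fit y = slope * x + intercept; also returns R^2."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot


def summarize(values):
    """Mean, sample standard deviation, and coefficient of variation (SD / mean)."""
    m, s = mean(values), stdev(values)
    return m, s, s / m


# Hypothetical calibration data: concentration -> replicate sensor readings (n = 3).
replicates = {
    1.0: [2.4, 2.5, 2.6],
    2.0: [4.4, 4.5, 4.6],
    3.0: [6.5, 6.6, 6.4],
    4.0: [8.4, 8.6, 8.5],
    5.0: [10.5, 10.4, 10.6],
}

for conc, values in replicates.items():
    m, s, cv = summarize(values)
    print(f"c = {conc}: mean = {m:.2f}, SD = {s:.3f}, CV = {100 * cv:.1f}%")

# Fit on every individual reading so replicate scatter contributes to R^2.
x = [conc for conc, values in replicates.items() for _ in values]
y = [v for values in replicates.values() for v in values]
slope, intercept, r_squared = fit_line(x, y)
print(f"sensitivity (slope) = {slope:.3f}, intercept = {intercept:.3f}, R^2 = {r_squared:.4f}")
```

Fitting the individual readings rather than the per-level means keeps the replicate scatter in the residuals, which matters for the regression-based LOD estimates discussed later.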

LOD and LOQ Calculation Methods

The standard approach for determining LOD and LOQ involves measuring the response of blank samples (containing all components except the analyte) to establish the baseline noise level. The standard deviation (σ) of these blank measurements is calculated, then used in the LOD = 3.3σ/S and LOQ = 10σ/S formulas, where S is the sensitivity (slope) from the calibration curve [10]. For multidimensional detection systems like electronic noses (eNoses), LOD determination requires specialized approaches such as principal component regression (PCR) or partial least squares regression (PLSR) to handle the multivariate data [10].
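The blank-based calculation can be sketched in a few lines. The blank readings and the slope below are hypothetical, and the function name is an illustrative choice; the sketch simply applies LOD = 3.3σ/S and LOQ = 10σ/S as stated above.

```python
from statistics import stdev


def lod_loq_from_blanks(blank_signals, slope):
    """Return (LOD, LOQ) in concentration units from blank replicates and the slope."""
    if slope == 0:
        raise ValueError("slope (sensitivity) must be non-zero")
    sigma = stdev(blank_signals)  # sample standard deviation of the blank response
    return 3.3 * sigma / abs(slope), 10.0 * sigma / abs(slope)


# Hypothetical blank readings (signal units) and calibration slope
# (signal units per concentration unit).
blanks = [0.10, 0.12, 0.08, 0.11, 0.09]
lod, loq = lod_loq_from_blanks(blanks, slope=2.0)
print(f"LOD = {lod:.4f}, LOQ = {loq:.4f} (concentration units)")
```

Because both limits scale with the same σ/S ratio, the LOQ is always about three times the LOD under this convention.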

Alternative methods for LOD/LOQ determination use the standard deviation of the y-intercept of the calibration curve, or the confidence interval around the calibration curve, in place of the blank standard deviation. These methods are particularly useful when blank measurements are not feasible or when working with complex sample matrices that may introduce interfering signals. The specific calculation method should be clearly reported in experimental procedures to ensure proper interpretation and comparison across studies.
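A sketch of the regression-based alternative is shown below, using the standard ordinary least-squares expressions for the residual standard deviation and the standard error of the intercept. The calibration points and the function name are hypothetical. Whether the intercept standard error or the residual standard deviation is used as σ is a reporting choice, so the sketch returns both.

```python
from math import sqrt


def regression_lod_loq(x, y):
    """Estimate LOD/LOQ from calibration data alone (no blank measurements)."""
    n = len(x)
    if n < 3:
        raise ValueError("at least three calibration points are required")
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    s_yx = sqrt(ss_res / (n - 2))                              # residual standard deviation
    s_intercept = s_yx * sqrt(sum(xi ** 2 for xi in x) / (n * sxx))  # SE of the intercept
    return {
        "slope": slope,
        "intercept": intercept,
        "s_yx": s_yx,
        "s_intercept": s_intercept,
        "lod": 3.3 * s_intercept / abs(slope),
        "loq": 10.0 * s_intercept / abs(slope),
    }


# Hypothetical calibration points (concentration, signal).
result = regression_lod_loq([1, 2, 3, 4, 5], [2.6, 4.4, 6.5, 8.6, 10.4])
for name, value in result.items():
    print(f"{name}: {value:.4f}")
```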

Sensitivity and Linear Range Assessment

Sensitivity is determined directly as the slope of the linear portion of the calibration curve, with steeper slopes indicating higher sensitivity. For the COVID-19 graphene metasurfaces biosensor, sensitivity was calculated based on wavelength shift per refractive index unit (nm/RIU), reaching 4000 nm/RIU [9]. The linear range is identified by determining the concentration interval where the calibration curve maintains linearity, typically with R² ≥ 0.990, though specific applications may require different thresholds.

The upper limit of the linear range is identified as the point where the sensor response deviates from linearity by more than 5% or where the R² value falls below acceptable limits. This assessment should include statistical tests for linearity, such as analysis of residuals or lack-of-fit tests, to ensure the linear model appropriately describes the relationship between concentration and response throughout the reported range.
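One simple way to apply the 5% deviation criterion is sketched below. It assumes the lowest few standards are known to lie in the linear region, fits a line through them, and then walks up the concentration series until a reading deviates from the extrapolated line by more than the tolerance. The data, the choice of three anchor points, and the function names are hypothetical; a formal lack-of-fit or residual analysis would complement this check rather than be replaced by it.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


def upper_linear_limit(conc, signal, n_anchor=3, tolerance=0.05):
    """Highest concentration whose signal stays within `tolerance` of the line.

    `conc` must be sorted in ascending order; the first `n_anchor` points are
    assumed to be linear and define the reference line.
    """
    slope, intercept = fit_line(conc[:n_anchor], signal[:n_anchor])
    limit = conc[n_anchor - 1]
    for c, s in zip(conc[n_anchor:], signal[n_anchor:]):
        predicted = slope * c + intercept
        if abs(s - predicted) / abs(predicted) > tolerance:
            break  # first point outside tolerance ends the linear range
        limit = c
    return limit


# Hypothetical response that begins to saturate above a concentration of 4.
conc = [1, 2, 3, 4, 5, 6]
signal = [2.0, 4.0, 6.0, 7.8, 9.2, 10.0]
print("upper limit of linear range:", upper_linear_limit(conc, signal))
```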

Comparative Performance Analysis of Biosensor Technologies

Electrochemical Biosensors

Electrochemical biosensors, including impedimetric, amperometric, and potentiometric systems, represent some of the most widely developed biosensing platforms due to their cost-effectiveness, ease of miniaturization, and compatibility with point-of-care applications. The impedance biosensor for VCAM-1 detection demonstrated a detection range of 8 fg/ml to 800 pg/ml, with comparative analysis against ELISA platforms performed for 12 patient urine samples [8]. This wide dynamic range, spanning several orders of magnitude, highlights the capability of electrochemical platforms for clinical applications requiring detection of biomarkers across physiological and pathological concentrations.

The LOD paradox discussed in literature is particularly relevant for electrochemical biosensors, where extremely low detection limits may be technologically impressive but clinically unnecessary [11]. For instance, a biosensor detecting cardiac troponin at femtogram levels offers limited practical advantage over nanogram detection when clinical decision thresholds occur in the nanogram range. This emphasizes the importance of aligning sensor development with application requirements rather than pursuing lower LODs as an absolute metric of success.

Optical Biosensors

Optical biosensors, including surface plasmon resonance (SPR), photonic crystal fiber (PCF), and metasurface-based platforms, offer exceptional sensitivity and real-time detection capabilities. The graphene metasurfaces COVID-19 biosensor demonstrated remarkable sensitivity of 4000 nm/RIU with a detection limit of 0.078 in the infrared regime [9]. Similarly, advanced SPR-PCF biosensors have achieved wavelength sensitivities of 29,000 nm/RIU with resolution as low as 1.72 × 10⁻⁶ RIU [9]. These exceptional figures of merit make optical platforms particularly suitable for applications requiring ultra-sensitive detection or molecular interaction analysis.

The linear range of optical biosensors can sometimes be more limited than that of electrochemical systems due to signal saturation effects at higher concentrations. However, innovations in material science and detection schemes continue to expand these limits. The integration of machine learning optimization, as demonstrated by the COVID-19 biosensor, for which an R² of 100% between predicted and experimental values was reported, can further improve the reliability of parameter quantification within the linear range [9].

Genetically Engineered Microbial (GEM) Biosensors

Whole-cell biosensors utilizing genetically modified microorganisms offer unique advantages for detecting bioavailable fractions of contaminants and providing functional assessment of toxicity. The GEM biosensor developed for Cd²⁺, Zn²⁺, and Pb²⁺ detection exhibited linear quantification for these metals in the 1-6 ppb range, with R² values of 0.9809, 0.9761, and 0.9758 respectively [12]. The biosensor maintained normal physiological growth characteristics, enabling sustained monitoring capability—a crucial advantage for environmental applications.

GEM biosensors typically exhibit higher LODs than analytical instruments but provide information about bioavailability and toxicity that pure chemical analysis cannot offer. The calibration of these systems must account for biological factors such as growth phase, temperature, and nutrient availability, which can influence reporter gene expression independent of analyte concentration [12]. For the heavy metal GEM biosensor, optimal performance was achieved at 37°C and pH 7.0, resembling wildtype E. coli physiological conditions [12].

Table 2: Comparative Performance of Biosensor Technologies

| Biosensor Type | Representative LOD | Representative Linear Range | Key Applications | Advantages | Limitations |
|---|---|---|---|---|---|
| Electrochemical Impedance | 8 fg/ml (VCAM-1) [8] | 8 fg/ml - 800 pg/ml [8] | Clinical diagnostics, point-of-care testing | Low cost, portable, compatible with complex fluids | Matrix effects, requires reference electrode |
| Optical Metasurfaces | 0.078 (Detection Limit) [9] | Not specified | Viral detection, biomarker analysis | Ultra-high sensitivity, label-free detection | Complex fabrication, potential signal saturation |
| GEM Whole-Cell | 1 ppb (Cd²⁺, Zn²⁺, Pb²⁺) [12] | 1-6 ppb [12] | Environmental monitoring, toxicity assessment | Detects bioavailability, functional response | Lower specificity, biological variability |
| Electronic Noses | Compound-dependent [10] | Varies by analyte [10] | Food quality, process monitoring | Pattern recognition, multi-analyte capability | Drift issues, complex data analysis |

Research Reagent Solutions and Materials

Table 3: Essential Research Reagents and Materials for Biosensor Development

| Reagent/Material | Function | Example Application |
|---|---|---|
| Dithiobis succinimidyl propionate (DSP) | Cross-linker for antibody immobilization | Gold electrode functionalization in impedance biosensors [8] |
| Capture and Detection Antibodies | Biorecognition elements for target analyte | VCAM-1 detection in SLE monitoring [8] |
| Superblock Buffer | Blocks non-specific binding sites | Minimizes background signal in immunoassays [8] |
| Molecularly Imprinted Polymers | Biomimetic recognition elements | Synthetic alternatives to biological receptors [7] |
| Enhanced Green Fluorescent Protein (eGFP) | Reporter gene in whole-cell biosensors | Heavy metal detection in GEM biosensors [12] |
| Graphene Metasurfaces | Transduction element enhancing sensitivity | COVID-19 detection in infrared regime [9] |
| Metal Oxide Semiconductors | Sensing elements in eNose arrays | Beer maturation monitoring [10] |
| Electrochemical Cell with Potentiostat | Signal transduction and measurement | Impedance spectroscopy characterization [8] |

The statistical validation of biosensor performance through rigorous determination of sensitivity, LOD, LOQ, and linear range remains fundamental to technology development and implementation. These interconnected parameters provide a comprehensive framework for assessing analytical capability and application suitability. The comparative analysis presented in this guide demonstrates that optimal biosensor selection depends on aligning technical performance with application requirements, rather than pursuing extreme values in any single parameter. As the biosensing field evolves, standardized reporting of these core statistical parameters will enhance cross-study comparisons and accelerate the translation of research innovations into practical solutions for healthcare, environmental monitoring, and industrial process control. Future directions should emphasize the development of universal calibration protocols, particularly for emerging biosensor categories, to ensure consistent and reproducible performance validation across the research community.

In the fields of drug development and biomedical research, the generation of reliable, reproducible data is non-negotiable. Biosensors, which translate biological events into quantifiable signals, have become indispensable tools for monitoring biochemical activities in live cells, tracking therapeutic responses, and understanding disease mechanisms. However, the raw signal from a biosensor is often a complex product of biological activity, physical sensor properties, and instrumental variables. Robust calibration provides the critical link between this raw output and scientifically valid, quantitatively accurate data. It establishes a controlled framework that ensures measurements are consistent, comparable over time, and traceable to recognized standards—cornerstones of both data integrity and regulatory compliance.

The challenge is particularly acute for sensitive techniques like Förster resonance energy transfer (FRET) biosensors, where the commonly used acceptor-to-donor signal ratio (FRET ratio) is highly sensitive to imaging parameters such as laser intensity and detector sensitivity [13] [14]. Without proper calibration, data interpretation becomes complicated, and comparisons across different experimental sessions are fraught with uncertainty. Furthermore, regulatory bodies like the FDA and EMA impose strict requirements for data integrity and instrument performance in pharmaceutical and biotech settings, where inadequate calibration protocols can lead to severe consequences including product recalls and reputational damage [15]. This guide explores how implementing rigorous calibration methodologies, supported by statistical validation of calibration curves, is essential for transforming biosensors from qualitative indicators into trustworthy quantitative instruments.

Calibration Methodologies for Advanced Biosensors

Calibration Strategies for FRET Biosensors

FRET biosensors, which rely on energy transfer between donor and acceptor fluorescent proteins, are powerful tools for monitoring the spatiotemporal dynamics of molecular activities. A recent approach addresses signal variability by incorporating calibration standards directly into the experimental setup using fluorescent protein (FP)-based barcodes [13] [14].

Theoretical modeling and experimental validation have demonstrated that both high- and low-FRET standards are necessary for effective calibration under different excitation intensities. Researchers have engineered "FRET-ON" and "FRET-OFF" standards that, when imaged in barcoded cells, enable normalization of fluorescence signals independent of imaging conditions [14]. This method also facilitates multiplexed imaging of multiple biosensors simultaneously.

The experimental workflow involves:

- Preparation of Control Cells: Generate donor-only, acceptor-only, FRET-ON (high FRET efficiency), and FRET-OFF (low FRET efficiency) cell lines.

- Barcoding and Mixing: Label these control cells and experimental cells expressing the biosensor of interest with distinct pairs of barcoding proteins (blue or red FPs targeted to different subcellular locations).

- Simultaneous Imaging: Image the mixed population of barcoded cells under standardized conditions.

- Signal Normalization: Use the signals from the FRET-ON and FRET-OFF standards to normalize the FRET ratio of the experimental biosensor, compensating for variability in laser power, detector sensitivity, and optical path differences.
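The normalization step in the workflow above can be illustrated with a simple two-point rescaling, in which the FRET-OFF standard maps to 0 and the FRET-ON standard maps to 1. This is an assumption made for illustration: the exact normalization used in [13] [14] is not given here and may differ, and the sketch further assumes that imaging conditions distort the measured ratio approximately linearly (a gain and an offset). All numbers and names are hypothetical.

```python
def normalize_fret(ratio, ratio_off, ratio_on):
    """Rescale a FRET ratio so FRET-OFF -> 0 and FRET-ON -> 1 (two-point normalization)."""
    span = ratio_on - ratio_off
    if span == 0:
        raise ValueError("FRET-ON and FRET-OFF standards must differ")
    return (ratio - ratio_off) / span


# Hypothetical readings from two imaging sessions with different laser/detector
# settings. Session B is session A distorted by a gain of 1.4 and an offset of 0.1.
session_a = {"off": 0.5, "on": 2.5, "sensor": 1.5}
session_b = {"off": 0.8, "on": 3.6, "sensor": 2.2}

for name, s in (("A", session_a), ("B", session_b)):
    normalized = normalize_fret(s["sensor"], s["off"], s["on"])
    print(f"session {name}: raw ratio = {s['sensor']:.2f}, normalized = {normalized:.3f}")
```

Under the stated linearity assumption, the raw ratios differ between sessions while the normalized values agree, which is the property that makes cross-session comparison possible. It also shows why both a high and a low standard are needed: a single standard can correct a gain or an offset, but not both.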

This calibration approach not only produces imaging-condition-independent results but also restores the expected reciprocal changes in donor and acceptor signals that are often obscured by imaging fluctuations and photobleaching [13].
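The normalization step can be illustrated with a minimal sketch. It assumes a simple two-point linear rescaling between the FRET-OFF and FRET-ON readings and a purely multiplicative session-to-session gain; neither assumption is taken from [13] [14], and the function name and all numbers are hypothetical.

```python
def normalize_fret_ratio(ratio, ratio_off, ratio_on):
    """Rescale a raw FRET ratio to a 0-1 scale defined by the two standards.

    Assumption: linear two-point rescaling (0 = FRET-OFF, 1 = FRET-ON).
    """
    span = ratio_on - ratio_off
    if span <= 0:
        raise ValueError("FRET-ON standard must read higher than FRET-OFF")
    return (ratio - ratio_off) / span


# Hypothetical raw acceptor/donor ratios from one imaging session.
session_a = {"off": 0.40, "on": 2.00, "sensor": 1.20}
# Second session: a detector gain change scales every ratio by 1.5 (assumed).
session_b = {name: 1.5 * value for name, value in session_a.items()}

norm_a = normalize_fret_ratio(session_a["sensor"], session_a["off"], session_a["on"])
norm_b = normalize_fret_ratio(session_b["sensor"], session_b["off"], session_b["on"])
print(norm_a, norm_b)  # the two normalized values agree despite the gain change
```

Under these assumptions the raw ratios differ by 50% between sessions while the normalized values coincide, which is the practical benefit of imaging the standards alongside the biosensor.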

Self-Calibrating Biosensor Platforms

For point-of-care applications, self-calibrating biosensor designs eliminate the need for external standards by building correction mechanisms directly into the assay platform. A prime example is the self-calibrated SERS-Lateral Flow Immunoassay (SERS-LFIA) biosensor, which integrates an internal standard for real-time signal correction [16].

This innovative biosensor for detecting protein kinase biomarker PEAK1 uses a single type of silver nanoflower (AgNF) SERS nanoprobe but incorporates a control (C) dot as a self-calibration unit. The SERS signal at the C dot corrects for signal fluctuations caused by sample heterogeneity, instrumental factors (laser power fluctuations), manual preparation variances, and inter-batch differences [16]. This internal correction significantly enhances measurement accuracy and reproducibility without requiring multiple nanomaterials.

The key experimental steps include:

- Synthesis of AgNF SERS Substrate: Prepare silver nanoflowers using a one-pot method with silver nitrate, ethanol, sodium citrate, and ascorbic acid as a reducing agent.

- Functionalization: Bind the Raman reporter molecule (4-mercaptobenzoic acid) and specific antibodies to the AgNF surface to create SERS nanoprobes.

- Biosensor Assembly: Affix nanoprobes to the conjugation pad and integrate with sample pad, nitrocellulose membrane (containing test and control lines), and absorption pad.

- Detection and Calibration: As the sample migrates, target analyte competes for binding, and the ratio of test-to-control SERS signals provides a quantitative measurement corrected for internal variations.

This self-calibration principle is particularly valuable for clinical diagnostics and therapeutic monitoring where reproducibility across samples, operators, and instruments is crucial [16].
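A short sketch of why a test-to-control ratio is robust to instrumental fluctuation follows. It assumes that laser-power changes scale the test and control signals by the same factor, which is an idealization; the intensities and the function name are hypothetical and not taken from [16].

```python
def self_calibrated_signal(test_intensity, control_intensity):
    """Return the test/control SERS intensity ratio used as the corrected readout."""
    if control_intensity <= 0:
        raise ValueError("control intensity must be positive")
    return test_intensity / control_intensity


# Hypothetical strip read three times at different relative laser powers.
true_test, true_control = 1500.0, 3000.0   # arbitrary intensity units
laser_scale = [0.8, 1.0, 1.25]

raw_test = [s * true_test for s in laser_scale]
ratios = [self_calibrated_signal(s * true_test, s * true_control) for s in laser_scale]

print(raw_test)  # raw test signal varies with laser power
print(ratios)    # ratio stays constant under the common-factor assumption
```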

Comparative Performance Analysis of Calibration Approaches

Quantitative Comparison of Biosensor Calibration Methods

The following table summarizes the performance characteristics of different calibration methodologies based on recent experimental studies:

Table 1: Performance Comparison of Biosensor Calibration Methods

| Calibration Method | Reported Detection Range | Key Advantages | Implementation Complexity | Suitable Applications |

|---|---|---|---|---|

| FRET Standard Calibration [14] | Enables actual FRET efficiency determination | Independent of imaging conditions; enables multiplexing; restores reciprocal donor/acceptor trends | High (requires engineered cell lines) | Live-cell imaging, long-term kinetic studies, multiplexed biosensing |

| Self-Calibrated SERS-LFIA [16] | 10⁻¹² mg/mL to 10⁻⁴ mg/mL for PEAK1 | Corrects for instrumental and preparation variances; uses a single nanomaterial type; rapid response | Medium (nanomaterial synthesis required) | Point-of-care testing, clinical biomarker detection, field applications |

| Traditional Calibration (Reference) | Varies by specific technique | Established protocols; wide recognition | Low to Medium | General laboratory measurements |

Impact of Calibration on Data Quality and Accuracy

Experimental data demonstrates that calibrated measurement systems show significant improvement in accuracy and reliability. In hydrodynamic model testing, for instance, a novel calibration method for six-component force sensors achieved errors below 1% for most calibration points, with maximum errors not exceeding 7% [17]. This level of precision was achieved through a calibration device based on a dual-axis rotational mechanism that enabled multi-degree-of-freedom attitude adjustment and application of known forces and moments.

In the pharmaceutical and biotech sectors, proper calibration directly impacts compliance outcomes. Regulatory agencies emphasize calibration as a critical component of quality management systems, noting that accurate calibration helps maintain data integrity, ensure batch consistency, detect instrument drift, and provide reliable results for clinical and research applications [15].

Table 2: Impact of Calibration on Measurement System Performance

| Performance Metric | Uncalibrated System | Calibrated System | Improvement Factor |

|---|---|---|---|

| Measurement Consistency | Highly variable between sessions [14] | Consistent across experiments and instruments [14] [16] | Enables cross-experimental comparison |

| Error Margin | Potentially >10-20% | <1-7% in optimized systems [17] | 2-3x reduction in error |

| Long-Term Reliability | Degrades with instrument drift | Maintained through regular calibration [15] | Prevents invalid data collection |

| Regulatory Compliance | At risk for citations [15] | Audit-ready [15] | Mitigates regulatory risk |

Statistical Validation of Calibration Curves

Essential Validation Parameters

Statistical validation of calibration curves transforms them from simple fitting exercises into metrologically sound tools for quantitative analysis. For biosensor calibration, several key parameters must be established:

Linear Range and Dynamic Range: The concentration interval over which the response is linearly proportional to analyte concentration, verified through residual analysis and lack-of-fit tests. The self-calibrated SERS-LFIA biosensor for PEAK1 demonstrated a dynamic range spanning 8 orders of magnitude (10⁻¹² to 10⁻⁴ mg/mL) [16].

Limit of Detection (LOD) and Limit of Quantification (LOQ): LOD is typically defined as 3.3 × σ/S and LOQ as 10 × σ/S, where σ is the standard deviation of the blank response and S is the slope of the calibration curve. The electrochemical immunosensor for tau-441 protein achieved an LOD of 0.14 fM, highlighting the sensitivity possible with proper calibration [5].

Accuracy and Precision: Assessed through recovery studies (% relative error) and repeated measurements (% relative standard deviation). The six-component force sensor calibration demonstrated accuracy with most errors below 1% [17].

Robustness and Ruggedness: The ability of the method to remain unaffected by small, deliberate variations in method parameters. The self-calibrated SERS-LFIA specifically addresses this through its internal correction mechanism [16].

Regulatory Compliance and Quality Assurance

Building a Compliance-Focused Calibration Program

In regulated environments like pharmaceutical development, calibration is not merely technical but a fundamental quality system component. Regulatory bodies require demonstrable control over measurement systems that generate critical data [15]. A robust calibration program should include:

Documented Calibration Plans: Structured schedules defining which instruments require calibration, frequency, and methods, referencing applicable standards such as ISO/IEC 17025 or GMP [15].

Traceability to Recognized Standards: Using calibration equipment traceable to national or international standards (e.g., NIST), which is essential for proving measurement accuracy during audits [15].

Detailed Records and Audit Trails: Documenting every calibration event including before/after values, technician information, and certificate numbers, stored securely per data integrity requirements [15].

Regular Reviews and Risk-Based Adjustments: Periodically evaluating calibration strategy effectiveness and adjusting intervals based on equipment criticality and performance history [15].

The convergence of regulatory expectations and scientific rigor makes proper calibration indispensable. As biosensors increasingly integrate with digital health platforms and AI-powered analytics, maintaining calibration integrity across connected ecosystems becomes even more critical for regulatory acceptance [18].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents for Biosensor Calibration Experiments

| Reagent/Material | Function in Calibration | Example Applications | Key Considerations |

|---|---|---|---|

| FRET-ON/FRET-OFF Standards [14] | Provides high and low FRET references for signal normalization | Live-cell FRET biosensor calibration | Require genetic engineering; Must be spectrally compatible with biosensor |

| Silver Nanoflowers (AgNF) [16] | SERS substrate with significant enhancement factor (~10⁸) | Self-calibrating SERS biosensors | Synthesis conditions affect morphology and performance |

| Fluorescent Protein Barcodes [14] | Enables multiplexed identification of different cell populations | Multiplexed biosensor imaging | Must have separable spectra from biosensor FPs |

| Reference Materials (NIST-traceable) [15] | Establishes metrological traceability for quantitative measurements | Equipment calibration across all biosensor platforms | Documentation of traceability chain is critical for compliance |

| Functionalized Nanoparticles [16] | Serves as signal probes in lateral flow and other biosensors | Point-of-care biosensor development | Consistency in functionalization is key to reproducibility |

Robust calibration methodologies form the critical foundation for reliable biosensor data generation, ensuring both scientific validity and regulatory compliance. As demonstrated through various advanced approaches—from FRET standardization in live-cell imaging to self-calibrating SERS-LFIA platforms—systematic calibration transforms biosensors from qualitative indicators into precise quantitative instruments. The statistical validation of calibration curves provides the necessary metrological rigor, while adherence to documented calibration protocols supports data integrity requirements in regulated environments. For researchers and drug development professionals, investing in comprehensive calibration strategies is not merely a technical exercise but an essential commitment to generating trustworthy, reproducible scientific data that can withstand both scientific scrutiny and regulatory examination.

Building Robust Calibration Curves: Techniques and Best Practices Across Biosensor Platforms

The statistical validation of biosensor calibration curves is a cornerstone of reliable analytical measurement in pharmaceutical and clinical research. The performance of a biosensor—its sensitivity, specificity, and reproducibility—is profoundly influenced by two fundamental components of experimental design: the choice of calibration standards and the composition of the sample matrix in which measurements occur [19] [20]. Inadequate attention to these elements can introduce significant bias, increase noise, and lead to erroneous conclusions regarding analyte concentration, thereby jeopardizing drug development pipelines and diagnostic accuracy.

This guide provides a comparative analysis of strategies for selecting standards and matrices, framing them within the broader context of constructing statistically robust biosensor calibration models. We objectively evaluate different approaches, supported by experimental data, to equip researchers with the practical knowledge needed to optimize biosensor performance for point-of-care diagnostics and bioanalytical applications.

The Critical Role of Standards and Matrices in Calibration

A biosensor's calibration curve defines the mathematical relationship between its output signal and the concentration of the target analyte. This model is only valid if it accounts for, or is resistant to, the complex interplay between the biorecognition element, the transducer, and the sample environment.

- Signal Transduction and Interference: Biosensors convert a biological event into a quantifiable signal via electrochemical, optical, or other transducers [21] [22]. The sample matrix can directly modulate this process. For instance, in electrolyte-gated graphene field-effect transistor (EGGFET) biosensors, variations in the ionic strength, pH, or composition of the sample electrolyte can significantly shift the Fermi level of the graphene channel, altering the sensor's baseline response and sensitivity, potentially leading to false results [20].

- Nonspecific Binding (NSB): In label-free biosensing, a primary challenge is distinguishing the specific signal of the target analyte from the noise caused by NSB. Matrix constituents, such as serum proteins, can adhere to the bioreceptor or sensor substrate, increasing background signal and reducing assay accuracy [19]. The strategic use of reference controls is essential to subtract this NSB contribution.

Table 1: Impact of Sample Matrix on Biosensor Performance

| Matrix Characteristic | Impact on Biosensor Performance | Exemplary Evidence |

|---|---|---|

| Ionic Strength | Alters electrical double layer in electrochemical sensors, affecting electron transfer and gating properties; can induce Debye screening. | EGGFET immunoassay response is modulated by electrolyte concentration [20]. |

| pH | Can denature biorecognition elements (enzymes, antibodies); changes protonation states and electrostatic interactions, influencing NSB. | Proteins near their isoelectric point (pI) may exhibit increased hydrophobic NSB [19]. |

| Serum/Protein Content | Major source of NSB, leading to surface fouling and signal drift; can block access of target analyte to the bioreceptor. | Photonic microring resonator (PhRR) assays show significant NSB in serum vs. buffer [19]. |

| Complex Biological Fluids | Contains a multitude of interfering species that can cross-react with the bioreceptor or quench/amplify signals. | Fluorescent GEM biosensors require calibration in growth medium to account for complex interactions [3]. |

Comparative Analysis of Standard Selection Strategies

The selection of appropriate calibration standards is not merely a procedural step; it is an experimental design choice that directly impacts the accuracy of the concentration values derived from the calibration model.

Pure vs. Matrix-Matched Standards

A critical decision is whether to use standards prepared in a simple buffer or to match the complex sample matrix.

- Pure Solvent Standards: Standards are prepared in a clean, well-defined buffer. This approach is simple and avoids the cost and complexity of a matching matrix.

- Matrix-Matched Standards: Standards are prepared in a solution that mimics the composition of the real sample (e.g., artificial serum, buffer with added protein). This is the gold standard for compensating for matrix effects.

Experimental data consistently demonstrates the superiority of matrix-matched calibration. A systematic study on a photonic microring resonator (PhRR) biosensor for detecting interleukin-17A (IL-17A) and C-Reactive Protein (CRP) highlighted that calibration in a diluted serum matrix was essential for achieving accurate quantification in clinical samples [19]. The matrix components altered the binding kinetics and signal amplitude compared to buffer-only conditions. Similarly, an EGGFET immunoassay for human immunoglobulin G (IgG) required a multi-channel design with calibration standards in a relevant matrix to achieve a recovery rate of 85–95% from spiked serum samples [20].

The Use of Internal and Reference Standards

To control for sensor-to-sensor variability and environmental drift, the use of internal references is a powerful strategy.

- Negative Control Reference Probes: A reference sensor spot functionalized with a non-interacting molecule is used to subtract NSB and bulk refractive index shifts in real-time [19]. The choice of this control is non-trivial.

- Systematic Selection of Reference Probes: An FDA-inspired framework for selecting negative controls systematically evaluated a panel of potential reference probes, including isotype-matched antibodies, bovine serum albumin (BSA), and anti-fluorescein isothiocyanate (anti-FITC) [19]. The key finding was that the optimal control was analyte-specific. For IL-17A, BSA scored highest (83%), while for CRP, a rat IgG1 isotype control was optimal (95%), outperforming the isotype-matched control.
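Reference subtraction itself is a simple point-by-point operation. The sketch below assumes the reference spot experiences the same bulk and nonspecific contribution as the active spot, which is the ideal case that control selection tries to approach; the traces and names are hypothetical.

```python
def reference_corrected(active, reference):
    """Subtract the reference-probe trace from the active-probe trace."""
    if len(active) != len(reference):
        raise ValueError("traces must be sampled at the same time points")
    return [a - r for a, r in zip(active, reference)]


# Hypothetical resonance shifts (pm) at five time points.
bulk_and_nsb = [0.0, 12.0, 15.0, 17.0, 18.0]   # seen by both spots (assumed)
specific = [0.0, 3.0, 8.0, 12.0, 14.0]         # target binding, active spot only

active = [s + b for s, b in zip(specific, bulk_and_nsb)]
reference = list(bulk_and_nsb)

corrected = reference_corrected(active, reference)
print(corrected)  # recovers the specific-binding trace in this idealized case
```

A poorly chosen control probe breaks the shared-background assumption, which is why the choice of reference is described above as analyte-specific.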

Table 2: Comparison of Calibration Standard Strategies

| Strategy | Protocol Summary | Key Performance Data | Advantages & Limitations |

|---|---|---|---|

| Pure Solvent Standards | Prepare serial dilutions of the purified analyte in a simple buffer (e.g., PBS). | Can lead to significant under/over-estimation (e.g., <85% or >115% recovery) in complex samples [20]. | Advantages: simple, inexpensive. Limitations: fails to correct for matrix effects. |

| Matrix-Matched Standards | Prepare serial dilutions of the purified analyte in a surrogate of the sample (e.g., 1% FBS, artificial urine). | Enables accurate recovery (e.g., 85-95%) of spiked analytes from biological samples [19] [20]. | Advantages: corrects for matrix effects; gold standard. Limitations: more complex and costly; requires matrix characterization. |

| Standard Addition | Spike known concentrations of analyte directly into the sample aliquot. | Effective for compensating for multiplicative matrix interferences in electrochemical sensors [6]. | Advantages: ideal for unique or irreproducible matrices. Limitations: sample-intensive; increases analytical time. |

| Internal Reference Control | Co-immobilize a non-interacting biomolecule (e.g., BSA, isotype IgG) on the sensor as a real-time negative control. | Improved assay linearity and accuracy; optimal control is analyte-specific (e.g., BSA scored 83% for IL-17A) [19]. | Advantages: corrects for NSB and drift in real time. Limitations: requires additional sensor real estate and optimization. |

Experimental Protocols for Matrix Effect Assessment and Mitigation

Protocol: Evaluation of Matrix Effects on EGGFET Biosensors

This protocol, adapted from [20], details how to characterize the impact of electrolyte composition on sensor performance.

- Sensor Preparation: Fabricate EGGFET immunosensors with a multichannel design, including channels for calibration, sample, and negative control.

- Systematic Variation: Measure the transfer characteristics (e.g., source-drain current vs. gate voltage) of the graphene channel while varying:

  - Ionic Strength: Use buffers with identical pH but different molarities of a salt (e.g., 1 mM to 100 mM PBS).

  - pH: Use buffers with the same ionic strength but different pH levels (e.g., pH 5 to pH 9).

  - Composition: Test different biological matrices (e.g., buffer, 1% FBS, 10% serum).

- Data Analysis: Quantify the shift in the Dirac point voltage (for graphene) and the change in transconductance (sensitivity). Use this data to define the acceptable operating range and to justify the need for matrix-matching or dilution.
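The data analysis step can be sketched as follows. The transfer curves here are synthetic, idealized V-shapes with hypothetical Dirac voltages and slopes; real EGGFET curves are smoother and often asymmetric, and the direction of the shift shown is illustrative rather than a result from [20].

```python
def dirac_point(gate_v, drain_i):
    """Gate voltage at the minimum of the transfer curve."""
    k_min = min(range(len(drain_i)), key=drain_i.__getitem__)
    return gate_v[k_min]


def peak_transconductance(gate_v, drain_i):
    """Largest |dI/dV| between neighbouring points (A/V)."""
    return max(
        abs((drain_i[k + 1] - drain_i[k]) / (gate_v[k + 1] - gate_v[k]))
        for k in range(len(gate_v) - 1)
    )


def synthetic_transfer_curve(gate_v, v_dirac, slope, i_min=1e-5):
    """Idealized V-shaped curve used only to exercise the analysis."""
    return [i_min + slope * abs(v - v_dirac) for v in gate_v]


gate_v = [0.05 * k for k in range(-10, 21)]                  # -0.5 V to 1.0 V
low_salt = synthetic_transfer_curve(gate_v, 0.30, 50e-6)     # e.g. 1 mM buffer
high_salt = synthetic_transfer_curve(gate_v, 0.20, 40e-6)    # e.g. 100 mM buffer

dirac_shift = dirac_point(gate_v, high_salt) - dirac_point(gate_v, low_salt)
gm_ratio = peak_transconductance(gate_v, high_salt) / peak_transconductance(gate_v, low_salt)

print(f"Dirac point shift: {dirac_shift * 1000:.0f} mV")
print(f"relative transconductance: {gm_ratio:.2f}")
```

Tabulating these two quantities across the ionic strength, pH, and composition series gives a direct basis for setting the acceptable operating range.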

Protocol: Systematic Optimization Using Design of Experiments (DoE)

Optimizing multiple interdependent parameters (e.g., immobilization density, buffer pH, ionic strength) one variable at a time is inefficient and can miss critical interactions. DoE is a powerful chemometric tool for this purpose [23].

- Define Factors and Responses: Identify input variables to optimize (e.g., pH, ionic strength, % serum) and the key output responses (e.g., signal-to-noise ratio, limit of detection, % recovery).

- Select Experimental Design: A Central Composite Design (CCD) is often used for response surface modeling, allowing for the estimation of quadratic effects and interactions between factors.

- Execute Experiments and Model Data: Run the experiments as per the CCD matrix. Use least-squares regression to fit a second-order (quadratic) model, including interaction terms, that predicts the response from the input factors.

- Identify Optimal Conditions: The model can then be used to find the combination of factor levels that maximizes the desired performance metrics (e.g., best sensitivity and recovery). This approach has been successfully applied to optimize both optical and electronic ultrasensitive biosensors [23].
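The four steps above can be sketched for two coded factors. This example assumes NumPy is available, uses a rotatable CCD (axial distance chosen as the fourth root of the number of factorial runs), and replaces real measurements with a simulated noise-free response so that the recovered coefficients can be checked; all coefficients and names are hypothetical.

```python
import itertools

import numpy as np


def central_composite_design(n_factors, n_center=3):
    """Coded CCD: 2^k factorial points, 2k axial points, n_center center points."""
    alpha = (2 ** n_factors) ** 0.25            # rotatable design (assumed choice)
    factorial = list(itertools.product([-1.0, 1.0], repeat=n_factors))
    axial = []
    for i in range(n_factors):
        for a in (-alpha, alpha):
            point = [0.0] * n_factors
            point[i] = a
            axial.append(tuple(point))
    center = [(0.0,) * n_factors] * n_center
    return np.array(factorial + axial + center)


def quadratic_terms(x):
    """Model matrix columns: 1, x_i, x_i*x_j (i<j), x_i^2."""
    n = x.shape[1]
    cols = [np.ones(len(x))]
    cols += [x[:, i] for i in range(n)]
    cols += [x[:, i] * x[:, j] for i, j in itertools.combinations(range(n), 2)]
    cols += [x[:, i] ** 2 for i in range(n)]
    return np.column_stack(cols)


design = central_composite_design(2)            # e.g. coded pH and ionic strength
model_matrix = quadratic_terms(design)

# Simulated response (e.g. signal-to-noise) from hypothetical true coefficients.
true_coef = np.array([10.0, 1.0, -0.5, 0.3, -2.0, -1.0])
response = model_matrix @ true_coef

coef, *_ = np.linalg.lstsq(model_matrix, response, rcond=None)

# Stationary point of the fitted surface: gradient b + 2*B*x = 0.
b = coef[1:3]
B = np.array([[coef[4], coef[3] / 2], [coef[3] / 2, coef[5]]])
optimum = np.linalg.solve(-2 * B, b)

print(design.shape, np.round(coef, 3), np.round(optimum, 3))
```

With real data the response vector would come from the executed runs, and the stationary point should be checked to be a maximum (negative-definite `B`) and to lie inside the experimental region before it is reported as an optimum.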

The following diagram illustrates the strategic decision-making workflow for selecting and validating standards and matrices, incorporating the DoE framework.

Essential Research Reagent Solutions

The successful implementation of the protocols and strategies described above relies on a toolkit of key reagents and materials.

Table 3: Essential Research Reagent Solutions for Biosensor Calibration

| Reagent / Material | Function in Experimental Design | Exemplary Use Case |

|---|---|---|

| Isotype Control Antibodies | Serve as negative control reference probes to subtract nonspecific binding signals; should be matched to the capture antibody's host species and isotype. | Used in PhRR and gFET biosensors to differentiate specific CRP binding from background serum protein adhesion [19]. |

| Bovine Serum Albumin (BSA) | A common blocking agent and potential negative control protein; reduces NSB by occupying non-specific sites on the sensor surface. | Evaluated as a reference probe for IL-17A detection, where it scored highest (83%) [19]. |

| Artificial Matrices (e.g., Artificial Serum, Urine) | A consistent and defined medium for preparing matrix-matched calibration standards, overcoming the variability of natural biofluids. | Critical for pre-clinical validation of biosensors intended for use in blood, serum, or urine [20]. |

| Certified Reference Materials (CRMs) | Highly pure and well-characterized analyte standards with certified concentrations; used to establish the fundamental accuracy of a calibration curve. | Provides traceability for quantifying biomarkers like CRP or viral antigens in diagnostic assays [19]. |

| Functionalized Nanomaterials (e.g., Graphene, AuNPs) | Enhance sensor sensitivity and provide a scaffold for bioreceptor immobilization; their properties must be consistent for reproducible calibration. | Graphene foam electrodes and gold nanoparticles are used to boost electrochemical and optical signals [21] [5] [20]. |

The path to a statistically valid biosensor calibration curve is paved with deliberate choices in experimental design. As the comparative data presented here demonstrates, the selection of matrix-matched standards and rigorously optimized reference controls is not merely a best practice but a necessity for achieving analytical accuracy in complex biological samples. The integration of systematic frameworks for control selection and chemometric tools like Design of Experiments provides a robust methodology for overcoming the challenges of nonspecific binding and matrix effects. By adopting these strategies, researchers and drug development professionals can enhance the reliability of their biosensor data, thereby accelerating the translation of these promising technologies from the laboratory to the clinic.

Electrochemical biosensors have attracted considerable attention for applications in biosensing, drug therapy, and toxicology analysis since their inception by Leland C. Clark [24]. The core of these sensors lies in their ability to transduce a biological recognition event into a quantifiable electrical signal, a process that relies heavily on the chosen electrochemical technique and the integrity of the data it produces. For researchers and drug development professionals focused on the statistical validation of biosensor calibration curves, the selection of an appropriate technique and rigorous pre-processing of the acquired data are critical for ensuring reliability, reproducibility, and accurate interpretation [24] [25].

This guide objectively compares three foundational techniques—Cyclic Voltammetry (CV), Differential Pulse Voltammetry (DPV), and Electrochemical Impedance Spectroscopy (EIS)—within the context of biosensor development. We provide a detailed comparison of their operating principles, data acquisition parameters, and pre-processing needs, supported by experimental protocols and data to inform method selection for robust calibration curve generation.

Comparative Analysis of Electrochemical Techniques

The table below summarizes the core characteristics, data outputs, and key performance metrics of CV, DPV, and EIS for easy comparison.

Table 1: Technical Comparison of CV, DPV, and EIS for Biosensor Applications

| Feature | Cyclic Voltammetry (CV) | Differential Pulse Voltammetry (DPV) | Electrochemical Impedance Spectroscopy (EIS) |

|---|---|---|---|

| Core Principle | Linear potential sweep followed by immediate reversal [26] | Series of small potential pulses superimposed on a linear baseline; current sampled before and after each pulse [27] | Application of a small amplitude sinusoidal voltage over a range of frequencies and measurement of the current response [25] |

| Primary Data Output | Current (I) vs. Potential (E) plot (Voltammogram) [26] | Difference current (ΔI = I_post-pulse - I_pre-pulse) vs. Potential (E) plot [27] | Complex impedance (Z) and Phase Shift (θ) vs. Frequency (f) plot (Nyquist or Bode) |

| Key Readouts | Peak potential (E_p), Peak current (I_p), Peak separation (ΔE_p) [26] [28] | Peak potential (E_p), Peak height (ΔI_p) [27] | Charge Transfer Resistance (R_ct), Solution Resistance (R_s), Double Layer Capacitance (C_dl) [25] |

| Sensitivity | Moderate | High (minimizes non-Faradaic/charging current) [27] | Very High (capable of detecting subtle interfacial changes) [25] |

| Information Gained | Thermodynamics, kinetics of electron transfer, reaction mechanisms [26] [28] | Highly sensitive quantification of electroactive species concentration [27] | Interfacial properties, binding events, diffusion processes, kinetics [25] |

| Typical Experiment Duration | Fast (seconds to minutes per cycle) | Moderate | Slow (minutes to hours per spectrum) |

Experimental Protocols for Data Acquisition

Cyclic Voltammetry (CV) Protocol

CV is a potent tool for probing the thermodynamics and kinetics of redox processes, which is fundamental for characterizing the biorecognition element in a biosensor [26] [28].

- Detailed Methodology:

- Instrument Setup: Utilize a potentiostat configured with a standard three-electrode system: a Working Electrode (e.g., glassy carbon, gold), a Reference Electrode (e.g., Ag/AgCl), and a Counter Electrode (e.g., platinum wire) [26] [25].

- Solution Preparation: Prepare an electrolyte solution containing the redox-active analyte (e.g., 1 mM potassium ferricyanide in buffer) [26]. For biosensing, the working electrode is often modified with a bioreceptor (antibody, enzyme, aptamer) and nanostructured materials to enhance loading and electron transfer [24].

- Parameter Configuration:

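  - Initial/Final Potential (E_i/E_f): Set to a value where no Faradaic reaction occurs.

  - Upper/Lower Potential (E_upper/E_lower): Define the vertex potentials for the forward and reverse scans.

  - Scan Rate (ν): Typically varied from 0.01 to 1 V/s to study kinetics. For a reversible, diffusion-controlled couple at 25 °C, the peak current (I_p) is proportional to ν^(1/2), as described by the Randles-Ševčík equation [26] [28]:

$$I_p = (2.69 \times 10^{5})\, n^{3/2} A D^{1/2} C \nu^{1/2}$$

    where n is the number of electrons transferred, A the electrode area (cm²), D the diffusion coefficient (cm²/s), C the bulk concentration (mol/cm³), and ν the scan rate (V/s), giving I_p in amperes.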

- Data Acquisition: Initiate the scan. The potentiostat applies the potential waveform and records the resulting current, generating a cyclic voltammogram.
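A quick numerical check of the Randles-Ševčík relationship is sketched below. It assumes the 25 °C form of the equation with area in cm², diffusion coefficient in cm²/s, concentration in mol/cm³, and scan rate in V/s; the electrode area and diffusion coefficient are illustrative values, not measurements from the cited work.

```python
import math


def randles_sevcik_peak_current(n, area_cm2, diff_cm2_s, conc_mol_cm3, scan_rate_v_s):
    """Peak current (A) for a reversible, diffusion-controlled couple at 25 C."""
    return (
        2.69e5
        * n ** 1.5
        * area_cm2
        * math.sqrt(diff_cm2_s)
        * conc_mol_cm3
        * math.sqrt(scan_rate_v_s)
    )


# Illustrative inputs: 1 mM redox probe (1e-6 mol/cm^3), 3 mm disc (~0.07 cm^2).
params = dict(n=1, area_cm2=0.07, diff_cm2_s=7.6e-6, conc_mol_cm3=1e-6)

ip_slow = randles_sevcik_peak_current(scan_rate_v_s=0.1, **params)
ip_fast = randles_sevcik_peak_current(scan_rate_v_s=0.4, **params)

print(f"I_p at 0.1 V/s: {ip_slow * 1e6:.1f} uA")
print(f"I_p at 0.4 V/s: {ip_fast * 1e6:.1f} uA")
print(f"ratio: {ip_fast / ip_slow:.2f}")  # square-root dependence on scan rate
```

Plotting measured I_p against ν^(1/2) and checking for linearity is the usual diagnostic for diffusion control; deviation suggests adsorption or slow electron transfer.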

Differential Pulse Voltammetry (DPV) Protocol

DPV is renowned for its high sensitivity in quantification, making it ideal for detecting low-abundance biomarkers or monitoring binding events that lead to a subtle change in electrochemical signal [27] [25].

- Detailed Methodology:

- Instrument & Solution Setup: Similar to CV, using a three-electrode system. The biosensor interface is critical, often functionalized with specific recognition elements like aptamers or antibodies [25].

- Parameter Configuration [27]:

  - Initial and Final Potential: Define the start and end of the scan.

  - Pulse Height: The magnitude of the applied potential pulse (e.g., 50 mV).

  - Pulse Width: The duration of each pulse (e.g., 50 ms).

  - Pulse Increment: The potential step by which the baseline is increased for each subsequent pulse.

  - Sampling Parameters: Current is sampled briefly before the pulse (I_pre-pulse) and again at the end of the pulse (I_post-pulse).

- Data Acquisition: The instrument executes the pulse sequence. The differential current, ΔI = I_post-pulse - I_pre-pulse, is plotted against the baseline potential, yielding peaks where Faradaic processes occur. This technique minimizes the contribution of capacitive current, significantly enhancing signal-to-noise ratio for quantification [27].
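The differential-current calculation can be sketched with synthetic data. The example assumes, as an idealization, that the capacitive background is identical at the two sampling instants so that it cancels exactly; the Gaussian peak shape, currents, and helper names are hypothetical.

```python
import math


def differential_current(i_pre, i_post):
    """Delta I = I_post-pulse - I_pre-pulse at each potential step."""
    return [post - pre for pre, post in zip(i_pre, i_post)]


def find_peak(potentials, delta_i):
    """Return (peak potential, peak height) of the differential voltammogram."""
    k = max(range(len(delta_i)), key=delta_i.__getitem__)
    return potentials[k], delta_i[k]


potentials = [round(0.01 * k, 2) for k in range(51)]            # 0 to 0.5 V
background = [2.0 + 1.0 * e for e in potentials]                # uA, sloping
faradaic = [5.0 * math.exp(-((e - 0.20) / 0.04) ** 2) for e in potentials]

i_pre = background                                              # before pulse
i_post = [b + f for b, f in zip(background, faradaic)]          # end of pulse

delta_i = differential_current(i_pre, i_post)
peak_potential, peak_height = find_peak(potentials, delta_i)
print(f"E_p = {peak_potential:.2f} V, peak height = {peak_height:.2f} uA")
```

The peak height extracted this way is the quantity typically plotted against analyte concentration when building a DPV calibration curve.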

Electrochemical Impedance Spectroscopy (EIS) Protocol

EIS is exceptionally sensitive to surface phenomena, making it a powerful tool for label-free detection of binding events (e.g., antibody-antigen interactions) on the biosensor surface [25].

- Detailed Methodology:

- Instrument & Solution Setup: Same three-electrode configuration as other methods.

- Parameter Configuration:

  - DC Bias: A constant potential, often set to the formal potential of a redox probe (e.g., [Fe(CN)₆]³⁻/⁴⁻) added to the solution.

  - AC Amplitude: A small sinusoidal perturbation, typically 5-10 mV, to maintain system linearity.

  - Frequency Range: A broad spectrum is scanned, from high frequencies (e.g., 100 kHz) to low frequencies (e.g., 0.1 Hz) [25].

- Data Acquisition: The potentiostat applies the AC voltage and measures the magnitude and phase of the resulting current. The complex impedance (Z) is calculated and recorded across the frequency spectrum.

- Data Analysis: The raw data (commonly presented as a Nyquist plot) is fitted to an equivalent electrical circuit model. Key parameters like the charge transfer resistance (R_ct), which often increases upon a binding event, are extracted for quantification [25].
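To make the equivalent-circuit idea concrete, the sketch below simulates a simplified Randles circuit (solution resistance in series with a parallel R_ct and C_dl, with no Warburg diffusion element) and estimates R_ct from the difference between the low- and high-frequency real impedance. Both the circuit choice and the intercept-based estimate are simplifying assumptions; practical analysis normally uses nonlinear least-squares fitting, and all component values are hypothetical.

```python
import math


def randles_impedance(freq_hz, r_s, r_ct, c_dl):
    """Complex impedance of R_s in series with (R_ct parallel to C_dl)."""
    omega = 2 * math.pi * freq_hz
    return r_s + 1 / (1 / r_ct + 1j * omega * c_dl)


def estimate_r_ct(freqs_hz, spectrum):
    """Semicircle diameter: Z' at lowest frequency minus Z' at highest."""
    pairs = sorted(zip(freqs_hz, spectrum), key=lambda p: p[0])
    z_low, z_high = pairs[0][1], pairs[-1][1]
    return z_low.real - z_high.real


freqs = [10 ** (5 - 0.1 * k) for k in range(61)]     # 100 kHz down to 0.1 Hz

# Hypothetical electrode before and after analyte binding (ohms, farads).
before = [randles_impedance(f, 100.0, 1000.0, 1e-6) for f in freqs]
after = [randles_impedance(f, 100.0, 2500.0, 1e-6) for f in freqs]

r_ct_before = estimate_r_ct(freqs, before)
r_ct_after = estimate_r_ct(freqs, after)
print(f"R_ct before: {r_ct_before:.0f} ohm, after: {r_ct_after:.0f} ohm")
```

The change in R_ct (or its ratio to the baseline value) is the quantity usually regressed against concentration in an impedimetric calibration curve.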

Essential Data Pre-processing Steps

Raw electrochemical data requires pre-processing to ensure its suitability for statistical validation and calibration curve generation.

Table 2: Key Pre-processing Steps for Electrochemical Data

| Pre-processing Step | Description | Application in CV/DPV/EIS |

|---|---|---|

| Baseline Correction | Subtracts non-Faradaic background current (e.g., capacitive charging) from the signal. | CV/DPV: Critical for accurate peak current and potential determination. EIS: Often involves checking for inductive loops at high frequency. |

| Signal Smoothing | Applies algorithms (e.g., Savitzky-Golay filter, moving average) to reduce high-frequency noise. | Used in all three techniques to improve signal-to-noise ratio without significantly distorting the signal shape. |

| Data Normalization | Adjusts data to account for experimental variations, such as electrode surface area. | CV: Normalizing current by (scan rate)^(1/2) allows comparison across different scan rates [28]. |

| Peak Identification & Fitting | Uses algorithms to locate peaks and fit them to mathematical models (e.g., Gaussian, Lorentzian) to extract parameters like height, area, and width. | CV/DPV: Essential for quantifying Ip and Ep. EIS: Not applicable; instead, circuit fitting is performed. |
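A minimal pre-processing chain for a voltammetric trace is sketched below: moving-average smoothing, subtraction of a straight baseline anchored on the edges of the scan, and peak location. The moving average stands in for the Savitzky-Golay filter named in the table, the linear baseline is an assumption that only holds for gently sloping backgrounds, and the synthetic trace and helper names are hypothetical.

```python
import math


def moving_average(y, window=5):
    """Centered moving average; the window shrinks at the edges."""
    half = window // 2
    out = []
    for k in range(len(y)):
        lo, hi = max(0, k - half), min(len(y), k + half + 1)
        out.append(sum(y[lo:hi]) / (hi - lo))
    return out


def subtract_linear_baseline(x, y, n_edge=5):
    """Subtract the line through the mean of the first and last n_edge points."""
    x0, y0 = sum(x[:n_edge]) / n_edge, sum(y[:n_edge]) / n_edge
    x1, y1 = sum(x[-n_edge:]) / n_edge, sum(y[-n_edge:]) / n_edge
    slope = (y1 - y0) / (x1 - x0)
    return [yi - (y0 + slope * (xi - x0)) for xi, yi in zip(x, y)]


def find_peak(x, y):
    k = max(range(len(y)), key=y.__getitem__)
    return x[k], y[k]


# Synthetic trace: sloping background + Gaussian peak + alternating noise (uA).
potential = [round(0.01 * k, 2) for k in range(51)]
raw = [
    2.0 + 1.0 * e + 5.0 * math.exp(-((e - 0.25) / 0.08) ** 2) + 0.05 * (-1) ** k
    for k, e in enumerate(potential)
]

smoothed = moving_average(raw, window=5)
corrected = subtract_linear_baseline(potential, smoothed)
peak_potential, peak_height = find_peak(potential, corrected)
print(f"E_p = {peak_potential:.2f} V, I_p = {peak_height:.2f} uA")
```

Smoothing slightly lowers the recovered peak height relative to the true value of 5 uA, which illustrates why the same pre-processing settings should be applied to every standard and sample in a calibration set.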

The Scientist's Toolkit: Key Research Reagent Solutions

The performance of electrochemical biosensors is heavily dependent on the materials and reagents used in their fabrication and operation.

Table 3: Essential Materials and Reagents for Electrochemical Biosensors

| Item | Function/Benefit | Representative Examples |
|---|---|---|
| Working Electrodes | The platform for bioreceptor immobilization and where the electrochemical reaction occurs. Material choice dictates sensitivity and the usable potential window. | Glassy Carbon Electrode (GCE), Gold Electrode (AuE), Platinum Electrode (PtE) [24] [25] |
| Nanostructured Materials | Enhance electrode surface area, improve loading of bioreceptors, and facilitate electron transfer, boosting signal and sensitivity. | Gold nanoparticles (AuNPs), Multi-Walled Carbon Nanotubes (MWCNTs), Graphene oxide [24] |
| Biorecognition Elements | Provide specificity by binding to the target analyte. The choice defines the sensor's selectivity. | Antibodies, Enzymes, Aptamers (short, single-stranded DNA/RNA), Lectins (for glycan detection) [24] [25] |
| Redox Probes | A reversible redox couple used as a reporter to monitor changes at the electrode interface, especially in EIS and some CV/DPV applications. | Potassium ferricyanide/ferrocyanide ([Fe(CN)₆]³⁻/⁴⁻), Methylene Blue [25] |
| Immobilization Matrices | Provide a stable scaffold for attaching bioreceptors to the electrode surface while maintaining their bioactivity. | Self-Assembled Monolayers (SAMs), conductive polymers, hydrogels, Nafion [24] |

In chemical analysis and biosensing, calibration is a fundamental process that establishes a reliable relationship between an analytical instrument's response and the known concentration of a target analyte [29]. This relationship, expressed as a calibration curve or equation, ensures that sensors and instruments provide accurate, reproducible quantitative data essential for research, diagnostics, and drug development [29] [30]. The choice of calibration model directly impacts key analytical figures of merit, including accuracy, precision, and the limit of detection (LOD) [31].

The most foundational application of these models is the calculation of the Limit of Detection, often formulated as LOD = 3σ/S, where 'σ' represents the standard deviation of the blank signal and 'S' is the analytical sensitivity (slope of the calibration curve) [30] [31]. This formula, while simple, rests entirely upon a properly constructed and validated calibration model. This guide provides a structured comparison of linear and non-linear regression approaches, equipping scientists with the knowledge to select and apply the optimal model for their specific biosensor validation needs.

Classical vs. Inverse Calibration Equations

Two primary forms of calibration equations exist: the classical and the inverse model. Their core difference lies in the designation of independent and dependent variables.

Classical Calibration Model: This traditional approach treats standard concentration values as the independent variable (x) and the instrument's response as the dependent variable (y) [29]. The model is formulated as y = f(x) or, for a linear relationship, y = b₀ + b₁x + εᵢ [29]. When a new sample with an unknown concentration (x₀) is measured, yielding a response y₀, the concentration must be calculated by inverting the function: x̂₀ = (y₀ - b₀)/b₁ [29]. This model assumes that the x values (concentrations) have negligible measurement error [29].

Inverse Calibration Model: This form reverses the variables, treating the instrument's response as the independent variable (y) and the concentration as the dependent variable (x) [29]. The model is formulated as x = g(y) or, linearly, x = c₀ + c₁y + εᵢ [29]. The primary advantage is direct calculation; for a new response y₀, the predicted concentration is computed simply as x̂₀ = c₀ + c₁y₀ [29]. This approach avoids the complex error propagation that can occur when inverting the classical equation, especially for non-linear models [29].
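The difference between the two linear forms can be sketched in a few lines of Python. The standards below are hypothetical values chosen only for illustration, and `fit_line`, `predict_classical`, and `predict_inverse` are names introduced here, not taken from the cited study.

```python
from statistics import mean


def fit_line(u, v):
    """Ordinary least squares fit of v = intercept + slope * u."""
    u_bar, v_bar = mean(u), mean(v)
    sxx = sum((ui - u_bar) ** 2 for ui in u)
    sxy = sum((ui - u_bar) * (vi - v_bar) for ui, vi in zip(u, v))
    slope = sxy / sxx
    return v_bar - slope * u_bar, slope


# Hypothetical calibration standards: concentration x and sensor response y.
conc = [1.0, 2.0, 4.0, 8.0, 16.0]
resp = [2.1, 3.9, 8.2, 15.8, 32.1]

# Classical model: regress response on concentration, then invert for x.
b0, b1 = fit_line(conc, resp)


def predict_classical(y0):
    return (y0 - b0) / b1


# Inverse model: regress concentration on response, then predict directly.
c0, c1 = fit_line(resp, conc)


def predict_inverse(y0):
    return c0 + c1 * y0


y_new = 10.0
print(predict_classical(y_new), predict_inverse(y_new))
```

With low-noise data the two predictions nearly coincide; they diverge as scatter grows, because the two regressions minimize residuals along different axes.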

Table 1: Core Characteristics of Classical and Inverse Calibration Equations

| Feature | Classical Equation | Inverse Equation |
|---|---|---|
| Independent Variable | Standard concentration (x) | Instrument response (y) |
| Dependent Variable | Instrument response (y) | Standard concentration (x) |
| General Form | y = f(x) | x = g(y) |
| Prediction Calculation | x̂₀ = f⁻¹(y₀) (requires inversion) | x̂₀ = g(y₀) (direct calculation) |
| Key Assumption | Negligible error in standard concentration values [29] | More robust when concentration error assumption is violated [29] |

Comparative Performance Evaluation

Theoretical differences between models must be validated with empirical performance data. A study comparing the two equations using data from humidity sensors and nine literature datasets proposed four key evaluation criteria: minimum predictive error (ei,min), maximum predictive error (ei,max), Mean Absolute Error (MAE), and residual plots [29].
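The three numerical criteria can be computed as in the following minimal sketch. It assumes that each predictive error is the signed difference between predicted and known concentration, which the source does not spell out; the function name and the sample values are hypothetical.

```python
def predictive_errors(predicted, known):
    """Summarize predictive errors e_i = predicted_i - known_i (assumed definition)."""
    errors = [p - k for p, k in zip(predicted, known)]
    return {
        "e_min": min(errors),
        "e_max": max(errors),
        "mae": sum(abs(e) for e in errors) / len(errors),
    }


# Hypothetical known standards and back-calculated predictions.
known = [1.0, 2.0, 4.0, 8.0]
predicted = [1.1, 1.9, 4.3, 7.8]

summary = predictive_errors(predicted, known)
print(summary)
```

Running the same function on predictions from the classical and the inverse equation gives a like-for-like comparison; residual plots still need to be inspected separately for systematic trends.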

The findings indicate that the inverse calibration equation often demonstrates superior predictive performance. Specifically, it can achieve a lower mean square error and better extrapolation performance compared to the classical approach [29]. Furthermore, as the calibration point moves further from the average of the standard values, the inverse equation's predictive ability becomes more advantageous [29]. This suggests that for many practical applications in biosensing, where measurements can span a wide dynamic range, the inverse model may offer greater reliability.

Table 2: Comparison of Predictive Performance for Two Humidity Sensor Types [29]

| Sensor Type / Performance Metric | Minimum Error (ei,min) | Maximum Error (ei,max) | Mean Absolute Error (MAE) |
|---|---|---|---|
| Capacitive Sensor (Classical Model) | Data not specified in source | Data not specified in source | Higher MAE reported |
| Capacitive Sensor (Inverse Model) | Data not specified in source | Data not specified in source | Lower MAE reported |
| Resistive Sensor (Classical Model) | Data not specified in source | Data not specified in source | Higher MAE reported |
| Resistive Sensor (Inverse Model) | Data not specified in source | Data not specified in source | Lower MAE reported |

Linear Regression Models and Handling Heteroscedasticity

Unweighted Least Squares

The most common linear regression model is unweighted least squares (also known as ordinary least squares, OLS), which fits a line y = bx + a by minimizing the sum of squared residuals across all data points [32]. A high coefficient of determination (r² > 0.99) is often used to accept the model [32]. However, a satisfactory r² value alone is insufficient, especially in bioanalytical methods with wide calibration ranges [32]. A critical flaw of unweighted regression emerges when the data exhibit heteroscedasticity, where the variance of the instrument response increases with concentration [32]. Because OLS weights every squared residual equally, the high-concentration standards, which show the largest absolute scatter, dominate the fit, leading to inaccurate results, particularly at lower concentrations [32].

Weighted Least Squares

To address heteroscedasticity, weighted least squares (WLS) regression is employed. WLS assigns a weight to each data point, typically inversely proportional to the variance of its response [32]. Common weighting factors include:

- 1/x: Weight is inversely proportional to concentration.

- 1/√x: Weight is inversely proportional to the square root of concentration.

- 1/x²: Weight is inversely proportional to the square of concentration.

The optimal weighting factor is selected by comparing the % Relative Error (% RE) for each calibration standard across different models, choosing the factor that yields the minimum total % RE [32]. A statistical F-test on the residuals can also be used to confirm homoscedasticity (constant variance) [32].
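The selection procedure can be sketched as follows. This is an illustrative implementation, not the cited authors' code: it assumes that "total % RE" means the sum of absolute percent relative errors of the back-calculated standards, and the peak-area values are hypothetical.

```python
def fit_weighted_line(x, y, w):
    """Weighted least squares fit of y = intercept + slope * x."""
    sw = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    y_bar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def total_abs_relative_error(x, y, w):
    """Sum of |% RE| over all standards after back-calculating concentrations."""
    b0, b1 = fit_weighted_line(x, y, w)
    back_calculated = [(yi - b0) / b1 for yi in y]
    return sum(abs(100.0 * (xb - xi) / xi) for xb, xi in zip(back_calculated, x))


# Candidate weighting factors; "1" is the unweighted (OLS) case.
WEIGHTINGS = {
    "1": lambda x: 1.0,
    "1/sqrt(x)": lambda x: x ** -0.5,
    "1/x": lambda x: 1.0 / x,
    "1/x^2": lambda x: 1.0 / x ** 2,
}

# Hypothetical standards (ng/mL) and peak areas with scatter growing with x.
conc = [100, 200, 400, 800, 1600, 3200]
resp = [0.52, 0.98, 2.05, 3.90, 8.40, 15.20]

scores = {
    name: total_abs_relative_error(conc, resp, [f(c) for c in conc])
    for name, f in WEIGHTINGS.items()
}
best = min(scores, key=scores.get)
print(scores, best)
```

The winning factor depends entirely on the data set, so the comparison should be rerun whenever the calibration range or the matrix changes.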

Diagram 1: Workflow for Handling Heteroscedasticity in Linear Calibration. This diagram outlines the process of diagnosing unequal variance in calibration data and selecting an appropriate weighted regression model to mitigate its effects.

Non-Linear and Advanced Regression Models

When the relationship between analyte concentration and sensor response is inherently curved, linear models become inadequate, necessitating the use of non-linear regression models.

Polynomial Regression

A direct extension of linear regression, polynomial models fit the data to a polynomial function. The classical form is y = b₀ + b₁x + b₂x² + ... + bₖxᵏ, while the inverse form is x = c₀ + c₁y + c₂y² + ... + cₙyⁿ [29]. The quadratic regression (y = a + bx + cx²) is most common, as higher-order polynomials are generally discouraged due to overfitting risks [32].
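One practical consequence of the classical quadratic form is that predicting a concentration means solving the quadratic for x and discarding the root that falls outside the calibrated range. The sketch below illustrates this step; the coefficients and range are hypothetical, and `invert_quadratic` is a name introduced here.

```python
import math


def invert_quadratic(y0, a, b, c, x_min, x_max):
    """Solve y0 = a + b*x + c*x**2 for x, keeping roots inside [x_min, x_max]."""
    if c == 0:
        roots = [(y0 - a) / b]  # degenerates to the linear case
    else:
        disc = b * b - 4.0 * c * (a - y0)
        if disc < 0:
            return []  # response lies beyond the fitted curve's extremum
        sq = math.sqrt(disc)
        roots = [(-b + sq) / (2.0 * c), (-b - sq) / (2.0 * c)]
    return [r for r in roots if x_min <= r <= x_max]


# Hypothetical fitted coefficients for a mildly saturating sensor.
a, b, c = 0.5, 2.0, -0.01
candidates = invert_quadratic(19.5, a, b, c, x_min=0.0, x_max=50.0)
print(candidates)
```

An empty result or two in-range roots both signal that the response lies in a region where the quadratic cannot be inverted unambiguously, which is a reason to restrict the calibrated range. The inverse polynomial form sidesteps this step because x is evaluated directly from y.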

Machine Learning for Complex Calibration

For highly complex data, particularly from modern biosensors like electronic noses/tongues or surface-enhanced Raman spectroscopy (SERS) platforms, machine learning (ML) models offer a powerful alternative [33] [34].

- Handling Saturation: ML can uncover hidden patterns in sensor signals, even in saturation regions where traditional models fail. For example, non-parametric models like regression trees and ensembles have been used to calibrate carbon nanotube Hg²⁺ sensors, significantly extending their dynamic range [34].

- Replacing Specificity: In bioreceptor-free biosensors, ML algorithms (e.g., Support Vector Machines (SVM), Artificial Neural Networks (ANNs)) can provide specificity by recognizing subtle, complex patterns in sensor array data, effectively acting as a software-based bioreceptor [33].

- Common Algorithms: Frequently used ML techniques include Principal Component Analysis (PCA) for dimensionality reduction, SVM and ANNs for classification and regression, and Partial Least Squares (PLS) regression for modeling relationships between observed variables [33] [35].

Table 3: Comparison of Linear and Non-Linear Calibration Approaches

| Model Type | Typical Formula | Best Use Cases | Advantages | Limitations |
|---|---|---|---|---|
| Unweighted Linear | y = b₀ + b₁x | Linear, homoscedastic data over a narrow range. | Simple, interpretable, computationally fast. | Prone to bias from heteroscedasticity [32]. |
| Weighted Linear | y = b₀ + b₁x (with weights) | Linear data with heteroscedastic variance. | Improves accuracy across a wide concentration range [32]. | Requires replication to estimate variance; choice of weight can be subjective. |
| Polynomial | y = b₀ + b₁x + b₂x² | Mildly curved, non-linear relationships. | More flexible than linear models. | Can overfit; higher orders are difficult to interpret [32]. |
| Machine Learning (e.g., ANN, SVM) | Complex, model-dependent | Complex, high-dimensional data; sensor saturation; multi-analyte detection [33] [34]. | High predictive accuracy; handles complex non-linearity. | "Black box" nature; requires large datasets; computationally intensive. |

Experimental Protocols for Model Validation

Establishing a Calibration Curve

Protocol Example: HPLC-UV Method for Drug in Plasma [32]

- Standard Preparation: Prepare a series of standard solutions covering the expected concentration range (e.g., 100–3200 ng/mL).

- Sample Processing: Spike blank plasma with standard solutions. Extract the analyte using a technique like liquid-liquid extraction.

- Instrument Analysis: Analyze each standard sample using the analytical instrument (e.g., HPLC-UV). Record the instrument response (e.g., peak area).

- Data Collection: Tabulate the nominal concentration (x) and the corresponding mean response (y) for each standard.

Computing Limit of Detection (LOD) and Limit of Quantification (LOQ)

The LOD and LOQ are crucial parameters that define the sensitivity of an analytical method. Their calculation is directly tied to the calibration model.

- LOD (Limit of Detection): The lowest concentration that can be detected, but not necessarily quantified, with acceptable certainty [30] [31]. A common convention is LOD = kσ / S, where k is a numerical factor (often 3), σ is the standard deviation of the blank, and S is the slope of the calibration curve [30].

- LOQ (Limit of Quantification): The lowest concentration that can be quantitatively determined with acceptable accuracy and precision [31]. It is often calculated as LOQ = 10σ / S [31].

The standard deviation σ can be estimated from:

a) The response of blank samples (repeated measurements of a matrix without the analyte) [30] [31].

b) The standard error of the regression (s_y/x) from the calibration curve itself [31].
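Both routes to σ feed the same formulas, as the following minimal sketch shows. The blank readings and slope are hypothetical, and the helper names are introduced here for illustration only.

```python
import math
from statistics import stdev


def lod_loq(sigma, slope, k_lod=3.0, k_loq=10.0):
    """Return (LOD, LOQ) in concentration units from sigma and the slope S."""
    return k_lod * sigma / slope, k_loq * sigma / slope


def residual_std(x, y, intercept, slope):
    """Standard error of the regression, s_y/x, for a fitted straight line."""
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    return math.sqrt(ss_res / (len(x) - 2))  # n - 2 degrees of freedom


# Option (a): sigma from repeated blank measurements (hypothetical signals).
blank_signals = [0.10, 0.12, 0.11, 0.09, 0.13]
sensitivity = 2.0  # hypothetical slope, signal units per concentration unit

sigma_blank = stdev(blank_signals)  # sample standard deviation
lod, loq = lod_loq(sigma_blank, sensitivity)
print(lod, loq)
```

For option (b), `residual_std` would be called with the calibration standards and the fitted intercept and slope, and its result passed to `lod_loq` in place of `sigma_blank`. The two estimates of σ generally differ, so the route used should be reported alongside the LOD.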

Diagram 2: LOD and LOQ Calculation Workflow. This diagram illustrates the standard procedural steps for determining the Limit of Detection and Limit of Quantification based on blank measurements and calibration curve sensitivity.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Reagents and Materials for Biosensor Calibration Experiments

| Item / Solution | Function in Experiment | Example from Literature |
|---|---|---|
| Saturated Salt Solutions | Generates precise, known relative humidity environments for calibrating humidity sensors. | Used to calibrate capacitive and resistive humidity sensors, providing standard RH values [29]. |
| Blank Matrix | A sample of the biological fluid (e.g., plasma, serum) or medium without the analyte, used to prepare calibration standards and assess background signal. | Pooled human plasma used as a blank matrix for developing an HPLC method for Chlorthalidone [32]. |
| Certified Reference Materials | Solutions with precisely known analyte concentrations, used as the primary standard for establishing the calibration curve. | Essential for any quantitative method to ensure traceability and accuracy of the reported concentrations. |
| Functionalized Nanomaterials | Enhance sensor sensitivity and specificity. Used as the sensing interface in advanced biosensors. | Thymine-functionalized carbon nanotubes and gold nanoparticles used in an Hg²⁺ sensor [34]. Metamaterial-graphene structures in optical biosensors [36]. |
| Label-free Biosensing Chips | The transducer platform that converts a biological interaction into a measurable physical signal (e.g., electrochemical, optical). | The base for immunoassays and DNA detection; performance is characterized by the calibration curve and associated LOD [30]. |