Systematic vs. Sequential: A Comparative Analysis of DoE and Traditional Methods for Biosensor Optimization

Abstract

This article provides a comprehensive comparison between the Design of Experiments (DoE) methodology and traditional One-Variable-at-a-Time (OVAT) approaches for optimizing biosensor performance. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of both methods, details practical applications across various biosensor types—including optical, electrochemical, and whole-cell systems—and offers troubleshooting strategies for complex optimization challenges. Through validation case studies and a direct comparative analysis, we demonstrate how the systematic, multivariate DoE framework significantly enhances experimental efficiency, reveals critical factor interactions, and improves key biosensor metrics such as sensitivity, dynamic range, and signal-to-noise ratio, thereby accelerating the development of reliable point-of-care diagnostics.

Biosensor Optimization Fundamentals: From OVAT Limitations to DoE Principles

The Critical Need for Optimization in Ultrasensitive Biosensor Development

The progression of biomedical research and clinical practices hinges on the development of robust methodologies for accurately and sensitively detecting biomolecules. Ultrasensitive biosensors, particularly those with a limit of detection (LOD) lower than femtomolar, are increasingly regarded as essential for early diagnosis of progressive, life-threatening diseases [1] [2]. These technologies provide clinicians with a crucial tool for combating diseases by allowing for early interventions, which significantly improve the chances of successful treatment. Electrochemical biosensors have evolved as a potent method for detecting biological entities, offering significant advantages in sensitivity, selectivity, and portability through the integration of electrochemical techniques with nanomaterials, bio-recognition components, and microfluidics [3]. However, the widespread adoption of biosensors as dependable point-of-care tests is hindered by challenges in systematic optimization, which remains a primary obstacle limiting their reliability and performance [1] [2].

Traditional Optimization Approaches and Their Limitations

The One-Variable-at-a-Time (OVAT) Methodology

Traditional biosensor development has predominantly relied on the one-variable-at-a-time (OVAT) approach, where individual parameters are optimized independently while keeping all other factors constant. This method includes optimizing:

- Formulation of the detection interface

- Immobilization strategy of biorecognition elements

- Detection conditions and analytical parameters

While straightforward to implement, this approach is fundamentally problematic, particularly when dealing with interacting variables [1] [2]. The conditions established for sensor preparation and operation may not truly represent the optimum, as this method cannot detect interactions between variables.

Critical Limitations of Traditional Methods

The OVAT approach presents several significant limitations that impede the development of optimal biosensing platforms:

- Failure to detect variable interactions: OVAT cannot detect interactions, which occur when the effect of one independent variable on the response depends on the value of another independent variable [1] [2].

- Localized knowledge: Each experiment is defined based on the outcomes of previous ones, resulting in localized knowledge of the optimization process rather than comprehensive, global understanding [2].

- Resource intensive: The sequential nature of OVAT optimization often demands more experimental effort than a designed experiment, yet can still yield suboptimal results [1].

- Non-optimal final conditions: The established conditions may not represent the true optimum, hindering practical applications in point-of-care diagnostic settings [2].

Design of Experiments (DoE): A Systematic Approach

Fundamental Principles of DoE

Design of Experiments (DoE) is a powerful chemometric tool that facilitates the systematic and statistically reliable optimization of parameters [1] [2]. This model-based optimization approach develops a data-driven model that connects variations in input variables (such as material properties and production parameters) to sensor outputs. Unlike traditional methods, DoE:

- Considers variable interactions and their combined effects on responses

- Provides global knowledge by establishing an experimental plan a priori

- Enables prediction of responses across the entire experimental domain

- Reduces experimental effort while enhancing information quality [1] [2]

Key Experimental Designs in Biosensor Optimization

Factorial Designs

The 2^k factorial designs are first-order orthogonal designs necessitating 2^k experiments, where k represents the number of variables being studied. In these models, each factor is assigned two levels coded as -1 and +1, corresponding to the variable's selected range [1] [2].

Table 1: Experimental Matrix of a 2^2 Factorial Design

| Test Number | X₁ | X₂ |
|---|---|---|
| 1 | -1 | -1 |
| 2 | +1 | -1 |
| 3 | -1 | +1 |
| 4 | +1 | +1 |

From a geometric perspective, the experimental domain forms a square (for 2 variables), a cube (for 3 variables), or a hypercube (for more variables) [1] [2].
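As a minimal sketch of how such a matrix can be generated, the snippet below enumerates the coded levels with Python's standard library. The function name `full_factorial` is illustrative and not taken from the cited works.

```python
from itertools import product

def full_factorial(k):
    """Return the 2^k coded design matrix as a list of tuples of -1/+1."""
    # product() varies the last position fastest; reversing each tuple makes
    # X1 alternate fastest, which reproduces the run order shown in Table 1.
    return [tuple(reversed(levels)) for levels in product((-1, +1), repeat=k)]

design = full_factorial(2)
for run, (x1, x2) in enumerate(design, start=1):
    print(run, x1, x2)
```

For k = 2 the four rows are the corners of the square; for k = 3 the same call returns the eight corners of the cube.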

Advanced DoE Designs

For more complex optimization challenges, advanced DoE designs offer enhanced capabilities:

- Central Composite Designs: Augment initial factorial designs to estimate quadratic terms, enhancing the predictive capacity of the model for responses following quadratic functions [1] [2].

- Mixture Designs: Used when the combined total of all components must equal 100%, where components cannot be altered independently [1] [2].

- Machine Learning Integration: Emerging approaches combine DoE with ML algorithms to predict key optical properties and identify influential design parameters, significantly accelerating sensor optimization [4].
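To make the mixture constraint concrete, the sketch below enumerates a simplex-lattice layout, one common type of mixture design. The choice of a simplex lattice and the names `simplex_lattice`, `q`, and `m` are assumptions made for illustration; the text above does not specify which mixture design is used.

```python
from fractions import Fraction
from itertools import product

def simplex_lattice(q, m):
    """Blends of q components whose proportions are multiples of 1/m and sum to 1."""
    return [
        tuple(Fraction(i, m) for i in combo)
        for combo in product(range(m + 1), repeat=q)
        if sum(combo) == m  # enforce the 100% constraint
    ]

# Three components, proportions restricted to 0, 1/2, or 1.
blends = simplex_lattice(3, 2)
for blend in blends:
    print([str(p) for p in blend])
```

Every candidate blend sums to exactly 1, which is why the components cannot be varied independently the way factorial factors can.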

DoE Workflow and Implementation

The experimental design process follows a structured workflow:

- Identify all factors with potential causality relationships with targeted output signals

- Establish experimental ranges and distribution of experiments within the experimental domain

- Conduct predetermined experiments in random order to mitigate systematic effects

- Construct mathematical models through linear regression

- Validate model adequacy and refine as necessary [1] [2]

As optimization often requires multiple iterations, it's advisable not to allocate more than 40% of available resources to the initial set of experiments [1].
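The randomization and model-building steps can be sketched for a 2^2 design as follows. The response values are hypothetical, and the helper names `fit_two_factor_model` and `predict` are illustrative rather than part of any cited protocol.

```python
import random

# Coded 2^2 design in standard order: (X1, X2).
design = [(-1, -1), (+1, -1), (-1, +1), (+1, +1)]

# Hypothetical responses in standard order (illustrative values only).
responses = [10.0, 14.0, 12.0, 22.0]

# Randomize the order in which the runs are executed at the bench.
run_order = list(range(len(design)))
random.Random(0).shuffle(run_order)

def fit_two_factor_model(design, responses):
    """Least-squares fit of y = b0 + b1*x1 + b2*x2 + b12*x1*x2.

    The coded columns are mutually orthogonal, so each coefficient is the
    dot product of its column with y divided by the number of runs.
    """
    n = len(design)
    columns = {
        "b0": [1 for _ in design],
        "b1": [x1 for x1, _ in design],
        "b2": [x2 for _, x2 in design],
        "b12": [x1 * x2 for x1, x2 in design],
    }
    return {
        name: sum(c * y for c, y in zip(col, responses)) / n
        for name, col in columns.items()
    }

def predict(model, x1, x2):
    """Predicted response at any coded point inside the experimental domain."""
    return model["b0"] + model["b1"] * x1 + model["b2"] * x2 + model["b12"] * x1 * x2

model = fit_two_factor_model(design, responses)
print(model)
print(predict(model, 0, 0))  # prediction at the center of the domain
```

A nonzero `b12` indicates that the effect of X1 changes with the level of X2, which is exactly the information a one-variable-at-a-time study cannot provide.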

Comparative Performance Analysis: DoE vs. Traditional Methods

Efficiency and Resource Utilization

DoE approaches demonstrate significant advantages in experimental efficiency and resource utilization:

- Reduced experimental effort: DoE requires less experimental effort than univariate strategies while providing more comprehensive information [1] [2].

- Global optimization: The experimental plan is established a priori, enabling response prediction across the entire experimental domain rather than localized knowledge [2].

- Comprehensive factor analysis: Multiple variables and their interactions are assessed simultaneously rather than sequentially.

Performance Enhancement in Biosensing Applications

The systematic approach of DoE has driven significant advancements in biosensor performance across various sensing platforms:

Table 2: Performance Comparison of Optimization Approaches in Biosensor Development

| Biosensor Platform | Optimization Method | Key Performance Metrics | Reference |
|---|---|---|---|
| Electrochemical miRNA sensors | Traditional OVAT | LOD: 0.044-4.5 fM, Linear range: 10 fM - 5×10⁷ fM | [3] |
| Graphene FET miRNA biosensor | Systematic optimization | LOD: 1.92 fM, Detection time: 10 min, Wide dynamic range: 10 fM - 100 pM | [5] |
| PCF-SPR biosensor | ML-enhanced DoE | Wavelength sensitivity: 125,000 nm/RIU, Resolution: 8×10⁻⁷ RIU | [4] |
| Paper-based pesticide sensor | Systematic optimization | LOD: 0.09 ppm, Preservation: 5 months at ambient conditions | [6] |

Enhanced Reproducibility and Reliability

DoE methodologies significantly enhance biosensor reproducibility and reliability—critical factors for clinical translation:

- Reduced variability: Systematic approaches minimize batch-to-batch variations in biosensor fabrication [7].

- Improved signal-to-noise ratio: Particularly crucial for ultrasensitive platforms with sub-femtomolar detection limits [1] [2].

- Enhanced robustness: Optimized parameters demonstrate greater stability against minor operational variations.

Experimental Protocols and Methodologies

Protocol: Full Factorial Design for Electrochemical Biosensor Optimization

Application: Optimization of nanomaterial-enhanced electrochemical biosensors [3] [1]

Step-by-Step Procedure:

- Identify critical factors: Select 3-4 key variables (e.g., nanomaterial concentration, incubation time, pH, biorecognition element density)

- Define factor levels: Establish high (+1) and low (-1) levels for each factor based on preliminary experiments

- Construct experimental matrix: Generate a 2^k full factorial or a 2^(k-p) fractional factorial design

- Randomize run order: Execute experiments in random sequence to minimize systematic error

- Measure responses: Record key performance metrics (LOD, sensitivity, selectivity, response time)

- Calculate factor effects: Determine main effects and interaction effects using statistical analysis

- Build predictive model: Develop linear regression model with interaction terms

- Verify optimal conditions: Conduct confirmation experiments at predicted optimum [1] [2]
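The matrix-construction and effect-calculation steps can be sketched for a half-fraction (p = 1) design. The generator used here, which sets the last factor equal to the product of the others, is a common choice but an assumption on our part, as are the function names and the synthetic responses. The price of halving the run count is that the last factor's main effect is confounded with the highest-order interaction of the remaining factors.

```python
from itertools import product
from math import prod

def half_fraction(k):
    """2^(k-1) design: full factorial in k-1 factors, last factor = product of the others."""
    base = [tuple(reversed(r)) for r in product((-1, +1), repeat=k - 1)]
    return [row + (prod(row),) for row in base]

def main_effects(design, responses):
    """Main effect per factor: mean response at +1 minus mean response at -1."""
    effects = []
    for j in range(len(design[0])):
        high = [y for row, y in zip(design, responses) if row[j] == +1]
        low = [y for row, y in zip(design, responses) if row[j] == -1]
        effects.append(sum(high) / len(high) - sum(low) / len(low))
    return effects

# Four factors in 8 runs instead of 16.
design = half_fraction(4)

# Synthetic responses in which only the first factor matters (illustrative only).
responses = [5.0 + 2.0 * row[0] for row in design]
effects = main_effects(design, responses)
print(effects)
```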

Protocol: Central Composite Design for Optical Biosensors

Application: Optimization of PCF-SPR biosensors with complex response surfaces [1] [4]

Step-by-Step Procedure:

- Perform screening design: Identify significant factors using 2^k factorial design

- Augment with axial points: Add 2k axial points at distance ±α from center

- Include center points: Replicate center points to estimate pure error

- Execute randomized experiments: Measure wavelength sensitivity, amplitude sensitivity, and resolution

- Fit quadratic model: Develop second-order polynomial regression model

- Validate model adequacy: Check R², adjusted R², and prediction R²

- Generate response surfaces: Visualize factor-response relationships

- Apply machine learning: Implement ML regression (Random Forest, XGBoost) to predict performance [4]
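The design-construction steps of this protocol (factorial core, axial points, replicated center points) can be sketched as below. The rotatable choice α = (2^k)^(1/4) and the default of three center replicates are assumptions for illustration; the protocol above leaves both unspecified.

```python
from itertools import product

def central_composite(k, n_center=3):
    """Coded CCD points: 2^k factorial corners, 2k axial points, n_center center runs."""
    alpha = (2 ** k) ** 0.25  # rotatable alpha; other values are also used in practice
    factorial = [tuple(float(v) for v in p) for p in product((-1, +1), repeat=k)]
    axial = []
    for j in range(k):
        for sign in (-1.0, +1.0):
            point = [0.0] * k
            point[j] = sign * alpha
            axial.append(tuple(point))
    center = [(0.0,) * k for _ in range(n_center)]
    return factorial + axial + center

ccd = central_composite(2)
for point in ccd:
    print(point)
```

For two factors this gives 4 + 4 + 3 = 11 runs; the replicated center rows are what allow pure error to be estimated independently of the fitted quadratic model.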

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Biosensor Development and Optimization

| Material/Reagent | Function in Biosensor Development | Application Examples |
|---|---|---|
| Gold Nanoparticles (AuNPs) | Signal amplification, electron transfer enhancement, biocompatibility | Electrochemical detection of miRNA-21, LOD: 0.12 fM [3] |
| Graphene & 2D Materials | High surface area, excellent conductivity, flexibility | Graphene FETs for miRNA-155 detection, LOD: 1.92 fM [5] |
| Metal-Organic Frameworks (MOFs) | Tunable porosity, enhanced surface area, selective binding | Electrochemical sensor enhancement [3] |
| Platinum@Cerium oxide Nanostructures | Catalytic activity, electron transfer mediation | miR-21 detection, LOD: 1.41 fM [3] |
| Silicon Nanowires | High surface-to-volume ratio, sensitive charge detection | miR-21 detection, LOD: 1 fM [3] |
| Polylactic Acid (PLA) | Flexible substrate for wearable biosensors | Flexible GFET biosensors [5] |
| Poly-L-lysine (PLL) | Functionalization layer for biomolecule immobilization | PFLIG-GFET biosensor for clinical samples [5] |
| Chromogenic Substrates (IOA, ATCh) | Signal generation in colorimetric biosensors | Paper-based pesticide detection [6] |

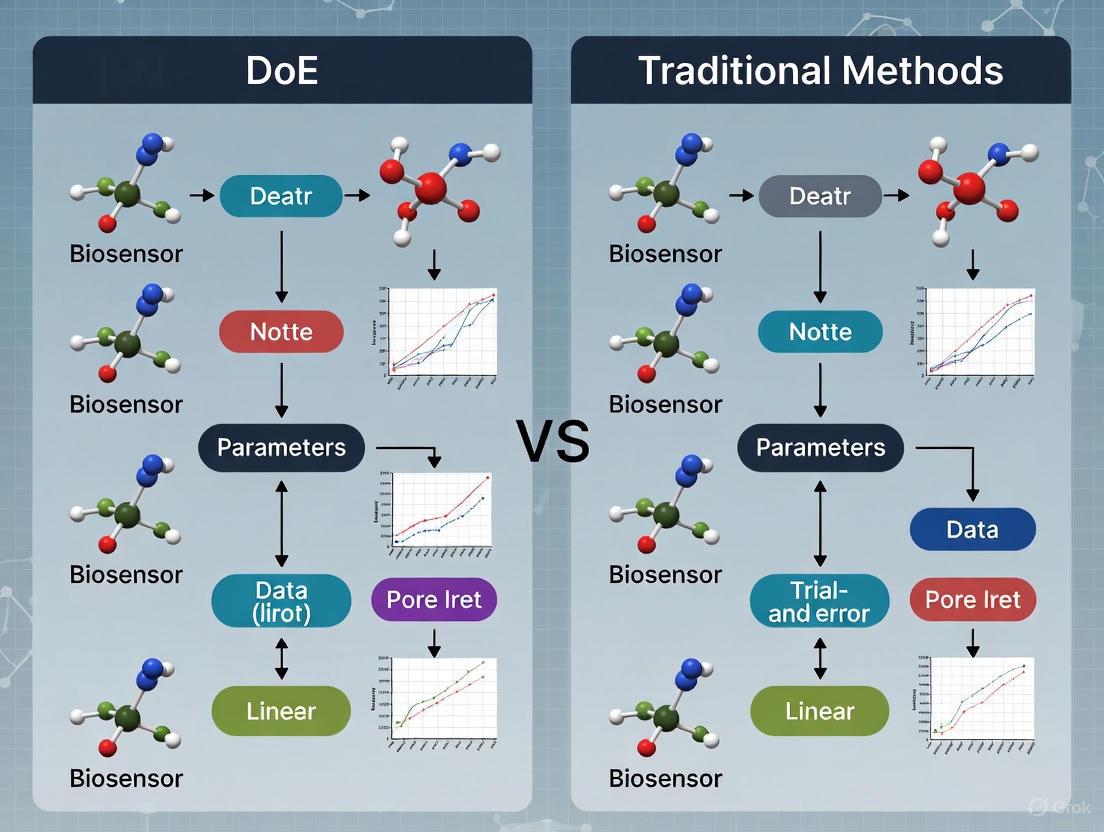

Visualization of Optimization Approaches

The critical need for optimization in ultrasensitive biosensor development cannot be overstated. As biosensors continue to evolve toward greater sensitivity, specificity, and point-of-care applicability, systematic optimization approaches like Design of Experiments provide a fundamental advantage over traditional methods. The comparative analysis demonstrates that DoE methodologies not only enhance biosensor performance but also improve development efficiency, reproducibility, and reliability—all essential factors for successful clinical translation.

Future perspectives in biosensor optimization point toward increased integration of machine learning and artificial intelligence with traditional DoE approaches [4] [7]. The emerging synergy between statistical modeling and AI-driven material informatics holds significant potential for accelerating the discovery of next-generation functional materials and biosensing architectures [7]. Furthermore, the application of explainable AI (XAI) methods provides unprecedented insights into the influence of design parameters, enabling more transparent and interpretable biosensor design processes [4].

As the biosensor field advances toward increasingly complex multi-parameter systems for real-world applications, the systematic optimization principles embodied by DoE will become increasingly indispensable. By bridging the gap between experimental design and computational optimization, these data-driven approaches underscore their transformative impact on enhancing reproducibility, efficiency, and scalability in biosensor research—ultimately accelerating the development of reliable diagnostic tools for improved healthcare outcomes.

The One-Variable-at-a-Time (OVAT) approach, also known as One-Factor-at-a-Time (OFAT), represents a traditional methodology for experimental optimization across scientific and engineering disciplines. This method involves systematically changing a single experimental factor while maintaining all other parameters constant [8]. The OVAT approach has been widely taught and implemented due to its straightforward conceptual framework, which aligns with conventional scientific training [9]. Researchers in fields ranging from biosensor development to pharmaceutical manufacturing have historically relied on this method for optimizing complex processes.

In the specific context of biosensor optimization, which encompasses both manufacturing parameters and operational conditions, the OVAT approach has been frequently employed for initial parameter screening [10]. The method's intuitive nature makes it particularly accessible to non-experts in statistical design, especially in situations where data is relatively inexpensive and abundant to collect [8]. The process begins with establishing baseline conditions for all factors, then sequentially varying each parameter of interest while documenting its individual effect on the output response. This systematic isolation of variables aims to establish clear cause-and-effect relationships between each factor and the measured outcome.

Despite its historical prevalence, the OVAT method faces significant criticism in modern analytical science, particularly when optimizing complex systems with interacting variables [11]. The approach provides only a partial understanding of how factors affect the response, potentially missing optimal conditions and leading to suboptimal system performance [11]. As the demand for more sophisticated and sensitive analytical platforms grows, particularly in fields like biosensor development where multiple manufacturing and operational variables must be optimized simultaneously, understanding the limitations and appropriate applications of OVAT becomes essential for researchers and drug development professionals.

Fundamental Principles and Methodology of OVAT

Core Operational Framework

The OVAT methodology follows a structured, sequential process that can be divided into distinct operational phases. The initial stage involves identifying all potentially relevant factors that may influence the system's output. Researchers then establish baseline conditions for these factors, selecting a starting point that typically represents the current best-known settings or literature values [10]. The optimization process proceeds by selecting one factor to vary across a predetermined range while maintaining all other factors at their baseline levels. After testing each level of the varied factor and measuring the corresponding system response, researchers identify the level that produces the most favorable outcome. This optimal level then becomes the new fixed value for that factor as the process repeats with the next variable [11] [8].

This sequential approach continues until all factors of interest have been individually optimized. The final optimized condition comprises the combination of all individually optimal factor levels identified through this iterative process. The underlying assumption of OVAT is that the global optimum can be approximated by combining the individual optimal levels of each factor, an assumption that holds true only when factors do not interact with each other [12].
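The sequential procedure, and the way its underlying assumption breaks down when factors interact, can be illustrated with a small simulation. The response function is entirely hypothetical and was chosen to contain a strong x1·x2 interaction; the exhaustive grid search serves only as a reference for the true optimum and is not itself a DoE design.

```python
from itertools import product

LEVELS = range(6)  # six coded levels per factor: 0..5

def response(x1, x2):
    """Hypothetical signal with a strong x1*x2 interaction (illustrative only)."""
    return 100 - 10 * (x1 - x2) ** 2 - (x1 + x2 - 8) ** 2

def ovat(baseline):
    """One OVAT pass: optimize x1 at the baseline x2, then x2 at the chosen x1."""
    x1, x2 = baseline
    x1 = max(LEVELS, key=lambda v: response(v, x2))
    x2 = max(LEVELS, key=lambda v: response(x1, v))
    return (x1, x2), response(x1, x2)

def grid_optimum():
    """Exhaustive search over every level combination, used as the reference."""
    best = max(product(LEVELS, LEVELS), key=lambda p: response(*p))
    return best, response(*best)

print("OVAT from (0, 0):", ovat((0, 0)))
print("True optimum:    ", grid_optimum())
```

Starting from the baseline (0, 0), the OVAT pass settles on a point far from the true optimum because the best level of x1 shifts as x2 changes. If the interaction term were absent, the two procedures would agree.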

Experimental Protocol in Practice

In practical laboratory settings, implementing OVAT requires careful experimental planning and execution. For example, in optimizing pigment production from the marine-derived fungus Talaromyces albobiverticillius 30548, researchers first employed OVAT to screen different nutrient sources [10] [13]. The experimental protocol involved testing five different carbon sources (glucose, sucrose, fructose, soluble starch, and malt extract) while maintaining constant concentrations of nitrogen sources and inorganic salts. After identifying sucrose as the optimal carbon source, researchers then varied nitrogen sources (sodium nitrate, peptone, tryptone, and yeast extract) while maintaining the optimal carbon source and constant inorganic salt concentrations [13].

This systematic approach allowed researchers to identify significant medium components (yeast extract, K₂HPO₄, and MgSO₄·7H₂O) for subsequent optimization phases [10]. The OVAT methodology in this context served as an initial screening tool to narrow down the many potential factors before applying more sophisticated optimization techniques. The stepwise protocol demonstrates how OVAT can provide a foundation for understanding individual factor effects, even in complex biological systems with multiple potential influencing variables.

Figure 1: OVAT Experimental Workflow - This diagram illustrates the sequential process of the One-Variable-at-a-Time approach, showing how factors are optimized individually while others remain constant.

OVAT in Practice: Experimental Applications and Case Studies

Biosensor Optimization Case Study

The application of OVAT in biosensor development demonstrates both the utility and limitations of this approach. In one documented case, researchers developed a paper-based electrochemical biosensor for detecting lung cancer-related microRNAs (miR-155 and miR-21) using an OVAT optimization strategy [11]. The researchers systematically varied parameters such as gold nanoparticle concentration, DNA probe immobilization conditions, ionic strength, and hybridization conditions while keeping other factors constant. This approach enabled preliminary optimization of the sensor, resulting in limits of detection (LODs) of 12.0 nM for miR-155 and 25.7 nM for miR-21 [11].

While this OVAT-based optimization yielded a functional biosensor, the reported detection limits remained relatively high compared to clinical requirements. The authors noted that this highlighted a key drawback of OVAT optimization, which considers only one variable at a time, neglecting possible interactions between factors and often leading to suboptimal performance [11]. Subsequent analysis suggested that had the authors employed a Design of Experiments (DoE) approach, they could have systematically explored the effects of multiple variables simultaneously, potentially identifying synergistic effects and uncovering actual optimal conditions for each parameter [11]. This case illustrates how OVAT can produce functional but potentially suboptimal results in complex biosensor systems where factor interactions may significantly influence performance.

Industrial Bioprocess Optimization

The OVAT approach has also been extensively applied in industrial bioprocess optimization, as demonstrated in pigment production from filamentous fungi. In optimizing pigment production from Talaromyces albobiverticillius 30548, researchers initially employed OVAT to screen different nutrient sources [10]. This involved testing various carbon sources (glucose, sucrose, fructose, soluble starch, and malt extract) while maintaining fixed concentrations of nitrogen sources and inorganic salts. After identifying sucrose as optimal, they then varied nitrogen sources (sodium nitrate, peptone, tryptone, and yeast extract) while maintaining optimal carbon source and constant salts [13].

This OVAT screening identified significant medium components (yeast extract, K₂HPO₄, and MgSO₄·7H₂O) for pigment and biomass production, with sucrose combined with yeast extract providing maximum yields of orange pigments (1.39 g/L) and red pigments (2.44 g/L), along with higher dry biomass (6.60 g/L) [10]. While effective for initial screening, the researchers recognized the limitations of OVAT and subsequently applied Response Surface Methodology (RSM) with Central Composite Design (CCD) to evaluate optimal concentrations and interactive effects between the identified nutrients [10]. This hybrid approach leveraged OVAT for initial factor screening before implementing more sophisticated optimization methodologies, demonstrating a practical application of OVAT within a broader optimization strategy.

Comparative Analysis: OVAT versus Design of Experiments (DoE)

Theoretical Foundations and Operational Differences

The fundamental distinction between OVAT and DoE approaches lies in how they handle multiple variables during experimentation. While OVAT varies factors sequentially while holding others constant, DoE involves systematically varying multiple factors simultaneously according to a predetermined experimental matrix [11] [1]. This fundamental operational difference leads to significant disparities in the type and quality of information obtained from the optimization process.

DoE approaches, including factorial designs, central composite designs, and D-optimal designs, are model-based optimization strategies that develop data-driven models connecting variations in input variables to system outputs [1]. These models enable researchers to not only identify individual factor effects but also quantify interaction effects between factors—something that OVAT approaches cannot accomplish [12]. The ability to detect and measure interactions represents a critical advantage for DoE in complex systems where factors may have interdependent effects on the response variable [11].

Practical Implications for Experimental Efficiency

The efficiency differences between OVAT and DoE become particularly pronounced as the number of experimental factors increases. The table below compares the experimental requirements for OVAT versus various DoE approaches across different numbers of optimization factors:

Table 1: Experimental Requirements Comparison: OVAT vs. DoE Approaches

| Number of Factors | OVAT Experiments* | Full Factorial DoE | D-Optimal DoE | Plackett-Burman |
|---|---|---|---|---|
| 3 factors | 15-30 experiments | 8 experiments | 10-15 experiments | 4 experiments |
| 6 factors | 30-60 experiments | 64 experiments | 30 experiments | 8 experiments |
| 8 factors | 40-80 experiments | 256 experiments | 40-50 experiments | 12 experiments |

*OVAT experiment estimates assume 5-10 levels tested per factor

A concrete example of this efficiency disparity comes from the development of a hybridization-based paper-based electrochemical biosensor for miRNA-29c detection [11]. The sensing platform involved six variables requiring optimization, including both sensor manufacturing parameters (gold nanoparticles, immobilized DNA probe) and working conditions (ionic strength, probe-target hybridization, electrochemical parameters) [11]. The adoption of a D-optimal DoE design allowed researchers to optimize the device using only 30 experiments, compared to the 486 experiments that would have been required with a comprehensive OVAT approach [11]. This represents a 94% reduction in experimental workload, demonstrating the dramatic efficiency advantages of DoE for multi-factor optimization problems.
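The run counts in the table and the workload reduction quoted above follow from simple arithmetic, sketched below. The function names are illustrative; the OVAT count uses the table's stated assumption of a fixed number of levels tested per factor, and the full factorial count assumes two levels per factor.

```python
def ovat_runs(n_factors, levels_per_factor):
    """Sequential OVAT: each factor is scanned once across its own levels."""
    return n_factors * levels_per_factor

def full_factorial_runs(n_factors, levels=2):
    """Full factorial: every combination of levels across all factors."""
    return levels ** n_factors

def percent_reduction(runs_before, runs_after):
    """Relative saving in experimental workload, in percent."""
    return 100 * (runs_before - runs_after) / runs_before

for k in (3, 6, 8):
    print(k, ovat_runs(k, 5), ovat_runs(k, 10), full_factorial_runs(k))

# Workload reduction for the miRNA-29c case: 486 runs versus 30 runs.
print(round(percent_reduction(486, 30), 1))
```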

Figure 2: Interaction Effects Detection - This diagram illustrates how DoE approaches can identify factor interactions that OVAT methodologies inevitably miss, leading to more comprehensive process understanding.

Performance Outcomes Comparison

The different methodological approaches of OVAT and DoE frequently lead to significantly different optimization outcomes, particularly in complex systems. The table below summarizes performance differences reported in case studies comparing both approaches:

Table 2: Performance Comparison: OVAT vs. DoE Optimization Outcomes

| Application Context | OVAT Performance | DoE Performance | Improvement |
|---|---|---|---|
| miRNA electrochemical biosensor [11] | Baseline detection limit | 5-fold lower LOD | 80% reduction in LOD |
| Heavy metal electrochemical sensor [11] | 12 nM detection limit | 1 nM detection limit | 92% reduction in LOD |
| Glucose biosensor stability [11] | 50% current retention after 12h | 75% current retention after 12h | 50% relative stability improvement |
| Fungal pigment production [10] | 6.60 g/L biomass | 15.98 g/L biomass | 142% yield improvement |

The performance advantages of DoE are particularly evident in the miRNA biosensor case study, where adopting a D-optimal DoE design resulted in a 5-fold improvement in the limit of detection compared to the non-DoE optimized biosensor [11]. Similarly, in optimizing an electrochemical glucose biosensor, DoE enabled researchers to achieve similar current density using 93% less nanoconjugate while improving operational stability from 50% to 75% amperometric current retained after 12 hours of use [11]. These performance improvements stem from DoE's ability to identify true optimal conditions by accounting for factor interactions, which OVAT approaches inherently cannot detect.

The Researcher's Toolkit: Essential Materials and Reagents

Successful implementation of OVAT optimization requires specific research tools and reagents tailored to the experimental context. The following table outlines key materials commonly employed in OVAT-based biosensor optimization studies:

Table 3: Essential Research Reagents for Biosensor Optimization Studies

| Reagent/Material | Function in Optimization | Application Example |
|---|---|---|
| Gold nanoparticles | Signal amplification in electrochemical biosensors | Varied concentration to enhance electron transfer [11] |
| DNA probes | Biorecognition element for nucleic acid detection | Immobilization density optimized for target hybridization [11] |
| Yeast extract | Complex nitrogen source for microbial growth | Optimized as nitrogen source for fungal pigment production [10] |
| MgSO₄·7H₂O | Enzyme cofactor and metabolic precursor | Concentration optimized for fungal metabolite production [10] |
| Screen-printed electrodes | Transduction platform for electrochemical detection | Surface modification parameters optimized sequentially [14] |
| Carbon nanotubes | Nanomaterial for electrode modification | Loading concentration optimized for signal enhancement [14] |

These fundamental materials represent core components across many biosensor optimization studies. Their concentrations, immobilization parameters, and processing conditions are typically varied sequentially in OVAT approaches to establish individual optimal ranges before proceeding to subsequent factors. The selection of appropriate ranges for each variable requires preliminary knowledge of the system, which may come from literature reviews, preliminary experiments, or theoretical considerations [10].

Critical Assessment: Advantages and Limitations of OVAT

Documented Advantages in Specific Contexts

Despite its methodological limitations, the OVAT approach maintains relevance in certain research contexts due to several distinct advantages. The method is conceptually straightforward and widely taught, making it accessible to researchers without extensive statistical training [9]. This accessibility particularly benefits non-experts and those entering new research fields where preliminary factor screening is necessary [8]. The logical progression of varying one factor at a time aligns with conventional scientific thinking about cause-and-effect relationships, making experimental procedures and results intuitively understandable to broad audiences.

OVAT approaches demonstrate particular utility in situations where data is relatively inexpensive and abundant to collect [8]. In early-stage exploratory research, where little prior knowledge exists about factor effects, OVAT can provide initial insights with minimal statistical analysis requirements. Some researchers have shown that OVAT can be more effective than fractional factorial designs under specific conditions: when the number of experimental runs is severely limited, the primary goal is to attain incremental improvements in the system, and experimental error is not large compared to factor effects (which must be additive and independent of each other) [8]. Additionally, OVAT serves as a valuable preliminary step before implementing more sophisticated DoE approaches, helping to identify critical factors for subsequent comprehensive optimization [10].

Fundamental Limitations and Methodological Flaws

The OVAT approach suffers from several fundamental limitations that restrict its effectiveness for complex optimization challenges. The most significant drawback is its inability to detect and quantify interactions between factors [11] [12]. When factors interact—meaning the effect of one factor depends on the level of another—OVAT may completely miss the true optimal conditions, potentially identifying suboptimal parameter combinations [11]. This limitation is particularly problematic in biosensor optimization, where interactions between manufacturing parameters (e.g., nanomaterial concentration) and operational conditions (e.g., ionic strength, temperature) are common.
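What "the effect of one factor depends on the level of another" means numerically can be shown with four runs of a two-level design. The factor labels and response values below are hypothetical and chosen only to illustrate the calculation.

```python
def simple_effects(y):
    """y maps coded (a, b) settings to the measured response.

    Returns the effect of A at low B, the effect of A at high B, and the
    A x B interaction effect (half the difference between the two).
    """
    effect_a_at_low_b = y[(+1, -1)] - y[(-1, -1)]
    effect_a_at_high_b = y[(+1, +1)] - y[(-1, +1)]
    interaction = (effect_a_at_high_b - effect_a_at_low_b) / 2
    return effect_a_at_low_b, effect_a_at_high_b, interaction

# Hypothetical example: A = nanomaterial concentration, B = ionic strength.
signal = {(-1, -1): 10.0, (+1, -1): 14.0, (-1, +1): 12.0, (+1, +1): 22.0}
low, high, interaction = simple_effects(signal)
print(low, high, interaction)
```

An OVAT study that varies A only at low B would observe the smaller of the two effects and would have no way of knowing that A matters far more at high B.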

Additional limitations include inefficiency in resource utilization, as OVAT requires more experimental runs for the same precision in effect estimation compared to statistically designed experiments [8] [9]. The method provides limited coverage of the experimental space, focusing on axial points while potentially missing optimal regions located elsewhere in the multidimensional factor space [9]. There is also no inherent mechanism to account for or quantify experimental error, including measurement variation, which makes it difficult to determine the statistical significance of observed effects [12]. These collective limitations explain why OVAT has been largely superseded by DoE methodologies in fields requiring rigorous optimization of complex multi-factor systems, particularly in biosensor development and pharmaceutical applications [11] [1].

The One-Variable-at-a-Time approach represents a foundational methodology in experimental optimization with specific utilities and recognized limitations. While its straightforward conceptual framework makes it accessible for preliminary factor screening and educational contexts, OVAT suffers from critical drawbacks in complex optimization scenarios, particularly its inability to detect factor interactions and its inefficiency in resource utilization [11] [8] [12]. The demonstrated performance advantages of Design of Experiments approaches—including 5-fold improvements in detection limits for biosensors and substantial reductions in experimental workload—highlight why DoE methodologies have largely superseded OVAT in rigorous scientific optimization [11].

Nevertheless, OVAT maintains relevance as an initial screening tool within broader optimization strategies, particularly when researchers possess limited prior knowledge about a system [10]. The method's accessibility ensures its continued application in early-stage research, though scientists should recognize its limitations and transition to more sophisticated DoE approaches for comprehensive optimization. For researchers and drug development professionals working with complex systems like biosensors, where multiple interacting factors influence performance outcomes, understanding both the appropriate applications and fundamental limitations of OVAT remains essential for designing efficient and effective optimization strategies.

In the field of biosensor development and drug discovery, efficient experimental optimization is crucial for advancing new technologies from concept to clinical application. The traditional One-Variable-at-a-Time (OVAT) approach has been widely used for process optimization, but it possesses fundamental limitations that can hinder research progress and outcomes. This guide examines these inherent constraints through direct comparison with the statistical approach of Design of Experiments (DoE), supported by experimental data and case studies from recent scientific literature.

OVAT vs. DoE: A Fundamental Comparison

OVAT (One-Variable-at-a-Time), also known as OFAT (one-factor-at-a-time), is an experimental approach where researchers test factors individually while holding all other variables constant [8] [15]. After testing one factor, they return it to its baseline before investigating the next variable. This method has historically been popular due to its conceptual simplicity and straightforward implementation [15].

DoE (Design of Experiments) is a systematic, statistical approach that involves varying multiple factors simultaneously according to a predefined experimental matrix [16]. This methodology enables researchers to not only determine individual factor contributions but also to resolve factor interactions and create detailed maps of process behavior.

Table: Fundamental Methodological Differences Between OVAT and DoE

| Aspect | OVAT Approach | DoE Approach |
|---|---|---|
| Factor Variation | Factors tested sequentially | Multiple factors varied simultaneously |
| Interaction Detection | Cannot estimate interactions | Can resolve and quantify factor interactions |
| Experimental Efficiency | Requires more runs for same precision | More information with fewer experiments |
| Optima Identification | Prone to finding local optima | Better at identifying global optima |
| Error Estimation | Requires multiple replicates | Uses centerpoints to estimate pure error |

Core Limitations of the OVAT Approach

Experimental Inefficiency and Resource Intensity

The OVAT method requires a substantially larger number of experimental runs to achieve the same precision in effect estimation compared to factorial designs [8] [15]. This inefficiency stems from its sequential nature, where each variable must be tested individually while others remain fixed. In chemical reaction optimization, this approach is "simple but laborious and time consuming, requiring many individual runs across an often-large number of parameters" [16]. The increased number of runs directly translates to higher consumption of reagents, expensive materials, and researcher time—particularly problematic when working with precious samples or specialized equipment.

Inability to Detect Factor Interactions

Perhaps the most significant limitation of OVAT is its fundamental inability to detect interactions between factors [8] [15]. By varying only one factor at a time, OVAT assumes that factors act independently, which is often an unrealistic assumption in complex biological and chemical systems.

As demonstrated in radiochemistry optimization, "the setting of one factor may affect the influence of another" [16]. These interaction effects can be crucial in biosensor development, where parameters like pH, temperature, and reagent concentrations often exhibit interdependent effects on sensor performance. Without detecting these interactions, researchers risk drawing incomplete or misleading conclusions about their systems.

Propensity to Identify Local Rather Than Global Optima

OVAT optimization is "prone to finding only local optima" and may "miss the true set of optimal conditions" [16]. This occurs because the method only explores a limited path through the experimental space rather than mapping the entire response surface. The results are highly dependent on the starting conditions chosen by the researcher, potentially leading to suboptimal outcomes that don't represent the best possible configuration for a given biosensor or chemical process.
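The dependence on starting conditions can be illustrated with a small simulation. The sketch below is not taken from the cited studies: the `response` function is an invented two-factor surface with an interaction term, and the grid of levels 0 to 8 is an arbitrary assumption. It runs a sequential OVAT search from a chosen baseline and compares the endpoint with the best point on the full grid, which is evaluated here only as a reference.

```python
import numpy as np

def response(x1, x2):
    """Hypothetical sensor response with an x1*x2 interaction; best value at x1 = x2 = 4."""
    return 10 - (x1 - x2) ** 2 - 0.1 * (x1 + x2 - 8) ** 2

levels = np.arange(0, 9)  # assumed candidate settings 0..8 for each factor

def ovat(baseline_x2=0):
    """Sequential OVAT: tune x1 at the baseline x2, then tune x2 at the chosen x1."""
    best_x1 = max(levels, key=lambda v: response(v, baseline_x2))
    best_x2 = max(levels, key=lambda v: response(best_x1, v))
    return int(best_x1), int(best_x2)

def exhaustive():
    """Reference only: evaluate every grid point to reveal the true optimum."""
    grid = [(int(a), int(b)) for a in levels for b in levels]
    return max(grid, key=lambda p: response(*p))

ovat_point = ovat(baseline_x2=0)
true_point = exhaustive()
print("OVAT endpoint:", ovat_point, "response:", response(*ovat_point))
print("Grid optimum: ", true_point, "response:", response(*true_point))
```

On this invented surface, the OVAT search started at a baseline of x2 = 0 stops at (1, 2), while the same search started at x2 = 8 stops at (7, 6). Neither reaches the grid optimum at (4, 4), because the best setting of one factor shifts with the level of the other.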

Case Studies and Experimental Evidence

Radiochemistry Optimization: DoE vs. OVAT Efficiency

In copper-mediated radiofluorination (CMRF) chemistry for PET tracer synthesis, researchers directly compared DoE and OVAT approaches [16]. Using DoE to construct factor screening and optimization studies, they "identified critical factors and modeled their behavior with more than two-fold greater experimental efficiency than the traditional OVAT approach." This enhanced efficiency is particularly valuable in radiochemistry, where reducing experimental runs lowers researcher exposure to ionizing radiation and conserves expensive precursors.

Table: Experimental Efficiency Comparison in Radiochemistry Optimization

| Metric | OVAT Approach | DoE Approach | Improvement |
|---|---|---|---|
| Experimental Runs | Substantially more required | Minimized via statistical design | >2x more efficient |
| Interaction Detection | Not possible | Full resolution of factor interactions | Critical insights gained |
| Optimal Condition Identification | Local optima likely | Global optima identified | Enhanced process performance |
| Resource Consumption | High | Optimized | Significant savings |

Biosensor Performance Optimization

Recent advances in biosensor development have leveraged DoE and machine learning to overcome OVAT limitations. In developing a photonic crystal fiber surface plasmon resonance (PCF-SPR) biosensor, researchers integrated "machine learning regression techniques to predict key optical properties" and "explainable AI methods to analyze model outputs and identify the most influential design parameters" [17]. This hybrid approach "significantly accelerates sensor optimization, reduces computational costs, and improves design efficiency compared to conventional methods."

Similarly, in optimizing a graphene-based biosensing platform for breast cancer detection, machine learning models were used to "systematically optimize structural parameters, enabling systematic refinement of detection accuracy and reproducibility" [18]. The optimized design demonstrated superior sensitivity compared to conventional configurations, achieving "a peak sensitivity of 1785 nm/RIU."

Recommended Experimental Protocols

DoE Factor Screening Protocol

For initial DoE implementation in biosensor optimization:

Define Objective: Clearly identify the primary response variable (e.g., sensitivity, specificity, signal-to-noise ratio).

Select Factors: Choose continuous (temperature, pH, concentration) and discrete (buffer type, membrane material) variables based on preliminary knowledge.

Design Screening Experiment: Implement a fractional factorial design to efficiently identify significant factors from a larger set of potential variables [16].

Execute and Analyze: Conduct experiments according to the design matrix, then use multiple linear regression to identify statistically significant factors.

Plan Optimization Phase: Use significant factors identified in screening to design more detailed response surface optimization studies.
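The screening steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the procedure of any cited study: the factor names, the half-fraction generator D = ABC, and the simulated responses (in which only two factors are active) are all invented for the example.

```python
import itertools
import numpy as np

# Hypothetical factor names; the generator D = A*B*C is an illustrative choice.
factors = ["pH", "temperature", "enzyme_conc", "incubation_time"]

# 2^(4-1) half fraction: full factorial in A, B, C, with D set by the generator.
base = np.array(list(itertools.product([-1, 1], repeat=3)))
design = np.column_stack([base, base.prod(axis=1)])  # 8 runs x 4 coded factors

# Simulated responses (invented): only pH and enzyme_conc influence the signal.
rng = np.random.default_rng(0)
y = 50 + 6 * design[:, 0] + 4 * design[:, 2] + rng.normal(0, 0.5, size=len(design))

# Multiple linear regression on the coded main effects.
X = np.column_stack([np.ones(len(design)), design])
coef, *_ = np.linalg.lstsq(X, y, rcond=None)

for name, b in zip(["intercept"] + factors, coef):
    print(f"{name:>16s}: {b:6.2f}")
```

Coefficients that are large relative to the noise flag candidate factors for the optimization phase. A formal significance test would additionally need an error estimate, for example from replicated center points.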

Response Surface Methodology Protocol

For detailed optimization after factor screening:

Select Experimental Design: Choose central composite or Box-Behnken designs capable of modeling curvature and interactions [15].

Execute Design: Perform experiments across the defined factor space, including center points for error estimation.

Model Development: Fit a mathematical model (typically quadratic) to the response data using regression analysis.

Optimization: Use the fitted model to identify factor settings that optimize the response(s).

Validation: Confirm optimal conditions through additional experimental runs.
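As a rough sketch of the model development and optimization steps, the code below fits a full quadratic model to two coded factors and solves for its stationary point. The design is a three-level factorial with two extra center runs, which for two factors coincides with a face-centered central composite design. The `true_response` surface and the noise level are invented solely to generate example data.

```python
import itertools
import numpy as np

def quadratic_terms(x):
    """Model matrix columns: 1, x1, x2, x1*x2, x1^2, x2^2 (coded units)."""
    x1, x2 = x[:, 0], x[:, 1]
    return np.column_stack([np.ones(len(x)), x1, x2, x1 * x2, x1**2, x2**2])

# 3-level factorial in coded units plus two extra center points (11 runs).
points = np.array(list(itertools.product([-1, 0, 1], repeat=2)) + [(0, 0)] * 2, dtype=float)

def true_response(x):
    """Invented surface used only to generate data; maximum at (0.5, -0.25)."""
    x1, x2 = x[:, 0], x[:, 1]
    return 20 - 4 * (x1 - 0.5) ** 2 - 2 * (x2 + 0.25) ** 2

rng = np.random.default_rng(1)
y = true_response(points) + rng.normal(0, 0.05, size=len(points))

# Least-squares fit of the second-order model.
b, *_ = np.linalg.lstsq(quadratic_terms(points), y, rcond=None)

# Stationary point of y = b0 + b_lin'x + x'Bx solves 2Bx = -b_lin.
B = np.array([[b[4], b[3] / 2], [b[3] / 2, b[5]]])
x_opt = np.linalg.solve(2 * B, -b[1:3])
is_maximum = bool(np.all(np.linalg.eigvalsh(B) < 0))
print("Predicted optimum (coded):", np.round(x_opt, 2), "| maximum:", is_maximum)
```

The predicted optimum is expressed in coded units and would be converted back to real factor settings before the confirmation runs described in the validation step.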

Research Reagent Solutions for Optimization Studies

Table: Essential Materials for Biosensor Optimization Experiments

| Reagent/Material | Function in Optimization | Application Examples |
|---|---|---|
| Graphene-based composites | Enhanced sensitivity and conductivity | Breast cancer biosensors [18] |
| Gold/Silver nanoparticles | Plasmonic enhancement | SERS-based immunoassays [19] |
| Photonic crystal fibers | Light propagation control | PCF-SPR biosensors [17] |
| Specific antibodies | Target recognition | α-fetoprotein detection [19] |
| Fluorescent dyes | Signal generation and detection | Various immunoassays |
| Buffer components | pH maintenance and stability | All biological assays |

The inherent limitations of OVAT—local optima identification, missed factor interactions, and experimental inefficiency—present significant challenges in biosensor optimization and drug development. The demonstrated superiority of DoE approaches in both efficiency and effectiveness underscores the value of statistical experimental design in modern research. As the field advances, integrating DoE with emerging technologies like machine learning and explainable AI provides a powerful framework for accelerating development cycles and enhancing diagnostic capabilities in biomedical applications.

The development of high-performance biosensors represents a critical frontier in medical diagnostics, environmental monitoring, and biotechnology. However, the transition from laboratory prototypes to reliable, commercially viable sensing platforms has been consistently hampered by optimization challenges [1]. Traditional one-variable-at-a-time (OVAT) approaches to biosensor optimization—where parameters are adjusted sequentially while others remain fixed—suffer from fundamental limitations in efficiently navigating complex, multidimensional experimental spaces [1] [2]. This comparative analysis examines Design of Experiments (DoE) as a systematic framework for biosensor optimization, contrasting its methodology and performance against traditional approaches with supporting experimental data.

Design of Experiments is a powerful chemometric tool that provides a structured, model-based approach to optimization [1] [2]. Unlike traditional methods, DoE simultaneously varies multiple experimental factors according to predetermined mathematical matrices, enabling researchers to determine individual variable effects and also to identify interaction effects between variables, a capability that consistently eludes OVAT approaches [1]. Published perspective reviews show that DoE methodologies have been successfully applied to optimize both optical and electrical ultrasensitive biosensors, resulting in enhanced performance metrics including sensitivity, dynamic range, and signal-to-noise ratios while reducing overall experimental effort [1] [2].

Traditional OVAT Optimization: Limitations and Pitfalls

Fundamental Methodological Flaws

The traditional OVAT approach to biosensor optimization follows a sequential process wherein individual parameters such as bioreceptor concentration, cross-linking agent amount, pH, or temperature are optimized independently while other variables remain fixed at arbitrary levels [1]. This method appears logically straightforward but contains critical statistical and practical deficiencies that limit its effectiveness for complex biosensing systems.

The most significant limitation of OVAT methodology is its inherent inability to detect interaction effects between variables [1] [2]. In biosensor systems, interaction effects occur when the influence of one factor (e.g., enzyme concentration) on the response (e.g., signal intensity) depends on the level of another factor (e.g., pH). These interactions are particularly common in complex biological systems but remain completely undetectable through OVAT experimentation [1]. Consequently, conditions established through sequential optimization may not represent the true global optimum, potentially leading to suboptimal biosensor performance in practical applications [1] [2].

Practical Consequences for Biosensor Development

From a practical standpoint, OVAT optimization often leads to increased experimental effort, higher resource consumption, and prolonged development timelines [20]. While each individual OVAT experiment might appear efficient, the cumulative number of experiments required to explore multiple factors often exceeds the more economical experimental matrices employed in DoE [1]. Furthermore, the localized knowledge gained through OVAT provides limited understanding of the overall experimental domain, offering little predictive capability beyond the specifically tested conditions [1].

Recent studies highlight how traditional optimization approaches have hindered biosensor development. For enzymatic glucose biosensors, conventional optimization of parameters including enzyme amount, cross-linker concentration, conducting polymer properties, and measurement conditions has typically required extensive experimental setups and fabrication of numerous biosensors under different conditions [20]. This conventional approach inevitably increases both cost and time consumption during the sensor design phase [20].

DoE Methodology: A Systematic Framework

Theoretical Foundations and Core Principles

Design of Experiments represents a fundamental shift from traditional optimization approaches by employing structured, statistically-based experimental matrices that efficiently explore multiple factors simultaneously [1] [2]. The DoE workflow begins with identifying all factors that may exhibit causal relationships with the targeted output signal (response) [1]. After factor selection, researchers establish experimental ranges and determine the distribution of experiments within the experimental domain [1].

The responses collected from these predetermined points are used to construct mathematical models through linear regression, elucidating the relationship between experimental conditions and outcomes [1]. Unlike OVAT's localized knowledge, DoE enables response prediction at any point within the experimental domain, providing comprehensive, global understanding of the system [1]. This approach not only offers significant empirical value but also yields data-driven models that can provide insights into the physical rationalization of observed effects, frequently offering valuable and unforeseen understanding of fundamental mechanisms underlying transduction and amplification processes [1].

Key Experimental Designs for Biosensor Optimization

Table 1: Common Experimental Designs in Biosensor Optimization

| Design Type | Experimental Requirements | Model Capability | Key Applications | Advantages |
|---|---|---|---|---|
| Full Factorial | 2^k experiments for k factors [1] | First-order effects and interactions [1] | Initial screening of multiple factors [1] | Identifies all interaction effects; orthogonal design [1] |
| Central Composite | Augmented factorial design with center and axial points [1] | Second-order (quadratic) effects [1] | Response surface modeling and optimization [1] | Captures curvature in response; enables location of optima [1] |
| Mixture Design | Specialized for composition variables [1] | Component proportion effects [1] | Formulation optimization with constrained components [1] | Accounts for dependency between components (sum to 100%) [1] |
| Definitive Screening | Highly efficient for many factors [21] | Main effects and some interactions [21] | Systems with numerous potential factors [21] | Requires minimal runs; robust to active factor sparsity [21] |

Implementation Workflow

The implementation of DoE follows a systematic workflow that differs fundamentally from traditional approaches. The process typically involves multiple iterative cycles of design-build-test-learn, with no more than 40% of available resources allocated to the initial set of experiments [1]. Subsequent iterations use data from initial designs to refine the problem by eliminating insignificant variables, redefining experimental domains, or adjusting hypothesized models [1].

Diagram 1: DoE Iterative Workflow. The systematic process for implementing Design of Experiments emphasizes iterative refinement and model validation to reach optimal conditions efficiently.

Comparative Performance Analysis: DoE vs. Traditional Methods

Case Study: Whole Cell Biosensor Optimization

A definitive comparative study demonstrated the superior performance of DoE over traditional methods in optimizing whole-cell biosensors for detecting catabolic breakdown products of lignin biomass [21]. Researchers applied DoE methodology to systematically modify biosensor dose-response behavior by exploring multidimensional experimental space with minimal experimental runs [21].

Table 2: Performance Comparison for Whole Cell Biosensor Optimization

| Performance Metric | Traditional Methods | DoE Approach | Improvement Factor |
|---|---|---|---|
| Maximum Signal Output | Baseline | Up to 30-fold increase [21] | 30× |
| Dynamic Range | Baseline | >500-fold improvement [21] | >500× |
| Sensing Range | Limited | Expanded by ~4 orders of magnitude [21] | 10,000× |
| Sensitivity | Baseline | >1500-fold increase [21] | >1,500× |
| Dose-Response Modulation | Fixed response | Digital and analogue behavior achievable [21] | N/A |
| Experimental Efficiency | Resource-intensive iterative cycles [21] | Structured exploration with minimal runs [21] | Significant reduction |

The study demonstrated that DoE could efficiently map experimental space and develop genetic systems with greatly enhanced output signal, basal control, dynamic range, and sensitivity compared to standard iterative approaches [21]. The methodology enabled researchers to convert different, closely related genetic designs into continuous factors, avoiding the need to repeat all experimental conditions for each genetic design [21].

Case Study: Electrochemical Biosensor Optimization

Research on electrochemical biosensors further demonstrates DoE advantages. Traditional optimization of enzymatic glucose biosensors requires extensive experimentation to optimize parameters including enzyme amount, cross-linker concentration (e.g., glutaraldehyde), conducting polymer scan number, and measurement conditions [20]. This process necessitates fabricating numerous biosensors with different conditions, increasing both cost and development time [20].

Machine learning approaches applied to biosensor optimization have revealed complex, nonlinear relationships between fabrication parameters and electrochemical responses that traditional OVAT approaches would struggle to identify [20]. These relationships include interaction effects between factors such as enzyme loading, pH, and cross-linker concentration that significantly impact biosensor performance but remain undetectable through sequential optimization [20].

DoE Experimental Protocols and Implementation

Protocol 1: Full Factorial Design for Initial Screening

Objective: Identify significant factors and two-factor interactions affecting biosensor response [1].

Methodology:

- Select k factors for initial screening (typically 3-5 key parameters)

- Define low (-1) and high (+1) levels for each factor based on preliminary knowledge

- Construct the 2^k experimental matrix with coded factor levels [1]

- Randomize run order to minimize systematic bias

- Conduct experiments and record responses (e.g., signal intensity, limit of detection)

- Compute model coefficients using least squares method [1]

- Perform statistical analysis to identify significant effects and interactions

Mathematical Model: For a 2^2 factorial design, the model takes the form: Y = b0 + b1X1 + b2X2 + b12X1X2, where Y is the predicted response, b0 is the intercept, b1 and b2 are the main-effect coefficients, and b12 is the interaction coefficient, with X1 and X2 expressed in coded units (-1, +1) [1].
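A short worked example may make the 2^2 model concrete. The four response values below are invented for illustration. The sketch builds the coded model matrix and solves the least-squares normal equations for b0, b1, b2, and b12.

```python
import numpy as np

# Coded 2^2 design; model matrix columns: intercept, X1, X2, X1*X2.
x1 = np.array([-1, 1, -1, 1])
x2 = np.array([-1, -1, 1, 1])
X = np.column_stack([np.ones(4), x1, x2, x1 * x2])

# Invented responses for the four runs (e.g. signal intensity, arbitrary units).
y = np.array([10.0, 14.0, 12.0, 24.0])

# Least-squares solution; with orthogonal columns this reduces to X.T @ y / 4.
b = np.linalg.solve(X.T @ X, X.T @ y)
b0, b1, b2, b12 = b
print(f"b0={b0:.1f}, b1={b1:.1f}, b2={b2:.1f}, b12={b12:.1f}")
# prints: b0=15.0, b1=4.0, b2=3.0, b12=2.0
```

Because the factors are coded as -1 and +1, each coefficient is half of the corresponding effect when an effect is defined as the average change in response from the low to the high level. The nonzero b12 in this example means the effect of X1 is larger at the high level of X2 than at the low level.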

Protocol 2: Central Composite Design for Response Optimization

Objective: Model curvature in response surface and locate optimal conditions [1].

Methodology:

- Begin with factorial design points (2^k)

- Add center points (typically 3-6) to estimate pure error

- Add axial (star) points at distance ±α from center to estimate quadratic effects

- The total number of experiments = 2^k + 2k + C0, where C0 is the number of center points

- Conduct experiments in randomized order

- Fit second-order model using regression analysis

- Validate model with confirmation experiments at predicted optimum

Application: This design is particularly valuable when the response follows a quadratic function with respect to experimental variables, allowing precise location of optimal conditions [1].
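The construction described in Protocol 2 can be sketched as follows. The helper name `central_composite` is hypothetical, and the default axial distance uses the common rotatable choice α = (2^k)^(1/4), which is an assumption here; face-centered designs (α = 1) and other values are also used in practice.

```python
import itertools
import numpy as np

def central_composite(k, n_center=4, alpha=None):
    """Return coded CCD points: 2^k factorial + 2k axial + n_center center runs."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable choice; other alpha values are also used
    factorial = np.array(list(itertools.product([-1.0, 1.0], repeat=k)))
    # One axial point at -alpha and one at +alpha along each factor axis.
    axial = np.vstack([sign * alpha * np.eye(k)[i] for i in range(k) for sign in (-1, 1)])
    center = np.zeros((n_center, k))
    return np.vstack([factorial, axial, center])

design = central_composite(k=3, n_center=4)
print(design.shape)  # (18, 3): 8 factorial + 6 axial + 4 center runs
```

For three factors and four center points this gives 2^3 + 2(3) + 4 = 18 runs. The rows would be shuffled into a random run order before the experiments are carried out.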

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents for Biosensor Optimization Studies

| Reagent/Material | Function in Optimization | Application Examples |
|---|---|---|
| Glutaraldehyde | Cross-linking agent for enzyme immobilization [20] | Covalent binding of enzymes to nanomaterials; creates robust enzyme-substrate interaction [22] |
| N-Hydroxysuccinimide (NHS)/EDC | Carboxyl group activation for covalent bonding [19] | Functionalization steps for antibody attachment on sensor surfaces [19] |
| Gold Nanoparticles | Signal amplification and enhanced electron transfer [22] | Improving electrochemical response in immunosensors; increased surface area for biorecognition element immobilization [22] |
| Graphene & MXenes | Nanomaterials with high conductivity and surface area [20] [23] | Enhancing sensitivity in electrochemical and optical biosensors; femtomolar-level detection [20] |
| Polydopamine | Versatile surface coating material [19] | Improving biocompatibility and facilitating functionalization through simple, environmentally friendly procedures [19] |
| Enzyme Solutions | Biological recognition elements [20] | Glucose oxidase, horseradish peroxidase, and other enzymes for specific analyte detection [20] |

Integration with Advanced Computational Methods

Machine Learning and DoE Synergy

Recent advances combine DoE with machine learning (ML) approaches to further enhance biosensor optimization. ML algorithms can model complex, nonlinear relationships between fabrication parameters and biosensor responses that may challenge traditional DoE models [20]. Studies have demonstrated that ML regression techniques can accurately predict biosensor performance based on key input parameters, potentially reducing experimental burden [20] [23].

The integration of explainable AI (XAI) methods, particularly Shapley Additive exPlanations (SHAP), with DoE provides enhanced interpretability of model outputs, helping researchers identify the most influential design parameters [23]. This hybrid approach significantly accelerates sensor optimization, reduces computational costs, and improves design efficiency compared to conventional methods [23].

Comparative Workflow Efficiency

Diagram 2: Workflow Comparison. Contrast between traditional OVAT (left) and DoE (right) approaches highlights DoE's parallel experimentation and model-based optimization versus OVAT's sequential process.

The comparative analysis clearly demonstrates that Design of Experiments provides a superior framework for biosensor optimization compared to traditional OVAT approaches. DoE systematically uncovers factor interactions, reduces experimental effort, and enables global optimization through structured experimentation and mathematical modeling [1] [2] [21]. The documented performance improvements across multiple biosensor platforms—including whole-cell, electrochemical, and optical biosensors—confirm the practical value of this methodology [1] [21] [23].

Future developments in biosensor optimization will likely involve tighter integration between DoE and machine learning approaches, leveraging the strengths of both methodologies [20] [23]. The growing application of explainable AI will further enhance interpretability, providing deeper insights into the fundamental relationships between design parameters and biosensor performance [23]. As biosensor technologies continue to advance toward increasingly complex multiplexed systems and point-of-care applications, the systematic framework offered by DoE will become increasingly essential for efficient development and optimization.

For researchers engaged in biosensor development, adopting DoE methodologies represents not merely a statistical improvement but a fundamental paradigm shift that accelerates development cycles, enhances performance, and provides deeper understanding of the complex systems under investigation. The experimental evidence and comparative data presented in this analysis provide a compelling case for implementing DoE as the standard approach for biosensor optimization across diverse applications and technology platforms.

In the field of biosensor development, researchers and drug development professionals face the constant challenge of creating devices with enhanced sensitivity, specificity, and reliability. Traditional optimization methods, particularly the "one factor at a time" (OFAT) approach, have shown significant limitations in efficiently navigating the complex multidimensional experimental spaces inherent to biosensor systems [1]. OFAT methodologies involve varying a single parameter while holding all others constant, which not only proves inefficient but more critically, fails to detect interactions between factors that can profoundly influence biosensor performance [24] [25].

Design of Experiments (DoE) provides a powerful, systematic framework that overcomes these limitations through structured multivariate experimentation [24]. DoE is a branch of applied statistics that deals with planning, conducting, analyzing, and interpreting controlled tests to evaluate the factors that control the value of a parameter or group of parameters [24]. By simultaneously manipulating multiple input factors according to statistically designed matrices, DoE enables researchers to efficiently identify optimal conditions while quantifying both main effects and interaction effects [26]. This approach is particularly valuable for optimizing complex genetic systems in biosensor development, where multiple protein-protein and protein-DNA interactions often display nonlinear effects that OFAT approaches cannot adequately characterize [21].

The following comparison guide examines the core principles of DoE, with specific focus on factorial designs and response surface methodology, and demonstrates how these approaches provide superior optimization capabilities compared to traditional methods for biosensor development.

Fundamental DoE Principles and Their Strategic Advantages

Core Principles of DoE

The foundation of DoE rests upon three key principles that ensure the validity and reliability of experimental results: randomization, replication, and blocking [27] [24].

Randomization: This principle involves the random assignment of experimental runs to different factor level combinations. Randomization helps mitigate the impact of nuisance variables and ensures that any observed effects can be attributed to the factors under investigation rather than uncontrolled sources of variation. In comparative experiments, such as evaluating treatments versus controls, random assignment of treatments is essential for eliminating potential biases from conclusions [27].

Replication: Replication refers to the repetition of complete experimental treatments, including the setup. This practice allows researchers to estimate the inherent variability in the experimental process and provides a measure of experimental error. Through replication, researchers can determine the precision of their estimates and obtain more reliable results [27] [24].

Blocking: Blocking is a technique used to account for known sources of variability in an experiment, such as differences in equipment, operators, or environmental conditions. By grouping experimental runs into homogeneous blocks, researchers can isolate and quantify the effects of these nuisance variables, leading to more precise estimates of the factor effects of primary interest [27] [26].
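The three principles can be combined in a simple run sheet. The sketch below is an illustrative assumption rather than a prescribed procedure: it replicates a 2^2 design once per block (a block might be a day or an operator) and shuffles the run order within each block. The function name `blocked_run_sheet` and the fixed seed are hypothetical choices made only so the output is reproducible.

```python
import itertools
import random

def blocked_run_sheet(n_replicates=2, seed=42):
    """One full replicate of a 2^2 design per block, run order shuffled within each block."""
    rng = random.Random(seed)  # fixed seed only so the sheet is reproducible
    treatments = list(itertools.product([-1, 1], repeat=2))
    sheet = []
    for block in range(1, n_replicates + 1):  # e.g. one block per day or operator
        order = treatments[:]                 # replication: every treatment in every block
        rng.shuffle(order)                    # randomization within the block
        sheet.extend((block, x1, x2) for x1, x2 in order)
    return sheet

sheet = blocked_run_sheet()
for run, (block, x1, x2) in enumerate(sheet, start=1):
    print(f"run {run}: block {block}, X1={x1:+d}, X2={x2:+d}")
```

Because every treatment appears once in each block, differences between blocks can be separated from the factor effects during analysis.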

DoE Versus Traditional OFAT: A Comparative Analysis

The table below summarizes the key differences between the traditional OFAT approach and the structured DoE methodology:

Table 1: Comparison between OFAT and DoE Methodologies

| Aspect | OFAT (Traditional Approach) | DoE (Systematic Approach) |
|---|---|---|
| Experimental Strategy | Varies one factor while holding others constant | Varies multiple factors simultaneously according to statistical design |
| Interaction Detection | Cannot detect interactions between factors | Explicitly models and quantifies factor interactions |
| Efficiency | Inefficient; requires many experiments for few factors | Highly efficient; explores large experimental spaces with minimal runs |
| Model Building | Limited to first-order understanding | Enables building of predictive mathematical models |
| Optimal Condition Identification | May miss true optimum due to ignored interactions | Systematically locates optimal regions and identifies trade-offs |
| Biosensor Application | Problematic for complex systems with interacting components | Ideal for optimizing multifactorial biosensor systems |

Traditional OFAT approaches are inherently limited because they fail to capture interactions between factors. For instance, in biosensor development, the effect of changing an immobilization pH might depend on the temperature or ionic strength of the solution. OFAT would miss this critical dependency, potentially leading researchers to suboptimal configurations [1] [24].

In contrast, DoE approaches not only identify significant main effects but also quantify how factors interact. This capability is particularly valuable in biosensor optimization, where factors such as biorecognition element concentration, immobilization time, and detection conditions often exhibit complex interdependencies that significantly impact the final biosensor performance in terms of sensitivity, dynamic range, and limit of detection [1].

Factorial Designs: Screening and Evaluating Factors

Fundamentals of Factorial Designs

Factorial designs form the backbone of many DoE investigations, particularly in the initial stages of biosensor optimization. These designs involve studying the effects of multiple factors simultaneously by investigating all possible combinations of the factor levels [26]. The most basic factorial design is the 2-level full factorial, denoted as 2^k, where k represents the number of factors being studied, with each factor tested at two levels (typically coded as -1 and +1) [1].

The key advantage of factorial designs lies in their ability to estimate not only the main effects of each factor (the average change in response when a factor moves from its low to high level) but also the interaction effects between factors (how the effect of one factor depends on the level of another factor) [26]. This comprehensive assessment of both main and interaction effects enables researchers to develop a more complete understanding of their biosensor system than would be possible with OFAT approaches.

Types of Factorial Designs

Full factorial designs investigate all possible combinations of factors and levels, providing complete information on main effects and all orders of interactions. However, the number of experimental runs required for a full factorial design grows exponentially with the number of factors (2^k for a 2-level design), which can become resource-prohibitive when studying many factors [26] [25].

Fractional factorial designs address this limitation by strategically investigating only a subset (fraction) of the full factorial combinations. These designs operate on the principle of sparsity of effects, assuming that while there may be many possible effects, only a few (typically main effects and two-factor interactions) are likely to be important [25]. Although fractional factorial designs require fewer runs, this efficiency comes at the cost of aliasing, where certain effects cannot be distinguished from one another [25].
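Aliasing can be seen directly in a small example. The sketch below builds a half-fraction of a four-factor design (2^(4-1), 8 runs instead of 16) using the generator D = ABC. The choice of generator and the factor labels are illustrative assumptions.

```python
import itertools
import numpy as np

# Base 2^3 full factorial in factors A, B, C (coded units).
base = np.array(list(itertools.product([-1, 1], repeat=3)))
A, B, C = base.T

# Generator D = ABC gives the defining relation I = ABCD.
D = A * B * C
design = np.column_stack([A, B, C, D])

# Under I = ABCD, each two-factor interaction shares its contrast column
# with another two-factor interaction, so the pair cannot be separated.
ab_aliased_with_cd = np.array_equal(A * B, C * D)
ac_aliased_with_bd = np.array_equal(A * C, B * D)

# Main effects are aliased only with three-factor interactions.
a_aliased_with_bcd = np.array_equal(A, B * C * D)

print(design)
print("AB aliased with CD:", ab_aliased_with_cd)
print("AC aliased with BD:", ac_aliased_with_bd)
print("A aliased with BCD:", a_aliased_with_bcd)
```

If three-factor interactions are negligible, as the sparsity-of-effects principle assumes, the main effects in this design remain interpretable even though pairs of two-factor interactions are confounded.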

Table 2: Types of Factorial Designs and Their Characteristics

| Design Type | Number of Runs | Information Obtained | Best Use Cases |
|---|---|---|---|
| Full Factorial | 2^k (for 2-level designs) | All main effects and all interactions | When number of factors is small (typically ≤5) and resources allow |
| Fractional Factorial | 2^(k-p) (a 1/2^p fraction of the full design) | Main effects and lower-order interactions, but with aliasing | Screening many factors (typically >5) with limited resources |
| Mixed-Level Factorial | Varies based on level combinations | Effects of both categorical and continuous factors | When experimenting with different types of factors simultaneously |

Application in Biosensor Optimization

Factorial and closely related screening designs have proven particularly valuable in biosensor optimization. For example, in the development of whole-cell biosensors for detecting catabolic breakdown products of lignin biomass, researchers used a definitive screening design, a three-level screening design related to the factorial family, to efficiently map the experimental space by systematically modifying genetic components [21]. Through this approach, they increased maximum signal output (up to 30-fold), improved dynamic range (>500-fold), expanded sensing range (approximately 4 orders of magnitude), and increased sensitivity (by >1500-fold) compared to initial configurations [21].

The following diagram illustrates a typical workflow for applying factorial designs in biosensor optimization:

Response Surface Methodology: Modeling and Optimization

Fundamentals of Response Surface Methodology

Once significant factors have been identified through factorial designs, Response Surface Methodology (RSM) provides a powerful collection of statistical and mathematical techniques for modeling and optimization [28] [29]. RSM uses regression analysis to fit empirical models to experimental data, enabling researchers to locate optimal conditions within the experimental region [28].

The primary goal of RSM is to efficiently navigate the experimental space to identify factor settings that produce the best possible response values, whether the objective is to maximize, minimize, or achieve a target value [29]. This approach is particularly valuable in biosensor optimization, where multiple performance characteristics (e.g., sensitivity, dynamic range, specificity) must often be balanced simultaneously.

RSM typically employs sequential experimentation, beginning with first-order models for initial exploration and progressing to second-order models that can capture curvature in the response surface [29]. The general form of a second-order response surface model for k factors is:

\[ y = \beta_0 + \sum_{i=1}^{k} \beta_i x_i + \sum_{i=1}^{k} \beta_{ii} x_i^2 + \sum_{i<j} \beta_{ij} x_i x_j + \varepsilon \]

where y represents the predicted response, β_0 is the constant term, β_i represents the coefficients for linear effects, β_ii represents the coefficients for quadratic effects, β_ij represents the coefficients for interaction effects, and ε represents the random error [28] [29].
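As an illustration of how such a model is fitted, the following sketch builds the second-order model matrix for two factors and estimates the coefficients by ordinary least squares. The design (a 3 × 3 grid in coded units) and the "true" coefficients are hypothetical and noiseless, so the fit simply recovers them. Real data would include experimental error.

```python
import itertools
import numpy as np

def quadratic_model_matrix(X):
    """Columns: intercept, linear terms, squared terms, two-factor interactions."""
    n, k = X.shape
    cols = [np.ones(n)]
    cols += [X[:, i] for i in range(k)]
    cols += [X[:, i] ** 2 for i in range(k)]
    cols += [X[:, i] * X[:, j] for i, j in itertools.combinations(range(k), 2)]
    return np.column_stack(cols)

# Hypothetical 3-level grid for two coded factors (9 runs).
X = np.array(list(itertools.product([-1.0, 0.0, 1.0], repeat=2)))

# Assumed coefficients, ordered as beta_0, beta_1, beta_2, beta_11, beta_22, beta_12.
true_beta = np.array([20.0, 1.5, -0.8, -2.0, -1.0, 0.6])

M = quadratic_model_matrix(X)
y = M @ true_beta  # noiseless synthetic responses

# Ordinary least-squares estimate of the coefficients.
beta_hat, *_ = np.linalg.lstsq(M, y, rcond=None)

names = ["b0", "b1", "b2", "b11", "b22", "b12"]
for name, value in zip(names, beta_hat):
    print(f"{name} = {value:.3f}")
```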

Key RSM Designs

Several specialized experimental designs have been developed specifically for response surface methodology:

Central Composite Designs (CCD): These designs consist of a two-level factorial or fractional factorial component (2^k) augmented with center points and axial (star) points [30] [31]. The axial points allow estimation of curvature, making CCDs appropriate for fitting second-order models. CCDs can be made rotatable through careful selection of the axial point distance (α), meaning the prediction variance depends only on the distance from the design center and not on direction [30] [31].
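The structure of a CCD can be sketched in a few lines. The function below is a hypothetical helper that assumes a full two-level factorial core and uses the common rotatability rule α = F^(1/4), where F is the number of factorial runs. The number of center points (three here) is an arbitrary choice.

```python
import itertools
import numpy as np

def central_composite(k, n_center=3):
    """Rotatable CCD in coded units: factorial core, axial points, center points."""
    factorial = np.array(list(itertools.product([-1.0, 1.0], repeat=k)))
    alpha = len(factorial) ** 0.25  # rotatable axial distance for a full 2^k core
    axial = np.vstack([
        sign * alpha * np.eye(k)[i]
        for i in range(k)
        for sign in (-1.0, 1.0)
    ])
    center = np.zeros((n_center, k))
    return np.vstack([factorial, axial, center]), alpha

design, alpha = central_composite(2)
print("alpha =", round(alpha, 4))
print(design)
```

For two factors this gives 4 factorial runs, 4 axial runs at distance √2 from the center, and the chosen center replicates.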

Box-Behnken Designs: These are spherical, three-level designs that are rotatable or nearly rotatable. They combine runs at the midpoints of the edges of the experimental space with center points, and contain no runs at the corners [30]. Box-Behnken designs are therefore often preferred over CCDs when the corners of the experimental space (all factors simultaneously at their extremes) are expensive or impossible to reach.
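For three factors, a Box-Behnken design can be sketched by running a 2^2 factorial in each pair of factors while holding the remaining factor at its center level. The helper below is hypothetical and intended for the three-factor case. Designs for larger numbers of factors may use different pairings, and the number of center points is an arbitrary choice.

```python
import itertools
import numpy as np

def box_behnken(k=3, n_center=3):
    """Box-Behnken runs in coded units: +/-1 on each factor pair, 0 elsewhere."""
    runs = []
    for i, j in itertools.combinations(range(k), 2):
        for a, b in itertools.product([-1.0, 1.0], repeat=2):
            row = [0.0] * k
            row[i], row[j] = a, b
            runs.append(row)
    runs += [[0.0] * k for _ in range(n_center)]
    return np.array(runs)

design = box_behnken(3)
print(design)
print("Total runs:", len(design))

# No run sets all three factors to an extreme level at once.
has_corner_runs = bool((np.abs(design).sum(axis=1) == 3).any())
print("Contains corner runs:", has_corner_runs)
```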

The diagram below illustrates the structure of a central composite design for two factors:

Implementation of RSM

The implementation of RSM typically follows a structured sequence:

Problem Definition: Clearly define the optimization objectives and identify the critical response variables that characterize biosensor performance (e.g., limit of detection, signal-to-noise ratio, dynamic range) [28].

Factor Screening: Use factorial designs to identify the most influential factors from a larger set of potential variables [28] [25].

Experimental Design Selection: Choose an appropriate RSM design (e.g., CCD, Box-Behnken) based on the number of factors, resources available, and suspected complexity of the response surface [30].

Model Development: Conduct experiments according to the design matrix and use regression analysis to fit an appropriate response surface model to the data [28].

Model Validation: Check the adequacy of the fitted model using statistical tests (e.g., ANOVA, lack-of-fit tests) and residual analysis [28] [30].

Optimization: Use the validated model to locate optimal factor settings through techniques such as canonical analysis or numerical optimization [28] [29].

Confirmation: Conduct confirmation experiments at the predicted optimal conditions to verify model predictions [28].
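The optimization step can be illustrated with a small canonical-analysis sketch. Writing the fitted second-order model as ŷ = b0 + xᵀb + xᵀBx, where B holds the quadratic coefficients on its diagonal and half of each interaction coefficient off the diagonal, the stationary point is x_s = -½ B⁻¹ b, and the signs of the eigenvalues of B indicate whether it is a maximum, minimum, or saddle point. The coefficient values below are hypothetical and stand in for a model fitted to real data.

```python
import numpy as np

# Hypothetical fitted coefficients for two coded factors.
b0 = 20.0
b = np.array([1.5, -0.8])        # linear coefficients b1, b2
B = np.array([[-2.0, 0.3],       # b11 on the diagonal, b12 / 2 off the diagonal
              [0.3, -1.0]])      # b22 on the diagonal

def predict(x):
    """Predicted response of the second-order model at coded point x."""
    x = np.asarray(x, dtype=float)
    return b0 + b @ x + x @ B @ x

# Stationary point: the gradient b + 2 B x vanishes here.
x_s = -0.5 * np.linalg.solve(B, b)

# Eigenvalues of the symmetric matrix B classify the stationary point.
eigenvalues = np.linalg.eigvalsh(B)
if np.all(eigenvalues < 0):
    kind = "maximum"
elif np.all(eigenvalues > 0):
    kind = "minimum"
else:
    kind = "saddle point"

print("Stationary point (coded units):", np.round(x_s, 3))
print("Predicted response there:", round(float(predict(x_s)), 3))
print("Nature of stationary point:", kind)
```

A stationary point that falls outside the coded experimental region is an extrapolation and would normally call for further experiments before the confirmation step.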

Comparative Experimental Data: DoE vs Traditional Methods

Quantitative Performance Comparison

The superiority of DoE approaches over traditional OFAT methods is demonstrated through quantitative performance metrics across various optimization studies. The table below summarizes experimental data comparing the two approaches in biosensor optimization:

Table 3: Performance Comparison of DoE vs OFAT in Biosensor Optimization

| Performance Metric | OFAT Optimization | DoE Optimization | Improvement Factor |
|---|---|---|---|
| Maximum Signal Output | Baseline | Up to 30-fold increase | 30x [21] |
| Dynamic Range | Baseline | >500-fold improvement | >500x [21] |
| Sensing Range | Baseline | ~4 orders of magnitude expansion | ~10,000x [21] |
| Sensitivity | Baseline | >1500-fold increase | >1500x [21] |
| Experimental Effort | High (many sequential experiments) | Significantly reduced | ~50-80% reduction [1] |
| Interaction Detection | Not possible | Comprehensive mapping of factor interactions | N/A [24] |

Case Study: Whole-Cell Biosensor Optimization

A compelling example of DoE application in biosensor development comes from research on whole-cell biosensors for detecting catabolic breakdown products of lignin biomass [21]. Researchers applied a definitive screening design to optimize a protocatechuic acid (PCA)-responsive biosensor by systematically modifying three genetic factors: the promoter regulating the transcription factor (Preg), the output promoter (Pout), and the ribosome binding site controlling reporter expression (RBSout) [21].

The DoE approach enabled the researchers to efficiently explore the multidimensional experimental space and identify optimal combinations of genetic components that would have been virtually impossible to discover using OFAT. The resulting optimized biosensors exhibited dramatically improved performance characteristics, including both "digital" biosensors with sharp, switch-like dose-response behavior for clear binary classification and "analogue" biosensors with graded responses for quantifying analyte concentration over a wide range [21].

This case study illustrates how DoE not only enhances traditional biosensor performance metrics but also enables the engineering of novel response modalities that can be tailored to specific application requirements.

Essential Research Reagent Solutions for DoE Implementation

Successful implementation of DoE in biosensor optimization requires specific research reagents and materials. The following table details key solutions and their functions:

Table 4: Essential Research Reagent Solutions for DoE in Biosensor Optimization

| Reagent/Material | Function in DoE | Application Examples |
|---|---|---|
| Statistical Software Packages | Experimental design generation, data analysis, model fitting, and optimization | JMP, Minitab, Design-Expert, R with DoE packages [24] [31] |
| Coded Variable Templates | Standardization of factor levels across different measurement scales | Excel templates with pre-coded factor levels (-1, 0, +1) [24] |
| High-Throughput Screening Systems | Efficient execution of multiple experimental runs under controlled conditions | Automated liquid handling systems, multi-well plate readers [21] |
| Standardized Buffer Systems | Control of environmental factors during biosensor testing and characterization | pH buffers, ionic strength solutions, temperature control systems [1] |
| Reference Materials & Calibrants | Validation of measurement systems and response assessment | Certified reference materials, standard solutions for calibration [1] |
| Quality Control Samples | Monitoring of experimental process stability and variance estimation | Replicate samples, control charts for response measurement [27] |
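The coded factor levels (-1, 0, +1) mentioned in Table 4 follow from a simple linear mapping between natural and coded units. The sketch below shows that mapping. The function names and the example pH range of 6.0 to 8.0 are hypothetical.

```python
def to_coded(value, low, high):
    """Map a natural-unit value to coded units, with low -> -1 and high -> +1."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return (value - center) / half_range

def to_natural(coded, low, high):
    """Map a coded value back to natural units."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return center + coded * half_range

# Hypothetical factor: immobilization pH studied between 6.0 and 8.0.
low, high = 6.0, 8.0
for ph in (6.0, 7.0, 8.0):
    print(f"pH {ph} -> coded {to_coded(ph, low, high):+.1f}")

print("Coded +0.5 corresponds to pH", to_natural(0.5, low, high))
```

Working in coded units puts factors measured on different scales on a common footing, so their estimated effects can be compared directly.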

The comparison between traditional OFAT approaches and systematic DoE methodologies reveals significant advantages for factorial designs and response surface methodology in biosensor optimization. Through their ability to efficiently explore complex experimental spaces, quantify factor interactions, and build predictive models, DoE approaches enable researchers to achieve performance enhancements that are difficult or impossible to attain with traditional methods.

The experimental data demonstrate that DoE can deliver order-of-magnitude improvements in key biosensor performance metrics, including signal output, dynamic range, sensing range, and sensitivity, while simultaneously reducing experimental effort. Furthermore, the structured nature of DoE provides researchers with deeper insights into their biosensor systems, enabling not just optimization of existing configurations but also the engineering of novel response behaviors tailored to specific application requirements.

For researchers, scientists, and drug development professionals working in biosensor development, adopting DoE principles represents a strategic opportunity to accelerate development timelines, enhance performance characteristics, and ultimately create more effective and reliable biosensing devices for point-of-care diagnostics and other applications.

Implementing DoE in Biosensor Development: From Theory to Practical Workflows

This guide compares the systematic Design of Experiments (DoE) approach against traditional one-variable-at-a-time (OVAT) methods for biosensor optimization. By structuring the process into distinct screening, optimization, and verification phases, researchers can efficiently identify critical factors, model complex interactions, and achieve robust performance with minimal experimental effort. Supported by experimental data and detailed protocols, this guide provides a framework for developing high-performance biosensing systems for drug development and diagnostic applications.

The development of high-performance biosensors is critical for advancements in drug development, clinical diagnostics, and environmental monitoring. Achieving optimal performance requires careful balancing of multiple parameters, including biorecognition element immobilization, detection interface formulation, and operational conditions [2]. Traditional OVAT approaches, which vary a single factor while holding others constant, present significant limitations in this multidimensional space. They fail to detect factor interactions, may identify false optima, and are inefficient, often requiring extensive experimental runs to cover the same ground [2] [21].

A systematic DoE workflow addresses these shortcomings by providing a structured, statistical framework for planning, conducting, and analyzing experiments. This methodology allows researchers to efficiently screen numerous factors, model complex response surfaces, and verify optimal conditions with a minimized number of experimental runs. For biosensor development, this translates to enhanced sensitivity, specificity, and dynamic range, accelerating the transition from research prototypes to reliable, commercially viable devices [2] [21].

DoE vs. Traditional OVAT: A Comparative Framework

The table below summarizes the fundamental differences between the structured DoE approach and the traditional OVAT method.

Table 1: Core Differences Between DoE and OVAT Methodologies